Abstract

An uncertain differential equation is a type of differential equation involving an uncertain process. This paper gives the uncertainty distributions of the extreme values, the first hitting time, and the integral of the solution of an uncertain differential equation. Numerical methods for computing these distributions are also documented.

Background

Probability theory, since it was founded by Kolmogorov in 1933, has been a crucial tool to model indeterminacy phenomena when probability distributions of the possible events are available. However, for economic or technological reasons, we often cannot obtain the sample data from which a probability distribution would be estimated statistically. In this case, we have to invite domain experts to evaluate their belief degree that each event will occur. Since human beings tend to overweight unlikely events, the belief degree usually has a much larger range than the real frequency (Kahneman and Tversky [1]). As a result, it cannot be treated as probability; otherwise, counterintuitive results may follow. An extreme counterexample was proposed by Liu [2].

In order to model the belief degree, an uncertainty theory was founded by Liu [3], and refined by Liu [4] based on normality, duality, subadditivity and product axioms. So far, it has been applied to many areas, and has brought many branches such as uncertain programming (Liu [5]), uncertain risk analysis (Liu [6]), uncertain inference (Liu [7]), uncertain logic (Liu [8]), and uncertain statistics (Liu [4]).

In order to describe the evolution of an uncertain phenomenon, Liu [9] proposed the concept of an uncertain process. Then Liu [10] designed the Liu process, an uncertain process with stationary and independent normal uncertain increments, and founded uncertain calculus to deal with the integral and differential of an uncertain process with respect to a Liu process. Then Liu and Yao [11] extended the uncertain integral from a single Liu process to multiple ones. Besides, Chen and Ralescu [12] founded uncertain calculus with respect to a general Liu process. As a complement, Yao [13] founded uncertain calculus with respect to an uncertain renewal process.

Uncertain differential equation was first proposed by Liu [9] as a type of differential equation driven by Liu process. Chen and Liu [14] gave an analytic solution for linear uncertain differential equation. Following that, Liu [15] and Yao [16] gave some methods to solve two types of nonlinear uncertain differential equations. Then Yao and Chen [17] proposed a numerical method for solving uncertain differential equation. As extensions of uncertain differential equation, uncertain differential equation with jumps was proposed by Yao [13], and uncertain delayed differential equation was studied among others by Barbacioru [18], Liu and Fei [19], and Ge and Zhu [20]. In addition, backward uncertain differential equation was proposed by Ge and Zhu [21].

Due to the paradox of stochastic finance theory (Liu [22]), Liu [10] presented an uncertain stock model via uncertain differential equation, and gave its European option pricing formulas, opening the door of uncertain finance theory. Then Chen [23] derived the American option pricing formulas of the stock model. After that, Peng and Yao [24] presented a mean-reverting uncertain stock model, and Chen et al. [25] proposed an uncertain stock model with periodic dividends. Besides, Chen and Gao [26] proposed an uncertain interest rate model, and Liu et al. [27] proposed an uncertain currency model. In addition, Zhu [28] applied uncertain differential equation to optimal control problems.

Alongside these applications, the properties of the solutions of uncertain differential equations have also been studied. Chen and Liu [14] gave a sufficient condition for an uncertain differential equation to have a unique solution. Then Gao [29] weakened the condition. After that, Yao et al. [30] gave a sufficient condition for an uncertain differential equation to be stable.

In this paper, we will consider the extreme values, first hitting time, and integral of the solution of an uncertain differential equation. The rest of this paper is organized as follows. In the section of Preliminary, we will review some basic concepts about uncertain variable, uncertain process and uncertain differential equation. After that, we study the extreme values of the solution of an uncertain differential equation, and give their uncertainty distributions in the section of Extreme values. Then by the relationship between first hitting time and extreme value, we give an uncertainty distribution of the first hitting time of the solution of an uncertain differential equation in the section of First hitting time. Following that, we consider the integral of the solution of an uncertain differential equation, and give its inverse uncertainty distribution in the section of Integral. At last, some remarks are given in the section of Conclusions.

Preliminary

In this section, we will first review some basic concepts and results in uncertainty theory. Then we introduce the concept of uncertain process, uncertain calculus and uncertain differential equation.

Uncertainty theory

Definition 1.

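(Liu [3]) Let $\mathcal{L}$ be a σ-algebra on a nonempty set Γ. A set function $\mathcal{M}: \mathcal{L} \to [0,1]$ is called an uncertain measure if it satisfies the following axioms:

Axiom 1: (Normality Axiom) $\mathcal{M}\{\Gamma\} = 1$ for the universal set Γ.

Axiom 2: (Duality Axiom) $\mathcal{M}\{\Lambda\} + \mathcal{M}\{\Lambda^{c}\} = 1$ for any event Λ.

Axiom 3: (Subadditivity Axiom) For every countable sequence of events Λ1,Λ2,⋯, we have

$$\mathcal{M}\left\{\bigcup_{i=1}^{\infty}\Lambda_i\right\} \le \sum_{i=1}^{\infty}\mathcal{M}\{\Lambda_i\}.$$

Besides, in order to provide the operational law, Liu [10] defined the product uncertain measure on the product σ-algebra as follows.

Axiom 4: (Product Axiom) Let $(\Gamma_k,\mathcal{L}_k,\mathcal{M}_k)$ be uncertainty spaces for k = 1,2,⋯ Then the product uncertain measure $\mathcal{M}$ is an uncertain measure satisfying

$$\mathcal{M}\left\{\prod_{k=1}^{\infty}\Lambda_k\right\} = \bigwedge_{k=1}^{\infty}\mathcal{M}_k\{\Lambda_k\},$$

where $\Lambda_k$ are arbitrarily chosen events from $\mathcal{L}_k$ for k = 1,2,⋯, respectively.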

An uncertain variable is essentially a measurable function from an uncertainty space to the set of real numbers. In order to describe an uncertain variable, a concept of uncertainty distribution is defined as follows.

Definition 2.

(Liu [3]) The uncertainty distribution of an uncertain variable ξ is defined by

$$\Phi(x) = \mathcal{M}\{\xi \le x\}$$

for any real number x.

Expected value is regarded as the average value of an uncertain variable in the sense of uncertain measure.

Definition 3.

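(Liu [3]) The expected value of an uncertain variable ξ is defined by

$$E[\xi] = \int_{0}^{+\infty}\mathcal{M}\{\xi \ge r\}\,dr - \int_{-\infty}^{0}\mathcal{M}\{\xi \le r\}\,dr,$$

provided that at least one of the two integrals exists.

Assuming ξ has an uncertainty distribution Φ, Liu [3] proved that the expected value of ξ is

$$E[\xi] = \int_{0}^{+\infty}\bigl(1-\Phi(x)\bigr)\,dx - \int_{-\infty}^{0}\Phi(x)\,dx.$$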

The inverse function Φ−1 of the uncertainty distribution Φ of uncertain variable ξ is called the inverse uncertainty distribution of ξ if it exists and is unique for each α ∈ (0,1). Inverse uncertainty distribution plays a crucial role in operations of independent uncertain variables.

Definition 4.

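(Liu [10]) The uncertain variables ξ 1,ξ 2,⋯,ξ n are said to be independent if

$$\mathcal{M}\left\{\bigcap_{i=1}^{n}(\xi_i \in B_i)\right\} = \bigwedge_{i=1}^{n}\mathcal{M}\{\xi_i \in B_i\}$$

for any Borel sets B 1,B 2,⋯,B n of real numbers.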

Theorem 1.

(Liu [4]) Let ξ 1,ξ 2,⋯,ξ n be independent uncertain variables with uncertainty distributions Φ1,Φ2,⋯,Φ n , respectively. If f(x 1,x 2,⋯,x n ) is strictly increasing with respect to x 1,x 2,⋯,x m and strictly decreasing with respect to x m+1,x m+2,⋯,x n , then ξ = f (ξ 1,ξ 2,⋯,ξ n ) is an uncertain variable with an inverse uncertainty distribution

$$\Phi^{-1}(\alpha) = f\bigl(\Phi_1^{-1}(\alpha),\dots,\Phi_m^{-1}(\alpha),\Phi_{m+1}^{-1}(1-\alpha),\dots,\Phi_n^{-1}(1-\alpha)\bigr).$$

Theorem 2.

(Liu and Ha [31]) Let ξ 1,ξ 2,⋯,ξ n be independent uncertain variables with uncertainty distributions Φ1,Φ2,⋯,Φ n , respectively. If f (x 1,x 2,⋯,x n ) is strictly increasing with respect to x 1,x 2,⋯,x m and strictly decreasing with respect to x m+1,x m+2,⋯,x n , then the expected value of uncertain variable ξ = f(ξ 1,ξ 2,⋯,ξ n ) is

$$E[\xi] = \int_{0}^{1} f\bigl(\Phi_1^{-1}(\alpha),\dots,\Phi_m^{-1}(\alpha),\Phi_{m+1}^{-1}(1-\alpha),\dots,\Phi_n^{-1}(1-\alpha)\bigr)\,d\alpha.$$
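As a hedged numerical illustration of Theorems 1 and 2, the sketch below approximates the expected value by integrating f over α in (0,1), with the inverse distributions evaluated at α for the increasing arguments and at 1 − α for the decreasing ones. It assumes normal uncertain variables N(e, σ), whose inverse uncertainty distribution is e + (σ√3/π) ln(α/(1 − α)). The names `normal_inverse` and `expected_value` are hypothetical and do not come from the paper.

```python
import math

def normal_inverse(e, sigma):
    """Inverse uncertainty distribution of a normal uncertain variable N(e, sigma)."""
    k = sigma * math.sqrt(3.0) / math.pi
    return lambda alpha: e + k * math.log(alpha / (1.0 - alpha))

def expected_value(f, increasing, decreasing, n=10000):
    """Midpoint-rule approximation of the integral in Theorem 2.

    `increasing` and `decreasing` are lists of inverse distributions for the
    arguments in which f is strictly increasing and strictly decreasing.
    """
    total = 0.0
    for k in range(n):
        a = (k + 0.5) / n  # midpoint of the k-th subinterval of (0, 1)
        args = [inv(a) for inv in increasing] + [inv(1.0 - a) for inv in decreasing]
        total += f(*args)
    return total / n

# f(x1, x2) = x1 - x2 is increasing in x1 and decreasing in x2.
xi1 = normal_inverse(1.0, 1.0)
xi2 = normal_inverse(2.0, 1.0)
approx = expected_value(lambda x1, x2: x1 - x2, [xi1], [xi2])
```

For this choice the result should be close to E[ξ1] − E[ξ2] = −1, which gives a quick sanity check of the formula.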

Uncertain process

In order to model the evolution of uncertain phenomena, an uncertain process was proposed by Liu [9] as a sequence of uncertain variables driven by time or space.

Definition 5.

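(Liu [9]) Let T be an index set, and let $(\Gamma,\mathcal{L},\mathcal{M})$ be an uncertainty space. An uncertain process is a measurable function from $T \times (\Gamma,\mathcal{L},\mathcal{M})$ to the set of real numbers, i.e., for each t ∈ T and any Borel set B of real numbers, the set

$$\{X_t \in B\} = \{\gamma \in \Gamma \mid X_t(\gamma) \in B\}$$

is an event.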

Definition 6.

Let X t be an uncertain process and let z be a given level. Then the uncertain variable

$$\tau_z = \inf\{t \ge 0 \mid X_t = z\}$$

is called the first hitting time that X t reaches the level z.

Independent increment uncertain process is an important type of uncertain processes. Its formal definition is given below.

Definition 7.

(Liu [9]) An uncertain process $X_t$ is said to have independent increments if

$$X_{t_0},\; X_{t_1}-X_{t_0},\; X_{t_2}-X_{t_1},\; \dots,\; X_{t_k}-X_{t_{k-1}}$$

are independent uncertain variables where t 0 is the initial time and t 1,t 2,⋯,t k are any times with t 0 < t 1 < ⋯ < t k .

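For a sample-continuous independent increment process $X_t$ with uncertainty distribution $\Phi_t(x)$ at each time t, Liu [32] proved the following extreme value theorem:

$$\mathcal{M}\left\{\sup_{0\le t\le s} X_t \le x\right\} = \inf_{0\le t\le s}\Phi_t(x), \qquad \mathcal{M}\left\{\inf_{0\le t\le s} X_t \le x\right\} = \sup_{0\le t\le s}\Phi_t(x).$$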

Definition 8.

(Liu [9]) An uncertain process is said to have stationary increments if for any given t > 0, the increments X t+s − X s are identically distributed uncertain variables for all s>0.

Uncertain calculus

Definition 9.

(Liu [10]) An uncertain process C t is said to be a canonical Liu process if

(i) C 0 = 0 and almost all sample paths are Lipschitz continuous,

(ii) C t has stationary and independent increments,

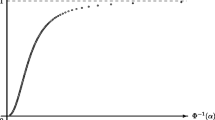

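(iii) every increment $C_{s+t} - C_s$ is a normal uncertain variable with expected value 0 and variance $t^2$, whose uncertainty distribution is

$$\Phi_t(x) = \left(1 + \exp\left(-\frac{\pi x}{\sqrt{3}\,t}\right)\right)^{-1}, \quad x \in \mathbb{R}.$$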

Definition 10.

(Liu [10]) Let X t be an uncertain process and C t be a canonical Liu process. For any partition of closed interval [a,b] with a = t 1 < t 2 < ⋯ < t k+1 = b, the mesh is written as

$$\Delta = \max_{1\le i\le k}|t_{i+1} - t_i|.$$

Then Liu integral of X t is defined by

$$\int_a^b X_t\,dC_t = \lim_{\Delta\to 0}\sum_{i=1}^{k} X_{t_i}\cdot\bigl(C_{t_{i+1}} - C_{t_i}\bigr),$$

provided that the limit exists almost surely and is finite. In this case, the uncertain process X t is said to be Liu integrable.

Definition 11.

(Liu [10]) Let C t be a canonical Liu process, and μ t and σ t be two uncertain processes. Then the uncertain process

$$Z_t = Z_0 + \int_0^t \mu_s\,ds + \int_0^t \sigma_s\,dC_s$$

is called a Liu process with drift μ t and diffusion σ t . The differential form of Liu process is written as

$$dZ_t = \mu_t\,dt + \sigma_t\,dC_t.$$

Theorem 3.

(Liu [10]) (Fundamental Theorem of Uncertain Calculus) Let C t be a canonical Liu process, and h(t,c) be a continuously differentiable function. Then Z t = h(t,C t ) is a Liu process with

$$dZ_t = \frac{\partial h}{\partial t}(t,C_t)\,dt + \frac{\partial h}{\partial c}(t,C_t)\,dC_t.$$

Uncertain differential equation

An uncertain differential equation is essentially a type of differential equation driven by Liu process.

Definition 12.

(Liu [9]) Suppose C t is a canonical Liu process, and f and g are two given functions. Then

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

is called an uncertain differential equation.

Yao and Chen [17] proposed a concept of α-path, and found a connection between an uncertain differential equation and a spectrum of ordinary differential equations.

Definition 13.

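(Yao and Chen [17]) Let α be a number with 0 < α < 1. An uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

is said to have an α-path $X_t^{\alpha}$ if it solves the corresponding ordinary differential equation

$$dX_t^{\alpha} = f(t,X_t^{\alpha})\,dt + |g(t,X_t^{\alpha})|\,\Phi^{-1}(\alpha)\,dt,$$

where Φ−1(α) is the inverse uncertainty distribution of a standard normal uncertain variable, i.e.,

$$\Phi^{-1}(\alpha) = \frac{\sqrt{3}}{\pi}\ln\frac{\alpha}{1-\alpha}.$$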

Theorem 4.

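(Yao and Chen [17]) Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Then

$$\mathcal{M}\{X_t \le X_t^{\alpha},\ \forall t\} = \alpha, \qquad \mathcal{M}\{X_t > X_t^{\alpha},\ \forall t\} = 1-\alpha.$$

As a corollary, the solution X t has an inverse uncertainty distribution

$$\Psi_t^{-1}(\alpha) = X_t^{\alpha}.$$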
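A minimal sketch of how an α-path might be computed in practice: since the α-path solves an ordinary differential equation with drift f + |g|Φ⁻¹(α), an explicit Euler scheme is one simple choice. The step count, the function names `inv_std_normal` and `alpha_path`, and the Euler discretization itself are assumptions made for illustration; Φ⁻¹(α) = (√3/π) ln(α/(1 − α)) is the inverse distribution of a standard normal uncertain variable.

```python
import math

def inv_std_normal(alpha):
    """Inverse uncertainty distribution of a standard normal uncertain variable."""
    return math.sqrt(3.0) / math.pi * math.log(alpha / (1.0 - alpha))

def alpha_path(f, g, x0, s, alpha, n_steps=1000):
    """Euler approximation of the alpha-path on [0, s]; returns the list of path values."""
    h = s / n_steps
    c = inv_std_normal(alpha)
    x = x0
    path = [x0]
    for i in range(n_steps):
        t = i * h
        # Drift of the alpha-path ODE: f + |g| * Phi^{-1}(alpha)
        x = x + f(t, x) * h + abs(g(t, x)) * c * h
        path.append(x)
    return path

# dX_t = 1 dt + 2 dC_t, X_0 = 0: the alpha-path is t * (1 + 2 * Phi^{-1}(alpha)).
path_90 = alpha_path(lambda t, x: 1.0, lambda t, x: 2.0, 0.0, 1.0, 0.9)
```

The last entry of `path_90` then approximates the value of the inverse uncertainty distribution of X_1 at α = 0.9.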

Extreme values

In this section, we study the extreme values of the solution of an uncertain differential equation, and give their uncertainty distributions. In addition, we design some numerical methods to obtain the uncertainty distributions.

Supremum

Theorem 5.

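Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Then for a strictly increasing function J(x), the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J(X_t^{\alpha}).$$

Proof.

Since J(x) is a strictly increasing function, we have

$$\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{\alpha})\right\} \supset \{X_t \le X_t^{\alpha},\ \forall t\}$$

and

$$\left\{\sup_{0\le t\le s} J(X_t) > \sup_{0\le t\le s} J(X_t^{\alpha})\right\} \supset \{X_t > X_t^{\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{\alpha})\right\} \ge \mathcal{M}\{X_t \le X_t^{\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) > \sup_{0\le t\le s} J(X_t^{\alpha})\right\} \ge \mathcal{M}\{X_t > X_t^{\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{\alpha})\right\} = \alpha,$$

i.e., the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J(X_t^{\alpha}).$$

□

In order to calculate the inverse uncertainty distribution of the supremum, we design a numerical method as below.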

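Step 1: Fix α in (0,1), and fix h as the step length. Set i = 0, N = s/h, $X_0^{\alpha} = X_0$, and H = J(X 0).

Step 2: Employ the recursion formula

$$X_{i+1}^{\alpha} = X_i^{\alpha} + f(t_i,X_i^{\alpha})h + |g(t_i,X_i^{\alpha})|\,\Phi^{-1}(\alpha)h, \qquad t_i = ih,$$

and calculate $J(X_{i+1}^{\alpha})$.

Step 3: Set $H \leftarrow \max\bigl(H, J(X_{i+1}^{\alpha})\bigr)$ and $i \leftarrow i+1$.

Step 4: Repeat Step 2 and Step 3 for N times.

Step 5: The inverse uncertainty distribution of

$$\sup_{0\le t\le s} J(X_t)$$

is determined by

$$\Psi_s^{-1}(\alpha) = H.$$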
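The five steps above can be sketched in Python as follows. This is only an illustrative reading of the method: it assumes the recursion is the explicit Euler step X ← X + f(t,X)h + |g(t,X)|Φ⁻¹(α)h on the α-path equation, and the names `inv_std_normal` and `sup_inverse_distribution` are hypothetical.

```python
import math

def inv_std_normal(alpha):
    """Inverse uncertainty distribution of a standard normal uncertain variable."""
    return math.sqrt(3.0) / math.pi * math.log(alpha / (1.0 - alpha))

def sup_inverse_distribution(f, g, J, x0, s, alpha, n_steps=1000):
    """Approximate the inverse distribution at alpha of sup_{0<=t<=s} J(X_t), J increasing."""
    h = s / n_steps
    c = inv_std_normal(alpha)
    x = x0
    H = J(x0)                      # Step 1: running maximum starts at J(X_0)
    for i in range(n_steps):       # Step 4: repeat N times
        t = i * h
        x = x + f(t, x) * h + abs(g(t, x)) * c * h   # Step 2: Euler step on the alpha-path
        H = max(H, J(x))           # Step 3: update the running maximum
    return H                       # Step 5

# dX_t = dC_t, X_0 = 0, J(x) = x: the alpha-path is Phi^{-1}(alpha) * t.
zero = lambda t, x: 0.0
one = lambda t, x: 1.0
sup_90 = sup_inverse_distribution(zero, one, lambda x: x, 0.0, 1.0, 0.9)
sup_10 = sup_inverse_distribution(zero, one, lambda x: x, 0.0, 1.0, 0.1)
```

For this test equation the α-path is a straight line through the origin, so the supremum on [0,1] is Φ⁻¹(α) when α > 0.5 and stays at the initial value 0 when α < 0.5.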

Theorem 6.

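Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then for a strictly increasing function J(x), the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Psi_s(x) = \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

Proof.

Since $X_t^{\alpha} = \Phi_t^{-1}(\alpha)$, we have

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J\bigl(\Phi_t^{-1}(\alpha)\bigr)$$

by Theorem 5. Write

$$x = \Psi_s^{-1}(\alpha),$$

i.e.,

$$x = \sup_{0\le t\le s} J\bigl(\Phi_t^{-1}(\alpha)\bigr).$$

Then we have

$$\alpha = \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

In other words, the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Psi_s(x) = \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$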

□

Theorem 7.

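Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Then for a strictly decreasing function J(x), the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J(X_t^{1-\alpha}).$$

Proof.

Since J(x) is a strictly decreasing function, we have

$$\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{1-\alpha})\right\} \supset \{X_t \ge X_t^{1-\alpha},\ \forall t\}$$

and

$$\left\{\sup_{0\le t\le s} J(X_t) > \sup_{0\le t\le s} J(X_t^{1-\alpha})\right\} \supset \{X_t < X_t^{1-\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{1-\alpha})\right\} \ge \mathcal{M}\{X_t \ge X_t^{1-\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) > \sup_{0\le t\le s} J(X_t^{1-\alpha})\right\} \ge \mathcal{M}\{X_t < X_t^{1-\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) \le \sup_{0\le t\le s} J(X_t^{1-\alpha})\right\} = \alpha,$$

i.e., the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J(X_t^{1-\alpha}).$$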

□

Theorem 8.

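Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then for a strictly decreasing function J(x), the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Psi_s(x) = 1 - \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

Proof.

Since $X_t^{1-\alpha} = \Phi_t^{-1}(1-\alpha)$, we have

$$\Psi_s^{-1}(\alpha) = \sup_{0\le t\le s} J\bigl(\Phi_t^{-1}(1-\alpha)\bigr)$$

by Theorem 7. Write

$$x = \Psi_s^{-1}(\alpha),$$

i.e.,

$$x = \sup_{0\le t\le s} J\bigl(\Phi_t^{-1}(1-\alpha)\bigr).$$

Then we have

$$1-\alpha = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

In other words, the supremum

$$\sup_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Psi_s(x) = 1 - \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$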

□

Infimum

Theorem 9.

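Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Then for a strictly increasing function J(x), the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J(X_t^{\alpha}).$$

Proof.

Since J(x) is a strictly increasing function, we have

$$\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{\alpha})\right\} \supset \{X_t \le X_t^{\alpha},\ \forall t\}$$

and

$$\left\{\inf_{0\le t\le s} J(X_t) > \inf_{0\le t\le s} J(X_t^{\alpha})\right\} \supset \{X_t > X_t^{\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{\alpha})\right\} \ge \mathcal{M}\{X_t \le X_t^{\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) > \inf_{0\le t\le s} J(X_t^{\alpha})\right\} \ge \mathcal{M}\{X_t > X_t^{\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{\alpha})\right\} = \alpha,$$

i.e., the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J(X_t^{\alpha}).$$

□

In order to calculate the inverse uncertainty distribution of the infimum, we design a numerical method as below.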

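Step 1: Fix α in (0,1), and fix h as the step length. Set i = 0, N = s/h, $X_0^{\alpha} = X_0$, and H = J(X 0).

Step 2: Employ the recursion formula

$$X_{i+1}^{\alpha} = X_i^{\alpha} + f(t_i,X_i^{\alpha})h + |g(t_i,X_i^{\alpha})|\,\Phi^{-1}(\alpha)h, \qquad t_i = ih,$$

and calculate $X_{i+1}^{\alpha}$ and $J(X_{i+1}^{\alpha})$.

Step 3: Set $H \leftarrow \min\bigl(H, J(X_{i+1}^{\alpha})\bigr)$ and $i \leftarrow i+1$.

Step 4: Repeat Step 2 and Step 3 for N times.

Step 5: The inverse uncertainty distribution of $\inf_{0\le t\le s} J(X_t)$ is determined by

$$\Upsilon_s^{-1}(\alpha) = H.$$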
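To show the infimum method on an equation whose coefficients depend on the state, the sketch below applies it to dX_t = μX_t dt + σX_t dC_t with J(x) = ln x. The test equation, the parameter values, and the names `inv_std_normal` and `inf_inverse_distribution` are assumptions chosen for illustration, and the recursion is taken to be the explicit Euler step on the α-path equation. For positive X_0 and σ the α-path is X_0 exp((μ + σΦ⁻¹(α))t), which provides a reference value.

```python
import math

def inv_std_normal(alpha):
    """Inverse uncertainty distribution of a standard normal uncertain variable."""
    return math.sqrt(3.0) / math.pi * math.log(alpha / (1.0 - alpha))

def inf_inverse_distribution(f, g, J, x0, s, alpha, n_steps=1000):
    """Approximate the inverse distribution at alpha of inf_{0<=t<=s} J(X_t), J increasing."""
    h = s / n_steps
    c = inv_std_normal(alpha)
    x = x0
    H = J(x0)                      # running minimum starts at J(X_0)
    for i in range(n_steps):
        t = i * h
        x = x + f(t, x) * h + abs(g(t, x)) * c * h   # Euler step on the alpha-path
        H = min(H, J(x))
    return H

mu, sigma = 0.05, 0.2
drift = lambda t, x: mu * x
diffusion = lambda t, x: sigma * x
inf_10 = inf_inverse_distribution(drift, diffusion, math.log, 1.0, 1.0, 0.1)
inf_90 = inf_inverse_distribution(drift, diffusion, math.log, 1.0, 1.0, 0.9)
```

At α = 0.1 the α-path decreases, so the infimum is attained at the terminal time and is roughly μ + σΦ⁻¹(0.1); at α = 0.9 the path increases and the infimum stays at ln X_0 = 0. The Euler discretization introduces a small error of order h in the first case.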

Theorem 10.

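Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then for a strictly increasing function J(x), the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Upsilon_s(x) = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

Proof.

Since $X_t^{\alpha} = \Phi_t^{-1}(\alpha)$, we have

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J\bigl(\Phi_t^{-1}(\alpha)\bigr)$$

by Theorem 9. Write

$$x = \Upsilon_s^{-1}(\alpha),$$

i.e.,

$$x = \inf_{0\le t\le s} J\bigl(\Phi_t^{-1}(\alpha)\bigr).$$

Then we have

$$\alpha = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

In other words, the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Upsilon_s(x) = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$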

□

Theorem 11.

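Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Then for a strictly decreasing function J(x), the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J(X_t^{1-\alpha}).$$

Proof.

Since J(x) is a strictly decreasing function, we have

$$\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{1-\alpha})\right\} \supset \{X_t \ge X_t^{1-\alpha},\ \forall t\}$$

and

$$\left\{\inf_{0\le t\le s} J(X_t) > \inf_{0\le t\le s} J(X_t^{1-\alpha})\right\} \supset \{X_t < X_t^{1-\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{1-\alpha})\right\} \ge \mathcal{M}\{X_t \ge X_t^{1-\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) > \inf_{0\le t\le s} J(X_t^{1-\alpha})\right\} \ge \mathcal{M}\{X_t < X_t^{1-\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) \le \inf_{0\le t\le s} J(X_t^{1-\alpha})\right\} = \alpha,$$

i.e., the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an inverse uncertainty distribution

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J(X_t^{1-\alpha}).$$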

□

Theorem 12.

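Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then for a strictly decreasing function J(x), the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Upsilon_s(x) = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

Proof.

Since $X_t^{1-\alpha} = \Phi_t^{-1}(1-\alpha)$, we have

$$\Upsilon_s^{-1}(\alpha) = \inf_{0\le t\le s} J\bigl(\Phi_t^{-1}(1-\alpha)\bigr)$$

by Theorem 11. Write

$$x = \Upsilon_s^{-1}(\alpha),$$

i.e.,

$$x = \inf_{0\le t\le s} J\bigl(\Phi_t^{-1}(1-\alpha)\bigr).$$

Then we have

$$1-\alpha = \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$

In other words, the infimum

$$\inf_{0\le t\le s} J(X_t)$$

has an uncertainty distribution

$$\Upsilon_s(x) = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(x)\bigr).$$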

□

First hitting time

In this section, we study the first hitting time of the solution of an uncertain differential equation, and give the uncertainty distributions in different cases.

First hitting time of strictly increasing function of the solution

Theorem 13.

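Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

with an initial value X 0, respectively. Given a strictly increasing function J(x), and a level z > J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Psi(s) = 1 - \inf\left\{\alpha \in (0,1) \,\Big|\, \sup_{0\le t\le s} J(X_t^{\alpha}) \ge z\right\}.$$

Proof.

Write

$$\alpha_0 = \inf\left\{\alpha \in (0,1) \,\Big|\, \sup_{0\le t\le s} J(X_t^{\alpha}) \ge z\right\}.$$

Since J(x) is a strictly increasing function, we have

$$\{\tau_z \le s\} = \left\{\sup_{0\le t\le s} J(X_t) \ge z\right\} \supset \{X_t \ge X_t^{\alpha_0},\ \forall t\}, \qquad \{\tau_z > s\} = \left\{\sup_{0\le t\le s} J(X_t) < z\right\} \supset \{X_t < X_t^{\alpha_0},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\{\tau_z \le s\} \ge \mathcal{M}\{X_t \ge X_t^{\alpha_0},\ \forall t\} = 1-\alpha_0, \qquad \mathcal{M}\{\tau_z > s\} \ge \mathcal{M}\{X_t < X_t^{\alpha_0},\ \forall t\} = \alpha_0.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\{\tau_z \le s\} = 1-\alpha_0.$$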

This completes the proof. □

For a strictly increasing function J(x), in order to calculate the uncertainty distribution Ψ(s) of the first hitting time τ z that J(X t ) reaches z when J(X 0) < z, we design a numerical method as below.

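Step 1: Fix ε as the accuracy, and fix h as the step length. Set N = s/h.

Step 2: Employ the recursion formula

$$X_{i+1}^{\varepsilon} = X_i^{\varepsilon} + f(t_i,X_i^{\varepsilon})h + |g(t_i,X_i^{\varepsilon})|\,\Phi^{-1}(\varepsilon)h$$

for N times, and calculate $H = \max_{0\le i\le N} J(X_i^{\varepsilon})$. If

$$H \ge z,$$

then return 1 − ε and stop.

Step 3: Employ the recursion formula

$$X_{i+1}^{1-\varepsilon} = X_i^{1-\varepsilon} + f(t_i,X_i^{1-\varepsilon})h + |g(t_i,X_i^{1-\varepsilon})|\,\Phi^{-1}(1-\varepsilon)h$$

for N times, and calculate $H = \max_{0\le i\le N} J(X_i^{1-\varepsilon})$. If

$$H < z,$$

then return ε and stop.

Step 4: Set α 1 = ε, α 2 = 1 − ε.

Step 5: Set α = (α 1 + α 2)/2.

Step 6: Employ the recursion formula

$$X_{i+1}^{\alpha} = X_i^{\alpha} + f(t_i,X_i^{\alpha})h + |g(t_i,X_i^{\alpha})|\,\Phi^{-1}(\alpha)h$$

for N times, and calculate $H = \max_{0\le i\le N} J(X_i^{\alpha})$. If

$$H < z,$$

then set α 1 = α. Otherwise, set α 2 = α.

Step 7: If |α 2 − α 1| ≤ ε, then return 1 − α and stop. Otherwise, go to Step 5.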
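The bisection in Steps 1 to 7 can be sketched as below. The sketch assumes that the quantity compared with z in each step is the maximum of J along the Euler-discretized α-path, and that the search looks for the smallest α whose path reaches z; both are readings of the method rather than statements taken from the text. The names `inv_std_normal`, `sup_along_path` and `hitting_time_distribution` are hypothetical.

```python
import math

def inv_std_normal(alpha):
    """Inverse uncertainty distribution of a standard normal uncertain variable."""
    return math.sqrt(3.0) / math.pi * math.log(alpha / (1.0 - alpha))

def sup_along_path(f, g, J, x0, s, alpha, n_steps):
    """Maximum of J over the Euler-discretized alpha-path on [0, s]."""
    h = s / n_steps
    c = inv_std_normal(alpha)
    x, H = x0, J(x0)
    for i in range(n_steps):
        x = x + f(i * h, x) * h + abs(g(i * h, x)) * c * h
        H = max(H, J(x))
    return H

def hitting_time_distribution(f, g, J, x0, z, s, eps=1e-4, n_steps=1000):
    """Approximate M{tau_z <= s} for increasing J and a level z > J(x0)."""
    if sup_along_path(f, g, J, x0, s, eps, n_steps) >= z:        # Step 2
        return 1.0 - eps
    if sup_along_path(f, g, J, x0, s, 1.0 - eps, n_steps) < z:   # Step 3
        return eps
    a1, a2 = eps, 1.0 - eps                                       # Step 4
    while True:
        a = 0.5 * (a1 + a2)                                       # Step 5
        if sup_along_path(f, g, J, x0, s, a, n_steps) < z:        # Step 6
            a1 = a
        else:
            a2 = a
        if abs(a2 - a1) <= eps:                                   # Step 7
            return 1.0 - a

# dX_t = dC_t, X_0 = 0, J(x) = x, level z = 1, horizon s = 1.
psi = hitting_time_distribution(lambda t, x: 0.0, lambda t, x: 1.0,
                                lambda x: x, 0.0, 1.0, 1.0)
```

For this test case the α-path is Φ⁻¹(α)t, so the threshold α is the value of the standard normal uncertainty distribution at 1, and `psi` can be compared against one minus that value.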

Theorem 14.

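Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$ with an initial value X 0. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then given a strictly increasing function J(x) and a level z > J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Psi(s) = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$

Proof.

Since the event {τ z ≤ s} is equivalent to the event

$$\left\{\sup_{0\le t\le s} J(X_t) \ge z\right\}$$

provided z > J(X 0), it follows from Theorem 6 that

$$\mathcal{M}\{\tau_z \le s\} = 1 - \mathcal{M}\left\{\sup_{0\le t\le s} J(X_t) < z\right\} = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$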

This completes the proof. □

Theorem 15.

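Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

with an initial value X 0, respectively. Given a strictly increasing function J(x) and a level z < J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Upsilon(s) = \sup\left\{\alpha \in (0,1) \,\Big|\, \inf_{0\le t\le s} J(X_t^{\alpha}) \le z\right\}.$$

Proof.

Write

$$\alpha_0 = \sup\left\{\alpha \in (0,1) \,\Big|\, \inf_{0\le t\le s} J(X_t^{\alpha}) \le z\right\}.$$

Then

$$\{\tau_z \le s\} = \left\{\inf_{0\le t\le s} J(X_t) \le z\right\} \supset \{X_t \le X_t^{\alpha_0},\ \forall t\}, \qquad \{\tau_z > s\} = \left\{\inf_{0\le t\le s} J(X_t) > z\right\} \supset \{X_t > X_t^{\alpha_0},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\{\tau_z \le s\} \ge \mathcal{M}\{X_t \le X_t^{\alpha_0},\ \forall t\} = \alpha_0, \qquad \mathcal{M}\{\tau_z > s\} \ge \mathcal{M}\{X_t > X_t^{\alpha_0},\ \forall t\} = 1-\alpha_0.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\{\tau_z \le s\} = \alpha_0.$$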

This completes the proof. □

For a strictly increasing function J(x), in order to calculate the uncertainty distribution ϒ(s) of the first hitting time τ z that J(X t ) reaches z when J(X 0) > z, we design a numerical method as below.

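Step 1: Fix ε as the accuracy, and fix h as the step length. Set N = s/h.

Step 2: Employ the recursion formula

$$X_{i+1}^{1-\varepsilon} = X_i^{1-\varepsilon} + f(t_i,X_i^{1-\varepsilon})h + |g(t_i,X_i^{1-\varepsilon})|\,\Phi^{-1}(1-\varepsilon)h$$

for N times, and calculate $H = \min_{0\le i\le N} J(X_i^{1-\varepsilon})$. If

$$H \le z,$$

then return 1 − ε and stop.

Step 3: Employ the recursion formula

$$X_{i+1}^{\varepsilon} = X_i^{\varepsilon} + f(t_i,X_i^{\varepsilon})h + |g(t_i,X_i^{\varepsilon})|\,\Phi^{-1}(\varepsilon)h$$

for N times, and calculate $H = \min_{0\le i\le N} J(X_i^{\varepsilon})$. If

$$H > z,$$

then return ε and stop.

Step 4: Set α 1 = ε, α 2 = 1 − ε.

Step 5: Set α = (α 1 + α 2)/2.

Step 6: Employ the recursion formula

$$X_{i+1}^{\alpha} = X_i^{\alpha} + f(t_i,X_i^{\alpha})h + |g(t_i,X_i^{\alpha})|\,\Phi^{-1}(\alpha)h$$

for N times, and calculate $H = \min_{0\le i\le N} J(X_i^{\alpha})$. If

$$H \le z,$$

then set α 1 = α. Otherwise, set α 2 = α.

Step 7: If |α 2 − α 1| ≤ ε, then return α and stop. Otherwise, go to Step 5.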

Theorem 16.

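Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$ with an initial value X 0. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then given a strictly increasing function J(x) and a level z < J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Upsilon(s) = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$

Proof.

Since the event {τ z ≤ s} is equivalent to the event

$$\left\{\inf_{0\le t\le s} J(X_t) \le z\right\}$$

provided z < J(X 0), it follows from Theorem 10 that

$$\mathcal{M}\{\tau_z \le s\} = \mathcal{M}\left\{\inf_{0\le t\le s} J(X_t) \le z\right\} = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$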

This completes the proof. □
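When the distribution Φ_t of the solution is known in closed form, the results of Theorems 14 and 16 can be evaluated directly on a time grid. The sketch below does this for the linear equation dX_t = a dt + b dC_t with J(x) = x, whose solution X_t = X_0 + at + bC_t is a normal uncertain variable with expected value X_0 + at and standard deviation |b|t. It assumes the closed forms 1 − inf Φ_t(z) for a level above X_0 and sup Φ_t(z) for a level below X_0; the function names and the grid size are hypothetical choices.

```python
import math

def normal_distribution(x, e, sigma):
    """Uncertainty distribution of a normal uncertain variable N(e, sigma) at x."""
    u = math.pi * (e - x) / (math.sqrt(3.0) * sigma)
    if u > 700.0:          # exp(u) would overflow; the value is numerically 0
        return 0.0
    return 1.0 / (1.0 + math.exp(u))

def hitting_distribution_linear(a, b, x0, z, s, n_grid=1000):
    """M{tau_z <= s} for dX_t = a dt + b dC_t (b != 0) and J(x) = x, on a time grid."""
    # t = 0 is skipped: X_0 is a constant and does not affect the inf or sup.
    values = [normal_distribution(z, x0 + a * t, abs(b) * t)
              for t in (s * i / n_grid for i in range(1, n_grid + 1))]
    if z > x0:
        return 1.0 - min(values)   # level above the initial value
    return max(values)             # level below the initial value

upper = hitting_distribution_linear(0.0, 1.0, 0.0, 1.0, 1.0)
lower = hitting_distribution_linear(0.0, 1.0, 0.0, -1.0, 1.0)
```

With a = 0 the problem is symmetric about X_0, so the values for the levels z = 1 and z = −1 should coincide, which is a convenient consistency check between the two cases.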

First hitting time of strictly decreasing function of the solution

Theorem 17.

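Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

with an initial value X 0, respectively. Given a strictly decreasing function J(x), and a level z > J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Psi(s) = \sup\left\{\alpha \in (0,1) \,\Big|\, \sup_{0\le t\le s} J(X_t^{\alpha}) \ge z\right\}.$$

Proof.

Write

$$\alpha_0 = \sup\left\{\alpha \in (0,1) \,\Big|\, \sup_{0\le t\le s} J(X_t^{\alpha}) \ge z\right\}.$$

Since J(x) is a strictly decreasing function, we have

$$\{\tau_z \le s\} = \left\{\sup_{0\le t\le s} J(X_t) \ge z\right\} \supset \{X_t \le X_t^{\alpha_0},\ \forall t\}, \qquad \{\tau_z > s\} = \left\{\sup_{0\le t\le s} J(X_t) < z\right\} \supset \{X_t > X_t^{\alpha_0},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\{\tau_z \le s\} \ge \mathcal{M}\{X_t \le X_t^{\alpha_0},\ \forall t\} = \alpha_0, \qquad \mathcal{M}\{\tau_z > s\} \ge \mathcal{M}\{X_t > X_t^{\alpha_0},\ \forall t\} = 1-\alpha_0.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\{\tau_z \le s\} = \alpha_0.$$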

This completes the proof. □

Theorem 18.

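Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$ with an initial value X 0. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then given a strictly decreasing function J(x) and a level z > J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Psi(s) = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$

Proof.

Since the event {τ z ≤ s} is equivalent to the event

$$\left\{\inf_{0\le t\le s} X_t \le J^{-1}(z)\right\}$$

provided z > J(X 0), it follows from Theorem 10, applied to the identity function, that

$$\mathcal{M}\{\tau_z \le s\} = \mathcal{M}\left\{\inf_{0\le t\le s} X_t \le J^{-1}(z)\right\} = \sup_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$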

This completes the proof. □

Theorem 19.

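Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$$

with an initial value X 0, respectively. Given a strictly decreasing function J(x) and a level z < J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Upsilon(s) = 1 - \inf\left\{\alpha \in (0,1) \,\Big|\, \inf_{0\le t\le s} J(X_t^{\alpha}) \le z\right\}.$$

Proof.

Write

$$\alpha_0 = \inf\left\{\alpha \in (0,1) \,\Big|\, \inf_{0\le t\le s} J(X_t^{\alpha}) \le z\right\}.$$

Then

$$\{\tau_z \le s\} = \left\{\inf_{0\le t\le s} J(X_t) \le z\right\} \supset \{X_t \ge X_t^{\alpha_0},\ \forall t\}, \qquad \{\tau_z > s\} = \left\{\inf_{0\le t\le s} J(X_t) > z\right\} \supset \{X_t < X_t^{\alpha_0},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\{\tau_z \le s\} \ge \mathcal{M}\{X_t \ge X_t^{\alpha_0},\ \forall t\} = 1-\alpha_0, \qquad \mathcal{M}\{\tau_z > s\} \ge \mathcal{M}\{X_t < X_t^{\alpha_0},\ \forall t\} = \alpha_0.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\{\tau_z \le s\} = 1-\alpha_0.$$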

This completes the proof. □

Theorem 20.

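Let $X_t$ be the solution of an uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$ with an initial value X 0. Assume $X_t$ has an uncertainty distribution $\Phi_t(x)$ at each time t. Then given a strictly decreasing function J(x) and a level z < J(X 0), the first hitting time τ z that J(X t ) reaches z has an uncertainty distribution

$$\Upsilon(s) = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$

Proof.

Since the event {τ z ≤ s} is equivalent to the event

$$\left\{\sup_{0\le t\le s} X_t \ge J^{-1}(z)\right\}$$

provided z < J(X 0), it follows from Theorem 6, applied to the identity function, that

$$\mathcal{M}\{\tau_z \le s\} = 1 - \mathcal{M}\left\{\sup_{0\le t\le s} X_t < J^{-1}(z)\right\} = 1 - \inf_{0\le t\le s}\Phi_t\bigl(J^{-1}(z)\bigr).$$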

This completes the proof. □

Integral

In this section, we study the integral of the solution of an uncertain differential equation, and give its inverse uncertainty distribution. Besides, we design a numerical method to obtain the inverse uncertainty distribution.

Theorem 21.

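Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Assume J(x) is a strictly increasing function. Then the integral

$$\int_0^s J(X_t)\,dt$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \int_0^s J(X_t^{\alpha})\,dt.$$

Proof.

Since J(x) is a strictly increasing function, we have

$$\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{\alpha})\,dt\right\} \supset \{X_t \le X_t^{\alpha},\ \forall t\}$$

and

$$\left\{\int_0^s J(X_t)\,dt > \int_0^s J(X_t^{\alpha})\,dt\right\} \supset \{X_t > X_t^{\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{\alpha})\,dt\right\} \ge \mathcal{M}\{X_t \le X_t^{\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt > \int_0^s J(X_t^{\alpha})\,dt\right\} \ge \mathcal{M}\{X_t > X_t^{\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{\alpha})\,dt\right\} = \alpha.$$

In other words, the integral

$$\int_0^s J(X_t)\,dt$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \int_0^s J(X_t^{\alpha})\,dt.$$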

□

Example 1.

Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation $dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t$, respectively. Consider a function h(t,x) = exp(−r t) x. Since h(t,x) is strictly increasing with respect to x, the integral

$$\int_0^s \exp(-rt)\,X_t\,dt$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \int_0^s \exp(-rt)\,X_t^{\alpha}\,dt.$$

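When J(x) is a strictly increasing function, in order to calculate the inverse uncertainty distribution of the integral of J(X t ), we design a numerical method as below.

Step 1: Fix α in (0,1), and fix h as the step length. Set i = 0, N = s/h, and $X_0^{\alpha} = X_0$.

Step 2: Employ the recursion formula

$$X_{i+1}^{\alpha} = X_i^{\alpha} + f(t_i,X_i^{\alpha})h + |g(t_i,X_i^{\alpha})|\,\Phi^{-1}(\alpha)h, \qquad t_i = ih,$$

and calculate $X_{i+1}^{\alpha}$ and $J(X_{i+1}^{\alpha})$.

Step 3: Set i ← i + 1.

Step 4: Repeat Step 2 and Step 3 for N times.

Step 5: The inverse uncertainty distribution of

$$\int_0^s J(X_t)\,dt$$

is determined by

$$\Psi_s^{-1}(\alpha) = \sum_{i=1}^{N} J(X_i^{\alpha})\,h.$$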
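A minimal sketch of the integral method, under the assumption that the integral along the α-path is approximated by a right-endpoint Riemann sum over the Euler grid; a trapezoidal rule would work equally well. The names `inv_std_normal` and `integral_inverse_distribution` are hypothetical.

```python
import math

def inv_std_normal(alpha):
    """Inverse uncertainty distribution of a standard normal uncertain variable."""
    return math.sqrt(3.0) / math.pi * math.log(alpha / (1.0 - alpha))

def integral_inverse_distribution(f, g, J, x0, s, alpha, n_steps=1000):
    """Approximate the inverse distribution at alpha of the integral of J(X_t) over [0, s]."""
    h = s / n_steps
    c = inv_std_normal(alpha)
    x = x0
    total = 0.0
    for i in range(n_steps):
        t = i * h
        x = x + f(t, x) * h + abs(g(t, x)) * c * h   # Euler step on the alpha-path
        total += J(x) * h                            # right-endpoint Riemann sum
    return total

# dX_t = dC_t, X_0 = 0, J(x) = x: the alpha-path is Phi^{-1}(alpha) * t,
# so the exact integral over [0, 1] is Phi^{-1}(alpha) / 2.
approx_integral = integral_inverse_distribution(
    lambda t, x: 0.0, lambda t, x: 1.0, lambda x: x, 0.0, 1.0, 0.9)
```

Halving the step length should roughly halve the discretization error of this first-order scheme, which is an easy way to check convergence on a new equation.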

Theorem 22.

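Let $X_t$ and $X_t^{\alpha}$ be the solution and α-path of the uncertain differential equation

$$dX_t = f(t,X_t)\,dt + g(t,X_t)\,dC_t,$$

respectively. Assume J(x) is a strictly decreasing function. Then the integral

$$\int_0^s J(X_t)\,dt$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \int_0^s J(X_t^{1-\alpha})\,dt.$$

Proof.

Since J(x) is a strictly decreasing function, we have

$$\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{1-\alpha})\,dt\right\} \supset \{X_t \ge X_t^{1-\alpha},\ \forall t\}$$

and

$$\left\{\int_0^s J(X_t)\,dt > \int_0^s J(X_t^{1-\alpha})\,dt\right\} \supset \{X_t < X_t^{1-\alpha},\ \forall t\}.$$

By Theorem 4 and the monotonicity of uncertain measure, we have

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{1-\alpha})\,dt\right\} \ge \mathcal{M}\{X_t \ge X_t^{1-\alpha},\ \forall t\} = \alpha$$

and

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt > \int_0^s J(X_t^{1-\alpha})\,dt\right\} \ge \mathcal{M}\{X_t < X_t^{1-\alpha},\ \forall t\} = 1-\alpha.$$

It follows from the duality axiom of uncertain measure that

$$\mathcal{M}\left\{\int_0^s J(X_t)\,dt \le \int_0^s J(X_t^{1-\alpha})\,dt\right\} = \alpha.$$

In other words, the integral

$$\int_0^s J(X_t)\,dt$$

has an inverse uncertainty distribution

$$\Psi_s^{-1}(\alpha) = \int_0^s J(X_t^{1-\alpha})\,dt.$$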

□

Conclusions

This paper considered the solution of an uncertain differential equation, and gave the uncertainty distributions of its extreme values, first hitting time, and integral. In addition, we designed some numerical methods to obtain the uncertainty distributions.

References

Kahneman D, Tversky A: Prospect theory: An analysis of decision under risk. Econometrica 1979,47(2):263–292. 10.2307/1914185

Liu B: Why is there a need for uncertainty theory. J. Uncertain Syst 2012,6(1):3–10.

Liu B: Uncertainty Theory. Springer, Berlin; 2007.

Liu B: Uncertainty Theory: A Branch of Mathematics for Modeling Human Uncertainty. Springer, Berlin; 2010.

Liu B: Theory and Practice of Uncertain Programming. Springer, Berlin; 2009.

Liu B: Uncertain risk analysis and uncertain reliability analysis. J. Uncertain Syst 2010,4(3):163–170.

Liu B: Uncertain set theory and uncertain inference rule with application to uncertain control. J. Uncertain Syst 2010,4(2):83–98.

Liu B: Uncertain logic for modeling human language. J. Uncertain Syst 2011,5(1):3–20.

Liu B: Fuzzy process, hybrid process and uncertain process. J. Uncertain Syst 2008,2(1):3–16.

Liu B: Some research problems in uncertainty theory. J. Uncertain Syst 2009,3(1):3–10.

Liu B, Yao K: Uncertain integral with respect to multiple canonical processes. J. Uncertain Syst 2012,6(4):249–254.

Chen X, Ralescu DA: Liu process and uncertain calculus. J. Uncertainty Anal. Appl 2013., 1: Article 3

Yao K: Uncertain calculus with renewal process. Fuzzy Optimization and Decis. Mak 2012,11(3):285–297.

Chen X, Liu B: Existence and uniqueness theorem for uncertain differential equations. Fuzzy Optimization and Decis. Mak 2010,9(1):69–81.

Liu YH: An analytic method for solving uncertain differential equations. J. Uncertain Syst 2012,6(4):243–248.

Yao K: A type of nonlinear uncertain differential equations with analytic solution. 2013.

Yao K, Chen X: A numerical method for solving uncertain differential equations. J. Intell. and Fuzzy Syst. 2013,25(3):825–832. 10.3233/IFS-120688

Barbacioru I: Uncertainty functional differential equations for finance. Surv. Math. Appl 2010, 5: 275–284.

Liu HJ, Fei WY: Neutral uncertain delay differential equations. Inf.: An Int. Interdiscip. J 2013,16(2):1225–1232.

Ge X, Zhu Y: Existence and uniqueness theorem for uncertain delay differential equations. J. Comput. Inf. Syst 2012,8(20):8341–8347.

Ge X, Zhu Y: A necessary condition of optimality for uncertain optimal control problem. Fuzzy Optimization and Decis. Mak 2013,12(1):41–51.

Liu B: Toward uncertain finance theory. J. Uncertainty Anal. Appl 2013., 1: Article 1

Chen X: American option pricing formula for uncertain financial market. Int. J. Oper. Res 2011,8(2):32–37.

Peng J, Yao K: A new option pricing model for stocks in uncertainty markets. Int. J. Oper. Res 2011,8(2):18–26.

Chen X, Liu YH, Ralescu DA: Uncertain stock model with periodic dividends. Fuzzy Optimization and Decis. Mak 2013b,12(1):111–123.

Chen X, Gao J: Uncertain term structure model of interest rate. Soft Computing 2013,17(4):597–604. 10.1007/s00500-012-0927-0

Liu YH, Ralescu DA, Chen X: Uncertain currency model and currency option pricing. International Journal of Intelligent Systems, to be published

Zhu Y: Uncertain optimal control with application to a portfolio selection model. Cybern. Syst 2010,41(7):535–547. 10.1080/01969722.2010.511552

Gao Y: Existence and uniqueness theorem on uncertain differential equations with local Lipschitz condition. J. Uncertain Syst 2012,6(3):223–232.

Yao K, Gao J, Gao Y: Some stability theorems of uncertain differential equation. Fuzzy Optimization and Decis. Mak 2013,12(1):3–13.

Liu YH, Ha MH: Expected value of function of uncertain variables. J. Uncertain Syst 2010,4(3):181–186.

Liu B: Extreme value theorems of uncertain process with application to insurance risk model. Soft Comput 2013,17(4):549–556. 10.1007/s00500-012-0930-5

Acknowledgements

This work was supported by the National Natural Science Foundation of China (Grant No. 61273044).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Yao, K. Extreme values and integral of solution of uncertain differential equation. J. Uncertain. Anal. Appl. 1, 2 (2013). https://doi.org/10.1186/2195-5468-1-2

DOI: https://doi.org/10.1186/2195-5468-1-2