Abstract

Background

Although allied health is considered to be one 'unit' of healthcare providers, it comprises a range of disciplines which have different training and ways of thinking, and different tasks and methods of patient care. Very few empirical studies on evidence-based practice (EBP) have directly compared allied health professionals. The objective of this study was to examine the impact of a structured model of journal club (JC), known as iCAHE (International Centre for Allied Health Evidence) JC, on the EBP knowledge, skills and behaviour of the different allied health disciplines.

Methods

A pilot, pre-post study design using maximum variation sampling was undertaken. Recruitment was conducted in groups and practitioners such as physiotherapists, occupational therapists, speech pathologists, social workers, psychologists, nutritionists/dieticians and podiatrists were invited to participate. All participating groups received the iCAHE JC for six months. Quantitative data using the Adapted Fresno Test (McCluskey & Bishop) and Evidence-based Practice Questionnaire (Upton & Upton) were collected prior to the implementation of the JC, with follow-up measurements six months later. Mean percentage change and confidence intervals were calculated to compare baseline and post JC scores for all outcome measures.

Results

The results of this study demonstrate variability in EBP outcomes across disciplines after receiving the iCAHE JC. Only physiotherapists showed statistically significant improvements in all outcomes; speech pathologists and occupational therapists demonstrated a statistically significant increase in knowledge but not in attitude or evidence uptake; social workers and dieticians/nutritionists showed statistically significant positive changes in their knowledge and evidence uptake but not in attitude.

Conclusions

There is evidence to suggest that a JC such as the iCAHE model is an effective method for improving the EBP knowledge and skills of allied health practitioners. It may be used as a single intervention to facilitate evidence uptake in some allied health disciplines but may need to be integrated with other strategies to influence practice behaviour in other practitioners. An in-depth analysis of other factors (e.g. individual, contextual, organisational), or of the relative contribution of these variables, is required to better understand the determinants of evidence uptake in allied health.

Background

This paper presents the findings of a pre-post study which examined the impact of a structured model of journal club on the knowledge, skills and behaviour of allied health practitioners relevant to evidence-based practice (EBP).

Allied health perspectives on uptake of research evidence into practice

The literature suggests that allied health practitioners (AHPs) in general have positive attitudes toward EBP, and believe their clinical decisions should be supported by research evidence[1–3]. However, despite their recognition of its importance and value, the uptake of research evidence in clinical practice remains limited[1, 4, 5]. For example, a survey of paediatric occupational therapists and physiotherapists revealed wide variations and gaps between their actual practice and best practice guidelines in the treatment of cerebral palsy[6]. In another study which examined the current practices of occupational therapists, physiotherapists and speech pathologists, best practices in post-stroke rehabilitation were not routinely applied[7]. For many AHPs, the move towards regularly utilising evidence in practice is still an ongoing challenge.

Previous research outlines differences between and within allied health disciplines in terms of their knowledge and skills relevant to EBP[8]. Their learning needs vary according to their profession and prior research experience[9, 10]. There are also considerable differences in terms of access to evidence sources and perceived support from the organisation/institution[9, 11]. This body of evidence suggests that there is no ‘one-size-fits-all’ strategy that is likely to be effective across all disciplines. There needs to be recognition of the differences within and between allied health practices which require different approaches in order to influence practice behaviour.

Journal club as a medium to bridge the gap between research and practice

A journal club (JC) is a group of individuals who regularly meet to discuss current articles from scientific journals[12]. There is evidence to suggest that JCs are one approach which can be used to bridge the gap between research and clinical practice[13–17]. They can be used to provide structured time for reading and overcome difficulties associated with understanding research findings, which have both been reported as barriers to implementing evidence into practice[15, 18].

Journal clubs have been reported in different health care settings but mostly for medical and nursing professions[12, 19, 20]. In medicine, the literature reports significant improvements not only in JC participants’ reading habits[12, 21, 22] but also in their knowledge of biostatistics, research design and critical appraisal[23–27]. In nursing, on the other hand, JC participation led to improvements in critical appraisal skills of members, and better social networking among staff[28–31]. There is little information about the effectiveness of JCs in allied health.

An innovative model of journal club – iCAHE journal club

In traditional models of JC, the presenter randomly selects an article for discussion and meetings generally consist of summarising the article based on the author’s results and conclusions[32]. Furthermore, most presenters do not examine the quality of the articles because they lack critical appraisal skills. As a result, the information obtained from the article is rarely reflected upon and any learnings are seldom processed for clinical use[32]. To address these issues, a structured, innovative model of JC was developed by the International Centre for Allied Health Evidence (iCAHE). A detailed description of the iCAHE model of JC, including its development and structure, has been reported elsewhere[33]. Briefly, the iCAHE JC aims to provide a sustainable model of JC to keep AHPs informed of the current best evidence and ultimately promote research evidence uptake. This model is based on the principles of Adult Learning or Andragogical Theory, and integral to it is the nomination of facilitators who act as the point of contact between iCAHE and AHPs from each JC. The iCAHE JC utilises a collaborative approach, where researchers from iCAHE and AHPs from JCs share responsibilities, as shown in Table 1. The current format of the iCAHE JC provides a standardised structure for conducting JCs, and the collaboration between researchers and practitioners from JCs addresses issues associated with lack of skills in appraisal, which makes it preferable to traditional models of JCs[34].

The objective of this study was to examine the impact of the iCAHE JC on the EBP knowledge, skills and behaviour of the different allied health disciplines.

Methods

Ethics

This study was approved by the University of South Australia Human Research Ethics Committee and the Human Research Ethics Committee (Tasmania) Network. Written informed consent was obtained from all participants.

Research design

A single arm, pre-post study design, combining quantitative and qualitative approaches, was used in the study. Only the quantitative findings are reported in this paper.

Sampling and participants

As this was a pilot study, no formal sample size calculation was performed. Sampling, however, aimed for maximum variation in the JCs so that a diverse range of AHPs could be studied. The objective of maximum variation sampling is to select a sample that is more representative than a random sample when a small number of participants are to be selected, which was the case in the current study[35]. Practitioners who belong to the category of allied health therapy[36] were invited to participate, including physiotherapists, occupational therapists, speech pathologists, social workers, psychologists, nutritionists/dieticians and podiatrists. To avoid sample contamination, recruitment was undertaken mainly in Tasmania, Australia, where iCAHE had not established any JCs. Recruitment of participants was undertaken in groups rather than as individuals, and therefore allied health managers were approached for nomination of groups to participate in this study. Groups were eligible to join if they satisfied the following criteria: (1) work in a health care facility in Australia, (2) agree to meet once a month for six months, and (3) have two committed facilitators. Individual practitioners were eligible to participate if they practised in Australia and were part of a group that agreed to participate in the study. Groups or individual practitioners who were previously involved in an iCAHE JC were excluded from the study.

Intervention – iCAHE model of journal club

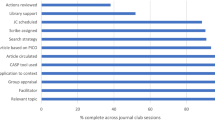

The intervention consisted of six monthly journal club sessions using the iCAHE model, each lasting an hour. All participating groups nominated two facilitators who were required to attend a one-off training workshop by iCAHE in aspects of EBP such as formulating clinical questions, developing a search strategy, critical appraisal, evidence implementation and evaluation. The facilitators were, in turn, instructed to train their members prior to the first JC session. Each round of JC involved the steps described in Figure 1.

For every meeting the facilitator led the discussion and provided members the opportunity to discuss key findings of the study, its methodological quality and issues pertaining to the implementation of research evidence to clinical practice. Self-help kits on statistics were provided by iCAHE when necessary. Every discussion ended with the resolution of a clinical problem and with a view towards utilising the best available evidence in making clinical decisions and evaluating its effect on practice and health care outcomes. Regular contact with iCAHE was maintained throughout the study.

Data collection and analysis

Quantitative data were collected prior to the implementation of the JC, with follow-up measurements six months later. Measurements comprised the following questionnaires:

- Objective knowledge was assessed using the Adapted Fresno Test (AFT)[37]. The AFT is a seven-item instrument for assessing knowledge and skills in the major domains of EBP, such as formulating clinical questions, searching for and critically appraising research evidence. The test has acceptable validity, inter-rater reliability and internal consistency[37].

- EBP uptake was measured using the questionnaire developed by Upton and Upton[8]. EBP uptake referred to the extent to which the key steps involved in EBP (formulating a clinical question, searching for the most appropriate evidence to address the question, critically appraising the retrieved evidence, incorporating the evidence into a strategy for action, and evaluating the effects of any decisions and action taken) were integrated into day-to-day practice. This questionnaire has been reported to have adequate levels of validity and reliability[8]. In addition to EBP uptake, this questionnaire measured attitude to, and perceived knowledge about, EBP.

The participants were asked to individually complete the paper and pencil version or electronic version of the questionnaires, prior to the first JC at a time convenient for them.

All analyses were performed using SAS version 9.3. An intention-to-treat analysis was applied, which regarded all non-completers as unchanged. In other words, for participants with missing post-intervention data, the baseline measurement (i.e. last observation) was carried forward as their post intervention measurement[38]. Data for baseline and post JC outcomes were presented as means and standard deviations. Mean percentage change and confidence intervals were calculated to compare baseline and post JC scores for all outcome measures. Although allied health is considered to be one 'unit' of healthcare providers for organisational purposes, it comprises a range of disciplines which have different training and ways of thinking, and different tasks and methods of patient care. Therefore the data were analysed per discipline rather than as an allied health group because the authors were interested in whether there were discipline-specific differences in responses to JC. The one-way analysis of variance (ANOVA) was used to analyse baseline differences across allied health disciplines[39, 40]. A statistical test with a p value<0.05 was considered statistically significant.
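The baseline-carried-forward rule described above can be sketched in a few lines. This is only an illustration in Python, not the authors' SAS code; the function name `carry_baseline_forward` and the use of `None` to mark a missing follow-up score are assumptions made for the example.

```python
from typing import List, Optional, Sequence


def carry_baseline_forward(
    baseline: Sequence[float], post: Sequence[Optional[float]]
) -> List[float]:
    """Return post-intervention scores with missing values imputed.

    Hypothetical helper: a missing follow-up score (None) is replaced by the
    same participant's baseline score, so non-completers count as unchanged.
    """
    if len(baseline) != len(post):
        raise ValueError("baseline and post must have one entry per participant")
    return [b if p is None else p for b, p in zip(baseline, post)]


# Illustrative (made-up) scores: the second participant did not complete follow-up.
imputed = carry_baseline_forward([10.0, 20.0, 30.0], [15.0, None, 33.0])
```

Because non-completers contribute a change of zero, this rule tends to pull the estimated improvement toward zero rather than inflate it.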

Results

Characteristics of the sample

Of the fourteen groups of AHPs nominated by the allied health managers, only twelve groups (i.e. journal clubs) agreed to participate in the study. Heavy clinical workload was the reason provided by the two groups who did not participate. Table 2 presents the demographic characteristics of the participants. A total of 93 AHPs, including speech pathologists (SPs), physiotherapists (PTs), social workers (SWs), occupational therapists (OTs) and dieticians/nutritionists (DNs), participated in the study. The majority of participants worked full time in an acute hospital setting, held undergraduate degrees, and had more than 10 years of clinical experience. Fewer than half were members of professional associations.

Baseline scores

At baseline, there were statistically significant differences in objective knowledge (as measured by AFT) when allied health disciplines were compared (p = 0.03). The PTs showed the highest score followed by DNs, SPs, SWs and OTs. The attitude scores were also statistically different across disciplines (p = 0.02); SPs showed the highest attitude score, followed by OTs, DNs, SWs and PTs. In terms of self-reported (i.e. perceived) knowledge, scores were not statistically different across disciplines (p = 0.42). Similarly for EBP uptake, no statistically significant difference was noted when disciplines were compared (p = 0.13).
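For readers unfamiliar with the one-way ANOVA used for these baseline comparisons, the following is a minimal sketch of how the F statistic is formed from between-group and within-group variability. It is written in plain Python for illustration only (the study used SAS), the function name `one_way_anova_f` is hypothetical, and the scores are made up. Converting F to a p value additionally requires the F distribution, for example via `scipy.stats.f_oneway`.

```python
from statistics import mean
from typing import List, Sequence, Tuple


def one_way_anova_f(groups: Sequence[Sequence[float]]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way ANOVA."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    if k < 2 or n <= k:
        raise ValueError("need at least two groups and more observations than groups")
    grand_mean = mean(x for g in groups for x in g)
    group_means: List[float] = [mean(g) for g in groups]
    # Variability of the group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means))
    # Variability of individual scores around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, group_means) for x in g)
    if ss_within == 0:
        raise ValueError("no within-group variability; F is undefined")
    df_between, df_within = k - 1, n - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Made-up baseline scores for three hypothetical disciplines.
f_stat, df_b, df_w = one_way_anova_f([[1, 2, 3], [2, 3, 4], [5, 6, 7]])
```

A large F indicates that the discipline means differ by more than would be expected from the spread of scores within each discipline.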

As a result of significant differences in baseline data, change in scores was interpreted as percentage change from baseline, calculated as ((Post-JC score – Baseline score) / Baseline score) × 100. This strategy standardised change relative to the baseline scores.
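As a worked illustration of the percentage-change calculation, the sketch below computes each participant's change relative to baseline and then a mean with a confidence interval. Two points are assumptions, since the paper does not give these details: that the change is computed per participant before averaging, and that a normal-approximation interval is used. The function names and data are hypothetical.

```python
from math import sqrt
from statistics import NormalDist, mean, stdev
from typing import Sequence, Tuple


def percentage_change(baseline: float, post: float) -> float:
    """Change from baseline, expressed as a percentage of the baseline score."""
    if baseline == 0:
        raise ValueError("percentage change is undefined for a zero baseline")
    return (post - baseline) / baseline * 100.0


def mean_percentage_change_ci(
    baselines: Sequence[float], posts: Sequence[float], level: float = 0.95
) -> Tuple[float, float, float]:
    """Return (mean change, lower bound, upper bound).

    Assumption: a normal-approximation interval; a t-based interval would be
    wider and may be preferable for small groups.
    """
    changes = [percentage_change(b, p) for b, p in zip(baselines, posts)]
    if len(changes) < 2:
        raise ValueError("need at least two participants to estimate an interval")
    centre = mean(changes)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    half_width = z * stdev(changes) / sqrt(len(changes))
    return centre, centre - half_width, centre + half_width


# Made-up scores for three participants.
centre, lower, upper = mean_percentage_change_ci([10, 20, 40], [11, 24, 50])
```

Expressing change relative to baseline means that a participant moving from 40 to 50 (25%) and one moving from 20 to 25 (25%) are treated as having improved equally, which is why this helps when groups start from different baseline scores.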

Table 3 shows the pre and post JC scores and the mean percentage change per outcome measure for every discipline.

Change in scores

Figure 2 shows the pre-post scores for all outcomes in every discipline.

Following JC exposure, the AFT scores improved significantly in all disciplines. The PTs obtained the greatest change in score, followed by OTs, SWs, SPs, and DNs. There were also significant improvements in self-reported knowledge in all disciplines; the greatest change was achieved by PTs, followed by OTs, DNs, SPs and SWs. No significant improvement in attitude was observed in any discipline except PTs. Post-JC, statistically significant improvements in EBP uptake were found for PTs, SWs and DNs but not for SPs and OTs. Table 4 summarises significant findings for all outcome measures across disciplines.

Discussion

The majority of EBP studies in allied health have been conducted within individual disciplines and very few empirical studies have directly compared allied health professionals. The aim of the current study was to examine the effect of an innovative model of JC, the iCAHE model, on the EBP knowledge, attitude and behaviour of the different allied health disciplines. The results of this study demonstrated variability in EBP outcomes across disciplines after receiving the same intervention. Only the PTs showed improvements in all outcomes; SPs and OTs demonstrated an increase in both objective and perceived knowledge but not in attitude or EBP uptake; SWs and DNs showed positive changes in their objective and perceived knowledge and EBP uptake but not in attitude. To the authors’ knowledge, this is the first study to compare EBP outcomes across allied health disciplines following an educational intervention such as the iCAHE JC.

Based on the AFT and self-reported questionnaire, there were significant improvements in objective and perceived knowledge following exposure to the iCAHE JC, irrespective of the discipline. This finding is consistent with the results of recent systematic reviews which showed evidence that JCs as a teaching method can increase the knowledge and confidence of health practitioners[41, 42]. The literature proposes that for JCs to be successful there should be elements of adult learning principles, clearly set goals, regular meetings, use of a structured critical appraisal tool, mentoring and training, and distribution of the journal article before the meeting[41, 43, 44]. The iCAHE model was developed in accordance with these guidelines, which could explain the satisfactory improvements in EBP knowledge and skills observed in the JC participants. There appears to be another key component in the iCAHE model which potentially led to the positive outcomes found in this study: the partnership between researchers and AHPs from the JCs, which was a unique feature of the iCAHE model. This partnership ensured that the tasks of searching, identifying and appraising relevant literature, which have all been reported as barriers to research evidence uptake, were addressed by the involvement of researchers. Therefore, the iCAHE model of JC not only served as a medium to educate AHPs in the key processes involved in EBP, but also addressed the barriers associated with implementing evidence into practice.

This study found that AHPs vary in their attitude and behaviour outcomes in response to an educational intervention aimed at promoting EBP. The SPs, PTs, OTs, SWs and DNs who participated in the iCAHE JCs fall under the same umbrella, ‘allied health therapy.’ In 2009, a model for Australian allied health was reported by Turnbull et al., which clustered the different allied health disciplines into ‘allied health therapy’, ‘allied health diagnostic and technical’, ‘scientific services’, and ‘complementary services’. This model reflects the core tasks, training, competencies and consumer focus of the disciplines[36]. The participants of this study, even though they are grouped under the same category, responded differently to the iCAHE JC. The SWs and DNs showed positive changes in all EBP outcomes except for attitude while the SPs and OTs improved only in their knowledge scores. Only the PTs demonstrated significant improvements in all outcomes. There are obvious differences across allied health disciplines which can explain the variability in their outcomes. The academic and clinical training required varies considerably across professions. There are clear distinctions regarding their philosophy, scope of practice, educational standards, and competency requirements. Differences in learning styles of allied health disciplines have also been widely reported in the literature[45–47]. The research or evidence base and availability of EBP resources may also vary across disciplines[9, 11]. Therefore, it is not surprising to find that while all participants were under the same classification (i.e. allied health practitioners), they showed differences in their responses to an identical intervention. The results of this study highlight the need to distinguish between disciplines, which are often treated by the EBP or research community as homogeneous.

The lack of improvement in attitude following exposure to JC (except for PTs) suggests that practitioners already had a positive attitude towards EBP prior to their participation in the JC, which indicates the presence of a ceiling effect. Compared to attitude, the other outcome variables showed far greater variation in scores (as shown by the larger standard deviations), which may have also played a role. Future research could explore the impact of the iCAHE journal club in practitioners with varying levels of attitude. On the other hand, while two disciplines (SPs and OTs) did not show change in evidence uptake, there is still reason to believe that participation in the iCAHE JC may promote practice behaviour change. In a study by McQueen et al. (2006), findings indicated changes in practice as a result of new learning from the JC. The JC participants learned about the evidence base, and reported usage of new interventions that had been previously available but were unused due to lack of knowledge[16]. Honey and Baker (2011) reported that a JC can be used as an effective means for clinical education which can ‘foster critical thinking about clinical practices and generate creative thinking about how practices may be carried out differently.’ Participation in a JC emphasises the importance of critical thinking and a reflective attitude in an individual practitioner, which may increase the likelihood of changing practice behaviour.

Implications for practice

Based on the results of this study, the authors propose the use of a structured JC such as the iCAHE JC to improve EBP knowledge and skills in allied health. The authors believe that even though the outcomes for evidence uptake varied across disciplines, the iCAHE JC has the potential to influence practice behaviour. However, the variability across disciplines indicates that for an EBP intervention to be effective, the strategy should be tailored to the professional discipline to facilitate and sustain evidence-based behaviour. This could mean integrating the JC with other strategies to improve the practice behaviour of the different allied health disciplines.

Implications for research

The authors recognise that there are other factors or variables which could have played a role in the variability of outcomes across disciplines despite receiving the same intervention. There is evidence from the literature that factors such as the characteristics of the health professional, characteristics of the organisation and contextual issues may influence evidence uptake[48, 49]. Therefore, further research and an in-depth analysis of the interaction of the individual and contextual factors, or of the relative importance or contribution of these variables, are required to better understand the determinants of evidence uptake in allied health.

Limitations

As with other research, this study has limitations which need to be considered when interpreting the results. First, as this was not a controlled study, the effect of participation in other EBP-related activities or training cannot be excluded. Second, the study did not examine the quality of the facilitation of the JC, which could have potentially affected the impact of the JC. Third, the instrument used to measure evidence uptake was a self-report questionnaire. Within the EBP literature, there is evidence to suggest that an individual’s self-assessment is often an inaccurate representation of their abilities[50, 51]. The use of objective and psychometrically sound instruments to measure practice behaviour change continues to be an area which requires further research. Fourth, as this was a pilot study, the findings were based on a small sample of practitioners who volunteered to participate and may not represent all AHPs. Nevertheless, the authors believe that overall, the degree of improvement demonstrated in this study lends sufficient evidence to support the iCAHE JC as a medium for facilitating EBP.

Conclusions

There is evidence to suggest that a JC such as the iCAHE model is an effective method for improving the objective and perceived EBP knowledge and skills of AHPs. It may be used as a single intervention to facilitate evidence uptake in some allied health disciplines but may need to be integrated with other strategies to influence practice behaviour in other practitioners. The results of this study highlight the need to distinguish between disciplines and implement interventions tailored to their needs in order to achieve positive and sustainable changes in behaviour.

References

Heiwe S, Kajermo K, Tyni-Lenné R, Guidetti S, Samuelsson M, Andersson I, Wengström Y: Evidence-based practice: attitudes, knowledge and behaviour among allied health care professionals. Int J Qual Health Care. 2011, 23: 198-209. 10.1093/intqhc/mzq083.

Jette DU, Bacon K, Batty C, Carlson M, Ferland A, Hemingway RD, Hill JC, Ogilvie L, Volk D: Evidence-based practice: beliefs, attitudes, knowledge and behaviors of physical therapists. Phys Ther. 2003, 83: 786-805.

Iles R, Davidson M: Evidence based practice: a survey of physiotherapists’ current practice. Physiother Res Int. 2006, 11: 93-103. 10.1002/pri.328.

Stevenson K, Lewis M, Hay E: Does physiotherapy management of low back pain change as a result of evidence-based educational programmes. J Eval Clin Pract. 2006, 12: 365-375. 10.1111/j.1365-2753.2006.00565.x.

Salls J, Dohli C, Silverman L, Hansen M: The use of evidence based practice by occupational therapists. Occup Ther Health Care. 2009, 23: 134-145. 10.1080/07380570902773305.

Saleh MN, Korner-Bitensky N, Snider L, Malouin F, Mazer B, Kennedy E, Roy MA: Actual vs. best practices for young children with cerebral palsy: a survey of paediatric occupational therapists and physical therapists in Quebec, Canada. Dev Neurorehabil. 2008, 11: 60-80. 10.1080/17518420701544230.

Rochette A, Korner-Bitensky N, Desrosiers J: Actual vs. best practice for families post-stroke according to three rehabilitation disciplines. J Rehabil Med. 2007, 39: 513-519. 10.2340/16501977-0082.

Upton D, Upton P: Knowledge and use of evidence based practice by allied health and health science professionals in the United Kingdom. J Allied Health. 2006, 35: 127-133.

Metcalfe C, Lewin R, Wisher S, Perry S, Bannigan K, Moffett JK: Barriers to implementing the evidence base in four NHS therapies: dieticians, occupational therapists, physiotherapists, speech and language therapists. Physiotherapy. 2001, 87: 433-441. 10.1016/S0031-9406(05)65462-4.

Hadley J, Hassan I, Khan K: Knowledge and beliefs concerning evidence-based practice amongst complementary and alternative medicine health care practitioners and allied health care professionals: a questionnaire survey. BMC Complement Alter Med. 2008, 8: 45. 10.1186/1472-6882-8-45.

Gosling AS, Westbrook JI: Allied health professionals’ use of online evidence: a survey of 290 staff working in the Australian public hospital system. Int J Med Inf. 2004, 73: 391-401. 10.1016/j.ijmedinf.2003.12.017.

Linzer M: The journal club and medical education: over one hundred years of unrecorded history. Postgrad Med J. 1987, 63: 475-478. 10.1136/pgmj.63.740.475.

Tibbles L, Sandford R: The research journal club: a mechanism for research utilization. Clin Nurs Spec. 1984, 8: 23-26.

Kirchoff K, Beck S: Using the journal club as a component of the research utilization process. Heart Lung. 1995, 24: 246-250. 10.1016/S0147-9563(05)80044-5.

Goodfellow L: Can a journal club bridge the gap between research and practice?. Nurse Educ. 2004, 29 (Suppl 3): 107-110.

McQueen J, Miller C, Nivison C, Husband V: An investigation into the use of journal club for evidence-based practice. Int J Ther Rehabil. 2006, 13: 311-317.

Luby M, Riley J, Towne G: Nursing research journal clubs: bridging the gap between practice and research. Medsurg Nurs. 2006, 15: 100-102.

Bannigan K, Hooper L: How journal clubs can overcome barriers to research utilization. Int J Ther Rehabil. 2002, 9 (Suppl 8): 299-303.

Alguire P: A review of journal clubs in postgraduate medical education. J Gen Intern Med. 1998, 13: 347-353. 10.1046/j.1525-1497.1998.00102.x.

Ebbert J, Montori V, Schultz H: The journal club in postgraduate medical education: a systematic review. Med Teach. 2001, 23 (Suppl 5): 455-461.

Linzer M, Brown T, Frazier L, DeLong E, Siegel W: Impact of a medical journal club on house-staff reading habits, knowledge, and critical appraisal skills: a randomized control trial. JAMA. 1988, 260: 2537-2541. 10.1001/jama.1988.03410170085039.

Khan K, Dwarakanath LS, Pakkal M, Brace V, Awonuga A: Postgraduate journal club as a means of promoting evidence-based obstetrics and gynaecology. J Obstet Gynaecol Can. 1999, 19 (Suppl 3): 231-234.

Seelig C: Affecting residents’ literature reading attitudes, behaviors, and knowledge through a journal club intervention. J Gen Intern Med. 1991, 6: 330-334. 10.1007/BF02597431.

Burstein J, Hollander J, Barlas D: Enhancing the value of journal club: use of a structured review instrument. Am J Emerg Med. 1996, 14: 561-563.

Spillane A, Crowe P: The role of the journal club in surgical training. Aust N Z J Surg. 1998, 68: 288-291. 10.1111/j.1445-2197.1998.tb02085.x.

MacRae H, Regehr G, McKenzie M, Henteleff H, Taylor M, Barkun J, Fitzgerald GW, Hill A, Richard C, Webber EM, McLeod RS: Teaching practicing surgeons critical appraisal skills with an internet-based journal club: a randomized, controlled trial. Surgery. 2004, 136 (Suppl 3): 641-646.

Mukherjee R, Owen K, Hollins S: Evaluating qualitative papers in a multidisciplinary evidence-based journal club: a pilot study. Psychiatr Bul R Coll Psychiatr. 2006, 30: 31-34. 10.1192/pb.30.1.31.

Kartes S, Kamel H: Geriatric journal club for nursing: a forum to enhance evidence-based nursing care in long-term settings. J Am Med Dir Assoc. 2003, 4/5: 264-267.

Belanger L: The Vancouver General Hospital Acute Spine Journal Club: a success story. SCI Nurs. 2004, 21 (Suppl 1): 29-30.

Pierre J: Changing nursing practice through a nursing journal club. Medsurg Nurs. 2005, 14 (Suppl 6): 390-392.

Rich K: The journal club: a means to promote nursing research. J Vasc Nurs. 2006, 24 (Suppl 1): 27-28.

Khan K, Gee H: A new approach to teaching and learning in journal club. Med Teach. 1999, 21 (Suppl 3): 289-293.

Lizarondo L, Kumar S, Grimmer-Somers K: Supporting allied health practitioners in evidence-based practice: a case report. Int J Ther Rehabil. 2009, 16 (Suppl 4): 226-236.

Lizarondo L, Grimmer-Somers K, Kumar S: Exploring the perspectives of allied health practitioners toward the use of journal clubs as a medium for promoting evidence-based practice: a qualitative study. BMC Med Educ. 2011, 11: 66. 10.1186/1472-6920-11-66.

Elder S: ILO school-to-work transition survey: a methodological guide. http://www.ilo.org/wcmsp5/groups/public/@ed_emp/documents/instructionalmaterial/wcms_140859.pdf

Turnbull C, Grimmer-Somers K, Kumar S, May E, Law D, Ashworth E: Allied, scientific and complementary health professionals: a new model for Australian allied health. Aust Health Rev. 2009, 33 (Suppl 1): 27-37.

McCluskey A, Bishop B: The Adapted Fresno Test of competence in evidence-based practice. J Contin Educ Health Profs. 2009, 29: 119-126. 10.1002/chp.20021.

Streiner D, Geddes J: Intention to treat analysis in clinical trials when there are missing data. Evid Based Mental Health. 2001, 4: 70-71. 10.1136/ebmh.4.3.70.

DeCoster J: Testing group differences using t-tests, ANOVA, and nonparametric measures. http://www.stat-help.com/ANOVA%202006-01-11.pdf

Kao L, Green C: Analysis of Variance: Is there a difference in means and what does it mean?. J Surg Res. 2008, 144 (1): 158-170. 10.1016/j.jss.2007.02.053.

Honey CP, Baker JA: Exploring the impact of journal clubs: a systematic review. Nurse Educ Today. 2011, 31: 825-831. 10.1016/j.nedt.2010.12.020.

Ahmadi N, McKenzie ME, MacLean A, Brown C, Mastracci T, McLeod R: Teaching evidence-based medicine to surgery residents – is journal club the best format? A systematic review of the literature. J Surg Educ. 2012, 69: 91-100. 10.1016/j.jsurg.2011.07.004.

Deenadayalan Y, Grimmer-Somers K, Prior M, Kumar S: How to run an effective journal club: a systematic review. J Eval Clin Pract. 2008, 14: 898-911. 10.1111/j.1365-2753.2008.01050.x.

Harris J, Kearley K, Heneghan C, Meats E, Roberts N, Perera R, Kearley-Shiers K: Are journal clubs effective in supporting evidence-based decision making? A systematic review. BEME guide No. 16. Med Teach. 2011, 33: 9-23. 10.3109/0142159X.2011.530321.

Hauer P, Straub C, Wolf S: Learning styles of allied health students using Kolb’s LSI-IIa. J Allied Health. 2005, 34: 177-182.

Brown T, Cosgriff T, French G: Learning style preferences of occupational therapy, physiotherapy and speech pathology students: a comparative study. Int J Allied Health Sci Prac. 2008, 6: 1-12.

Zoghi M, Brown T, Williams B, Roller L, Jaberdazah S, Palermo C, McKenna L: Learning style preferences of Australian Health Science Students. J Allied Health. 2010, 39: 95-103.

Logan J, Graham I: Toward a comprehensive interdisciplinary model of health care research use. Sci Commun. 1998, 20: 227-246. 10.1177/1075547098020002004.

Estabrooks CA, Midodzi WK, Cummings GG, Wallin L: Predicting research use in nursing organizations: a multilevel analysis. Nurs Res. 2007, 56: S7-S23. 10.1097/01.NNR.0000280647.18806.98.

Tracey J, Arroll B, Barham P, Richmond D: The validity of general practitioners’ self-assessment of knowledge: cross sectional study. BMJ. 1997, 315: 1426-1428. 10.1136/bmj.315.7120.1426.

Khan K, Awonuga A, Dwarakanath L, Taylor R: Assessments in evidence-based medicine workshops: loose connection between perception of knowledge and its objective assessment. Med Teach. 2001, 23: 92-94. 10.1080/01421590150214654.

Acknowledgements

The authors would like to acknowledge the support from Leonie Steindl, practice development manager at the Royal Hobart Hospital in Tasmania, who assisted in the recruitment of participants.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

LML conceived of the study, collected and analysed data, and drafted the manuscript. KGS collected and analysed data and helped draft the manuscript. SK and AC participated in the design of the study and analysed data. All authors read and approved the final manuscript.

Rights and permissions

This article is published under license to BioMed Central Ltd. This is an Open Access article distributed under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Lizarondo, L.M., Grimmer-Somers, K., Kumar, S. et al. Does journal club membership improve research evidence uptake in different allied health disciplines: a pre-post study. BMC Res Notes 5, 588 (2012). https://doi.org/10.1186/1756-0500-5-588

DOI: https://doi.org/10.1186/1756-0500-5-588