Abstract

In this paper, we provide a new criterion for the existence and uniqueness of a stationary distribution for diffusion processes. An example is given to illustrate our results.

MSC: 60H10, 37A25.

1 Introduction

In many applications, such as finance and biology, we often wish to replace the time-dependent instantaneous measure by a stationary (or ergodic) measure. Thus, we face the following questions: Do the systems possess ergodic properties? Under what conditions do the systems have the desired ergodic properties? There are many criteria for the existence and uniqueness of a stationary distribution for diffusion processes; see, for example, Hasminskii [1], Pinsky [2], Da Prato and Zabczyk [3], Yin and Zhu [4], and Zhang [5]. However, most criteria suppose that the infinitesimal operators satisfy the uniform ellipticity condition. An interesting question is: What happens if the infinitesimal operators can be degenerate? Is there a simple and general sufficient condition? In this paper, we give a new sufficient condition for verifying the existence and uniqueness of a stationary distribution for general diffusion processes.

Let be a family of diffusion processes on some probability space , where stands for the state space. Let be the continuous functions from into ℝ with continuous derivatives up to order two and with compact support in . Let be a diffusion process on with a transition function and for f in with infinitesimal generator

on a domain . Processes corresponding to such an infinitesimal generator are termed diffusion processes. In addition, we assume that the diffusion processes satisfy the following basic assumptions:

(i) and are locally bounded and measurable functions on D;

(ii) a is continuous on D;

(iii) there exists a unique strong solution ;

(iv) the solution does not explode at any finite time.
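For orientation, a generator of the kind described above is conventionally written as in the sketch below. The symbols $a_{ij}$, $b_i$, $\sigma$ and $B(t)$, and the normalization $a = \sigma\sigma^{T}$, are assumptions made here for illustration; only the names a and D appear in assumptions (i) and (ii), and the authors' own notation may differ.

```latex
% Conventional second-order generator on a domain D \subseteq \mathbb{R}^n.
% Assumed notation: a = (a_{ij}) is the diffusion matrix, b = (b_i) the drift.
\[
  Lf(x) = \frac{1}{2}\sum_{i,j=1}^{n} a_{ij}(x)\,
          \frac{\partial^{2} f}{\partial x_i\,\partial x_j}(x)
        + \sum_{i=1}^{n} b_i(x)\,\frac{\partial f}{\partial x_i}(x),
  \qquad x \in D .
\]
% Associated stochastic differential equation, assuming a = \sigma\sigma^{T}
% and an n-dimensional Brownian motion B(t).
\[
  dX(t) = b\bigl(X(t)\bigr)\,dt + \sigma\bigl(X(t)\bigr)\,dB(t),
  \qquad X(0) = x \in D .
\]
```

In this form, a degenerate operator corresponds to a matrix $a(x)$ that is singular at some points of D, which is exactly the case excluded by the uniform ellipticity condition.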

For any , the set of bounded continuous functions on , define

For simplicity, denote by . Recall that a sequence of probability measures on is said to converge weakly to a probability measure (on ), for every , if

holds for all bounded continuous functions f on . A diffusion process is said to have the (weak) Feller property if, for any bounded continuous function f, is a bounded continuous function. Moreover, if , , then the processes become deterministic. In this case, for any bounded continuous function f,

Thus, the deterministic processes do not have the weak Feller property. Therefore, for the Feller property to hold at any time t, the random variable should take on at least countably many values with positive probability. More precisely, the semigroup of the diffusion should be irreducible (see, for example, Cerrai [6]). Hence, we impose the following assumption on the diffusion semigroup:

(v) the semigroup is irreducible.
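The notions used in this part of the introduction have standard formulations, sketched below. The symbols $X^{x}(t)$ (the process started at x), $p(t,x,dy)$ (its transition function), $T_t$ (the semigroup), $C_b(D)$, $\mu_n$ and $\mu$ are assumed notation chosen for illustration, and the irreducibility statement is the usual definition rather than a quotation from [6].

```latex
% Semigroup acting on bounded continuous functions (assumed notation).
\[
  T_t f(x) = \mathbb{E}\, f\bigl(X^{x}(t)\bigr) = \int_{D} f(y)\, p(t,x,dy),
  \qquad f \in C_b(D),\ t \ge 0 .
\]
% Weak convergence of probability measures \mu_n to \mu on D.
\[
  \lim_{n\to\infty} \int_{D} f(y)\,\mu_n(dy) = \int_{D} f(y)\,\mu(dy)
  \qquad \text{for all } f \in C_b(D) .
\]
% Weak Feller property: T_t maps C_b(D) into itself.
\[
  f \in C_b(D) \;\Longrightarrow\; T_t f \in C_b(D)
  \qquad \text{for every } t \ge 0 .
\]
% Irreducibility in the usual sense: every nonempty open set is charged.
\[
  p(t,x,O) > 0 \qquad \text{for all } t > 0,\ x \in D,
  \ \text{and every nonempty open set } O \subseteq D .
\]
```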

The aim of the present paper is twofold. First, we give a new criterion for general diffusions, especially when the coefficients of the diffusions are non-Lipschitz or degenerate. This is highly non-trivial because, when the diffusion matrix is singular, the corresponding infinitesimal operator L is non-elliptic, and the maximum principle for elliptic operators fails. Second, we give general sufficient conditions for the stationary distribution of population dynamical systems.

To proceed, we list some notation:

: the transpose of any matrix or vector A;

: the trace norm of matrix A, i.e., ;

and ;

K: a generic positive constant whose value may vary from one appearance to another.
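As a small worked example of the norm listed above, the sketch below assumes the convention common in the stochastic differential equations literature, where the trace norm of a matrix is $|A| = \sqrt{\operatorname{trace}(A^{T}A)}$. Both this convention and the sample matrix are assumptions made for illustration.

```latex
% Hypothetical 2 x 2 example of |A| = \sqrt{\operatorname{trace}(A^{T}A)}.
\[
  A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix},
  \qquad
  A^{T}A = \begin{pmatrix} 10 & 14 \\ 14 & 20 \end{pmatrix},
  \qquad
  |A| = \sqrt{10 + 20} = \sqrt{30} .
\]
% Equivalently, |A|^2 is the sum of the squared entries: 1 + 4 + 9 + 16 = 30.
```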

2 Main results

Before we show the main result, we first impose the following assumptions:

(A1) for each ;

(A2) .

To begin with, we cite a known result from Bhattacharya and Waymire [7] as a lemma.

Lemma 2.1 ([7], pp. 643–645)

Let be probability measures on for every . The following are equivalent statements.

(a) converge weakly to .

(b) Equation (1.3) holds for all infinitely differentiable functions vanishing outside a bounded set.

Lemma 2.2 If assumptions (i)-(v) and (A1) hold, then the diffusion process has the weak Feller property.

Proof The proof of this lemma is essentially the same as that of Lemma 3.2 of Tong et al. [8]. □

Theorem 2.1 If assumptions (i)-(v) and (A2) hold, then the diffusion process has a unique stationary distribution.

Proof The proof of this theorem is divided into two steps as follows.

Step 1: We show that the transition semigroup of the Markov process has an invariant measure. To this end, we first prove that for some , the family is tight, i.e., given , there exists such that . It will then follow from the Prohorov theorem that there exist and a probability measure π, perhaps depending on , such that

By Chebyshev’s inequality, ,

Therefore, the tightness of the family follows from assumption (A2).
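The tightness step can be sketched as follows, assuming that the moment condition takes the form of a uniform bound $\sup_{t \ge 0} \mathbb{E}\,|X^{x}(t)|^{p} \le K$ for some $p > 0$. This form of the bound, the symbols $X^{x}(t)$, $p(t,x,\cdot)$, p, r and $\varepsilon$, and the simplification $D = \mathbb{R}^{n}$ (so that closed balls are compact) are all assumptions made for illustration.

```latex
% Chebyshev (Markov) inequality applied to |X^{x}(t)|^{p}.
\[
  \mathbb{P}\bigl(|X^{x}(t)| > r\bigr)
  \le \frac{\mathbb{E}\,|X^{x}(t)|^{p}}{r^{p}}
  \le \frac{K}{r^{p}},
  \qquad t \ge 0,\ r > 0 .
\]
% Given \varepsilon > 0, choose r \ge (K/\varepsilon)^{1/p}. Then
\[
  \sup_{t \ge 0}\, p\bigl(t, x, \{\, y : |y| > r \,\}\bigr) \le \varepsilon ,
\]
% so the compact ball \{ y : |y| \le r \} carries mass at least
% 1 - \varepsilon under every p(t,x,\cdot), which is tightness.
```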

The next task is to prove that the limit in (2.1) does not depend on . To this end, define

By Chebyshev’s inequality,

and we have, for any , , whenever r is large enough. Therefore, is tight; namely, there exists a subsequence such that the sequence converges weakly to a measure π. By the Krylov-Bogoliubov theorem (see, e.g., Da Prato and Zabczyk [3], Corollary 3.1.2, p.22), it follows that π is an invariant measure for , . In other words,

Step 2: We show that π in (2.5) is the unique stationary distribution. To this end, for every bounded continuous function , (2.5) implies

By Lebesgue’s dominated convergence theorem, applied to the sequence , we have

By Lemma 2.2, if f is bounded and continuous, then is a bounded continuous function. Therefore, applying (2.6) to yields that

Note that , so the limits in (2.7) and (2.8) coincide,

i.e., if has distribution π, then for all . That is, π is a stationary distribution.

To prove the uniqueness, let be any invariant probability measure. Then, for all bounded continuous ,

But by (2.6), the left-hand side of equality (2.9) converges to . Therefore, , which implies . □
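The structure of the two steps can be summarized in a short sketch. The notation is assumed for illustration: $p(s,x,\cdot)$ is the transition function, $T_t$ the semigroup, $\mu_t$ the time averages, $\nu$ an arbitrary invariant probability measure, and the convergence $T_t f(x) \to \int f\,d\pi$ for each x stands in for the limit established in Step 2, whose exact form may differ.

```latex
% Step 1 (Krylov-Bogoliubov): time averages of the transition function.
\[
  \mu_{t}(A) = \frac{1}{t}\int_{0}^{t} p(s, x, A)\,ds ,
  \qquad A \in \mathcal{B}(D),\ t > 0 .
\]
% If \mu_{t_n} \to \pi weakly along some t_n \to \infty, the weak Feller
% property yields invariance of \pi:
\[
  \int_{D} T_t f(y)\,\pi(dy) = \int_{D} f(y)\,\pi(dy),
  \qquad f \in C_b(D),\ t \ge 0 .
\]
% Step 2 (uniqueness): let \nu be invariant and suppose that
% T_t f(x) \to \int_D f\,d\pi for every x. Since |T_t f| \le \sup|f|,
% dominated convergence gives
\[
  \int_{D} f\,d\nu
  = \lim_{t\to\infty} \int_{D} T_t f(x)\,\nu(dx)
  = \int_{D} \Bigl( \int_{D} f\,d\pi \Bigr)\,\nu(dx)
  = \int_{D} f\,d\pi ,
\]
% for all f \in C_b(D), hence \nu = \pi.
```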

3 An example

In this section, we give an example to illustrate our conditions and results.

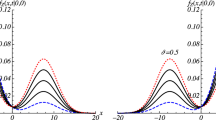

Example 3.1 Recently, Mao [9] considered the stationary distribution of stochastic population dynamics, assuming that the population sizes follow the stochastic differential equations

where stands for the population size of species i at time t, is the intrinsic growth rate of species i, represents the effect of interspecies (if ) or intraspecies (if ) interaction. Here is an independent one-dimensional Brownian motion. Let be an n-dimensional Brownian motion. Then Eq. (3.1) can be rewritten as

where , , , , . In addition, we suppose that and are independent and for , are nonnegative constants, and for some i. If , , , , then the model (3.2) is termed the facultative Lotka-Volterra model. If , , , , then the model (3.2) is termed the competitive Lotka-Volterra model. Both Lotka-Volterra models have been studied extensively (see, e.g., Bao et al. [10, 11]). For the competitive model (3.2) with Poisson jumps, Bao et al. [10, 11] showed that Eq. (3.2) has desirable properties, such as a global positive solution, the existence of an invariant measure, and certain asymptotic properties. To obtain our results, we impose the following assumptions:

(H1) −A is a nonsingular M-matrix;

(H2) , , .
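Assumption (H1) can be checked by hand in small cases. One standard characterization is that a matrix with nonpositive off-diagonal entries is a nonsingular M-matrix exactly when all of its leading principal minors are positive. The $2 \times 2$ matrix below is a hypothetical example chosen for illustration and is not taken from the model above.

```latex
% Hypothetical interaction matrix A and its negative.
\[
  A = \begin{pmatrix} -2 & 1 \\ 1 & -2 \end{pmatrix},
  \qquad
  -A = \begin{pmatrix} 2 & -1 \\ -1 & 2 \end{pmatrix}.
\]
% The off-diagonal entries of -A are nonpositive, and its leading
% principal minors are
\[
  \Delta_1 = 2 > 0,
  \qquad
  \Delta_2 = \det(-A) = 2 \cdot 2 - (-1)(-1) = 3 > 0 ,
\]
% so -A is a nonsingular M-matrix and (H1) holds for this A.
```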

Lemma 3.1 If one of assumptions (H1) and (H2) holds, then there is a positive constant K such that for any initial value ,

Proof The proof is essentially the same as the proofs of Theorem 3.1 of Mao [9] and Theorem 3.1 of Bao et al. [11], and is therefore omitted. □

Theorem 3.1 If one of assumptions (H1) and (H2) holds, then the model (3.2) has a unique stationary distribution.

Proof According to the results obtained by Mao [9], Tong et al. [8], and Bao et al. [11], it is not hard to check that the model (3.2), together with Lemma 3.1, satisfies all the conditions of Theorem 2.1. Hence, the existence and uniqueness of the stationary distribution follow immediately. □

Remark 3.1 Assumption (H1) relaxes the sufficient conditions obtained by Tong et al. [8]. Assumption (H2) means that if the population dynamics are competitive, then the model has a unique stationary distribution, which implies ergodic properties of the model (3.2). This gives a new method for estimating parameters of competitive population dynamics.

References

[1] Hasminskii RZ: Stochastic Stability of Differential Equations. Sijthoff & Noordhoff, Rockville; 1980:71–155.

[2] Pinsky RG: Positive Harmonic Functions and Diffusion. Cambridge University Press, Cambridge; 1995:235–282.

[3] Da Prato G, Zabczyk J: Ergodicity for Infinite Dimensional Systems. Cambridge University Press, Cambridge; 1996:11–168.

[4] Yin G, Zhu C: Hybrid Switching Diffusions. Springer, Berlin; 2010:27–133.

[5] Zhang XC: Exponential ergodicity of non-Lipschitz stochastic differential equations. Proc. Am. Math. Soc. 2009, 137: 329–337.

[6] Cerrai S: Second Order PDE’s in Finite and Infinite Dimension: A Probabilistic Approach. Lecture Notes in Mathematics 1762. Springer, Berlin; 2001:65–77.

[7] Bhattacharya RN, Waymire EC: Stochastic Processes with Applications. Wiley, New York; 1990:643–646.

[8] Tong JY, Zhang ZZ, Bao JH: The stationary distribution of the facultative population model with a degenerate noise. Stat. Probab. Lett. 2013, 83: 655–664. 10.1016/j.spl.2012.11.003

[9] Mao XR: Stationary distribution of stochastic population systems. Syst. Control Lett. 2011, 60: 398–405. 10.1016/j.sysconle.2011.02.013

[10] Bao JH, Yuan CG: Stochastic population dynamics driven by Lévy noise. J. Math. Anal. Appl. 2012, 391: 363–375. 10.1016/j.jmaa.2012.02.043

[11] Bao JH, Mao XR, Yin G, Yuan CG: Competitive Lotka-Volterra population dynamics with jumps. Nonlinear Anal. 2011, 74: 6601–6616. 10.1016/j.na.2011.06.043

Acknowledgements

The authors are grateful to the anonymous referees for their valuable comments and suggestions which led to improvements in this manuscript. The research of Z. Zhang was partially supported by the National Natural Science Foundation of China (Nos. 11071037, 11171062, 11126253 and 11201062), the Fundamental Research Funds for the Central Universities, and the Innovation Program of Shanghai Municipal Education Commission (No. 12ZZ063).

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

The authors contributed equally to this work. All authors read and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Zhang, Z., Chen, D. A new criterion on existence and uniqueness of stationary distribution for diffusion processes. Adv Differ Equ 2013, 13 (2013). https://doi.org/10.1186/1687-1847-2013-13

DOI: https://doi.org/10.1186/1687-1847-2013-13