Abstract

Background

In Mozambique, malaria is the principal cause of morbidity and mortality. Efforts are being made to increase control activities within communities. These activities require management decisions based on evidence of malaria incidence. Although some data generated are of poor quality, there is little research towards improving the reporting systems.

Methods

An analysis of the quality of routine malaria data was performed in selected districts in Southern Mozambique from August to September 2003.

The aim was to assess the quality of the source data in terms of completeness, correctness and consistency across management levels.

Results

Analysis revealed primary data to be of poor quality. The diversity of reporting systems with limited coordination gives rise to redundancies and wastage of resources.

There was evidence of "invention" of data in health facilities contributing to an incorrect representation of malaria incidence. Large, "non-clinical", time-based variations of malaria cases due to reporting delays were also noted, contributing to false alerts of outbreaks.

Furthermore, targets established in the national strategic plan for malaria cannot be calculated through the existing systems; this is the case, for example, for data related to pregnant women and children under five years of age.

Discussion and recommendations

The existing reporting system for malaria is currently not satisfying the information needs of managers. It is suggested that one standardized system be introduced, including the creation of one form containing the essential variables required for the calculation of key indicators by age, gender and pregnancy status, and that a national database be established that maps malaria by location.

Background

Malaria is by far the world's most important tropical parasitic disease, with annual estimates of 300 to 500 million clinical cases, of which over 86% are in Africa. Every two minutes, three children are reported to die of malaria, the majority of them in sub-Saharan African countries [1, 2]. The estimated cost of malaria, in terms of strains on health systems and economic activity lost, is enormous. In 1997 an estimated US$1.8 to $2 billion was spent in Africa on both direct costs of malaria (prevention and care) and indirect costs (such as loss of productivity or income through illness or death). This figure was projected to rise to US$3.6 billion or more by the end of 2000 [3].

In Southern Africa it is estimated that out of a total population of about 145 million, 92 million people live in malarious areas. In Mozambique, malaria is endemic and accounts for the highest incidence of disease. The burden is greatest among children under five years of age and amongst pregnant women [4, 5].

Attempts to control malaria in Mozambique date as far back as the 1960s [6]. However, the escalation of civil war in the late 1970s led to a complete breakdown of malaria control measures. Following the cessation of hostilities in the 1990s there was a renewed interest in malaria control with the reintroduction of vector control by house spraying with insecticides complemented by case treatment in suburban areas in the majority of provincial capitals. These efforts were enhanced in 1999, when Roll Back Malaria (RBM, a global partnership between WHO, UNICEF, the World Bank and UNDP) started to extend its action to the community by enrolling members of society to actively participate in preventive and malaria control activities [7]. The Mozambique RBM strategic framework for 2002 – 2005 was subsequently launched aimed at reducing mortality and morbidity attributed to malaria, specifically amongst children under five years of age [8]. Additionally, in 2000 the Lubombo Spatial Development Initiative (LSDI), a trilateral agreement between Mozambique, Swaziland and South Africa, introduced vector control by house spraying in the rural parts of the Maputo province [9]. Recently the project received extensive funding from the Global Fund to scale up malaria control, particularly in Mozambique as part of RBM. The major malaria control activities include increased vector control campaigns and increased access to cost-effective anti-malarial drugs [10].

The scaling up of these interventions requires a robust surveillance system to monitor and evaluate their impact. In addition, good health-information systems (HIS) at district, provincial and national levels are fundamental for evidence-based decision-making and improved management of intervention programmes, for example to allow effective and equitable resource allocation in different areas. Information systems can support the management of malaria programmes by (a) drawing malaria density maps by health area according to different targets and (b) estimating the number of children under five years of age living in malaria areas by health facility catchment area, as well as by district or province, thus allowing rational planning based on evidence. All of these may identify imbalances between needs and resources. For example, a study in Kenya showed that insecticide-treated bed net programmes were concentrated in areas where nongovernmental organizations had strong links, rather than in areas where malaria risk was greatest [11]. It is argued in this paper that the potential of information systems to support the management of malaria programmes is not being effectively realised at present.

Though the national strategic framework recognizes the weaknesses of the HIS in the country, with regards to multiple and inconsistent data collection tools, lack of consistent methodology for analysis and interpretation, and the fact that large amounts of currently available malaria data are not being adequately used in decision-making [8], no systematic assessment and/or evaluation of disease surveillance or their health-information systems has been carried out in the country.

Time, human and financial constraints limit the scope of this paper to the analysis of routinely collected malaria data, generated at peripheral health facilities and sent to district, provincial and national levels.

The key research questions addressed in this paper are as follows:

- What are the processes of data capturing and reporting from health facilities to national decision-makers?

- What is the quality of the information used to calculate the core malaria indicators?

The methods adopted to answer these research questions are now described.

Methods

This is a descriptive study based on qualitative methods which involved observation of work practices, review of existing documents and interviews with key informants.

Study area and design

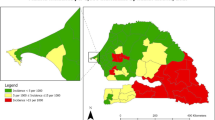

The study focused on two malaria-endemic provinces in southern Mozambique (Figure 1). The research was carried out in August and September 2003 in the provinces of Inhambane (in 2 out of 14 districts) and Gaza (in 2 out of 11 districts). The facilities were selected so as to trace the flow of information, i.e. in each province data were gathered first from selected health facilities (health centres and district hospitals) and their flow and quality were examined up to the district and provincial levels (see Table 1). Another criterion was to explore both rural (usually more distant from their district offices) and urban health facilities (usually more crowded). There are no major physical differences between the health districts: they present a quite similar malaria pattern throughout the country and use more or less similar working procedures.

Data collection and analysis

Data were gathered through observation of work practices, semi-structured interviews with key informants (Table 1) and review of existing documents including reports and registers. This involved the following tasks at each site:

(a) Collating available data in both paper and electronic formats

(b) Analysing data for completeness, correctness and consistency

(c) Selecting a list of malaria indicators used

(d) Identifying data elements used to calculate essential indicators
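The three quality dimensions named in task (b) can be illustrated with a minimal sketch. The record layout (`facility`, `week`, `cases`, `deaths`), the plausibility rules and all numbers below are assumptions made for illustration; they are not the forms or the procedure used in the study, where the checks were done by hand against registers and reports.

```python
def completeness(reports, facilities, weeks):
    """Fraction of expected facility-week reports that were actually received."""
    expected = {(f, w) for f in facilities for w in weeks}
    received = {(r["facility"], r["week"]) for r in reports}
    return len(expected & received) / len(expected)


def incorrect_reports(reports):
    """Reports with implausible values (negative counts, or more deaths than cases)."""
    return [
        r for r in reports
        if r["cases"] < 0 or r["deaths"] < 0 or r["deaths"] > r["cases"]
    ]


def inconsistent_weeks(reports, district_totals):
    """Weeks where the district total differs from the sum of facility reports."""
    sums = {}
    for r in reports:
        sums[r["week"]] = sums.get(r["week"], 0) + r["cases"]
    return sorted(w for w, total in district_totals.items() if sums.get(w, 0) != total)


# Made-up example data (hypothetical facilities "A" and "B").
reports = [
    {"facility": "A", "week": 1, "cases": 40, "deaths": 1},
    {"facility": "B", "week": 1, "cases": 25, "deaths": 0},
    {"facility": "A", "week": 2, "cases": 38, "deaths": 50},  # deaths exceed cases
]
district_totals = {1: 65, 2: 60}

print(completeness(reports, ["A", "B"], [1, 2]))      # share of expected reports received
print(incorrect_reports(reports))                     # implausible reports
print(inconsistent_weeks(reports, district_totals))   # weeks that do not add up
```

In this toy data set, facility B's report for week 2 is missing, one report is implausible, and the week 2 district total cannot be reproduced from the facility reports.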

Health workers were asked questions regarding clinical practice, malaria patient management and treatment, use of register books, their contribution in statistics, the usefulness of data reported and the interplay with persons responsible for statistics and managers. Laboratory workers were asked the same questions including the procedures and laboratory work (laboratory network, internal and external quality control). The persons working on statistics and the managers were asked questions pertaining to collation of data and forms, maps controlling the reception of forms, data usefulness, data quality control, fates of reports and managerial decisions.

The observations took place over a two month period by the first author. Initially the work practices of malaria routine (including malaria patient management) were observed during two weeks in the Maxixe health centre and Chicuque rural hospital. In other health facilities one to two days were spent in order to capture local variations, particularities and exceptions. Typically, in the district and provincial offices, one to four days were spent depending on the availability of the health workers and managers. The observation bias was minimised in all places by visiting each place more than once to confirm or reconfirm the earlier observations and discuss the main findings with the respondents and other colleagues. During visits, a research diary was used to take notes.

Due to ethical considerations, most of the data presented in this paper are not connected to the real locations (health districts, health facilities). We use the names of specific places only where we understand that data being presented do not have implications for the informants.

Results

A total of 50 interviews were conducted and these included 19 health and laboratory workers, 17 persons responsible for statistics, and 14 managers (Table 1).

Findings from this research are presented following the information cycle [12] in relation to work practices. In the information cycle, data are collected (in register books), then collated (in forms), reported and validated (checking for completeness, correctness and consistency) to allow analysis, through which they are turned into useful information (indicators) for decision-making.

Data source and collection tools

The primary source of malaria cases is patients presenting in health facilities with symptoms indicative of malaria. These are documented in the register books. However, most of the peripheral consultations do not have appropriate register books. For example, in one health centre, register books being used in screening consultations were designed specifically for maternities and included columns such as focus at entrance, labour, newborn, maternal death, etc. These columns were overwritten by hand leading to reading constraints and thus contributing to errors in collated data.

Other common errors include illegible handwriting, ink blots, incompleteness, wrong data items and writing errors, arguably due to work overload and time constraints. It is common that each clinician in a health centre sees about sixty patients per day.

All malaria data being reported from health facilities are primarily gathered from register books in consultation rooms, infirmaries and laboratories. In the National Health System, infirmaries and laboratories are physically located within ordinary health facilities such as health centres, district, provincial and central hospitals which are part of the established network. The health posts are the most peripheral and typically have only rudimentary facilities and limited staff with no infirmaries or laboratories.

Data flow

In general, as shown in Figure 2, malaria data flow through four reporting channels, namely (a) the weekly epidemiological bulletin from the Department of Epidemiology within the National Directorate of Health; (b) the specific malaria programme reporting system; (c) the monthly summary for inpatients from district hospitals, as part of the main health information system from the Department of Health Information within the National Directorate of Planning and Cooperation; and (d) the clinical laboratory reporting system. In this paper, data from clinical laboratories are presented as part of the reporting systems mentioned above and not as an isolated system, even though the laboratory system reports data on two main tasks: the volume of laboratory tests performed (including malaria slides) and the needs in terms of reagents and other supplies.

Figure 2: Several malaria case reporting channels in Mozambique. Malaria data are gathered from register books and flow through four reporting channels, namely (a) a specific Malaria Programme reporting system; (b) the weekly epidemiological bulletin; (c) the monthly summary for inpatients from district hospitals and (d) the clinical laboratory reporting system.

This multiplicity of channels contributes to duplication of effort, consumes time and contributes to low validity, incorrectness and incompleteness of data.

Data quality

In this section we present issues of data quality from different malaria reporting systems mentioned above.

Weekly epidemiological bulletin (BES)

On a weekly basis, health workers report data from all health facilities (i.e. health posts and centres, district, province and central hospitals), which are supposed to alert managers of outbreaks identified through clinical time-based abnormal variation in the data. Through this system, cases and deaths of the following diseases are notified: malaria, measles, tetanus, meningitis, diarrhoea, dysentery, cholera, acute flaccid paralysis (poliomyelitis), sleeping sickness, and rabies. The system is paper-based at the district level and converted into a digital format at the provincial and national levels.

Malaria cases are based either on clinical (clinical malaria, suspected or relative) or laboratory (confirmed or objective) diagnosis, and this system does not differentiate between the two types of malaria case data. The value of such reporting is limited because clinical cases can easily be misdiagnosed, as malaria can mimic symptoms of meningitis, typhoid fever, septicaemia, influenza, hepatitis, all types of viral encephalitis, gastro-enteritis and haemorrhagic fevers [13]. Another major problem with this system is that the primary data collating form does not identify the service or the individual who completed it, making quality control difficult. As a consequence, we observed in some health centres that cases were not being reported and were later deliberately "invented" to cover the missing data. In another example, in one health centre, two out of the six screening clinicians had not reported data in two consecutive weeks (from the 14th to the 27th of April 2003). However, this gap could not be identified by the chief of statistics, leading to fewer cases being reported. In addition, there are extreme delays in sending the completed form from the health facilities to the district. Based on the records available in the district office, we counted the number of times a report was not sent on schedule (every week) by the health facilities during the 1st semester of 2003 (Table 2). All health facilities had been late in sending their reports to the district headquarters between four and eight times. As a consequence there were large time-based, "non-clinical" week-to-week variations in malaria data, as shown in Figure 3. These could lead to false indications of outbreaks.

In the case of delayed reports, the subsequent week's form usually provided the missing week's data aggregated with the current data, with some comments on the reverse of the form. Such untimely reporting contributed to the poor quality of data. We compared the district totals with the sum of the individual health facilities for the full 1st semester 2003. These totals were nearly identical (variation from 1 to 5%) because the discrepancies pointed to in figure 3 had already been hidden through data aggregation. Additionally, we compared the district totals reported by the province (paper-based) with the district totals at the national level (computer-based). Again, the totals were nearly identical (variation from 1 to 4%). This suggests that data quality constraints encountered in health facilities and within health district domains, get masked prior to reaching the provincial or national levels.
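The masking effect described above can be reproduced with a small simulation. This is a sketch under stated assumptions: the weekly counts are made up, the function name `reported_series` is hypothetical, and a late week is assumed to appear as zero in the district's series, with its cases added to the next report that arrives.

```python
def reported_series(true_counts, late_weeks):
    """Return the weekly series as it would reach the district office.

    A week listed in `late_weeks` is recorded as 0, and its cases are added
    to the next on-time report. Assumes the final week is reported on time;
    otherwise the carried cases would be missing from the series.
    """
    reported = []
    carried = 0
    for week, count in enumerate(true_counts):
        if week in late_weeks:
            carried += count
            reported.append(0)
        else:
            reported.append(count + carried)
            carried = 0
    return reported


true_counts = [100, 110, 105, 95, 100, 108]  # made-up, fairly stable incidence
reported = reported_series(true_counts, late_weeks={1, 3})

print(reported)                         # weekly series with gaps and spikes
print(sum(true_counts), sum(reported))  # the semester totals still agree
```

The weekly series swings between zero and roughly double the usual level, which could be read as an outbreak, while the totals over the whole period match. This is why comparing aggregated totals between levels does not reveal the problem.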

Malaria Programme reporting system

The specific Malaria Programme reporting system is a monthly malaria notification system for outpatient and inpatient case data from all health facilities, carried out in some provinces. The forms used include variables that are essential for calculating most of the relevant malaria indicators. The data collected are grouped by age (0–4; 5–15 and above 15 years old), and indicate whether or not they are laboratory confirmed cases. The data are aggregated by the district and then sent to the provincial level, where they are further compiled and sent to the national level. The system is completely paper-based and in theory seems to be the most appropriate for decision-making because of the inclusion of the key indicators.

A major problem identified in this system is the huge discrepancy between laboratory confirmed cases reported on the forms and positive tests counted in the laboratory register book. In general the cases reported by far outnumber the tests registered in the laboratory and show unusual variations (see Figure 4). In March 2003, for instance, one health centre had reported 2721 confirmed cases, while we counted only 255 positive malaria tests. One explanation was suggested by provincial malaria managers and also verified in the field: many suspected cases (not confirmed by the laboratory) and even cases with negative test results are reported as "confirmed cases", arguably because some clinicians do not trust the laboratory results. The majority of cases were not microscopically confirmed due to limited laboratory facilities. In Inhambane Province, for instance, the ratio of confirmed malaria to clinical malaria cases was only 17% for the 1st semester of 2003, whereas the ideal standard is at least 50% [Inhambane Directorate of Health, 2003]. Furthermore, we could verify from observations of the work practices in the laboratories that, although there is work overload and the register books were improvised from ordinary exercise books, laboratory workers did indeed record almost all results.
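To give a sense of scale, the March 2003 figures quoted above (2721 reported confirmed cases against 255 positive tests counted in the register) can be worked through as follows. The function name is hypothetical and the calculation is only an illustration of the size of the gap, not an indicator defined in the study.

```python
def over_reporting_factor(reported_confirmed, register_positives):
    """How many times the reported confirmed cases exceed the register count."""
    return reported_confirmed / register_positives


reported_confirmed = 2721   # confirmed cases on the monthly form (from the text)
register_positives = 255    # positive tests counted in the laboratory register (from the text)

factor = over_reporting_factor(reported_confirmed, register_positives)
excess = reported_confirmed - register_positives
unsupported_share = excess / reported_confirmed

print(f"reported / registered: {factor:.2f}")
print(f"cases without a matching positive test: {excess}")
print(f"share of reported 'confirmed' cases not in the register: {unsupported_share:.1%}")
```

A routine check of this kind at district level, comparing each form with the laboratory register, would flag forms on which "confirmed" cases cannot be traced back to recorded positive tests.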

District hospitals reporting system

The District hospitals reporting system was created in order to provide data from inpatient wards in categories such as surgery, maternity, paediatrics and medicine, including the important causes of admission, e.g. laboratory confirmed malaria, diarrhoea, AIDS, tuberculosis, anaemia, etc. These data are first aggregated in paper format at the district level, then converted into a digital format at the provincial level and sent to the National Department of Information for processing and analysis. We compared inpatient malaria cases and deaths in the adult categories in paper format at the district level with the digital data at the provincial level for the 1st semester of 2003. The comparison showed a significant discrepancy of 62% for cases and 48% for deaths reported (see Figure 5). A possible reason for this is data entry errors by the person dealing with statistics at the provincial level.

Discussion and recommendations

In the previous section, two major issues are described. First, the process of data capturing and reporting from selected peripheral health facilities to provincial and national levels, and second, the degree of accuracy of data being sent across different levels.

Despite the fact that this study was based on small sample locations in one part of the country and may, therefore, not be representative of the whole country, it provides an idea of the reality in peripheral health care settings and shows how numbers of malaria cases are being used at all management levels. Based on available data, it was ascertained how the current sources of malaria data, mainly register books, contribute to a large extent to the poor quality and sub-notification of data reported and, thus, that decisions made are based on an incomplete and often incorrect picture.

This situation is aggravated by the existing four reporting channels that are very fragmented with little or no communication between them.

The weekly reports systematically present large "non-clinical" variations from one week to another due to reporting delays, leading to false alerts to the managers. The reporting system specific to the Malaria Programme seems to gather more data and is thus better suited as a basis for calculating essential indicators, but it is only used in a few provinces and shows inconsistencies when compared to laboratory tests. The discrepancy in inpatient data in the district hospital reporting system between data in paper format and digital data at the provincial level is also a cause for concern. This suggests data entry errors and has serious implications for data checks and verification, contributing to lethality rates based on an incorrect picture.

There are also important data that are not gathered but are necessary for the targets and indicators established in the national malaria strategic framework, including data by age group, gender and pregnancy status [8]. All of these are important for accurate and reliable estimates of trends in incidence and mortality.

The quality of laboratory tests being done in district health facilities is a cause for concern. Even though most of the laboratory tests done relied on the use of a microscope and the tests for malaria were the most commonly requested, external quality control and supervision visits appeared to be more frequent for other tests, for example tuberculosis tests, than for malaria tests. All patients admitted with a malaria diagnosis should be microscopically confirmed.

It is recommended that all malaria case reporting systems should be integrated into one standardized system in terms of using the same data collection forms and tools. One approach could be the diagram presented in Figure 6, where malaria data would be reported weekly from the health facility to district/provincial levels and reported monthly from the provincial to the national levels [14].

Following the diagram, on the ground (health facilities), the routinely collected malaria cases (both laboratory and clinical) should include age groups, gender and pregnancy status. All this information should be collected in one form and sent to the district office. At the district level, the person dealing with statistics should quality check, aggregate the items per health facility, analyse and send the data to the province. In addition, this person should also evaluate time trends for eventual feedback to the health facilities. At the provincial level data should be aggregated by health district and sent to the national managers on a monthly basis as well as back to the districts for feedback. In addition, data regarding monitoring of therapeutic failures, drug efficacy and laboratory efficacy testing should be obtained quarterly from sentinel district hospitals (Figure 6).
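The aggregation steps recommended above can be sketched in code. Everything here is an assumption made for illustration: the `CaseRecord` fields, the facility and district names, and the use of individual case records are hypothetical, chosen to reflect the variables the text says a single form should carry (age group, gender, pregnancy status, and laboratory versus clinical diagnosis).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseRecord:
    district: str
    facility: str
    age_group: str   # "0-4", "5-15" or "15+", following the Malaria Programme groups
    gender: str      # "F" or "M"
    pregnant: bool
    confirmed: bool  # True if laboratory confirmed, False if clinical only


def aggregate(records, level):
    """Count cases per (unit, age_group, gender, pregnant, confirmed).

    `level` is "facility" for the district office's view or "district"
    for the provincial view.
    """
    counts = Counter()
    for r in records:
        unit = getattr(r, level)
        counts[(unit, r.age_group, r.gender, r.pregnant, r.confirmed)] += 1
    return counts


def confirmed_share(records):
    """Share of all reported cases that are laboratory confirmed."""
    return sum(r.confirmed for r in records) / len(records)


# Made-up records for hypothetical facilities and districts.
records = [
    CaseRecord("District X", "HC 1", "0-4", "F", False, True),
    CaseRecord("District X", "HC 1", "0-4", "F", False, True),
    CaseRecord("District X", "HC 2", "15+", "F", True, False),
    CaseRecord("District Y", "HC 3", "5-15", "M", False, False),
]

by_facility = aggregate(records, "facility")  # district-level aggregation
by_district = aggregate(records, "district")  # provincial-level aggregation
print(by_facility)
print(by_district)
print(confirmed_share(records))
```

Because the same keys are used at every level, indicators such as cases among children under five or among pregnant women can be calculated at facility, district or provincial level from one data set, rather than from four separate reporting channels.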

This could thus act as a driving force for developing a rational database supporting GIS applications to better map malaria cases by health area, as is being strongly promoted by WHO-SAMC throughout the Southern Africa region.

Finally, although this study was carried out on a limited scale with few analytical tools, it has been able to ascertain constraints related to poor routine health data. This should, therefore, be seen as a basis for a much more comprehensive analysis across the country. This research, by attempting to show the reporting systems where malaria is endemic and is the principal cause of mortality, will contribute not only to the Mozambican Malaria Managers and Public Health Specialists but also to the world affected by malaria burden.

References

1. Alnwick D: Rejecting 'business as usual' for Malaria Control in Emergencies. Health in Emergencies WHO. 2001, 2-6.

2. White NJ, Breman JG: Malaria and Babesiosis: Diseases Caused by Red Blood Cell Parasites. In: Harrison's Principles of Internal Medicine. Edited by: Braunwald E, Isselbacher KJ, Kasper DL, Hauser SL, Longo DL, Jameson JL. 2001, 1-21. 15

3. Foster S, Phillips M: Economics and its contribution to the fight against malaria. Ann Trop Med Parasitol. 1998, 92: 391-398. 10.1080/00034989859375.

4. Hammerich A, Campbell O, Chandramohan D: Unstable malaria transmission and maternal mortality – experiences from Rwanda. Trop Med Int Health. 2002, 7: 573-576. 10.1046/j.1365-3156.2002.00898.x.

5. Mozambique CCC: Mozambican National Initiative To Accelerate Access To Prevention, Care, Support, And Treatment For Persons Affected By HIV/AIDS, Tuberculosis And Malaria. Global Fund To Fight AIDS, Tuberculosis and Malaria. Global Fund Secretariat. 2002

6. Schwalbach J, de la Maza M: A malária em Moçambique (1937–1973). Maputo, Moçambique: Instituto Nacional de Saúde, Ministério da Saúde. 1985

7. Africa Malaria Report. [http://www.afro.who.int/amd_2003/mainreport.pdf]

8. MISAU: Roll Back Malaria; Strategic Plan for 2002–2005. Ministry of Health Mozambique and WHO. 1999

9. Malaria control in the Lubombo region. [http://www.malaria.org.za/lsdi/Overview/overview.html]

10. MISAU: Plano Anual Integrado 2002 – Epidemiologia e Grandes Endemias. Ministry of Health Mozambique. Maputo. 2002

11. Greenwood B, Mutabingwa T: Malaria in 2002. Nature. 2002, 415: 670-672. 10.1038/415670a.

12. Heywood A, Rohde J: Using Information for Action: A manual for health workers at facility level. 2001, 21-95.

13. NEHC: Navy Medical Department – Pocket Guide to Malaria Prevention and Control. Virtual Naval Hospital. 2000

14. Malaria, Norms and Standards in Epidemiology: Guidelines for Epidemiological Surveillance. [http://www.paho.org/english/sha/be_v20n2-cover.htm]

Acknowledgements

We are grateful for the cooperation from the staff of the health districts in Mozambique, with special attention to the health workers and managers in Maxixe, Inhambane-city, Chókwe and Xai-Xai districts. We are especially grateful to Dr Francisco Saúte and Jagrati Jani (Malaria Programme Headquarters, MISAU), Dr Martinho Dgedge (National Health Directorate), Dr Artur Machava (Chokwe Rural Hospital), Dr Ladino (Chicuque Rural Hospital), Dr Naftal Matusse (Maxixe Directorate of Health) and Mr Adriano (Malaria Programme, Inhambane). Finally and most importantly, we thank Sundeep Sahay, Jennifer Blechar, the HISP team members, the external Reviewers and the Editor-in-Chief of the Malaria Journal for support and encouragement.

Authors' contributions

Chilundo has conducted the work in the field, edited and prepared the manuscript. Sundby and Aanestad have contributed in analysing the findings and in the final editing of the manuscript.

About this article

Cite this article

Chilundo, B., Sundby, J. & Aanestad, M. Analysing the quality of routine malaria data in Mozambique. Malar J 3, 3 (2004). https://doi.org/10.1186/1475-2875-3-3
