Abstract

Background

There have been numerous efforts to improve and assure the quality of treatment and follow-up of people with Type 2 diabetes (PT2D) in general practice. Facilitated by the increasing usability and validity of guidelines, indicators and databases, feedback on diabetes care is a promising tool in this respect. Our goal was to assess, based on the available literature, the effect of feedback to general practitioners (GPs) on the quality of care for PT2D.

Methods

Systematic review searches were conducted using October 2008 updates of Medline (Pubmed), Cochrane library and Embase databases. Additional searches in reference lists and related articles were conducted. Papers were included if published in English, performed as randomized controlled trials, studying diabetes, having general practice as setting and using feedback to GPs on diabetes care. The papers were assessed according to predefined criteria.

Results

Ten studies complied with the inclusion criteria. Feedback improved the care for PT2D, particularly process outcomes such as foot exams, eye exams and HbA1c measurements. Improvements in clinical outcomes, such as lower blood pressure, HbA1c and cholesterol levels, were seen in few studies. Many process and outcome measures did not improve, while none deteriorated. Meta-analysis was unfeasible due to heterogeneity of the studies included. Two studies used electronic feedback.

Conclusion

Based on this review, feedback seems a promising tool for quality improvement in diabetes care, but more research is needed, especially of electronic feedback.

Background

In efforts to improve and assure the quality of care in general practice, information technology is increasingly used [1]. Quality improvement tools for diabetes, often delivered electronically, include general information and clinical guidelines, feedback on the quality of care, and patient information letters. Furthermore, many general practitioners (GPs) use electronic patient records. The use of information technology in general practice is facilitated by the increasing number and validity of clinical databases [2]. These databases make it possible to extract accurate information easily and at low cost.

In 2006, a Cochrane review concluded that "Audit and feedback can be effective in improving professional practice (...) the relative effectiveness of audit and feedback is likely to be greater when baseline adherence to recommended practice is low (...)" [3].

Recently published figures [4] show that 88% of native Danish PT2D had their HbA1c measured at least once during 2003; for eye examinations the figure was 33%, increasing to only 61% over a four-year period. Seventy percent of the native Danish PT2D had an annual serum cholesterol measurement. These figures show that there is room for improvement in the care of PT2D.

A recent review of prompting clinicians about preventive care measures revealed a modest effect, with cardiac care and smoking cessation reminders being most effective [5]. The aim of this paper was to review the available literature on the effect of feedback on diabetes care in general practice, and more specifically to examine whether feedback to GPs had an effect on process and outcome measures for the quality of care for PT2D, and to what extent electronic feedback had been investigated. We define feedback as "any summary of clinical performance of health care over a specified period of time". Electronic feedback is defined in the same way but delivered to the end user via computer [3].

Methods

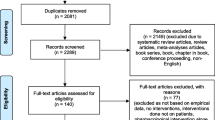

A search was conducted using October 2008 updates of Medline (Pubmed), Cochrane library and Embase databases. Only papers written in English were included in this review. The searches were not limited by time period.

Separate searches were conducted on the following MeSH and in-text terms:

#1: Type 2 Diabetes, #2: General Practice, #3: Family medicine, #4: Family medicin, #5: Family Practice, #6: Feedback, #7: Decision support, #8: Reminder system.

These searches were then combined into: #9: (#2 or #3 or #4 or #5) and #10: (#6 or #7 or #8). Finally, all searches were combined into #11: (#1 and #9 and #10).
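The combination of search sets described above can be sketched in a few lines of Python. This is only an illustration of the Boolean logic: the helper names (`any_of`, `all_of`), the phrase quoting and the upper-case `OR`/`AND` operators are assumptions made for this sketch, and the exact query syntax entered into each database is not stated in this paper.

```python
# Search sets as numbered in the text (#1 to #8); terms are copied verbatim.
TERMS = {
    1: "Type 2 Diabetes",
    2: "General Practice",
    3: "Family medicine",
    4: "Family medicin",
    5: "Family Practice",
    6: "Feedback",
    7: "Decision support",
    8: "Reminder system",
}


def any_of(ids):
    """OR-combine the given search sets, quoting each term as a phrase."""
    return "(" + " OR ".join(f'"{TERMS[i]}"' for i in ids) + ")"


def all_of(parts):
    """AND-combine already bracketed sub-queries."""
    return " AND ".join(parts)


setting = any_of([2, 3, 4, 5])       # corresponds to #9
intervention = any_of([6, 7, 8])     # corresponds to #10
final_query = all_of([any_of([1]), setting, intervention])  # corresponds to #11

print(final_query)
```

Keeping the setting terms and the intervention terms in separate OR-groups mirrors the structure of #9 and #10, so a synonym can be added to one group without touching the other.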

The search and assessment strategies used in this review were based on course material from the DIRAC course of Systematic reviews and Meta Analysis held in Copenhagen in August 2007 [6]. However, as the aim was not to perform a meta-analysis or a thorough rating of the evidence, only one researcher did the searching and primary assessments. The first author (TLG) performed all searches and assessments and discussed any doubts with the rest of the research team. Further, all results were scrutinised by the research group.

The search term "Type 2 Diabetes" was chosen over "Diabetes Mellitus" to fulfil the aim of this review, which does not consider "Type 1 Diabetes". Even though the MeSH term "Type 2 Diabetes" is relatively new, it retrieved papers published between 1994 and 2005. One unpublished study was considered for inclusion, based on published work concerning the methodology of the study. However, correspondence with the research team running the trial was inconclusive, and the study was therefore not reviewed. Reviews that appeared in the searches were used to check the reference lists for includable randomized trials. The term "reminder system" was included as it was discovered that this term often covered what we defined as feedback. To distinguish between reminder system and feedback we assessed each of the retrieved papers separately.

The reference lists of the papers retrieved were checked for includable papers, as was the "related articles" facility in PubMed. An initial database search retrieved six papers, and a search of the related articles and reference lists yielded an additional eight papers. Ten of the 14 papers complied with the following inclusion criteria:

• Randomised trial

• Study concerning diabetes

• Study set in general practice

• Interventions aiming at using feedback to GPs on diabetes care.

The remaining four articles were excluded because they did not concern feedback.
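The selection counts reported above can be checked with a short tally. The figures are taken directly from the text; the variable names are illustrative only and are not part of the review protocol.

```python
# Counts as reported in the Methods text.
database_hits = 6                 # papers from the initial database search
related_and_reference_hits = 8    # papers from related articles and reference lists
included = 10                     # papers meeting all inclusion criteria

candidates = database_hits + related_and_reference_hits
excluded = candidates - included  # papers that did not concern feedback

print(f"assessed: {candidates}, included: {included}, excluded: {excluded}")
```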

Assessment

The included studies were assessed according to predefined criteria:

• Aim of the study (How well did the design cohere with the study aim?)

• Method of evaluation (How well did methods of analysis cohere with study design?)

• Format of feedback/intervention (Electronic or not)

• Effect measure divided into:

+ Process measures, i.e. are things done? Is, e.g. HbA1c measured according to the study-based guidelines?

+ Outcome measures, i.e. has the level improved? Have, e.g. the HbA1c levels improved according to the aims of the study-based guidelines?

• Effect of feedback

• Data collection

• Problems identified in randomization, sampling, blinding and drop-out

Results

The ten papers presented ten different studies. Of these, six were conducted in the USA, one in New Zealand, one in The Netherlands and two in Scandinavia. The papers were published between 1994 and 2005, two of them before 2000. The duration of the trials varied between two months and six years, with a median duration of 12 months.

Table 1 summarizes the studies by design, number of participants, trial duration and data collection. The method of evaluation and effect measures are summarized in Table 2, and statistically significant effect measures are summarized in Table 3.

Aim of the studies

All studies aimed at improving the GPs' adherence to the diabetes guidelines in order to improve the care for PT2D. All the studies leaned on existing guidelines or developed guidelines as a part of the intervention.

Format of feedback (interventions)

Nine studies used feedback distributed to the GPs in printed format [7–14] and two used electronic feedback [14, 15]. One study using electronic feedback also distributed the reports on paper [12]. Eight studies generated patient specific feedback [7, 9–12, 14, 15] while two generated aggregated feedback for specific practices or physicians [8, 16].

Study design used to evaluate feedback varied according to whether the feedback was the single aim of evaluation, or the feedback was only part of a larger intervention with the aim of evaluating other means of quality improvement as well. Six study groups concentrated only on feedback in their interventions [9, 10, 13–16]. Of these, one added benchmarks to the feedback in one intervention arm [16], and one let the patients fill in the feedback reports in the GP's waiting room [13].

Three studies compared regular feedback to other interventions, or combined feedback with other means of support: one combined feedback with outreach visits [8]; one compared feedback with face-to-face evaluation with an endocrinologist [7]; and one compared feedback filled in by patients in the waiting room and delivered in paper format with a computer reminder consisting of a blinking icon on the GP's computer screen [11].

One study categorised the intervention as multifaceted, and feedback to the GPs was part of the intervention [12].

Effect measures

All studies used process measures that were part of routine diabetes management, e.g. measuring blood glucose and serum cholesterol. Some used composite outcomes such as compliance rates, measuring the compliance of the GPs with the diabetes guidelines, while others focused on specific process or outcome measures (Table 2).

One study differed from the others by using groups of endpoints defined as: PT2D satisfaction (PS), Diabetes specific quality of life (Dsql) and Depression severity symptoms (Dss) evaluated by using validated questionnaires [13]. These endpoints were based on questionnaires and process measures combined.

Data collection

Data were collected via chart review [9, 10, 15, 16], encounter forms filled in by the participating GPs [8, 11], databases [14], or by combining the methods [10, 11]. In one study, all included patients were examined by one data collecting member of the research team [7].

Effect of feedback

One study showed no statistically significant changes [10]. Nine papers reported a total of 23 statistically significant positive changes [7–9, 11–16]. Fifty-one variables in nine papers did not change statistically significantly. No negative changes were reported (see Table 3). The process measure most often improved was foot examination. Aggregated outcomes improved significantly in three trials [9, 13, 14].

Long term effects

Our searches revealed three interventions which lasted more than three years [7, 12, 16]. All three interventions showed significant positive results. In one trial, the researchers concentrated on process measures. They showed significant results on foot examination, HbA1c measurement and influenza vaccination [16]. The two other trials both included outcome measures, and both showed significant improvements in HbA1c levels, serum cholesterol levels and blood pressure levels [7, 12]. One long-term trial included outcome measures such as mortality, diabetic retinopathy, acute myocardial infarction (AMI), stroke, angina pectoris, claudication and amputation, but did not show significant changes in any of these measures over a period of six years.

Methodological problems identified

In two studies, the participating GPs all worked in the same health facility [7, 10]. This may have produced a spill-over effect. There was profound sampling variation among the included studies. For example, one study group set up inclusion criteria for the GPs' participation in the study [9]. Another study group invited the participating GPs through ads in newspapers [8]. In one study, only 5% of the invited GPs participated [13]. This variation in sampling makes comparison between the included studies difficult and meta-analysis inappropriate.

All studies were unblinded to participating doctors, as allocation concealment was not possible. Some attempts at blinding were made in one study [17]. However, three study groups did anticipate the problem of contamination between intervention and control groups. One performed statistical analysis to investigate the size of the problem, which was seemingly insignificant [7]. Another study group argued against contamination due to lack of blinding in the study, based on recorded differences between the control and intervention groups, but without performing any statistical analysis of the size of the problem [8]. Lastly, one study group, as mentioned above, attempted blinding. However, the researchers themselves argue in the paper that the blinding was not extensive enough.

Electronic feedback

Two trials complied with the definition of using electronic feedback [14, 15]. Both trials obtained statistically significant effects on single measurements: cholesterol measurement and blood pressure level, respectively. In addition, one of the trials demonstrated a statistically significant improvement in the compliance rate (Table 3).

Discussion

Main findings

This review indicates that it is possible to improve the quality of diabetes care using feedback to GPs. Significant positive changes are seen primarily in process measures, but also in outcomes of Type 2 diabetes care. In many studies, large numbers of effect measures showed no significant change, but no study group reported deterioration in effect measures. In Table 2, effect measures which showed significant positive changes are marked. All of the included studies leaned on national diabetes guidelines or developed diabetes guidelines for good control and care within the study.

Implications of findings

The findings of this review indicate that feedback could be a valuable asset in quality improvement efforts. In the 2006 Cochrane Review referred to earlier in this paper, it was stated that "(...) the relative effectiveness of audit and feedback is likely to be greater when baseline adherence to recommended practice is low (...)" [3]. The results of this review support that statement to a certain extent: among the endpoints reviewed, three showed significant improvement in more than one trial, namely the number of foot examinations performed and the mean levels of HbA1c and blood pressure. Foot examination is traditionally an endpoint with low guideline adherence. Mean levels of HbA1c and blood pressure are influenced by lifestyle changes and medication alterations, and so are very dependent on a strong commitment from patients to alter their lifestyle. Often, this strong dependency on lifestyle changes can be unappealing to doctors, because motivating the patient to change lifestyle is a difficult path to embark on [18].

Strengths and weaknesses

The strength of this review is the specific aim limiting the searches to include only feedback trials on diabetes care in general practice. Ideally, this would optimise the homogeneity of the studies and strengthen the conclusions of the review.

One weakness was the risk of missing important trials. Papers written in languages other than English were excluded, and it has been reported that such exclusion of trials in systematic reviews increases the likelihood of systematic errors and reduces precision [19].

It was not possible to perform meta-analysis on the findings due to heterogeneity of the studies included: the designs and methods of feedback were too inconsistent, and the endpoints measured and the duration of the trials varied too much. The aim of all included studies, however, was to improve diabetes care in general practice according to the relevant guidelines. This common goal, paired with the delivery of feedback to GPs in all included studies, makes it relevant to draw conclusions based on this review.

Delivering feedback

Clinicians have limited time to concentrate on quality improvement in daily practice, which is why efforts to improve and sustain quality should be made as easily available and usable as possible [20]. Using computers is generally considered positive and time-saving in the daily routines of GPs [21, 22]. Considering this, it is striking that so few trials have tested electronic feedback on diabetes care in general practice.

Evaluating feedback

An important point when evaluating research within the field of quality improvement is choosing the relevant effect measures [18]. The studies included in this review were not consistent in choosing effect measures.

Another issue is whether the changes detected in the effect measures are actually attributable to feedback. In this paper we included one obvious multifaceted intervention [12]. While multifaceted interventions certainly have their place in investigating quality improvement in diabetic care, it can be difficult to know exactly which efforts made the difference. We are aware that attributing the significant changes in the care of the PT2D in the included multifaceted intervention solely to the efforts of providing feedback to the GPs probably is an over-interpretation. However, the effort of providing GPs with continuous feedback was made, and therefore the intervention should be considered in this review.

In this study we have limited our search to randomized controlled trials (RCTs). This approach is debatable. One could argue that, while RCTs are often referred to as the gold standard of trial designs, they provide a one-shot effect, which often covers a short time frame of the patient's medical history. It is evident, when researching within the field of chronic disease management, that long-term effects of interventions are very relevant. However, follow-up over time and without specific interventions demonstrates huge improvements in diabetic care [23]. Thus, it is difficult in non-RCTs to be sure that the improvement in care is due to the specific intervention applied. Within the field of feedback on diabetes care to general practitioners, non-RCT long-term research has revealed effects on process, outcome and the overall provision of diabetes care [24, 25]. Even though these studies have not been included in this review because of the design choices made, their results support the conclusion that feedback on diabetes care to general practitioners can lead to better quality of care, and that this improvement could possibly be long term.

Conclusion

We found that disease-specific feedback to GPs has an effect on diabetes care in general practice. Identifying which effect measures are most affected by the interventions proved difficult.

Even though feedback seems a promising tool for quality improvement, it was only possible to identify ten very heterogeneous studies that evaluated feedback to GPs on diabetes care in randomized, controlled trials. Only two trials evaluated electronic feedback, and it was not possible to quantify the added effect of electronic feedback. Therefore, more research in this area is required.

Abbreviations

• GP: General practitioner

• PT2D: People with type 2 diabetes

• Boc: Based on own calculations on data extracted from paper

• Duc: Data unavailable for further calculations

• OR: Odds ratio

• HR: Hazard ratio

• Inh: Inhibitor

• Vac: Vaccination

• Exam: Examination

References

Heathfield H, Pitty D, Hanka R: Evaluating information technology in health care: barriers and challenges. BMJ. 1998, 316: 1959-1961.

Thiru K, Hassey A, Sullivan F: Systematic review of scope and quality of electronic patient record data in primary care. BMJ. 2003, 326: 1070. 10.1136/bmj.326.7398.1070.

Jamtvedt G, Young JM, Kristoffersen DT, Thomson O'Brien MA, Oxman AD: Audit and feedback: effects on professional practice and health care outcomes. Cochrane Database Syst Rev. 2003, CD000259-2

Kristensen JK, Bak JF, Wittrup I, Lauritzen T: Diabetes prevalence and quality of diabetes care among Lebanese or Turkish immigrants compared to a native Danish population. Primary Care Diabetes. 2007, 1: 159-165. 10.1016/j.pcd.2007.07.007.

Dexheimer JW, Talbot TR, Sanders DL, Rosenbloom ST, Aronsky D: Prompting clinicians about preventive care measures: a systematic review of randomized controlled trials. J Am Med Inform Assoc. 2008, 15: 311-320. 10.1197/jamia.M2555.

Götzsche PC: DIRAC Course of Systematic Reviews and Meta Analysis. 2007, [http://www.diracforsk.dk]

Phillips LS, Ziemer DC, Doyle JP, Barnes CS, Kolm P, Branch WT, Caudle JM, Cook CB, Dunbar VG, El-Kebbi IM, et al: An Endocrinologist-Supported Intervention Aimed at Providers Improves Diabetes Management in a Primary Care Site: Improving Primary Care of African Americans with Diabetes (IPCAAD) 7. Diabetes Care. 2005, 28: 2352-2360. 10.2337/diacare.28.10.2352.

Frijling BD, Lobo CM, Hulscher ME, Akkermans RP, Braspenning JC, Prins A, Wouden van der JC, Grol RP: Multifaceted support to improve clinical decision making in diabetes care: a randomized controlled trial in general practice. Diabet Med. 2002, 19: 836-842. 10.1046/j.1464-5491.2002.00810.x.

Lobach DF, Hammond WE: Development and evaluation of a Computer-Assisted Management Protocol (CAMP): improved compliance with care guidelines for diabetes mellitus. Proc Annu Symp Comput Appl Med Care. 1994, 787-791.

Nilasena DS, Lincoln MJ: A computer-generated reminder system improves physician compliance with diabetes preventive care guidelines. Proc Annu Symp Comput Appl Med Care. 1995, 640-645.

Kenealy T, Arroll B, Petrie KJ: Patients and Computers as Reminders to Screen for Diabetes in Family Practice. Randomized-Controlled Trial. Journal of General Internal Medicine. 2005, 20: 916-921. 10.1111/j.1525-1497.2005.0197.x.

Olivarius NF, Beck-Nielsen H, Andreasen AH, Horder M, Pedersen PA: Randomised controlled trial of structured personal care of type 2 diabetes mellitus. BMJ. 2001, 323: 970-975. 10.1136/bmj.323.7319.970.

Glasgow RE, Nutting PA, King DK, Nelson CC, Cutter G, Gaglio B, Rahm AK, Whitesides H, Amthauer H: A Practical Randomized Trial to Improve Diabetes Care. Journal of General Internal Medicine. 2004, 19: 1167-1174. 10.1111/j.1525-1497.2004.30425.x.

Sequist TD, Gandhi TK, Karson AS, Fiskio JM, Bugbee D, Sperling M, Cook EF, Orav EJ, Fairchild DG, Bates DW: A randomized trial of electronic clinical reminders to improve quality of care for diabetes and coronary artery disease. J Am Med Inform Assoc. 2005, 12: 431-437. 10.1197/jamia.M1788.

Hetlevik I, Holmen J, Kruger O, Kristensen P, Iversen H, Furuseth K: Implementing clinical guidelines in the treatment of diabetes mellitus in general practice. Evaluation of effort, process, and patient outcome related to implementation of a computer-based decision support system. Int J Technol Assess Health Care. 2000, 16: 210-227. 10.1017/S0266462300161185.

Kiefe CI, Allison JJ, Williams OD, Person SD, Weaver MT, Weissman NW: Improving quality improvement using achievable benchmarks for physician feedback: a randomized controlled trial. JAMA. 2001, 285: 2871-2879. 10.1001/jama.285.22.2871.

Lobach DF, Hammond WE: Computerized Decision Support Based on a Clinical Practice Guideline Improves Compliance with Care Standards. The American Journal of Medicine. 1997, 102: 89-98. 10.1016/S0002-9343(96)00382-8.

Jallinoja P, Absetz P, Kuronen R, Nissinen A, Talja M, Uutela A, Patja K: The dilemma of patient responsibility for lifestyle change: Perceptions among primary care physicians and nurses. Scandinavian Journal of Primary Health Care. 2007, 25: 244-249. 10.1080/02813430701691778.

Moher D, Fortin P, Jadad A, Jüni P, Klassen T, Le Lorier J, Liberati A, Linde K, Penna A: Completeness of reporting of trials published in languages other than English: implications for conduct and reporting of systematic reviews. Lancet. 1996, 347 (8998): 363-6. 10.1016/S0140-6736(96)90538-3.

Langley C, Faulkner A, Watkins C, Gray S, Harvey I: Use of guidelines in primary care – practitioners' perspectives. Fam Pract. 1998, 15: 105-111. 10.1093/fampra/15.2.105.

Gandhi TK, Sequist TD, Poon EG, Karson AS, Murff H, Fairchild DG, Kuperman GJ, Bates DW: Primary care clinician attitudes towards electronic clinical reminders and clinical practice guidelines. AMIA Annu Symp Proc. 2003, 848-

Stead WW, Haynes RB, Fuller S, Friedman CP, Travis LE, Beck JR, Fenichel CH, Chandrasekaran B, Buchanan BG, Abola EE, et al: Designing medical informatics research and library – resource projects to increase what is learned. J Am Med Inform Assoc. 1994, 1: 28-33.

Kristensen JK, Lauritzen T: [Quality indicators of type 2-diabetes monitoring during 2000–2005]. Ugeskr Laeger. 2009, 171: 130-134.

de Grauw WJ, van Gerwen WH, Lisdonk van de EH, Hoogen van den HJ, Bosch van den WJ, van WC: Outcomes of audit-enhanced monitoring of patients with type 2 diabetes. J Fam Pract. 2002, 51: 459-464.

Valk GD, Renders CM, Kriegsman DM, Newton KM, Twisk JW, van Eijk JT, van der WG, Wagner EH: Quality of care for patients with type 2 diabetes mellitus in the Netherlands and the United States: a comparison of two quality improvement programs. Health Serv Res. 2004, 39: 709-725. 10.1111/j.1475-6773.2004.00254.x.

Pre-publication history

The pre-publication history for this paper can be accessed here: http://www.biomedcentral.com/1471-2296/10/30/prepub

Acknowledgements

Funding was given by Regional and National Public Foundations, as well as Vissings Foundation, Danielsens Foundation and The A.P. Møllers Foundation Promoting Medical Science.

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors' contributions

TLG co-designed the study, carried out searches and assessments, and drafted the manuscript. TL conceived of the study and participated in the writing process. JKK revised the manuscript and co-designed the study. PV co-designed the study, and was part of the assessments and the writing process. All authors have read and approved the final manuscript.

Rights and permissions

This article is published under license to BioMed Central Ltd. This is an Open Access article distributed under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Guldberg, T.L., Lauritzen, T., Kristensen, J.K. et al. The effect of feedback to general practitioners on quality of care for people with type 2 diabetes. A systematic review of the literature. BMC Fam Pract 10, 30 (2009). https://doi.org/10.1186/1471-2296-10-30

DOI: https://doi.org/10.1186/1471-2296-10-30