Abstract

Background

More than 200 studies related to nucleic acid amplification (NAA) tests to detect Mycobacterium tuberculosis directly from clinical specimens have appeared in the world literature since this technology was first introduced. NAA tests come as either commercial kits or as tests designed by the reporting investigators themselves (in-house tests). In-house tests vary widely in their accuracy, and factors that contribute to heterogeneity in test accuracy are not well characterized. Here, we used meta-analytical methods, including meta-regression, to identify factors related to study design and assay protocols that affect test accuracy in order to identify those factors associated with high estimates of accuracy.

Results

By searching multiple databases and sources, we identified 2520 potentially relevant citations, and analyzed 84 separate studies from 65 publications that dealt with in-house NAA tests to detect M. tuberculosis in sputum samples. Sources of heterogeneity in test accuracy estimates were determined by subgroup and meta-regression analyses. Among 84 studies analyzed, the sensitivity and specificity estimates varied widely; sensitivity varied from 9.4% to 100%, and specificity estimates ranged from 5.6% to 100%. In the meta-regression analysis, the use of IS6110 as a target, and the use of nested PCR methods appeared to be significantly associated with higher diagnostic accuracy.

Conclusion

Estimates of accuracy of in-house NAA tests for tuberculosis are highly heterogeneous. The use of IS6110 as an amplification target, and the use of nested PCR methods appeared to be associated with higher diagnostic accuracy. However, the substantial heterogeneity in both sensitivity and specificity of the in-house NAA tests made it difficult to derive clinically useful estimates of test accuracy. Future development of NAA-based tests to detect M. tuberculosis from sputum specimens should take these findings into consideration to improve the accuracy of in-house NAA tests.

Background

Accurate and early diagnosis of tuberculosis (TB) is a critical step in the management and control of TB. Because conventional tests for TB have several limitations, nucleic acid amplification (NAA) tests have emerged as promising alternatives. The polymerase chain reaction (PCR) is the best-known and most widely used NAA test. NAA tests are categorized as commercial (kit-based) or in-house ("home-brew"). In-house tests are those assays where the investigators design their own protocols. In-house tests are commonly used in developing countries where commercial kits may not be affordable. The accuracy of NAA tests for TB has been extensively studied since the early 1990s. Since hundreds of studies have evaluated the accuracy of NAA tests, it is now possible to evaluate their overall performance using meta-analysis methods and determine which study design or test-related characteristics are associated with higher diagnostic accuracy.

Several systematic reviews and meta-analyses have been published in the past few years on the accuracy of NAA tests for pulmonary and extra-pulmonary TB [1–5]. Because these meta-analyses and reviews have synthesized data from over 200 primary studies, and because their results are highly consistent with each other, they provide us with the best available evidence on the accuracy of NAA tests. The main findings of the meta-analyses and reviews are as follows. Most of the studies on NAA tests reported very high estimates of specificity, for both pulmonary and extra-pulmonary TB [1–5]. Sensitivity estimates, in contrast, have been much lower and highly variable (heterogeneous) [1–5]. Sensitivity estimates have been lower in paucibacillary forms of TB (smear-negative pulmonary and extra-pulmonary TB), and higher in smear-positive pulmonary TB [2, 3]. Another striking result is the widespread lack of consistency in accuracy estimates across studies: studies have reported highly variable estimates of test accuracy [1–5]. For example, in our previous meta-analysis on NAA tests for tuberculous meningitis, sensitivity estimates ranged from 0% to 100% [3]. In general, almost all the meta-analyses have demonstrated that the sensitivity and specificity of in-house PCR assays have been more variable and inconsistent than those of commercial tests [2, 3, 5].

Why do studies on in-house PCR assays produce such highly variable estimates of sensitivity and specificity? Is the variability due to differences in study design or to differences in assay characteristics and laboratory techniques? Are there specific study design features and assay characteristics that yield higher estimates of accuracy? Answers to these questions might help to identify features of NAA tests that maximize accuracy. However, these questions are difficult to address in individual studies. Meta-analytic methods, on the other hand, are well suited to exploring why studies produce variable results. By synthesizing data from multiple studies and increasing the power of analyses, meta-analyses are able to employ techniques that help to identify sources of heterogeneity in study findings. In this meta-analysis, we reviewed 65 published studies on in-house NAA tests for pulmonary TB. The main objective of our meta-analysis was to determine factors associated with heterogeneity, as well as with higher estimates of accuracy, in studies that evaluated in-house PCR for the diagnosis of pulmonary TB.

Results

Study selection

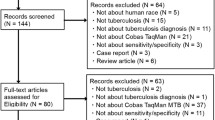

By searching multiple databases and sources, we identified 2520 potentially relevant citations on NAA tests for tuberculosis. After screening titles and abstracts, 434 English and Spanish articles were selected for full-text review, of which 129 reported inclusion of sputum specimens tested by an in-house PCR assay. Sixty-one articles were then excluded, mainly because data were not separately provided for sputum samples (sputum and other clinical specimens were analyzed together). Three further articles were excluded because they used real-time PCR and were too few to form a separate category. A total of 65 articles [10–74] were included in the final analysis. Four articles were in Spanish [19, 41, 46, 53]. Thirteen articles reported evaluations of more than one NAA test against a common reference standard [11, 13, 14, 20, 28, 36, 47, 54, 60, 62, 68, 69, 72]. Each such test comparison was counted as a separate study. Thus, the total number of test comparisons (hereafter referred to as studies) was 84.

Characteristics of included studies

The summary characteristics of the included studies are shown in Table 1. The average sample size of the included studies was 149 (range 14 to 727). Our data, as seen in Table 1, were affected by the poor quality of reporting in the primary studies. Fifty-five of 84 (65.5%) studies did not report blinded interpretation of NAA test independent of the reference standard, while only 29 of 84 (34.5%) reported single or double blinding of NAA test and the reference standard. Most of the studies, 60 of 84 (72%), were cross-sectional whereas 24 (28%) were case-control studies. The studies differed greatly in terms of laboratory characteristics. Fifty-four of 84 (65%) studies used IS6110 as amplification target by itself or in combination with other targets, and 30 studies (35%) used other targets (e.g. MPB64, 38 kDa). The studies were categorized as those using any chemical method for DNA extraction (including phenol-chloroform) and those in which any physical or mechanical extraction method was used. Sixty-eight of 84 (81%) studies reported a simple PCR protocol (including multiplex PCR), whereas 16 (19%) studies used a nested or seminested PCR protocol. Lastly, 49 of 84 (58%) studies used UV transillumination of an electrophoretic gel, and 35 (42%) used DNA hybridization probes to detect the amplification products.

Overall diagnostic accuracy

When all 84 studies were evaluated together, the sensitivity estimates ranged from 9.4% to 100%, and specificity estimates ranged from 5.6% to 100%. Both measures were highly heterogeneous (P < 0.001 for test of heterogeneity). Figure 1 shows the overall accuracy of PCR in a summary receiver operating characteristic (SROC) plot. The symmetric curve shows a trade off between sensitivity and specificity. The area under the SROC curve was 97%, and summary DOR was 159.4, indicating high accuracy. However, the significant heterogeneity in sensitivity and specificity estimates precluded the determination of clinically useful summary measures.
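As a rough consistency check on the two summary figures above, the area under a symmetric SROC curve can be written in closed form as a function of a constant DOR. The sketch below assumes that the curve is exactly symmetric, so that a single DOR applies at every threshold. The function name `symmetric_sroc_auc` is ours, and the review does not state that its reported area was obtained in this way.

```python
import math


def symmetric_sroc_auc(dor: float) -> float:
    """Area under a symmetric SROC curve with a constant diagnostic odds ratio.

    On such a curve TPR = DOR * FPR / (1 + (DOR - 1) * FPR). Integrating TPR
    over FPR from 0 to 1 gives DOR / (DOR - 1)^2 * ((DOR - 1) - ln(DOR)).
    """
    if dor <= 0:
        raise ValueError("DOR must be positive")
    if math.isclose(dor, 1.0):
        # A DOR of 1 is the chance diagonal, whose area is 0.5.
        return 0.5
    return dor / (dor - 1) ** 2 * ((dor - 1) - math.log(dor))


# Summary DOR reported in the text above.
auc = symmetric_sroc_auc(159.4)
print(f"AUC implied by DOR = 159.4: {auc:.3f}")  # roughly 0.974
```

Under this assumption, a DOR of 159.4 implies an area of about 97%, which agrees with the reported value.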

Exploration of heterogeneity

In order to identify factors associated with heterogeneity, we performed stratified (subgroup) analyses. Table 2 presents the study quality and assay factors assessed and their effect on the estimated summary diagnostic odds ratio (DOR). As seen in Table 2, studies that did not report the use of blinding produced a DOR nearly 2.5 times higher than studies that reported blinded interpretation of index test and reference standard results. Studies with PCR tests that used a nested protocol had a DOR almost 2 times higher than those using a regular PCR protocol. Studies that used IS6110 as the amplification target had a DOR 1.7 times higher than studies that used any other target. A similar result was obtained when studies that used UV transillumination of a gel were compared to those that used a probe for detection. Studies that used chemical reagents for DNA extraction produced DOR estimates that were about 1.12 times greater than those of studies that used physical methods, indicating that the use of chemicals (including phenol-chloroform) does not significantly improve test accuracy. Only five studies reported analysis of smear-negative samples. When stratified by smear status, no major difference was seen in the DOR, but this result may be due to the small number of studies reporting only smear-negative samples.

As in our previous meta-analyses [2, 3], none of the stratified analyses for DOR results fully explained the significant heterogeneity across studies in this review; the statistical tests for heterogeneity were significant even within the various strata (data not shown). Therefore, a meta-regression analysis was performed (Table 3) to simultaneously evaluate multiple covariates in the same analysis. The outcome of the regression analysis is reported as the Relative Diagnostic Odds Ratios (RDOR).

As shown in Table 3, studies that used IS6110 as target of amplification, and studies that used nested PCR methods produced RDOR that were significantly higher than those that used other amplification targets or PCR methods. We present the SROC curves for these subgroups in figures 3A and 3B for target and amplification technique, respectively, to show the trade off between sensitivity and specificity. Blinding, detection technique and smear status showed a slightly higher RDOR but they were not statistically significant in the final regression model. Chemical-based DNA extraction did not produce a significant RDOR, indicating that the use of any chemical reagent for DNA extraction did not substantially affect diagnostic accuracy. No difference was seen in DOR in those studies that used phenol-chloroform versus any other DNA extraction method (data not shown).

Discussion

Principal findings

Diagnostic methods and, therefore, control of tuberculosis would be greatly improved by the standardization and application of nucleic acid amplification tests. Our meta-analytical review of 84 in-house PCR-based studies to detect M. tuberculosis in sputum samples showed an area under the summary receiver operating characteristic (SROC) curve of 97%, indicating an overall high accuracy of these tests. However, because of significant heterogeneity in sensitivity and specificity estimates, clinically meaningful estimates of accuracy could not be derived. Our analysis showed substantial variability in specificity and sensitivity estimates, and it is clear that in-house PCR tests produce highly inconsistent estimates of diagnostic accuracy. Heterogeneity was clearly evident in the results and could not be explained fully even after stratified analyses. Variability in study design, study quality, and differences in thresholds (cut-points) across studies might account for some of the observed heterogeneity. Nevertheless, the meta-regression analysis highlighted some variables that do appear to yield higher accuracy estimates. The use of IS6110 as the amplification target and the use of a nested PCR protocol appear to enhance accuracy. It is therefore worth considering the inclusion of these elements in in-house PCR protocols. Our analyses also suggest that the methods used for DNA extraction and signal detection were not critical.

Clinical implications

Because of the observed heterogeneity in sensitivity and specificity, it is difficult to determine clinically useful estimates of accuracy. On the other hand, our findings have some relevance for the clinical microbiology laboratory. Our results suggest that the use of the IS6110 target sequence in the protocol and the use of nested PCR methods significantly increase the diagnostic accuracy of PCR. In our previous meta-analysis on NAA tests for tuberculous pleuritis, we found that NAA tests that used IS6110 targets produced DOR estimates 2.5 times higher than tests that used other target sequences [48]. Lack of blinding has been found to be associated with higher accuracy in previous meta-analyses [49, 53]. Nevertheless, we did not find a significant effect in our meta-regression model. Our stratified analyses, however, did show that unblinded studies were associated with a higher summary DOR than blinded studies. Previous empiric research [37] and our earlier meta-analyses [48, 49] suggest that studies that use a case-control design tend to overestimate diagnostic accuracy. Surprisingly, study design had little impact on diagnostic accuracy in our current analyses. It is possible that laboratory factors (such as target sequence and amplification technique) had a much stronger impact on accuracy than study design features in our analyses.

Limitations of the review

In our review, we found only five studies reporting the analysis of smear-negative specimens. Therefore, we could not determine the effect of smear status on the accuracy of PCR. Since clinical sputum specimens frequently include smear-negative samples, the conclusions made in this meta-analysis may not apply to studies that included a large number of smear-negative samples. The accuracy estimates for smear-negative specimens have mostly been derived from studies on commercial kits, which have shown high specificity but lower and variable sensitivity [53]. The US Food and Drug Administration (FDA) approved the use of specific commercial kits initially only for smear-positive samples, and recently for smear-negative specimens [75]. Our review also excluded more recent studies that used other protocols for the detection of amplified DNA, such as real-time PCR. We found only three such studies, and hence they could not be subjected to meta-analysis. In the future, such methods may prove to enhance NAA test accuracy.

Implications for research

One test characteristic significantly associated with increased accuracy was the use of IS6110 as a target of amplification. IS6110 is present in the M. tuberculosis genome, usually as multiple copies, which helps to increase the sensitivity of a PCR test. A potential problem with using this target is that some strains from certain parts of the world lack this insertion sequence [76]. A possible solution may be to use more than one target. However, we found that multiplex PCR did not increase diagnostic accuracy.

Conclusion

In summary, this meta-analytical review of various protocols for PCR-based diagnosis of pulmonary TB identified a few factors associated with improved diagnostic accuracy, and others that did not make a substantial difference. Future development of NAA-based tests to detect M. tuberculosis from sputum specimens should take into consideration these test characteristics as a way to improve accuracy of in-house NAA tests to diagnose pulmonary TB.

Methods

Identification of studies

We searched the following databases: PubMed (1985–2002), EMBASE (1988–2002), Web of Science (1990–2002), BIOSIS (1993–2002), Cochrane Library (2002; Issue 2), and LILACS (1990–2002). All searches were up to date as of August 2002. The PubMed search was repeated in March 2004 to cover recent literature. The Journal of Clinical Microbiology, a high-yield journal with respect to diagnostic studies, was separately searched (1992–2003). The search terms used included "tuberculosis", "mycobacterium tuberculosis", "nucleic acid amplification techniques", "direct amplification test", "polymerase chain reaction", "ligase chain reaction", "molecular diagnostic techniques", "sensitivity and specificity", "accuracy", and "predictive value". In addition to the database searches, citations were obtained from experts in the field and from manufacturers of commercial tests. Reference lists from primary and review articles were searched. English and Spanish articles were selected for final full-text review. Conference abstracts were excluded because they universally contained inadequate data to permit evaluation. This criterion had been used and reported in previous papers [3].

Study eligibility

Our search strategy aimed to include all available studies on in-house NAA tests for direct detection of M. tuberculosis in sputum specimens. To be included in the meta-analysis, a study should have: 1) included at least one comparison of an in-house PCR with an appropriate reference standard (i.e. culture), for detection of M. tuberculosis complex; 2) provided sufficient information on sensitivity and specificity; 3) provided enough information to judge methodological quality of the study.

The following studies were excluded from the review: 1) case reports; 2) evaluation of NAA tests on animal specimens; 3) studies on use of PCR assays for typing of strains; 4) studies on use of PCR assays for determining drug resistance; 5) studies on use of PCR for detection of only non-tuberculosis mycobacteria and 6) studies using only commercial NAA kits.

Data extraction

The final analyses included all available studies on in-house PCR tests for direct detection of M. tuberculosis in sputum specimens. Two reviewers (LLF and MP) determined study eligibility independently. After study selection, data were extracted from each included study using a standardized data extraction form.

The final set of English and Spanish articles was assessed by one reviewer (LLF), and a sample of these was assessed by a second reviewer (MP) to check the accuracy of data extraction. For each study, the following quality criteria were scored as met or not: 1) independent comparison of NAA test against reference standard; 2) cross-sectional versus case-control study design; 3) blinded (single or double) interpretation of test and reference standard results. The test methodology criteria included: 1) species identification; 2) methodology used for DNA extraction; 3) type of PCR performed (nested or seminested vs. regular, including multiplex); 4) amplification target (IS6110 vs. any other target); 5) method of detection of the final product (ultraviolet (UV) transillumination of an electrophoretic gel vs. use of labeled probes for DNA hybridization); 6) measures taken to avoid contamination; and 7) inclusion of positive and negative controls in the assay. If no data on the above criteria were reported in the primary studies, we contacted the authors of the studies for such information. For the purposes of analyses, responses coded as "not reported" were grouped together with "not met".

Since discrepant analysis (where discordant results between test and culture results are resolved, post-hoc, using clinical data) may be a potential source of bias in NAA test assessments, we preferentially included unresolved data where available.

Meta-analysis methods

We used standard methods recommended for meta-analyses of diagnostic test evaluations [6]. Data were analyzed using Stata (version 8) and Meta-Disc (version 1.1) software. Our analyses focused on the following measures of diagnostic accuracy: sensitivity (true positive rate [TPR]), specificity (1 - false positive rate [FPR]), and diagnostic odds ratio (DOR). Sensitivity is the proportion of positive test results among those with the target disease. Specificity is the proportion of negative test results among those without the disease. The DOR is a single indicator of test accuracy [7] that combines the data from sensitivity and specificity into a single number. The DOR of a test is the ratio of the odds of positive test results in the diseased relative to the odds of positive test results in the non-diseased. The value of a DOR ranges from 0 to infinity, with higher values indicating better discriminatory test performance (higher accuracy). A DOR of 1.0 indicates that a test does not discriminate between patients with the disorder and those without it. DOR values lower than 1.0 suggest improper test interpretation (a greater proportion of negative test results in the group with disease). Mathematically, the DOR can be computed using any of the following equations [7]:

DOR = (TP/FN) / (FP/TN)

DOR = [sensitivity/(1 - sensitivity)] / [(1 - specificity)/specificity]

DOR = Positive likelihood ratio / Negative likelihood ratio
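To make the relationship between these quantities concrete, the following minimal sketch computes the DOR from the cell counts of a 2x2 table, from sensitivity and specificity, and from the likelihood ratios. The function name `diagnostic_accuracy` and the counts used are hypothetical illustrations, not data from any included study.

```python
import math


def diagnostic_accuracy(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Accuracy measures for one 2x2 table (index test vs. reference standard)."""
    sensitivity = tp / (tp + fn)               # true positive rate
    specificity = tn / (tn + fp)               # 1 - false positive rate
    lr_pos = sensitivity / (1 - specificity)   # positive likelihood ratio
    lr_neg = (1 - sensitivity) / specificity   # negative likelihood ratio
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        # Three algebraically equivalent forms of the DOR.
        "dor_counts": (tp / fn) / (fp / tn),
        "dor_sens_spec": (sensitivity / (1 - sensitivity))
        / ((1 - specificity) / specificity),
        "dor_lr": lr_pos / lr_neg,
    }


# Hypothetical study: 100 culture-positive and 100 culture-negative specimens.
result = diagnostic_accuracy(tp=90, fp=5, fn=10, tn=95)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

All three forms give the same value (171 for these counts). The sketch divides by cell counts directly, so a table with a zero cell would need separate handling; a common convention, not described in this review, is to add 0.5 to every cell.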

Each study in the meta-analysis contributed a pair of numbers: TPR and FPR. Since these measures are correlated and vary with the thresholds (cut points for determining test positives) employed in the individual studies, it is customary to analyze TPR and FPR proportions as pairs, and to also explore the effect of threshold on study results [6]. We summarized the joint distribution of sensitivity and specificity using the Summary Receiver Operating Characteristic (SROC) curve [8]. Unlike a traditional ROC plot that explores the effect of varying thresholds on sensitivity and specificity in a single study, each data point in the SROC space represents a separate study. The SROC curve is obtained by fitting a symmetric regression curve to pairs of TPR and FPR. The SROC curve and the area under it present an overall summary of test performance, and display the trade off between sensitivity and specificity. A shoulder-like ROC curve suggests that variability in thresholds employed could, in part, explain variability in study results. It also suggests a common, homogeneous underlying DOR that does not change with the diagnostic threshold. The area under the SROC curve is a global measure of overall test accuracy. An area under the curve of 100% indicates perfect discriminatory ability.

Heterogeneity in meta-analysis refers to a high degree of variability in study results [9]. Such heterogeneity could be due to variability in thresholds (cut-points), disease spectrum, test methods, and study quality across studies [9]. In the presence of significant heterogeneity, pooled, summary estimates from meta-analyses are not meaningful. We investigated heterogeneity using a meta-regression analysis. The meta-regression analysis was an extension of the SROC model [8, 9]. In this unweighted linear regression model, studies (and not patients or specimens) were the units of analysis. The DOR was the outcome (dependent) variable. The independent variables were the covariates that might be associated with the variability in the DOR.

Based on our previous meta-analyses [1–3], the following covariates were specified a priori as potential sources of variability: study design, blinded interpretation of NAA test and reference standard, type of PCR test, target sequence amplified, use of probes to detect amplification products, and use of phenol-chloroform for DNA extraction. The results of the meta-regression model are expressed as relative diagnostic odds ratios (RDOR) [7, 9]. An RDOR of 1.0 indicates that a particular covariate (e.g. blinded study design) does not affect the overall DOR. An RDOR >1.0 indicates that studies with a particular characteristic (e.g. those that employed a specific target sequence in the PCR) have a higher DOR than studies without this characteristic. For an RDOR <1.0, the reverse holds.
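The regression described here can be sketched as follows, assuming the usual Littenberg-Moses formulation [8]: the log DOR of each study, D = logit(TPR) - logit(FPR), is regressed without weights on a threshold proxy, S = logit(TPR) + logit(FPR), plus study-level covariates, and the exponentiated covariate coefficients are read as RDORs. The function name `sroc_meta_regression` and the six-study data set are hypothetical. The synthetic data are built so that the covariate exactly doubles the DOR, and they do not represent the studies in this review.

```python
import numpy as np


def logit(p):
    """Log odds of a proportion strictly between 0 and 1."""
    return np.log(p / (1 - p))


def sroc_meta_regression(tpr, fpr, covariates):
    """Unweighted least-squares fit of D = a + b*S + sum_k(c_k * x_k).

    Returns the full coefficient vector (a, b, c_1, ...) and the RDORs,
    exp(c_k), one per covariate column.
    """
    tpr = np.asarray(tpr, dtype=float)
    fpr = np.asarray(fpr, dtype=float)
    covariates = np.asarray(covariates, dtype=float)
    d = logit(tpr) - logit(fpr)  # log diagnostic odds ratio per study
    s = logit(tpr) + logit(fpr)  # proxy for the positivity threshold
    design = np.column_stack([np.ones_like(d), s, covariates])
    coef, *_ = np.linalg.lstsq(design, d, rcond=None)
    return coef, np.exp(coef[2:])


# Hypothetical data: six studies with a baseline log DOR of 3.0; the DOR is
# exactly doubled when the binary covariate (e.g. a given target) equals 1.
logit_fpr = np.array([-3.0, -2.5, -2.0, -3.5, -1.5, -2.8])
x = np.array([0, 1, 0, 1, 1, 0])
fpr = 1 / (1 + np.exp(-logit_fpr))
tpr = 1 / (1 + np.exp(-(logit_fpr + 3.0 + np.log(2.0) * x)))

coef, rdor = sroc_meta_regression(tpr, fpr, x.reshape(-1, 1))
print("slope on S:", coef[1])
print("RDOR for covariate:", rdor[0])
```

Because the synthetic log DOR does not depend on the threshold, the fitted slope on S is approximately zero, and the fitted RDOR recovers the built-in factor of 2. In real data, studies reporting a sensitivity or specificity of exactly 0 or 1 would need a continuity correction before the logit can be taken.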

References

Flores LL: M. tuberculosis in clinical samples from patients with pulmonary tuberculosis: a systematic review and meta-analysis. Master's in Public Health Thesis. 2004, University of California, Berkeley, Department of Infectious Diseases. Spring

Pai M, Flores LL, Hubbard A, Riley LW, Colford JM: Nucleic acid amplification in the diagnosis of tuberculous pleuritis: a systematic review and meta-analysis. BMC Infect Dis. 2004, 4: 6-10.1186/1471-2334-4-6.

Pai M, Flores LL, Pai N, Hubbard A, Riley LW, Colford JM: Diagnostic accuracy of nucleic acid amplification tests for tuberculous meningitis: a systematic review and meta-analysis. Lancet Infect Dis. 2003, 3: 633-643. 10.1016/S1473-3099(03)00772-2.

Piersimoni C, Scarparo C: Relevance of commercial amplification methods for direct detection of Mycobacterium tuberculosis complex in clinical samples. J Clin Microbiol. 2003, 41: 5355-5365. 10.1128/JCM.41.12.5355-5365.2003.

Sarmiento OL, Weigle K, Alexander J, Weber DJ, Miller WC: Assessment by meta-analysis of PCR for diagnosis of smear-negative pulmonary tuberculosis. J Clin Microbiol. 2003, 41: 3233-3240. 10.1128/JCM.41.7.3233-3240.2003.

Pai M, McCulloch M, Enanoria W, Colford JM: Systematic reviews of diagnostic test evaluations: What's behind the scenes?. Evid Based Med. 2004, 9: 101-103. 10.1136/ebm.9.4.101.

Glas AS, Lijmer JG, Prins MH, Bonsel GJ, Bossuyt PM: The diagnostic odds ratio: a single indicator of test performance. J Clin Epidemiol. 2003, 56: 1129-1135. 10.1016/S0895-4356(03)00177-X.

Littenberg B, Moses L: Estimating diagnostic accuracy from multiple conflicting reports: a new meta-analytic method. Med Decis Making. 1993, 13: 313-321.

Lijmer JG, Bossuyt PM, Heisterkamp SH: Exploring sources of heterogeneity in systematic reviews of diagnostic tests. Stat Med. 2002, 21: 1525-1537. 10.1002/sim.1185.

Altamirano M, Kelly MT, Wong A, Bessuille ET, Black WA, Smith JA: Characterization of a DNA probe for detection of Mycobacterium tuberculosis complex in clinical samples by polymerase chain reaction. J Clin Microbiol. 1992, 30: 2173-2176.

Amicosante M, Richeldi L, Trenti G, Paone G, Campa M, Bisetti A, Saltini C: Inactivation of polymerase inhibitors for Mycobacterium tuberculosis DNA amplification in sputum by using capture resin. J Clin Microbiol. 1995, 33: 629-630.

Andersen AB, Thybo S, Godfrey-Faussett P, Stoker NG: Polymerase chain reaction for detection of Mycobacterium tuberculosis in sputum. Eur J Clin Microbiol Infect Dis. 1993, 12: 922-927. 10.1007/BF01992166.

Aslanzadeh J, de la Viuda M, Fille M, Smith WB, Namdari H: Comparison of culture and acid-fast bacilli stain to PCR for detection of Mycobacterium tuberculosis in clinical samples. Mol Cell Probes. 1998, 12: 207-211. 10.1006/mcpr.1998.0174.

Beige J, Lokies J, Schaberg T, Finckh U, Fischer M, Mauch H, Lode H, Kohler B, Rolfs A: Clinical evaluation of a Mycobacterium tuberculosis PCR assay. J Clin Microbiol. 1995, 33: 90-95.

Brisson-Noel A, Gicquel B, Lecossier D, Levy-Frebault V, Nassif X, Hance AJ: Rapid diagnosis of tuberculosis by amplification of mycobacterial DNA in clinical samples. Lancet. 1989, 2: 1069-1071. 10.1016/S0140-6736(89)91082-9.

Chaiprasert A, Prammananan T, Samerpitak K, Pattanakitsakul S, Yenjitsomanus P, Jearanaisilawong J, Charoenratanakul S: Direct detection of M. tuberculosis in sputum by reamplification with 16S rRNA-based primer. Asia-Pacific Journal of Molecular Biology and Biotechnology. 1996, 4: 250-259.

Choi YJ, Hu Y, Mahmood A: Clinical significance of a polymerase chain reaction assay for the detection of Mycobacterium tuberculosis. Am J Clin Pathol. 1996, 105: 200-204.

Clarridge JE, Shawar RM, Shinnick TM, Plikaytis BB: Large-scale use of polymerase chain reaction for detection of Mycobacterium tuberculosis in a routine mycobacteriology laboratory. J Clin Microbiol. 1993, 31: 2049-2056.

Codina G, Gonzalez-Fuente T, Martin N: DNA amplification with PCR for tuberculosis diagnosis. [Spanish]. Enfermedades Infecciosas y Microbiologia Clinica. 1992, 10: 277-280.

Cohen RA, Muzaffar S, Schwartz D, Bashir S, Luke S, McGartland LP, Kaul K: Diagnosis of pulmonary tuberculosis using PCR assays on sputum collected within 24 hours of hospital admission. Am J Respir Crit Care Med. 1998, 157: 156-161.

Dar L, Sharma SK, Bhanu NV, Broor S, Chakraborty M, Pande JN, Seth P: Diagnosis of pulmonary tuberculosis by polymerase chain reaction for MPB64 gene: an evaluation in a blind study. Indian J Chest Dis Allied Sci. 1998, 40: 5-16.

Del Portillo P, Murillo LA, Patarroyo ME: Amplification of a species-specific DNA fragment of Mycobacterium tuberculosis and its possible use in diagnosis. J Clin Microbiol. 1991, 29: 2163-2168.

Del Portillo P, Thomas MC, Martinez E, Maranon C, Valladares B, Patarroyo ME, Lopez MC: Multiprimer PCR system for differential identification of mycobacteria in clinical samples. J Clin Microbiol. 1996, 34: 324-328.

Down JA, O'Connell MA, Dey MS, Walters AH, Howard DR, Little MC, Keating WE, Zwadyk P, Haaland PD, McLaurin DA, Cole G: Detection of Mycobacterium tuberculosis in respiratory specimens by strand displacement amplification of DNA. J Clin Microbiol. 1996, 34: 860-865.

Eisenach KD, Sifford MD, Cave MD, Bates JH, Crawford JT: Detection of Mycobacterium tuberculosis in sputum samples using a polymerase chain reaction. Am Rev Respir Dis. 1991, 144: 1160-1163.

Folgueira L, Delgado R, Palenque E, Noriega AR: Detection of Mycobacterium tuberculosis DNA in clinical samples by using a simple lysis method and polymerase chain reaction. J Clin Microbiol. 1993, 31: 1019-1021.

Forbes BA, Hicks KE: Direct detection of Mycobacterium tuberculosis in respiratory specimens in a clinical laboratory by polymerase chain reaction. J Clin Microbiol. 1993, 31: 1688-1694.

Garcia-Quintanilla A, Garcia L, Tudo G, Navarro M, Gonzalez J, Jimenez de Anta MT: Single-tube balanced heminested PCR for detecting Mycobacterium tuberculosis in smear-negative samples. J Clin Microbiol. 2000, 38: 1166-1169.

Gengvinij N, Pattanakitsakul SN, Chierakul N, Chaiprasert A: Detection of Mycobacterium tuberculosis from sputum specimens using one-tube nested PCR. Southeast Asian J Trop Med Public Health. 2001, 32: 114-125.

Ginesu F, Pirina P, Sechi LA, Molicotti P, Santoru L, Porcu L, Fois A, Arghittu P, Zanetti S, Fadda G: Microbiological diagnosis of tuberculosis: a comparison of old and new methods. J Chemother. 1998, 10: 295-300.

Gunisha P, Madhavan HN, Jayanthi U, Therese KL: Polymerase chain reaction using IS6110 primer to detect Mycobacterium tuberculosis in clinical samples. Indian J Pathol Microbiol. 2001, 44: 97-102.

Hashimoto T, Suzuki K, Amitani R, Kuze F: Rapid Detection of Mycobacterium tuberculosis in Sputa by the Amplification of IS6110. Internal Medicine. 1995, 34: 605-610.

Ikonomopoulos JA, Gorgoulis VG, Zacharatos PV, Kotsinas A, Tsoli E, Karameris A, Panagou P, Kittas C: Multiplex PCR assay for the detection of mycobacterial DNA sequences directly from sputum. In Vivo. 1998, 12: 547-552.

Ikonomopoulos JA, Gorgoulis VG, Zacharatos PV, Manolis EN, Kanavaros P, Rassidakis A, Kittas C: Multiplex polymerase chain reaction for the detection of mycobacterial DNA in cases of tuberculosis and sarcoidosis. Modern Pathology. 1999, 12: 854-862.

Kambashi B, Mbulo G, McNerney R, Tembwe R, Kambashi A, Tihon V, Godfrey-Faussett P: Utility of nucleic acid amplification techniques for the diagnosis of pulmonary tuberculosis in sub-Saharan Africa. Int J Tuberc Lung Dis. 2001, 5: 364-369.

Kearns AM, Freeman R, Steward M, Magee JG: A rapid polymerase chain reaction technique for detecting M. tuberculosis in a variety of clinical specimens. J Clin Pathol. 1998, 51: 922-924.

Kocagoz T, Yilmaz E, Ozkara S, Kocagoz S, Hayran M, Sachedeva M, Chambers HF: Detection of Mycobacterium tuberculosis in sputum samples by polymerase chain reaction using a simplified procedure. J Clin Microbiol. 1993, 31: 1435-1438.

Kolk AH, Schuitema AR, Kuijper S, van Leeuwen J, Hermans PW, van Embden JD, Hartskeerl RA: Detection of Mycobacterium tuberculosis in clinical samples by using polymerase chain reaction and a nonradioactive detection system. J Clin Microbiol. 1992, 30: 2567-2575.

Kox LF, Jansen HM, Kuijper S, Kolk AH: Multiplex PCR assay for immediate identification of the infecting species in patients with mycobacterial disease. J Clin Microbiol. 1997, 35: 1492-1498.

Kox LF, Rhienthong D, Miranda AM, Udomsantisuk N, Ellis K, van Leeuwen J, van Heusden S, Kuijper S, Kolk AH: A more reliable PCR for detection of Mycobacterium tuberculosis in clinical samples. J Clin Microbiol. 1994, 32: 672-678.

Laniado-Laborin R, Enriquez-Rosales ML, Licea Navarroll AF: Diagnosis of tuberculosis by detection of Mycobacterium tuberculosis with a noncommercial polymerase chain reaction. [Spanish]. Revista del Instituto Nacional de Enfermedades Respiratorias. 2001, 14: 22-26.

Maher M, Glennon M, Martinazzo G, Turchetti E, Marcolini S, Smith T, Dawson MT: Evaluation of a novel PCR-based diagnostic assay for detection of Mycobacterium tuberculosis in sputum samples. J Clin Microbiol. 1996, 34: 2307-2308.

Manjunath N, Shankar P, Rajan L, Bhargava A, Saluja S, Shriniwas: Evaluation of a polymerase chain reaction for the diagnosis of tuberculosis. Tubercle. 1991, 72: 21-27. 10.1016/0041-3879(91)90020-S.

Mitarai S, Oishi K, Fukasawa M, Yamashita H, Nagatake T, Matsumoto K: Clinical evaluation of polymerase chain reaction DNA amplification method for the diagnosis of pulmonary tuberculosis in patients with negative acid-fast bacilli smear. Tohoku Journal of Experimental Medicine. 1995, 177: 13-23.

Miyazaki Y, Koga H, Kohno S, Kaku M: Nested polymerase chain reaction for detection of Mycobacterium tuberculosis in clinical samples. J Clin Microbiol. 1993, 31: 2228-2232.

Moran Moguel MC, Hernandez DA, Pena-Montes de Oca PM, Gallegos Arreola MP, Flores-Martinez SE, Montoya-Fuentes H, Figuera LE, Villa-Manzanares L, Sanchez-Corona J: [Detection of Mycobacterium tuberculosis by polymerase chain reaction in a selected population in northwestern Mexico]. Rev Panam Salud Publica. 2000, 7: 389-394.

Mustafa AS, Chugh TD, Abal AT: Polymerase chain reaction targeting of single- and multiple-copy genes of mycobacteria in the diagnosis of tuberculosis. Nutrition. 1995, 11: 665-669.

Nolte FS, Metchock B, McGowan JE, Edwards A, Okwumabua O, Thurmond C, Mitchell PS, Plikaytis B, Shinnick T: Direct detection of Mycobacterium tuberculosis in sputum by polymerase chain reaction and DNA hybridization. J Clin Microbiol. 1993, 31: 1777-1782.

Noordhoek GT, Kaan JA, Mulder S, Wilke H, Kolk AH: Routine application of the polymerase chain reaction for detection of Mycobacterium tuberculosis in clinical samples. J Clin Pathol. 1995, 48: 810-814.

Pao CC, Yen TS, You JB, Maa JS, Fiss EH, Chang CH: Detection and identification of Mycobacterium tuberculosis by DNA amplification. J Clin Microbiol. 1990, 28: 1877-1880.

Patnaik M, Liegmann K, Peter JB: Rapid detection of smear-negative Mycobacterium tuberculosis by PCR and sequencing for rifampin resistance with DNA extracted directly from slides. J Clin Microbiol. 2001, 39: 51-52. 10.1128/JCM.39.1.51-52.2001.

Rodriguez JC, Fuentes E, Royo G: Comparison of two different PCR detection methods: application to the diagnosis of pulmonary tuberculosis. APMIS. 1997, 105: 612-616.

Rodriguez JC, Fuentes E, Royo G: Detection of Mycobacterium tuberculosis in sputum by PCR and revealed chemoluminescence. [Spanish]. Enfermedades Infecciosas y Microbiologia Clinica. 1997, 15: 186-189.

Rossetti ML, Jardim SB, Moura AR, Oliveira H, Zaha A: Improvement of Mycobacterium tuberculosis detection in clinical samples using DNA purified by glass matrix. J Microbiol Methods. 1997, 28: 139-146. 10.1016/S0167-7012(97)00978-0.

Savic B, Sjobring U, Alugupalli S, Larsson L, Miorner H: Evaluation of polymerase chain reaction, tuberculostearic acid analysis, and direct microscopy for the detection of Mycobacterium tuberculosis in sputum. J Infect Dis. 1992, 166: 1177-1180.

Seethalakshmi S, Korath MP, Kareem F, Jagadeesan K: Detection of Mycobacterium tuberculosis by polymerase chain reaction in 301 biological samples – a comparative study. J Assoc Physicians India. 1998, 46: 763-766.

Soini H, Agha SA, El-Fiky A, Viljanen MK: Comparison of Amplicor and 32-kilodalton PCR for detection of Mycobacterium tuberculosis from sputum specimens. J Clin Microbiol. 1996, 34: 1829-1830.

Soini H, Skurnik M, Liippo K, Tala E, Viljanen MK: Detection and identification of mycobacteria by amplification of a segment of the gene coding for the 32-kilodalton protein. J Clin Microbiol. 1992, 30: 2025-2028.

Sritharan V, Barker RH: A simple method for diagnosing M. tuberculosis infection in clinical samples using PCR. Mol Cell Probes. 1991, 5: 385-395.

Su WJ, Tsou AP, Yang MH, Huang CY, Perng RP: Clinical experience in using polymerase chain reaction for rapid diagnosis of pulmonary tuberculosis. Chin Med J (Taipei). 2000, 63: 521-526.

Tan MF, Ng WC, Chan SH, Tan WC: Comparative usefulness of PCR in the detection of Mycobacterium tuberculosis in different clinical specimens. J Med Microbiol. 1997, 46: 164-169.

Tansuphasiri U, Chinrat B, Rienthong S: Evaluation of culture and PCR-based assay for detection of Mycobacterium tuberculosis from sputum collected and stored on filter paper. Southeast Asian J Trop Med Public Health. 2001, 32: 844-855.

Thierry D, Brisson-Noel A, Vincent-Levy-Frebault V, Nguyen S, Guesdon JL, Gicquel B: Characterization of a Mycobacterium tuberculosis insertion sequence, IS6110, and its application in diagnosis. J Clin Microbiol. 1990, 28: 2668-2673.

Thierry D, Chureau C, Aznar C, Guesdon JL: The detection of Mycobacterium tuberculosis in uncultured clinical specimens using the polymerase chain reaction and a non-radioactive DNA probe. Mol Cell Probes. 1992, 6: 181-191. 10.1016/0890-8508(92)90015-P.

Totsch M, Schmid KW, Brommelkamp E, Stucker A, Puelacher C, Sidoroff G, Mikuz G, Bocker W, Dockhorn-Dworniczak B: Rapid detection of mycobacterial DNA in clinical samples by multiplex PCR. Diagn Mol Pathol. 1994, 3: 260-264.

van Helden PD, du Toit R, Jordaan A, Taljaard B, Pitout J, Victor T: The use of the polymerase chain reaction test in the diagnosis of tuberculosis. S Afr Med J. 1991, 80: 515-516.

Verma A, Rattan A, Tyagi JS: Development of a 23S rRNA-based PCR assay for the detection of mycobacteria. Indian J Biochem Biophys. 1994, 31: 288-294.

Victor T, du Toit R, van Helden PD: Purification of sputum samples through sucrose improves detection of Mycobacterium tuberculosis by polymerase chain reaction. J Clin Microbiol. 1992, 30: 1514-1517.

Walker DA, Taylor IK, Mitchell DM, Shaw RJ: Comparison of polymerase chain reaction amplification of two mycobacterial DNA sequences, IS6110 and the 65kDa antigen gene, in the diagnosis of tuberculosis. Thorax. 1992, 47: 690-694.

Weekes KM, Pearse MJ, Sievers A, Ross BC, d'Apice AJ: The diagnostic use of the polymerase chain reaction for the detection of Mycobacterium tuberculosis. Pathology. 1994, 26: 482-486. 10.1080/00313029400169232.

Williams DL, Spring L, Gillis TP, Salfinger M, Persing DH: Evaluation of a polymerase chain reaction-based universal heteroduplex generator assay for direct detection of rifampin susceptibility of Mycobacterium tuberculosis from sputum specimens. Clin Infect Dis. 1998, 26: 446-450.

Wilson SM, McNerney R, Nye PM, Godfrey-Faussett PD, Stoker NG, Voller A: Progress toward a simplified polymerase chain reaction and its application to diagnosis of tuberculosis. J Clin Microbiol. 1993, 31: 776-782.

Yoon KH, Cho SN, Lee TY, Cheon SH, Chang J, Kim SK, Chong Y, Chung DH, Lee WY, Kim JD: Detection of Mycobacterium tuberculosis in clinical samples from patients with tuberculosis or other pulmonary diseases by polymerase chain reaction. Yonsei Med J. 1992, 33: 209-216.

Yuen KY, Chan KS, Chan CM, Ho BS, Dai LK, Chau PY, Ng MH: Use of PCR in routine diagnosis of treated and untreated pulmonary tuberculosis. J Clin Pathol. 1993, 46: 318-322.

CDC: Nucleic Acid Amplification Tests for Tuberculosis. MMWR. 2000, 49: 593-594.

Das S, Paramasivan CN, Lowrie DB, Prabhakar R, Narayanan PR: IS6110 restriction fragment length polymorphism typing of clinical isolates of Mycobacterium tuberculosis from patients with pulmonary tuberculosis in Madras, South India. Tuber Lung Dis. 1995, 76: 550-554. 10.1016/0962-8479(95)90533-2.

Acknowledgements

LLF is supported by the Fogarty Tuberculosis supplementary grant (TW00905-S1) and the Consejo Nacional de Ciencia y Tecnologia (CONACyT), National Council of Science and Technology, Mexico (scholarship number 129617). MP acknowledges the support of the National Institutes of Health (NIH), Fogarty AIDS International Training Program (1-D43-TW00003-16). LLF and MP were also partially supported by NIH/NIAID (R01 AI 34238).

Additional information

Authors' contributions

LLF designed the study, carried out the study selection, data abstraction, and analysis, and drafted the manuscript. MP participated in study selection and checked data abstraction and analysis. JC participated in the design of the study and critically reviewed the manuscript. LWR provided input on study design and critically reviewed the manuscript.

Rights and permissions

Open Access This article is published under license to BioMed Central Ltd. This is an Open Access article distributed under the terms of the Creative Commons Attribution License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Flores, L.L., Pai, M., Colford, J.M. et al. In-house nucleic acid amplification tests for the detection of Mycobacterium tuberculosis in sputum specimens: meta-analysis and meta-regression. BMC Microbiol 5, 55 (2005). https://doi.org/10.1186/1471-2180-5-55
