Abstract

Background

One-dimensional protein structures such as secondary structures or contact numbers are useful for three-dimensional structure prediction and helpful for intuitive understanding of the sequence-structure relationship. Accurate prediction methods will serve as a basis for these and other purposes.

Results

We implemented a program CRNPRED which predicts secondary structures, contact numbers and residue-wise contact orders. This program is based on a novel machine learning scheme called critical random networks. Unlike most conventional one-dimensional structure prediction methods which are based on local windows of an amino acid sequence, CRNPRED takes into account the whole sequence. CRNPRED achieves, on average per chain, Q3 = 81% for secondary structure prediction, and correlation coefficients of 0.75 and 0.61 for contact number and residue-wise contact order predictions, respectively.

Conclusion

CRNPRED will be a useful tool for computational as well as experimental biologists who need accurate one-dimensional protein structure predictions.

Similar content being viewed by others

Explore related subjects

Find the latest articles, discoveries, and news in related topics.Background

One-dimensional (1D) structures of a protein are residue-wise quantities or symbols onto which some features of the native three-dimensional (3D) structure are projected. 1D structures are of interest for several reasons. For example, predicted secondary structures, a kind of 1D structures, are often used to limit the conformational space to be searched in 3D structure prediction. Furthermore, it has recently been shown that certain sets of the native (as opposed to predicted) 1D structures of a protein contain sufficient information to recover the native 3D structure [1, 2]. These 1D structures are either the principal eigenvector of the contact map [1] or a set of secondary structures (SS), contact numbers (CN) and residue-wise contact orders (RWCO) [2]. Therefore, it is possible, at least in principle, to predict the native 3D structure by first predicting the 1D structures, and then by constructing the 3D structure from these 1D structures. 1D structures are not only useful for 3D structure predictions, but also helpful for intuitive understanding of the correspondence between the protein structure and its amino acid sequence due to the residue-wise characteristics of 1D structures. Therefore, accurate prediction of 1D protein structures is of fundamental biological interest.

Secondary structure prediction has a long history [3]. Almost all the modern predictors are based on position-specific scoring matrices (PSSM) and some kind of machine learning techniques such as neural networks or support vector machines. Currently the best predictors achieve Q3 of 77–79% [4, 5]. The study of contact number prediction also started long time ago [6, 7], but further improvements were made only recently [8–10]. These recent methods are based on the ideas developed in SS predictions (i.e., PSSM and machine learning), and achieve a correlation coefficient of 0.68–0.73.

Recently, we have developed a new method for accurately predicting SS, CN, and RWCO based on a novel machine learning scheme, critical random networks (CRN) [10]. In this paper, we briefly describe the formulation of the method, and recent improvements leading to even better predictions. The computer program for SS, CN, and RWCO prediction named CRNPRED has been developed for the convenience of the general user, and a web interface and source code are made available online.

Implementation

Definition of 1D structures

Secondary structures (SS)

Secondary structures were defined by the DSSP program [11]. For three-state SS prediction, the simple encoding scheme (the so-called CK mapping) was employed [12]. That is, α helices (H), β strands (E), and other structures ("coils") defined by DSSP were encoded as H, E, and C, respectively. Note that we do not use the CASP-style conversion scheme (the so-called EHL mapping) in which DSSP's H, G (310 helix) and I (π helix) are encoded as H, and DSSP's E and B (β bridge) as E. We believe the CK mapping is more natural and useful for 3D structure predictions (e.g., geometrical restraints should be different between an α helix and a 310 helix). For SS prediction, we introduce feature variables () to represent each type of secondary structures at the i-th residue position, so that H is represented as (1, -1, -1), E as (-1, 1, -1), and C as (-1, -1, 1).

Contact numbers (CN)

Let Ci,jrepresent the contact map of a protein. Usually, the contact map is defined so that Ci,j= 1 if the i-th and j-th residues are in contact by some definition, or Ci,j= 0, otherwise. As in our previous study, we slightly modify the definition using a sigmoid function. That is,

Ci,j= 1/{1 + exp [w(ri,j- d)]} (1)

where ri,jis the distance between C β (C α for glycines) atoms of the i-th and j-th residues, d = 12Å is a cutoff distance, and w is a sharpness parameter of the sigmoid function which is set to 3 [8, 2]. The rather generous cutoff length of 12Å was shown to optimize the prediction accuracy [8]. The use of the sigmoid function enables us to use the contact numbers in molecular dynamics simulations [2]. Using the above definition of the contact map, the contact number of the i-th residue of a protein is defined as

The feature variable y i for CN is defined as y i = n i /log L where L is the sequence length of a target protein. The normalization factor log L is introduced because we have observed that the contact number averaged over a protein chain is roughly proportional to log L, and thus division by this value removes the size-dependence of predicted contact numbers.

Residue-wise contact orders (RWCO)

RWCO was first introduced in [2]. This quantity measures the extent to which a residue makes long-range contacts in a native protein structure. Using the same notation as contact numbers, the RWCO of the i-th residue in a protein structure is defined by

The feature variable y i for RWCO is defined as y i = o i /L where L is the sequence length. Due to the similar reason as CN, the normalization factor L was introduced to remove the size-dependence of the predicted RWCOs (the RWCO averaged over a protein chain is roughly proportional to the chain length).

Critical random networks

Here we briefly describe the critical random network (CRN) method introduced in [10] which should be referred to for the details. Unlike most conventional methods for 1D structure prediction [except for some including the bidirectional recurrent neural networks [13, 5, 14]], the CRN method takes the whole amino acid sequence into account. In the CRN method, an N-dimensional state vector x i is assigned to the i-th residue of the target sequence (we use N = 5000 throughout this paper). Neighboring state vectors along the sequence are connected via a random N × N orthogonal matrix W. This matrix is also block-diagonal with the size of blocks ranging uniformly randomly between 2 and 50. The input to the CRN is the position-specific scoring matrix (PSSM), U = (u1, ..., u L ) of the target sequence obtained by PSI-BLAST [15] (L is the sequence length of the target protein). We impose that the state vectors satisfy the following equation of state:

x i = tanh [βW (xi-1+ xi+1) + αV u i ] (4)

for i = 1, ..., L where V is an N × 21 random matrix (the 21st component of u i is always set to unity), and β and α are scalar parameters. The fixed boundary condition is imposed (x0 = xL+1= 0). By setting β = 0.5, the system of state vectors is made to be near a critical point in a certain sense, and thus the range of site-site correlation is expected to be long when α is sufficiently small but finite [10]. The value of α was chosen so that the resulting solution x i oscillates continuously with respect to the residue number i; that is, each component of x i having values from -1 to 1, rather than being a discrete sequence of -1 or 1. It can be shown that there exists a unique solution of Eq. 4 for a given PSSM U (provided the above boundary condition and β = 0.5). The solution {x i } of Eq. 4 (i.e., the state vectors) can be interpreted as some kind of patterns that reflect the complicated interactions among neighboring residues along the amino acid sequence. In this way, each state vector implicitly incorporates long-range correlations, and its components serve as additional independent variables to the linear predictor described in the following. The 1D structure of the i-th residue is predicted as a linear projection of a local window of the PSSM and the state vector obtained by solving Eq. 4:

where y i is the predicted quantity, and Dm,aand E k are the regression parameters. In the first summation, each PSSM column is extended to include the "terminal" residue. Since Eq. 5 is a simple linear equation once the equation of state (Eq. 4) has been solved, learning the parameters Dm,aand E k reduces to an ordinary linear regression problem. For SS prediction, the triple () is calculated simultaneously, and the SS class is predicted as arg maxs∈{H,E,C}. For the CN and RWCO prediction, real values are predicted. 2-state prediction is also made for CN using the average CN for each residue type as the threshold for "exposed" or "buried" as in [16]. We have noted earlier [8] that the apparent accuracy of 2-state CN prediction depend on the threshold. Although we proposed that using the median instead of average CN should be more appropriate for the threshold, here we use the average in order to compare our results with others. The half window size M is set to 9 for SS and CN predictions, and to 26 for RWCO. Note that the solution of the equation of state (Eq. 4) is determined solely by the PSSM. Therefore, obtaining the solution to Eq. (4) can be regarded as a kind of unsupervised learning, and the method for solving the equation of state is irrelevant for learning the parameters.

Ensemble prediction

Since the CRN-based prediction is parametrized by the random matrices W and V, slightly different predictions are obtained for different pairs of W and V. We can improve the prediction by taking the average over an ensemble of such different predictions. 20 CRN-based predictors were constructed using 20 sets of different random matrices W and V. CN and RWCO are predicted as uniform averages of these 20 predictions.

For SS prediction, we employ further training. Let be the prediction results of the n-th predictor for 1D structure t (H, E, C, CN, and RWCO) of the i-th residue. The second stage SS prediction is made by the following linear scheme:

where ss = H, E, C, and wn,t,mis the weight obtained from a training set. Finally, the feature variable for each SS class of the i-th residue is obtained by ()/4. This last procedure was found particularly effective for improving the segment overlap (SOV) measure.

Additional input

Another improvement is the addition of the amino acid composition of the target sequence to the predictor [9]: The term was added to Eq. 5 where F a is a regression parameter, and f a is the fraction of the amino acid type a. From a preliminary work based on a linear predictor [10], it was observed that this input slightly improved the accuracy by ~0.2%.

Training and test data set

We carried out a 15-fold cross-validation test following exactly the same procedure and the same data set as the previous study [10]. In the data set, there are 680 protein domains, each of which represents a superfamily according to the SCOP database (version 1.65) [17]. This data set was randomly divided so that 630 domains were used for training and the remaining 50 domains for testing, and the random division was repeated 15 times [See Additional File 1]. No pair of these domains belong to the same superfamily, and hence they are not expected to be homologous. Thus, the present benchmark is a very stringent one. For obtaining PSSMs by running PSI-BLAST, we use the UniRef100 (version 6.8) amino acid sequence database [18] containing some 3 million entries. Also the number of iterations in PSI-BLAST homology searches was reduced to 3 times from 10 used in the previous study. This especially increased the accuracy of SS predictions. These results are consistent with the study of [19].

Numerics

One drawback of the CRN method is the computational time required for numerically solving the equation of state (Eq. 4). For that purpose, instead of the Gauss-Seidel-like method previously used, we implemented a successive over-relaxation method which was found to be much more efficient. Let v denote the stage of iteration. We set the initial value of the state vectors (with v = 0) as

Then, for i = 1, ..., L (in increasing order of i), we update the state vectors by

Next, we update them in the reverse order. That is, for i = L, ..., 1 (in decreasing order of i),

We then set v ← v + 1, and iterate Eqs. (8) and (9) until {x i } converges. The acceleration parameter of ω = 1.4 was found effective. The convergence criterion is

where ||·|| R N denotes the Euclidean norm. This criterion is much less stringent than previous study (10-7), but this does not affect the prediction accuracy significantly. Convergence is typically achieved within 10 to 12 iterations for one protein.

It is noted that the algorithm and parameters presented in this subsection are determined only for efficiently solving the equation of state (Eq. 4). As such, the choice of the parameters such as ω or the threshold of convergence has little, if any, impact on the prediction accuracy.

Results and discussion

There are two main ingredients for the improved one-dimensional protein structure prediction in the present study. First is the use of large-scale critical random networks of 5000 dimension and 20 ensemble predictors. Second is the use of a large sequence database (UniRef100) for PSI-BLAST searches. As demonstrated in Table 1, the CRN method achieves remarkably accurate predictions. In comparison with the previous study [10] based on 2000-dimensional CRNs (10 ensemble predictors), the Q3 and SOV measures in SS predictions improved from 77.8% and 77.3% to 80.5% and 80.0%, respectively. Similarly, the average correlation coefficient improved from 0.726 to 0.746 for CN predictions, and from 0.601 to 0.613 for RWCO predictions. The 2-state predictions for CN yield, on average, Q2 = 76.8% per chain and 76.7% per residue, and Matthews' correlation coefficient of 0.533.

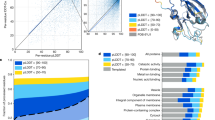

The dependence of the SS prediction accuracy on the dimension and ensemble size of CRNs shows clearly that larger scale CRNs lead to better predictions (Fig. 1). The difference between "CRN2000×10 (old)" and "CRN2000×10" (i.e., both 10 sets of CRNs with 2000-dimensional state vectors) signifies the improvement due to the use of larger sequence database, which is in fact quite significant. On the other hand, the difference between CRN3000×10 and CRN3000×20 exemplifies the difference due to the the ensemble size (i.e., 10 vs. 20). Increasing the ensemble size does improve the accuracy, but the effect is relatively small. The accuracy steadily increases as we use 4000 and 5000 dimensional state vectors. Finally, a small improvement is made by the use of the second stage filter. It may be possible to employ even larger state vectors to further improve the accuracy, but we did not try such possibility because of the hardware limitations.

A closer examination of the SS prediction results (Table 2) reveals the drastic improvement of β strand prediction from Q E = 61.9% to 69.3% (per residue). Although the values of Q C and are slightly lower than in the previous study by 0.6–1.0%, the accuracies of other classes have improved by 2.5–4%. Comparison of one prediction method with others is a difficult problem. Different methods are based on different data sets for both training and testing as well as the definition of secondary structural categories. In addition, prediction accuracies usually depend on the number of homologs used (see below), and the number of homologs, in turn, depends on the sequence database used. There are also ambiguities in what to compare: the best possible accuracies by any means or learning capacity of the method from a specified set of data, etc. With these cautions in mind, we present below the comparison between CRNPRED and other methods. The widely used PSIPRED program [4, 20] which is based on conventional feed-forward neural networks achieves Q3 of 78%. A more recently developed method, Porter, [5] which is based on bidirectional recurrent neural networks achieves Q3 of 79%. An even more intricate method based on bidirectional segmented-memory recurrent neural networks [14] shows an accuracy of Q3 = 73% (this rather low accuracy may be attributed to the small size of the training set used). To further examine the performance of CRNPRED in comparison with other methods, we extracted recently solved protein structures (71 protein chains) from the EVA server [21] that do not include any of the proteins in the training set. For this purpose, we trained the parameters by using 2254 SCOP (version 1.65) domain representatives. The application of CRNPRED to these proteins yielded the average Q3 of 77.3%, whereas the values for the same set of proteins obtained by PSIPRED[20] and Porter[5] were 78.5% and 79.8%, respectively. That is, the CRNPRED result was inferior to these methods by 1–3% on the basis of the EVA data set. When the data set was limited to those 16 proteins with more than 300 homologs in the sequence database, the Q3 obtained by CRNPRED, PSIPRED, and Porter were 79.9%, 79.6%, and 80.9%, respectively. CRNPRED seems to be sensitive to the number of homologs used for constructing a PSSM. Regarding the contact number prediction, CRNPRED, achieving Cor = 0.75, is the most accurate method available today. The simple linear method [8] with multiple sequence alignment derived from the HSSP database [22] showed a correlation coefficient of 0.63. A more advanced method based on support vector machines (local window-based) achieves a correlation of 0.68 per chain[9].

It is known that the number of homologs found by the PSI-BLAST searches significantly affects the prediction accuracies [19]. We have examined this effect by plotting the accuracy measures for a given minimum number of homologs found by PSI-BLAST (Fig. 2). For example, we see in Fig. 2 that, for those proteins with more than 100 homologs, the average Q3 for SS predictions is 82.2%. The effect of the number of homologs significantly depends on the type of 1D structure. For SS prediction, Q3 steadily increases as the number of homologs increases up to 100, but it stays in the range between 82.0 and 82.4 until the minimum number of homologs reaches around 400, and then it starts to decrease. For CN prediction, Cor also increases steadily but more slowly, and it does not degrade when the minimum number of homologs reaches 500. This tendency implies that CN is more conservative than SS during protein evolution, which is consistent with previous observations [23, 24]. On the contrary, RWCO exhibits a peculiar behavior. The value of Cor reaches its peak at the minimum number of homologs of 80 beyond which the value rapidly decreases. This indicates that RWCO is not evolutionarily well conserved. It was observed that the accuracies of SS and CN predictions constantly increased when the dimension of CRNs was increased from 2000 to 5000, but such was not the case for RWCO (data not shown). RWCO seems to be such delicate a quantity that it is very difficult to extract relevant information from the amino acid sequence.

Finally, we note on practical applicability of predicted 1D structures. We do not believe, at present, that the construction of a 3D structure purely from the predicted 1D structures is practical, if possible at all, because of the limited accuracy of the RWCO prediction. However, SS and CN predictions are very accurate for many proteins so that they may already serve as valuable restraints for 3D structure predictions. Also, SS and CN predictions may be applied to domain identification often necessary for experimental determination of protein structures. CRNPRED has been proved useful for such a purpose [25]. Although of the limited accuracy, predicted RWCOs still exhibit significant correlations with the correct values. Since RWCOs reflect the extent to which a residue is involved in long-range contacts, predicted RWCOs may be useful for enumerating potentially structurally important residues[26]. An interesting alternative application of the CRN framework is to regard the solution of the equation of state (Eq. 4) as an extended sequence profile. By so doing, it is straightforward to apply the solution to the profile-profile comparison for fold recognition [27]. Such an application may be also pursued in the future.

Conclusion

We have developed the CRNPRED program that predicts secondary structures (SS), contact numbers (CN), and residue-wise contact orders (RWCO) of a protein given its amino acid sequence. The method is based on large-scale critical random networks. The achieved accuracies are at least as high as other predictors for SS and currently the best for CN and RWCO, although the success for RWCO prediction is still limited. CRNPRED will be a useful tool for computational as well as experimental biologists who need accurate one-dimensional protein structure predictions.

Availability and requirements

Project name: CRNPRED

Project home page: http://bioinformatics.org/crnpred/

Operating system: UNIX-like OS (including Linux and Mac OS X).

Programming language: C.

Other requirements: zsh, PSI-BLAST (blastpgp), The UniRef100 amino acid sequence database.

License: Public domain.

Any restrictions to use by non-academics: None.

Abbreviations

- CRN:

-

critical random network

- SS:

-

secondary structure

- CN:

-

contact number

- RWCO:

-

residue-wise contact order

- 1D:

-

one-dimensional

- 3D:

-

three-dimensional.

References

Porto M, Bastolla U, Roman HE, Vendruscolo M: Reconstruction of protein structures from a vectorial representation. Phys Rev Lett 2004, 92: 218101.

Kinjo AR, Nishikawa K: Recoverable one-dimensional encoding of three-dimensional protein structures. Bioinformatics 2005, 21: 2167–2170. [Doi:10.1093/bioinformatics/bti330] [Doi:10.1093/bioinformatics/bti330]

Rost B: Prediction in 1D: secondary structure, membrane helices, and accessibility. In Structural Bioinformatics. Edited by: Bourne PE, Weissig H. Hoboken, U.S.A.: Wiley-Liss, Inc; 2003:559–587.

Jones DT: Protein secondary structure prediction based on position-specific scoring matrices. J Mol Biol 1999, 292: 195–202.

Pollastri G, McLysaght A: Porter: a new, accurate server for protein secondary structure prediction. Bioinformatics 2005, 21: 1719–1720.

Nishikawa K, Ooi T: Prediction of the surface-interior diagram of globular proteins by an empirical method. Int J Pept Protein Res 1980, 16(1):19–32.

Nishikawa K, Ooi T: Radial locations of amino acid residues in a globular protein: Correlation with the sequence. J Biochem 1986, 100: 1043–1047.

Kinjo AR, Horimoto K, Nishikawa K: Predicting absolute contact numbers of native protein structure from amino acid sequence. Proteins 2005, 58: 158–165. [Doi:10.1002/prot.20300] [Doi:10.1002/prot.20300]

Yuan Z: Better prediction of protein contact number using a support vector regression analysis of amino acid sequence. BMC Bioinformatics 2005, 6: 248.

Kinjo AR, Nishikawa K: Predicting secondary structures, contact numbers, and residue-wise contact orders of native protein structure from amino acid sequence using critical random networks. BIOPHYSICS 2005, 1: 67–74. [Doi:10.2142/biophysics.1.67] http://www.jstage.jst.go.jp/article/biophysics/1/0/1_67/ [Doi:10.2142/biophysics.1.67]

Kabsch W, Sander C: Dictionary of Protein Secondary Structure: Pattern recognition of hydrogen bonded and geometrical features. Biopolymers 1983, 22: 2577–2637.

Crooks GE, Brenner SE: Protein secondary structure: entropy, correlations and prediction. Bioinformatics 2004, 20: 1603–1611.

Baldi P, Brunak S, Frasconi P, Soda G, Pollastri G: Exploiting the past and the future in protein secondary structure prediction. Bioinformatics 1999, 15: 937–946.

Chen J, Chaudhari NS: Bidirectional segmented-memory recurrent neural network for protein secondary structure prediction. Soft Computing 2006, 10: 315–324.

Altschul SF, Madden TL, Schaffer AA, Zhang J, Zhang Z, Miller W, Lipman DL: Gapped Blast and PSI-Blast: A new generation of protein database search programs. Nucleic Acids Res 1997, 25: 3389–3402.

Pollastri G, Baldi P, Fariselli P, Casadio R: Prediction of coordination number and relative solvent accessibility in proteins. Proteins 2002, 47: 142–153.

Murzin AG, Brenner SE, Hubbard T, Chothia C: SCOP: A structural classification of proteins database for the investigation of sequences and structures. J Mol Biol 1995, 247: 536–540.

Bairoch A, Apweiler R, Wu CH, Barker WC, Boeckmann B, Ferro S, Gasteiger E, Huang H, Lopez R, Magrane M, Martin MJ, Natale D, O'Donovan C, Redaschi N, Yeh LS: The universal protein resource (UniProt). Nucleic Acids Res 2005, 33: D154-D159.

Przybylski D, Rost B: Alignments grow, secondary structure prediction improves. Proteins 2002, 46: 197–205.

McGuffin LJ, Bryson K, Jones DT: The PSIPRED protein structure prediction server. Bioinformatics 2000, 16: 404–405.

Eyrich VA, Marti-Renom MA, Przybylski D, Madhusudhan MS, Fiser A, Pazos F, Valencia A, Sali A, Rost B: EVA: continuous automatic evaluation of protein structure prediction servers. Bioinformatics 2001, 17: 1242–1243.

Sander C, Schneider R: Database of homology-derived protein structures. Proteins 1991, 9: 56–68.

Kinjo AR, Nishikawa K: Eigenvalue analysis of amino acid substitution matrices reveals a sharp transition of the mode of sequence conservation in proteins. Bioinformatics 2004, 20: 2504–2508.

Bastolla U, Porto M, Roman HE, Vendruscolo M: Principal eigenvector of contact matrices and hydrophobicity profiles in proteins. Proteins 2005, 58: 22–30.

Minezaki Y, Homma K, Kinjo AR, Nishikawa K: Human transcription factors contain a high fraction of intrinsically disordered regions essential for transcriptional regulation. J Mol Biol 2006, 359: 1137–1149.

Kihara D: The effect of long-range interactions on the secondary structure formation of proteins. Protein Sci 2005, 14: 1955–1963.

Tomii K, Akiyama Y: FORTE: a profile-profile comparison tool for protein fold recognition. Bioinformatics 2004, 20: 594–595.

Zemla A, Venclovas C, Fidelis K, Rost B: A modified definition of Sov, a segment-based measure for protein secondary structure prediction assessment. Proteins 1999, 34: 220–223.

Acknowledgements

We thank Yasumasa Shigemoto for helping construct the CRNPRED web interface. This work was supported in part by the MEXT, Japan.

Author information

Authors and Affiliations

Corresponding author

Additional information

Authors' contributions

A. R. K. designed and implemented the method, carried out benchmarks, wrote the first draft of the manuscript. A. R. K. and K. N. analyzed the results and improved the manuscript.

Electronic supplementary material

12859_2006_1140_MOESM1_ESM.gz

Additional File 1: List of the SCOP domains used for cross-validation. (compressed (gzip) archive of text files). (GZ 23 KB)

Authors’ original submitted files for images

Below are the links to the authors’ original submitted files for images.

Rights and permissions

This article is published under license to BioMed Central Ltd. This is an Open Access article distributed under the terms of the Creative Commons Attribution License (http://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Kinjo, A.R., Nishikawa, K. CRNPRED: highly accurate prediction of one-dimensional protein structures by large-scale critical random networks. BMC Bioinformatics 7, 401 (2006). https://doi.org/10.1186/1471-2105-7-401

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/1471-2105-7-401