Abstract

Background

Previous studies on tumor classification based on gene expression profiles suggest that gene selection plays a key role in improving the classification performance. Moreover, finding important tumor-related genes with the highest accuracy is a very important task because these genes might serve as tumor biomarkers, which is of great benefit to not only tumor molecular diagnosis but also drug development.

Results

This paper proposes a novel gene selection method with rich biomedical meaning based on Heuristic Breadth-first Search Algorithm (HBSA) to find as many optimal gene subsets as possible. Due to the curse of dimensionality, this type of method could suffer from over-fitting and selection bias problems. To address these potential problems, a HBSA-based ensemble classifier is constructed using majority voting strategy from individual classifiers constructed by the selected gene subsets, and a novel HBSA-based gene ranking method is designed to find important tumor-related genes by measuring the significance of genes using their occurrence frequencies in the selected gene subsets. The experimental results on nine tumor datasets including three pairs of cross-platform datasets indicate that the proposed method can not only obtain better generalization performance but also find many important tumor-related genes.

Conclusions

It is found that the frequencies of the selected genes follow a power-law distribution, indicating that only a few top-ranked genes can be used as potential diagnosis biomarkers. Moreover, the top-ranked genes leading to very high prediction accuracy are closely related to specific tumor subtype and even hub genes. Compared with other related methods, the proposed method can achieve higher prediction accuracy with fewer genes. Moreover, they are further justified by analyzing the top-ranked genes in the context of individual gene function, biological pathway, and protein-protein interaction network.

Similar content being viewed by others

Background

Tumor involves many pathways, distinct genes and exogenous factors, and is considered as systems biology diseases [1]. Despite tremendous efforts in research, the mechanism of tumor genesis and development has not been thoroughly known yet. Treatment of later stage cancers is often not therapeutically effective, and medical experts agree that early diagnosis of tumor is of great benefit to successful therapies. However, early tumor detection is extremely difficult using traditional tumor mass detection techniques such as X-ray imaging. Furthermore, different subtypes of tumor show very different responses to therapy, indicating that they are molecularly distinct entities. Thus, accurate classification of tumor samples based on molecular signatures is essential for efficient cancer treatment. Since the first paper on the classification of leukemia subtype based on Gene Expression Profiles (GEP) was published [2], this research field has been studied extensively and become a research hotspot [3–8]. Many datasets on different tumors have been published such as colon tumor [9], Small Round Blue Cell Tumor (SRBCT) [10], Diffuse Large B-Cell Lymphomas (DLBCL) [11], and prostate tumor [12], etc. All of the published tumor datasets have very high dimensionality and small sample size mainly due to limited resources and the time required for collecting and genotyping specimens [13]. Many supervised classification methods in pattern recognition, such as Support Vector Machines (SVM) [14, 15], Artificial Neural Networks (ANN) [16–20], k-Nearest Neighbor (KNN) [12, 21], and nearest shrunken centroids [22], have been successfully applied to GEP-based tumor classification over the last decade. All these studies have shown that GEP-based tumor classification methods hold great promises for early diagnosis and clinical prognosis of tumor. However, due to the challenges from the curse of dimensionality that the number of genes far exceeds the size of sample set, dimensionality reduction including feature extraction such as total principal component regression [23] and gene selection [2] should be performed before constructing classification model [24]. Compared to feature extraction, gene selection do not alter the original representation of genes, so it can not only improve the performance of tumor classification by removing redundant and irrelevant genes but also select informative gene subsets that may serve as cancer biomarkers and potential drug targets. More importantly, it may provide insight into the underlying molecular mechanism of tumor development. Therefore gene selection plays a very important role in tumor classification [25].

Generally, gene selection can be classified into two categories: Filters and Wrappers [26]. Filters are independent from the following classification stage. They evaluate the discriminability of genes by using only the intrinsic information of data themselves and subclass information, such as relative entropy [27], information gain and t-test [28], as well as Minimum Redundancy-Maximum Relevance (mRMR) [29]. Because gene selection is not associated to any specific classifiers, the gene subsets selected by Filters can avoid over-fitting phenomena. The advantage of Filters is that they can be easily catered to very high-dimensional datasets, and are computationally simple and fast [25]. On the contrary, Wrappers evaluate the discriminability of each gene subset using the evaluation function of learning algorithm, such as Genetic Algorithm (GA)/SVM method [30] and GA/KNN method [21]. Wrappers often deliver better performance than Filters in gene selection [26] because they utilize the feedback information of classification accuracy. However, their computational cost must be seriously taken into account [31] due to the fact that hunting for the smallest feature sets in a high-dimensional space is an NP-complete problem [32, 33]. Practically for all Wrappers a good solution is to adopt heuristic method in a condensed search space to approximately find out the smallest feature sets. One example is to adopt GA to find the most informative gene subsets [21, 34]. Another example is to combine gene ranking with clustering analysis to select a small set of informative genes [35].

Three general modes are commonly adopted in gene selection strategies: Increasing Mode, Decreasing Mode, and Hybrid Mode, which are respectively introduced as follows. 1) Increasing Mode selects a gene subset starting from empty set until a gene subset with the highest classification accuracy is selected through appending potential genes into the gene subset, such as Sequential Forward Search (SFS) [36]. 2) Decreasing Mode starts from the whole gene set to remove irrelevant and redundant genes, and keeps the least gene subset among the subsets with the same classification accuracy, such as the well-known Support Vector Machine-Recursive Feature Elimination (SVM-RFE) [14] that selects informative genes in a sequential backward elimination manner by starting with the whole gene set and eliminating one or several redundant gene in each iteration, and the extension of SVM-RFE(MSVM-RFE) [37] that solves the multi-class gene selection problem by simultaneously considering all subclasses during the gene selection process. 3) Hybrid Mode, such as Sequential Forward Floating Search (SFFS) algorithm [36] and Markov blanket-embedded genetic based gene selection algorithm [34], combines Increasing Mode with Decreasing Mode by starting from an arbitrary gene set. However, Reunanen [38] proved that intensive search strategies such as SFFS do not necessarily outperform a simpler and faster method like SFS, provided that the comparison is done properly.

In fact, due to the characteristics of GEP, more complex methods are not obviously superior to the simpler ones and the loss of biomedical meaning derived from the over-complex methods may be not sufficiently compensated by the little improvement of predictive performance [39]. Therefore, designing biologically interpretable methods that obtains minimum gene subsets with the highest or nearly highest classification accuracy is very important for robust tumor classification. Furthermore, identifying minimum gene subsets means discarding most noise and redundancy in dataset to the utmost extent, which may not only improve classification accuracy but also decrease the tumor diagnosis cost by suggesting the fewest biomarkers in clinical application as suggested by [35, 40, 41]. However, the curse of dimensionality from GEP implicates two problems in selecting a small gene subset with the highest or nearly highest accuracy from thousands of genes: over-fitting and selection bias, because it may be just by chance to find a small gene subset with perfect classification performance from such tremendous gene space even in random dataset [42–44]. So the over-fitting and selection bias problems must be avoided in order to obtain robust classification performance.

Ambroise et al.[45] found that overoptimistic results incurred by selection bias could happen if test set is not thoroughly excluded from gene selection process. Therefore, test set must be independent of the training process of classifier. Wang L.P. et al.[46] further pointed out that many previous studies, such as [47] and [10], had gained overoptimistic performance according to this criterion, and they proposed a simple method with resultant accurate tumor classification by using a very few genes. This method combines gene ranking with exhaustive search method to find minimum gene subsets so as to achieve the unbiased accuracy. Although their methods achieve good and unbiased classification results, the high computational cost makes it infeasible when the number of initially selected genes is very large (e.g., more than 300). Our previous work [48] designed a gene selection approach that was used to find the minimum gene subsets with the highest classification accuracy, but seriously upward bias occurred because that initially selecting differentially expressed genes on whole dataset and over-fitting is performed in gene selection stage. In this study, based on the Heuristic Breadth-first Search Algorithm (HBSA), we further construct a HBSA-based ensemble classifier and design a HBSA-based gene ranking method by counting its occurrence frequency on the basis of gene subsets selected only on training set so as to avoid over-fitting case and selection bias. Our novel method manages to simultaneously achieve the two conflict goals [49]: 1) Design a simple classifier to achieve nearly highest and unbiased prediction accuracy; and 2) Mine as many important tumor-related genes as possible, which may provide insight into the mechanism of tumor genesis and help find diagnosis biomarkers and new therapeutic targets [50].

In this following section, we firstly describe the classification problem and introduce the search strategy of HBSA. The implementation of HBSA is given, and its biomedical interpreter is also illustrated. Then two methods including HBSA-based ensemble classification and HBSA-based gene ranking are designed to obtain unbiased prediction accuracy and find important tumor-related genes. The results obtained on nine actual tumor datasets including three pairs of cross-platform datasets demonstrate the feasibility and effectiveness of our method. Comparison with other related methods also indicate the superiority of our method. The biomedical analysis of the selected genes in the context of individual gene function, pathway analysis and Protein-Protein Interaction (PPI) network further justify our methods.

Methods

Problem description

Let G = {g1, ⋯, g n } be a set of genes and S = {s1, ⋯, s m } be a set of samples. |G| = n denotes the number of genes, and |S| = m denotes the number of samples. The corresponding GEP can be represented as matrix X = (xi,j) mn , 1 ≤ i ≤ m, 1 ≤ j ≤ n, where xi,j is the expression level of gene g j in sample s i , and usually n ≫ m. Each vector s i in the gene expression matrix can be regarded as a point in n-dimensional space. And each of the m rows consists of an n-element expression vector for a single sample. Let L = {c1, ⋯, c k } denote the label set and |L| = k denote the number of subclasses. Usually, the subclass of each sample is known, so S × L = {(s i , l i )|s i ∈ Rn, l i ∈ Rn, l i ∈ L, i = 1, 2, ⋯, m} denotes the labeled sample space.

Selecting an informative gene subset T with the highest classification accuracy from gene space P(G) (the power set of G) is a crucial problem, but it is an NP-complete problem [33]. Moreover, which and how many genes are relevant to a specific tumor subtype are not clear for biomedical scientists so far. We therefore assume that the gene subsets with powerful classification ability are relevant to a specific tumor subtype. Let Acc(T) denote the classification ability of a gene subset T on sample set, which is usually measured by the accuracy of a classifier. We hope that the selected informative gene subset T simultaneously satisfies the following two goals:

where |T| denotes the cardinal number of gene subset T. The gene subset simultaneously satisfying (1) and (2) is called an optimal gene subset T*. Note that usually more than one optimal gene subset T* may exist in that the genes belonging to the same pathway in a cell usually have similar expression pattern and function. Optimal subsets A* comprise all of the optimal gene subsets T*, i.e., A* = {T*|T* ⊂ G T* simultaneously satisfies (1) and (2)}. Although finding only one gene subset T* is sufficient for tumor classification, finding as many optimal gene subsets as possible is very useful to gain an insight into tumor dataset structure and discover more important tumor-related genes.

Due to a large |G| = n (e.g., one sample usually includes 2 000 ~ 30 000 genes), it is impractical to apply an exhaustive search method to find out A* in the space of 2n gene subsets. A good solution is to adopt a heuristic method in a condensed search space to approximately find out A*. However, different gene subsets with different cardinal numbers may be selected by using different methods, so it is difficult to determine the minimum number of optimal gene subset for a specific tumor dataset by only designing methods. Thus we must balance the minimum number and the classification accuracy. Jain et al.[51] suggested a criterion (3) that the number of training samples per subclass is at least five times the number of features in designing a classifier to avoid the curse of dimensionality, i.e.

where k is the number of subclasses, m t is the number of training samples, and n s denotes the number of the selected genes, and for more complex classifier the ratio of sample size to dimensionality should be larger. For example, we should consider at most eight informative genes for two-subclass tumor dataset with only 80 training samples to design a classifier with acceptable generalization performance [52]. Considering that very high accuracy can always be obtained by selecting sufficient genes in a small size of sample set, we aim to find minimal gene subsets with nearly maximal accuracy rather than to obtain maximal accuracy with much more genes. Therefore, those gene subsets approximately satisfying (1) and (2) are also included into our optimal gene subsets A*. Based on these optimal gene subsets, how to obtain more reliable accuracy and find more important tumor-related genes are two key problems.

Gene pre-selection

It is widely accepted that tumor-related genes are differentially expressed ones, so the Filters-based gene ranking techniques are usually used to pre-select the differentially expressed genes from the original gene space even though those differentially expressed genes are not always tumor-related ones due to the noises in dataset. Its main idea is to assign each gene a single score that denotes the significance of each gene according to a certain scoring criterion. Many single variable methods such as t-test and Bhattacharyya distance are extensively used as discrimination criterions. However, these methods require the dataset to follow Gaussian distribution. Otherwise, these methods may not achieve optimal experimental performance. Deng et al.[53] reported that usually tumor datasets do not follow Gaussian distribution and showed that Wilcoxon rank sum test (WRST) is superior to t-test method in gene selection on three binary tumor datasets. However, WRST is only suitable for the binary classification problem. Kruskal-Wallis rank sum test (KWRST) is suitable for multi-class problem. The WRST or KWRST-based gene selection method was reported to perform very well in GEP-based tumor classification on the basis of the extensive comparison studies [24, 54]. Taking it into consideration that KWRST does not require a certain distribution of data and is also suitable for small dataset, in our experiments we use KWRST to pre-select an initial informative gene set G* = {g1, ⋯g p }, which contains p candidate genes with good discriminating ability.

Heuristic breadth-first search

Search strategy

We aim at finding as many optimal gene subsets as possible. When p, the number of the informative genes pre-selected by KWRST, is small, breadth-first search algorithm can realize our goals (1) and (2). However, when p is very big (e.g. p = 300), the required CPU time of such a search algorithm is intolerable. We therefore design a heuristic breadth-first search algorithm (HBSA) with heuristic information measured by Acc(T) to find the optimal gene subsets A*, which can drastically reduce the search space.

Usually, in the process of search an expanded tree is generated by HBSA from G* = {g1,.,g p }, which is the differentially expressed gene set pre-selected by KWRST, as shown in Figure 1, where Nij denotes a node with i representing the layer of the node (0 ≤ i ≤ p) and j the serial number of the node in layer i. The data structure of each node is defined as follows:

Node = Begin

set;

parent;

path;

c;

End

where Nij.set denotes a set only containing single gene, Nij.parent the parent node of the node Nij, and Nij.path a gene set containing all genes on the path from the root node N01 to the node Nij itself. Obviously the length of the gene set Nij.path is i, i.e., Nij.path = Nij.parent.path ∪ Nij.set. Let Nij.c = Acc(Nij.path) denote the classification accuracy of the gene subset Nij.path, serving as the heuristic information to guide the node selection in layer i, evaluated by SVM and KNN classifiers here. For the root node N01, N01.set = ∅ (∅ is empty set), N01.path = ∅, N01.parent = nil, and N01.c = 0. The root node is expanded to p child nodes guided by the heuristic information KWRST(g), where we set Nij.set = {g j } and N01.path = {g j }, g j ∈ G*, 1 ≤ j ≤ p. Next all p nodes are expanded again in next layer. Each node Nij(1 ≤ j ≤ p) in layer 1 is expanded to p − 1 child nodes, thus there are p(p − 1) nodes in layer 2, where N2j.set = {g i }, N2j.path = N2j.set ∪ N2j.parent.path, N2j.c = Acc(N2j.path), g i ∈ G* ∧ g i ∉ N2j.parent.path, where 1 ≤ j ≤ p(p − 1), 0 ≤ i ≤ p. Then we descendingly rank all nodes in layer 2 by their N2j.c, and examine whether Acc max (2) = max1 ≤ j ≤ p(p−1)(N2j.c) is greater than a given threshold Acc_Max or not, where Acc max (2) denotes the maximal accuracy in layer 2.If Acc max (2) ≥ Acc_Max, where Acc_Max is a given threshold, which indicates that at least one optimal gene subset is found, the searching process is stopped. Otherwise in layer 2 we select the w top-ranked nodes as open nodes to be expanded in next layer, where the parameter w denotes the search breadth. In fact, whatever w is set to, w always takes p value in layer 1. The rest may be deduced by analogy. Note that some gene subsets on different paths are possibly the same regardless of gene order in these gene subsets. Except nodes in layer 0 and 1, if the classification accuracy of a node has been previously computed, the accuracy of this node is set to zero so as to avoid the unnecessary expansion and this node is called closed node that will not be expanded in next layer. Finally, when the search process is stopped, all gene subsets in the w top-ranked nodes in last layer are selected into the optimal gene subsets A*. An example of HBSA is illustrated in Additional file 1: Figure S1.

The goal of HBSA is to select as many optimal gene subsets as possible only on training set. For each gene subset in A* constructed from empty set, its classification accuracy monotonously increases with the increase of its size, so when the classification accuracy of the gene subset achieves Acc_Max threshold or the maximal value (100%), the size of the gene subset obtained is minimal. It, therefore, is apparent that the optimal gene subsets in A* just approximately satisfy the two goals in (1) and (2). If the search breadth w is set appropriately, the error prone of searching process can be avoided to some extent so that as many optimal gene subsets as possible can be selected.

Obviously, the search breadth of increase with the increase HBSA does not exponentially of search depth. Thus our HBSA is a beam search algorithm, or an optimization of best-first search that searches a graph by ordering all partial solutions according to some heuristic information. As a result, only the best partial solutions of the predetermined number are kept as candidates. That is, only the most promising nodes are retained for further expanding at each layer of the search tree, while the remaining nodes are pruned off permanently [55]. Generally speaking, in local view the HBSA-based gene selection belongs to the Increasing mode, while in global view such gene selection belongs to Hybrid mode in that most of the gene combinations with lower classification accuracy are discarded in the search process. The HBSA can be implemented more flexibly. For example, it is unnecessary to select fixed w top-ranked nodes to be expanded in each layer, that is, w can be set to different values in different layers. There are two modes to set w. 1) For each layer, w can be determined by the distribution of the classification accuracy of all nodes in the corresponding layer. 2) Set different Acc_Max thresholds for different layers, and the given threshold of each layer must be less than that of its next layer, which leads to different numbers of the selected nodes in different layers. Thus, one advantage of HBSA is its adaptability.

Another advantage of HBSA is its biomedical interpretation. Suppose T i = {g1′, ⋯, g i ′} is a selected gene subset with high accuracy in the i-th layer, where g j ′ ∈ G*, 1 ≤ j ≤ i. If g’i+1 ∈ G* could be appended into T i to make Acc (Ti+1 = {g’1, ⋯, g’ i , g’i+1}) increase maximally, g’i+1 should be independent of or very weakly related to the genes in gene subset T i ideally. Otherwise, if Acc (Ti+1) increases only a little or even decreases, the subset Ti+1 will be discarded in layer i + 1. Therefore, ideally, all genes in the optimal set T* should be independent of each other, and each optimal gene subset T* selected should be an independent variable group. It implies that those genes in subset T* should be on the different regulatory pathways, but, due to much noise in GEP, the gene latterly appended into the gene subset might be weakly tumor-related.

Moreover, to distinguish which genes are more important ones, the significance of a gene is measured by its occurrence frequency counted in the optimal set A*. The bigger the occurrence frequency of a gene, the more important the gene. This definition also has its biomedical interpretation. For example, given two three-gene subsets G1 = {g1′, g2′, g 3 ′} and G2 = {g1″, g2″, g 3 ″}, where we assume that all genes in G1 are on pathway 1 and all genes in G2 are on pathway 2, as shown in Figure 2, and that the tumor-related strengths of the genes in G1 and G2 decrease with their orders in G1 and G2, respectively. Generally, gene subsets such as {g1′, g2′} and {g1″, g2″} might not be selected by HBSA because both Acc({g1′, g2′}) and Acc({g1″, g2″}) might be lower than that of other irrelevant gene combinations such as {g1′, g2″} due to the expression similarity of genes on the same pathways. Thus the potential gene combinations include nine gene subsets possibly selected: {g1′, g1″}, {g1′, g2″}, {g1′, g3″}, {g2′, g1″}, {g2′, g2″}, {g2′, g3″}, {g3′, g1″}, {g3′, g2″} and {g3′, g3″}. Particularly, such gene subsets including g1′ and g1″ tend to be selected by HBSA, while those gene subsets including g3′ and g3″ incline to be discarded by HBSA, which results in high occurrence frequency of those important tumor-related genes such as g1′ and g1″ in gene set A*. Thus, the resultant occurrence frequency of a gene is a reasonable measure of its importance from this point of view.

Implementation

In practice, there is no need to construct searching tree to obtain the optimal gene subsets A*. It is enough to preserve the potential gene subsets and their classification accuracy in the searching process. To conveniently implement HBSA, a classification matrix CM = (a i,j )w×p is defined as follows:

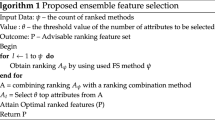

Adopting row label vector Row = (T1, T2, ⋯TW) to label every row of CM in turn, where Ti(1 ≤ i ≤ w) denotes the selected gene subsets. Adopting column label vector Column = ({g1}, ⋯ {g j }, ⋯ {g p }) to label each column of CM in turn, where g j ∈ G*,and a i,j = Acc(Row[i] ∪ Column[j]), where Row[i] denote the gene subset of the i-th row in CM matrix and Column[j] denote the single gene set of the j-th column in CM matrix, 1 ≤ i ≤ w, 1 ≤ j ≤ p. The framework of HBSA is shown in Algorithm 1, where Acc(T) is defined as the classification accuracy of gene subset T. For example, if Row[5] = {g1,g4} and Column[3] = {g6}, a5,3 = Acc(Row[5] ∪ Column[3]) = Acc({g1,g4,g6}), which is the prediction accuracy of the gene subset {g1,g4,g6}.

Algorithm 1: HBSA(M, p, w, Acc_Max, Depth)

Input: M denotes gene expression profiles, p the number of pre-selected genes, w the number of the selected gene subsets in each layer (searching breadth), Acc_Max a given maximal accuracy threshold, and Depth the upper bound of searching depth.

Output: A set of optimal gene subsets A*.

-

1:

For each gene g j ∈ G do

-

2:

B[j]: = KWRST(g j ); //Compute p-value for each gene by Kruskal-Wallisrank sum test.

-

3:

End For

-

4:

B: = Sort(B); //Rank B by ascending order.

-

5:

G*: = Selected(G, B, p); //Select the p top-ranked genes as initial informative gene set G*from original gene set G according to B.

-

6:

For each i ∈ {1,2,⋯, p}

-

7:

Column[i]: = {g i };

-

8:

Row[i]: = Column[i];

-

9:

End For

-

10:

iter: = 1; //The times of iteration.

-

11:

Repeat //If CM is firstly computed, CM is a symmetric matrix, so only the lower triangle matrix of CM is computed.

-

12:

Construct the classification matrix CM, label each row of CM with each component of Row vector, and label each column of CM with corresponding component Column vector.

-

13:

Compute classification matrix CM, where a i,j = Acc(Row[i] ∪ Column[j]), 1 ≤ i ≤ w, 1 ≤ j ≤ p; //Before a i,j is computed, the sample set labeled with Row[i] ∪ Column[j] must be normalized (where the sample mean is zero while the variance is 1); function Acc(.) is measured by SVM with Gaussian radial basis function (RBF) kernel or KNN classifier. Computing matrix CM is equivalent to doing the classification accuracy of all nodes in a layer shown in Figure 1.

-

14:

Convert CM to the vector V: = (v1, v2, ⋯, vw×p), and set V[(i − 1) × p + j].subset: = Row[i] ∪ Column[j], and V[(i − 1) × p + j].c: = ai,j, 1 ≤ i ≤ w, 1 ≤ j ≤ p, then rank vector V by V.c in descending order. Select w top-ranked components to reconstruct label vector Row[i]: = V[i].subset, 1 ≤ i ≤ w, where the row dimensionality of matrix CM can be dynamically changed according to the requirement.

-

15:

Accurancy: = max(V.c);

-

16:

iter: = iter + 1;

-

17:

Until (Accurancy ≥ Acc_Max) or (iter = Depth); //When the maximal classification accuracy is obtained or the iteration times is equal to Depth, the searching process ends.

-

18:

Select all gene subsets with the highest or nearly highest accuracy and append them into the optimal gene subsets A*.

-

19:

Return A*; //Return the optimal gene subsets A*, |A*| is the number of the optimal gene subsets, and ∪ A* might be the tumor-related gene set.

Algorithm end

Three stopping criterions are predefined in HBSA:

-

1)

When a gene subset whose accuracy on overall training set is no less than Acc_Max threshold is found, the algorithm ends.

-

2)

If no gene subset with Acc_Max accuracy is found, the HBSA ends with the maximum iteration times Depth, which can guarantee the end of this algorithm. Usually, we do not know how to select an appropriate Depth. If Depth is set inappropriately, the selected gene subsets might not be optimal.

-

3)

An alternative criterion is that the HBSA ends with the criterion |Accuracy iter+1 − Accuracy iter | < δ, where δ is set to a very small positive real number and Accuracy iter denotes the maximum classification accuracy in the iter-th iteration.

The most time-consuming operation in the HBSA is to compute Acc(T). If we assume that computing Acc(T) only costs one unit time, the time complexity of computing the classification matrix CM is O(w × p), and the time complexity for the whole algorithm is O(Depth × w × p). Although HBSA is an algorithm of polynomial time complexity, it is still very time-consuming. However, since the task of finding optimal gene subset is mainly performed in laboratory phase and the clinical tumor diagnosis phase only uses the selected gene subsets, which takes only a little CPU time (e.g., within at most several seconds on general PC computer). Thus, our HBSA-based gene selection method is feasible.

Evaluation criterion

We adopt two machine learning methods, KNN and SVM, to measure the classification accuracy, Acc(T), of a gene subset T in HBSA, respectively. KNN is a common non-parametric method. To classify an unknown sample x, KNN extracts the k closest vectors from training set by using similarity measures such as Euclidean distance, and decides the label of the unknown sample x by using the majority subclass label of the k nearest neighbors. k is set to an odd number to avoid tied votes. In our experiments Euclidean distance and five nearest neighbors are adopted to measure the similarity of samples and make decisions. The HBSA with KNN is called HBSA-KNN.

SVM [56] with Gaussian Radial Basis Function (RBF) K(x y) = exp(−γ||x − y ||2) (SVM-RBF) is also adopted to evaluate the classification performance of the selected gene subsets. LIBSVM [57] is used in the study, where the combinations of penalized parameter C and Gaussian kernel parameter γ need to be optimized when training SVM classifier. Parameter C is the penalty factor of the samples classified mistakenly, while parameter γ dominates the sensitivity to the change of input data. Because of the large search space, the general grid-search method (for example, C = 2−5, 2−4, ⋯, 215γ = 2−15, 2−14, ⋯, 23) [58, 59] is time-consuming in finding the optimal parameter combinations (C γ). Furthermore, we find that normalized tumor datasets are not sensitive to parameter C, and that search space can be reduced with parameter γ being set within the range of [10-5,10] and C being set to 200 and 400 or even fixed to 200. Specifically, if γ takes the value in O(10-1), γ may take 0.1, 0.2, ⋯, 0.9, respectively; if γ takes the value in O(10-2), γ may take 0.01, 0.02, ⋯, 0.09, respectively. And the others are set similarly. The HBSA with SVM is called HBSA-SVM.

The k-fold Cross-Validation (k-fold CV) is commonly used to evaluate classification model. Here it is applied only on training set to measure Acc(T). If k is set to Tr n (the size of training set), the k-fold CV is called Leave-One-Out Cross-Validation (LOOCV). If k is set to 2, the k-fold CV is known as the holdout method. When k is set too low, the accuracy of k-fold CV tends to have high bias and low variance. On the contrary, when k is set too high (e.g., k = Tr n ), the accuracy of k-fold CV will have low bias but high variance [51, 60]. Breiman et al.[61] found that 10-fold CV method outperforms the LOOCV method to some extent. Ambroise et al.[45] and Asyali et al.[52] also recommended 10-fold CV methods in tumor classification, but whether 10-fold CV method outperforms LOOCV method depends on datasets. To balance the bias and variance, here we design a new method to evaluate the experimental results. Let CV(k) denote the accuracyof k-fold CV classification, where 2 ≤ k ≤ m and m is the total number of samples in training set. Then the mean of the accuracy is defined as:

The standard deviation is defined as:

This method is called Full-fold CV method. The mean of the accuracy evaluated by this method is called Full-fold CV accuracy. Since the computational cost of HBSA would greatly increase by using Full-fold CV to compute Acc(T), 10-fold CV is still used to evaluate Acc(T) as the heuristic information of HBSA. While Full-fold CV method is only used to evaluate the resultant gene subsets in A* with the highest or nearly highest 10-fold CV accuracy.

The implementation of HBSA-KNN is similar, but different in some ways, to that of HBSA-SVM. For HBSA-KNN, we randomly divide training set into 10 parts when using 10-fold CV method, but different divisions can slightly affect the experimental results. To eliminate the effects of different divisions, HBSA-KNN is performed five times with different divisions of training set, thus we could obtain five optimal sets A*. Then the occurrence frequency of each gene is counted from the obtained five optimal sets A*. However, for HBSA-SVM, the division of training set for 10-fold CV method, provided by LIBSVM, is definite in each run. It is sufficient to perform HBSA-SVM only once.

Usually, for HBSA-SVM, final prediction accuracy is evaluated on independent test set by SVM-RBF classifier constructed by optimizing parameter pair only on training set, which is called HBSA-SVM (Unbiased). However, more than one parameter pairs can make the constructed classifiers obtain the highest 10-fold CV accuracy on training set, while the classifiers constructed with these different parameter pairs obtain different prediction accuracy on independent test set. So, in contrast with HBSA-SVM (Unbiased), a biased HBSA-SVM, selecting the parameter pair that makes the constructed classifier obtain the highest prediction accuracy on test set, is also used to evaluate the performance of the selected gene subsets, which is called HBSA-SVM (Biased).

Receiver Operator Characteristics (ROC) analysis is a visual method for evaluating the performance of binary classification model [62].Usually, a few performance measures can be derived from the number of true positives (TP), true negatives (TN), false positives (FP) and false negatives (FN) in test set to measure the performance of classification model, i.e., the true-positive rate or sensitivity (TPR), the false-positive rate(FPR), positive predictive value(PPV), and negative predictive value(NPV). Here ROC curve that is a TPR (on the y_axis) versus FPR (on the x_axis) plot is used, and the Area Under ROC Curve (AUC) is used to measure the performance of classification model.

Analysis framework

Flowchart of analysis

After HBSA is applied to gene selection from the differentially expressed genes initially selected by KWRST on training set, numerous optimal gene subsets are obtained. However, finding optimal gene subsets in such tremendous gene space tends to over-fit training set. Some tumor-unrelated genes are very likely to be selected mistakenly into optimal gene subsets, which might introduce serious bias in the gene selection. The generalization performance of these gene subsets containing tumor-unrelated genes is possibly very poor in predicting unknown tumor samples. To address this problem, we design a HBSA-based ensemble classifier and a HBSA-based gene ranking method to obtain unbiased prediction accuracy and find as many important tumor-related genes as possible. The flowchart of our analysis method is shown in Figure 3.

HBSA-based ensemble classifier

The HBSA-based ensemble classifier consists of the individual classifiers constructed from the optimal gene subsets, and the corresponding prediction accuracies (Biased and Unbiased) are determined by the ensemble classifiers constructed by SVM (Biased) and SVM (Unbiased) on test set, respectively. Final decisions are made by simple majority voting strategy in our experiments. To illustrate the results, the construction of an ensemble SVM classifier with w individual SVM classifiers is shown in Figure 4, where each individual SVM classifier is constructed by each optimal gene subset T obtained by HBSA-SVM.

To measure the reliability of the classification for each test sample by the ensemble classifier constructed with N individual classifiers, a confidence level is defined. Assume that a dataset has k subclasses denoted by L = {c1, ⋯, c k }, a test sample is assigned a voting vector (m1, ⋯, m k ), where each component m i denotes the number of the obtained votes for the corresponding subclasses c i in L = {c1, ⋯, c k }, where . Let m max and m sec denote the maximum and next maximum number in voting vector (m1, ⋯, m k ), respectively. The confidence level conf of a test sample can be defined as conf = m max /m sec . If m sec = 0, conf is set to N, where 1 ≤ conf ≤ N. The bigger the conf is, the more reliably is the test sample correctly or mistakenly classified.

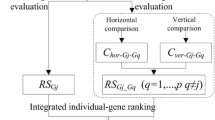

HBSA-based gene ranking method

The HBSA-based gene ranking method, which ranks genes according to the occurrence frequency count of each gene in the final optimal gene subsets A*, is designed to find important tumor-related genes. That is, the significance of a gene is measured by its occurrence frequency. The top-ranked genes with the highest occurrence frequency are considered to be the most important tumor-related ones and should have superior and robust generalization performance.

Results

Tumor datasets

Nine publicly available tumor datasets are applied: Small Round Blue Cell Tumor (SRBCT) [10], Acute Lymphoblastic Leukemia (ALL) [63], Colon tumor [9], Leukemia72 [2], Leukemia52 [64], Diffuse Large B-cell Lymphomas (DLBCL77) [11], DLBCL21 (obtained from R. Dalla-Favera’s lab at Columbia University) [65], Prostate102 [12], and Prostate34 [66] datasets. Among these datasets, three pairs of cross-platform datasets are used to evaluate the generalization performance for our classification model. The division of training set and test set is shown in Table 1. More details about the datasets are available in the Additional file 1: Tables S1-S4.

HBSA-SVM classification performance

The gene selection procedure of HBSA-SVM is performed only on training set. Considering the computational performance of our computer, we initially select 300 top-ranked genes by KWRST. Then training sets and test sets are normalized by genes using z-score normalization method that makes dataset with mean zero and standard deviation one, respectively. Other parameters in HBSA are set: p = 300, w = 300, Acc_Max = 100, and Depth = 15, respectively. After the experiments are performed on six training sets, for each dataset 300 optimal gene subsets are selected according to 10-fold CV method. Part of the optimal gene subsets selected are shown in Table 2, which shows that at least one gene subset with 100% training accuracy is always obtained for each tumor dataset. It is also found that the prediction accuracy of HBSA-SVM (Unbiased) is always not greater than that of HBSA-SVM (Biased). The experiments further indicate that searching optimal gene subsets costs high computationally. For example, for the ALL dataset, it costs about 11 days by using HBSA-SVM at the worst case on our computational platform of Core (TM) 2 Duo 2.20 GHz CPU and 2 G RAM.

Over-fitting occurs in selecting gene subsets on all six training sets as shown in Additional file 1: Table S2. For example, for the leukemia dataset, 2-gene subset {X95735, Y07604} with 100% training accuracy has only 73.08% prediction accuracy on Leukemia52. For SRBCT, 3-gene subset {859359, 769716, 134748} with 100% training accuracy obtained only 60% prediction accuracy. Some gene subsets may obtain very high prediction accuracy (e.g., for DLBCL77, 3-gene subset {L06132, D78134, Z35227} with 100% training accuracy also obtains 100% prediction accuracy on DLBCL21). It may be only by chance to find such gene subsets because the high training accuracy obtained by this gene subset cannot represent good generalization performance of the obtained classifier due to the biased gene selection procedure on whole training set. In fact, some gene subsets with nearly 100% training accuracy obtaining very high prediction accuracy on test set indicate that these gene subsets probably contain important tumor-related genes. For example, for the leukemia dataset, two genes {X95735, M63838} with only 97.22% training accuracy can obtain 96.15% prediction accuracy on Leukemia52, in which X95735 is an important tumor-related gene. Moreover, some redundant or noise genes may potentially degrade the classification performance by masking the contribution of the relevant genes. For example, for SRBCT, 2-gene subset {859359, 769716} can obtain 70% prediction accuracy on the corresponding test set, but 3-gene subset {859359, 769716, 134748} only obtains 60% prediction accuracy. Similarly, HBSA-KNN can also lead to over-fitting phenomena.

The genes in the same gene subset usually come from different pathways. For instance, for the ALL dataset, the six genes in gene subset {BLNK(AF068180), AP1B1(L13939), PTP4A3(AF041434), MPP1(M64925), IDI1(X17025), PARP1(J03473)}with 100% training accuracy take part in different pathways. BLNK takes part in base excision repair and B cell receptor signaling pathways. PARP1 takes part in the primary immunodeficiency pathway. For the gene subset {DNTT(M11722), LAIR1(AF013249), PTTG1IP(Z50022), IDI1(X17025), PARP1(J03473), CDKN1A(U03106)}, although these genes are enriched in 12 important pathways, there are no two genes taking part in the same pathway. For the SRBCT dataset, the 3-gene subset {CD99 (1435862), RCVRN(383188),ERBB2(141768)}are involved in 11 major pathways, but all these three genes come from different pathways. The genes in subset {CDK6(295985), NF2(769716),GNA11(221826)} participate in 13 important pathways such as non-small cell lung cancer, p53 signaling pathway, etc., but there are no two genes in the gene subset on the same pathway. In addition, we find that the majority of the genes selected are involved in important tumor-related biological pathways. For example, the gene CDK6 is involved in non-small cell lung cancer, p53 signaling pathway, Melanoma, etc., in total 9 pathways. Thus, the results are generally consistent with our interpretation of HBSA.

Ensemble classifier

HBSA-SVM-based ensemble classification

To solve the above over-fitting problem, HBSA-SVM-based ensemble schemes are constructed by using a simple majority voting strategy to integrate the individual classifiers. The number of the gene subsets used to construct an ensemble classifier is determined by experiments. The results of three different ensemble classifiers based on different modes are shown in Table 3. For example, for the leukemia dataset, the item Top 300 gene subsets, 300 top-ranked gene subsets with the highest training accuracy are selected, but only 147 gene subsets among the 300 gene subsets share with the Leukemia52 test set. Thus the final ensemble classifier consists of the 147 individual classifiers respectively constructed from these 147 common gene subsets. The corresponding prediction accuracies (Biased and Unbiased) are obtained on the Leukemia52 test set, respectively.

To analyze the reliability of classification, the confidence level for each sample is calculated. Taking the colon tumor dataset as an example, the confidence levels of 20 test samples are shown in Table 4 by HBSA-SVM(Unbiased), in which the 7th, 9th and 13thsamples are mistakenly classified with very high confidence levels, 3.3478, 3.1096 and 99, respectively. Compared with the results in Additional file 1: Table S30 obtained by HBSA-SVM(Biased), most of the samples mistakenly classified in Table 4 are the ones mistakenly or correctly classified with low confidence levels in Additional file 1: Table S30.

HBSA-KNN-based ensemble classification

HBSA-KNN-based ensemble classifier is also constructed by using majority voting strategy to combine 300 individual classifiers constructed by 300 optimal gene subsets selected by HBSA-KNN. Unlike HBSA-SVM, for each dataset random division of 10-fold CV on training set are run for five times, and the average of the five accuracies is used as the final prediction accuracy. For the cross-platform datasets, only the gene subsets shared between the training set and the corresponding test set within the selected 300 gene subsets are used to construct an ensemble classifier. The prediction accuracies of the constructed ensemble KNN classifier are listed in Table 5. Compared with prediction accuracies obtained by the ensemble HBSA-SVM(Unbiased) classifier, shown in Table 3, the prediction accuracy of the ensemble KNN classifier is no less than that of the ensemble SVM(Unbiased) classifier except the prostate dataset.

HBSA-based gene ranking

To prioritize genes so as to find important tumor-related genes, we simply count the occurrence frequency of each gene in all of the optimal gene subsets to measure the gene significance. The 50 top-ranked genes selected by HBSA-SVM and HBSA-KNN for each dataset are shown in Additional file 1: Tables S5-S10 and S17-S22, respectively. It is shown that only few genes have relatively higher frequency, and that the respective top 10 genes selected by HBSA-SVM and HBSA-KNN are mostly shared on the same dataset. The result suggests that our HBSA-based gene ranking method is robust and valid.

We also find that the most frequently selected genes are not always the most differentially expressed ones. For DLBCL, MCM7 that is ranked the first by KWRST is ranked the third in the corresponding list of gene frequency by HBSA-SVM. However, RHOH that is ranked the first in the frequency list by HBSA-SVM is ranked the 258-th by KWRST. However, for SRBCT, most of the top 10 genes selected by HBSA-SVM are included in the top 10 genes selected by KWRST, suggesting that the most differentially expressed genes in this dataset are the most important tumor-related genes. Therefore the most important tumor-related genes are not necessarily the most differentially expressed ones.

Figure 5 shows the relationship between the occurrence frequency of genes and their rank orders. An important aspect of the occurrence frequency of gene in Figure 5 is the linearity of the log-log plots, so it can be inferred that the occurrence frequency of the selected genes follows power-law distribution with respect to the number of genes whose frequencies are greater than the corresponding frequency. This discovered trend is consistent with a previous study [67]. The gene frequency of HBSA-KNN is the accumulated frequency of gene from five runs of HBSA-KNN for each dataset, which indicates the characteristic of rich-get-richer.

Figure 6 shows that the classification accuracy varies with the number of top-ranked genes sorted by the gene frequencies for HBSA-SVM(Biased), HBSA-SVM(Unbiased) and HBSA-KNN, respectively. Table 6 lists the prediction accuracies of some representative number of top-ranked genes selected by HBSA-SVM(Biased), HBSA-SVM(Unbiased) and HBSA-KNN on independent test sets. It is found that a few top-ranked genes are enough for achieving the highest or nearly highest classification accuracy. Moreover, the prediction accuracy of HBSA-KNN is comparable to HBSA-SVM(Unbiased). For example, for HBSA-KNN on SRBCT, five genes can obtain 100% prediction accuracy, while 28 genes are needed to obtain the same accuracy by HBSA-SVM(Unbiased). High accuracy obtained with few genes could be more objective and reliable than that with much more genes since the latter easily leads to classification bias [68]. Interestingly, for HBSA-SVM and HBSA-KNN, when the number of the top-ranked genes approximates the number of subclasses in dataset, the prediction accuracy of the classification model constructed by these gene subsets can achieve similar performance or even outperform that of the corresponding ensemble classifier.

Comparison of HBSA-KNN and HBSA-SVM

The ensemble HBSA-KNN classifier slightly outperforms ensemble HBSA-SVM(Unbiased) classifier in prediction accuracy. Further comparison of the prediction accuracies of HBSA-SVM(Biased), HBSA-SVM(Unbiased) and HBSA-KNN varying with different number of top-ranked genes is shown in Figure 6. The comparison indicates that HBSA-KNN is slightly superior to HBSA-SVM(Unbiased) in prediction accuracy when the number of top-ranked genes selected is small enough. To further reveal how the biased SVM affect classification results, the gene list obtained by HBSA-KNN is further evaluated by SVM-RBF classifier constructed by biased and unbiased methods again, respectively (Additional file 1: Figure S2).The two methods are named HBSA-KNN-SVM(Biased) and HBSA-KNN-SVM(Unbiased), respectively. Additional file 1: Figure S2 shows that HBSA-KNN-SVM(Unbiased) can always obtain the prediction accuracy no greater than that of HBSA-KNN-SVM(Biased) and that HBSA-KNN is also slightly superior to HBSA-KNN-SVM(Biased) in prediction accuracy when the number of top-ranked genes is small. To further evaluate the effectiveness of HBSA-KNN in gene selection, the eight top-ranked genes are selected to construct HBSA-SVM(Unbiased) and HBSA-KNN-SVM(Unbiased) classifiers on four binary datasets, respectively, which are evaluated by ROC (Figure 7). It is clear that HBSA-KNN-SVM(Unbiased) is slightly superior to HBSA-SVM(Unbiased) in AUC, indicating that the classification ability of the gene subsets selected by HBSA-KNN is slightly stronger than that obtained by HBSA-SVM.

Comparisons with other related methods

Compared with the exhaustive search method, proposed by Wang L.P. et al.[46], our methods are less computationally demanding. Moreover, the ensemble strategy adopted is also superior to their average strategy which averages the prediction accuracy of all gene subsets selected from training set. Coincidently, the 3-gene subsets {IGF2, AF1q(MLLT11), CD99},selected by exhaustive search method [46], with 95% prediction accuracy is identical to the first three genes selected by our HBSA-KNN (see Additional file 1: Table S17), which indicates that our HBSA is feasible and can achieve the same good results as the exhaustive search method. The Prediction Analysis of Microarrays (PAM) proposed by Tibshirani et al.[22] can identify a small subset of genes that best characterize each subclass by shrinking weak components of class-centroids with a shrinkage parameter for tumor subclass prediction. Its experimental results on SRBCT and leukemia datasets demonstrated that their method is very efficient in finding informative genes with high classification accuracy. Of the 43 genes selected by PAM on SRBCT dataset, 21 genes are also found by our method on the same dataset (where only the 50 top-ranked genes are considered as shown in Additional file 1: Table S5). On the other hand, although one of their goals was to find the smallest gene subsets, the size of their selected gene subsets with satisfactory accuracy was still too large from the viewpoint of classification and clinical diagnosis.

In addition, Dabney et al.[69, 70] proposed a Classification to Nearest Centroids (ClaNC) method for class-specific gene selection. To find the theoretically optimal gene subset, they further provided a theoretical result showing how to determine the gene subsets of a given size that maximizes the classification accuracy for high-dimensional nearest centroid classifiers. Their results suggest that ClaNC outperforms PAM in prediction accuracy. However, before gene selection, ClaNC requires a given number of genes, which is difficult to determine how many genes are appropriate.

Our method is similar to PAM and ClaNC methods in three aspects. 1) Find minimum gene subsets with maximum accuracy. 2) Consider the discriminative power of multiple genes when searching for gene subsets. 3) Seek the simplest method with biomedical interpretability.

To achieve more objective comparison, the classification performance of PAM, ClaNC and our method are obtained on the two cross-platform datasets (leukemia and DLBCL) that are realigned by those shared genes between the training set and the corresponding test set, respectively. For the leukemia dataset, 4606 genes are shared between Leukemia72 and Leukemia52. For DLBCL, 4072 genes are shared between DLBCL77 and DLBCL21.

Since HBSA-KNN is slightly superior to HBSA-SVM(Unbiased) in gene selection, we just compare HBSA-KNN with PAM and ClaNC methods in prediction accuracy. The conclusion from the comparisons of the classification accuracy, shown in Table 7, is that although ClaNC outperforms PAM in accuracy, the accuracy obtained by ClaNC is lower than ours on all six independent test sets when the number of the top-ranked genes selected is small enough, i.e., when the size of the selected gene subset approximately satisfies (4) as shown in Table 7. For example, for ALL (six subclasses), when the number of the top-ranked genes selected by HBSA-KNN is five, 94% prediction accuracy can be obtained, while ClaNC obtains only 86% accuracy with six genes (one gene selected per subclass). For SRBCT (four subclasses), our method obtain 100% prediction accuracy with only five genes, while eight genes (two genes selected per subclass) are needed to obtain 95%prediction accuracy by ClaNC. For the prostate dataset (two subclasses), our method obtains 88.24% accuracy with two genes, while only 74% accuracy is obtained by ClaNC with the same number of genes. Obviously, our method can achieve higher accuracy with the same or fewer top-ranked genes. From Table 7 we can see that the PAM method does not performs well in the classification of some cross-platform datasets because the same accuracy is obtained when different number of genes for the DLBCL and Prostate cross-platform datasets are used, which are possibly caused by the fact that the cross-platform training set and test set are not on the same measurement scale.

Note that the prediction accuracy may be affected by different data normalization methods. The results in Table 7 are obtained with the z-score normalization method on the tumor datasets. If we use another 0–1 normalization method that scales all data into the range of [0, 1] with the formula (x − min(x))/(max (x) − min(x)), where x is a vector that denotes a set of expression values of a gene in different samples, the results may vary with the same gene subset as shown in Table 7 and Additional file 1: Table S29. For example, for the leukemia dataset, the first three genes obtain 94.23% prediction accuracy on the Leukemia52 test set with the former z-score method, but the same three genes can obtain 98.08% prediction accuracy with the latter 0–1 normalization. The prediction accuracies of PAM and ClaNC methods are obviously improved on the cross-platform prostate dataset normalized with 0–1 normalization method, but the prediction accuracy becomes worse on the leukemia dataset similarly normalized. The results with 0–1 normalization also indicate that our method is still superior to PAM and ClaNC in prediction accuracy when the number of top-ranked genes is small enough.

We further compare HBSA-KNN-based gene ranking method with the other two well-known gene ranking methods: Kruskal-Wallis rank sum test (KWRST) and Relief-F [71]. The results in Figure 8 show that our method consistently outperforms KWRST and Relief-F in prediction accuracy when the number of top-ranked genes is small enough. Although for the prostate dataset only top two genes obtain high prediction accuracy (88.24%) that is obviously greater than that of KWRST and Relief-F with the same number of genes, our method is still effective because this case still conforms to our goal that the most important tumor-related gene is ranked first. However, our method aims at finding as many more important tumor-related genes as possible, even though the important genes might include redundant ones from the viewpoint of classification. Thus the prediction accuracy might be worse as the number of top-ranked genes increases. For example, the prediction accuracy curves of leukemia and prostate in Figure 8 appear the situation.

Moreover, better results can be achieved with more pre-selected genes by KWRST and with an acceptable search breadth increased in HBSA. For example, on the cross platform leukemia dataset, with the top 400 genes pre-selected by KWRST and the search breadth w of 450, the top eight genes selected by HBSA-KNN are {L09209, M23197, M11722, X95735, HG1612-HT1612, X62654, U77948, M31523} in which three genes L09209, HG1612-HT1612 and X62654 are not in the Leukemia 52 test set. Among these shared genes, the set of the top three genes {M23197, M11722, X95735} obtains 94.23% prediction accuracy on the independent test set, and the top five genes {M23197, M11722, X95735, U77948, M31523} and the top 84 genes can result in 96.15% and 98.08% prediction accuracies, respectively. More importantly, these important genes selected with this search breadth are shared with those genes shown in Additional file 1: Table S21. For the ALL dataset, the top eight genes selected by HBSA-KNN are {36985_at, 38242_at, 32207_at, 1287_at, 37470_at, 35974_at, 34168_at, 38518_at} in which only the rank orders of a few genes are changed compared with the same genes in Additional file 1: Table S18. The top seven, 20 and 25 genes can obtain 96%, 98% and 99% prediction accuracies, respectively, which are obviously improved compared with the corresponding results (shown in Tables 6 and 7) with less preselected genes and narrower search breadth. In conclusion, if the number of the initially selected genes and the search breadth are more appropriate, the prediction accuracy by HBSA will be further improved, which further proves that our method is indeed robust.

Biological validation of the top-ranked genes

The association of top-ranked genes with tumor is analyzed in the context of individual gene function, pathway analysis, and protein-protein interaction (PPI) network to validate the effectiveness of the results. We first validate the top-ranked genes as tumor-related genes by known cancer gene list. Some unvalidated genes are validated by Cancer Linker Degree (CLD) analysis and relevant biomedical literature. Moreover, the selected genes are further validated by the fact that some pathways involving in the selected genes are closely related to tumor development. The following analysis is mainly based on the results obtained by HBSA-KNN.

Individual gene based literature validation

The top 50 genes selected by HSBA-SVM and HSBA-KNN are listed in Additional file 1: Tables S5-S10 and S17-S22, respectively. The known cancer genes were downloaded from the website (http://cbio.mskcc.org/cancergenes). 1086 known cancer genes are collected by querying the website for “oncogene”, “tumor suppressor” and “stability” [72]. The top 50 genes selected are analyzed through relevant biomedical literature. Here two case studies of the top-ranked genes on leukemia and prostate are presented as following. More analyses are available in the Additional file 1: Section 11.

Among the top 50 genes selected by HSBA-KNN on leukemia dataset, 10 genes (20%) are known cancer genes as listed in Additional file 1: Table S21. For other genes, by means of biomedical literature search and CLD calculation validation only on those among top 10 ones, we have successfully validated all the ten genes. The evidence of their involvement in cancer and the number or PubMed IDs of references documenting each gene-cancer association are shown in Table 8. CD33 (M23197) is expressed on the malignant blast cells in most cases of acute myeloid leukemia (AML) but not on normal hematopoietic pluripotent stem cells [73]. In vivo ablation of CD33+ cells achieves good results when treating patients with acute myeloid leukemia [74]. MARCKSL1, also named multidrug resistant associated protein (MRP), are found to be increasedly expressed in some vincristine-resistant cell lines [75]. SP3, a nuclear protein identified in numerous different biochemical assays at translocation break points, is associated with a subtype of acute myeloid leukemia [76]. CD63 (X62654) belongs to a newly defined family of genes for membrane proteins including CD33 which was recognized by monoclonal antibodies inhibitory to human T cell leukemia virus type 1-induced syncytium formation [77]. TCF3 (M31523) is involved in 19p13 chromosome rearrangement and acts as a tumor suppressor gene in B-cell precursor acute lymphoblastic leukemia [78]. CST3, also named cystatin C, was elevated in cancer patients than in controls.

For the prostate dataset, among the top 50 genes ranked by HSBA-KNN, 12 genes (24%) are known cancer genes (Additional file 1: Table S22). For other selected genes, we perform manual literature validation only on those among top 10 ones. We successfully validate nine of these ten genes (Table 9).

A major 11-locus haplotype of SNPs in the HEPSIN gene (HPN), is significantly associated with prostate cancer, which supports that HPN (X07732) is a potentially important candidate gene involved in prostate cancer susceptibility [79]. SLC25A6, also named ANT3, is selectively required for TNF-α and oxidative stress-induced cell death in MCF-7 cells [80]. KIBRA is involved in estrogen receptor transactivation in breast cancer cells. Altered RBP1 expression and hypermethylation are common in prostate carcinoma. Both prostate adenocarcinoma and intraepithelial neoplasia show frequent RBP1 overexpression. CHD9 and NELL2 have CLD of four and two respectively as shown in the following network based analysis. The gene A2R6W1 was identified from Aspergillus niger and is hypothesized as a nucleus protein binding zinc ion and DNA for transcription regulation. Its relation with cancer deserves investigation.

Network-based analysis for top 10 genes

Aragues et al.[81] demonstrated that CLD of a protein, defined as the number of cancer genes to which it is connected, was a good indicator of the probability of being a cancer gene. We apply a protein-network-based method to analyze the neighborhood partners of the selected genes using all interactions in the Human Protein Reference Database (HPRD) [82]. The results are shown in Additional file 1: Figures S4-S8. For the leukemia dataset, among the 10 top-ranked genes, two genes (ZYX and CCND3) are cancer ones, five (APLP2, CD33, SP3, CD63, PSME1) and one other genes (CST3) are directly or indirectly associated with cancer genes, respectively. The CLDs of APLP2, CD33, SP3, CD63, PSME1, which are ranked first, second, fifth, seventh and ninth, respectively, are 4, 3, 9, 2 and 1, respectively. TTC3 and MARCKSL1 show no cancer gene linking, of which MARCKSL1 are increasedly expressed in some vincristine-resistant cell lines [75].

For the prostate dataset, our results show that three genes, namely MAF, ABL1 and SERPINB5, are cancer ones and most other top-ranked genes have a direct interaction with known cancer genes. The CLD of MAF, HPN, ABL1, CHD9, WWC1, NELL2 and RBP1 is 7, 1, 46, 4, 2, 2 and 4, respectively. We therefore infer that even if the remaining few genes not reported as cancer genes by previous studies are very possibly play a critical role in tumor genesis and cancer cell process as suggested by the fact that they interconnect directly with known cancer genes and ‘guilt of association’ rule.

Validation based on pathway analysis

The top-ranked genes are analyzed in the context of biological pathways on the websitehttp://vortex.cs.wayne.edu/projects.htm pathways that the genes selected are most likely involved in are listed in Additional file 1: Tables S11–S16 and Tables S23–S28, where p-values are calculated by (14) and only ten pathways with the lowest p-values are selected. This approach is based on the assumption that the numbers of genes participating in different pathways conform to hypergeometric distribution. Given N genes in which M genes participate in a pathway F, we randomly select K genes which are considered to be significant. Then the p-value of having x or fewer genes in F can be calculated by summing the probabilities of a random list of K genes having 1, 2, ⋯, x genes of category F:

When N is very large, the hypergeometric distribution tends to be binominal. In this case, the p-value could also be calculated as:

The top-ranked pathways in which the top 50 genes are involved include cell proliferation (such as cell cycle, DNA replication [83]), genomic stability (base excision repair, mismatch repair, etc), angiogenesis (like vascular endothelial growth factor(VEGF) signaling pathway),cancer metastasis (such as the pathway of cell adhesion molecules [84]),tumor suppressor pathway (such as p53 signaling pathway [85]), immunity escape (like pathways of antigen processing and presentation, B cell receptor signaling pathway, primary immunodeficiency, etc.) or progression of one specific or more than one kinds of cancers, etc.

Owing to a large number of top pathways involved, by means of biomedical literature we validate the tumor relevance of only four pathways supported by both HBSA-SVM and HBSA-KNN. For leukemia, B-cell antigen receptor (BCR) signal pathway is important for the survival of chronic lymphocytic leukemia cells which is regulated by overexpressed active protein kinase Cβ [86]. Heterogeneity in leukemia stem cell self-renewal potential supports the hypothesis that they derive from normal Hematopoietic stem cells [87]. Many transcription factors are either tumor suppressors or oncogenes, thus, mutations or aberrant regulation of them is associated with cancer [88]. DNA excision repair profiles of normal and leukemic human lymphocytes are different [89].

For the prostate dataset, Osman et al.[90] hypothesized that a pathway of prostate cancer progression involves p53 inactivation by mdm2 overexpression and that p21 transactivation via an alternative signaling system, rather than through a p53-dependent mechanism. Insulin signalling pathway is involved in the pathogenesis of various malignancies, increase cancer risk through its effect on cell proliferation, differentiation and apoptosis, and was reported to be involved in the tumorigenesis and neoplastic growth of the prostate [91]. The linkage of the morphological and functional changes of nucleolus and ribosome to cancer are reviewed in literature [92]. For cell cycle pathway, investigation has revealed that androgen acts as a master regulator of G1-S phase progression, able to induce signals that promote G1 cyclin-dependent kinase in prostate cancer cells [93].

We can conclude from the above signal pathway analysis that most of the pathways involving the selected genes are associated to the tumorigenesis, neoplastic growth or metastasis of tumor. From the analysis in the context of the molecular basis, the PPI networks and the pathways, we infer that the top-ranked genes are useful for cancer diagnosis as important potential biomarkers and may also provide insights into the mechanism of tumor genesis, development and metastasis.

Discussions

To find optimal gene subsets from tremendous gene space, a challenge is how to avoid the effects of the curse of dimensionality. Usually, preliminary gene selection is often regarded as an indispensable step of classifier construction process. However, if it is performed only once on whole dataset and the performance is further evaluated by CV method, such gene selection may lead to an overoptimistic classification performance [24]. Even though the optimal gene subsets are selected independently on training set without the feedback of the test set and evaluated on independent test set, gene selection may also lead to over-fit the training set even test set if done improperly. On the other hand, over-fitting can also be easily caused by too many potential genes to discriminate among a small number of samples [68], which is evident by the fact that among the numerous gene subsets that can obtain 100% or nearly 100% k-fold CV accuracy on training set, only few can obtain very high prediction accuracy on independent test set. This is the reason why different methods usually find different optimal gene subsets and why many existing gene selection methods cannot consistently perform well on all tumor datasets. To address the over-fitting and selection bias problems, we adopt simple majority voting strategy to construct HBSA-based ensemble classifier with the optimal gene subsets. The results show that our ensemble classifier can efficiently avoid over-fitting and improve the stability of prediction performance.

Intuitively, the construction of classification model with more genes would obtain better generalization performance, but in fact the classifier constructed in such way usually leads to the bias of results. More importantly, we do not determine which genes contribute more to the classifier if a complicate classifier is used. As stated by Dabney [69], “a complicated classification model may be rejected for a simpler alternative even if the simpler alternative does not perform as well.” We observed that although simpler classification model constructed with fewer genes may be a little worse in accuracy than that with more genes, the results obtained by the simpler model result in less bias. We conclude that only a few top-ranked genes are enough for obtaining good classification performance. Particularly, when the number of the discriminate genes approximately equals to the number of subclasses in a dataset, high prediction accuracy is always obtained.

To prioritize genes for a specific tumor, the occurrence frequency of each gene in the selected gene subsets is counted and these genes are ranked according to the counted frequency to measure the importance of corresponding genes with respect to tumor. Our analysis based on protein-protein interaction network, individual gene function through relevant literatures and biological pathway demonstrate: 1) most of the top-ranked genes are important cancer genes or linked with cancer genes; 2) they are involved in cancer genesis, development, invasion, metastasis or angiogenesis. Thus these few top-ranked genes are useful for the screening of cancer genes and cancer biomarkers for tumor diagnosis, molecular treatment targets as a cancer-related gene pool and may also provide some insight into the mechanism of carcinogenesis and cancer development.

We also find that the occurrence frequency of a gene with respect to the number of those genes whose frequencies are greater than the corresponding frequency follows power-law distribution. As we know, power-law distribution is a universal phenomenon in nature. Gene regulatory network is widely accepted as a complex scare-free one with the property of power-law degree distribution. In such network, nodes represent genes and a link between two genes represents interaction between the two genes, and some nodes are more highly connected [94, 95]. No doubt that the nodes with high degree play a very important role in network because structure always affects function. There may be no or weak interaction (minimum relevance) among the genes in the same optimal gene subset selected by HBSA, but the classification accuracy is the combined effect of the genes in an optimal gene subset. If we design a cooperation network in which nodes represent genes in an optimal gene subset and a link between each two genes in the gene subset represents cooperation between the two genes. There is no cooperation between two genes belonging to different optimal gene subsets. The node-degree distribution of the network constructed in such way by all gene subsets in A* obviously follows power-law degree distribution. In such virtual network the genes with high node-degree correspond to the ones with high frequency in the optimal gene subsets, and these genes should closely involved in an actual gene regulatory network related to tumor.

However, the PPI network based analyses suggest that tumor-related genes are not always highly linked or hub ones in biological processes as indicated by the node linking degree. The node linking degree is the number of proteins that a node (protein) directly links and the nodes with higher degree are assumed as more important or hub proteins. For the prostate dataset, ABL1, a cytoplasmic and nuclear protein tyrosine kinase encoded by its proto-oncogene, ranked the third in Additional file 1: Table S22, is implicated in cell processes of cell differentiation, cell division, cell adhesion, and stress response, has a node linking degree of 100, with a CLD of 46. However, the protein MAF encoded by the first ranked gene MAF, another proto-oncogene, has a linking degree of only 12 degree in the PPI network, with a CLD of seven. For the SRBCT dataset, the fourth gene CAV1 in Additional file 1: Table S17, a tumor suppressor gene candidate that encodes protein Caveolin 1, has a linking degree of 73 in the PPI network, while the three proteins encoded by the top three genes CD99, MLLT11 and IGF2 in Additional file 1: Table S17 are 9, 0, and 16, respectively and have much lower CLD. One reason is that the protein-protein interactions in HPRD are most physical ones while our referred interactions of the selected genes are most functional. Since protein-protein interactions are highly dynamic in different cell states or highly different in different types of cells, the divergence may also be explained by the fact that the biological pathways in cancer cells may be greatly different or changed from pathways in normal cells, where many abnormal protein-protein interactions may be opened and normal interactions are closed. However, the real position of the cancer-related genes in the cancer oncogenesis and development pathways needs further study.

Our contribution in this paper is to propose two methods, namely the construction method of HBSA-based ensemble classifier and the HBSA-based gene ranking method, to obtain unbiased classification performance and find important tumor-related genes more biologically meaning in molecular tumor diagnosis. Unlike other search-based gene selection methods, such as GA/SVM [30] and sequential forward search (SFS) [36] that find only one optimal gene subsets, our HBSA can find as many optimal gene subsets as possible on training set and obtain determined results in each run. More importantly, these gene subsets by HBSA have the same minimum cardinal number which can ensure that it is reasonable to measure the significance of gene by using its occurrence frequency. Generally, HBSA-based gene ranking method is also different from many traditional gene ranking methods because our method simultaneously takes into account the discrimilability of individual gene and the relationship among multiple genes (the discrimilability of gene subset), while many traditional univariate Filters-based gene selection methods often select the top-ranked genes only according to their individual discriminative power and a few multivariate Filters-based methods only consider gene dependencies to improve classification performance. However, our method does not remove the redundant genes from the top-ranked genes because these redundant genes might be very important tumor-related genes [49]. On the other hand, our ensemble classifier is constructed by simplest but optimum individual classifiers on training set, which is different from other ensemble classifiers such as Bagging [96], Boosting [97] and random subspace method [98], in which individual classifiers are constructed by randomly resampling in sample set or feature set.

Conclusions

Many machine learning and statistical algorithms for GEP-based tumor classification are available, but many of these methods might suffer from the problems of over-fitting and gene selection bias because the number of genes far exceeds the number of tumor tissue samples. Thus, we proposed two novel and robust methods (HBSA-based ensemble classification and HBSA-based gene ranking methods) to obtain high but unbiased prediction accuracy on independent test set and to find the most important tumor-related genes. HBSA-based ensemble classifier is constructed by using majority voting strategy on the basis of the selected optimum gene subsets selected by HBSA to improve the stability of the classification performance. HBSA-based gene ranking method is to prioritize the genes by using their occurrence frequencies counted in all of the selected gene subsets so that a set of significant genes can be found, which can be used as the biomarker of clinical tumor diagnosis and prognosis. Although HBSA implicates two problems: over-fitting and selection bias, both the proposed HBSA-based ensemble classifier and HBSA-based gene ranking method can successfully avoid the two problems. Moreover, the two methods are robust, stable and global optimum when such gene subsets selected are enough because the two methods are statistically established on the basis of the optimal gene subsets. Particularly, our methods not only are simple but also have rich biomedical interpretability. The experimental results indicate that our method can obtain high prediction accuracy with approximately minimum gene subset, and it overcomes the problem that too many genes can also lead to over-fitting phenomenon [68].