Abstract

We review lattice results related to pion, kaon, D-meson, B-meson, and nucleon physics with the aim of making them easily accessible to the nuclear and particle physics communities. More specifically, we report on the determination of the light-quark masses, the form factor \(f_+(0)\) arising in the semileptonic \(K \rightarrow \pi \) transition at zero momentum transfer, as well as the decay constant ratio \(f_K/f_\pi \) and its consequences for the CKM matrix elements \(V_{us}\) and \(V_{ud}\). Furthermore, we describe the results obtained on the lattice for some of the low-energy constants of \(SU(2)_L\times SU(2)_R\) and \(SU(3)_L\times SU(3)_R\) Chiral Perturbation Theory. We review the determination of the \(B_K\) parameter of neutral kaon mixing as well as the additional four B parameters that arise in theories of physics beyond the Standard Model. For the heavy-quark sector, we provide results for \(m_c\) and \(m_b\) as well as those for the decay constants, form factors, and mixing parameters of charmed and bottom mesons and baryons. These are the heavy-quark quantities most relevant for the determination of CKM matrix elements and the global CKM unitarity-triangle fit. We review the status of lattice determinations of the strong coupling constant \(\alpha _s\). We consider nucleon matrix elements, and review the determinations of the axial, scalar and tensor bilinears, both isovector and flavor diagonal. Finally, in this review we have added a new section reviewing determinations of scale-setting quantities.

1 Introduction

Flavour physics provides an important opportunity for exploring the limits of the Standard Model of particle physics and for constraining possible extensions that go beyond it. As the LHC explores a new energy frontier and as experiments continue to extend the precision frontier, the importance of flavour physics will grow, both in terms of searches for signatures of new physics through precision measurements and in terms of attempts to construct the theoretical framework behind direct discoveries of new particles. Crucial to such searches for new physics is the ability to quantify strong-interaction effects. Large-scale numerical simulations of lattice QCD allow for the computation of these effects from first principles. The scope of the Flavour Lattice Averaging Group (FLAG) is to review the current status of lattice results for a variety of physical quantities that are important for flavour physics. Set up in November 2007, it comprises experts in Lattice Field Theory, Chiral Perturbation Theory and Standard Model phenomenology. Our aim is to provide an answer to the frequently posed question “What is currently the best lattice value for a particular quantity?” in a way that is readily accessible to those who are not expert in lattice methods. This is generally not an easy question to answer; different collaborations use different lattice actions (discretizations of QCD) with a variety of lattice spacings and volumes, and with a range of masses for the u- and d-quarks. Not only are the systematic errors different, but also the methodology used to estimate these uncertainties varies between collaborations. In the present work, we summarize the main features of each of the calculations and provide a framework for judging and combining the different results. Sometimes it is a single result that provides the “best” value; more often it is a combination of results from different collaborations. 
Indeed, the consistency of values obtained using different formulations adds significantly to our confidence in the results.

The first four editions of the FLAG review were made public in 2010 [1], 2013 [2], 2016 [3], and 2019 [4] (and will be referred to as FLAG 10, FLAG 13, FLAG 16, and FLAG 19, respectively). The fourth edition reviewed results related to both light (u-, d-, and s-) and heavy (c- and b-) flavours. The quantities related to pion and kaon physics were light-quark masses, the form factor \(f_+(0)\) arising in semileptonic \(K \rightarrow \pi \) transitions (evaluated at zero momentum transfer), the decay constants \(f_K\) and \(f_\pi \), the \(B_K\) parameter from neutral kaon mixing, and the kaon mixing matrix elements of new operators that arise in theories of physics beyond the Standard Model. Their implications for the CKM matrix elements \(V_{us}\) and \(V_{ud}\) were also discussed. Furthermore, results were reported for some of the low-energy constants of \(SU(2)_L \times SU(2)_R\) and \(SU(3)_L \times SU(3)_R\) Chiral Perturbation Theory. The D- and B-meson quantities that were reviewed were the masses of the charm and bottom quarks together with the decay constants, form factors, and mixing parameters of B- and D-mesons. These are the heavy-light quantities most relevant to the determination of CKM matrix elements and the global CKM unitarity-triangle fit. The current status of lattice results on the QCD coupling \(\alpha _s\) was reviewed. Last but not least, we reviewed calculations of nucleon matrix elements of flavor nonsinglet and singlet bilinear operators, including the nucleon axial charge \(g_A\) and the nucleon sigma term. These results are relevant for constraining \(V_{ud}\), for searches for new physics in neutron decays and other processes, and for dark matter searches.

In the present paper we provide updated results for all the above-mentioned quantities, but also extend the scope of the review by adding a section on scale setting, Sect. 11. The motivation for adding this section is that uncertainties in the value of the lattice spacing a are a major source of error for the calculation of a wide range of quantities. Thus we felt that a systematic compilation of results, comparing the different approaches to setting the scale, and summarizing the present status, would be a useful resource for the lattice community. An additional update is the inclusion, in Sect. 6.2, of a brief description of the status of lattice calculations of \(K\rightarrow \pi \pi \) decay amplitudes. Although some aspects of these calculations are not yet at the stage to be included in our averages, they are approaching this stage, and we felt that, given their phenomenological relevance, a brief review was appropriate.

For the most precisely determined quantities, isospin breaking – both from the up-down quark mass difference and from QED – must be included. A short review of methods used to include QED in lattice-QCD simulations is given in Sect. 3.1.3. An important issue here is that, in the context of a QED\(+\)QCD theory, the separation into QED and QCD contributions to a given physical quantity is ambiguous – there are several ways of defining such a separation. This issue is discussed from different viewpoints in the section on quark masses – see Sect. 3.1.1 – and that on scale setting – see Sect. 11. We stress, however, that the physical observable in \(\hbox {QCD}+\hbox {QED}\) is defined unambiguously. Any ambiguity only arises because we are trying to separate a well-defined, physical quantity into two unphysical parts that provide useful information for phenomenology.

Our main results are collected in Tables 1, 2, 3, 4 and 5. As is clear from the tables, for most quantities there are results from ensembles with different values for \(N_f\). In most cases, there is reasonable agreement among results with \(N_f=2\), \(2+1\), and \(2+1+1\). As precision increases, we may someday be able to distinguish among the different values of \(N_f\), in which case \(2+1+1\) would presumably be the most realistic. (If isospin violation is critical, then \(1+1+1\) or \(1+1+1+1\) might be desired.) At present, for some quantities the errors in the \(N_f=2+1\) results are smaller than those with \(N_f=2+1+1\) (e.g., for \(m_c\)), while for others the relative size of the errors is reversed. Our suggestion to those using the averages is to take whichever of the \(N_f=2+1\) or \(N_f=2+1+1\) results has the smaller error. We do not recommend using the \(N_f=2\) results, except for studies of the \(N_f\)-dependence of condensates and \(\alpha _s\), as these have an uncontrolled systematic error coming from quenching the strange quark.

Our plan is to continue providing FLAG updates, in the form of a peer reviewed paper, roughly on a triennial basis. This effort is supplemented by our more frequently updated website http://flag.unibe.ch [5], where figures as well as pdf-files for the individual sections can be downloaded. The papers reviewed in the present edition have appeared before the closing date 30 April 2021.Footnote 1

This review is organized as follows. In the remainder of Sect. 1 we summarize the composition and rules of FLAG and discuss general issues that arise in modern lattice calculations. In Sect. 2, we explain our general methodology for evaluating the robustness of lattice results. We also describe the procedures followed for combining results from different collaborations in a single average or estimate (see Sect. 2.2 for our definition of these terms). The rest of the paper consists of sections, each dedicated to a set of closely connected physical quantities, or, for the final section, to the determination of the lattice scale. Each of these sections is accompanied by an Appendix with explicatory notes.Footnote 2

In previous editions, we provided, in an appendix, a glossary summarizing some standard lattice terminology and describing the most commonly used lattice techniques and methodologies. As there have been no significant updates to this information since our previous edition, we have decided, in the interest of reducing the length of this review, to omit this glossary and refer the reader to FLAG 19 for this information [4]. In previous versions, this appendix also contained a tabulation of the actions used in the papers that were reviewed. Since this information is available in the discussions within the individual sections, and is time-consuming to collect from them, we have dropped these tables. We have, however, kept a short appendix, Appendix B.1, describing the parameterizations of semileptonic form factors that are used in Sect. 8. Moreover, in Appendix A, we have added a summary and explanations of acronyms introduced in the manuscript. Collaborations referred to by an acronym can be identified through the corresponding bibliographic reference.

1.1 FLAG composition, guidelines and rules

FLAG strives to be representative of the lattice community, both in terms of the geographical location of its members and the lattice collaborations to which they belong. We aspire to provide the nuclear- and particle-physics communities with a single source of reliable information on lattice results.

In order to work reliably and efficiently, we have adopted a formal structure and a set of rules by which all FLAG members abide. The collaboration presently consists of an Advisory Board (AB), an Editorial Board (EB), and nine Working Groups (WG). The rôle of the Advisory Board is to provide oversight of the content, procedures, schedule and membership of FLAG, to help resolve disputes, to serve as a source of advice to the EB and to FLAG as a whole, and to provide a critical assessment of drafts. The AB also approves the final version of the preprint before it is made public. The Editorial Board coordinates the activities of FLAG, sets priorities and intermediate deadlines, organizes votes on FLAG procedures, writes the introductory sections, and takes care of the editorial work needed to amalgamate the sections written by the individual working groups into a uniform and coherent review. The working groups concentrate on writing the review of the physical quantities for which they are responsible, which is subsequently circulated to the whole collaboration for critical evaluation.

The current list of FLAG members and their Working Group assignments is:

- Advisory Board (AB): G. Colangelo, M. Golterman, P. Hernandez, T. Onogi, and R. Van de Water
- Editorial Board (EB): S. Gottlieb, A. Jüttner, S. Hashimoto, S.R. Sharpe, and U. Wenger
- Working Groups (coordinator listed first):
  - Quark masses: T. Blum, A. Portelli, and A. Ramos
  - \(V_{us},V_{ud}\): T. Kaneko, J. N. Simone, S. Simula, and N. Tantalo
  - LEC: S. Dürr, H. Fukaya, and U.M. Heller
  - \(B_K\): P. Dimopoulos, X. Feng, and G. Herdoiza
  - \(f_{B_{(s)}}\), \(f_{D_{(s)}}\), \(B_B\): Y. Aoki, M. Della Morte, and C. Monahan
  - b and c semileptonic and radiative decays: E. Lunghi, S. Meinel, and C. Pena
  - \(\alpha _s\): S. Sint, R. Horsley, and P. Petreczky
  - NME: R. Gupta, S. Collins, A. Nicholson, and H. Wittig
  - Scale setting: R. Sommer, N. Tantalo, and U. Wenger

The most important FLAG guidelines and rules are the following:

- the composition of the AB reflects the main geographical areas in which lattice collaborations are active, with members from America, Asia/Oceania, and Europe;
- the mandate of regular members is not limited in time, but we expect that a certain turnover will occur naturally;
- whenever a replacement becomes necessary, it has to preserve, and possibly improve, the balance in FLAG, so that different collaborations from different geographical areas are represented;
- in all working groups the members must belong to different lattice collaborations;
- a paper is in general not reviewed (nor colour-coded, as described in the next section) by any of its authors;
- lattice collaborations will be consulted on the colour coding of their calculation;
- there are also internal rules regulating our work, such as voting procedures.

As for FLAG 19, for this review we sought the advice of external reviewers once a complete draft of the review was available. For each review section, we have asked one lattice expert (who could be a FLAG alumnus/alumna) and one nonlattice phenomenologist for a critical assessment. The one exception is the scale-setting section, where only a lattice expert has been asked to provide input. This is similar to the procedure followed by the Particle Data Group in the creation of the Review of Particle Physics. The reviewers provide comments and feedback on scientific and stylistic matters. They are not anonymous, and enter into a discussion with the authors of the WG. Our aim with this additional step is to make sure that a wider array of viewpoints enters into the discussions, so as to make this review more useful for its intended audience.

1.2 Citation policy

We draw attention to this particularly important point. As stated above, our aim is to make lattice-QCD results easily accessible to those without lattice expertise, and we are well aware that it is likely that some readers will only consult the present paper and not the original lattice literature. It is very important that this paper not be the only one cited when our results are quoted. We strongly suggest that readers also cite the original sources. In order to facilitate this, in Tables 1, 2, 3, 4, and 5, besides summarizing the main results of the present review, we also cite the original references from which they have been obtained. In addition, for each figure we make a bibtex file available on our webpage [5] which contains the bibtex entries of all the calculations contributing to the FLAG average or estimate. The bibliography at the end of this paper should also make it easy to cite additional papers. Indeed, we hope that the bibliography will be one of the most widely used elements of the whole paper.

1.3 General issues

Several general issues concerning the present review are thoroughly discussed in Sec. 1.1 of our initial 2010 paper [1], and we encourage the reader to consult the relevant pages. In the remainder of the present subsection, we focus on a few important points. Though the discussion has been duly updated, it is similar to that of Sec. 1.2 in the previous three reviews [2,3,4].

The present review aims to achieve two distinct goals: first, to provide a description of the relevant work done on the lattice; and, second, to draw conclusions on the basis of that work, summarizing the results obtained for the various quantities of physical interest.

The core of the information about the work done on the lattice is presented in the form of tables, which not only list the various results, but also describe the quality of the data that underlie them. We consider it important that this part of the review represents a generally accepted description of the work done. For this reason, we explicitly specify the quality requirements used and provide sufficient details in appendices so that the reader can verify the information given in the tables.Footnote 3

On the other hand, the conclusions drawn on the basis of the available lattice results are the responsibility of FLAG alone. Preferring to err on the side of caution, in several cases we draw conclusions that are more conservative than those resulting from a plain weighted average of the available lattice results. This cautious approach is usually adopted when the average is dominated by a single lattice result, or when only one lattice result is available for a given quantity. In such cases, one does not have the same degree of confidence in results and errors as when there is agreement among several different calculations using different approaches. The reader should keep in mind that the degree of confidence cannot be quantified, and it is not reflected in the quoted errors.
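For orientation, the "plain weighted average" mentioned above can be sketched as an inverse-variance mean of uncorrelated results, together with two simple diagnostics: the \(\chi^2\) per degree of freedom and the share of the total weight carried by the most precise input (a crude indicator of the single-result dominance discussed above). This is a minimal sketch assuming uncorrelated Gaussian errors; it is not the FLAG averaging procedure (which is described in Sect. 2.2 and treats correlations and conservative error inflation), and the function names are hypothetical.

```python
import math
from typing import Sequence, Tuple


def weighted_average(values: Sequence[float], errors: Sequence[float]) -> Tuple[float, float]:
    """Plain inverse-variance weighted mean of uncorrelated results.

    Returns (mean, error). Correlations between inputs are ignored.
    """
    if not values or len(values) != len(errors):
        raise ValueError("values and errors must be non-empty and of equal length")
    weights = [1.0 / s ** 2 for s in errors]
    total = sum(weights)
    mean = sum(w * v for w, v in zip(weights, values)) / total
    return mean, 1.0 / math.sqrt(total)


def chi2_per_dof(values: Sequence[float], errors: Sequence[float], mean: float) -> float:
    """chi^2 per degree of freedom of the inputs about the mean (nan for a single input)."""
    dof = len(values) - 1
    if dof == 0:
        return float("nan")
    chi2 = sum(((v - mean) / s) ** 2 for v, s in zip(values, errors))
    return chi2 / dof


def largest_weight_fraction(errors: Sequence[float]) -> float:
    """Share of the total weight carried by the most precise input."""
    weights = [1.0 / s ** 2 for s in errors]
    return max(weights) / sum(weights)
```

For two hypothetical inputs \(1.0 \pm 0.1\) and \(1.2 \pm 0.1\), `weighted_average` gives \(1.1 \pm 0.1/\sqrt{2}\); if one input had error 0.1 and the other 0.3, the more precise one would carry 90% of the weight, the kind of situation in which a more conservative estimate is warranted.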

Each discretization has its merits, but also its shortcomings. For most topics covered in this review we have an increasingly broad database, and for most quantities lattice calculations based on totally different discretizations are now available. This is illustrated by the dense population of the tables and figures in most parts of this review. Those calculations that do satisfy our quality criteria indeed lead, in almost all cases, to consistent results, confirming universality within the accuracy reached. The consistency between independent lattice results, obtained with different discretizations, methods, and simulation parameters, is an important test of lattice QCD, and observing such consistency also provides further evidence that systematic errors are fully under control.

In the sections dealing with heavy quarks and with \(\alpha _s\), the situation is not the same. Since the b-quark mass can barely be resolved with current lattice spacings, most lattice methods for treating b quarks use effective field theory at some level. This introduces additional complications not present in the light-quark sector. An overview of the issues specific to heavy-quark quantities is given in the introduction of Sect. 8. For B- and D-meson leptonic decay constants, there already exists a good number of different independent calculations that use different heavy-quark methods, but there are only a few independent calculations of semileptonic B, \(\Lambda _b\), and D form factors and of \(B-\bar{B}\) mixing parameters. For \(\alpha _s\), most lattice methods involve a range of scales that need to be resolved and controlling the systematic error over a large range of scales is more demanding. The issues specific to determinations of the strong coupling are summarized in Sect. 9.

Number of sea quarks in lattice simulations

Lattice-QCD simulations currently involve two, three or four flavours of dynamical quarks. Most simulations set the masses of the two lightest quarks to be equal, while the strange and charm quarks, if present, are heavier (and tuned to lie close to their respective physical values). Our notation for these simulations indicates which quarks are nondegenerate, e.g., \(N_{\,f}=2+1\) if \(m_u=m_d < m_s\) and \(N_{\,f}=2+1+1\) if \(m_u=m_d< m_s < m_c\). Calculations with \(N_{\,f}=2\), i.e., two degenerate dynamical flavours, often include strange valence quarks interacting with gluons, so that bound states with the quantum numbers of the kaons can be studied, albeit neglecting strange sea-quark fluctuations. The quenched approximation (\(N_f=0\)), in which all sea-quark contributions are omitted, has uncontrolled systematic errors and is no longer used in modern lattice simulations with relevance to phenomenology. Accordingly, we will review results obtained with \(N_f=2\), \(N_f=2+1\), and \(N_f = 2+1+1\), but omit earlier results with \(N_f=0\). The only exception concerns the QCD coupling constant \(\alpha _s\). Since this observable does not require valence light quarks, it is theoretically well defined also in the \(N_f=0\) theory, which is simply pure gluodynamics. The \(N_f\)-dependence of \(\alpha _s\), or more precisely of the related quantity \(r_0 \Lambda _{\overline{\textrm{MS}}}\), is a theoretical issue of considerable interest; here \(r_0\) is a quantity with the dimension of length that sets the physical scale, as discussed in Sect. 11. We stress, however, that only results with \(N_f \ge 3\) are used to determine the physical value of \(\alpha _s\) at a high scale.

Lattice actions, simulation parameters, and scale setting

The remarkable progress in the precision of lattice calculations is due to improved algorithms, better computing resources, and, last but not least, conceptual developments. Examples of the latter are improved actions that reduce lattice artifacts and actions that preserve chiral symmetry to very good approximation. A concise characterization of the various discretizations that underlie the results reported in the present review is given in Appendix A.1 of FLAG 19.

Physical quantities are computed in lattice simulations in units of the lattice spacing so that they are dimensionless. For example, the pion decay constant that is obtained from a simulation is \(f_\pi a\), where a is the spacing between two neighboring lattice sites. (All simulations with results quoted in this review use hypercubic lattices, i.e., with the same spacing in all four Euclidean directions.) To convert these results to physical units requires knowledge of the lattice spacing a at the fixed values of the bare QCD parameters (quark masses and gauge coupling) used in the simulation. This is achieved by requiring agreement between the lattice calculation and experimental measurement of a known quantity, which thus “sets the scale” of a given simulation. Given the central importance of this procedure, we include in this edition of FLAG a dedicated section, Sect. 11, discussing the issues and results.
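The unit conversion described above amounts to simple arithmetic with \(\hbar c\). The sketch below assumes the scale is set by a single reference quantity, either one with mass dimension one (lattice value \(aQ\)) or one with the dimension of length such as \(r_0\) (lattice value \(r_0/a\)); all numerical inputs in the example are hypothetical and chosen only to make the arithmetic transparent. Real scale setting involves extrapolations and the subtleties discussed in Sect. 11.

```python
HBARC_MEV_FM = 197.3269804  # hbar*c in MeV*fm, converts fm <-> MeV^-1


def spacing_from_mass_scale(q_times_a: float, q_phys_mev: float) -> float:
    """Lattice spacing a (fm) from a reference quantity Q of mass dimension 1.

    q_times_a: dimensionless lattice value a*Q; q_phys_mev: physical Q in MeV.
    """
    return q_times_a / q_phys_mev * HBARC_MEV_FM


def spacing_from_length_scale(length_over_a: float, length_phys_fm: float) -> float:
    """Lattice spacing a (fm) from a reference length L, given L/a from the lattice."""
    return length_phys_fm / length_over_a


def to_mev(x_times_a: float, a_fm: float) -> float:
    """Convert a dimensionless lattice result a*X into MeV."""
    return x_times_a / a_fm * HBARC_MEV_FM
```

For instance, with a hypothetical reference value \(aQ = 0.5\) and \(Q = 1000\) MeV, `spacing_from_mass_scale(0.5, 1000.0)` gives \(a \approx 0.0987\) fm, and a hypothetical lattice value \(f_\pi a = 0.0658\) then converts via `to_mev` to 131.6 MeV. Any error on \(a\) propagates directly into every dimensionful result, which is why scale setting is treated in a dedicated section.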

Renormalization and scheme dependence

Several of the results covered by this review, such as quark masses, the gauge coupling, and B-parameters, are for quantities defined in a given renormalization scheme and at a specific renormalization scale. The schemes employed (e.g., regularization-independent MOM schemes) are often chosen because of their specific merits when combined with the lattice regularization. For a brief discussion of their properties, see Appendix A.3 of FLAG 19. The conversion of the results obtained in these so-called intermediate schemes to more familiar renormalization schemes, such as the \({\overline{\textrm{MS}}}\)-scheme, is done with the aid of perturbation theory. It must be stressed that the renormalization scales accessible in simulations are limited, because of the presence of an ultraviolet (UV) cutoff of \(\sim \pi /a\). To safely match to \({\overline{\textrm{MS}}}\), a scheme defined in perturbation theory, Renormalization Group (RG) running to higher scales is performed, either perturbatively or nonperturbatively (the latter using finite-size scaling techniques).
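To make the scale limitation concrete, the sketch below evaluates the cutoff \(\pi/a\) for a given spacing and, as the simplest example of perturbative RG running, evolves \(\alpha_s\) at one loop, \(1/\alpha_s(\mu) = 1/\alpha_s(\mu_0) + b_0 \ln(\mu^2/\mu_0^2)\) with \(b_0 = (33-2N_f)/(12\pi)\). This is an illustrative assumption only: actual matching and running use higher-order perturbation theory, scheme conversions, and flavour thresholds, and the running of quark masses and B-parameters is not shown. The input value of \(\alpha_s\) in the example is hypothetical.

```python
import math

HBARC_GEV_FM = 0.1973269804  # hbar*c in GeV*fm


def uv_cutoff_gev(a_fm: float) -> float:
    """Momentum cutoff pi/a in GeV for a lattice spacing a given in fm."""
    return math.pi * HBARC_GEV_FM / a_fm


def alpha_s_one_loop(alpha0: float, mu0_gev: float, mu_gev: float, n_f: int) -> float:
    """One-loop running of alpha_s from scale mu0 to mu with n_f active flavours.

    1/alpha(mu) = 1/alpha(mu0) + b0 * ln(mu^2 / mu0^2), b0 = (33 - 2 n_f) / (12 pi).
    """
    b0 = (33 - 2 * n_f) / (12 * math.pi)
    return alpha0 / (1.0 + b0 * alpha0 * math.log(mu_gev ** 2 / mu0_gev ** 2))
```

For \(a = 0.05\) fm, `uv_cutoff_gev(0.05)` is roughly 12.4 GeV, so renormalization scales must stay well below this to avoid large cutoff effects. Running a hypothetical \(\alpha_s = 0.3\) from 2 GeV to 10 GeV with \(N_f=3\) decreases it, as expected from asymptotic freedom.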

Extrapolations

Because of limited computing resources, lattice simulations are often performed at unphysically heavy pion masses, although results at the physical point have become increasingly common. Further, numerical simulations must be done at nonzero lattice spacing, and in a finite (four-dimensional) volume. In order to obtain physical results, lattice data are obtained at a sequence of pion masses and a sequence of lattice spacings, and then extrapolated to the physical pion mass and to the continuum limit. In principle, an extrapolation to infinite volume is also required. However, for most quantities discussed in this review, finite-volume effects are exponentially small in the linear extent of the lattice in units of the pion mass, and, in practice, one often verifies volume independence by comparing results obtained on a few different physical volumes, holding other parameters fixed. To control the associated systematic uncertainties, these extrapolations are guided by effective theories. For light-quark actions, the lattice-spacing dependence is described by Symanzik’s effective theory [121, 122]; for heavy quarks, this can be extended and/or supplemented by other effective theories such as Heavy-Quark Effective Theory (HQET). The pion-mass dependence can be parameterized with Chiral Perturbation Theory (\(\chi \)PT), which takes into account the Nambu–Goldstone nature of the lowest excitations that occur in the presence of light quarks. Similarly, one can use Heavy-Light Meson Chiral Perturbation Theory (HM\(\chi \)PT) to extrapolate quantities involving mesons composed of one heavy (b or c) and one light quark. One can combine Symanzik’s effective theory with \(\chi \)PT to simultaneously extrapolate to the physical pion mass and the continuum; in this case, the form of the effective theory depends on the discretization. See Appendix A.4 of FLAG 19 for a brief description of the different variants in use and some useful references. 
Finally, \(\chi \)PT can also be used to estimate the size of finite-volume effects measured in units of the inverse pion mass, thus providing information on the systematic error due to finite-volume effects in addition to that obtained by comparing simulations at different volumes.
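As a minimal illustration of the extrapolations described above, the sketch fits data at several lattice spacings to \(O(a) = c_0 + c_1 a^2\) and reads off \(c_0\) as the continuum estimate, and computes the product \(M_\pi L\) that controls the exponential suppression \(\sim e^{-M_\pi L}\) of finite-volume effects. It assumes leading \(O(a^2)\) artifacts, data already at fixed quark mass, and an unweighted least-squares fit; actual analyses use correlated fits whose functional form depends on the discretization and usually combines Symanzik and \(\chi\)PT terms. The function names and test data are hypothetical.

```python
import math

HBARC_MEV_FM = 197.3269804  # hbar*c in MeV*fm


def continuum_extrapolate(spacings_fm, values):
    """Unweighted least-squares fit values = c0 + c1 * a^2; returns (c0, c1).

    c0 is the continuum-limit estimate under the O(a^2) assumption.
    """
    if len(spacings_fm) != len(values) or len(values) < 2:
        raise ValueError("need at least two (a, value) pairs")
    x = [a * a for a in spacings_fm]
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(values) / n
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    if sxx == 0.0:
        raise ValueError("need at least two distinct lattice spacings")
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, values))
    c1 = sxy / sxx
    c0 = y_mean - c1 * x_mean
    return c0, c1


def mpi_times_l(m_pi_mev: float, box_fm: float) -> float:
    """Dimensionless product M_pi * L for a pion mass in MeV and box size in fm."""
    return m_pi_mev * box_fm / HBARC_MEV_FM


def fv_suppression(m_pi_mev: float, box_fm: float) -> float:
    """Rough size exp(-M_pi L) of leading finite-volume effects (prefactor omitted)."""
    return math.exp(-mpi_times_l(m_pi_mev, box_fm))
```

Fed synthetic data generated from \(1 + 2a^2\) at \(a = 0.12, 0.09, 0.06\) fm, the fit recovers \(c_0 = 1\). For a pion of about 135 MeV in a 6 fm box, \(M_\pi L \approx 4.1\).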

Excited-state contamination

In all the hadronic matrix elements discussed in this review, the hadron in question is the lightest state with the chosen quantum numbers. This implies that it dominates the required correlation functions as their extent in Euclidean time is increased. Excited-state contributions are suppressed by \(e^{-\Delta E \Delta \tau }\), where \(\Delta E\) is the gap between the ground and excited states, and \(\Delta \tau \) the relevant separation in Euclidean time. The size of \(\Delta E\) depends on the hadron in question, and in general is a multiple of the pion mass. In practice, as discussed at length in Sect. 10, the contamination of signals due to excited-state contributions is a much more challenging problem for baryons than for the other particles discussed here. This is in part due to the fact that the signal-to-noise ratio drops exponentially for baryons, which reduces the values of \(\Delta \tau \) that can be used.
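The competition described above can be made quantitative with two exponentials: the excited-state factor \(e^{-\Delta E \Delta \tau}\) and, for the nucleon, the well-known Parisi–Lepage estimate that the signal-to-noise ratio falls like \(e^{-(M_N - \frac{3}{2}M_\pi)t}\). The sketch below evaluates both in physical units; the gap \(\Delta E\) and the masses used in the tests are hypothetical illustrative inputs, and overall prefactors are omitted.

```python
import math

HBARC_MEV_FM = 197.3269804  # hbar*c in MeV*fm


def excited_state_factor(delta_e_mev: float, tau_fm: float) -> float:
    """Relative suppression exp(-dE * dtau) of an excited-state contribution."""
    return math.exp(-delta_e_mev * tau_fm / HBARC_MEV_FM)


def nucleon_snr_factor(m_n_mev: float, m_pi_mev: float, t_fm: float) -> float:
    """Parisi-Lepage scaling of the nucleon signal-to-noise ratio, up to a constant."""
    return math.exp(-(m_n_mev - 1.5 * m_pi_mev) * t_fm / HBARC_MEV_FM)
```

Increasing \(\Delta\tau\) from 1.0 to 1.5 fm reduces a contamination with an assumed gap of 270 MeV by roughly a factor of two, but at the same time the nucleon signal-to-noise ratio drops by more than a factor of six, which is why baryon analyses are restricted to shorter separations.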

Critical slowing down

The lattice spacings reached in recent simulations go down to 0.05 fm or even smaller. In this regime, long autocorrelation times slow down the sampling of the configurations [123,124,125,126,127,128,129,130,131,132]. Many groups check for autocorrelations in a number of observables, including the topological charge, for which a rapid growth of the autocorrelation time is observed with decreasing lattice spacing. This is often referred to as topological freezing. A solution to the problem consists in using open boundary conditions in time [133], instead of the more common antiperiodic ones. More recently, two other approaches have been proposed, one based on a multiscale thermalization algorithm [134, 135] and another based on defining QCD on a nonorientable manifold [136]. The problem is also touched upon in Sect. 9.2.1, where it is stressed that attention must be paid to this issue. While large-scale simulations with open boundary conditions are already far advanced [137], few results reviewed here have been obtained with any of the above methods. It is usually assumed that the continuum limit can be reached by extrapolation from the existing simulations, and that potential systematic errors due to the long autocorrelation times have been adequately controlled. Partially or completely frozen topology would produce a mixture of different \(\theta \) vacua, and the difference from the desired \(\theta =0\) result may be estimated in some cases using chiral perturbation theory, which gives predictions for the \(\theta \)-dependence of the physical quantity of interest [138, 139]. These ideas have been systematically and successfully tested in various models in [140, 141], and a numerical test on MILC ensembles indicates that the topology dependence for some of the physical quantities reviewed here is small, consistent with theoretical expectations [142].
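Checking autocorrelations usually means estimating the integrated autocorrelation time \(\tau_{\rm int} = \tfrac{1}{2} + \sum_{t=1}^{W} \rho(t)\) of an observable's Monte Carlo history; the naive error of the mean must then be inflated by roughly \(\sqrt{2\tau_{\rm int}}\). The sketch below uses a fixed, hand-chosen window \(W\) and a toy AR(1) chain in place of real Monte Carlo data (both are assumptions for illustration); production analyses typically use automatic windowing procedures such as Wolff's \(\Gamma\)-method.

```python
import math
import random


def tau_int(series, window):
    """Integrated autocorrelation time with a fixed summation window.

    tau_int = 1/2 + sum_{t=1}^{window} rho(t); uncorrelated data give about 1/2.
    """
    n = len(series)
    if not 0 < window < n:
        raise ValueError("window must satisfy 0 < window < len(series)")
    mean = sum(series) / n
    d = [x - mean for x in series]
    var = sum(v * v for v in d) / n
    if var == 0.0:
        raise ValueError("series has zero variance")
    tau = 0.5
    for t in range(1, window + 1):
        cov = sum(d[i] * d[i + t] for i in range(n - t)) / (n - t)
        tau += cov / var
    return tau


def ar1_chain(rho, n, seed=1):
    """Hypothetical toy Markov chain x_{k+1} = rho x_k + sqrt(1 - rho^2) xi_k."""
    rng = random.Random(seed)
    x = rng.gauss(0.0, 1.0)
    out = []
    for _ in range(n):
        out.append(x)
        x = rho * x + math.sqrt(1.0 - rho * rho) * rng.gauss(0.0, 1.0)
    return out
```

For the AR(1) chain the exact answer is \(\tau_{\rm int} = (1+\rho)/(2(1-\rho))\), i.e. 9.5 for \(\rho = 0.9\): the statistical error of such a history is about \(\sqrt{19} \approx 4.4\) times larger than the naive estimate. Topological freezing corresponds to \(\tau_{\rm int}\) of the topological charge growing rapidly as \(a\) decreases.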

Simulation algorithms and numerical errors

Most of the modern lattice-QCD simulations use exact algorithms such as those of Refs. [143, 144], which do not produce any systematic errors when exact arithmetic is available. In reality, one uses numerical calculations at double (or in some cases even single) precision, and some errors are unavoidable. More importantly, the inversion of the Dirac operator is carried out iteratively and it is truncated once some accuracy is reached, which is another source of potential systematic error. In most cases, these errors have been confirmed to be much less than the statistical errors. In the following we assume that this source of error is negligible. Some of the most recent simulations use an inexact algorithm in order to speed up the computation, though it may produce systematic effects. Currently available tests indicate that errors from the use of inexact algorithms are under control [145].
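The truncation mentioned above can be illustrated with the conjugate-gradient (CG) method, which applies to Hermitian positive-definite systems such as the normal equations \(D^\dagger D\,x = b\) often used for the Dirac operator. The sketch stops once the residual satisfies \(\|r\| \le \epsilon \|b\|\); a toy real symmetric \(3\times 3\) matrix stands in for the Dirac operator, which is an assumption for illustration only (lattice solvers are preconditioned, complex, and vastly larger).

```python
import math


def dot(u, v):
    """Real inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def conjugate_gradient(apply_a, b, rel_tol=1e-10, max_iter=1000):
    """Solve A x = b for symmetric positive-definite A given as a function v -> A v.

    Iteration stops once ||r|| <= rel_tol * ||b||; returns (x, iterations).
    """
    x = [0.0] * len(b)
    r = list(b)  # residual b - A x for the starting guess x = 0
    p = list(r)  # search direction
    rr = dot(r, r)
    target = rel_tol * math.sqrt(dot(b, b))
    for k in range(max_iter):
        if math.sqrt(rr) <= target:
            return x, k
        ap = apply_a(p)
        alpha = rr / dot(p, ap)
        x = [xi + alpha * p_i for xi, p_i in zip(x, p)]
        r = [ri - alpha * ap_i for ri, ap_i in zip(r, ap)]
        rr_new = dot(r, r)
        p = [ri + (rr_new / rr) * p_i for ri, p_i in zip(r, p)]
        rr = rr_new
    raise RuntimeError("CG did not reach the requested tolerance")
```

The stopping parameter `rel_tol` is exactly the "accuracy" at which the inversion is truncated; in practice it is chosen small enough that the resulting error is negligible compared with statistical fluctuations.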

2 Quality criteria, averaging and error estimation

The essential characteristics of our approach to the problem of rating and averaging lattice quantities have been outlined in our first publication [1]. Our aim is to help the reader assess the reliability of a particular lattice result without necessarily studying the original article in depth. This is a delicate issue, since the ratings may make things appear simpler than they are. Nevertheless, the ratings safeguard against the possibility of using lattice results, and drawing physics conclusions from them, without a critical assessment of the quality of the various calculations. We believe that, despite the risks, it is important to provide some compact information about the quality of a calculation. We stress, however, the importance of the accompanying detailed discussion of the results presented in the various sections of the present review.

2.1 Systematic errors and colour code

The major sources of systematic error are common to most lattice calculations. These include, as discussed in detail below, the chiral, continuum, and infinite-volume extrapolations. To each such source of error for which systematic improvement is possible we assign one of three coloured symbols: green star, unfilled green circle (which, starting with Ref. [2], replaced the amber disk used in the original FLAG review [1]), or red square. These correspond to the following ratings:

- green star: the parameter values and ranges used to generate the data sets allow for a satisfactory control of the systematic uncertainties;
- unfilled green circle: the parameter values and ranges used to generate the data sets allow for a reasonable attempt at estimating systematic uncertainties, which however could be improved;
- red square: the parameter values and ranges used to generate the data sets are unlikely to allow for a reasonable control of systematic uncertainties.

The appearance of a red tag, even in a single source of systematic error of a given lattice result, disqualifies it from inclusion in the global average.

Note that in the first two editions [1, 2], FLAG used the three symbols in order to rate the reliability of the systematic errors attributed to a given result by the paper’s authors. Starting with FLAG 16 [3] the meaning of the symbols has changed slightly – they now rate the quality of a particular simulation, based on the values and range of the chosen parameters, and its aptness to obtain well-controlled systematic uncertainties. They do not rate the quality of the analysis performed by the authors of the publication. The latter question is deferred to the relevant sections of the present review, which contain detailed discussions of the results contributing (or not) to each FLAG average or estimate.

For most quantities the colour-coding system refers to the following sources of systematic errors: (i) chiral extrapolation; (ii) continuum extrapolation; (iii) finite volume. As we will see below, renormalization is another source of systematic uncertainties in several quantities. This we also classify using the three coloured symbols listed above, but now with a different rationale: they express how reliably these quantities are renormalized, from a field-theoretic point of view (namely, nonperturbatively, or with 2-loop or 1-loop perturbation theory).

Given the sophisticated status that the field has attained, several aspects, besides those rated by the coloured symbols, need to be evaluated before one can conclude whether a particular analysis leads to results that should be included in an average or estimate. Some of these aspects are not so easily expressible in terms of an adjustable parameter such as the lattice spacing, the pion mass or the volume. As a result of such considerations, it sometimes occurs, albeit rarely, that a given result does not contribute to the FLAG average or estimate, despite not carrying any red tags. This happens, for instance, whenever aspects of the analysis appear to be incomplete (e.g., an incomplete error budget), so that the presence of inadequately controlled systematic effects cannot be excluded. This mostly refers to results with a statistical error only, or results in which the quoted error budget obviously fails to account for an important contribution.

Of course, any colour coding has to be treated with caution; we emphasize that the criteria are subjective and evolving. Sometimes, a single source of systematic error dominates the systematic uncertainty and it is more important to reduce this uncertainty than to aim for green stars for other sources of error. In spite of these caveats, we hope that our attempt to introduce quality measures for lattice simulations will prove to be a useful guide. In addition, we would like to stress that the agreement of lattice results obtained using different actions and procedures provides further validation.

2.1.1 Systematic effects and rating criteria

The precise criteria used in determining the colour coding are unavoidably time-dependent; as lattice calculations become more accurate, the standards against which they are measured become tighter. For this reason FLAG reassesses criteria with each edition and as a result some of the quality criteria (the one on chiral extrapolation for instance) have been tightened up over time [1,2,3,4].

In the following, we present the rating criteria used in the current report. While these criteria apply to most quantities without modification, there are cases where they need to be amended or additional criteria need to be defined. For instance, when discussing results obtained in the \(\epsilon \)-regime of chiral perturbation theory in Sect. 5, the finite-volume criterion listed below for the p-regime is no longer appropriate.Footnote 4 Similarly, the discussion of the strong coupling constant in Sect. 9 requires tailored criteria for renormalization, perturbative behaviour, and continuum extrapolation. Finally, in the section on nucleon matrix elements, Sect. 10, the chiral-extrapolation criterion is made slightly stronger, and a new criterion is adopted for excited-state contributions. In such cases, the modified criteria are discussed in the respective sections. Apart from only a few exceptions, the following colour code applies in the tables:

- Chiral extrapolation:
  - green star: \(M_{\pi ,\textrm{min}}< 200\) MeV, with three or more pion masses used in the extrapolation, or two values of \(M_\pi \) with one lying within 10 MeV of 135 MeV (the physical neutral pion mass) and the other one below 200 MeV
  - green circle: 200 MeV \(\le M_{\pi ,{\textrm{min}}} \le \) 400 MeV, with three or more pion masses used in the extrapolation, or two values of \(M_\pi \) with \(M_{\pi ,{\textrm{min}}}<\) 200 MeV, or a single value of \(M_\pi \), lying within 10 MeV of 135 MeV (the physical neutral pion mass)
  - red square: otherwise

This criterion is unchanged from FLAG 19. In Sect. 10 the upper end of the range for \(M_{\pi ,{\textrm{min}}}\) in the green circle criterion is lowered to 300 MeV, as in FLAG 19.
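The chiral-extrapolation criterion can be read as a small decision procedure. The Python sketch below is an illustration under our own assumptions, not FLAG code: `Rating`, `near_physical` and `rate_chiral` are hypothetical names, the input is the list of distinct unitary pion masses in MeV, and "within 10 MeV of 135 MeV" is taken to mean \(|M_\pi - 135\,\textrm{MeV}| \le 10\) MeV. The optional `circle_max_mev` argument mimics the lowered 300 MeV bound used in Sect. 10.

```python
from enum import IntEnum
from typing import Sequence


class Rating(IntEnum):
    # Ordered so that a larger value means a better rating.
    RED_SQUARE = 0
    GREEN_CIRCLE = 1
    GREEN_STAR = 2


M_PI0_MEV = 135.0  # physical neutral pion mass used as the reference point


def near_physical(m_mev: float, tol_mev: float = 10.0) -> bool:
    """True if m lies within tol of 135 MeV (our reading of the criterion)."""
    return abs(m_mev - M_PI0_MEV) <= tol_mev


def rate_chiral(pion_masses_mev: Sequence[float],
                circle_max_mev: float = 400.0) -> Rating:
    """Rate the chiral extrapolation from the simulated (unitary) pion masses."""
    masses = sorted(set(pion_masses_mev))
    if not masses:
        raise ValueError("at least one pion mass is required")
    m_min = masses[0]

    if len(masses) >= 3:
        if m_min < 200.0:
            return Rating.GREEN_STAR
        if m_min <= circle_max_mev:
            return Rating.GREEN_CIRCLE
        return Rating.RED_SQUARE

    if len(masses) == 2:
        a, b = masses
        # One mass near the physical point and the other one below 200 MeV.
        if (near_physical(a) and b < 200.0) or (near_physical(b) and a < 200.0):
            return Rating.GREEN_STAR
        if m_min < 200.0:
            return Rating.GREEN_CIRCLE
        return Rating.RED_SQUARE

    # A single pion mass can only earn a green circle, and only at the physical point.
    return Rating.GREEN_CIRCLE if near_physical(m_min) else Rating.RED_SQUARE
```

For example, three masses of 135, 180 and 250 MeV give a green star, while two masses of 160 and 190 MeV give a green circle.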

- Continuum extrapolation:
  - green star: at least three lattice spacings and at least two points below 0.1 fm and a range of lattice spacings satisfying \( [a_{{\textrm{max}}}/a_{\textrm{min}}]^2 \ge 2\)
  - green circle: at least two lattice spacings and at least one point below 0.1 fm and a range of lattice spacings satisfying \([a_{{\textrm{max}}}/a_{\textrm{min}}]^2 \ge 1.4\)
  - red square: otherwise

It is assumed that the lattice action is \({\mathcal {O}}(a)\)-improved (i.e., the discretization errors vanish quadratically with the lattice spacing); otherwise this will be explicitly mentioned. For unimproved actions an additional lattice spacing is required. This condition is unchanged from FLAG 19.
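Likewise, the continuum-extrapolation criterion reduces to counting lattice spacings and checking their spread. In this hypothetical `rate_continuum` sketch, spacings are given in fm, duplicates are ignored, and the `o_a_improved=False` switch encodes our reading of the rule that unimproved actions need one additional lattice spacing for each rating.

```python
from enum import IntEnum
from typing import Sequence


class Rating(IntEnum):
    RED_SQUARE = 0
    GREEN_CIRCLE = 1
    GREEN_STAR = 2


def rate_continuum(spacings_fm: Sequence[float], o_a_improved: bool = True) -> Rating:
    """Rate the continuum extrapolation from the lattice spacings (in fm)."""
    a = sorted(set(spacings_fm))
    if not a:
        raise ValueError("at least one lattice spacing is required")
    extra = 0 if o_a_improved else 1       # unimproved actions: one more spacing
    n = len(a)
    n_fine = sum(1 for x in a if x < 0.1)  # number of spacings below 0.1 fm
    ratio_sq = (a[-1] / a[0]) ** 2         # [a_max / a_min]^2

    if n >= 3 + extra and n_fine >= 2 and ratio_sq >= 2.0:
        return Rating.GREEN_STAR
    if n >= 2 + extra and n_fine >= 1 and ratio_sq >= 1.4:
        return Rating.GREEN_CIRCLE
    return Rating.RED_SQUARE
```

With spacings of 0.12, 0.09 and 0.06 fm an improved action would receive a green star, whereas the same set for an unimproved action would only reach a green circle under this reading.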

- Finite-volume effects:

  The finite-volume colour code used for a result is chosen to be the worse of the QCD and the QED codes, as described below. If only QCD is used, the QED colour code is ignored.

  For QCD:
  - green star: \([M_{\pi ,\textrm{min}} / M_{\pi ,\textrm{fid}}]^2 \exp \{4-M_{\pi ,\textrm{min}}[L(M_{\pi ,\textrm{min}})]_{{\textrm{max}}}\} < 1\), or at least three volumes
  - green circle: \([M_{\pi ,\textrm{min}} / M_{\pi ,\textrm{fid}}]^2 \exp \{3-M_{\pi ,\textrm{min}}[L(M_{\pi ,\textrm{min}})]_{{\textrm{max}}}\} < 1\), or at least two volumes
  - red square: otherwise

where we have introduced \([L(M_{\pi ,\textrm{min}})]_{{\textrm{max}}}\), which is the maximum box size used in the simulations performed at the smallest pion mass \(M_{\pi ,{{\textrm{min}}}}\), as well as a fiducial pion mass \(M_{\pi ,\textrm{fid}}\), which we set to 200 MeV (the cutoff value for a green star in the chiral extrapolation). It is assumed here that calculations are in the p-regime of chiral perturbation theory, and that all volumes used exceed 2 fm. The rationale for this condition is as follows. Finite-volume effects contain the universal factor \(\exp \{- L~M_\pi \}\), and if this were the only contribution a criterion based on the values of \(M_{\pi ,\text {min}} L\) would be appropriate. However, as pion masses decrease, one must also account for the weakening of the pion couplings. In particular, 1-loop chiral perturbation theory [146] reveals a behaviour proportional to \(M_\pi ^2 \exp \{- L~M_\pi \}\). Our condition includes this weakening of the coupling, and ensures, for example, that simulations with \(M_{\pi ,\textrm{min}} = 135~\textrm{MeV}\) and \(L~M_{\pi ,\textrm{min}} = 3.2\) are rated equivalently to those with \(M_{\pi ,\textrm{min}} = 200~\textrm{MeV}\) and \(L~M_{\pi ,\textrm{min}} = 4\).

  For QED (where applicable):
  - green star: \(1/([M_{\pi ,\textrm{min}}L(M_{\pi ,\textrm{min}})]_{{\textrm{max}}})^{n_{\textrm{min}}}<0.02\), or at least four volumes
  - green circle: \(1/([M_{\pi ,\textrm{min}}L(M_{\pi ,\textrm{min}})]_{{\textrm{max}}})^{n_{\textrm{min}}}<0.04\), or at least three volumes
  - red square: otherwise

Because of the infrared-singular structure of QED, electromagnetic finite-volume effects decay only like a power of the inverse spatial extent. In several cases, such as mass splittings [147, 148] or leptonic decays [149], the leading corrections are known to be universal, i.e., independent of the structure of the involved hadrons. In such cases, the leading universal effects can be subtracted exactly from the lattice data. We denote by \(n_{\textrm{min}}\) the smallest power of \(\frac{1}{L}\) at which such a subtraction cannot be done. In the widely used finite-volume formulation \(\textrm{QED}_L\), one always has \(n_{\textrm{min}}\le 3\) due to the nonlocality of the theory [150]. The QED criteria are used here only in Sect. 3. Both QCD and QED criteria are unchanged from FLAG 19.
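The finite-volume rating combines the QCD and QED tests and keeps the worse outcome. The sketch below assumes \(M_\pi L\) is passed as a dimensionless number (the helper `mpi_L` converts from MeV and fm with \(\hbar c \approx 197.327\) MeV fm); all function names are hypothetical. With a single volume it reproduces the statement above that \(M_{\pi ,\textrm{min}} = 135\) MeV with \(L M_{\pi ,\textrm{min}} = 3.2\) and \(M_{\pi ,\textrm{min}} = 200\) MeV with \(L M_{\pi ,\textrm{min}} = 4\) are rated equivalently (both green circle).

```python
import math
from enum import IntEnum
from typing import Optional


class Rating(IntEnum):
    # Ordered so that min() returns the worse of two ratings.
    RED_SQUARE = 0
    GREEN_CIRCLE = 1
    GREEN_STAR = 2


HBARC_MEV_FM = 197.3269804  # hbar*c in MeV fm
M_PI_FID_MEV = 200.0        # fiducial pion mass M_pi,fid


def mpi_L(m_pi_mev: float, L_fm: float) -> float:
    """Dimensionless product M_pi * L from a mass in MeV and a box size in fm."""
    return m_pi_mev * L_fm / HBARC_MEV_FM


def rate_fv_qcd(m_pi_min_mev: float, mpiL_max: float, n_volumes: int) -> Rating:
    """QCD test: [M_min/M_fid]^2 exp{c - M_min L_max} < 1 with c = 4 or 3."""
    def measure(c: float) -> float:
        return (m_pi_min_mev / M_PI_FID_MEV) ** 2 * math.exp(c - mpiL_max)

    if measure(4.0) < 1.0 or n_volumes >= 3:
        return Rating.GREEN_STAR
    if measure(3.0) < 1.0 or n_volumes >= 2:
        return Rating.GREEN_CIRCLE
    return Rating.RED_SQUARE


def rate_fv_qed(mpiL_max: float, n_min: int, n_volumes: int) -> Rating:
    """QED test: 1/(M_min L_max)^n_min below 0.02 (star) or 0.04 (circle)."""
    power_law = 1.0 / mpiL_max ** n_min
    if power_law < 0.02 or n_volumes >= 4:
        return Rating.GREEN_STAR
    if power_law < 0.04 or n_volumes >= 3:
        return Rating.GREEN_CIRCLE
    return Rating.RED_SQUARE


def rate_fv(m_pi_min_mev: float, mpiL_max: float, n_volumes: int,
            n_min: Optional[int] = None) -> Rating:
    """Worse of the QCD and QED codes; the QED test is skipped if n_min is None."""
    qcd = rate_fv_qcd(m_pi_min_mev, mpiL_max, n_volumes)
    if n_min is None:
        return qcd
    return min(qcd, rate_fv_qed(mpiL_max, n_min, n_volumes))
```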

- Isospin breaking effects (where applicable):
  - green star: all leading isospin breaking effects are included in the lattice calculation
  - green circle: isospin breaking effects are included using the electro-quenched approximation
  - red square: otherwise

This criterion is used for quantities that break isospin symmetry, or that can be determined with sub-percent accuracy, so that isospin-breaking effects, if not included, are expected to be the dominant source of uncertainty. In the current edition, this criterion is only used for the up- and down-quark masses, and related quantities (\(\epsilon \), \(Q^2\) and \(R^2\)). The criteria for isospin breaking effects are unchanged from FLAG 19.

- Renormalization (where applicable):
  - green star: nonperturbative
  - green circle: 1-loop perturbation theory or higher with a reasonable estimate of truncation errors
  - red square: otherwise

In Ref. [1], we assigned a red square to all results which were renormalized at 1-loop in perturbation theory. In FLAG 13 [2], we decided that this was too restrictive, since the error arising from renormalization constants, calculated in perturbation theory at 1-loop, is often estimated conservatively and reliably. These criteria have remained unchanged since then.

- Renormalization Group (RG) running (where applicable):

For scale-dependent quantities, such as quark masses or \(B_K\), it is essential that contact with continuum perturbation theory can be established. Various different methods are used for this purpose (cf. Appendix A.3 in FLAG 19 [4]): Regularization-independent Momentum Subtraction (RI/MOM), the Schrödinger functional, and direct comparison with (resummed) perturbation theory. Irrespective of the particular method used, the uncertainty associated with the choice of intermediate renormalization scales in the construction of physical observables must be brought under control. This is best achieved by performing comparisons between nonperturbative and perturbative running over a reasonably broad range of scales. These comparisons were initially only made in the Schrödinger functional approach, but are now also being performed in RI/MOM schemes. We mark the data for which information about nonperturbative running checks is available and give some details, but do not attempt to translate this into a colour code.

The pion mass plays an important role in the criteria relevant for chiral extrapolation and finite volume. For some of the regularizations used, however, it is not a trivial matter to identify this mass. In the case of twisted-mass fermions, discretization effects give rise to a mass difference between charged and neutral pions even when the up- and down-quark masses are equal: the charged pion is found to be the heavier of the two for twisted-mass Wilson fermions (cf. Ref. [151]). In early works, typically referring to \(N_f=2\) simulations (e.g., Refs. [151] and [87]), chiral extrapolations were based on chiral perturbation theory formulae that do not take these regularization effects into account. After the importance of accounting for isospin breaking when doing chiral fits was shown in Ref. [152], later works, typically referring to \(N_f=2+1+1\) simulations, have taken these effects into account [7]. We use \(M_{\pi ^\pm }\) for \(M_{\pi ,\textrm{min}}\) in the chiral-extrapolation rating criterion. On the other hand, we identify \(M_{\pi ,\textrm{min}}\) with the root mean square (RMS) of \(M_{\pi ^+}\), \(M_{\pi ^-}\) and \(M_{\pi ^0}\) in the finite-volume rating criterion.

In the case of staggered fermions, discretization effects give rise to several light states with the quantum numbers of the pion.Footnote 5 The mass splitting among these “taste” partners represents a discretization effect of \({\mathcal {O}}(a^2)\), which can be significant at large lattice spacings but shrinks as the spacing is reduced. In the discussion of the results obtained with staggered quarks given in the following sections, we assume that these artifacts are under control. We conservatively identify \(M_{\pi ,\textrm{min}}\) with the root mean square (RMS) average of the masses of all the taste partners, both for chiral-extrapolation and finite-volume criteria.

In some of the simulations, the fermion formulations employed for the valence quarks are different from those used for the sea quarks. Even when the fermion formulations are the same, there are cases where the sea and valence quark masses differ. In such cases, we use the smaller of the valence-valence and valence-sea \(M_{\pi ,\textrm{min}}\) values in the finite-volume criteria, since, depending on the quantity of interest, either of these channels may give the leading contribution at the one-loop level of chiral perturbation theory. For the chiral-extrapolation criteria, on the other hand, we use the unitary point, where the sea and valence quark masses are the same, to define \(M_{\pi ,\textrm{min}}\).
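The rules for identifying \(M_{\pi ,\textrm{min}}\) for twisted-mass, staggered and mixed-action setups can be summarized in a few helper functions. This is an illustrative sketch with hypothetical names; each function returns a pair (value used in the chiral-extrapolation criterion, value used in the finite-volume criterion), and for staggered fermions we assume the caller passes one mass per taste partner, repeating degenerate ones.

```python
import math
from typing import Sequence, Tuple


def rms(masses: Sequence[float]) -> float:
    """Root mean square of a list of masses."""
    if not masses:
        raise ValueError("empty mass list")
    return math.sqrt(sum(m * m for m in masses) / len(masses))


def twisted_mass_mpi_min(m_charged: float, m_neutral: float) -> Tuple[float, float]:
    """Twisted mass: M_pi+- for the chiral criterion, RMS(pi+, pi-, pi0) for FV."""
    # pi+ and pi- are degenerate, so the charged mass enters the RMS twice.
    return m_charged, rms([m_charged, m_charged, m_neutral])


def staggered_mpi_min(taste_masses: Sequence[float]) -> Tuple[float, float]:
    """Staggered: RMS over all taste partners, used for both criteria."""
    m = rms(taste_masses)
    return m, m


def mixed_action_mpi_min(m_unitary: float, m_valence_valence: float,
                         m_valence_sea: float) -> Tuple[float, float]:
    """Chiral criterion at the unitary point; FV uses the smaller mixed mass."""
    return m_unitary, min(m_valence_valence, m_valence_sea)
```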

The strong coupling \(\alpha _s\) is computed in lattice QCD with methods differing substantially from those used in the calculations of the other quantities discussed in this review. Therefore, we have established separate criteria for \(\alpha _s\) results, which will be discussed in Sect. 9.2.1.

In the section on nucleon matrix elements, Sect. 10, an additional criterion is used. This concerns the level of control over contamination from excited states, which is a more challenging issue for nucleons than for mesons. In response to an improved understanding of the impact of this contamination, the excited-state contamination criterion has been made more stringent compared to that in FLAG 19.

2.1.2 Heavy-quark actions

For the b quark, the discretization of the heavy-quark action follows a very different approach from that used for light flavours. There are several different methods for treating heavy quarks on the lattice, each with its own issues and considerations. Most of these methods use Effective Field Theory (EFT) at some point in the computation, either via direct simulation of the EFT, or by using EFT as a tool to estimate the size of cutoff errors, or by using EFT to extrapolate from the simulated lattice quark masses up to the physical b-quark mass. Because of the use of an EFT, truncation errors must be considered together with discretization errors.

The charm quark lies at an intermediate point between the heavy and light quarks. In our earlier reviews, calculations involving charm quarks often treated the charm quark using one of the approaches adopted for the b quark. Since FLAG 16 [3], however, most calculations simulate the charm quark using light-quark actions. This has become possible thanks to the increasing availability of dynamical gauge field ensembles with fine lattice spacings. But clearly, when charm quarks are treated relativistically, discretization errors are more severe than those of the corresponding light-quark quantities.

In order to address these complications, the heavy-quark section adds an additional, bipartite, treatment category to the rating system. The purpose of this criterion is to provide a guideline for the level of action and operator improvement needed in each approach to make reliable calculations possible, in principle.

A description of the different approaches to treating heavy quarks on the lattice can be found in Appendix A.1.3 of FLAG 19 [4]. For truncation errors we use HQET power counting throughout, since this review is focused on heavy-quark quantities involving B and D mesons rather than bottomonium or charmonium quantities. Here we describe the criteria for how each approach must be implemented in order to receive an acceptable rating for both the heavy-quark actions and the weak operators. Heavy-quark implementations without the level of improvement described below are rated not acceptable. The matching is evaluated together with renormalization, using the renormalization criteria described in Sect. 2.1.1. We emphasize that the heavy-quark implementations rated as acceptable and described below have been validated in a variety of ways, such as via phenomenological agreement with experimental measurements, consistency between independent lattice calculations, and numerical studies of truncation errors. These tests are summarized in Sect. 8.

Relativistic heavy-quark actions

at least tree-level \({\mathcal {O}}(a)\) improved action and weak operators


This is similar to the requirements for light-quark actions. All current implementations of relativistic heavy-quark actions satisfy this criterion.

NRQCD

tree-level matched through \({\mathcal {O}}(1/m_h)\) and improved through \({\mathcal {O}}(a^2)\)


The current implementations of NRQCD satisfy this criterion, and also include tree-level corrections of \({\mathcal {O}}(1/m_h^2)\) in the action.

HQET

tree-level matched through \({\mathcal {O}}(1/m_h)\) with discretization errors starting at \({\mathcal {O}}(a^2)\)


The current implementation of HQET by the ALPHA collaboration satisfies this criterion, since both action and weak operators are matched nonperturbatively through \({\mathcal {O}}(1/m_h)\). Calculations that exclusively use a static-limit action do not satisfy this criterion, since the static-limit action, by definition, does not include \(1/m_h\) terms. We therefore include static computations in our final estimates only if truncation errors (in \(1/m_h\)) are discussed and included in the systematic uncertainties.

Light-quark actions for heavy quarks

discretization errors starting at \({\mathcal {O}}(a^2)\) or higher


This applies to calculations that use the twisted-mass Wilson action, a nonperturbatively improved Wilson action, domain wall fermions or the HISQ action for charm-quark quantities. It also applies to calculations that use these light-quark actions in the charm region and above together with either the static limit or with an HQET-inspired extrapolation to obtain results at the physical b-quark mass. In these cases, the continuum-extrapolation criteria described earlier must be applied to the entire range of heavy-quark masses used in the calculation.

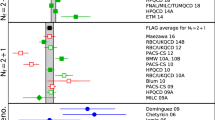

2.1.3 Conventions for the figures

For a coherent assessment of the present situation, the quality of the data plays a key role, but the colour coding cannot be carried over to the figures. On the other hand, simply showing all data on equal footing might give the misleading impression that the overall consistency of the information available on the lattice is questionable. Therefore, in the figures we indicate the quality of the data in a rudimentary way, using the following symbols:

- ■ (green): corresponds to results included in the average or estimate (i.e., results that contribute to the black square below);
- □ (green): corresponds to results that are not included in the average but pass all quality criteria;
- □ (red): corresponds to all other results;
- ■ (black): corresponds to FLAG averages or estimates; they are also highlighted by a gray vertical band.

The reason for not including a given result in the average is not always the same: the result may fail one of the quality criteria; the paper may be unpublished; it may be superseded by newer results; or it may not offer a complete error budget.

Symbols other than squares are used to distinguish results with specific properties and are always explained in the caption.Footnote 6

Often, nonlattice data are also shown in the figures for comparison. For these we use the following symbols:

- ●: corresponds to nonlattice results;
- \(\blacktriangle \): corresponds to Particle Data Group (PDG) results.

2.2 Averages and estimates

FLAG results of a given quantity are denoted either as averages or as estimates. Here we clarify this distinction. To start with, both averages and estimates are based on results without any red tags in their colour coding. For many observables there are enough independent lattice calculations of good quality, with all sources of error (not merely those related to the colour-coded criteria), as analyzed in the original papers, appearing to be under control. In such cases, it makes sense to average these results and propose such an average as the best current lattice number. The averaging procedure applied to these data and the way the error is obtained are explained in detail in Sect. 2.3. In those cases where only a single result passes our rating criteria (colour coding), we refer to it as our FLAG average, provided it also displays adequate control of all other sources of systematic uncertainty.

On the other hand, there are some cases in which this procedure leads to a result that, in our opinion, does not cover all uncertainties. Systematic errors are by their nature often subjective and difficult to estimate, and may thus end up being underestimated in one or more results that receive green symbols for all explicitly tabulated criteria. Adopting a conservative policy, in these cases we opt for an estimate (or a range), which we consider as a fair assessment of the knowledge acquired on the lattice at present. This estimate is not obtained with a prescribed mathematical procedure, but reflects what we consider the best possible analysis of the available information. The hope is that this will encourage more detailed investigations by the lattice community.

There are two other important criteria that also play a role in this respect, but that cannot be colour coded, because a systematic improvement is not possible. These are: (i) the publication status, and (ii) the number of sea-quark flavours \(N_{\,f}\). As far as the former criterion is concerned, we adopt the following policy: we average only results that have been published in peer-reviewed journals, i.e., they have been endorsed by referee(s). The only exception to this rule consists in straightforward updates of previously published results, typically presented in conference proceedings. Such updates, which supersede the corresponding results in the published papers, are included in the averages. Note that updates of earlier results rely, at least partially, on the same gauge-field-configuration ensembles. For this reason, we do not average updates with earlier results. Nevertheless, all results are listed in the tables,Footnote 7 and their publication status is identified by the following symbols:

- Publication status:
  - A: published or plain update of published results
  - P: preprint
  - C: conference contribution

In the present edition, the publication status on the 30th of April 2021 is relevant. If the paper appeared in print after that date, this is accounted for in the bibliography, but does not affect the averages.Footnote 8

As noted above, in this review we present results from simulations with \(N_f=2\), \(N_f=2+1\) and \(N_f=2+1+1\) (except for \( r_0 \Lambda _{\overline{\textrm{MS}}}\) where we also give the \(N_f=0\) result). We are not aware of an a priori way to quantitatively estimate the difference between results produced in simulations with a different number of dynamical quarks. We therefore average results at fixed \(N_{\,f}\) separately; averages of calculations with different \(N_{\,f}\) are not provided.

To date, no significant differences between results with different values of \(N_f\) have been observed in the quantities listed in Tables 1, 2, 3, 4, and 5. In particular, differences between results from simulations with \(N_{\,f}= 2\) and \(N_{\,f}= 2 + 1\) would reflect Zweig-rule violations related to strange-quark loops. Although not of direct phenomenological relevance, the size of such violations is an interesting theoretical issue per se, and one that can be quantitatively addressed only with lattice calculations. It remains to be seen whether the status presented here will change in the future, since this will require dedicated \(N_f=2\) and \(N_f=2+1\) calculations, which are not a priority of present lattice work.

The question of differences between results with \(N_{\,f}=2+1\) and \(N_{\,f}=2+1+1\) is more subtle. The dominant effect of including the charm sea quark is to shift the lattice scale, an effect that is accounted for by fixing this scale nonperturbatively using physical quantities. For most of the quantities discussed in this review, it is expected that residual effects are small in the continuum limit, suppressed by \(\alpha _s(m_c)\) and powers of \(\Lambda ^2/m_c^2\). Here \(\Lambda \) is a hadronic scale that can only be roughly estimated and depends on the process under consideration. Note that the \(\Lambda ^2/m_c^2\) effects have been addressed in Refs. [158,159,160,161,162], and found to be small for the quantities considered. Assuming that such effects are generically small, it might be reasonable to average the results from \(N_{\,f}=2+1\) and \(N_{\,f}=2+1+1\) simulations, although we do not do so here.

2.3 Averaging procedure and error analysis

In the present report, we repeatedly average results obtained by different collaborations, and estimate the error on the resulting averages. Here we provide details on how averages are obtained.

2.3.1 Averaging – generic case

We follow the procedure of the previous two editions [2, 3], which we describe here in full detail.

One of the problems arising when forming averages is that not all of the data sets are independent. In particular, the same gauge-field configurations, produced with a given fermion discretization, are often used by different research teams with different valence-quark lattice actions, obtaining results that are not really independent. Our averaging procedure takes such correlations into account.

Consider a given measurable quantity Q, measured by M distinct, not necessarily uncorrelated, numerical experiments (simulations). The result of each of these measurements is expressed as

\[ Q_i = x_i \pm \sigma ^{(1)}_i \pm \sigma ^{(2)}_i \pm \cdots \pm \sigma ^{(E)}_i , \tag{1} \]

where \(x_i\) is the value obtained by the ith experiment (\(i = 1, \ldots , M\)) and \(\sigma ^{(\alpha )}_i\) (for \(\alpha = 1, \ldots , E\)) are the various errors. Typically \(\sigma ^{(1)}_i\) stands for the statistical error and \(\sigma ^{(\alpha )}_i\) (\(\alpha \ge 2\)) are the different systematic errors from various sources. For each individual result, we estimate the total error \(\sigma _i \) by adding statistical and systematic errors in quadrature:

\[ \sigma _i^2 = \sum _{\alpha =1}^{E} \left[ \sigma ^{(\alpha )}_i \right]^2 . \tag{2} \]

With the weight factor of each total error estimated in standard fashion,

\[ \omega _i = \frac{\sigma _i^{-2}}{\sum _{j=1}^{M} \sigma _j^{-2}} , \tag{3} \]

the central value of the average over all simulations is given by

\[ x_{\textrm{av}} = \sum _{i=1}^{M} x_i\, \omega _i . \tag{4} \]

The above central value corresponds to a \(\chi _{{\textrm{min}}}^2\) weighted average, evaluated by adding statistical and systematic errors in quadrature. If the fit is not of good quality (\(\chi _{{\textrm{min}}}^2/\textrm{dof} > 1\)), the statistical and systematic error bars are stretched by a factor \(S = \sqrt{\chi ^2/\textrm{dof}}\).
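As a minimal illustration of the weighted average and the \(S\)-factor stretching just described, the sketch below ignores correlations between results entirely (the correlated case is described in the following paragraphs). The function name and input layout are our assumptions: `errors[i]` lists the components \(\sigma ^{(\alpha )}_i\) of result \(i\), and the returned error is the uncorrelated one, \(S\,(\sum _i \omega _i^2 \sigma _i^2)^{1/2}\).

```python
import math
from typing import Sequence, Tuple


def weighted_average(x: Sequence[float],
                     errors: Sequence[Sequence[float]]) -> Tuple[float, float, float]:
    """Return (x_av, S, sigma_av), ignoring correlations between results."""
    if len(x) != len(errors) or not x:
        raise ValueError("need one list of error components per result")
    sigma = [math.sqrt(sum(s * s for s in comps)) for comps in errors]  # quadrature
    inv_var = [1.0 / s ** 2 for s in sigma]
    weights = [v / sum(inv_var) for v in inv_var]                        # omega_i
    x_av = sum(w * xi for w, xi in zip(weights, x))

    dof = len(x) - 1
    chi2 = sum((xi - x_av) ** 2 / s ** 2 for xi, s in zip(x, sigma))
    # Stretch all error bars by S when chi^2_min/dof > 1. The weights do not
    # change, because every sigma_i is rescaled by the same factor.
    S = math.sqrt(chi2 / dof) if dof > 0 and chi2 / dof > 1.0 else 1.0

    # Uncorrelated error of the average, computed with the stretched errors.
    sigma_av = S * math.sqrt(sum((w * s) ** 2 for w, s in zip(weights, sigma)))
    return x_av, S, sigma_av
```

For two results 1.0 ± 1.0 and 3.0 ± 1.0 this gives \(x_{\textrm{av}} = 2\), \(\chi ^2/\textrm{dof} = 2\), hence \(S = \sqrt{2}\) and a stretched error of 1.0.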

Next, we examine error budgets for individual calculations and look for potentially correlated uncertainties. Specific problems encountered in connection with correlations between different data sets are described in the text that accompanies the averaging. If there is reason to believe that a source of error is correlated between two calculations, a \(100\%\) correlation is assumed. The correlation matrix \(C_{ij}\) for the set of correlated lattice results is estimated by a prescription due to Schmelling [163]. This consists in defining

\[ \sigma _{i;j} = \sqrt{ \sum _{\alpha }^\prime \left[ \sigma ^{(\alpha )}_i \right]^2 } , \tag{5} \]

with \(\sum _{\alpha }^\prime \) running only over those errors of \(x_i\) that are correlated with the corresponding errors of the measurement \(x_j\). This expresses the part of the uncertainty in \(x_i\) that is correlated with the uncertainty in \(x_j\). If no such correlations are known to exist, then we take \(\sigma _{i;j} =0\). The diagonal and off-diagonal elements of the correlation matrix are then taken to be

\[ C_{ii} = \sigma _i^2 \quad (i = 1, \ldots , M), \qquad C_{ij} = \sigma _{i;j}\, \sigma _{j;i} \quad (i \ne j) . \tag{6} \]

Finally, the error of the average is estimated by

\[ \sigma _{\textrm{av}}^2 = \sum _{i,j=1}^{M} \omega _i\, \omega _j\, C_{ij} , \tag{7} \]

and the FLAG average is

\[ Q_{\textrm{av}} = x_{\textrm{av}} \pm \sigma _{\textrm{av}} . \tag{8} \]
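The full generic procedure, including Schmelling's correlation matrix, can be sketched as follows. The function `flag_average` and its data layout are hypothetical choices made for illustration: each result carries a dictionary of error components keyed by a source label, and two components sharing a label are treated as 100% correlated, in line with the assumption stated above. Uncorrelated sources, such as statistical errors, must therefore get distinct labels. The \(S\) factor is applied to all components before the correlation matrix is built.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical input layout (an assumption of this sketch): each result is
# (x_i, {source label: sigma_i^(alpha)}). Components sharing a label in two
# results are treated as 100% correlated between those results.
Result = Tuple[float, Dict[str, float]]


def flag_average(results: List[Result]) -> Tuple[float, float]:
    """Return (x_av, sigma_av) following Eqs. (2)-(8) with Schmelling's C_ij."""
    xs = [x for x, _ in results]
    comps = [dict(c) for _, c in results]
    sigma = [math.sqrt(sum(s * s for s in c.values())) for c in comps]  # Eq. (2)
    inv_var = [1.0 / s ** 2 for s in sigma]
    w = [v / sum(inv_var) for v in inv_var]                             # Eq. (3)
    x_av = sum(wi * xi for wi, xi in zip(w, xs))                        # Eq. (4)

    # Stretch every error component by S if chi^2_min/dof > 1.
    dof = len(xs) - 1
    chi2 = sum((xi - x_av) ** 2 / s ** 2 for xi, s in zip(xs, sigma))
    S = math.sqrt(chi2 / dof) if dof > 0 and chi2 / dof > 1.0 else 1.0
    comps = [{k: S * v for k, v in c.items()} for c in comps]

    def sigma_part(i: int, j: int) -> float:
        # Eq. (5): part of the error of x_i that is correlated with x_j.
        shared = set(comps[i]) & set(comps[j])
        return math.sqrt(sum(comps[i][k] ** 2 for k in shared))

    n = len(xs)
    var = 0.0
    for i in range(n):
        for j in range(n):
            if i == j:
                c_ij = sum(v * v for v in comps[i].values())  # Eq. (6), C_ii
            else:
                c_ij = sigma_part(i, j) * sigma_part(j, i)    # Eq. (6), C_ij
            var += w[i] * w[j] * c_ij                         # Eq. (7)
    return x_av, math.sqrt(var)                               # Eq. (8)
```

Two results with identical central values and errors \(0.6\) (uncorrelated) and \(0.8\) (shared) give \(\sigma _{\textrm{av}} = \sqrt{0.82} \approx 0.906\) instead of the naive uncorrelated \(1/\sqrt{2} \approx 0.707\), showing how a shared systematic limits the gain from averaging.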

2.3.2 Nested averaging

We have encountered one case where the correlations between results are more involved, and a nested averaging scheme is required. This concerns the B-meson bag parameters discussed in Sect. 8.2. In the following, we describe the details of the nested averaging scheme. This is an updated version of the section added in the web update of the FLAG 16 report.

The issue arises for a quantity Q that is given by a ratio, \(Q=Y/Z\). In most simulations, both Y and Z are calculated, and the error in Q can be obtained in each simulation in the standard way. However, in other simulations only Y is calculated, with Z taken from a global average of some type. The issue to be addressed is that this average value \(\overline{Z}\) has errors that are correlated with those in Q.

In the example that arises in Sect. 8.2, \(Q=B_B\), \(Y=B_B f_B^2\) and \(Z=f_B^2\). In one of the simulations that contribute to the average, Z is replaced by \(\overline{Z}\), the PDG average for \(f_B^2\) [164] (obtained with an averaging procedure similar to that used by FLAG). This simulation is labeled with \(i=1\), so that

\[ Q_1 = \frac{Y_1}{\overline{Z}} . \tag{9} \]

The other simulations have results labeled \(Q_j\), with \(j\ge 2\). In this setup, the issue is that \(\overline{Z}\) is correlated with the \(Q_j\), \(j\ge 2\).Footnote 9

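We begin by decomposing the error in \(Q_1\) in the same schematic form as above,

\[ Q_1 = x_1 \pm \frac{\sigma ^{(1)}_{Y_1}}{Y_1}\, x_1 \pm \frac{\sigma ^{(2)}_{Y_1}}{Y_1}\, x_1 \pm \cdots \pm \frac{\sigma ^{(E)}_{Y_1}}{Y_1}\, x_1 \pm \frac{\sigma _{\overline{Z}}}{\overline{Z}}\, x_1 . \tag{10} \]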

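Here the last term represents the error propagating from that in \(\overline{Z}\), while the others arise from errors in \(Y_1\). For the remaining \(Q_j\) (\(j\ge 2\)) the decomposition is as in Eq. (1). The total error of \(Q_1\) then reads

\[ \sigma _1^2 = x_1^2 \left[ \sum _{\alpha =1}^{E} \left( \frac{\sigma ^{(\alpha )}_{Y_1}}{Y_1} \right)^2 + \left( \frac{\sigma _{\overline{Z}}}{\overline{Z}} \right)^2 \right] , \tag{11} \]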

while that for the \(Q_j\) (\(j\ge 2\)) is

\[ \sigma _j^2 = \sum _{\alpha =1}^{E} \left[ \sigma ^{(\alpha )}_j \right]^2 . \tag{12} \]

Correlations between \(Q_j\) and \(Q_k\) (\(j,k\ge 2\)) are taken care of by Schmelling’s prescription, as explained above. What is new here is how the correlations between \(Q_1\) and \(Q_j\) (\(j\ge 2\)) are taken into account.

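To proceed, we recall from Eq. (7) that \(\sigma _{\overline{Z}}\) is given by

\[ \sigma _{\overline{Z}}^2 = \sum _{i',j'=1}^{M'} \omega [Z]_{i'}\, \omega [Z]_{j'}\, C[Z]_{i'j'} . \tag{13} \]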

Here the indices \(i'\) and \(j'\) run over the \(M'\) simulations that contribute to \(\overline{Z}\), which, in general, are different from those contributing to the results for Q. The weights \(\omega [Z]\) and correlation matrix C[Z] are given an explicit argument Z to emphasize that they refer to the calculation of this quantity and not to that of Q. C[Z] is calculated using the Schmelling prescription [Eqs. (5)–(7)] in terms of the errors, \(\sigma [Z]_{i'}^{(\alpha )}\), taking into account the correlations between the different calculations of Z.

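We now generalize Schmelling’s prescription for \(\sigma _{i;j}\), Eq. (5), to that for \(\sigma _{1;k}\) (\(k\ge 2\)), i.e., the part of the error in \(Q_1\) that is correlated with \(Q_k\). We take

\[ \frac{\sigma_{1;k}}{Q_1} = \sqrt{\sum^\prime_{(\alpha)\leftrightarrow k}\left(\frac{\sigma_{Y_1}^{(\alpha)}}{Y_1}\right)^2 + \frac{1}{\overline{Z}^2}\sum_{i',j'=1}^{M'}\omega[Z]_{i'}\,\omega[Z]_{j'}\,C[Z]_{i'j'\leftrightarrow k}}. \tag{14} \]
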
The first term under the square root sums those sources of error in \(Y_1\) that are correlated with \(Q_k\). Here we are using a more explicit notation than that in Eq. (5), with \((\alpha ) \leftrightarrow k\) indicating that the sum is restricted to the values of \(\alpha \) for which the error \(\sigma _{Y_1}^{(\alpha )}\) is correlated with \(Q_k\). The second term accounts for the correlations of the errors in \(\overline{Z}\) with \(Q_k\), and is the nested part of the present scheme. The new matrix \(C[Z]_{i'j'\leftrightarrow k}\) is a restriction of the full correlation matrix C[Z], and is defined as follows. Its diagonal elements are given by

\[ C[Z]_{i'i'\leftrightarrow k} = \sum^\prime_{(\alpha)\leftrightarrow k}\left(\sigma[Z]_{i'}^{(\alpha)}\right)^2, \]

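where the summation \(\sum ^\prime _{(\alpha )\leftrightarrow k}\) over \((\alpha )\) is restricted to those \(\sigma [Z]_{i'}^{(\alpha )}\) that are correlated with \(Q_k\). The off-diagonal elements are

\[ C[Z]_{i'j'\leftrightarrow k} = \sqrt{\sum^\prime_{(\alpha)\leftrightarrow j'k}\left(\sigma[Z]_{i'}^{(\alpha)}\right)^2 \, \sum^\prime_{(\alpha)\leftrightarrow i'k}\left(\sigma[Z]_{j'}^{(\alpha)}\right)^2}, \qquad i'\neq j', \]
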
where the summation \(\sum ^\prime _{(\alpha )\leftrightarrow j'k}\) over \((\alpha )\) is restricted to \(\sigma [Z]_{i'}^{(\alpha )}\) that are correlated with both \(Z_{j'}\) and \(Q_k\).

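The last quantity that we need to define is \(\sigma _{k;1}\), which we take to be

\[ \sigma_{k;1} = \sqrt{\sum^\prime_{(\alpha)\leftrightarrow 1}\left(\sigma_k^{(\alpha)}\right)^2}, \tag{19} \]
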
where the summation \(\sum ^\prime _{(\alpha )\leftrightarrow 1}\) is restricted to those \(\sigma _k^{(\alpha )}\) that are correlated with one of the terms in Eq. (11).

In summary, we construct the correlation matrix \(C_{ij}\) using Eq. (6), as in the generic case, except that the expressions for \(\sigma _{1;k}\) and \(\sigma _{k;1}\) are now given by Eqs. (14) and (19), respectively. All other \(\sigma _{i;j}\) are given by the original Schmelling prescription, Eq. (5). In this way we extend the philosophy of Schmelling’s approach while accounting for the more involved correlations.
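The nested prescription can be sketched in Python as follows. The functions build the restricted matrix \(C[Z]_{i'j'\leftrightarrow k}\) (diagonal: restricted sum of squared errors; off-diagonal: assumed here to take the Schmelling product form \(\sqrt{\sum'_{(\alpha)\leftrightarrow j'k}(\sigma[Z]_{i'}^{(\alpha)})^2\,\sum'_{(\alpha)\leftrightarrow i'k}(\sigma[Z]_{j'}^{(\alpha)})^2}\)), then \(\sigma_{1;k}\) with the \(Y_1\) and \(\overline{Z}\) contributions added in quadrature as relative errors, and finally \(\sigma_{k;1}\), so that \(C_{1k}=\sigma_{1;k}\sigma_{k;1}\). The argument names and the encoding of correlations as sets of error-source indices are hypothetical choices for this illustration.

```python
import math


def restricted_cov(z_errors, corr_k, corr_jk):
    """Restricted matrix C[Z]_{i'j' <-> k} (sketch).

    z_errors[i']    -- error sources sigma[Z]_{i'}^(alpha) of the i'-th Z result
    corr_k[i']      -- alphas of Z_{i'} correlated with Q_k
    corr_jk[i'][j'] -- alphas of Z_{i'} correlated with both Z_{j'} and Q_k
    """
    m = len(z_errors)

    def part(i, alphas):
        return sum(z_errors[i][a] ** 2 for a in alphas)

    return [[part(i, corr_k[i]) if i == j
             else math.sqrt(part(i, corr_jk[i][j]) * part(j, corr_jk[j][i]))
             for j in range(m)] for i in range(m)]


def sigma_1k(q1, y1, y1_errors, y1_corr_k, zbar, z_weights, c_restricted):
    """Part of the error of Q_1 = Y_1 / Zbar that is correlated with Q_k."""
    rel_y = sum((y1_errors[a] / y1) ** 2 for a in y1_corr_k)
    m = len(z_weights)
    nested = sum(z_weights[i] * z_weights[j] * c_restricted[i][j]
                 for i in range(m) for j in range(m)) / zbar ** 2
    return q1 * math.sqrt(rel_y + nested)


def sigma_k1(qk_errors, qk_corr_1):
    """Part of the error of Q_k correlated with the error terms of Q_1."""
    return math.sqrt(sum(qk_errors[a] ** 2 for a in qk_corr_1))


# Hypothetical example: Zbar = 4.00(8) comes from a single Z result whose error
# is correlated with Q_k, and only the second error source of Y_1 is.
c_r = restricted_cov(z_errors=[[0.08]], corr_k=[{0}], corr_jk=[[set()]])
s1k = sigma_1k(q1=0.5, y1=2.0, y1_errors=[0.03, 0.04], y1_corr_k={1},
               zbar=4.0, z_weights=[1.0], c_restricted=c_r)
sk1 = sigma_k1(qk_errors=[0.02, 0.01], qk_corr_1={0})
print(f"C_1k = {s1k * sk1:.3e}")
```

If no error source is shared, both restricted sums vanish and \(C_{1k}=0\), recovering the uncorrelated case.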

3 Quark masses

Authors: T. Blum, A. Portelli, A. Ramos

Quark masses are fundamental parameters of the Standard Model. An accurate determination of these parameters is important for both phenomenological and theoretical applications. The bottom- and charm-quark masses, for instance, are important sources of parametric uncertainties in several Higgs decay modes. The up-, down- and strange-quark masses govern the amount of explicit chiral symmetry breaking in QCD. From a theoretical point of view, the values of quark masses provide information about the flavour structure of physics beyond the Standard Model. The Review of Particle Physics of the Particle Data Group contains a review of quark masses [165], which covers light as well as heavy flavours. Here we also consider light- and heavy-quark masses, but focus on lattice results and discuss them in more detail. We do not discuss the top quark, however, because it decays weakly before it can hadronize, and the nonperturbative QCD dynamics described by present-day lattice simulations is not relevant. The lattice determination of light- (up, down, strange), charm- and bottom-quark masses is considered below in Sects. 3.1, 3.2, and 3.3, respectively.

Quark masses cannot be measured directly in experiment because quarks cannot be isolated, as they are confined inside hadrons. From a theoretical point of view, in QCD with \(N_f\) flavours, a precise definition of quark masses requires one to choose a particular renormalization scheme. This renormalization procedure introduces a renormalization scale \(\mu \), and quark masses depend on this renormalization scale according to the Renormalization Group (RG) equations. In mass-independent renormalization schemes the RG equations for the renormalized quark masses \(\bar m_i\) read

\[ \mu \frac{d\bar m_i}{d\mu} = \bar m_i\,\tau(\bar g), \tag{20} \]

where the function \(\tau ({\bar{g}})\) is the anomalous dimension, which depends only on the value of the strong coupling \(\alpha _s={\bar{g}}^2/(4\pi )\). Note that in QCD \(\tau ({\bar{g}})\) is the same for all quark flavours. The anomalous dimension is scheme dependent, but its perturbative expansion

\[ \tau(\bar g) \underset{\bar g\to 0}{\sim} -\bar g^2\left(d_0 + d_1\bar g^2 + \cdots\right) \tag{21} \]

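has a leading coefficient \(d_0 = 8/(4\pi )^2\), which is scheme independent (see Footnote 10). Equation (20), being a first-order differential equation, can be solved exactly by using Eq. (21) as the boundary condition. The formal solution of the RG equation reads

\[ M_i = \bar m_i(\mu)\left[2b_0\bar g^2(\mu)\right]^{-d_0/(2b_0)} \exp\left\{-\int_0^{\bar g(\mu)} dx\left[\frac{x\,\tau(x)}{\beta(x)} - \frac{d_0}{b_0 x}\right]\right\}, \tag{22} \]
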
where \(b_0 = (11-2N_f/3) / (4\pi )^2\) is the universal leading perturbative coefficient in the expansion of the \(\beta \)-function, \(\beta (\bar{g}) \equiv d\bar{g}^2/d\log \mu ^2\), which governs the running of the strong coupling constant near the scale \(\mu \). The renormalization group invariant (RGI) quark masses \(M_i\) are formally integration constants of the RG Eq. (20). They are scale independent, and due to the universality of the coefficient \(d_0\), they are also scheme independent. Moreover, they are nonperturbatively defined by Eq. (22). They depend only on the number of flavours \(N_f\), which makes them a natural choice for quoting quark masses and comparing determinations from different lattice collaborations. Nevertheless, it is customary in the phenomenology community to compare results for light-quark masses in the \(\overline{\textrm{MS}}\) scheme at a scale \(\mu = 2\) GeV, and to use for the charm and bottom quarks a scale equal to the quark’s own mass. In this review, we quote final averages for both the \(\overline{\textrm{MS}}\) and the RGI masses.
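At leading order, where \(\tau\) is truncated to \(-d_0\bar g^2\) and the coupling runs at one loop, the integral in the exponent of the formal solution vanishes and \(M_i=\bar m_i(\mu)[2b_0\bar g^2(\mu)]^{-d_0/(2b_0)}\). The Python sketch below checks numerically that this combination is scale independent: it integrates the truncated RG equation for \(\bar m\) between two scales and compares the two resulting RGI values. The one-loop coupling \(\bar g^2(\mu)=1/[b_0\ln(\mu^2/\Lambda^2)]\) and the input numbers are illustrative assumptions; this is not the 5-loop running used for the averages.

```python
import math

D0 = 8 / (4 * math.pi) ** 2  # leading anomalous-dimension coefficient d_0


def b0(nf):
    """Leading beta-function coefficient b_0 = (11 - 2 nf/3) / (4 pi)^2."""
    return (11 - 2 * nf / 3) / (4 * math.pi) ** 2


def gbar2_one_loop(mu, lam, nf):
    """One-loop coupling gbar^2(mu) = 1 / (b_0 ln(mu^2 / Lambda^2))."""
    return 1.0 / (b0(nf) * math.log(mu ** 2 / lam ** 2))


def run_mass_lo(m_start, mu_start, mu_end, lam, nf, steps=2000):
    """Integrate d ln m / d ln mu = -d_0 gbar^2 (truncated tau) with RK4."""
    def rate(t):
        return -D0 * gbar2_one_loop(math.exp(t), lam, nf)

    t = math.log(mu_start)
    h = (math.log(mu_end) - t) / steps
    log_m = math.log(m_start)
    for _ in range(steps):
        # The right-hand side depends only on t, so RK4 reduces to Simpson's rule.
        k1, k2, k4 = rate(t), rate(t + h / 2), rate(t + h)
        log_m += h * (k1 + 4 * k2 + k4) / 6
        t += h
    return math.exp(log_m)


def rgi_lo(m, mu, lam, nf):
    """Leading-order RGI mass M = m(mu) [2 b_0 gbar^2(mu)]^(-d_0 / (2 b_0))."""
    return m * (2 * b0(nf) * gbar2_one_loop(mu, lam, nf)) ** (-D0 / (2 * b0(nf)))


# Illustrative inputs in GeV: m(2 GeV) = 0.1, Lambda = 0.3, nf = 4.
m10 = run_mass_lo(0.1, 2.0, 10.0, 0.3, 4)
print(rgi_lo(0.1, 2.0, 0.3, 4), rgi_lo(m10, 10.0, 0.3, 4))
```

The running mass decreases between 2 and 10 GeV, while the two printed RGI values agree up to the integration error.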

Results for quark masses are always quoted in the four-flavour theory, so \(N_{f}=2+1\) results have to be converted to the four-flavour theory. Fortunately, the charm quark is heavy, \((\Lambda _\textrm{QCD}/m_c)^2<1\), and this conversion can be performed in perturbation theory with negligible (\(\sim 0.2\%\)) perturbative uncertainties. Nonperturbative corrections in this matching are more difficult to estimate. Since these effects are suppressed by a factor of \(1/N_\textrm{c}\) and by a factor of the strong coupling at the scale of the charm mass, naive power-counting arguments would suggest that they are \(\sim 1\%\). In practice, numerical nonperturbative studies [158, 160, 167] of simple hadronic quantities and of the \(\Lambda \)-parameter have found that this power-counting argument overestimates the effects by one order of magnitude. Moreover, lattice determinations do not show any significant deviation between the \(N_{f}=2+1\) and \(N_{f}=2+1+1\) simulations. For example, the difference in the final averages for the mass of the strange quark \(m_s\) between \(N_f=2+1\) and \(N_f=2+1+1\) determinations is about 1.3%, or about one standard deviation.

We quote all final averages at 2 GeV in the \(\overline{\textrm{MS}}\) scheme and also the RGI values (in the four-flavour theory). We use the exact RG Eq. (22). Note that to use this equation we need the value of the strong coupling in the \(\overline{\textrm{MS}}\) scheme at a scale \(\mu = 2\) GeV. All our results are obtained from the RG equation in the \(\overline{\textrm{MS}}\) scheme and the 5-loop beta function together with the value of the \(\Lambda \)-parameter in the four-flavour theory \(\Lambda ^{(4)}_{\overline{\textrm{MS}}} = 294(12)\, \textrm{MeV}\) obtained in this review (see Sect. 9). In the uncertainties of the RGI masses we separate the contributions from the determination of the quark masses and the propagation of the uncertainty of \(\Lambda ^{(4)}_{\overline{\textrm{MS}}}\). These are identified with the subscripts m and \(\Lambda \), respectively.
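To indicate how an uncertainty in \(\Lambda^{(4)}_{\overline{\textrm{MS}}}\) feeds into the RGI masses (the error labeled \(\Lambda\)), the Python sketch below evaluates only the leading-order conversion factor \(M/\bar m(\mu)=[2b_0\bar g^2(\mu)]^{-d_0/(2b_0)}\) with a one-loop coupling, which reduces to \([\ln(\mu/\Lambda)]^{d_0/(2b_0)}\). Inserting the quoted \(\Lambda^{(4)}_{\overline{\textrm{MS}}}=294(12)\) MeV into a one-loop formula is an assumption made purely for illustration; the averages themselves use the 5-loop running, so the numbers printed here are not FLAG results.

```python
import math


def lo_rgi_factor(mu, lam, nf=4):
    """Leading-order ratio M / m(mu) with a one-loop coupling.

    [2 b_0 gbar^2(mu)]^(-d_0/(2 b_0)) = [ln(mu / Lambda)]^(d_0/(2 b_0)),
    and d_0/(2 b_0) = 12 / (33 - 2 nf), i.e. 12/25 for nf = 4.
    """
    exponent = 12 / (33 - 2 * nf)
    return math.log(mu / lam) ** exponent


mu = 2.0                      # GeV
lam, dlam = 0.294, 0.012      # Lambda^(4) central value and error, in GeV
central = lo_rgi_factor(mu, lam)
# Propagate the Lambda uncertainty by shifting Lambda by +/- one sigma.
shift = 0.5 * abs(lo_rgi_factor(mu, lam - dlam) - lo_rgi_factor(mu, lam + dlam))
print(f"M/m(2 GeV) at LO: {central:.4f} +/- {shift:.4f} (Lambda only)")
```

A larger \(\Lambda\) means a stronger coupling at 2 GeV and hence a smaller factor, which is why this error is quoted separately from the error of the quark-mass determination itself.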

Conceptually, all lattice determinations of quark masses contain three basic ingredients:

1. Tuning the lattice bare-quark masses to match the experimental values of some quantities. Pseudoscalar meson masses provide the most common choice, since they have a strong dependence on the values of quark masses. In pure QCD with \(N_f\) quark flavours the target values are not known, since electromagnetic interactions affect the experimental values of meson masses. Therefore, pure QCD determinations use model/lattice information to determine the location of the physical point. This is discussed at length in Sect. 3.1.1.

2. Renormalization of the bare-quark masses. Bare-quark masses determined with the above-mentioned criteria have to be renormalized. Many of the latest determinations use some nonperturbatively defined scheme. One can also use perturbation theory to connect the values of the bare-quark masses directly to the values in the \(\overline{\textrm{MS}}\) scheme at 2 GeV. Experience shows that 1-loop calculations are unreliable for the renormalization of quark masses: usually at least two loops are required to have trustworthy results.

3. If quark masses have been nonperturbatively renormalized, for example, to some MOM/SF scheme, the values in this scheme must be converted to the phenomenologically useful values in the \(\overline{\textrm{MS}}\) scheme (or to the scheme/scale independent RGI masses). Either option requires the use of perturbation theory. The larger the energy scale of this matching with perturbation theory, the better, and many recent computations in MOM schemes do a nonperturbative running up to 3–4 GeV. Computations in the SF scheme allow us to perform this running nonperturbatively over large energy scales and match with perturbation theory directly at the electroweak scale \(\sim 100\) GeV.
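The first two ingredients can be illustrated with a toy Python sketch: a bare mass is tuned by bisection so that a model pseudoscalar mass matches a target value, and is then multiplied by a renormalization constant. The model \((aM_{PS})^2 = 2B\,am + c\,(am)^2\), its coefficients, the target, and the value of \(Z_m\) are all hypothetical; in an actual calculation \(M_{PS}(m)\) comes from lattice correlators, \(Z_m\) from a (non)perturbative renormalization procedure, and the third step would apply a perturbative conversion factor, which is omitted here.

```python
def bisect(f, lo, hi, tol=1e-14, max_iter=200):
    """Find a root of f in [lo, hi] by bisection; f(lo) and f(hi) must differ in sign."""
    f_lo = f(lo)
    if f_lo * f(hi) > 0:
        raise ValueError("root not bracketed")
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        f_mid = f(mid)
        if f_lo * f_mid <= 0:
            hi = mid
        else:
            lo, f_lo = mid, f_mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)


# Hypothetical toy model of the squared pseudoscalar mass in lattice units.
B_LAT, C_LAT = 2.5, 1.0


def ps_mass_sq(am):
    return 2 * B_LAT * am + C_LAT * am ** 2


target_sq = 0.10 ** 2            # hypothetical target (a M_PS)^2
# Step 1: tune the bare mass so that the model reproduces the target.
am_bare = bisect(lambda am: ps_mass_sq(am) - target_sq, 0.0, 0.1)
# Step 2: renormalize with a hypothetical multiplicative constant Z_m.
Z_M = 1.2
am_ren = Z_M * am_bare
print(am_bare, am_ren)
```

Bisection is used only because it is simple and robust for a monotonic function; any one-dimensional root finder would do for this toy.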

Note that many lattice determinations of quark masses make use of perturbation theory at a scale of a few GeV.

We mention that lattice-QCD calculations of the b-quark mass have an additional complication which is not present in the case of the charm and light quarks. At the lattice spacings currently used in numerical simulations, the direct treatment of the b quark with the fermionic actions commonly used for light quarks is very challenging. Only two determinations of the b-quark mass use this approach, reaching the physical b-quark mass region at two lattice spacings with \(aM\sim 1\). There are a few widely used approaches to treat the b quark on the lattice, which have already been discussed in the FLAG 13 review (see Sec. 8 of Ref. [2]). Those relevant for the determination of the b-quark mass will be briefly described in Sect. 3.3.

3.1 Masses of the light quarks

Light-quark masses are particularly difficult to determine because they are very small (for the up and down quarks) or small (for the strange quark) compared to typical hadronic scales. Thus, their impact on typical hadronic observables is minute, and it is difficult to isolate their contribution accurately.

Fortunately, the spontaneous breaking of \(SU(3)_L\times SU(3)_R\) chiral symmetry provides observables which are particularly sensitive to the light-quark masses: the masses of the resulting Nambu–Goldstone bosons (NGB), i.e., pions, kaons, and eta. Indeed, the Gell-Mann–Oakes–Renner relation [168] predicts that the squared mass of an NGB is directly proportional to the sum of the masses of the quark and antiquark which compose it, up to higher-order mass corrections. Moreover, because these NGBs are light, and are composed of only two valence particles, their masses have a particularly clean statistical signal in lattice-QCD calculations. In addition, the experimental uncertainties on these meson masses are negligible. Thus, in lattice calculations, light-quark masses are typically obtained by tuning the bare input quark masses to reproduce NGB masses and then renormalizing them, as described above.
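As a rough illustration of the Gell-Mann–Oakes–Renner relation: at leading order \(M_\pi^2 = 2B\hat m\) and \(M_K^2 = B(\hat m + m_s)\), with \(\hat m=(m_u+m_d)/2\) and B a low-energy constant, so the unknown B drops out of \(m_s/\hat m = (2M_K^2-M_\pi^2)/M_\pi^2\). Because the anomalous dimension is flavour independent, such a ratio is also scale and scheme independent. The Python sketch below evaluates the ratio from the PDG neutral-pion and isospin-averaged kaon masses. It neglects higher-order chiral corrections and electromagnetic effects, so it is only a leading-order estimate, not a lattice result.

```python
# PDG meson masses in MeV.
M_PI0 = 134.9768
M_KP, M_K0 = 493.677, 497.611


def ms_over_mhat_lo(m_pi, m_k):
    """Leading-order ratio m_s / m_hat = (2 M_K^2 - M_pi^2) / M_pi^2."""
    return (2 * m_k ** 2 - m_pi ** 2) / m_pi ** 2


# Isospin-averaged kaon mass (averaged in M_K^2); the neutral pion stands in
# for M_pi. Electromagnetic corrections are neglected in this sketch.
mk_avg = ((M_KP ** 2 + M_K0 ** 2) / 2) ** 0.5
ratio = ms_over_mhat_lo(M_PI0, mk_avg)
print(f"LO estimate of m_s/m_hat: {ratio:.1f}")
```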

3.1.1 The physical point and isospin symmetry

As mentioned in Sect. 2.1, the present review relies on the hypothesis that, at low energies, the Lagrangian \(\mathcal{L}_\textrm{QCD}+\mathcal{L}_\textrm{QED}\) describes nature to a high degree of precision. However, most of the results presented below are obtained in pure QCD calculations, which do not include QED. Quite generally, when comparing QCD calculations with experiment, radiative corrections need to be applied. In pure QCD simulations, where the parameters are fixed in terms of the masses of some of the hadrons, the electromagnetic contributions to these masses must be discussed. How the matching is done is generally ambiguous because it relies on the unphysical separation of QCD and QED contributions. In this section, and in the following, we discuss this issue in detail. A related discussion, in the context of scale setting, is given in Sect. 11.3. Of course, once QED is included in lattice calculations, the subtraction of electromagnetic contributions is no longer necessary.
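The sensitivity to the separation of QCD and QED contributions can be illustrated with a leading-order Python sketch of \(m_u/m_d\). At this order \((M_{K^+}^2-M_{K^0}^2)_\textrm{QCD}=B(m_u-m_d)\) and \(M_\pi^2=B(m_u+m_d)\), so \(m_u/m_d=[M_\pi^2+(M_{K^+}^2-M_{K^0}^2)_\textrm{QCD}]/[M_\pi^2-(M_{K^+}^2-M_{K^0}^2)_\textrm{QCD}]\). For the electromagnetic part we use Dashen’s theorem, which at leading order equates the electromagnetic kaon and pion mass splittings; this is one historical convention, chosen here only for illustration and not the prescription adopted in this review. Comparing it with no subtraction at all shows how strongly \(m_u/m_d\) depends on the treatment of QED.

```python
# PDG meson masses in MeV.
M_PIP, M_PI0 = 139.57039, 134.9768
M_KP, M_K0 = 493.677, 497.611


def mu_over_md_lo(m_pi_sq, dk_qcd):
    """LO m_u/m_d from M_pi^2 = B(m_u + m_d) and the QCD kaon splitting B(m_u - m_d)."""
    return (m_pi_sq + dk_qcd) / (m_pi_sq - dk_qcd)


dk_exp = M_KP ** 2 - M_K0 ** 2       # measured kaon splitting (QCD + QED)
dpi_exp = M_PIP ** 2 - M_PI0 ** 2    # pion splitting, treated as purely electromagnetic

no_subtraction = mu_over_md_lo(M_PI0 ** 2, dk_exp)
dashen = mu_over_md_lo(M_PI0 ** 2, dk_exp - dpi_exp)
print(f"m_u/m_d at LO: {no_subtraction:.3f} (no EM subtraction), "
      f"{dashen:.3f} (Dashen)")
```

The two estimates differ by roughly 15%, far more than the experimental uncertainties on the meson masses, which is why the treatment of electromagnetic effects matters for the light-quark masses.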

Let us start from the unambiguous case of QCD+QED. As explained in the introduction of this section, the physical quark masses are the parameters of the Lagrangian such that a given set of experimentally measured, dimensionful hadronic quantities are reproduced by the theory. Many choices are possible for these quantities, but in practice many lattice groups use pseudoscalar meson masses, as they are easily and precisely obtained both experimentally and in lattice simulations. For example, in the four-flavour case, one can solve the system

where we assumed that

-

all the equations are in the continuum and infinite-volume limits;

-