Abstract

The final results of the search for the lepton flavour violating decay \(\mathrm {\mu }^+ \rightarrow \mathrm {e}^+ \mathrm {\gamma }\) based on the full dataset collected by the MEG experiment at the Paul Scherrer Institut in the period 2009–2013 and totalling \(7.5\times 10^{14}\) stopped muons on target are presented. No significant excess of events is observed in the dataset with respect to the expected background and a new upper limit on the branching ratio of this decay of \( \mathcal{B} (\mu ^+ \rightarrow \mathrm{e}^+ \gamma ) < 4.2 \times 10^{-13}\) (90 % confidence level) is established, which represents the most stringent limit on the existence of this decay to date.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

The standard model (SM) of particle physics allows charged lepton flavour violating (CLFV) processes with only extremely small branching ratios (\(\ll \) \(10^{-50}\)) even when accounting for measured neutrino mass differences and mixing angles. Therefore, such decays are free from SM physics backgrounds associated with processes involving, either directly or indirectly, hadronic states and are ideal laboratories for searching for new physics beyond the SM. A positive signal would be an unambiguous evidence for physics beyond the SM.

The existence of such decays at measurable rates not far below current upper limits is suggested by many SM extensions, such as supersymmetry [1]. An extensive review of the theoretical expectations for CLFV is provided in [2]. CLFV searches with improved sensitivity probe new regions of the parameter spaces of SM extensions, and CLFV decay \(\mu ^+ \rightarrow \mathrm {e}^+ \gamma \) is particularly sensitive to new physics. The MEG collaboration has searched for \(\mu ^+ \rightarrow \mathrm {e}^+ \gamma \) decay at the Paul Scherrer Institut (PSI) in Switzerland in the period 2008–2013. A detailed report of the experiment motivation, design criteria, and goals is available in reference [3, 4] and references therein. We have previously reported [5–7] results of partial datasets including a limit on the branching ratio for this decay \( \mathcal{B} < 5.7\times 10^{-13}\) at 90 % C.L.

The signal consists of a positron and a photon back-to-back, each with energy of 52.83 MeV (half of the muon mass), and with a common origin in space and time. Figure 1 shows cut schematic views of the MEG apparatus. Positive muons are stopped in a thin plastic target at the centre of a spectrometer based on a superconducting solenoid. The decay positron’s trajectory is measured in a magnetic field by a set of low-mass drift chambers and a scintillation counter array is used to measure its time. The photon momentum vector, interaction point and timing are measured by a homogeneous liquid xenon calorimeter located outside the magnet and covering the angular region opposite to the acceptance of the spectrometer. The total geometrical acceptance of the detector for the signal is \(\approx \) \(11\) %.

The signal can be mimicked by various processes, with the positron and photon originating either from a single radiative muon decay (RMD) (\(\mu ^+ \rightarrow \mathrm {e}^+\gamma \nu \bar{\nu }\)) or from the accidental coincidence of a positron and a photon from different processes. In the latter case, the photon can be produced by radiative muon decay or by Bremsstrahlung or positron annihilation-in-flight (AIF) (\(\mathrm {e}^+ \mathrm {e}^- \rightarrow \gamma \gamma \)). Accidental coincidences between a positron and a photon from different processes, each close in energy to their kinematic limit and with origin, direction and timing coincident within the detector resolutions are the dominant source of background.

Since the rate of accidental coincidences is proportional to the square of the \(\mu ^+\) decay rate, the signal to background ratio and data collection efficiency are optimised by using a direct-current rather than pulsed beam. Hence, the high intensity continuous surface \(\mu ^+\) beam (see Sect. 2.1) at PSI is the ideal facility for such a search.

The remainder of this paper is organised as follows. After a brief introduction to the detector and to the data acquisition system (Sect. 2), the reconstruction algorithms are presented in detail (Sect. 3), followed by an in-depth discussion of the analysis of the full MEG dataset and of the results (Sect. 4). Finally, in the conclusions, some prospects for future improvements are outlined (Sect. 5).

2 MEG detector

The MEG detector is briefly presented in the following, emphasising the aspects relevant to the analysis; a detailed description is available in [8]. Briefly, it consists of the \(\mu ^+\) beam, a thin stopping target, a thin-walled, superconducting magnet, a drift chamber array (DCH), scintillating timing counters (TC), and a liquid xenon calorimeter (LXe detector).

In this paper we adopt a cylindrical coordinate system \({ (r,\phi ,z)}\) with origin at the centre of the magnet (see Fig. 1). The z-axis is parallel to the magnet axis and directed along the \(\mu ^+\) beam. The axis defining \(\phi =90^\circ \) (the y-axis of the corresponding Cartesian coordinate system) is directed upwards and, as a consequence, the x-axis is directed opposite to the centre of the LXe detector. Positrons move along trajectories with decreasing \(\phi \)-coordinate. When required, the polar angle \(\theta \) with respect to the z-axis is also used. The region with \({ z}<0\) is referred to as upstream, that with \({ z}>0\) as downstream.

2.1 Muon beam

The requirement to stop a large number of \(\mu ^+\) in a thin target of small transverse size drives the beam requirements: high flux, small transverse size, small momentum spread and small contamination, e.g. from positrons. These goals are met by the 2.2 mA PSI proton cyclotron and \(\pi \)E5 channel in combination with the MEG beam line, which produces one of the world’s most intense continuous \(\mu ^+\) beams. It is a surface muon beam produced by \(\pi ^+\) decay near the surface of the production target. It can deliver more than \(10^8\) \(\mu ^+\)/s at 28 MeV/c in a momentum bite of 5–7 %. To maximise the experiment’s sensitivity, the beam is tuned to a \(\mu ^+\) stopping rate of 3\(\times 10^7\), limited by the rate capabilities of the tracking system and the rate of accidental backgrounds, given the MEG detector resolutions. The ratio of \(\mathrm {e^+}\) to \(\mu ^+\) flux in the beam is \(\approx \)8, and the positrons are efficiently removed by a combination of a Wien filter and collimator system. The muon momentum distribution at the target is optimised by a degrader system comprised of a 300 \(\upmu \)m thick mylar® foil and the He-air atmosphere inside the spectrometer in front of the target. The round, Gaussian beam-spot profile has \(\sigma _{x,y} \approx \)10 mm.

The muons at the production target are produced fully polarized (\(P_{\mu ^+}=-1\)) and they reach the stopping target with a residual polarization \(P_{\mu ^+} = -0.86\, \pm \, 0.02 ~ \mathrm{(stat)} ~ { }^{+ 0.05}_{-0.06} ~ \mathrm{(syst)}\) consistent with the expectations [9].

Other beam tunes are used for calibration purposes, including a \(\pi ^-\) tune at 70.5 MeV/c used to produce monochromatic photons via pion charge exchange and a 53 MeV/c positron beam tune to produce Mott-scattered positrons close to the energy of a signal positron (Sect. 2.7).

2.2 Muon stopping target

Positive muons are stopped in a thin target at the centre of the spectrometer, where they decay at rest. The target is optimised to satisfy conflicting goals of maximising stopping efficiency (\(\approx \) \(80~\%\)) while minimising multiple scattering, Bremsstrahlung and AIF of positrons from muon decays. The target is composed of a 205 \(\upmu \)m thick layer of polyethylene and polyester (density 0.895 g/cm\(^3\)) with an elliptical shape with semi-major and semi-minor axes of 10 cm and 4 cm. The target foil is equipped with seven cross marks and eight holes of radius 0.5 cm, used for optical survey and for software alignment purposes. The foil is mounted in a Rohacell® frame, which is attached to the tracking system support frame and positioned with the target normal vector in the horizontal plane and at an angle \(\theta \) \(\approx \) \(70^\circ \). The target before installation in the detector is shown in Fig. 2.

2.3 COBRA magnet

The COBRA (constant bending radius) magnet [10] is a thin-walled, superconducting magnet with an axially graded magnetic field, ranging from 1.27 T at the centre to 0.49 T at either end of the magnet cryostat. The graded field has the advantage with respect to a uniform solenoidal field that particles produced with small longitudinal momentum have a much shorter latency time in the spectrometer, allowing stable operation in a high-rate environment. Additionally, the graded magnetic field is designed so that positrons emitted from the target follow a trajectory with almost constant projected bending radius, only weakly dependent on the emission polar angle \(\theta _{\mathrm {e^+}}\) (see Fig. 3a), even for positrons emitted with substantial longitudinal momentum.

The central part of the coil and cryostat accounts for \(0.197\,{X}_0\), thereby maintaining high transmission of signal photons to the LXe detector outside the COBRA cryostat. The COBRA magnet is also equipped with a pair of compensation coils to reduce the stray field to the level necessary to operate the photomultiplier tubes (PMTs) in the LXe detector.

The COBRA magnetic field was measured with a commercial Hall probe mounted on a wagon moving along z, r and \(\phi \) in the ranges \(|z|<110\,\mathrm {cm}\), \(0^{\circ }<\phi <360^{\circ }\) and \(0< r < 29\,\mathrm {cm}\), covering most of the positron tracking volume. The probe contained three Hall sensors orthogonally aligned to measure \(B_z, B_r\) and \(B_\phi \) individually. Because the main (axial) field component is much larger than the others, even small angular misalignments of the other probes could cause large errors in \(B_r\) and \(B_\phi \). Therefore, only the measured values of \(B_z\) are used in the analysis and the secondary components \(B_r\) and \(B_\phi \) are reconstructed from the measured \(B_z\) using Maxwell’s equations as

The measured values of \(B_r\) and \(B_\phi \) are required only at \(z_\mathrm {B}=1\,\mathrm {mm}\) near the symmetry plane of the magnet where the measured value of \(B_r\) is minimised (\(|B_r(z_\mathrm {B}, r, \phi )|<2\times 10^{-3}\) T) as expected. The effect of the misalignment of the \(B_\phi \)-measuring sensor on \(B_\phi (z_\mathrm {B}, r, \phi )\) is estimated by checking the consistency of the reconstructed \(B_r\) and \(B_\phi \) with Maxwell’s equations.

The continuous magnetic field map used in the analysis is obtained by interpolating the reconstructed magnetic field at the measurement grid points by a B-spline fit [11].

2.4 Drift chamber system

The DCH system [12] is designed to ensure precise measurement of the trajectory and momentum of positrons from \(\mu ^+ \rightarrow \mathrm {e}^+ \gamma \) decays. It is designed to satisfy several requirements: operate at high rates, primarily from positrons from \(\mu ^+\) decays in the target; have low mass to improve kinematic resolution (dominated by scattering) and to minimise production of photons by positron AIF; and provide excellent resolution in the measurement of the radial and longitudinal coordinates.

Concept of the gradient magnetic field of COBRA. The positrons follow trajectories at constant bending radius weakly dependent on the emission angle \(\theta _{\mathrm {e^+}}\) (a) and those emitted from the target with small longitudinal momentum (\(\theta _{\mathrm {e^+}}\approx \) \(90^{\circ }\)) are quickly swept away from the central region (b)

The DCH system consists of 16 identical, independent modules placed inside COBRA, aligned in a semi-circle with 10.5\(^{\circ }\) spacing, and covering the azimuthal region between \(191.25^\circ \) and \(348.75^\circ \) and the radial region between 19.3 and 27.9 cm (see Fig. 4). Each module has a trapezoidal shape with base lengths of 40 and 104 cm, without supporting structure on the long (inner) side to reduce the amount of material intercepted by signal positrons. A module consists of two independent detector planes, each consisting of two cathode foils (12.5 \(\upmu \)m-thick aluminised polyamide) separated by 7 mm and filled with a 50:50 mixture of He:C\(_2\)H\(_6\). A plane of alternating axial anode and potential wires is situated midway between the cathode foils with a pitch of 4.5 mm. The two planes of cells are separated by 3 mm and the two wire arrays in the same module are staggered by half a drift cell to help resolve left-right position ambiguities (see Fig. 5). A double wedge pad structure is etched on both cathodes with a Vernier pattern of cycle \(\lambda = 5\) cm as shown in Fig. 6. The pad geometry is designed to allow a precise measurement of the axial coordinate of the hit by comparing the signals induced on the four pads in each cell. The average amount of material intercepted by a positron track in a DCH module is \(2.6\times 10^{-4}\,X_0\), with the total material along a typical signal positron track of \(2.0\times 10^{-3}\,X_0\).

2.5 Timing counter

The TC [13, 14] is designed to measure precisely the impact time and position of signal positrons and to infer the muon decay time by correcting for the track length from the target to the TC obtained from the DCH information.

The main requirements of the TC are:

-

provide full acceptance for signal positrons in the DCH acceptance matching the tight mechanical constraints dictated by the DCH system and COBRA;

-

ability to operate at high rate in a high and non-uniform magnetic field;

-

fast and approximate (\(\approx \) \(5\) cm resolution) determination of the positron impact point for the online trigger;

-

good (\(\approx \) \(1\) cm) positron impact point position resolution in the offline event analysis;

-

excellent (\(\approx \) \(50\) ps) time resolution of the positron impact point.

The system consists of an upstream and a downstream sector, as shown in Fig. 1.

Each sector (see Fig. 7) is barrel shaped with full angular coverage for signal positrons within the photon and positron acceptance of the LXe detector and DCH. It consists of an array of 15 scintillating bars with a \(10.5^\circ \) pitch between adjacent bars. Each bar has an approximate square cross-section of size \(4.0\times 4.0\times 79.6\,\mathrm {cm}^3\) and is read out by a fine-mesh, magnetic field tolerant, 2” PMT at each end. The inner radius of a sector is 29.5 cm, such that only positrons with a momentum close to that of signal positrons hit the TC.

2.6 Liquid xenon detector

The LXe photon detector [15, 16] requires excellent position, time and energy resolutions to minimise the number of accidental coincidences between photons and positrons from different muon decays, which comprise the dominant background process (see Sect. 4.4.1).

It is a homogeneous calorimeter able to contain fully the shower induced by a 52.83 MeV photon and measure the photon interaction vertex, interaction time and energy with high efficiency. The photon direction is not directly measured in the LXe detector, rather it is inferred by the direction of a line between the photon interaction vertex in the LXe detector and the intercept of the positron trajectory at the stopping target.

Liquid xenon, with its high density and short radiation length, is an efficient detection medium for photons; optimal resolution is achieved, at least at low energies, if both the ionisation and scintillation signals are detected. In the high rate MEG environment, only the scintillation light with its very fast signal, is detected.

A schematic view of the LXe detector is shown in Fig. 8. It has a C-shaped structure fitting the outer radius of COBRA. The fiducial volume is \(\approx \) \(800~\ell \), covering 11 % of the solid angle viewed from the centre of the stopping target. Scintillation light is detected in 846 PMTs submerged directly in the liquid xenon. They are placed on all six faces of the detector, with different PMT coverage on different faces. The detector’s depth is 38.5 cm, corresponding to \(\approx \) \(14\) X\(_0\).

2.7 Calibration

Multiple calibration and monitoring tools are integrated into the experiment [17] in order to continuously check the operation of single sub-detectors (e.g. LXe photodetector gain equalisation, TC bar cross-timing, LXe and spectrometer energy scale) and multiple-detector comparisons simultaneously (e.g. relative positron-photon timing).

Data for some of the monitoring and calibration tasks are recorded during normal data taking, making use of particles coming from muon decays, for example the end-points of the positron and photon spectra to check the energy scale, or the positron-photon timing in RMD to check the LXe–TC relative timing. Additional calibrations required the installation of new tools, devices or detectors. A list of these methods is presented in Table 1 and they are briefly discussed below.

Various processes can affect the LXe detector response: xenon purity, long-term PMT gain or quantum efficiency drifts from ageing, HV variations, etc. PMT gains are tracked using 44 blue LEDs immersed in the LXe at different positions. Dedicated runs for gain measurements in which LEDs are flashed at different intensities are taken every two days. In order to monitor the PMT long-term gain and efficiency variations, flashing LED events are constantly taken (1 Hz) during physics runs. Thin tungsten wires with point-like \(^{241}\mathrm {Am}\) \(\alpha \)-sources are also installed in precisely known positions in the detector fiducial volume. They are used for monitoring the xenon purity and measuring the PMT quantum efficiencies [18].

A dedicated Cockcroft–Walton accelerator [19] placed downstream of the muon beam line is installed to produce photons of known energy by impinging sub-MeV protons on a lithium tetraborate target. The accelerator was operated twice per week to generate single photons of relatively high energy (17.6 MeV from lithium) to monitor the LXe detector energy scale, and coincident photons (4.4 and 11.6 MeV from boron) to monitor the TC scintillator bar relative timing and the TC–LXe detectors’ relative timing (see Table 1 for the relevant reactions).

A dedicated calibration run is performed annually by stopping \(\pi ^-\) in a liquid hydrogen target placed at the centre of COBRA [20]. Coincident photons from \(\pi ^0\) decays produced in the charge exchange (CEX) reaction \(\pi ^- \mathrm{p} \rightarrow \pi ^0 \mathrm{n}\) are detected simultaneously in the LXe detector and a dedicated BGO crystal detector. By appropriate relative LXe and BGO geometrical selection and BGO energy selection, a nearly monochromatic sample of 55 MeV (and 83 MeV) photons incident on the LXe are used to measure the response of the LXe detector at these energies and set the absolute energy scale at the signal photon energy.

A low-energy calibration point is provided by 4.4 MeV photons from an \(^{241}\)Am/Be source that is moved periodically in front of the LXe detector during beam-off periods.

Finally, a neutron generator exploiting the \(\left( \mathrm{n},\gamma \right) \) reaction on nickel shown in Table 1 allows an energy calibration under various detector rate conditions, in particular normal MEG and CEX data taking.

Data with Mott-scattered positrons are also acquired annually to monitor and calibrate the spectrometer with all the benefits associated with the usage of a quasi-monochromatic energy line at \(\approx \) \(53\) MeV [21].

2.8 Front-end electronics

The digitisation and data acquisition system for MEG uses a custom, high frequency digitiser based on the switched capacitor array technique, the Domino Ring Sampler 4 (DRS4) [22]. For each of the \(\approx \) \(3000\) read-out channels with a signal above some threshold, it records a waveform of 1024 samples. The sampling rate is 1.6 GHz for the TC and LXe detectors, matched to the precise time measurements in these detectors, and 0.8 GHz for the DCH, matched to the drift velocity and intrinsic drift resolution.

Each waveform is processed offline by applying baseline subtraction, spectral analysis, noise filtering, digital constant fraction discrimination etc. so as to optimise the extraction of the variables relevant for the measurement. Saving the full waveform provides the advantage of being able to reprocess the full waveform information offline with improved algorithms.

2.9 Trigger

An experiment to search for ultra-rare events within a huge background due to a high muon stopping rate needs a quick and efficient event selection, which demands the combined use of high-resolution detection techniques with fast front-end, digitising electronics and trigger. The trigger system plays an essential role in processing the detector signals in order to find the signature of \(\mu ^+ \rightarrow \mathrm{e}^+ \gamma \) events in a high-background environment [23, 24]. The trigger must strike a compromise between a high efficiency for signal event selection, high live-time and a very high background rejection rate. The trigger rate should be kept below 10 Hz so as not to overload the data acquisition (DAQ) system.

The set of observables to be reconstructed at trigger level includes:

-

the photon energy;

-

the relative \(\mathrm {e}^+\gamma \) direction;

-

the relative \(\mathrm {e}^+\gamma \) timing.

The stringent limit due to the latency of the read-out electronics prevents the use of any information from the DCH, since the electron drift time toward the anode wires is too long. Therefore a reconstruction of the positron momentum cannot be obtained at the trigger level even if the requirement of a TC hit is equivalent to the requirement of positron momentum \(\gtrsim 45\) MeV. The photon energy is the most important observable to be reconstructed, due to the steep decrease in the spectrum at the end-point. For this reason the calibration factors for the PMT signals of the LXe detector (such as PMT gains and quantum efficiencies) are continuously monitored and periodically updated. The energy deposited in the LXe detector is estimated by the weighted linear sum of the PMT pulse amplitudes.

The amplitudes of the inner-face PMT pulses are also sent to comparator stages to extract the index of the PMT collecting the highest charge, which provides a robust estimator of the photon interaction vertex in the LXe detector. The line connecting this vertex and the target centre provides an estimate of the photon direction.

On the positron side, the coordinates of the TC interaction point are the only information available online. The radial coordinate is given simply by the radial location of the TC, while, due to its segmentation along \(\phi \), this coordinate is identified by the bar index of the first hit (first bar encountered moving along the positron trajectory). The local z-coordinate on the hit bar is measured by the ratio of charges on the PMTs on opposite sides of the bar with a resolution \(\approx \) \(5\) cm.

On the assumption of the momentum being that of a signal event and the direction opposite to that of the photon, by means of Monte Carlo (MC) simulations, each PMT index is associated with a region of the TC. If the online TC coordinates fall into this region, the relative \(\mathrm {e}^+\gamma \) direction is compatible with the back-to-back condition.

The interaction time of the photon in the LXe detector is extracted by a fit of the leading edge of PMT pulses with a \(\approx \) \(2\) ns resolution. The same procedure allows the estimation of the time of the positron hit on the TC with a comparable resolution. The relative time is obtained from their difference; fluctuations due to the time-of-flight of each particle are within the resolutions.

2.10 DAQ system

The DAQ challenge is to perform the complete read-out of all detector waveforms while maintaining the system efficiency, defined as the product of the online efficiency (\(\epsilon _\mathrm{trg}\)) and the DAQ live-time fraction (\(f_\mathrm {LT}\)), as high as possible.

At the beginning of data taking, with the help of a MC simulation, a trigger configuration which maximised the DAQ efficiency was found to have \(\epsilon _\mathrm{trg}\approx 90\,\%\) and \(f_\mathrm {LT}\approx 85\,\%\) and an associated event rate \(R_\mathrm{daq}\approx 7~\mathrm {Hz}\), almost seven orders of magnitude lower than the muon stopping rate.

The system bottleneck was found in the waveform read-out time from the VME boards to the online disks, lasting as much as \(t_\mathrm {ro}\approx \)24 \(\mathrm {ms/event}\); the irreducible contribution to the dead-time is the DRS4 read-out time and accounts for \(625~\mathrm {\upmu s}\). This limitation has been overcome, starting from the 2011 run, thanks to a multiple buffer read-out scheme, in our case consisting of three buffers. In this scheme, in case of a new trigger during the event read-out from a buffer, new waveforms are written in the following one; the system experiences dead-time only when there are no empty buffers left. This happens when three events occur within a time interval equal to the read-out time \(t_\mathrm {ro}\). The associated live-time is

and is \(\ge \) \(99\,\%\) for event rates up to \(\approx \) \(13\) Hz.

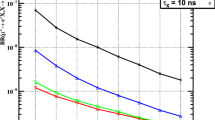

The multiple buffer scheme allows relaxation of the trigger conditions, in particular for what concerns the relative \(\mathrm {e}^+\gamma \) direction, leading to a much more efficient DAQ system, from 75 % in the 2009–2010 runs to 97 % in the 2011–2013 runs. Figure 9 shows the two described working points, the first part refers to the 2009–2010 runs, while the second refers to the 2011–2013 runs.

3 Reconstruction

In this section the reconstruction of high-level objects is presented. More information about low-level objects (e.g. waveform analysis, hit reconstruction) and calibration issues are available in [8].

3.1 Photon reconstruction

A 52.83 MeV photon interacts with LXe predominantly via the pair production process, followed by an electromagnetic shower. The major uncertainty in the reconstruction stems from the event-by-event fluctuations in the shower development. A series of algorithms provide the best estimates of the energy, the interaction vertex, and the interaction time of the incident photon and to identify and eliminate events with multiple photons in the same event.

For reconstruction inside the LXe detector, a special coordinate system (u, v, w) is used: u coincides with z in the MEG coordinate system; v is directed along the negative \(\phi \)-direction at the radius of the fiducial volume inner face (\(r_\mathrm {in} = 67.85\) cm); \(w=r-r_\mathrm {in}\), measures the depth. The fiducial volume of the LXe detector is defined as \(|u|<25\) cm, \(|v|<71\) cm, and 0 \(<w<38.5\) cm (\(|\cos \theta |<0.342\) and \(120^{\circ }<\phi <240^\circ \)) in order to ensure high resolutions, especially for energy and position measurements.

The reconstruction starts with a waveform analysis that extracts charge and time for each of the PMT waveforms. The digital-constant-fraction method is used to determine an (almost) amplitude independent pulse time, defined as the time when the signal reaches a predefined fraction (20 %) of the maximum pulse height. To minimise the effect of noise on the determination of the charge, a digital high-pass filterFootnote 1 with a cutoff frequency of \(\approx \) \(10\) MHz, is applied.

The charge on each PMT (\(Q_i\)) is converted into the number of photoelectrons (\(N_{\mathrm {pe},i}\)) and into the number of scintillation photons impinging on the PMT (\(N_{\mathrm {pho},i}\)) as follows:

where \(G_i(t)\) is the PMT gain and \(\mathcal {E}_i(t)\) is the product of the quantum efficiency of the photocathode and the collection efficiency to the first dynode. These quantities vary with timeFootnote 2 and, thus, are continuously monitored and calibrated using the calibration sources instrumented in the LXe detector (see Sect. 2.7).

The PMT gain is measured using blue LEDs, flashed at different intensities by exploiting the statistical relation between the mean and variance of the observed charge,

The time variation of the gain is tracked by using the LED events collected at \(\approx \) \(1\) Hz during physics data taking.

The quantity \(\mathcal {E}_i(t)\) is evaluated using \(\alpha \)-particles produced by \(^{241}\mathrm {Am}\) sources within the LXe volume and monochromatic (17.6-MeV) photons from a p-Li interaction (see Table 1) by comparing the observed number of photoelectrons with the expected number of scintillation photons evaluated with a MC simulation,

This calibration is performed two or three times per week to monitor the time dependence of this factor. The absolute energy scale is not sensitive to the absolute magnitude of this efficiency, and this calibration serves primarily to equalise the relative PMT responses and to remove time-dependent drifts, possibly different from PMT to PMT.

3.1.1 Photon position

The 3D position of the photon interaction vertex \({\varvec{r}}_{\gamma }=(u_{\gamma },v_{\gamma },w_{\gamma })\) is determined by a \(\chi ^2\)-fit of the distribution of the numbers of scintillation photons in the PMTs (\(N_\mathrm {pho}\)), taking into account the solid angle subtended by each PMT photocathode assuming an interaction vertex, to the observed \(N_\mathrm {pho}\) distribution. To minimise the effect of shower fluctuations, only PMTs inside a radius of 3.5 times the PMT spacing for the initial estimate of the position of the interaction vertex are used in the fit. The initial estimate of the position is calculated as the amplitude weighted mean position around the PMT with the maximum signal. For events resulting in \(w_{\gamma }< 12\) cm, the fit is repeated with a further reduced number of PMTs, inside a radius of twice the PMT spacing from the first fit result. The remaining bias on the result, due to the inclined incidence of the photon onto the inner face, is corrected using results from a MC simulation. The performance of the position reconstruction is evaluated by a MC simulation and has been verified in dedicated CEX runs by placing lead collimators in front of the LXe detector. The average position resolutions along the two orthogonal inner-face coordinates (u, v) and the depth direction (w) are estimated to be \(\approx \) \(5\) and \(\approx \) \(6\) mm, respectively.

The position is reconstructed in the LXe detector local coordinate system. The conversion to the MEG coordinate system relies on the alignment of the LXe detector with the rest of the MEG subsystems. The LXe detector position relative to the MEG coordinate system is precisely surveyed using a laser survey device at room temperature. After the thermal shrinkage of the cryostat and of the PMT support structures at LXe temperature are taken into account, the PMT positions are calculated based on the above information. The final alignment of the LXe detector with respect to the spectrometer is described in Sect. 3.3.1.

3.1.2 Photon timing

The determination of the photon emission time from the target \(t_{\mathrm {\gamma }}\) starts from the determination of the arrival time of the scintillation photons on the i-th PMT \(t_{\gamma ,i}^\mathrm {PMT}\) as described in Sect. 3.1. To relate this time to the photon conversion time, the propagation time of the scintillation photons must be subtracted as well as any hardware-induced time offset (e.g. due to cable length).

The propagation time of the scintillation photons is evaluated using the \(\pi ^0 \rightarrow \gamma \gamma \) events produced in CEX runs in which the time of one of the photons is measured by two plastic scintillator counters with a lead shower converter as a reference time. The primary contribution is expressed as a linear relation with the distance; the coefficient, i.e., the effective light velocity, is measured to be \(\approx \) \(8\) cm/ns. A remaining non-linear dependence is observed and an empirical function (2D function of the distance and incident angle) is calibrated from the data. This secondary effect comes from the fact that the fraction of indirect (scattered of reflected) scintillation photons increases with a larger incident angle and a larger distance. PMTs that do not directly view the interaction vertex \({\varvec{r}}_{\gamma }\), shaded by the inner face wall, are not used in the following timing reconstruction. After correcting for the scintillation photon propagation times, the remaining (constant) time offset is extracted for each PMT from the same \(\pi ^0 \rightarrow \gamma \gamma \) events by comparing the PMT hit time with the reference time.

After correcting for these effects, the photon conversion time \(t_{\mathrm {\gamma }}^\mathrm {LXe}\) is obtained by combining the timings of those PMTs \(t_{\gamma ,i}^\mathrm {PMT}\) which observe more than 50 \(N_\mathrm {pe}\) by a fit that minimises

PMTs with a large contribution to the \(\chi ^2\) are rejected during this fitting procedure to remove pile-up effects. The single-PMT time resolution is measured in the CEX runs to be \(\sigma _{t_{\mathrm {\gamma }}}^{\text {1-PMT}}(N_\mathrm {pe}=500) = 400\)–540 ps, depending on the location of the PMT, and approximately proportional to \(1/\sqrt{N_\mathrm {pe}}\). Typically 150 PMTs with \(\approx \) \(70\,000\) \(N_\mathrm {pe}\) in total are used to reconstruct 50-MeV photon times.

Finally, the photon emission time from the target \(t_\gamma \) is obtained by subtracting the time-of-flight between the point on the stopping target defined by the intercept of the positron trajectory at the stopping target and the reconstructed interaction vertex in the LXe detector from \(t_\gamma ^\mathrm {LXe}\).

The timing resolution \(\sigma _{t_{\mathrm {\gamma }}}\) is evaluated as the dispersion of the time difference between the two photons from \(\pi ^0\) decay after subtracting contributions due to the uncertainty of the \(\pi ^0\) decay position and to the timing resolution of the reference counters. From measurements at 55 and 83 MeV, the energy dependence is estimated and corrected, resulting in \(\sigma _{t_{\mathrm {\gamma }}} (E_{\mathrm {\gamma }}=52.83~\mathrm {MeV}) \approx 64\) ps.

3.1.3 Photon energy

The reconstruction of the photon energy \(E_{\mathrm {\gamma }}\) is based on the sum of scintillation photons collected by all PMTs. A summed waveform with the following coefficients over all the PMTs is formed and the energy is determined by integrating it:

where \(A_i\) is a correction factor for the fraction of photocathode coverage, which is dependent on the PMT location;Footnote 3 \(W_i({\varvec{r}}_{\gamma })\) is a weighting factor for the PMT that is common to all PMTs on a given face and is determined by minimising the resolution in response to 55-MeV photons from CEX. \(\Omega ({\varvec{r}}_{\gamma })\) is a correction factor for the solid angle subtended by photocathodes for scintillating photons emitted at the interaction vertex; it is applied only for shallow events (\(w_{\gamma }<3\) cm) for which the light collection efficiency is very sensitive to the relative position of each PMT and the interaction vertex. \(U({\varvec{r}}_{\gamma })\) is a position dependent non-uniformity correction factor determined by the responses to the 17.6- and 55-MeV photons. H(t) is a correction factor for the time-varying LXe scintillation light yield and S is a constant conversion factor of the energy scale, determined by the 55- and 83-MeV photons with a precision of 0.3 %.

A potential significant background is due to pile-up events with more than one photon in the detector nearly coincident in time. Approximately 15 % of triggered events suffer from pile-up at the nominal beam rate. The analysis identifies pile-up events and corrects the measured energy, thereby reducing background and increasing detection efficiency. Three methods are used to identify and extract the primary photon energy in pile-up events.

The first method identifies multiple photons with different timing using the \(\chi ^2/\mathrm {NDF}\) value in the time fit. In contrast to the time reconstruction, all the PMTs with more than 50 \(N_\mathrm {pe}\) are used to identify pile-up events.

The second method identifies pile-up events with photons at different positions by searching for spatially separated peaks in the inner and outer faces. If the event has two or more peaks whose energies cannot be determined using the third method below, a pile-up removal algorithm is applied to the PMT charge distribution. It uses a position dependent table containing the average charge of each PMT in response to 17.6-MeV photons. Once a pile-up event is identified, the energy of the primary photon is estimated by fitting the PMT charges to the table without using PMTs around the secondary photon. Then, the PMT charges around the secondary photon are replaced with the charges estimated by the fit. Finally, the energy is reconstructed as a sum of the individual PMT charges with the coefficients \(F_i\) (Eq. 1), instead of integrating the summed waveform.

The third method identifies multiple photons and unfolds them by combining the information from summed waveforms and the two methods above. First, the total summed waveform is searched for temporally separated pulses. Next, if the event is identified as a pile-up event by either of the two methods above, a summed waveform over PMTs near the secondary photon is formed to search for multiple pulses. The pulse found in the partial summed waveform is added to the list of pulses if the time is more than 5 ns apart from the other pulse times. Then, a superimposition of N template waveforms is fitted to the total summed waveform, where N is the number of pulses detected in this event. Figure 10 shows an example of the fitting, where three pulses are detected. Finally, the contributions of pile-up photons are subtracted and the remaining waveform is used for the primary energy estimation.

a Example of a LXe detector waveform for an event with three photons (2.5, 40.1 and 36.1 MeV). The cross markers show the waveform (with the digital high-pass filter) summed over all PMTs with the coefficients defined in the text, and the red line shows the fitted superposition of three template waveforms. b The unfolded main pulse (solid line) and the pile-up pulses (dashed)

The energy response of the LXe detector is studied in the CEX runs using \(\pi ^0\) decays with an opening angle between the two photons \(170^\circ \), for which each of the photons has an intrinsic line width small compared to the detector resolution. The measured line shape is shown in Fig. 11 at two different conversion depth (\(w_{\gamma }\)) regions. The line shape is asymmetric with a low energy tail mainly for two reasons: the interaction of the photon in the material in front of the LXe detector fiducial volume, and the albedo shower leakage from the inner face. The energy resolution is evaluated from the width of the line shape on the right-hand (high-energy) side (\(\sigma _{E_{\mathrm {\gamma }}}\)) by unfolding the finite width of the incident photon energy distribution due to the imperfect back-to-back selection and a small correction for the different background conditions between the muon and pion beams. Since the response of the detector depends on the position of the photon conversion, the fitted parameters of the line shape are functions of the 3D coordinates, mainly of \(w_{\gamma }\). The average resolution is measured to be \(\sigma _{E_{\mathrm {\gamma }}} = 2.3~\%\) (\(0< w_{\gamma }< 2~\mathrm {cm}\), event fraction 42 %) and 1.6 % (\(w_{\gamma }> 2~\mathrm {cm}\), 58 %).

The energy resolutions and energy scale are cross-checked by fitting the background spectra measured in the muon decay data with the MC spectra folded with the detector resolutions.

3.2 Positron reconstruction

3.2.1 DCH reconstruction

The reconstruction of positron trajectories in the DCH is performed in four steps: hit reconstruction in each single cell, clustering of hits within the same chamber, track finding in the spectrometer, and track fitting.

In step one, raw waveforms from anodes and cathodes are filtered in order to remove known noise contributions of fixed frequencies. A hit is defined as a negative signal appearing in the waveform collected at each end of the anode wire, with an amplitude of at least \(-5\) mV below the baseline. This level and its uncertainty \(\sigma _\mathrm {B}\) are estimated from the waveform itself in the region around 625 ns before the trigger time. The hit time is taken from the anode signal with larger amplitude as the time of the first sample more than \(-3\sigma _\mathrm {B}\) below the baseline.

The samples with amplitude below \(-2\sigma _\mathrm {B}\) from the baseline and in a range of \([-24,+56]\) ns around the peak, are used for charge integration. The range is optimised to minimise the uncertainty produced by the electronic noise. A first estimate of the z-coordinate, with a resolution of about 1 cm, is obtained from charge division on the anode wire, and it allows the determination of the Vernier cycle (see Sect. 2.4) in which the hit occurred. If one or more of the four cathode pad channels is known to be defective, the z-coordinate from charge division is used and is assigned a 1 cm uncertainty. Otherwise, charge integration is performed on the cathode pad waveforms and the resulting charges are combined to refine the z-measurement, exploiting the Vernier pattern. The charge asymmetries between the upper and lower sections of the inner and outer cathodes are given by

the position within the \(\lambda = 5\) cm Vernier cycle is given by:

At this stage, a first estimate of the position of the hit in the (x, y) plane is given by the wire position.

Once reconstructed, hits from nearby cells with similar z are grouped into clusters, taking into account that the z-measurement can be shifted by \(\lambda \) if the wrong Vernier cycle has been selected via charge division. These clusters are then used to build track seeds.

A seed is defined as a group of three clusters in four adjacent chambers, at large radius (\(r>24\) cm) where the chamber occupancy is lower and only particles with large momentum are found. The clusters are required to satisfy appropriate proximity criteria on their r and z values. A first estimate of the track curvature and total momentum is obtained from the coordinates of the hit wires, and is used to extend the track and search for other clusters, taking advantage of the adiabatic invariant \(p_T^2/B_z\), where \(p_T\) is the positron transverse momentum, for slowly varying axial magnetic fields. Having determined the approximate trajectory, the left/right ambiguity of the hits on each wire can be resolved in most cases. A first estimate of the track time (and hence the precise position of the hit within a cell) and further improvement of the left/right solutions can be obtained by minimising the \(\chi ^2\) of a circle fit of the hit positions in the (x, y) plane.

At this stage, in order to retain high efficiency, the same hit can belong to different clusters and the same cluster to different track candidates, which can result in duplicated tracks. Only after the track fit, when the best information on the track is available, independent tracks are defined.

A precise estimate of the (x, y) positions of the hits associated with the track candidate is then extracted from the drift time, defined as the difference between the hit and track times. The position is taken from tables relating (x, y) position to drift time. These are track-angle dependent and are derived using GARFIELD software [25]. The reconstructed (x, y) position is continuously updated during the tracking process, as the track information improves.

A track fit is finally performed with the Kalman filter technique [26, 27]. The GEANE software [28] is used to account for the effect of materials in the spectrometer during the propagation of the track and to estimate the error matrix. Exploiting the results of the first track fit, hits not initially included in the track candidate are added if appropriate and hits which are inconsistent with the fitted track are removed. The track is then propagated to the TC and matched to the hits in the bars (see Sect. 3.2.7 for details). The time of the matched TC hit (corrected for propagation delay) is used to provide a more accurate estimate of the track time, and hence the drift times. A final fit is then done with this refined information. Following the fit, the track is propagated backwards to the target. The decay vertex (\(x_\mathrm {e^+}\), \(y_\mathrm {e^+}\), \(z_\mathrm {e^+}\)) and the positron decay direction (\(\phi _\mathrm {e^+}\), \(\theta _\mathrm {e^+}\)) are defined as the point of intersection of the track with the target foil and the track direction at the decay vertex. The error matrix of the track parameters at the decay vertex is computed and used in the subsequent analysis.

Among tracks sharing at least one hit, a ranking is performed based on a linear combination of five variables denoting the quality of the track (the momentum, \(\theta _\mathrm {e^+}\) and \(\phi _\mathrm {e^+}\) errors at the target, the number of hits and the reduced \(\chi ^2\)). In order to optimise the performance of the ranking procedure, the linear combination is taken as the first component of a principal component analysis of the five variables. The ranking variables are also used to select tracks, along with other quality criteria as (for instance) the request that the backward track extrapolation intercepts the target within its fiducial volume. Since the subsequent analysis uses the errors associated with the track parameters event by event, the selection criteria are kept loose in order to preserve high efficiency while removing badly reconstructed tracks for which the fit and the associated errors might be unreliable. After the selection criteria are applied, the track quality ranking is used to select only one track among the surviving duplicate candidates.

3.2.2 DCH missing turn recovery

A positron can traverse the DCH system multiple times before it exits the spectrometer. An individual crossing of the DCH system is referred to as a positron ‘turn’. An intermediate merging step in the Kalman fit procedure, described previously, attempts to identify multi-turn positrons by combining and refitting individually reconstructed turns into a multi-turn track. However, it is possible that not all turns of a multi-turn positron are correctly reconstructed or merged into a multi-turn track. If this involves the first turn, i.e. the turn closest to the muon stopping target, this will lead to an incorrect determination of the muon decay point and time as well as an incorrect determination of the positron momentum and direction at the muon decay point, and therefore a loss of signal efficiency.

Example of a triple-turn positron in a year 2009 event. The positron was originally reconstructed as a double-turn track, formed by magenta hits, but the MFT recovery algorithm found a missing first track formed by the brown hits. The track was then refitted as a triple-turn one; the corresponding positron vector extrapolated at the target is shown as a blue arrow and compared with that coming from the original double-turn fitted track, shown as a magenta arrow

After the track reconstruction is completed, a missing first turn (MFT) recovery algorithm, developed and incorporated in the DCH reconstruction software expressly for this analysis, is used to identify and refit positron tracks with an MFT. Firstly, for each track in an event, the algorithm identifies all hits that may potentially be part of an MFT, based on the compatibility of their z-coordinates and wire locations in the DCH system with regard to the positron track. The vertex state vector of the track is propagated backwards to the point of closest approach with each potential MFT hit, and the hit selection is refined based on the r and z residuals between the potential MFT hits and their propagated state vector positions. Potential MFT candidates are subsequently selected if there are MFT hits in at least four DCH modules of which three are adjacent to one another, and the average signed z-difference between the hits and their propagated state vector positions as well as the standard deviation of the corresponding unsigned z-difference are smaller than 2.5 cm. A new MFT track is reconstructed using the Kalman filter technique based on the selected MFT hits and correspondingly propagated state vectors. Finally, the original positron and MFT tracks are combined and refitted using the Kalman filter technique, followed by a recalculation of the track quality ranking and the positron variables and their uncertainties at the target. An example of a multi-turn positron with a recovered MFT is shown in Fig. 12.

The improvement of the overall track reconstruction efficiency due to the use of the MFT recovery algorithm, defined as the ratio of the number of reconstructed Michel positrons with a recovered MFT to the total number of reconstructed Michel positrons, is measured using data and is shown as a function of \(E_\mathrm {e^+}\) and \(\theta _\mathrm {e^+}\) in Fig. 13. As can be seen from the left figure, the improvement of the track reconstruction efficiency at the signal energy due to the use of the MFT recovery algorithm, averaged over all angles, is \(\approx \) \(4~\%\). The efficiency improvement decreases with increasing energy because the nominal track reconstruction is more efficient at higher energy. The right figure shows that the efficiency improvement is maximal for positrons emitted perpendicular to the beam direction, as expected, since these positrons are more likely to have multiple turns and cross the target twice.

3.2.3 DCH alignment

Accurate positron track reconstruction requires precise knowledge of the location and orientation of the anode wires and cathode pads in the DCH system. This is achieved by an alignment procedure that consists of two parts: an optical survey alignment based on reference markers, and a software alignment based on reconstructed tracks.

Each DCH module is equipped with cross hair marks on the upstream and downstream sides of the module. Each module is fastened to carbon-fibre support structures on the upstream and downstream sides of the DCH system, which accommodate individual alignment pins with an optically detectable centre. Before the start of each data-taking period an optical survey of the cross hairs and pins is performed using a theodolite. The optical survey technique was improved in 2011 by adding corner cube reflectors next to the cross hairs, which were used in conjunction with a laser tracker system. The resolution of the laser method is \(\approx \) \(0.2\) mm for each coordinate.

Two independent software alignment methods are used to cross-check and further improve the alignment precision of the DCH system. The first method is based on the Millepede algorithm [29] and uses cosmic-rays reconstructed without magnetic field. During COBRA shutdown periods, cosmic-rays are triggered using dedicated scintillation counters located around the magnet cryostat. The alignment procedure utilises the reconstructed hit positions on the DCH modules to minimise the residuals with respect to straight tracks according to the Millepede algorithm. The global alignment parameters, three positional and three rotational degrees of freedom per module, are determined with an accuracy of better than 150 \(\upmu \)m for each coordinate.

The second method is based on an iterative algorithm using reconstructed Michel positrons and aims to improve the relative radial and longitudinal alignment of the DCH modules. The radial and longitudinal differences between the track position and the corresponding hit position at each module are recorded for a large number of tracks. The average hit-track residuals of each module are used to correct the radial and longitudinal position of the modules, while keeping the average correction over all modules equal to zero. This process is repeated several times while refitting the tracks after each iteration, until the alignment corrections converge and an accuracy of better than 50 \(\upmu \)m for each coordinate is reached. The method is cross-checked by using reconstructed Mott-scattered positrons (see Sect. 2.7), resulting in very similar alignment corrections.

The exact resolution reached by each approach depends on the resolution of the optical survey used as a starting position. For a low-resolution survey, the Millepede method obtains a better resolution, while the iterative method obtains a better resolution for a high-resolution survey. Based on these points, the Millepede method is adopted for the years 2009–2011 and the iterative method is used for the years 2012–2013 for which the novel optical survey data are available; in 2011, the first year with the novel optical survey data, the resulting resolution of both approaches is comparable.

3.2.4 Target alignment

Precise knowledge of the position of the target foil relative to the DCH system is crucial for an accurate determination of the muon decay vertex and positron direction at the vertex, which are calculated by propagating the reconstructed track back to the target, particularly when the trajectory of the track is far from the direction normal to the plane of the target.

Both optical alignment techniques and software tools using physics data are used to measure and cross-check the target position. The positions of the cross marks on the target foil (see Fig. 2) are surveyed each year using a theodolite, with an estimated accuracy of ±(0.5, 0.5, 1.5) mm in the (x, y, z) directions. For each year, a plane fit of the cross mark measurements is used in the propagation of tracks back to the target as a first approximation of the target foil position. However, the residuals between the cross mark measurements and the plane fits indicate that the target foil has developed a gradual aplanarity over time. This is confirmed by measurements of the target aplanarity performed with a high-precision FARO 3D laser scanner [30] at the end of 2013, as shown in the top panel of Fig. 14. Therefore, the propagation of tracks back to the target is improved by using a paraboloidal approximation \(z_\mathrm {t} - z_0\) \(=\) \(c_x(x_\mathrm {t}-x_0)^2\) \(+\) \(c_y(y_\mathrm {t}-y_0)^2\) of the target foil obtained by fitting separately the cross mark measurements for each year. In this function, \((x_\mathrm {t}, y_\mathrm {t}, z_\mathrm {t})\) is the local target coordinate system (i.e. not the nominal MEG coordinate system) in which \(x_\mathrm {t}\) (\(y_\mathrm {t}\)) is the coordinate along the semi-major (semi-minor) axis of the target, and \(z_\mathrm {t}\) is the coordinate perpendicular to the target plane. The fit parameters are \((x_0, y_0, z_0)\) for the position of the paraboloid extremum, and \(c_x\) and \(c_y\) for the paraboloid curvatures in the \(x_\mathrm {t}\) and \(y_\mathrm {t}\) directions. The paraboloidal fit of the 2013 cross mark measurements, shown in the bottom panel of Fig. 14, exhibits the largest aplanarity among all years. In this fit \(c_y=-0.03\) \(\mathrm{cm}^{-1}\), which corresponds to a focal length of \(\approx \) \(8\) cm for the semi-minor axis of the target in 2013.

Top FARO scan measurements of the target aplanarity in the local target reference frame, in which \(x_\mathrm {t}\) (\(y_\mathrm {t}\)) is the coordinate along the semi-major (semi-minor) axis of the target, and \(z_\mathrm {t}\) as indicated by the colour axis is the coordinate perpendicular to the target plane. Bottom the paraboloidal fit of the 2013 cross mark measurements. The paraboloidal approximation is valid since the vertices are concentrated at the centre of the target, as shown in Fig. 15

The alignment of the target foil in the \(z_\mathrm {t}\) direction and the corresponding systematic uncertainty have a significant effect on the analysis. In the paraboloidal approximation of the target foil, the value and uncertainty of \(z_0\) are the most relevant. The fitted \(z_0\)-values that are used in the track propagation are validated and corrected by imaging the holes in the target foil (see Fig. 2) using reconstructed Michel positrons. The target holes appear as dips in projections of the vertex distribution, as shown in Fig. 15. For each year, the \(z_0\)-value of the paraboloidal fit is checked by determining the reconstructed \(y_\mathrm {t}\) position of the four central target holes as a function of the positron angle \(\phi _\mathrm {e^+}\). Ideally the target hole positions should be independent of the track direction, while a \(z_0\)-displacement with respect to the fitted value would induce a dependence of \(y_\mathrm {t}\) on \(\tan \phi _\mathrm {e^+}\), to first order linear. Figure 16 shows the reconstructed \(y_\mathrm {t}\) position of the left-central target hole in 2011 as a function of \(\phi _\mathrm {e^+}\), fitted with a tangent function; the fit indicates a \(z_0\)-displacement of 1 mm towards the LXe detector. By imaging all four central holes for each year, the systematic uncertainty of \(z_0\) is estimated as \(\sigma ^\mathrm {sys}_{z_0} \approx 0.3\) mm for 2009–2012 data and \(\sigma ^\mathrm {sys}_{z_0} \approx 0.5\) mm for 2013 data.

The effect of the non-paraboloidal deformation on the analysis and its systematic uncertainty are estimated by using a 2D map of the \(z_\mathrm {t}\)-difference between the 2013 paraboloidal fit and the FARO measurements, as a function of \(x_\mathrm {t}\) and \(y_\mathrm {t}\) (i.e. the difference between the top and bottom panels of Fig. 14). As discussed in detail in Sect. 4.5, this map is scaled by a factor \(k_\mathrm {t}\) for each year, to represent the increase of the non-paraboloidal deformation of the target over time.

3.2.5 DCH performance

We developed a series of methods to extract, from data, an estimate of the resolution functions, defined for a generic observable q as the distribution of the errors, \(q - q_\mathrm {true}\).

A complete overview of the performance of the spectrometer can be found in [8], where the methods used to evaluate it are also described in detail. Two methods are used to extract the resolution functions for the positron parameters. The energy resolution function, including the absolute energy scale, is extracted with good accuracy from a fit to the energy spectrum of positrons from Michel decay. A core resolution of \(\sigma ^\mathrm {core}_{E_\mathrm {e^+}} \approx 330\) keV is found, with a \(\approx \) \(18\) % tail component with \(\sigma ^\mathrm {tail}_{E_\mathrm {e^+}} \approx 1.1\) MeV, with exact values depending on the data subset. The resolution functions for the positron angles and production vertex are extracted exploiting tracks that make two turns inside the spectrometer. The two turns are treated as independent tracks, and extrapolated to a prolongation of the target plane at a position between the two turns. The resulting differences in the position and direction of the two turns are then used to extract the position and angle resolutions. The same method is used to study the correlations among the variables and to cross-check the energy resolution. However, since the two-turn tracks are a biased sample with respect to the whole dataset, substantial MC-based corrections are necessary. These corrections are introduced as multiplicative factors to the width of the resolution functions, ranging from 0.75 to 1.20. Moreover, no information can be extracted about a possible reconstruction bias, which needs to be estimated from the detector alignment procedures described later in this paper. After applying the corrections, the following average resolutions are found: \(\sigma _{\theta _\mathrm {e^+}}=9.4\) mrad; \(\sigma _{\phi _\mathrm {e^+}}=8.4\) mrad; \(\sigma _{y_\mathrm {e^+}}= 1.1\) mm and \(\sigma _{z_\mathrm {e^+}}=2.5\) mm at the target.

In order to maximise the sensitivity of the analysis for the search for \(\mu ^+ \rightarrow \mathrm{e}^+ \gamma \) (discussed in detail in Sect. 4.5), instead of using these average resolutions we use the per-event estimate of the uncertainties, as provided by the track fit. It is done by replacing the resolution function of a generic observable q with the PDF of the corresponding pull:

where \(\sigma ^{\prime }_q\) is the uncertainty provided by the track fit. Following a well established procedure (see for instance [31]) this PDF is a gaussian function whose width \(\sigma _{q}\) accounts for the bias in the determination of \(\sigma ^{\prime }_q\). The correlations between variables in the signal PDF are treated as correlations between pulls.

3.2.6 TC reconstruction

Each of the timing counter (TC) bars acts as an independent detector. It exploits the fast scintillating photons released by the passage of a positron to infer the time and longitudinal position of the hit. A fraction of the scintillating photons reaches the bar ends where they are read-out by PMTs.

The signal from each TC PMT is processed with a Double Threshold Discriminator (DTD) to extract the arrival time of the scintillating photons minimising the time walk effect. A TC hit is formed when both PMTs on a single bar have signals above the higher DTD threshold. The times \(t^\mathrm {TC,in}_\mathrm {e^+}\) and \(t^\mathrm {TC,out}_\mathrm {e^+}\), measured by the two PMTs belonging to the same bar, are extracted by a template fit to a NIM waveform (square wave at level \(-0.8\) V) fired at the lower DTD threshold and digitised by a DRS.

The hit position along the bar is derived by the following technique. A positron impinging on a TC bar at time \(t^{TC}_\mathrm {e^+}\) has a relationship with the measured PMT times given by:

where \(b_{\mathrm {in,out}}\) are the offsets and \(W_\mathrm {in,out}\) are the contributions from the time walk effect from the inner and outer PMT, respectively, \(v_\mathrm {eff}\) is the effective velocity of light in the bar and L is the bar length; the z-axis points along the main axis of the bar and its origin is taken in the middle of the bar. Adding the two parts of Eq. 2 the result is:

Subtracting the two parts of Eq. 2 the longitudinal coordinate of the impact point along the bar is given by:

The time (longitudinal positions) resolution of TC is determined using tracks hitting multiple bars from the distribution of the time (longitudinal position) difference between hits on neighbouring bars corrected for the path length. The radial and azimuthal coordinates are taken as the corresponding coordinates of the centre of each bar.

The longitudinal position resolution is \(\sigma _{z^{\mathrm {TC}}_{\mathrm {e^+}}} \approx 1.0\) cm and the time resolution is \(\sigma _{t^{\mathrm {TC}}_{\mathrm {e^+}}} \approx 65\) ps.

The TC, therefore, provides the information required to reconstruct all positron variables necessary to match a DCH track (see Sect. 3.2.7) and recover the muon decay time by extrapolating the \(t^\mathrm {TC}_\mathrm {e^+}\) along the track trajectory back to the target to obtain the positron emission time \(t_\mathrm {e^+}\).

3.2.7 DCH-TC matching

The matching of DCH tracks with hits in the TC is performed as an intermediate step in the track fit procedure, in order to exploit the information from the TC in the track reconstruction.

After being reconstructed within the DCH system, a track is propagated to the first bar volume it encounters (reference bar). If no bar volume is crossed, the procedure is repeated with an extended volume to account for extrapolation uncertainties. Then, for each TC hit within \(\pm 5\) bars from the reference one, the track is propagated to the corresponding bar volume and the hit is matched with the track according to the following ranking:

-

1.

the TC hit belongs to the reference bar, with the longitudinal distance between the track and the hit \(\left| \Delta z^{\mathrm {TC}} \right| < 12\) cm (the track position defined as the entrance point of the track in the bar volume);

-

2.

the TC hit belongs to another bar whose extended volume is also crossed by the track, and \(\left| \Delta z^{\mathrm {TC}} \right| < 12\) cm (the track position defined as the entrance point of the track in the extended bar volume);

-

3.

the TC hit belongs to a bar whose extended volume is not crossed by the track, but where the distance of closest approach of the track to the bar axis is less than 5 cm, and \(\left| \Delta z^{\mathrm {TC}} \right| < 12\) cm (the track position defined as the point of closest approach of the track to the bar axis).

Among all successful matching candidates, those with the lowest ranking are chosen. Among them, the one with the smallest \(\Delta z^{\mathrm {TC}}\) is used.

The time of the matched TC hit is assigned to the track, which is then back-propagated to the chambers in order to correct the drift time of the hits for the track length timing contribution. The Kalman filter procedure is also applied to propagate the track back to the target to get the best estimate of the decay vertex parameters at the target, including the time \(t_\mathrm {e^+}\).

3.2.8 Positron AIF reconstruction

The photon background in an energy region very close to the signal is dominated by positron AIF in the detector (see Sect. 4.4.1.1). If the positron crosses part of the DCH before it annihilates, it can leave a trace of hits which are correlated to the subsequent photon signal. A pattern recognition algorithm has been developed that can identify these types of positron AIF events. Since positron AIF contributes to the accidental background, this algorithm can help to distinguish accidental background events from signal and RMD events. The algorithm is summarised in the following.

Example of a reconstructed positron AIF candidate in a 2009 event due to a downstream muon decay. The reconstructed AIF vertex is indicated as a blue star, visible in the upper plot at \(\left( x,y \right) = \left( -12,-21 \right) \) approximately. The AIF direction is indicated as a green arrow, originating from this star and pointing towards lower x and higher y coordinates. The vector connecting the AIF vertex and the photon conversion vertex in the LXe detector is indicated as a magenta dashed line. Note that the green arrow and the magenta line nearly overlap, as expected for a true AIF event

The procedure starts by building positron AIF seeds from all reconstructed clusters. An AIF seed is defined as a set of clusters on adjacent DCH modules which satisfy a number of minimum proximity criteria. A positron AIF candidate (\(\mathrm {e^+_{AIF}}\)) is reconstructed from each seed by performing a circle fit based on the xy-coordinates of all clusters in the seed. The circle fit is improved by considering the individual hits in all clusters. The xy-coordinates of hits in multi-hit clusters are refined and left/right solutions based on the initial circle fit are determined by taking into account the timing information of the individual hits, which also results in an estimate of the AIF time. The xy-coordinates of the AIF vertex are determined by the intersection point of the circle fit with the first DCH cathode plane after the last cluster hit. If the circle fit does not cross the next DCH cathode plane, the intersection point of the circle fit with the support structure of the next DCH module or the inner wall of COBRA is used. The z-coordinate of the AIF vertex is calculated by extrapolating the quadratic polynomial fit of the xz-positions of the last three clusters of the AIF candidate to the x-coordinate of the AIF vertex. The AIF candidate direction is taken as the direction tangent to both the circle fit and the quadratic polynomial fit at the AIF vertex. Figure 17 shows an example of a reconstructed AIF candidate.

3.3 Combined reconstruction

This section deals with variables requiring signals both in the spectrometer and in the LXe detector.

3.3.1 Relative photon–positron angles

Since the LXe detector is not capable of reconstructing the direction of the incoming photons, this direction is determined by connecting the reconstructed interaction vertex of the photon in the LXe detector to the reconstructed decay vertex on target: it is defined through its azimuthal and polar angles (\(\phi _\mathrm {\gamma }\), \(\theta _\mathrm {\gamma }\)).

The degree to which the photon and positron are not back-to-back is quantified in terms of the angle between the photon direction and the positron direction reversed at the target in terms of azimuthal and polar angle differences:

There are no direct calibration source for measuring the resolutions of the measurements of these relative angles. Hence, they are obtained by combining (1) the position resolution of the LXe detector and (2) the position and angular resolutions of the spectrometer, taking into account the relative alignment of the spectrometer and the calorimeter.

There are correlations among the errors in measurements of the positron observables at the target both due to the fit and also introduced by the extrapolation to the target. Additionally, the errors in the photon angles contain a contribution from the positron position error at the target. Due to the correlations, the relative angle resolutions are not the quadratic sum of the photon and positron angular resolutions.

The \(\theta _{\mathrm {e^+ \gamma }}\) resolution is evaluated as \(\sigma _{\theta _{\mathrm {e^+ \gamma }}} = (15.0 - 16.2)\) mrad depending on the year of data taking by taking into account the correlation between \(z_e\) and \(\theta _\mathrm {e^+}\). Since the true positron momentum and \(\theta _{\mathrm {e^+ \gamma }}\) of the \(\mu ^+ \rightarrow \mathrm{e}^+ \gamma \) signal are known, \(\phi _\mathrm {e^+}\) and \(y_\mathrm {e^+}\) can be corrected using the reconstructed energy of the positron and \(\theta _{\mathrm {e^+ \gamma }}\). The \(\phi _{\mathrm {e^+ \gamma }}\) resolution after correcting these correlations is evaluated as \(\sigma _{\phi _{\mathrm {e^+ \gamma }}}= (8.9-9.0)\) mrad depending on the year.

The systematic uncertainty of the positron emission angle relies on the accuracy of the relative alignment among the magnetic field, the DCH modules, and the target (see Sects. 3.2.3 and 3.2.4 for the alignment methods and the uncertainties). The position of the target (in particular the error in the position and orientation of the target plane) and any distortion of the target plane directly affect the emission angle measurement and are found to be one of the dominant sources of systematic uncertainty on the relative angles.

The measurement of the photon direction depends on the relative alignment between the spectrometer and the LXe detector. They are aligned separately using optical alignment techniques and calculations of LXe detector distortions and motion during filling and cool-down. Additionally, the relative alignment is cross-checked by directly measuring two types of physics processes that produce hits in both detectors:

-

Positron AIF events,

-

Cosmic rays without the COBRA magnetic field.

Each of the two measurements provides independent information of the displacement with a precision better than 1.0 mm in the longitudinal direction (\(\delta z\)) relative to the survey; however they are subject to systematic uncertainties due to the non-uniform distribution of both positron AIF and cosmic ray events and to the different shower development because those events are not coming from the target. The two results in \(\delta z\) (\(\delta z = 2.1 \pm 0.5\) mm for AIF and \(\delta z =1.8 \pm 0.9\) mm for cosmic-rays) agree well, resulting in an average \(\delta z = 2.0\pm 0.4\) mm. On the other hand, the survey data may have some unknown systematic uncertainties because the survey can be done only at room temperature and the effects of shrinkage and the detector distortions at LXe temperature are only taken into account by the calculation. The difference of \(\delta z=2.0\) mm between the survey and the two measurements suggests the existence of these possible uncertainties and we take the difference into account as the systematic uncertainties. The nominal displacement is taken as the average of the survey and the average of the measurements, \(\delta z = 1.0 \pm 0.6\) mm where the uncertainty is the systematic. This uncertainty corresponds to 0.85 mrad at the centre value of \(\theta _{\mathrm {e^+ \gamma }}\) (converted by the radial position of the LXe detector \(r_\mathrm {in} = 67.85\) cm). There is no cosmic ray measurement available in other degrees of freedom and we can not extract the systematic uncertainty of \(\phi _{\mathrm {e^+ \gamma }}\). Therefore, we regard the observed value of \(\delta z\) as an estimate of the systematic uncertainty of the survey while keeping all other survey results for the alignment. Finally, we assign the same systematic uncertainty estimated for \(\theta _{\mathrm {e^+ \gamma }}\) to \(\phi _{\mathrm {e^+ \gamma }}\).

3.3.2 Relative photon–positron time

The relative time \(t_{\mathrm {e^+ \gamma }}= t_{\mathrm {\gamma }}-t_\mathrm {e^+}\) is defined as the difference between the photon time (see Sect. 3.1.2) and the positron time (see Sect. 3.2.6) calculated at the target. The relative time is calibrated using the RMD peak observed in the energy side-bandFootnote 4 and shown in Fig. 18.

The centre of this distribution is used to correct the time offset between the TC and LXe detectors. The position of the RMD-peak corresponding to \(t_{\mathrm {e^+ \gamma }}= 0\) is monitored constantly during the physics data-taking period and found to be stable to within 15 ps. In order to obtain the resolution on \(t_{\mathrm {e^+ \gamma }}\) for signal events, the resolution of Fig. 18 must be corrected for the photon energy dependence as measured in the CEX calibration run and for the positron energy dependence (from a MC simulation), resulting in \(\sigma _{t_{\mathrm {e^+ \gamma }}}=122\pm 4\) ps.

The dominant contributions to the \(t_{\mathrm {e^+ \gamma }}\) resolution are the positron track length uncertainty (in timing units 75 ps), the TC intrinsic time resolution (65 ps), and the LXe detector time resolution (64 ps).

3.3.3 Photon-AIF analysis

In order to determine if a photon originates from positron AIF, the following three quantities are calculated for each possible \(\mathrm {e^+_{AIF}}\) \(\gamma \)-pair from all reconstructed \(\mathrm {e^+_{AIF}}\) candidates and photons in the event: the angular differences between the AIF candidate direction and the vector connecting the photon and the AIF vertex (\(\theta _\mathrm{AIF}\) and \(\phi _\mathrm{AIF}\)), and the time difference between the photon and the AIF candidate (\(t_\mathrm{AIF}\)). If there are multiple \(\mathrm {e^+_{AIF}}\) candidates per event, a ranking of \(\mathrm {e^+_{AIF}}\) \(\gamma \)-pairs is performed by minimising the \(\chi ^2\) based on these three observables.

A plot of \(\phi _\mathrm{AIF}\) vs. \(\theta _\mathrm{AIF}\) for the highest ranked \(\mathrm {e^+_{AIF}}\) \(\gamma \)-pairs per event in a random sample of year 2011 events is shown in Fig. 19. The peak at the centre is caused by photons originating from positron AIF in the DCH. The peak has a tail in the negative \(\phi _\mathrm{AIF}\) direction since the AIF vertex is reconstructed at the first DCH cathode foil immediately after the last hit in the \(\mathrm {e^+_{AIF}}\) candidate. However, if the last hit is located in the left plane of a DCH module, it is equally likely (to first order) that the AIF occurred in the first cathode foil of the next DCH module.

The observables \(\theta _\mathrm{AIF}\) and \(\phi _\mathrm{AIF}\) are combined into a 1D “distance” from the peak where the correlation between \(\theta _\mathrm{AIF}\) and \(\phi _\mathrm{AIF}\) and the structure of the two peaks are taken into account. The smaller the distance, the more likely the event is a true AIF background. Events falling within 0.7\(\sigma _\mathrm {AIF}\) of either of the two peaks are cut. The estimation for the fraction of rejected AIF background events is 1.9 %, while losing 1.1 % of signal events. The fraction of rejected RMD events is the same as that of the signal. The cut based on the AIF analysis is employed in the physics analysis to remove outlier events which happen to be signal-like with an AIF photon.

The \(\phi _\mathrm{AIF}\) versus \(\theta _\mathrm{AIF}\) distribution of the highest ranked \(\mathrm {e^+_{AIF}}\) \(\gamma \)-pairs per event in a sample of year 2009 events. The peak in the centre of the plot is caused by photons originating from positron-AIF in the DCH. The events located inside the dashed line contour are removed by the AIF cut

4 Analysis

4.1 Analysis strategy

The MEG analysis strategy is a combination of blind and maximum likelihood analysis. The blind analysis is chosen to prevent any bias in the evaluation of the expected background in the analysis region and the maximum likelihood analysis is preferred to the simpler box analysis in order to avoid boundary effects at the borders of the analysis region and to improve the sensitivity by correctly taking into account the probabilities of events being due to signal, RMD or accidental background.