Abstract

We review lattice results related to pion, kaon, \(D\)- and \(B\)-meson physics with the aim of making them easily accessible to the particle-physics community. More specifically, we report on the determination of the light-quark masses, the form factor \(f_+(0)\), arising in semileptonic \(K \rightarrow \pi \) transitions at zero momentum transfer, as well as the decay-constant ratio \(f_K/f_\pi \) and its consequences for the CKM matrix elements \(V_{us}\) and \(V_{ud}\). Furthermore, we describe the results obtained on the lattice for some of the low-energy constants of \(\hbox {SU}(2)_L\times \hbox {SU}(2)_R\) and \(\hbox {SU}(3)_L\times \hbox {SU}(3)_R\) Chiral Perturbation Theory and review the determination of the \(B_K\) parameter of neutral kaon mixing. The inclusion of heavy-quark quantities significantly expands the FLAG scope with respect to the previous review. Therefore, we focus here on \(D\)- and \(B\)-meson decay constants, form factors, and mixing parameters, since these are most relevant for the determination of CKM matrix elements and the global CKM unitarity-triangle fit. In addition we review the status of lattice determinations of the strong coupling constant \(\alpha _\mathrm{s}\).

1 Introduction

Flavour physics provides an important opportunity for exploring the limits of the Standard Model of particle physics and for constraining possible extensions of theories that go beyond it. As the LHC explores a new energy frontier and as experiments continue to extend the precision frontier, the importance of flavour physics will grow, both in terms of searches for signatures of new physics through precision measurements and in terms of attempts to unravel the theoretical framework behind direct discoveries of new particles. A major theoretical limitation consists in the precision with which strong interaction effects can be quantified. Large-scale numerical simulations of lattice QCD allow for the computation of these effects from first principles. The scope of the Flavour Lattice Averaging Group (FLAG) is to review the current status of lattice results for a variety of physical quantities in low-energy physics. Set up in November 2007, it comprises experts in Lattice Field Theory and Chiral Perturbation Theory. Our aim is to provide an answer to the frequently posed question “What is currently the best lattice value for a particular quantity?”, in a way which is readily accessible to non-lattice-experts. This is generally not an easy question to answer; different collaborations use different lattice actions (discretisations of QCD) with a variety of lattice spacings and volumes, and with a range of masses for the \(u\)- and \(d\)-quarks. Not only are the systematic errors different, but also the methodology used to estimate these uncertainties varies between collaborations. In the present work we summarise the main features of each of the calculations and provide a framework for judging and combining the different results. Sometimes it is a single result which provides the “best” value; more often it is a combination of results from different collaborations. Indeed, the consistency of values obtained using different formulations adds significantly to our confidence in the results.

The first edition of the FLAG review was published in 2011 [1]. It was limited to lattice results related to pion and kaon physics: light-quark masses (\(u\)-, \(d\)- and \(s\)-flavours), the form factor \(f_+(0)\) arising in semileptonic \(K \rightarrow \pi \) transitions at zero momentum transfer and the decay constant ratio \(f_K/f_\pi \), as well as their implications for the CKM matrix elements \(V_{us}\) and \(V_{ud}\). Furthermore, results were reported for some of the low-energy constants of \(\hbox {SU}(2)_L \otimes \hbox {SU}(2)_R\) and \(\hbox {SU}(3)_L \otimes \hbox {SU}(3)_R\) Chiral Perturbation Theory and the \(B_K\) parameter of neutral kaon mixing. Results for all of these quantities have been updated in the present paper. Moreover, the scope of the present review has been extended by including lattice results related to \(D\)- and \(B\)-meson physics. We focus on \(B\)- and \(D\)-meson decay constants, form factors, and mixing parameters, which are most relevant for the determination of CKM matrix elements and the global CKM unitarity-triangle fit. Last but not least, the current status of lattice results on the QCD coupling \(\alpha _\mathrm{s}\) is also reviewed. Bottom- and charm-quark masses, though important parametric inputs to Standard Model calculations, have not been covered in the present edition. They will be included in a future FLAG report.

Our plan is to continue providing FLAG updates, in the form of a peer-reviewed paper, roughly every two years. This effort is supplemented by our more frequently updated website http://itpwiki.unibe.ch/flag, where figures as well as pdf-files for the individual sections can be downloaded. The papers reviewed in the present edition have appeared before the closing date 30 November 2013.

Finally, we draw attention to a particularly important point. As stated above, our aim is to make lattice QCD results easily accessible to non-lattice-experts and we are well aware that it is likely that some readers will only consult the present paper and not the original lattice literature. We consider it very important that this paper is not the only one which gets cited when the lattice results which are discussed and analysed here are quoted. Readers who find the review and compilations offered in this paper useful are therefore kindly requested to also cite the original sources. The bibliography at the end of this paper should make this task easier. Indeed we hope that the bibliography will be one of the most widely used elements of the whole paper.

This review is organised as follows. In the remainder of Sect. 1 we summarise the composition and rules of FLAG, describe the goals of the FLAG effort and general issues that arise in modern lattice calculations. For the reader’s convenience, Table 1 summarises the main results (averages and estimates) of the present review. In Sect. 2 we explain our general methodology for evaluating the robustness of lattice results which have appeared in the literature. We also describe the procedures followed for combining results from different collaborations in a single average or estimate (see Sect. 2.2 for our use of these terms). The rest of the paper consists of sections, each of which is dedicated to a single (or groups of closely connected) physical quantity(ies). Each of these sections is accompanied by an Appendix with explicatory notes.

indicate the number of results that enter our averages for each quantity. We emphasise that these numbers only give a very rough indication of how thoroughly the quantity in question has been explored on the lattice and recommend consulting the detailed tables and figures in the relevant section for more significant information. For explanations on the source of the quoted errors for each quantity, the reader is advised to consult the corresponding section, as indicated in the second column.

1.1 FLAG enlargement

Upon completion of the first review, it was decided to extend the project by adding new physical quantities and co-authors. FLAG became more representative of the lattice community, both in terms of the geographical location of its members and the lattice collaborations to which they belong. At the time a parallel effort had been made [2, 3]; the two efforts have now merged in order to provide a single source of information on lattice results to the particle-physics community.

The experience gained in managing the activities of a medium-sized group of co-authors taught us that it was necessary to have a more formal structure and a set of rules by which all concerned had to abide, in order to make the inner workings of FLAG function smoothly. The collaboration presently consists of an Advisory Board (AB), an Editorial Board (EB), and seven Working Groups (WG). The rôle of the Advisory Board is that of general supervision and consultation. Its members may intervene at any point in the process of drafting the paper, expressing their opinion and offering advice. They also give their approval of the final version of the preprint before it is made public. The Editorial Board coordinates the activities of FLAG, sets priorities and intermediate deadlines, and takes care of the editorial work needed to amalgamate the sections written by the individual working groups into a uniform and coherent review. The working groups concentrate on writing up the review of the physical quantities for which they are responsible, which is subsequently circulated to the whole collaboration for criticisms and suggestions.

The most important internal FLAG rules are the following:

- members of the AB have a 4-year mandate (to avoid a simultaneous change of all members, some of the current members of the AB will have a shorter mandate);
- the composition of the AB reflects the main geographical areas in which lattice collaborations are active: one member comes from America, one from Asia/Oceania and one from Europe;
- the mandate of regular members is not limited in time, but we expect that a certain turnover will occur naturally;
- whenever a replacement becomes necessary this has to keep, and possibly improve, the balance in FLAG;
- in all working groups the three members must belong to three different lattice collaborations;
- a paper is in general not reviewed (nor colour-coded, as described in the next section) by one of its authors;
- lattice collaborations not represented in FLAG will be asked to check whether the colour coding of their calculation is correct.

The current list of FLAG members and their Working Group assignments is:

\(\bullet \) Advisory Board (AB): S. Aoki, C. Bernard, C. Sachrajda

\(\bullet \) Editorial Board (EB): G. Colangelo, H. Leutwyler, A. Vladikas, U. Wenger

\(\bullet \) Working Groups (WG) (each WG coordinator is listed first):

- Quark masses: L. Lellouch, T. Blum, V. Lubicz
- \(V_{us},V_{ud}\): A. Jüttner, T. Kaneko, S. Simula
- LEC: S. Dürr, H. Fukaya, S. Necco
- \(B_K\): H. Wittig, J. Laiho, S. Sharpe
- \(f_{B_{(s)}}\), \(f_{D_{(s)}}\), \(B_B\): A. El-Khadra, Y. Aoki, M. Della Morte
- \(B_{(s)}\), \(D\) semileptonic and radiative decays: R. Van de Water, E. Lunghi, C. Pena, J. Shigemitsu
- \(\alpha _\mathrm{s}\): R. Sommer, R. Horsley, T. Onogi

1.2 General issues and summary of the main results

The present review aims at two distinct goals:

- (a) offer a description of the work done on the lattice concerning low-energy particle physics;
- (b) draw conclusions on the basis of that work, which summarise the results obtained for the various quantities of physical interest.

The core of the information about the work done on the lattice is presented in the form of tables, which not only list the various results, but also describe the quality of the data that underlie them. We consider it important that this part of the review represents a generally accepted description of the work done. For this reason, we explicitly specify the quality requirements used and provide sufficient details in the appendices so that the reader can verify the information given in the tables.

The conclusions drawn on the basis of the available lattice results, on the other hand, are the responsibility of FLAG alone. We aim at staying on the conservative side and in several cases reach conclusions which are more cautious than what a plain average of the available lattice results would give, in particular when this is dominated by a single lattice result. An additional issue occurs when only one lattice result is available for a given quantity. In such cases one does not have the same degree of confidence in results and errors as one has when there is agreement among many different calculations using different approaches. Since this degree of confidence cannot be quantified, it is not reflected in the quoted errors, but it should be kept in mind by the reader. At present, the issue of having only a single result occurs much more often in heavy-quark physics than in light-quark physics. We are confident that the heavy-quark calculations will soon reach the state that pertains in light-quark physics.

Several general issues concerning the present review are thoroughly discussed in Sect. 1.1 of our initial paper [1] and we encourage the reader to consult the relevant pages. In the remainder of the present section, we focus on a few important points.

Each discretisation has its merits but also its shortcomings. For the topics covered already in the first edition of the FLAG review, we have by now a remarkably broad data base, and for most quantities lattice calculations based on totally different discretisations are now available. This is illustrated by the dense population of the tables and figures shown in the first part of this review. Those calculations which do satisfy our quality criteria indeed lead to consistent results, confirming universality within the accuracy reached. In our opinion, the consistency between independent lattice results, obtained with different discretisations, methods and simulation parameters, is an important test of lattice QCD, and observing such consistency then also provides further evidence that systematic errors are fully under control.

In the sections dealing with heavy quarks and with \(\alpha _\mathrm{s}\), the situation is not the same. Since the \(b\)-quark mass cannot be resolved with current lattice spacings, all lattice methods for treating \(b\) quarks use effective field theory at some level. This introduces additional complications not present in the light-quark sector. An overview of the issues specific to heavy-quark quantities is given in the introduction of Sect. 8. For \(B\)- and \(D\)-meson leptonic decay constants, there already exist a good number of different independent calculations that use different heavy-quark methods, but there are only one or two independent calculations of semileptonic \(B\)- and \(D\)-meson form factors and \(B\) meson mixing parameters. For \(\alpha _\mathrm{s}\), most lattice methods involve a range of scales that need to be resolved and controlling the systematic error over a large range of scales is more demanding. The issues specific to determinations of the strong coupling are summarised in Sect. 9.

The lattice spacings reached in recent simulations go down to 0.05 fm or even smaller. In that region, growing autocorrelation times slow down the sampling of the configurations [4–8]. Many groups check for autocorrelations in a number of observables, including the topological charge, for which a rapid growth of the autocorrelation time is observed if the lattice spacing becomes small. In the following, we assume that the continuum limit can be reached by extrapolating the existing simulations.
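As a rough illustration of what such an autocorrelation check involves, the sketch below estimates the integrated autocorrelation time of a Monte Carlo time series with a simple self-consistent summation window. The function names, the window parameter `c` and the AR(1) toy series are assumptions made for this example; they are not taken from the works cited above, and production analyses use more careful error estimates.

```python
import random


def integrated_autocorr_time(series, c=5.0):
    """Estimate tau_int = 1/2 + sum_{t>=1} rho(t).

    The sum is truncated at the first lag t with t >= c * tau_int
    (a self-consistent window; c = 5 is an illustrative choice).
    """
    n = len(series)
    mean = sum(series) / n
    x = [v - mean for v in series]
    var = sum(v * v for v in x) / n
    if var == 0.0:
        return 0.5  # constant series: no fluctuations to correlate
    tau = 0.5
    for t in range(1, n // 2):
        # Normalised autocorrelation function at lag t.
        rho_t = sum(a * b for a, b in zip(x, x[t:])) / ((n - t) * var)
        tau += rho_t
        if t >= c * tau:
            break
    return tau


def ar1_series(n, rho, seed=0):
    """Toy chain x_i = rho * x_{i-1} + noise.

    Its exact integrated autocorrelation time is (1 + rho) / (2 * (1 - rho)).
    """
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0)]
    for _ in range(n - 1):
        x.append(rho * x[-1] + rng.gauss(0.0, 1.0))
    return x
```

For uncorrelated data the estimate stays close to 1/2, while a slowly decorrelating observable (such as the topological charge at small lattice spacing) would yield a much larger value, reducing the effective number of independent configurations to roughly N / (2 tau_int).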

Lattice simulations of QCD currently involve at most four dynamical quark flavours. Moreover, most of the data concern simulations for which the masses of the two lightest quarks are set equal. This is indicated by the notation \(N_\mathrm{f}=2+1+1\) which, in this case, denotes a lattice calculation with four dynamical quark flavours and \(m_{u} = m_{d} \ne m_{s} \ne m_{c}\). Note that calculations with \(N_\mathrm{f}=2\) dynamical flavours often include strange valence quarks interacting with gluons, so that bound states with the quantum numbers of the kaons can be studied, albeit neglecting strange sea quark fluctuations. The quenched approximation (\(N_\mathrm{f}=0\)), in which the sea quarks are treated as a mean field, is no longer used in modern lattice simulations. Accordingly, we will review results obtained with \(N_\mathrm{f}=2\), \(N_\mathrm{f}=2+1\) and \(N_\mathrm{f} = 2+1+1\), but we omit earlier results with \(N_\mathrm{f}=0\). On the other hand, the dependence of the QCD coupling constant \(\alpha _\mathrm{s}\) on the number of flavours is a theoretical issue of considerable interest, and we therefore include results obtained for gluodynamics in the \(\alpha _\mathrm{s}\) section. We stress, however, that only results with \(N_\mathrm{f} \ge 3\) are used to determine the physical value of \(\alpha _\mathrm{s}\) at a high scale.

The remarkable recent progress in the precision of lattice calculations is due to improved algorithms, better computing resources and, last but not least, conceptual developments, such as improved actions which reduce lattice artefacts, actions which preserve (remnants of) chiral symmetry, understanding finite-size effects, non-perturbative renormalisation, etc. A concise characterisation of the various discretisations that underlie the results reported in the present review is given in Appendix A.1.

Lattice simulations are performed at fixed values of the bare QCD parameters (gauge coupling and quark masses) and physical quantities with mass dimensions (e.g. quark masses, decay constants...) are computed in units of the lattice spacing; i.e. they are dimensionless. Their conversion to physical units requires knowledge of the lattice spacing at the fixed values of the bare QCD parameters of the simulations. This is achieved by requiring agreement between the lattice calculation and experimental measurement of a known quantity, which “sets the scale” of a given simulation. A few details on this procedure are provided in Appendix A.2.
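The conversion described above can be sketched in a few lines. The example assumes that a single dimensionful quantity \(Q\) is used to set the scale; the function names are hypothetical, and the only physical input is the conversion factor \(\hbar c \approx 197.327\) MeV fm.

```python
HBARC_MEV_FM = 197.3269804  # hbar * c in MeV fm


def lattice_spacing_fm(aQ_lattice, Q_phys_mev):
    """Lattice spacing a (in fm) from the dimensionless lattice value a*Q
    and the experimental value of Q (in MeV) used to set the scale."""
    return aQ_lattice / Q_phys_mev * HBARC_MEV_FM


def to_mev(aM_lattice, a_fm):
    """Convert a dimensionless lattice mass a*M to MeV for spacing a (in fm)."""
    return aM_lattice * HBARC_MEV_FM / a_fm
```

By construction, feeding the scale-setting quantity itself back through `to_mev` reproduces its experimental value; every other quantity computed at the same bare parameters then becomes a prediction in physical units.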

Several of the results covered by this review, such as quark masses, the gauge coupling, and \(B\)-parameters, are quantities defined in a given renormalisation scheme and scale. The schemes employed are often chosen because of their specific merits when combined with the lattice regularisation. For a brief discussion of their properties, see Appendix A.3. The conversion of the results, obtained in these so-called intermediate schemes, to more familiar regularisation schemes, such as the \({\overline{\mathrm{MS}}}\)-scheme, is done with the aid of perturbation theory. It must be stressed that the renormalisation scales accessible by the simulations are subject to limitations, naturally arising in Field-Theory computations at finite UV and small non-zero IR cutoff. Typically, such scales are of the order of the UV cutoff, or \(\Lambda _\mathrm{QCD}\), depending on the chosen scheme. To safely match to \({\overline{\mathrm{MS}}}\), a scheme defined in perturbation theory, Renormalisation Group (RG) running to higher scales is performed, either perturbatively, or non-perturbatively (the latter using finite-size scaling techniques).

Because of limited computing resources, lattice simulations are often performed at unphysically heavy pion masses, although results at the physical point have recently become available. Further, numerical simulations must be done at finite lattice spacing. In order to obtain physical results, lattice data are generated at a sequence of pion masses and a sequence of lattice spacings, and then extrapolated to \(M_\pi \approx 135\) MeV and \(a \rightarrow 0\). To control the associated systematic uncertainties, these extrapolations are guided by effective theory. For light-quark actions, the lattice-spacing dependence is described by Symanzik’s effective theory [9, 10]; for heavy quarks, this can be extended and/or supplemented by other effective theories such as Heavy-Quark Effective Theory (HQET). The pion-mass dependence can be parameterised with Chiral Perturbation Theory (\(\chi \)PT), which takes into account the Nambu–Goldstone nature of the lowest excitations that occur in the presence of light quarks; similarly one can use Heavy-Light Meson Chiral Perturbation Theory (HM\(\chi \)PT) to extrapolate quantities involving mesons composed of one heavy (\(b\) or \(c\)) and one light quark. One can combine Symanzik’s effective theory with \(\chi \)PT to simultaneously extrapolate to the physical pion mass and continuum; in this case, the form of the effective theory depends on the discretisation. See Appendix A.4 for a brief description of the different variants in use and some useful references.
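A deliberately simplified sketch of such a combined extrapolation is given below. It assumes the leading analytic ansatz \(Q = c_0 + c_1 M_\pi ^2 + c_2 a^2\), which is appropriate at best for an \(O(a)\)-improved action and ignores the chiral logarithms and discretisation-specific terms of the actual effective theories. All function names are hypothetical, and pion masses are taken in GeV and lattice spacings in fm to keep the fit well conditioned.

```python
def solve_linear(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for k in range(col, n + 1):
                M[r][k] -= f * M[col][k]
    x = [0.0] * n
    for i in reversed(range(n)):
        s = sum(M[i][k] * x[k] for k in range(i + 1, n))
        x[i] = (M[i][n] - s) / M[i][i]
    return x


def fit_chiral_continuum(points):
    """Weighted least-squares fit of value = c0 + c1 * m_pi^2 + c2 * a^2.

    points: iterable of (m_pi_gev, a_fm, value, error).
    Returns [c0, c1, c2] from the normal equations.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for m_pi, a, y, err in points:
        basis = (1.0, m_pi ** 2, a ** 2)
        w = 1.0 / err ** 2
        for i in range(3):
            b[i] += w * basis[i] * y
            for j in range(3):
                A[i][j] += w * basis[i] * basis[j]
    return solve_linear(A, b)


def extrapolate(coeffs, m_pi_phys_gev=0.135):
    """Evaluate the fit at the physical pion mass and at a = 0."""
    c0, c1, _ = coeffs
    return c0 + c1 * m_pi_phys_gev ** 2
```

At least three ensembles with distinct pion masses and lattice spacings are needed to determine the three coefficients; the spread between alternative fit ansätze is one common ingredient of the systematic error estimate.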

2 Quality criteria

The essential characteristics of our approach to the problem of rating and averaging lattice quantities reported by different collaborations have been outlined in our first publication [1]. Our aim is to help the reader assess the reliability of a particular lattice result without necessarily studying the original article in depth. This is a delicate issue, which may make things appear simpler than they are. However, it safeguards against the common practice of using lattice results and drawing physics conclusions from them, without a critical assessment of the quality of the various calculations. We believe that despite the risks, it is important to provide some compact information about the quality of a calculation. However, the importance of the accompanying detailed discussion of the results presented in the bulk of the present review should not be underestimated.

2.1 Systematic errors and colour-coding

In Ref. [1], we identified a number of sources of systematic errors, for which a systematic improvement is possible, and assigned one of three coloured symbols to each calculation: green star, amber disc or red square. The appearance of a red tag, even in a single source of systematic error of a given lattice result, disqualified it from the global averaging. Since results with green and amber tags entered the averages, and since this policy has been retained in the present edition, we have decided to substitute the amber disc by a green unfilled circle. Thus the new colour coding is as follows:

- ★ (green star): the systematic error has been estimated in a satisfactory manner and convincingly shown to be under control;
- ○ (green unfilled circle): a reasonable attempt at estimating the systematic error has been made, although this could be improved;
- ■ (red square): no or a clearly unsatisfactory attempt at estimating the systematic error has been made.

We stress once more that only results without a red tag in the systematic errors are averaged in order to provide a given FLAG estimate.

The precise criteria used in determining the colour coding are unavoidably time-dependent; as lattice calculations become more accurate the standards against which they are measured become tighter. For quantities related to the light-quark sector, which have been dealt with in the first edition of the FLAG review [1], some of the quality criteria have remained the same, while others have been tightened up. We will compare them to those of Ref. [1], case-by-case, below. For the newly introduced physical quantities, related to heavy quark physics, the adoption of new criteria was necessary. This is due to the fact that, in most cases, the discretisation of the heavy quark action follows a very different approach to that of light flavours. Moreover, the two Working Groups dedicated to heavy flavours have opted for a somewhat different rating of the extrapolation of lattice results to the continuum limit. Finally, the strong coupling being in a class of its own, as far as methods for its computation are concerned, led to the introduction of dedicated rating criteria for it.

Of course any colour coding has to be treated with caution; we repeat that the criteria are subjective and evolving. Sometimes a single source of systematic error dominates the systematic uncertainty and it is more important to reduce this uncertainty than to aim for green stars for other sources of error. In spite of these caveats we hope that our attempt to introduce quality measures for lattice results will prove to be a useful guide. In addition we would like to stress that the agreement of lattice results obtained using different actions and procedures evident in many of the tables presented below provides further validation.

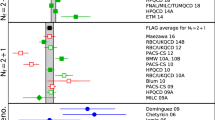

For a coherent assessment of the present situation, the quality of the data plays a key role, but the colour coding cannot be carried over to the figures. On the other hand, simply showing all data on equal footing would give the misleading impression that the overall consistency of the information available on the lattice is questionable. As a way out, the figures do indicate the quality in a rudimentary way:

- results included in the average;
- results that are not included in the average but pass all quality criteria;
- all other results.

The reason for not including a given result in the average is not always the same: the paper may fail one of the quality criteria, may not be published, be superseded by other results or not offer a complete error budget. Symbols other than squares are used to distinguish results with specific properties and are always explained in the caption.

There are separate criteria for light-flavour, heavy-flavour, and \(\alpha _\mathrm{s}\) results. In the following the criteria for the former two are discussed in detail, while the criteria for the \(\alpha _\mathrm{s}\) results are presented separately in Sect. 9.2.

2.1.1 Light-quark physics

The colour code used in the tables is specified as follows:

\(\bullet \) Chiral extrapolation:

- ★ \(M_{\pi ,{\mathrm {min}}}< 200\) MeV
- ○ 200 MeV \(\le M_{\pi ,{\mathrm {min}}} \le \) 400 MeV
- ■ 400 MeV \( < M_{\pi ,{\mathrm {min}}}\)

It is assumed that the chiral extrapolation is done with at least a three-point analysis; otherwise this will be explicitly mentioned. Note that, compared to Ref. [1], chiral extrapolations are now treated in a somewhat more stringent manner and the cutoff between green star and green open circle (formerly amber disc), previously set at 250 MeV, is now lowered to 200 MeV.

\(\bullet \) Continuum extrapolation:

- ★ three or more lattice spacings, at least two points below 0.1 fm
- ○ two or more lattice spacings, at least one point below 0.1 fm
- ■ otherwise

It is assumed that the action is \(O(a)\)-improved (i.e. the discretisation errors vanish quadratically with the lattice spacing); otherwise this will be explicitly mentioned. Moreover, for non-improved actions an additional lattice spacing is required. This criterion is the same as the one adopted in Ref. [1].

\(\bullet \) Finite-volume effects:

- ★ \(M_{\pi ,{\mathrm {min}}} L > 4\) or at least three volumes
- ○ \(M_{\pi ,{\mathrm {min}}} L > 3\) and at least two volumes
- ■ otherwise

These ratings apply to calculations in the \(p\)-regime and it is assumed that \(L_\mathrm{min}\ge 2\) fm; otherwise this will be explicitly mentioned and a red square will be assigned.

\(\bullet \) Renormalisation (where applicable):

- ★ non-perturbative
- ○ one-loop perturbation theory or higher with a reasonable estimate of truncation errors
- ■ otherwise

In Ref. [1], we assigned a red square to all results which were renormalised at one loop in perturbation theory. We now feel that this is too restrictive, since the error arising from renormalisation constants, calculated in perturbation theory at one loop, is often estimated conservatively and reliably.

\(\bullet \) Running (where applicable):

For scale-dependent quantities, such as quark masses or \(B_K\), it is essential that contact with continuum perturbation theory can be established. Various different methods are used for this purpose (cf. Appendix A.3): Regularisation-independent Momentum Subtraction (RI/MOM), Schrödinger functional, direct comparison with (resummed) perturbation theory. Irrespective of the particular method used, the uncertainty associated with the choice of intermediate renormalisation scales in the construction of physical observables must be brought under control. This is best achieved by performing comparisons between non-perturbative and perturbative running over a reasonably broad range of scales. These comparisons were initially only made in the Schrödinger functional (SF) approach, but they are now also being performed in RI/MOM schemes. We mark the data for which information about non-perturbative running checks is available and give some details, but we do not attempt to translate this into a colour-code.
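The numerical thresholds of the light-quark criteria listed above can be encoded compactly, which may be convenient when tabulating many calculations. The sketch below is only an illustration: the function names and the labels "star", "circle" and "square" are hypothetical, caveats such as the three-point chiral analysis are not modelled, and the treatment of non-improved actions (one extra lattice spacing for each rating) is one possible reading of the text.

```python
def chiral_rating(m_pi_min_mev):
    """Chiral-extrapolation rating from the minimum pion mass in MeV."""
    if m_pi_min_mev < 200:
        return "star"
    if m_pi_min_mev <= 400:
        return "circle"
    return "square"


def continuum_rating(spacings_fm, improved=True):
    """Continuum-extrapolation rating from the simulated lattice spacings.

    Assumption: a non-improved action needs one additional spacing
    for each rating; the count below 0.1 fm is left unchanged.
    """
    distinct = set(spacings_fm)
    extra = 0 if improved else 1
    below = sum(1 for a in distinct if a < 0.1)
    if len(distinct) >= 3 + extra and below >= 2:
        return "star"
    if len(distinct) >= 2 + extra and below >= 1:
        return "circle"
    return "square"


def finite_volume_rating(m_pi_min_L, n_volumes, L_min_fm):
    """Finite-volume rating (p-regime) from M_pi,min * L and the volumes used."""
    if L_min_fm < 2.0:
        return "square"
    if m_pi_min_L > 4 or n_volumes >= 3:
        return "star"
    if m_pi_min_L > 3 and n_volumes >= 2:
        return "circle"
    return "square"


def enters_average(ratings):
    """A result qualifies for averaging only if no criterion is rated red."""
    return "square" not in ratings
```

For example, a calculation with \(M_{\pi ,\mathrm{min}} = 180\) MeV, lattice spacings of 0.12, 0.09 and 0.06 fm, and \(M_{\pi ,\mathrm{min}} L = 3.5\) on two volumes of at least 2 fm would be rated star, star and circle by these functions, and would therefore qualify for averaging.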

The pion mass plays an important rôle in the criteria relevant for chiral extrapolation and finite volume. For some of the regularisations used, however, it is not a trivial matter to identify this mass. In the case of twisted-mass fermions, discretisation effects give rise to a mass difference between charged and neutral pions even when the up- and down-quark masses are equal, with the charged pion being the heavier of the two. The discussion of the twisted-mass results presented in the following sections assumes that the artificial isospin-breaking effects which occur in this regularisation are under control. In addition, we assume that the mass of the charged pion may be used when evaluating the chiral-extrapolation and finite-volume criteria. In the case of staggered fermions, discretisation effects give rise to several light states with the quantum numbers of the pion.Footnote 4 The mass splitting among these “taste” partners represents a discretisation effect of \({\mathcal {O}}(a^2)\), which can be significant at big lattice spacings but shrinks as the spacing is reduced. In the discussion of the results obtained with staggered quarks given in the following sections, we assume that these artefacts are under control. When evaluating the chiral-extrapolation criteria, we conservatively identify \(M_{\pi ,\mathrm{min}}\) with the root-mean square (RMS) of the mass of all taste partners. These masses are also used in Sects. 4 and 6 when evaluating the finite-volume criteria, while in Sects. 3, 5, 7 and 8, a more stringent finite-volume criterion is applied: \(M_{\pi ,\mathrm{min}}\) is identified with the mass of the lightest state.
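The two identifications of \(M_{\pi ,\mathrm{min}}\) for staggered fermions mentioned above can be illustrated as follows. The function names are hypothetical; the optional multiplicities allow for the fact that the sixteen taste partners fall into multiplets (of sizes 1, 4, 6, 4 and 1) that are degenerate to a good approximation, and any masses passed in are inputs supplied by the user rather than values from this review.

```python
import math


def rms_pion_mass(masses_mev, multiplicities=None):
    """Root-mean-square mass of the taste partners.

    masses_mev: one mass per taste multiplet (or per taste).
    multiplicities: number of tastes in each multiplet (default 1 each).
    """
    if multiplicities is None:
        multiplicities = [1] * len(masses_mev)
    total = sum(multiplicities)
    weighted = sum(n * m * m for n, m in zip(multiplicities, masses_mev))
    return math.sqrt(weighted / total)


def lightest_pion_mass(masses_mev):
    """Mass of the lightest taste partner."""
    return min(masses_mev)
```

Since the RMS mass is never smaller than the lightest mass, a criterion of the form \(M_{\pi ,\mathrm{min}} L > 4\) is harder to satisfy when the lightest state is used, which is why that choice is the more stringent one for finite-volume effects.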

2.1.2 Heavy-quark physics

This subsection discusses the criteria adopted for the heavy-quark quantities included in this review, characterised by non-zero charm and bottom quantum numbers. There are several different approaches to treating heavy quarks on the lattice, each with their own issues and considerations. In general all \(b\)-quark methods rely on the use of Effective Field Theory (EFT) at some point in the computation, either via direct simulation of the EFT, use of the EFT to estimate the size of cutoff errors, or use of the EFT to extrapolate from the simulated lattice quark mass up to the physical \(b\)-quark mass. Some simulations of charm-quark quantities use the same heavy-quark methods as for bottom quarks, but there are also computations that use improved light-quark actions to simulate charm quarks. Hence, with some methods and for some quantities, truncation effects must be considered together with discretisation errors. With other methods, discretisation errors are more severe for heavy-quark quantities than for the corresponding light-quark quantities.

In order to address these complications, we add a new heavy-quark treatment category to the ratings system. The purpose of this criterion is to provide a guideline for the level of action and operator improvement needed in each approach to make reliable calculations possible, in principle. In addition, we replace the rating criteria for the continuum extrapolations of Sect. 2.1.1 with a new empirical approach based on the size of observed discretisation errors in the lattice simulation data. This accounts for the fact that whether discretisation and truncation effects in a given calculation are sufficiently small as to be controllable depends not only on the range of lattice spacings used in the simulations, but also on the simulated heavy-quark masses and on the level of action and operator improvement. For the other categories, we adopt the same strict criteria as in Sect. 2.1.1, with one minor modification, as explained below.

\(\bullet \) Heavy-quark treatment

A description of the different approaches to treating heavy quarks on the lattice is given in Appendix A.1.3, including a discussion of the associated discretisation, truncation, and matching errors. For truncation errors we use HQET power counting throughout, since this review is focussed on heavy-quark quantities involving \(B\) and \(D\) mesons. Here we describe the criteria for how each approach must be implemented in order to receive an acceptable rating for both the heavy-quark actions and the weak operators. Heavy-quark implementations without the level of improvement described below are rated not acceptable. The matching is evaluated together with renormalisation, using the renormalisation criteria described in Sect. 2.1.1. We emphasise that the heavy-quark implementations rated as acceptable and described below have been validated in a variety of ways, such as via phenomenological agreement with experimental measurements, consistency between independent lattice calculations, and numerical studies of truncation errors. These tests are summarised in Sect. 8.

Relativistic heavy quark actions:

- at least tree-level \(O(a)\) improved action and weak operators

This is similar to the requirements for light quark actions. All current implementations of relativistic heavy quark actions satisfy these criteria.

NRQCD:

- tree-level matched through \(O(1/m_{h})\) and improved through \(O(a^2)\)

The current implementations of NRQCD satisfy these criteria, and also include tree-level corrections of \(O(1/m_{h}^2)\) in the action.

HQET:

- tree-level matched through \(O(1/m_{h})\) with discretisation errors starting at \(O(a^2)\)

The current implementation of HQET by the ALPHA collaboration satisfies these criteria with an action and weak operators that are non-perturbatively matched through \(O(1/m_{h})\). Calculations that exclusively use a static-limit action do not satisfy these criteria, since the static-limit action, by definition, does not include \(1/m_{h}\) terms. However, for SU(3)-breaking ratios such as \(\xi \) and \(f_{B_{s}}/f_B\) truncation errors start at \(O((m_{s} - m_{d})/m_{h})\). We therefore consider lattice calculations of such ratios that use a static-limit action to still have controllable truncation errors.

Light-quark actions for heavy quarks:

- discretisation errors starting at \(O(a^2)\) or higher

This applies to calculations that use the tmWilson action, a non-perturbatively improved Wilson action, or the HISQ action for charm quark quantities. It also applies to calculations that use these light quark actions in the charm region and above together with either the static limit or with an HQET-inspired extrapolation to obtain results at the physical \(b\) quark mass. In these cases, the continuum-extrapolation criteria must be applied to the entire range of heavy quark masses used in the calculation.

\(\bullet \) Continuum extrapolation:

First we introduce the following definitions:

$$\begin{aligned} D(a) = \frac{Q(a) - Q(0)}{Q(a)}, \end{aligned}$$ (1)

where \(Q(a)\) denotes the central value of quantity \(Q\) obtained at lattice spacing \(a\) and \(Q(0)\) denotes the continuum extrapolated value. \(D(a)\) is a measure of how far the continuum extrapolated result is from the lattice data. We evaluate this quantity on the smallest lattice spacing used in the calculation, \(a_\mathrm{min}\).

$$\begin{aligned} \delta (a) = \frac{Q(a) - Q(0)}{\sigma _Q}, \end{aligned}$$ (2)

where \(\sigma _Q\) is the combined statistical and systematic (due to the continuum extrapolation) error. \(\delta (a)\) is a measure of how well the continuum-extrapolated result agrees with the lattice data within the statistical and systematic errors of the calculation. Again, we evaluate this quantity on the smallest lattice spacing used in the calculation, \(a_\mathrm{min}\).

- (i) Three or more lattice spacings, (ii) \(a^2_\mathrm{max} / a^2_\mathrm{min} \ge 2\), (iii) \(D(a_\mathrm{min}) \le 2\,\%\), and (iv) \(\delta (a_\mathrm{min}) \le 1\)

- (i) Two or more lattice spacings, (ii) \(a^2_\mathrm{max} / a^2_\mathrm{min} \ge 1.4\), (iii) \(D(a_\mathrm{min}) \le 10\,\%\), and (iv) \(\delta (a_\mathrm{min}) \le 2\)

- otherwise.

For the time being, these new criteria for the quality of the continuum extrapolation have only been adopted for the heavy-quark quantities, but their use may be extended to all FLAG quantities in future reviews.
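As an illustration, the continuum-extrapolation criteria can be turned into a small classifier. The sketch below is not part of the review: the function names and the integer tier labels (1 for the most stringent set of conditions, 3 for "otherwise") are hypothetical, and taking absolute values of \(D\) and \(\delta \) is an assumption, since the text states the bounds without specifying a sign convention.

```python
def relative_shift(q_a, q_0):
    """D(a) = (Q(a) - Q(0)) / Q(a), as in Eq. (1)."""
    return (q_a - q_0) / q_a


def pull(q_a, q_0, sigma_q):
    """delta(a) = (Q(a) - Q(0)) / sigma_Q, as in Eq. (2)."""
    return (q_a - q_0) / sigma_q


def continuum_tier(spacings, q_amin, q_0, sigma_q):
    """Return 1, 2 or 3 (hypothetical labels) for the three rating tiers.

    spacings : lattice spacings used in the calculation (any common unit)
    q_amin   : central value of Q at the smallest lattice spacing
    q_0      : continuum-extrapolated value of Q
    sigma_q  : combined statistical and continuum-extrapolation error
    """
    n_spacings = len(set(spacings))
    ratio = (max(spacings) / min(spacings)) ** 2   # a_max^2 / a_min^2
    d = abs(relative_shift(q_amin, q_0))           # assumption: magnitude only
    delta = abs(pull(q_amin, q_0, sigma_q))        # assumption: magnitude only

    if n_spacings >= 3 and ratio >= 2.0 and d <= 0.02 and delta <= 1.0:
        return 1
    if n_spacings >= 2 and ratio >= 1.4 and d <= 0.10 and delta <= 2.0:
        return 2
    return 3
```

For example, three spacings of 0.12, 0.09 and 0.06 fm with a 1 % shift at the finest spacing and a pull of 0.5 would fall into the first tier, whereas a calculation at a single lattice spacing always ends up in the third.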

\(\bullet \) Finite-volume:

- \(M_{\pi ,\mathrm{min}} L \gtrsim 3.7\) or two volumes at fixed parameters

- \(M_{\pi ,\mathrm{min}} L \gtrsim 3\)

- otherwise

Here the boundary between green star and open circle is slightly relaxed compared to that in Sect. 2.1.1 to account for the fact that heavy-quark quantities are less sensitive to this systematic error than light-quark quantities. A  rating requires an estimate of the finite-volume error either by analysing data on two or more physical volumes (with all other parameters fixed) or by using finite-volume chiral perturbation theory. In the case of staggered sea quarks, \(M_{\pi ,\mathrm{min}}\) refers to the lightest (taste Goldstone) pion mass.
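The dimensionless product \(M_{\pi ,\mathrm{min}} L\) is easily evaluated from a pion mass in MeV and a box size in fm. The following sketch is illustrative only: the function names and tier labels are hypothetical, the conversion constant \(\hbar c \approx 197.327\,\mathrm{MeV\,fm}\) is a standard value not quoted in the text, and the approximate inequality \(\gtrsim \) is implemented as a sharp \(\ge \).

```python
HBAR_C_MEV_FM = 197.3269804  # hbar * c in MeV fm (standard value, assumed here)


def m_pi_times_l(m_pi_mev, l_fm):
    """Dimensionless product M_pi * L for M_pi in MeV and L in fm."""
    return m_pi_mev * l_fm / HBAR_C_MEV_FM


def finite_volume_tier(m_pi_min_mev, l_fm, n_volumes=1):
    """Return 1, 2 or 3 (hypothetical labels) for the heavy-quark
    finite-volume criteria; n_volumes counts the volumes available at
    otherwise fixed simulation parameters."""
    x = m_pi_times_l(m_pi_min_mev, l_fm)
    if x >= 3.7 or n_volumes >= 2:
        return 1
    if x >= 3.0:
        return 2
    return 3
```

With these conventions, a 200 MeV pion in a 3 fm box gives \(M_\pi L \approx 3.04\) and lands in the second tier, unless a second volume at the same parameters is available.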


2.2 Averages and estimates

For many observables there are enough independent lattice calculations of good quality that it makes sense to average them and propose such an average as the best current lattice number. In order to decide whether this is true for a certain observable, we rely on the colour coding. We restrict the averages to data for which the colour code does not contain any red tags. In some cases, the averaging procedure nevertheless leads to a result which in our opinion does not cover all uncertainties. This is related to the fact that procedures for estimating errors and the resulting conclusions necessarily have an element of subjectivity, and would vary between groups even with the same data set. In order to stay on the conservative side, we may replace the average by an estimate (or a range), which we consider as a fair assessment of the knowledge acquired on the lattice at present. This estimate is not obtained with a prescribed mathematical procedure, but it is based on a critical analysis of the available information.

There are two other important criteria which also play a role in this respect, but which cannot be colour coded, because a systematic improvement is not possible. These are: (i) the publication status, and (ii) the number of flavours \(N_\mathrm{f}\). As far as the former criterion is concerned, we adopt the following policy: we average only results which have been published in peer-reviewed journals, i.e. they have been endorsed by referee(s). The only exception to this rule consists in obvious updates of previously published results, typically presented in conference proceedings. Such updates, which supersede the corresponding results in the published papers, are included in the averages. Nevertheless, all results are listed and their publication status is identified by the following symbols:

\(\bullet \) Publication status:

- A: published or plain update of published results

- P: preprint

- C: conference contribution

Note that updates of earlier results rely, at least partially, on the same gauge-field configuration ensembles. For this reason, we do not average updates with earlier results. In the present edition, the publication status on November 30, 2013 is relevant. If the paper appeared in print after that date this is accounted for in the bibliography, but it does not affect the averages.

In this review we present results from simulations with \(N_\mathrm{f}=2\), \(N_\mathrm{f}=2+1\) and \(N_\mathrm{f}=2+1+1\) (for \( r_0 \Lambda _{\overline{\mathrm{MS}}}\) also with \(N_\mathrm{f}=0\)). We are not aware of an a priori way to quantitatively estimate the difference between results produced in simulations with a different number of dynamical quarks. We therefore average results at fixed \(N_\mathrm{f}\) separately; averages of calculations with different \(N_\mathrm{f}\) will not be provided.

To date, no significant differences between results with different values of \(N_\mathrm{f}\) have been observed. In the future, as the accuracy and the control over systematic effects in lattice calculations increase, it will hopefully be possible to see a difference between \(N_\mathrm{f}= 2\) and \(N_\mathrm{f}= 2 + 1\) calculations and so determine the size of the Zweig-rule violations related to strange quark loops. This is a very interesting issue per se, and one which can be quantitatively addressed only with lattice calculations.

2.3 Averaging procedure and error analysis

In [1], the FLAG averages and their errors were estimated through the following procedure: Having added in quadrature statistical and systematic errors for each individual result, we obtained their weighted \(\chi ^2\) average. This was our central value. If the fit was of good quality (\(\chi _\mathrm{min}^2/\hbox {dof} \le 1\)), we calculated the net uncertainty \(\delta \) from \(\chi ^2 = \chi _\mathrm{min}^2 + 1\); otherwise, we inflated the result obtained in this way by the factor \(S = \sqrt{\chi ^2/\hbox {dof}}\). Whenever this \(\chi ^2\) minimisation procedure resulted in a total error which was smaller than the smallest systematic error of any individual lattice result, we assigned the smallest systematic error of that result to the total systematic error in the average.

One of the problems arising when forming such averages is that not all of the data sets are independent; in fact, some rely on the same ensembles. In particular, the same gauge-field configurations, produced with a given fermion discretisation, are often used by different research teams with different valence quark lattice actions, obtaining results which are not really independent. In the present paper we have modified our averaging procedure, in order to account for such correlations. To start with, we examine error budgets for individual calculations and look for potentially correlated uncertainties. Specific problems encountered in connection with correlations between different data sets are commented on in the text. If there is any reason to believe that a source of error is correlated between two calculations, a 100 % correlation is assumed. We then obtain the central value from a \(\chi ^2\) weighted average, evaluated by adding statistical and systematic errors in quadrature (just as in Ref. [1]): for a set of individual measurements \(x_i\) with error \(\sigma _i\) and correlation matrix \(C_{ij}\), central value and error of the average are given by

The correlation matrix for the set of correlated lattice results is estimated with Schmelling’s prescription [16]. When necessary, the statistical and systematic error bars are stretched by a factor \(S\), as specified in the previous paragraph.
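The procedure described above can be sketched in a few lines. The code below is an illustration under stated assumptions rather than the implementation used for the averages: it assumes inverse-variance weights \(\omega _i = \sigma _i^{-2}/\sum _j \sigma _j^{-2}\), an error \(\sigma ^2 = \sum _{i,j}\omega _i\omega _j C_{ij}\) with \(C_{ij}\) supplied by the user in the form of a covariance matrix, and a \(\chi ^2\) computed from the individual errors alone. The function names are hypothetical, and the helper for 100 % correlation is a simplification of Schmelling's prescription, which acts on individual error components.

```python
import math


def weighted_average(x, sigma, cov=None):
    """Inverse-variance weighted average with an optional matrix C_ij.

    If cov is None the inputs are treated as uncorrelated.
    Returns (central value, error including stretching, stretching factor S).
    """
    n = len(x)
    inv_var = [1.0 / s ** 2 for s in sigma]
    norm = sum(inv_var)
    w = [v / norm for v in inv_var]                      # weights omega_i
    mean = sum(wi * xi for wi, xi in zip(w, x))
    if cov is None:
        cov = [[sigma[i] ** 2 if i == j else 0.0 for j in range(n)]
               for i in range(n)]
    var = sum(w[i] * w[j] * cov[i][j] for i in range(n) for j in range(n))
    chi2 = sum(((xi - mean) / si) ** 2 for xi, si in zip(x, sigma))
    dof = n - 1
    # Stretch the error by S = sqrt(chi2/dof) only when chi2/dof > 1.
    s_factor = math.sqrt(chi2 / dof) if dof > 0 and chi2 > dof else 1.0
    return mean, math.sqrt(var) * s_factor, s_factor


def fully_correlated_cov(sigma):
    """C_ij = sigma_i * sigma_j: total errors assumed 100 % correlated."""
    return [[si * sj for sj in sigma] for si in sigma]
```

For two results with equal errors, full correlation leaves the error of the average equal to the individual error, whereas treating them as independent reduces it by \(\sqrt{2}\); this is why ignoring shared ensembles would make an average look more precise than it is.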

3 Masses of the light quarks

Quark masses are fundamental parameters of the Standard Model. An accurate determination of these parameters is important for both phenomenological and theoretical applications. The charm and bottom masses, for instance, enter the theoretical expressions of several cross sections and decay rates in heavy-quark expansions. The up-, down- and strange-quark masses govern the amount of explicit chiral symmetry breaking in QCD. From a theoretical point of view, the values of quark masses provide information about the flavour structure of physics beyond the Standard Model. The Review of Particle Physics of the Particle Data Group contains a review of quark masses [17], which covers light as well as heavy flavours. The present summary only deals with the light-quark masses (those of the up, down and strange quarks), but it discusses the lattice results for these in more detail.

Quark masses cannot be measured directly with experiment because quarks cannot be isolated, as they are confined inside hadrons. On the other hand, quark masses are free parameters of the theory and, as such, cannot be obtained on the basis of purely theoretical considerations. Their values can only be determined by comparing the theoretical prediction for an observable, which depends on the quark mass of interest, with the corresponding experimental value. What makes light-quark masses particularly difficult to determine is the fact that they are very small (for the up and down) or small (for the strange) compared to typical hadronic scales. Thus, their impact on typical hadronic observables is minute and it is difficult to isolate their contribution accurately.

Fortunately, the spontaneous breaking of SU(3)\(_L\otimes \)SU(3)\(_R\) chiral symmetry provides observables which are particularly sensitive to the light-quark masses: the masses of the resulting Nambu–Goldstone bosons (NGB), i.e. pions, kaons and etas. Indeed, the Gell-Mann–Oakes–Renner relation [18] predicts that the squared mass of a NGB is directly proportional to the sum of the masses of the quark and antiquark which compose it, up to higher-order mass corrections. Moreover, because these NGBs are light and are composed of only two valence particles, their masses have a particularly clean statistical signal in lattice-QCD calculations. In addition, the experimental uncertainties on these meson masses are negligible.
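For orientation, at leading order of the chiral expansion and in the absence of electromagnetic effects, the proportionality just described takes the familiar form shown below. The constant \(B\), which is proportional to the quark condensate, is notation introduced here for illustration only and is not defined in the text above:

$$\begin{aligned} M_{\pi ^+}^2 = B\,(m_{u}+m_{d}), \qquad M_{K^+}^2 = B\,(m_{u}+m_{s}), \qquad M_{K^0}^2 = B\,(m_{d}+m_{s}). \end{aligned}$$

At this order the unknown constant drops out of ratios, so that, for instance, \(m_{s}/m_{ud} = (M_{K^+}^2+M_{K^0}^2-M_{\pi ^+}^2)/M_{\pi ^+}^2\); higher-order corrections and the e.m. self-energies modify these relations.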

Three-flavour QCD has four free parameters: the strong coupling, \(\alpha _\mathrm{s}\) (alternatively \(\Lambda _\mathrm{QCD}\)) and the up, down and strange quark masses, \(m_{u}\), \(m_{d}\) and \(m_{s}\). However, present-day lattice calculations are often performed in the isospin limit, and the up and down quark masses (especially those in the sea) usually get replaced by a single parameter: the isospin-averaged up- and down-quark mass, \(m_{ud}=\frac{1}{2}(m_{u}+m_{d})\). A lattice determination of these parameters requires two steps:

1. Calculations of three experimentally measurable quantities are used to fix the three bare parameters. As already discussed, NGB masses are particularly appropriate for fixing the light-quark masses. Another observable, such as the mass of a member of the baryon octet, can be used to fix the overall scale. It is important to note that until recently, most calculations were performed at values of \(m_{ud}\) which were still substantially larger than its physical value, typically four times as large. Reaching the physical up- and down-quark mass point required a significant extrapolation. This situation is changing fast. The PACS-CS [19–21] and BMW [22, 23] calculations were performed with masses all the way down to their physical value (and even below in the case of BMW), albeit in very small volumes for PACS-CS. More recently, MILC [24] and RBC/UKQCD [25] have also extended their simulations almost down to the physical point, by considering pions with \(M_\pi \gtrsim 170\,\mathrm{MeV}\).Footnote 5 Regarding the strange quark, modern simulations can easily include it with masses that bracket its physical value, so that only interpolations are needed.

2. Renormalisations of these bare parameters must be performed to relate them to the corresponding cutoff-independent, renormalised parameters.Footnote 6 These are short-distance calculations, which may be performed perturbatively. Experience shows that one-loop calculations are unreliable for the renormalisation of quark masses: usually at least two loops are required to have trustworthy results. Therefore, it is best to perform the renormalisations non-perturbatively to avoid potentially large perturbative uncertainties due to neglected higher-order terms. However, we will include in our averages one-loop results which carry a solid estimate of the systematic uncertainty due to the truncation of the series.

Of course, in quark mass ratios the renormalisation factor cancels, so that this second step is no longer relevant.

3.1 Contributions from the electromagnetic interaction

As mentioned in Sect. 2.1, the present review relies on the hypothesis that, at low energies, the Lagrangian \(\mathcal{L}_{\mathrm{QCD}}+\mathcal{L}_{\mathrm{QED}}\) describes nature to a high degree of precision. Moreover, we assume that, at the accuracy reached by now and for the quantities discussed here, the difference between the results obtained from simulations with three dynamical flavours and full QCD is small in comparison with the quoted systematic uncertainties. This will soon no longer be the case. The electromagnetic (e.m.) interaction, on the other hand, cannot be ignored. Quite generally, when comparing QCD calculations with experiment, radiative corrections need to be applied. In lattice simulations, where the QCD parameters are fixed in terms of the masses of some of the hadrons, the electromagnetic contributions to these masses must be accounted for.Footnote 7

The electromagnetic interaction plays a crucial role in determinations of the ratio \(m_{u}/m_{d}\), because the isospin-breaking effects generated by this interaction are comparable to those from \(m_{u}\ne m_{d}\) (see Sect. 3.4). In determinations of the ratio \(m_{s}/m_{ud}\), the electromagnetic interaction is less important, but at the accuracy reached, it cannot be neglected. The reason is that, in the determination of this ratio, the pion mass enters as an input parameter. Because \(M_\pi \) represents a small symmetry-breaking effect, it is rather sensitive to the perturbations generated by QED.

We distinguish the physical mass \(M_P\), \(P\in \{\pi ^+, \pi ^0, K^+, K^0\}\), from the mass \(\hat{M}_P\) within QCD alone. The e.m. self-energy is the difference between the two, \(M_P^\gamma \equiv M_P-\hat{M}_P\). Because the self-energy of the Nambu–Goldstone bosons diverges in the chiral limit, it is convenient to replace it by the contribution of the e.m. interaction to the square of the mass,

The main effect of the e.m. interaction is an increase in the mass of the charged particles, generated by the photon cloud that surrounds them. The self-energies of the neutral ones are comparatively small, particularly for the Nambu–Goldstone bosons, which do not have a magnetic moment. Dashen’s theorem [31] confirms this picture, as it states that, to leading order (LO) of the chiral expansion, the self-energies of the neutral NGBs vanish, while the charged ones obey \(\Delta _{K^+}^\gamma = \Delta _{\pi ^+}^\gamma \). It is convenient to express the self-energies of the neutral particles as well as the mass difference between the charged and neutral pions within QCD in units of the observed mass difference, \(\Delta _\pi \equiv M_{\pi ^+}^2-M_{\pi ^0}^2\):

In this notation, the self-energies of the charged particles are given by

where the dimensionless coefficient \(\epsilon \) parameterises the violation of Dashen’s theorem,Footnote 8

Any determination of the light-quark masses based on a calculation of the masses of \(\pi ^+,K^+\) and \(K^0\) within QCD requires an estimate for the coefficients \(\epsilon \), \(\epsilon _{\pi ^0}\), \(\epsilon _{K^0}\) and \(\epsilon _{m}\).
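For orientation, the definitions described in this subsection can be collected in one place. The block below is a reconstruction from the surrounding prose, not a copy of the original display equations: the symbol \(\Delta _P^\gamma \) for the e.m. contribution to the squared mass is inferred from its use in the statement of Dashen's theorem, and the precise form of each line should be read as an assumption that is consistent with the text.

$$\begin{aligned} \Delta _P^\gamma&\equiv M_P^2-\hat{M}_P^2, \\ \Delta _{\pi ^0}^\gamma&\equiv \epsilon _{\pi ^0}\,\Delta _\pi , \qquad \Delta _{K^0}^\gamma \equiv \epsilon _{K^0}\,\Delta _\pi , \qquad \hat{M}_{\pi ^+}^2-\hat{M}_{\pi ^0}^2 \equiv \epsilon _{m}\,\Delta _\pi , \\ \Delta _{K^+}^\gamma -\Delta _{K^0}^\gamma -\Delta _{\pi ^+}^\gamma +\Delta _{\pi ^0}^\gamma&\equiv \epsilon \,\Delta _\pi , \\ \Delta _{\pi ^+}^\gamma&= (1+\epsilon _{\pi ^0}-\epsilon _{m})\,\Delta _\pi , \qquad \Delta _{K^+}^\gamma = (1+\epsilon +\epsilon _{K^0}-\epsilon _{m})\,\Delta _\pi . \end{aligned}$$

The last line follows from the others: writing \(\Delta _\pi = (\hat{M}_{\pi ^+}^2-\hat{M}_{\pi ^0}^2)+\Delta _{\pi ^+}^\gamma -\Delta _{\pi ^0}^\gamma \) fixes \(\Delta _{\pi ^+}^\gamma \), and inserting this result into the definition of \(\epsilon \) gives \(\Delta _{K^+}^\gamma \). With \(\epsilon _{\pi ^0}=\epsilon _{K^0}=\epsilon =0\) the charged self-energies coincide and the neutral ones vanish, as required by Dashen's theorem.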

The first determination of the self-energies on the lattice was carried out by Duncan et al. [33]. Using the quenched approximation, they arrived at \(M_{K^+}^\gamma -M_{K^0}^\gamma = 1.9\,\hbox {MeV}\). Actually, the parameterisation of the masses given in that paper yields an estimate for all but one of the coefficients introduced above (since the mass splitting between the charged and neutral pions in QCD is neglected, the parameterisation amounts to setting \(\epsilon _{m}=0\) ab initio). Evaluating the differences between the masses obtained at the physical value of the electromagnetic coupling constant and at \(e=0\), we obtain \(\epsilon = 0.50(8)\), \(\epsilon _{\pi ^0} = 0.034(5)\) and \(\epsilon _{K^0} = 0.23(3)\). The errors quoted are statistical only: an estimate of lattice systematic errors is not possible from the limited results of Duncan et al. [33]. The result for \(\epsilon \) indicates that the violation of Dashen’s theorem is sizeable: according to this calculation, the non-leading contributions to the self-energy difference of the kaons amount to 50 % of the leading term. The result for the self-energy of the neutral pion cannot be taken at face value, because it is small, comparable to the neglected mass difference \(\hat{M}_{\pi ^+}-\hat{M}_{\pi ^0}\). To illustrate this, we note that the numbers quoted above are obtained by matching the parameterisation with the physical masses for \(\pi ^0\), \(K^+\) and \(K^0\). This gives a mass for the charged pion that is too high by 0.32 MeV. Tuning the parameters instead such that \(M_{\pi ^+}\) comes out correctly, the result for the self-energy of the neutral pion becomes larger: \(\epsilon _{\pi ^0}=0.10(7)\) where, again, the error is statistical only.

In an update of this calculation by the RBC collaboration [34] (RBC 07), the electromagnetic interaction is still treated in the quenched approximation, but the strong interaction is simulated with \(N_\mathrm{f}=2\) dynamical quark flavours. The quark masses are fixed with the physical masses of \(\pi ^0\), \(K^+\) and \(K^0\). The outcome for the difference in the electromagnetic self-energy of the kaons reads \(M_{K^+}^\gamma -M_{K^0}^\gamma = 1.443(55)\,\hbox {MeV}\). This corresponds to a remarkably small violation of Dashen’s theorem. Indeed, a recent extension of this work to \(N_\mathrm{f}=2+1\) dynamical flavours [32] leads to a significantly larger self-energy difference: \(M_{K^+}^\gamma -M_{K^0}^\gamma = 1.87(10)\,\hbox {MeV}\), in good agreement with the estimate of Duncan et al. [33]. Expressed in terms of the coefficient \(\epsilon \) that measures the size of the violation of Dashen’s theorem, it corresponds to \(\epsilon =0.5(1)\).

The input for the electromagnetic corrections used by MILC is specified in [35]. In their analysis of the lattice data, \(\epsilon _{\pi ^0}\), \(\epsilon _{K^0}\) and \(\epsilon _{m}\) are set equal to zero. For the remaining coefficient, which plays a crucial role in determinations of the ratio \(m_{u}/m_{d}\), the very conservative range \(\epsilon =1\pm 1\) was used in MILC 04 [36], while in more recent work, in particular in MILC 09 [15] and MILC 09A [37], this input is replaced by \(\epsilon =1.2\pm 0.5\), as suggested by phenomenological estimates for the corrections to Dashen’s theorem [38, 39]. Results of an evaluation of the electromagnetic self-energies based on \(N_\mathrm{f}=2+1\) dynamical quarks in the QCD sector and on the quenched approximation in the QED sector are also reported by MILC [40–42]. Their preliminary result is \(\bar{\epsilon }=0.65(7)(14)(10)\), where the first error is statistical, the second systematic, and the third a separate systematic for the combined chiral and continuum extrapolation. The estimate of the systematic error does not yet include finite-volume effects. With the estimate for \(\epsilon _{m}\) given in (9), this result corresponds to \(\epsilon = 0.62(7)(14)(10)\). Similar preliminary results were previously reported by the BMW collaboration in conference proceedings [43, 44].

The RM123 collaboration employs a new technique to compute e.m. shifts in hadron masses in two-flavour QCD: the effects are included at leading order in the electromagnetic coupling \(\alpha \) through simple insertions of the fundamental electromagnetic interaction in quark lines of relevant Feynman graphs [45]. They find \(\epsilon =0.79(18)(18)\) where the first error is statistical and the second is the total systematic error resulting from chiral, finite-volume, discretisation, quenching and fitting errors all added in quadrature.

The effective Lagrangian that governs the self-energies to next-to-leading order (NLO) of the chiral expansion was set up in [46]. The estimates in [38, 39] are obtained by replacing QCD with a model, matching this model with the effective theory and assuming that the effective coupling constants obtained in this way represent a decent approximation to those of QCD. For alternative model estimates and a detailed discussion of the problems encountered in models based on saturation by resonances, see [47–49]. In the present review of the information obtained on the lattice, we avoid the use of models altogether.

There is an indirect phenomenological determination of \(\epsilon \), which is based on the decay \(\eta \rightarrow 3\pi \) and does not rely on models. The result for the quark mass ratio \(Q\), defined in (24) and obtained from a dispersive analysis of this decay, implies \(\epsilon = 0.70(28)\) (see Sect. 3.4). While the values found in older lattice calculations [32–34] are a little less than one standard deviation lower, the most recent determinations [40–45, 50], though still preliminary, are in excellent agreement with this result and have significantly smaller error bars. However, even in the more recent calculations, e.m. effects are treated in the quenched approximation. Thus, we choose to quote \(\epsilon = 0.7(3)\), which is essentially the \(\eta \rightarrow 3\pi \) result and covers generously the range of post 2010 lattice results. Note that this value has an uncertainty which is reduced by about 40 % compared to the result quoted in the first edition of the FLAG review [1].

We add a few comments concerning the physics of the self-energies and then specify the estimates used as an input in our analysis of the data. The Cottingham formula [51] represents the self-energy of a particle as an integral over electron scattering cross sections; elastic as well as inelastic reactions contribute. For the charged pion, the term due to elastic scattering, which involves the square of the e.m. form factor, makes a substantial contribution. In the case of the \(\pi ^0\), this term is absent, because the form factor vanishes on account of charge conjugation invariance. Indeed, the contribution from the form factor to the self-energy of the \(\pi ^+\) roughly reproduces the observed mass difference between the two particles. Furthermore, the numbers given in [52–54] indicate that the inelastic contributions are significantly smaller than the elastic contributions to the self-energy of the \(\pi ^+\). The low energy theorem of Das et al. [55] ensures that, in the limit \(m_{u},m_{d}\rightarrow 0\), the e.m. self-energy of the \(\pi ^0\) vanishes, while the one of the \(\pi ^+\) is given by an integral over the difference between the vector and axial-vector spectral functions. The estimates for \(\epsilon _{\pi ^0}\) obtained in [33] are consistent with the suppression of the self-energy of the \(\pi ^0\) implied by chiral SU(2) \(\times \) SU(2). In our opinion, \(\epsilon _{\pi ^0}=0.07(7)\) is a conservative estimate for this coefficient. The self-energy of the \(K^0\) is suppressed less strongly, because it remains different from zero if \(m_{u}\) and \(m_{d}\) are taken massless and only disappears if \(m_{s}\) is turned off as well. Note also that, since the e.m. form factor of the \(K^0\) is different from zero, the self-energy of the \(K^0\) does pick up an elastic contribution. The lattice result for \(\epsilon _{K^0}\) indicates that the violation of Dashen’s theorem is smaller than in the case of \(\epsilon \). 
In the following, we use \(\epsilon _{K^0}=0.3(3)\).

Finally, we consider the mass splitting between the charged and neutral pions in QCD. This effect is known to be very small, because it is of second order in \(m_{u}-m_{d}\). There is a parameter-free prediction, which expresses the difference \(\hat{M}_{\pi ^+}^2-\hat{M}_{\pi ^0}^2\) in terms of the physical masses of the pseudoscalar octet and is valid to NLO of the chiral perturbation series. Numerically, the relation yields \(\epsilon _{m}=0.04\) [56], indicating that this contribution does not play a significant role at the present level of accuracy. We attach a conservative error also to this coefficient: \(\epsilon _{m}=0.04(2)\). The lattice result for the self-energy difference of the pions, reported in [32], \(M_{\pi ^+}^\gamma -M_{\pi ^0}^\gamma = 4.50(23)\,\hbox {MeV}\), agrees with this estimate: expressed in terms of the coefficient \(\epsilon _{m}\) that measures the pion mass splitting in QCD, the result corresponds to \(\epsilon _{m}=0.04(5)\). The corrections of next-to-next-to-leading order (NNLO) have been worked out [57], but the numerical evaluation of the formulae again meets with the problem that the relevant effective coupling constants are not reliably known.

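In summary, we use the following estimates for the e.m. corrections:

$$\begin{aligned} \epsilon = 0.7(3), \qquad \epsilon _{\pi ^0} = 0.07(7), \qquad \epsilon _{K^0} = 0.3(3), \qquad \epsilon _{m} = 0.04(2). \end{aligned}$$ (9)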

While the range used for the coefficient \(\epsilon \) affects our analysis in a significant way, the numerical values of the other coefficients only serve to set the scale of these contributions. The range given for \(\epsilon _{\pi ^0}\) and \(\epsilon _{K^0}\) may be overly generous, but because of the exploratory nature of the lattice determinations, we consider it advisable to use a conservative estimate.

Treating the uncertainties in the four coefficients as statistically independent and adding errors in quadrature, the numbers in equation (9) yield the following estimates for the e.m. self-energies,

and for the pion and kaon masses occurring in the QCD sector of the Standard Model,

The self-energy difference between the charged and neutral pion involves the same coefficient \(\epsilon _{m}\) that describes the mass difference in QCD—this is why the estimate for \( M_{\pi ^+}^\gamma -M_{\pi ^0}^\gamma \) is so sharp.

3.2 Pion and kaon masses in the isospin limit

As mentioned above, most of the lattice calculations concerning the properties of the light mesons are performed in the isospin limit of QCD (\(m_{u}-m_{d}\rightarrow 0\) at fixed \(m_{u}+m_{d}\)). We denote the pion and kaon masses in that limit by \(\overline{M}_{\pi }\) and \(\overline{M}_{K}\), respectively. Their numerical values can be estimated as follows. Since the operation \(u\leftrightarrow d\) interchanges \(\pi ^+\) with \(\pi ^-\) and \(K^+\) with \(K^0\), the expansion of the quantities \(\hat{M}_{\pi ^+}^2\) and \(\frac{1}{2}(\hat{M}_{K^+}^2+\hat{M}_{K^0}^2)\) in powers of \(m_{u}-m_{d}\) only contains even powers. As shown in [58], the effects generated by \(m_{u}-m_{d}\) in the mass of the charged pion are strongly suppressed: the difference \(\hat{M}_{\pi ^+}^2-\overline{M}_{\pi }^{\,2}\) represents a quantity of \(O[(m_{u}-m_{d})^2(m_{u}+m_{d})]\) and is therefore small compared to the difference \(\hat{M}_{\pi ^+}^2-\hat{M}_{\pi ^0}^2\), for which an estimate was given above. In the case of \(\frac{1}{2}(\hat{M}_{K^+}^2+\hat{M}_{K^0}^2)-\overline{M}_{K}^{\,2}\), the expansion does contain a contribution at NLO, determined by the combination \(2L_8-L_5\) of low-energy constants, but the lattice results for that combination show that this contribution is very small, too. Numerically, the effects generated by \(m_{u}-m_{d}\) in \(\hat{M}_{\pi ^+}^2\) and in \(\frac{1}{2}(\hat{M}_{K^+}^2+\hat{M}_{K^0}^2)\) are negligible compared to the uncertainties in the electromagnetic self-energies. The estimates for these given in Eq. (11) thus imply

This shows that, for the convention used above to specify the QCD sector of the Standard Model, and within the accuracy to which this convention can currently be implemented, the mass of the pion in the isospin limit agrees with the physical mass of the neutral pion: \(\overline{M}_{\pi }-M_{\pi ^0}=-0.2(3)\) MeV.

3.3 Lattice determination of \(m_{s}\) and \(m_{ud}\)

We now turn to a review of the lattice calculations of the light-quark masses and begin with \(m_{s}\), the isospin-averaged up- and down-quark mass, \(m_{ud}\), and their ratio. Most groups quote only \(m_{ud}\), not the individual up- and down-quark masses. We then discuss the ratio \(m_{u}/m_{d}\) and the individual determination of \(m_{u}\) and \(m_{d}\).

Quark masses have been calculated on the lattice since the mid-1990s. However, early calculations were performed in the quenched approximation, leading to unquantifiable systematics. Thus in the following, we only review modern, unquenched calculations, which include the effects of light sea-quarks.

Tables 2 and 3 list the results of \(N_\mathrm{f}=2\) and \(N_\mathrm{f}=2+1\) lattice calculations of \(m_{s}\) and \(m_{ud}\). These results are given in the \({\overline{\mathrm{MS}}}\) scheme at \(2\,\mathrm{GeV}\), which is standard nowadays, though some groups are starting to quote results at higher scales (e.g. [25]). The tables also show the colour-coding of the calculations leading to these results. The corresponding results for \(m_{s}/m_{ud}\) are given in Table 4. As indicated earlier in this review, we treat \(N_\mathrm{f}=2\) and \(N_\mathrm{f}=2+1\) calculations separately. The latter include the effects of a strange sea-quark, but the former do not.

3.3.1 \(N_\mathrm{f}=2\) lattice calculations

We begin with \(N_\mathrm{f}=2\) calculations. A quick inspection of Table 2 indicates that only the most recent calculations, ALPHA 12 [59] and ETM 10B [60], control all systematic effects—the special case of Dürr 11 [61] is discussed below. Only ALPHA 12 [59], ETM 10B [60] and ETM 07 [62] really enter the chiral regime, with pion masses down to about 270 MeV for ALPHA and ETM. Because this pion mass is still quite far from the physical pion mass, ALPHA 12 refrain from determining \(m_{ud}\) and give only \(m_{s}\). All the other calculations have significantly more massive pions, the lightest being about 430 MeV, in the calculation by CP-PACS 01 [63]. Moreover, the latter calculation is performed on very coarse lattices, with lattice spacings \(a\ge 0.11\,\,{\mathrm {fm}}\) and only one-loop perturbation theory is used to renormalise the results.

ETM 10B’s [60] calculation of \(m_{ud}\) and \(m_{s}\) is an update of the earlier twisted-mass determination of ETM 07 [62]. In particular, they have added ensembles with a larger volume and three new lattice spacings, \(a = 0.054, 0.067\) and \(0.098\,\,{\mathrm {fm}}\), allowing for a continuum extrapolation. In addition, they present analyses performed in SU(2) and \(\hbox {SU}(3) \chi \)PT.

The new ALPHA 12 [59] calculation of \(m_{s}\) is an update of ALPHA 05 [64], which pushes computations to finer lattices and much lighter pion masses. Importantly, it also includes a determination of the lattice spacing with the decay constant \(F_K\), whereas ALPHA 05 converted results to physical units using the scale parameter \(r_0\) [65], defined via the force between static quarks. In particular, the conversion relied on measurements of \(r_0/a\) by QCDSF/UKQCD 04 [66] which differ significantly from the new determination by ALPHA 12. As in ALPHA 05, in ALPHA 12 both non-perturbative running and non-perturbative renormalisation are performed in a controlled fashion, using Schrödinger functional methods.

The conclusion of our analysis of \(N_\mathrm{f}=2\) calculations is that the results of ALPHA 12 [59] and ETM 10B [60] (which update and extend ALPHA 05 [64] and ETM 07 [62], respectively) are the only ones to date which satisfy our selection criteria. Thus we average those two results for \(m_{s}\), obtaining 101(3) MeV. Regarding \(m_{ud}\), for which only ETM 10B [60] gives a value, we do not offer an average but simply quote ETM’s number. Because ALPHA’s result raises our earlier average for \(m_{s}\) [1] by 7 %, while \(m_{ud}\) remains unchanged, our average for \(m_{s}/m_{ud}\) also increases by 7 %. For the latter, however, we retain the percent error quoted by ETM, who directly estimate this ratio, and add it in quadrature to the percent error on ALPHA’s \(m_{s}\). Thus, we quote as our estimates:

The errors on these results are 3, 6 and 4 %, respectively. The error is smaller in the ratio than one would get from combining the errors on \(m_{ud}\) and \(m_{s}\), because statistical and systematic errors cancel in ETM’s result for this ratio, most notably those associated with renormalisation and the setting of the scale. It is worth noting that thanks to ALPHA 12 [59], the total error on \(m_{s}\) has been reduced significantly, from 7 % in the last edition of our report to 3 % now. It is also interesting to remark that ALPHA 12’s [59] central value for \(m_{s}\) is about 1 \(\sigma \) larger than that of ETM 10B [60] and nearly 2 \(\sigma \) larger than our present \(N_\mathrm{f}=2+1\) determination given in (14). Moreover, this larger value for \(m_{s}\) increases our \(N_\mathrm{f}=2\) determination of \(m_{s}/m_{ud}\), making it larger than ETM 10B’s direct measurement, though compatible within errors.
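The treatment of the \(N_\mathrm{f}=2\) ratio \(m_{s}/m_{ud}\) described above can be restated compactly. This is only a sketch of the procedure as worded in the text; the shorthand \(r_{\mathrm{ETM}}\) (ETM’s percent error on the directly estimated ratio) and \(r_{\mathrm{ALPHA}}\) (the percent error on ALPHA’s \(m_{s}\)) is introduced here for illustration and is not notation from the cited works. The central value is rescaled by the 7 % shift in \(m_{s}\) relative to [1], and the two relative errors are added in quadrature:

\[
\left.\frac{m_{s}}{m_{ud}}\right|_{\mathrm{new}} \simeq 1.07\,\left.\frac{m_{s}}{m_{ud}}\right|_{[1]},\qquad
\frac{\delta (m_{s}/m_{ud})}{m_{s}/m_{ud}} = \sqrt{r_{\mathrm{ETM}}^{2}+r_{\mathrm{ALPHA}}^{2}} .
\]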

We have not yet discussed the precise results of Dürr 11 [61], which satisfy our selection criteria. This is because Dürr 11 pursue an approach which is sufficiently different from that of other calculations that we prefer not to include it in an average at this stage. Following HPQCD 09A, 10 [72, 73], the observable which they actually compute is \(m_{c}/m_{s}=11.27(30)(26)\), with an accuracy of 3.5 %. This result is about 1.5 combined standard deviations below ETM 10B’s [60] result \(m_{c}/m_{s}=12.0(3)\). The strange-quark mass \(m_{s}\) is subsequently obtained using lattice and phenomenological determinations of \(m_{c}\) which rely on perturbation theory. The value of the charm-quark mass which they use is an average of those determinations, which they estimate to be \(m_{c}(2\,\mathrm{GeV})=1.093(13)\,\mathrm{GeV}\), with a 1.2 % total uncertainty. Note that this value is consistent with the PDG average \(m_{c}(2\,\mathrm{GeV})=1.094(21)\,\mathrm{GeV}\) [74], though the latter has a larger 2.0 % uncertainty. Dürr 11’s value of \(m_{c}\) leads to \(m_{s}=97.0(2.6)(2.5)\,\mathrm{MeV}\) given in Table 2, which has a total error of 3.7 %. The use of the PDG value for \(m_{c}\) [74] would lead to a very similar result. The result for \(m_{s}\) is perfectly compatible with our estimate given in (13) and has a comparable error bar. To determine \(m_{ud}\), Dürr 11 combine their result for \(m_{s}\) with the \(N_\mathrm{f}=2+1\) calculation of \(m_{s}/m_{ud}\) of BMW 10A, 10B [22, 23] discussed below. They obtain \(m_{ud}=3.52(10)(9)\,\mathrm{MeV}\) with a total uncertainty of less than 4 %, which is again fully consistent with our estimate of (13) and its uncertainty.
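The percentages and the 1.5 standard-deviation tension quoted above for Dürr 11 can be cross-checked with a few lines of arithmetic. The following is a minimal sketch, assuming the two uncertainties quoted for each quantity are independent and can be combined in quadrature; the helper names `quadrature` and `relative_error_percent` are hypothetical and chosen only for this illustration.

```python
import math


def quadrature(*errors):
    """Combine independent uncertainties in quadrature."""
    return math.sqrt(sum(e * e for e in errors))


def relative_error_percent(value, *errors):
    """Total relative uncertainty in percent, errors combined in quadrature."""
    return 100.0 * quadrature(*errors) / value


# Numbers quoted in the text for Duerr 11:
# (central value, first quoted uncertainty, second quoted uncertainty)
mc_over_ms = (11.27, 0.30, 0.26)
ms_mev = (97.0, 2.6, 2.5)
mud_mev = (3.52, 0.10, 0.09)

rel_mc_over_ms = relative_error_percent(*mc_over_ms)  # text quotes 3.5 %
rel_ms = relative_error_percent(*ms_mev)              # text quotes 3.7 %
rel_mud = relative_error_percent(*mud_mev)            # text quotes "less than 4 %"

# Difference to the ETM 10B value m_c/m_s = 12.0(3), in units of the
# combined standard deviation (assumption: the two results are uncorrelated).
etm_value, etm_error = 12.0, 0.3
combined_sigma = quadrature(mc_over_ms[1], mc_over_ms[2], etm_error)
tension = (etm_value - mc_over_ms[0]) / combined_sigma  # text quotes about 1.5

print(f"m_c/m_s: {rel_mc_over_ms:.1f} %   m_s: {rel_ms:.1f} %   m_ud: {rel_mud:.1f} %")
print(f"tension with ETM 10B: {tension:.1f} sigma")
```

Under these assumptions the script reproduces the rounded figures given in the text; it says nothing about correlations between the two calculations, which are ignored here.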

3.3.2 \(N_\mathrm{f}=2+1\) lattice calculations

We turn now to \(N_\mathrm{f}=2+1\) calculations. These and the corresponding results are summarised in Tables 3 and 4. Somewhat paradoxically, these calculations are more mature than those with \(N_\mathrm{f}=2\). This is thanks, in large part, to the head start and sustained effort of MILC, who have been performing \(N_\mathrm{f}=2+1\) rooted staggered fermion calculations for the past ten or so years. They have covered an impressive range of parameter space, with lattice spacings which, today, go down to 0.045 fm and valence pion masses down to approximately 180 MeV [37]. The most recent updates, MILC 10A [75] and MILC 09A [37], include significantly more data and use two-loop renormalisation. Since these data sets subsume those of their previous calculations, these latest results are the only ones that need to be kept in any world average.

Since our last report [1] the situation for \(N_\mathrm{f}=2+1\) determinations of the light-quark masses has undergone some evolution. There are new computations by RBC/UKQCD 12 [25], PACS-CS 12 [76] and Laiho 11 [77]. Furthermore, the results of BMW 10A, 10B [22, 23] have been published and can now be included in our averages.

The RBC/UKQCD 12 [25] computation improves on that of RBC/UKQCD 10A [78] in a number of ways. In particular it involves a new simulation performed at a rather coarse lattice spacing of 0.144 fm, but with unitary pion masses down to 171(1) MeV and valence pion masses down to 143(1) MeV in a volume of \((4.6\,\,{\mathrm {fm}})^3\), compared, respectively, to 290 MeV, 225 MeV and \((2.7\,\,{\mathrm {fm}})^3\) in RBC/UKQCD 10A. This provides them with a significantly better control over the extrapolation to physical \(M_\pi \) and to the infinite-volume limit. As before, they perform non-perturbative renormalisation and running in RI/SMOM schemes. The only weaker point of the calculation comes from the fact that two of their three lattice spacings are larger than 0.1 fm and correspond to different discretisations, while the finest is only 0.085 fm, making it difficult to convincingly claim full control over the continuum limit. This is mitigated by the fact that the scaling violations which they observe on their coarsest lattice are for many quantities small, around 5 %.
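To give a feel for the pion masses and box sizes just quoted, one can form the dimensionless product \(M_\pi L\), a commonly used rough indicator of finite-volume effects. The sketch below is an illustration only: it uses the numbers quoted in the preceding paragraph together with the conversion constant \(\hbar c \simeq 197.327\) MeV fm, and the function name `m_pi_L` is hypothetical. It is not a statement of the criteria applied in this review or of the analysis performed by RBC/UKQCD.

```python
HBAR_C_MEV_FM = 197.327  # conversion constant hbar*c in MeV fm


def m_pi_L(pion_mass_mev, box_length_fm):
    """Dimensionless product M_pi * L for a pion mass in MeV and box size in fm."""
    return pion_mass_mev * box_length_fm / HBAR_C_MEV_FM


# Lightest pion masses and spatial box sizes as quoted in the text.
rbc12_unitary = m_pi_L(171.0, 4.6)   # RBC/UKQCD 12, lightest unitary pion
rbc12_valence = m_pi_L(143.0, 4.6)   # RBC/UKQCD 12, lightest valence pion
rbc10a_unitary = m_pi_L(290.0, 2.7)  # RBC/UKQCD 10A, lightest unitary pion
rbc10a_valence = m_pi_L(225.0, 2.7)  # RBC/UKQCD 10A, lightest valence pion

for label, value in [
    ("RBC/UKQCD 12  unitary", rbc12_unitary),
    ("RBC/UKQCD 12  valence", rbc12_valence),
    ("RBC/UKQCD 10A unitary", rbc10a_unitary),
    ("RBC/UKQCD 10A valence", rbc10a_valence),
]:
    print(f"{label}: M_pi L = {value:.2f}")
```

With these inputs the lightest unitary points of the two calculations sit at nearly the same \(M_\pi L\), so the larger box is what allows the pion mass to be lowered without shrinking this product.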

The Laiho 11 results [77] are based on MILC staggered ensembles at the lattice spacings 0.15, 0.09 and 0.06 fm, on which they propagate domain-wall quarks. Moreover, they work in volumes of up to \((4.8\,\,{\mathrm {fm}})^3\). These features give them full control over the continuum and infinite-volume extrapolations. Their lightest RMS sea pion mass is 280 MeV and their valence pions have masses down to 210 MeV. The fact that their sea pions do not enter deeply into the chiral regime somewhat penalises their extrapolation to physical \(M_\pi \). Moreover, to renormalise the quark masses, they use one-loop perturbation theory for \(Z_A/Z_S-1\) which they combine with \(Z_A\) determined non-perturbatively from the axial-vector Ward identity. Although they conservatively estimate the uncertainty associated with the procedure to be 5 %, which is the size of their largest one-loop correction, this represents a weaker point of this calculation.