Abstract

In this review we present the results of recent \(\beta \)-decay studies using the total absorption technique that cover topics of interest for applications, nuclear structure and astrophysics. The decays studied were selected primarily because they have a large impact on the prediction of (a) the decay heat in reactors, important for the safety of present and future reactors and (b) the reactor electron anti-neutrino spectrum, of interest for particle/nuclear physics and reactor monitoring. For these studies the total absorption technique was chosen, since it is the only method that allows one to obtain \(\beta \)-decay probabilities free from a systematic error called the Pandemonium effect. The total absorption technique is based on the detection of the \(\gamma \) cascades that follow the initial \(\beta \) decay. For this reason the technique requires the use of calorimeters with very high \(\gamma \) detection efficiency. The measurements presented and discussed here were performed mainly at the IGISOL facility of the University of Jyväskylä (Finland) using isotopically pure beams provided by the JYFLTRAP Penning trap. Examples are presented to show that the results of our measurements on selected nuclei have had a large impact on predictions of both the decay heat and the anti-neutrino spectrum from reactors. Some of the cases involve \(\beta \)-delayed neutron emission, so one can study the competition between \(\gamma \)- and neutron-emission from states above the neutron separation energy. The \(\gamma \)-to-neutron emission ratios can be used to constrain neutron capture (n,\(\gamma \)) cross sections for unstable nuclei of interest in astrophysics. The information obtained from the measurements can also be used to test nuclear model predictions of half-lives and \(P_n\) values for decays of interest in astrophysical network calculations. These comparisons also provide insights into aspects of nuclear structure in particular regions of the nuclear chart.

1 Introduction

Our knowledge of the properties of atomic nuclei is derived almost entirely from studies of nuclear reactions and radioactive decays. The ground and excited states of nuclei exhibit many forms of decay but the most common are \(\alpha \), \(\beta \) and \(\gamma \)-ray emission. Our focus here is on \(\beta \) decay in its various manifestations. A glance at the Segrè Chart reveals that it is the most common way for the ground states of nuclei to decay and it is frequently the observation of such \(\beta \) decays that brings us our first knowledge of a particular nuclear species and its properties.

The study of \(\beta \) decay is intrinsically much more difficult than the study of either \(\alpha \) or \(\gamma \) decay. The reason for this is straightforward. Alpha particles and \(\gamma \)-rays are emitted with discrete energies determined by the differences in energy between the initial and final states involved. Thus characteristic \(\alpha \) and \(\gamma \)-ray spectra exhibit a series of discrete lines. It requires sophisticated detection and analysis techniques to determine the excitation energies of the states involved, their lifetimes and the transition rates between states. Beta decay carries the same information, but the difficulties of measurement and interpretation are compounded because the spectrum is continuous, not discrete. In 1930 this was explained by Pauli’s hypothesis [1] of the existence of a neutral, zero mass particle called in his letter the neutron that is emitted with the \(\beta \) particle. The sharing of momentum and energy then explains the continuous spectrum. Shortly afterwards Fermi [2] was able to formulate a theory of \(\beta \) decay based on this idea and coined the name neutrino (little neutral one) for the particle.

A knowledge of \(\beta \)-decay transition probabilities is of particular importance for application to (a) tests of nuclear model calculations, (b) the radioactive decay heat in reactors, (c) the reactor electron anti-neutrino spectrum and (d) reaction network calculations for nucleosynthesis in explosive stellar events. In this article we will provide examples of our recent studies of \(\beta \) decays that involve the use of total absorption gamma spectroscopy (TAGS) to tackle the topics listed above. The TAGS method was adopted in our measurements because it overcomes the difficulties inherent in the conventional use of Ge detector arrays for this purpose. Such arrays are an important and essential tool for constructing nuclear decay schemes since they are very well suited to the study of gamma-gamma coincidences, the main basis for building such schemes. The normal practice is then to derive \(\beta \)-decay transition probabilities for each level i populated from the difference in the total intensity of all the \(\gamma \)-rays feeding the level (see Fig. 1) and the sum of the intensities of all those de-exciting it, corrected by the effect of internal conversion. In principle this allows us to obtain the \(\beta \) branching to every level, assuming that we are able to determine by some other means the number of decays that go directly to the daughter ground state, which are not accompanied by \(\gamma \) emission.

Fig. 1 Schematic picture of how the \(\beta \) feeding is determined in a \(\beta \)-decay experiment employing Ge detectors. The \(\beta \) feeding (\(I_{\beta }(i)\)) to level i is determined from the difference of the total intensity feeding the level (\(I^{tot}_{in}(i)\)) and the total intensity de-exciting it (\(I^{tot}_{out}(i)\)). The sum over (k) represents all transitions feeding or de-exciting the level. \(I_{\gamma _{k}}\) stands for the \(\gamma \) intensity of transition k and \(I_{CE_{k}}\) represents the conversion electron intensity

Unfortunately this "simple" procedure does not necessarily give the correct answers. States at high excitation energies in the daughter nucleus can be populated if the \(Q_{\beta }\) value of the decay is large. In medium and heavy nuclei, both the number of levels that can be directly populated by the \(\beta \) decay and the number of lower-lying levels to which they can \(\gamma \) decay are large. As a result, individual \(\gamma \)-rays emitted by levels at high excitation energy generally have low intensity. Ge detectors, and even arrays of them, have limited detection efficiencies, particularly at higher energies, and thus weak transitions are often not detected in experiments. The undetected \(\gamma \) intensity leads to \(\beta \) feeding being wrongly assigned to levels at lower excitation energy, a systematic error that has become known as the Pandemonium effect [3] (see Fig. 2 for a simplified picture).

Fig. 2 Simplified picture of a \(\beta \) decay where only one excited state is populated and it de-excites by the emission of a \(\gamma \) cascade. The left-hand panel represents the real case. The central panel presents the Pandemonium effect, in this example represented by missing, or not detecting, the \(\gamma \) transition \(\gamma _2\). The right-hand panel represents the displacement of the \(\beta \)-decay intensity because of the non-detection of the transition \(\gamma _2\)

We can overcome this problem using the total absorption gamma spectroscopy technique, where we take a different approach. The method involves a large 4\(\pi \) scintillation detector and is based on the detection of the full de-excitation \(\gamma \) cascade for each populated level, rather than the individual \(\gamma \)-rays. The power of TAGS to find the missing \(\beta \) intensity has been demonstrated in a number of papers [4,5,6,7,8,9,10,11,12,13]. The use of the TAGS method began at ISOLDE [14]. Its development and history are described in [15, 16].

Looking at a wider picture we see that many entries in the international databases, that rely on measurements with Ge detectors alone, will have systematic errors. As we shall see in the sections that follow this means that the results cannot be relied on for certain applications. The answer to the resulting difficulties lies in the use of TAGS. In the remainder of this article we will describe the TAGS method in more detail and then use our results to illustrate how it can be applied.

The structure of this article is as follows. In Sect. 2 the experimental method and the analysis of the spectra are described. Sections 3, 4, 5 and 6 deal with \(\beta \)-decay studies related to (a) radioactive decay heat (DH), (b) reactor antineutrino spectra, (c) nuclear models and (d) astrophysical applications, respectively. Finally, a summary is presented in Sect. 7.

2 TAGS measurements

In Sect. 1 it was already explained why we need TAGS measurements. Figure 3 shows how the simple \(\beta \) decay presented in Fig. 2 is detected by typical detectors used in \(\beta \)-decay experiments. Because a TAGS detector acts like a calorimeter, in an ideal TAGS experiment the detected spectrum will be proportional to the \(\beta \) intensity distribution. Such a spectrum is obtained in ideal conditions, where there is no penetration of the \(\beta \) particles, or the radiation generated by them, into the detector and the TAGS detector is 100\(\%\) efficient up to the full energy of the \(\gamma \)-rays that follow the \(\beta \) decay. That means that only the full absorption peak corresponding to the sum energy of the \(\gamma \) cascade is detected in the case of a \(\beta ^-\) decay.

Fig. 3 Schematic picture of how the simple \(\beta \) decay depicted in Fig. 2 is seen ideally by different detectors used in \(\beta \)-decay studies. Left panel: representation of a total absorption detector. Right panel: spectra ideally detected with a \(\beta \) detector (a silicon detector), a Ge detector and a total absorption detector after the simple decay represented in Fig. 2

A real experiment does not quite match this ideal. In order to achieve very high detection efficiencies, large, close to \(4\pi \), detector volumes are needed. Thus inorganic scintillation material has been the natural choice. Because of its good average properties, namely energy and time resolution and intrinsic \(\gamma \) efficiency, NaI(Tl) has been used in all except one (see later) of the existing spectrometers. Nevertheless we need some opening to take the sources to the centre of the spectrometer, either in the form of a radioactive beam or deposited onto a tape transport system. The latter may also be needed to remove the sources after some measuring time. We may also need ancillary detectors for detecting coincidences and selecting the events in which we are interested (see Fig. 4). In addition TAGS detectors require, in general, some form of encapsulation. All these requirements mean that we have dead material and holes in our detector system. Accordingly the \(\gamma \)-detection efficiency of our system will not be 100\(\%\) . The consequence is that to obtain the \(\beta \) intensity distribution we need to solve the inverse problem represented by the following equation:

\[ d_i = \sum_{j} R_{ij}(B)\, f_j + C_i \qquad (1) \]

where \(d_i\) is the content of bin i in the measured TAGS spectrum, \(R_{ij}\) is the response matrix of the TAGS setup and represents the probability that a decay that feeds level j in the level scheme of the daughter nucleus gives a count in bin i of the TAGS spectrum, \(f_j\) is the \(\beta \) feeding to the level j (our goal) and \(C_i\) is the contribution of the contaminants to bin i of the TAGS spectrum. The index j in the sum runs over the levels populated in the daughter nucleus in the \(\beta \) decay. The response matrix \(R_{ij}\) depends on the TAGS setup and on the assumed level scheme of the daughter nucleus. The dependence on the level scheme of the daughter nucleus is introduced through the branching ratio matrix B. This matrix contains the information on how the different levels in the assumed level scheme decay to the lower-lying levels. To calculate the response matrix \(R_{ij}(B)\) the branching ratio matrix B has to be determined first.
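As a minimal sketch of the forward model in Eq. 1, the following Python fragment folds an assumed feeding distribution with a toy response matrix and adds the contaminants. The function name `expected_spectrum` and all numbers are illustrative assumptions, not values from any measurement.

```python
def expected_spectrum(R, f, C):
    """Return d_i = sum_j R[i][j] * f[j] + C[i] for every spectrum bin i."""
    n_levels = len(f)
    return [
        sum(R[i][j] * f[j] for j in range(n_levels)) + C[i]
        for i in range(len(R))
    ]


# Toy setup (hypothetical): 3 spectrum bins, 2 levels.
# Column j of R is the response of the spectrometer to feeding of level j.
R = [[0.2, 0.1],
     [0.7, 0.3],
     [0.0, 0.5]]
f = [100.0, 50.0]    # assumed feeding (number of decays) to each level
C = [1.0, 2.0, 0.5]  # assumed contaminant counts per bin

d = expected_spectrum(R, f, C)
print(d)
```

The TAGS analysis is the inverse of this operation: d and C are known, R is computed for an assumed B, and f is the unknown.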

Fig. 4 Schematic picture of the Rocinante total absorption spectrometer used in one of the experiments performed at the IGISOL facility of the University of Jyväskylä. The spectrometer is composed of 12 BaF\(_2\) crystals. In the lower part the endcap with the Si detector, used as an ancillary detector, is also presented (not to scale). The thick black lines represent the tape used to move away the remaining activity and the blue line represents the direction of the pure radioactive beam that is implanted in the centre of the spectrometer. As mentioned in the text this detector is an exception among existing total absorption spectrometers, since most of them are constructed from NaI(Tl). The reason why BaF\(_2\) is used is explained later in this section. Reprinted figure with permission from [28], Copyright (2017) by the American Physical Society

There are different ways to extract the feeding distribution from Eq. 1 or, in other words, to solve the TAGS inverse problem. One can assume the existence of "pseudo" levels that are added manually (with their decaying branches) to the known level scheme, calculate their response and see their effect in the calculated spectrum (see for example [17, 18]). In our analysis until now we have followed an alternative way for which the level scheme of the daughter nucleus is divided into two regions, a low excitation part and a high excitation part.

Conventionally the levels of the low excitation part and their \(\gamma \)-decay branchings are taken from high resolution measurements available in the literature, since it is assumed that the \(\gamma \)-branching ratios of these levels are well determined. Above a certain energy, the cut-off energy, a continuum of possible levels divided into 40 keV bins is assumed. From this energy up to the decay \(Q_\beta \) value, the statistical model is used to generate a branching ratio matrix for the high excitation part of the level scheme. The statistical model is based on a level density function and \(\gamma \)-strength functions of E1, M1, and E2 character. For the level density function different options have been used, depending on the availability of experimental data. One option has been to fit the number of known levels at low excitation and the number of resonances around the neutron separation energy with a Gilbert-Cameron level density formula if enough data are available. Lately, the favoured alternative is to use the theoretical Hartree-Fock-Bogoliubov (HFB) plus combinatorial nuclear level densities [19, 20] that are available at the RIPL-3 database [21] for any nucleus and include empirical corrections when enough data are available.
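The division of the level scheme into a discrete part and a binned continuum can be sketched as follows. This is only an illustration under stated assumptions: the function name `build_level_grid` is hypothetical, the continuum bins are represented by their centre energies, and the level energies in the example are invented.

```python
def build_level_grid(known_levels_kev, cutoff_kev, q_beta_kev, bin_width_kev=40.0):
    """Split the daughter level scheme into a discrete and a continuum part.

    Levels at or below the cut-off energy are kept as discrete levels.
    Between the cut-off and Q_beta the scheme is replaced by bins of fixed
    width (40 keV by default), here represented by their centre energies.
    """
    discrete = sorted(e for e in known_levels_kev if e <= cutoff_kev)
    continuum = []
    centre = cutoff_kev + bin_width_kev / 2.0
    while centre <= q_beta_kev:
        continuum.append(centre)
        centre += bin_width_kev
    return discrete, continuum


# Hypothetical example: four known levels, cut-off at 1500 keV, Q_beta = 1700 keV.
discrete, continuum = build_level_grid([0.0, 500.0, 1200.0, 2500.0], 1500.0, 1700.0)
print(discrete)
print(continuum)
```

In a real analysis each continuum bin would then receive its \(\gamma \)-decay branches from the level density and \(\gamma \)-strength functions; that step is not shown here.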

Once the branching ratio matrix (B) is defined, the response of the setup \(R_{ij}\) to that branching matrix B (or level scheme) is calculated using previously validated Monte Carlo simulations of the relevant electromagnetic interactions in the experimental setup. The validation of the Monte Carlo simulations is performed by reproducing measurements of well known radioactive sources, that are made under the same experimental conditions as the real experiment. The Monte Carlo simulations require a careful implementation of all the details of the geometry of the setup, a proper knowledge of the materials employed in the construction of the setup and testing to find the best Monte Carlo tracking options and physics models that reproduce the measurements with sources. It should be noted that from high resolution measurements we use only the branching ratios of the levels, and not the information on the feeding of these levels.

At this point it is worth mentioning how the cut-off energy is determined. This is obviously case dependent, and what we do is to decide the excitation energy up to which the level scheme in the daughter nucleus can be considered "complete" with defined spin-parity assignments. The well-defined spin-parity assignment to the levels is important because of the electromagnetic transitions or branching generated via the \(\gamma \)-strength function from levels in the continuum.

Once the response function (R(B)) is determined we can solve Eq. 1 using appropriate algorithms to determine the feeding (or \(\beta \)-intensity) distribution. In our analyses we follow the procedure developed by the Valencia group. In [22, 23] several algorithms were explored. From those that are possible, the expectation maximization (EM) algorithm is conventionally used, since it provides only positive solutions for the feeding distributions and no additional regularization parameters (or assumptions) are required to solve the TAGS inverse problem.
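To illustrate why an EM-type algorithm returns only positive feedings, here is a minimal sketch of the standard multiplicative EM update for Poisson-distributed counts applied to Eq. 1. It is not the implementation of [22, 23]; in particular, treating the contaminants \(C_i\) as a known additive term in the denominator, the flat starting guess and the fixed number of iterations are assumptions made for this example.

```python
def em_unfold(R, d, C, n_iter=200):
    """EM estimate of the feedings f in d = R f + C.

    R[i][j]: probability that feeding of level j gives a count in bin i.
    d[i]:    measured counts in bin i.
    C[i]:    known contaminant counts in bin i.
    """
    n_bins, n_levels = len(R), len(R[0])
    # Total detection probability of each level (column sums of R).
    eff = [sum(R[i][j] for i in range(n_bins)) for j in range(n_levels)]
    # Flat, strictly positive starting guess.
    f = [sum(d) / n_levels] * n_levels
    for _ in range(n_iter):
        # Spectrum predicted by the current feedings.
        model = [
            sum(R[i][j] * f[j] for j in range(n_levels)) + C[i]
            for i in range(n_bins)
        ]
        new_f = []
        for j in range(n_levels):
            if eff[j] <= 0.0:
                new_f.append(0.0)
                continue
            ratio = sum(
                R[i][j] * d[i] / model[i]
                for i in range(n_bins) if model[i] > 0.0
            )
            # Multiplicative update: a positive f[j] stays positive.
            new_f.append(f[j] * ratio / eff[j])
        f = new_f
    return f


# Toy closure test with invented numbers: build d from known feedings.
R = [[0.3, 0.2], [0.6, 0.1], [0.0, 0.6]]
C = [1.0, 2.0, 0.5]
f_true = [100.0, 50.0]
d = [sum(R[i][j] * f_true[j] for j in range(2)) + C[i] for i in range(3)]
print(em_unfold(R, d, C))
```

Because each iteration only rescales the previous estimate by a non-negative factor, no explicit positivity constraint or regularization parameter is needed in this sketch.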

Clearly, the first level scheme (or defined branching ratio matrix) considered is not necessarily the one that will provide a good description of the measured TAGS spectrum. For that reason, as part of the analysis the cut-off energy and the parameters that define the branching ratio matrix can be varied until the best description of the experimental data is obtained. Assumptions on the spin and parity of the ground state of the parent nucleus and on the spins and parities of the populated levels can also be changed when they are not known unambiguously, since to connect the levels in the continuum to levels in the known part of the level scheme we need information about their spins and parities, as mentioned earlier. All these changes provide different branching ratio matrices (or daughter level schemes) that are considered during the analysis, and for all of them the corresponding response matrices are calculated and Eq. 1 is solved. The final analysis is then based on the level scheme (or branching ratio matrix) that is consistent with the available information from high resolution measurements and at the same time provides the best description of the experimental data. So in practical terms the following steps are followed until the best description of the data is obtained: (a) define a branching ratio matrix B, (b) calculate the corresponding response matrix \(R_{ij}(B)\), (c) solve the corresponding Eq. 1 using an appropriate algorithm and (d) compare the generated spectrum after the analysis (\(R(B)f+C\)) with the experimental spectrum d.

We have only mentioned briefly how the response function \(R_{ij}(B)\) is calculated. More specifically the response for each level can be determined recursively starting from the lowest level in the following way [24]:

\[ R_j = \sum_{k=0}^{j-1} b_{jk}\, g_{jk} \otimes R_k \qquad (2) \]

where \(R_j\) is the response to level j, \(g_{jk} \) is the response of the \(\gamma \) transition from level j to level k, which is calculated using Monte Carlo simulations, \(b_{jk}\) is the branching ratio for the \(\gamma \) transition connecting level j to level k, \(R_k\) is the response to level k and \(\otimes \) denotes the convolution of the two responses. Here the index k runs over all the levels below the level j. For simplicity we have not included in the formula the convolution with the response of the \(\beta \) particles and only the \(\gamma \) part of the response is presented. Note that \(R_j\) is a vector that contains as elements the \(R_{ij}\) matrix elements mentioned above for all possible bins i (or channels) of the TAGS spectrum, and the branching ratio matrix enters in the formula of the response matrix through the decay branches \(b_{jk}\). In the real calculation of the responses the internal conversion process is also taken into account.
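The recursive construction of the level responses can be sketched in a few lines of Python. The sketch assumes the form \(R_j = \sum _{k<j} b_{jk}\, g_{jk} \otimes R_k\), takes the ground-state response to be a unit count in bin 0 (no \(\gamma \) energy deposited), and omits the \(\beta \) response and internal conversion. The helper names (`convolve`, `level_responses`, `ideal_gamma`) and the toy level scheme are hypothetical.

```python
def convolve(a, b, n_bins):
    """Discrete convolution of two spectra, truncated to n_bins."""
    out = [0.0] * n_bins
    for i, ai in enumerate(a):
        if ai == 0.0:
            continue
        for k, bk in enumerate(b):
            if i + k < n_bins:
                out[i + k] += ai * bk
    return out


def level_responses(b, g, n_bins):
    """Build R_j recursively, starting from the lowest level.

    b[j][k]: branching ratio of the gamma transition j -> k (k < j).
    g[j][k]: spectrum deposited by that single gamma ray (list of n_bins).
    """
    ground = [0.0] * n_bins
    ground[0] = 1.0  # assumption: ground state deposits no gamma energy
    responses = [ground]
    for j in range(1, len(b)):
        r_j = [0.0] * n_bins
        for k in range(j):
            if b[j][k] == 0.0:
                continue
            term = convolve(g[j][k], responses[k], n_bins)
            for i in range(n_bins):
                r_j[i] += b[j][k] * term[i]
        responses.append(r_j)
    return responses


def ideal_gamma(energy_bin, n_bins):
    """Response of an ideal calorimeter: full absorption only."""
    spectrum = [0.0] * n_bins
    spectrum[energy_bin] = 1.0
    return spectrum


# Toy scheme: levels at bins 0, 2 and 5. Level 2 decays 40% directly to the
# ground state and 60% through level 1.
n_bins = 8
b = [[], [1.0], [0.4, 0.6]]
g = [[], [ideal_gamma(2, n_bins)], [ideal_gamma(5, n_bins), ideal_gamma(3, n_bins)]]
print(level_responses(b, g, n_bins)[2])
```

With the ideal single-\(\gamma \) responses used here, every cascade from level 2 ends up in the sum-energy bin, whatever the branching; replacing `ideal_gamma` by realistic simulated responses spreads the counts over lower bins.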

Prior to the analysis, the contaminants in the TAGS spectrum (\(C_i\)) have to be isolated and their individual contributions evaluated. The nucleus to be studied is produced in nuclear reactions together with a number of additional nuclei. Two alternative separation methods are normally used to isolate the nucleus of interest. On-line mass separators are used with low-energy radioactive beams to reduce the contamination represented by mass isobars. In-flight separation is used at high-energy fragmentation facilities to reduce the number of nuclear species in the "cocktail beam" to suitable levels. Even if we can isolate the nucleus of interest, the activity of the daughter and other descendants can contaminate the measured spectrum depending on the half-life of the decay studied. This contamination can be determined through dedicated measurements of the decay of the contaminant nuclei under the same conditions as for the decay of interest. Another source of contamination of the spectrum is the pile-up of signals. The pile-up can distort the full TAGS spectrum and can generate counts in regions of the spectra where there should be no counts, as for example in the region beyond the \(Q_\beta \) value of the decay. It can also distort the spectrum in regions where we expect reduced statistics, as for example close to the \(Q_\beta \) value of the decay. This is the reason why estimating this contribution is of importance. Algorithms have been developed to evaluate this contribution [25, 26]. Its determination is based on the random superposition of true detector pulses, measured during the experiment, within the time interval defined by the acquisition gate of the data acquisition system.

Another possible contamination appears when the decay is accompanied by \(\beta \)-delayed particle emission, since this process can lead promptly to the emission of \(\gamma \)-rays from the final nucleus populated by the \(\beta \)-delayed particle emission. The case of the emission of \(\beta \)-delayed neutrons is even more complex. Neutrons interact easily with the detector material and release their energy through inelastic scattering and capture processes. The proper evaluation of this contamination is of great relevance in the study of \(\beta \) decays far from stability on the neutron-rich side of the Segrè chart and requires careful Monte Carlo simulations of the neutron-detector interactions [26, 27]. The reproduction of this contamination is complicated because it has two components: one, which is prompt with the \(\beta \) decay, is composed of \(\gamma \)-rays emitted in the final nucleus after the \(\beta \)-delayed neutron emission when an excited state is populated, the other component due to neutron interactions in the detector is delayed, since the speed of neutrons is much lower than that of \(\gamma \)-rays. To simulate these effects properly an event generator [28], that takes into account the relative contributions of the two components is required. It is also necessary to know the energy spectrum of the \(\beta \)-delayed neutrons. In addition, the Monte Carlo simulation code should include an adequate physics model of the neutron interactions.

As an example, Fig. 5 [29] presents the contribution of the calculated \(\beta \)-delayed neutron contamination to the TAGS spectrum of the decay of \(^{95}\)Rb. Two available neutron energy spectra were used in the simulations [30, 31], and clearly only one reproduces the experimental TAGS data at high excitation energies. This figure shows the relevance of the neutron spectrum used in the simulations (for more details see [29]). Due to these complications we have built Rocinante [28, 32], a spectrometer made of BaF\(_{2}\) and aimed at the measurement of \(\beta \)-delayed neutron emitters (see Fig. 4). BaF\(_{2}\) has a neutron capture cross-section one order of magnitude smaller than that of the conventionally used NaI(Tl). This spectrometer was also the first of a new generation of segmented devices designed to exploit the cascade multiplicity information to improve the TAGS analysis, as will be mentioned later.

Fig. 5 Impact of the neutron energy spectrum (\(I_n\)) in the simulations of the contamination associated with the \(\beta \)-delayed neutrons in the TAGS spectrum (for more details see [29]). Only the spectrum measured by Kratz et al. [30] reproduces the TAGS spectrum at high excitation energies. The Monte Carlo (MC) spectra are normalized to the experimental spectrum around the neutron capture peak indicated with an arrow. The prompt 836.9 keV \(\gamma \)-ray peak from the first excited state of \(^{94}\)Sr, the final nucleus populated after the \(\beta \)-delayed neutron emission, is highlighted. Reprinted figure with permission from [29], Copyright (2019) by the American Physical Society

It is important first to identify the different distortions or contaminations, but it is also important to determine properly their corresponding weight in the measured spectrum. Depending on the distortion, different strategies have been followed. For example, the contribution from contaminant decays can be evaluated if there is a clear peak identified in the spectrum that comes from this contamination that can be used for normalization. Another option is the assessment of this contribution from the solution of the Bateman equations, using the information on half-lives and measurement conditions (collection and measuring cycle times). In the case of high-energy fragmentation experiments where the contamination is due to \(\beta \)-\(\gamma \) events uncorrelated with the implanted ion it can be evaluated from correlations backward in time.
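As a hedged illustration of the Bateman-equation option, the sketch below evaluates the number of parent and daughter decays inside a measuring window for a two-member chain. It assumes the simplest case, a pure parent source of `n0` nuclei at the start of the window with no further implantation, so the growth during the collection time mentioned in the text is not modelled. The function name and the half-lives in the example are invented.

```python
import math


def decays_in_window(n0, t_half_parent, t_half_daughter, t_meas):
    """Parent and daughter decays in [0, t_meas] for a pure parent source.

    Uses the two-member Bateman solution
    N2(t) = n0 * lam1 / (lam2 - lam1) * (exp(-lam1 t) - exp(-lam2 t)).
    """
    lam1 = math.log(2.0) / t_half_parent
    lam2 = math.log(2.0) / t_half_daughter
    if lam1 == lam2:
        raise ValueError("equal decay constants need the degenerate solution")
    surv1 = 1.0 - math.exp(-lam1 * t_meas)
    surv2 = 1.0 - math.exp(-lam2 * t_meas)
    parent = n0 * surv1
    # Integral of lam2 * N2(t) over the measuring window.
    daughter = n0 * (lam2 * surv1 - lam1 * surv2) / (lam2 - lam1)
    return parent, daughter


# Hypothetical case: parent half-life 10 s, daughter 100 s, 10 s measurement.
parent, daughter = decays_in_window(1000.0, 10.0, 100.0, 10.0)
print(parent, daughter, daughter / parent)
```

The ratio of daughter to parent decays gives the relative weight with which a separately measured daughter spectrum would enter \(C_i\), after correcting for any difference in detection efficiency.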

The pileup distortion can be evaluated based on the number of counts in the TAGS spectra which lie beyond the highest \(Q_\beta \) value in the decay chain and which are clearly above the contribution of the background, since we can assume that those counts can only come from this contribution. When this option is not possible because of inadequate statistics, a procedure is given in [25] for the normalisation of this contribution based on the counting rate and the length of the analogue to digital converter (ADC) gate.
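The first normalisation option can be written down directly. In this sketch the pile-up shape is assumed to be already available (for example from the pulse-superposition procedure), and the scale factor is chosen so that the scaled shape accounts for the background-subtracted counts beyond the highest \(Q_\beta \) value. The function name `pileup_scale` and the numbers are illustrative assumptions.

```python
def pileup_scale(measured, background, pileup_shape, first_bin_beyond_q):
    """Scale factor that matches the pile-up shape to the counts beyond Q_beta.

    All three spectra share the same binning; first_bin_beyond_q is the index
    of the first bin lying above the highest Q_beta value of the decay chain.
    """
    excess = sum(
        m - b
        for m, b in zip(measured[first_bin_beyond_q:], background[first_bin_beyond_q:])
    )
    shape = sum(pileup_shape[first_bin_beyond_q:])
    if shape <= 0.0:
        raise ValueError("pile-up shape has no counts beyond Q_beta")
    # A negative excess means there are no counts above background to assign.
    return max(excess, 0.0) / shape


# Invented four-bin example; the last two bins lie beyond Q_beta.
scale = pileup_scale(
    measured=[100.0, 80.0, 6.0, 4.0],
    background=[10.0, 8.0, 1.0, 1.0],
    pileup_shape=[5.0, 3.0, 1.5, 0.5],
    first_bin_beyond_q=2,
)
print(scale)
```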

Finally if there is a contamination arising from \(\beta \)-delayed neutrons, this contribution can be normalized to the broad high-energy structure generated by neutron captures in the detector material when possible, otherwise it should be normalized to the \(\beta \)-delayed neutron emission probability (Pn) value of the decay (see Sect. 6 for more details).

In Fig. 6, we present as an example a total absorption spectrum measured during our first experiment in Jyväskylä of the decay of \(^{104}\)Tc [7, 8] which is relevant for the decay heat application (see Sect. 3). In the upper panel of this figure we show the spectrum of this decay compared with the reproduction of the spectrum after the analysis and the contribution of the contaminants (background+daughter activity+pileup).

In this measurement a TAGS detector that consisted of two NaI(Tl) cylindrical crystals with dimensions: \(\diameter = 200\,\mathrm {mm} \times l = 200\, \mathrm {mm}\), and \(\diameter = 200\, \mathrm {mm} \times l = 100\, \mathrm {mm}\) was used (courtesy of Dr. L. Batist). The longer crystal has a longitudinal hole of \(\diameter = 43\, \mathrm {mm}\) for the positioning of the sources in the approximate geometrical centre of the spectrometer using a tape transport system. In the experiment the crystals were separated by 5 mm. This separation and the ideal position of the sources inside the spectrometer was studied prior to our experiment using Monte Carlo simulations in order to maximize the \(\gamma \) efficiency of the setup [33]. This TAGS had a 57% peak and 92% total efficiency for the 662 keV \(\gamma \) transition emitted in \(^{137}\)Cs decay and 27% peak and 70% total efficiency for a 5 MeV \(\gamma \) transition. This last value was obtained from previously validated Monte Carlo simulations. The efficiency of this setup is modest compared with recently developed total absorption spectrometers such as DTAS [34], or MTAS [35]. This detector was designed at the Nuclear Institute of St. Petersburg (Russia) [36].

This measurement was analyzed in singles, since the precision and reproducibility of the tape positioning system was not considered sufficiently good to allow coincidence counting. The positioning of the sources is critical in the determination of the efficiency of the Si detector used as an ancillary detector for coincidences with the \(\beta \) particles emitted in the decay. The efficiency of the \(\beta \) detector as a function of end-point energy has a direct impact on the normalization of the combined \(\beta \)-\(\gamma \) cascade response of the spectrometer (\(R_{ij}\)). Using singles has the advantage of providing much higher statistics in the analysis compared with gated spectra. However, the use of gated spectra is preferred, and is sometimes unavoidable, in order to reduce contamination from ambient background and to select events of particular interest.

The lower panel of Fig. 6 shows the feeding distribution deduced for the \(^{104}\)Tc decay obtained from the TAGS measurement compared with the distribution obtained from high resolution measurements. From the figure it is clear that the feeding distribution obtained with the TAGS is shifted to higher energies in the daughter nucleus, which is typical of a case suffering from the Pandemonium effect [7, 8, 37]. Similar measurements will be discussed in more detail in Sect. 3.

As mentioned earlier, in this measurement a detector was used that was composed of two crystals. The new generation of available detectors such as Rocinante [28], SUN [38], MTAS [35] and DTAS [34] exploit segmentation to a greater degree to extract additional information from the decay under study. Using the segmentation it is possible to measure the detector fold (number of detectors fired in an event) which is related to \(\gamma \)-cascade multiplicity as a function of excitation energy and ultimately to the de-excitation branching ratio matrix B. The lack of knowledge of the matrix B is the largest source of uncertainty in TAGS analysis and this can be greatly improved with segmented detectors. Our current approach to the iterative procedure for updating B described earlier in this section, is to include in step d) the comparison to fold-gated TAGS spectra and single module spectra. Reconstructed fold-gated spectra are obtained by MC simulation using the appropriate event generator since it is not possible to define a fold-gated response in a manner similar to Eq. 2. This also prevents us from including them as part of the inverse problem (Eq. 1). A different approach has been taken by the ORNL group to analyze MTAS data [39, 40]. They use the coincidence between one module and the sum of all the modules to define total energy gated single detector spectra that are fitted by the sum of a number of de-excitation cascades, usually taken from high resolution spectroscopy and supplemented when necessary with "pseudo" levels with estimated branching ratios and modified iteratively until the best reproduction is achieved.

Yet another approach is used by the NSCL group to extract B from SUN data [41]. They start from the same total energy gated single detector spectra but apply the so called Oslo-method [42] to obtain the branching ratio matrix for a subset of levels. Because of this, the TAGS analysis is not performed with this B but uses the "pseudo" level approach including in the fit the total absorption spectrum and the spectrum of detector multiplicities [43]. It should be noted that the traditional Oslo method is not strictly applicable to TAGS data because the assumed equivalence of total deposited energy with excitation energy does not hold in general, due to the non-ideal detector response.

Currently we are working on a method to solve the full non-linear inverse problem represented by Eq. 1, to obtain feedings and branching ratios from the complete data set provided by a segmented spectrometer: sum energy spectrum gated by detector fold, sum energy spectrum versus single crystal spectrum and crystal-crystal correlations.

Fig. 6 Comparison of the measured TAGS spectrum of the decay of \(^{104}\)Tc with the spectrum generated after the analysis (reconstructed spectrum). This last spectrum is obtained by multiplying the response function of the decay with the determined feeding distribution (\(R(B)f^{final}\)). The lower panel shows the \(\beta \)-decay feeding distribution obtained compared with that previously known from high resolution measurements [7, 8, 37]. Reprinted figure with permission from [7], Copyright (2010) by the American Physical Society

Fig. 7 Comparison of the measured TAGS spectrum of the decay of \(^{100}\)Tc with the spectrum generated after the analysis (reconstructed spectrum). For more details see also the figure caption to Fig. 6. The lower panel represents the relative deviations of both spectra. Reprinted figure with permission from [45], Copyright (2017) by the American Physical Society

Fig. 8 Comparison of the obtained TAGS feeding distribution in the decay of \(^{100}\)Tc with the data available from high resolution measurements (ENSDF evaluation for \(^{100}\)Tc decay). This is an example of a case that did not suffer from the Pandemonium effect. Only very small differences between the high resolution and the total absorption results were observed and feeding was identified at high excitation energy to only one additional level in the daughter (for more details see [45]). Reprinted figure with permission from [45], Copyright (2017) by the American Physical Society

In Fig. 7 we show the spectrum of the \(\beta \) decay of \(^{100}\)Tc measured in a recent campaign of measurements at the IGISOL IV facility of the University of Jyväskylä [44, 45] with the segmented DTAS detector. This single decay is part of the A=100 system of relevance for double \(\beta \) decay studies (\(^{100}\)Ru-\(^{100}\)Tc-\(^{100}\)Mo). Prior to this study, only a high resolution measurement existed for this single decay and there were doubts whether feeding at high excitation energy was detected in the high resolution measurements. Single decays like this one can be of relevance for fixing model parameters used in theoretical calculations for neutrino and neutrinoless double \(\beta \) decay studies. Our TAGS results show only a modest improvement in relation to the earlier high resolution results, revealing that this decay did not suffer seriously from the Pandemonium systematic error (see Fig. 8). This decay is not only important in the framework of double \(\beta \)-decay studies; it has also recently attracted attention in another neutrino-related topic [46]. The decay is a relevant contributor to a newly identified flux-dependent correction to the antineutrino spectrum produced in nuclear reactors, which takes into account the decay of nuclides that are produced by neutron capture on long-lived fission products. In this particular case \(^{99}\)Tc is produced as a fission product, which after neutron capture becomes \(^{100}\)Tc, which then \(\beta \) decays. The effect has a nonlinear dependence on the neutron flux, because first a fission is required and later a neutron capture. Effects like this are considered in order to explain features of the predicted antineutrino spectrum for reactors that are not yet fully understood (see Sect. 4).

The study of this decay was the first time that the DTAS detector was used at a radioactive beam facility. Prior to the analysis of this case a full characterization of the detector was performed [26, 44]. This included a check on the ability to reproduce with MC simulations the spectrum of decays obtained with different detector multiplicity (fold) conditions. As an example we present in Fig. 9 the reproduction of the multiplicities for the \(^{22}\)Na source used in the characterization of the detector. The DTAS is constructed in a modular way that adds extra versatility to the setup [34]. Depending on the installation, it can be used in an 18-detector configuration for ISOL-type facilities or in a 16-detector configuration for fragmentation facilities, where the positioning of the implantation detectors normally requires more space.

Fig. 9 Comparison of the measured spectrum of the decay of \(^{22}\)Na summing all coincident detector modules per event (total absorption spectrum, upper left panel) and with different multiplicity conditions on the number of detectors that fired (\(M_m\), fold) with the results of Monte Carlo simulations for the DTAS detector [26, 44]. How well the different multiplicity spectra are reproduced is a stringent test of the quality of the branching ratio matrix used in the analysis. Modified figure with permission from [26], Copyright (2018) by Elsevier

3 Decay heat

Nuclear reactor applications require \(\beta \) decay data. The relevance of \(\beta \) decay is shown by the fact that each fission is followed by approximately six \(\beta \) decays. The balance of the energy released in fission is presented in Table 1 for the \(^{235}\)U and \(^{239}\)Pu fissile isotopes [47]. In the case of \(^{235}\)U, for example, 7.4 \(\%\) of the energy released comes from the \(\beta \) decay of the fission products (FP) (gamma and beta energy). Depending on the composition of the fuel in the reactor this percentage can change, but it is of the order of 7\(\%\) of the total energy released for a working reactor. Once the reactor is shut down, the decay energy becomes dominant and the related heat has to be removed. If for some reason this is not possible, accidents can result, like the one at the Fukushima Daiichi power plant, caused originally by the tsunami that followed the Great East Japan Earthquake (2011). Clearly one needs to estimate this source of energy for the safety of any nuclear installation. This includes the design of a reactor, the storage of the nuclear waste, the analysis of a loss of coolant accident (LOCA), etc.

Decay heat is defined as the amount of energy released by the decay of fission products, not taking into account the energy taken by the neutrinos. The first method to estimate the decay heat was introduced by Way and Wigner [48] and was based on statistical considerations of the fission process. Their results provide a good estimate of the heat released, but the precision reached is not sufficient for present-day safety standards. Nowadays the most widely used way to estimate the decay heat is to perform summation calculations, which rely on the increased amount of available nuclear data. In this method, the power function of the decay heat f(t) is obtained as the sum of the activities of the fission products and actinides times the energy released per decay:

\[ f(t) = \sum _i {\overline{E}}_{i}\, \lambda _i N_i(t), \qquad {\overline{E}}_{i} = {\overline{E}}_{\beta ,i} + {\overline{E}}_{\gamma ,i} + {\overline{E}}_{\alpha ,i} \]

where \({\overline{E}}_{i}\) is the mean decay energy of the ith nuclide (\(\beta \) or charged-particle, \(\gamma \) or electromagnetic and \(\alpha \) or heavy particle components), \(\lambda _i\) is the decay constant of the ith nuclide, and \(N_i(t)\) is the number of nuclei of type i at the cooling time t. Note that \(E_{\alpha }\) has been added for completeness, but this component is small in a working reactor and at short cooling times after shutdown (\(E_{\alpha }\) can go up from 0.085 \(\%\) at reactor shutdown to approximately 1.3 \(\%\) after 3 days [49]). The division into electromagnetic or \(E_{\gamma }\) (\(\gamma \), bremsstrahlung, X-rays, etc.), light particle or \(E_{\beta }\) (betas, conversion electrons, Auger electrons, etc.) and heavy particle or \(E_{\alpha }\) components (alpha, neutron, recoil energy, etc.) is introduced for radiological reasons, since it is important to separate the radiations according to their range in the reactor or fuel containers.

In the following discussion we will concentrate on the \(E_\beta \) and \(E_\gamma \) components of the decay heat that are associated with the \(\beta \) decay of the fission products. These calculations require extensive libraries of cross sections, fission yields and decay data, since the method first requires the solution of a system of coupled differential equations to determine the inventory of nuclei \(N_i(t)\) produced in the working reactor and after shut-down.
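As a minimal sketch of a summation calculation, the Python fragment below evaluates the sum of activity times mean energy for a two-member decay chain after shutdown, using the analytic Bateman solution in place of a full inventory calculation. The half-lives, mean energies and initial inventory are hypothetical values chosen for illustration, and the function names are not taken from any reactor code.

```python
import math


def bateman_two_member(n1_0, n2_0, lam1, lam2, t):
    """Populations of a parent (1) -> daughter (2) chain at cooling time t.

    Analytic Bateman solution; it assumes lam1 != lam2 and no production
    after shutdown.
    """
    n1 = n1_0 * math.exp(-lam1 * t)
    n2 = (n2_0 * math.exp(-lam2 * t)
          + n1_0 * lam1 / (lam2 - lam1)
          * (math.exp(-lam1 * t) - math.exp(-lam2 * t)))
    return n1, n2


def decay_heat(mean_energies_mev, lambdas, populations):
    """Sum of mean energy per decay times activity (lambda * N), in MeV/s."""
    return sum(e * lam * n
               for e, lam, n in zip(mean_energies_mev, lambdas, populations))


# Hypothetical inputs: half-lives of 10 s and 100 s, mean (beta + gamma)
# energies of 3.0 and 1.5 MeV per decay, and 1e6 parent nuclei at shutdown.
lam_parent = math.log(2) / 10.0
lam_daughter = math.log(2) / 100.0
energies = [3.0, 1.5]

for t in (0.0, 10.0, 100.0, 1000.0):
    n_parent, n_daughter = bateman_two_member(1.0e6, 0.0,
                                              lam_parent, lam_daughter, t)
    heat = decay_heat(energies, [lam_parent, lam_daughter],
                      [n_parent, n_daughter])
    print(f"t = {t:7.1f} s  decay heat = {heat:.3e} MeV/s")
```

In a realistic calculation the populations come from solving the coupled equations for the full inventory with evaluated fission yields, cross sections and decay data; only the final weighted sum is the same as in this toy case.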

Several ingredients of this method depend on decay data. The determination of the activities of the fission products (\(\lambda _i N_i(t)\)) requires a knowledge of the half-lives of the decaying isotopes. The other important quantities are the mean energies released per decay \(\left( {\overline{E}}_{\beta ,i}, {\overline{E}}_{\gamma ,i}\right) \). The mean energies released per decay of nuclide i can be obtained by direct measurements, as in the systematic studies by Rudstam et al. [50] and Tengblad et al. [51]. These integral measurements (energy per decay) require specific setups that are only sensitive to the energy of interest and a careful treatment of all possible systematic errors.

Alternatively the mean energies can be deduced from available decay data in nuclear databases such as the Evaluated Nuclear Structure Data File (ENSDF) [52] if the decay properties are properly known. A "properly known" \(\beta \) decay implies a knowledge of the \(Q_\beta \) value of the decay, the half-life, the \(\beta \)-decay probability distribution to the levels in the daughter nucleus and the decay branching ratios of the populated levels. If all this information is available, then it is possible to deduce the mean energies released in the decay using the following relations:

\[ {\overline{E}}_{\gamma } = \sum _j E_j I_j , \qquad {\overline{E}}_{\beta } = \sum _j <E_\beta >_j I_j \]

where \(E_j\) is the energy of the level j in the daughter nucleus, \(I_j\) is the probability of a \(\beta \) transition to level j, and \(<E_\beta >_j\) is the mean energy of the \(\beta \) continuum populating level j. As can be seen from the formula the mean gamma energy is approximated by the sum of the energy levels populated in the decay weighted by the \(\beta \)-transition probability. This approximation assumes that each populated level decays by \(\gamma \) de-excitation and ignores conversion electrons which are taken into account in the complete treatment of the mean energy calculations. The mean beta energy, because of the continuum character of the \(\beta \) distribution emitted in the population of each level, requires the determination of the mean energy \(<E_\beta >_j\) released for each end-point energy of the \(\beta \) transition \(\left( Q_\beta -E_j\right) \). Then the mean beta energy \(\left( {\overline{E}}_{\beta }\right) \) is obtained as the weighted sum of the mean beta energies populating each level by the \(\beta \)-transition probability. For the determination of \(<E_\beta >_j \) for each level one needs to make assumptions about the type of the \(\beta \) transition (allowed, first forbidden, etc.) and knowledge of the \(Q_\beta \) value of the decay is needed to determine the \(\beta \) transition end-points.

Pandemonium can have an impact on the determination of the mean energies from data available in databases. If the \(\beta \) decay data suffer from the Pandemonium effect, the \(\beta \)-decay probability distribution is distorted. This distortion, which implies increased \(\beta \) probability to lower-lying levels in the daughter nucleus, causes an underestimation of the mean gamma energy and an overestimation of the mean beta energy. This is why TAGS measurements are relevant to this application.
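The weighted sums described above, and the direction of the Pandemonium bias, can be illustrated with a short Python sketch. It makes strong simplifying assumptions that are stated in the comments: every transition is treated as allowed, the \(\beta \) shape is the bare phase-space shape without Fermi function or other corrections, and conversion electrons are ignored. The \(Q_\beta \) value, level energies and feedings are hypothetical.

```python
import math

M_E_KEV = 510.999  # electron rest energy in keV


def mean_beta_energy(endpoint_kev, n_steps=2000):
    """Mean kinetic energy of an allowed beta branch with the given end-point.

    Simplified shape p * W * (T0 - T)^2, with no Fermi function and no
    further corrections (an assumption of this sketch), integrated with the
    midpoint rule.
    """
    if endpoint_kev <= 0.0:
        return 0.0
    step = endpoint_kev / n_steps
    numerator = 0.0
    denominator = 0.0
    for k in range(n_steps):
        t = (k + 0.5) * step
        w = t + M_E_KEV
        p = math.sqrt(w * w - M_E_KEV * M_E_KEV)
        shape = p * w * (endpoint_kev - t) ** 2
        numerator += t * shape
        denominator += shape
    return numerator / denominator


def mean_energies(q_beta_kev, levels_kev, feedings):
    """Return (mean gamma energy, mean beta energy) in keV.

    The gamma energy is approximated by the feeding-weighted level energy;
    the beta energy is the feeding-weighted mean energy of each branch with
    end-point Q_beta - E_j.
    """
    total = sum(feedings)
    e_gamma = sum(i * e for i, e in zip(feedings, levels_kev)) / total
    e_beta = sum(i * mean_beta_energy(q_beta_kev - e)
                 for i, e in zip(feedings, levels_kev)) / total
    return e_gamma, e_beta


# Hypothetical decay: Q_beta = 6000 keV and four levels in the daughter.
q_beta = 6000.0
levels = [0.0, 1000.0, 2000.0, 4500.0]
true_feeding = [0.1, 0.2, 0.3, 0.4]       # includes a high-lying level
distorted_feeding = [0.2, 0.4, 0.4, 0.0]  # high-lying feeding missed

e_gamma_true, e_beta_true = mean_energies(q_beta, levels, true_feeding)
e_gamma_dist, e_beta_dist = mean_energies(q_beta, levels, distorted_feeding)
print(f"true:      E_gamma = {e_gamma_true:.0f} keV, E_beta = {e_beta_true:.0f} keV")
print(f"distorted: E_gamma = {e_gamma_dist:.0f} keV, E_beta = {e_beta_dist:.0f} keV")
```

With the feeding to the high-lying level moved to lower levels, the mean gamma energy drops and the mean beta energy rises, which is the pattern expected for data affected by the Pandemonium effect.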

In fission more than 1000 fission products can be produced, but not all of them are equally important. When addressing a particular problem, like the decay heat, it is of interest to identify the most relevant contributors among the large number of fission products. A group of experts working for the International Atomic Energy Agency (IAEA) [53] identified high priority lists of nuclei that are important contributors to the decay heat in reactors and that should be measured using the TAGS technique (see Tables 2, 3). These lists included nuclides that are produced with high yields in fission and for which the decay data were suspected of suffering from the Pandemonium effect. One criterion used for this last selection was whether the decay data show no levels fed in the daughter nucleus in the upper third of the excitation energy window defined by the \(Q_\beta \) value. It is worth noting that this can be considered only as an indication of Pandemonium and not a rigorous rule. Another way of looking for questionable (or odd) data was to look for cases that show different mean energies in the different international databases. The final lists were published in two separate reports, first for U/Pu fuels [54] and then later for the possible future Th/U fuel [55].

In 2004 we started a research programme aimed at studying the \(\beta \) decay of nuclei making important contributions to the decay heat in reactors. For the planning of any nuclear physics experiment the first step is to decide the best facility to perform it in terms of the availability of the beams, their cleanness and their intensity. Since some of the most important contributors to the priority list for \(^{235}\)U and \(^{239}\)Pu fission were refractory elements like Tc, Mo and Nb, the options were very limited. In a classical ISOL facility like ISOLDE [56], the development of a particular beam can take some time if the beam of a particular element is not available. It is a lengthy and complex task to find the optimum chemical and physical conditions in the ion source for the extraction of a particular element. We decided that the best option concerning the availability of the beams was to perform the measurements at the IGISOL facility in Jyväskylä (Finland) [57, 58]. The reason for that was the development of the ion-guide technique. The ion-guide technique, and more specifically the fission ion guide, allows the extraction of fission products independently of the element. In this technique, fission is produced by bombarding a thin target of natural U with a proton beam. The fission products that fly out of the target are stopped in a gas and transported through a differential pumping system into the first accelerator stage of the mass separator. The dimensions of the ion guide and the pressure conditions are optimized in such a way that the process is fast enough for the ions to survive as singly charged ions. As a result the system is chemically insensitive and very fast (sub-ms) [59] allowing the extraction of any element including those that are refractory.

Another important advantage of performing experiments at IGISOL is the availability of the JYFLTRAP Penning trap [60, 61] developed for high precision mass measurements at this facility. JYFLTRAP can also be used as a high resolution mass separator for trap assisted spectroscopy measurements, providing a mass resolving power \(\left( \frac{M}{\Delta M}\right) \) of the order of 100,000 to be compared with the resolving power of approximately 500 of the IGISOL separator magnet. The purity of the beams is particularly important for calorimetric measurements like those with TAGS since it reduces systematic errors that can be associated with contamination of the primary radioactive beams. This advantage has also been used in other types of calorimetric measurements at IGISOL such as the measurements of \(\beta \)-delayed neutrons using \(^{3}\)He counters embedded in a polyethylene matrix [62,63,64,65].
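As a rough worked example of what these resolving powers mean, take an ion of mass number A = 100 (an illustrative choice, with \(1\,\mathrm{u} \approx 931.5\,\mathrm{MeV}/c^{2}\)). The smallest resolvable mass difference, \(\Delta M = M / (M/\Delta M)\), is then approximately

\[ \Delta M_{\mathrm{trap}} \approx \frac{100\,\mathrm{u}}{10^{5}} = 10^{-3}\,\mathrm{u} \approx 0.93\,\mathrm{MeV}/c^{2}, \qquad \Delta M_{\mathrm{magnet}} \approx \frac{100\,\mathrm{u}}{500} = 0.2\,\mathrm{u} \approx 186\,\mathrm{MeV}/c^{2}. \]

Neighbouring isobars differ in mass by their decay Q values, typically a few MeV/\(c^{2}\) for the decays discussed here. On this estimate the separator magnet can only select the mass number, whereas the trap can separate the isobars within a mass chain.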

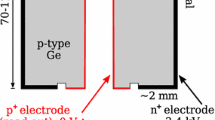

Three experimental campaigns have been performed at the IGISOL facility to study the \(\beta \) decay of important contributors to the decay heat and to the antineutrino spectrum in reactors using the TAGS technique [66,67,68,69]. One of the total absorption setups used in the experiments is presented in Fig. 4 (Rocinante TAGS). In a typical experiment, the radioactive beam extracted from IGISOL is first mass separated using the separator magnet and then further separated using the JYFLTRAP Penning trap. Then the beam is transported to the measuring position, at the centre of the total absorption spectrometer, where it is implanted on the tape of a tape transport system. The tape is moved in cycles, which are optimized depending on the half-life of the decay of interest. As mentioned earlier, the reason for using a tape transport system is to reduce the effect of undesired daughter, grand-daughter, etc., decay contaminants in the measured spectrum. If necessary, these contaminants have to be subtracted from the measured TAGS spectrum, which requires dedicated measurements. In this kind of measurement the TAGS detector is usually combined with a \(\beta \) detector as shown in the inset to Fig. 4. The \(\beta \) detector is used to record coincidences of the \(\beta \) particles with the TAGS spectrum, which essentially eliminates the effect of the ambient background.

Fig. 10 Relevant histograms used in the analysis of the \(\beta \) decay of \(^{86}\)Br: measured spectrum (squares with errors), reconstructed spectrum response A (red line), reconstructed spectrum response B (blue line), summing-pileup contribution (orange line), background (green line). In the lower panel the relative differences of the experimental spectrum vs the reconstructed spectrum are shown. Response A corresponds to the conventional analysis. Response B corresponds to a modified branching ratio matrix to reproduce the measured \(\gamma \)-ray intensities. For more details see Rice et al. [71]. The small background contamination comes from an increased level of noise in the silicon detector in one of the runs during the experiment. Reprinted figure with permission from [71], Copyright (2017) by the American Physical Society

In Fig. 10 we show an example of the recently measured \(^{86}\)Br decay, which was considered priority one for the decay heat problem. The figure shows the TAGS spectrum obtained with the \(\beta \)-gate condition, and the contribution of the different contaminants. The analysis was performed as described earlier. Known levels up to an excitation energy of 3560 keV were taken from the compiled high resolution data from [70]. From 3560 keV to the \(Q_\beta \) value of 7633(3) keV, the statistical model was used to generate a branching ratio matrix using a level density function resulting from a fit to levels from [19, 20] and corrected to reproduce the level density at low excitation energy, and E1, M1, and E2 \(\gamma \)-strength functions taken from [21]. For more details see [71, 72].

In Fig. 10 we also show the comparison of the \(\beta \)-gated TAGS spectrum with the results from the analysis. The reconstructed spectra are obtained by multiplying the response function of the detector with the final feeding distribution obtained from the analysis. In this particular case two results are presented. Response A corresponds to the conventionally calculated branching ratio matrix, which better fits the experimental spectrum. Response B corresponds to a branching ratio matrix modified to reproduce the \(\gamma \)-ray intensities de-exciting each level as measured in high resolution experiments. In Fig. 11 the feeding distributions obtained are compared with those from the available high resolution measurements. This comparison shows that \(^{86}\)Br decay was suffering from the Pandemonium effect.
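The construction of a reconstructed spectrum and of the relative deviations shown in the lower panels of such figures can be sketched in a few lines of Python. The response matrix, feeding and "measured" counts below are toy numbers, and the helper names are hypothetical; a real response matrix is obtained from MC simulations and depends on the branching ratio matrix B.

```python
def reconstruct(response, feeding):
    """Reconstructed spectrum d_i = sum_j R_ij * f_j.

    response[i][j] is the probability that feeding to level (bin) j produces
    a count in spectrum channel i.
    """
    return [sum(r_ij * f_j for r_ij, f_j in zip(row, feeding))
            for row in response]


def relative_deviation(measured, reconstructed):
    """(measured - reconstructed) / measured, set to 0 for empty channels."""
    return [(m - r) / m if m > 0 else 0.0
            for m, r in zip(measured, reconstructed)]


# Toy response: 3 spectrum channels, 2 levels (each column sums to 1).
toy_response = [
    [0.3, 0.1],
    [0.7, 0.3],
    [0.0, 0.6],
]
toy_feeding = [400.0, 600.0]        # decays feeding each level
toy_measured = [190.0, 450.0, 360.0]

toy_reconstructed = reconstruct(toy_response, toy_feeding)
toy_deviation = relative_deviation(toy_measured, toy_reconstructed)
print(toy_reconstructed)
print(toy_deviation)
```

Comparing two candidate branching ratio matrices, as done for responses A and B, amounts to repeating this step with two different response matrices and their corresponding feeding distributions.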

Fig. 11 Comparison of the accumulated feeding distributions obtained in the work of Rice et al. [71] for the decay of \(^{86}\)Br with available high resolution measurements. Feedings A and B stand for the TAGS feedings determined. For more details see the text. Reprinted figure with permission from [71], Copyright (2017) by the American Physical Society

In Table 4 we show a summary of the mean energies deduced from the TAGS analyses of our measurements performed at Jyväskylä. It shows that most of the cases addressed from the high priority list were suffering from the Pandemonium effect. Two cases that were originally suspected to be Pandemonium cases (\(^{102}\)Tc, \(^{101}\)Nb) were not. Those cases also show the necessity of the TAGS measurements to confirm the suspicion of Pandemonium. Clearly the absence of feeding in the last \(Q_{\beta }/3\) of excitation energy is a good indicator to select cases, but it is not always conclusive. The \(^{102}\)Tc and \(^{101}\)Nb cases have strong ground state feedings, which reduces the impact of the undetected \(\gamma \) branches at high excitation. This is clearly reflected in the differences of the deduced mean energies when they are compared with the mean energies deduced from high resolution data. The values for one-third of the \(Q_{\beta }\)-value (\(Q_{\beta }/3\)) are also given for comparison with the mean energies. The \(Q_{\beta }/3\) value is an approximation sometimes used by databases when there is a lack of experimental data, which in practical terms divides the available decay energy in equal parts between the mean gamma, beta and antineutrino energies. In the table the mean energies deduced from the high resolution data (ENSDF database) are also given for comparison [73].

Our results can also be compared where possible with the results of Greenwood et al. [17] and Rudstam et al. [50]. Greenwood and co-workers performed a systematic study of fission products at the Idaho National Engineering Laboratory (INEL), Idaho Falls, USA, using a \(^{252}\)Cf source and the He-jet technique. They employed a total absorption spectrometer built of NaI(Tl) with dimensions of \(25.4 \, \mathrm {cm}\) diameter \(\times \) \(30.5 \, \mathrm {cm}\) length, with a \(5.1 \, \mathrm {cm}\) diameter \(\times \) \(20.3 \, \mathrm {cm}\) long axial well. The analysis technique employed in those studies was based on the creation of a level scheme using the information from high resolution measurements and complementing it with the addition of "pseudo" levels by hand when necessary. The response of the detector was then calculated for the assumed level scheme using the Monte Carlo code CYLTRAN (for more details see [74]). The systematic study of Greenwood et al. provided TAGS data for 48 decays including the decay of three isomeric states. Since a different analysis technique was used, it is interesting to compare the results of Greenwood with the more recent results and look for possible systematic deviations for cases where the comparison is possible.

Fig. 12 Differences between the mean gamma energies obtained with TAGS measurements (see Table 5 and the text for more details) and the direct measurements of Rudstam et al. [50] after renormalization by a factor of 1.14, which was deduced from the comparison of our newly determined mean energy for the decay of \(^{91}\)Rb [71] with that employed by Rudstam et al. [50]. Blue points represent Greenwood TAGS data and red points represent results from our collaboration. A systematic difference with a mean value of −180 keV remains. Reprinted figure with permission from [71], Copyright (2017) by the American Physical Society

As mentioned earlier, Rudstam et al. performed systematic measurements of \(\gamma \) and \(\beta \) spectra and deduced mean energies [50, 51] of interest for the prediction of decay heat. Beta spectra of interest for the prediction of the antineutrino spectrum from reactors were also measured [51]. The direct measurements were performed at ISOLDE [56] and at the OSIRIS separator [75] using setups optimized for the detection of the \(\gamma \)- and \(\beta \)-rays emitted by the fission products. In the case of the mean gamma energies, the setup required an absolute \(\gamma \) efficiency calibration with the assumption that the decay used in the calibration did not suffer from the Pandemonium effect [50]. In their measurements the \(\beta \) decay of \(^{91}\)Rb was used for the calibration. This decay, with a \(Q_\beta \) of 5907(9) keV, shows a complex decay scheme from high resolution measurements that populates levels up to 4700 keV in the daughter nucleus. It was therefore natural for the authors of [50] to assume that this decay was not a Pandemonium case. In their publication [50] it is mentioned that the \(\gamma \) spectrum extends up to 4500 keV.

In a contribution to the Working Party on International Evaluation Co-operation of the NEA Nuclear Science Committee (WPEC 25) group activities [53], the late O. Bersillon performed a comparison between the Greenwood and Rudstam mean gamma and beta energies for those decays that were available in both data sets. This comparison was revisited in [76]. A clear systematic difference was shown, pointing to possible systematic errors in one or both data sets [28, 71, 76]. For that reason it was decided to measure the \(^{91}\)Rb decay using the TAGS technique, to see whether the decay was suffering from the Pandemonium effect and, accordingly, to check whether this decay was adequate as an absolute calibration point to obtain mean gamma energies in [50]. The 2669(95) keV mean gamma value obtained from our measurements [71] can be compared with the value used by Rudstam et al. (2335(33) keV), showing that this decay suffered from Pandemonium and also showing the necessity of renormalizing the Rudstam data by a factor of 1.14. With this renormalization, the mean value of the differences between the two data sets (TAGS vs Rudstam) reduces from −360 to −180 keV, but the discrepancy still remains [71]. This is shown in Fig. 12 and Table 5. It should be noted that our mean gamma energy value for this decay agrees nicely with the value obtained by Greenwood et al. (2708(76) keV) [17].
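The renormalization factor follows directly from the ratio of the two mean gamma energies quoted above for \(^{91}\)Rb:

\[ k = \frac{{\overline{E}}_{\gamma }^{\mathrm{TAGS}}}{{\overline{E}}_{\gamma }^{\mathrm{Rudstam}}} = \frac{2669\,\mathrm{keV}}{2335\,\mathrm{keV}} \approx 1.14 . \]

If, purely as an illustrative assumption, the two quoted uncertainties are treated as uncorrelated and propagated in quadrature, the relative uncertainty of the factor is

\[ \frac{\sigma _k}{k} \approx \sqrt{\left( \frac{95}{2669}\right) ^2 + \left( \frac{33}{2335}\right) ^2} \approx 0.038 , \]

that is, \(k \approx 1.14 \pm 0.04\). This simple estimate is not taken from [71], where the treatment of the uncertainties should be consulted.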

Fig. 13 Impact of the inclusion of the total absorption measurements performed for 13 decays (\(^{86,87,88}\)Br, \(^{91,92,94}\)Rb, \(^{101}\)Nb, \(^{105}\)Mo, \(^{102,104,105,106,107}\)Tc) published in Refs. [7, 8, 28, 71, 77] on the gamma and beta components of the decay heat calculations for \(^{239}\)Pu and \(^{235}\)U. Upper panel left: gamma component of \(^{239}\)Pu decay heat, upper panel right: gamma component of \(^{235}\)U. Lower panel left: beta component of \(^{239}\)Pu, lower panel right: beta component of \(^{235}\)U. For more details on the calculations see the text

In Fig. 13 the total impact of the total absorption measurements of the 13 decays (\(^{86,87,88}\)Br, \(^{91,92,94}\)Rb, \(^{101}\)Nb, \(^{105}\)Mo, \(^{102,104,105,106,107}\)Tc) published in Refs. [7, 8, 28, 71, 77] is presented in comparison with the decay heat measurements reported by Tobias [78] and Dickens et al. [79] for the electromagnetic component (\(E_\gamma \) or EEM) and for the charged particle component (\(E_\beta \) or ELP) of the decay heat. To show the impact of the total absorption measurements the JEFF3.1.1 database is used, in which no total absorption data are included. Calculations are performed using the bare JEFF3.1.1 and a modified version of the JEFF3.1.1 database with the inclusion of the total absorption data for the mean energies of the measured decays. This modified JEFF3.1.1 database is represented as JEFF3.1.1 + TAGS in the figures. The results presented were provided by Dr. L. Giot [80] using the SERPENT code [81, 82]. In the figures, one should note the large impact of the decays mentioned and the relevance of the total absorption measurements for a proper assessment of the decay heat based on summation calculations.

In the presented comparisons the standards used in the field were employed (Tobias [78] and Dickens et al. [79]). Tobias' data represent an evaluation of many available decay heat measurements collected until 1989 in order to define a benchmark for \(^{235}\)U and \(^{239}\)Pu. The data of Dickens et al. represent a set of measurements for the thermal fission of \(^{235}\)U, \(^{239}\)Pu and \(^{241}\)Pu performed at the Oak Ridge Research Reactor. In Dickens' measurements highly enriched targets and scintillator spectrometers for separate measurements of the beta and gamma components were used (for more details see the original publications and Ref. [83]). The discrepancy between the Tobias and Dickens data sets for \(^{235}\)U seen in Fig. 13 is a well known issue in the field. Independently of the discrepancy between these data sets, it is clear from Fig. 13 (upper right panel) that additional measurements are needed to improve the description of the \(^{235}\)U fuel, and new measurements are certainly required for future fuels like the \(^{233}\)U/\(^{232}\)Th case.

4 Neutrino applications

Nuclear reactors constitute an intense source of electron antineutrinos, with typically \(10^{20}\) antineutrinos per second emitted by a 1 GWe reactor. The reactor at Savannah River was the site of the discovery of the neutrino in 1956 by Reines and Cowan [84], thus confirming Pauli's predictions of 26 years earlier [1]. Just like the decay heat described above, antineutrinos arise from the beta decays of the fission products in-core. Their energy spectrum and flux depend on the distribution of the fission products, which reflects the fuel content of a nuclear reactor. This property, combined with the fact that neutrinos are sensitive only to the weak interaction, could make antineutrino detection a new reactor monitoring tool [85]. Both particle physics and applied physics now motivate their study at power and research reactors, with detectors of various sizes and designs placed at short or long distances. In the last decade, three large neutrino experiments with near and far detectors were installed at Pressurized Water Reactors [86,87,88] to try to pin down the value of the \(\theta _{13}\) mixing angle parameter governing neutrino oscillations. These experiments have sought the disappearance of antineutrinos by comparing the flux and spectra measured at the two sites, both distances being carefully chosen to maximise the oscillation probability at the far site. The three experiments [86,87,88] have now achieved a precise measurement of the \(\theta _{13}\) mixing angle, paving the way for future experiments at reactors looking at the neutrino mass hierarchy or for experiments at accelerators for the determination of the delta phase that governs the violation of CP symmetry in the leptonic sector, thus shedding light on why there is an abundance of matter rather than antimatter in the Universe.

Though they have used one or several near detectors in order to measure the initial flux and energy spectrum of the emitted antineutrinos, the prediction of the latter quantity still enters in the systematic uncertainties of their measurements, because their detectors are usually not placed on the isoflux lines of the several reactors of the plant at which they are installed [89]. In addition, the Double Chooz experiment started to take data with the far detector alone, implying the need to compare the first data with a prediction of the antineutrino emission by the two reactors of the Chooz plant. Two methods employed to calculate reactor antineutrino energy spectra were revisited at that time, i.e. the conversion and the summation methods.

The conversion method consists in converting into antineutrino spectra the integral electron spectra measured at the research reactor at the Institut Laue-Langevin (ILL) in Grenoble (France) by Schreckenbach and co-workers [90,91,92,93] with \(^{235}\)U, \(^{239}\)Pu and \(^{241}\)Pu thin targets under a thermal neutron flux. These spectra exhibit rather small uncertainties and remain a reference, as no other comparable measurement has been performed since. Because these are integral measurements, no information is available on the individual \(\beta \) decay branches of the fission products. This prevents the use of the conservation of energy to convert the \(\beta \) into antineutrino spectra. Schreckenbach et al. developed a conversion model, in which they used 30 fictitious \(\beta \) branches spread over the beta energy spectrum to convert their measurements into antineutrinos.

In 2011 Mueller et al. [94] revisited the conversion method and improved it through the use of more realistic end-points and Z distributions of the fission products, available thanks to the wealth of nuclear data accumulated over 30 years, and through the application of the corrections to the Fermi theory at branch level in the calculation of the \(\beta \) and antineutrino spectra. After these revisions, the prediction of the detected antineutrino flux at reactors, compared with the measurements made at existing short baseline neutrino experiments, revealed a deficit of 3%. The result was confirmed immediately by Huber [95], who carried out a similar calculation though he did not explicitly use \(\beta \) branches from nuclear data. This antineutrino deficit was further increased, to a final amount of 6% [96], by the revision of the neutron lifetime and the influence of the long-lived fission products recalculated at the time. A new neutrino anomaly was born: the reactor anomaly. Several lines of research have been followed since to explain this deficit. An exciting possibility is the oscillation of reactor antineutrinos into sterile neutrinos [96], which has triggered several new experimental projects worldwide [97,98,99]. In 2015, the mystery deepened when Daya Bay in China, Double Chooz in France and RENO in Korea reported the detection of a distortion (colloquially called the bump) in the measured antineutrino energy spectrum with respect to the converted spectrum, which could not be explained by any neutrino oscillation. The three experiments rely on the same detection technique and similar detector designs, which makes it possible that they all suffer from the same detection bias, as suggested in [100]. But the three collaborations have thoroughly investigated this hypothesis without success.
In the face of the observed discrepancies between the converted spectra and the measured reactor antineutrino spectra, it is worth considering more closely the existing methods used to compute them and other possible explanations such as the nonlinear corrections discussed in Sect. 2 in relation to the \(^{100}\)Tc decay [46].

Converted spectra rely on the unique measurements performed at the high flux ILL research reactor with the high resolution magnetic spectrometer BILL [101], using thin actinide target foils exposed to a well-controlled thermal neutron flux. This device was exceptional as it allowed the measurement of electron spectra ranging from 2 to 8 MeV in 50 keV bins (smoothed over 250 keV in the original publications) with an uncertainty dominated by the absolute normalisation uncertainty of 3% at 90% C.L., except for the highest energy bins, which have poor statistics [90,91,92,93]. The calibration of the spectrometer was performed with conversion electron sources or (n,e\(^-\)) reactions on targets of \(^{207}\)Pb, \(^{197}\)Au and \(^{113}\)Cd, and using the \(\beta \) decay of \(^{116}\)In, thus providing calibration points up to 7.37 MeV. The irradiation duration ranged from 12 hours to 2 days. Two measurements of the \(^{235}\)U electron spectrum were performed, the first one lasting 1.5 days and the second one 12 hours. The normalisations of the two spectra disagree because they were obtained using two different (n,e\(^-\)) reactions, on \(^{197}\)Au for the first measurement and on \(^{207}\)Pb for the second. The measurement retained by the neutrino community is the second one. The conversion procedure consists in successive fits of the total electron spectrum with \(\beta \) branches, starting with the largest end-points. The total electron spectrum is fitted iteratively bin by bin, starting with the highest energy bins, and the contributions to the fitted bin are subtracted from the total spectrum. The reformulation of the finite size corrections, a more realistic charge distribution of the fission products and a much larger set of \(\beta \) branches were the key to the newly obtained converted antineutrino spectra of [94] and [95].
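The iterative procedure described above can be illustrated with a minimal Python sketch. It is not the code used in [90,91,92,93] or in [94, 95]. As simplifying assumptions, each virtual branch has a pure allowed shape with no Fermi function or other corrections, its end-point is fixed at the upper edge of an energy slice rather than fitted, and only its amplitude is determined by least squares. The function names, the number of branches and the synthetic input spectrum are all hypothetical.

```python
import math

M_E = 0.511  # electron rest-mass energy in MeV


def allowed_shape(e_kin, e0):
    """Unnormalised allowed shape p*W*(E0 - E)^2 (assumption: no corrections)."""
    if e_kin <= 0.0 or e_kin >= e0:
        return 0.0
    w = e_kin + M_E                     # total electron energy
    p = math.sqrt(w * w - M_E * M_E)    # electron momentum
    return p * w * (e0 - e_kin) ** 2


def slice_endpoints(energies, n_branches):
    """Upper edge of each slice, highest first (energies are bin centres)."""
    step = energies[1] - energies[0]
    n = len(energies)
    size = n // n_branches
    return [energies[n - 1 - k * size] + 0.5 * step for k in range(n_branches)]


def fit_virtual_branches(energies, electron_spectrum, n_branches):
    """Fit one virtual branch per slice, from the highest energies downwards."""
    n = len(energies)
    size = n // n_branches
    residual = list(electron_spectrum)
    fitted = []
    for k, e0 in enumerate(slice_endpoints(energies, n_branches)):
        hi = n - k * size
        lo = hi - size if k < n_branches - 1 else 0
        shape = [allowed_shape(e, e0) for e in energies]
        # Least-squares amplitude using only the bins of the current slice.
        num = sum(residual[j] * shape[j] for j in range(lo, hi))
        den = sum(shape[j] ** 2 for j in range(lo, hi))
        amp = max(num / den, 0.0) if den > 0.0 else 0.0
        # Subtract this branch from the whole spectrum before moving down.
        residual = [r - amp * s for r, s in zip(residual, shape)]
        fitted.append((amp, e0))
    return fitted


def antineutrino_spectrum(nu_energies, fitted):
    """Convert each fitted branch using E_e = E0 - E_nu and sum the results."""
    return [sum(a * allowed_shape(e0 - e, e0) for a, e0 in fitted)
            for e in nu_energies]


# Synthetic test input (hypothetical): 2 to 8 MeV in 50 keV bins, 4 branches.
energies = [2.0 + 0.05 * (j + 0.5) for j in range(120)]
true_amps = [1.0, 3.0, 2.0, 5.0]
endpoints = slice_endpoints(energies, 4)
electron = [sum(a * allowed_shape(e, e0) for a, e0 in zip(true_amps, endpoints))
            for e in energies]
fitted = fit_virtual_branches(energies, electron, 4)
nu = antineutrino_spectrum(energies, fitted)
```

Because the synthetic spectrum is built from branches whose end-points coincide with the slice edges, the fit should return the input amplitudes. With real data the end-points and the effective nuclear charge of each virtual branch also have to be chosen, which is where the revisions of [94] and [95] enter.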

The possibility remains that the electron and/or converted spectra suffer from unforeseen additional uncertainties. Indeed the normalisation of the electron spectra relies on the (n,e\(^-\)) reactions quoted above and on internal conversion coefficient values. Both may have been re-evaluated since [102]. In addition, the exact position of the irradiation experiment in the reactor is not well known and may have an impact on the results as well [102]. Another concern is associated with the conversion model itself, where the uncertainties may not take into account missing underlying nuclear physics. In Mueller’s conversion model, forbidden non-unique transitions are replaced by forbidden unique transitions when the spins and parities are known. The shapes of the associated \(\beta \) and antineutrino spectra are not well known, and the forbidden transitions dominate the flux and the spectrum above 4 MeV. Several theoretical works have attempted to estimate the uncertainties introduced by this lack of knowledge [103,104,105]. The latest study [105] reports a potential effect compatible with the observed shape and flux anomalies. Another source of uncertainty is the weak magnetism correction entering the spectral calculation [95, 106], which is not well constrained experimentally in the mass region of the fission products. These two extra uncertainties affect the converted spectra and are not included in the published uncertainties. Finally, the conversion process itself is open to question, as the iterative fitting procedure is not the only possible conversion method and it is suspected of inducing additional uncertainties [107].

In order to identify what could be at the origin of these anomalies, an understanding of the underlying nuclear physics ingredients is essential. Indeed, only the decomposition of the reactor antineutrino spectra into their individual contributions and the study of the missing underlying nuclear physics will allow us to understand the problem fully and provide reliable predictions. The best tool to address these questions is the summation method. This method is based on the use of nuclear data combined in a sum of all the individual contributions of the \(\beta \) branches of the fission products, weighted by the amounts of the fissioning nuclei. Two types of datasets are thus involved in the calculation: fission product decay data and fission yields. This method was originally developed by [108] followed by [109] and then by [51, 110]. The \(\beta \)/\({\bar{\nu }}\) spectrum per fission of a fissile isotope \(S_k(E)\) can be broken down into the sum of all fission product \(\beta \)/\({\bar{\nu }}\) spectra weighted by their activity \(\lambda _{i} N_{i}(t)\) similarly to the way it is done for decay heat calculations:

\[ S_k(E) = \sum _{i} \lambda _{i} N_{i}(t)\, S_{i}(E) \qquad (5) \]

In turn, the \(\beta \)/\({\bar{\nu }}\) spectrum of one fission product (\(S_i\)) is the sum over the \(\beta \) branches (or \(\beta \) transition probabilities) of all \(\beta \) decay spectra (or associated \({\bar{\nu }}\) spectra), \(S_{i}^{b}\) (in Eq. 6), of the parent nucleus to the daughter nucleus weighted by their respective \(\beta \)-branching ratios according to:

\[ S_{i}(E) = \sum _{b} f_{i}^{b}\, S_{i}^{b}(Z_{i}, A_{i}, E_{0 i}^{b}, E) \qquad (6) \]

where \(f_{i}^{b}\) represents the \(\beta \)-transition probability of the b branch, \(Z_{i}\) and \(A_{i}\) the atomic number and the mass number of the daughter nucleus respectively and \(E_{0 i}^{b}\) is the endpoint of the \(\beta \) transition b.
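The two nested sums described above, over the branches of each fission product and then over the fission products weighted by their activities, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: each branch is given a pure allowed shape without the Fermi function or the finite-size, radiative and weak-magnetism corrections, so \(Z_i\) and \(A_i\) do not enter, and the activities, branching ratios and end-points below are hypothetical numbers, not evaluated data.

```python
import math

M_E = 0.511  # electron rest-mass energy in MeV


def allowed_shape(e_kin, e0):
    """Unnormalised allowed shape p*W*(E0 - E)^2 (assumption: no corrections)."""
    if e_kin <= 0.0 or e_kin >= e0:
        return 0.0
    w = e_kin + M_E                     # total electron energy
    p = math.sqrt(w * w - M_E * M_E)    # electron momentum
    return p * w * (e0 - e_kin) ** 2


def branch_spectrum(energies, e0, kind="beta"):
    """Unit-area spectrum of one branch on a uniform energy grid.

    For kind="nu" the electron shape is evaluated at E_e = E0 - E_nu,
    i.e. energy conservation within a single branch (recoil neglected).
    """
    if kind == "beta":
        raw = [allowed_shape(e, e0) for e in energies]
    else:
        raw = [allowed_shape(e0 - e, e0) for e in energies]
    step = energies[1] - energies[0]
    area = sum(raw) * step
    return [r / area for r in raw] if area > 0.0 else raw


def nuclide_spectrum(energies, branches, kind="beta"):
    """Sum over branches: each entry of `branches` is (f_b, E0_b in MeV)."""
    total = [0.0] * len(energies)
    for f_b, e0 in branches:
        for j, value in enumerate(branch_spectrum(energies, e0, kind)):
            total[j] += f_b * value
    return total


def summation_spectrum(energies, nuclides, kind="beta"):
    """Sum over fission products: each entry is (activity, branches)."""
    total = [0.0] * len(energies)
    for activity, branches in nuclides:
        for j, value in enumerate(nuclide_spectrum(energies, branches, kind)):
            total[j] += activity * value
    return total


# Hypothetical inputs: two fictitious fission products, activities per fission.
energies = [0.01 * k for k in range(1001)]       # 0 to 10 MeV in 10 keV steps
fission_products = [
    (0.05, [(0.9, 8.0), (0.1, 5.0)]),            # nuclide A: two branches
    (0.02, [(1.0, 3.5)]),                        # nuclide B: one branch
]
beta_total = summation_spectrum(energies, fission_products, "beta")
nu_total = summation_spectrum(energies, fission_products, "nu")
```

In this sketch the integral of either total spectrum equals the sum of the activities, since every branch is normalised to unit area and the branching ratios of each nuclide sum to one. A realistic calculation would take the activities from fission yields and an evolution code and the branches from decay databases, which is where Pandemonium-free data become decisive.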

In 1989 the measurement of 111 \(\beta \) spectra from fission products by Tengblad et al. [51] was used for a new calculation of the antineutrino energy spectra through the summation method. However, the overall agreement with the integral \(\beta \) spectra measured by Hahn et al. [93] was at the level of 15–20%, showing that a large amount of data was missing at that time. More recently, the summation calculations were re-investigated using updated nuclear databases. Indeed the summation method is the only one able to predict antineutrino spectra for which no integral \(\beta \) measurement has been performed. The existing aggregate \(\beta \) spectra needed to apply the conversion method are relatively few and were measured under irradiation conditions that are not exactly the same as those existing in power reactors. Among the differences, the energy distributions of the neutrons generating the fissions in the ILL experiments are different from those in actual power reactors, and even more so from those in innovative reactor designs such as fast breeder reactors. The aggregate \(\beta \) spectra were also measured for finite irradiation times much shorter than the typical times encountered in power reactors. These few spectra, measured under specific conditions, cannot be used for innovative reactor fuels, and they require corrections for longer irradiation times, called off-equilibrium corrections, or for more complex in-core neutron energy distributions. Until the recent measurement of the \(^{238}\)U \(\beta \) spectrum at Garching by [111], the conversion method could not be applied to obtain a prediction of the \(^{238}\)U fast fission antineutrino spectrum. This was one of the motivations for the first re-evaluation of the summation spectra, performed in Mueller et al. [94], the second being to provide off-equilibrium corrections to the converted spectra. In that work, several important conclusions were already listed regarding summation calculations for antineutrinos.

The evaluated nuclear databases do not contain enough decay data to supply detailed \(\beta \) decay properties for all the fission products stored in the fission yield databases. The evaluated databases thus have to be supplemented by other data or by model calculations for the most exotic nuclei. The ratio of the aggregate \(\beta \) spectra to the summation spectra obtained from the databases exhibited a shape typical of the Pandemonium effect, with an overestimate of the high energy part of the spectra in the nuclear data. The maximum amount of data free of the Pandemonium effect should thus be included in the summation calculations. The difficulty comes from the fact that these Pandemonium-free data are usually not included in the evaluated databases. One thus has to gather the existing decay data and compute the associated antineutrino spectra. The Pandemonium-free data are mostly existing TAGS measurements [17] and the electron spectra directly measured by Tengblad et al. [51]. They were included in an updated summation calculation performed in [112], in which the seven isotopes measured with the TAGS technique that had so much impact on the \(^{239}\)Pu electromagnetic decay heat, i.e. \(^{105}\)Mo, \(^{102,104-107}\)Tc, and \(^{101}\)Nb [7], were taken into account. The calculation revealed that these TAGS results had a very large impact on the calculated antineutrino energy spectra, reaching 8% in the Pu isotopes at 6 MeV. However, the summation calculations still overestimated the \(\beta \) spectra at high energy, indicating that there were large contributions from nuclei for which the data in the decay databases suffer from the Pandemonium effect. The situation is thus similar to that already encountered in the decay heat summation calculations. These conclusions reinforced the need for new experimental TAGS campaigns and for spreading the message worldwide.

New summation calculations were developed and other experimental campaigns were launched using the TAGS technique [113,114,115]. In [113] a careful study of the existing evaluated fission yield databases was performed. It appeared that the choice of the fission yield database had a large impact on the summation spectra obtained, because of mistakes identified in the ENDF/B-VII.1 fission yields, for which corrections were proposed. Once corrected, the ENDF/B-VII.1 fission yields provide spectral shapes in close agreement with the JEFF3.1 fission yields. In 2012, the agreement obtained was at the level of 10% with respect to the integral \(\beta \) spectra measured at ILL, and the number of nuclei requiring new TAGS measurements was considered small enough to be achievable. Priority lists for new TAGS measurements were established first by the Nantes group [77] (which triggered our first experimental campaign devoted to reactor antineutrinos in 2009), then by the BNL team [113], and finally a table based on the Nantes summation method was published in the framework of the TAGS consultant meetings organized by the Nuclear Data Section of the IAEA [116]. A portion of the table from [116] is shown in Table 6, with the measurements performed by our collaboration marked with asterisks. More than half of the first priority nuclei have been measured by our collaboration with the TAGS technique. The Oak Ridge group is involved in similar studies; see for example the results for several isotopes published in [114, 115].

In 2009, \(^{91-94}\)Rb and \(^{86-88}\)Br were measured with the Rocinante TAGS (for details see [28, 71] and Fig. 4) placed after the JYFLTRAP Penning trap of the IGISOL facility [57, 58]. Only \(^{92,93}\)Rb were in the top ten of the nuclei contributing significantly to the reactor antineutrino spectrum. \(^{92}\)Rb alone contributes 16% of the antineutrino spectrum emitted by a pressurized water reactor (PWR) between 5 and 8 MeV. Its contributions to the \(^{235}\)U and \(^{239}\)Pu antineutrino spectra are 32% and 25.7%, respectively, in the 6 to 7 MeV bin and 34% and 33% in the 7–8 MeV bin. In 2009, the ground state (GS) to GS \(\beta \) intensity of this decay was set to 56% in ENSDF. This value was revised in 2014 to 95.2%. A maximum of 87% ± 2.5% was deduced from our TAGS data, with a large impact on the antineutrino spectra [77]. This value was confirmed by the Oak Ridge measurements [114]. The \(^{92}\)Rb case is worth noting because it does not suffer from the Pandemonium effect; rather, its GS to GS \(\beta \) branch was underestimated in former evaluations. In the analysis of this nucleus, the sensitivity of the reconstructed spectrum (and thus of the \(\chi ^2\) obtained) to the value of the GS-GS branch was very high, because of the large penetration of the electrons in the TAGS. The quoted uncertainties were obtained by varying the input parameters entering into the analysis, such as the calibration parameters, the thickness of the \(\beta \) detector, the level density, the normalisation of the backgrounds, etc. The \(\beta \) decay data for \(^{92}\)Rb that were used in the previous summation calculations [112] were those from Tengblad et al. The impact of replacing these data with the new TAGS results amounts to 4.5% for \(^{235}\)U, 3.5% for \(^{239}\)Pu, 2% for \(^{241}\)Pu, and 1.5% for \(^{238}\)U. A similar impact was found on the summation model developed by Sonzogni et al. [113], but a much larger impact (more than 25% in \(^{235}\)U) was found on another model in which no Pandemonium-free data were included [117].