Abstract

We describe how to apply adjoint sensitivity methods to backward Monte Carlo schemes arising from simulations of particles passing through matter. Building on this, we demonstrate derivative-based techniques for solving inverse problems for such systems without approximating the underlying transport dynamics. We are implementing these algorithms for various scenarios within NOA (github.com/grinisrit/noa), a general-purpose differentiable programming library written in C++17.

REFERENCES

R. T. Q. Chen, Y. Rubanova, J. Bettencourt, and D. K. Duvenaud, in Advances in Neural Information Processing Systems, Proceedings of the 31st Conference NeurIPS 2018, Ed. by S. Bengio, H. Wallach, H. Larochelle, et al. (2018), p. 6571.

X. Li, T.-K. L. Wong, R. T. Q. Chen, and D. K. Duvenaud, in Proceedings of the International Conference on Artificial Intelligence and Statistics, 2020.

C. Rackauckas, Y. Ma, J. Martensen, C. Warner, K. Zubov, R. Supekar, D. Skinner, and A. Ramadhan, arXiv: 2001.04385 (2020).

L. Capriotti and M. B. Giles, SSRN Electron. J. (2011).

Differentiable Programming for Optimisation Algorithms over LibTorch. https://github.com/grinisrit/noa.

L. Desorgher, F. Lei, and G. Santin, Nucl. Instrum. Methods Phys. Res., Sect. A 621, 247 (2010).

V. Niess, A. Barnoud, C. Carloganu, and E. le Menedeu, Comput. Phys. Commun. 229, 54 (2018).

M. Girolami and B. Calderhead, J. R. Stat. Soc., Ser. B 73, 123 (2011).

A. Cobb, A. Baydin, A. Markham, and S. Roberts, arXiv: 1910.06243 (2019).

M. Betancourt, in Proceedings of the International Conference on Geometric Science of Information (Springer, 2013), p. 327.

M. Tao, J. Comput. Phys. 327, 245 (2016).

L. S. Pontryagin, E. F. Mishchenko, V. G. Boltyanskii, and R. V. Gamkrelidze, The Mathematical Theory of Optimal Processes (Wiley, New York, 1962).

ACKNOWLEDGMENTS

We would like to thank the MIPT-NPM lab, and Alexander Nozik in particular, for very fruitful discussions that led to this work. We are very grateful to GrinisRIT for the support. This work was first presented in June 2021 at the QUARKS online workshops 2021, “Advanced Computing in Particle Physics.”

Ethics declarations

The author declares that he has no conflicts of interest.

Appendices

APPENDIX

CODE EXAMPLES

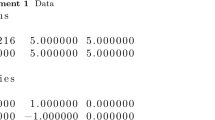

We have collected here the two BMC implementations for our basic example. You can reproduce all the calculations in this paper from the notebook differentiable_programming_pms.ipynb available in NOA [5].

For the specific code snippets here the only dependency is LibTorch:

    #include <torch/torch.h>

The following routine is used throughout. It builds the rotation matrices for a tensor angles, as needed for multiple scattering:

    // Build one 2x2 rotation matrix per angle; the result has shape (n, 2, 2).
    inline torch::Tensor rot(const torch::Tensor &angles)
    {
        const auto n = angles.numel();
        const auto c = torch::cos(angles);
        const auto s = torch::sin(angles);
        return torch::stack({c, -s, s, c}).t().view({n, 2, 2});
    }
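
To make the layout of the returned tensor concrete, here is a minimal sketch in plain C++17, without LibTorch, of the single 2×2 matrix that rot produces for one angle and of its action on a step vector. The names Vec2, Mat2, rot_single and apply are illustrative assumptions and are not part of NOA or LibTorch.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// Illustrative types, not part of NOA or LibTorch.
using Vec2 = std::array<double, 2>;
using Mat2 = std::array<Vec2, 2>;

// Same entry order as the tensor version: rows (c, -s) and (s, c).
inline Mat2 rot_single(double angle)
{
    const double c = std::cos(angle);
    const double s = std::sin(angle);
    return {{{c, -s}, {s, c}}};
}

// Matrix-vector product: the per-particle analogue of rot.matmul(step).
inline Vec2 apply(const Mat2 &m, const Vec2 &v)
{
    return {m[0][0] * v[0] + m[0][1] * v[1],
            m[1][0] * v[0] + m[1][1] * v[1]};
}
```

With this convention a positive angle rotates counter-clockwise, so a quarter turn maps the step (0, 1) onto (-1, 0).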

Given the set-up in Example 1, we define:

    const auto detector = torch::zeros(2);
    const auto materialA = 0.9f;
    const auto materialB = 0.01f;
    inline const auto PI = 2.f * torch::acos(torch::tensor(0.f));

    // Gaussian mixing density: vartheta holds the centre (first two entries)
    // and the width (third entry).
    inline torch::Tensor mix_density(
        const torch::Tensor &states,
        const torch::Tensor &vartheta)
    {
        return torch::exp(-(states - vartheta.slice(0, 0, 2))
                               .pow(2).sum(-1) / vartheta[2].pow(2));
    }
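
As a reading aid, the same density can be written for a single state in plain C++17. This is a sketch under the assumption, taken from the listing above, that vartheta stores the centre in its first two entries and the width in the third; the name mix_density_single is hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cmath>

// vartheta = (centre_x, centre_y, width), mirroring the tensor layout above.
// mix_density_single is an illustrative name, not part of NOA.
inline double mix_density_single(const std::array<double, 2> &state,
                                 const std::array<double, 3> &vartheta)
{
    assert(vartheta[2] != 0.0); // the width must be non-zero
    const double dx = state[0] - vartheta[0];
    const double dy = state[1] - vartheta[1];
    return std::exp(-(dx * dx + dy * dy) / (vartheta[2] * vartheta[2]));
}
```

The value is 1 at the centre and decays to exp(-1) at a distance of one width, so it can be read as the local fraction of material A.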

Example 4. This implementation relies completely on the AD engine for tensors. The whole trajectory is kept in memory to perform reverse-mode differentiation.

The routine accepts a tensor theta representing the angles for the readings on the detector, the tensor node encoding the mixture of the materials which is essentially our variable, and the number of particles npar.

It outputs the simulated flux on the detector corresponding to theta:

    inline torch::Tensor backward_mc(
        const torch::Tensor &theta,
        const torch::Tensor &node,
        const int npar)
    {
        // First backward step from the detector along the directions theta
        const auto length1 = 1.f - 0.2f * torch::rand(npar);
        const auto rot1 = rot(theta);
        auto step1 = torch::stack({torch::zeros(npar), length1}).t();
        step1 = rot1.matmul(step1.view({npar, 2, 1})).view({npar, 2});
        const auto state1 = detector + step1;

        // Pick a material with probability 1/2 and reweight accordingly
        auto biasing = torch::randint(0, 2, {npar});
        auto density = mix_density(state1, node);
        auto weights =
            torch::where(biasing > 0,
                         (density / 0.5) * materialA,
                         ((1 - density) / 0.5) * materialB) *
            torch::exp(-0.1f * length1);

        // Second step: rescale the previous step and scatter it by a small random angle
        const auto length2 = 1.f - 0.2f * torch::rand(npar);
        const auto rot2 = rot(0.05f * PI * (torch::rand(npar) - 0.5f));
        auto step2 =
            length2.view({npar, 1}) * step1 / length1.view({npar, 1});
        step2 = rot2.matmul(step2.view({npar, 2, 1})).view({npar, 2});
        const auto state2 = state1 + step2;

        biasing = torch::randint(0, 2, {npar});
        density = mix_density(state2, node);
        weights *=
            torch::where(biasing > 0,
                         (density / 0.5) * materialA,
                         ((1 - density) / 0.5) * materialB) *
            torch::exp(-0.1f * length2);

        // assuming the flux is known equal to one at state2
        return weights;
    }
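
The division by 0.5 in the listing compensates for choosing each material with probability 1/2. The following plain C++17 sketch, written under that reading of the code, checks that averaging the two branches recovers the mixture density * materialA + (1 - density) * materialB. The names material_a, material_b, proposal, branch_weight and expected_weight are illustrative assumptions.

```cpp
#include <cassert>

// Illustrative constants matching materialA and materialB above.
constexpr double material_a = 0.9;
constexpr double material_b = 0.01;
constexpr double proposal = 0.5; // probability of each value of `biasing`

// Weight factor for one branch, as in the torch::where call.
inline double branch_weight(bool pick_a, double density)
{
    assert(density >= 0.0 && density <= 1.0);
    return pick_a ? (density / proposal) * material_a
                  : ((1.0 - density) / proposal) * material_b;
}

// Expectation of the weight factor over the fair coin.
inline double expected_weight(double density)
{
    return proposal * branch_weight(true, density) +
           proposal * branch_weight(false, density);
}
```

Since the expectation does not depend on the proposal probability, the coin can be biased towards the more important material as long as the weight is divided by the probability actually used.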

Example 5. This routine adapts the adjoint sensitivity algorithm to Example 4 above. It outputs the value of the flux and its first-order derivative w.r.t. the tensor node:

    inline std::tuple<torch::Tensor, torch::Tensor> backward_mc_grad(
        const torch::Tensor &theta,
        const torch::Tensor &node)
    {
        const auto npar = 1; // work with a single particle
        auto bmc_grad = torch::zeros_like(node);

        const auto length1 = 1.f - 0.2f * torch::rand(npar);
        const auto rot1 = rot(theta);
        auto step1 = torch::stack({torch::zeros(npar), length1}).t();
        step1 = rot1.matmul(step1.view({npar, 2, 1})).view({npar, 2});
        const auto state1 = detector + step1;

        // Differentiate only the local weight of the first step
        auto biasing = torch::randint(0, 2, {npar});
        auto node_leaf = node.detach().requires_grad_();
        auto density = mix_density(state1, node_leaf);
        auto weights_leaf = torch::where(biasing > 0,
                                         (density / 0.5) * materialA,
                                         ((1 - density) / 0.5) * materialB) *
                            torch::exp(-0.01f * length1);
        bmc_grad += torch::autograd::grad({weights_leaf}, {node_leaf})[0];
        auto weights = weights_leaf.detach();

        const auto length2 = 1.f - 0.2f * torch::rand(npar);
        const auto rot2 = rot(0.05f * PI * (torch::rand(npar) - 0.5f));
        auto step2 = length2.view({npar, 1}) * step1 / length1.view({npar, 1});
        step2 = rot2.matmul(step2.view({npar, 2, 1})).view({npar, 2});
        const auto state2 = state1 + step2;

        // Fresh leaf: the graph of the first step is no longer needed
        biasing = torch::randint(0, 2, {npar});
        node_leaf = node.detach().requires_grad_();
        density = mix_density(state2, node_leaf);
        weights_leaf = torch::where(biasing > 0,
                                    (density / 0.5) * materialA,
                                    ((1 - density) / 0.5) * materialB) *
                       torch::exp(-0.01f * length2);
        const auto weight2 = weights_leaf.detach();

        // Product rule: update the derivative before updating the weight
        bmc_grad = weights * torch::autograd::grad({weights_leaf}, {node_leaf})[0]
                   + weight2 * bmc_grad;
        weights *= weight2;

        // assuming the flux is known equal to one at state2
        return std::make_tuple(weights, bmc_grad);
    }
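
The update of bmc_grad in the listing is the product rule applied one step at a time, which is why only the current step needs a computation graph. A minimal scalar sketch in plain C++17 is given below, assuming each step supplies its weight w[k] and its local derivative dw[k] with respect to a single parameter. The name accumulate_weight_and_grad is hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <utility>
#include <vector>

// Returns the product of the per-step weights and the derivative of that
// product, given each step's weight w[k] and local derivative dw[k].
// Illustrative helper, not part of NOA.
inline std::pair<double, double> accumulate_weight_and_grad(
    const std::vector<double> &w,
    const std::vector<double> &dw)
{
    assert(w.size() == dw.size());
    double weight = 1.0; // running product of the weights
    double grad = 0.0;   // derivative of the running product
    for (std::size_t k = 0; k < w.size(); ++k) {
        // Product rule; the derivative is updated before the weight,
        // in the same order as in Example 5.
        grad = weight * dw[k] + w[k] * grad;
        weight *= w[k];
    }
    return {weight, grad};
}
```

Memory use stays constant in the number of steps, in contrast with Example 4, where the whole trajectory is kept for reverse-mode differentiation.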

Cite this article

Grinis, R. Differentiable Programming for Particle Physics Simulations. J. Exp. Theor. Phys. 134, 150–156 (2022). https://doi.org/10.1134/S1063776122020042

DOI: https://doi.org/10.1134/S1063776122020042