Abstract—

The characteristics of a specific event-related potential elicited by sound motion onset (motion-onset response, MOR) were estimated. Moving sound stimuli with delayed motion onset were presented to right-handed subjects in dichotic conditions. Sound motion was produced by linear changes of interaural time differences. The amplitude and latency of cN1 and cP2 components of evoked potentials were measured in a wide range of velocities. The latency of cN1 increased linearly with the increase in time of 1° azimuthal shift of a stimulus, whereas the cP2 latency remained nearly constant. The time interval of motion integration was about 140–180 ms. Thus, the latency of cN1 deflection may be considered as an electrophysiological correlate of integrative neuronal processes activated by sound motion presented as a separate auditory event.


INTRODUCTION

Human perception of moving sound sources remains the subject of numerous neurophysiological studies. For many years, the sensitivity of the human auditory system to sound motion has been studied with psychophysical methods. The minimum audible angle (MAA) is a measure of static auditory spatial resolution, whereas the minimum audible movement angle (MAMA) describes dynamic resolution. An increase in stimulus velocity results in a linear growth of MAMA, while the motion detection time, defined as the time needed to reach MAMA, decreases with increasing velocity [1–5]. The deterioration of spatial resolution with growing stimulus velocity stems from the response time of the auditory system: the motion of a sound source is detected not instantaneously, but after a certain time interval needed for the integration of acoustic information. According to the literature, integration time values for the human auditory system vary broadly, depending on the assessment method and the type of auditory stimulation. Studies of the boundary conditions for motion perception under dichotic stimulation yielded integration times of 80–150 ms [4, 6]. When the spatial resolution of hearing (MAMA) was determined in a free sound field, this time period reached several hundred milliseconds [3, 7].

Studying the neuronal mechanisms of auditory processing of moving stimuli by analyzing electroencephalograms (EEGs), including auditory event-related potentials, expands the understanding of the temporal characteristics of responses to motion. Temporal separation of stimulus onset and motion onset (the so-called delayed motion-onset paradigm) is used to assess the motion-onset response (MOR) separately from the nonspecific response to sound. MOR consists of an early negative (cN1) and a late positive (cP2) component and is considered an electrophysiological correlate of moving stimulus localization [8–10]. The MOR has a longer latency than the response to stimulus onset (at least 140 ms after motion onset) and is primarily localized in the hemisphere contralateral to the direction of sound motion [11]. The influence of stimulus velocity on MOR parameters was described in the studies by S. Getzmann, by S. Getzmann and J. Lewald, and in our previous research [10, 12, 13]. It was found that the amplitudes of the cN1 and cP2 components increased monotonically with increasing motion velocity, while their latencies decreased.

The correspondence between psychophysical and electrophysiological temporal characteristics of moving stimulus localization has not been thoroughly studied. Studies of this subject have focused primarily on visual perception and have found a correlation between the features of visual potentials evoked by motion onset and parallel psychophysical measures [14]. The authors demonstrated that evoked potential latency and response time depended in the same way on stimulus coherence, which modulated the degree of its spatial dynamics. It was later demonstrated that both the latency of the visual evoked potential and the response time to motion onset decreased with increasing motion velocity, and both parameters were well approximated by the same negative power function [15]. The authors proposed that motion could be detected as soon as the evoked potential reached a specific critical value.

Studies of sound localization found that the characteristics of the MOR correlated strongly with the reaction time during subjective motion discrimination [10, 16]. As shown in our recent psychophysical study that used a delayed motion onset paradigm, motion detection time is a linear function of the time of a 1° azimuthal shift of a stimulus [5]. Based on the available data, it can be presumed that MOR latency can serve as an objective measure of the temporal integration of spatial information in the auditory system during movement of the sound source. A linear dependence of both MOR latency and motion detection time on the 1° shift time would support this hypothesis.

As reported in the literature, the MOR can be registered for stimulus motion at velocities exceeding 60 deg/s [9, 10, 12, 16–20], whereas humans are able to discriminate much slower motion at the psychophysical level [2, 21–27]. We suggest that this discrepancy might originate from the relation between the time interval required for the generation of the evoked response and the time needed for the sound stimulus to travel the minimum audible movement angle (MAMA).

To summarize, the purpose of this study was to determine the time interval characterizing the integration of spatial information in MOR generation. The correlation between the components of MOR potential and sound motion velocity produced by changes of interaural time differences was investigated.

The aim of the work was to determine the boundary conditions for MOR generation by measuring the amplitude and latency of cN1 and cP2 components in a wide range of velocities including the minimum velocity providing reliable identification of MOR, and to find a correlation between MOR latency and a 1° azimuthal shift of a stimulus.

METHODS

Experimental conditions and subjects. The experiments involved 13 right-handed volunteers with normal hearing aged from 19 to 41 years (mean age of 26.1 ± 1.8 years, 2 men and 11 women).

Before the experiment, all subjects underwent standard audiometry. Subjects were seated on a chair in a shielded sound-attenuated chamber. The sound stimuli were presented in dichotic conditions while the subjects were reading a book of their choice.

Stimuli. Dichotic sound signals were presented through Etymotic ER–2 headphones (Etymotic Research Inc., United States). The sound tubes of the earphones were fixed in the auditory meatus with earmolds that attenuated external noise by 30 dB. The unevenness of the amplitude-frequency response of the earphones within the range of 0.1–10 kHz was ±3 dB. The intensity of auditory signals was 50 dB above the hearing threshold. The signals were segments of white noise with a bandwidth of 100–1300 Hz, synthesized digitally at a sampling rate of 96 kHz. Signals were converted to analog form with a Gina24 multichannel audio interface (Echo Audio, United States). All sound signals had a duration of 2000 ms, not counting the 10-ms rise and fall ramps, which were smoothed with a cosine function.

Signals were spatially localized according to the interaural time difference (ΔT) between the binaural signals. Stimuli were generated from the same reference signal by linearly shifting the samples within the motion segment in accordance with the preselected ΔT value, followed by resampling and spline interpolation of the samples. To imitate motion, the transformed signal was presented to one ear and the reference signal to the other.

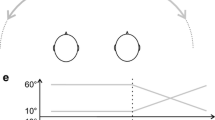

The moving stimuli consisted of three consecutive phases: an initial phase with zero interaural time difference (duration 1000 ms), a linear ramp of the interaural time difference from 0 to ±800 µs (duration 250, 375, 600, or 1000 ms), and a final phase with a constant interaural time difference ΔT = ±800 µs that lasted until the end of the signal, for a total duration of 2000 ms (Fig. 1). For the slowest ΔT ramp (1000 ms), there was no final stationary phase. These stimuli evoked the sensation of an auditory image that was initially stationary at the head midline and then gradually shifted to the left or to the right of the midline. The angular velocities of the stimuli, estimated from the ratio 800 µs ↔ 90°, were 360, 240, 150, and 90 deg/s, respectively. This ratio was chosen because ΔT = 800 µs provides full lateralization of an auditory stimulus in the majority of subjects [4]. In addition to the moving stimuli, a stationary stimulus with ΔT = 0 µs was used to evoke the sensation of an auditory image located at the head midline.
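The three-phase ΔT profile and the nominal velocities quoted above can be reproduced with a few lines of arithmetic. The following is only an illustrative sketch: the function names `itd_at` and `angular_velocity` are hypothetical, and the linear 800 µs ↔ 90° mapping is the approximation stated in the text, not a measured psychometric relation.

```python
MAX_ITD_US = 800.0       # ITD giving full lateralization (from the text)
MAX_AZIMUTH_DEG = 90.0   # azimuth assumed to correspond to MAX_ITD_US
STATIONARY_MS = 1000.0   # initial phase with zero ITD


def itd_at(t_ms, ramp_ms, direction=1):
    """ITD in microseconds at time t_ms after stimulus onset.

    direction is +1 or -1 (which ear leads); the profile is zero during the
    stationary phase, linear during the ramp, and constant afterwards.
    """
    if t_ms <= STATIONARY_MS:
        return 0.0
    elapsed = min(t_ms - STATIONARY_MS, ramp_ms)
    return direction * MAX_ITD_US * elapsed / ramp_ms


def angular_velocity(ramp_ms):
    """Nominal angular velocity in deg/s for a ramp lasting ramp_ms."""
    return MAX_AZIMUTH_DEG * 1000.0 / ramp_ms


# Ramp durations used in the study, in milliseconds.
velocities = [angular_velocity(d) for d in (250, 375, 600, 1000)]
print(velocities)  # [360.0, 240.0, 150.0, 90.0]
```

The printed values match the four velocities listed in the text, which confirms that they follow directly from the ramp durations under the assumed linear mapping.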

Fig. 1. Event-related potentials in the delayed motion onset paradigm. (a) Scheme of auditory stimuli modeling sound motion by changing the interaural time difference (ΔT); (b) grand average MOR potentials (N = 13). Time from motion onset is plotted along the x-axis. a, 360; b, 240; c, 150; d, 90 deg/s; and e, stationary stimulus.

Experimental procedure. At the preliminary stage of each experiment, the hearing thresholds of both ears were estimated for each subject. The sound intensity was set at 50 dB above the hearing threshold for each ear. Four motion velocities were tested in separate series. Each series included 40 stationary stimuli, 40 stimuli moving leftward, and 40 stimuli moving rightward from the head midline, presented in a quasi-random order (120 stimuli in total). Only one motion velocity was used within a single series; series with different motion velocities were also presented in a quasi-random order.

The signals were presented at intervals of 4000 ms. Each presentation cycle included a prestimulus pause of 1000 ms, a signal with a duration of 2000 ms (variants described above), and a poststimulus pause of 1000 ms. The duration of each series was 8 min. The subjects had a break every 25–30 min. Series at each motion velocity were repeated six times, resulting in 240 presentations for each experimental condition.
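The series duration and the number of presentations per condition follow from the figures given in the procedure. A minimal check, with hypothetical variable names, is:

```python
STIMULI_PER_TYPE = 40                    # per series, for each stimulus type
STIMULUS_TYPES = 3                       # stationary, leftward, rightward
CYCLE_MS = 1000 + 2000 + 1000            # prestimulus pause, signal, poststimulus pause
REPEATS_PER_VELOCITY = 6

stimuli_per_series = STIMULI_PER_TYPE * STIMULUS_TYPES
series_minutes = stimuli_per_series * CYCLE_MS / 60000
presentations_per_condition = STIMULI_PER_TYPE * REPEATS_PER_VELOCITY

print(stimuli_per_series, series_minutes, presentations_per_condition)
# 120 8.0 240
```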

EEG Registration. The EEG was recorded using 32 silver chloride electrodes placed in accordance with the international 10–20 system, with the PO3/PO4 electrodes replaced by C5/C6. Additional electrodes outside the cap were placed on the earlobes and nose. Horizontal and vertical electrooculograms were recorded with electrodes located above the right and under the left eyes. The recording was performed using a digital encephalograph (Active Two, BIOSEMI, the Netherlands) and custom software for signal filtering and recording to a computer hard drive. The EEG was digitized within the bandwidth of 0–102 Hz at a sampling rate of 2048 Hz. Afterwards, the sampling rate was reduced to 512 Hz for data compression.

Data Analysis. The continuous EEG record was divided into epochs of 4000 ms. Epochs containing atypical artifacts were removed manually after visual inspection for excessive potential deflections in several channels (on average 2% of epochs). The potential was then recalculated to a common average reference and filtered in the bandwidth of 0.5–45 Hz. Next, the EEG was baseline corrected to a prestimulus window of 1000 ms. The remaining artifacts were removed with independent component analysis (ICA) based on the infomax algorithm as implemented in the EEGLab toolbox for MATLAB [28]. The algorithm was optimized for detecting eye movements, blinks, and poor skin contact. After artifact removal the EEG was reconstructed and re-referenced to the linked earlobe electrodes. To isolate event-related potentials, the EEG was additionally filtered in the bandwidth of 2–30 Hz. Then, the EEG was baseline corrected to the window of 200 ms before motion onset. Approximately the same number of epochs suitable for further analysis (235 ± 1) was retained for each subject in each condition.
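The final baseline-correction step can be illustrated with a short sketch. It is not the authors' EEGLab pipeline: `baseline_correct` is a hypothetical helper operating on a single-channel epoch stored as a plain list, and the motion onset position of 2000 ms within the epoch is an assumption derived from the described timing (1000 ms prestimulus pause plus 1000 ms stationary phase).

```python
def baseline_correct(epoch, fs_hz, onset_ms, baseline_ms=200.0):
    """Subtract the mean of the baseline_ms window that precedes onset_ms.

    epoch    -- list of samples for one channel, index 0 at epoch start
    fs_hz    -- sampling rate in Hz
    onset_ms -- latency of the event (here, motion onset) within the epoch
    """
    onset_idx = int(round(onset_ms * fs_hz / 1000.0))
    n_base = int(round(baseline_ms * fs_hz / 1000.0))
    start = onset_idx - n_base
    if start < 0:
        raise ValueError("baseline window starts before the epoch")
    baseline = epoch[start:onset_idx]
    offset = sum(baseline) / len(baseline)
    return [x - offset for x in epoch]


# A 4000-ms epoch at 512 Hz with a constant 5-unit offset (synthetic data).
demo = baseline_correct([5.0] * 2048, fs_hz=512, onset_ms=2000)
print(max(abs(x) for x in demo))  # 0.0
```

Referencing the baseline to motion onset rather than to stimulus onset removes the slow residual of the onset response, so the cN1 and cP2 amplitudes are measured relative to the activity immediately preceding the motion.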

EEG records in each of the 32 channels were averaged individually for each type of stimuli and each subject. Next, the individual averaged records were averaged across 24 frontocentral electrodes (Fz, Cz, Fp1, AF3, F3, F7, FC1, FC5, C3, C5, T7, CP1, CP5, Fp2, AF4, F4, F8, FC2, FC6, C4, C6, T8, CP2, and CP6) and then across the experimental group to obtain grand average potentials. To assess MORs, individual peak latencies and mean MOR amplitudes were measured in 50-ms time windows centered on the cN1 and cP2 peaks of the grand average potentials.
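Measuring a mean amplitude in a peak-centered 50-ms window can be sketched as follows. This is a simplified illustration on synthetic data: `peak_window_mean` is a hypothetical helper that locates the peak in the same waveform it measures, whereas the study centered the window on the peak of the grand average; the search range is also an assumption.

```python
def peak_window_mean(wave, fs_hz, search_ms, polarity, window_ms=50.0):
    """Return (peak latency in ms, mean amplitude in a peak-centered window).

    wave      -- samples with index 0 at motion onset
    search_ms -- (start, stop) latency range searched for the peak
    polarity  -- -1 for a negative deflection (cN1), +1 for a positive one (cP2)
    """
    lo = int(round(search_ms[0] * fs_hz / 1000.0))
    hi = int(round(search_ms[1] * fs_hz / 1000.0))
    peak_idx = max(range(lo, hi + 1), key=lambda i: polarity * wave[i])
    half = int(round(window_ms / 2.0 * fs_hz / 1000.0))
    start = max(peak_idx - half, 0)
    stop = min(peak_idx + half + 1, len(wave))
    segment = wave[start:stop]
    return peak_idx * 1000.0 / fs_hz, sum(segment) / len(segment)


# Synthetic waveform at 1000 Hz: a triangular negative dip peaking at 180 ms.
wave = [-max(0, 30 - abs(i - 180)) for i in range(500)]
latency_ms, mean_amp = peak_window_mean(wave, 1000, (100, 300), polarity=-1)
print(latency_ms)  # 180.0
```

Averaging over a window rather than taking the single peak sample makes the amplitude estimate less sensitive to residual noise in individual averages.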

The measured indices were first subjected to analysis of variance to evaluate the impact of motion direction. Individual mean MOR values (N = 13) were compared using two-way repeated measures ANOVA (rmANOVA) with the factors “Velocity” (90, 150, 240, and 360 deg/s) and “Direction” (leftward, rightward). The “Direction” factor did not show a significant effect on the studied variables; therefore, the responses to leftward and rightward stimuli were combined. One-way rmANOVA with the “Velocity” factor (90, 150, 240, and 360 deg/s) was then applied for the final comparisons. The Greenhouse–Geisser correction was used for data lacking sphericity. The Bonferroni correction was applied in multiple comparisons. All comparisons were performed at the significance level p < 0.05.
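The Bonferroni correction for pairwise comparisons between velocities can be illustrated with a small sketch. The text does not state how many comparisons formed the corrected family; the example below assumes all six pairwise comparisons among the four velocities, and `bonferroni_adjust` is a hypothetical helper.

```python
from itertools import combinations

velocities = (90, 150, 240, 360)          # deg/s
pairs = list(combinations(velocities, 2)) # all pairwise comparisons
alpha = 0.05

# Per-comparison threshold that keeps the family-wise error rate at alpha.
alpha_per_test = alpha / len(pairs)


def bonferroni_adjust(p_values):
    """Multiply each p-value by the number of tests, capping at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]


print(len(pairs))                      # 6
print(bonferroni_adjust([0.01, 0.5]))  # [0.02, 1.0]
```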

RESULTS

Auditory stimulation induced two separate responses: to stimulus onset and to motion onset. Sound onset responses consisted of the classical negative N1 and positive P2 components. MOR consisted of negative cN1 and positive cP2 components. A motion response was obtained for auditory stimuli moving at velocities of 90, 150, 240, and 360 deg/s.

Amplitude and latency of MOR components depended strongly on stimulus motion velocity (Fig. 1, Table 1), in agreement with the literature [10, 29]. The reliability of MOR in each experimental condition was verified with a one-tailed one-sample t-test against zero applied to the individual mean MOR amplitudes (N = 13) measured in the 50-ms time window centered on the peak of the grand average curve. For motion velocities of 150, 240, and 360 deg/s, MOR was identified with high significance (p < 0.001), whereas only borderline significance was found for stimuli moving at 90 deg/s (p = 0.06).
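The test of response presence against zero can be sketched with the standard library. The amplitudes below are invented for illustration and are not the study data; `one_sample_t` is a hypothetical helper, and the decision uses the tabulated one-tailed critical value for 12 degrees of freedom instead of an exact p-value.

```python
import math
from statistics import mean, stdev


def one_sample_t(values, mu0=0.0):
    """Return (t statistic, degrees of freedom) for a one-sample t-test."""
    n = len(values)
    standard_error = stdev(values) / math.sqrt(n)
    return (mean(values) - mu0) / standard_error, n - 1


# Hypothetical cN1 mean amplitudes (microvolts) for 13 subjects.
amplitudes = [-0.9, -0.4, -1.2, -0.1, -0.7, 0.2, -0.5,
              -1.0, -0.3, -0.8, -0.6, 0.1, -0.4]

t_stat, df = one_sample_t(amplitudes)

# cN1 is a negative deflection, so the one-tailed test uses the lower tail.
T_CRIT_ONE_TAILED_05_DF12 = 1.782  # tabulated value for alpha = 0.05, df = 12
is_negative = t_stat < -T_CRIT_ONE_TAILED_05_DF12
print(df, is_negative)  # 12 True
```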

Analysis of variance (rmANOVA, “Velocity”: 90, 150, 240, and 360 deg/s) of cN1 and cP2 amplitudes revealed a significant effect of velocity (F(2.09, 25.12) = 19.28, p < 0.001 for cN1 and F(2.75, 32.99) = 4.80, p < 0.01 for cP2). Subsequent pairwise comparisons of responses to adjacent velocities revealed significant differences only for the cN1 component between the velocities of 150 and 240 deg/s (p < 0.01). However, comparisons of responses to non-adjacent velocities demonstrated a significant amplitude increase with increasing velocity (p < 0.05). Amplitudes of the cP2 wave differed significantly only between the lowest and the highest velocities (p < 0.01). The dependences of MOR component amplitudes on motion velocity are presented in Fig. 2, which illustrates a stronger influence of velocity on cN1 than on cP2.

Analysis of variance (rmANOVA, “Velocity”: 90, 150, 240, and 360 deg/s) of cN1 and cP2 peak latencies also revealed a significant effect of velocity for cN1 (F(1.63, 19.60) = 10.27, p < 0.01), but not for cP2 (p > 0.05). According to pairwise comparisons, the effect of velocity on cN1 latency was significant only between responses to non-adjacent velocities. The dependences of MOR component latencies on motion velocity are presented in Fig. 3 together with the literature data.

Fig. 3. Latency of MOR components as a function of stimulus motion velocity according to the literature data. Stimuli moved to the left or to the right from the head midline. Different markers indicate different conditions of auditory stimulation. Diamonds indicate dichotic stimulation with interaural differences in time (ΔT) and/or intensity (ΔL); circles indicate virtual acoustic reality; crosses indicate free sound field. Markers are connected with a solid line for the results of the same study. Dashed lines with arrows indicate the integration time interval estimated following D.W. Chandler and D.W. Grantham, 1992 [3] (see text for details). 1, data of the present study (ΔT); 2, S. Getzmann, J. Lewald, 2010 (ΔT) [16]; 3, S. Getzmann, J. Lewald, 2010 (ΔL) [16]; 4, C.F. Altmann et al., 2017 (ΔT and ΔL) [20]; 5, K. Krumbholz et al., 2007 (ΔT) [9]; 6, S. Getzmann, 2009 (HRTF) [10]; 7, S. Getzmann, J. Lewald, 2010 (HRTF) [16]; 8, R. Grzeschik et al., 2010 (HRTF) [17]; 9, S. Getzmann, J. Lewald, 2012 (free field) [12]; and 10, S. Getzmann, J. Lewald, 2010 (free field) [16].

Since cP2 latency did not depend on velocity, all further calculations were performed using cN1 latency.

Using the estimated velocity values, the azimuthal shift of the stimulus from the midline can be calculated with formula (1). To separate the specialized analysis of dynamic changes from the transmission of information from peripheral receptors to the auditory cortex, which determines the N1 wave latency, the shift time should be calculated as the difference between the cN1 and N1 latencies (Table 1).

$$S = \frac{v\,(\mathrm{lat}_{\mathrm{cN1}} - \mathrm{lat}_{\mathrm{N1}})}{1000}, \tag{1}$$

where S (deg) is the azimuthal shift of the stimulus; lat cN1 (ms) is the peak latency of cN1; lat N1 (ms) is the peak latency of N1; v (deg/s) is the sound motion velocity; and the factor 1000 converts milliseconds to seconds. The estimated azimuthal shift for each velocity is presented in Table 1. The linear relation between the shift and stimulus velocity is shown in Fig. 4. The smallest shift associated with MOR generation, about 10°, was found at the lowest motion velocity (90 deg/s).
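As a worked example of formula (1), the shift can be computed as velocity multiplied by the cN1 and N1 latency difference, with milliseconds converted to seconds. This form is an assumption inferred from the symbol definitions and from the arithmetic given in the Discussion; the function name is hypothetical. The latencies used for 90 deg/s (208 and 101 ms) are the values quoted in the Discussion, and the second call repeats the 50 deg/s example given there.

```python
def azimuthal_shift_deg(velocity_deg_s, lat_cn1_ms, lat_n1_ms):
    """Azimuthal shift S (deg) covered during the cN1 minus N1 latency difference."""
    return velocity_deg_s * (lat_cn1_ms - lat_n1_ms) / 1000.0


slowest = azimuthal_shift_deg(90, 208, 101)
hypothetical_slow = azimuthal_shift_deg(50, 180, 100)
print(round(slowest, 2), hypothetical_slow)  # 9.63 4.0
```

The first value rounds to the 10° reported as the smallest shift associated with MOR generation.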

Fig. 4. Azimuthal shift of auditory stimuli as a function of motion velocity. Black circles with vertical lines indicate shift values calculated from the experimental data and their standard errors. The equation expresses the linear approximation of the data. The diamond indicates the shift value exceeding the minimum value by 25%, which was used to determine the integration interval (see text for details).

The algorithm described by D.W. Chandler and D.W. Grantham [3] was applied to estimate the optimal integration time for MOR generation: the azimuthal shift exceeding the minimal shift of about 10° by 25% was calculated, which gave approximately 12°. The linear relation between azimuthal shift and velocity (Fig. 4) made it possible to estimate the velocity corresponding to a stimulus shift of 12°, which was 158 deg/s. The corresponding cN1 latency can be considered the optimal time for processing motion parameters during MOR formation. According to the dependence of latency on velocity shown in Fig. 3, the optimal integration time is around 180 ms from motion onset.

Next, the time of a 1° azimuthal shift of a stimulus, t (ms/deg), was calculated for each motion velocity according to formula (2):

$$t = \frac{1000}{v}, \tag{2}$$

where v (deg/s) is the velocity of sound motion. These values were inversely proportional to velocity: the higher the velocity, the less time a 1° shift takes. Figure 5 depicts MOR component latency as a function of the 1° shift time. The dependence of cN1 latency on the 1° shift time was almost strictly linear (linear regression, R² = 1.0). Extrapolation of this dependence to zero (intercept, Fig. 5) provided the minimal possible cN1 latency that could theoretically be observed for an instantaneous stimulus displacement. Based on our experimental data, this value was 136 ms.
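The 1° shift time and the linear extrapolation can be illustrated numerically. Formula (2) is taken here as t = 1000/v, an assumption that follows from the stated units (ms/deg and deg/s). Because Table 1 is not reproduced in this text, the line below is not the authors' regression: it is a two-point stand-in built from the reported intercept (136 ms) and the cN1 latency quoted in the Discussion for 90 deg/s (208 ms), justified only by the reported R² of 1.0. All names are hypothetical.

```python
def shift_time_ms_per_deg(velocity_deg_s):
    """Time needed for a 1-degree azimuthal shift, in ms/deg."""
    return 1000.0 / velocity_deg_s


shift_times = {v: shift_time_ms_per_deg(v) for v in (90, 150, 240, 360)}

INTERCEPT_MS = 136.0          # reported extrapolated minimal cN1 latency
LAT_CN1_AT_90_MS = 208.0      # cN1 latency at 90 deg/s quoted in the Discussion

# Slope of the stand-in line, in ms of latency per (ms/deg) of shift time.
slope = (LAT_CN1_AT_90_MS - INTERCEPT_MS) / shift_time_ms_per_deg(90)


def predicted_cn1_latency_ms(velocity_deg_s):
    """cN1 latency predicted by the two-point line for a given velocity."""
    return INTERCEPT_MS + slope * shift_time_ms_per_deg(velocity_deg_s)


print(round(slope, 2))                          # 6.48
print(round(predicted_cn1_latency_ms(158), 1))  # 177.0
```

Under these assumptions the line gives about 177 ms at 158 deg/s, which is consistent with the optimal integration time of around 180 ms reported in the text.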

Latency of the cP2 component was almost independent of the 1° shift time. Linear regression showed a nonsignificant slope and a lower coefficient of determination than for cN1 (Fig. 5, linear regression, R² = 0.4).

DISCUSSION

The MOR characteristics found in the present study largely agreed with the literature data. Comparison of results from different studies (Fig. 3) demonstrated that the latency of both MOR components decreased with increasing stimulus velocity and that this decrease was more prominent for the negative cN1 wave. MOR latencies were slightly shorter during stimulation in a free sound field or in virtual acoustic reality than with interaural time differences.

Time interval of motion integration. The pivotal theoretical question is how the time interval characterizing the integration of spatial information can be evaluated using MOR parameters. The sensation of sound motion arises once the sound source has shifted by the minimum detectable angle. As soon as this event occurs, neural processes are triggered that are similar to those involved in the generation of the N1 component after stimulus onset [30, 31]. This results in the formation of a cN1 wave, whose latency from motion onset substantially exceeds the N1 latency from stimulus onset (Table 1). Establishing the form of the dependence of cN1 latency on motion velocity is a critically important result of this experiment.

There is a fundamental connection between the temporal characteristics of perception and stimulus motion velocity. Key psychophysical studies of sound motion perception suggest that the minimum stimulus duration needed for motion recognition depends on stimulus velocity as a nearly hyperbolic decay function [2, 3, 27]. A similar dependence of both motion-onset evoked potential latency and response time on stimulus velocity was found for visual perception [15]. Our recent psychophysical study not only confirmed that motion detection time (the time to reach MAMA) decreases with increasing sound motion velocity, but also revealed its linear dependence on the time of a 1° azimuthal shift [5]. According to the present results, cN1 latency also increased linearly with the time of a 1° azimuthal shift, in contrast to cP2 latency, which remained nearly constant (Fig. 5), as shown by the absence of a statistically significant effect of velocity. The linear dependence of cN1 latency on the time of a 1° azimuthal shift is a crucial result that indicates the similarity of motion detection mechanisms at the preconscious level (formation of the cN1 wave) and at the level of conscious recognition (time to reach MAMA) [5]. Based on these results, cN1 latency can be considered the major electrophysiological parameter of the integration of dynamic spatial information.

This conclusion is supported by the concept of stepwise processing of moving auditory stimuli, according to which analysis of the starting point of the motion trajectory primarily affects the parameters of the cN1 wave, whereas processing of information about motion direction (centripetal or centrifugal) takes more time and is reflected in cP2 characteristics [10, 11, 32, 33]. Furthermore, there is experimental evidence that cN1 formation involves the activation of sources sensitive to motion direction along with a neural population that responds to any spatial change [19]. In addition, the characteristics of the cN1 component depend little, if at all, on the direction of attention [34, 35]. Considering these facts, the estimation of the time interval required for the integration of information about motion parameters should rely on the latency of the cN1 component.

These linear functions make it possible to assess the limiting temporal characteristics of motion perception from the intercept. In this way, V.V. Semenova et al. determined the time needed for psychophysical detection of an instantaneous stimulus shift [5]. This time interval was equal to 10 ms and was interpreted as the minimum integration time for high sound velocities. Similarly, the minimum possible cN1 latency that could be observed for an instantaneous stimulus shift was determined by extrapolating the linear dependence of cN1 latency on the 1° azimuthal shift time to zero (Fig. 5). The estimated minimal latency was 136 ms, which fully agrees with the results of our previous experiment on MOR registration using an instantaneous stimulus shift [13]. Rounding this value, it can be concluded that the characteristics of the cN1 component reflect the integration of spatial information for high motion velocities, which occurs within 140 ms after motion onset.

Nevertheless, apart from the specialized analysis of dynamic changes, this time interval includes nonspecific processes of information transmission from peripheral receptors to the auditory cortex. According to magnetoencephalography (MEG) data, information about the most definite acoustic event, i.e., signal onset, reaches different areas of the auditory cortex within 20–80 ms [36]. The N1 wave with a peak latency of around 100 ms predominantly reflects the activation of the planum temporale and is determined by the occurrence of a new auditory event rather than by stimulus characteristics [30]. Assuming that the transmission of information about an event contributes equally, by around 100 ms, to the N1 and cN1 latencies, it can be proposed that motion processing requires at least 40 ms in addition. This value corresponds to the localization delay calculated from the shift in the perceived location of the starting point of the sound motion trajectory [37], as well as to the optimal integration time of spatial information obtained in our psychophysical study [5]. As shown in that study, stimuli moving at velocities of 90 deg/s and above cover the azimuthal distance corresponding to MAMA for these velocities in around 40 ms. Slower motion requires more than 40 ms to reach MAMA.

D.W. Chandler and D.W. Grantham proposed that the optimal integration time corresponds to the stimulus shift exceeding MAA by 25% [3]. Applying this criterion and using the dependence of cN1 latency on velocity found in our experiment (Fig. 3), we estimated the time required to accumulate most of the information about stimulus motion during MOR formation. This time interval was about 180 ms after motion onset. The estimation of motion direction continues at later stages and affects the characteristics of the cP2 component [11].

The slower the stimulus moves, the more time is needed to evaluate its motion, which explains the dependence of cN1 latency on velocity. The maximum cN1 latency observed in our experiment, for the slowest stimulus motion, was 208 ms. In the current study the slowest motion was modeled by a linear change in interaural time difference ΔT from 0 to 800 µs over 1000 ms, corresponding to the estimated velocity of 90 deg/s. The statistical test of cN1 detection showed a high level of significance for velocities above 90 deg/s; at the velocity of 90 deg/s, however, the significance dropped to a borderline level (p = 0.06). Extrapolation of the obtained dependence of cN1 amplitude on velocity (Fig. 2) showed that the cN1 amplitude approaches zero at velocities below 90 deg/s, which explains why relatively high angular velocities and azimuthal shifts have traditionally been used for MOR registration in other experiments. For the same reason, the upper limit of the time interval for MOR had not been estimated experimentally until now.

Spatial integration window. In psychophysical studies of sound motion perception, the resolution of the auditory system is measured by MAMA, whereas MAA is the detection threshold for an azimuthal shift. In theory, the MAA value can be derived by linear extrapolation of the dependence of MAMA on stimulus velocity [3]. The present study involved the same subject sample as our earlier experiment [5], in which MAMA was measured psychophysically. Extrapolation of the dependence of MAMA on velocity yielded an estimated MAA of 4°.

A similar estimate can be obtained from our results for different stimulus motion velocities (Fig. 4). Extrapolation of the relation presented in Fig. 4 gives a shift of 6°, which falls within the range of MAA values reported in the majority of psychophysical studies [5, 26]. However, MOR cannot be registered for such short trajectories. According to our data, a shift of 10° was obtained for the slowest stimulus, given the time needed to process the dynamic characteristics of the stimulus (208 – 101 = 107 ms). Such an estimate of the shift time is undoubtedly inaccurate; the actual shift time might be longer, although it cannot be determined precisely. Taking these limitations into account, 10° can be considered the smallest shift angle at which MOR was obtained in this experiment.

For example, if the stimulus moves at a velocity of 50 deg/s, then 180 ms after motion onset it will have shifted by about 50 × (180 – 100)/1000 = 4°. If the minimum azimuthal shift for MOR generation is about 10°, a 4° shift might not evoke the potential. Nevertheless, according to the available data, a gradual shift of a stimulus lasting 100–200 ms over an azimuthal distance exceeding 5° is well discernible during active localization and evokes mismatch negativity during passive listening [38–41]. To summarize, the spatiotemporal resolution of MOR estimated in this study suggests limited applicability of the MOR potential as an index of nervous system activity in sound motion perception.

CONCLUSIONS

The experimental results demonstrated that cN1 latency increased linearly with the time of a 1° azimuthal shift of a stimulus, whereas cP2 latency remained nearly constant. Thus, the latency of the cN1 deflection may be considered an electrophysiological correlate of integrative neuronal processes activated by sound motion presented as a separate auditory event. The time interval of motion integration was about 140–180 ms. The lower limit of this interval represented the time required for processing dynamic spatial information associated with fast sound motion. It exceeded the time needed for processing an auditory event (as indexed by the latency of the N1 wave) by 40 ms. As follows from the behavioral measurements [5], this interval was required for the auditory system to achieve optimal spatial resolution during perception of sound motion. The minimum azimuthal shift required for MOR generation was 10°. At this stage of research, the spatial resolution of MOR appears to be significantly inferior to the psychophysical parameters.

REFERENCES

Perrott, D.R. and Musicant, A.D., Minimum audible movement angle: binaural localization moving sound, J. Acoust. Soc. Am., 1977, vol. 62, no. 6, p. 1463.

Grantham, D.W., Detection and discrimination of simulated motion of auditory targets in the horizontal plane, J. Acoust. Soc. Am., 1986, vol. 79, no. 6, p. 1939.

Chandler, D.W. and Grantham, D.W., Minimum audible movement angle in the horizontal plane as a function of stimulus frequency and bandwidth, source azimuth, and velocity, J. Acoust. Soc. Am., 1992, vol. 91, no. 3, p. 1624.

Al’tman, Ya.A., Prostranstvennyi slukh (Spatial Hearing), St. Petersburg: Inst. Fiziol. im. I. P. Pavlova Ross. Akad. Nauk, 2011.

Semenova, V.V., Shestopalova, L.B., Petropavlov-skaya, E.A., and Nikitin, N.I., Perception constants for delayed movement of sound stimuli, Usp. Fiziol. Nauk, 2020, vol. 51, no. 2, p. 55.

Al’tman, Ya.A., Lokalizatsiya dvizhushchegosya istochnika zvuka (Localization of a Moving Sound Source), Leningrad: Nauka, 1983.

Saberi, K. and Hafter, E.R., Binaural and Spatial Hearing in Real and Virtual Environments, Gilkey, R.H. and Anderson, T.R., Eds., Erlbaum, 1997.

Varfolomeev, A.L. and Starostina, L.V., Auditory evoked potentials in humans on illusory sound image movement, Ross. Fiziol. Zh. im. I. M. Sechenova, 2006, vol. 92, no. 9, p. 1046.

Krumbholz, K., Hewson-Stoate, N., and Schönwiesner, M., Cortical response to auditory motion suggests an asymmetry in the reliance on inter-hemispheric connections between the left and right auditory cortices, J. Neurophysiol., 2007, vol. 97, no. 2, p. 1649.

Getzmann, S., Effect of auditory motion velocity on reaction time and cortical processes, Neuropsychologia, 2009, vol. 47, no. 12, p. 2625. https://doi.org/10.1016/j.neuropsychologia.2009.05.012

Getzmann, S., Auditory motion perception: onset position and motion direction are encoded in discrete processing stages, Eur. J. Neurosci., 2011, vol. 33, no. 7, p. 1339. https://doi.org/10.1111/j.1460-9568.2011.07617.x

Getzmann, S. and Lewald, J., Cortical processing of change in sound location: smooth motion versus discontinuous displacement, Brain Res., 2012, vol. 1466, p. 119.

Shestopalova, L.B., Petropavlovskaya, E.A., Semenova, V.V., and Nikitin, N.I., Event-related potentials to sound stimuli with delayed onset of motion in conditions of active and passive listening, Neurosci. Behav. Physiol., 2018, vol. 48, no. 1, p. 90.

Patzwahl, D.R. and Zanker, J.M., Mechanisms of human motion perception: combining evidence from evoked potentials, behavioural performance and computational modelling, Eur. J. Neurosci., 2000, vol. 12, no. 1, p. 273.

Kreegipuu, K. and Allik, J., Detection of motion onset and offset: reaction time and visual evoked potential analysis, Psychol. Res., 2007, vol. 71, no. 6, p. 703.

Getzmann, S. and Lewald, J., Effects of natural versus artificial spatial cues on electrophysiological correlates of auditory motion, Hear. Res., 2010, vol. 259, nos. 1–2, p. 44.

Grzeschik, R., Böckmann-Barthel, M., Mühler, R., and Hoffmann, M.B., Motion-onset auditory-evoked potentials critically depend on history, Exp. Brain Res., 2010, vol. 203, no. 1, p. 159.

Grzeschik, R., Böckmann-Barthel, M., Mühler, R., et al., Direction-specific adaptation of motion-onset auditory evoked potentials, Eur. J. Neurosci., 2013, vol. 38, no. 4, p. 2557.

Grzeschik, R., Lewald, J., Verhey, J.L., et al., Absence of direction-specific cross-modal visual-auditory adaptation in motion-onset ERPs, Eur. J. Neurosci., 2016, vol. 43, no. 1, p. 66.

Altmann, C.F., Ueda, R., Bucher, B., et al., Trading of dynamic interaural time and level difference cues and its effect on the auditory motion-onset response measured with electroencephalography, NeuroImage, 2017, vol. 159, p. 185.

Harris, J.D. and Sergeant, R.L., Monaural/binaural minimum audible angles for a moving sound source, J. Speech Hear. Res., 1971, vol. 14, no. 3, p. 618.

Altman, J.A. and Viskov, O.V., Discrimination of perceived movement velocity for fused auditory image in dichotic stimulation, J. Acoust. Soc. Am., 1977, vol. 61, no. 3, p. 816.

Saberi, K. and Perrott, D.R., Minimum audible movement angles as a function of sound source trajectory, J. Acoust. Soc. Am., 1990, vol. 88, no. 6, p. 2639.

Saberi, K. and Perrott, D.R., Discrimination of sound source velocity by human listeners, J. Acoust. Soc. Am., 2002, vol. 111, no. 26, p. 1026.

Getzmann, S., Lewald, J., and Guski, R., Representational momentum in spatial hearing, Perception, 2004, vol. 33, no. 5, p. 591.

Schmiedchen, K., Freigang, C., Rübsamen, R., and Richter, N., A comparison of visual and auditory representational momentum in spatial tasks, Atten. Percept. Psychophys., 2013, vol. 75, no. 7, p. 1507.

Carlile, S. and Leung, J., The perception of auditory motion, Trends Hear., 2016, vol. 20. https://doi.org/10.1177/2331216516644254

Delorme, A., Sejnowski, T., and Makeig, S., Enhanced detection of artifacts in EEG data using higher-order statistics and independent component analysis, NeuroImage, 2007, vol. 34, no. 4, p. 1443.

Shestopalova, L.B., Petropavlovskaia, E.A., Semenova, V.V., and Nikitin, N.I., Brain oscillations evoked by sound motion, Brain Res., 2021, vol. 1752, p. 147232.

Näätänen, R. and Picton, T.W., The N1 wave of the human electric and magnetic response to sound: a review and an analysis of the component structure, Psychophysiology, 1987, vol. 24, no. 4, p. 375.

Nishihara, M., Inui, K., Motomura, E., et al., Auditory N1 as a change-related automatic response, Neurosci. Res., 2011, vol. 71, no. 2, p. 145.

Ducommun, C.Y., Murray, M.M., Thut, G., et al., Segregated processing of auditory motion and auditory location: an ERP mapping study, NeuroImage, 2002, vol. 16, no. 1, p. 76.

Sarrou, M., Schmitz, P.M., Hamm, N., and Rübsamen, R., Sound frequency affects the auditory motion-onset response in humans, Exp. Brain Res., 2018, vol. 236, no. 10, p. 2713.

Kreitewolf, J., Lewald, J., and Getzmann, S., Effect of attention on cortical processing of sound motion: an EEG study, NeuroImage, 2011, vol. 54, no. 3, p. 2340.

Shestopalova, L.B., Petropavlovskaia, E.A., Semenova, V.V., and Nikitin, N.I., Sound stimulus movement-associated rhythmic activity in the human brain, Neurosci. Behav. Physiol., 2020, vol. 51, no. 4, p. 472.

Inui, K., Okamoto, H., Miki, K., et al., Serial and parallel processing in the human auditory cortex: a magnetoencephalographic study, Cereb. Cortex, 2006, vol. 16, no. 1, p. 18.

Petropavlovskaya, E.A., Shestopalova, L.B., and Vaitulevich, S.F., Sluggishness of auditory perception during localization of short moving sound images, Hum. Physiol., 2010, vol. 36, no. 4, p. 399. https://doi.org/10.1134/S0362119710040043

Petropavlovskaya, E.A., Shestopalova, L.B., and Vaitulevich, S.F., Predictive ability of the auditory system during smooth and abrupt movements of low-intensity sound images, Neurosci. Behav. Physiol., 2012, vol. 42, no. 8, p. 911. https://doi.org/10.1007/s11055-012-9657-0

Altman, J.A., Vaitulevich, S.F., Varfolomeev, A.L., et al., Mismatch negativity as a characteristic of the distinguishing locating capacity of the human auditory system, Hum. Physiol., 2007, vol. 33, no. 5, p. 531. https://doi.org/10.1134/S0362119707050039

Altman, J.A., Vaitulevich, S.Ph., Petropavlovskaya, E.A., and Shestopalova, L.B., Discrimination of the dynamic properties of sound source spatial location in humans: electrophysiology and psychophysics, Hum. Physiol., 2010, vol. 36, no. 1, p. 72. https://doi.org/10.1134/S0362119710010093

Altman, J.A., Vaitulevich, S.Ph., Shestopalova, L.B., and Petropavlovskaya, E.A., How does mismatch negativity reflect auditory motion? Hear. Res., 2010, vol. 268, nos. 1–2, p. 194.

Funding

The study was supported by the Scientific and Technical Development of the Russian Federation state program no. 47 (2019–2030), subject 0134-2019-0006, as well as the Russian Foundation for Basic Research (grant no. 19-315-90016).

Ethics declarations

Conflict of interest. The authors declare that they do not have a conflict of interest.

Statement of compliance with standards of research involving humans as subjects. All procedures performed in studies involving human participants were in accordance with the biomedical principles of the 1964 Helsinki Declaration and its later amendments and approved by the local ethics committee of St. Petersburg State University (St. Petersburg). Informed consent was obtained from all individual participants involved in the study upon clarification of potential risks and advantages, and the type of upcoming trial.

Additional information

Translated by E. Sherstyuk

Rights and permissions

Open Access. This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons license and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Semenova, V.V., Shestopalova, L.B., Petropavlovskaia, E.A. et al. Latency of Motion Onset Response as an Integrative Measure of Processing Sound Movement. Hum Physiol 48, 401–410 (2022). https://doi.org/10.1134/S0362119722040107

