Abstract

This article introduces a novel stochastic technique, the Hippopotamus Optimization (HO) algorithm, a metaheuristic inspired by the behaviors observed in hippopotamuses. HO is defined by a mathematically formulated three-phase model that captures the position update of hippopotamuses in rivers or ponds, their defensive strategies against predators, and their methods of evading predators. HO attained the top rank in finding the optimal value on 115 of 161 benchmark functions, which include unimodal and high-dimensional multimodal functions, fixed-dimensional multimodal functions, the CEC 2019 test suite, the CEC 2014 test suite in dimensions 10, 30, 50, and 100, and Zigzag Pattern benchmark functions. This suggests that HO is proficient at both exploitation and exploration and that it balances the two effectively during the search. On four distinct engineering design problems, HO achieved the most efficient solutions while satisfying the designated constraints. HO is compared with WOA, GWO, SSA, PSO, SCA, FA, GOA, TLBO, MFO, and IWO, recognized as the most extensively researched metaheuristics; with AOA, a recently developed algorithm; and with CMA-ES, a high-performance optimizer acknowledged for its success in IEEE CEC competitions. According to statistical post hoc analysis, HO is significantly superior to the investigated algorithms. The source code of the HO algorithm is publicly available at https://www.mathworks.com/matlabcentral/fileexchange/160088-hippopotamus-optimization-algorithm-ho.


Introduction

Numerous issues and challenges in today's science, industry, and technology can be defined as optimization problems. Every optimization problem has three parts: an objective function, constraints, and decision variables1. Optimization algorithms for such problems can be classified in several ways; one prevalent classification is based on their inherent approach, distinguishing between stochastic and deterministic algorithms2. Deterministic methods require more extensive information about the problem than stochastic methods3, whereas stochastic methods do not guarantee finding the global optimum. Many optimization problems encountered today are nonlinear, complex, non-differentiable, piecewise-defined, and non-convex, and they involve many decision variables4. For such problems, stochastic methods tend to be more straightforward and more suitable, especially when little information about the problem is available or when it is to be treated as a black box5.

Metaheuristic algorithms are among the most important and widely used stochastic approaches. In a metaheuristic algorithm, feasible initial candidate solutions are generated randomly and then updated iteratively according to the algorithm's update rules. At each step, the better solutions are retained, with the population size fixed by the number of search agents. The updating continues until a stopping criterion is met, typically a maximum number of iterations (MaxIter) or of function evaluations (NFE), or a predefined target value of the cost function set by the user. Because of their advantages, metaheuristic algorithms are used in many applications, and reported results show that they can improve efficiency in these applications. A good optimization algorithm balances exploration, which focuses on global search of the space, and exploitation, which focuses on local search around the solutions already obtained6.
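The generic loop described above can be sketched as follows. This is a minimal illustration, not HO itself: the Gaussian perturbation rule, the names `generic_search` and `sphere`, and the default parameters are hypothetical choices made for the example. It shows the rule of keeping a new position only when it improves the cost, together with the two stopping criteria mentioned in the text, an NFE budget and a user-defined target cost.

```python
import random


def sphere(x):
    """Illustrative cost function: sum of squares, minimum 0 at the origin."""
    return sum(v * v for v in x)


def generic_search(cost, lb, ub, n_agents=10, max_nfe=2000, target=None, seed=0):
    """Skeleton of a population-based metaheuristic loop (not HO itself).

    Stops when the NFE budget is spent or, if `target` is given, once the
    best cost is at or below it. Returns (best_position, best_cost, nfe_used).
    """
    rng = random.Random(seed)
    dim = len(lb)
    # Random feasible initial candidates inside [lb, ub].
    pop = [[lb[j] + rng.random() * (ub[j] - lb[j]) for j in range(dim)]
           for _ in range(n_agents)]
    costs = [cost(x) for x in pop]
    nfe = n_agents
    while nfe < max_nfe:
        for i in range(n_agents):
            # Hypothetical update rule: Gaussian step, clipped back into the bounds.
            cand = [min(max(v + rng.gauss(0.0, 0.1 * (ub[j] - lb[j])), lb[j]), ub[j])
                    for j, v in enumerate(pop[i])]
            c = cost(cand)
            nfe += 1
            if c < costs[i]:  # keep the new position only if it is better
                pop[i], costs[i] = cand, c
            if nfe >= max_nfe:
                break
        if target is not None and min(costs) <= target:
            break
    best = min(range(n_agents), key=costs.__getitem__)
    return pop[best], costs[best], nfe


best_x, best_c, used = generic_search(sphere, [-5.0] * 3, [5.0] * 3, max_nfe=500)
```

With `target=None` the run stops only when the NFE budget is exhausted; passing a target value ends the search after the first sweep in which any agent reaches it.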

Although numerous optimization algorithms have been introduced, developing new and innovative algorithms is still deemed necessary in light of the No Free Lunch (NFL) theorem7. The NFL theorem implies that the superior performance of a metaheuristic algorithm on specific optimization problems does not guarantee similar success on other problems. This motivates the search for algorithms that converge faster and locate the optimal solution more reliably than existing ones. The broad applicability of metaheuristic optimization algorithms has drawn attention from researchers across many disciplines and domains. In engineering, they are applied, for example, to medical engineering problems, such as improving classification accuracy by tuning hyperparameters and adjusting the weights of neural networks8 or fuzzy systems9.

Similarly, these algorithms contribute to intelligent fault diagnosis and tuning controller coefficients10 in control and mechanical engineering. In telecommunication engineering, they aid in identifying digital filters11, while in energy engineering, they assist in tasks such as modeling solar panels12, optimizing their placement, and even wind turbine placement13. In civil engineering, metaheuristic optimization algorithms are utilized for structural optimization14, while in the field of economics, they enhance stock portfolio optimization15. Additionally, metaheuristic optimization algorithms play a role in optimizing thermal systems in chemical engineering16, among other applications.

The distinctive contribution of this research is a novel metaheuristic algorithm, termed HO, that emulates the behaviors of hippopotamuses in their natural environment. The main achievements of this work are as follows:

-

The design of HO is influenced by the intrinsic behaviors observed in hippopotamuses, such as their position update in the river or pond, defence tactics against predators, and methods of evading predators.

-

HO is mathematically formulated through a three-phase model comprising the hippopotamuses' position update, their defence, and their evasion of predators.

-

To evaluate the effectiveness of HO in solving optimization problems, it is tested on a set of 161 standard benchmark functions (BFs) of various types: unimodal (UM), multimodal (MM), and Zigzag Pattern (ZP) functions, the CEC 2019 test suite, and the CEC 2014 test suite in dimensions 10, 30, 50, and 100, the last of which is used to investigate the effect of problem dimension on the performance of HO.

-

The performance of HO is evaluated by comparing it with twelve well-known metaheuristic algorithms.

-

The effectiveness of the HO in real-world applications is tested through its application to tackle four engineering design challenges.

The article is structured into five sections. The “Literature review” section covers related work; the “Hippopotamus optimization algorithm” section introduces and models the HO approach and discusses its limitations; the “Simulation results and comparison” section presents simulation results and compares the performance of the different algorithms; the “Hippopotamus optimization algorithm for engineering problems” section studies the performance of HO on classical engineering problems; and the “Conclusions and future works” section presents the conclusions drawn from the article's findings.

Literature review

As noted in the introduction, optimization algorithms are not confined to a single discipline or research area, primarily because many real-world problems are nonlinear, non-differentiable, discontinuous, or non-convex. Given these complexities and uncertainties, stochastic optimization algorithms are versatile and well suited to handling such problems effectively. Optimization algorithms often draw inspiration from natural phenomena, aiming to model and simulate natural processes. Physical laws, chemical reactions, animal behavior patterns, the social behavior of animals, biological evolution, game theory principles, and human behavior have received significant attention in this regard. These phenomena serve as valuable sources of inspiration for developing optimization algorithms, offering insights into efficient and practical problem-solving strategies.

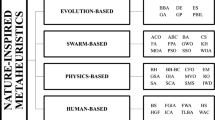

Optimization algorithms can be classified from multiple perspectives. In terms of objectives, they can be grouped into three categories: single-objective, multi-objective, and many-objective algorithms17. In terms of decision variables, algorithms can be characterized as continuous or discrete (or binary). Furthermore, they can be subdivided into constrained and unconstrained optimization algorithms, depending on whether constraints are imposed on the decision variables. Such classifications provide a framework for understanding and categorizing optimization algorithms based on different criteria. From another perspective, optimization algorithms can be categorized by their sources of inspiration into six main categories: evolutionary algorithms, physics- or chemistry-based algorithms, swarm-based algorithms, human-inspired algorithms, mathematics-based algorithms, and game theory-inspired algorithms. While the first four categories are well established and widely recognized, the mathematics-based and game theory-inspired categories are less widely known.

Swarm-based optimization algorithms model the collective behavior observed in animals, plants, and insects. For instance, the American Zebra Optimization Algorithm (ZOA)18 is inspired by the foraging behavior of zebras and their defensive behavior against predators during foraging. Similarly, Northern Goshawk Optimization (NGO)19 is inspired by the hunting behavior of the northern goshawk. Other notable algorithms in this category include Particle Swarm Optimization (PSO)20, Ant Colony Optimization (ACO)21, the Artificial Bee Colony (ABC) algorithm22, Tunicate Swarm Algorithm (TSA)23, Beluga Whale Optimization (BWO)24, Aphid–Ant Mutualism (AAM)25, artificial Jellyfish Search (JS)26, Spotted Hyena Optimizer (SHO)27, Honey Badger Algorithm (HBA)28, Mantis Search Algorithm (MSA)29, Nutcracker Optimization Algorithm (NOA)30, Manta Ray Foraging Optimization (MRFO)31, Orca Predation Algorithm (OPA)32, Yellow Saddle Goatfish (YSG)33, Hermit Crab Optimization Algorithm (HCOA)34, Cheetah Optimizer (CO)35, Walrus Optimization Algorithm (WaOA)36, Red-Tailed Hawk algorithm (RTH)37, Barnacles Mating Optimizer (BMO)38, Meerkat Optimization Algorithm (MOA)39, Snake Optimizer (SO)40, Grasshopper Optimization Algorithm (GOA)41, Social Spider Optimization (SSO)42, Whale Optimization Algorithm (WOA)43, Ant Lion Optimizer (ALO)44, Grey Wolf Optimizer (GWO)45, Marine Predators Algorithm (MPA)46, Aquila Optimizer (AO)47, Mountain Gazelle Optimizer (MGO)48, Artificial Hummingbird Algorithm (AHA)49, African Vultures Optimization Algorithm (AVOA)50, Bonobo Optimizer (BO)51, Salp Swarm Algorithm (SSA)52, Harris Hawks Optimizer (HHO)53, Colony Predation Algorithm (CPA)54, Adaptive Fox Optimization (AFO)55, Slime Mould Algorithm (SMA)3, Spider Wasp Optimization (SWO)56, Artificial Gorilla Troops Optimizer (GTO)57, Krill Herd Optimization (KH)58, Alpine Skiing Optimization (ASO)59, Shuffled Frog-Leaping Algorithm (SFLA)60, Firefly Algorithm (FA)61, Komodo Mlipir Algorithm (KMA)62, Prairie Dog Optimization (PDO)63, Tasmanian Devil Optimization (TDO)64, Reptile Search Algorithm (RSA)65, Border Collie Optimization (BCO)66, Cuckoo Optimization Algorithm (COA)67, and the Moth-Flame Optimization (MFO) algorithm68, many of which have been introduced in recent years. These algorithms encapsulate the principles of swarm intelligence, offering effective strategies for solving optimization problems by emulating the cooperative and adaptive behaviors found in natural swarms.

Another category of optimization algorithms draws inspiration from biological evolution, genetics, and natural selection. The Genetic Algorithm (GA)69 is one of the best-known algorithms in this category. Other notable algorithms include the Memetic Algorithm (MA)70, Differential Evolution (DE)71, Evolution Strategies (ES)72, Biogeography-Based Optimization (BBO)73, Liver Cancer Algorithm (LCA)74, Genetic Programming (GP)75, Invasive Weed Optimization algorithm (IWO)76, Electric Eel Foraging Optimization (EEFO)77, Greylag Goose Optimization (GGO)78, and Puma Optimizer (PO)79. The Competitive Swarm Optimizer (CSO)80 is designed explicitly for large-scale optimization problems; it takes inspiration from PSO but introduces a distinct concept. In CSO, particle positions are updated without using personal-best or global-best positions; instead, a pairwise competition mechanism lets the losing particle learn from the winner and adjust its position accordingly. The Falcon Optimization Algorithm (FOA)81 is inspired by the hunting behavior of falcons. The Barnacles Mating Optimizer (BMO)82 takes inspiration from the mating behavior of barnacles in their natural habitat. The Pathfinder Algorithm (PFA)83 is tailored to optimization problems with diverse structures. Drawing on the collective movement of animal groups and the hierarchical leadership within swarms, PFA seeks optimal solutions in the way a group identifies food areas or prey.

A further category of optimization algorithms is inspired by physical laws, chemical reactions, or chemical laws. Algorithms in this category include Simulated Annealing (SA)84, Snow Ablation Optimizer (SAO)85, Electromagnetic Field Optimization (EFO)86, Light Spectrum Optimization (LSO)87, String Theory Algorithm (STA)88, Harmony Search (HS)89, Multi-Verse Optimizer (MVO)90, Black Hole Algorithm (BH)91, Gravitational Search Algorithm (GSA)92, Artificial Electric Field Algorithm (AEFA)93, Magnetic Optimization Algorithm (MOA)94, Chemical Reaction Optimization (CRO)95, Atom Search Optimization (ASO)96, Henry Gas Solubility Optimization (HGSO)97, Nuclear Reaction Optimization (NRO)98, Chernobyl Disaster Optimizer (CDO)99, Thermal Exchange Optimization (TEO)100, Turbulent Flow of Water-based Optimization (TFWO)101, Water Cycle Algorithm (WCA)102, Equilibrium Optimizer (EO)103, Lévy Flight Distribution (LFD)104, and Crystal Structure Algorithm (CryStAl)105. AEFA draws inspiration from Coulomb's law of electrostatic force, while CryStAl takes inspiration from the symmetric arrangement of constituents in crystalline minerals such as quartz.

Human-inspired algorithms derive inspiration from the social behavior, learning processes, and communication patterns found in human society. Algorithms in this category include Driving Training-Based Optimization (DTBO)106, Fans Optimization (FO)107, Mother Optimization Algorithm (MOA)108, Mountaineering Team-Based Optimization (MTBO)109, Human Behavior-Based Optimization (HBBO)110, Chef-Based Optimization Algorithm (CBOA)111, Teaching–Learning-Based Optimization (TLBO)112, Political Optimizer (PO)113, War Strategy Optimization (WSO)114, EVolutive Election Based Optimization (EVEBO)115, Distance-Fitness Learning (DFL)116, and Cultural Algorithms (CA)117. CBOA models the acquisition of culinary expertise through training programs, and WSO models two human strategies in war: attack and defence. Supply–Demand-Based Optimization (SDO)118 is inspired by the economic supply–demand mechanism and emulates the dynamic interplay between consumers' demand and producers' supply. The Search and Rescue Optimization Algorithm (SAR)119 takes inspiration from the exploration behavior observed during search and rescue operations conducted by humans. The Student Psychology Based Optimization (SPBO)120 algorithm draws inspiration from the psychology of students who aim to improve their exam performance and achieve the top position in their class. The Poor and Rich Optimization (PRO)121 algorithm is inspired by the efforts of poor and rich individuals to improve their economic situations: the rich seek to widen the wealth gap, while the poor endeavor to accumulate wealth and narrow the gap with the affluent.

Game-based optimization algorithms often model the rules of a game. Some of the algorithms in this category include Squid Game Optimizer (SGO)122, Puzzle Optimization Algorithm (POA)123, and Darts Game Optimizer (DGO)124.

Mathematics-based algorithms are inspired by mathematical theories. Examples include the Arithmetic Optimization Algorithm (AOA)125 and Chaos Game Optimization (CGO)126, which is inspired by chaos theory and the principles of fractal configuration. Other known algorithms in this category are the Sine Cosine Algorithm (SCA)127, the Evolution Strategy with Covariance Matrix Adaptation (CMA-ES)128, and Quadratic Interpolation Optimization (QIO).

Hippopotamus optimization algorithm

In this section, we articulate the foundational inspiration and theoretical underpinnings of the proposed HO Algorithm.

Hippopotamus

The hippopotamus is one of the most fascinating creatures living in Africa129. It is a vertebrate, specifically a mammal130. Hippopotamuses are semi-aquatic and spend most of their time in aquatic environments, specifically rivers and ponds131,132. They live in social groups called pods or bloats, typically of 10 to 30 individuals133. The sex of a hippopotamus is not easily determined, because its sexual organs are not external; the only distinguishing factor is the difference in weight. Adult hippopotamuses can stay submerged underwater for up to 5 min. In appearance, the species resembles venomous mammals such as the shrew, but its closest relatives are whales and dolphins, with which it shared a common ancestor around 55 million years ago134.

Despite their herbivorous nature and a diet consisting mainly of grass, branches, leaves, reeds, flowers, stems, and plant husks135, hippopotamuses are inquisitive and actively explore alternative food sources. Biologists believe that consuming meat can cause digestive issues in hippopotamuses. Their extremely powerful jaws, aggressive temperament, and territorial behavior make them one of the most dangerous mammals in the world136. Male hippopotamuses can weigh up to 9,920 pounds, while females typically weigh around 3,000 pounds. They consume approximately 75 pounds of food daily. Hippopotamuses frequently fight one another, and during these confrontations one or more calves are occasionally injured or even killed. Because of their large size and formidable strength, adult hippopotamuses are generally not hunted or attacked by predators. However, young or weakened adult individuals are vulnerable to Nile crocodiles, lions, and spotted hyenas134.

When attacked by predators, hippopotamuses exhibit a defensive behavior by rotating towards the assailant and opening their powerful jaws. This is accompanied by emitting a loud vocalization, reaching approximately 115 decibels, which instils fear and intimidation in the predator, often deterring them from pursuing such a risky prey. When the defensive approach of a hippopotamus proves ineffective or when the hippopotamus is not yet sufficiently strong, it retreats rapidly at speeds of approximately 30 km/h to distance itself from the threat. In most cases, it moves towards nearby water bodies such as ponds or rivers136.

Inspiration

HO draws inspiration from three prominent behavioral patterns observed in the life of hippopotamuses. A hippopotamus group comprises several females, calves, multiple adult males, and a dominant male (the leader of the herd)136. Owing to their inherent curiosity, young hippopotamuses and calves often wander away from the group. As a consequence, they may become isolated and targeted by predators.

The second behavioral pattern is defensive, triggered when hippopotamuses are attacked by predators or when other creatures intrude into their territory. Hippopotamuses respond by rotating toward the predator and using their formidable jaws and vocalizations to deter and repel the attacker (Fig. 1). Predators such as lions and spotted hyenas are aware of this and actively avoid direct exposure to the jaws of a hippopotamus as a precaution against injury. The third behavioral pattern is the hippopotamus's instinct to flee from predators and distance itself from areas of potential danger. In such circumstances, the hippopotamus heads for the closest body of water, such as a river or pond, because lions and spotted hyenas are often averse to entering water.

Fig. 1 (a–d) Defensive behavior of the hippopotamus against a predator136.

Mathematical modelling of HO

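HO is a population-based optimization algorithm whose search agents are hippopotamuses. In HO, hippopotamuses are candidate solutions to the optimization problem, meaning that the position of each hippopotamus in the search space represents values for the decision variables. Thus, each hippopotamus is represented as a vector, and the population of hippopotamuses is mathematically characterized by a matrix. As in conventional optimization algorithms, the initialization stage of HO generates randomized initial solutions. During this step, the vector of decision variables is generated using the following formula:

\[{\chi }_{\mathcalligra{i}}:\; x_{i,j}=\mathcalligra{l}\mathcalligra{b}_{j}+r\cdot \left(\mathcalligra{u}\mathcalligra{b}_{j}-\mathcalligra{l}\mathcalligra{b}_{j}\right),\quad i=1,2,\dots ,\mathcal{N},\quad j=1,2,\dots ,m \tag{1}\]

where \({\chi }_{\mathcalligra{i}}\) represents the position of the \(i\)th candidate solution, \(x_{i,j}\) is its \(j\)th decision variable, \(r\) is a random number in the range 0 to 1, and \(\mathcalligra{l}\mathcalligra{b}_{j}\) and \(\mathcalligra{u}\mathcalligra{b}_{j}\) denote the lower and upper bounds of the \(j\)th decision variable, respectively. Given that \(\mathcal{N}\) denotes the population size of hippopotamuses within the herd and m represents the number of decision variables in the problem, the population matrix is formed by Eq. (2):

\[\mathcal{X}=\begin{bmatrix}{\chi }_{1}\\ \vdots \\ {\chi }_{\mathcalligra{i}}\\ \vdots \\ {\chi }_{\mathcal{N}}\end{bmatrix}_{\mathcal{N}\times m}=\begin{bmatrix}x_{1,1}& \cdots & x_{1,j}& \cdots & x_{1,m}\\ \vdots & & \vdots & & \vdots \\ x_{i,1}& \cdots & x_{i,j}& \cdots & x_{i,m}\\ \vdots & & \vdots & & \vdots \\ x_{\mathcal{N},1}& \cdots & x_{\mathcal{N},j}& \cdots & x_{\mathcal{N},m}\end{bmatrix}_{\mathcal{N}\times m} \tag{2}\]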
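The initialization step can be sketched in a few lines of Python. This is a minimal sketch assuming that an independent random number r is drawn for every decision variable of every candidate (the text does not say whether r is shared across variables); the function name `init_population` is illustrative, and the population matrix is stored as a list of \(\mathcal{N}\) rows, one per hippopotamus.

```python
import random


def init_population(n, lb, ub, rng=random):
    """Build an n x m population (list of rows), one row per hippopotamus.

    Entry (i, j) is lb[j] + r * (ub[j] - lb[j]) with a fresh r ~ U(0, 1)
    drawn for every entry (an assumption; see the lead-in).
    """
    m = len(lb)
    return [[lb[j] + rng.random() * (ub[j] - lb[j]) for j in range(m)]
            for _ in range(n)]


X = init_population(5, [-10.0, 0.0], [10.0, 1.0], random.Random(42))
```

Each variable may have its own bounds, so `lb` and `ub` are per-dimension lists rather than scalars.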

Phase 1: Position update of the hippopotamuses in the river or pond (Exploration)

Hippopotamus herds are composed of several adult females, calves, multiple adult males, and a dominant male (the leader of the herd). The dominant hippopotamus is the one with the best objective function value in the current iteration (the lowest for a minimization problem and the highest for a maximization problem). Hippopotamuses tend to gather in close proximity to one another. The dominant male protects the herd and its territory from potential threats, and multiple females are positioned around the males. Upon reaching maturity, male hippopotamuses are ousted from the herd by the dominant male; these expelled males must then either attract females or engage in dominance contests with other established males of the herd to establish their own dominance. Equation (3) gives the mathematical representation of the position of the male hippopotamus members of the herd in the lake or pond.

In Eq. (3), \({{\chi }_{\mathcalligra{i}}}^{\mathcalligra{m}\mathcalligra{h}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}}\) represents the position of a male hippopotamus, and \(\mathcal{D}\mathcalligra{h}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}\) denotes the position of the dominant hippopotamus (the hippopotamus with the best cost in the current iteration). \({\overrightarrow{r}}_{1,\dots ,4}\) are random vectors with entries between 0 and 1, \({r}_{5}\) is a random number between 0 and 1 (Eq. 4), and \({I}_{1}\) and \({I}_{2}\) are integers equal to 1 or 2 (Eqs. 3 and 6). \({\mathcalligra{m}\mathcal{G}}_{\mathcalligra{i}}\) is the mean of a randomly selected group of hippopotamuses, in which the current hippopotamus (\({\chi }_{i}\)) is as likely to be included as any other member, and \({\mathcalligra{y}}_{1}\) is a random number between 0 and 1 (Eq. 3). In Eq. (4), \({\varrho }_{1}\) and \({\varrho }_{2}\) are random integers that take the value 0 or 1.
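The ingredients of the male update can be sketched in Python. Equations (3) and (4) are not reproduced in this text, so the forms below are assumptions consistent with the symbol definitions above: the male move is taken as `x + y1 * (dominant - I1 * x)`, and the five h scenarios are taken as `I2*r1 + (not rho1)`, `2*r2 - 1`, `r3`, `I1*r4 + (not rho2)`, and the scalar `r5`. Drawing the group size for the mean uniformly from 1 to N is also an assumption, and all function names are hypothetical.

```python
import random


def mean_of_random_group(pop, rng):
    """Mean position of a random subset of the herd (the mG_i term).

    Subset size is drawn uniformly from 1..N and members are sampled without
    replacement, so the current hippopotamus is as likely to be included as any other.
    """
    n, m = len(pop), len(pop[0])
    k = rng.randint(1, n)
    group = rng.sample(range(n), k)
    return [sum(pop[g][j] for g in group) / k for j in range(m)]


def h_scenarios(m, i1, i2, rng):
    """Five candidate step scenarios for h (assumed form of Eq. 4), each of length m.

    rho1, rho2 are random 0/1 integers; (1 - rho) acts as a logical NOT.
    The scalar scenario r5 is repeated m times so every scenario is a vector.
    """
    rho1, rho2 = rng.randint(0, 1), rng.randint(0, 1)
    r5 = rng.random()
    return [
        [i2 * rng.random() + (1 - rho1) for _ in range(m)],  # I2 * r1 + (not rho1)
        [2 * rng.random() - 1 for _ in range(m)],             # 2 * r2 - 1
        [rng.random() for _ in range(m)],                     # r3
        [i1 * rng.random() + (1 - rho2) for _ in range(m)],   # I1 * r4 + (not rho2)
        [r5] * m,                                             # r5 (scalar)
    ]


def male_update(x, dominant, rng):
    """Assumed form of Eq. (3): x + y1 * (dominant - I1 * x), with I1 in {1, 2}."""
    i1 = rng.randint(1, 2)
    y1 = rng.random()
    return [xj + y1 * (dj - i1 * xj) for xj, dj in zip(x, dominant)]


rng = random.Random(1)
herd = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
mg = mean_of_random_group(herd, rng)
h1 = rng.choice(h_scenarios(2, 1, 2, rng))
h2 = rng.choice(h_scenarios(2, 1, 2, rng))
candidate = male_update(herd[2], herd[0], rng)
```

With I1 = 1 the male moves part of the way toward the dominant hippopotamus; with I1 = 2 the move also contracts the position itself, which adds variety to the search directions.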

Equations (6) and (7) describe the position of a female or immature hippopotamus (\({{\chi }_{\mathcalligra{i}}}^{\mathcal{F}\mathcal{B}\mathcalligra{h}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}}\)) within the herd. Most immature hippopotamuses stay near their mothers, but out of curiosity they are sometimes separated from the herd or from their mothers. If \(T\) (Eq. 5) is greater than 0.6, the immature hippopotamus has distanced itself from its mother. If \({r}_{6}\), a random number between 0 and 1 (Eq. 7), is greater than 0.5, the immature hippopotamus has distanced itself from its mother but is still within or near the herd; otherwise, it has separated from the herd. This behavior of immature and female hippopotamuses is modelled by Eqs. (6) and (7), where \({\mathcalligra{h}}_{1}\) and \({\mathcalligra{h}}_{2}\) are numbers or vectors randomly selected from the five scenarios of the \(\mathcalligra{h}\) equation. In Eq. (7), \({r}_{7}\) is a random number between 0 and 1. Equations (8) and (9) describe the position update of the male and of the female or immature hippopotamuses within the herd, where \({\mathcal{F}}_{\mathcalligra{i}}\) is the objective function value.
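The female or immature move, followed by the acceptance step, can be sketched as below. Because Eqs. (5) to (9) are not reproduced in this text, several forms are assumptions: T is taken as `exp(-t / t_max)`, the two in-herd moves as `x + h1 * (dominant - I2 * mG)` and `x + h2 * (mG - dominant)`, and Eqs. (8) and (9) are read as greedy acceptance (keep the candidate only if its cost is lower). Clipping to the bounds is our addition, and the function names are hypothetical.

```python
import math
import random


def female_update(x, dominant, mean_group, h1, h2, t, t_max, lb, ub, rng):
    """Female/immature hippopotamus move (assumed forms of Eqs. 5-7).

    h1, h2: scenario vectors of length m (e.g. drawn from the h scenarios).
    """
    i2 = rng.randint(1, 2)
    T = math.exp(-t / t_max)  # assumed form of Eq. (5): decays from 1 towards e^-1
    if T > 0.6:
        # Calf has strayed from its mother: move relative to the leader and group mean.
        new = [xj + a * (d - i2 * g) for xj, a, d, g in zip(x, h1, dominant, mean_group)]
    elif rng.random() > 0.5:  # r6 > 0.5: away from its mother but still near the herd
        new = [xj + b * (g - d) for xj, b, d, g in zip(x, h2, dominant, mean_group)]
    else:  # separated from the herd: random position within the bounds (r7)
        r7 = rng.random()
        new = [l + r7 * (u - l) for l, u in zip(lb, ub)]
    return [min(max(v, l), u) for v, l, u in zip(new, lb, ub)]  # clipping: our addition


def greedy_accept(x_old, f_old, x_new, f_new):
    """Our reading of Eqs. (8)-(9): keep the candidate only if its cost is lower."""
    return (x_new, f_new) if f_new < f_old else (x_old, f_old)


rng = random.Random(0)
z = [0.0, 0.0]
moved = female_update(z, z, z, [0.5, 0.5], [0.5, 0.5], 0, 10, [-5.0, -5.0], [5.0, 5.0], rng)
```

Under this reading, the 0.6 threshold on T means the leader-relative move dominates roughly the first half of the run, after which the herd-relative move and random restarts take over.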

The random scenarios in the \(\mathcalligra{h}\) vectors, together with \({I}_{1}\) and \({I}_{2}\), diversify the search directions, which strengthens the global search and improves exploration in the proposed algorithm.

Phase 2: Hippopotamus defence against predators (Exploration)

One of the key reasons hippopotamuses live in herds is safety and security. A herd of these large, heavy animals can deter predators from approaching closely. Nevertheless, owing to their inherent curiosity, immature hippopotamuses occasionally stray from the herd and, being weaker than adults, may become targets for Nile crocodiles, lions, and spotted hyenas. Sick hippopotamuses, like immature ones, are also susceptible to predation.

The primary defensive tactic of hippopotamuses is to turn swiftly towards the predator and emit loud vocalizations to deter it from approaching closely (Fig. 2). During this phase, hippopotamuses may approach the predator to induce its retreat, thus effectively warding off the potential threat. Equation (10) gives the predator's position in the search space.

where \({\overrightarrow{r}}_{8}\) represents a random vector ranging from zero to one.

Equation (11) gives the distance of the \(\mathcalligra{i}th\) hippopotamus to the predator. The hippopotamus adopts a defensive behavior based on \({\mathcal{F}}_{{\mathcal{P}redator}_{\mathcalligra{j}}}\) to protect itself against the predator. If \({\mathcal{F}}_{{\mathcal{P}redator}_{\mathcalligra{j}}}\) is less than \({\mathcal{F}}_{\mathcalligra{i}}\), the predator is in very close proximity to the hippopotamus; in this case, the hippopotamus swiftly turns towards the predator and moves towards it to make it retreat. If \({\mathcal{F}}_{{\mathcal{P}redator}_{\mathcalligra{j}}}\) is greater, the predator or intruding entity is farther from the hippopotamus's territory (Eq. 12). In this case, the hippopotamus turns towards the predator but with a more limited range of movement, the intention being to make the predator or intruder aware of its presence within its territory.

\({{\chi }_{\mathcalligra{i}}}^{\mathcal{H}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}\mathcal{R}}\) is the position of the hippopotamus after it turns to face the predator. \(\overrightarrow{RL}\) is a random vector with a Lévy distribution, used to model sudden changes in the predator's position during an attack on the hippopotamus. Lévy movement46 is modelled by Eq. (13), where \(\mathcalligra{w}\) and \(\mathcalligra{v}\) are random numbers in [0,1], \(\vartheta\) is a constant (\(\vartheta\) = 1.5), \(\Gamma\) denotes the Gamma function, and \({\sigma }_{\mathcalligra{w}}\) is obtained from Eq. (14).
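Equations (13) and (14) are not reproduced in this text, so the sketch below uses Mantegna's algorithm, the standard way to draw Lévy-stable steps, with \(\vartheta = 1.5\). Note one deliberate difference: Mantegna's algorithm draws w and v from standard normal distributions (w is then scaled by \({\sigma }_{\mathcalligra{w}}\)), whereas the text describes them as numbers in [0,1]. The function names are hypothetical.

```python
import math
import random


def mantegna_sigma(theta=1.5):
    """sigma_w from Mantegna's algorithm for Lévy exponent theta."""
    num = math.gamma(1 + theta) * math.sin(math.pi * theta / 2)
    den = math.gamma((1 + theta) / 2) * theta * 2 ** ((theta - 1) / 2)
    return (num / den) ** (1 / theta)


def levy_vector(m, theta=1.5, rng=random):
    """Lévy-distributed step vector: w * sigma_w / |v|^(1/theta), w, v ~ N(0, 1)."""
    sigma = mantegna_sigma(theta)
    return [rng.gauss(0.0, 1.0) * sigma / abs(rng.gauss(0.0, 1.0)) ** (1 / theta)
            for _ in range(m)]


rl = levy_vector(4, rng=random.Random(3))
```

For \(\vartheta = 1.5\), \({\sigma }_{\mathcalligra{w}} \approx 0.6966\). Most draws are small, but the heavy tail occasionally produces a very large step, which is what models a sudden jump of the predator.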

In Eq. (12), \(\mathcalligra{f}\) is a uniform random number between 2 and 4, \(\mathcalligra{c}\) is a uniform random number between 1 and 1.5, and \(\mathcal{D}\) is a uniform random number between 2 and 3. \(\mathcalligra{g}\) is a uniform random number between −1 and 1, and \({\overrightarrow{r}}_{9}\) is a random vector of dimensions \(1\times \mathcalligra{m}\).
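One Phase 2 step for a single hippopotamus can be sketched as below. The equations themselves are not reproduced in this text, so their forms are assumptions: the predator is placed uniformly inside the bounds by analogy with Eq. (1); Eq. (12) is taken as `RL * predator + f / (c - D * cos(2*pi*g)) / dist` when the predator has the lower cost, and the same with `1 / (2 * dist + r9)` otherwise, where `dist` is the distance of Eq. (11); and Eq. (15) is read as greedy acceptance. The Lévy vector is passed in as `rl`, clipping is our addition, and the name `defend` is hypothetical.

```python
import math
import random


def defend(x, f_x, cost, lb, ub, rl, rng):
    """One Phase 2 step for a single hippopotamus (assumed forms of Eqs. 10-12, 15).

    x, f_x: current position and cost; rl: Lévy step vector supplied by the caller.
    Returns the (position, cost) pair kept after the greedy comparison.
    """
    # Eq. (10), assumed: predator placed uniformly at random inside the bounds.
    predator = [l + rng.random() * (u - l) for l, u in zip(lb, ub)]
    f_pred = cost(predator)
    # Eq. (11): element-wise distance between predator and hippopotamus.
    dist = [abs(p - xj) for p, xj in zip(predator, x)]
    f = rng.uniform(2, 4)
    c = rng.uniform(1, 1.5)
    d = rng.uniform(2, 3)
    g = rng.uniform(-1, 1)
    factor = f / (c - d * math.cos(2 * math.pi * g))
    if f_pred < f_x:
        # Predator very close: turn and move towards it (step grows as distance shrinks).
        new = [r * p + factor / dj for r, p, dj in zip(rl, predator, dist)]
    else:
        # Predator farther away: more limited movement, r9 drawn per dimension.
        new = [r * p + factor / (2 * dj + rng.random()) for r, p, dj in zip(rl, predator, dist)]
    new = [min(max(v, l), u) for v, l, u in zip(new, lb, ub)]  # clipping: our addition
    f_new = cost(new)
    # Eq. (15), as we read it: keep the new position only if it lowers the cost.
    return (new, f_new) if f_new < f_x else (x, f_x)


def sphere(v):
    return sum(a * a for a in v)


x1, f1 = defend([1.0, 1.0], 2.0, sphere, [-5.0, -5.0], [5.0, 5.0], [0.01, 0.01],
                random.Random(7))
```

The second branch divides by `2 * dist + r9` rather than `dist`, so for the same distance its step is smaller, matching the "more limited range of movement" described in the text.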

According to Eq. (15), if \({\mathcal{F}}_{\mathcalligra{i}}^{\mathcal{H}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}\mathcal{R}}\) is greater than \({\mathcal{F}}_{\mathcalligra{i}}\), the hippopotamus has been hunted and another hippopotamus replaces it in the herd; otherwise, the predator flees and the hippopotamus returns to the herd. The second phase was observed to enhance the global search process significantly. The first and second phases complement each other and effectively mitigate the risk of getting trapped in local minima.

Phase 3: Hippopotamus escaping from the predator (Exploitation)

Another behavior arises when the hippopotamus encounters a group of predators or cannot repel a predator with its defensive behavior. In this situation, the hippopotamus tries to move away from the area (Fig. 3), usually by running to the nearest lake or pond, because lions and spotted hyenas avoid entering water. This strategy leads the hippopotamus to a safe position close to its current location, and modelling this behavior in Phase 3 of HO enhances the algorithm's exploitation ability in local search. To simulate this behavior, a random position is generated near the current location of each hippopotamus, as modelled by Eqs. (16)–(19). If the newly created position improves the cost function value, the hippopotamus has found a safer position near its current location and moves there. \(\mathcalligra{t}\) denotes the current iteration, and \(\mathcal{T}\) represents the MaxIter.

In Eq. (17), \({{\chi }_{\mathcalligra{i}}}^{\mathcalligra{H}\mathcalligra{i}\mathcalligra{p}\mathcalligra{p}\mathcalligra{o}\mathcalligra{E}}\) is the candidate position generated as the hippopotamus searches for the closest safe place. \({\mathcalligra{s}}_{1}\) is a random vector or number selected at random from the three scenarios in \(\mathcalligra{s}\) (Eq. 18). These scenarios yield a more effective local search; in other words, they improve the exploitation quality of the proposed algorithm.

In Eq. (18), \({\overrightarrow{r}}_{11}\) is a random vector with entries in [0, 1], while \({r}_{10}\) (Eq. 17) and \({r}_{13}\) are random numbers in [0, 1]. \({r}_{12}\) is a normally distributed random number.
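A single Phase-3 move can be sketched as follows. Eqs. (16), (17), and (19) are not reproduced in this section, so several details are assumptions: the local bounds are taken as \(lb/\mathcalligra{t}\) and \(ub/\mathcalligra{t}\) (shrinking as iterations proceed), the first scenario in \(\mathcalligra{s}\) is taken as \(2{\overrightarrow{r}}_{11}-1\), and candidates are clipped to the global bounds. `lb` and `ub` denote the lower and upper bound vectors of the decision variables, and `phase3_escape` is a hypothetical name.

```python
import numpy as np

def phase3_escape(x, fx, objective, lb, ub, t, rng):
    """One Phase-3 move for a single hippopotamus (sketch of Eqs. 16-19).

    Assumptions: local bounds lb/t and ub/t for Eq. (16), s = {2*r11 - 1, r12, r13}
    for Eq. (18), and clipping of the candidate to the global bounds.
    """
    m = x.size
    lb_local, ub_local = lb / t, ub / t          # assumed form of Eq. (16)
    scenarios = (
        2.0 * rng.random(m) - 1.0,               # from r11, a random vector in [0, 1)
        rng.standard_normal(),                   # r12, normally distributed
        rng.random(),                            # r13, uniform in [0, 1)
    )
    s1 = scenarios[rng.integers(3)]              # Eq. (18): pick one scenario at random
    r10 = rng.random()
    x_new = x + r10 * (lb_local + s1 * (ub_local - lb_local))   # Eq. (17)
    x_new = np.clip(x_new, lb, ub)
    f_new = objective(x_new)
    if f_new < fx:                               # Eq. (19): move only if cost improves
        return x_new, f_new
    return x, fx

rng = np.random.default_rng(0)
sphere = lambda z: float(np.sum(z ** 2))
lb, ub = np.full(2, -10.0), np.full(2, 10.0)
x, fx = np.array([3.0, -4.0]), 25.0
for t in range(1, 50):
    x, fx = phase3_escape(x, fx, sphere, lb, ub, t, rng)
```

Because the step size is bounded by the shrinking local box, moves become finer as \(\mathcalligra{t}\) grows, which is one way to realize the local search described above.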

When updating the population, HO does not divide the herd into three separate categories (immature, female, and male hippopotamuses): although such a division would model their nature more faithfully, it would reduce the performance of the optimization algorithm.

Repetition process and flowchart of HO

In each iteration of HO, all population members are updated according to Phases 1 to 3. This update of the population according to Eqs. (3–19) is repeated until the final iteration.

Throughout the run, the best candidate solution found so far is tracked and stored. When the algorithm terminates, this best candidate, referred to as the dominant hippopotamus, is returned as the final solution to the problem. The flowchart in Fig. 4 and the pseudocode in Algorithm 1 detail the procedure of HO.
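The iteration loop and the tracking of the dominant hippopotamus can be sketched independently of the phase equations. In this hypothetical skeleton, `phases` is a list of user-supplied functions standing in for Phases 1 to 3; it is not the authors' MATLAB implementation, and `jitter_phase` is only a placeholder used to make the example runnable.

```python
import numpy as np

def run_skeleton(objective, lb, ub, n_pop, max_iter, phases, seed=0):
    """Apply each phase in turn every iteration and track the best solution.

    `phases` holds hypothetical callables
    phase(pop, fit, objective, lb, ub, t, rng) -> (pop, fit)
    that stand in for Phases 1-3 of HO.
    """
    rng = np.random.default_rng(seed)
    pop = lb + rng.random((n_pop, lb.size)) * (ub - lb)   # random initial herd
    fit = np.array([objective(x) for x in pop])
    i = int(np.argmin(fit))
    best_x, best_f = pop[i].copy(), fit[i]
    history = []
    for t in range(1, max_iter + 1):
        for phase in phases:
            pop, fit = phase(pop, fit, objective, lb, ub, t, rng)
        i = int(np.argmin(fit))
        if fit[i] < best_f:          # update the dominant hippopotamus
            best_x, best_f = pop[i].copy(), fit[i]
        history.append(best_f)
    return best_x, best_f, history

def jitter_phase(pop, fit, objective, lb, ub, t, rng):
    """Placeholder phase: Gaussian perturbation with greedy acceptance."""
    cand = np.clip(pop + 0.1 * rng.standard_normal(pop.shape), lb, ub)
    cand_fit = np.array([objective(x) for x in cand])
    better = cand_fit < fit
    return np.where(better[:, None], cand, pop), np.where(better, cand_fit, fit)

sphere = lambda z: float(np.sum(z ** 2))
bx, bf, hist = run_skeleton(sphere, np.full(3, -5.0), np.full(3, 5.0),
                            n_pop=10, max_iter=30, phases=[jitter_phase])
```

The returned `history` is a best-so-far curve of the kind plotted in convergence diagrams; it never increases.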

Computational complexity of HO

This subsection analyzes the computational complexity of HO. The total computational complexity of HO is \(\mathcal{O}\left(\mathcal{N}\mathcalligra{m}\left(1+\frac{5\times \mathcal{T}}{2}\right)\right)\). The term \(\mathcal{N}\mathcalligra{m}\) is the cost of the initialization, which is the same for all metaheuristic optimization algorithms. The computational complexity of the first phase of HO is \(\mathcal{N}\mathcalligra{m}\mathcal{T}\), that of the second phase is \(\frac{\mathcal{N}\mathcalligra{m}\mathcal{T}}{2}\), and that of the third phase is \(\mathcal{N}\mathcalligra{m}\mathcal{T}\). Therefore, since \(1+\frac{1}{2}+1=\frac{5}{2}\), the total computational complexity of the main loop is \(\mathcal{N}\mathcalligra{m}\frac{5\times \mathcal{T}}{2}\).
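The per-phase counts can be checked with a short calculation. The sketch below sums the terms stated above using exact fractions and compares them with an algorithm of complexity \(\mathcal{O}\left(\mathcal{N}\mathcalligra{m}\left(1+\mathcal{T}\right)\right)\). The function names are hypothetical, and the values \(\mathcal{N}=24\), \(\mathcalligra{m}=30\), \(\mathcal{T}=500\) are only an example.

```python
from fractions import Fraction

def ho_work(n, m, t):
    """Work units of HO following the per-phase counts in the text."""
    init = n * m                       # initialization
    phase1 = n * m * t                 # Phase 1
    phase2 = Fraction(n * m * t, 2)    # Phase 2 (half the cost of Phase 1)
    phase3 = n * m * t                 # Phase 3
    return init + phase1 + phase2 + phase3

def single_update_work(n, m, t):
    """Work units for algorithms with O(Nm(1 + T)) complexity."""
    return n * m * (1 + t)

print(ho_work(24, 30, 500))             # 900720
print(single_update_work(24, 30, 500))  # 360720
```

With equal \(\mathcal{N}\), \(\mathcalligra{m}\), and \(\mathcal{T}\), the HO main loop is 5/2 times as expensive, which is why population sizes must be adjusted for a fair comparison.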

Among the competitor algorithms, WOA, GWO, SSA, PSO, SCA, FA, GOA, CMA-ES, MFO, and IWO have a time complexity of \(\mathcal{O}\left(\mathcal{N}\mathcalligra{m}\left(1+\mathcal{T}\right)\right)\), while TLBO and AOA have a computational complexity of \(\mathcal{O}\left(\mathcal{N}\mathcalligra{m}\left(1+2\mathcal{T}\right)\right)\). To ensure a fair comparison, we adjusted the population size of each algorithm in the simulation study so that all algorithms use the same total number of function evaluations. Some other algorithms have an even higher time complexity; for instance, CGO has a computational complexity of \(\mathcal{O}\left(\mathcal{N}\mathcalligra{m}\left(1+4\mathcal{T}\right)\right)\).

Limitation of HO

The first limitation of HO, shared by all metaheuristic algorithms, is that its stochastic search procedure cannot guarantee reaching the global optimum. The second limitation stems from the No Free Lunch (NFL) theorem: newer metaheuristic algorithms may always outperform HO. A further limitation is that HO cannot be claimed to be the best optimizer for every optimization problem.

Simulation results and comparison

In this study, we compare the results obtained by HO with those of twelve established metaheuristic algorithms, namely SCA, GWO, WOA, GOA, SSA, FA, TLBO, CMA-ES, IWO, MFO, AOA, and PSO. Their control parameters are set as specified in Table 1. This section presents simulation studies of HO on a range of challenging optimization problems. The effectiveness of HO in finding optimal solutions is evaluated on a comprehensive set of 161 standard BFs, comprising UM, HM, and FM functions together with the CEC 2014, CEC 2019, and ZP test suites; in addition, HO is applied to four engineering design problems.

For functions F1 to F23 (ref. 43), the CEC 2019 test set, the ZP functions, and the engineering problems, each algorithm was executed in 30 independent runs with a budget of 30,000 function evaluations (NFE); for the CEC 2014 test set, the budget was 60,000 NFE. The population size is 24 for HO, 30 for AOA and TLBO, and 60 for the other algorithms, and MaxIter is 500 (1000 for CEC 2014). Six statistical metrics, namely mean, best, worst, Std., median, and rank, are used to present the optimization results. The mean is the primary ranking criterion for comparing the metaheuristic algorithms on each BF.
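The population sizes above are consistent with an equal budget if each algorithm's evaluations per member per iteration are read off its complexity expression. That reading is our inference, not a statement from the text: 1 + 1/2 + 1 = 5/2 for HO, 2 for TLBO and AOA, and 1 for the remaining algorithms (represented here by PSO). The names `SETTINGS` and `total_nfe` are hypothetical.

```python
from fractions import Fraction

# Evaluations per population member per iteration, inferred from the
# complexity expressions (an assumption): HO 1 + 1/2 + 1, TLBO/AOA 2, others 1.
SETTINGS = {
    "HO": (24, Fraction(5, 2)),
    "TLBO": (30, 2),
    "AOA": (30, 2),
    "PSO": (60, 1),   # stands in for the other competitors with 60 members
}

def total_nfe(pop_size, evals_per_member, max_iter):
    return pop_size * evals_per_member * max_iter

for name, (pop, evals) in SETTINGS.items():
    print(name, total_nfe(pop, evals, 500), total_nfe(pop, evals, 1000))
```

Every row gives 30,000 NFE for MaxIter = 500 and 60,000 NFE for MaxIter = 1000, matching the budgets stated above.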

Simulations were run on two machines: an Intel Core i3-1005G1 CPU at 1.20 GHz with 8 GB of main memory, and a MacBook Air (M1) with 8 GB of main memory.

Evaluation of unimodal benchmark functions

Table 2 presents the results, and Fig. 6 shows the convergence curves of the three most effective algorithms on F1–F23. This evaluation assesses the local search ability of the algorithms on seven UM functions, F1–F7. HO reached the global optimum on F1–F3 and F5, which none of the other 12 algorithms achieved, and on F6 it reached the global optimum together with four other algorithms. On F4, HO significantly outperformed the others, and on F7 it again showed clearly superior performance. HO consistently converged with zero Std. on F1–F4 and F6; its Std. is 4.10E-05 on F7 and 0.36343 on F5. Overall, HO has the lowest Std. among the investigated algorithms.

Evaluation of high-dimensional multimodal benchmark functions

Table 3 presents the results for the HM functions F8–F13, which were chosen to assess the global search capability of the algorithms. On F8, HO outperformed all other algorithms by a significant margin. On F9, it reached the global optimum together with WOA, outperforming the remaining algorithms. On F10, it outperformed all other algorithms. On F11, HO converged to the global optimum together with TLBO, ahead of the other algorithms. On F12, GOA ranked first, ahead of HO and TLBO. On F13, HO ranked first. The Std. of HO on F8 is notably lower than those of the investigated algorithms, and its Std. of 0.012164 on F13 is the second lowest, after CMA-ES. These results suggest that HO handles these functions robustly (Fig. 6).

Evaluation of fixed-dimension multimodal benchmark functions

Functions F14–F23 were used to examine how well each algorithm balances exploration and exploitation during the search. The results are reported in Table 4. HO performed best on F14–F23 and achieved a significantly lower Std., especially on F20–F22. These findings suggest that HO, owing to its strong ability to balance exploration and exploitation, performs well on FM and MM functions.

Figure 5 shows box plots of the best objective function values obtained in 30 independent runs on F1–F23 by HO and the 12 competitor algorithms.

Evaluation of the ZP

Kudela and Matousek introduced eight challenging benchmark functions for bound-constrained single-objective optimization. These functions are built on a ZP, are non-differentiable and highly multimodal, and include three adjustable parameters that change their behavior and level of difficulty137. Table 5 presents the results for the eight ZP functions (ZP-F1 to ZP-F8). On ZP-F1 and ZP-F2, WOA ranked first, ahead of HO and TLBO. HO performed best on ZP-F3 to ZP-F8 and reached the global optimum of the objective function on ZP-F3 and ZP-F8. On ZP-F3 and ZP-F4, HO outperformed all investigated algorithms. Furthermore, HO reached the global optimum on ZP-F5 and ZP-F6 under all criteria. On ZP-F7, HO competed closely with GWO and ranked first by reaching the global optimum. Similarly, on ZP-F8, HO competed with AOA and reached the global optimum (Fig. 6).

In addition, the box plots in Fig. 7 show that HO consistently has a lower Std. than the other algorithms. The convergence curves in Fig. 8 (ZP-F1 to ZP-F8) show that, on most of these functions, HO converges much faster than its competitors and reaches solutions that the other investigated algorithms do not attain.

Evaluation of the CEC 2019 test suite

The CEC 2019 test suite comprises ten complex BFs, C19–F1 to C19–F10, described in138 and designed for single-objective real-parameter optimization. Each asks for the global optimum, so these functions are well suited to assessing how thoroughly an algorithm searches for the best solution. The optimization results are reported in Table 6. HO achieved the top rank on C19-F2 to C19-F4 and on C19-F7. On C19-F1, it clearly outperformed the other algorithms on all criteria except Best, while GWO achieved the top rank on this function. Similar results were observed on C19-F2, where HO ranked first and HO, PSO, and SSA were the three best-converging algorithms. On C19-F3, HO ranked first with a better Std. than SSA. On C19-F4, both the Best and Mean values of HO were significantly better than those of the other algorithms. On C19-F5, CMA-ES outperformed all other algorithms.

GOA achieved the top rank on C19-F6. On C19-F7 and C19-F9, HO surpassed PSO by a slight margin, and on C19-F8 and C19-F10, it had a slight edge over TLBO. Notably, on C19-F7, it outperformed PSO by a considerable margin. Finally, on C19-F8, HO was the best on all criteria except Best, for which TLBO found the optimal value. The box plots in Fig. 9 show that HO has almost zero dispersion on C19-F1 to C19-F4 and a much lower Std. than the investigated algorithms on C19-F5 and C19-F6. The convergence plots in Fig. 10 show the strong performance of HO in reaching the optimal solution.

Evaluation of the CEC 2014 test suite

The CEC 2014 test suite contains 30 standard BFs, categorized into UM functions (C14–F1 to C14–F3), MM functions (C14–F4 to C14–F16), hybrid functions (C14–F17 to C14–F22), and composition functions (C14–F23 to C14–F30)139. HO is evaluated on CEC 2014 in dimensions 10, 30, 50, and 100 against the 12 competitor algorithms. The results are presented in Tables S1–S3 of the Supplementary, and Figs. S2–S9 show the box plots and the convergence curves of the top three algorithms. HO achieved the first rank in finding the optimal value on 83 of the 120 functions. On C14-F13 (D = 30), HO's Std. was worse than that of the first-ranked algorithm by 0.1 but better than those of GWO, GOA, and CMA-ES. The same occurred on C14-F13 (D = 50) and C14-F5 (D = 100), where HO differed from the first-ranked algorithm only slightly and only in Std.

On C14-F29 (D = 50), HO ranked second after PSO, and its Std. and Best values were weaker than those of the other top-three algorithms. The UM functions C14–F1 to C14–F3 are well suited to assessing the local search and exploitation ability of metaheuristic algorithms because they have no local optima. Each has a single extremum, so they mainly test how efficiently an algorithm converges toward the global optimum.

Statistical analysis

To evaluate the efficacy of HO thoroughly, we conduct a statistical analysis comparing it with the reviewed algorithms. The Wilcoxon signed-rank test140, a nonparametric test, checks whether two paired samples differ significantly (Table 7). It ranks the absolute values of the paired differences, ignoring their signs, and computes a test statistic from the ranks of the positive and negative differences. This statistic indicates whether the observed differences are likely to be due to chance. A small p-value indicates a significant difference between the paired results, whereas a large p-value means that no significant difference can be established.
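The ranking procedure just described can be sketched in plain Python. This is an illustration, not the authors' analysis code: zero differences are dropped, tied absolute differences receive average ranks, and the p-value uses the large-sample normal approximation without tie correction. For small samples, exact tables or a library routine such as `scipy.stats.wilcoxon` are preferable. Function names are hypothetical.

```python
import math

def wilcoxon_signed_rank(x, y):
    """Return (W_plus, W_minus, n) for paired samples; zero differences are dropped."""
    d = [a - b for a, b in zip(x, y) if a != b]
    n = len(d)
    order = sorted(range(n), key=lambda i: abs(d[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(d[order[j + 1]]) == abs(d[order[i]]):
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1   # average rank for tied |d|
        i = j + 1
    w_plus = sum(r for r, di in zip(ranks, d) if di > 0)
    w_minus = sum(r for r, di in zip(ranks, d) if di < 0)
    return w_plus, w_minus, n

def wilcoxon_p_normal(x, y):
    """Two-sided p-value from the normal approximation (no tie correction)."""
    w_plus, w_minus, n = wilcoxon_signed_rank(x, y)
    if n == 0:
        return 1.0
    w = min(w_plus, w_minus)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w - mu) / sigma                  # z <= 0 because w <= mu
    return math.erfc(-z / math.sqrt(2))   # equals 2 * Phi(z)
```

For example, if one algorithm is better than another on all 30 runs, W = 0 and the p-value is far below 0.05.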

The Friedman test is a nonparametric statistical test that determines whether there are statistically significant differences among multiple related groups (Table 8). To ensure the reliability of the test, the benchmark functions were divided into seven groups. The first group consists of the functions in Tables 2, 3, and 4 (F1–F23), covering unimodal, high-dimensional multimodal, and fixed-dimension multimodal functions. The second group comprises the ZP functions in Table 5, and the third group the CEC 2019 functions in Table 6. The fourth, fifth, sixth, and seventh groups contain the CEC 2014 functions in dimensions 10, 30, 50, and 100, respectively (Tables S1–S3)141.
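The average ranks used by the Friedman test, and the statistic \({\chi }_{F}^{2}=\frac{12N}{k(k+1)}\left[\sum_{j}{R}_{j}^{2}-\frac{k{(k+1)}^{2}}{4}\right]\) computed from them, can be sketched as follows. Here `results[i][j]` is assumed to hold the mean result of algorithm j on function i, with lower values ranked better and ties given average ranks; the function name is hypothetical, and `scipy.stats.friedmanchisquare` is an established library alternative.

```python
def friedman(results):
    """results[i][j]: mean result of algorithm j on problem i (lower is better).

    Returns (average_ranks, chi2_F) using average ranks for ties.
    """
    n = len(results)        # number of benchmark functions (N)
    k = len(results[0])     # number of algorithms (k)
    rank_sums = [0.0] * k
    for row in results:
        order = sorted(range(k), key=lambda j: row[j])
        i = 0
        while i < k:
            j = i
            while j + 1 < k and row[order[j + 1]] == row[order[i]]:
                j += 1
            for p in range(i, j + 1):
                rank_sums[order[p]] += (i + j) / 2 + 1   # average rank for ties
            i = j + 1
    avg_ranks = [s / n for s in rank_sums]
    chi2 = 12 * n / (k * (k + 1)) * (sum(r * r for r in avg_ranks) - k * (k + 1) ** 2 / 4)
    return avg_ranks, chi2

print(friedman([[0.1, 0.3, 0.2], [0.0, 0.5, 0.4]]))  # ([1.0, 3.0, 2.0], 4.0)
```

The average ranks returned here are the quantities compared against the critical difference in the post-hoc step.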

When the Friedman test rejects the null hypothesis, a post-hoc test can be conducted; here, the Nemenyi test was used to examine the differences among the algorithms in more detail. The Nemenyi test performs pairwise comparisons among all algorithms: the performance of two algorithms differs significantly if their average ranks differ by at least the critical difference \(CD={q}_{\alpha }\sqrt{\frac{k\left(k+1\right)}{6N}}\) (Eq. 20)141, where \({q}_{\alpha }\) is the critical value of the Studentized range statistic divided by \(\sqrt{2}\).

Here, \(N\) is the number of BFs in each group and \(k\) is the number of algorithms compared; in each group, the top 10 algorithms were selected for comparison. At a significance level of \(\alpha = 0.05\), the critical value for 10 algorithms is \({q}_{\alpha }=3.164\), and the resulting \(CD\) for each group is given in Fig. 11. The \(CD\) diagrams in Fig. 11 offer a straightforward visualization of the outcome of the Nemenyi post-hoc test, which assesses the statistical significance of differences in average ranks among the ten algorithms in each of the seven groups.
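Eq. (20) can be evaluated directly. The sketch below uses the standard two-tailed Nemenyi critical values for \(\alpha = 0.05\); the function names are hypothetical. As an illustration of the arithmetic only, group 1 (\(N = 23\), \(k = 10\)) gives \(CD \approx 2.82\).

```python
import math

# Two-tailed Nemenyi critical values q_alpha for alpha = 0.05, indexed by k.
Q_ALPHA_005 = {2: 1.960, 3: 2.343, 4: 2.569, 5: 2.728, 6: 2.850,
               7: 2.949, 8: 3.031, 9: 3.102, 10: 3.164}

def nemenyi_cd(k, n, q_table=Q_ALPHA_005):
    """Critical difference CD = q_alpha * sqrt(k(k+1) / (6N)) (Eq. 20)."""
    return q_table[k] * math.sqrt(k * (k + 1) / (6 * n))

def significantly_different(avg_rank_a, avg_rank_b, cd):
    """Two algorithms differ significantly if their average ranks differ by at least CD."""
    return abs(avg_rank_a - avg_rank_b) >= cd

cd_group1 = nemenyi_cd(k=10, n=23)   # group 1: F1-F23, top 10 algorithms
print(round(cd_group1, 2))           # 2.82
```

Because \(CD\) shrinks as \(N\) grows, the larger CEC 2014 groups can resolve smaller rank differences than the ZP or CEC 2019 groups.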

Having established notable differences in performance among the algorithms, we next identify which algorithms perform significantly differently from HO, which serves as the control algorithm. Figure 11 shows the average rank of each method in the seven groups at a significance level of 0.05 over 30 independent runs. HO is significantly superior to the algorithms whose average rank exceeds the threshold line in the figure. HO ranked first in all groups. In group 1, it was significantly superior to TLBO, CMA-ES, GWO, and WOA. In group 2, WOA took second place after HO and significantly outperformed AOA, GWO, and PSO, while in group 3, PSO took second place after HO, followed by TLBO, SSA, and GOA in ranks 3, 4, and 5. In group 4, TLBO outperformed PSO, giving the order HO, TLBO, PSO, CMA-ES, SSA, whereas in group 5, PSO performed better than TLBO, giving the order HO, PSO, TLBO, GOA, CMA-ES. In group 6, HO again outperformed the other algorithms, followed by PSO, TLBO, SSA, GOA, and GWO. Finally, in group 7, the order is HO, TLBO, PSO, CMA-ES, SSA.

In the post-hoc analysis, if the difference between the mean Friedman ranks of two algorithms is below the \(CD\), there is no significant difference between them; if it equals or exceeds the \(CD\), the difference is significant. Table 9 compares the 12 algorithms with HO across all seven BF groups. Algorithms that are not significantly different from HO are marked in red, and algorithms that are significantly different from HO are marked in green. According to Table 9, none of the examined algorithms can serve as a substitute for HO, which indicates that HO can address cases that the other algorithms do not handle equally well.

Sensitivity analysis

HO is a swarm-based optimizer that performs the optimization through iterative calculations. The hyperparameters \(\mathcal{N}\) (population size) and \(\mathcal{T}\) (total number of iterations) are therefore expected to influence its optimization performance, and this subsection analyzes the sensitivity of HO to both. To analyze the sensitivity to \(\mathcal{N}\), HO is run with \(\mathcal{N}\) set to 20, 30, 50, and 100 on functions F1 to F23.

The optimization results are given in Table 10, and the corresponding convergence curves of HO are shown in Fig. 12. The analysis shows that increasing the number of search agents improves HO's ability to scan the search space, which enhances its performance and lowers the objective function values.

To analyze the sensitivity of HO to \(\mathcal{T}\), HO is run with \(\mathcal{T}\) set to 200, 500, 800, and 1000 on functions F1 to F23. The optimization results are given in Table 11, and the convergence curves are shown in Fig. 13. According to the results, larger values of \(\mathcal{T}\) give the algorithm more opportunities to converge to better solutions, mainly owing to its enhanced exploitation ability. Hence, as \(\mathcal{T}\) increases, the optimization becomes more effective and the objective function values decrease.

Table 10 (number of iterations held constant) and Table 11 (population size held constant) show that the performance of HO improves as the population size and the number of iterations increase, except for F8 in Table 11. The results also indicate that the algorithm is less sensitive to changes in the number of iterations than to changes in the population size (Table 12).

Hippopotamus optimization algorithm for engineering problems

In this section, HO is evaluated on four distinct engineering design problems to assess its ability to solve practical optimization problems, using a total of 30,000 function evaluations. Table 13 reports the statistical results of the compared methods, and Fig. 18 shows the box plots of the algorithms.

TCS design

The aim of this problem is to minimize the mass of the spring shown in Fig. 14, which may be stretched or compressed. An optimal design must satisfy constraints on surge frequency, deflection, and stress. The mathematical formulation of this design problem is given in Supplementary142. The results show that HO obtained the optimal solution while complying with the specified constraints, as detailed in the references45,102,142,143,144,145. The optimal solution found by HO for this problem is {\({\mathcalligra{z}}_{1}=0.051689714188651, {\mathcalligra{z}}_{2}= 0.356733450209264, {\mathcalligra{z}}_{3}= 11.288045038991518\)}.
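To make the reported solution checkable, the sketch below evaluates the commonly used TCS formulation (wire diameter \({\mathcalligra{z}}_{1}\), mean coil diameter \({\mathcalligra{z}}_{2}\), number of active coils \({\mathcalligra{z}}_{3}\)). We assume, without having the Supplementary at hand, that it matches the formulation used there; the function name `tcs` is hypothetical.

```python
def tcs(z):
    """Weight and constraints of the common TCS formulation (feasible when all g <= 0)."""
    d, D, n = z   # wire diameter, mean coil diameter, number of active coils
    weight = (n + 2) * D * d ** 2
    g = [
        1 - (D ** 3 * n) / (71785 * d ** 4),                        # minimum deflection
        (4 * D ** 2 - d * D) / (12566 * (D * d ** 3 - d ** 4))
        + 1 / (5108 * d ** 2) - 1,                                   # shear stress
        1 - 140.45 * d / (D ** 2 * n),                               # surge frequency
        (d + D) / 1.5 - 1,                                           # outer diameter
    ]
    return weight, g

w, g = tcs([0.051689714188651, 0.356733450209264, 11.288045038991518])
print(round(w, 6))   # 0.012665
```

The first two constraints are essentially active at this point (values close to zero), which is typical of the optimum of this problem.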

WB design

The objective is to minimize the cost of the welded beam shown in Fig. 15 subject to seven constraints. The formulation of the welded beam design problem is given in Supplementary49. HO identifies the most favourable values of the optimization variables, and the statistical analysis shows that it performs better than the other methods. The optimal solution found by HO for this problem is {\({\mathcalligra{z}}_{1}=0.205729639786079, {\mathcalligra{z}}_{2}=3.470488665628001, {\mathcalligra{z}}_{3}=9.036623910357633, {\mathcalligra{z}}_{4}= 0.205729639786079\)}.
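The cost of the reported design can be recomputed with the objective of the commonly used welded-beam formulation, assumed here to match the Supplementary. The variables are taken as weld thickness, weld length, bar height, and bar thickness; the seven constraints are omitted for brevity, and the function name is hypothetical.

```python
def welded_beam_cost(z):
    """Cost of the common welded-beam formulation; the seven constraints are omitted."""
    h, l, t, b = z   # weld thickness, weld length, bar height, bar thickness
    return 1.10471 * h ** 2 * l + 0.04811 * t * b * (14.0 + l)

cost = welded_beam_cost([0.205729639786079, 3.470488665628001,
                         9.036623910357633, 0.205729639786079])
print(round(cost, 4))   # 1.7249
```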

PV design

The primary objective is to minimize the total cost of the pressurized tank shown in Fig. 16, accounting for forming, welding, and material costs. The design involves four variables and four constraints, and the problem is formulated as described in Supplementary49. According to the reported results, HO outperformed the other methods. The optimal solution found by HO for this problem is {\({\mathcalligra{z}}_{1}=13.4141563816526, {\mathcalligra{z}}_{2}=7.3495109848502, {\mathcalligra{z}}_{3}=42.0984455958549, {\mathcalligra{z}}_{4}=176.6365958424392\)}. Further details regarding these constraints can be found in references69 and145.
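The reported \({\mathcalligra{z}}_{1}\) and \({\mathcalligra{z}}_{2}\) are not plausible thicknesses in inches, so the sketch below assumes, as an illustration, the widely used mixed-discrete variant in which they encode multiples of 0.0625 in. (rounded to the nearest integer). The cost and constraints follow the commonly used PV formulation; whether this matches the Supplementary exactly is an assumption, and the function name is hypothetical.

```python
import math

def pressure_vessel(z):
    """Return (cost, constraints) for the PV problem; feasible when all g <= 0.

    Assumption: z1 and z2 are counts of 0.0625-in plates, so the thicknesses
    are Ts = 0.0625 * round(z1) and Th = 0.0625 * round(z2).
    """
    ts = 0.0625 * round(z[0])   # shell thickness
    th = 0.0625 * round(z[1])   # head thickness
    r, length = z[2], z[3]      # inner radius, length of the cylindrical section
    cost = (0.6224 * ts * r * length + 1.7781 * th * r ** 2
            + 3.1661 * ts ** 2 * length + 19.84 * ts ** 2 * r)
    g = [
        -ts + 0.0193 * r,
        -th + 0.00954 * r,
        -math.pi * r ** 2 * length - 4 / 3 * math.pi * r ** 3 + 1296000,
        length - 240,
    ]
    return cost, g

cost, g = pressure_vessel([13.4141563816526, 7.3495109848502,
                           42.0984455958549, 176.6365958424392])
print(round(cost, 2))   # 6059.71
```

Under this encoding, the shell-thickness and volume constraints are essentially active at the reported point.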

WFLO

The WFLO problem consists of placing wind turbines on a 10 \(\times\) 10 grid, which gives 100 candidate turbine positions; a wind farm may contain from 1 to 39 turbines. Wind is simulated from 36 directions at a constant speed of 12 m/s. The objective is to minimize cost, maximize the total power output, reduce acoustic emissions, and optimize other performance and cost-related metrics13 (Fig. 17). The attributes of the wind turbine are listed in Table 12. The WFLO problem is formulated as follows:

Herein, \(\mathcalligra{z}\) represents a vector comprising design variables, while \({\mathcal{P}}_{\mathcalligra{t}\mathcalligra{o}\mathcalligra{t}\mathcalligra{a}\mathcalligra{l}}\) denotes the aggregate power output generated by a wind farm. The computation of the \(\mathcal{C}\mathcalligra{o}\mathcalligra{s}\mathcalligra{t}\) function can be derived according to the method described in146 (Fig. 18).

HO demonstrated superior performance compared with the alternative approaches.

Ethical approval

This article does not contain any studies with human participants or animals performed by any of the authors. Informed consent was not required, as no humans or animals were involved.

Conclusions and future works

In this paper, we introduced a novel nonparametric optimization algorithm called Hippopotamus Optimization (HO). HO is inspired by the behavior of hippopotamuses, including their positioning in the water, their defense strategies against threats, and their evasion of predators. The algorithm is defined through a three-phase model, covering position updates in rivers and ponds, defense, and predator evasion, each formulated mathematically. The results obtained by HO were compared with those of 12 established metaheuristic algorithms. HO achieved the highest rank in finding the optimal value on 115 of 161 BFs, spanning UM, HM, and FM functions, the CEC 2019 test suite, the CEC 2014 test suite in dimensions 10, 30, 50, and 100, and the ZP functions.

The CEC 2014 results indicate that HO quickly identifies optimal solutions without becoming trapped in local minima, owing to its efficient local search strategy. The CEC 2019 results indicate that HO effectively locates the global optimum. On the ZP functions, HO performs significantly better than its competitors and reaches solutions that the other investigated algorithms do not attain. Moreover, its lower Std. compared with the other investigated algorithms suggests that HO addresses these functions robustly and effectively.

Across the four engineering design problems, HO found the most efficient solutions while strictly satisfying the specified constraints. The Wilcoxon signed-rank test and the Friedman test with the Nemenyi post-hoc test confirm that HO has a statistically significant advantage over the investigated algorithms on the optimization problems studied here. The sensitivity analysis indicates that HO is less sensitive to changes in the number of iterations than to changes in the population size.

The suggested methodology, HO, presents numerous avenues for future research exploration. Particularly, an area ripe with potential is the advancement of binary and multi-objective variants based on this proposed methodology. Furthermore, an avenue worth investigating in forthcoming research involves employing HO in optimizing diverse problem sets across multiple domains and real-world contexts.

Data availability

All data generated or analyzed during this study are included directly in the text of this submitted manuscript. There are no additional external files with datasets.

Abbreviations

- MaxIter: Max number of iterations
- BF: Benchmark Function
- UM: Unimodal
- MM: Multimodal
- FM: Fixed-dimension Multimodal
- HM: High-dimensional Multimodal
- ZP: Zigzag Pattern benchmark test
- TCS: Tension/Compression Spring
- WB: Welded Beam
- PV: Pressure Vessel
- WFLO: Wind Farm Layout Optimization
- F: Function
- CEC: IEEE Congress on Evolutionary Computation
- D: Dimension
- C19: CEC2019
- C14: CEC2014
- CMA-ES: Evolution Strategy with Covariance Matrix Adaptation
- MFO: Moth-flame Optimization
- AOA: Arithmetic Optimization Algorithm
- TLBO: Teaching-Learning-Based Optimization
- IWO: Invasive Weed Optimization
- GOA: Grasshopper Optimization Algorithm
- FA: Firefly Algorithm
- PSO: Particle Swarm Optimization
- SSA: Salp Swarm Algorithm
- CD: Critical Difference
- GWO: Gray Wolf Optimization
- SCA: Sine Cosine Algorithm
- WOA: Whale Optimization Algorithm
- Best: The best result
- Worst: The worst result
- Std.: Standard Deviation
- Mean: Average best result

References

Dhiman, G., Garg, M., Nagar, A., Kumar, V. & Dehghani, M. A novel algorithm for global optimization: Rat swarm optimizer. J. Ambient Intell. Humaniz Comput. 12, 8457–8482 (2021).

Chen, H. et al. An opposition-based sine cosine approach with local search for parameter estimation of photovoltaic models. Energy Convers. Manag. 195, 927–942 (2019).

Li, S., Chen, H., Wang, M., Heidari, A. A. & Mirjalili, S. Slime mould algorithm: A new method for stochastic optimization. Futur. Gener. Comput. Syst. 111, 300–323 (2020).

Gharaei, A., Shekarabi, S. & Karimi, M. Modelling and optimal lot-sizing of the replenishments in constrained, multi-product and bi-objective EPQ models with defective products: Generalised cross decomposition. Int. J. Syst. Sci. https://doi.org/10.1080/23302674.2019.1574364 (2019).

Sayadi, R. & Awasthi, A. An integrated approach based on system dynamics and ANP for evaluating sustainable transportation policies. Int. J. Syst. Sci.: Op. Logist. 7, 1–10 (2018).

Golalipour, K. et al. The corona virus search optimizer for solving global and engineering optimization problems. Alex. Eng. J. 78, 614–642 (2023).

Wolpert, D. H. & Macready, W. G. No free lunch theorems for optimization. IEEE Trans. Evolut. Comput. 1, 67–82 (1997).

Emam, M. M., Samee, N. A., Jamjoom, M. M. & Houssein, E. H. Optimized deep learning architecture for brain tumor classification using improved hunger games search algorithm. Comput. Biol. Med. 160, 106966 (2023).

Lu, D. et al. Effective detection of Alzheimer’s disease by optimizing fuzzy K-nearest neighbors based on salp swarm algorithm. Comput. Biol. Med. 159, 106930 (2023).

Patel, H. R. & Shah, V. A. Fuzzy Logic Based Metaheuristic Algorithm for Optimization of Type-1 Fuzzy Controller: Fault-Tolerant Control for Nonlinear System with Actuator Fault. IFAC-PapersOnLine 55, 715–721 (2022).

Ekinci, S. & Izci, D. Enhancing IIR system identification: Harnessing the synergy of gazelle optimization and simulated annealing algorithms. ePrime – Adv. Electr. Eng. Electron. Energy 5, 100225 (2023).

Refaat, A. et al. A novel metaheuristic MPPT technique based on enhanced autonomous group particle swarm optimization algorithm to track the GMPP under partial shading conditions - Experimental validation. Energy Convers. Manag. 287, 117124 (2023).

Kunakote, T. et al. Comparative performance of twelve metaheuristics for wind farm layout optimisation. Archiv. Comput. Methods Eng. 29, 717–730 (2022).

Ocak, A., Melih Nigdeli, S. & Bekdaş, G. Optimization of the base isolator systems by considering the soil-structure interaction via metaheuristic algorithms. Structures 56, 104886 (2023).

Domínguez, A., Juan, A. & Kizys, R. A survey on financial applications of metaheuristics. ACM Comput. Surv. 50, 1–23 (2017).

Han, S. et al. Thermal-economic optimization design of shell and tube heat exchanger using an improved sparrow search algorithm. Therm. Sci. Eng. Progress 45, 102085 (2023).

Hazra, A., Rana, P., Adhikari, M. & Amgoth, T. Fog computing for next-generation Internet of Things: Fundamental, state-of-the-art and research challenges. Comput. Sci. Rev. 48, 100549 (2023).

Mohapatra, S. & Mohapatra, P. American zebra optimization algorithm for global optimization problems. Sci. Rep. 13, 5211 (2023).

Dehghani, M., Hubálovský, Š & Trojovský, P. Northern goshawk optimization: A new swarm-based algorithm for solving optimization problems. IEEE Access 9, 162059–162080 (2021).

Kennedy, J. & Eberhart, R. Particle swarm optimization. in Proceedings of ICNN’95 - International Conference on Neural Networks vol. 4 1942–1948 (1995).

Dorigo, M., Birattari, M. & Stützle, T. Ant colony optimization. IEEE Comput. Intell. Mag. 1, 28–39 (2006).

Kang, F., Li, J. & Ma, Z. Rosenbrock artificial bee colony algorithm for accurate global optimization of numerical functions. Inf. Sci. 181, 3508–3531 (2011).

Kaur, S., Awasthi, L. K., Sangal, A. L. & Dhiman, G. Tunicate Swarm Algorithm: A new bio-inspired based metaheuristic paradigm for global optimization. Eng. Appl. Artif. Intell. 90, 103541 (2020).

Zhong, C., Li, G. & Meng, Z. Beluga whale optimization: A novel nature-inspired metaheuristic algorithm. Knowl. Based Syst. 251, 109215 (2022).

Eslami, N., Yazdani, S., Mirzaei, M. & Hadavandi, E. Aphid-Ant Mutualism: A novel nature-inspired metaheuristic algorithm for solving optimization problems. Math. Comput. Simul. 201, 362–395 (2022).

Chou, J.-S. & Truong, D.-N. A novel metaheuristic optimizer inspired by behavior of jellyfish in ocean. Appl. Math. Comput. 389, 125535 (2021).

Dhiman, G. & Kumar, V. Spotted hyena optimizer: A novel bio-inspired based metaheuristic technique for engineering applications. Adv. Eng. Softw. 114, 48–70 (2017).

Hashim, F. A., Houssein, E. H., Hussain, K., Mabrouk, M. S. & Al-Atabany, W. Honey badger algorithm: New metaheuristic algorithm for solving optimization problems. Math. Comput. Simul. 192, 84–110 (2022).

Abdel-Basset, M., Mohamed, R., Zidan, M., Jameel, M. & Abouhawwash, M. Mantis search algorithm: A novel bio-inspired algorithm for global optimization and engineering design problems. Comput. Methods Appl. Mech. Eng. 415, 116200 (2023).

Abdel-Basset, M., Mohamed, R., Jameel, M. & Abouhawwash, M. Nutcracker optimizer: A novel nature-inspired metaheuristic algorithm for global optimization and engineering design problems. Knowl. Based Syst. 262, 110248 (2023).

Zhao, W., Zhang, Z. & Wang, L. Manta ray foraging optimization: An effective bio-inspired optimizer for engineering applications. Eng. Appl. Artif. Intell. 87, 103300 (2020).

Jiang, Y., Wu, Q., Zhu, S. & Zhang, L. Orca predation algorithm: A novel bio-inspired algorithm for global optimization problems. Expert Syst. Appl. 188, 116026 (2022).

Zaldívar, D. et al. A novel bio-inspired optimization model based on Yellow Saddle Goatfish behavior. Biosystems 174, 1–21 (2018).

Guo, J. et al. A novel hermit crab optimization algorithm. Sci. Rep. 13, 9934 (2023).

Akbari, M. A., Zare, M., Azizipanah-abarghooee, R., Mirjalili, S. & Deriche, M. The cheetah optimizer: A nature-inspired metaheuristic algorithm for large-scale optimization problems. Sci. Rep. 12, 10953 (2022).

Trojovský, P. & Dehghani, M. A new bio-inspired metaheuristic algorithm for solving optimization problems based on walruses behavior. Sci. Rep. 13, 8775 (2023).

Ferahtia, S. et al. Red-tailed hawk algorithm for numerical optimization and real-world problems. Sci. Rep. 13, 12950 (2023).

Ai, H. et al. Magnetic anomaly inversion through the novel barnacles mating optimization algorithm. Sci. Rep. 12, 22578 (2022).

Xian, S. & Feng, X. Meerkat optimization algorithm: A new meta-heuristic optimization algorithm for solving constrained engineering problems. Expert Syst. Appl. 231, 120482 (2023).

Hashim, F. A. & Hussien, A. G. Snake optimizer: A novel meta-heuristic optimization algorithm. Knowl. Based Syst. 242, 108320 (2022).

Saremi, S., Mirjalili, S. & Lewis, A. Grasshopper optimisation algorithm: Theory and application. Adv. Eng. Softw. 105, 30–47 (2017).

Yu, J. J. Q. & Li, V. O. K. A social spider algorithm for global optimization. Appl. Soft Comput. 30, 614–627 (2015).

Mirjalili, S. & Lewis, A. The whale optimization algorithm. Adv. Eng. Softw. 95, 51–67 (2016).

Mirjalili, S. The ant lion optimizer. Adv. Eng. Softw. 83, 80–98 (2015).

Mirjalili, S., Mirjalili, S. M. & Lewis, A. Grey wolf optimizer. Adv. Eng. Softw. 69, 46–61 (2014).

Faramarzi, A., Heidarinejad, M., Mirjalili, S. & Gandomi, A. H. Marine predators algorithm: A nature-inspired metaheuristic. Expert Syst. Appl. 152, 113377 (2020).

Abualigah, L. et al. Aquila Optimizer: A novel meta-heuristic optimization algorithm. Comput. Ind. Eng. 157, 107250 (2021).

Abdollahzadeh, B., Gharehchopogh, F. S., Khodadadi, N. & Mirjalili, S. Mountain gazelle optimizer: A new nature-inspired metaheuristic algorithm for global optimization problems. Adv. Eng. Softw. 174, 103282 (2022).

Zhao, W., Wang, L. & Mirjalili, S. Artificial hummingbird algorithm: A new bio-inspired optimizer with its engineering applications. Comput. Methods Appl. Mech. Eng. 388, 114194 (2022).

Abdollahzadeh, B., Gharehchopogh, F. S. & Mirjalili, S. African vultures optimization algorithm: A new nature-inspired metaheuristic algorithm for global optimization problems. Comput. Ind. Eng. 158, 107408 (2021).

Das, A. K. & Pratihar, D. K. Bonobo optimizer (BO): An intelligent heuristic with self-adjusting parameters over continuous spaces and its applications to engineering problems. Appl. Intell. 52, 2942–2974 (2022).

Mirjalili, S. et al. Salp swarm algorithm: A bio-inspired optimizer for engineering design problems. Adv. Eng. Softw. 114, 163–191 (2017).

Heidari, A. A. et al. Harris hawks optimization: Algorithm and applications. Future Gener. Comput. Syst. 97, 849–872 (2019).

Tu, J., Chen, H., Wang, M. & Gandomi, A. H. The colony predation algorithm. J. Bionic Eng. 18, 674–710 (2021).

ALRahhal, H. & Jamous, R. AFOX: A new adaptive nature-inspired optimization algorithm. Artif. Intell. Rev. https://doi.org/10.1007/s10462-023-10542-z (2023).

Abdel-Basset, M., Mohamed, R., Jameel, M. & Abouhawwash, M. Spider wasp optimizer: A novel meta-heuristic optimization algorithm. Artif. Intell. Rev. 56, 11675–11738 (2023).

Abdollahzadeh, B., Soleimanian Gharehchopogh, F. & Mirjalili, S. Artificial gorilla troops optimizer: A new nature-inspired metaheuristic algorithm for global optimization problems. Int. J. Intell. Syst. 36, 5887–5958 (2021).

Gandomi, A. H. & Alavi, A. H. Krill herd: A new bio-inspired optimization algorithm. Commun. Nonlinear Sci. Numer. Simul. 17, 4831–4845 (2012).

Yuan, Y. et al. Alpine skiing optimization: A new bio-inspired optimization algorithm. Adv. Eng. Softw. 170, 103158 (2022).

Eusuff, M., Lansey, K. & Pasha, F. Shuffled frog-leaping algorithm: A memetic meta-heuristic for discrete optimization. Eng. Optim. 38, 129–154 (2006).

Yang, X.-S. Chapter 8 - Firefly Algorithms. In Nature-Inspired Optimization Algorithms (ed. Yang, X.-S.) 111–127 (Elsevier, 2014).

Suyanto, S., Ariyanto, A. A. & Ariyanto, A. F. Komodo Mlipir Algorithm. Appl. Soft Comput. 114, 108043 (2022).

Ezugwu, A. E., Agushaka, J. O., Abualigah, L., Mirjalili, S. & Gandomi, A. H. Prairie dog optimization algorithm. Neural Comput. Appl. 34, 20017–20065 (2022).

Dehghani, M., Hubálovský, Š & Trojovský, P. Tasmanian devil optimization: A new bio-inspired optimization algorithm for solving optimization algorithm. IEEE Access 10, 19599–19620 (2022).

Abualigah, L., Elaziz, M. A., Sumari, P., Geem, Z. W. & Gandomi, A. H. Reptile search algorithm (RSA): A nature-inspired meta-heuristic optimizer. Expert Syst. Appl. 191, 116158 (2022).

Dutta, T., Bhattacharyya, S., Dey, S. & Platos, J. Border collie optimization. IEEE Access 8, 109177–109197 (2020).

Jafari, S., Bozorg-Haddad, O. & Chu, X. Cuckoo optimization algorithm (COA). In Advanced Optimization by Nature-Inspired Algorithms (ed. Bozorg-Haddad, O.) 39–49 (Springer Singapore, 2018).

Mirjalili, S. Moth-flame optimization algorithm: A novel nature-inspired heuristic paradigm. Knowl. Based Syst. 89, 228–249 (2015).

Whitley, D. A genetic algorithm tutorial. Stat. Comput. 4, 65–85 (1994).

Moscato, P. On Evolution, Search, Optimization, Genetic Algorithms and Martial Arts: Towards Memetic Algorithms. (1989).

Storn, R. & Price, K. Differential evolution – a simple and efficient heuristic for global optimization over continuous spaces. J. Global Optimiz. 11, 341–359 (1997).

Beyer, H.-G. & Schwefel, H.-P. Evolution strategies – A comprehensive introduction. Nat. Comput. 1, 3–52 (2002).

Simon, D. Biogeography-based optimization. IEEE Trans. Evolut. Comput. 12, 702–713 (2008).

Houssein, E. H., Oliva, D., Samee, N. A., Mahmoud, N. F. & Emam, M. M. Liver cancer algorithm: A novel bio-inspired optimizer. Comput. Biol. Med. https://doi.org/10.1016/j.compbiomed.2023.107389 (2023).

Banzhaf, W., Francone, F. D., Keller, R. E. & Nordin, P. Genetic Programming: An Introduction: On the Automatic Evolution of Computer Programs and Its Applications (Morgan Kaufmann Publishers Inc., 1998).

Xing, B. & Gao, W.-J. Invasive Weed Optimization Algorithm. In Innovative Computational Intelligence: A Rough Guide to 134 Clever Algorithms (eds Xing, B. & Gao, W.-J.) 177–181 (Springer International Publishing, 2014).

Zhao, W. et al. Electric eel foraging optimization: A new bio-inspired optimizer for engineering applications. Expert Syst. Appl. 238, 122200 (2024).

El-kenawy, E. S. M. et al. Greylag goose optimization: Nature-inspired optimization algorithm. Expert Syst. Appl. 238, 122147 (2024).

Abdollahzadeh, B. et al. Puma optimizer (PO): A novel metaheuristic optimization algorithm and its application in machine learning. Cluster Comput. https://doi.org/10.1007/s10586-023-04221-5 (2024).

Cheng, R. & Jin, Y. A competitive swarm optimizer for large scale optimization. IEEE Trans. Cybern. 45, 191–204 (2015).

de Vasconcelos Segundo, E. H., Mariani, V. C. & dos Coelho, L. S. Design of heat exchangers using Falcon Optimization Algorithm. Appl. Therm. Eng. 156, 119–144 (2019).

Sulaiman, M. H., Mustaffa, Z., Saari, M. M. & Daniyal, H. Barnacles mating optimizer: A new bio-inspired algorithm for solving engineering optimization problems. Eng. Appl. Artif. Intell. 87, 103330 (2020).

Yapici, H. & Cetinkaya, N. A new meta-heuristic optimizer: Pathfinder algorithm. Appl. Soft Comput. 78, 545–568 (2019).

Kirkpatrick, S., Gelatt, C. D. & Vecchi, M. P. Optimization by simulated annealing. Science 220, 671–680 (1983).

Deng, L. & Liu, S. Snow ablation optimizer: A novel metaheuristic technique for numerical optimization and engineering design. Expert Syst. Appl. 225, 120069 (2023).

Abedinpourshotorban, H., Mariyam Shamsuddin, S., Beheshti, Z. & Jawawi, D. N. A. Electromagnetic field optimization: A physics-inspired metaheuristic optimization algorithm. Swarm Evol. Comput. 26, 8–22 (2016).

Abdel-Basset, M., Mohamed, R., Sallam, K. M. & Chakrabortty, R. K. Light spectrum optimizer: A novel physics-inspired metaheuristic optimization algorithm. Mathematics 10, 3466 (2022).

Rodriguez, L., Castillo, O., Garcia, M. & Soria, J. A new meta-heuristic optimization algorithm based on a paradigm from physics: String theory. J. Intell. Fuzzy Syst. 41, 1657–1675 (2021).

Yang, X.-S. Harmony Search as a Metaheuristic Algorithm. In Music-Inspired Harmony Search Algorithm: Theory and Applications (ed. Geem, Z. W.) 1–14 (Springer, 2009).

Mirjalili, S., Mirjalili, S. M. & Hatamlou, A. Multi-Verse Optimizer: A nature-inspired algorithm for global optimization. Neural Comput. Appl. 27, 495–513 (2016).

Hatamlou, A. Black hole: A new heuristic optimization approach for data clustering. Inf. Sci. 222, 175–184 (2013).

Rashedi, E., Nezamabadi-pour, H. & Saryazdi, S. GSA: A gravitational search algorithm. Inf. Sci. 179, 2232–2248 (2009).

Anita & Yadav, A. AEFA: Artificial electric field algorithm for global optimization. Swarm Evol. Comput. 48, 93–108 (2019).

Tayarani-N, M. H. & Akbarzadeh-T, M. R. Magnetic Optimization Algorithms a new synthesis. in 2008 IEEE Congress on Evolutionary Computation (IEEE World Congress on Computational Intelligence) 2659–2664 (2008). https://doi.org/10.1109/CEC.2008.4631155.

Lam, A. Y. S. & Li, V. O. K. Chemical-reaction-inspired metaheuristic for optimization. IEEE Trans. Evolut. Comput. 14, 381–399 (2009).

Zhao, W., Wang, L. & Zhang, Z. Atom search optimization and its application to solve a hydrogeologic parameter estimation problem. Knowl. Based Syst. 163, 283–304 (2019).

Hashim, F. A., Houssein, E. H., Mabrouk, M. S., Al-Atabany, W. & Mirjalili, S. Henry gas solubility optimization: A novel physics-based algorithm. Future Gener. Comput. Syst. 101, 646–667 (2019).

Wei, Z., Huang, C., Wang, X., Han, T. & Li, Y. Nuclear reaction optimization: A novel and powerful physics-based algorithm for global optimization. IEEE Access 7, 66084–66109 (2019).

Shehadeh, H. Chernobyl disaster optimizer (CDO): A novel meta-heuristic method for global optimization. Neural Comput. Appl. https://doi.org/10.1007/s00521-023-08261-1 (2023).

Kaveh, A. & Dadras, A. A novel meta-heuristic optimization algorithm: Thermal exchange optimization. Adv. Eng. Softw. 110, 69–84 (2017).

Ghasemi, M. et al. A novel and effective optimization algorithm for global optimization and its engineering applications: Turbulent Flow of Water-based Optimization (TFWO). Eng. Appl. Artif. Intell. 92, 103666 (2020).

Eskandar, H., Sadollah, A., Bahreininejad, A. & Hamdi, M. Water cycle algorithm – A novel metaheuristic optimization method for solving constrained engineering optimization problems. Comput. Struct. 110–111, 151–166 (2012).

Faramarzi, A., Heidarinejad, M., Stephens, B. & Mirjalili, S. Equilibrium optimizer: A novel optimization algorithm. Knowl. Based Syst. 191, 105190 (2020).

Houssein, E. H., Saad, M. R., Hashim, F. A., Shaban, H. & Hassaballah, M. Lévy flight distribution: A new metaheuristic algorithm for solving engineering optimization problems. Eng. Appl. Artif. Intell. 94, 103731 (2020).

Talatahari, S., Azizi, M., Tolouei, M., Talatahari, B. & Sareh, P. Crystal structure algorithm (CryStAl): A metaheuristic optimization method. IEEE Access 9, 71244–71261 (2021).