Abstract

The exponential distribution optimizer (EDO) represents a heuristic approach, capitalizing on exponential distribution theory to identify global solutions for complex optimization challenges. This study extends the EDO's applicability by introducing its multi-objective version, the multi-objective EDO (MOEDO), enhanced with elite non-dominated sorting and crowding distance mechanisms. An information feedback mechanism (IFM) is integrated into MOEDO, aiming to balance exploration and exploitation, thus improving convergence and mitigating the stagnation in local optima, a notable limitation in traditional approaches. Our research demonstrates MOEDO's superiority over renowned algorithms such as MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO. This is evident in 72.58% of test scenarios, utilizing performance metrics like GD, IGD, HV, SP, SD and RT across benchmark test collections (DTLZ, ZDT and various constraint problems) and five real-world engineering design challenges. The Wilcoxon Rank Sum Test (WRST) further confirms MOEDO as a competitive multi-objective optimization algorithm, particularly in scenarios where existing methods struggle with balancing diversity and convergence efficiency. MOEDO's robust performance, even in complex real-world applications, underscores its potential as an innovative solution in the optimization domain. The MOEDO source code is available at: https://github.com/kanak02/MOEDO.

Similar content being viewed by others

Introduction

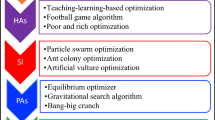

Design considerations inherently involve optimization, necessitating the application of suitable optimization techniques and algorithms1. Given the intricate nature of contemporary design tasks, conventional optimization strategies rooted in mathematical theories often fall short in delivering timely solutions. For instance, gradient-based algorithms tackle optimization challenges by leveraging the gradient of the target function2. Over the past several years, there has been a surge in interest to rectify the shortcomings of classical optimization algorithms (OAs) and introduce more potent OAs3. Thanks to technological progress, newer OAs that boast superior efficiency, precision and speed in addressing diverse optimization tasks are gaining traction4,5,6. Moreover, specific challenges like local optima and the irregularities and non-convexities of exploration domains have played a pivotal role in this evolution.

Such constraints on OAs have spurred scholars and industry experts to develop innovative metaheuristic algorithms to navigate diverse optimization hurdles7,8. Parallel to the growth of information technology, a plethora of optimization challenges have emerged across sectors like engineering, bioinformatics, operations research and geophysics9,10. Numerous optimization issues are categorized as NP-hard, implying their solutions are not achievable within polynomial time unless NP is equivalent to P. As a result, exact mathematical methods are typically reserved for problems of a smaller scale.

Researchers have explored alternative strategies (approximation techniques) to identify feasible solutions within a reasonable timeframe rather than abandoning the effort. These techniques can be broadly categorized into heuristics and metaheuristics. The primary difference between the two is that heuristics are closely tied to the specific nature of a problem, making them effective for particular challenges but less so for others. In contrast, metaheuristics present a more universal algorithmic structure or a black-box optimization tool suitable for almost any optimization problem (OP). These advanced heuristics, designed to address a range of OPs, are termed metaheuristics (MHs). Numerous metaheuristic algorithms (MHAs) have been successfully employed in recent times to navigate complex challenges11. A key benefit of these algorithms in addressing intricate optimization tasks is their capability to identify commendable solutions, irrespective of the problem's scale or intricacy12. MH algorithms have been utilized across a spectrum of optimization challenges, encompassing both single and multi-objective, as well as continuous and discrete scenarios13.

In the real world, problems often present multiple objectives, some of which might conflict with others. As a result, multi-objective optimization problems (MOPs) align more closely with these multifaceted challenges than single-objective optimization does14. A common approach to solving MOPs is to treat each objective as an individual OP, addressing them one after the other based on their respective significance15. Alternatively, individual solvers can exchange insights across successive iterations.

Techniques for multi-objective optimization can be divided into three primary categories16,17: a priori, a posteriori and interactive approaches.

-

A priori techniques: these strategies rely on preliminary knowledge about the problem and its objectives. They aim to pinpoint the Pareto optimal solutions even before the optimization process kicks off. A prevalent tactic in these techniques is transforming a MOP into a single-objective challenge at the outset. Notable examples of a priori techniques encompass weighting methods, goal-oriented programming and lexicographic strategies18.

-

A posteriori technique: these strategies draw from the results secured during the optimization phase. They strive to determine the Pareto optimal solutions post the completion of the optimization. Evolutionary algorithms like genetic algorithms, particle swarm optimization and simulated annealing fall under this category, as do Multi-objective Genetic Algorithms such as NSGA-II, SPEA2, MOEA/D and NSGA-II19.

-

Interactive techniques: these strategies necessitate human engagement throughout the optimization phase. They grant the decision-maker the ability to engage with the optimization process and offer insights on the outcomes produced by the algorithm20. Examples of interactive techniques include interactive genetic algorithms, interactive evolutionary tactics and interactive particle swarm optimization.

While a priori techniques are generally straightforward to deploy and can yield prompt results, they might miss out on capturing the genuine Pareto optimal solutions. On the other hand, a posteriori technique, though potentially offering more precise outcomes, can be intricate to implement and might be resource-intensive. Interactive techniques strike a balance, enabling the decision-maker to influence the outcomes, potentially enhancing solution quality. The techniques are illustrated in Fig. 1.

Multi-objective evolutionary algorithms (MOEAs) have gained popularity as effective a posteriori technique for addressing MOPs. Their strength lies in their ability to approximate the Pareto set (PS) and/or Pareto front (PF) within a single execution21. A standard MOEA operates in two main stages for every generation. The first stage employs evolutionary mechanisms like crossover and mutation to generate new solutions in the decision realm. The second stage involves selecting superior solutions from both the newly created and pre-existing solutions for the subsequent generation22. Depending on the selection methodologies employed, most contemporary MOEAs can be categorized into four types: domination-based, indicator-based, decomposition-based and hybrid MOEAs23,24. To determine the quality of selection, many MOEAs assess the function values of all the freshly produced solutions. Consequently, these algorithms often necessitate numerous function evaluations to achieve convergence25. Yet, the long-term outcomes of traditional optimization methods leave much to be desired26. Issues such as the sensitivity to the problem's initial estimation, reliance on the precision of the differential equation solver's solution and the risk of becoming ensnared in local optima conventional optimization techniques27. In addressing highly nonlinear challenges, there is a heightened risk of settling for a solution that is merely locally optimal28.

Metaheuristics for MOPs, commonly referred to as MOEA, can be categorized based on their solution selection methodologies into four primary groups: dominance-based, decomposition-based, indicator-based and hybrid selection mechanisms.

-

1.

Dominance-based MOEAs. These prioritize achieving optimal convergence by assigning fitness scores to individuals according to the Pareto-dominance principle. Additionally, a specific strategy is provided to maintain diversity. A widely recognized approach in this category ranks individuals through rapid non-dominated sorting, emphasizing reduced time complexity29,30,31,32. When multiple individuals possess the same non-domination rank, density metrics ensure diversity, leading to a more uniform distribution of solutions. However, as the number of objectives increases, the proportion of non-dominated solutions grows exponentially, making Pareto-dominated MOEAs less suitable for many-objective optimization. This can hinder the evolutionary process, diminishing selection pressure, diversity and convergence.

-

2.

Decomposition-based MOEAs. These segment a MOP into multiple scalar optimization sub-tasks using a decomposition strategy. Solutions for each subtask are optimized by conducting evolutionary operations among its various neighboring sub-problems. The most frequently employed decomposition-based MOEA is MOEA/D, introduced by Zhang and Li30. The MOEA/D structure can incorporate various traditional single-objective optimization and localized search methods33,34,35.

-

3.

Indicator-based MOEAs. These MOEAs utilize performance metrics related to solution quality as selection standards, guiding the search towards consistent enhancement of the overall population's anticipated attributes36,37. IBEA, a pioneering indicator-based MOEA, was developed by Zitzler and Kunzl38. It employed a binary indicator to evaluate the comparative benefits of two approximation solution sets, aligning with the decision-maker's preference. Given its compatibility with the Pareto principle, this indicator can be employed to determine fitness. This approach offers a foundational model for future indicator-based MOEAs. Some studies suggest that HV indicators might be a more computationally efficient alternative to other indicators while retaining similar theoretical properties.

-

4.

Hybrid MOEAs. Addressing intricate MOPs often necessitates leveraging the strengths of multiple algorithms, leading to the introduction of various hybrid MO algorithms39,40,41,42. The primary considerations for hybrid algorithms revolve around the selection of algorithms to merge and the methodology for their integration. Contemporary research predominantly centers on amalgamating different strategies to generate offspring populations. However, there is a need for further exploration in other domains.

Other popular multi-objective (MO) Algorithms include MO ant lion optimizer (MOALO)43, MO equilibrium optimizer (MOEO)44, MO slime mould algorithm (MOSMA)45, MO arithmetic optimization algorithm (MOAOA)46, non-dominated sorting ions motion algorithm (NSIMO)47, MO marine predator algorithm (MOMPA)48, multi-objective multi-verse optimization (MOMVO)49, non-dominated sorting grey wolf optimizer (NS-GWO)50, MO gradient-based optimizer (MOGBO)51, MO plasma generation optimizer (MOPGO)52, non-dominated sorting Harris hawks optimization (NSHHO)53, MO thermal exchange optimization (MOTEO)54, decomposition based multi-objective heat transfer search (MOHTS/D)55, Decomposition-Based Multi-Objective Symbiotic Organism Search (MOSOS/D)56, MOGNDO Algorithm57, Non-dominated sorting moth flame optimizer (NSMFO)58, Non-dominated sorting whale optimization algorithm (NSWOA)59, Non-Dominated Sorting Dragonfly Algorithm (NSDA)60, a reference vector based multiobjective evolutionary algorithm with Q-learning for operator adaptation61, a many-objective evolutionary algorithm based on hybrid dynamic decomposition62 and use of two penalty values in multiobjective evolutionary algorithm based on decomposition63.

Several algorithms have been crafted to adeptly navigate intricate OPs. One notable example is the exponential distribution optimizer (EDO) algorithm introduced by Mohamed Abdel-Basset et al.64. This innovative algorithm has been applied across diverse sectors, from engineering challenges to manufacturing processes and scientific modeling. The foundational principle of the EDO algorithm draws integrates two approaches geared towards exploitation and exploration tactics. During the exploitation phase, the algorithm employs three core principles: the absence of memory property, a guiding solution and the exponential disparity observed among the exponential random variables to refine the existing solutions.

In recent times, the exponential distribution optimizer (EDO), a math inspired metaheuristic method rooted in the fractal theorem, was presented. Our research aims to introduce a novel multi-objective iteration of the EDO algorithm tailored for MOPs. The proposed version integrates the NDS, CD and IFM principle and a stochastic learning mechanism. To gauge the potency of this proposed method, we employ distinct benchmark test functions: ZDT65, DTLZ66, Constraint67,68 (CONSTR, TNK, SRN, BNH, OSY and KITA) and real-world engineering design Brushless DC wheel motor69 (RWMOP1), Helical spring68 (RWMOP2), Two-bar truss68 (RWMOP3), Welded beam70 (RWMOP4), Disk brake71 (RWMOP5). The objective of this assessment is to compare the efficacy of our proposed method against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO, using metrics like generational distance (GD)34, inverse generational distance (IGD)35, hypervolume36, Spacing37, Spread36 and run time (RT). The approximations of the Pareto-front produced by our method are evaluated using these metrics.

The key contributions of this research are succinctly outlined as follows:

-

1.

Introduction of the multi-objective exponential distribution optimizer (MOEDO) Algorithm, incorporating non-dominated sorting (NDS) and crowding distance (CD) principles.

-

2.

Integration of the information feedback mechanism (IFM) to decompose multi-objective challenges into single-objective sub-tasks, enhancing the algorithm's efficiency.

-

3.

Utilization of the IFM approach to ensure a balanced dynamic between exploration and exploitation, fostering improved convergence and the capability to bypass local minima.

-

4.

Comprehensive evaluation of MOEDO's performance against established multi-objective methods using benchmark datasets like ZDT, DTLZ, CONSTR and real-world engineering design problems.

-

5.

Employment of metrics such as generational distance (GD), inverse generational distance (IGD), hypervolume (HV), spacing, spread and run time (RT) to assess results, demonstrating the superior capabilities of our proposed technique in various test scenarios.

The structure of our comprehensive research is as follows: "Methodology" elaborates on the exponential distribution optimizer. "Results and discussion" provides an in-depth look at our proposed MOEDO method for multi-objective global optimization. "Conclusion" presents our experimental results.

Methodology

This section delves into the EDO algorithm64 fundamental components. We will first discuss the inspiration behind EDO, followed by a detailed explanation of its initialization. We will also examine the explorative and exploitative features of EDO before discussing its mathematical structure.

Exponential distribution optimizer (EDO)

Exponential distribution model

The EDO approach takes its foundational principles from the theories of exponential distribution. This distribution, which is continuous in nature, has been instrumental in explaining several occurrences in the natural world. For instance, the time span between the present moment and the occurrence of an earthquake can be represented using this distribution. Similarly, the time-based likelihood of a car reaching a toll station aligns with the exponential distribution. Exponential random variables have frequently been utilized to shed light on past events, particularly focusing on the duration leading up to a specific incident. Here, we delve into the mathematical construct of the exponential distribution and elucidate its unique attributes. Imagine having an exponential random variable, represented by \(x\), associated with a parameter, \(\lambda\). This relationship can be expressed as \(x\sim {\text{EXP}}(\lambda )\). The Probability Density Function (PDF) of this variable is indicative of the duration by:

As time is a continuous factor, it must always hold a non-negative value, i.e., \((x\ge 0)\). Significantly, the \(\lambda\) parameter, which is always positive, denotes the rate of occurrence in the exponential distribution. The exponential distribution cumulative distribution function (CDF) can be derived through a specific formula:

A higher value of the rate of occurrence, \(\lambda\), indicates a reduced probability of the concerned random variable.

CDF function ascends, commencing from the foundational exponential rate and intensifying in proportion to the growth of the exponential random variable. For an exponentially distributed random variable, its mean \(\left(\mu \right)\) and variance \(\left({\sigma }^{2}\right)\) can be articulated using specific mathematical expressions:

These expressions reveal an inverse relationship between the \(\lambda\) parameter and both the mean and variance. To put it succinctly, as \(\lambda\) increases, both the mean and variance decline. Moreover, the standard deviation \((\sigma )\) mirrors the mean value and its derivation follows a particular computation

The memoryless nature of exponential distribution

One of the unique characteristics of certain statistical probability distributions is the 'memoryless' attribute. This implies that the chance of a forthcoming event transpiring is independent of past events. In simpler terms, previous occurrences do not influence future probabilities. The exponential distribution, which is a continuous type, encapsulates this memoryless attribute, especially when gauging the timespan before an event occurrence. When a random variable, denoted by \(x\), adheres to the exponential distribution with this memoryless feature, it means for any positive whole numbers \(s\) and \(t\) belonging to the series \(\left\{\mathrm{0,1},2,\dots ,\infty \right\}\) the following holds true:

Launching with the initial group

During the onset or the initiation stage, we cultivate a group termed (\({X}_{\text{winners}}\)), comprising \(N\) diversely valued, randomly formed solutions. To depict this search operation, we utilize an assortment of exponential distributions. Every potential solution is perceived as an embodiment of the exponential distribution. The respective positions of each solution are considered as exponential random variables conforming to this distribution. These are then structured as vectors, having a dimension \(d\).

In this setup, \({X}_{{\text{winners}} }\) symbolizes the \(j\) element of the ith candidate within the exponential distribution vector. Following this, we can define the preliminary group, \({X}_{\text{winners}}\).

To randomly generate each variable within the candidate exponential distribution in the solution space, we utilize a particular formula.

Here, \(lb\) and \(ub\) delineate the lower and upper limits of the given problem. The term 'rand' signifies a randomly derived number within the span [0,1]. Upon concluding the initiation, we embark on the optimization phase. This leans on the exploratory and refinement skills of our proposed approach over iterative rounds. Subsequent sections will elucidate the two core techniques (exploration and refinement within EDO) for pinpointing the global pinnacle.

EDO exploitation strength

The exploitation component of EDO harnesses various facets of the exponential distribution model, including its memoryless trait, exponential rate, typical variance and average value. Additionally, a guiding solution steers the search towards the global peak. Initially in EDO, a set of random solutions is cultivated, mimicking an array of exponential distribution patterns. These solutions undergo evaluation via an objective function and are subsequently ranked in terms of performance. For maximization problems, they are ordered in descending efficiency, while for minimization challenges, they are arranged in ascending order. Areas surrounding a robust solution are fertile grounds for pinpointing the global apex. This is why several algorithms probe spaces around robust solutions, drawing weaker ones towards them. Therefore, the global apex quest focuses on the guiding solution. The guiding solution, labeled (\(Xguide_{{\text{~}}}^{{{\text{time}}}}\)), is derived from the mean of the top three solutions in a sorted group.

In this context, time replaces the term iteration, alluding to the period until the subsequent event in the exponential distribution. The maximum number of iterations is symbolized by Max_time. This guiding solution offers invaluable insights about the global apex. Instead of exclusively leaning on the current best solution, the guiding one is prioritized. Though the leading solution has vital details about the global peak, an exclusive focus on it could lead to convergence around a local maximum. By introducing a current guiding solution, this challenge is mitigated. To emulate the memoryless characteristic of the exponential distribution, a matrix, termed memoryless, is formulated. This matrix houses the latest solutions, irrespective of their present efficiency scores. Initially, it mirrors the original population. Following this, solutions generated at the present time, regardless of their efficiency, are stored in the matrix, dismissing their past contributions. Operating in line with the memoryless property, if current solutions do not fare well against their counterparts in the original group, their success probabilities in subsequent iterations match those of the present. Therefore, past failures do not dictate future outcomes. Within the memoryless matrix, there are two categories of solutions: winners and losers. While losers can still contribute to the optimization process alongside winners, a solution is deemed victorious if its efficiency surpasses that of its counterpart in the \({X}_{{\text{winners}} }\) group. If a solution is updated in both the \({X}_{{\text{winners}} }\) and memoryless matrices, it a winner, otherwise, it a loser.

Our designed exploitation model, focused on updating solutions adhering to the exponential distribution, relies on both winning and losing solutions. The updated solution, \(\left({V}_{i}^{timx+1}\right)\) is modelled around a specific involving random and adaptive parameters. This strives to locate the global peak near an efficient solution, creating a new solution for future populations. The delves deep, examining how winners and losers navigate within the search space, leveraging valuable data from winners:

Additionally, the exponential rate, in relation to the mean, can be deduced:

The exponential mean arises from averaging the guiding solution and the associated memoryless vector, which can be either a winner or loser.

EDO exploration potential

This section delves into the exploration aspect of the introduced algorithm. The exploration segment pinpoints potential areas within the search domain that likely house the ideal, global solution. The EDO exploratory blueprint derives from two prominent solutions, or winners, from the primary population, which adhere to the exponential distribution pattern:

Updating the new solution involves with \({M}^{{time}}\) representing the average of all solutions sourced from the primary group. This average is computed by adding all exponential random variables from the same dimension and then dividing by the total population, denoted as \(N\). The term \(c\) signifies a refined parameter, indicating the proportion of information drawn from the \({Z}_{1}\) and \({Z}_{2}\) vectors towards the contemporary solution and is formulated as:

Here, \(d\) stands as a flexible parameter. Initially set to zero, it undergoes gradual decrement as time progresses. Here, 'time' alludes to the present moment, while 'Max_time' signifies the comprehensive duration or iterations. Both \({Z}_{1}\) and \({Z}_{2}\) are viewed as potential vectors, formed by:

Furthermore, \({D}_{1}\) and \({D}_{2}\) delineate the distance between the average solution and the 'winners' randomly picked from the initial population. At the inception of the optimization journey, a notable disparity exists between the average solution and the standout performers. Yet, as the process nears its end, this gap between the prominent solutions and their corresponding variances narrows. To establish the \({Z}_{1}\) and \({Z}_{2}\) vectors, exploration is conducted around the average solution, aided by a pair of randomly chosen outstanding solutions.

Optimizing with exponential distribution optimizer (EDO)

The EDO method we are introducing follows a series of steps to thoroughly navigate the search space, aiming for the global optimum. Initially, we create a collection of solutions, randomly generated and marked by a wide range of values. The search process is depicted using various exponential distributions and as such, the location of each solution can be seen as random variables adhering to this distribution. We design a matrix without memory to mimic the absence of memory and initially, it mirrors the original group of solutions. Leveraging the exploratory and refining stages of our method, every solution starts moving closer to the global optimum over time. During the refining stage, the matrix without memory is used to store the outcomes from the prior step, irrespective of their past, allowing them to play a pivotal role in shaping the new solutions. This leads to the categorization of solutions into two groups: the successful ones and the unsuccessful ones. Additionally, we incorporate various properties of the exponential distribution, like its mean, rate and variance. The successful solution is guided by a leading solution, while the unsuccessful one follows the successful one, aiming to discover the global optimum nearby. In the exploration stage, the fresh solution is influenced by two randomly chosen successful solutions from the initial group and the average solution. At the start, both the average solution and its variance are distant from the global optimum. However, the gap between the average solution and the global optimum narrows down until it reaches its lowest point during the optimization. A toggle parameter determines whether to embark on the exploration or refining stage, based on a probability where \((a<0.5)\).

After crafting the new solutions, each solution boundaries are verified and then they are stored in the matrix without memory. A selection strategy is employed to incorporate the new solutions from both stages into the initial group. If a new solution proves beneficial, it integrated into the primary group. By the optimization conclusion, all solutions cluster around the global optimum. In the best solution, both the mean and variance are anticipated to be minimal, while the scale parameter λ is expected to be significant. The pseudo code of single objective EDO shown in Algorithm 1.

Proposed multi-objective exponential distribution optimizer (MOEDO)

Preliminaries of multi-objective optimization

In multi-objective optimization tasks (MOPs), there is a simultaneous effort to minimize or maximize at least two clashing objective functions. While a single-objective optimization effort zeroes in on one optimal solution with the prime objective function value, MOO presents a spectrum of optimal outcomes known as Pareto optimal solutions. An elaboration on the idea of domination and associated terminologies are illustrated in Fig. 2.

Multi-objective exponential distribution optimizer (MOEDO)

MOEDO algorithm starts with a random population of size \(N\). the current generation is \(t, {x}_{i}^{t}\) and \({x}_{i}^{t+1}\) the \(i\)th individual at \(t\) and \((t+1)\) generation. \({u}_{i}^{t+1}\) the \(i\)th individual at the \((t+1)\) generation generated through the EDO algorithm and parent population \({P}_{t}\). the fitness value of \({u}_{i}^{t+1}\) is \({f}_{i}^{t+1}\) and \({U}^{t+1}\) is the set of \({u}_{i}^{t+1}\). Then, we can calculate \({x}_{i}^{t+1}\) according to \({u}_{i}^{t+1}\) generated through the EDO algorithm and Information Feedback Mechanism (IFM)72 Eq. (20).

where \({x}_{k}^{t}\) is the \(k\) th individual we chose from the \(t\) th generation, the fitness value of \({x}_{k}^{t}\) is \({f}_{k}^{t},{\partial }_{1}\) and \({\partial }_{2}\) are weight coefficients. Generate offspring population \({Q}_{t}\). \({Q}_{t}\) is the set of \({x}_{i}^{t+1}.\) The combined population \({R}_{t}={P}_{t}\cup {Q}_{t}\) is sorted into different w-non-dominant levels \(\left({F}_{1},{F}_{2},\dots ,{F}_{l}\dots ,{F}_{w}\right)\). Begin from \({F}_{1}\), all individuals in level 1 to \(l\) are added to \({S}_{t}={\bigcup }_{i=1}^{l} {F}_{i}\) and remaining members of \({R}_{t}\) are rejected illustrated in Fig. 3. If \(\left|{S}_{t}\right|=N\) no other actions are required and the next generation is begun with \({P}_{t+1}={S}_{t}\) directly. Otherwise, solutions in \({S}_{t}/{F}_{l}\) are included in \({P}_{t+1}\) and the remaining solutions \(N-{\sum }_{i=0}^{l-1} \left|{F}_{i}\right|\) are selected from \({F}_{l}\) according to the crowding distance (CD) mechanism, the way to select solutions is according to the CD of solutions in \({F}_{l}\). The larger the crowding distance, the higher the probability of selection and check termination condition is met. If the termination condition is not satisfied, \(t=t+1\) than repeat and if it is satisfied, \({P}_{t+1}\) is generated represent in Algorithm 2, it is then applied to generate a new population \({Q}_{t+1}\) by EDO algorithm. Such a careful selection strategy is found to computational complexity of \(M\)-Objectives \(O\left({N}^{2}M\right)\). MOEDO that incorporates proposed information feedback mechanism to effectively guide the search process, ensuring a balance between exploration and exploitation. This leads to improved convergence, coverage and diversity preservation, which are crucial aspects of multi-objective optimization. MOEDO algorithm does not require to set any new parameter other than the usual EDO parameters such as the population size, termination parameter and their associated parameters. The flow chart of MOEDO algorithm can be shown in Fig. 4.

Results and discussion

In this section, we present the results derived from our research and experiments, which were conducted to evaluate the efficacy of the proposed method and showcase its capabilities. To ensure robust and statistically significant results, each experiment was replicated 30 times independently with population size = 40 and maximum number of iterations = 500. All techniques were executed using MATLAB R2020a on an Intel Core i7 computer equipped with a 1.80 GHz processor and 8 GB RAM.

Description of benchmark test functions

Our experimental evaluation of the MOEDO algorithm's performance utilized three separate benchmark test set functions:

-

Zitzler–Deb–Thiele (ZDT) test suite: from this benchmark collection31, we selected ZDT1, ZDT2, ZDT3, ZDT4, ZDT 5 and ZDT6 (Appendix A). A brief overview of these problems is as follows: ZDT1 presents a continuous and uniformly distributed convex Pareto front. ZDT2 showcases a concave Pareto front. ZDT3 displays five non-convex discontinuous fronts. ZDT4 has multiple local Pareto Fronts and ZDT6 features a disjointed Pareto front with an irregular mapping between the objective function space and decision variable space. Each ZDT function typically encompasses two objective functions, a common feature in Pareto optimization, especially in engineering contexts.

-

Deb–Thiele–Laumanns–Zitzler (DTLZ) test suite: the DTLZ (Appendix B and Appendix C) suite, crafted by Deb et al.32, stands out from other multi-objective test challenges due to its adaptability to any objective count. This unique feature has facilitated numerous recent studies into what are commonly termed as many objective challenges. The DTLZ suite encompasses nine test functions, but only DTLZ8 and DTLZ9 have side constraints.

-

Constraint67,68 CONSTR, TNK, SRN, BNH, OSY and KITA (Appendix D) and real-world engineering design (Appendix E) Brushless DC wheel motor69 (RWMOP1), Helical spring68 (RWMOP2), Two-bar truss68 (RWMOP3), Welded beam70 (RWMOP4), Disk brake71 (RWMOP5).

Performance metrics for evaluation

For this research, we employed six performance metrics: hypervolume (HV), generational distance (GD), inverted generational distance (IGD), spread (SD), spacing (SP) and run time (RT). Subsequently, we provide a concise explanation of each metric to enhance comprehension shown in Fig. 5. a statistical evaluation. “+/−/~” Wilcoxon signed-rank test (WSRT) was conducted at a significance level of 0.05 between the total amount of test problems on which the corresponding optimizers has a better performance, a worse performance and an equal performance for solving MO problems.

Performance analysis on benchmark test functions

Analysis using the GD metric

Table 1 showcases the final solution distributions when juxtaposing our MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the GD metric. Within the ZDT benchmark, our algorithm emerged as the top performer, particularly excelling in ZDT1, ZDT2, ZDT3 and ZDT6 in terms of mean and standard deviation. In the DTLZ benchmark, MOEDO was showed commendable performance in 9/14 problems. For the constraint test suite, the third benchmark, MOEDO outshined in both the best and average metrics, especially in SRN, OSY and KITA. A WRST test was conducted to determine the overall standing of each multi-objective method concerning the GD metric across all benchmarks. The results, presented in Table 1's, place MOEDO at the top and MOMPA bottom behind other algorithms across all benchmarks.

Analysis using the IGD metric

Table 2 presents the final solution distributions when comparing MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the IGD metric. For the ZDT3 and ZDT 6 test function, our algorithm surpassed its counterparts. In the DTLZ benchmark, MOEDO was notably superior in 8/14 metrics. Furthermore, in the third benchmark, MOEDO exhibited superior performance, especially in TNK, OSY and KITA both in the best and average metrics. MOMPA and MOEA/D consistently underperformed in all benchmarks. The WRST test's results, displayed in Table 2's final row, highlight MOEDO's dominance, securing the top rank among the evaluated multi-objective variants.

Analysis using the spacing (SP) metric

Table 3 displays the final solution distributions when comparing MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the spacing metric. MOEDO showcased commendable performance in ZDT1 for average metrics, while in ZDT4, it excelled in the average metric. For DTLZ 9/14, our method stood out in the standard metric. In the constraint benchmark, encompassing TNK, OSY, BNH and KITA, MOEDO consistently scored higher across all metrics. MOEA/D lagged behind in all benchmarks. The WRST test for the Spacing metric positioned MOEDO as the top performer, followed by MOGNDO and NSGA-II.

Analysis using the spread (SD) metric

Table 4 presents the final solution distributions of MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the Spread metric. MOEDO was dominant in ZDT2 across all metrics and in ZDT2, ZDT3, ZDT4 and ZDT5 for both the std and average metrics. In the DTLZ benchmark, our method was superior in 10/14 for both std and average metrics. For the constraint benchmark, MOEDO outshined in TNK and OSY for the standard metric. MOEA/D consistently underperformed. The WRST test, based on the results from Table 4, highlighted MOEDO as the leading method among the evaluated algorithms.

Analysis using the HV metric

Table 5 delineates the final solution distributions when contrasting MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the HV metric. Our method outshone in 15 out of the 26 problems. It was particularly dominant in ZDT3 and ZDT5 across metrics. In the DTLZ benchmark, MOEDO was unparalleled, especially in DTLZ4. Lastly, in the constraint test suites, MOEDO excelled in TNK, OSY and KITA across all metrics. In the ZDT benchmarks, MOAOA consistently underperformed. The WRST test outcomes for the HV metric positioned MOEDO in the second spot among the five evaluated multi-objective methods.

Analysis using the RT metric

Table 6 illustrates the final solution distributions of MOEDO algorithm against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO using the initial gap metric. MOEDO surpassed its counterparts in DTLZ3 and DTLZ5 across all metrics, namely Best, Avg and Std. In the constraint functions, our method was superior in CONSTR, TNK, SRN and KITA for the RT metric. MOEA/D consistently trailed its peers. The WRST test, based on the results from Table 6, positioned MOEDO at the best among the tested methods.

The visual representation of the final solutions from our proposed MOEDO method. Figures 6, 7, 8 and 9 reveals MOEDO superior PF. This section provides a thorough assessment of MOEDO performance against leading multi-objective methods using metrics like GD, IGD, HV, Spacing, Spread and RT across standard test suites such as ZDT, Constraint and DTLZ. MOEDO commendable performance is attributed to its rapid convergence and a harmonious balance between exploration and exploitation. This is achieved by integrating the strengths of the IFM technique, the adept search capabilities of the EDO algorithm and the enhanced convergence facilitated by a IFM mechanism. Conversely, many of the compared multi-objective methods struggled with maintaining a balanced exploration–exploitation ratio and lacked efficient convergence.

Application to real-world engineering design problems

Table 7 presents the performance of MOEDO concerning the spacing metric, compared against MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO. A lower spacing value denotes superior coverage performance. The data reveals MOEDO performance RWMOP1, RWMOP2, RWMOP3 and RWMOP4 was suboptimal. The WRST test, as illustrated in Table 7, positions MOEDO as the top performer in terms of spacing values among all others.

Table 8 showcases the findings of MOEDO for the HV metric, juxtaposed against other algorithms. A higher HV value is indicative of better performance. The data suggests that MOEDO excels across RWMOP1, RWMOP2, RWMOP5 and RWMOP6. However, the WRST test results in Table 8 indicate room for improvement in the HV metric for MOEDO.

Table 9 showcases the performance of MOEDO concerning the run time metric, juxtaposed against other MOEDO others MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO. Ideally, a lower RT metric value is preferred. Specifically, it showcased superior results for RWMOP2, RWMOP3, RWMOP4 and RWMOP5 in both Average and Best metrics. The overall ranking of each algorithm, based on the Best and Avg results, was determined using the WRST test. As illustrated in the concluding row of Table 5, MOEDO emerged as the top performer for the RT metric among the others.

Figure 10 emphasizes MOEDO superior performance for the RWMOP function, as its results closely align with the PF.

Conclusion

In this research, we introduce a multi-objective variant of the exponential distribution optimizer (EDO) method, a recently proposed metaheuristic algorithm rooted in specific principles of mathematics exponential distribution theory. We present a multi-objective EDO termed MOEDO, which integrates concepts of multi-objectivity, NDS, CD and IFM theory into the conventional EDO framework. The IFM approach, drawing from diverse strategies, ensures a balanced exploration–exploitation dynamic, promoting enhanced convergence and the ability to bypass local minima. experimental outcomes indicate that our MOEDO algorithm surpasses MOMPA, NSGA-II, MOAOA, MOEA/D and MOGNDO, in 72.58% of test scenarios using the ZDT, DTLZ. Constraint (CONSTR, TNK, SRN, BNH, OSY and KITA) and real-world engineering design Brushless DC wheel motor (RWMOP1), Helical spring (RWMOP2), Two-bar truss (RWMOP3), Welded beam (RWMOP4), Disk brake (RWMOP5) problems, especially in metrics like GD, IGD, HV, spacing (SP), Spread (SD) and RT. In the WRST test outcomes, MOEDO leads in all metrics. Looking ahead, we envision developing a binary version of MOEDO to tackle diverse and intricate real-world challenges. Additionally, the potential enhancements of the proposed method in its many-objective format for various optimization challenges remain an exciting avenue for future exploration.The MOEDO source code is available at: https://github.com/kanak02/MOEDO.

Data availability

The data presented in this study are available through email upon request to the corresponding author.

References

Shi, M., Lv, L. & Xu, L. A multi-fidelity surrogate model based on extreme support vector regression: Fusing different fidelity data for engineering design. Eng. Comput. 40(2), 473–493. https://doi.org/10.1108/EC-10-2021-0583 (2023).

Zhou, S. et al. On the convergence of stochastic multi-objective gradient manipulation and beyond. Adv. Neural. Inf. Process. Syst. 35, 38103–38115 (2022).

Cao, B., Zhao, J., Gu, Y., Ling, Y. & Ma, X. Applying graph-based differential grouping for multiobjective large-scale optimization. Swarm Evol. Comput. 53, 100626. https://doi.org/10.1016/j.swevo.2019.100626 (2020).

Zhu, B. et al. A critical scenario search method for intelligent vehicle testing based on the social cognitive optimization algorithm. IEEE Trans. Intell. Transp. Syst. 24(8), 7974–7986. https://doi.org/10.1109/TITS.2023.3268324 (2023).

Cao, B., Wang, X., Zhang, W., Song, H. & Lv, Z. A many-objective optimization model of industrial Internet of things based on private blockchain. IEEE Netw. 34(5), 78–83. https://doi.org/10.1109/MNET.011.1900536 (2020).

Zhang, C., Zhou, L. & Li, Y. Pareto optimal reconfiguration planning and distributed parallel motion control of mobile modular robots. IEEE Trans. Ind. Electron. https://doi.org/10.1109/TIE.2023.3321997 (2023).

Li, S., Chen, H., Chen, Y., Xiong, Y. & Song, Z. Hybrid method with parallel-factor theory, a support vector machine, and particle filter optimization for intelligent machinery failure identification. Machines 11(8), 837. https://doi.org/10.3390/machines11080837 (2023).

Zhang, L., Sun, C., Cai, G. & Koh, L. H. Charging and discharging optimization strategy for electric vehicles considering elasticity demand response. eTransportation 18, 100262. https://doi.org/10.1016/j.etran.2023.100262 (2023).

Cao, B. et al. Multiobjective 3-D topology optimization of next-generation wireless data center network. IEEE Trans. Ind. Inform. 16(5), 3597–3605. https://doi.org/10.1109/TII.2019.2952565 (2020).

Duan, Y., Zhao, Y. & Hu, J. An initialization-free distributed algorithm for dynamic economic dispatch problems in microgrid: Modeling, optimization and analysis. Sustain. Energy Grids Netw.orks 34, 101004. https://doi.org/10.1016/j.segan.2023.101004 (2023).

Almufti, S. M. Historical survey on metaheuristics algorithms. Int. J. Sci. World 7(1), 1. https://doi.org/10.14419/ijsw.v7i1.29497 (2019).

Alorf, A. A survey of recently developed metaheuristics and their comparative analysis. Eng. Appl. Artif. Intell. 117, 105622. https://doi.org/10.1016/j.engappai.2022.105622 (2023).

Dokeroglu, T., Sevinc, E., Kucukyilmaz, T. & Cosar, A. A survey on new generation metaheuristic algorithms. Comput. Ind. Eng. 137, 106040. https://doi.org/10.1016/j.cie.2019.106040 (2019).

Zhou, A. et al. Multiobjective evolutionary algorithms: A survey of the state of the art. Swarm Evol. Comput. 1(1), 32–49. https://doi.org/10.1016/j.swevo.2011.03.001 (2011).

Hu, X., & Eberhart, R. Multiobjective optimization using dynamic neighborhood particle swarm optimization. In Proceedings of the 2002 Congress on Evolutionary Computation, 2 (pp. 1677–1681). CEC'02. IEEE Publications (Cat. No. 02TH8600) (2002).

Gunantara, N. A review of multi-objective optimization: Methods and its applications. Cogent Eng. 5(1), 1502242. https://doi.org/10.1080/23311916.2018.1502242 (2018).

Sharma, S. & Kumar, V. A comprehensive review on multi-objective optimization techniques: Past, present and future. Arch. Comput. Methods Eng. 29(7), 5605–5633. https://doi.org/10.1007/s11831-022-09778-9 (2022).

Pereira, J. L. J., Oliver, G. A., Francisco, M. B., Cunha, S. S. & Gomes, G. F. A review of multi-objective optimization: Methods and algorithms in mechanical engineering problems. Arch. Comput. Methods Eng. 20, 1–24 (2021).

Huy, T. H. B. et al. Multi-objective search group algorithm for engineering design problems. Appl. Soft Comput. 126, 109287. https://doi.org/10.1016/j.asoc.2022.109287 (2022).

Li, Y.-J. & Li, H.-N. Interactive evolutionary multi-objective optimization and decision-making on life-cycle seismic design of bridge. Adv. Struct. Eng. 21(15), 2227–2240. https://doi.org/10.1177/1369433218770819 (2018).

Zhang, J., & Xing, L. A survey of multiobjective evolutionary algorithms. In IEEE International Conference on Computational Science and Engineering (CSE) and IEEE International Conference on Embedded and Ubiquitous Computing, Vol. 1, 93–100 (IEEE Publications, 2017). https://doi.org/10.1109/CSE-EUC.2017.27.

Guliashki, V., Toshev, H. & Korsemov, C. Survey of evolutionary algorithms used in multiobjective optimization. Probl. Eng. Cybern. Robot. 60(1), 42–54 (2009).

Wang, J. et al. A survey of decomposition approaches in multiobjective evolutionary algorithms. Neurocomputing 408, 308–330. https://doi.org/10.1016/j.neucom.2020.01.114 (2020).

Mashwani, W. K. Hybrid multiobjective evolutionary algorithms: A survey of the state-of-the-art. Int. J. Comput. Sci. Issues 8(6), 374 (2011).

Xu, Q., Xu, Z., & Ma, T. (2019). A short survey and challenges for multiobjective evolutionary algorithms based on decomposition. In International Conference on Computer, Information and Telecommunication Systems, CITS, IEEE, 1–5 (2019). https://doi.org/10.1109/CITS.2019.8862046.

Igel, C. No free lunch theorems: Limitations and perspectives of metaheuristics. In Theory and Principled Methods for the Design of Metaheuristics 1–23 (Springer, 2014). https://doi.org/10.1007/978-3-642-33206-7_1.

Chopard, B. & Tomassini, M. Performance and limitations of metaheuristics. In An Introduction to Metaheuristics for Optimization 191–203 (Springer, 2018). https://doi.org/10.1007/978-3-319-93073-2_11.

Dorigo, M. & Stützle, T. The ant colony optimization metaheuristic: Algorithms, applications and advances. In Handbook of Metaheuristics 250–285 (Springer, 2003). https://doi.org/10.1007/0-306-48056-5_9.

Marca, Y., Aguirre, H., Zapotecas, S., Liefooghe, A., Derbel, B., Verel, S., & Tanaka, K. Pareto dominance-based MOEAs on problems with difficult pareto set topologies. In Proceedings of the Genetic and Evolutionary Computation Conference Companion, 189–190 (2018). https://doi.org/10.1145/3205651.3205746.

Zhang, Q., Li, H. & MOEA/D,. MOEA/D: A multiobjective evolutionary algorithm based on decomposition. IEEE Trans. Evol. Comput. 11(6), 712–731. https://doi.org/10.1109/TEVC.2007.892759 (2007).

Khodadadi, N., Talatahari, S. & DadrasEslamlou, A. MOTEO: A novel multi-objective thermal exchange optimization algorithm for engineering problems. Soft Comput. 26(14), 6659–6684. https://doi.org/10.1007/s00500-022-07050-7 (2022).

Houssein, E. H., Çelik, E., Mahdy, M. A. & Ghoniem, R. M. Self-adaptive equilibrium optimizer for solving global, combinatorial, engineering and multi-objective problems. Expert Syst. Appl. 195, 116552. https://doi.org/10.1016/j.eswa.2022.116552 (2022).

Lin, A., Yu, P., Cheng, S. & Xing, L. One-to-one ensemble mechanism for decomposition-based multi-objective optimization. Swarm Evolut. Comput. 68, 101007. https://doi.org/10.1016/j.swevo.2021.101007 (2022).

Zheng, J. et al. A dynamic multiobjective particle swarm optimization algorithm based on adversarial decomposition and neighborhood evolution. Swarm Evolut. Comput. 69, 100987. https://doi.org/10.1016/j.swevo.2021.100987 (2022).

Ben-Said, A., Moukrim, A., Guibadj, R. N. & Verny, J. Using decompositionbased multi-objective algorithm to solve selective pickup and delivery problems with time windows. Comput. Oper. Res. 145, 105867. https://doi.org/10.1016/j.cor.2022.105867 (2022).

Zouache, D. & Abdelaziz, F. B. Guided manta ray foraging optimization using epsilon dominance for multi-objective optimization in engineering design. Expert Syst. Appl. 189, 116126. https://doi.org/10.1016/j.eswa.2021.116126 (2022).

Yin, S., Luo, Q. & Zhou, Y. IBMSMA: An indicator-based multi-swarm slime mould algorithm for multi-objective truss optimization problems. J. Bionic Eng. https://doi.org/10.1007/s42235-022-00307-9 (2022).

Zitzler, E. & Künzli, S. Indicator-based selection in multiobjective search. In PPSN, Vol 4, 832–842 (Springer, 2004). https://doi.org/10.1007/978-3-540-30217-9_84.

Abdi, Y. & Feizi-Derakhshi, M.-R. Hybrid multi-objective evolutionary algorithm based on search manager framework for big data optimization problems. Appl. Soft Comput. 87, 105991. https://doi.org/10.1016/j.asoc.2019.105991 (2020).

Dutta, S., Mallipeddi, R. & Das, K. N. Hybrid selection based multi/manyobjective evolutionary algorithm. Sci. Rep. 12(1), 6861. https://doi.org/10.1038/s41598-022-10997-0 (2022).

Kalita, K., Pal, S., Haldar, S. & Chakraborty, S. A hybrid TOPSIS-PR-GWO approach for multi-objective process parameter optimization. Process Integrat. Optim. Sustain. 6(4), 1011–1026. https://doi.org/10.1007/s41660-022-00256-0 (2022).

Chennuru, V. K. & Timmappareddy, S. R. Simulated annealing based undersampling (SAUS): A hybrid multi-objective optimization method to tackle class imbalance. Appl. Intell. 52(2), 2092–2110. https://doi.org/10.1007/s10489-021-02369-4 (2022).

Mirjalili, S., Jangir, P. & Saremi, S. Multi-objective ant lion optimizer: A multi-objective optimization algorithm for solving engineering problems. Appl. Intell. 46(1), 79–95. https://doi.org/10.1007/s10489-016-0825-8 (2017).

Premkumar, M. et al. Multi-objective equilibrium optimizer: Framework and development for solving multi-objective optimization problems. J. Comput. Design Eng. 9(1), 24–50. https://doi.org/10.1093/jcde/qwab065 (2021).

Premkumar, M. et al. MOSMA: Multi-objective slime mould algorithm based on elitist non-dominated sorting. IEEE Access 9, 3229–3248. https://doi.org/10.1109/ACCESS.2020.3047936 (2020).

Premkumar, M. et al. A new arithmetic optimization algorithm for solving real-world multiobjective CEC-2021 constrained optimization problems: Diversity analysis and validations. IEEE Access 9, 84263–84295. https://doi.org/10.1109/ACCESS.2021.3085529 (2021).

Buch, H. & Trivedi, I. N. A new non-dominated sorting ions motion algorithm: Development and applications. Decis. Sci. Lett. 9(1), 59–76. https://doi.org/10.5267/j.dsl.2019.8.001 (2020).

Jangir, P., Buch, H., Mirjalili, S. & Manoharan, P. MOMPA: Multi-objective marine predator algorithm for solving multi-objective optimization problems. Evolut. Intell. https://doi.org/10.1007/s12065-021-00649-z (2021).

Mirjalili, S., Jangir, P., Mirjalili, S. Z., Saremi, S. & Trivedi, I. N. Optimization of problems with multiple objectives using the multi-verse optimization algorithm. Knowl. Based Syst. 134, 50–71. https://doi.org/10.1016/j.knosys.2017.07.018 (2017).

Jangir, P. & Jangir, N. A new non-dominated sorting grey wolf optimizer (NS-GWO) algorithm: Development and application to solve engineering designs and economic constrained emission dispatch problem with integration of wind power. Eng. Appl. Artif. Intell. 72, 449–467. https://doi.org/10.1016/j.engappai.2018.04.018 (2018).

Premkumar, M., Jangir, P. & Sowmya, R. MOGBO: A new multiobjective gradient-based optimizer for real-world structural optimization problems. Knowl. Based Syst. 218, 106856. https://doi.org/10.1016/j.knosys.2021.106856 (2021).

Kumar, S., Jangir, P., Tejani, G. G., Premkumar, M. & Alhelou, H. H. MOPGO: A new physics-based multi-objective plasma generation optimizer for solving structural optimization problems. IEEE Access 9, 84982–85016. https://doi.org/10.1109/ACCESS.2021.3087739 (2021).

Jangir, P., Heidari, A. A. & Chen, H. Elitist non-dominated sorting Harris hawks optimization: Framework and developments for multi-objective problems. Expert Syst. Appl. 186, 115747. https://doi.org/10.1016/j.eswa.2021.115747 (2021).

Kumar, S., Jangir, P., Tejani, G. G. & Premkumar, M. MOTEO: A novel physics-based multiobjective thermal exchange optimization algorithm to design truss structures. Knowl. Based Syst. 242, 108422. https://doi.org/10.1016/j.knosys.2022.108422 (2022).

Kumar, S., Jangir, P., Tejani, G. G. & Premkumar, M. A decomposition based multi-objective heat transfer search algorithm for structure optimization. Knowl. Based Syst. 253, 109591. https://doi.org/10.1016/j.knosys.2022.109591 (2022).

Ganesh, N. et al. A novel decomposition-based multi-objective symbiotic organism search optimization algorithm. Mathematics 11(8), 1898. https://doi.org/10.3390/math11081898 (2023).

Pandya, S. B., Visumathi, J., Mahdal, M., Mahanta, T. K. & Jangir, P. A novel MOGNDO algorithm for security-constrained optimal power flow problems. Electronics 11(22), 3825. https://doi.org/10.3390/electronics11223825 (2022).

Jangir, P. Non-dominated sorting moth flame optimizer: A novel multi-objective optimization algorithm for solving engineering design problems. Eng. Technol. Open Access J. 2(1), 17–31. https://doi.org/10.19080/ETOAJ.2018.02.555579 (2018).

Jangir, P. & Jangir, N. Non-dominated sorting whale optimization algorithm. Glob. J. Res. Eng. 17(4), 15–42 (2017).

Jangir, P. ‘MONSDA:-A novel multi-objective non-dominated sorting dragonfly algorithm’. glob. J. Res. Eng. F Electr. Electron. Eng. 20, 28–52 (2020).

Jiao, K., Chen, J., Xin, B. & Li, L. A reference vector based multiobjective evolutionary algorithm with Q-learning for operator adaptation. Swarm Evolut. Comput. 76, 101225. https://doi.org/10.1016/j.swevo.2022.101225 (2023).

Li, C., Deng, L., Gong, W., & Qiao, L. A many-objective evolutionary algorithm based on hybrid dynamic decomposition IEEE Congress on Evolutionary Computation (CEC), 2023, 1–8 (IEEE Publications, 2023). https://doi.org/10.1109/CEC53210.2023.10254128.

Pang, L. M., Ishibuchi, H. & Shang, K. Use of two penalty values in multiobjective evolutionary algorithm based on decomposition. IEEE Trans. Cybern. 53(11), 7174–7186. https://doi.org/10.1109/TCYB.2022.3182167 (2023).

Abdel-Basset, M., El-Shahat, D., Jameel, M. & Abouhawwash, M. Exponential distribution optimizer (EDO): A novel math-inspired algorithm for global optimization and engineering problems. Artif. Intell. Rev. 20, 1–72. https://doi.org/10.1016/j.knosys.2022.110248 (2023).

Zitzler, E., Deb, K. & Thiele, L. Comparison of multiobjective evolutionary algorithms: Empirical results. Evol. Comput. 8(2), 173–195. https://doi.org/10.1162/106365600568202 (2000).

Deb, K., Thiele, L., Laumanns, M. & Zitzler, E. Scalable test problems for evolutionary multiobjective optimization. In Evolutionary Multiobjective Optimization 105–145 (Springer, 2005). https://doi.org/10.1007/1-84628-137-7_6.

Binh, T. T., & Korn, U. MOBES: A multiobjective evolution strategy for constrained optimization problems. In The Third International Conference on Genetic Algorithms (Mendel 97), 27 (1997).

Osyczka, A. & Kundu, S. A new method to solve generalized multicriteria optimization problems using the simple genetic algorithm. Struct. Optim. 10(2), 94–99. https://doi.org/10.1007/BF01743536 (1995).

Branke, J., Kaußler, T. & Schmeck, H. Guidance in evolutionary multi-objective optimization. Adv. Eng. Softw. 32(6), 499–507. https://doi.org/10.1016/S0965-9978(00)00110-1 (2001).

De la Hoz, E., de la Hoz, E., Ortiz, A., Ortega, J. & Martínez-Álvarez, A. Feature selection by multi-objective optimisation: Application to network anomaly detection by hierarchical self-organising maps. Knowl. Based Syst. 71, 322–338. https://doi.org/10.1016/j.knosys.2014.08.013 (2014).

Martínez-Álvarez, A., Cuenca-Asensi, S., Ortiz, A., Calvo-Zaragoza, J. & VivasTejuelo, L. A. V. Tuning compilations by multi-objective optimization: Application to apache web server. Appl. Soft Comput. 29, 461–470. https://doi.org/10.1016/j.asoc.2015.01.029 (2015).

Wang, G. G. & Tan, Y. Improving metaheuristic algorithms with information feedback models. IEEE Trans. Cybern. 49(2), 542–555. https://doi.org/10.1109/TCYB.2017.2780274 (2019).

Author information

Authors and Affiliations

Contributions

Conceptualization, K.K., S.B.P., P.J.; formal analysis, K.K., J.V.N.R., L.C., S.B.P., P.J.; investigation, K.K., J.V.N.R., L.C., S.B.P., P.J.; methodology, K.K., J.V.N.R., L.C., S.B.P., P.J., L.A.; software, K.K., S.B.P., P.J.; WRITING—original draft, J.V.N.R., S.B.P., P.J.; writing—review and editing, K.K., L.C., P.J., L.A.; all authors have read and agreed to the published version of the manuscript.

Corresponding author

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kalita, K., Ramesh, J.V.N., Cepova, L. et al. Multi-objective exponential distribution optimizer (MOEDO): a novel math-inspired multi-objective algorithm for global optimization and real-world engineering design problems. Sci Rep 14, 1816 (2024). https://doi.org/10.1038/s41598-024-52083-7

Received:

Accepted:

Published:

DOI: https://doi.org/10.1038/s41598-024-52083-7

- Springer Nature Limited