Abstract

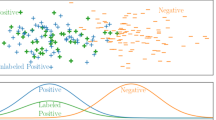

Decision trees based on information theory are a useful paradigm for learning from examples. However, in some real-world applications, known information-theoretic methods frequently generate non-monotonic decision trees, in which objects with better attribute values are sometimes assigned to lower classes than objects with inferior values. This property is undesirable in many application domains, such as credit scoring and insurance premium determination, where the monotonicity of the resulting classifications is important. This article proposes an attribute-selection metric that takes both error and monotonicity into account while building decision trees. Experiments show that the metric can significantly reduce the degree of non-monotonicity of decision trees without sacrificing their inductive accuracy.
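The abstract defines non-monotonicity informally: an object whose attribute values are all at least as good as another's is nonetheless placed in a lower class. The following is a minimal sketch of one way to count such violations in a labeled set of objects. It assumes that every attribute is ordered so that larger values are better and that classes are ordinal integers. The helper names (`dominates`, `non_monotonic_pairs`, `non_monotonicity_degree`) and the normalization by the total number of object pairs are illustrative assumptions, not the metric defined in the paper. To assess a trained tree rather than raw data, one would pass the tree's predicted classes as `classes`.

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if every attribute value of `a` is at least as good as in `b`.

    Assumption: larger values are better on every attribute.
    """
    return all(x >= y for x, y in zip(a, b))


def non_monotonic_pairs(
    objects: Sequence[Sequence[float]], classes: Sequence[int]
) -> List[Tuple[int, int]]:
    """Return index pairs (i, j), i < j, that violate monotonicity.

    A pair violates monotonicity when one object dominates the other
    but is assigned a strictly lower ordinal class. Identical attribute
    vectors with different classes are counted once.
    """
    violations = []
    for i, j in combinations(range(len(objects)), 2):
        a, b = objects[i], objects[j]
        if (dominates(a, b) and classes[i] < classes[j]) or (
            dominates(b, a) and classes[j] < classes[i]
        ):
            violations.append((i, j))
    return violations


def non_monotonicity_degree(
    objects: Sequence[Sequence[float]], classes: Sequence[int]
) -> float:
    """Fraction of all unordered object pairs that are non-monotonic.

    Hypothetical normalization chosen for illustration only.
    """
    n = len(objects)
    total = n * (n - 1) // 2
    if total == 0:
        return 0.0
    return len(non_monotonic_pairs(objects, classes)) / total


if __name__ == "__main__":
    # Hypothetical credit-scoring data: (income level, years employed),
    # classes 0 (reject) < 1 (review) < 2 (accept).
    objects = [(3, 5), (2, 4), (1, 1), (3, 6)]
    classes = [1, 2, 0, 2]
    print(non_monotonic_pairs(objects, classes))  # → [(0, 1)]
    print(non_monotonicity_degree(objects, classes))  # 1 of 6 pairs
```

In the usage data, applicant 0 dominates applicant 1 on both attributes yet receives a lower class, so that is the only violating pair among the six possible pairs.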

Cite this article

Ben-David, A. Monotonicity Maintenance in Information-Theoretic Machine Learning Algorithms. Machine Learning 19, 29–43 (1995). https://doi.org/10.1023/A:1022655006810