Abstract

Software Defect Detection (SDD) has always been critical to the development life cycle. A stable defect detection system can not only alleviate the workload of software testers but also enhance the overall efficiency of software development. Researchers have recently proposed various artificial intelligence-based SDD methods and achieved significant advancements. However, these methods still have limitations in reliability and usability. Therefore, we introduce MSDD-(IA)3, a novel framework that leverages the pre-trained CodeT5+ and (IA)3 for parameter-efficient multi-classification SDD. The framework builds a detection model on the pre-trained CodeT5+ to generate code representations while capturing defect-prone features. Considering the high overhead of pre-trained LLMs, we inject (IA)3 vectors into specific layers and update only these injected parameters to reduce the training cost. Furthermore, leveraging the properties of the pre-trained CodeT5+, we design a novel feature sequence that enriches the input data by combining source code with Natural Language (NL)-based expert metrics. Our experimental results on 64K real-world Python snippets show that MSDD-(IA)3 outperforms state-of-the-art SDD methods, including PM2-CNN, in terms of F1-weighted, Recall-weighted, Precision-weighted, and the Matthews Correlation Coefficient. Notably, the number of trainable parameters in MSDD-(IA)3 is only 0.04% of that of the original CodeT5+. Our experimental data and code are available at (https://gitee.com/wxyzjp123/msdd-ia3/).


1 Introduction

Software Defect Detection (SDD) is an essential but often overlooked part of the development lifecycle. Even a minor defect can impair software functionality, reduce system stability, or crash the system, resulting in a negative user experience [1]. Traditionally, SDD has been a tedious and time-consuming process that demands significant time and effort from developers and quality assurance teams. Therefore, achieving intelligent SDD has long been a research focus in software engineering.

Intelligent SDD methods use Machine Learning (ML) and Deep Learning (DL) techniques to capture defect-prone features in historical software and then predict the presence of defects in forthcoming releases. In early research on SDD, researchers manually collected expert metrics to construct linear classifiers that detect defects within programs [2,3,4]. This phase focused on extracting effective expert metrics to capture defect-prone features. However, expert metrics reflect only the structural features of a program and struggle to capture the semantic features of the source code [5]. With the development of DL technology, researchers began to use DL-based representation learning methods to learn the rich semantic features of source code [5,6,7,8]. Combining these semantic features with expert metrics further enhanced the effectiveness of SDD methods [6, 9].

However, the training of these DL-based methods is limited to project-specific datasets [10, 11]. With such limited training data, their models may generate suboptimal representations of the source code, leading to inadequate performance. As pre-trained Large Language Models (LLMs) have proven effective in various code-related tasks, researchers have attempted to address this deficiency with them. Having undergone extensive pre-training tasks on large corpora, pre-trained LLMs generate better code representations for the SDD task. Fu et al. [10] proposed LineVul, which leverages the pre-trained LLM CodeBERT to generate more meaningful code representations and significantly outperforms traditional DL-based methods. Similarly, Liu et al. [12] proposed PM2-CNN, which feeds source code combined with descriptive text sequences into the pre-trained UniXcoder and surpasses the performance of LineVul. Although PM2-CNN achieves satisfactory results compared with previous SDD methods, it still has the following limitations regarding its reliability and usability in practical applications:

Limitation 1: PM2-CNN May Have Suboptimal Capabilities in Understanding the Code Context. The UniXcoder used in PM2-CNN supports all pre-training tasks with a single encoder, which exposes the model to inter-task interference [13]. This interference may impair the ability of PM2-CNN to understand the code context.

Limitation 2: PM2-CNN is Coarse-Grained in Discerning Defect Types. PM2-CNN supports only binary defect detection, which predicts whether a program contains defects. However, binary predictions offer limited help to software testing engineers because they lack specific details about the defects, especially their types [14].

Limitation 3: PM2-CNN Overlooks the Expensive Resources Required for the Full Fine-Tuning of LLMs. UniXcoder has an intricate network structure, and full fine-tuning updates all of its parameters, which imposes high hardware resource demands on software testing teams in practical applications.

To address these shortcomings of PM2-CNN, we introduce MSDD-(IA)3, a parameter-efficient multi-classification SDD framework based on pre-trained LLMs. First, MSDD-(IA)3 builds a detection model on the pre-trained CodeT5+ to generate higher-quality code representations of programs. Unlike the models used in previous SDD methods, CodeT5+ employs a flexible encoder–decoder architecture during pre-training, whose component modules can be dynamically combined to avoid inter-task interference [13]. Second, MSDD-(IA)3 employs a novel feature sequence that enriches the input data by combining source code with Natural Language (NL)-based expert metrics. Third, to the best of our knowledge, MSDD-(IA)3 is the first SDD framework for multi-classification defect detection in Python software. Specifically, the framework can detect six common types of defects in Python development: AttributeError, TypeError, ValueError, KeyError, RuntimeError, and IndexError. Finally, considering the high training cost of previous pre-trained LLM-based SDD methods, MSDD-(IA)3 adopts the (IA)3 strategy, which rescales the activations of particular layers, to reduce training cost and optimize the allocation of testing resources [15].

We select 64K Python snippets from GitHub repositories as the experimental dataset to evaluate the performance of MSDD-(IA)3. The experimental results demonstrate the superiority of MSDD-(IA)3 in terms of reliability and usability. In summary, the main contributions of this paper are as follows:

-

This paper introduces MSDD-(IA)3, a novel framework leveraging pre-trained CodeT5+ for multi-classification SDD in Python projects.

-

To enrich the semantic and structural features provided to the model, MSDD-(IA)3 combines the source code with NL-based expert metrics as the input sequence.

-

By introducing the (IA)3 strategy during the training phase, MSDD-(IA)3 achieves superior performance with lower training overhead.

-

Extensive experiments on 64K real-world Python snippets demonstrate that MSDD-(IA)3 outperforms state-of-the-art SDD methods across four evaluation metrics. Additionally, MSDD-(IA)3 has only 0.04% trainable parameters and reduces memory overhead by 19%.

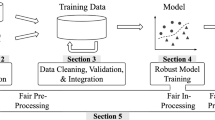

The remainder of this paper is structured as follows: Section 2 reviews related work on SDD. Section 3 details the overall framework of MSDD-(IA)3. Section 4 describes the experimental setup. Section 5 summarizes the experimental results. Section 6 concludes our research and explores future research directions.

2 Related Work

Research on SDD primarily falls into three phases: expert metrics-based SDD, DL-based SDD, and pre-trained LLMs-based SDD. This section provides a comprehensive review of the literature for each phase.

2.1 Expert Metrics-Based Software Defect Detection

Early SDD research focused on designing practical and easily collectable expert metrics. Zhang et al. [2] constructed Bayesian networks for defect detection by collecting metrics such as effort, test items, and residual faults during the software development lifecycle. Okutan et al. [3] built Bayesian networks to analyze the effects of different expert metrics on detection results. Zhang et al. [4] proposed a novel expert metric called cross entropy based on the semantic features of the source code. Although expert metrics can effectively reflect the structural features of a program, expert metrics-based SDD methods perform less satisfactorily because they cannot exploit the rich semantic features of the source code [5].

2.2 Deep Learning-Based Software Defect Detection

With the development of DL technology, numerous studies began to exploit DL to capture semantic and structural features from abstract representations of the source code, mainly the Abstract Syntax Tree (AST), Control Flow Graph (CFG), and Code Property Graph (CPG). Wang et al. [5] converted ASTs into token sequences and built a Deep Belief Network (DBN) to capture the semantic features of the source code, outperforming expert metrics-based SDD methods. Building on Wang's work, several researchers used Convolutional Neural Networks (CNNs) to capture local semantic features [6, 7, 16, 17]. Deng et al. [8] employed Long Short-Term Memory (LSTM) networks to extract contextual semantic features of the source code. Wang et al. [18] used GloVe to convert AST sequences into embedding vectors and built a dual-channel LSTM to combine semantic features with expert metrics. Subsequent research found Graph Neural Networks (GNNs) to be more effective, as GNNs can efficiently capture dependencies in code contexts [19,20,21]. However, these methods are trained only on specific datasets, and the limited size of those training sets causes these DL-based models to generate suboptimal code representations [10, 11].

2.3 Pre-trained LLMs-Based Software Defect Detection

Recently, pre-trained LLMs have achieved tremendous success in Natural Language Processing (NLP). LLMs follow the pre-training/fine-tuning paradigm. During the pre-training phase, LLMs perform various pre-training tasks on large amounts of unlabeled data to learn high-quality vector representations. Then, during the fine-tuning phase, they perform supervised learning on labeled data to adapt to specific downstream tasks [13, 22,23,24].

CodeBERT is a bimodal pre-trained LLM that supports both NL and Programming Language (PL). During pre-training, CodeBERT performs two tasks, masked language modeling and replaced token detection, to learn the connections between NL and PL [23]. CodeBERT has been widely applied to code-related tasks such as natural language code search and code documentation generation. However, the lack of decoder-related pre-training tasks limits the ability of CodeBERT to understand the semantic features of code contexts [25]. UniXcoder overcomes this limitation with a unified cross-modal pre-trained model for code representation. UniXcoder switches among different modes (encoder-only, decoder-only, and encoder–decoder) by manipulating mask matrices. Furthermore, UniXcoder generates better code representations by leveraging cross-modal content such as ASTs and code comments [24]. However, UniXcoder uses a single encoder for all pre-training tasks, so interference among tasks may degrade its performance [13]. To avoid such interference, Wang et al. [13] proposed CodeT5+, a model based on an encoder–decoder architecture. CodeT5+ flexibly combines different component modules through two stages of distinct pre-training tasks to avoid interference among tasks, and it achieves state-of-the-art performance on multiple code understanding and code generation tasks.

In code representation for SDD, LineVul uses the pre-trained CodeBERT to learn feature representations of the source code [10]. PM2-CNN combines the source code with descriptive documents as input features for the pre-trained UniXcoder [12], and its results surpass those of LineVul and other DL-based SDD methods across multiple evaluation metrics. However, because of the limitations of UniXcoder in the pre-training phase, the code representations generated by PM2-CNN may not be optimal. Therefore, we design a detection model based on the pre-trained CodeT5+ to capture semantic features and generate code representations. Moreover, collecting accurate descriptive documents for each program is challenging, as the ablation study of PM2-CNN points out [12]. Thus, instead of descriptive documents, we use expert metrics, which are easier to collect.

3 Methodology

To address the limitations of existing pre-trained LLM-based SDD methods in code representation and their expensive training costs, this paper introduces MSDD-(IA)3, a parameter-efficient multi-classification SDD framework based on pre-trained CodeT5+ and (IA)3. MSDD-(IA)3 builds a detection model on the pre-trained CodeT5+ to extract deep features from programs and applies the (IA)3 strategy during training to reduce training expenses. The overall framework of MSDD-(IA)3 is shown in Fig. 1. Specifically, MSDD-(IA)3 comprises the following four modules:

-

Code Pre-processing Module: This module extracts sixteen expert metrics from each Python snippet and converts them into NL text, while retaining the original source code.

-

Tokenization Module: To convert the source code and the corresponding NL-based expert metrics into model input, this module uses a Byte-level Byte Pair Encoding (BBPE) subword tokenizer to split the input features into tokens based on subword frequency and then transforms the tokens into numeric vectors.

-

Deep Feature Generation Module: To overcome the inadequate code representations of previous methods, this module uses the pre-trained CodeT5+ to capture deep features of the program. Because CodeT5+ is expensive to train, the (IA)3 strategy is introduced into the multi-head attention layer and the Feed-Forward Network (FFN) to reduce the number of trainable parameters.

-

Prediction Module: This module builds a Multilayer Perceptron (MLP) [26] as the classifier that performs multi-classification on the learned features, computes the loss from the predicted results, and updates the model parameters.

The flow through these modules is shown in Fig. 2.

3.1 Code Pre-processing Module

In this module, we use the Python tool Radon (Footnote 1) to extract sixteen expert metrics from each program and fill their values into an NL text template.

The expert metrics used in this experiment have been validated by prior SDD research. Specifically, they comprise six raw metrics, eight Halstead metrics, cyclomatic complexity, and the Maintainability Index (MI), as follows:

-

Raw Metrics: Raw metrics assess the size and complexity of a program [27]. In this research, we consider six raw metrics: Lines of Code (LOC), Logical Lines of Code (LLOC), Source Lines of Code (SLOC), comments, multi (lines of multi-line strings), and single comments. Table 1 presents details of these metrics.

-

Halstead Metrics: Halstead metrics evaluate the maintainability, testing effort, and overall quality of a program by quantifying the operators and operands in the source code [28]. This research considers eight Halstead metrics: program vocabulary, program length, calculated program length, program volume, program difficulty, effort, time, and bugs. Table 2 presents details of these metrics.

-

Cyclomatic Complexity: Cyclomatic complexity measures the number of linearly independent paths through the control flow of a program [29]. A higher cyclomatic complexity indicates a more complex program, which is harder for software developers to maintain and more susceptible to defects.

-

Maintainability Index: MI assesses how easy a program is to maintain, that is, to support and change [30]. MI combines key factors such as cyclomatic complexity, SLOC, and program volume. In our research, MI is calculated as follows:

$$MI = \max \left( 0,\ 100 \times \frac{171 - 5.2 \ln V - 0.23 G - 16.2 \ln L + 50 \sin \left( \sqrt{2.4 \times C}\right)}{171}\right) \tag{1}$$

where \(V\) denotes the program volume, \(G\) the cyclomatic complexity, \(L\) the SLOC, and \(C\) the percentage of comment lines. A higher MI value indicates that a program is easier to maintain.
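Eq. (1) is easy to check numerically. The sketch below transcribes the formula as printed, with C passed as a percentage and the sine argument taken in radians; `maintainability_index` is a hypothetical helper, and Radon's own implementation may use slightly different constants or unit conversions, so its output is not guaranteed to match this function exactly.

```python
import math


def maintainability_index(volume: float, complexity: float,
                          sloc: int, comment_pct: float) -> float:
    """Maintainability Index computed literally from Eq. (1).

    volume      -- Halstead program volume V (must be > 0)
    complexity  -- cyclomatic complexity G
    sloc        -- source lines of code L (must be > 0)
    comment_pct -- percentage of comment lines C, e.g. 25.0 for 25 %
    """
    if volume <= 0 or sloc <= 0:
        raise ValueError("V and L must be positive because Eq. (1) takes their logarithms")
    raw = (171
           - 5.2 * math.log(volume)
           - 0.23 * complexity
           - 16.2 * math.log(sloc)
           + 50 * math.sin(math.sqrt(2.4 * comment_pct)))
    # Rescale by 100/171 and clamp negative scores to 0, as in Eq. (1).
    return max(0.0, 100 * raw / 171)


print(round(maintainability_index(volume=100, complexity=1, sloc=10, comment_pct=0), 2))  # 64.05
```

Large, branchy programs drive the raw score below zero, which the `max(0, ...)` term clamps to an MI of 0.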

In contrast to previous methods that rely on the numerical values of expert metrics, MSDD-(IA)3 transforms these extracted expert metrics into descriptive text paragraphs, as illustrated in Fig. 3. Given the robust NL understanding capabilities of pre-trained CodeT5+, we use these concise paragraphs to enable the detection model to comprehend the value of each expert metric.
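The exact wording of the template in Fig. 3 is not reproduced in the text, so the following is only a sketch of the idea under an assumed template: each metric becomes one short English sentence, and the sentences are joined into a paragraph that can be tokenized alongside the code. `metrics_to_text` and the sentence pattern are hypothetical.

```python
from typing import Dict, Union


def metrics_to_text(metrics: Dict[str, Union[int, float]]) -> str:
    """Render expert metrics as short English sentences (hypothetical template)."""
    sentences = []
    for name, value in metrics.items():
        # Integer counts are printed as-is; real-valued metrics are rounded to 2 decimals.
        shown = f"{value:.2f}" if isinstance(value, float) else str(value)
        sentences.append(f"The value of {name} is {shown}.")
    return " ".join(sentences)


print(metrics_to_text({"lines of code": 12, "maintainability index": 71.234}))
# The value of lines of code is 12. The value of maintainability index is 71.23.
```

Rounding real-valued metrics keeps the paragraph short, which matters because the metric text shares the 512-token budget with the source code.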

3.2 Tokenization Module

This module employs a BBPE subword tokenizer to convert the source code and NL-based expert metrics into model input.

Constructing a BBPE tokenizer involves three steps: (1) An initial vocabulary containing all 256 single-byte values is constructed. (2) The most frequently occurring pair of adjacent subwords is identified and merged into a new subword unit, which is added to the vocabulary; this process is repeated until the vocabulary reaches its maximum size or no further merges are possible. (3) The resulting vocabulary is used to tokenize new inputs [31].
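The three steps can be illustrated with a toy trainer. This is a simplified sketch, not the CodeT5+ tokenizer: it omits regex pre-tokenization, the byte-to-Unicode remapping used by production byte-level tokenizers, and special tokens; `train_byte_bpe` and `encode` are hypothetical names. It does show why byte-level BPE never produces unknown tokens: any character not covered by a learned merge simply decomposes into its UTF-8 bytes.

```python
from collections import Counter
from typing import List, Tuple

Pair = Tuple[bytes, bytes]


def _apply_merge(symbols: Tuple[bytes, ...], pair: Pair) -> Tuple[bytes, ...]:
    """Replace every adjacent occurrence of `pair` with the merged symbol."""
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)


def train_byte_bpe(words: List[str], num_merges: int) -> List[Pair]:
    # Step 1: start from single bytes, i.e. the 256-symbol base vocabulary.
    corpus = Counter(tuple(bytes([b]) for b in w.encode("utf-8")) for w in words)
    merges: List[Pair] = []
    for _ in range(num_merges):
        # Step 2: count adjacent pairs (weighted by word frequency), merge the most frequent.
        pairs: Counter = Counter()
        for symbols, freq in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += freq
        if not pairs:
            break  # every word is already a single symbol
        best = max(pairs, key=pairs.get)
        merges.append(best)
        merged: Counter = Counter()
        for symbols, freq in corpus.items():
            merged[_apply_merge(symbols, best)] += freq
        corpus = merged
    return merges


def encode(word: str, merges: List[Pair]) -> List[bytes]:
    # Step 3: tokenize new input by replaying the learned merges in order.
    symbols = tuple(bytes([b]) for b in word.encode("utf-8"))
    for pair in merges:
        symbols = _apply_merge(symbols, pair)
    return list(symbols)


merges = train_byte_bpe(["low", "low", "lower"], num_merges=2)
print(encode("lowest", merges))  # [b'low', b'e', b's', b't']
print(encode("é", merges))       # [b'\xc3', b'\xa9']: an unseen character falls back to bytes
```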

The BBPE tokenizer can split any new word into known byte sequences, which preserves the contextual coherence of the input features and prevents the information loss caused by unknown words during training. MSDD-(IA)3 uses the pre-trained BBPE tokenizer released with CodeT5+. The tokenizer was trained on an expanded version of the CodeSearchNet dataset that includes nine PLs: Python, Java, Ruby, JavaScript, Go, PHP, C, C++, and C#. The resulting vocabulary contains 32,000 subword units [25].

After tokenization, the NL-based expert metrics are combined with the source code to form a feature sequence. In addition, three special tokens are added to the sequence to help the model distinguish among its components. For instance, the feature sequence of program \(i\) can be expressed as follows:

$$Input_{i} = \left[ CLS,\ M_{i},\ SEP,\ S_{i},\ EOS \right]$$

where \(M_i\) and \(S_i\) denote the tokenized NL-based expert metrics and source code of program \(i\), respectively, the \(CLS\) token marks the beginning of the feature sequence, the \(SEP\) token separates the NL-based expert metrics from the source code, and the \(EOS\) token marks the end of the sequence. The length of each feature sequence is set to 512 tokens. Sequences shorter than 512 tokens are padded with the \(PAD\) token; for longer sequences, we truncate the source code.
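The layout and the padding/truncation rule can be sketched as follows. `build_feature_sequence` is a hypothetical helper and the token IDs are placeholders; in practice the IDs would come from the CodeT5+ tokenizer, whose own special tokens may be named differently from CLS, SEP, and EOS. As the text specifies, only the source-code segment is truncated, so the metric paragraph always survives intact.

```python
from typing import List


def build_feature_sequence(metric_ids: List[int], code_ids: List[int], *,
                           cls_id: int, sep_id: int, eos_id: int, pad_id: int,
                           max_len: int = 512) -> List[int]:
    """Assemble [CLS] metrics [SEP] code [EOS], then pad or truncate to max_len.

    Only the source-code segment is truncated; the metric text is kept whole.
    """
    code_budget = max_len - len(metric_ids) - 3   # 3 = CLS + SEP + EOS
    if code_budget < 0:
        raise ValueError("the metric text alone does not fit in max_len")
    seq = [cls_id, *metric_ids, sep_id, *code_ids[:code_budget], eos_id]
    return seq + [pad_id] * (max_len - len(seq))   # right-pad short sequences


# Placeholder IDs for illustration only.
ids = build_feature_sequence([10, 11], list(range(100, 110)),
                             cls_id=1, sep_id=2, eos_id=3, pad_id=0, max_len=8)
print(ids)  # [1, 10, 11, 2, 100, 101, 102, 3]
```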

3.3 Deep Feature Generation Module

The first step in this module generates an embedding vector for each token in the feature sequence. The pre-trained CodeT5+ then extracts deep features from these embedding vectors. Because training CodeT5+ is expensive, (IA)3 vectors are injected into both the multi-head attention layer and the FFN to improve efficiency.

CodeT5+ (the 110M version) consists of twelve transformer encoder layers. Transformer encoders excel at capturing the contextual information of inputs, but their intricate computations incur high training overhead [32]. Each transformer encoder consists of a multi-head attention layer, an FFN, and two residual connection and layer normalization (Add & Norm) blocks. The architecture of the transformer encoder with (IA)3 is shown in Fig. 4.

3.3.1 Word and Position Embeddings

The deep features of each token depend heavily on its contextual relationships (i.e., its relationships with surrounding tokens) and on its position within the feature sequence. Therefore, adding positional information to each token at the embedding layer is crucial. This step generates two vectors for each token: a word encoding vector and a positional encoding vector. Word encoding vectors are obtained by mapping each token into a vector space of fixed dimensionality, while positional encoding vectors are calculated as follows:

$$PE_{(pos, 2i)} = \sin\left(\frac{pos}{10000^{2i/d_{model}}}\right), \quad PE_{(pos, 2i+1)} = \cos\left(\frac{pos}{10000^{2i/d_{model}}}\right)$$

where \(pos\) is the position of a token within the feature sequence, \(i\) indexes the embedding dimension, and \(d_{model}\) is the embedding dimensionality. The positional encoding vector is added to the word encoding vector to form the final embedding vector that is input to the encoder.
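A minimal NumPy sketch of the sinusoidal positional encoding and its addition to the word encodings follows; `sinusoidal_positions` is a hypothetical helper and the word vectors are random stand-ins. One caveat: T5-family checkpoints, which include CodeT5+, represent position through learned relative position biases inside attention rather than through added sinusoidal vectors, so this sketch illustrates the formulation described in this subsection rather than the checkpoint's internals.

```python
import numpy as np


def sinusoidal_positions(seq_len: int, d_model: int) -> np.ndarray:
    """(seq_len, d_model) sinusoidal encodings: sin on even dims, cos on odd dims."""
    if d_model % 2:
        raise ValueError("d_model must be even")
    pos = np.arange(seq_len)[:, None]                   # token positions, shape (seq_len, 1)
    two_i = np.arange(0, d_model, 2)[None, :]           # even dimension indices 2i
    angles = pos / np.power(10000.0, two_i / d_model)   # shape (seq_len, d_model / 2)
    pe = np.zeros((seq_len, d_model))
    pe[:, 0::2] = np.sin(angles)
    pe[:, 1::2] = np.cos(angles)
    return pe


# Final embedding = word encoding + positional encoding (random stand-in word vectors).
word_vectors = np.random.default_rng(0).normal(size=(512, 768))
embeddings = word_vectors + sinusoidal_positions(512, 768)
```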

3.3.2 Multi-head Attention Layer with (IA)3

The multi-head attention layer generates distinct feature representations for each token using multiple independent attention heads in parallel, enabling the model to capture richer contextual information.

Each attention head uses a self-attention layer to assign attention weights to tokens. The self-attention layer introduces three vectors: Query (Q), Key (K), and Value (V). These vectors are obtained by multiplying the embedding vector \( X \) with three different weight matrices, \( W^Q \), \( W^K \), and \( W^V \). In the original self-attention layer, these weight matrices are initialized and then continuously optimized during training. The computation is described as follows:

$$Q_{\alpha} = X W^{Q}_{\alpha}, \quad K_{\alpha} = X W^{K}_{\alpha}, \quad V_{\alpha} = X W^{V}_{\alpha}$$

$$MultiHead(X) = Concat\left(head_{1}, \ldots, head_{h}\right) W^{o}$$

where \( head_{\alpha} \) is the output of the \(\alpha\)-th attention head computed from \( Q_{\alpha} \), \( K_{\alpha} \), and \( V_{\alpha} \), \( h \) is the number of attention heads, \( W^o \) denotes the linear transformation matrix applied to the concatenated outputs of all attention heads, and \(\alpha \) represents the index of the attention head.

After obtaining the Q, K, and V vectors, the relevance between two tokens is measured by the dot product of their Q and K vectors. First, these relevance scores are scaled by dividing by \(\sqrt{d}\) (where \(d\) is the dimension of the K vector) to keep them from growing large as the dimension increases. Then, the scaled scores are normalized with the Softmax function to produce the attention weight matrix. Finally, weighted V vectors are obtained by multiplying the V vectors by the corresponding attention weights. The original self-attention layer is computed as follows:

$$Attention(Q, K, V) = Softmax\left(\frac{QK^{T}}{\sqrt{d}}\right)V$$
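The projection, head splitting, scaled dot-product, and concatenation steps described in this subsection can be traced in a compact NumPy sketch. It is a generic illustration with random weights and hypothetical function names, not the CodeT5+ implementation (which, for example, adds relative position biases to the scores).

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)   # shift for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads: int):
    """Scaled dot-product attention over `num_heads` heads.

    X: (n, d_model); W_q, W_k, W_v, W_o: (d_model, d_model).
    """
    n, d_model = X.shape
    d = d_model // num_heads                        # per-head dimension

    def split_heads(M):                             # (n, d_model) -> (heads, n, d)
        return M.reshape(n, num_heads, d).transpose(1, 0, 2)

    Q, K, V = (split_heads(X @ W) for W in (W_q, W_k, W_v))
    weights = softmax(Q @ K.transpose(0, 2, 1) / np.sqrt(d))   # (heads, n, n), rows sum to 1
    heads = weights @ V                                         # weighted V vectors per head
    concat = heads.transpose(1, 0, 2).reshape(n, d_model)       # concatenate the heads
    return concat @ W_o


rng = np.random.default_rng(0)
X = rng.normal(size=(5, 8))                         # 5 tokens, d_model = 8
W_q, W_k, W_v, W_o = (rng.normal(size=(8, 8)) for _ in range(4))
out = multi_head_attention(X, W_q, W_k, W_v, W_o, num_heads=2)   # shape (5, 8)
```

Splitting one large projection into heads, rather than keeping separate per-head matrices, is equivalent and lets all heads run as a single batched matrix product.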

To reduce trainable parameters and training overhead, we inject (IA)3 vectors into the self-attention layer. (IA)3 is a Parameter-Efficient Fine-Tuning (PEFT) strategy that uses learned vectors to rescale the inner activations of specific layers. These learned vectors are the only parameters updated in that layer during training, while all other original parameters remain frozen. The dimension of each learned vector is determined by the size of the weight matrices in the target layer. In this study, we inject an \( L_{q} \) vector into the self-attention layer to rescale the Q vector, and only \( L_{q} \) is updated during training. The self-attention layer with (IA)3 is computed as follows:

$$Attention(Q, K, V) = Softmax\left(\frac{(L_{q} \odot Q)K^{T}}{\sqrt{d}}\right)V$$

where \(\odot \) denotes element-wise multiplication. During training, Q is rescaled through element-wise multiplication with the \( L_{q} \) vector, while \( W^Q \) itself stays frozen.
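The effect of \( L_{q} \) can be seen in a single-head NumPy sketch. The function name and sizes are hypothetical, and this is not the PEFT library's code. The vector is initialized to ones, as in the original (IA)3 method, so training starts from exactly the frozen model's behavior.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)   # shift for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def ia3_self_attention(X, W_q, W_k, W_v, l_q):
    """Single-head self-attention whose query is rescaled by an (IA)3 vector.

    W_q, W_k, W_v are frozen; l_q, of shape (d,), is the only trainable tensor.
    """
    Q = (X @ W_q) * l_q              # element-wise rescaling, broadcast over all tokens
    K, V = X @ W_k, X @ W_v
    d = K.shape[-1]
    return softmax(Q @ K.T / np.sqrt(d)) @ V


rng = np.random.default_rng(0)
X = rng.normal(size=(4, 8))                        # 4 tokens, d = 8
W_q, W_k, W_v = (rng.normal(size=(8, 8)) for _ in range(3))
l_q = np.ones(8)   # ones-initialization: the frozen model's output is reproduced at step 0
out = ia3_self_attention(X, W_q, W_k, W_v, l_q)
```

Because \((X W^Q) \odot L_q\) equals \(X\,(W^Q \operatorname{diag}(L_q))\), the learned vector can be folded into \(W^Q\) after training, so inference incurs no extra cost.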

3.3.3 Feed-Forward Network with (IA)3

The FFN applies a nonlinear transformation to the input vector to enhance the expressive ability of the model. Specifically, the FFN comprises two fully connected layers and an activation function. The first fully connected layer maps the input vector to a higher-dimensional space, and the second projects it back to the original dimension. The Rectified Linear Unit (ReLU) function is applied to the output of the first fully connected layer as the nonlinear transformation. The original computation is described as follows:

$$FFN(x) = ReLU(xW_{1})W_{2}$$

where \(x\) denotes the input vector, \(W_1\) represents the weight matrix of the first fully connected layer, and \(W_2\) represents the weight matrix of the second fully connected layer.

To reduce trainable parameters and computational overhead, we introduce an \( L_{ff} \) vector after the nonlinear transformation. During training, only \( L_{ff} \) is learned to rescale the activations, while the other weight parameters are frozen. The FFN with (IA)3 is computed as follows:

$$FFN(x) = \left(L_{ff} \odot ReLU(xW_{1})\right)W_{2}$$
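The sketch below applies \( L_{ff} \) to the ReLU activations before the down-projection, as described in the text, and adds a small helper that counts (IA)3 parameters if every encoder layer carries one \( L_{q} \) (length d_model) and one \( L_{ff} \) (length d_ff). Both function names are hypothetical. The sizes d_model = 768 and d_ff = 3072 are assumptions (T5-base-like dimensions), not values stated in the text. Under them, the twelve layers give 12 × (768 + 3072) = 46,080 trainable values, about 0.042% of 110M, close to the 0.04% reported in the abstract. The text does not state whether the MLP head is included in that figure.

```python
import numpy as np


def ffn_ia3(x, W1, W2, l_ff):
    """(IA)3-scaled FFN: (l_ff * ReLU(x @ W1)) @ W2, with W1 and W2 frozen."""
    hidden = np.maximum(0.0, x @ W1)   # ReLU on the up-projection, shape (n, d_ff)
    return (hidden * l_ff) @ W2        # rescale each hidden unit, then project back to d_model


def ia3_param_count(num_layers: int, d_model: int, d_ff: int) -> int:
    """Trainable (IA)3 parameters if each layer has one l_q (d_model) and one l_ff (d_ff)."""
    return num_layers * (d_model + d_ff)


n = ia3_param_count(num_layers=12, d_model=768, d_ff=3072)   # assumed T5-base-like sizes
print(n, f"{100 * n / 110e6:.3f}%")   # 46080 0.042%
```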

3.3.4 Residual Connection and Layer Normalization Block

An Add & Norm block follows both the multi-head attention layer and the FFN, and each block performs two steps: a residual connection and layer normalization. This design facilitates the learning of deep features. The residual connection mitigates network degradation and vanishing gradients, while layer normalization keeps the output distribution of each hidden layer consistent to accelerate convergence. It is calculated as follows:

$$Output = LayerNorm\left(X + Sublayer(X)\right)$$

where \( X \) denotes the input vector from the preceding layer, and \( Sublayer \) represents the multi-head attention layer or the FFN.
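A NumPy sketch of the post-norm Add & Norm block as described in the text follows, with a hypothetical function name and random stand-in inputs. Note that T5-family models, CodeT5+ included, actually normalize before each sublayer with a scale-only RMS variant; this sketch follows the formulation given here.

```python
import numpy as np


def add_and_norm(X, sublayer_out, gamma, beta, eps=1e-6):
    """Post-norm Add & Norm: LayerNorm(X + Sublayer(X)) over the feature axis."""
    h = X + sublayer_out                                   # residual connection
    mean = h.mean(axis=-1, keepdims=True)
    var = h.var(axis=-1, keepdims=True)
    return gamma * (h - mean) / np.sqrt(var + eps) + beta  # learned scale and shift


rng = np.random.default_rng(0)
X = rng.normal(size=(3, 8))
sub = rng.normal(size=(3, 8))                      # stand-in for attention or FFN output
out = add_and_norm(X, sub, gamma=np.ones(8), beta=np.zeros(8))
```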

3.4 Prediction Module

In the prediction module, we construct an MLP classifier with two fully connected layers to classify code representations.

The first fully connected layer maps the code representations generated by the previous module to a feature space with 256 hidden units. The second fully connected layer transforms these features into an 8-dimensional vector, where each dimension is the logit of one class. First, the Softmax function converts these logits into a probability distribution. Next, the cross-entropy loss measures the discrepancy between the predicted distribution and the actual labels. Finally, the AdamW optimizer updates the model parameters; its decoupled handling of weight decay generally improves training. The detailed procedure of MSDD-(IA)3 is presented in Algorithm 1, and the parameter settings are provided in Table 3.
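The head and loss can be written out in NumPy to make the arithmetic explicit. In PyTorch, the same pair is usually expressed as `torch.nn.CrossEntropyLoss` applied to raw logits (it performs the log-softmax internally), combined with `torch.optim.AdamW`. Several details here are assumptions rather than statements from the text: the ReLU between the two layers, the 768-dimensional input, the weight initialization scale, and the random stand-in representations.

```python
import numpy as np


def mlp_head(h, W1, b1, W2, b2):
    """Two fully connected layers: d_model -> 256 -> 8 class logits.

    The ReLU between the two layers is an assumption; the text does not name it.
    """
    return np.maximum(0.0, h @ W1 + b1) @ W2 + b2


def softmax_cross_entropy(logits, labels):
    """Mean cross-entropy of softmax(logits) against integer labels 0..7."""
    shifted = logits - logits.max(axis=1, keepdims=True)          # numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(labels)), labels].mean()


rng = np.random.default_rng(0)
h = rng.normal(size=(4, 768))                       # stand-in code representations
W1, b1 = rng.normal(scale=0.02, size=(768, 256)), np.zeros(256)
W2, b2 = rng.normal(scale=0.02, size=(256, 8)), np.zeros(8)
logits = mlp_head(h, W1, b1, W2, b2)                # shape (4, 8)
loss = softmax_cross_entropy(logits, np.array([0, 3, 7, 1]))
probs = np.exp(logits - logits.max(axis=1, keepdims=True))
probs /= probs.sum(axis=1, keepdims=True)           # each row is a distribution over 8 labels
```

Computing the loss from log-probabilities of shifted logits, rather than taking the log of Softmax outputs, avoids overflow and `log(0)` for confident predictions.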

4 Experiment

4.1 Dataset

The experimental dataset is derived from PyTraceBugs [33] and includes 23,736 defective snippets and 41,012 clean snippets. The non-defective snippets are collected from stable projects in distinct GitHub repositories, while the defective snippets are selected from bug fixes and pull requests in various top repositories. The defects cover six common types of defects in Python development as well as other types; their distribution among the defective snippets is presented in Table 4. Each snippet is labeled according to the following rule: non-defective snippets are labeled 0, defective snippets with one of the six major defect types are labeled 1 to 6, and snippets with other defects are labeled 7. The dataset is randomly divided into training, validation, and test sets at an 8:1:1 ratio, keeping the proportion of defects consistent across the three sets.
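The labeling rule and a class-preserving 8:1:1 split can be sketched in plain Python. The assignment of the six defect types to labels 1 to 6 is an assumption (the text fixes only the range), and `label_for` and `stratified_split` are hypothetical helpers. Calling scikit-learn's `train_test_split(..., stratify=labels)` twice is a common alternative for the split.

```python
import random
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

# Order of labels 1-6 is an assumption; the text only says the six types map to 1..6.
MAJOR_TYPES = ["AttributeError", "TypeError", "ValueError",
               "KeyError", "RuntimeError", "IndexError"]


def label_for(defect_type: Optional[str]) -> int:
    """0 = clean snippet, 1-6 = one of the six major types, 7 = any other defect."""
    if defect_type is None:
        return 0
    if defect_type in MAJOR_TYPES:
        return MAJOR_TYPES.index(defect_type) + 1
    return 7


def stratified_split(labels: List[int], ratios: Tuple[float, float] = (0.8, 0.1),
                     seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Shuffle indices within each class and cut them 8:1:1 (the rest goes to test)."""
    by_class: Dict[int, List[int]] = defaultdict(list)
    for idx, y in enumerate(labels):
        by_class[y].append(idx)
    rng = random.Random(seed)
    train, val, test = [], [], []
    for idxs in by_class.values():
        rng.shuffle(idxs)
        n_train = round(len(idxs) * ratios[0])
        n_val = round(len(idxs) * ratios[1])
        train += idxs[:n_train]
        val += idxs[n_train:n_train + n_val]
        test += idxs[n_train + n_val:]
    return train, val, test
```

Splitting each class separately, rather than shuffling the whole dataset once, guarantees that rare classes such as the smaller defect types appear in the validation and test sets in the same proportion as in training.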

4.2 Development Environment

The experiments are conducted on a Linux machine with eight AMD MI210 GPUs. All experimental steps are implemented in a Python 3.8 environment and rely mainly on three Python libraries: PyTorch (version 2.1.1+rocm5.6), Transformers (version 4.35.2), and PEFT (version 0.6.2).

4.3 Evaluation Metrics

Similar to previous SDD research [10, 12, 34], we use four evaluation metrics to examine the experimental results: F1-score, Recall, Precision, and the Matthews Correlation Coefficient (MCC). Because the experimental dataset is multi-class and imbalanced, we use the weighted versions of the F1-score, Recall, and Precision instead of the default binary versions [35]. These weighted metrics compute each score per class and average the results with weights proportional to each class's size, thereby accounting for class imbalance.

In the experiment, we compute weighted metrics by tallying the True Positives (TP), False Negatives (FN), False Positives (FP), and True Negatives (TN) for each class:

-

Recall-Weighted: Recall measures the proportion of actual positive instances that the model correctly identifies. Recall-weighted is the weighted sum of the per-class Recall values, calculated as follows:

$$\text{Recall-weighted} = \sum_{i \in n} \left( \frac{Sum_i}{Sum} \right) \left( \frac{TP_i}{TP_i + FN_i} \right) \tag{13}$$

where \(i\) denotes the \(i\)-th class, \(Sum_i\) is the number of samples in the \(i\)-th class, \(Sum\) is the total number of samples, and \(n\) is the set of classes.

-

Precision-Weighted: Precision measures the proportion of positive predictions that are correct. Precision-weighted is calculated as follows:

$$\text{Precision-weighted} = \sum_{i \in n} \left( \frac{Sum_i}{Sum} \right) \left( \frac{TP_i}{TP_i + FP_i} \right) \tag{14}$$

-

F1-Weighted: The F1-score is the harmonic mean of Precision and Recall. F1-weighted is calculated as follows:

$$\text{F1-weighted} = \sum_{i \in n} \left( \frac{Sum_i}{Sum} \right) \left( \frac{2TP_i}{2TP_i + FP_i + FN_i} \right) \tag{15}$$

-

MCC: MCC considers TP, FP, FN, and TN across all classes to evaluate classifier performance, providing a reliable measure of classification performance, particularly on imbalanced datasets.

The values of F1-weighted, Recall-weighted, and Precision-weighted range from 0 to 1, while the value of MCC ranges from -1 to 1. Higher values of these metrics indicate superior performance of the method.
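The weighted metrics and a multi-class MCC can be computed directly from label lists, as in the following plain-Python sketch. `weighted_metrics` is a hypothetical helper. The MCC uses the standard multi-class generalization (the same one scikit-learn's `matthews_corrcoef` implements), and the results can be cross-checked against `precision_recall_fscore_support(..., average="weighted")`. Per-class scores with a zero denominator are set to 0 here, which is an assumed convention.

```python
import math
from collections import Counter
from typing import Dict, List


def weighted_metrics(y_true: List[int], y_pred: List[int]) -> Dict[str, float]:
    classes = sorted(set(y_true) | set(y_pred))
    support = Counter(y_true)          # Sum_i: number of true instances of class i
    total = len(y_true)                # Sum
    rec = prec = f1 = 0.0
    for c in classes:
        tp = sum(t == c and p == c for t, p in zip(y_true, y_pred))
        fp = sum(t != c and p == c for t, p in zip(y_true, y_pred))
        fn = sum(t == c and p != c for t, p in zip(y_true, y_pred))
        w = support[c] / total         # class weight Sum_i / Sum
        rec += w * (tp / (tp + fn) if tp + fn else 0.0)
        prec += w * (tp / (tp + fp) if tp + fp else 0.0)
        f1 += w * (2 * tp / (2 * tp + fp + fn) if tp + fp + fn else 0.0)
    # Multi-class MCC from the confusion-matrix marginals.
    t_k, p_k = Counter(y_true), Counter(y_pred)
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    cov = correct * total - sum(t_k[c] * p_k[c] for c in classes)
    denom = math.sqrt((total ** 2 - sum(v * v for v in p_k.values())) *
                      (total ** 2 - sum(v * v for v in t_k.values())))
    mcc = cov / denom if denom else 0.0
    return {"recall_w": rec, "precision_w": prec, "f1_w": f1, "mcc": mcc}


print(weighted_metrics([0, 0, 1, 1, 2], [0, 1, 1, 1, 2]))
```

In this example, Recall-weighted equals plain accuracy (0.8), which holds in general because weighting each class's recall by its support cancels the per-class denominators.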

5 Results

5.1 RQ1: How Does MSDD-(IA)3 Perform Compared to the State-of-the-Art SDD Methods?

5.1.1 Overview

We introduce MSDD-(IA)3, which constructs a detection model based on the pre-trained CodeT5+. To validate the superiority of MSDD-(IA)3, we compare it with two DL-based SDD methods (TSE, PHAN) and two state-of-the-art pre-trained LLMs-based SDD methods (LineVul, PM2-CNN).

5.1.2 Approach

The baseline methods involved in RQ1 are listed as follows:

-

(1)

TSE: This method designs a two-stage SDD approach using a self-attention mechanism and tree-based LSTMs [34].

-

(2)

PHAN: This method employs a positional hierarchical attention network to extract semantic features from programs [36].

-

(3)

LineVul: This method leverages pre-trained CodeBERT to capture semantic features from the source code [10].

-

(4)

PM2-CNN: This method employs pre-trained UniXcoder for generating code representations and constructs a Text-CNN to capture local features within code representations [12].

Table 5 and Fig. 5 present the experimental results for the four evaluation metrics; a bold figure in Table 5 marks the best value for each metric. The results show that MSDD-(IA)3 achieves the best performance on all metrics. In terms of F1-weighted, MSDD-(IA)3 improves on TSE by 12.3%, PHAN by 8.5%, LineVul by 1.7%, and PM2-CNN by 1.1%. In terms of Recall-weighted, it improves on TSE by 9.5%, PHAN by 1.8%, LineVul by 1.2%, and PM2-CNN by 1.1%. In terms of Precision-weighted, it improves on TSE by 12.5%, PHAN by 7.3%, LineVul by 1.6%, and PM2-CNN by 0.9%. In terms of MCC, it improves on TSE by 18.1%, PHAN by 13.0%, LineVul by 3.1%, and PM2-CNN by 2.1%. Because PM2-CNN does not release its source code, we replicated it; the notable improvement of our replicated PM2-CNN over LineVul supports the validity of this replication. Figure 6 shows the confusion matrix of MSDD-(IA)3, with predicted labels in rows and actual labels in columns; the diagonal elements are the correctly identified samples.

The experimental results show that the DL-based TSE and PHAN perform significantly worse than the pre-trained LLMs-based SDD methods. We attribute this gap mainly to the limited training data available to DL-based methods, which leads to weaker code representations and lower prediction performance. Moreover, we attribute the advantage of MSDD-(IA)3 over LineVul and PM2-CNN to its use of pre-trained CodeT5+ for generating code representations. CodeT5+ employs a flexible encoder–decoder architecture that enhances the comprehension of code context. In contrast, CodeBERT (used by LineVul) is pre-trained with encoder-only tasks, while UniXcoder (used by PM2-CNN) shares a single encoder across all pre-training tasks.

5.1.3 Finding1

MSDD-(IA)3 outperforms the state-of-the-art SDD methods according to four evaluation metrics.

5.2 RQ2: Does the Combination of Semantic Metrics with NL-Based Expert Metrics Bring More Benefits for MSDD-(IA)3?

5.2.1 Overview

Previous DL-based SDD studies have shown that combining source code with expert metrics can achieve superior performance [6, 18]. Leveraging the strong understanding of both PL and NL in pre-trained CodeT5+, this experiment examines how different feature combination strategies affect performance.

5.2.2 Approach

In this step, we analyze the following three feature combination strategies:

(1) PL: Like LineVul [10], this strategy uses the source code alone as input to pre-trained CodeT5+.

(2) PL-EM: Like previous DL-based SDD methods [6, 18], this strategy concatenates the deep features that pre-trained CodeT5+ extracts from the source code with numerical vectors of expert metrics, and feeds the result to the classifier.

(3) PL-NL: Exploiting the ability of pre-trained CodeT5+ to handle both PL and NL, MSDD-(IA)3 combines source code with NL-based expert metrics into input sequences for pre-trained CodeT5+.

Table 6 presents the results of the different feature combination strategies on our four evaluation metrics; bold figures mark the best result for each metric. PL-NL achieves the best performance across all the metrics. Compared to PL, which uses only source code, PL-NL improves the F1-weighted value by 0.16%, the Recall-weighted value by 0.16%, the Precision-weighted value by 0.04%, and the MCC value by 0.29%. Compared to PL-EM, PL-NL improves these metrics by 1.6%, 1.2%, 0.96%, and 2.18%, respectively.

Expert metrics contain crucial information about code quality and structure. PL-NL provides richer code information by combining source code with NL-based expert metrics. Adding the \(SEP\) token to the feature sequence also helps the model more clearly distinguish and understand the relationship between the source code and expert metrics. Therefore, this feature combination strategy improves the ability of the model to understand the overall structure and semantic features within programs. In contrast, while PL-EM also introduces expert metrics, its approach of simply concatenating numeric values makes it difficult for the classifier to accurately distinguish the meaning and importance of each metric, potentially even disrupting the original contextual features of the source code, leading to the poorest results.
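To make the difference between the three strategies concrete, the following is a minimal Python sketch of how their inputs could be assembled. The NL template in `metrics_to_nl`, the metric names, and the `<SEP>` string are all hypothetical: this section does not give the paper's exact wording or metric set, and the concrete separator string depends on the CodeT5+ tokenizer. The point it illustrates is structural: PL-NL produces a single text sequence for the encoder, whereas PL-EM appends raw numbers to an already-computed feature vector.

```python
from typing import Dict, List

# Placeholder for the SEP token mentioned in the text (assumption: the real
# string depends on the tokenizer used with CodeT5+).
SEP = "<SEP>"


def metrics_to_nl(metrics: Dict[str, float]) -> str:
    """Render expert metrics as one English sentence (hypothetical template)."""
    parts = [f"the {name} is {value:g}" for name, value in metrics.items()]
    return "In this code, " + ", ".join(parts) + "."


def build_pl(code: str) -> str:
    """PL: the source code alone is the encoder input."""
    return code


def build_pl_nl(code: str, metrics: Dict[str, float], sep: str = SEP) -> str:
    """PL-NL: source code, a separator, then the NL metric description, as one sequence."""
    return f"{code} {sep} {metrics_to_nl(metrics)}"


def build_pl_em(code_features: List[float], metrics: Dict[str, float]) -> List[float]:
    """PL-EM: encoder features for the code, concatenated with raw metric values."""
    return list(code_features) + [float(v) for v in metrics.values()]


# Hypothetical example metrics for a tiny function.
example_metrics = {"cyclomatic complexity": 3, "number of lines": 12}
print(build_pl_nl("def add(a, b): return a + b", example_metrics))
```

In the PL-EM variant, the classifier sees the value 12 with no indication of which metric it belongs to, which is the weakness the paragraph above describes; in the PL-NL variant, each value stays attached to its metric name inside the text the encoder reads.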

5.2.3 Finding2

MSDD-(IA)3 achieves superior performance through a feature combination strategy that combines source code and NL-based expert metrics.

5.3 RQ3: How do Different Underlying Classifiers Affect MSDD-(IA)3?

5.3.1 Overview

Different underlying classifiers demonstrate distinct capabilities in processing deep features, and this difference directly affects the performance of SDD methods [37, 38]. Therefore, selecting an appropriate underlying classifier is equally crucial.

5.3.2 Approach

Logistic Regression (LR) is the most commonly used classifier in previous pre-trained LLMs-based studies [10, 12, 39]. Therefore, we introduce the following classifiers, which combine LR with other neural networks, into MSDD-(IA)3:

(1) LR: LR transforms the output into classification probabilities using the logistic function. In this experiment, LR directly classifies the features extracted by CodeT5+.

(2) Bi-directional LSTM (Bi-LSTM): LSTM uses a gating mechanism, consisting of forget, input, and output gates, to capture long-distance dependencies in input sequences. Bi-LSTM combines two independent LSTM layers that extract features along the forward and backward directions of the input sequence.

(3) Gated Recurrent Unit (GRU): GRU is a variant of LSTM that simplifies the model structure while retaining the advantages of LSTM. GRU captures dependencies within sequences by using update and reset gates to regulate the information flow.

(4) Bi-directional GRU (Bi-GRU): Similar to Bi-LSTM, Bi-GRU is the bidirectional version of GRU.
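For reference, one common formulation of the GRU cell is given below, with update gate \(z_t\), reset gate \(r_t\), input \(x_t\), hidden state \(h_t\), the logistic sigmoid \(\sigma\), and element-wise product \(\odot\). This is the textbook cell rather than a detail reported for MSDD-(IA)3, and libraries differ on whether \(z_t\) weights the previous state or the candidate state. A Bi-GRU runs one GRU forward and one backward over the sequence and concatenates their states.

\[
\begin{aligned}
z_t &= \sigma\left(W_z x_t + U_z h_{t-1} + b_z\right) \\
r_t &= \sigma\left(W_r x_t + U_r h_{t-1} + b_r\right) \\
\tilde{h}_t &= \tanh\left(W_h x_t + U_h \left(r_t \odot h_{t-1}\right) + b_h\right) \\
h_t &= \left(1 - z_t\right) \odot h_{t-1} + z_t \odot \tilde{h}_t \\
h_t^{\text{Bi}} &= \left[\overrightarrow{h}_t \,;\, \overleftarrow{h}_t\right]
\end{aligned}
\]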

Furthermore, to validate the effectiveness of LR, we replace it with each of the following four ML classifiers while preserving the rest of MSDD-(IA)3:

(1) Random Forest (RF): RF is an ensemble learning algorithm that improves prediction performance and reduces overfitting by combining the predictions of multiple decision trees.

(2) Gaussian Naive Bayes (GaussianNB): GaussianNB assumes that the features follow a Gaussian (normal) distribution and is widely used for classification tasks with continuous data.

(3) Support Vector Machine (SVM): SVM handles both linear and non-linear data through kernel methods. It is known for its effectiveness in high-dimensional spaces and for finding maximum-margin boundaries between classes.

(4) Decision Tree (DT): DT classifies data by learning decision rules from the features, organized as a tree structure.
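As an illustration of the Gaussian assumption in item (2), the standard GaussianNB decision rule for a \(d\)-dimensional feature vector \(x\) (here, the features produced by CodeT5+) is given below. The per-class, per-feature means \(\mu_{c,j}\) and variances \(\sigma_{c,j}^2\) and the class priors \(P(y=c)\) are estimated from training data; the sum over features reflects the naive assumption that features are conditionally independent given the class. This is the textbook formulation, not a configuration reported in the paper.

\[
p\left(x_j \mid y = c\right) = \frac{1}{\sqrt{2\pi\sigma_{c,j}^2}} \exp\left(-\frac{\left(x_j - \mu_{c,j}\right)^2}{2\sigma_{c,j}^2}\right),
\qquad
\hat{y} = \arg\max_{c} \left[\log P(y = c) + \sum_{j=1}^{d} \log p\left(x_j \mid y = c\right)\right]
\]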

Table 7 and Fig. 7 show the results on the testing dataset; bold figures in Table 7 mark the best result for each metric. The MLP classifier achieves the highest performance across all four metrics. It improves the F1-weighted value over LR by 0.4%, Bi-LSTM by 1.7%, GRU by 0.3%, Bi-GRU by 0.8%, RF by 0.4%, GaussianNB by 0.4%, SVM by 0.3%, and DT by 0.6%. Over the same eight classifiers, it also improves the Recall-weighted value by 0.4%, 1.4%, 0.1%, 0.7%, 0.3%, 0.4%, 0.3%, and 0.6%; the Precision-weighted value by 0.3%, 1.5%, 0.2%, 0.7%, 0.5%, 0.4%, 0.4%, and 0.7%; and the MCC value by 0.7%, 2.3%, 0.2%, 1.1%, 0.6%, 0.6%, 0.6%, and 1.0%, respectively.

The experimental results indicate that, when preceded by two fully connected layers, LR (i.e., the MLP classifier) outperforms the other ML-based classifiers. In addition, the RNN-based classifiers fail to achieve satisfactory performance. The advantage of RNN-based classifiers lies in their ability to capture long-distance dependencies between tokens [40, 41]. However, when the deep features produced by CodeT5+ are used as classifier inputs, the contextual relationships between tokens are already embedded in these features. Employing an RNN-based classifier in this setting therefore adds unnecessary complexity, which may degrade the final performance.
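To make the distinction between the LR and MLP classifiers concrete, the following minimal pure-Python sketch implements both heads on a single feature vector from CodeT5+. The way that vector is pooled from the token features, the tanh activation between the two fully connected layers, and all function names are assumptions for illustration; the text states only that the MLP variant of LR uses two fully connected layers.

```python
import math
from typing import List, Sequence

Vector = List[float]
Matrix = List[List[float]]


def linear(W: Matrix, b: Sequence[float], x: Sequence[float]) -> Vector:
    """Fully connected layer: y = W x + b (W has one row per output unit)."""
    return [sum(w * xj for w, xj in zip(row, x)) + bi for row, bi in zip(W, b)]


def softmax(z: Sequence[float]) -> Vector:
    m = max(z)  # shift by the max for numerical stability
    exps = [math.exp(v - m) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def lr_head(x: Vector, W: Matrix, b: Vector) -> Vector:
    """Multinomial logistic regression: one linear layer, then softmax."""
    return softmax(linear(W, b, x))


def mlp_head(x: Vector, W1: Matrix, b1: Vector, W2: Matrix, b2: Vector) -> Vector:
    """Two fully connected layers with a tanh in between (assumed), then softmax."""
    hidden = [math.tanh(v) for v in linear(W1, b1, x)]
    return softmax(linear(W2, b2, hidden))
```

Both heads consume one fixed-size vector per snippet and have no recurrence over tokens, which is consistent with the argument above that sequence modelling is already done inside CodeT5+.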

5.3.3 Finding3

Using an MLP as the underlying classifier for MSDD-(IA)3 achieves the optimal performance.

5.4 RQ4: What Is the Training Overhead of MSDD-(IA)3?

5.4.1 Overview

Pre-trained LLMs-based SDD methods pose significant resource demands on software development teams. To reduce the application cost of our method, we incorporate the (IA)3 strategy during the training process. In this experiment, we analyze the impact of introducing the (IA)3 strategy on training costs and detection performance.

5.4.2 Approach

In this step, we analyze the impact of introducing the (IA)3 strategy during training from three perspectives: trainable parameters, memory usage during training, and overall performance. Trainable parameters are the parameters that are updated during training. To assess memory usage, we use the interface provided by PyTorch to record memory consumption throughout training. Figure 8 illustrates the performance differences between training with the (IA)3 strategy and full fine-tuning (Full-FT).

In terms of training cost: (1) MSDD (denoting Full-FT) updates 110M parameters during training, whereas MSDD-(IA)3 trains only 46,336 parameters, just 0.042% of the original parameters of CodeT5+; (2) MSDD occupies 17.6 GB of memory during training, while MSDD-(IA)3 requires only 14.3 GB, a 19% reduction compared to Full-FT. Introducing the (IA)3 strategy thus substantially reduces the number of trainable parameters and the memory consumed during training, lowering the hardware barrier to applying MSDD-(IA)3.
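Both ratios follow directly from the reported figures; since 110M is itself a rounded count, the first ratio is approximate.

\[
\frac{46{,}336}{110 \times 10^{6}} \approx 4.21 \times 10^{-4} = 0.042\%,
\qquad
\frac{17.6 - 14.3}{17.6} = \frac{3.3}{17.6} = 0.1875 \approx 19\%
\]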

In terms of performance: the (IA)3 strategy yields improvements across all four metrics, increasing the F1-weighted value by 0.3%, the Recall-weighted value by 0.2%, the Precision-weighted value by 0.2%, and the MCC value by 0.4%. We attribute this improvement primarily to (IA)3 avoiding the "catastrophic forgetting" that Full-FT can suffer in specific scenarios, i.e., the loss of knowledge acquired during pre-training as the model learns a new task. MSDD-(IA)3 preserves the knowledge gained during pre-training by freezing the pre-trained weights of the LLM and applying only minimal intervention to the model.
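The "minimal intervention" can be illustrated with a small pure-Python sketch of the (IA)3 rescaling introduced by Liu et al. [15]: learned vectors \(l_k\), \(l_v\), and \(l_{ff}\) multiply, element-wise, the attention keys, the attention values, and the intermediate FFN activations, while every pre-trained weight stays frozen. The single-head, single-query attention, the ReLU activation, and all function names are simplifying assumptions, not the MSDD-(IA)3 code. The parameter count uses hypothetical dimensions for illustration only and is not meant to reproduce the 46,336 reported above, which depends on exactly which layers receive vectors.

```python
import math
from typing import List

Vec = List[float]
Mat = List[Vec]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def rescale(v: Vec, l: Vec) -> Vec:
    """Element-wise product with a learned (IA)3 vector (the only trainable part)."""
    return [x * s for x, s in zip(v, l)]


def softmax(z: Vec) -> Vec:
    m = max(z)  # shift by the max for numerical stability
    e = [math.exp(v - m) for v in z]
    total = sum(e)
    return [v / total for v in e]


def attention_ia3(q: Vec, keys: Mat, values: Mat, l_k: Vec, l_v: Vec) -> Vec:
    """Single-query attention with keys rescaled by l_k and values by l_v.

    q, keys and values are assumed to come from frozen pre-trained projections.
    """
    scores = [dot(q, rescale(k, l_k)) / math.sqrt(len(q)) for k in keys]
    weights = softmax(scores)
    scaled_values = [rescale(v, l_v) for v in values]
    return [sum(w * v[i] for w, v in zip(weights, scaled_values))
            for i in range(len(values[0]))]


def ffn_ia3(x: Vec, W1: Mat, W2: Mat, l_ff: Vec) -> Vec:
    """Feed-forward block whose intermediate activations are rescaled by l_ff.

    W1 (d_ff x d_model) and W2 (d_model x d_ff) are frozen; ReLU is assumed.
    """
    hidden = [max(0.0, dot(row, x)) for row in W1]
    return [dot(row, rescale(hidden, l_ff)) for row in W2]


def ia3_param_count(n_layers: int, d_k: int, d_v: int, d_ff: int) -> int:
    """Trainable parameters if each of n_layers layers receives l_k, l_v and l_ff."""
    return n_layers * (d_k + d_v + d_ff)
```

In the (IA)3 paper the vectors are initialized to ones, so before training the rescaled model computes exactly what the pre-trained model computes; training only nudges these few scaling factors, which is why the pre-trained knowledge is largely left intact.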

5.4.3 Finding4

Compared with MSDD, MSDD-(IA)3 reduces training costs while achieving superior performance.

6 Conclusion

This paper introduces MSDD-(IA)3, a novel parameter-efficient multi-classification SDD framework based on pre-trained LLMs, which addresses the research gap in multi-classification SDD for Python. The framework builds a detection model on pre-trained CodeT5+ to capture contextual semantic information and extract defect-prone features. Furthermore, it enriches the input features by combining source code with NL-based expert metrics. Unlike previous pre-trained LLMs-based SDD methods, which incur significant overhead, we inject (IA)3 vectors into the multi-head attention layers and FFNs of the detection model, achieving better performance than Full-FT while training only 0.04% of the original parameters. Experiments on 64K real-world Python snippets show that MSDD-(IA)3 outperforms state-of-the-art pre-trained LLMs-based SDD methods across four evaluation metrics. In addition, the findings indicate that introducing (IA)3 significantly improves training efficiency and mitigates the "catastrophic forgetting" issue in pre-trained LLMs.

In the future, our work will focus on the optimal utilization of expert metrics. This direction includes refining the natural language descriptions of expert metrics to provide richer information and employing interpretability methods to analyze the impact of various metrics on detection results [42]. Additionally, we plan to extend the application of MSDD-(IA)3 to other popular PLs, such as Java, C++, and JavaScript.

Data Availability Statement

All the data involved in this paper are available from the corresponding author upon request.

References

[1] Yang, P., Zhu, L., Zhang, Y., Ma, C., Liu, L., Yu, X., Hu, W.: On the relative value of clustering techniques for unsupervised effort-aware defect prediction. Expert Systems with Applications, p. 123041 (2023)

[2] Zhang, D.: Applying machine learning algorithms in software development. In: Proceedings of the 2000 Monterey Workshop on Modeling Software System Structures in a Fastly Moving Scenario, pp. 275–291 (2000)

[3] Okutan, A., Yıldız, O.T.: Software defect prediction using Bayesian networks. Empir. Softw. Eng. 19, 154–181 (2014)

[4] Zhang, X., Ben, K., Zeng, J.: Cross-entropy: A new metric for software defect prediction. In: 2018 IEEE International Conference on Software Quality, Reliability and Security (QRS), pp. 111–122. IEEE (2018)

[5] Wang, S., Liu, T., Tan, L.: Automatically learning semantic features for defect prediction. In: Proceedings of the 38th International Conference on Software Engineering, pp. 297–308 (2016)

[6] Li, J., He, P., Zhu, J., Lyu, M.R.: Software defect prediction via convolutional neural network. In: 2017 IEEE International Conference on Software Quality, Reliability and Security (QRS), pp. 318–328. IEEE (2017)

[7] Pan, C., Lu, M., Xu, B., Gao, H.: An improved cnn model for within-project software defect prediction. Appl. Sci. 9(10), 2138 (2019)

[8] Deng, J., Lu, L., Qiu, S.: Software defect prediction via lstm. IET Softw. 14(4), 443–450 (2020)

[9] Lin, J., Lu, L.: Semantic feature learning via dual sequences for defect prediction. IEEE Access 9, 13112–13124 (2021)

[10] Fu, M., Tantithamthavorn, C.: Linevul: A transformer-based line-level vulnerability prediction. In: Proceedings of the 19th International Conference on Mining Software Repositories, pp. 608–620 (2022)

[11] Fu, M., Tantithamthavorn, C., Le, T., Nguyen, V., Phung, D.: Vulrepair: a t5-based automated software vulnerability repair. In: Proceedings of the 30th ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering, pp. 935–947 (2022)

[12] Liu, J., Ai, J., Lu, M., Wang, J., Shi, H.: Semantic feature learning for software defect prediction from source code and external knowledge. J. Syst. Softw., p. 111753 (2023)

[13] Wang, Y., Le, H., Gotmare, A.D., Bui, N.D., Li, J., Hoi, S.C.: Codet5+: open code large language models for code understanding and generation. arXiv preprint arXiv:2305.07922 (2023)

[14] Mamede, C., Pinconschi, E., Abreu, R., Campos, J.: Exploring transformers for multi-label classification of java vulnerabilities. In: 2022 IEEE 22nd International Conference on Software Quality, Reliability and Security (QRS), pp. 43–52. IEEE (2022)

[15] Liu, H., Tam, D., Muqeeth, M., Mohta, J., Huang, T., Bansal, M., Raffel, C.A.: Few-shot parameter-efficient fine-tuning is better and cheaper than in-context learning. Adv. Neural. Inf. Process. Syst. 35, 1950–1965 (2022)

[16] Malhotra, R., Yadav, H.S.: An improved cnn-based architecture for within-project software defect prediction. In: Soft Computing and Signal Processing: Proceedings of 3rd ICSCSP 2020, Volume 1, pp. 335–349. Springer (2021)

[17] Li, S., Wang, J., Song, Y., Wang, S., Wang, Y.: A lightweight model for malicious code classification based on structural reparameterisation and large convolutional kernels. Int. J. Comput. Intell. Syst. 17(1), 1–18 (2024)

[18] Wang, H., Zhuang, W., Zhang, X.: Software defect prediction based on gated hierarchical lstms. IEEE Trans. Reliab. 70(2), 711–727 (2021)

[19] Zeng, C., Zhou, C.Y., Lv, S.K., He, P., Huang, J.: Gcn2defect: Graph convolutional networks for smotetomek-based software defect prediction. In: 2021 IEEE 32nd International Symposium on Software Reliability Engineering (ISSRE), pp. 69–79. IEEE (2021)

[20] Tang, L., Tao, C., Guo, H., Zhang, J.: Software defect prediction via gcn based on structural and context information. In: 2022 9th International Conference on Dependable Systems and Their Applications (DSA), pp. 310–319. IEEE (2022)

[21] Šikić, L., Kurdija, A.S., Vladimir, K., Šilić, M.: Graph neural network for source code defect prediction. IEEE Access 10, 10402–10415 (2022)

[22] Devlin, J., Chang, M.W., Lee, K., Toutanova, K.: Bert: pre-training of deep bidirectional transformers for language understanding. arXiv preprint arXiv:1810.04805 (2018)

[23] Feng, Z., Guo, D., Tang, D., Duan, N., Feng, X., Gong, M., Shou, L., Qin, B., Liu, T., Jiang, D., et al.: Codebert: a pre-trained model for programming and natural languages. arXiv preprint arXiv:2002.08155 (2020)

[24] Guo, D., Lu, S., Duan, N., Wang, Y., Zhou, M., Yin, J.: Unixcoder: unified cross-modal pre-training for code representation. arXiv preprint arXiv:2203.03850 (2022)

[25] Wang, Y., Wang, W., Joty, S., Hoi, S.C.: Codet5: Identifier-aware unified pre-trained encoder-decoder models for code understanding and generation. arXiv preprint arXiv:2109.00859 (2021)

[26] Hornik, K., Stinchcombe, M., White, H.: Multilayer feedforward networks are universal approximators. Neural Netw. 2(5), 359–366 (1989)

[27] Gil, Y., Lalouche, G.: On the correlation between size and metric validity. Empir. Softw. Eng. 22(5), 2585–2611 (2017)

[28] Halstead, M.H.: Elements of Software Science (Operating and programming systems series). Elsevier Science Inc. (1977)

[29] McCabe, T.J.: A complexity measure. IEEE Trans. Softw. Eng. 4, 308–320 (1976)

[30] Oman, P., Hagemeister, J.: Metrics for assessing a software system’s maintainability. In: Proceedings Conference on Software Maintenance 1992, pp. 337–338. IEEE Computer Society (1992)

[31] Wang, C., Cho, K., Gu, J.: Neural machine translation with byte-level subwords. In: Proceedings of the AAAI conference on artificial intelligence, vol. 34, pp. 9154–9160 (2020)

[32] Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A.N., Kaiser, Ł., Polosukhin, I.: Attention is all you need. Advances in neural information processing systems 30 (2017)

[33] Akimova, E.N., Bersenev, A.Y., Deikov, A.A., Kobylkin, K.S., Konygin, A.V., Mezentsev, I.P., Misilov, V.E.: Pytracebugs: a large python code dataset for supervised machine learning in software defect prediction. In: 2021 28th Asia-Pacific Software Engineering Conference (APSEC), pp. 141–151. IEEE (2021)

[34] Zhou, Y., Lu, L., Zou, Q., Li, C.: Two-stage ast encoding for software defect prediction. In: 2022 34th International Conference on Software Engineering and Knowledge Engineering (SEKE), pp. 196–199 (2022)

[35] Sklearn evaluation metrics. [online] Available: https://scikit-learn.org/stable/modules/model_evaluation.html#model-evaluation

[36] Yi, X., Xu, H., Lu, L., Zou, Q., Yang, Z.: Software defect prediction via positional hierarchical attention network(S). In: 2023 35th International Conference on Software Engineering and Knowledge Engineering (SEKE), pp. 228–231 (2023)

[37] Yu, X., Liu, L., Zhu, L., Keung, J.W., Wang, Z., Li, F.: A multi-objective effort-aware defect prediction approach based on nsga-ii. Appl. Soft Comput. 149, 110941 (2023)

[38] Zou, Q., Lu, L., Yang, Z., Xu, H.: Multi-source cross project defect prediction with joint wasserstein distance and ensemble learning. In: 2021 IEEE 32nd International Symposium on Software Reliability Engineering (ISSRE), pp. 57–68. IEEE (2021)

[39] Ai, Z., Yijia, Z., Mingyu, L.: A domain knowledge transformer model for occupation profiling. Int. J. Comput. Intell. Syst. 16(1), 1–13 (2023)

[40] Pham Thi, Q.T., Dao, Q.H., Nguyen, A.D., Dang, T.H.: Document-level chemical-induced disease semantic relation extraction using bidirectional long short-term memory on dependency graph. Int. J. Comput. Intell. Syst. 16(1), 131 (2023)

[41] Qin, X., Wang, C., Yuan, Y., Qi, R.: Prediction of in-class performance based on mfo-attention-lstm. Int. J. Comput. Intell. Syst. 17(1), 13 (2024)

[42] Zheng, W., Shen, T., Chen, X., Deng, P.: Interpretability application of the just-in-time software defect prediction model. J. Syst. Softw. 188, 111245 (2022)

Acknowledgements

We thank Michael Fu for providing us with the source code of LineVul. The authors would like to thank the editor and reviewers for their valuable comments to improve the quality of this paper.

Funding

This research is funded by the Key Field Research and Development Plan of Guangdong Province (2022B0101070001), Guangdong Natural Science Fund Project (2024A1515010204) and the second batch of cultivation projects of Pazhou Laboratory (PZL2022KF0008).

Author information

Contributions

Xuanye Wang developed the idea for this research and wrote the paper. Lu Lu and Zhanyu Yang reviewed the article. Qingyan Tian and Haishan Lin conducted a review of the literature and methodology.

Ethics declarations

Conflict of interest

The authors declare no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Wang, X., Lu, L., Yang, Z. et al. Parameter-Efficient Multi-classification Software Defect Detection Method Based on Pre-trained LLMs. Int J Comput Intell Syst 17, 152 (2024). https://doi.org/10.1007/s44196-024-00551-3


DOI: https://doi.org/10.1007/s44196-024-00551-3