Abstract

Entwistle learning approaches are an evidence-based lens for analysing and improving student learning. Quantifying potential effects on attainment and in specific medical curriculum types merits further attention. This study aimed to explore medical students’ learning approaches in an integrated, problem-based curriculum, namely their validity, reliability, distribution, and how they change with student progression; their association with satisfaction; their association with cumulative attainment (examinations). Within the pragmatism paradigm, two series of mixed-methods questionnaires were analysed multi-cross-sectionally and longitudinally. Of seven surveys of Liverpool medical students (n ~ 115 to 201 responders, postal) and one of prospective medical students (n ~ 968 responders, on-campus), six included Entwistle 18-item Short RASI—Revised Approaches to Studying Inventory and six included ‘satisfaction’ items. Comparing four entry-cohorts, three academic years (9-year period), four levels (year-groups), and follow-ups allowed: cross-tabulation or correlation of learning approaches with demography, satisfaction, and attainment; principal components analysis of learning approaches; and multiple regression on attainment. Relatively high deep and strategic approach and relatively low surface approach prevailed, with strategic approach predominating overall, and deep and strategic approach waning and surface approach increasing from pre-admission to mid-Year 5. In multivariable analysis, deep approach remained associated with sustained (cumulative) high attainment and surface approach was inversely associated with passing Year 1 examinations first time (adjusted odds ratio = 0.89, p = 0.008), while higher ‘satisfaction’ was associated with higher strategic and lower surface approach but not with attainment. 
This study illuminates difficulties in maintaining cohesive active learning systems while promoting deep approach, attainment, and satisfaction and dissuading surface approach.

Introduction

Background

Deep, surface, and strategic learning approaches are an evidence-based lens to study complex health professions education. Discussing them critically may well clarify ways to improve educational practice and student attainment (Dinsmore and Alexander 2012), consistent with education scholarship goals [“improving the readiness… for unknown and unexpected futures” (Eva 2020, p. 492)]. Learning approaches are patterns of motivation, strategy, self-regulation, and behaviour that vary with personal characteristics, context, discipline, curriculum, content, task, workload, and assessment (Baeten et al. 2010; Entwistle and Ramsden 1983; Kember et al. 2008; Lonka et al. 2004; Sadlo and Richardson 2003; Scouller 1998). While ideally, all learners want to learn better (Kytle 2012), some struggle to flex their learning (Fabry and Giesler 2012) or to ‘meet minds’ with educators (Karagiannopoulou and Entwistle 2019). Deep approach requires flexibility, which resonates with the scholarships of education and of discovery, i.e. connecting, communicating, and constructing knowledge (Maconachie 2002).

Entwistle built his deep–surface–strategic theoretical framework on Marton and Säljö’s (1976a, b) seminal qualitative evidence of university students studying long texts using deep or surface approaches depending on how they perceived tasks and outcomes. Entwistle and Ramsden (1983) added strategic approach (Entwistle et al. 2001).

The evidence base uses various Entwistle instruments (Entwistle and McCune 2004) and Biggs’ (1979) Study Process Questionnaire (SPQ) (Biggs et al. 2001). Entwistle’s (2000) 18-item Short RASI (Revised Approaches to Studying Inventory) is just one way of measuring the three subscales for an individual:

- Deep approach intrinsically seeks meaning (intends to understand); is interested in and relates ideas; seeks evidence; seeks patterns and underlying principles.

- Surface approach is extrinsically motivated to avoid disapproval or failure (intends to learn what is required) and cope with course; memorizes and reproduces in wrong situations; lacks purpose; is syllabus-focussed.

- Strategic approach competes to achieve; adapts, is organized; manages time; is assessment-focussed.

Of note, these deep–surface–strategic terms label the approaches rather than the individuals (Coffield et al. 2004a, b; Coffield 2008) and, while deep and surface scores tend to be inversely related, strategic is arguably a subdomain (Biggs et al. 2001; Kember and Leung 1998) closer to deep approach. Deep approach should integrate “the whole with its purpose… the parts into a whole… the task with oneself” (Entwistle 2018, pp. 70–71) to give meaning, connect knowledge, and use personal experience, respectively.

Entwistle learning approaches are highly cited (Calma and Davies 2015) and well-grounded in research and theory (Coffield et al. 2004a, b; Coffield 2008; Lonka et al. 2004), but the literature often mislabels them as ‘learning styles’ (Curry 1999)—evidence for that concept is unconvincing (Coffield 2008; Cook 2005; Hargreaves et al. 2005; Norman 2009, 2018; Pashler et al. 2008; Scott 2010) (e-Appendix 1). In contrast, Coffield et al.’s extensive review (2004a, b) classified Entwistle learning approaches [and Vermunt’s (2005) related ‘learning patterns’, e.g. ‘meaning-directed learning’] to a useful family of evidence-based process models (‘Approaches, strategies, orientations, and conceptions’) that education, effort, and experience can adapt (Scott 2010).

Illustrating the versatility of Entwistle learning approaches for different countries, disciplines, and purposes, the University of Helsinki reported institution-wide use of a modified 12-item Entwistle Approaches to Learning and Studying Inventory (ALSI) complementing another Entwistle inventory about the perceived learner–educator environment. Measuring learning approaches helped to counsel students, enhance their deep approach (seek understanding, seek evidence, and relate ideas), and investigate learning in many disciplines. The other inventory identified student problems with stressful workloads, time management, or disorganized studying, and together these instruments prompted curriculum redesign, sharing of good practice, and staff development.

Evidence and theory

Evidence and theory about learning approaches span higher education. Health professions education should nevertheless benefit from further exploration of how much learning approaches adapt, their association with satisfaction, and their association with attainment:

Change with progression

Many university disciplines prefer deep approach, but promoting it is an enduring challenge (Asikainen and Gijbels 2017; Baeten et al. 2010; Mattick and Knight 2007; Reid et al. 2005) requiring constructive alignment with student experience (Biggs et al. 2001). Asikainen and Gijbels’s (2017) systematic review of 43 longitudinal studies dispelled assumptions that deep approach must automatically improve just by attending university. Deep approach may well wane while surface approach increases (Entwistle and Ramsden 1983), yet longitudinal evidence suggests that medical students using a deep approach do likewise as doctors and are happier career-wise (McManus et al. 2004).

Association with satisfaction

In theory, deep or strategic approach should enhance complex practice and satisfaction. Deep approach has been associated positively with student satisfaction about educators, curricula, and collegiate experience generally (Prosser and Trigwell 1990; Nelson Laird 2008). Better learning possibly “[transforms structures learnt from the educator] into a personally satisfying form” (Entwistle and Entwistle 1991, p. 223).

Association with attainment

Ultimately though, student attainment is crucial and should reflect meaning-directed learning. For example, Vermunt’s (2005) meaning-directed learning (akin to deep approach and particularly age- and discipline-dependent) is associated with good attainment.

Besides prior academic performance, aptitude (intellect), and sociodemographic factors (age, sex, socioeconomic status), there are numerous likely predictors of undergraduate ‘grade-point average’ (GPA) (Richardson et al. 2012). The learning approaches domain (deep, strategic, surface) accounted for only 3/42 non-intellectual predictors that Richardson et al. (2012) identified, overlapping with four other psychological domains (personality, motivation, self-regulation, and learning strategies) and psychosocial context. Learning approaches and personality might mediate how ability affects academic performance (Chamorro-Premuzic and Furnham 2008), but quantifying possible attainment effects of learning approaches, particularly deep, is elusive (Lindblom-Ylänne and Lonka 1999; McCune and Entwistle 2011). Recommended research strategies include multivariable analysis of individual follow-up from (pre)-entry to graduation while acknowledging the learning context (Richardson et al. 2012). Specific academic curricula need exploring to ensure that assessments across higher education are “rewarding engaged critical thinking and connections [being built]” (Herrmann et al. 2017, p. 40).

In medicine, Lindblom-Ylänne and Lonka (1999) found that students whose study orientation embraced deep approach achieved significantly better preclinical and clinical grades. While deep approach may be “a necessary, but not a sufficient, condition for productive studying” (Lonka et al. 2004, p. 307), success in medicine might also require the persistence of strategic approach, required for “organized studying and effort” (Entwistle 2018, p. 239). Furthermore, students must also self-regulate well, conceive knowledge relativistically, and not be derailed by traditional medical learning environments (Lindblom-Ylänne and Lonka 1999). Some individuals who are ‘reproduction-orientated’ (towards memorizing rather than constructing knowledge) and ‘externally regulated’ (dependent on others to control learning) show atypical scoring patterns across their learning approaches and conceptions of knowledge. Such ‘dissonant study orchestrations’ are associated with suboptimal performance and can include high-scoring on both deep and surface approach, induced by misleading curriculum cues (Lindblom-Ylänne and Lonka 1999; Entwistle 2018). Unexpected scoring across learning approaches should prompt researchers to consider how the academic environment might derail deep approach and confuse students (Entwistle 2018). Considering the academic context (educational philosophy and curriculum implementation) is crucial when researching learning approaches, particularly aspects that might favour one or more of the three approaches. Researchers should also consider how students perceive their knowledge and their educator’s role as further potential influences.

Relatively few studies report learning approaches longitudinally, even fewer report cumulative (Richardson et al. 2012) outcomes, and medical education studies focus mostly on ‘junior’ (Ward 2011) rather than ‘senior’ year-groups (Cipra and Müller-Hilke 2019). Medical students’ learning approaches have been modestly associated with academic performance (by ASI: Clarke 1986; Newble et al. 1988, including longitudinally: Arnold and Feighny 1995; Duckwall et al. 1991; by SPQ: Isik et al. 2018, including longitudinally: McManus et al. 1998) but less so clinical performance, possibly due to the inventories (Isik et al. 2018).

Other evidence has been unconvincing or unsupportive. For example, on multiple regression, Leiden et al.’s (1990) Years 1–3 Nevada medical students showed no significant association between deep, strategic, or surface approaches (from ASI) and grade-point average (GPA) or National Board of Medical Examiners (NBME) Part I scores. This was despite correlations, respectively, being (albeit unquantified and non-significant): positive, positive, negative. Assessment outcomes in conventional curricula tend to correlate negatively with surface approach rather than positively with deep approach (Clarke and McKenzie 1994). Strategic approach might even outperform deep approach (Ward 2011), fostering academic success for some medical students and less anxiety (e.g. Year 1 anatomy, Cipra and Müller-Hilke 2019) plus better objective structured clinical examination (OSCE) performance (Year 3, Martin et al. 2000).

Educational context, problem-based curricula, and medical students

Reid et al. (2012) urged further exploration by curriculum type, particularly problem-based, after finding medical students’ learning approaches hardly changed over a hybrid programme where PBL focussed on promoting deep approach. Theoretically ‘whole-system’ problem-based curricula (Dangerfield et al. 2007) should improve deep approach (Baeten et al. 2010), based on improving active learning by constructing flexible knowledge, critical reasoning, self-directed learning, collaborative skills, and intrinsic motivation (Hmelo-Silver and Eberbach 2012). Aligning with deep approach, students discuss how concepts and principles relate, apply these to authentic scenarios, and integrate resources, knowledge, and skills (Dolmans et al. 2016), but evidence of this encouraging better learning approaches is mixed after early promise. Coles (1985) found learning approaches worsened significantly by the end-of-Year 1 in a conventional medical curriculum, but surface approach decreased significantly in a problem-based curriculum (from a similar baseline) while deep approach held steady. Cross-sectionally, Newble and Clarke (1986) found that Years 1 and 3 had significantly higher deep and lower surface approaches (but similar strategic approach) in a problem-based versus conventional curriculum. While in the former, deep learning scored similarly between Years 3 and 5/6, in the latter deep learning increased significantly and progressively over Years 1, 3, and 5/6.

Dolmans et al.’s (2016) systematic review responded to “inconsistency and ambiguity” (p. 1089) in research about deep and surface approaches across higher education (e.g. Baeten et al. 2010). They concluded that PBL does indeed improve deep approach, even though average effect-sizes (Cohen’s d) were small and non-significant—at + 0.11 standard deviations for deep approach (positive effect in 11/21 studies, negative effect in four, no effect in six, and more positive for whole curriculum implementations). Good student experiences of the occupational therapy educational environment are associated significantly with deep approach, positively, but the association is stronger with surface approach, negatively (Sadlo and Richardson 2003). Discouraging surface approach may indeed be more important than promoting deep approach (Ward 2011). Chung et al. (2015) found that Year 1–3 medical students’ deep approach was unchanged after case-based learning. Regarding wider implications, Cipra and Müller-Hilke (2019) found that surface approach was associated significantly with trait anxiety (rs = 0.50) in a problem-based curriculum, risking burnout from suboptimal attainment.

Besides curriculum ethos and setting, assessments might change students’ preferred learning approaches (Baeten et al. 2010; Scouller 1998). Heavy assessment might even promote surface approach [especially immediately pre-examination (Newble and Clarke 1986)] and strategic approach (Ramsden 1988). Multiple-choice tests might promote surface approach, whereas essay-type coursework promotes deep approach, but both show surface approach associated negatively with performance (Scouller 1998).

In summary

Priorities in learning approaches research include more longitudinal analyses (Chonkar et al. 2018; Dolmans et al. 2016; Herrmann et al. 2017), how much medical students change their dominant learning approach (Ward 2011), the elusive relationship between deep approach and attainment (Entwistle 2018) for specific disciplines and curriculum types (Herrmann et al. 2017), and the relevance of satisfaction (Rienties and Toetenel 2016). We might therefore ask how learning approaches change and relate to satisfaction and attainment in an integrated, problem-based curriculum, expecting that such whole-system designs should improve learning, attainment, and satisfaction.

Aim

To explore medical students’ learning approaches in an integrated, problem-based curriculum, namely (a) their validity, reliability, distribution, and how they change with student progression, (b) their association with satisfaction, (c) their association with cumulative attainment (examinations).

Setting

The 5-year Liverpool problem-based curriculum admitted medical student entry-cohorts (n ~ 200 to 270), by two-person interview, between 1996 and 2013. Problem-based learning (PBL) in groups of 7–10 was the main learning mode, particularly in Years 1–2. A problem-based philosophy of active learning used clinically relevant scenarios for context, complemented by early clinical simulation and contact (clinical skills, communication skills, clinical placements); spiral curriculum; community-orientation; and integration across subject boundaries with no preclinical–clinical divide. The goal was more meaningful learning and attainment and less overload. Focussed on critical understanding, written examinations tested core content by multiple-choice, extended-matching, and short-answer items (including key clinical features). OSCEs assessed clinical and communication skills; a Year 4 clinical examination involved patients. The Liverpool 4-year graduate-entry programme started in 2003 for n ~ 29 students, combining 2 years into one bespoke year before students joined the main Year 3. From 2006, a satellite campus at another university also delivered the 5-year Liverpool degree for n ~ 50, before becoming its own degree-awarding medical school 12 years later. Initial national notification of these satellite places came too late to start a new selection process, so inaugural entrants were mostly applicants just missing the interview score for a Liverpool main-campus place.

Despite the community orientation, only 16% of ‘subsequently admitted’ prospective medical students expressed their career intention as general practice (Maudsley et al. 2010). Of these though, three-quarters retained that intention when surveyed 5 years later (2006/2007) (n = 74 double responders who remained in cohort by Year 5 or intercalated another degree between Year 4–5). Both those surveys and several similar contemporaneous surveys had also captured Entwistle learning approaches, allowing this current study to collate evidence comprehensively across and within four entry-cohorts.

Methods

Design

Situated within the pragmatism paradigm (Creswell 2003; Maudsley 2011) and receiving appropriate approvals (see “Ethical approval” statement), this study extracted learning approaches and related data from mixed-methods questionnaires designed for two study-series by the author. The S-series of six questionnaires S1–S6 (e.g. Maudsley et al. 2007, 2008, 2010) focussed more on students’ ideas about ‘Studying to learn’, but only the four questionnaires S3–S6 of 2001/2002 feature here. The K-series of four questionnaires (K1–K3: 2006/2007; K4: 2010/2011) focussed more on students’ ideas about the ‘Knowledge-base to be learnt’. The S- and K-labels are used here for shorthand (timeline and items: Fig. 1; e-Appendix 1).

Fig. 1 Timeline of questionnaire surveys on Entwistle learning approaches (L), ‘satisfaction’ (S), and the ‘ideal tutor’ (T): Liverpool medical students

Note
• Four entry-cohorts: 1999 (S5); 2001 (S3 + S6); 2002 (S4 + K2); 2006 (K1 + K3 + K4).
• In three academic years: 2001/2002, 2006/2007, 2010/2011.
• At four levels: applicants and Years 1, 3, 5.
• Including three individual follow-ups: start-to-end-of-Year 1 (S3 + S6, 2001/2002), linking learning approaches; S4–applicants (2001/2002) to K2–mid-Year 5 (2006/2007) if admitted (S4 + K2), linking learning approaches; start-to-end-of-Year 1 (2006/2007) to end-of-Year 5 (2010/2011) (K1 + K3 + K4), linking satisfaction.

A multi-cross-sectional and longitudinal design used (+ denotes individual follow-up linking):

- Seven postal questionnaires (S3 + S6, S5; K1 + K3 + K4; K2) to Liverpool medical students, three of which (K1 + K3 + K4) also involved the satellite campus.

- An eighth questionnaire (S4) to Liverpool medical school applicants on campus on their interview day (November–March of annual admissions cycle).

This new analysis now collates and explores learning approaches data from six of the above questionnaires and context about satisfaction from six and about ‘ideal tutor’ perceptions from four.

Questionnaire cover letters outlined the research, assured confidentiality, and stated that the unique identifier allowed reminders plus linking to other (outcome) information. The questionnaires sought parental occupation (coded medical or not), postcode for Townsend score (1999 and 2001 entry-cohorts) (Hoare 2003), and ethnicity of United Kingdom (UK)—‘Home’ students [as per Office for National Statistics (ONS) censuses: 1991, 2011 (Laux 2019)]. Class lists allowed cross-checking or supplementation of demographic data about age, sex, and ‘Home’ (UK plus European Community unless otherwise stated) versus ‘overseas’ status.

Three item sets used 5-point Likert items (•Agree = 5 •Agree somewhat = 4 •Unsure = 3 •Disagree somewhat = 2 •Disagree = 1), as follows:

- Entwistle Short RASI (18-item) learning approaches (from Approaches and Study Skills Inventory for Students (ASSIST), with permission) in six questionnaires, with each subscale scoring /30, six items per subscale (items listed in e-Appendix 2), e.g. Deep subscale: “Ideas in course books or articles often set me off on long chains of thought of my own”.

- Three items designed as proxies for ‘satisfaction’ (piloted in S3, then used unchanged): Five questionnaires used all three; a sixth (K3) used item 3 only (e-Appendix 3): “If I had my time again, I would still do Medicine”. “If I had my time again, I would still do Medicine in a problem-based curriculum”. “If I had my time again, I would still do Medicine in this Liverpool problem-based curriculum”.

- Items designed about: ‘Ideally, my problem-based learning (PBL) tutor should…’: Open-ended answers in a prior study (end-of-Year 1, S2, 1999 entry-cohort, Maudsley et al. 2008) informed a 38-item pilot-set for S3, used unchanged in S5 and S6 (Maudsley 2005), then reduced to a 24-item set for K1 (Maudsley 2009) (e-Appendix 1), e.g. “Give us the faculty learning objectives”. These four questionnaires generated regression-based z-scores (mean = 0, standard deviation = 1) on the two top components from principal components analysis (data not shown, see Maudsley 2005, 2009), i.e. “tell me what to learn” and “help me with how to learn” (e-Appendix 1), which in K1, for example, had Cronbach’s alpha of 0.7 and 0.6, respectively, for items loading at ≥ 0.4.
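As a minimal sketch of the subscale scoring described above (six 5-point items per subscale, so each subscale totals between 6 and 30), the following Python shows one way to score a single respondent. The item-to-subscale mapping here is hypothetical, since the actual item allocation is listed in e-Appendix 2 and is not reproduced in this section. The tie-handling rule and the function names are also assumptions made for illustration.

```python
from typing import Dict, List, Optional

# HYPOTHETICAL item order: the real item-to-subscale allocation is in the
# source's e-Appendix 2. Here items 0-5, 6-11, 12-17 are simply assumed.
SUBSCALE_ITEMS: Dict[str, List[int]] = {
    "deep": [0, 1, 2, 3, 4, 5],
    "strategic": [6, 7, 8, 9, 10, 11],
    "surface": [12, 13, 14, 15, 16, 17],
}


def score_short_rasi(responses: List[int]) -> Dict[str, int]:
    """Sum six Likert items (Disagree = 1 ... Agree = 5) per subscale (6-30)."""
    if len(responses) != 18:
        raise ValueError("expected 18 item responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")
    return {
        name: sum(responses[i] for i in items)
        for name, items in SUBSCALE_ITEMS.items()
    }


def predominant_approach(scores: Dict[str, int]) -> Optional[str]:
    """Return the single highest-scoring subscale; None on a tie (assumed rule)."""
    top = max(scores.values())
    leaders = [name for name, value in scores.items() if value == top]
    return leaders[0] if len(leaders) == 1 else None


def points_proportion(scores: Dict[str, int], name: str) -> float:
    """Percentage of a respondent's total points that falls on one subscale."""
    return 100.0 * scores[name] / sum(scores.values())


# Made-up respondent, for illustration only.
example = score_short_rasi([4] * 6 + [5] * 6 + [2] * 6)
```

For the made-up respondent, the deep, strategic, and surface totals are 24, 30, and 12, so strategic approach predominates.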

Data checking (e.g. distributions, missing data) and description (using IBM-SPSS-24 and StatsDirect-3) involved simple frequencies and cross-tabulations. Comparison of response rates (%) was by cohort, age, sex, and (non-)White British.

Analysis

Analysis of learning approaches (using statistical significance at p < 0.05) explored (e-Appendix 4) the following:

- (a) Distribution, validity, and reliability [principal components analysis and Cronbach’s alpha of each subscale (and ‘if item deleted’): Brace et al. 2003; Field 2000; Tabachnick and Fidell 2000], and changes.

- (b) Correlation with satisfaction.

- (c) Relationship with summative examination outcomes (multivariable analysis: Field 2000; Sperandei 2014).

Using forced-entry multiple logistic regression (IBM-SPSS-24), several models provided odds ratios (ORs) for 5-year students’ cumulative examination outcomes. The dependent variable was ‘Progressed in-cohort by passing all examinations with(out) resit(s)’. To avoid overfitting (Babyak 2004) and drawing on Field (2000) and Sperandei (2014), choice of number and type of independent variables heeded the following:

- Indicative number ~ n/10, where n = smallest number of students passing with(out) resit(s)

- Expected associations from literature; associations noted in targeted cross-tabulations; possible explanatory power (Cox and Snell R², Nagelkerke R²); avoidance of multicollinearity (checking variance inflation factors via multiple linear regression using each independent variable as the dependent variable)

- Stability [Hosmer–Lemeshow p value; unstandardized coefficients with p value; model significance (Omnibus p)] and small subgroups

- Intuitive importance

- Forced-entry multiple linear regression (IBM-SPSS-24) on ‘cumulative points-score’ considered ~ n/10 variables (n = overall sample), analysis of variance (ANOVA) for model p value, and adjusted R² for potential variation explained. The most stable and intuitive model of B-coefficients was chosen.
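The ‘~ n/10’ rule of thumb for the logistic models can be sketched in a few lines of Python. The function name and the rounding down are assumptions, since the text gives only an indicative ‘~’. The example counts (118 passing first time among 184 in-cohort 1999 entrants, leaving 66 others) are taken from the Results purely for illustration and may not match the exact sample used in any fitted model.

```python
def indicative_max_predictors(n_without_resit: int, n_with_resit: int) -> int:
    """Indicative ceiling on independent variables: about n/10, where n is the
    smaller of the two outcome groups (rounding down is an assumption)."""
    if n_without_resit < 0 or n_with_resit < 0:
        raise ValueError("group sizes must be non-negative")
    smallest_group = min(n_without_resit, n_with_resit)
    return smallest_group // 10


# Illustration only: 118 passed everything first time, 184 - 118 = 66 did not.
limit_1999 = indicative_max_predictors(118, 184 - 118)
```

Under these assumptions the smaller group has 66 students, suggesting roughly six independent variables at most.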

Results

Survey response

Across eight studies, response rates varied markedly (Table 1; e-Appendices 1-footnote, 3, 5) from 31 to 91%. Differences between responders and non-responders were minimal.

Context: Student satisfaction and perceptions of the tutor

From six surveys, cross-sectionally in three entry-cohorts and longitudinally in a fourth (start-to-end-of-Year 1, then to end-of-Year 5), the vast majority of responders ‘agreed + agreed somewhat’ that, given their time again, they would still do Medicine (e-Appendix 3). The cross-sectional downtrend in the four entry-cohorts was significant (p = 0.011) from 95.9% (end-of-Year 1, 2001/2002) to 87.4% (end-of-Year 5, 2010/2011) (e-Appendix 3-footnote*), but highest agreement was 98.6% in mid-Year 1, 2006/2007. By 2010/2011, only 54.2% of Year 5 would still do Medicine in a problem-based curriculum, and 50.0% in this problem-based curriculum (67.5% and 64.3%, respectively, in 2006/2007 Year 5). This differed from the significantly more positive contemporaneous annual National Student Survey (NSS) result from this cohort (Higher Education Funding Council for England 2011). In the NSS, 70% (183/304) ‘mostly + definitely’ agreed that “Overall, I am satisfied with the quality of the course” (McNemar χ²(1) = 26.46; p < 0.0001).

In paired data (n = 50 or n = 49) from following mid-Year 1 individuals longitudinally (2006/07) to end-of-Year 5 (2010/2011), mean difference in ‘agreed + agreed somewhat’ showed a small significant decline [95% confidence interval (CI)] for still doing (e-Appendix 3):

- Medicine = −0.34 (95% CI −0.09, −0.59).

- Medicine in a problem-based curriculum = −0.65 (−0.25, −1.05).

- Medicine in this Liverpool problem-based curriculum = −0.78 (−0.41, −1.15).

Just under one-half of declining satisfaction (0.34 of the 0.78 above) was potentially attributable to Medicine itself. A problem-based design rather than locality or implementation potentially underpinned the remainder (0.65‒0.34 = 0.31 versus 0.78‒0.65 = 0.13). ‘Satisfaction’ with Medicine in the Liverpool problem-based curriculum did not change significantly in paired mid-to-end-of-Year 1 data (p = 0.190) (e-Appendix 3).
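The arithmetic behind this apportioning can be laid out as a short Python sketch. It simply subtracts the three reported mean declines from one another, which treats the satisfaction items as nested and additive. That is an interpretive assumption rather than a formal decomposition, and the variable names are illustrative.

```python
# Magnitudes of the mean paired declines reported above (mid-Year 1 to end-of-Year 5).
decline_medicine = 0.34        # 'would still do Medicine'
decline_pbl = 0.65             # '... in a problem-based curriculum'
decline_liverpool_pbl = 0.78   # '... in this Liverpool problem-based curriculum'

# Share of the largest decline that the 'Medicine' item alone could account for.
share_medicine = decline_medicine / decline_liverpool_pbl

# Increment associated with a problem-based design, beyond Medicine itself.
extra_pbl_design = round(decline_pbl - decline_medicine, 2)

# Further increment associated with locality or implementation.
extra_local = round(decline_liverpool_pbl - decline_pbl, 2)

print(f"Medicine share: {share_medicine:.1%}")
print(f"PBL design increment: {extra_pbl_design}")
print(f"Local increment: {extra_local}")
```

The share for Medicine itself comes to about 44%, consistent with ‘just under one-half’, and the problem-based increment (0.31) exceeds the local increment (0.13).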

In all three surveys containing the tutoring items, ‘ideally… tutor should tell me what to learn’ correlated negligibly with ‘Medicine’ satisfaction but (mostly highly) significantly and negatively with problem-based and Liverpool problem-based satisfaction items (rs² potentially explaining only 4–8% though). The lower the expectations for PBL tutors to ‘tell me what to learn’, the more satisfaction (e-Appendix 3).

Learning approaches: validity, reliability, distribution, changes

Validity and internal consistency

Learning approaches showed modest–satisfactory internal reliability over the six surveys. Cronbach’s alpha was highest for strategic approach (Table 2):

- 0.633–0.722 deep.

- 0.671–0.744 strategic.

- 0.608–0.731 surface.

Items performed as expected in their effect on Cronbach’s alpha ‘if item deleted’ (Table 2-footnote; e-Appendix 2-footnote).
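For readers less familiar with the statistic, Cronbach’s alpha for a k-item subscale is k/(k − 1) × (1 − sum of item variances / variance of the total score). The sketch below computes it with the Python standard library. The respondent data are made up for illustration and are not from this study, which used IBM-SPSS-24.

```python
from statistics import variance
from typing import List, Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items table of scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score)),
    using sample variances throughout.
    """
    k = len(rows[0])
    if k < 2 or len(rows) < 2:
        raise ValueError("need at least two items and two respondents")
    if any(len(row) != k for row in rows):
        raise ValueError("every respondent needs the same number of items")
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


# MADE-UP responses (5 respondents x 6 items on a 1-5 scale), illustration only.
demo_rows: List[List[int]] = [
    [4, 5, 4, 4, 5, 4],
    [3, 3, 2, 3, 3, 3],
    [5, 5, 5, 4, 5, 5],
    [2, 3, 2, 2, 3, 2],
    [4, 4, 3, 4, 4, 5],
]
demo_alpha = cronbach_alpha(demo_rows)
```

The ‘if item deleted’ check mentioned above would amount to recomputing alpha after dropping one item column at a time.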

In principal components analysis, the 18-item model had good factorability (Kaiser-Meyer-Olkin (KMO) measures = 0.7–0.8), validating its use. Items mostly loaded as expected in the best (3-component) solution, showing modest–satisfactory reliability (e-Appendix 2).

Distribution

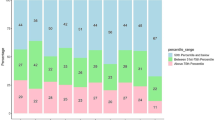

Learning approaches showed similar distributions in all six surveys (Table 2; e-Appendix 5). Strategic approach dominated [median: 22.0–27.0], closely followed by deep approach [median: 21.0–24.0], then low surface approach [median: 13.0–15.0].

For each learning approach, the percentage scoring it highest differed significantly between the four main entry-cohorts at, respectively, pre-entry, start-of-Year 1, mid-Year 3 (2001/2002), and end-of-Year 5 (2010/2011) (e-Appendix 5). Cross-sectionally, strategic approach predominated in about one-half to two-thirds of responders, surface approach predominated least (1.0%) at pre-entry, and deep approach predominated most for start-of-Year 1 (40.8%) (e-Appendix 5).

Mean pre-entry scores (Table 2) (and proportion with subscale predominating and overall points proportion for subscale: data not shown) did not differ significantly between responders subsequently admitted or not. Of admitted responders, similar proportions of 2002 entrants (n = 220) or deferred 2003 entrants (n = 18) had each learning approach predominating [data not shown]. Deferred entrants’ overall proportion of points for strategic approach appeared slightly lower (39.3% versus 41.7%), but p = 0.056 (e-Appendix 5). Mean strategic score appeared lower, but p = 0.079 if equal variances assumed (Table 2-footnote).

In ‘K4-End-of-Year 5’ responders (2010/2011), mean deep approach differed significantly by intercalation status: highest if currently intercalating, then Year 5 who had intercalated previously, then non-intercalating Year 5 (p = 0.04) (Table 2) [K2–Year 5 intercalation variable was unavailable to analyse].

Changes in learning approaches? Two cohorts, each cross-sectionally at Year 5

Each Year 5 (2006/2007 and 2010/2011) had each learning approach predominating in similar proportions (deep: 26.1% versus 24.0%; strategic: 67.0% versus 66.9%; surface: 7.0% versus 9.1%, χ²(2) = 0.66, p = 0.80) with similar mean scores (deep: 21.07 versus 21.54, t(234) = 0.91, p = 0.36; strategic: 23.40 versus 24.25, t(234) = 1.60, p = 0.11; surface: 15.33 versus 14.90, t(234) = 0.71, p = 0.48).

Changes in learning approaches? Two cohorts, each followed longitudinally

Deep and surface scores did not change significantly in n = 160 paired sets from start-to-end-of-Year 1 (S3–S6, 2001/2002). Mean (median) changes in individuals were +0.04 (0) and +0.33 (+0.5), respectively (paired t(159) = 0.16, p = 0.874; t(159) = –1.03, p = 0.305). Mean strategic score increased modestly from 22.42 to 23.21, with a significant mean (median) increase of +0.79 (+1.0, i.e. the difference, for example, between ‘agree somewhat’ and ‘agree’ on one of six items) (paired t(159) = 2.70, p = 0.008; 95% CI on +0.79 difference: +0.21, +1.37).

All three approaches changed significantly in 74 paired responses from pre-admission to mid-Year 5 (2001/2002–2006/2007) (Table 2). Mean (median) changes in deep and surface scores were –2.6 (−3.0) and +2.2 (+1.5), respectively (paired t(73) = −5.46, p < 10⁻⁶; 95% CI on −2.59 difference −1.65, −3.54; paired t(73) = 3.44, p < 0.001; 95% CI on 2.20 difference 0.93, 3.48). Mean strategic score dropped significantly from 26.78 to 24.04, a mean (median) decrease of −2.7 (−2.0) (paired t(73) = −5.78, p < 10⁻⁷; 95% CI on −2.74 difference −1.80, −3.69). Pre-admission-to-mid-Year 5 correlations (rs) of deep = 0.36 (p = 0.002), strategic = 0.38 (p = 0.001), and surface = 0.26 (p = 0.024) echoed most change being towards surface approach.

Learning approaches and satisfaction

Relationship between satisfaction and learning approach

In all four cohorts, strategic approach correlated significantly and positively (rs² potentially explaining 4–19%) and surface approach significantly and negatively (potentially explaining 3–24%) with the satisfaction items. In mid-Year 5, 2006/2007, correlations were strongest and very highly significant between (e-Appendix 3):

-

Strategic approach and ‘would still do Medicine’, e.g. rs(111) = 0.44.

-

Surface approach (negatively) and both ‘would still do Medicine in a problem-based curriculum’ (rs(112) = −0.49) and ‘…in this Liverpool problem-based curriculum’ (rs(113) = −0.48).

Deep approach had mostly negligible correlation, but mid-Year 3 (2001/2002) showed significant weak–modest positive correlations with all three satisfaction items.
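The 'variation potentially explained' percentages in this subsection follow from squaring each Spearman coefficient. A minimal sketch, using the three mid-Year 5 coefficients quoted above (the helper name and the short labels are hypothetical):

```python
def variance_shared(r_s: float) -> float:
    """Percentage of rank variance shared, i.e. 100 times r_s squared."""
    return 100 * r_s ** 2


# Mid-Year 5 (2006/2007) coefficients quoted in the text.
for label, r_s in [("strategic vs 'would still do Medicine'", 0.44),
                   ("surface vs '...in a problem-based curriculum'", -0.49),
                   ("surface vs '...in this Liverpool curriculum'", -0.48)]:
    print(f"{label}: {variance_shared(r_s):.0f}%")
# prints 19%, 24% and 23% respectively
```

The 19% and 24% values correspond to the upper ends of the ranges given for strategic and surface approach.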

Learning approaches and summative examination outcomes

Overview of summative examination outcomes available in the three entry-cohorts

Most 1999 entrants still in-cohort by end-of-Year 4 had passed all examinations first time at all three levels (end-of-Y1, mid-Year 3, end-of-Y4): 118/184 (64.1%). At each level, respectively, the percentage passing without resitting was 74.4% (163/219), 77.9% (155/199), and 83.9% (156/186, denominator included two students not then progressing in-cohort).

Of the 2001 entrants taking end-of-Year 1 examinations (268/272), 197/268 (73.5%) passed first time.

For the 2006 entrants to the 5-year programme ‘progressing in-cohort’ (including those now intercalating post-Year 4, 2010/2011) (n = 275), their end-of-Year 4 ‘cumulative points-score’ was approximately Normally distributed. This was also so when including others now joining the 2006 entrants in Year 5 such as returning intercalators from the year above (n = 406). Most 2006 entrants had taken at least one resit in what had now become four sets of end-of-year examinations. Very highly significantly fewer passed first time than in the 1999 entry-cohort in the original three-stage system (109/275, 39.6% versus 64.1%: χ2(1) = 26.46; p < 0.0001).
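The between-cohort comparison of first-time pass rates can be checked from the counts given (109/275 versus 118/184). The sketch below assumes an uncorrected Pearson chi-square on the 2 × 2 table, which appears to reproduce the reported statistic; the function name is hypothetical and this is not the authors' code.

```python
import math


def chi2_2x2(a: int, b: int, c: int, d: int):
    """Pearson chi-square (no continuity correction) for the table [[a, b], [c, d]]."""
    n = a + b + c + d
    chi2 = n * (a * d - b * c) ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    p = math.erfc(math.sqrt(chi2 / 2))      # upper-tail probability for 1 df
    return chi2, p


# Rows: 2006 entrants (109 of 275 passed first time), 1999 entrants (118 of 184).
chi2, p = chi2_2x2(109, 275 - 109, 118, 184 - 118)
print(f"chi2(1) = {chi2:.2f}, p < 0.0001: {p < 0.0001}")
```

The statistic evaluates to about 26.46, in line with the reported χ2(1) = 26.46.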

Overview of summative examination outcomes by subgroups in the three entry-cohorts

Of the 1999 entrants, cumulative performance by end-of-Year 4 (passing versus resitting) did not differ significantly by: age, male, White British, Home status (versus ‘international’), medical parent(s) [data not shown], affluence of England and Wales resident postcodes at entry, or ‘would still do Medicine in this Liverpool problem-based curriculum’ (e-Appendix 6-footnote). Mean regression-based score about ideal tutor (from principal components analysis, mid-Year 3) did not differ significantly either:

-

‘ideally… tutor should tell…’: −0.12 (n = 88 with no resits) versus 0.14 (n = 53) (t(139) = −1.46, p = 0.146)

-

‘ideally… tutor should help…’: 0.13 versus −0.18 (t(91.7) = 1.78, p = 0.079, assuming unequal variances, as Levene’s Test: F = 4.34, p = 0.039). Statistical evidence was insufficient to support a suggestion here that ‘passing all examinations first time throughout’ was associated with viewing the ideal tutor as ‘helping’ with learning (rather than ‘telling me what to learn’).
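The fractional degrees of freedom in t(91.7) are typical of a Welch (unequal variances) t-test, where the Welch-Satterthwaite formula replaces the pooled n1 + n2 − 2. The sketch below shows that formula only. The group sizes are borrowed from the first comparison as an assumption, and the standard deviations are invented placeholders because the source does not report them, so the output is not expected to equal 91.7.

```python
def welch_df(sd1: float, n1: int, sd2: float, n2: int) -> float:
    """Welch-Satterthwaite degrees of freedom for two independent groups."""
    v1, v2 = sd1**2 / n1, sd2**2 / n2       # squared standard errors of each mean
    return (v1 + v2) ** 2 / (v1**2 / (n1 - 1) + v2**2 / (n2 - 1))


# Hypothetical inputs: n = 88 and n = 53 assumed; both SDs are placeholders.
df = welch_df(sd1=0.85, n1=88, sd2=1.15, n2=53)
print(f"Welch df = {df:.1f} (pooled-variance df would be {88 + 53 - 2})")
```

The more the two group variances differ, the further the Welch degrees of freedom fall below the pooled value.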

Of the 2001 entrants (Year 1 outcomes only), students passing all end-of-Year 1 examinations first time were significantly older (mean age at entry = 20.4 years, n = 177) than those passing via resit (19.3 years, n = 46) (t = 3.48, p = 0.001 assuming unequal variances, as Levene’s Test: F = 13.41, p < 0.001; 95% CI on 1.16 difference 0.50, 1.82). They did not differ significantly though by the other variables, including affluence and ‘satisfaction’ (e-Appendix 6-footnote). It was notable that female, White British (χ2(1) = 3.31; p = 0.069), and Home students (χ2(1) = 3.01; p = 0.083) had a non-significant but higher percentage passing without resits [data not shown]. This mirrored a non-significant pattern for 1999 entrants’ end-of-Year 4 cumulative outcome, as did the direction of start-of-Year 1 and end-of-Year 1 mean ideal tutor (regression-based) scores for passing without resits. Mean end-of-Year 1 ‘satisfaction’ (with Liverpool problem-based curriculum) of those passing versus passing with resits was: 4.25 versus 3.92 (n = 152, n = 36) (t(186) = 1.69, p = 0.093).

For the 2006 entrants staying in-cohort, mean ‘cumulative points-score’ (out of 6.75) was significantly higher if female, White British, Home status (e-Appendix 6-footnote), and of non-medical parents: 4.89 (n = 153) versus 4.22 (n = 26) (t(177) = 4.27, p = 0.00003: 95% CI on 0.68 difference 0.36, 0.99). Home status entrants on the Liverpool campus scored slightly and significantly better than on the satellite campus (inaugural cohort without an overseas entry) (n = 222: 4.85 versus n = 39: 4.49 (t(259) = 2.70, p = 0.007)). ‘Cumulative points-score’ was also associated inversely with the mid-Year 1 score on ‘ideally… tutor should tell me what to learn’ (rs(273) = −0.22, n = 120, p = 0.018).

Those passing everything without resits were more likely to be:

-

Female: 80/109 (73.4%) versus 90/166 (54.2%) (χ2(1) = 10.25, p = 0.001)

-

White British: 57/70 (81.4%) versus 69/119 (58.0%) (χ2(1) = 10.90, p = 0.001)

-

Of non-medical parent(s): 62/66 (93.9%) versus 91/113 (80.5%) (χ2(1) = 6.03, p = 0.014)

-

Low-scoring on ‘ideally… tutor should tell’ (mid-Year 1): −0.27 (n = 43) versus 0.12 (n = 77) (t(118) = −2.04, p = 0.043)

but not significantly different by the other variables (e.g. ‘satisfaction’: e-Appendix 6-footnote).

Overview of bivariable analysis of examination outcomes by learning approach in the three entry-cohorts

In bivariable analysis, passing without resits was associated with learning approach in all three cohorts (e-Appendix 6).

For the 1999 entrants, at each of the three assessment levels the general pattern was higher mean deep and strategic approaches and lower surface approach (mid-Year 3 scores) if passing first time versus resitting [data not shown], but this was non-significant. End-of-Year 1 examinations gave the strongest evidence, with slightly lower mean surface approach of 15.3 (n = 123) versus 17.0 (n = 32) (t(153) = –1.95, p = 0.053: 95% CI on −1.64 difference −3.29, 0.02). The cumulative performance by end-of-Year 4 showed the general pattern [data not shown], with significantly higher mean deep scores if passing all examinations without resitting, i.e. 21.2 (n = 89) versus 19.7 (n = 53) (t(140) = 2.10, p = 0.038: 95% CI on 1.50 difference 0.85, 2.92).

For the 2001 entrants, the general pattern recurred. If passing all examinations without resitting, mean surface approach (start-of-Year 1) was slightly but significantly lower: 14.4 (n = 147) versus 16.8 (n = 41) (t(186) = −3.07, p = 0.002: 95% CI on −2.41 difference −3.95, −0.86). The strategic approach difference fell short of significance though: 22.7 versus 21.5, respectively (t(186) = 1.81, p = 0.072: 95% CI on 1.27 difference −0.12, 2.66). Deep approach did not differ significantly [data not shown]. Using end-of-Year 1 measures instead, only the mean surface score followed the general pattern, i.e. 15.1 (n = 153) without resit versus 16.4 (n = 36) with resit, but short of significance (t(187) = −1.80, p = 0.074: 95% CI on −1.36 difference −2.86, 0.13).

For the 2006 entrants staying in-cohort plus peers with them in Year 5, their strategic and surface approaches (measured end-of-Year 5 or end-of-intercalation-year) showed no clear pattern of association (rs) with the ‘cumulative points-score’, a top three-quarters ‘cumulative points-score’, or whether they had avoided resitting examinations throughout. A small positive association with deep approach was non-significant—higher mean deep approach versus a top three-quarters ‘cumulative points-score’ had the smallest p value, i.e. 21.2 (n = 67) versus 18.9 (n = 12) (t(77) = 1.92, p = 0.058: 95% CI on 2.26 difference −0.08, 4.61).

Overall in the bivariable analysis above, surface (negatively) and deep approach (positively) had the strongest associations with attainment (e-Appendix 6 summary table).

Multiple regression analysis on assessment outcomes

In the three entry-cohorts studied for ‘progressing in-cohort’ (the 2001 entrants through to end-of-Year 1 outcomes; the 1999 and the 2006 entrants cumulatively through to end-of-Year 4), the best multiple logistic regression models for ‘passing all examinations without resits’ and percentage variation possibly explained (without obvious collinearity) were as follows:

-

2001 entry-cohort through to end-of-Year 1: 4-variable model of: deep and surface approaches; whether Home status; whether male (e-Appendix 7a): Surface approach was significantly negatively associated with passing first time (OR 0.89) and Home status was significantly positively associated (3.3 times the odds). The model ‘explained’ 6.9–10.6% of variation (p = 0.009; n = 188, three outliers).

-

1999 entry-cohort cumulatively through to end-of-Year 4: 5-variable model of: deep and surface approaches measured mid-Year 3; ‘ideally… tutor should tell’ score; whether Home status; whether male (e-Appendix 7b): Deep approach remained significantly associated (positively) with passing everything first time (OR 1.10, p = 0.033). The model ‘explained’ 6.7–9.1% of variation (p = 0.081; n = 141, no outliers).

-

2006 entry-cohort cumulatively through to end-of-Year 4: 5-variable model of: end-of-Year 5 deep approach scores; whether of medical parent(s); whether White British; whether male; whether on the satellite campus (e-Appendix 7c): The last three variables had a suggested association with passing everything first time (positively, negatively, negatively, respectively), but p values fell short of significance (p = 0.058, p = 0.067, p = 0.079). The model ‘explained’ 13.3–17.9% of variation (p = 0.051; n = 77, one outlier).
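To give a feel for the size of the adjusted odds ratios in the logistic models above, the sketch below scales the surface-approach OR of 0.89 to a five-point difference in score. It assumes the OR is expressed per one-point increase on the subscale, which is the usual convention for a continuous predictor but is not stated explicitly here. Using the cohort's overall first-time pass rate (197/268) as a baseline is a further simplifying assumption, made purely for illustration; the function names are hypothetical.

```python
def scaled_odds_ratio(or_per_point: float, points: float) -> float:
    """Odds ratio for a change of `points` scale points, given the per-point OR."""
    return or_per_point ** points


def shifted_probability(baseline_prob: float, odds_ratio: float) -> float:
    """Apply an odds ratio to a baseline probability and return the new probability."""
    odds = baseline_prob / (1 - baseline_prob) * odds_ratio
    return odds / (1 + odds)


or_5_points = scaled_odds_ratio(0.89, 5)    # surface approach, 2001 entry-cohort
baseline = 197 / 268                        # overall first-time pass rate (assumed baseline)
print(f"OR for +5 surface points: {or_5_points:.2f}")                                        # 0.56
print(f"Illustrative pass probability: {shifted_probability(baseline, or_5_points):.2f}")    # 0.61
```

Under these assumptions, a student scoring five points higher on surface approach would have roughly 0.56 times the odds of passing first time, other model variables being equal.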

The best multiple linear regression model on the 2006 entry-cohort’s ‘cumulative points-score’ was:

-

2006 entry-cohort cumulatively through to end-of-Year 4: 7-variable model (e-Appendix 7d): Deep approach (positively, B = 0.045, p = 0.039) and whether Home status (positively), whether medical parent(s) (negatively), and whether on the satellite campus (negatively) were significantly associated with the ‘cumulative points score’. According to standardized beta, ‘effect sizes’ were 0.2–0.3 standard deviations per one standard deviation change in those four independent variables. Assuming similarity to Cohen’s d (McGough and Faraone 2009) would deem this ‘weak–modest’, but maybe educational researchers interpret this as stronger (Kraft 2020) or even controversial (Simpson 2021). The model ‘explained’ about 18.1% of variation (p = 0.004, n = 77).
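A standardized beta rescales an unstandardized coefficient B by the ratio of the predictor's standard deviation to the outcome's standard deviation. The sketch below shows that conversion for the deep-approach coefficient (B = 0.045). Both standard deviations are hypothetical placeholders chosen only to illustrate the arithmetic; they are not values from this study, and the function name is likewise illustrative.

```python
def standardized_beta(b: float, sd_x: float, sd_y: float) -> float:
    """Convert an unstandardized slope B into a standardized beta."""
    return b * sd_x / sd_y


# B is the reported deep-approach coefficient; both SDs are hypothetical placeholders.
beta = standardized_beta(b=0.045, sd_x=4.0, sd_y=0.8)
print(f"standardized beta = {beta:.3f}")
```

Read this way, a beta of 0.2–0.3 means that a one standard deviation rise in the predictor accompanies a rise of 0.2–0.3 standard deviations in the ‘cumulative points score’, holding the other model variables constant.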

Age and satisfaction did not enhance any of the four models presented and nor did affluence in the two models with the available data [data not shown].

Summary of association between learning approaches and summative examination outcomes

Overall, in the bivariable and multivariable analysis above, deep approach was associated with Year 1–4 sustained high examination attainment and surface approach was associated inversely with Year 1 outcome.

In bivariable analysis (above and e-Appendix 6)

-

In the two entry-cohorts with analysis of Year 1 examination performance, the 2001 entrants showed it to be significantly inversely associated with surface approach, and the 1999 entrant data suggested similarly (albeit p = 0.053).

-

In the two entry-cohorts with analysis of sustained Year 1–4 examination performance, the 1999 entrants showed it to be significantly associated with deep approach, and the 2006 entrant data suggested similarly (albeit p = 0.058) for a top three-quarters Year 1–4 ‘medical school performance’ score.

In multivariable analysis (above and e-Appendix 7a–d), progress through end-of-Year 1 examinations without resits remained significantly inversely associated with surface approach when adjusted for deep approach, Home status (which remained a significant predictor), and being male (2001 entry-cohort). In two further multivariable models, sustained Year 1–4 attainment remained significantly associated with deep approach when using

-

‘progress without resits’ (adjusted for surface approach, ‘ideally… tutor should tell…’, Home status, and male) (1999 entry-cohort) or

-

‘cumulative points score for all assessments’ (available only for this most recent 2006 entry-cohort). Of note, after adjustment, the ‘cumulative points score’ also remained inversely associated with medical parent(s), the satellite campus (which accepted slightly lower-scoring interviewees that initial year), and overseas (‘not Home’) status but not with strategic or surface approach or being male.

Discussion

This study of medical students found relatively high deep and strategic approach and relatively low surface approach in four entry-cohorts, with strategic approach predominating overall, and deep and strategic approach waning and surface approach increasing from pre-admission to mid-Year 5. Deep approach was associated with sustained (cumulative) high attainment and surface approach was inversely associated with passing Year 1 examinations first time, while higher ‘satisfaction’ was associated with higher strategic and lower surface approach but not with attainment.

Effect sizes and changes were relatively modest but, despite some Short RASI items being ill-suited to this specific curriculum, learning approaches were consistent. Pre-entry and Year 1 measures are important. Baseline learning approaches probably influence how students: perceive their educational context and tasks; choose learning approaches; and perform academically (Arnold and Feighny 1995).

The conceptual lexicon of deep–strategic–surface approaches remains crucial in discussing how best to learn (Coffield et al. 2004a, b) and capturing complexity in higher education, including “everyday idiosyncrasy” (Entwistle et al. (2001) p. 104).

Relating the findings to the evidence base and what this study adds

Learning approaches: validity, reliability, distribution, changes

The four entry-cohorts studied showed valid expression of the subscales and a consistent strategic > deep > surface pattern. Chonkar et al. (2018) found a similar pattern (with each predominating, respectively, in 50.8%, 40.3%, and 8.8%) in a Singapore hospital’s students from two medical schools (but including visiting students on elective). In contrast, deep approach predominated in 70.2% of Cipra and Müller-Hilke’s (2019) medical students (Rostock, Germany) and in 51.1% of Ward’s (2011) medical students at start-of-Year 1 (with 41.2% strategic), compared with 40.8% at start-of-Year 1 and 40.4% at end-of-Year 1 in Liverpool.

The current study had strategic approach highest-scoring pre-entry, unlike Leiden et al.’s (1990) Year 3 medical students having strategic approach significantly higher than Years 1 and 2 (but that was cross-sectional). Strategic and deep approach then remained relatively high in both Year 5s studied, despite decreasing significantly (while surface learning increased significantly) longitudinally in individuals by mid-Year 5. Improving on medical students’ relatively high entry-level deep approach might be difficult—“What scores are ‘deep enough’ at each stage…?” anyway (Chung et al. 2015, p. 210). The literature reports very little such follow-up of individual medical students and pre-entry applicants for longer-term assessment outcomes as Richardson et al. (2012) recommended.

Of the significant rs correlations for each approach with itself across this pre-entry-to-mid-Year 5 period, deep = 0.36, strategic = 0.38, surface = 0.26, the first two were similar to McManus et al. (1998): 0.37, 0.34, 0.42 (all p < 0.001). McKee et al. (2009) reported similar but mostly non-significant changes cross-sectionally between first and final year for nursing and medical students completing anonymous unlinked surveys—deep and strategic decreased slightly and surface approach increased.

In the current study, the only Year 1 change was strategic approach increasing significantly, whereas Tooth et al.’s (1989) Year 1 increased surface approach and decreased deep and strategic significantly and Reid et al.’s (2005) Year 2 decreased deep and strategic significantly. Whatever the change, most might occur in the first semester (Fabry and Giesler 2012). Concerning the longer follow-up here, after 4 years, intercalating responders scored significantly more on deep approach than non-intercalating Year 5, as for McManus et al. (1999), but Year 5 who had intercalated the previous year were in-between, suggesting a transient effect.

Reported research use of this specific Short RASI is unusual. Using the 2013 updated Short RASI with general practitioners (n = 544) across the UK, Curtis et al. (2018) found their mean deep, strategic, and surface approaches (/30) to be 20.9 (significantly higher), 20.2 (similar), 13.9 (significantly lower) versus general practice specialty registrars (n = 461): 20.2, 20.0, 15.2. Corresponding scores for end-of-Year 5 Liverpool medical students (e-Appendix 3) differed significantly, respectively, 21.1, 23.4, 15.3. Liverpool students thus approximated to GPs on deep approach and GP registrars on surface approach but out-scored both on strategic approach, possibly from having just completed an intense year of presenting a required portfolio of clinical and academic evidence for final summative assessment.

Learning approaches and satisfaction

In the current study, ‘satisfaction’ was unchanged during the Year 1 of individual follow-up but then declined significantly by mid-Year 5. Medicine itself possibly explained one-half of that decline. Satisfaction was significantly inversely associated with expecting tutors to ‘tell…’ you what to learn (albeit only 4–8% of variation), unlike how PBL is meant to be. Only one-half of end-of-Year 5 agreed (somewhat) that: …I would still do Medicine in this Liverpool problem-based curriculum, but this cohort’s contemporaneous annual NSS result (Higher Education Funding Council for England 2011) was significantly more positive, with 70% being ‘satisfied’.

Turkish medical students with deep approach predominating [on Biggs et al.’s Revised 2-Factor SPQ (2001)] were significantly more satisfied with PBL than if surface approach predominated (Gurpinar et al. 2013). In Baeten et al.’s (2010) review, more than the environment itself, students’ perceptions of it (satisfaction with tutor, workload, or relevance) were positively associated with deep approach prevailing, supported by Gustin et al.’s (2018) path analysis.

In the current study, across four entry-cohorts, strategic and surface approaches correlated significantly positively and negatively, respectively, with satisfaction. Deep approach correlated significantly (positively) only for the earliest entry-cohort though, whereas Nelson Laird (2008) (using a comparable concept of satisfaction: “If you could start over again, would you go to the same institution you are now attending?”) found such association consistently in senior students across all university disciplines.

Crucially, the current study showed no clear association between satisfaction and attainment, consistent with the large-scale findings across 151 Open University modules (Rienties and Toetenel 2016) and thus reinforcing that finding with medical students.

Learning approaches and summative examination outcomes

The 2001 entry-cohort had start-of-Year 1 surface approach significantly inversely associated with Year 1 examination performance as Papinczak (2009) also found in a problem-based curriculum, whereas Mattick et al. (2004) found a significant Year 1 association with deep approach for ‘progress testing’ performance.

The current study finding that deep approach was associated with sustained (cumulative) high attainment adds more insight to a scattered and tentative evidence base. On meta-analysis, Watkins (2001) and Richardson et al. (2012) found weak–modest correlations between university students’ deep (positively), strategic (positively), and surface (negatively) approaches and academic scores. May et al.’s (2012) Year 4 higher-performing medical students on clinical examination scored significantly higher on deep approach (especially patient interaction and patient satisfaction) and lower on surface approach. McManus et al. (1998) found that, unlike learning approaches at application, Year 5 SPQ deep, strategic, surface approaches correlated (minimally but) significantly positively, positively, negatively with combined academic and clinical final examination performance.

Using Parpala and Lindblom-Ylänne’s (2012) LEARN inventory and adjusting for age, sex, and year of study, Herrmann et al.’s (2017) multilevel model found surface approach inversely related to examination performance but variably so across social science disciplines. Unlike the current study, deep approach did not predict academic performance, but ‘organized effort’ (an extension of strategic approach) did, similar to Tooth et al.’s (1989) Year 1 medical students.

That the 2006 entrants’ Year 1–4 ‘cumulative points score’ remained inversely associated (after adjustment) with having medical parent(s) was interesting; that entry-cohort had also not shown association between medical parentage and medical school admission (Maudsley et al. 2010). Maybe medical parents misadvised about non-traditional examinations.

In this study, age, ‘satisfaction’, and affluence did not enhance the models despite (on bivariable analysis) students passing Year 1 examinations without resits in one entry-cohort being significantly slightly older and all four entry-cohorts having, respectively, strategic and surface approach correlating significantly positively and negatively with satisfaction. In contrast, Richardson et al.’s (2012) meta-analysis found older, female, and more affluent students performed significantly better academically, but each weighted r was only ~ 0.1. May et al.’s (2012) female medical students scored significantly higher on strategic approach (but similarly to males on deep and surface), which also correlated significantly with patient satisfaction in a clinical examination. Females’ better study habits may also improve academic achievement (Alzahrani et al. 2018).

In this study, evidence in one cohort of higher sustained attainment by White British students fell short of statistical significance, but ‘overseas’ status remained a significant negative predictor of end-of-Year 1 and of sustained Y1–Y4 examination performance in models adjusted for relevant learning approaches in different cohorts. Isik et al.’s (2018) evidence among Amsterdam medical students suggested that poorer attainment in ethnic minority groups might reflect assessment type, for example, if it rewards strategic approach but they either used deep or errant strategic approach, but the current study adjusted for some learning approaches. Overall, well-documented poorer academic attainment in non-white UK medical students is under scrutiny as a complex inequality probably partly arising from negative stereotyping and suboptimal social learning networks (Claridge et al. 2018; Woolf et al. 2008, 2012). Woolf et al.’s (2011) meta-analysis found that examiner bias or candidate communication skills were unlikely reasons as machine-marked written examinations showed similar-sized inequalities. In the current study, ‘examinations’ combined machine-marked items and anonymously marked items besides face-to-face practical examinations.

‘So what?’ for this type of curriculum

Why does this evidence still matter? Firstly, it cannot be assumed that medical students will necessarily improve their learning approaches just because the curriculum is problem-based. In the current study, while learning approaches were not associated with selection to medical school, deep and strategic approaches then waned significantly longitudinally and surface approach increased (pre-entry-to-mid-Year 5). The problem-based design and philosophy prevailed most though in Years 1 and 2 (weakening in senior clinical placements), and another entry-cohort did maintain deep approach longitudinally in Year 1. Balasooriya et al. (2009) found a similarly complex and polarized student response to an integrated, self-directed teamworking curriculum, whereby deep approach decreased and surface approach increased in some students, associated with intolerance of uncertainty and of integrated learning across body systems. Indeed, successful curriculum integration is tricky, requiring explicit support (Chipamaunga and Prozesky 2019). While Sadlo and Richardson (2003) found significantly higher deep approach and lower surface approach in problem-based versus subject-based curricula, in occupational therapy across several countries, their data were cross-sectional.

Secondly, students using much surface learning may well be uncomfortable with problem-based curricula, but satisfaction is not a key determinant of sustained high attainment. National attention on ‘satisfaction’-focussed league tables might distract from using educationally robust ways of challenging students out of surface approach. Papinczak’s (2009) high-scoring students on both deep and strategic approaches appeared protected in PBL, being more positive and less stressed about the experience, but a ‘metacognitive intervention’ (Papinczak et al. 2008) did not reverse worsening self-efficacy and deep and strategic approaches over Year 1.

Thirdly, showing that deep approach was associated with sustained (cumulative) high attainment complements an evidence base requiring more clarity about the key curriculum design features. Gustin et al.’s (2018) path analysis suggested that curriculum integration surpasses PBL in promoting deep approach, with educational context and students’ perception of it possibly explaining one-quarter of variance in deep approach.

Strengths and limitations

As Dinsmore and Alexander (2012) recommended, the current study used a clear definition of learning approaches, from Entwistle’s conceptual framework, and explored these within a specified type of learning environment. Furthermore, several entry-cohorts showed acceptable construct validity (by principal components analysis) with modest–satisfactory reliability (Cronbach’s alpha) (Lance et al. 2006) of the Short RASI—the instrument has featured little in the literature. This study explored not just: “what is the relation between levels of processing and learning outcomes” but also “for whom, at what point in development, in what situations, and for what end?” (Dinsmore and Alexander 2012, p. 522).

Percentage questionnaire response ranged from low–modest to excellent, and responders were suitably representative overall. Applicant response rate was similar, for example, to McManus et al.’s (1998) postal response of 92%. It was a strength that this ‘domain’/discipline-specific (Lonka et al. 2004) study of medical students involved multiple entry-cohorts, year-groups, and academic years and undertook some paired comparisons. Good governance rightly constrained research access though, i.e. when and how often different research projects could study specific entry-cohorts, but researching within everyday educational practice involves such compromises, as accommodated within a ‘horses-for-courses’ pragmatism paradigm. Only one medical school was studied, using self-reported data, with longitudinal comparisons mostly two-point and some rather small analytical subgroups. Relatively small sample sizes limited how many variables the multivariable models could legitimately test and regression analyses were not hierarchical to reflect likely complexity.

Analysed outcomes merged quite different assessment modes and contents, and potential selection bias lay in studying only those remaining in-cohort of the 5-year curriculum but not leavers, year-repeaters, or 4-year programme entrants. Despite some Short RASI items being unsuited to this curriculum though, a coherent pattern of modest associations emerged, even when measuring some learning approaches after the index assessment. Here, the two entry-cohorts followed for sustained examination performance unfortunately excluded the entry-cohort followed from pre-entry. Both multivariable models had rather weak explanatory potential (4-variable, including deep and surface: 7–9%; 5-variable, including deep: 13–18%) but were consistent in magnitude with Richardson et al.’s (2012) meta-analysis, where the deep–strategic–surface combination could explain 9% of variance in ‘grade-point average’. Data were unavailable to adjust for many key influences, e.g. ability, prior academic qualifications, personality. The datasets for the three entry-cohorts were unsuitable to combine for a multilevel mixed analysis with entry-cohort as the clustering variable, and no suitable common assessment measure was available for repeated measures analysis.

Conclusion

In-depth analysis of learning approaches illuminated difficulties in maintaining cohesive active learning systems while promoting deep approach, attainment, and satisfaction and dissuading surface approach. This study provides further evidence about ‘How much?’ learning approaches might change and ‘With what outcome?’.

High-quality learning has many barriers (Mattick and Knight 2007). How to prompt more deep approach in an integrated, self-directed curriculum based on small-groupwork is unclear and must be balanced with support and structure for struggling (Balasooriya et al. 2009) or dissatisfied students. While curriculum–assessment alignment should dissuade students (Biggs et al. 2001; Ramsden 2005) from the “unreflectiveness… unrelatedness… memorization” of surface approach (Entwistle 2018, p. 71), it is debatable by how much, particularly in different stages and contexts (and how much deep or strategic approach is required).

Research agendas should focus on: the mechanisms that might explain the relationships between learning approaches, satisfaction, and attainment; how struggling subgroups might be supported; and how learning systems might reassure students and improve satisfaction about learning and attaining in educationally robust ways.

Data availability

Data generated or analysed during this study are included in this published article and its supplementary information files. Consent was not obtained from participants for data-sharing beyond such anonymized presentations.

Code availability

Not applicable.

References

Alzahrani SS, Park YS, Tekian A (2018) Study habits and academic achievement among medical students: a comparison between male and female subjects. Med Teach 40:S1–S9. https://doi.org/10.1080/0142159x.2018.1464650

Arnold L, Feighny KM (1995) Students’ general learning approaches and performances in medical school: a longitudinal study. Acad Med 70(8):715–722. https://doi.org/10.1097/00001888-199508000-00016

Asikainen H, Gijbels D (2017) Do students develop towards more deep approaches to learning during studies? A systematic review on the development of students’ deep and surface approaches to learning in higher education. Educ Psychol Rev 29(2):205–234. https://doi.org/10.1007/s10648-017-9406-6

Babyak MA (2004) What you see may not be what you get: a brief, nontechnical introduction to overfitting in regression-type models. Psychosom Med 66(3):411–421. https://doi.org/10.1097/01.psy.0000127692.23278.a9

Baeten M, Kyndt E, Struyven K, Dochy F (2010) Using student-centred learning environments to stimulate deep approaches to learning: factors encouraging or discouraging their effectiveness. Educ Res Rev 5(3):243–260. https://doi.org/10.1016/j.edurev.2010.06.001

Balasooriya CD, Hughes C, Toohey S (2009) Impact of a new integrated medicine program on students’ approaches to learning. High Educ Res Dev 28(3):289–302. https://doi.org/10.1080/07294360902839891

Biggs J (1979) Individual differences in study processes and the quality of learning outcomes. High Educ 8(4):381–394. https://doi.org/10.1007/bf01680526

Biggs J, Kember D, Leung DYP (2001) The revised two-factor Study Process Questionnaire: R-SPQ-2F. Br J Educ Psychol 71(1):133–149. https://doi.org/10.1348/000709901158433

Brace N, Kemp R, Snelgar R (2003) SPSS for psychologists: a guide to data analysis using SPSS for Windows, 2nd edn. Palgrave Macmillan, Basingstoke

Calma A, Davies M (2015) Studies in higher education 1976–2013: a retrospective using citation network analysis. Stud High Educ 40(1):4–21. https://doi.org/10.1080/03075079.2014.977858

Chamorro-Premuzic T, Furnham A (2008) Personality, intelligence and approaches to learning as predictors of academic performance. Pers Individ Dif 44(7):1596–1603. https://doi.org/10.1016/j.paid.2008.01.003

Chipamaunga S, Prozesky D (2019) How students experience integration and perceive development of the ability to integrate learning. Adv Health Sci Educ 24(1):65–84. https://doi.org/10.1007/s10459-018-9850-1

Chonkar SP, Ha TC, Chu SSH, Ng AXH, Lim MLS, Ee TX, Ng MJ, Tan KH (2018) The predominant learning approaches of medical students. BMC Med Educ 18(17):1–8. https://doi.org/10.1186/s12909-018-1122-5

Chung E-K, Elliott D, Fisher D, May W (2015) A comparison of medical students’ learning approaches between the first and fourth years. South Med J 108(4):207–210. https://doi.org/10.14423/smj.0000000000000260

Cipra C, Müller-Hilke B (2019) Testing anxiety in undergraduate medical students and its correlation with different learning approaches. PLoS ONE 14(3):1–11. https://doi.org/10.1371/journal.pone.0210130

Claridge H, Stone K, Ussher M (2018) The ethnicity attainment gap among medical and biomedical science students: a qualitative study. BMC Med Educ 18(325):1–12. https://doi.org/10.1186/s12909-018-1426-5

Clarke RM (1986) Students’ approaches to learning in an innovative medical school: a cross-sectional study. Br J Educ Psychol 56(3):309–321. https://doi.org/10.1111/j.2044-8279.1986.tb03044.x

Clarke DM, McKenzie DP (1994) Learning approaches as a predictor of examination results in preclinical medical students. Med Teach 16(2–3):221–227. https://doi.org/10.3109/01421599409006734

Coffield F (2008) Just suppose teaching and learning became the first priority. Learning and Skills Development Agency, Learning & Skills Network, London, 75 pp. ISBN 9781845727086. As at Dec-2021: https://weaeducation.typepad.co.uk/wea_education_blog/files/frank_coffield_on_teach_and_learning.pdf

Coffield F, Moseley D, Hall E, Ecclestone K (2004a) Learning styles and pedagogy in post-16 learning: a systematic and critical review. Learning & Skills Resource Centre, London, 173 pp. ISBN 1853389188. As at Dec-2021: https://www.researchgate.net/publication/232929341_Learning_styles_and_pedagogy_in_post_16_education_a_critical_and_systematic_review

Coffield F, Moseley D, Hall E, Ecclestone K (2004b) Should we be using learning styles? What research has to say to practice. Learning & Skills Resource Centre, London, 82 pp. ISBN 1-85338-9145. As at Dec-2021: https://www.researchgate.net/publication/244441072_Should_We_Be_Using_Learning_Styles_What_Research_Has_to_Say_to_Practice

Coles CR (1985) Differences between conventional and problem-based curricula in their students’ approaches to studying. Med Educ 19(4):308–309. https://doi.org/10.1111/j.1365-2923.1985.tb01327.x

Cook DA (2005) Learning and cognitive styles in web-based learning: theory, evidence, and application. Acad Med 80(3):266–278. https://doi.org/10.1097/00001888-200503000-00012

Creswell JW (2003) Research design: qualitative, quantitative, and mixed methods approaches, 2nd edn. Sage Publications, London, 246 pp.

Curry L (1999) Cognitive and learning styles in medical education. Acad Med 74(4):409–413. https://doi.org/10.1097/00001888-199904000-00037

Curtis P, Taylor G, Harris M (2018) How preferred learning approaches change with time: a survey of GPs and GP specialist trainees. Educ Prim Care 29(4):222–227. https://doi.org/10.1080/14739879.2018.1461027

Dangerfield P, Dornan T, Engel CE, Maudsley G, Naqvi J, Powis D, Sefton A (2007) A whole system approach to problem-based learning in dental, medical and veterinary sciences—a guide to important variables. Centre for Excellence in Enquiry-Based Learning (CEEBL) (University of Manchester), Manchester. As at Dec-2021: http://www.ceebl.manchester.ac.uk/resources/guides/pblsystemapproach_v1.pdf

Dinsmore DL, Alexander PA (2012) A critical discussion of deep and surface processing: what it means, how it is measured, the role of context, and model specification. Educ Psychol Rev 24(4):499–567. https://doi.org/10.1007/s10648-012-9198-7

Dolmans D, Loyens SMM, Marcq H, Gijbels D (2016) Deep and surface learning in problem-based learning: a review of the literature. Adv Health Sci Educ 21(5):1087–1112. https://doi.org/10.1007/s10459-015-9645-6

Duckwall JM, Arnold L, Hayes J (1991) Approaches to learning by undergraduate students: a longitudinal study. Res High Educ 32(1):1–13. https://doi.org/10.1007/bf00992829

Entwistle NJ (2000) The short revised Approaches to Studying Inventory. In: Scoring key for the Approaches and Study Skills Inventory for Students (ASSIST), 1st edn. University of Edinburgh, Centre for Research into Learning and Instruction, Edinburgh. (With permission, 2001.) [Tyler S, Entwistle N 2013 Approaches to learning and studying inventory (ASSIST), 3rd edn. As at Dec-2021: https://www.researchgate.net/publication/50390092_Approaches_to_learning_and_studying_inventory_ASSIST_3rd_edition]

Entwistle NJ (2018) Student learning and academic understanding: a research perspective with implications for teaching. Academic Press, Amsterdam, 387 pp. https://doi.org/10.1016/c2014-0-02037-0

Entwistle NJ, Entwistle A (1991) Contrasting forms of understanding for degree examinations: the student experience and its implications. High Educ 22(3):205–227. https://doi.org/10.1007/bf00132288

Entwistle N, McCune V (2004) The conceptual bases of study strategy inventories. Educ Psychol Rev 16(4):325–345. https://doi.org/10.1007/s10648-004-0003-0

Entwistle NJ, Ramsden P (1983) Understanding student learning. Croom Helm, London. https://doi.org/10.4324/9781315718637

Entwistle N, McCune V, Walker P (2001) Conceptions, styles and approaches within higher education: analytical abstractions and everyday experience (chapter 5). In: Sternberg RJ, Zhang LF (eds) Perspectives on thinking, learning and cognitive styles. Lawrence Erlbaum, Mahwah, pp 103–136. https://doi.org/10.4324/9781410605986-5

Eva KW (2020) Strange days. Med Educ 54(6):492–493. https://doi.org/10.1111/medu.14164

Fabry G, Giesler M (2012) Novice medical students: individual patterns in the use of learning strategies and how they change during the first academic year. GMS Z Med Ausbild [Journal for Medical Education]. https://doi.org/10.3205/zma000826

Field AP (2000) Discovering statistics using SPSS for Windows: advanced techniques for the beginner. Sage, London

Gurpinar E, Kulac E, Tetik C, Akdogan I, Mamakli S (2013) Do learning approaches of medical students affect their satisfaction with problem-based learning? Adv Physiol Educ 37(1):85–88. https://doi.org/10.1152/advan.00119.2012

Gustin MP, Abbiati M, Bonvin R, Gerbase MW, Baroffio A (2018) Integrated problem-based learning versus lectures: a path analysis modelling of the relationships between educational context and learning approaches. Med Educ Online 23(1):1–12. https://doi.org/10.1080/10872981.2018.1489690

Hargreaves D on behalf of: Beere J, Swindells M, Wise D, Desforges C, Goswami U, Wood D, Horne M, Lownsbrough H (2005) About learning. Report of the Learning Working Group. Demos, London, 25 pp. ISBN 1-84180-140-2. As at Dec-2021: https://www.demos.co.uk/files/About_learning.pdf

Herrmann KJ, McCune V, Bager-Elsborg A (2017) Approaches to learning as predictors of academic achievement—results from a large scale, multi-level analysis. Högre Utbildning [Higher Education] 7(1):29–42. https://doi.org/10.23865/hu.v7.905

Higher Education Funding Council for England (HEFCE) (2011) National Student Survey data for all institutions, 2011. HEFCE, Bristol. As at Dec-2021: Archived at: https://webarchive.nationalarchives.gov.uk/20180319122413/, http://www.hefce.ac.uk/lt/nss/results/2011/

Hmelo-Silver C, Eberbach C (2012) Learning theories and problem-based learning (chapter 1). In: Bridges S, McGrath C, Whitehill TL (eds) Problem-based learning in clinical education: the next generation. Springer, Dordrecht, pp 3–17

Hoare J (2003) Comparison of area-based inequality measures and disease morbidity in England, 1994–1998. Health Stat Q 18(Summer):18–24. As at Dec-2021: Archived at: https://webarchive.nationalarchives.gov.uk/20151014023318http://www.ons.gov.uk/ons/rel/hsq/health-statistics-quarterly/no--18--summer-2003/index.html

Isik U, Wilschut J, Croiset G, Kusurkar RA (2018) The role of study strategy in motivation and academic performance of ethnic minority and majority students: a structural equation model. Adv Health Sci Educ 23(5):921–935. https://doi.org/10.1007/s10459-018-9840-3

Karagiannopoulou E, Entwistle N (2019) Students’ learning characteristics, perceptions of small-group university teaching, and understanding through a “meeting of minds.” Front Psychol 10(444):1–12. https://doi.org/10.3389/fpsyg.2019.00444

Kember D, Leung DYP (1998) The dimensionality of approaches to learning: an investigation with confirmatory factor analysis on the structure of the SPQ and LPQ. Br J Educ Psychol 68(3):395–407. https://doi.org/10.1111/j.2044-8279.1998.tb01300.x

Kember D, Leung DYP, McNaught C (2008) A workshop activity to demonstrate that approaches to learning are influenced by the teaching and learning environment. Active Learn High Educ 9(1):43–56. https://doi.org/10.1177/1469787407086745

Kraft MA (2020) Interpreting effect sizes of education interventions. Educ Res 49(4):241–253. https://doi.org/10.3102/0013189X20912798

Kytle J (2012) To want to learn. Insights and provocations for engaged learning, 2nd edn. Palgrave Macmillan, New York, pp 111–136

Lance CE, Butts MM, Michels LC (2006) The sources of four commonly reported cutoff criteria—what did they really say? Organ Res Methods 9(2):202–220. https://doi.org/10.1177/1094428105284919

Laux R (2019) 50 years of collecting ethnicity data [History of Government blog]. UK Government, Cabinet Office, London, 7 Mar. As at Dec-2021: https://history.blog.gov.uk/2019/03/07/50-years-of-collecting-ethnicity-data/

Leiden LI, Crosby RD, Follmer H (1990) Assessing learning-style inventories and how well they predict academic performance. Acad Med 65(6):395–401. https://doi.org/10.1097/00001888-199006000-00009

Lindblom-Ylänne S, Lonka K (1999) Individual ways of interacting with the learning environment—are they related to study success? Learn Instr 9(1):1–18. https://doi.org/10.1016/s0959-4752(98)00025-5

Lonka K, Olkinuora E, Mäkinen J (2004) Aspects and prospects of measuring studying and learning in higher education. Educ Psychol Rev 16(4):301–323. https://doi.org/10.1007/s10648-004-0002-1

Maconachie D (2002) The scholarships of interaction. HERDSA News 24(1):10–12. ISSN 0157-1826

Martin IG, Stark P, Jolly B (2000) Benefiting from clinical experience: the influence of learning style and clinical experience on performance in an undergraduate objective structured clinical examination. Med Educ 34:530–534. https://doi.org/10.1046/j.1365-2923.2000.00489.x

Marton F, Säljö R (1976a) On qualitative differences in learning-I: outcome and process. Br J Educ Psychol 46(Feb):4–11. https://doi.org/10.1111/j.2044-8279.1976.tb02980.x

Marton F, Säljö R (1976b) Qualitative differences in learning-II: outcome as a function of the learner’s conception of task. Br J Educ Psychol 46(Jun):115–127. https://doi.org/10.1111/j.2044-8279.1976.tb02304.x

Mattick K, Knight L (2007) High-quality learning: harder to achieve than we think? Med Educ 41(7):638–644. https://doi.org/10.1111/j.1365-2923.2007.02783.x

Mattick K, Dennis I, Bligh J (2004) Approaches to learning and studying in medical students: validation of a revised inventory and its relation to student characteristics and performance. Med Educ 38(5):535–543. https://doi.org/10.1111/j.1365-2929.2004.01836.x

Maudsley G (2005) Medical students’ expectations and experience as learners in a problem-based curriculum: a ‘mixed methods’ research approach. [Doctor of Medicine (MD) thesis; Public Health/Medical Education]. The University of Liverpool, Liverpool, 407 pp.

Maudsley G (2009) Medical students’ personal epistemology in a problem-based curriculum. [MA in Learning and Teaching in Higher Education dissertation]. The University of Liverpool, Liverpool, 122 pp.

Maudsley G (2011) Mixing it but not mixed-up: mixed methods research in medical education (a critical narrative review). Med Teach 33(2):e92–e104. https://doi.org/10.3109/0142159x.2011.542523

Maudsley G, Williams EMI, Taylor DCM (2007) Junior medical students’ notions of a ‘good doctor’ and related expectations: a mixed methods study. Med Educ 41(5):476–486. https://doi.org/10.1111/j.1365-2929.2007.02729.x

Maudsley G, Williams EMI, Taylor DCM (2008) Problem-based learning at the receiving end: a ‘mixed methods’ study of junior medical students’ perspectives. Adv Health Sci Educ 13(4):435–451. https://doi.org/10.1007/s10459-006-9056-9

Maudsley G, Williams EMI, Taylor DCM (2010) Medical students’ and prospective medical students’ uncertainties about career intentions: cross-sectional and longitudinal studies [Web-paper]. Med Teach 32(3):e143–e151. https://doi.org/10.3109/01421590903386773

May W, Chung EK, Elliott D, Fisher D (2012) The relationship between medical students’ learning approaches and performance on a summative high-stakes clinical performance examination. Med Teach 34(4):e236–e241. https://doi.org/10.3109/0142159x.2012.652995

McCune V, Entwistle N (2011) Cultivating the disposition to understand in 21st century university education. Learn Individ Differ 21(3):303–310. https://doi.org/10.1016/j.lindif.2010.11.017

McGough JJ, Faraone SV (2009) Estimating the size of treatment effects: moving beyond P values. Psychiatry 6(10):21–29