Abstract

Background

In the context of the growth of pharmacovigilance (PV) among developing countries, this systematic review aims to synthesise current research evaluating the performance of PV systems in these countries.

Methods

EMBASE, MEDLINE, CINAHL Plus and Web of Science were searched for peer-reviewed studies published in English between 2012 and 2021. Reference lists of included studies were screened. Included studies were quality assessed using Hawker et al.'s nine-item checklist; data were extracted using the WHO PV indicators checklist. Scores were assigned to each group of indicators and used to compare countries’ PV performance.

Results

Twenty-one unique studies from 51 countries were included. Of a total possible quality score of 36, most studies were rated medium (n = 7 studies) or high (n = 14 studies). Studies obtained an average score of 17.2 out of a possible 63 of the WHO PV indicators. PV system performance in all 51 countries was low (14.86/63; range: 0–26). Higher average scores were obtained in the ‘Core’ (9.27/27) compared to ‘Complementary’ (5.59/36) indicators. Overall performance for ‘Process’ and ‘Outcome’ indicators was lower than that of ‘Structural’.

Conclusion

This first systematic review of studies evaluating PV performance in developing countries provides an in-depth understanding of factors affecting PV system performance.

Introduction

Pharmacovigilance (PV), with its ultimate goal of minimising the risks and maximising the benefits of medicinal products, serves as an important public health tool [1, 2]. The World Health Organization (WHO) defines PV as “the science and activities relating to the detection, assessment, understanding and prevention of adverse effects or any other drug-related problem” (p. 7) [3].

Prior to approval by regulatory authorities, drug products are required to undergo extensive testing and rigorous evaluation during clinical trials, to establish their safety and efficacy [4, 5]. The rationale for post-marketing PV is based on the need to mitigate the limitations of pre-marketing/registration clinical trials including small population sizes, a short length of time and the exclusion of special population groups (e.g. pregnant women and children) [6, 7]. Therefore, unexpected or severe adverse drug reactions (ADRs) are often not identified before regulatory approval resulting in increased morbidity, mortality and financial loss [8, 9]. PV allows for the post-marketing (i.e. real-world) collection of drug safety and efficacy information thereby reducing patients' drug-related morbidity and mortality [10]. Moreover, PV reduces the financial costs associated with the provision of care for patients affected by such problems [11, 12]. This is achieved by communicating medicines’ risks and benefits thus enhancing medication safety at various levels of the healthcare system [13] as well as providing information and knowledge informing regulatory actions [14,15,16]. It is important to note that PV activities are not limited to protecting patient safety in the post-marketing phase but apply to a drug product’s entire lifecycle and are a continuation and completion of the analysis performed on medicines from the pre-registration clinical trials [17]. PV also plays a role in helping drug manufacturing firms in carrying out patient outreach through communicating with patients about drug products’ risk–benefit profile thus making them better informed and building their trust in the industry [18]. As the collective payers for drug products, insurance firms rely on PV information as a measure of drug products’ demonstrated value to patients in making decisions about reimbursement [18, 19].

PV systems’ differences in developing countries are influenced by local contextual factors such as healthcare expenditure, disease types and prevalence, and political climate [20]. These differences can lead to variability in medicine use and the profile of adverse effects suffered by patients which makes it essential that every country establish its own PV system [21]. Most developed countries started PV activities after the thalidomide disaster in the 1960s by establishing PV systems and joining the WHO Programme for International Drug Monitoring (PIDM) [22,23,24]. Developing countries did not join the PIDM until the 1990s or later [22,23,24], but since then, the number of developing countries implementing PV and joining WHO PIDM has steadily increased [23, 24].

Over the past few decades, both national and international legislative organisations, as well as national medicines regulatory authorities (NMRAs), have published a considerable amount of legislation and guidance to provide countries with a legal foundation and practical implementation guidance for national PV systems [25]. Among these are the Guidelines on Good Pharmacovigilance Practices (GVP), implemented by the European Medicines Agency (EMA) in 2012, which aim to facilitate the performance of PV in the European Union (EU) [26]. Many developing countries wishing to align their new and evolving national PV frameworks with international standards use the EMA’s GVP guidelines as a reference for setting up their national PV systems [25, 27].

The WHO recommends that PV systems incorporate evaluation and assessment mechanisms with specific performance criteria [28]. Despite the growth in PV development and practice among developing countries, a gap remains in efforts to assess, evaluate, and monitor their systems’ and activities’ status, growth, and impact [29]. To promote patient safety and enhance efforts aimed at strengthening PV systems in developing countries with nascent PV systems, it is imperative to assess existing conditions [13, 30]. Such assessment can help define the elements of a sustainable PV strategy and areas for improvements as the basis to plan for improved public health and safety of medicines [13, 29, 31].

This review aims to systematically identify published peer-reviewed research that evaluates the characteristics, performance, and/or effectiveness of PV systems in developing countries.

Methods

This systematic review was conducted in accordance with the Preferred Reporting Items for Systematic Reviews and Meta-analyses (PRISMA) statement [32]. A PRISMA checklist is included in Online Resource 1.

Theoretical Framework

As a theoretical framework, this study adopted the WHO PV indicators, which measure inputs, processes, outputs, outcomes, and impacts. These WHO indicators “provide information on how well a pharmacovigilance programme is achieving its objectives” (p. 4) [30]. Details on how the WHO PV indicators were derived and validated have been described by Isah and Edwards [29]. The indicator-based pharmacovigilance assessment tool (IPAT) was considered but not chosen because its sensitivity and specificity as a measurement tool have not been established [33].

There are 63 WHO PV indicators, which are classified into three main types: 1—Structural (21 indicators): assess the existence of key PV structures, systems and mechanisms; 2—Process (22 indicators): assess the extent of PV activities, i.e. how the system is operating; 3—Outcome/impact (20 indicators): measure effects (results and changes), i.e. the extent of realisation of PV objectives [30]. Each of these types is further subdivided into two categories: 1—Core (total 27) indicators are considered highly relevant, important and useful in characterising PV, and 2—Complementary (total 36) are additional measurements that are considered relevant and useful [30].
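The classification described above can be expressed as a small lookup table, which makes it easy to confirm that the type and category totals agree. The following is a minimal sketch only: the per-cell counts (e.g. 10 ‘Core Structural’ indicators) are taken from the score denominators reported in the Results, and the names `INDICATOR_COUNTS` and `totals` are illustrative assumptions rather than part of the WHO framework.

```python
# Number of WHO PV indicators per (type, category) cell.
# Cell counts are inferred from the score denominators reported in the Results
# (e.g. "/10" for Core Structural); all names here are illustrative assumptions.
INDICATOR_COUNTS = {
    ("Structural", "Core"): 10,
    ("Structural", "Complementary"): 11,
    ("Process", "Core"): 9,
    ("Process", "Complementary"): 13,
    ("Outcome", "Core"): 8,
    ("Outcome", "Complementary"): 12,
}


def totals(counts, axis):
    """Sum cell counts along one axis: 0 = indicator type, 1 = category."""
    result = {}
    for key, n in counts.items():
        result[key[axis]] = result.get(key[axis], 0) + n
    return result


by_type = totals(INDICATOR_COUNTS, 0)        # Structural / Process / Outcome
by_category = totals(INDICATOR_COUNTS, 1)    # Core / Complementary
grand_total = sum(INDICATOR_COUNTS.values())

print(by_type, by_category, grand_total)
```

Keeping the counts per cell, rather than only the six marginal totals, allows the same table to serve as the denominator for any sub-score reported later.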

Information Sources and Search Strategy

Four electronic databases (EMBASE, MEDLINE, CINAHL Plus and Web of Science) were searched for international peer-reviewed research evidence published between 1st January 2012 (the year when the EMA’s guidelines on GVP were due for implementation) and 16th July 2021. The search was initiated using the term ‘pharmacovigilance’ and its synonyms in combination with other groups of keywords that covered ‘evaluation’. The search terms are listed in Table 1 (see Online Resource 2 for search strategy). Reference lists of included studies were also screened.

Data Screening

Once all duplicate titles had been removed, screening of abstracts and then full texts against the inclusion/exclusion criteria (Table 2) was conducted by the lead author. Both co-authors were consulted where queries arose, and the decision on which articles to include in the review was discussed and agreed upon by all authors.

Data Extraction, Synthesis and Quality Assessment

Data were extracted independently by the lead author and checked by the co‐authors, using a data extraction tool based on the WHO PV indicators checklist. Data were extracted at two levels: overall study and studied country/countries. For each study, data were extracted on which of the WHO PV indicators the study provided information about, while for individual countries assessed in the studies, data (qualitative and quantitative) relating to each indicator were extracted. The data were placed into Microsoft Excel and NVivo and analysed thematically to aid comparison between studies and particular countries.

A scoring system was developed for the purpose of this review to quantify the indices thus highlighting countries’ PV system strengths and deficiencies in numerical terms. Each of the 63 indicators was scored separately and a final score was calculated for each study. If information relating to an indicator was present, a score of 1 was given. A score of 0 was given where data were not provided, missing, not applicable or not clear. Where information for a particular country was provided by more than one study, the latest study was used. In cases where country data were available for more than one system level (e.g. national level and institutional level), the information from the higher level was used. The final scores were used to benchmark national PV performance and compare countries both within and across regions.
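The scoring rules described above could be sketched as follows. This is an illustrative sketch under stated assumptions, not the procedure actually implemented for the review: the indicator IDs (`I1` to `I63`), the `LEVEL_RANK` ordering, the `CountryRecord` structure and the example country are all hypothetical, and the order of precedence between the two tie-breaking rules (latest study first, then higher system level) is assumed because the text does not specify it.

```python
from dataclasses import dataclass

# Placeholder IDs for the 63 WHO PV indicators (real indicator codes are not used here).
ALL_INDICATORS = [f"I{n}" for n in range(1, 64)]

# Assumed ordering of system levels; a larger number means a higher level.
LEVEL_RANK = {"institutional": 1, "regional": 2, "national": 3}


@dataclass(frozen=True)
class CountryRecord:
    country: str
    study_year: int
    level: str            # one of the keys of LEVEL_RANK
    reported: frozenset   # indicator IDs for which information was present


def select_record(records):
    """Choose one record for a country: latest study, then highest system level."""
    return max(records, key=lambda r: (r.study_year, LEVEL_RANK[r.level]))


def score(record, indicators=ALL_INDICATORS):
    """Score 1 where information on an indicator is present, otherwise 0."""
    return sum(1 if ind in record.reported else 0 for ind in indicators)


# Hypothetical data for a single fictional country.
records = [
    CountryRecord("Exampleland", 2015, "national", frozenset({"I1", "I2", "I3", "I4"})),
    CountryRecord("Exampleland", 2019, "institutional", frozenset({"I1", "I5"})),
    CountryRecord("Exampleland", 2019, "national", frozenset({"I1", "I2", "I7"})),
]
chosen = select_record(records)
total = score(chosen)
print(chosen.study_year, chosen.level, total)
```

Because missing, unclear and not-applicable data all score 0, a low total under this scheme can reflect either weak PV performance or weak reporting in the source study.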

The quality of included studies was evaluated using Hawker et al.’s nine‐item checklist [34] for appraising disparate studies. The checklist allows scoring of individual parameters and a total score that allows the comparison of strengths and weaknesses within and across studies. Total scores could range from 9 to 36, by scoring studies as “Good” (4), “Fair” (3), “Poor” (2), “Very poor” (1) for each checklist item (abstract and title, introduction and aims, method and data, sampling, data analysis, ethics and bias, results, transferability or generalisability, implications and usefulness). To categorise the sum quality ranking of studies, previously used cut-offs were adopted [35, 36]: high (30–36 points), medium (24–29 points) and low quality (9–23 points).
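The quality scoring and categorisation described above can be illustrated with a short sketch. The point values and cut-offs are those stated in the text; the function names and the example ratings are hypothetical.

```python
# Points per rating and category cut-offs as described in the text.
RATING_POINTS = {"Good": 4, "Fair": 3, "Poor": 2, "Very poor": 1}
N_ITEMS = 9  # number of checklist items


def total_quality(ratings):
    """Sum the points for the nine checklist ratings of one study."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    return sum(RATING_POINTS[r] for r in ratings)


def quality_category(total):
    """Map a total score (9-36) to the low/medium/high quality bands."""
    if not 9 <= total <= 36:
        raise ValueError("total score must be between 9 and 36")
    if total >= 30:
        return "high"
    if total >= 24:
        return "medium"
    return "low"


# Hypothetical study: six items rated Good, two Fair, one Poor.
example_ratings = ["Good"] * 6 + ["Fair"] * 2 + ["Poor"]
example_total = total_quality(example_ratings)
print(example_total, quality_category(example_total))
```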

Results

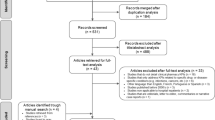

Following the removal of duplicates (n = 2175), 8482 studies were screened, with 8462 studies excluded following title, abstract, and full-text review. Screening of reference lists of the remaining studies (n = 20) led to a total of 21 included studies. Figure 1 presents a PRISMA flowchart demonstrating this process.
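The study flow reported above can be checked with simple arithmetic. In the sketch below, the four input counts are those given in the text; the number of records identified before de-duplication and the number of studies added from reference lists are not stated explicitly and are inferred here, and the variable names are illustrative.

```python
# Counts stated in the text.
duplicates_removed = 2175
screened = 8482
excluded = 8462
included = 21

# Studies retained after title, abstract and full-text review.
retained_after_screening = screened - excluded

# Implied number of studies added through reference-list screening (inferred).
added_from_reference_lists = included - retained_after_screening

# Implied number of records identified before duplicates were removed (inferred).
identified_before_deduplication = screened + duplicates_removed

print(retained_after_screening, added_from_reference_lists, identified_before_deduplication)
```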

Study Characteristics

The 21 included studies (Table 3) evaluated PV systems in 51 countries across single or multiple countries’ National PV Centres (NPVCs), Public Health Programmes (PHPs), healthcare facilities (e.g. hospitals) or pharmaceutical companies. Most of the studies (n = 13) had been published since 2016. Eleven studies focussed on African countries [37,38,39,40,41,42,43,44,45,46,47] with one of these also including India [42]. Four studies involved Middle Eastern and/or Eastern Mediterranean countries [48,49,50,51], and four covered East or South-East Asian countries [52,53,54,55]. One study dealt with countries in the Asia–Pacific region [56] and one study focussed on a country in South America [57].

Ten studies employed self-completion questionnaires for data collection [45, 48,49,50,51,52,53, 55,56,57], and nine employed mixed-methods [37,38,39,40,41, 43, 44, 46, 47] including interviewer-administered questionnaires alongside a documentary review. Two studies [42, 54] employed only qualitative methods including interviews and literature or documentary review. Sixteen studies [37,38,39,40,41,42,43,44,45,46,47, 49, 53,54,55,56,57] evaluated or assessed PV practice or performance. The remaining five studies [48, 50,51,52, 55] surveyed or provided an overview of countries’ PV situation and offered insights into the maturity of PV systems.

Eight studies [39, 44, 48, 50, 52,53,54,55] focussed on national PV centre(s), while three [37, 38, 41] took more of a system-wide approach by also including other levels, i.e. healthcare facilities and PHPs. Three studies [43, 46, 51] focussed on PV at the regional level within a country. Five studies [40, 45, 47, 56, 57] focussed on PV in stakeholder institutions including pharmaceutical companies/manufacturers, Public Health Programmes (PHPs), drugstores and medical institutions.

Thirteen studies [37,38,39,40,41,42,43,44, 46, 47, 49, 53, 55] employed an analytical approach that relied on the use of a framework. The most frequently used frameworks (n = 3 each) were the IPAT framework [37, 38, 41] and the WHO PV indicators [46, 47, 55]. Two studies used the East African Community (EAC) harmonised pharmacovigilance indicators tool [39, 40] and two used the WHO minimum requirements for a functional PV system [42, 53]. Two studies [43, 44] employed the Centers for Disease Control and Prevention (CDC) updated guidelines for evaluating public health surveillance systems [58] alongside the WHO PV indicators [30]. One study employed a framework that combined indicators from the IPAT and the WHO PV indicators [49].

Study Quality

Using Hawker et al.’s [34] nine-item checklist, the overall quality of included studies was deemed as ‘medium’ for seven and ‘high’ for 14. See Online Resource 3 for detailed scoring. The lowest scoring parameter was “ethics and bias” (Average = 1.9, Standard Deviation ± 0.6); the highest scoring parameter was “abstract and title” (3.9 ± 0.3). The methods used were considered appropriate for all included studies; however, seven did not provide sufficient detail on the data collection and recording process [38, 44, 45, 50,51,52, 57]. Clear sample justification and approaches were only described in three studies [43, 44, 46]. Only three studies [45, 50, 57] were rated poorly or very poorly with respect to data analysis due to limited or no detail. Apart from one study [51], studies provided clear descriptions of findings. Only three studies [41,42,43] detailed ethical issues such as confidentiality, sensitivity and consent. No studies described or acknowledged researcher bias/reflexivity. Study transferability or generalisability was affected by the use of small sample sizes [37, 41], survey non-response [45, 48,49,50, 55], focus on the national PV centre [53], the institutional level rather than the individual (Healthcare Professional (HCP) or patient) level, exclusion of some types of institutions [56] and non-testing of questionnaire reliability [52]. Only four studies [41, 52,53,54] achieved a score of 4 for the “implications and usefulness” parameter by making suggestions for future research and implications for policy and/or practice.

The main limitation described by the reviewed studies related to information validity and completeness. Eight studies [39, 40, 42, 43, 48, 50, 52, 56] cited limitations that included pertinent data missing, reliance on the accuracy of information provided or inability to verify or validate information. The second limitation was related to the collected data's currency [39, 48, 50, 56].

Finally, two studies [41, 46] reported limitations related to the evaluation tools used to evaluate PV performance. Kabore et al. [41] highlighted four limitations inherent to the IPAT including 1—Its sensitivity and specificity had not been established, 2—Possible imprecision in the quantification of responses in the scoring process, 3—The assessment’s reliance on respondents’ declarations and 4—The necessity of local adaptation due to the tool's limited testing and validation. Two studies [46, 47] raised limitations of using the WHO PV indicators including lack of trained personnel, poor documentation and the need for in-depth surveys which nascent systems are unable to execute. Furthermore, the WHO PV indicators were said to lack a scoring system that could quantify the indices thereby highlighting system deficiencies numerically [46].

Studies’ Coverage of WHO Pharmacovigilance Indicators

Across all 63 WHO PV indicators, the studies achieved an average coverage score of 17.2 (see Fig. 2). The highest score was 33.0 [39] and the lowest was 4.0 [45]. Studies placed a higher emphasis on evaluating ‘Core’ compared to ‘Complementary’ indicators as demonstrated by the median and average scores obtained for ‘Core’ (12.0 and 11.6/27, respectively) versus 4.0 and 5.6/36 for ‘Complementary’. Studies obtained higher median and average scores for ‘Structural’ indicators (8.0 and 7.0/10 for ‘Core’ and 4.0 and 3.3/11 for ‘Complementary’, respectively) compared to ‘Process’ (3.0 and 2.7/9 for ‘Core’ along with 1.0 and 1.5/13 for ‘Complementary’, respectively) and ‘Outcome’ indicators (2.0 and 1.9/8 for ‘Core’ and 0 and 0.8/12 for ‘Complementary’). Further detail is supplied in Online Resource 4.

Regions’ and Countries’ Pharmacovigilance Performance

Total Pharmacovigilance System Performance

The average and median scores achieved by all countries were 14.86 and 15.0/63, respectively. Although 51% of countries had a higher-than-average total score and 49% had a score above the median, none of them achieved more than 26 (41%) of the 63 WHO indicators. The Middle East and North Africa achieved the highest average total score (15.89), and Latin America and the Caribbean the lowest (10.5). In comparison, the highest median score was achieved by the Middle East and North Africa (18.0), and the lowest was achieved by South Asia (10.0). The highest achieving country was Tanzania (26.0). Bahrain, Syria, Djibouti and Myanmar all scored zero. See Figs. 3 and 4 for the regions’ and countries’ aggregate scores, respectively, Online Resource 4 for detailed information relating to each indicator, and Online Resource 5 for detailed information on aggregate scores.

Core Indicators Performance

Out of a possible score of 27 for ‘Core’ indicators, the average was 9.27 while the median was 9.0. East Asia and the Pacific achieved the highest average score (10.17), whereas South Asia had the lowest (7.3). In terms of the median score, the highest was observed in Sub-Saharan Africa (11.5) and the lowest in South Asia (7.0). The highest scoring countries among the different regions were Nigeria, Indonesia and Malaysia (15.0), whereas Bahrain, Syria, Djibouti and Myanmar scored zero.

Structural Indicators

For ‘Core Structural’ indicators, the average score for the 51 countries was 6.5 and the median was 7.0. The highest average and median scores, regionally, were observed in Sub-Saharan Africa (7.07 and 8.5, respectively), whereas the lowest were observed in Latin America and the Caribbean (5.0 and 5.5, respectively). Egypt had the highest country-level score (10.0), while Bahrain, Syria, Djibouti and Myanmar scored zero.

A facility for carrying out PV activities was reported as existing in 92% of countries, and PV regulations existed in 80% of countries. There were inconsistencies in the reported information concerning PV regulations in Oman, Yemen and Cambodia. In Oman, two studies [48, 50] reported that such regulations were present, whereas a third [49] reported they were absent. In Yemen, Qato [49] reported the presence of regulations, whereas Alshammari et al. [48] indicated the opposite. For Cambodia, conflicting information was reported by Suwankesawong et al. [53] and Chan et al. [52]. In all such cases, the latest published results were adopted.

Concerning resources, regular financial provision for conducting PV activities was reported as present in only 35% of countries, most of which were among the highest achieving countries overall. There was an inconsistency in the information provided for this indicator in Oman and the United Arab Emirates (UAE) with two studies [48, 50] stating that this was present, and one [49] that it was not. In terms of human resources, 75% of countries were found to possess dedicated staff carrying out PV activities.

Most countries (86%) were found to possess a standardised ADR reporting form. However, it was only highlighted in 16 countries whether the form included medication errors; counterfeit/substandard medicines; therapeutic ineffectiveness; misuse, abuse, or dependence on medicines; or reporting by the general public.

For only four countries (China, Egypt, Ethiopia and Uganda) was it reported that PV was incorporated into the national HCP curriculum. In 22 countries (43%), it was either unknown if a PV information dissemination mechanism existed, or it did not exist. Sixty-three per cent of countries had a PV advisory committee. Information regarding this indicator was inconsistent between Qato [49] and Alshammari et al. [48], with the former reporting that Jordan and Tunisia possessed an advisory committee and the latter reporting the opposite.

Process Indicators

The overall average and median scores for ‘Core Process’ indicators were 2.06 and 2.0/9, respectively. The highest average score was in East Asia and the Pacific (2.9), whereas South Asia (1.0) achieved the lowest. Similarly, in terms of the median score, East Asia and the Pacific (3.0) was the highest while South Asia (1.0) was the lowest. No country achieved a higher score than Malaysia (7.0), while seven countries scored zero.

The absolute number of ADR reports received per year by the countries’ PV system ranged from zero (Afghanistan, Bahrain, Comoros, Qatar, and Rwanda) to 50,000 (Thailand). Most countries (n = 27) received fewer than 10,000 reports per year; within this group, Iran reported the highest yearly number (7532 reports) and Laos and Lebanon the lowest (3 reports). Only four countries reported receiving 10,000 reports or more yearly, namely China (32,513 reports), Malaysia (10,000 reports), Singapore (21,000 reports) and Thailand (50,000 reports). The remaining 20 countries either did not receive any reports or no data were provided.

The number of ADR reports increased over time in 12 countries (Algeria, Cambodia, Egypt, Iraq, Jordan, Kuwait, Morocco, Oman, Palestine, Saudi Arabia, Tunisia and Yemen), whereas they decreased in eight countries (Laos, Malaysia, Philippines, Singapore, Sudan, Thailand, the UAE and Vietnam). The percentage of total annual reports satisfactorily completed and submitted to the PV centre was reported only in Nigeria (maximum of 84.6%).

Only Singapore and Thailand reported cumulative numbers of reports as more than 100,000, while 17 countries had fewer than 20,000 reports cumulatively. Some inconsistencies for this indicator were reported by Suwankesawong et al. [53] and Chan et al. [52] for Malaysia, the Philippines, Singapore and Vietnam, with the numbers reported by the former higher than the latter.

Overall, the provision of ADR reporting feedback was poor, with all the countries either not performing this or no information being provided. Documentation of causality assessment was also poor, with only Ethiopia (2%), Kenya (5.5%), Tanzania (97%) and Zimbabwe (100%) reportedly performing this. The percentage of reports submitted to WHO was reported only in Vietnam (28%) and Zimbabwe (86%).

Among the countries which reported performing active surveillance, Algeria was the most active with 100 projects followed by Tunisia and Morocco with 50 and 10 activities, respectively. All remaining countries had fewer than seven.

Outcome Indicators

The average and median scores overall for the ‘Core Outcome’ indicators were 0.69 and 1.0/8, respectively. Countries from East Asia and the Pacific (0.92) had the highest average score collectively, whereas South Asia (0.33) had the lowest. In terms of the median score, Sub-Saharan Africa (1.0) had the highest, whereas South Asia (zero) had the lowest. Nine countries achieved the highest score (2.0), while 25 countries scored zero.

Signal detection was reported to have occurred in 10 countries, with the highest number observed in Kenya (31 signals), whereas seven countries scored zero. The reported number of signals detected was above 10 in only three countries: Kenya, Tanzania (25 signals) and Singapore (20 signals). Among the 23 countries where information regarding the number of regulatory actions taken was reported, the highest number of actions taken was in Egypt (930 actions), whereas in 15 countries, no actions had been taken.

The number of medicine-related hospital admissions per 1000 admissions was only reported in Nigeria and ranged from 0.01 to 1.7. The reporting of pertinent data regarding the remaining five Core Outcome indicators (CO4–CO8) was inadequate as no information was provided for any of the countries.

Complementary Indicators Performance

For ‘Complementary’ indicators, the overall average and median scores were 5.59 and 6.0/36, respectively. The Middle East and North Africa (6.89 and 8.5, respectively) achieved the highest average and median scores among the regions, whereas Latin America and the Caribbean (3.5 and 4.0, respectively) achieved the lowest. The highest scoring country was Tanzania (12.0), whereas Bahrain, Syria, Djibouti and Myanmar scored zero.

Structural Indicators

For ‘Complementary Structural’ indicators, the average and median scores were 4.24 and 4.0/11, respectively. The highest average and median scores were achieved by the Middle East and North Africa (5.44 and 6.0, respectively), whereas Latin America and the Caribbean (2.5 and 3.0, respectively) had the lowest. Five countries achieved a score of 8.0, namely Jordan, Saudi Arabia, the UAE, Ethiopia and Tanzania. Seven countries scored zero.

Three-fourths of the countries were reported to possess dedicated computer facilities to carry out PV activities as well as a database for storing and managing PV information. There was inconsistency in the data reported for Libya, with Qato [49] indicating the presence of a computer, whereas Alshammari et al. [48] reported it absent. It was indicated that in 47% of the countries, functioning communication facilities such as telephone, fax, or internet were available. A library containing reference materials on drug safety was found to be available in only 19 countries. For all the countries, it was either reported that they did not have a source of data on consumption and prescription of medicines, or no information was available.

In all 51 countries investigated, web-based PV training tools for both HCPs and the public were either reported as unavailable or no information was reported. In 30 countries (approximately 60%), training courses for HCPs were organised by the PV centre. There was insufficient information about the availability of training courses for the public in all countries. Fewer than half of countries possessed a programme with a laboratory for monitoring drug quality (41%) or mandated marketing authorisation holders (MAHs) to submit Periodic Safety Update Reports (PSURs) (49%). Only 8% of countries had an essential medicines list and only 18% used PV data in developing treatment guidelines.

Process Indicators

The 51 countries achieved average and median scores of 1.4 and 1.0/13, respectively, for the ‘Complementary Process’ indicators. Regionally, the highest average and median scores were achieved by the Middle East and North Africa (1.44 and 2.0, respectively), while the lowest scores were achieved by Latin America and the Caribbean (both 1.0). The highest total scores were achieved by Kenya and Tanzania (both 4.0), while 12 countries scored zero.

Data regarding the percentage of healthcare facilities possessing a functional PV unit (i.e. submitting ≥ 10 reports annually to the PV centre) was reported for seven countries. However, only three of these reported a number above zero (Kenya 0.14%, Tanzania 0.26% and Zimbabwe 2.2%).

In terms of the total number of reports received per million population, Singapore had the highest number (3853 reports/year/million population), while Laos had the lowest (0.4 reports/year/million population). In 17 countries, it was indicated that HCPs represented the primary source of submitted ADR reports. Medical doctors were reported as the primary HCPs to submit ADR reports in five countries, namely Lebanon (100%), Libya (50%), Morocco (50%), Tunisia (96%) and Yemen (90%). In eight countries, manufacturers were found to be the primary source of ADR reports, namely Algeria (71%), Jordan (90%), Kuwait (93%), Mexico (59%), Pakistan (88%), Palestine (100%), Saudi Arabia (50%) and the UAE (72%).
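The reports-per-million figure quoted at the start of the paragraph above is a population-normalised rate, which allows reporting volumes to be compared between countries of very different sizes. A minimal sketch of the calculation follows; the function name and the example inputs are hypothetical and do not correspond to any country in this review.

```python
def reports_per_million(reports_per_year, population):
    """Annual ADR reports normalised to reports per million population."""
    if population <= 0:
        raise ValueError("population must be positive")
    return reports_per_year * 1_000_000 / population


# Hypothetical example: 1,200 reports in one year, population of 4 million.
example_rate = reports_per_million(1200, 4_000_000)
print(example_rate)
```

Normalising by population explains why a country with a modest absolute number of reports can rank above one with a far larger raw count.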

The number of HCPs who received face-to-face training over the previous year was only reported in Ethiopia (90,814), Tanzania (76,405), Rwanda (43,725) and Kenya (8706).

No information was found in any of the studies concerning the ‘Complementary Process’ indicators 4, 6 and 9–13.

Outcome Indicators

Out of a possible score of 12, the overall average and median scores achieved for the ‘Complementary Outcome’ indicators of the studied countries were both zero, with no information reported concerning these indicators.

Discussion

To the best of the authors’ knowledge, this is the first systematic review of studies focussing on PV system performance in developing countries. The review included 21 studies covering 51 countries from different regions across the globe. Using the WHO PV indicators (both ‘Core’ and ‘Complementary’) [30] as a framework, this review focussed on identifying the areas of strength and weakness within these countries’ PV systems. The review also helped identify where different developing countries’ systems lay on the performance level spectrum. Moreover, the features associated with better performing systems were highlighted. The insights from this review can be used to inform recommendations for addressing areas requiring intervention or modification, particularly within countries with PV systems at a nascent stage of development.

The review revealed a lack of standardisation regarding the methods of evaluating PV systems. While some studies focussed on the WHO indicators, others used assessment tools developed by other organisations including the United States Agency for International Development (USAID), East African Community (EAC), the United States Centers for Disease Control and Prevention (CDC) or some combination of these. The review also found that, overall, both studies’ coverage of the WHO PV indicators and developing countries’ PV system performance were low. Furthermore, there was a mix of some indicators which were present in most or all studies/countries, while others were universally absent or only sporadically present. Generally, indicators that were either universally absent or only sporadically present in the studies/countries in this review belonged to the ‘Process’ and ‘Outcome’ indicator classes. In terms of the reviewed studies, both the ‘Complementary Process’ and ‘Complementary Outcome’ indicators’ presence was mixed with some being universally absent (e.g. number of reports from each registered pharmaceutical company received by the NPVC in the previous year and cost savings attributed to PV activities, respectively) and others being sporadically present (e.g. number of face-to-face training sessions in PV organised in the previous year and average number of medicines per prescription, respectively). Most of the ‘Core Process’ and ‘Core Outcome’ and ‘Complementary Structural’ indicators were sporadically present (e.g. percentage of reports on medication errors reported in the previous year, average cost of treatment of medicine-related illness and existence of an essential medicines list which is in use, respectively), whereas most of the ‘Core Structural’ indicators were frequently present (e.g. the NPVC has human resources to carry out its functions properly) and only a few were sporadically present (incorporation of PV into the national curriculum of the various HCPs).

In terms of the studied countries, all the ‘Complementary Outcome’ indicators (e.g. percentage of medicines in the pharmaceutical market that is counterfeit/substandard) were universally absent. The presence of the ‘Core Outcome’ and ‘Complementary Process’ indicators was found to be mixed, with some being universally absent (e.g. number of medicine-related deaths and percentage of MAHs submitting PSURs to the NMRA, respectively) while others were sporadically present (e.g. number of signals detected in the past five years and percentage of HCPs aware of and knowledgeable about ADRs per facility, respectively). Most of the ‘Core Process’ indicators (e.g. percentage of submitted ADR reports acknowledged or issued feedback) were found to be sporadically present. Therefore, PV system performance was found to be low in terms of the ‘Process’ and ‘Outcome’ indicators. This reflects the systems’ immaturity and their inability to collect and utilise local data to identify signals of drug-related problems and to support regulatory decisions [22, 59,60,61].

With regard to ‘Structural’ indicators, most of the ‘Core’ (e.g. an organised centre to oversee PV activities) and some of the ‘Complementary’ (e.g. existence of a dedicated computer for PV activities) structural indicators were found to be frequently present among the studied countries. Hence, performance with respect to the class of ‘Structural’ indicators was relatively high. This points to government policymakers taking active steps towards establishing a PV system as a means of improving drug safety [3, 21].

High-performing PV systems in developing countries in this review were distinguished by the presence of a budget specifically earmarked for PV, a means of communicating drug safety information to stakeholders (e.g. a newsletter or website) and technical assistance via an advisory committee. On the other hand, lack of incorporation of PV into the national curriculum of HCPs and underreporting of ADRs plagued both high- and low-performing systems. This suggests that strengthening PV systems in developing countries requires targeted measures addressing these factors. In what follows, this review’s key findings described above will be discussed in more detail in the context of the WHO PV indicators [30] and existing research.

The 63 indicators developed by the WHO were not all assessed in the included studies. This meant that the data collection process in some instances necessitated extracting data from other sections of the studies such as the ‘Background’ or ‘Discussion’. In other instances, inferences were made for certain indicators based on information provided for others. A notable example was inferring the presence of a computer for PV activities when it was indicated that a computerised case report management system existed. Evaluation is defined as the systematic and objective assessment of the relevance, adequacy, progress, efficiency, effectiveness and impact of a course of action in relation to objectives while considering the resources and facilities that have been deployed [62]. An evaluation based only on a few indicators is not likely to provide a complete, unbiased evaluation of the system since multiple indicators are needed for tracking the system’s implementation and effects [58]. While the optimal number of indicators required to perform a proper assessment is likely to vary depending on the evaluation’s objectives, it could be argued that, based on definition, addressing the full set of ‘Core’ indicators should be required to provide a satisfactory evaluation [33].

This review found that the presence of a dedicated budget for PV was associated with higher system performance [30, 59, 60, 63]. The absence of sustained funding for PV hinders effective system operation since it prevents the development of the necessary infrastructure [64]. According to the WHO, funding is what allows the carrying out of PV activities in the setting [30] and it “signifies a gesture, the commitment and political will of the sponsors and the general importance given to PV” (p. 20) [30]. It is only when the other structural components of a PV system are paired with a regular and sustainable budget that real action and long-term planning can be achieved [65,66,67]. Any investment in PV should consider the substantial diversity in country characteristics such as size and population as well as the anticipated rate at which the system is going to generate reports [21, 68].

In this review, countries that had a PV information dissemination tool as part of the system achieved higher performance scores than those that did not. The WHO indicates that an expected function of a country’s PV system is the effective dissemination of information related to medicines’ safety to both HCPs and the public [3, 30, 69]. The lack of such a tool in many developing countries’ systems points to the absence of clear routine and crisis communication strategies [30]. The use of a drug bulletin has been cited as an effective tool for improving safety communication as well as increasing ADR reporting [70,71,72].

A feature of better performing PV systems was the presence of a PV (or ADR) advisory committee. The WHO views the existence of such a committee as essential given its influential role in developing a clear communication strategy as well as providing technical assistance to the drug regulatory process. The absence of such a committee negatively impacts system processes such as causality assessment, risk assessment and management, as well as outcomes such as communication of recommendations on safety issues and regulatory actions. Evidence from developed countries has demonstrated the value of such a committee’s scientific and clinical advice to support and promote drug safety [73, 74].

PV was found to be absent from the national curricula of HCPs in most of the countries studied, which may explain low levels of competency regarding PV and ADR reporting [75]. Studies have demonstrated that the implementation of PV-related training as a module or course for HCP students has a positive effect on their PV knowledge [76,77,78] and sensitises HCPs to issues regarding drug safety [30].

This review found that ADR reporting rates were low overall, suggesting underreporting by ADR reporters [23, 79], which may be partly due to the passive nature of the reporting systems in these countries [59]. Underreporting points to the PV system’s inability to collate data on the safety, quality and effectiveness of marketed drugs that have not been tested outside the confines of clinical trials. Consequently, system processes and outcomes, including data analysis, signal identification, regulatory actions, and communication and feedback mechanisms, will remain stagnant. The WHO’s guidance points to the number of ADR reports received by the system as being an indicator of PV activity in the setting, the awareness of ADRs and the willingness of HCPs to report [30]. Although underreporting is a significant barrier to the effective functioning of PV systems in both developing and developed countries [65, 74], reporting rates have been found to be lower in developing countries than in developed ones [80]. Based on international evidence, it is reasonable to expect a developed system to target an annual reporting rate of 300 reports per million inhabitants [81]. Countries struggling with underreporting should utilise the WHO’s global database (VigiBase) as a reference for monitoring drug-related problems [60]. Furthermore, data from countries with similar population characteristics and co-morbidities that receive smaller numbers of ADR reports can be gathered into a single database, which would allow an analysis of the pooled data to provide relevant solutions [60, 64].
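The benchmark of 300 reports per million inhabitants per year [81] can be applied with simple arithmetic. The following is a minimal sketch in Python; the function names and the example figures (1,200 reports, 20 million inhabitants) are hypothetical and are not drawn from any country in this review.

```python
# Annual benchmark cited in the text [81]; everything else here is illustrative.
TARGET_REPORTS_PER_MILLION = 300


def reports_per_million(annual_reports: int, population: int) -> float:
    """Return the annual number of ADR reports per million inhabitants."""
    if population <= 0:
        raise ValueError("population must be positive")
    return annual_reports * 1_000_000 / population


def meets_target(annual_reports: int, population: int,
                 target: float = TARGET_REPORTS_PER_MILLION) -> bool:
    """Return True when the reporting rate reaches the benchmark."""
    return reports_per_million(annual_reports, population) >= target


# Hypothetical country: 1,200 reports in one year, 20 million inhabitants.
rate = reports_per_million(1_200, 20_000_000)
print(f"{rate:.0f} reports per million; target met: "
      f"{meets_target(1_200, 20_000_000)}")
```

In this made-up case the rate is 60 reports per million, one fifth of the benchmark; the same population would need 6,000 reports in a year to reach it.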

This review has a few limitations. First, the included studies were very heterogeneous and differed in their aim, structure, content, method of evaluation and targeted level of PV system/activity, which may limit the extent of the findings’ generalisability. This was partially overcome by applying the WHO indicators as a means of standardising the extracted information. Second, a limitation of the WHO PV indicators is the lack of a scoring system to quantifiably measure PV system performance. This was overcome by the development of a scoring system thus enabling a comparison of a country’s PV system performance status against the WHO PV indicators and that of other countries.
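To illustrate how a score of this kind can be tallied, the sketch below assumes one point per indicator found present, with maxima of 27 for ‘Core’ and 36 for ‘Complementary’ indicators (63 in total). The one-point rule, the function name and the indicator identifiers are assumptions made for illustration only and may differ from the scoring procedure actually applied in this review.

```python
from collections import Counter

# Maximum attainable score per indicator group (27 + 36 = 63).
MAX_SCORE = {"Core": 27, "Complementary": 36}


def score_country(present):
    """Tally one point per indicator recorded as present.

    `present` is an iterable of (group, indicator_id) pairs.
    Duplicate pairs are counted once. The one-point-per-indicator
    rule is an assumption of this sketch.
    """
    unique = set(present)
    for group, _ in unique:
        if group not in MAX_SCORE:
            raise ValueError(f"unknown indicator group: {group}")
    counts = Counter(group for group, _ in unique)
    scores = {group: counts.get(group, 0) for group in MAX_SCORE}
    for group, value in scores.items():
        if value > MAX_SCORE[group]:
            raise ValueError(f"{group} score {value} exceeds {MAX_SCORE[group]}")
    scores["Total"] = sum(scores.values())
    return scores


# Placeholder identifiers, not real WHO indicator codes.
example = [("Core", "a"), ("Core", "b"), ("Complementary", "a"), ("Core", "a")]
print(score_country(example))
```

Keeping the group maxima in one mapping makes it straightforward to report each country’s score against the same denominators and to compare countries with one another.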

Conclusion

This is the first systematic review that focuses on studies that evaluate PV performance and activities in developing countries, using WHO PV indicators. The included studies provide an in-depth understanding of the various factors affecting PV system performance and activities. This study’s findings demonstrate that strengthening PV systems in developing countries requires a multistakeholder approach, together with resource and data consolidation and the establishment of regional collaborations to assist PV systems that are in their nascent stage. Furthermore, the findings highlight the need for a holistic approach that takes into account the resources and infrastructure available when addressing the policy and programmatic gaps in each country.

References

Segura-Bedmar I, Martinez P. Special issue on mining the pharmacovigilance literature. J Biomed Inform. 2014;49:1–2.

Jeetu G, Anusha G. Pharmacovigilance: a worldwide master key for drug safety monitoring. J Young Pharm. 2010;2(3):315–20.

World Health Organization (WHO). The Importance of Pharmacovigilance: Safety Monitoring of Medicinal Products Geneva: World Health Organization; 2002. https://apps.who.int/iris/handle/10665/42493. Accessed 28 Feb 2022

World Health Organization (WHO). Clinical trials Geneva: World Health Organization; 2022. https://www.who.int/health-topics/clinical-trials#tab=tab_1. Accessed 1 March 2022

NIH National Institute on Aging. What Are Clinical Trials and Studies? Baltimore, MD.: National Institutes of Health (NIH) National Institute on Aging (NIA); 2020. https://www.nia.nih.gov/health/what-are-clinical-trials-and-studies. Accessed 1 March 2022

Brewer T, Colditz GA. Postmarketing surveillance and adverse drug reactions: current perspectives and future needs. JAMA. 1999;281(9):824–9.

Montastruc JL, Sommet A, Lacroix I, Olivier P, Durrieu G, Damase-Michel C, et al. Pharmacovigilance for evaluating adverse drug reactions: value, organization, and methods. Joint Bone Spine. 2006;73(6):629–32.

Edwards IR. Who cares about pharmacovigilance? Eur J Clin Pharmacol. 1997;53(2):83–8.

Harmark L, van Grootheest AC. Pharmacovigilance: methods, recent developments and future perspectives. Eur J Clin Pharmacol. 2008;64(8):743–52.

Babigumira JB, Stergachis A, Choi HL, Dodoo A, Nwokike J, Garrison LP. A framework for assessing the economic value of pharmacovigilance in low- and middle-income countries. Drug Saf. 2014;37:127–34.

Gyllensten H, Hakkarainen KM, Hägg S, Carlsten A, Petzold M, Rehnberg C, et al. Economic Impact of adverse drug events—a retrospective population-based cohort study of 4970 adults. PLoS ONE. 2014. https://doi.org/10.1371/journal.pone.0092061.

Pirmohamed M, James S, Meakin S, Green C, Scott AK, Walley TJ, et al. Adverse drug reactions as cause of admission to hospital: prospective analysis of 18 820 patients. BMJ. 2004;329(7456):15–9.

Strengthening Pharmaceutical Systems (SPS) Program. Supporting Pharmacovigilance in Developing Countries: The Systems Perspective. Submitted to the U.S. Agency for International Development by the SPS Program Arlington, VA.: Management Sciences for Health; 2009. https://www.gims-foundation.org/wp-content/uploads/2016/06/Supporting-Pharmacovigilance-in-developing-countries-2009.pdf. Accessed 28 Feb 2022

World Health Organization (WHO). Pharmacovigilance: Ensuring the Safe Use of Medicines. Geneva, 2004

Council for International Organizations of Medical Sciences (CIOMS). Practical Approaches to Risk Minimisation for Medicinal Products: Report of CIOMS Working Group IX. Geneva: Council for International Organizations of Medical Sciences (CIOMS); 2014. https://cioms.ch/publications/product/practical-approaches-to-risk-minimisation-for-medicinal-products-report-of-cioms-working-group-ix/. Access Date

European Medicines Agency (EMA). Pharmacovigilance London: European Medicines Agency (EMA); 2015. https://www.ema.europa.eu/en/documents/leaflet/pharmacovigilance_en.pdf. Accessed 27 Feb 2022

Mammì M, Citraro R, Torcasio G, Cusato G, Palleria C, di Paola ED. Pharmacovigilance in pharmaceutical companies: an overview. J Pharmacol Pharmacother. 2013;4(Suppl 1):S33–7.

du Plessis D, Sake J-K, Halling K, Morgan J, Georgieva A, Bertelsen N. Patient centricity and pharmaceutical companies: is it feasible? Ther Innov Regul Sci. 2017;51(4):460–7.

Hagemann U. Behind the scenes: ‘silent factors’ influencing pharmacovigilance practice and decisions. In: Edwards IR, Lindquist M, editors. Pharmacovigilance: critique and ways forward. Cham: Springer; 2017. p. 67–79.

Vaidya SS, Bpharm JJG, Heaton PC, Steinbuch M. Overview and comparison of postmarketing drug safety surveillance in selected developing and well-developed countries. Drug Inf J. 2010;44(5):519–33.

Uppsala Monitoring Centre (UMC). Safety monitoring of medicinal products: Guidelines for setting up and running a pharmacovigilance centre Uppsala: WHO-UMC; 2000. https://www.who-umc.org/media/1703/24747.pdf. Accessed 28 Feb 2022

Isah AO, Pal SN, Olsson S, Dodoo A, Bencheikh RS. Specific features of medicines safety and pharmacovigilance in Africa. Ther Adv Drug Saf. 2012;3(1):25–34.

Elshafie S, Zaghloul I, Roberti AM. Pharmacovigilance in developing countries (part I): importance and challenges. Int J Clin Pharm. 2018;40(4):758–63.

Ampadu HH, Hoekman J, de Bruin ML, Pal SN, Olsson S, Sartori D, et al. Adverse drug reaction reporting in Africa and a comparison of individual case safety report characteristics between Africa and the rest of the world: analyses of spontaneous reports in VigiBase®. Drug Saf. 2016;39(4):335–45.

Peters T, Soanes N, Abbas M, Ahmad J, Delumeau JC, Herrero-Martinez E, et al. Effective pharmacovigilance system development: EFPIA-IPVG consensus recommendations. Drug Saf. 2021;44(1):12.

European Medicines Agency (EMA). Good pharmacovigilance practices Amsterdam: European Medicines Agency (EMA); 2022. http://www.ema.europa.eu/ema/index.jsp?curl=pages/regulation/document_listing/document_listing_000345.jsp&mid=WC0b01ac058058f32c. Accessed 27 Feb 2022

Xie Y-M, Tian F. Interpretation of guidelines on good pharmacovigilance practices for European Union. Zhongguo Zhong yao za zhi = Zhongguo zhongyao zazhi = China Journal of Chinese Materia Medica. 2013;38(18):2963–8.

World Health Organization (WHO). The Safety of Medicines in Public Health Programmes: Pharmacovigilance an Essential Tool Geneva: World Health Organisation (WHO); 2006. https://www.who.int/medicines/areas/quality_safety/safety_efficacy/Pharmacovigilance_B.pdf. Accessed 28 Feb 2022

Isah AO, Edwards IR. Pharmacovigilance indicators: desiderata for the future of medicine safety. In: Edwards IR, Lindquist M, editors. Pharmacovigilance: critique and ways forward. 1st ed. Switzerland: ADIS; 2017. p. 99–114.

World Health Organization (WHO). WHO pharmacovigilance indicators: A practical manual for the assessment of pharmacovigilance systems Geneva: World Health Organisation (WHO); 2015. https://apps.who.int/iris/handle/10665/186642. Accessed 27 Feb 2022

Radecka A, Loughlin L, Foy M, de Ferraz Guimaraes MV, Sarinic VM, Di Giusti MD, et al. Enhancing pharmacovigilance capabilities in the EU regulatory network: the SCOPE joint action. Drug Saf. 2018;41(12):1285–302.

Moher D, Shamseer L, Clarke M, Ghersi D, Liberati A, Petticrew M, et al. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst Rev. 2015;4:1.

Strengthening Pharmaceutical Systems (SPS) Program. Indicator-based pharmacovigilance assessment tool: manual for conducting assessments in developing countries. Submitted to the U.S. agency for international development by the SPS program Arlington, VA: Management Sciences for Health; 2009. http://pdf.usaid.gov/pdf_docs/PNADS167.pdf. Accessed 28 Feb 2022

Hawker S, Payne S, Kerr C, Hardey M, Powell J. Appraising the evidence: reviewing disparate data systematically. Qual Health Res. 2002;12(9):1284–99.

Braithwaite J, Herkes J, Ludlow K, Testa L, Lamprell G. Association between organisational and workplace cultures, and patient outcomes: systematic review. BMJ Open. 2017;7(11): e017708.

Lorenc T, Petticrew M, Whitehead M, Neary D, Clayton S, Wright K, et al. Crime, fear of crime and mental health: Synthesis of theory and systematic reviews of interventions and qualitative evidence. Public Health Res. 2014;2(2)

Abiri OT, Johnson WCN. Pharmacovigilance systems in resource-limited settings: an evaluative case study of Sierra Leone. J Pharm Policy Prac. 2019;12(1):8.

Allabi AC, Nwokike J. A situational analysis of pharmacovigilance system in republic of Benin. J Pharmacovigilance. 2014. https://doi.org/10.4172/2329-6887.1000136.

Barry A, Olsson S, Minzi O, Bienvenu E, Makonnen E, Kamuhabwa A, et al. Comparative assessment of the national pharmacovigilance systems in East Africa: Ethiopia, Kenya, Rwanda and Tanzania. Drug Saf. 2020;43(4):339–50.

Barry A, Olsson S, Khaemba C, Kabatende J, Dires T, Fimbo A, et al. Comparative assessment of the pharmacovigilance systems within the neglected tropical diseases programs in East Africa—Ethiopia, Kenya, Rwanda, and Tanzania. Int J Environ Res Public Health. 2021;18(4):1941.

Kabore L, Millet P, Fofana S, Berdai D, Adam C, Haramburu F. Pharmacovigilance systems in developing countries: an evaluative case study in Burkina Faso. Drug Saf. 2013;36(5):349–58.

Maigetter K, Pollock AM, Kadam A, Ward K, Weiss MG. Pharmacovigilance in India, Uganda and South Africa with reference to WHO’s minimum requirements. Int J Health Policy Manag. 2015;4(5):295–305.

Muringazuva C, Chirundu D, Mungati M, Shambira G, Gombe N, Bangure D, et al. Evaluation of the adverse drug reaction surveillance system Kadoma City, Zimbabwe 2015. Pan Afr Med J. 2017;27:55.

Mugauri H, Tshimanga M, Mugurungi O, Juru T, Gombe N, Shambira G. Antiretroviral adverse drug reactions pharmacovigilance in Harare City, Zimbabwe, 2017. PLoS ONE. 2018;13(12): e0200459.

Nwaiwu O, Oyelade O, Eze C. Evaluation of pharmacovigilance practice in pharmaceutical companies in Nigeria. Pharma Med. 2016;30(5):291–5.

Opadeyi AO, Fourrier-Réglat A, Isah AO. Assessment of the state of pharmacovigilance in the South-South zone of Nigeria using WHO pharmacovigilance indicators. BMC Pharmacol Toxicol. 2018;19:27.

Ejekam CS, Fourrier-Réglat A, Isah AO. Evaluation of pharmacovigilance activities in the national HIV/AIDS, malaria, and tuberculosis control programs using the World Health Organization pharmacovigilance indicators. Sahel Med J. 2020;23(4):226–35.

Alshammari TM, Alenzi KA, Ata SI. National pharmacovigilance programs in Arab countries: a quantitative assessment study. Pharmacoepidemiol Drug Saf. 2020;29(9):1001–10.

Qato DM. Current state of pharmacovigilance in the Arab and Eastern Mediterranean region: results of a 2015 survey. Int J Pharm Pract. 2018;26(3):210–21.

Wilbur K. Pharmacovigilance in the middle east: a survey of 13 Arabic-speaking countries. Drug Saf. 2013;36(1):25–30.

Mustafa G, Saeed Ur R, Aziz MT. Adverse drug reaction reporting system at different hospitals of Lahore, Pakistan—an evaluation and patient outcome analysis. J Appl Pharm. 2013;5(1):713–9.

Chan CL, Ang PS, Li SC. A survey on pharmacovigilance activities in ASEAN and selected non-ASEAN countries, and the use of quantitative signal detection algorithms. Drug Saf. 2017;40(6):517–30.

Suwankesawong W, Dhippayom T, Tan-Koi W-C, Kongkaew C. Pharmacovigilance activities in ASEAN countries. Pharmacoepidemiol Drug Saf. 2016;25(9):1061–9.

Kaewpanukrungsi W, Anantachoti P. Performance assessment of the Thai National Center for pharmacovigilance. Int J Risk Saf Med. 2015;27(4):225–37.

Zhang XM, Niu R, Feng BL, Guo JD, Liu Y, Liu XY. Adverse drug reaction reporting in institutions across six Chinese provinces: a cross-sectional study. Expert Opin Drug Saf. 2019;18(1):59–68.

Shin JY, Shin E, Jeong HE, Kim JH, Lee EK. Current status of pharmacovigilance regulatory structures, processes, and outcomes in the Asia-Pacific region: survey results from 15 countries. Pharmacoepidemiol Drug Saf. 2019;28(3):362–9.

Rorig KDV, de Oliveira CL. Pharmacovigilance: assessment about the implantation and operation at pharmaceutical industry. Lat Am J Pharm. 2012;31(7):953–7.

Contributors AC. Updated guidelines for evaluating public health surveillance systems Atlanta, GA: Centers for Disease Control and Prevention (CDC); 2001. https://www.cdc.gov/mmwr/preview/mmwrhtml/rr5013a1.htm. Accessed 28 Feb 2022

Olsson S, Pal SN, Dodoo A. Pharmacovigilance in resource-limited countries. Expert Rev Clin Pharmacol. 2015;8(4):449–60.

Olsson S, Pal SN, Stergachis A, Couper M. Pharmacovigilance activities in 55 low- and middle-income countries: a questionnaire-based analysis. Drug Saf. 2010;33(8):689–703.

Vaidya SS, Guo JJF, Heaton PC, Steinbach M. Overview and comparison of postmarketing drug safety surveillance in selected developing and well-developed countries. Drug Inf J. 2010;44(5):519–33.

World Health Organization (WHO) (1998) Terminology. A glossary of technical terms on the economics and finance of health services Copenhagen: World Health Organization—Regional Office for Europe. https://www.euro.who.int/__data/assets/pdf_file/0014/102173/E69927.pdf. Accessed 28 Feb 2022

Elshafie S, Roberti AM, Zaghloul I. Pharmacovigilance in developing countries (part II): a path forward. Int J Clin Pharm. 2018;40(4):764–8.

Pirmohamed M, Atuah KN, Dodoo ANO, Winstanley P. Pharmacovigilance in developing countries. BMJ. 2007;335:462.

Biswas P. Pharmacovigilance in Asia. J Pharmacol Pharmacother. 2013;4(Suppl 1):S7–19.

Zhang L, Wong LYL, He Y, Wong ICK. Pharmacovigilance in China: current situation, successes and challenges. Drug Saf. 2014;37(10):765–70.

Alharf A, Alqahtani N, Saeed G, Alshahrani A, Alshahrani M, Aljasser N, et al. Saudi vigilance program: challenges and lessons learned. Saudi Pharm J. 2018;26(3):388–95.

Babigumira JB, Stergachis A, Choi HL, Dodoo A, Nwokike J, Garrison LP. A framework for assessing the economic value of pharmacovigilance in low- and middle-income countries. Drug Saf. 2014;37(3):127–34.

World Health Organization (WHO). Minimum requirements for a functional pharmacovigilance system Geneva: World Health Organisation. http://www.who.int/medicines/areas/quality_safety/safety_efficacy/PV_Minimum_Requirements_2010_2.pdf. Accessed 28 Feb 2022

Baniasadi S, Fahimi F, Namdar R. Development of an adverse drug reaction bulletin in a teaching hospital. Formulary. 2009;44(11):333–5.

Jose J, Rao PGM, Jimmy B. Hospital-based adverse drug reaction bulletin. Drug Saf. 2007;30(5):457–9.

Castel JM, Figueras A, Pedrós C, Laporte JR, Capellà D. Stimulating adverse drug reaction reporting: effect of a drug safety bulletin and of including yellow cards in prescription pads. Drug Saf. 2003;26(14):1049–55.

Aagaard L, Stenver DI, Hansen EH. Structures and processes in spontaneous ADR reporting systems: a comparative study of Australia and Denmark. Pharm World Sci. 2008;30(5):563–70.

Kaeding M, Schmälter J, Klika C. Pharmacovigilance in the European Union: practical implementation across Member States. Wiesbaden: Springer; 2017.

Reumerman M, Tichelaar J, Piersma B, Richir MC, van Agtmael MA. Urgent need to modernize pharmacovigilance education in healthcare curricula: review of the literature. Eur J Clin Pharmacol. 2018;74(10):1235–48.

Arici MA, Gelal A, Demiral Y, Tuncok Y. Short and long-term impact of pharmacovigilance training on the pharmacovigilance knowledge of medical students. Indian J Pharmacol. 2015;47(4):436–9.

Tripathi RK, Jalgaonkar SV, Sarkate PV, Rege NN. Implementation of a module to promote competency in adverse drug reaction reporting in undergraduate medical students. Indian J Pharmacol. 2016;48:S69–73.

Palaian S, Ibrahim MIM, Mishra P, Shankar PR. Impact assessment of pharmacovigilance-related educational intervention on nursing students’ knowledge, attitude and practice: a pre-post study. J Nurs Educ Pract. 2019;9(6):98–106.

Khalili M, Mesgarpour B, Sharifi H, Golozar A, Haghdoost AA. Estimation of adverse drug reaction reporting in Iran: correction for underreporting. Pharmacoepidemiol Drug Saf. 2021;30(8):1101–14.

Aagaard L, Strandell J, Melskens L, Petersen PS, Holme HE. Global patterns of adverse drug reactions over a decade: analyses of spontaneous reports to VigiBase™. Drug Saf. 2012;35(12):1171–82.

Meyboom RHB, Egberts ACG, Gribnau FWJ, Hekster YA. Pharmacovigilance in perspective. Drug Saf. 1999;21(6):429–47.

Funding

The study was undertaken as part of a PhD fully funded by the Kuwaiti Ministry of Health. The authors were not asked nor commissioned by the Kuwaiti Ministry of Health to carry out this study and had no role in its design, data collection and analysis, decision to publish or preparation of the manuscript. Open Access was funded through PhD fees managed by the University of Manchester.

Author information

Contributions

All three authors conceived and designed the study. Planning, data extraction and data analysis were led and performed by HYG and supported by DKS and EIS. Screening and identification of citations were completed by HYG. The manuscript was written by HYG and commented on by DKS and EIS. All authors read and approved the final manuscript for submission for publication.

Ethics declarations

Conflict of interest

Hamza Y. Garashi is an employee on a PhD scholarship from the Kuwaiti Ministry of Health. Douglas T. Steinke and Ellen I. Schafheutle have no conflict of interest that is directly relevant to the content of this study.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Garashi, H.Y., Steinke, D.T. & Schafheutle, E.I. A Systematic Review of Pharmacovigilance Systems in Developing Countries Using the WHO Pharmacovigilance Indicators. Ther Innov Regul Sci 56, 717–743 (2022). https://doi.org/10.1007/s43441-022-00415-y

DOI: https://doi.org/10.1007/s43441-022-00415-y