Abstract

The “voice areas” in the superior temporal cortex have been identified in both humans and non-human primates as selective to conspecific vocalizations only (i.e., expressed by members of the same species), suggesting old evolutionary roots across the primate lineage. With respect to non-human primate species, it remains unclear whether listening to vocal emotions from conspecifics leads to similar or different cerebral activations when compared to heterospecific calls (i.e., expressed by another primate species) triggered by the same emotion. Using functional Near Infrared Spectroscopy (fNIRS), a neuroimaging technique rarely employed in monkeys so far, the present study investigated temporal cortex activity in three lightly anesthetized female baboons (Papio anubis) during exposure to agonistic vocalizations from conspecifics and from other primates (chimpanzees, Pan troglodytes), and to energy-matched white noises used to control for this low-level acoustic feature. Permutation test analyses on the extracted oxyhemoglobin signal revealed large inter-individual differences in how conspecific and heterospecific vocal stimuli were processed in baboon brains, with a cortical response recorded in either the right or the left temporal cortex. No difference was found between emotional vocalizations and their energy-matched white noises. Despite the phylogenetic gap between Homo sapiens and African monkeys, modern humans and baboons both showed a highly heterogeneous brain process for the perception of vocal and emotional stimuli. The results of this study do not exclude that old evolutionary mechanisms for vocal emotional processing may be shared and inherited from our common ancestor.

Since the 1990s (George et al., 1995; Pihan et al., 1997), many neuroimaging studies have investigated the activity of the human temporal cortex during emotional voice processing. Functional Magnetic Resonance Imaging (fMRI) and, more recently, functional Near Infrared Spectroscopy (fNIRS) data have highlighted the role of the bilateral superior temporal gyrus (STG), superior temporal sulcus (STS), and middle temporal gyrus (MTG) in the processing of emotional prosody (Grandjean, 2020; Grandjean et al., 2005; Kotz et al., 2013; Plichta et al., 2011; Wildgruber et al., 2009; Zatorre & Belin, 2001) and more specifically in the recognition of positive and negative emotions (Bach et al., 2008; Frühholz & Grandjean, 2013; Johnstone et al., 2006; Zhang et al., 2018).

Despite calls for an evolutionary-based approach to emotions that considers the adaptive functions and the phylogenetic continuity of emotional expression and identification (Bryant, 2021; Greenberg, 2002), few comparative studies have investigated human temporal cortex activity during the recognition of emotional cues in human voices (conspecific) and in other species' vocalizations (heterospecific), especially those expressed by non-human primates (NHP), our closest relatives (Perelman et al., 2011). In the few studies published so far, fMRI conjunction analysis has identified commonalities in the human cerebral response to human and other animal vocalizations, including those of macaques (Macaca mulatta) and domestic cats (Felis catus). More activation was found in the medial posterior part of the human right orbitofrontal cortex (OFC) while listening to agonistic vocalizations expressed by both humans and other animals compared to affiliative ones (Belin et al., 2008). In contrast, Fritz and colleagues demonstrated a greater involvement of the human STS and the right planum temporale (PT) for the identification of human emotional voices contrasted with chimpanzee (Pan troglodytes) and then macaque calls (Fritz et al., 2018). Similar results were found in the STS and STG when human emotional voices were compared to various animal sounds, non-vocal stimuli, or non-biological noises (Bodin et al., 2021; Pernet et al., 2015), suggesting a sensitivity of the superior regions of the human temporal cortex, but not of the frontal cortex, for conspecific voices.

Is this sensitivity of the temporal cortex to emotional cues expressed by conspecifics found in NHP? In other words, are the cerebral mechanisms of vocal emotion perception shared across primate species, or has the auditory cortex of Homo sapiens evolved differently?

The previous literature on primates emphasizes brain continuity between humans and NHP for the auditory processing of conspecific emotions. For instance, fMRI studies in macaques have revealed a greater involvement of the STG for the perception of conspecific emotional calls compared to heterospecific ones including calls from other primate and non-primate species, environmental sounds and scrambled vocalizations (Joly et al., 2012; Ortiz-Rios et al., 2015; Petkov et al., 2008). Following this, positron emission tomography (PET scan) studies have shown the predominant role of the right PT in chimpanzees (Taglialatela et al., 2009) and of the STS in macaques (Gil-da-Costa et al., 2004) for the processing of conspecific emotional calls. Additionally, neurobiological findings in macaques and marmosets (Callithrix jacchus) have suggested a greater involvement of the STG and of the primary auditory cortex in the passive listening of emotional conspecific calls compared to environmental sounds, scrambled, or time-reversed vocalizations (Belin, 2006; Ghazanfar & Hauser, 2001; Poremba et al., 2004). Overall, as for humans, the literature in NHP suggests a sensitivity of the great ape and monkey temporal cortex for the processing of conspecific emotional vocalizations.

Despite these results, the question of the specific status of conspecific emotions in NHP remains poorly explored with respect to heterospecific vocalizations. In particular, because of the species-dependent results in humans highlighted above and the phylogenetic proximity across primate species, it seems necessary to include heterospecific stimuli from other NHP to reconstruct the phylogenetic evolution of primate vocal emotion processing (Bryant, 2021).

The present study investigated temporal cortex involvement in three female baboons, Talma, Rubis, and Chet, during exposure to conspecific vs. heterospecific agonistic vocalizations, using fNIRS. Building on a growing interest over the past decade (Boas et al., 2014; Pan et al., 2019), we used fNIRS because of its non-invasiveness, its low sensitivity to motion artifacts (Balardin et al., 2017), and its suitability for comparative research (Debracque et al., 2021; Fuster et al., 2005; Kim et al., 2017; Lee et al., 2017; Wakita et al., 2010). In line with the existing literature on NHP and humans suggesting a sensitivity of the primate temporal cortex for conspecific calls, we expected (i) more activation in the temporal cortex during passive listening to baboon sounds compared to chimpanzee stimuli, and (ii) a greater involvement of the temporal cortex for the perception of agonistic conspecific vocalizations in comparison to the other sounds.

Method

Subjects

The few existing studies using fNIRS in NHP mostly include a single subject (Fuster et al., 2005; Wakita et al., 2010). Three healthy female baboons (Talma, 13.5 years old; Rubis, 18.4 years old; and Chet, 11.8 years old) were included in the present study, in conjunction with their yearly health check-up; this sample size was consistent with prior work on the perception of affective stimuli by female macaques (Lee et al., 2017). In addition, as male baboons have large and thick masticatory muscles above their temporal cortex, they were excluded from the experimental protocol. Sexual dimorphism being particularly pronounced in baboons (Phillips-Conroy & Jolly, 1981), the female sex was assigned to the subjects based on their facial morphology and red buttocks. Moreover, preventing any ambiguity about the subjects’ sex, the three female baboons had already given birth to offspring that they breastfed. Furthermore, based on the annual health assessment and the daily behavioral surveys made by the veterinary and animal welfare staff, the subjects had normal hearing abilities and did not present a structural neurological impairment (confirmed with respective T1w anatomical brain images, 0.7 × 0.7 × 0.7 resolution, collected in vivo under anesthesia in the 3 Tesla Bruker MRI machine). All procedures were approved by the “C2EA-71 Ethical Committee of neurosciences” (INT Marseille) and performed in accordance with the relevant French law, CNRS guidelines, and the European Union regulations. The subjects were born in captivity and housed in social groups at the Station de Primatologie, in which they have free access to both outdoor and indoor areas. All enclosures are enriched by wooden and metallic climbing structures as well as substrate on the ground to favor foraging behaviors. Water is available ad libitum, and monkey pellets, seeds, fresh fruits, and vegetables are given every day.
The three subjects were lightly anesthetized with propofol and passively exposed to auditory stimuli as described below (see also Debracque et al., 2021 for the complete protocol).

Stimuli

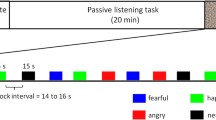

Auditory stimulations consisted of agonistic vocalizations produced by baboon (conspecific—see Fig. 1a) and chimpanzee (heterospecific—see Fig. 1b) individuals as well as energy-matched white noises to control for this low-level acoustic feature and for its unfolding (i.e., the temporal structure of energy of the vocalizations). Aggressor screams and distress calls expressed in an agonistic (i.e., conflictual) context are commonly used in the literature to investigate affective states associated with threat and distress respectively in primate vocalizations (Briefer, 2012; Kret et al., 2020). More specifically, studies on the baboons’ vocal repertoire have shown the link between agonistic vocalizations produced during conflicts and the threatening or distressful emotional state of the caller (Kemp et al., 2017; Seyfarth & Cheney, 2009).

Each auditory stimulus had a duration of 20 s and was repeated six times (see Debracque et al., 2021 for more details). The auditory stimuli were pseudo-randomized, alternating between vocalizations and white noises, and were separated by 15 s of silence. Additionally, auditory stimulations were broadcast either binaurally or monaurally in the right or left ear.

fNIRS

Recordings

Brain activations were measured using two light and wireless fNIRS devices (Portalite, Artinis Medical Systems B.V., Elst, The Netherlands). Based on tissue transillumination (Bright, 1831), fNIRS uses near-infrared light to measure blood oxygenation changes (e.g., Hoshi, 2016; Jöbsis, 1977) related to the hemodynamic response function, which comprises oxyhemoglobin (O2Hb) and deoxyhemoglobin. fNIRS is a non-invasive, fully wearable technique with low sensitivity to motion artifacts (Balardin et al., 2017). The fNIRS optical probes were placed on the right and left temporal cortices of the subjects using T1 MRI scanner images previously acquired at the Station de Primatologie on baboons (see Fig. 2). Data were obtained at 50 Hz with two wavelengths (760 and 850 nm) using three measurements, i.e., channels, per hemisphere (ch1, ch2, ch3) with three inter-probe distances (3, 3.5, and 4 cm) investigating three different cortical depths (1.5, 1.7, and 2 cm, respectively).
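For reference, the conversion from measured light attenuation to hemoglobin concentration changes is commonly written as the modified Beer-Lambert law. The relation below is a sketch in generic notation; the symbols are introduced here for illustration only (ΔOD: change in optical density at wavelength λ; ε: molar extinction coefficient; d: inter-probe distance; DPF: differential pathlength factor), and the coefficient values applied to the baboon data are not specified in this section.

```latex
\[
\Delta OD(\lambda) \;=\; \Bigl( \varepsilon_{\mathrm{O_2Hb}}(\lambda)\,\Delta[\mathrm{O_2Hb}]
 \;+\; \varepsilon_{\mathrm{HHb}}(\lambda)\,\Delta[\mathrm{HHb}] \Bigr)\; d \;\mathrm{DPF}(\lambda)
\]
```

Writing this relation once for each of the two wavelengths (760 and 850 nm) gives two equations in two unknowns, which can be solved for the O2Hb and deoxyhemoglobin concentration changes in each channel.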

fNIRS optode and channel locations according to the T1 MRI template of 89 baboons (Love et al., 2016). Blue and green crosses represent optical receivers and transmitters, respectively. Ch1, Ch2, and Ch3 indicate the three channels on the right and left temporal cortex

To reduce the potentially disturbing impact of the fNIRS protocol on the subjects, each experimental session was planned during the baboons’ routine health inspection at the Station de Primatologie. As part of the health check, subjects were isolated from their social group and anesthetized with an intramuscular injection of ketamine (5 mg/kg; Ketamine 1000®) and medetomidine (50 µg/kg; Domitor®). Then sevoflurane (Sevotek®) at 3 to 5% and atipamezole (250 µg/kg; Antisedan®) were administered before recordings. Each baboon was placed in ventral decubitus position on the table, and the head of the individual was maintained using foam positioners, cushions, and Velcro strips to remain straight and to reduce potential motion occurrences. Vital functions were monitored (SpO2, respiratory rate, ECG, EtCO2, T°), and a drip of NaCl was put in place during the entire anesthesia. Before fNIRS recordings, temporal areas on the baboons’ scalps were shaved and sevoflurane inhalation was stopped. Subjects were further sedated with a minimal amount of intravenous propofol (Propovet®), given as a bolus of around 2 mg/kg every 10 to 15 min or as an infusion at a rate of 0.1–0.4 mg/kg/min. After the recovery period, subjects were put back in their social group and monitored by the veterinary staff.

Analysis

The SPM_fNIRS toolbox (Tak et al., 2016) and custom-made code in Matlab 7.4 R2009b (The MathWorks Inc., 2009) were used to perform first-level analysis on raw fNIRS data following this procedure: (i) O2Hb concentration changes were calculated using the Beer-Lambert law (Delpy et al., 1988); (ii) motion artifacts were removed manually in each individual and each channel. In total, 10 s (1.3%) were removed from the O2Hb signal of Rubis and 35 s (4.8%) for Talma and Chet; (iii) a low-pass filter based on the HRF (Friston et al., 2000) was applied to reduce physiological confounds; (iv) a baseline correction was applied by subtracting the pre-stimulus baseline from the post-stimulus O2Hb concentration changes of each trial; and (v) the O2Hb signal was averaged for Talma in a window of 4 to 12 s post-stimulus onset for each trial, and for Rubis and Chet in a window of 2 to 8 s post-stimulus onset, in order to select the range of maximum O2Hb concentration changes following Debracque et al. (2021). Briefly, the difference between these windows is explained by the presence of tachycardia episodes for both Rubis and Chet during the experiment, involving an HRF almost twice as fast as the one found for Talma.
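Steps (iv) and (v) above can be illustrated with a minimal sketch. This is not the Matlab code used in the study: the function name `epoch_mean`, the 2 s baseline length, and the synthetic signal are assumptions made for illustration, while the 50 Hz sampling rate and the per-subject averaging windows are taken from the text.

```python
from statistics import mean

FS_HZ = 50  # sampling rate reported in the Recordings section

# Averaging windows (seconds after stimulus onset) as described in step (v).
WINDOWS = {"Talma": (4, 12), "Rubis": (2, 8), "Chet": (2, 8)}


def epoch_mean(signal, onset_s, window_s, baseline_s=2.0, fs=FS_HZ):
    """Baseline-correct one trial and average O2Hb in a post-onset window.

    signal     : list of O2Hb concentration changes, one value per sample
    onset_s    : stimulus onset, in seconds from the start of the recording
    window_s   : (start, end) of the averaging window, seconds after onset
    baseline_s : pre-stimulus baseline length (hypothetical value, not
                 given in the text)
    """
    onset = round(onset_s * fs)
    base_start = onset - round(baseline_s * fs)
    lo = onset + round(window_s[0] * fs)
    hi = onset + round(window_s[1] * fs)
    if base_start < 0 or hi > len(signal):
        raise ValueError("trial does not fit inside the recording")
    baseline = mean(signal[base_start:onset])
    # Subtracting the baseline from every sample and then averaging is
    # equivalent to subtracting it from the window mean.
    return mean(signal[lo:hi]) - baseline


if __name__ == "__main__":
    # Synthetic recording: 10 s at 0.5 µM, then a 20 s plateau at 1.5 µM.
    demo = [0.5] * (10 * FS_HZ) + [1.5] * (20 * FS_HZ)
    for subject, window in WINDOWS.items():
        print(subject, epoch_mean(demo, onset_s=10.0, window_s=window))
```

Keeping the window as a per-subject parameter mirrors the choice described above, where a faster HRF calls for an earlier and shorter averaging window.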

The second-level analysis was performed in RStudio (Team, 2020) using the permuco package (Frossard & Renaud, 2019). Using the same data sample, we previously demonstrated in Debracque et al. (2021) the robustness of our method and results regarding hemispheric lateralization following motor and auditory stimulations. In the present paper, we wanted to investigate a higher level of the brain process, i.e., the perception of conspecific and heterospecific sounds. Hence, in each hemisphere (right, left), we used non-parametric permutation tests with 5,000 iterations to assess O2Hb concentration changes for each subject (Talma, Rubis, Chet), as they enable repeated measures ANOVA in small sample sizes by multiplying the design and response variables (Kherad-Pajouh & Renaud, 2015). Stimuli (call, white noise), Species (baboon, chimpanzee), Channels (ch1, ch2, ch3), and Stimulus sides (right, left, both ears) were selected as fixed factors. As recommended, contrast effects of Species and Stimuli within channels were assessed with 2,000 permutations (Kherad-Pajouh & Renaud, 2015). Both p values for permutation F (pperm) and parametric F are reported.
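The logic of a permutation F test can be sketched as follows. This is a deliberately simplified illustration, assuming a single factor and free shuffling of observations across conditions; it does not reproduce the repeated-measures scheme implemented in permuco, nor the factorial design described above. The function names and example values are hypothetical.

```python
import random


def f_statistic(groups):
    """One-way ANOVA F statistic for a list of groups (lists of floats)."""
    values = [v for g in groups for v in g]
    n, k = len(values), len(groups)
    grand = sum(values) / n
    means = [sum(g) / len(g) for g in groups]
    ss_between = sum(len(g) * (m - grand) ** 2 for g, m in zip(groups, means))
    ss_within = sum((v - m) ** 2 for g, m in zip(groups, means) for v in g)
    if ss_within == 0:
        return float("inf") if ss_between > 0 else 0.0
    return (ss_between / (k - 1)) / (ss_within / (n - k))


def permutation_p(groups, n_perm=5000, seed=0):
    """Return (observed F, permutation p value) by shuffling condition labels."""
    rng = random.Random(seed)
    observed = f_statistic(groups)
    pooled = [v for g in groups for v in g]
    sizes = [len(g) for g in groups]
    threshold = observed * (1 - 1e-9)  # tolerate floating-point round-off
    hits = 0
    for _ in range(n_perm):
        rng.shuffle(pooled)
        shuffled, start = [], 0
        for size in sizes:
            shuffled.append(pooled[start:start + size])
            start += size
        if f_statistic(shuffled) >= threshold:
            hits += 1
    # Count the observed arrangement itself so that p is never exactly zero.
    return observed, (hits + 1) / (n_perm + 1)


if __name__ == "__main__":
    # Hypothetical per-trial O2Hb means for two conditions (six trials each).
    condition_a = [0.10, 0.20, 0.15, 0.12, 0.18, 0.11]
    condition_b = [0.14, 0.22, 0.09, 0.17, 0.13, 0.19]
    f_obs, p_perm = permutation_p([condition_a, condition_b])
    print(f"F = {f_obs:.2f}, pperm = {p_perm:.3f}")
```

Because the reference distribution is built from the data themselves, the p value does not rely on normality assumptions, which is one reason permutation tests are attractive for very small samples.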

Results

First, regarding the subject Talma, permutation tests revealed significant differences of O2Hb concentration changes between the three Channels for the right, F (2,3) = 161.5, p | pperm < 0.001 and left hemisphere, F (2,3) = 33.91, p | pperm < 0.001. The main factor Species was also found significant for the left hemisphere only, right: F (1,2) = 0.34, p | pperm = 0.057; left: F (1,2) = 4.24, p | pperm < 0.05. The main factor Stimuli as well as the interactions Stimuli*Species, Stimuli*Channels, Species*Channels, and Stimuli*Species*Channels did not reach significance within the right or left hemisphere (see Supplementary Material). Following these analyses, contrasts within each channel showed that for Talma’s left hemisphere, the perception of baboon sounds led to lower O2Hb concentration changes compared to chimpanzee sounds in ch1, ch2, and ch3, right: F (1,2) = 0.15, p | pperm = 0.69; left: F (1,2) = 4.07, p | pperm = 0.05—see Fig. 3a.

Right and left temporal cortex activations for the baboons a Talma, b Chet, and c Rubis during the perception of agonistic baboon (conspecific) and chimpanzee (heterospecific) vocalizations as well as their energy-matched white noises. The mean concentration changes of O2Hb (y axis) are represented in micromolar (µM) for each fNIRS channel (x axis). Colored dots and dark lines represent stimuli and confidence intervals, respectively. Results of the permutation tests within channels are shown with *p < 0.05; p = 0.07. The ggplot2 package (Wickham et al., 2021) in RStudio (Team, 2020) was used for visualizing the data

Second, for the subject Chet, significant differences of O2Hb concentration changes between the three Channels were found for the right, F (2,3) = 3.99, p | pperm < 0.05, and left hemisphere, F (2,3) = 25.68, p | pperm < 0.001. The main factor Species was also found significant for the right hemisphere only, right: F (1,2) = 5.03, p | pperm < 0.05; left: F (1,2) = 0.24, p | pperm = 0.62. Additionally, statistics showed a significant two-way interaction between Stimuli and Species for the left hemisphere only, right: F (1,2) = 0.01, p | pperm = 1; left: F (1,2) = 4.13, p | pperm = 0.05. The main factor Stimuli as well as the interactions Stimuli*Channels, Species*Channels, and Stimuli*Species*Channels did not reach significance within the right or left hemisphere (see Supplementary Material). Finally, contrasts within each channel revealed that Chet's right hemisphere tended to be more activated for the perception of baboon sounds compared to chimpanzee stimuli in ch1, ch2, and ch3, right: F (1,2) = 3.74, p | pperm = 0.07; left: F (1,2) = 0.22, p | pperm = 0.65, whereas her left hemisphere tended to be more activated during passive listening to baboon agonistic calls and chimpanzee white noises when compared to baboon white noises and chimpanzee agonistic calls in ch1, ch2, and ch3, right: F (1,2) = 0.01, p | pperm = 1; left: F (1,2) = 3.75, p | pperm = 0.07 (see Fig. 3b).

Third, for the subject Rubis, only a significant difference of O2Hb concentration changes between the three Channels was found for the right hemisphere, right: F (2,3) = 8.99, p | pperm < 0.001; left: F (2,3) = 2.15, p | pperm = 0.12 (see Fig. 3c). None of the other main effects or interactions reached significance within the left or right hemisphere (see Supplementary Material).

Note that for Talma, Rubis, and Chet, the factor Stimulus sides (sounds broadcast either binaurally or monaurally in the right or left ear) did not reach significance and thus does not statistically explain differences in O2Hb concentration changes underpinning the perception of baboon and chimpanzee sounds by our three subjects (see Debracque et al., 2021 for more details related to auditory asymmetries).

In sum, across the three channels, larger O2Hb concentration changes were found in Talma’s left temporal cortex for the perception of chimpanzee sounds compared to baboon stimuli. Conversely, for Chet, permutation analyses revealed larger O2Hb concentration changes in the right temporal cortex during passive listening to baboon sounds, especially baboon agonistic calls, compared to chimpanzee stimuli. Additionally, her left temporal cortex was more activated by baboon agonistic calls and chimpanzee white noises than by chimpanzee agonistic calls and baboon white noises. For Rubis, the perception of baboon and chimpanzee sounds did not affect the O2Hb concentration changes of her bilateral temporal cortex. Finally, with the exception of Rubis’ left hemisphere, the different cortical depths of the channels (1.5, 1.7, and 2 cm) had an impact on the O2Hb measurement of Talma, Chet, and Rubis’ temporal cortices.

Discussion

The present fNIRS study in baboons underlines a highly heterogeneous process for the auditory perception of conspecific and heterospecific affective stimuli.

Using valid statistical methods and analyses (Debracque et al., 2021; Lee et al., 2017) as well as the inclusion of three subjects instead of one, as is usually the case in the relevant literature (Fuster et al., 2005; Wakita et al., 2010), fNIRS data revealed large inter-individual differences between Talma and Chet for the significant main effect Species. The left temporal cortex of Talma was overall more activated for chimpanzee sounds (calls and white noise) than for baboon ones; in contrast, results were reversed in the right temporal cortex of Chet, where statistical analyses highlighted an increase of O2Hb concentration changes for the passive listening of agonistic baboon sounds, especially baboon agonistic calls compared to chimpanzee sounds. In addition, in her left temporal cortex, we documented an increase in O2Hb concentration led by both the perception of agonistic baboon calls and chimpanzee white noises. No significant results were found for Rubis, although this may have been a consequence of her constant tachycardia during the health check and experiment (see Debracque et al., 2021).

Beyond this apparent absence of congruence in our fNIRS data, our results in fact underline a highly heterogeneous process for auditory perception in baboons. Well known in neuroscience research with human participants, inter-individual differences are, for instance, at play in the location of voice-selective areas in the human auditory cortex (Belin et al., 2000). Along the same lines, Pernet and colleagues also demonstrated, using fMRI, a large inter-individual variability in the involvement of human STG and STS when listening to conspecific emotional voices compared to non-vocal sounds (Pernet et al., 2015). As in humans, the location of the voice-selective areas as well as the cortical response of the superior temporal cortex in baboons would be subject to a high heterogeneity. This claim is in line with the results of Xu et al. (2019), who have shown in five anesthetized and awake macaques large inter-individual variability in the functional connectivity of different cortical regions. Interestingly, the authors compared the macaques’ fMRI data to human data and concluded that heterogeneity in functional connectivity is similar across primate species. As has been argued for highly cited human neuroimaging studies (Szucs & Ioannidis, 2020), future non-invasive comparative research should include more subjects to take this inter-individual variability in brain mechanisms into consideration. The need to increase the number of NHP subjects to address limits in statistical power raises ethical issues related to animal welfare in the case of invasive neuroimaging studies. The development of fNIRS (Debracque et al., 2021) and of longitudinal studies in comparative neuroscience (Song et al., 2021) would thus help address parts of these challenges.

Although this contrast is often explored using fMRI or PET scan (e.g., Bodin et al., 2021; Gil-da-Costa et al., 2004; Ortiz-Rios et al., 2015), our fNIRS data remain inconclusive regarding the processing of conspecific vocalizations compared to white noises. In contrast, comparative research on macaques and marmosets (Callithrix jacchus) showed a greater sensitivity of the temporal cortex in its anterior part than in its posterior area for the contrast conspecific calls vs. control sounds (Bodin et al., 2021). Future fNIRS studies would help determine whether this lack of replication in our present study might be addressed by improving the probe location on the scalp of baboons and its spatial sensitivity to this expected effect.

Finally, out of the scope of this paper, permutation test analyses highlighted consistent fNIRS data for the channels 1 and 2 compared to channel 3 on both the right and left hemispheres. This result suggests that, for fNIRS in baboons, the best inter-probe distance to assess cortical activations in the temporal cortex would be between 3 and 3.5 cm. Interestingly, these distances are commonly used for fNIRS experiments in human adults (Ferrari & Quaresima, 2012).

To conclude, our fNIRS data mainly pointed out the existence of a highly heterogeneous process across individuals for the perception of conspecific emotional vocalizations in baboons. Given that such inter-individual heterogeneity is also well documented in humans, we thus do not exclude a potential phylogenetic continuity with non-human primates in the brain processing of conspecific emotional vocalizations, which might be inherited from our common ancestor. Our results remain inconclusive, however, notably with regard to the lack of contrasts between conspecific agonistic vocalizations and white noises (control sounds), which are often explored meaningfully using fMRI or PET scan (e.g., Bodin et al., 2021; Gil-da-Costa et al., 2004; Ortiz-Rios et al., 2015). This highlights one of the limitations of our study. fNIRS, PET scan, and fMRI all measure hemodynamic changes; however, the latter two have a much higher spatial resolution (Gosseries et al., 2008) than fNIRS (Scholkmann et al., 2014). Another limitation is that our experiment focused on baboons, and it is unclear whether the results will replicate in other NHP species such as Central and South American monkeys. In fact, Fitch and Braccini (2013) have already suggested differences between monkeys in terms of mechanisms for the processing of conspecific and heterospecific vocalizations. A final limitation of our study is that only agonistic vocalizations were included in the present fNIRS protocol. Similarly to humans, NHP might have some distinctive brain mechanisms for negative and positive emotions (e.g., Davidson, 1992; Frühholz & Grandjean, 2013; Zhang et al., 2018).

Overall, our study does not exclude the existence of common evolutionary roots for auditory processing across primate species to explain the inter-individual variability generally reported in those studies and underlines the importance of comparative research in monkeys to understand brain mechanisms at play in modern humans.

References

Bach, D., Grandjean, D., Sander, D., Herdener, M., Strik, W., & Seifritz, E. (2008). The effect of appraisal level on processing of emotional prosody in meaningless speech. NeuroImage, 42, 919–927. https://doi.org/10.1016/j.neuroimage.2008.05.034

Balardin, J. B., Zimeo, G. A., Morais, R. A., Furucho, L. T., Vanzella, P., Biazoli, C., & Sato, J. R. (2017). Imaging brain function with functional near-infrared spectroscopy in unconstrained environments. Frontiers in Human Neuroscience, 11. https://doi.org/10.3389/fnhum.2017.00258

Barreda, S. (2015). phonTools: Tools for phonetic and acoustic analyses [computer program]. Version 0.2–2.1. https://CRAN.R-project.org/package=phonTools. Accessed 27 Sept 2021.

Belin, P. (2006). Voice processing in human and non-human primates. Philosophical Transactions of the Royal Society B: Biological Sciences, 361(1476), 2091–2107. https://doi.org/10.1098/rstb.2006.1933

Belin, P., Zatorre, R. J., Lafaille, P., Ahad, P., & Pike, B. (2000). Voice-selective areas in human auditory cortex. Nature, 403(6767), 309–312. https://doi.org/10.1038/35002078

Belin, P., Fecteau, S., Charest, I., Nicastro, N., Hauser, M. D., & Armony, J. L. (2008). Human cerebral response to animal affective vocalizations. Proceedings. Biological Sciences, 275(1634), 473–481. https://doi.org/10.1098/rspb.2007.1460

Boas, D. A., Elwell, C. E., Ferrari, M., & Taga, G. (2014). Twenty years of functional near-infrared spectroscopy: Introduction for the special issue. NeuroImage, 85, 1–5. https://doi.org/10.1016/j.neuroimage.2013.11.033

Bodin, C., Trapeau, R., Nazarian, B., Sein, J., Degiovanni, X., Baurberg, J., Rapha, E., Renaud, L., Giordano, B. L., & Belin, P. (2021). Functionally homologous representation of vocalizations in the auditory cortex of humans and macaques. Current Biology: CB, 31(21), 4839-4844.e4. https://doi.org/10.1016/j.cub.2021.08.043

Briefer, E. F. (2012). Vocal expression of emotions in mammals: mechanisms of production and evidence. Journal of Zoology, 288(1), 1–20.

Bright, R. (1831). Reports of medical cases selected with a view of illustrating the symptoms and cure of diseases by reference to morbid anatomy, Case Ccv. Diseases of the Brain and Nervous System, 2(3), 431.

Bryant, G. A. (2021). The evolution of human vocal emotion. Emotion Review, 13(1), 25–33. https://doi.org/10.1177/1754073920930791

Davidson, R. J. (1992). Anterior cerebral asymmetry and the nature of emotion. Brain and Cognition, 20(1), 125–151. https://doi.org/10.1016/0278-2626(92)90065-T

Debracque, C., Gruber, T., Lacoste, R., Grandjean, D., & Meguerditchian, A. (2021). Validating the use of functional near-infrared spectroscopy in monkeys: The case of brain activation lateralization in Papio anubis. Behavioural Brain Research, 403, 113133. https://doi.org/10.1016/j.bbr.2021.113133

Delpy, D. T., Cope, M., van der Zee, P., Arridge, S., Wray, S., & Wyatt, J. (1988). Estimation of optical pathlength through tissue from direct time of flight measurement. Physics in Medicine and Biology, 33(12), 1433–1442.

Ferrari, M., & Quaresima, V. (2012). A brief review on the history of human functional near-infrared spectroscopy (fNIRS) development and fields of application. NeuroImage, 63(2), 921–935. https://doi.org/10.1016/j.neuroimage.2012.03.049

Fitch, W. T., & Braccini, S. N. (2013). Primate laterality and the biology and evolution of human handedness: A review and synthesis. Annals of the New York Academy of Sciences, 1288, 70–85. https://doi.org/10.1111/nyas.12071

Friston, K. J., Josephs, O., Zarahn, E., Holmes, A. P., Rouquette, S., & Poline, J. (2000). To smooth or not to smooth? Bias and efficiency in fMRI time-series analysis. NeuroImage, 12(2), 196–208. https://doi.org/10.1006/nimg.2000.0609

Fritz, T., Mueller, K., Guha, A., Gouws, A., Levita, L., Andrews, T. J., & Slocombe, K. E. (2018). Human behavioural discrimination of human, chimpanzee and macaque affective vocalisations is reflected by the neural response in the superior temporal sulcus. Neuropsychologia, 111, 145–150. https://doi.org/10.1016/j.neuropsychologia.2018.01.026

Frossard, J., & Renaud, O. (2019). Permutation tests for regression, ANOVA and comparison of signals. The Permuco Package, 32.

Frühholz, S., & Grandjean, D. (2013). Multiple subregions in superior temporal cortex are differentially sensitive to vocal expressions: A quantitative meta-analysis. Neuroscience and Biobehavioral Reviews, 37(1), 24–35. https://doi.org/10.1016/j.neubiorev.2012.11.002

Fuster, J., Guiou, M., Ardestani, A., Cannestra, A., Sheth, S., Zhou, Y.-D., Toga, A., & Bodner, M. (2005). Near-infrared spectroscopy (NIRS) in cognitive neuroscience of the primate brain. NeuroImage, 26(1), 215–220. https://doi.org/10.1016/j.neuroimage.2005.01.055

George, M. S., Ketter, T. A., Parekh, P. I., Horwitz, B., Herscovitch, P., & Post, R. M. (1995). Brain activity during transient sadness and happiness in healthy women. The American Journal of Psychiatry, 152(3), 341–351. https://doi.org/10.1176/ajp.152.3.341

Ghazanfar, A. A., & Hauser, M. D. (2001). The auditory behaviour of primates: A neuroethological perspective. Current Opinion in Neurobiology, 11(6), 712–720. https://doi.org/10.1016/S0959-4388(01)00274-4

Gil-da-Costa, R., & Hauser, M. D. (2006). Vervet monkeys and humans show brain asymmetries for processing conspecific vocalizations, but with opposite patterns of laterality. Proceedings of the Royal Society B: Biological Sciences, 273(1599), 2313–2318. https://doi.org/10.1098/rspb.2006.3580

Gil-da-Costa, R., Braun, A., Lopes, M., Hauser, M. D., Carson, R. E., Herscovitch, P., & Martin, A. (2004). Toward an evolutionary perspective on conceptual representation: Species-specific calls activate visual and affective processing systems in the macaque. Proceedings of the National Academy of Sciences, 101(50), 17516–17521. https://doi.org/10.1073/pnas.0408077101

Gosseries, O., Demertzi, A., Noirhomme, Q., Tshibanda, J., Boly, M., Op de Beeck, M., Hustinx, R., Maquet, P., Salmon, E., Moonen, G., Luxen, A., Laureys, S., & De Tiège, X. (2008). Functional neuroimaging (fMRI, PET and MEG): What do we measure? Revue Medicale De Liege, 63(5–6), 231–237.

Grandjean, D. (2020). Brain networks of emotional prosody processing. Emotion Review. https://doi.org/10.1177/1754073919898522

Grandjean, D., Sander, D., Pourtois, G., Schwartz, S., Seghier, M. L., Scherer, K. R., & Vuilleumier, P. (2005). The voices of wrath: Brain responses to angry prosody in meaningless speech. Nature Neuroscience, 8(2), 145–146. https://doi.org/10.1038/nn1392

Greenberg, L. S. (2002). Evolutionary perspectives on emotion: Making sense of what we feel. Journal of Cognitive Psychotherapy, 16(3), 331–347. https://doi.org/10.1891/jcop.16.3.331.52517

Hoshi, Y. (2016). Hemodynamic signals in fNIRS. Progress in Brain Research, 225, 153–179. https://doi.org/10.1016/bs.pbr.2016.03.004

Jöbsis, F. F. (1977). Noninvasive, infrared monitoring of cerebral and myocardial oxygen sufficiency and circulatory parameters. Science (New York, N.Y.), 198(4323), 1264–1267.

Johnstone, T., van Reekum, C. M., Oakes, T. R., & Davidson, R. J. (2006). The voice of emotion: An fMRI study of neural responses to angry and happy vocal expressions. Social Cognitive and Affective Neuroscience, 1(3), 242–249. https://doi.org/10.1093/scan/nsl027

Joly, O., Pallier, C., Ramus, F., Pressnitzer, D., Vanduffel, W., & Orban, G. A. (2012). Processing of vocalizations in humans and monkeys: A comparative fMRI study. NeuroImage, 62(3), 1376–1389. https://doi.org/10.1016/j.neuroimage.2012.05.070

Kemp, C., Rey, A., Legou, T., Boë, L.-J., Berthommier, F., Becker, Y. R., & Fagot, J. R. (2017). Vocal repertoire of captive Guinea baboons (Papio papio). In L.-J. Boë, J. Fagot, P. Perrier, & J.-L. Schwartz (Eds.), Origins of human language: Continuities and discontinuities with nonhuman primates, Speech Production and Perception (pp. 15–58). Peter Lang. https://doi.org/10.3726/b12405

Kherad-Pajouh, S., & Renaud, O. (2015). A general permutation approach for analyzing repeated measures ANOVA and mixed-model designs. Statistical Papers, 56(4), 947–967. https://doi.org/10.1007/s00362-014-0617-3

Kim, H. Y., Seo, K., Jeon, H. J., Lee, U., & Lee, H. (2017). Application of functional near-infrared spectroscopy to the study of brain function in humans and animal models. Molecules and Cells, 40(8), 523–532. https://doi.org/10.14348/molcells.2017.0153

Kotz, S. A., Kalberlah, C., Bahlmann, J., Friederici, A. D., & Haynes, J.-D. (2013). Predicting vocal emotion expressions from the human brain. Human Brain Mapping, 34(8), 1971–1981. https://doi.org/10.1002/hbm.22041

Kret, M. E., Prochazkova, E., Sterck, E. H. M., & Clay, Z. (2020). Emotional expressions in human and non-human great apes. Neuroscience & Biobehavioral Reviews. https://doi.org/10.1016/j.neubiorev.2020.01.027

Lee, Y.-A., Pollet, V., Kato, A., & Goto, Y. (2017). Prefrontal cortical activity associated with visual stimulus categorization in non-human primates measured with near-infrared spectroscopy. Behavioural Brain Research, 317, 327–331. https://doi.org/10.1016/j.bbr.2016.09.068

Love, S. A., Marie, D., Roth, M., Lacoste, R., Nazarian, B., Bertello, A., Coulon, O., Anton, J.-L., & Meguerditchian, A. (2016). The average baboon brain: MRI templates and tissue probability maps from 89 individuals. NeuroImage, 132, 526–533. https://doi.org/10.1016/j.neuroimage.2016.03.018

Ortiz-Rios, M., Kuśmierek, P., DeWitt, I., Archakov, D., Azevedo, F. A. C., Sams, M., Jääskeläinen, I. P., Keliris, G. A., & Rauschecker, J. P. (2015). Functional MRI of the vocalization-processing network in the macaque brain. Frontiers in Neuroscience, 9. https://doi.org/10.3389/fnins.2015.00113

Pan, Y., Borragán, G., & Peigneux, P. (2019). Applications of functional near-infrared spectroscopy in fatigue, sleep deprivation, and social cognition. Brain Topography, 32(6), 998–1012. https://doi.org/10.1007/s10548-019-00740-w

Perelman, P., Johnson, W. E., Roos, C., Seuánez, H. N., Horvath, J. E., Moreira, M. A. M., Kessing, B., Pontius, J., Roelke, M., Rumpler, Y., Maria, P. C., Schneider, A. S., O’Brien, S. J., & Pecon-Slattery, J. (2011). A molecular phylogeny of living primates. PLOS Genetics, 7(3), e1001342. https://doi.org/10.1371/journal.pgen.1001342

Pernet, C. R., McAleer, P., Latinus, M., Gorgolewski, K. J., Charest, I., Bestelmeyer, P. E. G., Watson, R. H., Fleming, D., Crabbe, F., Valdes-Sosa, M., & Belin, P. (2015). The human voice areas: Spatial organization and inter-individual variability in temporal and extra-temporal cortices. NeuroImage, 119, 164–174. https://doi.org/10.1016/j.neuroimage.2015.06.050

Petkov, C. I., Kayser, C., Steudel, T., Whittingstall, K., Augath, M., & Logothetis, N. K. (2008). A voice region in the monkey brain. Nature Neuroscience, 11(3), 367–374. https://doi.org/10.1038/nn2043

Phillips-Conroy, J. E., & Jolly, C. J. (1981). Sexual dimorphism in two subspecies of Ethiopian baboons (Papio hamadryas) and their hybrids. American Journal of Physical Anthropology, 56(2), 115–129. https://doi.org/10.1002/ajpa.1330560203

Pihan, H., Altenmüller, E., & Ackermann, H. (1997). The cortical processing of perceived emotion: A DC-potential study on affective speech prosody. NeuroReport, 8(3), 623–627.

Plichta, M. M., Gerdes, A. B. M., Alpers, G. W., Harnisch, W., Brill, S., Wieser, M. J., & Fallgatter, A. J. (2011). Auditory cortex activation is modulated by emotion: A functional near-infrared spectroscopy (fNIRS) study. NeuroImage, 55(3), 1200–1207. https://doi.org/10.1016/j.neuroimage.2011.01.011

Poremba, A., Malloy, M., Saunders, R. C., Carson, R. E., Herscovitch, P., & Mishkin, M. (2004). Species-specific calls evoke asymmetric activity in the monkey’s temporal poles. Nature, 427(6973), 448–451. https://doi.org/10.1038/nature02268

Scholkmann, F., Kleiser, S., Metz, A. J., Zimmermann, R., Pavia, J. M., Wolf, U., & Wolf, M. (2014). A review on continuous wave functional near-infrared spectroscopy and imaging instrumentation and methodology. NeuroImage, 85(Pt 1), 6–27. https://doi.org/10.1016/j.neuroimage.2013.05.004

Seyfarth, R. M., & Cheney, D. L. (2009). Agonistic and affiliative signals: Resolutions of conflict. In Encyclopedia of Neuroscience (pp. 223–226). Elsevier.

Song, X., García-Saldivar, P., Kindred, N., Wang, Y., Merchant, H., Meguerditchian, A., Yang, Y., Stein, E. A., Bradberry, C. W., Hamed, S. B., Jedema, H. P., & Poirier, C. (2021). Strengths and challenges of longitudinal non-human primate neuroimaging. NeuroImage, 236, 118009. https://doi.org/10.1016/j.neuroimage.2021.118009

Szucs, D., & Ioannidis, J. P. A. (2020). Sample size evolution in neuroimaging research: An evaluation of highly-cited studies (1990–2012) and of latest practices (2017–2018) in high-impact journals. NeuroImage, 221, 117164. https://doi.org/10.1016/j.neuroimage.2020.117164

Taglialatela, J. P., Russell, J. L., Schaeffer, J. A., & Hopkins, W. D. (2009). Visualizing vocal perception in the chimpanzee brain. Cerebral Cortex (New York, NY), 19(5), 1151–1157. https://doi.org/10.1093/cercor/bhn157

Tak, S., Uga, M., Flandin, G., Dan, I., & Penny, W. D. (2016). Sensor space group analysis for fNIRS data. Journal of Neuroscience Methods, 264, 103–112. https://doi.org/10.1016/j.jneumeth.2016.03.003

RStudio Team. (2020). RStudio: Integrated development for R. RStudio, Inc.

The MathWorks Inc. (2009). MATLAB (Version 7.9 (R2009b)).

Wakita, M., Shibasaki, M., Ishizuka, T., Schnackenberg, J., Fujiawara, M., & Masataka, N. (2010). Measurement of neuronal activity in a macaque monkey in response to animate images using near-infrared spectroscopy. Frontiers in Behavioral Neuroscience, 4, 31. https://doi.org/10.3389/fnbeh.2010.00031

Wickham, H., Chang, W., Henry, L., Pedersen, T. L., Takahashi, K., Wilke, C., Woo, K., Yutani, H., Dunnington, D., & RStudio. (2021). ggplot2: Create elegant data visualisations using the grammar of graphics [computer program]. Version 3.3.5. https://CRAN.R-project.org/package=ggplot2. Accessed 02 Oct 2021.

Wildgruber, D., Ethofer, T., Grandjean, D., & Kreifelts, B. (2009). A cerebral network model of speech prosody comprehension. International Journal of Speech-Language Pathology, 11(4), 277–281. https://doi.org/10.1080/17549500902943043

Xu, T., Sturgeon, D., Ramirez, J. S. B., Froudist-Walsh, S., Margulies, D. S., Schroeder, C. E., Fair, D. A., & Milham, M. P. (2019). Interindividual variability of functional connectivity in awake and anesthetized rhesus macaque monkeys. Biological Psychiatry. Cognitive Neuroscience and Neuroimaging, 4(6), 543–553. https://doi.org/10.1016/j.bpsc.2019.02.005

Zatorre, R. J., & Belin, P. (2001). Spectral and temporal processing in human auditory cortex. Cerebral Cortex (New York, N.Y.: 1991), 11(10), 946–953. https://doi.org/10.1093/cercor/11.10.946

Zhang, D., Zhou, Y., & Yuan, J. (2018). Speech prosodies of different emotional categories activate different brain regions in adult cortex: An fNIRS study. Scientific Reports, 8(1), 218. https://doi.org/10.1038/s41598-017-18683-2

Acknowledgements

We thank the Swiss National Science Foundation and the European Research Council for funding this work. We warmly thank the veterinarian Pascaline Boitelle and the animal care staff, as well as Jeanne Caron-Guyon, Lola Rivoal, Théophane Piette, and Jérémy Roche, for their assistance during the recordings. CD also thanks Dr. Ben Meuleman for his wise advice on permutation test analyses.

Ethics declarations

Funding

Open access funding provided by University of Geneva. This work was supported by the Swiss National Science Foundation (SNSF, grant P1GEP1_181492 to CD), the European Research Council (grant 716931—GESTIMAGE—ERC-2016-STG to AM) as well as the French grant “Agence Nationale de la Recherche” (ANR-16-CONV-0002, ILCB to AM) and the Excellence Initiative of Aix-Marseille University (A*MIDEX to AM). TG was additionally supported by a career grant from the SNSF during the final writing of this article (grant PCEFP1_186832).

Conflict of Interest

The authors declare no competing interests.

Data Availability

Raw data are freely available at https://doi.org/10.26037/yareta:3h7qyho5p5dmtmwgqstii3apgy.

Code Availability

Custom MATLAB and RStudio code is available at https://yareta.unige.ch/deposit/73c79ecc-89ac-46e8-8022-54fa3f0c3cb7/detail/d9ff0c1d-dd7f-46c9-b2c6-84a6846c0f36/files.

Authors’ Contributions

Not applicable.

Ethics Approval

All animal procedures were approved by the “C2EA-71 Ethical Committee of neurosciences” (INT Marseille) under the application number APAFIS#13553–201802151547729 and were conducted at the Station de Primatologie CNRS (UPS 846, Rousset-Sur-Arc, France) within the agreement number C130877 for conducting experiments on vertebrate animals. All methods were performed in accordance with the relevant French law, CNRS guidelines, and the European Union regulations (Directive 2010/63/EU).

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Additional information

Handling Editor: Karen Bales

Adrien Meguerditchian and Didier Grandjean are joint senior authors.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Debracque, C., Gruber, T., Lacoste, R. et al. Cerebral Activity in Female Baboons (Papio anubis) During the Perception of Conspecific and Heterospecific Agonistic Vocalizations: a Functional Near Infrared Spectroscopy Study. Affec Sci 3, 783–791 (2022). https://doi.org/10.1007/s42761-022-00164-z

DOI: https://doi.org/10.1007/s42761-022-00164-z