Abstract

With the ever-increasing demand for urban mobility and the growth of the modern logistics sector, the vehicle population has grown steadily over the past several decades. One natural consequence of this growth is increased traffic congestion. Almost all metropolitan cities, including major ones such as Los Angeles, Beijing and New York, suffer from heavy traffic congestion. Statistics show that, in 2015, 43 cities in China suffered prolonged travel times of more than 1.5 h every day during rush hours. Meanwhile, traffic accidents are hampering economic development as well.

1 Introduction

1.1 Background

With the ever-increasing demand for urban mobility and the growth of the modern logistics sector, the vehicle population has grown steadily over the past several decades. One natural consequence of this growth is increased traffic congestion. Almost all metropolitan cities, including major ones such as Los Angeles, Beijing and New York, suffer from heavy traffic congestion. Statistics show that, in 2015, 43 cities in China suffered prolonged travel times of more than 1.5 h every day during rush hours. Meanwhile, traffic accidents are hampering economic development as well.

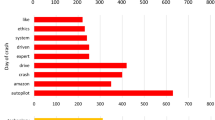

As shown in Fig. 1, the number of traffic accidents has remained high during the past five years, while vehicle ownership keeps increasing. It is estimated that at least one person dies from a traffic accident worldwide every minute. Besides traffic accidents and congestion, miscellaneous other issues cause discomfort. It is increasingly difficult to find an available parking spot during rush hours in urban areas. People usually spend more than 20 min searching for a parking spot, which is unproductive and becomes more frustrating as the search time increases. Environmental pollution is another big issue. With the increasing number of vehicles, vehicle emissions of \(\hbox {SO}_{2}\), NOx, CO, \(\hbox {CO}_{2}\), dust particles, smog and noise have reached or even exceeded levels comparable to those from industrial production and are harmful to the environment and human health.

Recent developments in artificial intelligence (AI) make it possible to build vehicles intelligent enough to help address the aforementioned problems.

1.2 What is AIV

Artificial intelligence for vehicles (AIV) aims at applying both practical and advanced AI techniques to vehicles so that vehicles can perform human-like or even superhuman behaviors [1, 2]. Algorithms such as deep neural networks are designed to mimic the working principle of the brain and are trained over large data sets to perform various tasks. Intelligent vehicles combine AI techniques such as environmental perception, map building and path planning and integrate them with multi-scale auxiliary driving services and other functions [1, 2], so that vehicles are able to make intelligent decisions. AIV focuses on the applications of artificial intelligence, machine learning and automatic control to vehicles, as depicted in Fig. 2.

1.3 Why do Vehicles Need AI

With rapid economic development, intelligent vehicles are urgently needed. Along with the sustained and rapid growth of car ownership, almost every country is facing severe traffic congestion, road safety and environmental pollution problems. Meanwhile, the number of fatal traffic accidents is increasing each year, and most of them are caused by human operating errors. With the continued growth of car ownership, the number of fatal traffic accidents is expected to grow. Advanced AI techniques can help address these problems. Figure 3 summarizes four main factors that make AI techniques an urgent need for vehicles.

(1) China's strategic economic needs. China, as the leading developing country, has been late in developing other innovative vehicle-related technologies, such as electric vehicles. However, the recent boom in AI techniques grants the country new opportunities to take the lead in developing AI-enabled vehicles.

(2) China artificial intelligence 2.0. China AI 2.0 gives strategic priority to the development of new trends in AI technologies, such as hybrid intelligence and multi-modal data fusion. Developing novel AI techniques for vehicles clearly aligns with this strategy.

(3) Societal needs of the Chinese automobile sector. China has a unique traffic situation. In urban areas, driving scenarios are so complex that it is very difficult for drivers to always make the right driving decision. This gives China a particularly strong need for AI-enabled vehicles which can react to complex, changing driving environments.

(4) Changes in the automobile business model. With the development of communication technologies, new business models for automobile companies, such as car sharing, Uber and DiDi, are emerging. Almost all of the new business models need AI techniques to support them and to reach optimized decisions.

1.4 State-of-the-Art of AIV

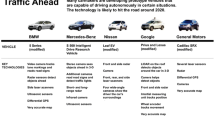

Currently, enterprises and universities all around the world have taken the initiative to make strategic investments in AIVs [3, 4]. National policies and regulations are being updated quickly to lift restrictions on the expected development. The USA, France, Britain, Germany, Japan, South Korea and other countries have developed a number of smart-car-related policies to promote the integration of intelligent vehicles with existing transportation systems. Traditional car manufacturers and technology companies have invested billions of dollars to support the development of AIVs.

1.4.1 Worldwide Government Strategies

The US Transportation Secretary announced $4 billion in funding over the next 10 years to support the testing and application of automated driving. At the same time, exemptions will be granted across the entire automotive industry, within two years, for 2500 intelligent vehicles with respect to the relevant provisions of existing traffic safety rules. In 2013, France launched the ‘New Industrial France’ strategy, which lists the development of automatic driving as one of its main focuses. In July 2016, the French ministries responsible for commerce and transport announced that they will remove the rules which restrict automatic driving. Germany has allowed road tests of Bosch’s automatic driving technology since 2013.

The Japanese government plans to allocate 34 billion yen (around $300 million) to construct an intelligent vehicle test site in Tsukuba Science City, hoping to put driverless automobiles into operation before the opening of the Tokyo Summer Olympics in 2020. The South Korean government plans to invest 40 billion won (about $33.92 million) in unmanned vehicles over the next 3 years. In August 2016, NuTonomy, operator of the world’s first driverless taxi to carry passengers, was also officially approved in Singapore.

1.4.2 Enterprise Strategies

In the enterprise field, intelligent and connected automobile technology is developing by leaps and bounds. Manufacturers, industry giants and emerging companies have displayed their latest automotive technology products and services; Tesla and Google, for example, have put automatic driving cars on the road for testing. At the same time, traditional car manufacturers are gradually advancing the fusion of ‘Smart + connected’ technologies.

1.4.3 Universities and Research Institutes

In universities and research institutes, automotive intelligent technology is making full use of the latest achievements in artificial intelligence. At the beginning of 2015, Carnegie Mellon University and Uber quietly set up a high-technology research and development center in Pittsburgh to research and develop automatic driving vehicles. Stanford University and Massachusetts Institute of Technology were awarded $50 million by Toyota Corporation for the development of fully automatic driving technology. In early 2016, the University of Cambridge developed the SegNet and PoseNet systems, which achieved a new breakthrough in perceiving objects around the car and in self-positioning. At the same time, the University of Oxford set up a company, Oxbotica, to develop software for unmanned vehicles.

1.4.4 China Strategies and Opportunities

Although China started relatively late, it has developed rapidly and is expected to take the lead in introducing national standards through top-level design. In June 2016, the national intelligent & connected vehicles (Shanghai) demonstration area for closed testing was opened. In 2016, the Society of Automotive Engineers of China released a 450-page automatic driving technology road map, which is expected to lay the foundation for the intelligent vehicle infrastructure communication standards in 2018.

1.5 Next-Generation AIV

With the rapid development of AI techniques and vehicle-related technologies, it can be foreseen that the next generation of AIV will see more standardization and that the related AI functionalities will be modular. Figure 6 shows the envisioned next-generation AIV framework. In the next 10–20 years, AIVs will be put into specific application scenarios and the related AI functionalities will be clearly defined. The AI functionalities will be divided into three parts, namely world models, planner and decision maker, and computing platform.

To realize vision-based automatic positioning at high speed, it is crucial to establish a high-precision map. World models aim at building the high-precision map for future AIVs; the planner and decision maker will perform path planning and other related driving decision-making functionalities; and the computing platform will provide the in-car computation environment for executing map building as well as decision making.

2 Survey on Artificial Intelligences

2.1 Introduction

Recently, deep learning (DL)-based perception, conception and decision making have become increasingly popular in artificial intelligence. LeCun et al. [5] applied deep learning, with deep convolutional networks and distributed representations, to image understanding and language processing. Mnih et al. [6] combined deep learning with reinforcement learning and achieved human-level control in games; Silver et al. [7] then created a computer Go program based on a combination of deep neural networks and tree search which can play at the level of the strongest human players. Deep reinforcement learning, as the core technology, demonstrated artificial intelligence in finite and fully defined domains, which is referred to as artificial normal intelligence (ANI). With ANI, AIV is able to achieve assisted driving.

With the breakthrough of human-level conception and computation technology, Lake et al. [8] proposed a computational model which can capture human learning abilities for handwritten characters from the world’s alphabets. Moser et al. [9] studied brain-like localization and navigation, work that was recognized with the Nobel Prize in Physiology or Medicine in 2014. Following this, brain-like spiking neural networks (SNNs), a new generation [10] of neural networks, are gradually entering the AI stage. Based on brain-like conception, artificial intelligence can be applied in different generalized domains with ability equal to that of humans, which is called artificial generalized intelligence (AGI). AGI makes advanced auxiliary driving and autonomous driving possible in AIV.

Human–machine-based artificial intelligence is also emerging. AI based on human–machine conceptual coupling has been used to play with young children in school [11]. Human–machine semi-physical coupling AI is a promising direction in AIV. Huang [12] utilized human–machine physical coupling AI for interactive learning in a human-powered augmentation lower exoskeleton [12, 13]. Human–machine-based AI is referred to as artificial super intelligence (ASI). With ASI, the machine will have the full ability to deal with transportation and mobility services, and may even surpass human intelligence in every domain.

AI with deep learning and reinforcement learning as the core technology can be divided into practical artificial intelligence (PAI) and advanced artificial intelligence (advanced AI). We introduce PAI and advanced AI in the following sections.

2.2 Practical Artificial Intelligence

The basic AI algorithms roughly include the following four: (1) The artificial neural network (ANN), namely the shallow neural network, is one of the most important basic AI algorithms. It consists of computational elements (neurons) that are heavily connected to each other. The number of network inputs can be much greater than in traditional architectures, which makes the network a useful tool for analyzing high-dimensional data. (2) Compared to ANN, the study of deep learning focuses on deep neural networks. Deep learning uses a cascade of many layers of nonlinear processing units for feature extraction and transformation and learns multiple levels of representations that correspond to different levels of abstraction. (3) Support vector machine (SVM) methods are among the most precise discriminative methods used in classification. (4) Simulated annealing is widely and successfully applied to production scheduling and control engineering.

As computers and robots become intelligent, smart computers are changing society. Practical artificial intelligence is now everywhere in our daily lives. Siri is a typical instance of practical artificial intelligence (PAI). It is a computer program that works as an intelligent personal assistant and knowledge navigator, part of Apple Inc.’s iOS, watchOS, macOS and tvOS operating systems. The feature uses a natural language user interface to answer questions, make recommendations and perform actions by delegating requests to a set of web services. It makes users’ lives more convenient and efficient. Another use case of PAI is Google Search. It relies on parallel computing, big data and deep learning algorithms to perform intelligent analysis of data and problems. Every time users search on Google, they effectively help train the deep learning models behind Google Search.
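The "cascade of many layers of nonlinear processing units" in item (2) can be illustrated with a minimal sketch. The layer sizes, the tanh nonlinearity and the random weights below are assumptions chosen for illustration only; no training is performed and this is not a model from the surveyed literature.

```python
import math
import random


def dense(inputs, weights, biases):
    """One fully connected layer followed by a tanh nonlinearity."""
    return [
        math.tanh(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def make_layer(n_in, n_out, rng):
    """Create random weights and zero biases for a layer (illustrative only)."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(x, layers):
    """Pass x through every layer, keeping each intermediate representation."""
    activations = [x]
    for weights, biases in layers:
        activations.append(dense(activations[-1], weights, biases))
    return activations


rng = random.Random(0)
sizes = [8, 6, 4, 2]  # hypothetical: 8 inputs, two hidden layers, 2 outputs
layers = [make_layer(n_in, n_out, rng) for n_in, n_out in zip(sizes, sizes[1:])]
acts = forward([0.5] * sizes[0], layers)
print([len(a) for a in acts])  # one representation per level of the cascade
```

Each entry of `acts` is one level of representation; a shallow ANN in the sense of item (1) corresponds to the same code with a single hidden layer.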

2.3 Advanced Artificial Intelligence

The advanced AI technologies include deep neural networks, recurrent neural networks, spiking neural networks, and transfer learning and reinforcement learning at multi-domain and multi-time levels.

Deep neural networks (DNNs), recurrent neural networks (RNNs) and transfer learning combined with reinforcement learning make breakthroughs in traditional AI applications. RNNs can deliver excellent performance in many tasks when trained to predict the next output token given the input and previous tokens. This can be applied successfully in machine translation, speech recognition and so on. Transfer learning enables the agent to reuse or transfer the knowledge learned from one task to another. The SNN is a brain-like simulator which can efficiently simulate bio-inspired spiking neural networks consisting of different neural models. SNNs can integrate event-driven and time-driven computation schemes. Because they resemble biological neural networks more faithfully, SNNs show promising capabilities for achieving performance similar to that of living brains, notably in pattern recognition tasks.
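As a rough illustration of the spiking idea, the sketch below simulates a single leaky integrate-and-fire neuron, one common simplified neuron model. The model choice, the function name `simulate_lif` and all parameter values are assumptions for illustration; the text above does not specify which neural models are used. The membrane potential is updated on a fixed time grid (time-driven), while a spike is emitted only when the threshold is crossed (an event).

```python
def simulate_lif(input_current, dt=1.0, tau=10.0, v_rest=0.0, v_thresh=1.0, v_reset=0.0):
    """Simulate one leaky integrate-and-fire neuron with forward Euler steps.

    Returns the list of time steps at which the neuron spiked and the
    membrane potential trace. All parameters are illustrative assumptions.
    """
    v = v_rest
    spikes = []
    trace = []
    for step, current in enumerate(input_current):
        # Leak toward the resting potential while integrating the input.
        v += dt / tau * (-(v - v_rest) + current)
        if v >= v_thresh:
            spikes.append(step)  # event: the neuron fires
            v = v_reset          # potential is reset after the spike
        trace.append(v)
    return spikes, trace


strong_spikes, _ = simulate_lif([2.0] * 100)  # input strong enough to fire
weak_spikes, _ = simulate_lif([0.5] * 100)    # input too weak to reach threshold
print(len(strong_spikes), len(weak_spikes))
```

With a constant input, the neuron fires at regular intervals when the input is strong enough and stays silent otherwise, which is the basic mechanism by which information is encoded in spike timing.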

2.4 State-of-the-Art of AI

Artificial intelligence has been applied in many practical fields. In March 2016, AlphaGo, developed by Google DeepMind, beat Lee Sedol. Beyond AlphaGo, the DeepMind team made important breakthroughs in many fields, such as speech synthesis, lip reading and the differentiable neural computer. In 2016, many companies and institutes became involved in the research and development of unmanned vehicles. In August 2016, NuTonomy put the first unmanned taxi in the world on the road and became the first company to open unmanned vehicles to the public. In September 2016, Google published an article introducing its new machine translation system, Google Neural Machine Translation, which achieved the largest improvements in machine translation so far. In October 2016, Microsoft published the article ‘Achieving Human Parity in Conversational Speech Recognition.’ The speech recognition system achieved a word error rate equivalent to or even lower than that of humans.

The core technology of AI is the combination of deep learning and reinforcement learning. Deep learning allows computational models that are composed of multiple processing layers to learn representations of data with multiple levels of abstraction. Systems combining deep learning and reinforcement learning produce impressive results in learning to play a variety of difficult games.

2.4.1 Deep Neural Networks

With the development of computing resources and pre-training technology, deep learning has made breakthroughs in artificial intelligence, including speech recognition, visual object recognition and detection, and other fields. At present, the typical deep learning models include convolutional neural networks (CNNs), recurrent neural networks (RNNs), deep belief networks (DBNs) and stacked autoencoders (SAEs).

Recently, using CNNs to automatically learn features has become a trend. VGG16 [14] was applied to extract features, and a cascade AdaBoost classifier was trained based on these features [15, 16]. Their good performance showed that CNNs have a strong ability to extract general and representative features without human intervention. However, these methods were always based on a rectangular window of a fixed size, so they first had to obtain proposals from other methods such as ACF [17], stixel [18, 19], edge boxes [20], BING [21], selective search [22], objectness [23] and CPMC [24]. Moreover, these methods were not end-to-end, and several processing stages had to be completed before the final results were produced. Although they achieved good performance, their sophisticated operations limit practical use and may be time-consuming as well. Bei Tong et al. [25] present an end-to-end network based on faster R-CNN and a neural cascade classifier for pedestrian detection. Unlike faster R-CNN, which only makes use of the last convolutional layer, they utilize features from multiple layers and feed them to a neural cascade classifier. Such an architecture makes more use of low-level features and implements a negative mining process within the network. Both factors are important in pedestrian detection. The classifier is jointly trained with the faster R-CNN in the unified network.

Furthermore, mediated perception approaches [26] involve multiple initial subcomponents for recognizing driving-relevant objects, such as lanes, traffic signs, traffic lights, cars and pedestrians [27]. The recognition results are then combined into a consistent world representation of the car’s immediate surroundings. Behavior reflex approaches construct a direct mapping from an image to a driving action. This idea dates back to the late 1980s, when [28, 29] used a neural network to construct a direct mapping from an image to steering angles. Chenyi Chen et al. [30] propose a direct perception approach for autonomous driving: an input image is mapped to a small number of key perception indicators which are directly related to the affordance of a road/traffic state for driving [31]. Their representation provides a compact yet complete description of the scene that enables a simple controller to drive autonomously. They train a deep CNN with 12 h of human driving and show that their model works well for driving a car in a very diverse set of virtual environments. The model structure of DNN and RNN is shown in Fig. 4.
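To make the direct perception idea concrete, the sketch below shows how a simple controller could act on a few perception indicators once they have been estimated. The indicator names, sign conventions, gains and the `simple_controller` function are hypothetical and chosen for illustration; they are not taken from [30], and the CNN that would estimate the indicators is omitted.

```python
from dataclasses import dataclass


@dataclass
class Affordance:
    """Hypothetical perception indicators that a CNN might estimate from one image."""
    heading_error: float   # rad, car heading minus road direction (positive = pointing left)
    lateral_offset: float  # m, position relative to lane center (positive = left of center)
    lead_distance: float   # m, gap to the vehicle ahead


def clamp(value, low, high):
    """Limit value to the interval [low, high]."""
    return max(low, min(high, value))


def simple_controller(a, k_heading=1.0, k_offset=0.2, cruise_speed=20.0, safe_gap=30.0):
    """Map indicators to (steering, target_speed); all gains are illustrative assumptions."""
    # Steer against both errors; a negative steering value means "turn right".
    steering = clamp(-k_heading * a.heading_error - k_offset * a.lateral_offset, -1.0, 1.0)
    # Reduce the target speed proportionally when the gap is below safe_gap.
    target_speed = cruise_speed * clamp(a.lead_distance / safe_gap, 0.0, 1.0)
    return steering, target_speed


centered = simple_controller(Affordance(0.0, 0.0, 100.0))
drifting_left = simple_controller(Affordance(0.05, 0.5, 15.0))
print(centered, drifting_left)
```

The point of the design is that the controller stays small and interpretable because the learned component outputs a handful of meaningful quantities instead of a raw steering command or a full scene reconstruction.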

2.4.2 Reinforcement Learning

Reinforcement learning (RL) as shown in Fig. 5 addresses the problem of a decision maker faced with a sequential decision problem and using evaluative feedback as a performance measure [32]. The general purpose of RL is to find a ‘good’ mapping that assigns ‘perceptions’ to ‘actions’ and classically addresses situations in which a single decision maker interacts with a stationary environment. The powerful methods and impressive results of RL [33, 34] have rendered this framework quite popular among the computer science and robotic communities, and recent years have witnessed increasing interest in extending RL methods to multi-agent problems. Markov games (also known as stochastic games) and several variations or specializations thereof have been used to model multi-agent RL problems [35]. Several researchers have applied single-agent RL methods (with adequate adaptations) to this multi-agent framework.
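The single-agent setting described above, one decision maker learning a mapping from perceptions to actions from evaluative feedback in a stationary environment, can be sketched with tabular Q-learning. The five-state corridor environment, the reward and the hyperparameters are toy assumptions made for illustration and do not come from the cited works.

```python
import random

N_STATES = 5      # states 0..4 on a line; state 4 is the goal
ACTIONS = (0, 1)  # 0 = move left, 1 = move right


def step(state, action):
    """Toy deterministic environment: reward 1 only when the goal is reached."""
    next_state = max(0, state - 1) if action == 0 else min(N_STATES - 1, state + 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn action values with epsilon-greedy exploration and random tie-breaking."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(200):  # cap on episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                best = max(q[state])
                action = rng.choice([a for a in ACTIONS if q[state][a] == best])
            next_state, reward, done = step(state, action)
            # Evaluative feedback: move the estimate toward reward plus discounted value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                break
    return q


q_table = q_learning()
policy = [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(N_STATES - 1)]
print(policy)  # greedy action for each non-terminal state
```

The learned table is the "mapping" in the text: for each perceived state, the greedy action is the one with the highest estimated value. Multi-agent extensions such as Markov games replace the single action with a joint action of all agents.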

Burden involved Nash-Q iterations, while retaining the convergence properties of the latter in most classes of games. In a somewhat related line of work, joint-action learners combine Q-learning with fictitious play in fully cooperative multi-agent MDPs [36]. Fictitious play was also combined with prioritized sweeping to address planning in adversarial scenarios [37]. Gradient-based learning policies are analyzed in detail in [38]. In another work, Bowling and Veloso [39] propose a policy-based learning method that applies policy hill-climbing with a varying learning step, using the principle of ‘win or learn fast’ (WoLF-PHC). Many other works on multi-agent learning systems can be found in the literature—see, for example, Sen and Wei [40].

With the deepening of research on reinforcement learning algorithms and theory, reinforcement learning has been widely used in practical engineering optimization and control. It has been applied successfully in nonlinear control, robot control, artificial intelligence, problem solving, combinatorial optimization and scheduling, communication and digital signal processing, multi-agent systems, pattern recognition, traffic control and other fields. In recent years, RL has shown great potential, especially in automatic driving technology for intelligent vehicles. For example, in 2016, the AlphaGo computer system combined RL algorithms with deep learning to play Go at a level exceeding that of top professional players, causing a sensation worldwide. Therefore, reinforcement learning, as a universal learning algorithm that can address the intelligent vehicle problem from perception to decision and control, will be more widely used in various fields of real life.

3 Artificial Intelligence for Vehicles

3.1 Introduction

Figure 6 shows the framework for applying AI techniques to assist vehicle development. As AI techniques develop from ANI to AGI and ASI, they will help the development of vehicles at different scales. In the intelligent vehicle field, AI will make vehicles more and more intelligent, from L1 to L4 as defined by SAE standards [41]. In the connected vehicle field, AI techniques will help connected vehicle technology move from in-car computation to cloud computation and realize real-time communication between vehicles and roadside units.

3.2 Autonomous Vehicles (AV)

3.2.1 Key Technologies in AV

In AV, driving environment perception, cognitive map building, path planning and strategy control are equally important tasks [42,43,44]. The overarching challenge is how to drive like a human being. Developing AV requires integrating real-time processing of multimodal high-dimensional data, high-precision cognitive map building and positioning technology, optimal path planning and decision-making control technology, and human–computer interaction and redundancy compensation technology. Recently, deep reinforcement learning techniques have been widely applied in AV [45, 46].

3.2.2 Current Prevailing AV Use Cases

In 2010, a team of seven Google driverless cars began road trials in California. In August 2012, Google announced that its more than ten computer-controlled driverless cars had safely traveled 480 thousand km. On May 8, 2013, the Nevada Motor Vehicle Administration officially issued the first unmanned vehicle license to Google. NuTonomy, a Singapore company, hopes to provide users with a driverless taxi service that is hailed by mobile phone. In 2016, the company’s test car successfully negotiated various obstacles and passed its first test in Singapore. The company will continue commercial testing of this kind of car in Singapore and plans to deploy thousands of driverless taxis in the city over the next few years. It uses algorithms for coordinating unmanned aerial vehicles (UAVs) to manage driverless cars; NuTonomy said that this will improve car efficiency, thereby reducing traffic congestion and carbon dioxide emissions. The NuTonomy algorithm contains a ‘formal logic’ function which gives the car flexibility, allowing it to violate less important traffic rules. For example, it can use complex judgment to pass double-parked cars without affecting traffic.

3.2.3 Key Barriers of AV

Among the main obstacles to widespread adoption of autonomous vehicles, in addition to the technological challenges, are disputes concerning liability; the time period needed to turn an existing stock of vehicles from nonautonomous to autonomous; resistance by individuals to forfeit control of their cars; consumer concern about the safety of driverless cars; implementation of legal framework and establishment of government regulations for self-driving cars; risk of loss of privacy and security concerns, such as hackers or terrorism; concerns about the resulting loss of driving-related jobs in the road transport industry; and risk of increased suburbanization as driving becomes faster and less onerous without proper public policies in place to avoid more urban sprawl.

3.3 Connected Vehicles (CV)

3.3.1 What are Connected Vehicles

An intelligent connected vehicle is equipped with advanced on-board sensors, controllers, actuators and other devices. It is a new generation of intelligent vehicle that integrates modern communication and network technology to achieve complex environmental perception, intelligent decision-making and control functions, and thereby energy saving, environmental protection and driving comfort. The car can realize inter- and intra-vehicle communication, as well as communication with the road traffic infrastructure, through equipment that fuses in-car networks and inter-vehicle networks to realize internal communication, car-to-car communication and vehicle–road communication (car connection with network center, intelligent transportation systems and other service centers) [47,48,49]. In this way, it achieves information exchange between the in-vehicle and external networks and solves the problem of information exchange between the vehicle and the environment. The key factors of AI in connected vehicles are shown in Fig. 7.

3.3.2 Key Benefits of Connected Vehicles

(1) Provide information sharing services to ensure safe and convenient travel

Connected vehicles [50, 51] can provide traffic reports and electronic maps through the GPS global satellite positioning system, reflecting current road conditions such as traffic congestion, complex road conditions and traffic safety, together with collision warning and route guidance. Drivers can thus be informed early of speed limits ahead of intersections and of the locations of traffic enforcement cameras, which helps ensure safe driving.

(2) Satellite positioning navigation and autodetection

Connected vehicles can determine the location and route of a stolen vehicle through GPS satellite positioning technology, in order to search for, track and recover the vehicle and arrest the thieves. In addition, vehicle performance and condition can be automatically monitored and transmitted, so that remote experts in different places can be consulted to guide vehicle maintenance.

(3) Road rescue and vehicle emergency warning system

If a traffic accident occurs while driving, the driver can contact the emergency services or a car service station through the emergency call button of the telematics system [52,53,54]. When the vehicle is in a dangerous situation, the driver can receive emergency warnings and emergency response plans issued by the road traffic management department, which helps ensure road safety and smooth road rescue.

3.3.3 New Technologies of CV

The new technologies of CV are composed of sensing, decision, control, communication positioning and data platform, mainly including the following aspects:

(1) Advanced sensing technology, which includes image recognition based on machine vision, detection of surrounding obstacles by radar (laser, millimeter wave, ultrasound), and detection and monitoring of the driver’s physiological status through flexible electronic and photonic devices.

(2) Communication, positioning [55] and mapping technology, which includes communication for the necessary information sharing and collaborative control among multiple intelligent connected vehicles, network security technology, mobile self-organizing network technology, high-precision positioning technology, and technology for constructing high-precision maps and local scenes.

(3) Intelligent decision technology, including risk situation modeling, risk warning and control priority division, multi-objective collaboration, vehicle trajectory planning, driver diversity analysis and human–computer interaction.

(4) Vehicle control technology [41, 56], which includes longitudinal motion control based on the drive and braking systems, lateral motion control based on the steering system, and vertical motion control based on integrated driving/braking/steering/chassis control and the suspension. At the same time, communication and on-board sensors can be used to achieve vehicle platooning and cooperative driving.

(5) Data platform technology, which includes nonrelational database schemas, efficient data storage and retrieval, association analysis and deep mining of big data, cloud operating systems and information security mechanisms.

3.3.4 Current Prevailing CV Use Cases

The China Association of Automobile Manufacturers (CAAM) defined CVs as vehicles that are equipped with advanced on-board sensors, controllers, actuators and other devices, and that integrate modern communication and network technology to achieve intelligent information exchange and sharing between the car and X (people, cars, roads, backend, etc.). With complex environmental awareness, intelligent decision-making, and collaborative control and execution functions, such vehicles can achieve safe, comfortable, energy-saving and efficient driving, and constitute a new generation of cars that can ultimately replace human operation [57, 58].

4 Key Technologies in AIV

4.1 World Model

The world model aims at providing a precise representation of the world. Precision is the key parameter for measuring the performance of a map for intelligent vehicles [59]. The authors of [60] propose to use multiple support regions (MSRs) of different sizes surrounding an interest point to choose the best scale of the support region. In [61], a novel method is proposed to enhance a driver’s situation awareness by dynamically providing a global view of the surroundings. At present, high-precision maps are sorted into two classes, namely ADAS and HAD. The accuracy of ADAS maps is on the scale of meters, while HAD maps can achieve centimeter-level accuracy. HAD maps are more precise than ADAS maps and contain more specific road information, such as lane and crosswalk lines. This allows the real road scene to be reconstructed from the data. Therefore, HAD maps can be used in self-driving cars.

Automobile intelligence is the trend of the automobile industry, and it requires high-precision maps with a high update rate. High-precision maps are the foundation for reaching fully automated driving; real-time information is also required.

Gaode completed the development of ADAS maps for freeways and city expressways by the end of 2015 and for national and provincial highways by the end of 2016. Gaode also completed the development of HAD maps for freeways in 2016. In 2017, Gaode plans to develop ADAS maps for more than 30 cities and HAD maps for national and provincial highways. Currently, HAD maps have reached centimeter-scale accuracy. Whereas traditional maps are made for humans, HAD maps are built for vehicles. They allow automobiles to drive by themselves on freeways. Figures 7 and 8 show the HAD map construction framework. The localization functionality is based on images, the high-precision cognitive map is based on deep learning, and vehicle data are fetched from the GIS acquisition module.

4.2 Planner and Decision Maker

The decision module integrates path planning, behavior planning, reference planning and motion planning, makes the final intelligent decision and drives the smart car [62,63,64]. The authors of [65] proposed a development framework and novel algorithms for road situation analysis based on driving action behavior, in which the safety situation is analyzed by simulating real driving actions. Planning takes HAD maps and the driver’s expectations as input and proceeds in four stages: (1) path planning proposes the most suitable route for the driver from the maps, using big-data navigation algorithms; (2) behavior planning proposes an anthropomorphic driving scheme based on the map and the driver’s historical behavior; (3) reference planning predicts the future trajectories of reference targets from models of the moving obstacles in the map; (4) motion planning combines the trajectories of other vehicles and proposes a specific short-term trajectory [31].
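As an illustration of the path planning stage only, the following minimal sketch finds the cheapest route on a small road graph with Dijkstra's algorithm. It is not the navigation algorithm used by the systems surveyed here; the function name `shortest_route`, the graph `ROAD_GRAPH` and its travel times are hypothetical values chosen for the example.

```python
import heapq

def shortest_route(graph, start, goal):
    """Dijkstra's algorithm: return (total_cost, route) or (inf, []) if unreachable.

    graph maps each node to a dict {neighbor: travel_time}.
    """
    queue = [(0.0, start, [start])]   # (cost so far, node, route taken)
    best = {start: 0.0}               # cheapest known cost per node
    while queue:
        cost, node, route = heapq.heappop(queue)
        if node == goal:
            return cost, route
        if cost > best.get(node, float("inf")):
            continue                  # stale queue entry, a cheaper one was found
        for neighbor, travel_time in graph.get(node, {}).items():
            new_cost = cost + travel_time
            if new_cost < best.get(neighbor, float("inf")):
                best[neighbor] = new_cost
                heapq.heappush(queue, (new_cost, neighbor, route + [neighbor]))
    return float("inf"), []

# Hypothetical road graph: edge weights are travel times in minutes.
ROAD_GRAPH = {
    "A": {"B": 4.0, "C": 2.0},
    "B": {"D": 5.0},
    "C": {"B": 1.0, "D": 8.0},
    "D": {},
}

cost, route = shortest_route(ROAD_GRAPH, "A", "D")
print(cost, route)
```

In a real planner the edge weights would come from the map and from live traffic data, and the later stages (behavior, reference and motion planning) would refine this coarse route into a drivable trajectory.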

The decision maker [66,67,68] predicts the behavior of other vehicles and makes decisions accordingly. Its decisions must be acceptable to passengers (comfortable, reliable, agile, etc.) and to other traffic participants (for example, they must not cause panic, ambiguity or confusion). The detailed framework is shown in Fig. 9.
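One very simple way to base a decision on the predicted behavior of another vehicle is sketched below. It assumes the lead vehicle keeps a constant speed and uses the resulting time to collision to choose an action. The constant-speed assumption, the function names and the two thresholds are illustrative choices, not taken from the cited works.

```python
def time_to_collision(gap_m, ego_speed_mps, lead_speed_mps):
    """Seconds until the ego vehicle reaches the lead vehicle.

    Assumes both vehicles keep their current speed (constant-velocity prediction).
    Returns infinity when the gap is not closing.
    """
    closing_speed = ego_speed_mps - lead_speed_mps
    if closing_speed <= 0.0:
        return float("inf")
    return gap_m / closing_speed

def decide(gap_m, ego_speed_mps, lead_speed_mps,
           brake_ttc_s=2.0, slow_ttc_s=5.0):
    """Pick an action from the predicted time to collision.

    brake_ttc_s and slow_ttc_s are hypothetical thresholds for this sketch.
    """
    ttc = time_to_collision(gap_m, ego_speed_mps, lead_speed_mps)
    if ttc < brake_ttc_s:
        return "brake"
    if ttc < slow_ttc_s:
        return "slow_down"
    return "keep_speed"

print(decide(gap_m=30.0, ego_speed_mps=20.0, lead_speed_mps=10.0))
```

The gap between the two thresholds is where passenger acceptance comes in: slowing down early and gently is more comfortable than braking late and hard.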

4.3 Computing Platform for AIV

Existing computing platforms follow two major directions. One is centralized computation, represented by the NVIDIA Drive PX2. The other is distributed computation, represented by Intel, NXP, Infineon and others. Intel and NVIDIA are competing to promote driverless cars. Intel Go and NVIDIA Drive PX2 share the same goals: to train the computer to be more intelligent, to help the car detect pedestrians and identify lanes and stop signals, and to make decisions based on the data gathered by algorithms, cameras and sensors.

The new platform for computation and development aims at a breakthrough in integrating the vehicle with an AI computing architecture and in developing an intelligent interface model for vehicle AI. A vehicle-mounted high-performance computing platform that can handle big data is necessary for driverless technology to move into a new stage quickly and smoothly. Figure 10 shows one example of such a computing platform.

4.4 AIV Use Cases

An advanced driver assistance system (ADAS) uses various in-car sensors to collect real-time information about the environment, recognizes static as well as dynamic objects and then recommends the most suitable driving actions to the driver to avoid dangerous situations [69]. ADAS normally includes a GPS navigation system, intelligent transportation services (ITS), automatic parking (AP), adaptive cruise control (ACC) and a lane keeping assist system (LKAS).

The GPS navigation system recommends the most suitable route to the driver based on current traffic information and short-term forecasts. ITS provides miscellaneous traffic information to the driver, such as real-time congestion and traffic light information. AP assists drivers with parking maneuvers and provides useful parking information, such as the distance to the rear wall. ACC relieves drivers of monotonous driving and keeps a constant speed on the highway. LKAS keeps the vehicle in its lane and warns the driver of unintentional lane crossings. Figure 11 shows the car-embedded products.
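The LKAS warning and the ACC speed-holding behavior described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: an unintentional crossing is taken to mean that the vehicle edge leaves the lane while the turn signal is off, and speed is held with a clamped proportional controller. The function names, lane and vehicle widths, gain and acceleration limit are hypothetical.

```python
def lane_departure_warning(lateral_offset_m, turn_signal_on,
                           lane_width_m=3.5, vehicle_width_m=1.8):
    """Return True when the vehicle edge crosses a lane line without signaling.

    lateral_offset_m is the distance of the vehicle center from the lane center.
    """
    margin_m = (lane_width_m - vehicle_width_m) / 2.0
    crossing = abs(lateral_offset_m) > margin_m
    return crossing and not turn_signal_on

def cruise_acceleration(current_speed_mps, set_speed_mps,
                        gain=0.5, max_accel_mps2=2.0):
    """Proportional speed controller with the command clamped for comfort."""
    command = gain * (set_speed_mps - current_speed_mps)
    return max(-max_accel_mps2, min(max_accel_mps2, command))

print(lane_departure_warning(1.0, turn_signal_on=False))
print(cruise_acceleration(current_speed_mps=25.0, set_speed_mps=30.0))
```

Production systems add further inputs, for example the distance to the vehicle ahead for ACC and steering intervention for LKAS, which this sketch leaves out.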

5 China’s Strategies on Developing AIV

Vehicle AI 2.0 is a new generation of automotive intelligence that pursues a new goal in a new, changing information environment. The new environment refers to the popularity of connected vehicles, the penetration of cross-media vehicle sensors, multidimensional big data and so on. The new goal refers to anthropomorphic driving, in which the vehicle ‘learns’ and ‘interacts’ by thinking like a human being.

‘Made in China 2025,’ the ‘Internet+ three-year artificial intelligence implementation plan,’ the ‘13th Five-Year auto industry development plan’ and ‘Artificial Intelligence 2.0’ have been proposed by the Chinese Academy of Engineering. In 2016, the closed test area of the first ‘National Intelligent Connected Vehicle (Shanghai) pilot demonstration area,’ approved by the Ministry of Industry and Information Technology, held its opening ceremony in Jiading.

The demonstration area is located in Shanghai International Automobile City in Anting Town, Jiading District, Shanghai. It covers 90 square kilometers and will host comprehensive tests of intelligent connected vehicles and intelligent traffic demonstrations. In its first phase, the closed test area will provide 29 functional test scenarios. Nearly 100 test scenarios will be formed within three years, and demonstration applications such as vehicle traffic warning, bus priority and automatic parking will gradually be explored on open roads, together with related applications that use intelligent lighting.

China pays attention to unmanned driving at the national level: it is making top-level designs and scientific plans for the research and industrialization of unmanned driving technology, working to revise and improve the relevant laws and regulations as soon as possible, and providing institutional protection for the development, testing and commercial application of unmanned vehicles.

As the driver’s control of the car is reduced, the focus of legislative regulation should shift toward car manufacturers and software developers. For automobile production, the Ministry of Industry and Information Technology should introduce special inspection standards for unmanned vehicles and study the access conditions and examination requirements for the enterprises that produce the different parts and software programs used in unmanned vehicles. For sales, the commercial authorities should also take appropriate measures to strengthen market supervision of unmanned vehicles and regulate their sale. For the division of responsibility in accidents involving unmanned cars, responsibility should be determined by the fault of the parties and the driving situation, as in traditional traffic accidents.

6 Conclusion and Future Work

This paper surveys the literature on artificial intelligence for vehicles and reviews the history of both vehicle development and AI development. We present the four main components of AIV and lay out its overall framework. In the near future, AIV will be a driving factor in the vehicle industry, leading to the next generation of vehicles that provide human-level intelligence.

The current information technology revolution is opening a new page in car design. Intelligent vehicle technology is changing people’s driving habits while improving traffic safety, energy conservation and emissions reduction, and it is reshaping city traffic planning and layout. Future intelligent vehicles will move toward being environmentally friendly, energy saving, intelligent, personalized, safe and comfortable. Advances in perception, communication technology and embedded systems will strongly support the development of intelligent vehicles. At present, intelligent vehicle technology is still at the assisted-driving stage. It may take time to reach the semi-automatic and fully automatic stages, but with the accumulation of intelligent technology, the formulation of relevant laws and regulations and public acceptance, intelligent vehicle technology will grow rapidly and ultimately make intelligent cars widespread.

Change history

26 September 2018

The original version of the abstract and keywords of this article has been revised to incorporate recent updates. The revised abstract and keywords are given below.

References

Faezipour, M., Nourani, M., Saeed, A., et al.: Progress and challenges in intelligent vehicle area networks. Commun. ACM 55(2), 90–100 (2012)

Oh, S.I., Kang, H.B.: Object detection and classification by decision-level fusion for intelligent vehicle systems. Sensors 17(1), 207 (2017)

Jadhav, A.U., Wagdarikar, N.M.: A review: control area network (CAN) based intelligent vehicle system for driver assistance using advanced RISC machines (ARM). In: International Conference on Pervasive Computing. IEEE, pp. 1–3 (2015)

Jose, D., Prasad, S., Sridhar, V.G.: Intelligent vehicle monitoring using global positioning system and cloud computing. Proc. Comput. Sci. 50, 440–446 (2015)

LeCun, Y., et al.: Deep learning. Nature 521, 436 (2015)

Mnih, V., et al.: Human-level control through deep reinforcement learning. Nature 518(7540), 529 (2015)

Silver, D., et al.: Mastering the game of Go with deep neural networks and tree search. Nature 529(7587), 484–489 (2016)

Lake, B.M., et al.: Human-level concept learning through probabilistic program induction. Science 350(6266), 1332–1338 (2015)

Moser, M.-B., Moser, E.I.: The brain’s GPS tells you where you are and where you’ve come from. Scientific American (2016)

Service, R.F.: The brain chip. Science 345(6197), 614–616 (2014)

Abbott, L.F., et al.: Building functional networks of spiking model neurons. Nat. Neurosci. 19(3), 350 (2016)

Huang, R., et al.: Hierarchical interactive learning for a human-powered augmentation lower exoskeleton. In: ICRA (2016)

Huang, R., et al.: Interactive learning for sensitivity factors of a human-powered augmentation lower exoskeleton. In: IROS (2015)

Simonyan, K., Zisserman, A.: Very deep convolutional networks for large-scale image recognition (2014). arXiv preprint: arXiv:1409.1556

Cao, J., Pang, Y., Li, X.: Learning multilayer channel features for pedestrian detection (2016). arXiv preprint: arXiv:1603.00124

Yang, B., Yan, J., Lei, Z., Li, S.Z.: Convolutional channel features. In: 2015 IEEE International Conference on Computer Vision, ICCV 2015, Santiago, Chile, 7–13 December, pp. 82–90 (2015)

Dollár, P., Appel, R., Belongie, S., Perona, P.: Fast feature pyramids for object detection. IEEE Trans. Pattern Anal. Mach. Intell. 36(8), 1532–1545 (2014)

Benenson, R., Mathias, M., Timofte, R., Van Gool, L.: Fast stixel computation for fast pedestrian detection. In: Fusiello, A., Murino, V., Cucchiara, R. (eds.) ECCV 2012 Ws/Demos, Part III. LNCS, vol. 7585, pp. 11–20 (2012)

Benenson, R., Mathias, M., Timofte, R., Van Gool, L.: Pedestrian detection at 100 frames per second. In: 2012 IEEE Conference on Computer Vision and Pattern Recognition (CVPR) IEEE, pp. 2903–2910 (2012)

Zitnick, C.L., Dollár, P.: Edge boxes: locating object proposals from edges. In: Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T. (eds.) ECCV 2014, Part V. LNCS, vol. 8693, pp. 391–405. Springer, Heidelberg (2014)

Cheng, M.M., Zhang, Z., Lin, W.Y., Torr, P.: BING: binarized normed gradients for objectness estimation at 300fps. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 3286–3293 (2014)

Uijlings, J.R., van de Sande, K.E., Gevers, T., Smeulders, A.W.: Selective search for object recognition. Int. J. Comput. Vis. 104(2), 154–171 (2013)

Alexe, B., Deselaers, T., Ferrari, V.: Measuring the objectness of image windows. IEEE Trans. Pattern Anal. Mach. Intell. 34(11), 2189–2202 (2012)

Carreira, J., Sminchisescu, C.: CPMC: automatic object segmentation using constrained parametric min-cuts. IEEE Trans. Pattern Anal. Mach. Intell. 34(7), 1312–1328 (2012)

Tong, B., Fan, B., Wu, F.: Convolutional neural networks with neural cascade classifier for pedestrian detection. In: Pattern Recognition. Springer, Singapore (2016)

Ullman, S.: Against direct perception. Behav. Brain Sci. 3(3), 373–381 (1980)

Geiger, A., Lenz, P., Stiller, C., Urtasun, R.: Vision meets robotics: the KITTI dataset. Int. J. Robot. Res. 32(11), 1231–1237 (2013)

Pomerleau, D.A.: ALVINN: an autonomous land vehicle in a neural network. Technical report, DTIC Document (1989)

Pomerleau, D.A.: Neural network perception for mobile robot guidance. Technical report, DTIC Document (1992)

Gibson, J.J.: The Ecological Approach to Visual Perception. Psychology Press, Routledge (1979)

Chen, C., Seff, A., Kornhauser, A., et al.: DeepDriving: learning affordance for direct perception in autonomous driving. In: IEEE International Conference on Computer Vision. IEEE, pp. 2722–2730 (2015)

Sutton, R.S., Barto, A.G.: Reinforcement Learning: An Introduction. Adaptive Computation and Machine Learning Series, 3rd edn. MIT Press, Cambridge, MA (1998)

Crites, R.H., Barto, A.G.: Elevator group control using multiple reinforcement learning agents. Mach. Learn. 33(2–3), 235–262 (1998)

Tesauro, G.: Td-gammon, a self-teaching backgammon program, achieves master-level play. Neural Comput. 6(2), 215–219 (1994)

Littman, M.L.: Markov games as a framework for multi-agent reinforcement learning. In: de Mántaras, R.L., Poole, D. (eds.) Proceedings of the 11th International Conference on Machine Learning (ICML’94), pp. 157–163, San Francisco, CA. Morgan Kaufmann Publishers (1994)

Hu, J., Wellman, M.P.: Nash Q-learning for general sum stochastic games. J. Mach. Learn. Res. 4, 1039–1069 (2003)

Claus, C., Boutilier, C.: The dynamics of reinforcement learning in cooperative multiagent systems. In: Proceedings of the 15th National Conference on Artificial Intelligence (AAAI’98), pp. 746–752 (1998)

Bowling, M., Veloso, M.: Scalable learning in stochastic games. In: Proceedings of the AAAI Workshop on Game Theoretic and Decision Theoretic Agents (GTDT’02), pp. 11–18. The AAAI Press. Published as AAAI Technical Report WS-02-06 (2002)

Uther, W., Veloso, M.: Adversarial reinforcement learning. Technical Report CMU-CS-03-107, School of Computer Science, Carnegie Mellon University (2003)

Sen, S., Weiss, G.: Learning in Multiagent Systems, chapter 6, pp. 259–298. The MIT Press (1999)

Malikopoulos, A.A., Cassandras, C.G.: Decentralized optimal control for connected and automated vehicles at an intersection. In: 55th Conference on Decision and Control. arXiv:1479353 (2016)

Franzè, G., Lucia, W.: The obstacle avoidance motion planning problem for autonomous vehicles: a low-demanding receding horizon control scheme. Syst. Control Lett. 77, 1–10 (2015)

Sharifi, F., Chamseddine, A., Mahboubi, H., et al.: A distributed deployment strategy for a network of cooperative autonomous vehicles. IEEE Trans. Control Syst. Technol. 23(2), 737–745 (2015)

Xargay, E., Dobrokhodov, V., Kaminer, I., et al.: Time-critical cooperative control of multiple autonomous vehicles: robust distributed strategies for path-following control and time-coordination over dynamic communications networks. IEEE Control Syst. 32(5), 49–73 (2012)

Lenz, D., Kessler, T., Knoll, A.: Stochastic model predictive controller with chance constraints for comfortable and safe driving behavior of autonomous vehicles. In: IEEE, pp. 292–297 (2015)

Gray, A., Gao, Y., Hedrick, J.K., et al.: Robust predictive control for semi-autonomous vehicles with an uncertain driver model. In: Intelligent Vehicles Symposium. IEEE, pp. 208–213 (2013)

Lu, N., Cheng, N., Zhang, N., et al.: Connected vehicles: solutions and challenges. IEEE Internet Things J. 1(4), 289–299 (2014)

Ali, R.Y., Gunturi, V.M.V., Shekhar, S., et al.: Future connected vehicles: challenges and opportunities for spatio-temporal computing. In: Sigspatial International Conference on Advances in Geographic Information Systems. ACM 14 (2015)

Dimitrakopoulos, G.: Intelligent transportation systems based on internet-connected vehicles: fundamental research areas and challenges. In: International Conference on ITS Telecommunications, pp. 145–151 (2015)

Lee, J., Park, B.: Development and evaluation of a cooperative vehicle intersection control algorithm under the connected vehicles environment. IEEE Trans. Intell. Transp. Syst. 13(1), 81–90 (2012)

Schoettle, B., Sivak, M.: A survey of public opinion about connected vehicles in the U.S., the U.K., and Australia. In: 2014 International Conference on Connected Vehicles and Expo (ICCVE), Vienna, pp. 687–692 (2014)

Elazab, M., Noureldin, A., Hassanein, H.S.: Integrated cooperative localization for connected vehicles in urban canyons. In: IEEE Global Communications Conference. IEEE, pp. 1–6 (2015)

Amadeo, M., Campolo, C., Molinaro, A.: Information-centric networking for connected vehicles: a survey and future perspectives. IEEE Commun. Mag. 54(2), 98–104 (2016)

Lee, E., Lee, E.K., Gerla, M., et al.: Vehicular cloud networking: architecture and design principles. IEEE Commun. Mag. 52(2), 148–155 (2014)

Chun, B.G., Ihm, S., Maniatis, P., et al.: CloneCloud: elastic execution between mobile device and cloud. In: Conference on Computer Systems, pp. 301–314 (2011)

Rios-Torres, J., Malikopoulos, A., Pisu, P.: Online optimal control of connected vehicles for efficient traffic flow at merging roads. In: IEEE, International Conference on Intelligent Transportation Systems. IEEE, pp. 2432–2437 (2015)

Azevedo Sá, H., Neto, M.S., Meggiolaro, M.A.: Artificial Intelligence based semi-autonomous control system for military vehicle. In: 5o COLLOQUIUM SAE BRASIL DE ELETRO ELETRÔNICA EMBARCADA E MOSTRA DE ENGENHARIA (2015)

Charissis, V., Papanastasiou, S.: Artificial intelligence rationale for autonomous vehicle agents behaviour in driving simulation environment. Intech (2017)

Hata, A., Wolf, D.: Road marking detection using LIDAR reflective intensity data and its application to vehicle localization. In: 17th International IEEE Conference on Intelligent Transportation Systems (ITSC), Qingdao, pp. 584–589 (2014)

Cheng, H., Liu, Z., Yang, J.: Multi-support-region image descriptors and its application to street landmark localization. Mach. Vis. Appl. 23(4), 805–819 (2012)

Cheng, H., Liu, Z., Zheng, N., Yang, J.: Enhancing a driver’s situation awareness using a global view map. In: International Conference Multimedia Exploration, pp. 1019–1022 (2017)

Hermans, T., Rehg, J.M., Bobick, A.F.: Decoupling behavior, perception, and control for autonomous learning of affordances. In: IEEE International Conference on Robotics and Automation. IEEE, pp. 4989–4996 (2013)

Aly, M.: Real time detection of lane markers in urban streets. Comput. Sci. 36(5), 7–12 (2014)

Geiger, A., Lauer, M., Wojek, C., et al.: 3D traffic scene understanding from movable platforms. IEEE Trans. Pattern Anal. Mach. Intell. 36(5), 1012–1025 (2014)

Cheng, H., Zheng, N., Zhang, X., Qin, J., van de Wetering, H.: Interactive road situation analysis for driver assistance and safety warning systems: frameworks and algorithms. IEEE Trans. Intell. Transp. Syst. 8(1), 157–167 (2007)

Koutník, J., Cuccu, G., Schmidhuber, J., et al.: Evolving large-scale neural networks for vision-based reinforcement learning. Mach. Learn. 25(8), 541–548 (2013)

Zhang, J., Cho, K.: Query-efficient imitation learning for end-to-end autonomous driving. CoRR 1605.06450 (2016)

Uğur, E., Şahin, E.: Traversability: a case study for learning and perceiving affordances in robots. Adapt. Behav. 18(18), 258–284 (2010)

Brookhuis, K.A., De Waard, D., Janssen, W.H.: Behavioural impacts of advanced driver assistance systems: an overview. EJTIR 1(3), 245–253 (2001)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Li, J., Cheng, H., Guo, H. et al. Survey on Artificial Intelligence for Vehicles. Automot. Innov. 1, 2–14 (2018). https://doi.org/10.1007/s42154-018-0009-9

DOI: https://doi.org/10.1007/s42154-018-0009-9