Abstract

In this article, we present roadmaps and research issues pertaining to multiagent social simulation to illustrate the directions of technological progress in that domain. Compared with physical simulation, social simulation is still in the phase of establishing simulation models. We focus on four issues, namely “undetermined model”, “awareness effects”, “obscure boundary”, and “incomplete data”, and consider ways to overcome these issues using the massive computational power of high-performance computing. We select three applications, namely evacuation, road traffic, and markets, and estimate the computational costs required for their real-world use. Moreover, we investigate research issues on the application side and categorize possible future work on multiagent social simulation.

Introduction

Multiagent social simulation (MASS) is gaining prominence as a powerful and practical tool for research in social science and for the design of social systems. MASS presents technical challenges in modeling human behaviors and the interactions among them, as well as in regenerating the processes of social systems, which consist of human behaviors. If MASS can regenerate social-system behaviors with a certain degree of precision, it can be used to verify specific models or conditions of the behaviors of individual humans and social systems. Moreover, once a model used for MASS is verified, it can be used to validate new designs of social systems and services.

Recent progress in MASS has been enabled by two technologies, namely big data and high-performance computing (HPC), both of which have advanced rapidly in the 21st century. Big data obtained from Internet-connected and mobile devices capture various social situations. For example, NTT DoCoMo offers “mobile spatial statistics”, which show how the distribution of people changes over time based on mobile phone locations. Using such big data, we can develop more realistic models of societies. HPC, which has improved in line with Moore’s law and through massively parallel architectures, makes large-scale and complex calculations of social phenomena feasible. For example, [1] demonstrated the feasibility of city-wide evacuation simulation, including the walking behaviors of individuals, on supercomputers. With such HPC, we can develop full-scale simulations that include the behaviors of individual humans.

However, MASS is still a developing technology. In the social sciences, there is no conclusive or definitive basic theory of human behavior. Each MASS uses a tentative model based on several hypotheses; therefore, its results must be analyzed carefully. Moreover, the methodologies for applying MASS to real problems are not straightforward. In addition to the uncertainty of MASS models, we must take into account that humans may behave inconsistently, changing their behavior once they know the simulation results.

To overcome these difficulties, several novel approaches have recently been developed in the domain of computational social science. Exhaustive simulation is a typical methodology that helps establish MASS as a powerful tool. These developments open up a wide variety of potential MASS applications in scientific and engineering domains.

In this article, we discuss various directions in MASS research and attempt to find a roadmap and research issues of MASS for the coming decade.

Features of social simulation

Difficulties in social simulation

The most significant difficulty in social simulation research is that realistic experiments are impracticable. The basic schema of social simulation is the same as that of physical simulation (Fig. 1): a simulation model f(x) is established from data obtained in the real world or from real experiments, and the model f(x) is then used to calculate future or unknown situations. Because realistic experiments are difficult to perform, however, sophisticated models for simulating social phenomena have not yet been built.

This difficulty is reflected in the following four critical issues:

- Undetermined model: Owing to the lack of adequate real experimental data, most models of social phenomena are not determined at the same level as those of physical phenomena. In particular, human intelligence is the most difficult part of modeling social phenomena, because intelligence consists of multi-level knowledge, inference, and recognition.

- Awareness effects: The main difference between human and physical entities is “awareness”. Physical entities never change their behaviors even when the simulation results are known. By contrast, human entities (agents) may alter their behaviors when they become aware of the simulation results. This means that in MASS, the simulation itself can induce changes in its results.

- Obscure boundary: In general, there are few obvious boundaries or separations among social phenomena. Social phenomena affect one another at several levels of abstraction and are related across widely spread areas and time spans. Therefore, it is difficult to identify independent factors in social phenomena.

- Incomplete data: It is difficult to acquire well-formed and complete data on social phenomena. Unlike physical phenomena, the measurement of human activities, especially psychological activities, is subjective and difficult to reproduce. Even where psychological big data are available, they are significantly incomplete and irregular. Moreover, we cannot ignore uncertain rare cases in social phenomena, which no set of big data can cover.

Possibility of exhaustive simulation

Roadmap image of research on complex/social simulation (taken and translated from [9])

One methodology for overcoming the difficulties described in “Difficulties in social simulation” section is exhaustive simulation. Owing to the nature of social phenomena, it is difficult to predict the actual state of a social system precisely and in detail by means of simulation. The behaviors of individual humans include non-negligible randomness, and interactions among these behaviors tend to amplify the randomness and induce macro-level changes. Exhaustive simulation attempts to compensate for this randomness by considering almost all possible simulation conditions, including those that reflect the various phenomena induced by the randomness.

The Transdisciplinary Federation [9] emphasized the importance of MASS as a tool for establishing bifurcation theory in the context of social systems. Its report discusses a roadmap of research on complex systems, including social systems, and classifies complex systems into three categories, namely integrable, nonintegrable, and social systems (Fig. 2). While bifurcation theories for integrable and nonintegrable systems have been established at least at a basic level, such theories for social systems have not yet been investigated. Understanding bifurcation is the first step toward overcoming the aforementioned difficulties of social simulation. Therefore, the report concluded that bifurcation theories for social simulation must be established by 2030 [9].

Exhaustive simulation is an important tool for analyzing the bifurcation of a given system. The simplest way to obtain a bifurcation diagram over a parameter space is to execute simulations for all conditions in that space exhaustively. This approach can serve as a bootstrap for establishing bifurcation theories for social systems.
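To make the idea concrete, the following Python sketch sweeps one parameter of a toy model exhaustively and records the spread of macro-level outcomes over many random seeds, which is the raw material of a bifurcation diagram. The model is a hypothetical stand-in, not one of the simulators discussed here: a Granovetter-style threshold model in which each agent joins a collective action once the fraction of agents who have already joined reaches its private threshold. The names `threshold_model` and `sweep` and all parameter values are our own assumptions.

```python
import random
import statistics


def threshold_model(n_agents, mean_threshold, spread, seed):
    """Toy Granovetter-style threshold model (hypothetical stand-in for a MASS).

    Each agent joins once the fraction of agents who have already joined
    reaches its private threshold. Returns the final fraction that joined.
    """
    rng = random.Random(seed)
    # Individual thresholds drawn from a normal distribution clipped to [0, 1].
    thresholds = [min(1.0, max(0.0, rng.gauss(mean_threshold, spread)))
                  for _ in range(n_agents)]
    joined = 0
    while True:
        fraction = joined / n_agents
        # Everyone whose threshold is already met joins; the count never decreases.
        now_joined = sum(1 for t in thresholds if t <= fraction)
        if now_joined == joined:  # fixed point reached
            return joined / n_agents
        joined = now_joined


def sweep(param_grid, n_runs, n_agents=1000, spread=0.1):
    """Run every (parameter value, random seed) pair exhaustively.

    Returns {mean_threshold: (min, mean, max)} of the final joining fraction,
    i.e., the data needed to draw a rough bifurcation diagram.
    """
    summary = {}
    for mean_threshold in param_grid:
        outcomes = [threshold_model(n_agents, mean_threshold, spread, seed)
                    for seed in range(n_runs)]
        summary[mean_threshold] = (min(outcomes), statistics.mean(outcomes),
                                   max(outcomes))
    return summary


if __name__ == "__main__":
    grid = [i / 20 for i in range(11)]  # mean thresholds 0.00, 0.05, ..., 0.50
    for m, (lo, avg, hi) in sweep(grid, n_runs=20).items():
        print(f"mean threshold {m:.2f}: min={lo:.3f} mean={avg:.3f} max={hi:.3f}")
```

With these assumed settings, low mean thresholds tend to cascade to almost full participation, whereas high ones leave almost nobody participating; the printed min/mean/max per grid point shows where the outcome jumps. A real MASS would replace `threshold_model` and would usually sweep several parameters at once, which is where the number of situations grows combinatorially.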

Computational roadmaps of social simulations

As described above, our purpose is to determine how HPC can contribute to the advancement of research on social simulation and to clarify the computational power required for real applications of social simulation. In this section, we focus on three applications and attempt to develop roadmaps for them.

In developing these roadmaps, we adopted two indices to measure computational cost: “number of situations” and “complexity of one simulation session”. As discussed in “Possibility of exhaustive simulation” section, we consider exhaustive evaluation by simulation to be a key methodology of social simulation. To evaluate a model, therefore, it is important to examine many conditions and models; the index “number of situations” indicates this number. Meanwhile, the ordinary computational cost of a single simulation, which is determined by the number of entities and the number of interactions among them, is also important. In multiagent simulation, the computational cost of each agent’s thinking is significant as well. In the following discussion, we integrate these complexities into the index “complexity of one simulation session”.
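Read together, the two indices suggest a simple multiplicative cost model. The decomposition below is our own simplifying assumption, not a formula from the analysis in this article: \(N_{\mathrm{sit}}\) is the number of situations, \(T\) the number of simulated time steps, \(N_{a}\) the number of agents, \(N_{\mathrm{int}}\) the number of interactions per step, and \(c_{\mathrm{think}}\) and \(c_{\mathrm{int}}\) the unit costs of one agent’s thinking and of one interaction.

\[
C_{\mathrm{total}} \approx N_{\mathrm{sit}} \times C_{\mathrm{session}}, \qquad
C_{\mathrm{session}} \approx T \left( N_{a}\, c_{\mathrm{think}} + N_{\mathrm{int}}\, c_{\mathrm{int}} \right)
\]

Because \(N_{\mathrm{int}}\) can grow up to quadratically in \(N_{a}\) when every agent may interact with every other agent, the complexity of one session can grow faster than the population itself.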

Evacuation/pedestrian simulation

The main goal of evacuation simulation is not to find an optimal evacuation plan for a given disaster situation, but to evaluate the feasibility and robustness of executable candidate evacuation plans or guidance policies. Because of the nature of disasters, it is difficult to acquire complete information about the conditions of an evacuation when a disaster occurs. Therefore, it is almost impossible to validate optimality for each disaster. Instead, local governments should strive to establish feasible plans that work robustly in most disaster situations. This means that evacuation plans should be evaluated under widely varying disaster scenarios, and massively parallel computer simulation makes such evaluations practical and effective.

Several simulations have been performed to evaluate such evacuation plans [6, 10, 11, 12]. For example, an evacuation from a tsunami-struck city in the Tokai area of Japan was simulated; massive damage is expected there from the anticipated great Tokai–Tonankai earthquake. To understand the relationship between evacuation scale (the number of evacuees) and the effectiveness of evacuation plans, we conducted exhaustive simulations over various evacuation scales and evacuation policies (evacuees’ origin-destination (OD) plans). The results indicate that evacuation scales fall into two categories, namely “large” (> 3000 evacuees) and “small” (< 3000 evacuees), and that within each category the relative effectiveness of the evacuation plans is similar. The actual evacuation size (population) may vary with factors such as daytime/nighttime, the number of visitors/travelers, weather, and special events. This implies that citizens and local governments should prepare at least two plans, one for large-scale and one for small-scale evacuations.

We use the evacuation simulation described above as a reference point for illustrating the computational costs of various actual applications. In this simulation, we considered the following scenarios:

- 2187 OD plans and
- 8 cases of evacuation population (70–10,000 agents).

Therefore, a total of 17,496 (2187 × 8) simulation scenarios were executed, which took about 30 days using a single process on a Xeon E5 CPU (2.7 GHz). We denote this reference point as the rectangle “city zone, TSUNAMI” in Fig. 3.
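The reference point can be read as a full factorial design. The Python sketch below enumerates such a grid and derives the average single-process time per scenario. Note that \(2187 = 3^7\); modeling the OD plans as 7 evacuee groups that each choose one of 3 shelters is purely our assumption for illustration, since only the total number of plans is given, and the population values of the 8 cases are left abstract.

```python
import itertools

# Hypothetical decomposition: 3**7 == 2187, so the OD plans are modeled here
# as 7 evacuee groups that each go to one of 3 shelters. Only the total number
# of plans is known; this split is an illustrative assumption.
N_GROUPS = 7
N_SHELTERS = 3
N_POPULATION_CASES = 8                    # population values kept abstract
REFERENCE_WALL_SECONDS = 30 * 24 * 3600   # "about 30 days" on one process


def scenario_grid():
    """Yield every (OD plan, population case) pair of the full factorial design."""
    od_plans = itertools.product(range(N_SHELTERS), repeat=N_GROUPS)
    # product() consumes its inputs up front, so passing an iterator is safe.
    yield from itertools.product(od_plans, range(N_POPULATION_CASES))


n_scenarios = sum(1 for _ in scenario_grid())
seconds_per_scenario = REFERENCE_WALL_SECONDS / n_scenarios

if __name__ == "__main__":
    print(n_scenarios, round(seconds_per_scenario, 1))  # prints: 17496 148.1
```

Roughly 148 s per scenario on one process is the unit cost that the scale-ups below multiply.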

The simulation scale can be extended easily. Although a population of at most 10,000 is considered in “city zone, TSUNAMI”, the simulation can be extended to more densely populated areas such as Tokyo. The number of scenarios can be extended as well: we performed a similar simulation analysis for the Kanazawa area, which is located on the Japan Sea coast and experiences snowfall in winter. In this case, the population size is similar (about 6000 agents), but the number of scenario combinations increases to 4,194,304 (\(2^{22}\)). The rectangle “city zone, TSUNAMI and HEAVY SNOW” in Fig. 3 denotes this computational cost.

The simulation can be extended further, to a larger scale with more scenarios. The Kitasenju area, which contains a large transfer station and is surrounded by rivers, has a population of 70,000; the computational cost of simulating this area is denoted by “dense-population zone, complex disaster” in Fig. 3. Because this area is densely populated and complex, there are 44 policy candidates, whose combinations yield \(2^{44}\) scenarios. Tokyo requires even more computational power. In Fig. 3, “megacity” corresponds to a huge city such as Tokyo, where both the evacuation size and the number of possible scenarios are very large. Therefore, peta- or exa-scale HPC is required to handle such simulations.
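A back-of-the-envelope extrapolation from the Tokai reference point shows why these extensions call for HPC. The sketch below assumes, as simplifications of our own, that cost grows linearly with the number of scenarios, that scenarios are independent and parallelize perfectly, and that the per-scenario cost of the reference runs (about 148 s on one process) carries over. The Kitasenju figure is therefore only a lower bound, because it ignores the much larger population of 70,000.

```python
# Per-scenario single-process time from the Tokai reference point:
# about 30 days for 2187 x 8 = 17,496 scenarios, i.e., roughly 148 s each.
SECONDS_PER_SCENARIO = 30 * 24 * 3600 / (2187 * 8)
SECONDS_PER_DAY = 24 * 3600
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY


def total_core_seconds(n_scenarios, cost_factor=1.0):
    """Total single-core time, assuming cost is linear in the number of scenarios.

    cost_factor rescales the per-scenario cost (e.g., for a larger population);
    its value is an assumption supplied by the caller.
    """
    return n_scenarios * SECONDS_PER_SCENARIO * cost_factor


def core_years(n_scenarios, cost_factor=1.0):
    return total_core_seconds(n_scenarios, cost_factor) / SECONDS_PER_YEAR


def cores_for_deadline(n_scenarios, deadline_days, cost_factor=1.0):
    """Cores needed to finish in time, assuming independent scenarios that
    parallelize perfectly (no communication or I/O overhead)."""
    return total_core_seconds(n_scenarios, cost_factor) / (deadline_days * SECONDS_PER_DAY)


kanazawa_years = core_years(2 ** 22)          # similar population (~6000 agents)
kitasenju_years_lower = core_years(2 ** 44)   # ignores the 70,000 population

if __name__ == "__main__":
    print(f"Kanazawa: {kanazawa_years:.1f} core-years, "
          f"{cores_for_deadline(2 ** 22, 1):,.0f} cores for a one-day run")
    print(f"Kitasenju (lower bound): {kitasenju_years_lower:.1e} core-years")
```

Under these assumptions, the Kanazawa sweep amounts to roughly 20 core-years, which several thousand cores could finish in about a day, whereas even the lower bound for Kitasenju is roughly \(8 \times 10^{7}\) core-years.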

Traffic simulation

Road traffic is an important application domain of social simulation. Traffic simulation has been researched extensively over a long period, and recently the focus has shifted to multiagent simulation, in which each agent behaves according to its own preferences and inference rules. Big data and advances in computational power enable such detailed simulations.

[7] have been developing a traffic simulator called IBM Mega Traffic Simulator, which runs large-scale traffic simulations on the XASDI middleware. Its main feature is the ability to reflect individual drivers’ preferences. Using this feature, the simulation parameters that represent differences in drivers’ tendencies can be adapted to big data.
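The idea of agents with individual preferences can be illustrated with a minimal route-choice sketch in Python. It does not use or reproduce the IBM Mega Traffic Simulator or XASDI; the `Driver` and `Route` classes, the value-of-time parameter, and the route data are hypothetical. Each driver converts travel time into money at its own rate and picks the route with the lowest generalized cost, so calibrating that per-driver rate to observed data is one way such preferences could be fitted.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Route:
    name: str
    travel_time_min: float
    toll_yen: float


@dataclass(frozen=True)
class Driver:
    # Hypothetical per-driver parameter: how many yen one minute is worth to
    # this driver. In a calibrated model it would be fitted to observed data.
    value_of_time_yen_per_min: float

    def generalized_cost(self, route: Route) -> float:
        """Money-equivalent cost: time at the driver's own rate plus the toll."""
        return route.travel_time_min * self.value_of_time_yen_per_min + route.toll_yen

    def choose(self, routes: Sequence[Route]) -> Route:
        """Pick the route with the lowest generalized cost for this driver."""
        return min(routes, key=self.generalized_cost)


ROUTES = [
    Route("expressway", travel_time_min=20, toll_yen=800),
    Route("local road", travel_time_min=45, toll_yen=0),
]

if __name__ == "__main__":
    for vot in (10, 30, 60):
        print(vot, Driver(vot).choose(ROUTES).name)
```

With the sample data, the 800-yen toll is worth paying only for drivers who value their time above 32 yen per minute (800 yen divided by the 25 minutes saved), so heterogeneous parameters split the traffic between the two routes.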

To create a reference point for the traffic simulation roadmap, we considered the evaluation of road restriction policies for road construction in the Hiroshima area [8]. In this case, we performed simulations of the following scales:

- 70,000 agents (trips), 120,000 road links, and 15 hours, and
- 20 cases.

The calculation required about one day using a single process on a Xeon E5 CPU. We denote this reference point as “million city, road plan” in Fig. 4.

The roadmap can be drawn from this reference point. For the Tokyo area, the number of agents increases to about 2 million and the number of road links to about 610,000. For a larger area such as the Tokyo metropolitan area, the population increases to about 4 million and the number of road links to 2.5 million. These computational costs are plotted as “Tokyo, traffic control” and “metropolis, traffic control” in Fig. 4.
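As a rough illustration of how these scale-ups translate into single-process time per case, the sketch below scales the Hiroshima reference point (about one day for 20 cases, i.e., roughly 1.2 hours per case). Whether cost tracks the number of agents, the number of road links, or a mix of both is not stated in the text, so the weighting is an explicit, hypothetical parameter, and linear scaling is itself an assumption.

```python
# Reference point from the Hiroshima study: about one day for 20 cases.
REF = {"agents": 70_000, "links": 120_000}
REF_HOURS_PER_CASE = 24 / 20

TARGETS = {
    "Tokyo": {"agents": 2_000_000, "links": 610_000},
    "metropolis": {"agents": 4_000_000, "links": 2_500_000},
}


def hours_per_case(target, link_weight=0.0):
    """Single-process hours for one case under a hypothetical linear cost model.

    link_weight = 0 scales by agents only, 1 by road links only, and values in
    between mix the two ratios linearly.
    """
    agent_ratio = target["agents"] / REF["agents"]
    link_ratio = target["links"] / REF["links"]
    scale = (1 - link_weight) * agent_ratio + link_weight * link_ratio
    return REF_HOURS_PER_CASE * scale


if __name__ == "__main__":
    for name, target in TARGETS.items():
        print(f"{name}: {hours_per_case(target):.0f} h (agents), "
              f"{hours_per_case(target, link_weight=1.0):.0f} h (links)")
```

Scaling by agents alone gives roughly 34 hours per case for Tokyo and 69 hours for the metropolitan area; scaling by road links alone gives about 6 and 25 hours, respectively.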

The scenarios mentioned above pertain to normal situations that recur every day. When a big event is considered, a large number of cases must be enumerated to evaluate the robustness of road traffic against accidents. Because various situations affect traffic, the number of situations increases rapidly. These costs are plotted as “Tokyo, big event”, “metropolis, big event”, and “whole Japan, big event” in Fig. 4, and they require exa-scale computational power.

Market simulation

Market simulation is another important application of multiagent simulation, in which agents directly affect each other by selling and buying stocks and/or currencies [3]. Unlike evacuation and traffic simulations, market simulations are not constrained by physical space, so the time cycles of agents’ interactions can be quite short. Moreover, agents’ ways of thinking vary widely. Consequently, market simulations also require huge computational costs.

As the reference point for computational cost in market simulations, we present the evaluation of “tick size”, the minimum price unit for trading stocks. In this scenario, we simulated multiple markets with different tick sizes. Exchange operators such as Japan Exchange Group compete internationally by providing attractive services to traders. A small tick size is one such service, but it considerably increases cost. Therefore, such organizations need to evaluate changes to these services in advance. In collaboration with Japan Exchange Group, we conducted a simulation experiment to find the key conditions that determine the market shares of competing markets. In the simulation, we considered the following scenario:

- one good traded in two markets, 1000 agents, and 10 million cycles, and
- five tick-size cases with 100 simulation runs per case.

This simulation takes about one day using a single thread on a Xeon E5 CPU. We plot this reference point as “tick size” in Fig. 5.
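The reference numbers can be broken down with simple arithmetic on the figures given in the text, as in the Python sketch below. The linear scaling in the number of goods used in `days_for` is our own simplifying assumption; interactions among goods, such as arbitrage between them, could make the growth steeper.

```python
N_CASES = 5                 # tick-size settings
RUNS_PER_CASE = 100
CYCLES_PER_RUN = 10_000_000
N_GOODS_REF = 1             # one good traded in two markets
WALL_SECONDS = 24 * 3600    # "about one day" on a single thread

total_runs = N_CASES * RUNS_PER_CASE
seconds_per_run = WALL_SECONDS / total_runs
microseconds_per_cycle = WALL_SECONDS / (total_runs * CYCLES_PER_RUN) * 1e6


def days_for(n_goods, n_runs=total_runs):
    """Single-thread days for n_runs runs with n_goods goods, assuming cost
    grows linearly in both the number of runs and the number of goods."""
    return (WALL_SECONDS / 86400) * (n_runs / total_runs) * (n_goods / N_GOODS_REF)


if __name__ == "__main__":
    print(f"{total_runs} runs, {seconds_per_run:.1f} s per run, "
          f"{microseconds_per_cycle:.2f} us per cycle")
    for goods in (10, 20):
        print(f"{goods} goods: about {days_for(goods):.0f} single-thread days")
```

Under this assumption, the 10–20 goods needed for daily-limit studies would take roughly 10–20 single-thread days for the same number of runs, before any millisecond-scale arbitrage agents are added.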

We are considering extending market simulations to various applications in stock market analysis. For example, exchange operators are interested in determining “daily limit” and “cut-off” prices [4]; in this case, the simulation must handle 10–20 goods. Moreover, evaluating the effects of “arbitrage” [3], which involves very rapid trading at millisecond intervals, is important for maintaining sound market conditions. These applications increase the computational cost, as plotted in Fig. 5. Another topic is the evaluation of the “Basel Capital Accords”, which concern the soundness of banks in markets. In the present study, we executed a case with three names for the Basel Accords, but we will extend it to 100 names for the real application.

The evaluation of “systemic risks of inter-bank networks” is an important issue in market evaluation. However, the computational cost of a naive simulation currently exceeds even the capacity of exa-scale HPC.

Research issues and methodologies of MASS

Application fields of MASS

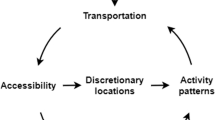

Three levels of application domains of MASS and their roadmaps (taken and translated from [9])

MASS can be applied to a variety of domains such as economy, transportation, welfare, and administration. The Transdisciplinary Federation [9] classified the applications of MASS into three levels, namely person, organization, and social. Each level has a roadmap and stages of research, as shown in Fig. 6. The report also introduced a four-phase transition of simulation output: as-is, as-if, would-be, and will-be. In the as-is phase, the main target of MASS is to reproduce a real-world situation by means of data assimilation. In the as-if phase, the initial conditions of the simulation can be modified to estimate what may happen under new conditions. In the would-be phase, a large number of simulation conditions are enumerated to cover variations of possible worlds [2]; the computational roadmap described in “Computational roadmaps of social simulations” section corresponds to this phase. Finally, in the will-be phase, the simulation can be used to design social systems that satisfy certain criteria or functionalities. Fig. 6 shows, in the form of a roadmap, the application domains at each level and phase.

Ways to use simulation

Similar to other simulation technologies, MASS can be used in various ways.

The most typical and simple use of MASS is optimization. In this use case, the goal is to find the best solution to maximize a certain evaluation function of a certain social system. The will-be phase in the previous section corresponds to this use case. For example, traffic simulation can be used to determine the best road-development plan for maximizing the smooth flow of traffic in a town.

Furthermore, finding trade-off structures is an important use of MASS. Most social problems involve multiple evaluation functions that should be maximized simultaneously. MASS can be used for such multi-objective optimization to determine the trade-offs in a given social problem; this use case falls under the would-be phase. For example, disaster countermeasures generally involve a trade-off between effectiveness and cost. A MASS that estimates both effectiveness and cost could be developed and applied to find the set of Pareto-optimal solutions of this trade-off.
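Finding the Pareto-optimal set from simulated outcomes can be sketched in a few lines of Python. The policy names and (effectiveness, cost) values below are made up for illustration; in practice each pair would come from averaged MASS runs of a candidate countermeasure. The filter keeps every outcome that no other outcome dominates, which is the standard definition of Pareto optimality for two objectives.

```python
from typing import NamedTuple


class Outcome(NamedTuple):
    policy: str
    effectiveness: float  # higher is better
    cost: float           # lower is better


def dominates(a: Outcome, b: Outcome) -> bool:
    """True if a is no worse than b in both objectives and strictly better in one."""
    no_worse = a.effectiveness >= b.effectiveness and a.cost <= b.cost
    strictly_better = a.effectiveness > b.effectiveness or a.cost < b.cost
    return no_worse and strictly_better


def pareto_front(outcomes):
    """Keep the outcomes that no other outcome dominates (O(n^2) pairwise check)."""
    return [o for o in outcomes if not any(dominates(p, o) for p in outcomes)]


# Made-up results for five hypothetical countermeasures.
SAMPLE = [
    Outcome("A", effectiveness=0.60, cost=10),
    Outcome("B", effectiveness=0.75, cost=25),
    Outcome("C", effectiveness=0.70, cost=30),  # dominated by B
    Outcome("D", effectiveness=0.90, cost=60),
    Outcome("E", effectiveness=0.55, cost=12),  # dominated by A
]

if __name__ == "__main__":
    print([o.policy for o in pareto_front(SAMPLE)])  # prints: ['A', 'B', 'D']
```

The pairwise check is adequate for a few thousand candidates; for two objectives, sorting by cost and sweeping once gives the same front in O(n log n).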

Another important use of MASS is to identify the prime factors behind problem states. If MASS can be developed to the as-if phase, we can test various conditions to determine which simulation conditions influence the occurrence of problem states. Furthermore, MASS can be applied to discover problems. We tend not to recognize that a current social situation involves a problem, because most social situations are the product of historical changes. Using MASS, we can release social conditions from historical biases and consider new possible forms of society. For example, using MASS, [5] highlighted the advantages of operating an on-demand bus system at the city-wide scale as a replacement for current public transportation systems.
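One simple way to screen for prime factors is a two-level full factorial experiment followed by main-effect estimation, a standard design-of-experiments technique. In the Python sketch below, `toy_congestion_model` is a hypothetical, deterministic stand-in for a MASS run, and the factor names are invented; with a stochastic MASS, each setting would be replicated and the outcomes averaged.

```python
import itertools
import statistics


def main_effects(model, factor_names):
    """Estimate main effects from a two-level full factorial design.

    Each factor is set to 0 (low) or 1 (high); the main effect of a factor is
    the mean outcome at its high level minus the mean outcome at its low level.
    """
    runs = []
    for levels in itertools.product((0, 1), repeat=len(factor_names)):
        setting = dict(zip(factor_names, levels))
        runs.append((setting, model(setting)))
    effects = {}
    for name in factor_names:
        high = [y for setting, y in runs if setting[name] == 1]
        low = [y for setting, y in runs if setting[name] == 0]
        effects[name] = statistics.mean(high) - statistics.mean(low)
    return effects


def toy_congestion_model(setting):
    """Hypothetical congestion index; not derived from any real simulation."""
    return (10 + 4 * setting["event"] - 3 * setting["new_bus_line"]
            + 0.5 * setting["rain"] + 2 * setting["event"] * setting["rain"])


FACTORS = ["event", "new_bus_line", "rain"]

if __name__ == "__main__":
    for factor, effect in main_effects(toy_congestion_model, FACTORS).items():
        print(f"{factor}: {effect:+.2f}")
```

Here the estimated main effects are 5 for `event`, -3 for `new_bus_line`, and 1.5 for `rain`; the effect of `rain` is inflated by its interaction with `event`, which is why interaction terms should also be checked before naming a prime factor.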

Concluding remarks

In this article, we attempted to illustrate roadmaps and research issues pertaining to multiagent social simulation for various real applications. Our roadmap comprises 2D planes with two axes, “number of situations” and “complexity of one simulation session”. We have placed possible application examples and their scale-ups on the planes based on computational costs.

In the roadmaps, we counted the number of scenarios naively because we needed to know the actual number of possible scenarios. Methods based on design of experiments, as well as other optimization and learning methods, can be applied to reduce the number of scenarios that must be run.
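As one example of such a method, Latin hypercube sampling spreads a fixed budget of runs over a continuous parameter space instead of enumerating a full grid. The Python sketch below is a minimal implementation under our own assumptions (parameters normalized to [0, 1), one random point per stratum); mapping the unit coordinates to real parameters such as demand levels or evacuee counts is left to the caller. For on/off policy factors, fractional factorial designs play the analogous role.

```python
import random


def latin_hypercube(n_samples, n_dims, seed=0):
    """Draw n_samples points in [0, 1)^n_dims by Latin hypercube sampling.

    Each dimension is cut into n_samples equal strata; every stratum is used
    exactly once per dimension, with a random position inside the stratum.
    """
    rng = random.Random(seed)
    columns = []
    for _ in range(n_dims):
        strata = list(range(n_samples))
        rng.shuffle(strata)  # random pairing of strata across dimensions
        columns.append([(k + rng.random()) / n_samples for k in strata])
    # Transpose the per-dimension columns into a list of points.
    return [list(point) for point in zip(*columns)]


if __name__ == "__main__":
    for point in latin_hypercube(5, 2, seed=42):
        print([round(v, 3) for v in point])
```

For example, `latin_hypercube(64, 5)` yields 64 runs in which each of the 64 strata of each of the 5 parameters is sampled exactly once, whereas a full grid with 64 levels per parameter would need \(64^5\), or roughly \(10^9\), runs.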

Moreover, the cost of each agent’s thinking needs to be investigated. In the above evaluation, we assumed that the intelligence of each agent does not change; therefore, the complexity of each agent’s thinking is constant. However, further studies will need to introduce more sophisticated and complex thinking engines to realize more intelligent and adaptive behaviors, such as those of humans.

As mentioned in “Introduction” section, multiagent social simulation is an evolving research domain that is still being established. However, demand for its applications from various domains is growing. Therefore, it is important to establish a measure of the significance of achievements in this domain. The roadmaps shown in this article will serve as a testbed for developing such measures.

References

Aguilar, L., Lalith, M., Ichimura, T., & Hori, M. (2017). On the performance and scalability of an HPC enhanced multi agent system based evacuation simulator. Procedia Computer Science, 108(Supplement C), 937–947. International Conference on Computational Science, ICCS 2017, 12–14 June 2017, Zurich, Switzerland.

Casti, J. L. (1997). Would-be Worlds: How simulation is changing the frontiers of science. New York: Wiley.

Kawakubo, S., Izumi, K., & Yoshimura, S. (2014). Analysis of an option market dynamics based on a heterogeneous agent model. Intelligent Systems in Accounting, Finance and Management, 21, 105–128.

Mizuta, T., Izumi, K., Yagi, I., & Yoshimura, S. (2013). Design of financial market regulations against large price fluctuations using by artificial market simulations. Journal of Mathematical Finance, 3(2A), 15–22.

Noda, I., Masayuki, O., Kumada, Y., & Nakashima, H. (2005). Usability of dial-a-ride systems. In Proceedings of AAMAS-2005 (p. 726)

Nurdin, Y., Yuliana, D. K., Noda, I., Soeda, S., & Yamashita, T. (2010). Disaster evacuation simulation with multi-agent system approach using netmas for contingency planning (meulaboh case study). In Proceedings of 5th annual international workshop & expo on sumatra tsunami disaster & recovery 2010, AIWEST-2010.

Osogami, T., Imamichi, T., Mizuta, H., Morimura, T., Raymond, R., Suzumura, T., Takahashi, R., & Ide, T. (2013). IBM Mega Traffic Simulator. IBM Research and Development Journal.

Osogami, T., Mizuta, H., & Ide, T. (2013). Identifying the optimal road closure with simulation. In Proceedings of the 20th ITS World Congress Tokyo 2013.

Transdisciplinary Federation (Ed.) (2009). Academic Roadmap of Multidisciplinary Science and Technology (in Japanese) (Chapter 4, pp. 85–169). Transdisciplinary Federation of Science and Technology.

Yamashita, T., Matsushima, H., & Noda, I. (2014). Exhaustive analysis with a pedestrian simulation environment for assistant of evacuation planning. In Proc. of PED 2014 (pp. SE05–3).

Yamashita, T., Soeda, S., & Noda, I. (2009). Evacuation planning assist system with network model-based pedestrian simulator. In J. J. Yang, M. Yokoo, T. Ito, Z. Jin, & P. Scerri (Eds.), Principles of practice in multi-agent systems. PRIMA 2009. Lecture notes in computer science (pp. 649–656). Heidelberg: Springer.

Yamashita, T., Soeda, S., & Noda, I. (2010). Assistance of evacuation planning with high-speed network model-based pedestrian simulator. In Proceedings of fifth international conference on pedestrian and evacuation dynamics (PED 2010) (p. 58).

Acknowledgements

This work is supported by MEXT as “Exploratory Challenges on Post-K computer (Study on multilayered multiscale spacetime simulations for social and economical phenomena)” and by JST CREST.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Noda, I., Ito, N., Izumi, K. et al. Roadmap and research issues of multiagent social simulation using high-performance computing. J Comput Soc Sc 1, 155–166 (2018). https://doi.org/10.1007/s42001-017-0011-8

DOI: https://doi.org/10.1007/s42001-017-0011-8