Abstract

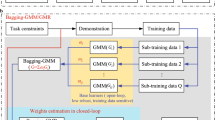

This paper develops an enhanced teaching interface tested on both a Baxter robot and a KUKA iiwa robot. Movements are collected from a human demonstrator by using a Kinect v2 sensor, and then the data is sent to a remote PC for the teleoperation with Baxter. Meanwhile, data is saved locally for the playback process of the Baxter. The dynamic movement primitive (DMP) is used to model and generalize the movements. In order to learn from multiple demonstrations accurately, dynamic time warping (DTW), is used to pretreat the data recorded by the robot platform and Gaussian mixture model (GMM), aiming to generate multiple patterns after the teaching process, are employed for the calculation of the DMP. Then the Gaussian mixture regression (GMR) algorithm is applied to generate a synthesized trajectory with smaller position errors in 3D space. This proposed approach is tested by performing two tasks on a KUKA iiwa and a Baxter robot.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

With the advance of new robotic technology, the application of robots in both industry and social service fields has been widely used. The natural and friendly human–robot interaction plays an increasingly important role to bring robot technology into human daily life (Chin and Yue 2011). Especially, robotic skill learning receives much attention in the robotics area. Wherein, through imitation and skill learning from human operators, the robots are able to optimize the execution of a specific task. The research focusing on understanding the human motion based on teaching by demonstration (TbD) has attracted wide interest during the past decades (Li et al. 2017). In Li et al. (2014), a slave/master controller based TbD is developed to retain the kinematic constraint by using the tracking system. A trajectory learning approach for multi-robot interaction for welding task has been developed in Chernova and Veloso (2008). The ability of human skills transferred from demonstrators has a huge influence on robot intelligence, and is an important way of robot learning, successfully avoiding the difficulties of the control of the complex movement imitated from human.

The teaching process from a human operator transfers the motor skills to the imitator (robot) through recording the motion of the learning movement and generalized output Calinon et al. (2014). In Chin and Yue (2011), a research team develops a problem-based learning (PBL) method on an autonomous vacuum robot with mechatronics systems, by doing this, the students are able to have hands-on experience. In addition, an actuated dynamics technology based proportion-integral-derivative (PID) controller is proposed in Chin et al. (2016), and the global exponentially boundedness of the unactuated dynamics has been established. The acquisition of teaching data is realized through body sensation, which conveys human–computer dialogue effectively. This has a wide usage in intelligent identification and control system as a natural way of human–computer interaction. Common somatosensory devices include wear systems, such as 3DSuit, data gloves, and optical movement capture system, such as Microsoft’s Kinect somatosensory camera (Frick and Alberts 2006).

Gaussian mixture model (GMM) is a commonly used clustering algorithm. It approximates the complex distribution accurately. It first extracts a feature element of each unit in the sample, then uses the Gaussian mixture model to cluster these features as an object, finally obtaining the segmentation result. In Huang et al. (2005), the authors used continuous myoelectric signals and employed GMMs for multiple limb motion classification. In addition, earlier in the 19th century, scientists used a finite GMM whose parameters are estimated through the EM algorithm by estimating a probability density function of human skin color (Yang and Ahuja 1998). Playback process of a robot includes movement control and movement trajectory reproduction. Trajectory reproduction is employed when the encoded data is sent to regression techniques, such as Gaussian Mixed Regression (GMR) (Grollman and Jenkins 2008). Movement control is the result of mapping robot movement trajectories, which is aiming to acting the reproduction. To be precise, it is the playback process after the learning process from a demonstrator. In addition, human skill transfer can be achieved optimally by programming with special characteristic methods, such as dynamic movement primitive (DMP) (Schaal et al. 2005).

With a purpose to making robots complete operation tasks such as grabbing objects in dynamic and complicated environments, robots should have the ability of obstacle avoidance. The dynamic movement primitive (DMP) is used to model and generalize the motion trajectory inside the obstacle environment via combining the specific planning algorithm with its generalization and anti-jamming. In Kormushev et al. (2011), the DMP model combined with the synthetic capacity discipline method is used to comprehend the trajectory generation for the spherical impediment. In Stulp et al. (2013), the authors presented that the linear forcing term of the DMP can be represented by defining a function approximator. The method calls for unique obstacle records to calculate the repulsive pressure within the vicinity of the impediment. Gaussian mixture model (GMM) is broadly used in pattern recognition and facts evaluation, wherein, Gaussian mixture regression (GMR) is a widely-used quantitative analysing approach. The GMM can clean the probability distribution of arbitrary shapes. In Pervez and Lee (2018) and Hersch et al. (2006), authors developed a proposed Gaussian Mixture Model to represent the non-linear term, which is not required to manually specify the parameters of the basis functions. However, most probability estimation methods are often not able to attain whole information. For example, the sample is known, however the sample category of which Gaussian distribution is unknown. Hence it is desirable to employ the EM solution (most expectations value).

In addition, research in the field of human physical motions through tracking system has attracted public focus recently Yang et al. (2011). In Faion et al. et al. (2012), a research team developed a method, wherein a Bayesian based object tracking system with special focus on Microsoft KinectTM devices to intelligently schedule a network of multiple RGBD sensors. A kinematics based skeletal tracking system using Microsoft Kinect sensor with an upper limb virtual reality rehabilitation system has been investigated (Tao et al. 2013). In Raheja et al. (2011), a method of tracking the fingertips and palm centre has been developed using the Kinect sensor.

Dynamic Time Warping (DTW) is a measurement of the similarity between two time series. Its usage has been in the area of speech recognition to discover whether two words constitute the same phrase. In the time collection, the period of the two time series may not be identical, and the DTW calculates the similarity between the two time collection by using extending and shortening the time series (Calinon et al. 2007). In this paper, a Baxter robot has firstly been used to test the proposed teaching method by performing obstacle avoidance with different heights after the DMP is applied. Then a KUKA iiwa robot has been used to prove the achievement of our designed teaching method by drawing curves in a horizontal flat paper programming by recording a sequence of actions taught by a human demonstrator, after that we use the DTW and GMR to analyse and generalize the recorded movements. By doing this, the robot could playback in the vertical plan, which shows the success of our developed teaching interface.

2 Preliminary

2.1 Baxter robot

Baxter robot (Fig. 1) has been used mainly in the industrial research fields. The humanoid Baxter robot of Rethink Robotics is a popular collaborative robot (Li et al. 2017). The Baxter robot includes one torso, one 2-DOF head and two 7-DOF arms, which are shoulder joints: \(s_{0}\), \(s_{1}\), elbow joints: \(e_{0}\), \(e_{1}\) and wrist joints: \(w_{0}\), \(w_{1}\), \(w_{2}\), respectively, running under the ROS and Linux operating systems (Li et al. 2017).

2.2 KUKA iiwa robot

KUKA iiwa robot (with man-machine collaboration capabilities) is the first mass production of sensitive robots. The KUKA iiwa robot is able to achieve direct cooperation between human and robot to complete tasks of high sensitivity requirements (Schreiber et al. 2010). It has an advanced precision 7° of flexibility (DOFs) robot arm (Schreiber et al. 2010). The arm has been programmed via Workbench, which is a standard KUKA modifying platform employing KUKA robot language (KRL) and Java (Schreiber et al. 2010). The KUKA iiwa is controlled by the KUKA SmartPad shown in Fig. 2.

2.3 Kinect v2

The Kinect v2 is an RGB-D device, released by Microsoft, which captures depth, colour, and IR images (Khoshelham and Elberink 2012). By use of the software development kit (SDK) released with the sensor, captured colour and depth information can be transformed into real-world frames, known as Camera Space. These frames are referenced to the centre of the depth sensor (Webb and Ashley 2012). The users also are able to obtain the skeletal tracking system via Kinect 2.0 SDK. This feature has been used in this paper, where the position of operators can be tracked by standing in front of the device.

2.4 Gaussian mixture model

The Gaussian Mixture Model refers to the estimation of the probability density distribution of the samples, and the estimated model is the weighted sum of several Gaussian models (Ren et al. 2017). Each Gaussian model represents a class. The data in the sample are projected on several Gaussian models, respectively, and the probability of each class is obtained (Ren et al. 2017). Then the probability of the largest class for the results of the calculation can be selected by GMM Pervez and Lee (2018). In addition, the significance of GMM is to construct a series of GMMs to describe the joint density, and then obtain the probability density and regression function from each GMM.

The formulation of GMM is defined as,

where k is the number of the model, \(p_{k}\) is the weight of the \(k_{th}\) Gaussian, and it is also the \(k_{th}\) Gaussian probability density function, the average value of GMM is \(\mu _{k}\), the variance is \(\sigma _{k}\). Our estimation of this probability density is to require \(p_{k}\), \(\mu _{k}\) and \(\sigma _{k}\) variables. When the expression is obtained, the result of the summation is the probability of the sample \(x_{exp}\) belonging to each class.

2.5 Dynamic time warping

The dynamic time regular method DTW is a typical optimization method. It describes the time correspondence between the test template and the reference template with the time regular function W(n) to solve the regular function corresponding to the minimum distance of the two templates (Petitjean et al. 2014). This algorithm is developed from dynamic programming (DP), and is widely used to match the templates with different lengths (Muda et al. 2010). It follows a classic algorithm for speech recognition.

The DTW calculates the similarity between two time series by extending and shortening the time series. As shown in Fig. 3, the upper and lower solid lines represent two time series, and the dashed lines between the time series represent similar points between the two time series. The DTW uses the sum of the distances between all these similarities, which is called Warp Path Distance, to measure the similarity between the two time series.

Modified from Petitjean et al. (2014)

Warping example between two time series.

2.6 Dynamic movement primitive

DMP, that is a dynamical system developed from investigations into organic studies, learns from movement primitives to generate a sophisticated one (Mueen and Keogh 2016). The concept of movement dynamic primitives can be divided into two categories: constitute the states using unique formulations based on dynamical structures (Petitjean et al. 2014); and generating trajectories by way of interpolating the through factors (Ma et al. 2017). DMP consists of 2 components: a converted system r and a canonical system h. The formula is given as follows,

where x is the transformed system states, s is the canonical system states and w is the transforming parameters of the canonical system output.

3 The enhanced teaching interface

In this section, we investigate the algorithm method for teaching process, playback and the generalizing task, including the DMP, the GMR and the DTW.

3.1 Calculation of arm joint angles

According to our previous work in Li et al. (2016), a Denavit Hartenberg (DH) featured system chart has been created shown in Fig. 4 to represent the 7-DOF model of our human arm. The DH kinematic parameters of the human arm are indexed in Table 1. In step with the DH approach, the outline of the coordinate frames transformation from body i to border \(i-1\) can be calculated.

Human arm model and its DH coordinate frames modified from Li et al. 2016

The skeleton data of a human in 3-d positions could be obtained by using the Kinect sensor, which consist of 25 joints and this is shown in the right side of Fig. 5. Then, we make the arm model visible by creating the geometry model, which is shown in the left side of Fig. 5 in our previous work (Li et al. 2016). Next, the point Hip-Left is selected as the origin, at the same time as x-axis is within the identical path of vector \(\overrightarrow{AO}\) and y-axis is in conjunction with vector \(\overrightarrow{OC}\) (Li et al. 2016). It is simple to align the regular vector of every axis to the base coordinate, \(\overrightarrow{X_{0}}\), \(\overrightarrow{Y_{0}}\) and \(\overrightarrow{Z_{0}}\) (Li et al. 2016):

The geometry model for human arm in joint space and screenshot of skeleton tracking system modified from Li et al. 2016

In our previous work (Li et al. 2016), it was found that the plane COD and plane xOy form the supplement angle \(\theta _{1}\).

\(\theta _{2}\) is the angle formed by vector \(\overrightarrow{CD}\) and y-axis, which is shown as follows (Li et al. 2016):

Similarly, the plane BCD and plane CDE form the angle \(\theta _{3}\) in (Li et al. 2016).

\(\theta _{5}\) is the angle between plane CDE and DEH Li et al. (2016).

Angle \(\theta _{6}\) is formed by vector \(\overrightarrow{ED}\) and plane EFH according to Li et al. (2016).

However, the angle \(\theta _{7}\) is difficult to calculate using the above method, which is the yaw angle of wrist (Li et al. 2016). \(\theta _{7}\) could be treated as forming by \(\overrightarrow{X_{5}}\) and \(\overrightarrow{Y_{7}}\) (Li et al. 2016). While,

so now the problem becomes to solve \(\overrightarrow{X_{5}}\). We know that \(\overrightarrow{X_{5}}\) is in the plane of EFH, and \(\overrightarrow{X_{5}}\) is perpendicular to \(\overrightarrow{DE}\). Supposing that:

There are:

Therefore,

By doing the calculation above, the every single joint angle is able to be obtained. Here we still need to consider two situations in order to make the calculations exact: (1) the left thumb must be inside the equal plane with the palm. (2) The angles of vectors change from zero to \(\pi \), here further problems need be addressed (Li et al. 2016). Those above seven angles and their preliminary positions are shown in Fig. 4 in the joint space. The proposed geometry vector technique is based on the precept of cosine cost of two vectors proven in (11). Furthermore, the attitude among two planes may be calculated by giving their regular vector (Li et al. 2016).

3.2 Pretreatment of the experimental data

The DTW algorithm is based totally on the concept of dynamic programming, and its purpose is to locate the shortest distance and highest quality matching path among two distinct check samples and reference templates. Let us define the reference time collection as \(T=\left\{ t_{1},t_{2},t_{3},\cdots ,t_{i},\cdots ,t_{L_{1}} \right\} \) and the test sample as \(R=\left\{ r_{1},r_{2},r_{3},\cdots ,r_{j},\cdots ,r_{L_{2}} \right\} \), wherein \(t_{i}\) and \(r_{j}\) denote the joint attitude values of the time factors, \(L_{1}\) and \(L_{2}\) denote the vector lengths. The space matrix D(i, j) collects when the vectors T and R are non-linearly matched is (Petitjean et al. 2014):

where \(d(t_{i},r_{j})\) is the distance function of \(t_{i}\) and \(r_{j}\), the \(D(L_{1},L_{2})\) is the shortest distance between T and R. The smaller the \(D(L_{1},L_{2})\) is, the closer distance between T and R is.

In our research, the DTW approach has been used to align the recorded patterns by giving a warped characteristic \(W=\left\{ w_{1},w_{2},\cdots ,w_{p},\cdots ,w_{P} \right\} \), where \(w(p)= (i_{p},j_{p})\) is the match factor (Petitjean et al. 2014). Here the warped characteristic W is needed to decrease the gap between the check sample vector and the reference template vector, therefore the above equation may be rewritten as:

where \(d[w(p)]=d[T_{i}(p),R_{j}(p)]\) describes the distance measure between the \(i(p)_{th}\) feature of the test sample vector and the \(j(p)_{th}\) feature of the reference template vector, which is usually characterized by a square measure which is defined as follows:

Assuming that the most desirable direction between the factors (1, 1) to (i, j) in the coordinate system is not related to the route after the point (i, j), the recursive method can be used to locate the most fantastic course. Here we outline the minimum cumulative distance among the two factors as \(D_{Acc}(i, j)\), then we find that Petitjean et al. (2014):

where \((q_{i}, q_{j})\) belongs to the set of all points within a certain path that exists between points (1, 1) and (i, j). It can be seen from the above components that the minimal cumulative distance of the factor (i, j) is related to not only the local distance \(d(T_{i}, R_{j})\) of the eigenvalues \(T_{i}\), \(R_{j}\), but also the minimal cumulative distance earlier than this point in the coordinate system Petitjean et al. (2014).

Hence we conclude that \((i, j-1)\), \((i-1, j)\) and \((i-1, j)\) for any point \(c(p) = (i, j)\) in the coordinate system can reach the preceding point of c(p), so the selection of the preceding factor only needs to align with the three above factors. According to the equation below, we can calculate the equal DTW distance among the check pattern vector and the reference template vector, which is shown as follows:

3.3 Trajectory generation

The canonical system of DMP is an exponential differential equation given by:

where \(\tau \) > 0 is the temporal scaling factor, \(\alpha _{h}\) > 0 is the stable parameter and s is the phase value varied from 0 to 1.

The transformed system is made up of two contents (Bodiroža et al. 2013): a spring damping system and a nonlinear term, which is described as follows in Cartesian Space:

where \(x \in R\) is the Cartesian position, \(x_{0}\) is the start position, \(v \in R\) is the velocity of the robot end-effector, g donates the target, k and c are the coefficients for spring and damping respectively. The transformation function f presents the complex nonlinear system, and it transforms the result of the canonical system, which is given by:

where N is the number of GMM, \(p_{i} \in R\) is the weight, l is the variable value of the normalized radial.

After selecting the start line \(x_{0}\) and goal g of the canonical system s = 0, and integrating the canonical system, we are able to generate a motion by the usage of the weight parameter (Hogan and Sternad 2012). The significance of DMP here is to obtain the nonlinear transformation characteristic f(s) through skill transfer from the demonstrator. However, there is an issue in creating the transformed system through the usage of more than one verified path (Yin and Chen 2017). Therefore we applied the GMM to overcome the above problems.

The GMM is the estimation of the probability density distribution of the samples (Reynolds et al. 2000). The estimated version is the weighted sum of numerous Gaussian models and every Gaussian version represents a class (Reynolds et al. 2000). In this paper, the joint probability of the nonlinear system is the teaching data encoded through GMM, and the records is reconstructed via GMR to generalize the movement trajectory. For any degree of freedom, given j teaching data factors \(\xi _{j}= \left\{ s_{j}, f_{j} \right\} \), j \(\in R\), where \(s_{j}\) and \(f_{j}\) had been defined in DMP segment, N is the number of records points contained in a single training, every teaching data \(\xi _{j}\) follows the subsequent probability distribution:

where p(k) is the prior probability, \(p(\xi _{j}\mid k)\) is the conditional probability distribution, which follows the Gaussian distribution, and k is the number of Gaussian model distribution. Thus, the whole set of teaching data can be expressed by the Gaussian mixture model as follows (Yu et al. 2016):

where D is the dimension of the GMM encoding the teaching data. Here we used BIC method to obtain the value of k (Burnham and Anderson 2004).

where \(L(\xi _{j})\) measures the model’s characterization of data, n(k) is the number of free parameters of the model, which is a measure of the complexity of the model.

The parameters of GMM need to be determined, which are denoted as \(\left\{ \pi _{k}, \mu _{k}, \sum _{k} \right\} \). That is the \(k_{th}\) component of prior probability, expectations and variance, respectively. The EM algorithm is used to estimate the GMM parameters, which are obtained by giving the maximum similarity estimation of the parameters in the probability model, expectations and variance, respectively.

The teaching data \(\xi _{j}\) is used as the query point, and the corresponding spatial value \({\xi }'_{f}\) is estimated using GMR. It is known that \(p(\xi _{j}\mid k)\) satisfies the Gaussian distribution, \(\left( {\begin{array}{c}\xi _{f,k}\\ \xi _{s,k}\end{array}}\right) \sim N(\mu _{k},\sum _{k})\), where \(\mu _{k}\)= \(\left\{ \mu _{f,k},\mu _{s,k} \right\} \), \(\sum _{k}\)=\( \begin{Bmatrix} \sum _{f,k}&\sum _{fs,k} \\ \sum _{sf,k}&\sum _{s,k} \end{Bmatrix}\), and the conditional probability of \(\xi _{f}\) and k satisfies the Gaussian distribution as given \(\xi _{s}\), k (Yu et al. 2016).

Then we have

where we could calculate the variance \({\sum }'_{f}\) and the average \({\mu }'_{f}\) of the k th GMM component, which is shown as follows (Yu et al. 2016):

where \({\mu _{f}}'\) is the estimation acquired through the distribution of the expected conditions, and \(\xi _{s}\) is similar to the reconstruction of area values (Yu et al. 2016). The generalized data of points are \({\xi _{f}}',\xi _{s}\), which are included in the teaching data and able to produce a smooth motion trajectory under the covariance constraint \({\sum _{f}}'\).

4 Experimental studies

A Baxter robot and a KUKA iiwa robot are used in our experiments to verify the effectiveness of the proposed method. Baxter has an advanced precision 7° of flexibility (DOFs) robot arm. The arm could be programmed utilizing Ubuntu operaion system, which is a standard Baxter programming platform employing python language. KUKA iiwa robot has man-machine collaboration capabilities. It is able to achieve direct cooperation between human and robot to complete the task of high sensitivity requirements. As for the experimental platform, PC operation system is Windows 10. There is also Kinect SDK for Windows, Visual studio 2013 and OpenCV library. The KUKA robot needs to be programmed via Workbench, which is a common modifying platform combined with KUKA robot language (KRL) and Java. The experiments are conducted under laboratory conditions under adequate lighting.

4.1 Obstacle avoidance experiment

In this experiment, several tests have been designed to test the performance of our designed system by controlling the Baxter to avoid a high obstacle. Only one person stands forward to Kinect with a distance of about 2 m. Here, the operator guilds the Baxter to avoid the high obstacle by using teleoperation, which is shown in Fig. 6. At the same time, those data of each joints of operator’s arm is recorded, which is used for the playback of the Baxter robot. After that, we increase the height of the obstacle, it is noticed that the Baxter is not able to pass the obstacle successfully. Hence, the DMP has been employed to generalize the trajectory of the Baxter robot. By doing this, the Baxter is finally able to pass through the obstacle successfully with the increasing height.

4.2 Trajectory generalizing experiment

For this experiment, we test on a KUKA iiwa robot, the recorded data processed by using MATLAB from the movements of a demonstrator saved locally. Then the result data generated by the trajectories are sent to a separate computer and obtained access to control the robot arm. A marker pen is connected to draw patterns on a horizontal flat surface. A sine wave is chosen to be demonstrated, wherein the ability of the designed technique is tested extraordinarily with complex shapes. During the experimental process, we use a pre-revealed template shape on a sheet of A4 paper. Then, a human operator guides the KUKA robot to follow the template by handing the robot 5 times, which is shown in Fig. 7. The movement of the robotic endpoint is recorded at some stage in demonstration.

The five recorded motion based trajectories in Cartesian space are saved, where the analysed data are initiated by K-means method and EM algorithm to obtain the GMMs. The experimental trajectories are plotted through MATLAB. After that, the DTW is used to align the 5 trajectories, where the first curve is selected as the reference to align all the other patterns, which is shown in Fig. 8 for the warping results. Then, GMM is used to encode the trajectories. Finally, the KUKA robot is able to reproduce a generalized curve on the vertical surface.

4.3 Results

The first group of experiments aim to verify the performance of the proposed DMP, including the ability of generalization, i.e., spatial scaling, and the learning performance when the demonstration is defective. In this experiment, the demonstration process uses the joints s1, e0 and w2 fixed, and the angles of the joints s0, e1, w0 and w1 which are recorded during the process. Then the demonstration data are used for the training of the modified DMP. The training result is shown in Fig. 9. It can be seen from the graph that the maximum and the minimum values of Shoulder Pitch between the data from demonstration and playback, in some specific time point, are differing about 0.4 radians, meaning that the range of the arm motion of Baxter is increasing at about 0.4, which leads to an accurate motion. The motion of joint Shoulder Pitch is regenerated from the demonstration, which synthesizes the features of the demonstration and enables the robot to perform the obstacle passing task successfully as shown in Fig. 6d.

As shown in the left side of Fig. 10, for the second experiment, five distinctly separate curves are drawn horizontally. It can be concluded that, the number of GMMs effect the result trajectories. Hence, to achieve good performance of the generated trajectories, the number of GMM components is chosen as 20 in this paper. In addition, the optimal result trajectory is shown in the right side of Fig. 10. By using the GMR method, we transform the data retrieval problem of TbD into a joint distribution estimation problem, which is approximated by a mixture of Gaussians. During the calculation, the key point of learning process is correlative to the number of points in the sample set of data linearly. Here the prediction process relies on this number.

After that, we modified the DMP code to apply the spatial and temporal generalization. The generalized curve is then able to be drawn on a vertical flip chart pad by the playback process of KUKA robot, as is shown in Fig. 11, a smooth curve is retrieved from multiple demonstrations using the modified DMP, where the playback process can be achieved with 5 times’ speed, which is proved to achieve the proposed temporal generalization. In our future work, some teaching by demonstration based tasks would be researched by applying the DMP segmentation.

5 Conclusion

A GMR and DMP combined with DTW based teaching by demonstration technology has been developed in this paper, which is an effective and superior method for humans to interact with the robot. The Kinect V2 sensor is used to teleoperate the Baxter so that the manipulator is able to achieve the generated motions more accurately. For the motion generation, the discrete DMP is selected as the basic motion model, which can achieve the generalization of the motions. To improve the learning performance of the DMP model, the GMM and GMR are employed for the estimation of the unknown function of the motion model. The DMP model is enabled to retrieve a better motion from multiple demonstrations of a specific task. Two experiments have been applied to test the performance of our designed teaching interface. The experimental results have verified the effective generalization of the proposed methods. Compared with the standard teaching approaches, the emphasis of the proposed teaching interface evaluates the DMP for multiple demonstrations by combining with GMR, meanwhile, all the experimental data have been initially pre-treated by DTW.

References

Bodiroža, S., Doisy, G., Hafner, V.V.: Position-invariant, real-time gesture recognition based on dynamic time warping. In: Human-Robot Interaction (HRI), 2013 8th ACM/IEEE International Conference on, pp. 87–88. IEEE (2013)

Burnham, K.P., Anderson, D.R.: Multimodel inference: understanding aic and bic in model selection. Sociol. Methods Res. 33(2), 261–304 (2004)

Calinon, S., Guenter, F., Billard, A.: On learning, representing, and generalizing a task in a humanoid robot. IEEE Trans. Syst. Man Cybern. Part B (Cybernetics) 37(2), 286–298 (2007)

Calinon, S., Bruno, D., Malekzadeh, M.S., Nanayakkara, T., Caldwell, D.G.: Human-robot skills transfer interfaces for a flexible surgical robot. Comput. Methods Progr. Biomed. 116(2), 81–96 (2014)

Chernova, S., Veloso, M.: Teaching multi-robot coordination using demonstration of communication and state sharing. In: Proceedings of the 7th international joint conference on Autonomous agents and multiagent systems-Volume 3, pp. 1183–1186. International Foundation for Autonomous Agents and Multiagent Systems (2008)

Chin, C.S., Yue, K.M.: Application of an intelligent table-top vacuum robot cleaner in mechatronics system design education. J. Robot. Mechatron. 23(5), 645 (2011)

Chin, C., Yue, K.: Vertical stream curricula integration of problem-based learning using an autonomous vacuum robot in a mechatronics course. Eur. J. Eng. Educ. 36(5), 485–504 (2011)

Chin, C.S., Lau, M.S., Low, E., Seet, G.L.: A robust controller design method and stability analysis of an underactuated underwater vehicle. Int. J. Appl. Math. Comput. Sci. 16, 345–356 (2006)

Faion, F., Friedberger, S., Zea, A., Hanebeck, U.D.: Intelligent sensor-scheduling for multi-kinect-tracking. In: Intelligent Robots and Systems (IROS), 2012 IEEE/RSJ International Conference on, pp. 3993–3999. IEEE (2012)

Frick, E.M., Alberts, J.L.: Combined use of repetitive task practice and an assistive robotic device in a patient with subacute stroke. Phys. Ther. 86(10), 1378–1386 (2006)

Grollman, D.H., Jenkins, O.C.: Sparse incremental learning for interactive robot control policy estimation. In: Robotics and automation, 2008. ICRA 2008. IEEE International Conference on, pp. 3315–3320. IEEE (2008)

Hersch, M., Guenter, F., Calinon, S., Billard, A.G.: Learning dynamical system modulation for constrained reaching tasks. In: Humanoid Robots, 2006 6th IEEE-RAS International Conference on, pp. 444–449. IEEE (2006)

Hogan, N., Sternad, D.: Dynamic primitives of motor behavior. Biol. Cybern. pp. 1–13 (2012)

Huang, Y., Englehart, K.B., Hudgins, B., Chan, A.D.: A gaussian mixture model based classification scheme for myoelectric control of powered upper limb prostheses. IEEE Trans. Biomed. Eng. 52(11), 1801–1811 (2005)

Khoshelham, K., Elberink, S.O.: Accuracy and resolution of kinect depth data for indoor mapping applications. Sensors 12(2), 1437–1454 (2012)

Kormushev, P., Calinon, S., Caldwell, D.G.: Imitation learning of positional and force skills demonstrated via kinesthetic teaching and haptic input. Adv. Robot. 25(5), 581–603 (2011)

Li, C., Ma, H., Yang, C., Fu, M.: Teleoperation of a virtual icub robot under framework of parallel system via hand gesture recognition. In: Fuzzy Systems (FUZZ-IEEE), 2014 IEEE International Conference on, pp. 1469–1474. IEEE (2014)

Li, C., Yang, C., Liang, P., Cangelosi, A., Wan, J.: Development of kinect based teleoperation of nao robot. In: Advanced Robotics and Mechatronics (ICARM), International Conference on, pp. 133–138. IEEE (2016)

Li, C., Yang, C., Wan, J., Annamalai, A.S., Cangelosi, A.: Teleoperation control of baxter robot using kalman filter-based sensor fusion. Syst. Sci. Control Eng. 5(1), 156–167 (2017)

Liang, P., Ge, L., Liu, Y., Zhao, L., Li, R., Wang, K.: An augmented discrete-time approach for human-robot collaboration. Discrete Dynamics in Nature and Society 2016 (2016)

Ma, K., Schirru, M.M., Zahraee, A.H., Dwyer-Joyce, R., Boxall, J., Dodd, T.J., Collins, R., Anderson, S.R.: Robot mapping and localisation in metal water pipes using hydrophone induced vibration and map alignment by dynamic time warping. In: Robotics and Automation (ICRA), 2017 IEEE International Conference on, pp. 2548–2553. IEEE (2017)

Muda, L., Begam, M., Elamvazuthi, I.: Voice recognition algorithms using mel frequency cepstral coefficient (mfcc) and dynamic time warping (dtw) techniques. arXiv preprint arXiv:1003.4083 (2010)

Mueen, A., Keogh, E.: Extracting optimal performance from dynamic time warping. In: Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 2129–2130. ACM (2016)

Pervez, A., Lee, D.: Learning task-parameterized dynamic movement primitives using mixture of gmms. Intell. Serv. Robot. 11(1), 61–78 (2018)

Petitjean, F., Forestier, G., Webb, G.I., Nicholson, A.E., Chen, Y., Keogh, E.: Dynamic time warping averaging of time series allows faster and more accurate classification. In: Data Mining (ICDM), 2014 IEEE International Conference on, pp. 470–479. IEEE (2014)

Raheja, J.L., Chaudhary, A., Singal, K.: Tracking of fingertips and centers of palm using kinect. In: Computational intelligence, modelling and simulation (CIMSiM), 2011 third international conference on, pp. 248–252. IEEE (2011)

Ren, H., Xu, D., Li, W.: Modeling the uncertainty in finger-vein authentication by the gaussian mixture model. In: Proceedings of the 2017 4th International Conference on Biomedical and Bioinformatics Engineering, pp. 39–42. ACM (2017)

Reynolds, D.A., Quatieri, T.F., Dunn, R.B.: Speaker verification using adapted gaussian mixture models. Dig. Signal Process. 10(1–3), 19–41 (2000)

Schaal, S., Peters, J., Nakanishi, J., Ijspeert, A.: Learning movement primitives. Robotics Research pp. 561–572 (2005)

Schreiber, G., Stemmer, A., Bischoff, R.: The fast research interface for the kuka lightweight robot. In: IEEE Workshop on Innovative Robot Control Architectures for Demanding (Research) Applications How to Modify and Enhance Commercial Controllers (ICRA 2010), pp. 15–21. Citeseer (2010)

Stulp, F., Raiola, G., Hoarau, A., Ivaldi, S., Sigaud, O.: Learning compact parameterized skills with a single regression. In: Humanoid robots (Humanoids), 2013 13th IEEE-RAS International Conference on, pp. 417–422. IEEE (2013)

Tao, G., Archambault, P.S., Levin, M.: Evaluation of kinect skeletal tracking in a virtual reality rehabilitation system for upper limb hemiparesis. In: Virtual Rehabilitation (ICVR), 2013 International Conference on, pp. 164–165. IEEE (2013)

Webb, J., Ashley, J.: Beginning Kinect Programming with the Microsoft Kinect SDK. Apress (2012)

Yang, M.H., Ahuja, N.: Gaussian mixture model for human skin color and its applications in image and video databases. In: Storage and retrieval for image and video databases VII, vol. 3656, pp. 458–467. International Society for Optics and Photonics (1998)

Yang, H., Shao, L., Zheng, F., Wang, L., Song, Z.: Recent advances and trends in visual tracking: a review. Neurocomputing 74(18), 3823–3831 (2011)

Yu, J., Men, Y., Ruan, X., Zhao, S.: The research and implementation of behavior imitation system about nao robot based on kinect. CAAI Trans. Intell. Syst. 2, 006 (2016)

Yin, X., Chen, Q.: Trajectory generation with spatio-temporal templates learned from demonstrations. IEEE Transac. Industr. Electro. 64(4), 3442–3451 (2017)

Author information

Authors and Affiliations

Corresponding author

Additional information

This work was partially supported by Royal Society International Exchanges award IE170247, Newton Mobility Grant IE150858, and Changzhou International Collaboration grant CZ20170018.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Li, C., Yang, C., Ju, Z. et al. An enhanced teaching interface for a robot using DMP and GMR. Int J Intell Robot Appl 2, 110–121 (2018). https://doi.org/10.1007/s41315-018-0046-x

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s41315-018-0046-x