Abstract

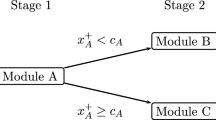

This article introduces conditional maximum-likelihood (CML) item parameter estimation in multistage designs in which the next module \({\textbf {m}}^{[b+1]}\) is chosen with probability \(p^{[b]}(x_{+}^{[b]})\), conditional on the raw score \(x_{+}^{[b]}\) in the previous module \({\textbf {m}}^{[b]}\). This type of multistage design is applied, for example, in international large-scale assessments (ILSAs) to ensure a minimum exposure rate for all items. Various likelihood-based methods are available for item parameter estimation. While the marginal maximum-likelihood (MML) method provides consistent estimates in multistage designs, the CML method in its original formulation leads to biased item parameter estimates. In this contribution, we propose a modification of the common CML method for probabilistic routing strategies, based on the approach for deterministic routing strategies (Zwitser & Maris, 2015, Psychometrika). A simulation study shows that this modified CML estimation method provides practically unbiased item parameter estimates for the Rasch model in probabilistic multistage designs as well.
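To make the routing mechanism concrete, the following is a minimal data-generation sketch for a two-stage design with probabilistic routing under the Rasch model. It is not the estimation method proposed in the article. The function names, the restriction to one easy and one hard second-stage module, the item difficulties, and the routing probabilities in `p_hard` are all illustrative assumptions.

```python
import numpy as np


def rasch_responses(theta, beta, rng):
    """Draw 0/1 responses for every person-item pair under the Rasch model."""
    prob = 1.0 / (1.0 + np.exp(-(theta[:, None] - beta[None, :])))
    return (rng.random(prob.shape) < prob).astype(float)


def simulate_probabilistic_mst(theta, beta_routing, beta_easy, beta_hard, p_hard, rng):
    """Two-stage design: a routing module, then an easy or a hard module.

    p_hard[x] is the assumed probability of the hard module given raw score x
    in the routing module (length: number of routing items + 1).
    """
    routing = rasch_responses(theta, beta_routing, rng)
    raw_score = routing.sum(axis=1).astype(int)
    # Probabilistic routing: Bernoulli draw with probability p_hard[raw_score].
    to_hard = rng.random(theta.size) < p_hard[raw_score]
    easy = rasch_responses(theta, beta_easy, rng)
    hard = rasch_responses(theta, beta_hard, rng)
    # The module that was not administered is missing by design.
    easy[to_hard, :] = np.nan
    hard[~to_hard, :] = np.nan
    return {"routing": routing, "easy": easy, "hard": hard,
            "raw_score": raw_score, "to_hard": to_hard}


rng = np.random.default_rng(1)
theta = rng.normal(size=1000)              # hypothetical person abilities
p_hard = np.array([0.0, 0.2, 0.8, 1.0])    # illustrative routing probabilities
data = simulate_probabilistic_mst(
    theta,
    beta_routing=np.array([-0.5, 0.0, 0.5]),
    beta_easy=np.array([-1.5, -1.0, -0.5]),
    beta_hard=np.array([0.5, 1.0, 1.5]),
    p_hard=p_hard,
    rng=rng,
)
print(data["to_hard"].mean())  # share of persons routed to the hard module
```

If `p_hard` contains only the values 0 and 1, the sketch reduces to deterministic routing, the setting addressed by Zwitser & Maris (2015).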

Notes

To keep the equations in the following illustration readable, we do not use an additional index to distinguish the stages to which the modules are assigned. For more complex designs, an additional index for stages should be introduced.

The data-generating parameters are available at https://osf.io/us6nd/?view_only=ac149eadd25141fbacea40d32b251987

References

Andersen EB (1970) Asymptotic properties of conditional maximum-likelihood estimators. J Roy Stat Soc: Ser B (Methodol) 32(2):283–301. https://doi.org/10.1111/j.2517-6161.1970.tb00842.x

Andersen EB (1972) The numerical solution of a set of conditional estimation equations. J Roy Stat Soc: Ser B (Methodol) 34(1):42–54. https://doi.org/10.1111/j.2517-6161.1972.tb00887.x

Andersen EB (1973) Conditional inference and models for measuring. Mentalhygiejnisk Forlag

Andrich D, Marais I (2019) A course in Rasch measurement theory: measuring in the educational, social and health sciences. Springer. https://doi.org/10.1007/978-981-13-7496-8

Aryadoust V, Tan HAH, Ng LY (2019) A scientometric review of Rasch measurement: the rise and progress of a specialty. Front Psychol. https://doi.org/10.3389/fpsyg.2019.02197

Baker FB, Kim S-H (2004) Item response theory: parameter estimation techniques. CRC Press. https://doi.org/10.1201/9781482276725

Bechger T, Koops J, Partchev I, Maris G (2019) dexterMST: CML Calibration of Multi Stage Tests (R Package Version 0.1.2). https://CRAN.R-project.org/package=dexterMST Accessed on 03 April 2020

Betz NE, Weiss DJ (1974) Simulation studies of two-stage ability testing (Research Report No. 74-4). Psychometric methods program, department of psychology, University of Minnesota, Minneapolis

Bond T, Yan Z, Heene M (2020) Applying the Rasch model: fundamental measurement in the human sciences. Routledge. https://doi.org/10.4324/9780429030499

Boone WJ (2016) Rasch analysis for instrument development: why, when, and how? CBE Life Sci Educ 15(4):rm4. https://doi.org/10.1187/cbe.16-04-0148

Cai L, Choi K, Hansen M, Harrell L (2016) Item response theory. Annu Rev Stat Appl 3:297–321. https://doi.org/10.1146/annurev-statistics-041715-033702

Campbell JR, Hombo CM, Mazzeo J (2000) NAEP 1999 trends in academic progress: three decades of student performance (NCES No. 2000-469). Washington, DC: National Center for Education Statistics

Chang H-H (2015) Psychometrics behind computerized adaptive testing. Psychometrika 80(1):1–20. https://doi.org/10.1007/s11336-014-9401-5

Chen Y, Li X, Liu J, Ying Z (2021) Item response theory: a statistical framework for educational and psychological measurement. ArXiv e-prints. arXiv:2108.08604

Chen H, Yamamoto K, von Davier M (2014) Controlling multistage testing exposure rates in international large-scale assessments. In: Yan D, von Davier AA, Lewis C (eds) Computerized multistage testing: theory and applications (pp 391–409). CRC Press. https://doi.org/10.1201/b16858

Cronbach LJ, Gleser GC (1957) Psychological tests and personnel decisions. University of Illinois Press

De Boeck P (2008) Random item IRT models. Psychometrika 73(4):533. https://doi.org/10.1007/s11336-008-9092-x

Drasgow F, Luecht RM, Bennett RE (2006) Technology and testing. In: Brennan RL (ed) Educational measurement (4th ed., pp 471–515). American Council on Education/Praeger

Eggen TJHM, Verhelst ND (2006) Loss of information in estimating item parameters in incomplete designs. Psychometrika 71(2):303–322. https://doi.org/10.1007/s11336-004-1205-6

Eggen TJHM, Verhelst ND (2011) Item calibration in incomplete testing designs. Psicologica: Int J Methodol Exp Psychol 32(1):107–132

Engelhard G (2012) Invariant measurement: using Rasch models in the social, behavioral, and health sciences. Routledge. https://doi.org/10.4324/9780203073636

Fischer GH (1973) The linear logistic test model as an instrument in educational research. Acta Psychol 37(6):359–374. https://doi.org/10.1016/0001-6918(73)90003-6

Fischer GH (1974) Einführung in die Theorie psychologischer Tests: Grundlagen und Anwendungen [Introduction to the theory of psychological tests: foundations and applications]. Huber

Fischer GH (1995) Derivations of the Rasch model. In: Fischer GH, Molenaar IW (eds) Rasch models: foundations, recent developments, and applications (pp 15–38). Springer. https://doi.org/10.1007/978-1-4612-4230-7_2

Fischer GH (2007) Rasch models. In: Rao CR, Sinharay S (eds) Handbook of statistics: psychometrics (pp 515–585, Vol. 26). Elsevier. https://doi.org/10.1016/S0169-7161(06)26016-4

Fishbein B, Martin MO, Mullis IV, Foy P (2018) The TIMSS 2019 item equivalence study: examining mode effects for computer-based assessment and implications for measuring trends. Large-scale Assess Educ 6(1):1–23. https://doi.org/10.1186/s40536-018-0064-z

Formann AK (1986) A note on the computation of the second-order derivatives of the elementary symmetric functions in the Rasch model. Psychometrika 51(2):335–339. https://doi.org/10.1007/BF02293990

Formann AK (1995) Linear logistic latent class analysis and the Rasch model. In: Fischer GH, Molenaar IW (eds) Rasch models: foundations, recent developments, and applications (pp 239–255). Springer. https://doi.org/10.1007/978-1-4612-4230-7_13

Glas CAW (1988) The Rasch model and multistage testing. J Educ Stat 13(1):45–52. https://doi.org/10.2307/1164950

Hendrickson A (2007) An NCME instructional module on multistage testing. Educ Meas Issues Pract 26(2):44–52. https://doi.org/10.1111/j.1745-3992.2007.00093.x

Holland PW (1990) On the sampling theory foundations of item response theory models. Psychometrika 55(4):577–601. https://doi.org/10.1007/BF02294609

Jodoin MG, Zenisky A, Hambleton RK (2006) Comparison of the psychometric properties of several computer-based test designs for credentialing exams with multiple purposes. Appl Measur Educ 19(3):203–220. https://doi.org/10.1207/s15324818ame1903_3

Kim H, Plake BS (1993) Monte Carlo simulation comparison of two-stage testing and computerized adaptive testing [Paper presented at the annual meeting of the National Council on Measurement in Education, Atlanta, GA]

Kim S, Moses T, Yoo HH (2015) Effectiveness of item response theory (IRT) proficiency estimation methods under adaptive multistage testing. ETS Res Rep Ser 2015(1):1–19. https://doi.org/10.1002/ets2.12057

Kubinger KD, Steinfeld J, Reif M, Yanagida T (2012) Biased (conditional) parameter estimation of a Rasch model calibrated item pool administered according to a branched testing design. Psychol Test Assess Model 52(4):450–460

Lamprianou I (2019) Applying the Rasch model in social sciences using R and Bluesky statistics. Routledge. https://doi.org/10.4324/9781315146850

Linacre JM (1999) Understanding Rasch measurement: estimation methods for Rasch measures. J Outcome Meas 3:382–405

Linacre JM (2004) Rasch model estimation: further topics. J Appl Meas 5(1):95–110

Lord FM (1971) A theoretical study of two-stage testing. Psychometrika 36(3):227–242. https://doi.org/10.1007/BF02297844

Lord FM (1980) Applications of item response theory to practical testing problems. Erlbaum. https://doi.org/10.4324/9780203056615

Lord FM, Novick MR, Birnbaum A (1968) Statistical theories of mental test scores. Addison-Wesley

Luecht RM, Nungester RJ (1998) Some practical examples of computer-adaptive sequential testing. J Educ Meas 35(3):229–249. https://doi.org/10.1111/j.1745-3984.1998.tb00537.x

Magis D, Yan D, von Davier AA (2017) Computerized adaptive and multistage testing with R: using packages catR and mstR. Springer. https://doi.org/10.1007/978-3-319-69218-0

Maris G, Bechger T (2007) Scoring open ended questions. In: Rao CR, Sinharay S (eds) Handbook of statistics: psychometrics (pp 663–681, Vol. 26). Elsevier. https://doi.org/10.1016/S0169-7161(06)26020-6

Masters GN (1982) A Rasch model for partial credit scoring. Psychometrika 47(2):149–174. https://doi.org/10.1007/BF02296272

Mislevy RJ, Sheehan KM (1989) The role of collateral information about examinees in item parameter estimation. Psychometrika 54(4):661–679. https://doi.org/10.1007/BF02296402

Molenaar IW (1995a) Some background for item response theory and the Rasch model. In: Fischer GH, Molenaar IW (eds) Rasch models: foundations, recent developments, and applications (pp 3–14). Springer. https://doi.org/10.1007/978-1-4612-4230-7_1

Molenaar IW (1995b) Estimation of item parameters. In: Fischer GH, Molenaar IW (eds) Rasch models: foundations, recent developments, and applications (pp 39–51). Springer. https://doi.org/10.1007/978-1-4612-4230-7_3

Mullis I, Martin MO (2019) PIRLS 2021 assessment frameworks [Retrieved from Boston College, TIMSS PIRLS International Study Center website: https://timssandpirls.bc.edu/pirls2021/frameworks/]

OECD (2010) PISA computer-based assessment of student skills in science. OECD Publishing. https://doi.org/10.1787/9789264082038-en

OECD (2016) PISA 2018 integrated design (tech. rep.). OECD Publishing. https://www.oecd.org/pisa/pisaproducts/PISA-2018-INTEGRATED-DESIGN.pdf

OECD (2019a) PISA 2018 assessment and analytical framework. OECD Publishing. https://doi.org/10.1787/b25efab8-en

OECD (2019b) Technical report of the survey of adult skills (PIAAC) (3rd ed.). OECD Publishing

R Core Team (2020) R: A language and environment for statistical computing. The R Foundation for Statistical Computing, Vienna, Austria. https://www.R-project.org/ Accessed 1 February 2020

Rasch G (1960) Probabilistic models for some intelligence and attainment tests. Pædagogiske Institut

Rasch G (1977) On specific objectivity. An attempt at formalizing the request for generality and validity of scientific statements. In: Blegvad M (ed) The Danish year-book of philosophy (pp 58–94). Munksgaard

Robitzsch A (2020) sirt: Supplementary item response theory models (R Package Version 3.9-4). https://CRAN.R-project.org/package=sirt Accessed on 03 April 2020

Rost J, von Davier M (1995) Polytomous mixed Rasch models. In: Fischer GH, Molenaar IW (eds) Rasch models: foundations, recent developments, and applications (pp 371–379). Springer. https://doi.org/10.1007/978-1-4612-4230-7_20

Rubin DB (1976) Inference and missing data. Biometrika 63(3):581–592. https://doi.org/10.1093/biomet/63.3.581

San Martin E, De Boeck P (2015) What do you mean by a difficult item? On the interpretation of the difficulty parameter in a Rasch model. In: Millsap RE, Bolt DM, van der Ark LA, Wang W-C (eds) Quantitative psychology research. The 78th annual meeting of the psychometric society (pp 1–14). Springer. https://doi.org/10.1007/978-3-319-07503-7

Scheiblechner H (1972) Das Lernen und Lösen komplexer Denkaufgaben [Learning and solving complex thinking tasks]. Zeitschrift für Experimentelle und Angewandte Psychologie 19:476–506

Skrondal A, Rabe-Hesketh S (2022) The role of conditional likelihoods in latent variable modeling. Psychometrika. https://doi.org/10.1007/s11336-021-09816-8

Steinfeld J, Robitzsch A (2019) tmt: Estimation of the Rasch model for multistage tests (R Package Version 0.2.1-0). https://CRAN.R-project.org/package=tmt Accessed on 03 April 2020

Steinfeld J, Robitzsch A (2021) Item parameter estimation in multistage designs: a comparison of different estimation approaches for the Rasch model. Psych 3(3):279–307. https://doi.org/10.3390/psych3030022

Svetina D, Liaw Y-L, Rutkowski L, Rutkowski D (2019) Routing strategies and optimizing design for multistage testing in international large-scale assessments. J Educ Meas 56(1):192–213. https://doi.org/10.1111/jedm.12206

van der Linden WJ (2005) Linear models for optimal test design. Springer. https://doi.org/10.1007/0-387-29054-0

van der Linden WJ, Hambleton R (1997) Handbook of modern item response theory. Springer. https://doi.org/10.1007/978-1-4757-2691-6

van der Linden WJ, Glas CA (2010) Elements of adaptive testing. Springer. https://doi.org/10.1007/978-0-387-85461-8

Verhelst ND (2019) Exponential family models for continuous responses. In: Veldkamp BP, Sluijter C (eds) Theoretical and practical advances in computer-based educational measurement (pp 135–160). Springer. https://doi.org/10.1007/978-3-030-18480-3_7

Verhelst ND, Glas C, Van der Sluis A (1984) Estimation problems in the Rasch model: the basic symmetric functions. Comput Stat Q 1(3):245–262

Wainer H, Dorans NJ, Flaugher R, Green BF, Mislevy RJ, Steinberg L, Thissen D (2000) Computerized adaptive testing: a primer (2nd ed.). Lawrence Erlbaum

Wang C, Chen P, Jiang S (2019) Item calibration methods with multiple subscale multistage testing. J Educ Meas. https://doi.org/10.1111/jedm.12241

Weiss DJ (1982) Improving measurement quality and efficiency with adaptive testing. Appl Psychol Meas 6(4):473–492

Weiss DJ (1983) New horizons in testing. Academic Press. https://doi.org/10.1016/C2009-0-03014-1

Weiss DJ, Kingsbury GG (1984) Application of computerized adaptive testing to educational problems. J Educ Meas 21(4):361–375. https://doi.org/10.1111/j.1745-3984.1984.tb01040.x

Wilson M (2004) Constructing measures: an item response modeling approach. Routledge. https://doi.org/10.4324/9781410611697

Wright BD, Stone MH (1979) Best test design. Mesa Press

Wu M, Tam HP, Jen T-H (2016) Educational measurement for applied researchers: theory into practice. Springer. https://doi.org/10.1007/978-981-10-3302-5

Yamamoto K, Khorramdel L (2018) Introducing multistage adaptive testing into international large-scale assessments designs using the example of PIAAC. Psychol Test Assess Model 60(3):347–368

Yamamoto K, Shin HJ, Khorramdel L (2018) Multistage adaptive testing design in international large-scale assessments. Educ Meas Issues Pract 37(4):16–27. https://doi.org/10.1111/emip.12226

Yamamoto K, Shin HJ, Khorramdel L (2019) Introduction of multistage adaptive testing design in PISA 2018 (OECD Education working paper No 209). https://doi.org/10.1787/b9435d4b-en

Yen W (2006) Item response theory. In: Brennan RL (ed) Educational measurement: psychometrics (pp 111–154). Praeger

Zenisky A, Hambleton RK, Luecht RM (2009) Multistage testing: issues, designs, and research. In: van der Linden WJ, Glas CA (eds) Elements of adaptive testing (pp 355–372). Springer. https://doi.org/10.1007/978-0-387-85461-8

Zhang T, Xie Q, Park BJ, Kim YY, Broer M, Bohrnstedt G (2016) Computer familiarity and its relationship to performance in three NAEP digital-based assessments. AIR-NAEP Working Paper #01-2016. American Institutes for Research

Zwitser RJ, Maris G (2015) Conditional statistical inference with multistage testing designs. Psychometrika 80(1):65–84. https://doi.org/10.1007/s11336-013-9369-6

Funding

The authors have not disclosed any funding.

Ethics declarations

Conflict of interest

The authors have no conflict of interest directly relevant to the content of this article to declare.

Additional information

Communicated by Kensuke Okada.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

About this article

Cite this article

Steinfeld, J., Robitzsch, A. Conditional maximum-likelihood estimation in probability-based multistage designs. Behaviormetrika (2024). https://doi.org/10.1007/s41237-024-00228-3

DOI: https://doi.org/10.1007/s41237-024-00228-3