Abstract

We review the field of collisionless numerical simulations for the large-scale structure of the Universe. We start by providing the main set of equations solved by these simulations and their connection with General Relativity. We then recap the relevant numerical approaches: discretization of the phase-space distribution (focusing on N-body but including alternatives, e.g., Lagrangian submanifold and Schrödinger–Poisson) and the respective techniques for their time evolution and force calculation (direct summation, mesh techniques, and hierarchical tree methods). We pay attention to the creation of initial conditions and the connection with Lagrangian Perturbation Theory. We then discuss the possible alternatives in terms of the micro-physical properties of dark matter (e.g., neutralinos, warm dark matter, QCD axions, Bose–Einstein condensates, and primordial black holes), and extensions to account for multiple fluids (baryons and neutrinos), primordial non-Gaussianity and modified gravity. We continue by discussing challenges involved in achieving highly accurate predictions. A key aspect of cosmological simulations is the connection to cosmological observables; we discuss various techniques in this regard: structure finding, galaxy formation and baryonic modelling, the creation of emulators and light-cones, and the role of machine learning. We finish with an account of state-of-the-art large-scale simulations and conclude with an outlook for the next decade.


1 Introduction

The current standard cosmological model describes a Universe accelerated by a cosmological constant (\(\varLambda \)) and dominated by cold dark matter (CDM), where structure arose from minute initial perturbations, seeded in the primordial quantum Universe, which collapsed on ever larger scales over cosmic time (Planck Collaboration 2020; Alam et al. 2017; Betoule et al. 2014). The nonlinear interplay of these ingredients has formed the cosmic web and the intricate network of haloes harbouring galaxies and quasars.

Over the last decades, numerical simulations have played a decisive role in establishing and testing this \(\varLambda \)CDM paradigm. Following pioneering work in the 1980s, numerical simulations steadily grew in realism and precision thanks to major advances in algorithms, computational power, and the work of hundreds of scientists. As a result, various competing hypotheses and theories could be compared with observations, guiding further development over the years. Ultimately, \(\varLambda \)CDM was shown to be quantitatively compatible with virtually all observations of the large-scale structure of the Universe, even those that involve nonlinear physics and are inaccessible to any method other than computer simulations (see e.g., Springel et al. 2006; Vogelsberger et al. 2020).

Nowadays, simulations have become the go-to tool in cosmology for a number of tasks: (i) the interpretation of observations in terms of the underlying physics and cosmological parameters; (ii) the testing of perturbative approaches and analytic models for structure formation, and support for their development; (iii) the production of reliable input (training) data for data-driven approaches and emulators; (iv) the creation of mock universes for current and future large-scale extragalactic cosmological surveys, from which statistical and systematic errors can be quantified; (v) the study of the importance of various aspects of the cosmological model and of physical processes, and the assessment of their observability.

Despite the remarkable agreement between simulations and the observed Universe, there are indications that \(\varLambda \)CDM might ultimately not be the correct theory (Planck Collaboration 2020; Riess et al. 2019; Asgari et al. 2021; DES Collaboration 2021). Future cosmological observations will provide enough data to test competing explanations by probing exceedingly large sub-volumes of the Universe in virtually all electromagnetic wavelengths, and by including increasingly faint objects and smaller structures (e.g. Laureijs et al. 2011; Bonoli et al. 2021; DESI Collaboration 2016; Ivezić et al. 2019; Ade et al. 2019; Merloni et al. 2012). These observations will be able to test the physics of our Universe beyond the standard model: from neutrino masses, through the nature of dark matter and dark energy, to the inflationary mechanism. Since these observations are intimately connected to the nonlinear regime of structure formation, any optimal exploitation of the cosmological information will increasingly rely on numerical simulations. This will put numerical simulations in the spotlight of modern cosmology: they can either confirm or break the standard \(\varLambda \)CDM paradigm, and will therefore play a key role in the potential discovery of new physics.

The accuracy and precision required to make predictions of the necessary quality pose many important challenges and require a careful assessment of all the underlying assumptions. This is the main topic of this review: we cover the broad field of cosmological simulations, from the fundamental equations to their connection with astrophysical observables, highlighting areas where research is currently being conducted.

1.1 Large-scale simulations

The spatial distribution and dynamics of the large-scale structure give us access to fundamental aspects of the Universe: its composition, its physical laws, and its initial conditions. For instance, the overall shape of the galaxy power spectrum is sensitive to the abundance of baryons and dark matter; the anisotropy induced by redshift-space distortions can constrain the cosmic density-velocity relation, which in turn is set by the gravity law; and higher-order cumulants of the galaxy field can encode non-Gaussian signatures inherited from an early inflationary period.

To extract this information from observations of the large-scale distribution of galaxies, quasars or other tracers, we must rely on a model that predicts their observed distribution as a function of cosmic time for a given cosmological model. That is, we require a prediction for the distribution of the mass density and the velocity field together with the properties and abundance of collapsed objects. Furthermore, we need to predict how galaxies or other astronomical objects will populate these fields. This is a well-posed but very challenging problem, due to the complexity and nonlinear nature of the underlying physics.

On large scales and/or at early times, density fluctuations are small and the problem can be tackled analytically by perturbatively expanding the relevant evolution equations. However, a rigorous perturbation theory can only be carried out on scales unaffected by shell-crossing effects. On intermediate scales, only effective models with several free parameters exist so far (and these are themselves either fitted to or tested with simulations). On smaller, highly nonlinear scales, the only way to solve the problem accurately is numerically.
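At linear order, the perturbative expansion of a pressureless fluid reduces to a scale-independent growth factor \(D(a)\) multiplying the initial density field. As a minimal sketch, assuming a flat background containing only matter and a cosmological constant (no radiation or neutrinos), \(D(a)\) can be evaluated from the standard integral solution \(D(a) = \tfrac{5}{2}\varOmega _{\mathrm{m}} E(a)\int _0^a \mathrm{d}a'/[a'E(a')]^3\), with \(E = H/H_0\). The function names and the midpoint quadrature are illustrative choices, not those of any particular code; the Einstein–de Sitter case, where \(D(a)=a\), provides an exact check.

```python
import numpy as np


def hubble_E(a, omega_m):
    """E(a) = H(a)/H0 for a flat background with matter and Lambda only."""
    return np.sqrt(omega_m * a**-3 + (1.0 - omega_m))


def growth_factor(a, omega_m, n=20_000):
    """Linear growing mode D(a), normalised so that D -> a in matter domination.

    Evaluates D(a) = (5/2) * omega_m * E(a) * int_0^a da' / (a' E(a'))^3
    with a midpoint rule; the integrand vanishes smoothly as a' -> 0.
    """
    da = a / n
    a_mid = (np.arange(n) + 0.5) * da
    integral = np.sum(1.0 / (a_mid * hubble_E(a_mid, omega_m)) ** 3) * da
    return 2.5 * omega_m * hubble_E(a, omega_m) * integral


print(growth_factor(0.5, omega_m=1.0))  # Einstein-de Sitter: D(a) = a
print(growth_factor(1.0, omega_m=0.3))  # below 1: growth slows once Lambda dominates
```

Beyond linear order, the same idea leads to the higher-order perturbative kernels, which eventually break down at shell-crossing as described above.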

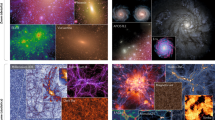

We illustrate the complicated nature of the nonlinear Universe in Fig. 1, which shows the simulated matter field in a region \(40 h^{-1}\mathrm{Mpc}\) wide. The top panel shows the distribution of dark matter halos and their variety of sizes, masses, and shapes. The middle and bottom panels show the same region in much thinner slices, which emphasise the small-scale anisotropies and the ubiquitous filamentary structure.

Fig. 1 The distribution of dark matter in thin slices as predicted by a cosmological N-body simulation. Each panel shows a region \(40 h^{-1}\mathrm{Mpc}\) wide with a different slice thickness (40, 2, and \(0.1 h^{-1}\mathrm{Mpc}\), from top to bottom), highlighting different aspects of the simulated density field, from the distribution of dark matter halos in the top panel to the filamentary nature of the nonlinear structure in the bottom panel. Image adapted from Stücker et al. (2018)

When one is concerned with the large-scale structure of the Universe, the dynamics is dominated by gravity, and baryons and dark matter can be considered as a single pressureless (cold) fluid. This is an ideal situation for computer simulations: the initial conditions as well as the relevant physical laws are known, and the problem can be solved numerically considering only gravitational interactions. We review the foundations of such numerical simulations in detail in Sects. 2, 3, 4, 5, and 6. Specifically, in Sect. 2 we describe the derivation of the relevant equations solved by numerical simulations, and in Sect. 3 how they can be discretised, either by an N-body approach or by alternative methods. As we will discuss later, considering different discretisations will be crucial to test the robustness of the predictions from N-body simulations. In Sect. 4 we discuss how to evolve the discretised equations in time, paying attention to common approaches for computational optimization, whereas in Sect. 5 we discuss various numerical techniques to compute gravitational interactions. Concluding our recap of the main numerical techniques underlying large-scale simulations, in Sect. 6 we discuss several aspects of setting up their initial conditions.

Numerical solutions for structure formation are important because they currently provide the only way to predict the highly nonlinear Universe, and potentially to extract cosmological information from observations on all scales. In contrast, if one is restricted to scales where perturbative approaches are valid and shell-crossing effects are negligible (i.e., have only a sub-per cent impact on summary statistics), then a large amount of cosmological information is potentially lost.

The key role of numerical simulations in deriving cosmological constraints has already been demonstrated for several probes focusing mostly on small scales, and in constraining the properties of the dark matter particle, as exemplified by constraints from the Ly-\(\alpha \) forest, the abundance and properties of Milky Way satellites, and strong lensing modelling. These all rely on a direct comparison of observations with numerical simulations, or with models calibrated and/or validated using numerical simulations. In Sect. 7 we discuss several ways in which the distinctive properties of various potential dark matter candidates can be modelled numerically, including neutralinos, warm dark matter, axions, wave dark matter, and decaying and self-interacting dark matter. In the future, this will also be the case for large-scale clustering, and we discuss the current and potential challenges to be addressed in Sect. 8.

1.2 Upcoming challenges

Given that simulations are increasingly used for the inference of cosmological parameters, a question of growing importance is how one can demonstrate the correctness of a given numerical solution. For instance, any simulation-based evidence of massive neutrinos or of a departure from GR would necessarily need to demonstrate its accuracy in modelling the relevant physics in the nonlinear regime. A proper understanding is of paramount importance: simulators need to show that a potential discovery relying on simulations is neither simply a numerical inaccuracy nor explainable by uncertain or ill-understood model parameters (i.e. the uncertainties due to all “free” parameters must be quantified). We devote Sect. 8 to this topic.

Unfortunately, only a limited set of exact solutions is known for which strong convergence can be demonstrated. For useful predictions, however, the correctness of the solution has to be established in a very different, much more non-linear regime, far from these relatively simple known solutions. There has been significant progress in this direction over the last decade: from large code comparisons and a better understanding of the effects of numerical noise and discreteness errors, to the impact of code parameters that control the accuracy of the solution and the quality of the initial conditions used to set up the simulations. These tests, however, presuppose that the N-body method itself converges to the right solution for a relatively small number of particles. Clear examples where the N-body approach fails have emerged, most notably near the free-streaming scale in warm dark matter simulations, and in the relative growth of the components in multi-fluid simulations. Very recently, methods that do not rely on the N-body approach have become feasible, making it possible to test for such errors. Although so far no significant biases have been measured for the statistical properties of CDM simulations, many more careful and systematic comparisons will be needed in the future.

In parallel, there has been significant progress in modelling the multi-physics aspects of modern cosmological simulations. For instance, accurate two-fluid simulations capturing the distinct evolution of baryons and cold dark matter are now possible, as are simulations that quantify the non-linear impact of massive neutrinos with sophisticated methods. Further, the use of Newtonian dynamics has been tested against simulations solving general relativistic equations in the weak-field limit, and schemes to include relativistic corrections have been devised. We discuss all these extensions, which seek to improve the realism of numerical simulations, in Sect. 7.

An important aspect of confirming or ruling out the \(\varLambda \)CDM model will be the design of new cosmic probes that are particularly sensitive to departures from \(\varLambda \)CDM. For this, it is important to understand the role of non-standard ingredients in various observables and in structure formation in general. This is an area of rapid progress, with advances in the variety and sophistication of models, for instance regarding the actual nature of dark matter, with simulations assuming neutralinos, axions, primordial black holes, or wave dark matter. Likewise, simulations with modified gravity laws and with primordial non-Gaussianity have also reached maturity.

To achieve the accuracy and precision necessary to make optimal use of upcoming observational data, it is clear that, ultimately, all systematic errors in simulations must be understood and quantified, and the impact of all the approximations made must be under control. One such approximation concerns baryons: on nonlinear scales baryonic effects become important, since cold collisionless and collisional matter begin to separate and baryons become affected by astrophysical processes. Hence, it becomes important to complement numerical simulations with models for the baryonic components, either for the formation of galaxies or for the effects of gas pressure and feedback from supernovae or supermassive black holes. We discuss different approaches to this problem in Sect. 9.

In parallel to increasing the accuracy of simulations, the community is focusing on improving their “precision”. Cosmological simulations typically push the limits of international supercomputing centres and are among the largest calculations performed. New technologies and algorithmic advances are an important part of the field of cosmological simulations, and we review them in Sect. 10. We have seen important advances in the adoption of GPUs and other hardware accelerators, and in new algorithms for force calculations with improved precision, computational efficiency, and parallelism. Thanks to these, state-of-the-art simulations follow regions of dozens of gigaparsecs with trillions of particles, steadily approaching the level required by upcoming extragalactic surveys.

The field of cosmological simulations has also been reviewed in the last decade by other authors, who have focused on different aspects of the field and adopted different perspectives. For more details, we refer the reader to the excellent reviews by Kuhlen et al. (2012), Frenk and White (2012), Dehnen and Read (2011), and Vogelsberger et al. (2020).

1.3 Outline

In the following we briefly outline the contents of each section of this review.

Section 2: This section provides the basic set of equations solved by cosmological dark matter simulations. We emphasise the approximations usually adopted by most simulations regarding the weak-field limit of General Relativity and the properties of dark matter. This section sets the stage for various kinds of simulations we discuss afterwards.

Section 3: This section discusses the possible numerical approaches for solving the equations presented in Section 2. Explicitly, we discuss the N-body approach and alternative methods such as the Lagrangian submanifold, full phase-space techniques, and Schrödinger–Poisson.

Section 4: This section addresses the time integration of the relevant equations of motion. We discuss the second-order symplectic integration of the dynamics. We also review individual and adaptive timestepping, and the integration of quantum Hamiltonians.

Section 5: We review various methods for computing the gravitational forces exerted by the simulated mass distribution. Explicitly, we discuss the particle-mesh method solved with Fourier and mesh-relaxation algorithms, tree methods in combination with traditional multipole expansions and fast multipole methods, and their combinations.

Section 6: In this section we outline the method for setting the initial conditions for the various types of numerical simulations considered. Explicitly, we review the numerical algorithms employed (Zel’dovich approximation and higher-order formulations) as formulated in Fourier or configuration space.

Section 7: This section is focused on simulations relaxing the assumption that all mass in the Universe is made out of a single cold collisionless fluid. That is, we discuss simulations including both baryons and dark matter; including neutrinos; assuming dark matter is warm; self-interacting; made out of primordial black holes; and cases where its quantum pressure cannot be neglected on macroscopic scales. We also discuss simulations easing the restrictions that the gravitational law is given by the Newtonian limit of General Relativity, and that the primordial fluctuations were Gaussian.

Section 8: This section discusses the current challenges for high accuracy in cosmological simulations. We consider the role of the softening length, cosmic variance, and mass resolution, among other numerical parameters. We also review comparisons of N-body codes and discuss the validity of the N-body discretization itself.

Section 9: This section covers the connection between simulation predictions and cosmological observations. We discuss halo finder algorithms, the building of merger trees, and the construction of light-cones. We also briefly review halo-occupation distribution, subhalo-abundance-matching, and semi-analytic galaxy formation methods.

Section 10: This section provides a list of state-of-the-art large-scale numerical simulations. We put emphasis on the computational challenges they face in connection with current and future computational trends and observational efforts.

In the final section, we conclude with an outlook for the future of cosmological dark matter simulations.

2 Gravity and dynamics of matter in an expanding Universe

Large-scale dark matter simulations are (mostly) carried out in the weak-field, non-relativistic, and collisionless limit of a more fundamental Einstein–Boltzmann theory. Since these simulations neglect any microscopic interactions right from the start (we will not consider them until we discuss self-interacting dark matter), one operates in the Einstein–Vlasov limit. This is essentially a continuum description of the geodesic motion of microscopic particles, since only (self-)gravity is allowed to affect their trajectories. Despite these simplifications, this approach retains the essential non-linearities of the system, which give rise to its phenomenological complexity.

In this section, we first derive the relevant relativistic equations of motion in a Hamiltonian formalism. We then take the non-relativistic weak-field limit by considering perturbations around the homogeneous and isotropic FLRW (Friedmann–Lemaître–Robertson–Walker) metric. This Vlasov–Poisson limit yields ordinary non-relativistic equations of motion, with the twist of a non-standard time dependence due to the expansion of space in a general FLRW spacetime. Owing to the preservation of symplectic invariants, the expansion of space is accompanied by an intimately related contraction of momentum space, so that the overall phase-space volume is preserved.

With the general equations of motion at hand, we consider the cold limit, which is justified by the coldness of dark matter (a property well constrained by observations) and which arises naturally since the expansion of space (or rather the compression of momentum space) reduces any intrinsic momentum dispersion of the particle distribution over time. In the cold limit, the distribution function of dark matter takes the particularly simple form of a low-dimensional submanifold of phase space. This discussion is intended to provide a formal foundation for the equations of motion as well as a motivation for many of the techniques and approximations discussed and reviewed in later sections.
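The decay of intrinsic momentum dispersion can be illustrated with force-free particles. As a minimal sketch, assuming co-moving coordinates and the common convention for the canonical momentum \(P = m a^2\,\mathrm{d}x/\mathrm{d}t = m a v\), with \(v = a\,\mathrm{d}x/\mathrm{d}t\) the peculiar velocity (this convention is an assumption here), \(P\) is conserved in the absence of forces, so the peculiar-velocity dispersion falls as \(1/a\). The function name is hypothetical.

```python
import numpy as np


def peculiar_velocity_dispersion(P, mass, a):
    """RMS peculiar velocity of force-free particles at scale factor a.

    Assumes the canonical momentum P = m a^2 dx/dt = m a v, which is
    conserved when no forces act, so that v = P / (m a).
    """
    return np.std(P / (mass * a))


rng = np.random.default_rng(seed=1)
P = rng.normal(0.0, 1.0, size=100_000)  # canonical momenta, constant in time

for a in (0.01, 0.1, 1.0):
    print(a, peculiar_velocity_dispersion(P, mass=1.0, a=a))
# each factor of 10 in a lowers the dispersion by a factor of 10
```

Any thermal velocity dispersion present at early times is therefore redshifted away, which is why the cold limit becomes an increasingly good approximation as the Universe expands.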

2.1 Equations of motion and the Vlasov equation

Since, owing to the very weak interactions of dark matter, we are mostly interested in the collisionless limit, we are essentially considering freely moving particles in a curved spacetime. To describe the motion of these particles, it is much easier to work with Lagrangian coordinates in phase space, i.e., the positions and momenta of particles. In general relativity, we have in full generality an eight-dimensional relativistic extended phase space of positions \(x^\mu \) and their conjugate momenta \(p_\mu \). Kinetic theory in curved spacetime is discussed in great detail in many introductory texts on general relativity (e.g. Ehlers 1971; Misner et al. 1973; Straumann 2013; Choquet-Bruhat 2015 for the curious reader), but mostly without connection to a Hamiltonian structure. For our purposes, we can eliminate one degree of freedom by considering massive particles and neglecting processes that alter the mass of the particles. In that case the mass-shell condition \(p^\mu p_\mu = -m^2 = \mathrm{const}\) holds and allows us to reduce the dynamics to a 3+3 dimensional phase space with one parameter (e.g. time) that can be used to parameterise the motion. Note that we employ Einstein’s summation convention throughout, where repeated indices are implicitly summed over, unless explicitly stated otherwise.
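To make the reduction concrete: for a diagonal metric, the mass-shell condition \(g^{\mu \nu } p_\mu p_\nu = -m^2\) can be solved for the time component \(p_0\) once the three spatial momenta are given, so only the 3+3 spatial phase-space coordinates remain independent. A minimal sketch, assuming signature (−,+,+,+), a diagonal metric, and the future-directed root; the function name is hypothetical. The second call recovers \(p^0 = a^{-1} m\) for a particle at rest in a conformal FLRW metric, the same relation used in the non-relativistic limit later in this section.

```python
import math


def time_component_p0(g_diag, p_spatial, m):
    """Solve g^{mu nu} p_mu p_nu = -m^2 for the covariant time component p_0.

    g_diag    : (g_00, g_11, g_22, g_33) of a diagonal metric, signature (-,+,+,+)
    p_spatial : covariant spatial momenta (p_1, p_2, p_3)
    Returns the future-directed root p_0 < 0, so that p^0 = g^{00} p_0 > 0.
    """
    g00, *g_spatial = g_diag
    # For a diagonal metric g^{ii} = 1 / g_ii
    spatial = sum(p * p / g for p, g in zip(p_spatial, g_spatial))
    # g^{00} p_0^2 + spatial = -m^2  with  g^{00} = 1 / g00
    return -math.sqrt(-g00 * (m * m + spatial))


# Minkowski space: p_0 = -E with E^2 = m^2 + |p|^2
print(time_component_p0((-1.0, 1.0, 1.0, 1.0), (0.0, 0.0, 4.0), m=3.0))  # -5.0

# Particle at rest in conformal FLRW, g = a^2 diag(-1, 1, 1, 1)
a = 0.5
p0 = time_component_p0((-a**2, a**2, a**2, a**2), (0.0, 0.0, 0.0), m=2.0)
print(p0, p0 / -a**2)  # p_0 = -a m = -1.0 and p^0 = g^{00} p_0 = m / a = 4.0
```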

Geodesic motion of massive particles. In the presence of only gravitational interactions, the motion of particles in general relativity is purely geodesic by definition. Let us therefore begin by considering the geodesic motion of a particle moving between two points A and B. The action for the motion along a trajectory (X(t), P(t)), parametrised by coordinate time t, between the spacetime points A and B is

From Eq. (1), one can immediately read off that the Lagrangian \(\mathscr {L}\) and Hamiltonian \(\mathscr {H}\) of geodesic motion are given by the usual Legendre transform pair (e.g. Goldstein et al. 2002)

respectively, meaning that \(P_0\) represents the Hamiltonian itself [as one finds also generally in extended phase space, cf. Lanczos (1986)]. It is easy to show in a few lines of calculation that the coordinate-time canonical equations of motion in curved spacetime are then given by two dynamical equations (e.g. Choquet-Bruhat 2015)

The Christoffel symbols of the metric reduce here to a single partial derivative owing to the mass-shell condition, but otherwise these two equations are equivalent to the geodesic equation. Note the formal similarity of these equations to the non-relativistic equations, with the ‘gravitational interaction’ absorbed into the derivative of the metric. Eqs. (3) determine the particle motion given the metric. The metric, in turn, is determined by the collection of all particles in the Universe through Einstein’s field equations, which we address in the next section.

Statistical mechanics. When considering a large number of particles, it is necessary to transition to a statistical description and consider the phase-space distribution (or density) function of particles over time, i.e. on \((\varvec{x},\varvec{p},t) \in \mathbb {R}^{3+3+1}\), rather than individual microscopic particle trajectories \((\varvec{X}(t),\varvec{P}(t))\). The on-shell phase-space distribution function \(f_{\mathrm{m}}(\varvec{x},\varvec{p},t)\) can be defined, e.g., through the particle number (a Lorentz scalar) per unit phase-space volume. This phase-space density is then related to the energy-momentum tensor as

where g is the determinant of the metric. For purely collisionless dynamics, the evolution of \(f_{\mathrm{m}}\) is therefore determined by the on-shell Einstein–Vlasov equation (e.g. Choquet-Bruhat 1971; Ehlers 1971)

where \(\widehat{L}_{\mathrm{m}}\) is the on-shell Liouville operator in coordinate time. This equation relates Hamiltonian dynamics and incompressibility in phase space: the Vlasov equation is simply the continuum limit of Hamiltonian mechanics with only long-range gravitational interactions (i.e., geodesic motion). This can be seen by observing that particle trajectories \(\left( X^i(t),P_i(t)\right) \) following Eqs. (3) solve the Einstein–Vlasov equation as characteristic curves, i.e. \(\mathrm{d}f_\mathrm{m}\left( X^i(t),P_i(t),t\right) /\mathrm{d}t=0\).
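The incompressibility of Hamiltonian flow can be checked numerically on a toy problem. The sketch below is not cosmological: it assumes a one-dimensional pendulum Hamiltonian \(H = p^2/2 - \cos x\), advances a closed ring of phase-space points with a kick-drift-kick leapfrog (a symplectic map), and compares the enclosed area before and after. Conservation of this area mirrors the statement that \(f\) is transported unchanged along characteristics. All names are illustrative.

```python
import numpy as np


def leapfrog_kdk(x, p, dt, n_steps):
    """Kick-drift-kick leapfrog for the toy Hamiltonian H = p^2/2 - cos(x)."""
    for _ in range(n_steps):
        p = p - 0.5 * dt * np.sin(x)  # half kick, force = -dH/dx = -sin(x)
        x = x + dt * p                # drift, dx/dt = dH/dp = p
        p = p - 0.5 * dt * np.sin(x)  # half kick
    return x, p


def polygon_area(x, p):
    """Shoelace formula for the area enclosed by an ordered closed curve."""
    return 0.5 * abs(np.dot(x, np.roll(p, -1)) - np.dot(p, np.roll(x, -1)))


# A small ring of phase-space points bounding a patch of constant f
theta = np.linspace(0.0, 2.0 * np.pi, 2000, endpoint=False)
x0 = 0.5 + 0.1 * np.cos(theta)
p0 = 0.1 * np.sin(theta)

x1, p1 = leapfrog_kdk(x0, p0, dt=0.01, n_steps=500)
print(polygon_area(x0, p0), polygon_area(x1, p1))  # agree to high accuracy
```

Each kick and drift is a shear in phase space with unit Jacobian, which is why the leapfrog map preserves area exactly; the small residual comes only from approximating the deformed ring by a polygon.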

2.2 Scalar metric fluctuations and the weak field limit

Metric perturbations. The final step needed to close the equations is made through Einstein’s field equations \(G_{\mu \nu } = 8\pi G T_{\mu \nu }\). The field equations connect the evolution of the phase-space density \(f_{\mathrm{m}}\), which determines the stress-energy tensor \(T^{\mu \nu }\), with the force 1-form \(F_i\), which is determined by the metric. The results presented above are valid non-perturbatively for an arbitrary metric. Here, we make a first approximation by considering only scalar fluctuations, described by two scalar potentials \(\phi \) and \(\psi \). This approximation is valid if velocities are non-relativistic, i.e. \(\left| P_i/P^0\right| \ll 1\). In this case, the only dynamically relevant component of \(T^{\mu \nu }\) is the time-time component. Let us thus consider the metric (which corresponds to the “Newtonian gauge” with conformal time), largely following the notation of Bartolo et al. (2007),

where x are co-moving coordinates. The metric determinant is given by \(\sqrt{|g|}=a^4\exp \left( \psi -3\phi \right) \).
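The weak-field limit of the force can be illustrated numerically. The sketch below assumes that metric (6) has the exponential form \(\mathrm{d}s^2 = a^2(\tau )\left[ -e^{2\psi }\mathrm{d}\tau ^2 + e^{-2\phi }\delta _{ij}\mathrm{d}x^i\mathrm{d}x^j\right] \), which reproduces the determinant quoted above. Under this assumption the mass-shell condition fixes \(|P_0| = e^{\psi }\left( a^2m^2 + e^{2\phi }|\varvec{P}|^2\right) ^{1/2}\), which is used here as the conformal-time Hamiltonian, with the force taken as \(-\partial H/\partial x\). It works in one dimension with a hypothetical weak potential \(\psi = \phi = \epsilon \sin (kx)\), and compares the finite-difference force with the Newtonian limit \(-a m\,\partial \psi /\partial x\) for a slow particle. It is an illustration under these assumptions, not a reproduction of the expression derived in the text.

```python
import numpy as np

EPS, K = 1e-5, 2.0 * np.pi  # hypothetical weak potential: amplitude, wavenumber


def psi(x):
    return EPS * np.sin(K * x)


def phi(x):
    return psi(x)  # no anisotropic stress assumed: phi = psi


def hamiltonian(x, P, a, m):
    """|P_0| for the assumed metric a^2 [-e^{2 psi} dtau^2 + e^{-2 phi} dx^2], in 1D."""
    return np.exp(psi(x)) * np.sqrt(a**2 * m**2 + np.exp(2.0 * phi(x)) * P**2)


def force(x, P, a, m, h=1e-6):
    """dP/dtau = -dH/dx, by a central finite difference."""
    return -(hamiltonian(x + h, P, a, m) - hamiltonian(x - h, P, a, m)) / (2.0 * h)


a, m, x, P = 0.5, 1.0, 0.1, 1e-3  # slow particle: |P| << a m
exact = force(x, P, a, m)
newtonian = -a * m * EPS * K * np.cos(K * x)  # -a m dpsi/dx
print(exact, newtonian)  # nearly equal in the weak-field, slow-motion limit
```

The residual difference scales with \(\psi \) and with \(|P|^2/(am)^2\), i.e. it vanishes in the weak-field, non-relativistic limit assumed throughout this section.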

The kinetic equation in GR is simply a geodesic transport equation and will thus only depend on the gravitational “force” 1-form \(F_i\), which can be readily computed for this metric to be

If the vector and tensor components are non-relativistic [see e.g. Kopp et al. (2014), Milillo et al. (2015) for a rigorous derivation of the Newtonian limit], we are left only with a constraint equation from the time-time component of the field equations. The time-time component of the Einstein tensor \(G_{\mu \nu }\) is found to be

where a prime indicates a derivative w.r.t. \(\tau \). Inserting this in the respective field equation and performing the weak field limit (i.e. keeping only terms up to linear order in the potentials) one finds the following constraint equation

where \(\rho :=T^0{}_{0}\), \(\mathcal {H}:= a^{\prime}/a\), and G is Newton’s gravitational constant. Note that this equation alone does not close the system, since we have no evolution equation for \(a(\tau )\) yet.

Separation of background and perturbations. The usual assumption is that backreaction can be neglected, i.e. the homogeneous and isotropic FLRW case is recovered with \(\phi ,\psi \rightarrow 0\) and density \(\rho \rightarrow \overline{\rho }(\tau )\). In this case, \(a(\tau )\) is given by the solution of this equation in the absence of perturbations, which becomes the usual Friedmann equation

where \(\rho _{\mathrm{c},0} := \frac{3H_0^2}{8 \pi G}\) is the critical density of the Universe today, \(H_0\) is the Hubble constant, and the \(\varOmega _{X\in \{\mathrm{r},\nu ,\mathrm{m},\mathrm{k},\varLambda \}}\) are the respective density parameters of the various species in units of this critical density (at \(a=1\)). Note that the massive-neutrino density parameter \(\varOmega _\nu (a)\) has a non-trivial scaling with a (see Sect. 7.8.2 for details). In the inhomogeneous case one can subtract out this FLRW evolution (thereby neglecting any non-linear coupling, or ‘backreaction’, between the evolution of a and the inhomogeneities) and finally finds

This is an inhomogeneous diffusion equation (cf. e.g., Chisari and Zaldarriaga 2011; Hahn and Paranjape 2016) reflecting the fact that the gravitational potential does not propagate instantaneously in an expanding Universe so that super-horizon scales, where density evolution is gauge-dependent, are screened.
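The background Friedmann equation above can be integrated numerically to obtain cosmic time \(t(a)=\int \mathrm{d}a/(aH)\) and conformal time \(\tau (a)=\int \mathrm{d}a/(a^2H)\). A minimal sketch, assuming constant density parameters for radiation, matter, curvature and \(\varLambda \) (massive neutrinos, whose \(\varOmega _\nu (a)\) scales non-trivially, are omitted), \(\varOmega _{\mathrm{k}} = 1-\varOmega _{\mathrm{r}}-\varOmega _{\mathrm{m}}-\varOmega _\varLambda \), and times in units of \(1/H_0\) with c = 1. The function names are hypothetical.

```python
import numpy as np


def hubble_E(a, om_r=0.0, om_m=0.3, om_l=0.7):
    """E(a) = H(a)/H0 from the Friedmann equation with constant density parameters."""
    om_k = 1.0 - om_r - om_m - om_l  # curvature closes the budget
    return np.sqrt(om_r * a**-4 + om_m * a**-3 + om_k * a**-2 + om_l)


def cosmic_and_conformal_time(a, n=100_000, **omegas):
    """Return (t, tau) at scale factor a, in units of 1/H0 (with c = 1).

    Substituting a = s^2 removes the a^(-1/2) behaviour of the conformal-time
    integrand during matter domination:
        t   = int_0^a da / (a H)   = int_0^sqrt(a) 2 ds / (s E(s^2))
        tau = int_0^a da / (a^2 H) = int_0^sqrt(a) 2 ds / (s^3 E(s^2))
    """
    ds = np.sqrt(a) / n
    s = (np.arange(n) + 0.5) * ds  # midpoint rule
    E = hubble_E(s**2, **omegas)
    t = np.sum(2.0 / (s * E)) * ds
    tau = np.sum(2.0 / (s**3 * E)) * ds
    return t, tau


# Einstein-de Sitter check: t = 2/3 and tau = 2 at a = 1
print(cosmic_and_conformal_time(1.0, om_m=1.0, om_l=0.0))
# Flat LCDM (defaults): age of roughly 0.96 / H0
print(cosmic_and_conformal_time(1.0)[0])
```

The change of variables is a design choice: a plain midpoint rule in \(a\) would converge only slowly for \(\tau \) because of the integrable singularity at \(a=0\).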

2.3 Newtonian cosmological simulations

Newtonian gravity. In the absence of anisotropic stress the two scalar potentials in the metric (6) coincide and one has \(\psi =\phi \). One can further show that on sub-horizon scales [where \(\rho \) must be gauge independent, see e.g. Appendix A of Hahn and Paranjape (2016)] one then recovers from Eq. (11) the non-relativistic Poisson equation,

Note that this Poisson equation is however a priori invalid on super-horizon scales. Formally, when carrying out the transformation that removed the extra terms from Eq. (11), the Poisson source \(\rho \) has been gauge transformed to the synchronous co-moving gauge. If a simulation is initialised with density perturbations in the synchronous gauge and other quantities are interpreted in the Newtonian gauge, then the Poisson equation consistently links the two. In addition, we have in the non-relativistic weak field limit that \(p^0=a^{-1} m\) so that we also recover the Newtonian force law

Note that such gauge mixing can be avoided, and horizon-scale effects rigorously accounted for, by choosing a more sophisticated gauge (Fidler et al. 2016, 2017b) in which the force law is required to take the form of Eq. (13) and coordinates and momenta are interpreted self-consistently in this ‘Newtonian motion’ gauge to account for leading-order relativistic effects. A posteriori gauge transformations exist to relate gauge-dependent quantities, but observables can never be gauge dependent.

Non-relativistic moments. For completeness and reference, we also give the components of the energy-momentum tensor (4) as moments of the distribution function \(f_{\mathrm{m}}\) in the non-relativistic limit

defining the mass density \(\rho \), momentum density \(\varvec{\pi }\) and second moment \(\varPi _{ij}\), which is related to the stress tensor through the combination \(\varPi _{ij} - \pi _i \pi _j / \rho \).
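In an N-body discretisation these moments are estimated from the particles in a volume element. A sketch, assuming particle velocities rather than canonical momenta as the variable, so that \(\rho = \sum m/V\), \(\pi _i = \sum m v_i/V\) and \(\varPi _{ij} = \sum m v_i v_j/V\) (this normalisation is an assumption and may differ from the convention of the equations above); the function name is hypothetical. The example shows that the combination \(\varPi _{ij}-\pi _i\pi _j/\rho \) is insensitive to a bulk flow and is non-zero only where several streams overlap, so it vanishes for a single cold stream.

```python
import numpy as np


def cell_moments(masses, velocities, volume):
    """Zeroth, first and second velocity moments of the particles in one cell.

    masses     : (N,) particle masses
    velocities : (N, 3) particle velocities
    Returns rho, pi (3,), Pi (3, 3) and the stress combination Pi - pi pi^T / rho.
    """
    rho = masses.sum() / volume
    pi = (masses[:, None] * velocities).sum(axis=0) / volume
    Pi = np.einsum("n,ni,nj->ij", masses, velocities, velocities) / volume
    stress = Pi - np.outer(pi, pi) / rho
    return rho, pi, Pi, stress


# Two equal-mass streams moving with +/- u along x on top of a bulk flow U:
# the stress depends only on the stream separation, not on U.
u, U = 1.0, 5.0
m = np.array([1.0, 1.0])
v = np.array([[U + u, 0.0, 0.0], [U - u, 0.0, 0.0]])
rho, pi, Pi, stress = cell_moments(m, v, volume=1.0)
print(rho, pi[0], stress[0, 0])  # 2.0 10.0 2.0, i.e. stress_xx = rho * u^2
```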

The equations solved by standard N-body codes. Finally, the equations of motion in cosmic time \(\mathrm{d}t = a\, \mathrm{d}\tau \), assuming the weak-field non-relativistic limit, are

with the associated Vlasov–Poisson system

where \(\overline{\rho }(t)\) is the spatial mean of \(\rho \) that is also used in the Friedmann equation \(\mathcal {H}^2 = \frac{8\pi G}{3} a^2 \overline{\rho }\), which determines the evolution of a(t). It is convenient to change to a co-moving matter density \(a^{3}\rho \), eliminating several factors of a in these equations. In particular, the Poisson equation can be written as

if gravity is sourced by matter perturbations alone so that \(\rho (\varvec{x},t) = (1+\delta (\varvec{x},t))\,\varOmega _{\mathrm{m}} \rho _{\mathrm{c},0} a^{-3}\). Note that we have also introduced here the fractional overdensity \(\delta := \rho /\overline{\rho }-1\).
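On a periodic grid, the co-moving Poisson equation is commonly solved with Fourier methods (mesh techniques are reviewed in Sect. 5). The sketch below assumes a cubic periodic box and the standard form \(\nabla ^2\phi = \tfrac{3}{2}H_0^2\varOmega _{\mathrm{m}}\,\delta /a\), which follows from assuming \(\nabla ^2\phi = 4\pi G a^2(\rho -\overline{\rho })\) together with the density given above and \(\rho _{\mathrm{c},0}=3H_0^2/(8\pi G)\). It uses the continuum Green's function \(-1/k^2\), whereas production codes often use discrete or filtered kernels. The function name is hypothetical.

```python
import numpy as np


def solve_poisson_fft(delta, box_size, a, omega_m, H0=1.0):
    """Solve lap(phi) = 1.5 * H0^2 * omega_m * delta / a on a periodic cubic grid.

    delta    : (n, n, n) overdensity field on the grid
    box_size : co-moving side length of the box
    Uses the continuum Green's function -1/k^2 and sets the k = 0 mode to zero.
    """
    n = delta.shape[0]
    k1d = 2.0 * np.pi * np.fft.fftfreq(n, d=box_size / n)
    kx, ky, kz = np.meshgrid(k1d, k1d, k1d, indexing="ij")
    k2 = kx**2 + ky**2 + kz**2
    k2[0, 0, 0] = 1.0  # avoid division by zero; this mode is reset below
    source_k = np.fft.fftn(1.5 * H0**2 * omega_m * delta / a)
    phi_k = -source_k / k2
    phi_k[0, 0, 0] = 0.0  # the mean potential is arbitrary
    return np.fft.ifftn(phi_k).real


# Single-mode check: delta = cos(k x) gives phi = -1.5 Om delta / (a k^2)
n, L, a, om = 32, 100.0, 0.5, 0.3
x = np.arange(n) * L / n
k = 2.0 * np.pi / L
delta = np.cos(k * x)[:, None, None] * np.ones((n, n, n))
phi = solve_poisson_fft(delta, L, a, om)
expected = -1.5 * om * delta / (a * k**2)
print(np.max(np.abs(phi - expected)))  # round-off level
```

Setting the \(k=0\) mode to zero is consistent with sourcing gravity by \(\rho -\overline{\rho }\) only, so the mean density drives the background expansion rather than the peculiar potential.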

2.4 Post-Newtonian simulations

While traditionally all cosmological simulations were carried out in the non-relativistic weak-field limit, neglecting any back-reaction effects on the metric, the validity and limits of this approach have been questioned (Ellis and Buchert 2005; Heinesen and Buchert 2020). In addition, with upcoming surveys reaching horizon scales, relativistic effects need to be quantified and accounted for correctly. Such effects are only relevant on very large scales, where perturbations are assumed to be small. Various frameworks have therefore been devised to interpret the outcome of Newtonian simulations in a relativistic context (Chisari and Zaldarriaga 2011; Hahn and Paranjape 2016). These frameworks suggested in particular that some care is necessary in the choice of gauge when setting up initial conditions. Going even further, it turned out to be possible to define specific fine-tuned gauges, in which the gauge freedom is used to absorb relativistic corrections, so that the equations of motion are strictly of the Newtonian form (Fidler et al. 2015; Adamek et al. 2017a; Fidler et al. 2017a). This approach requires only a modification of the initial conditions and a re-interpretation of the simulation outcome. Alternatively, relativistic corrections can also be included at the linear level by adding large-scale contributions, computed using linear perturbation theory, to the gravitational force computed in non-linear simulations (Brandbyge et al. 2017).

Going beyond such linear corrections, the first Post-Newtonian cosmological simulations have recently been carried out (Adamek et al. 2013, 2016). They indicated, however, that back-reaction effects are small and likely irrelevant for the next generation of surveys. Most recently, full GR simulations have become possible (Giblin et al. 2016; East et al. 2018; Macpherson et al. 2019; Daverio et al. 2019) and seem to confirm the smallness of relativistic effects. The main advantage of relativistic simulations is that relativistic species, such as neutrinos, can be included self-consistently. In all cases investigated so far, non-linear relativistic effects have appeared to be negligibly small on cosmological scales. Nevertheless, such simulations will be very important in the future to verify the robustness of standard simulations with respect to relativistic effects on LSS observables. These include gravitational lensing, gravitational redshifts (e.g. Cai et al. 2017) and the clustering of galaxies on the past lightcone (e.g. Breton et al. 2019; Guandalin et al. 2021; Lepori et al. 2021; Coates et al. 2021), all of which have been proposed as tests of gravitational physics on large scales.

2.5 Cold limit: the phase-space sheet and perturbation theory

The cold limit All observational evidence points to the colder flavours of dark matter (but see Sect. 7 for an overview of various dark matter models). A key limiting case for cosmological structure formation is therefore that of an initially perfectly cold scalar fluid (i.e. vanishing stress and vorticity). In this case, the dark matter fluid is, at early enough times, fully described by its density and its (mean) velocity field, which derives from a potential. The higher-order moments (14c) are then fully determined by the lower-order moments (14a–14b), and the momentum distribution function at any given spatial point is a Dirac distribution. Hence \(f_{\mathrm{m}}\) is fully specified at some initial time by only two scalar degrees of freedom, a density \(n(\varvec{x})\) and a velocity potential \(S(\varvec{x})\), i.e.

Since S is differentiable, it endows phase space with a manifold structure and the three-dimensional hypersurface of six-dimensional phase space on which f is non-zero is called the ‘Lagrangian submanifold’. In fact, if at any time one can write \(\varvec{p}=m\varvec{\nabla }S\), then Hamiltonian mechanics guarantees that the Lagrangian submanifold preserves its manifold structure, i.e. it never tears or self-intersects. It can however fold up, i.e. lead to a multi-valued field \(S(\varvec{x},t)\), invalidating the functional form (18). Prior to such shell-crossing events (as is the case at the starting time of numerical simulations) this form is, however, perfectly meaningful and by taking moments of the Vlasov equation for this distribution function, one obtains a Bernoulli–Poisson system which truncates the infinite Boltzmann hierarchy already at the first moment, leaving only two equations (Peebles 1980) in terms of the density contrast \(\delta = n/\overline{n}-1\) and the velocity potential S,

supplemented with Poisson’s equation (Eq. 17). Note that this form also brings out the connection to Hamilton–Jacobi theory. After shell-crossing, this description breaks down, and all moments in the Boltzmann hierarchy become important.

Eulerian perturbation theory For small density perturbations \(|\delta |\ll 1\), it is possible to linearise the set of equations (19). One then obtains the ODE governing the linear instability of density fluctuations

The solutions can be written as \(\delta (\varvec{x},\tau ) = D_+(\tau ) \delta _+(\varvec{x}) + D_-(\tau ) \delta _-(\varvec{x})\) and in \(\varLambda \)CDM cosmologies given in closed form (Chernin et al. 2003; Demianski et al. 2005) as

where \( f_\varLambda := \varOmega _\varLambda / (\varOmega _{\mathrm{m}} a^{-3})\), and \({}_2F_1\) is Gauss’ hypergeometric function. In general, however, and especially in the presence of trans-relativistic species such as neutrinos, Eq. (20) must be integrated numerically. Moving beyond linear order, recursion relations to all orders in perturbations of Eqs. (19) were obtained in the 1980s (Goroff et al. 1986). They provide the foundation of standard Eulerian cosmological perturbation theory [SPT; cf. Bernardeau et al. (2002) for a review].
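The closed-form growing mode can be checked numerically. This is a minimal sketch under two assumptions: a flat \(\varLambda \)CDM background, and the normalisation \(D_+\rightarrow a\) at early times, for which the growing mode takes the form \(D_+(a) = a\,{}_2F_1\!\left(\tfrac{1}{3},1;\tfrac{11}{6};-f_\varLambda \right)\). Because \(f_\varLambda \) exceeds unity at late times, the series is evaluated after a Pfaff transformation. The result is compared against the standard integral representation \(D_+ = \frac{5}{2}\varOmega _{\mathrm{m}} E(a)\int _0^a \mathrm{d}a'/[a'E(a')]^3\), with \(E = \sqrt{\varOmega _{\mathrm{m}} a^{-3}+\varOmega _\varLambda }\). All function names are hypothetical.

```python
import math


def hyp2f1_series(a, b, c, z, tol=1e-15, max_terms=10000):
    """Gauss hypergeometric series 2F1(a, b; c; z), valid for |z| < 1."""
    term, total = 1.0, 1.0
    for n in range(max_terms):
        term *= (a + n) * (b + n) / ((c + n) * (n + 1)) * z
        total += term
        if abs(term) < tol * abs(total):
            break
    return total


def growth_factor_hyp(a, omega_m, omega_l):
    """D_+(a) = a 2F1(1/3, 1; 11/6; -f_L), normalised so that D_+ -> a as a -> 0."""
    f_l = omega_l * a**3 / omega_m
    # Pfaff: 2F1(1, 1/3; 11/6; -f) = (1 + f)^-1 2F1(1, 3/2; 11/6; f / (1 + f)),
    # which maps the argument into [0, 1) where the series converges
    w = f_l / (1.0 + f_l)
    return a * hyp2f1_series(1.0, 1.5, 11.0 / 6.0, w) / (1.0 + f_l)


def growth_factor_integral(a, omega_m, omega_l, n=2000):
    """Same quantity from D = 5/2 Om E(a) int_0^a da' / (a' E(a'))^3 (flat LCDM)."""
    lam = omega_l / omega_m

    def integrand(u):
        # substitution a' = u^2 keeps the integrand smooth at u = 0
        return 2.0 * u**4 * (1.0 + lam * u**6) ** -1.5

    umax = math.sqrt(a)
    h = umax / n  # composite Simpson rule, n must be even
    s = integrand(0.0) + integrand(umax)
    s += sum((4.0 if i % 2 else 2.0) * integrand(i * h) for i in range(1, n))
    integral = s * h / 3.0
    return 2.5 * math.sqrt(1.0 + lam * a**3) * a**-1.5 * integral
```

For \(\varOmega _{\mathrm{m}} = 0.3\), both routes give \(D_+(a=1)\) close to 0.78, i.e. a suppression of roughly 20% relative to an Einstein–de Sitter universe.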

Lagrangian perturbation theory As an alternative to the Eulerian fields, the dynamics can also be described through the Lagrangian map, i.e. by considering trajectories \(\varvec{x}(\varvec{q},t) = \varvec{q} + \varvec{\varPsi }(\varvec{q},t)\) starting from Lagrangian coordinates \(\varvec{q} = \varvec{x}(\varvec{q},t=0)\). It is then more convenient to write the distribution function (18) in terms of the Lagrangian map, i.e.

Mass conservation then implies that the density is given by the Jacobian \(\mathrm{J} := \det J_{ij} := \det \partial x_i/\partial q_j\) as

which is singular if any eigenvalue of \(\varvec{\nabla }_q\otimes \varvec{x}\) vanishes. This is precisely the case when shell crossing occurs. The canonical equations of motion (15) can be combined into a single second order equation, which in conformal time reads

where we now consider trajectories not for single particles but for the Lagrangian map, i.e. \(\varvec{x}=\varvec{x}(\varvec{q},t)\). By taking its divergence, this can be rewritten as an equation including only derivatives w.r.t. Lagrangian coordinates

In Lagrangian perturbation theory (LPT), this equation is then solved perturbatively using a time-Taylor expansion of the form \(\varvec{\varPsi }(\varvec{q},\tau ) = \sum _{n=1}^\infty D(\tau )^n \varvec{\varPsi }^{(n)}(\varvec{q})\), which is truncated at finite order in practice (Buchert 1989, 1994; Bouchet et al. 1995; Catelan 1995). At first order, \(n=1\), and restricting to the growing mode only, one finds the famous Zel’dovich approximation (Zel’dovich 1970)

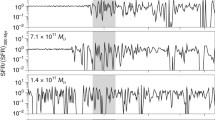

where \(\delta _+(\varvec{q})\) is, as above, the growing-mode spatial fluctuation of SPT. All-order recursion relations have also been obtained for LPT (Rampf 2012; Zheligovsky and Frisch 2014; Matsubara 2015). LPT solutions are of particular importance for setting up initial conditions for simulations. Both SPT and LPT are valid only prior to the first shell-crossing, since the (pressureless) Euler–Poisson limit of Vlasov–Poisson ceases to be valid thereafter. This can easily be seen by considering the evolution of a single-mode perturbation in the cold self-gravitating case, as shown in Fig. 2. Prior to shell-crossing, the mean-field velocity \(\langle \varvec{v}\rangle (\varvec{x},\,t)\) coincides with the full phase-space description \(\varvec{p}(\varvec{x}(\varvec{q};\,t);\,t)/m\). The DF of Eq. (18) then guarantees that the (Euler/Bernoulli-)truncated mean-field fluid equations describe the full evolution of the system. This no longer holds after shell-crossing, when the velocity becomes multi-valued and infinite densities appear where \(\det \partial x_i/\partial q_j=0\). Nevertheless, LPT provides an accurate bridge between the early Universe and the starting redshift of cosmological simulations, from which the system can then be evolved deep into the nonlinear regime. This procedure is discussed in detail in Sect. 6.
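At first order, the displacement satisfies \(\varvec{\nabla }_q\cdot \varvec{\varPsi }^{(1)} = -\delta _+\), so that mass conservation, \(1+\delta \approx 1-\varvec{\nabla }_q\cdot \varvec{\varPsi }\), recovers the linear density. In a periodic box this is most easily solved in Fourier space as \(\tilde{\varvec{\varPsi }}^{(1)}(\varvec{k}) = \mathrm{i}\varvec{k}\,\tilde{\delta }_+(\varvec{k})/k^2\). The following is a minimal sketch under that sign convention, which is assumed here because conventions differ between codes. The function name is illustrative.

```python
import numpy as np


def zeldovich_displacement(delta_plus, box_size):
    """First-order LPT displacement Psi^(1) on a periodic cubic grid.

    Solves div_q Psi^(1) = -delta_plus via Psi_k = i k delta_k / k^2.
    Returns an array of shape (3, n, n, n) in the length units of box_size.
    """
    n = delta_plus.shape[0]
    k1d = 2.0 * np.pi * np.fft.fftfreq(n, d=box_size / n)
    kvec = np.stack(np.meshgrid(k1d, k1d, k1d, indexing="ij"))  # shape (3, n, n, n)
    k2 = np.sum(kvec**2, axis=0)
    k2[0, 0, 0] = 1.0          # placeholder to avoid 0/0; this mode is zeroed below
    delta_k = np.fft.fftn(delta_plus)
    delta_k[0, 0, 0] = 0.0     # the mean of delta carries no displacement
    psi_k = 1j * kvec * delta_k / k2
    # .real also drops the non-Hermitian part generated on the Nyquist planes for even n
    return np.fft.ifftn(psi_k, axes=(1, 2, 3)).real
```

Particle positions at growth factor \(D_+\) then follow as \(\varvec{x} = \varvec{q} + D_+\varvec{\varPsi }^{(1)}(\varvec{q})\).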

Heuristic models based on PT Additionally, perturbation theory has been used as the backbone for approximate, but computationally extremely fast, descriptions of the nonlinear structure [see Monaco (2016) for a review and Chuang et al. (2015b) for a comparison of approaches]. These methods overcome the shell-crossing limitation of perturbation theory in different ways. In the adhesion model (Gurbatov et al. 1985; Kofman and Shandarin 1988; Kofman et al. 1992), a viscous term prevents particle trajectories from crossing. In an alternative approach, Kitaura and Hess (2013) replaced the LPT displacement field on small scales by values motivated from spherical collapse (Bernardeau 1994; Mohayaee et al. 2006). A similar idea is implemented in Muscle (Neyrinck 2016; Tosone et al. 2021). Numerous models extend the predictions of LPT with various small-scale models that aim to capture the collapse into halos or implement empirical corrections: Patchy (Kitaura et al. 2014), PTHalos (Scoccimarro and Sheth 2002; Manera et al. 2013), EZHalos (Chuang et al. 2015a), HaloGen (Avila et al. 2015). Finally, Pinocchio (Monaco et al. 2002, 2013) and WebSky (Stein et al. 2020) both combine LPT displacements with an ellipsoidal collapse model and excursion set theory to predict the abundance, mass accretion history, and spatial distribution of halos. The low computational cost of these approaches makes them useful for creating large ensembles of “simulations” to construct covariance matrices for large-scale structure observations, or for directly modelling the positions and redshifts of galaxies. However, due to their heuristic character, their predictions must be continually validated against full N-body simulations.

Evolution of a single-mode perturbation from early times (\(a=0.1\), left panels), through shell-crossing (at \(a=1\), middle panels), to late times (\(a=10\), right panels). The top row shows the phase space, where the cold distribution function occupies only a one-dimensional line. Density is shown in the middle row, with singularities of formally infinite density appearing at and after the first shell-crossing. The bottom panels show the mean fluid velocity (\(\langle \varvec{v}\rangle = \varvec{\pi }/\rho \), Eq. 14b). It is identical to the phase-space diagram up to the first shell-crossing, but then develops a complicated structure with discontinuities in the multi-stream region (indicated by green shading). Since the distribution function has a manifold structure, its tangent space (indicated in orange) can be evolved with a “geodesic deviation” equation, or it can be approximated by tessellations. Caustics appear where \(\partial x/\partial q = 0\). N-body simulations do not track this manifold structure and only sample the distribution function
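The density evolution of such a single-mode perturbation can be reproduced in a few lines. The sketch below assumes a 1D Zel’dovich map for \(\delta _+ = A\cos kq\), i.e. \(x = q - (DA/k)\sin kq\); in one dimension this map is exact up to shell-crossing. It evaluates the single-stream density from mass conservation as \(1/|\partial x/\partial q|\) and flags shell-crossing wherever \(\partial x/\partial q \le 0\), which is first reached at \(DA = 1\). All names are illustrative.

```python
import numpy as np


def single_mode_map(q, growth, amplitude, k):
    """1D Zel'dovich map for delta_+(q) = amplitude * cos(k q).

    Returns the Eulerian position x(q) = q + D Psi(q), with Psi = -(A/k) sin(k q),
    and the Jacobian dx/dq = 1 - D A cos(k q).
    """
    x = q - growth * amplitude / k * np.sin(k * q)
    jac = 1.0 - growth * amplitude * np.cos(k * q)
    return x, jac


def stream_density(jac):
    """Single-stream density in units of the mean: rho / rho_bar = 1 / |dx/dq|."""
    return 1.0 / np.abs(jac)


def has_shell_crossed(jac):
    """The map folds (a multi-stream region appears) once dx/dq reaches zero somewhere."""
    return bool(np.any(jac <= 0.0))
```

After shell-crossing, the Eulerian density at a given \(x\) is the sum of \(1/|\partial x/\partial q|\) over all streams \(q\) that map to that \(x\).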

2.6 Deformation and evolution in phase space

The canonical equations of motion describe the motion of points in phase space over time. Going further, it is also interesting to consider the evolution of an infinitesimal phase-space volume, spanned by \((\mathrm{d}\varvec{x},\mathrm{d}\varvec{p})\), which is captured by the “geodesic deviation equation”.

As we have just discussed, in the cold case the continuum limit leads to all mass occupying a thin sheet in phase space. One can then think of the evolution of the system as the mapping \(\varvec{q}\mapsto (\varvec{x},\varvec{p})\) between Lagrangian and Eulerian space (cf. Fig. 2). The analysis of phase-space deformations can be extended beyond the cold case by considering a general mapping of phase space onto itself, i.e. \((\varvec{q},\varvec{w})\mapsto (\varvec{x},\varvec{p})\). Note, however, that this definition is formally not valid as \(a\rightarrow 0\), since in the canonical cosmological case the momentum space blows up in this limit. The associated phase-space Jacobian matrix \(\mathsf{\varvec {D}}\), which reflects the effect in Eulerian space of infinitesimal changes to the Lagrangian coordinates, is

where in the last equality, we have split the 6D tensor into four blocks. Its dynamics are fully determined by the canonical equations of motion, which we can obtain after a few steps as (Habib and Ryne 1995; Vogelsberger et al. 2008).

This equation is called the “geodesic deviation equation” (GDE) in the literature, and it quantifies the relative motion in phase space along the Hamiltonian flow. For separable Hamiltonians \(\mathscr {H}=T(\varvec{p},t)+V(\varvec{x},t)\), the coupling matrix \(\mathsf{\varvec {H}}\) becomes

and shows a coupling to the gravitational tidal tensor (Vogelsberger et al. 2008; Vogelsberger and White 2011). The evolution of \(\mathsf{\varvec {D}}\) can be used to track the evolution of an infinitesimal environment in phase space around a trajectory \((\varvec{x}(\varvec{q},\varvec{w};\,t),\,\varvec{p}(\varvec{q},\varvec{w};\,t))\). In particular, it follows from Eq. (23) that zero-crossings of the determinant of the \(\mathsf{\varvec {D}}_{\mathrm{xq}}\) block correspond to infinite-density caustics. The block can therefore be used to estimate the local (single-)stream density and to count the number of caustic crossings. Infinite-density caustics would cause singular behaviour in the evolution of \(\mathsf{\varvec {D}}\), so its numerical evolution has to be carried out with sufficient softening (Vogelsberger and White 2011; Stücker et al. 2020). Since it is sensitive to caustic crossings, the GDE can be used to quantify the distinct components of the cosmic web (Stücker et al. 2020, see also Sect. 9.5). The GDE is also intimately connected to studies of the emergence of chaos in gravitationally collapsed structures: it quantifies the divergence of orbits in phase space and is thus directly related to Lyapunov exponents (Habib and Ryne 1995). An open problem is how rapidly a collapsed system achieves efficient phase-space mixing, since discreteness noise in N-body simulations could dominate phase-space diffusion if not properly controlled (Stücker et al. 2020; Colombi 2021).
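To illustrate that the GDE is simply the linearisation of the canonical flow, the sketch below integrates a single 1D orbit together with \(\mathsf{\varvec {D}}\). It uses a hypothetical anharmonic potential \(V(x) = x^2/2 + x^4/4\), unit mass and no expansion. The Jacobian evolves as \(\mathrm{d}\mathsf{\varvec {D}}/\mathrm{d}t = \mathsf{\varvec {H}}\mathsf{\varvec {D}}\), with the separable-Hamiltonian coupling matrix \(\mathsf{\varvec {H}} = \begin{pmatrix}0 & \partial ^2T/\partial p^2\\ -\partial ^2 V/\partial x^2 & 0\end{pmatrix}\). The result can be checked against finite differences of neighbouring trajectories, and \(\det \mathsf{\varvec {D}} = 1\) reflects Liouville’s theorem. Classical RK4 is used only for simplicity; it is not a recommendation for production codes.

```python
import numpy as np


def potential_hessian(x):
    # hypothetical anharmonic potential V(x) = x^2/2 + x^4/4, so d^2V/dx^2 = 1 + 3x^2
    return 1.0 + 3.0 * x**2


def force(x):
    # -dV/dx for the same hypothetical potential
    return -(x + x**3)


def rhs(state):
    """Time derivative of (x, p, D) for H = p^2/2 + V(x); D is stored row-major."""
    x, p = state[0], state[1]
    d_mat = state[2:].reshape(2, 2)
    # coupling matrix for a separable Hamiltonian with T = p^2/2 (so d^2T/dp^2 = 1)
    h_mat = np.array([[0.0, 1.0], [-potential_hessian(x), 0.0]])
    return np.concatenate(([p, force(x)], (h_mat @ d_mat).ravel()))


def integrate_with_gde(x0, p0, t_end, n_steps=2000):
    """Classical RK4 for the orbit and its phase-space Jacobian D (D = identity at t = 0)."""
    state = np.concatenate(([x0, p0], np.eye(2).ravel()))
    dt = t_end / n_steps
    for _ in range(n_steps):
        k1 = rhs(state)
        k2 = rhs(state + 0.5 * dt * k1)
        k3 = rhs(state + 0.5 * dt * k2)
        k4 = rhs(state + dt * k3)
        state = state + dt / 6.0 * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
    return state[:2], state[2:].reshape(2, 2)
```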

3 Discretization techniques for Vlasov–Poisson systems

The macroscopic collisionless evolution of non-relativistic self-gravitating classical matter in an expanding universe is governed by the cosmological Vlasov–Poisson (VP) set of Eqs. (16a–16b) derived above. VP describes the evolution over time of the phase-space density \(f(\varvec{x},\varvec{p},t)\) in six-dimensional phase space. The equations are non-linear and the gravitational interaction is attractive (focusing). As a result, intricate small-scale structures (filamentation) emerge in phase space already in 1+1 dimensions, as shown in Fig. 2, and chaotic dynamics can arise in higher-dimensional phase space. Various numerical methods to solve VP dynamics have been devised, with intimate connections to related techniques in plasma physics. The N-body approach is clearly the most prominent and important technique today. However, other techniques have been developed to overcome its shortcomings in certain regimes and to test the validity of its results. A visual representation of the various approaches to discretise either phase space or the distribution function is shown in Fig. 3.

3.1 The N-body technique

The most commonly used discretisation technique for dark matter simulations is the N-body method. It samples the phase space of the system in a Monte-Carlo fashion and has been used since the 1960s as a numerical tool to study the Hamiltonian dynamics of gravitationally bound systems such as star and galaxy clusters (von Hoerner 1960; Aarseth 1963; Hénon 1964). N-body simulations began to be used to study cosmological structure formation in the early 1970s (Peebles 1971; Press and Schechter 1974; Miyoshi and Kihara 1975), followed by an explosion of the field in the first half of the 1980s (Doroshkevich et al. 1980; Klypin and Shandarin 1983; White et al. 1983; Centrella and Melott 1983; Shapiro et al. 1983; Miller 1983). These works demonstrated the web-like structure of the distribution of matter in the Universe and established that cold dark matter (rather than massive neutrinos) likely provides the “missing” (dark) matter. By the late 1990s, the resolution and dynamic range had increased sufficiently to study the inner structure of dark matter haloes. This led to the discovery of universal halo profiles (Navarro et al. 1997), the large abundance of substructure in CDM haloes (Moore et al. 1999; Klypin et al. 1999b), and predictions of the mass function of collapsed structures in the Universe over a large range of masses and cosmic time (Jenkins et al. 2001). The N-body method is now used in virtually all large state-of-the-art cosmological simulations as the method of choice to simulate the gravitational collapse of cold collisionless matter (cf. Sect. 10 for a review of state-of-the-art simulations). In “total matter” (often somewhat misleadingly called “dark matter only”) simulations, the N-body mass distribution serves as a proxy for the potential landscape in which galaxy formation takes place. Multi-physics simulations follow the distinct evolution of dark matter and baryons (see also Sect. 7.8.1). In these, too, collisionless dark matter is evolved via the N-body method, while a larger diversity of methods is employed to evolve the collisional baryonic component (see e.g. Vogelsberger et al. 2020, for a review).

The N-body discretisation Underlying all these simulations is the fundamental N-body idea: the Vlasov equation is the continuum version of the Hamiltonian equations of motion, which implies that phase-space density is conserved along Hamiltonian trajectories. The non-linear coupling in Hamilton’s equations arises through the coupling of particles with gravity via Poisson’s equation, which is only sourced by the density field. Therefore, as long as a finite number of N particles is able to fairly sample the density field, the evolution of the system can be approximated by these discrete trajectories.

A practical complication is that the (formally) infinitely extended mass distribution has to be taken into account. Most commonly, this is addressed by restricting the simulation to a finite cubical volume \(V=L^3\) of co-moving linear extent L with periodic boundary conditions. This is formally equivalent to considering infinitely many periodic copies of this fundamental cubical box. The effective N-body distribution function is then given by a set of discrete macroscopic particle locations and momenta \(\left\{ \left( \varvec{X}_i(t),\varvec{P}_i(t)\right) ,\,i=1\dots N\right\} \) along with the infinite set of periodic copies, so that

is an unbiased sampling of the true distribution function. Here \(M_i\) is the effective particle mass assigned to an N-body particle, m the actual microscopic particle mass, and \(\varvec{X}_i(t)\) and \(\varvec{P}_i(t)\) are the position and momentum of particle i at time t. The most widespread choice of discretisation is one in which all particles are assumed to have equal mass, \(M_i = \overline{M} = \varOmega _m \rho _{\mathrm{c,0}} V / N\). Note, however, that using different masses is also possible and sometimes desirable (e.g., for multi-resolution simulations, see Sect. 6.3.4).
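As a worked example of the equal-mass choice, the snippet below evaluates \(\overline{M} = \varOmega _m \rho _{\mathrm{c,0}} V / N\) using \(\rho _{\mathrm{c,0}} \approx 2.775\times 10^{11}\,h^2\,\mathrm{M}_\odot \,\mathrm{Mpc}^{-3}\). The box size and particle number are arbitrary illustrative values.

```python
# critical density today in h^2 M_sun / Mpc^3, i.e. (M_sun/h) / (Mpc/h)^3
RHO_CRIT_0 = 2.775e11


def particle_mass(omega_m, box_size, n_particles):
    """Equal N-body particle mass M = Omega_m rho_c0 V / N, in M_sun/h for box_size in Mpc/h."""
    return omega_m * RHO_CRIT_0 * box_size**3 / n_particles


mass = particle_mass(omega_m=0.3, box_size=1000.0, n_particles=1024**3)
print(f"{mass:.3e} M_sun/h")
```

For a \((1\,h^{-1}\mathrm{Gpc})^3\) box with \(1024^3\) particles and \(\varvec{\varOmega }\)-independent units as above (\(\varOmega _m = 0.3\)), this gives roughly \(7.75\times 10^{10}\,h^{-1}\mathrm{M}_\odot \) per particle.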

Initial conditions Since the particles are to sample the full six-dimensional distribution function, a key question is how the initial positions \(\varvec{X}_i(t_0)\) and momenta \(\varvec{P}_i(t_0)\) should be chosen. For cold systems, we have derived a consistent approach above: it is given by Eq. (18), which can be readily evaluated from a discrete sampling of the Lagrangian manifold alone. One chooses an (ideally homogeneous and isotropic) uniform sampling in terms of Lagrangian coordinates \(\varvec{Q}_i\) for each N-body particle (discussed in more detail in Sect. 6.4). The Eulerian position \(\varvec{X}_i\) and momentum \(\varvec{P}_i\) at some initial time \(t_0\) are then obtained from the Lagrangian map \(\varvec{\varPsi }(\varvec{Q}_i,t_0)\) (see Sect. 6.2 for more details). This means that, in the case of a cold fluid, the particles sample the mean flow velocity exactly. The situation is considerably more involved if the system has a finite temperature, which requires sampling not only the three-dimensional Lagrangian submanifold but the full six-dimensional phase-space density. In some sense, each particle of the cold case then needs to be sub-divided into many particles that sample the momentum part of the distribution function. This poses a formidable challenge due to the large associated sampling noise, particularly for hot distribution functions, where the momentum spread is large compared to the mean momenta arising from gravitational instability (such as in the case of neutrinos). Various solutions have been proposed to circumvent these problems. Examples are a careful sampling of momentum space using shells in momentum modulus and an angular sampling based on the HEALPix sphere decomposition (Banerjee et al. 2018), or reduced-variance sampling based on the control variates method (Elbers et al. 2021). These avenues are discussed in more detail in the context of massive neutrino simulations in Sect. 7.8.2 below.
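For the cold case, the procedure just described can be sketched as follows. Particles are placed on a regular lattice in Lagrangian space, which is the simplest choice of uniform sampling. They are then displaced with the first-order (Zel’dovich) map and assigned the corresponding peculiar velocity \(\varvec{v} = a H f D_+ \varvec{\varPsi }^{(1)}\), where \(f = \mathrm{d}\ln D_+/\mathrm{d}\ln a\). This is a minimal sketch, not the scheme of any specific code. It assumes a flat \(\varLambda \)CDM background, the common approximation \(f \approx \varOmega _{\mathrm{m}}(a)^{0.55}\), and a user-supplied displacement function (`psi_func`, hypothetical).

```python
import numpy as np


def lattice_zeldovich_ics(n_per_dim, box_size, psi_func, growth, a,
                          omega_m, omega_l, h0=100.0):
    """Cold initial conditions: lattice in q, Zel'dovich positions and peculiar velocities.

    psi_func (hypothetical, user supplied) maps Lagrangian positions of shape (N, 3)
    to the first-order displacement Psi^(1)(q) of the same shape, in Mpc/h.
    Returns q, x (both in Mpc/h) and peculiar velocities v = a dx/dt (in km/s).
    """
    spacing = box_size / n_per_dim
    grid = (np.arange(n_per_dim) + 0.5) * spacing
    q = np.stack(np.meshgrid(grid, grid, grid, indexing="ij"), axis=-1).reshape(-1, 3)
    psi = psi_func(q)
    x = np.mod(q + growth * psi, box_size)          # periodic wrap into the box
    e2 = omega_m / a**3 + omega_l                   # flat LCDM: (H / H0)^2
    hubble = h0 * np.sqrt(e2)                       # in h km/s/Mpc
    f_growth = (omega_m / a**3 / e2) ** 0.55        # approximation f ~ Omega_m(a)^0.55
    vel = a * hubble * f_growth * growth * psi      # v = a H f D Psi^(1)
    return q, x, vel
```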

Equations of motion Once the initial particle sampling has been determined, the subsequent evolution is fully governed by VP dynamics. Moving along characteristics of the VP system, the canonical equations of motion for particle \(i=1\dots N\) in cosmic time are obtained from \(\mathrm{d}f(\varvec{X}_i(t),\,\varvec{P}_i(t),\,t)/\mathrm{d}t=0\) as

These are consistent with a cosmic-time Hamiltonian system with a pair interaction potential \(I(\varvec{x},\varvec{x}^{\prime})\) of the form

The resulting acceleration term is given by

which has no contribution from the background \(\overline{\rho }\) for symmetry reasons (Peebles 1980). The co-moving configuration space density \(\rho \) that provides the Poisson source arises from Eq. (30) and is given by

Here, we additionally allowed for a regularisation kernel \(W(\varvec{r})\) that is convolved with the discrete N-body density. Its purpose is to improve the regularity of the density field and speed up convergence to the collisionless limit (or so one hopes). It represents a softening kernel (also called ‘assignment function’, depending on context) that regularises gravity. It accounts for the fact that each particle is not a point mass (like a star or black hole) but corresponds to an extended piece of phase space, so that two-body scattering between the effective particles is always artificial and must be suppressed. We discuss how the acceleration obtained from this infinite sum is computed in practice in Sect. 5.
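The following minimal sketch shows how a softening kernel enters the force calculation. The \(O(N^2)\) direct summation below uses Plummer softening, one common (but by no means unique) choice of \(W\), for which the pairwise acceleration becomes \(G m_j (\varvec{x}_j-\varvec{x}_i)/(|\varvec{x}_j-\varvec{x}_i|^2+\epsilon ^2)^{3/2}\). For brevity it ignores periodic images and the background subtraction. It is therefore only meant to illustrate the role of \(\epsilon \), not the cosmological force calculation (see Sect. 5).

```python
import numpy as np


def direct_accelerations(pos, mass, eps, G=1.0):
    """O(N^2) Plummer-softened accelerations (isolated system, no periodic images).

    pos: (N, 3) positions, mass: (N,) masses, eps: softening length.
    a_i = G sum_j m_j (x_j - x_i) / (|x_j - x_i|^2 + eps^2)^(3/2)
    """
    sep = pos[None, :, :] - pos[:, None, :]          # sep[i, j] = x_j - x_i
    r2 = np.sum(sep**2, axis=-1) + eps**2
    inv_r3 = r2 ** -1.5
    np.fill_diagonal(inv_r3, 0.0)                     # exclude self-interaction
    return G * np.einsum("ij,ijk->ik", mass[None, :] * inv_r3, sep)
```

Because the softened pair force remains antisymmetric, total momentum is still conserved. Far beyond \(\epsilon \), the Newtonian \(1/r^2\) force is recovered.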

Discreteness effects The quality of the force calculation rests on how well the force associated with the density of Eq. (34) approximates that of the collisionless limit. The hope is that, with an appropriate choice of W and as large a number N of particles as possible, the evolution remains close to the true collisionless dynamics and artificial collisions remain subdominant. Due to the discrete nature of the particles, problems of the N-body approach are known to arise when force and mass resolution are not matched. In that case, the evolution of the discrete system can deviate strongly from that of the continuous limit (Centrella and Melott 1983; Centrella et al. 1988; Peebles et al. 1989; Melott and Shandarin 1989; Diemand et al. 2004b; Melott et al. 1997; Splinter et al. 1998; Wang and White 2007; Melott 2007; Marcos 2008; Bagla and Khandai 2009). Slow convergence to the correct physical solution can, however, usually be achieved by keeping the softening large enough that individual particles are never resolved. At the same time, if the force resolution in CDM simulations is too low at late times, sub-haloes are comparatively loosely bound and prone to premature tidal disruption. This leads to the ‘overmerging’ effect and the resulting orphaned galaxies (i.e., if the subhalo hosted a galaxy, it would still be a distinct system rather than having merged with the host), e.g. Klypin et al. (1999a), Diemand et al. (2004a), van den Bosch et al. (2018). In this regime, one would want to choose the softening as small as possible. We discuss this tension in more detail in Sect. 8.2.

More sophisticated choices of W beyond a global (possibly time-dependent) softening scale are possible, for instance, the scale can depend on properties of particles, such as the local density (leading to what is called “adaptive softening”). We discuss the aspect of force regularisation by softening in more detail in Sect. 8.2. The gravitational acceleration that follows from Eq. (34) naturally has to take into account the cosmological Poisson equation, i.e., include the subtraction of the mean density and assume periodic boundary conditions. All aspects related to the time integration of cosmological Hamiltonians will be discussed in Sect. 4, those related to computing and evaluating gravitational interactions efficiently in Sect. 5 below.
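The mean-density subtraction and periodicity can be made concrete with the cloud-in-cell (CIC) assignment function, a widely used choice for \(W\) in mesh-based force calculations. The sketch below deposits particles onto a cubic periodic grid and returns the overdensity \(\delta \). It assumes equal-mass particles, and its names are illustrative. The returned \(\delta \) has zero mean by construction and is thus a valid source for a periodic Poisson solver.

```python
import numpy as np


def cic_overdensity(pos, box_size, n_grid):
    """Cloud-in-cell mass assignment on a periodic grid, returning delta = rho/rho_bar - 1.

    pos: (N, 3) positions of equal-mass particles in [0, box_size); cell centres
    sit at (i + 0.5) * box_size / n_grid.
    """
    cell = box_size / n_grid
    s = pos / cell - 0.5                 # position in cell units relative to cell centres
    i0 = np.floor(s).astype(int)         # index of the lower neighbouring cell
    frac = s - i0                        # weight given to the upper neighbour per axis
    rho = np.zeros((n_grid,) * 3)
    for dx in (0, 1):
        for dy in (0, 1):
            for dz in (0, 1):
                w = (np.where(dx, frac[:, 0], 1.0 - frac[:, 0])
                     * np.where(dy, frac[:, 1], 1.0 - frac[:, 1])
                     * np.where(dz, frac[:, 2], 1.0 - frac[:, 2]))
                idx = (i0 + [dx, dy, dz]) % n_grid        # periodic wrap of indices
                np.add.at(rho, (idx[:, 0], idx[:, 1], idx[:, 2]), w)
    return rho / rho.mean() - 1.0
```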

Discretisations used in the numerical solution of Vlasov–Poisson: a the N-body method, which samples the fine-grained distribution function (light gray line) at discrete locations, b the ‘GDE’ method, which can evolve the local manifold structure along with the particles (the green eigenvectors of \(\mathsf {D}_{\mathsf {xq}}\) are tangential to the Lagrangian submanifold), c the sheet tessellation method, which uses interpolation (here linear) between particles to approximate the Lagrangian submanifold with a tessellation, d a finite volume discretisation of the full phase space with uniform resolution \(\varDelta x\) in configuration space and \(\varDelta p\) in momentum space

3.2 Phase space deformation tracking and Lagrangian submanifold approximations

For large enough N, the N-body method is expected to converge to the collisionless limit. Nonetheless, an obvious limitation of this approach is that the underlying manifold structure is entirely lost. The particles retain only knowledge of positions and momenta, and all other quantities (e.g. density, as well as other mean-field properties) can only be recovered by coarse-graining over a larger number of particles. Two different classes of methods, which we discuss next, have been developed in recent years to overcome this key limitation in various ways. The first class promotes particles (which are essentially vectors) to tensors and rewrites the canonical equations of motion to evolve them accordingly, resulting in equations of motion reminiscent of the geodesic deviation equation (GDE) in general relativity. The second class retains the particles but promotes them to vertices of a tessellation whose cells provide a discretisation of the manifold.

3.2.1 Tracking deformation in phase space—the GDE approach

We already discussed in Sect. 2.6 how infinitesimal volume elements of phase space evolve under a Hamiltonian flow. In particular, Eq. (28) is the canonical equation of motion for the phase-space Jacobian matrix. In the ‘GDE’ approach, instead of evolving only the vector \((\varvec{X}_i,\varvec{P}_i)\) for each N-body particle, one additionally evolves the tensor \(\mathsf{\varvec {D}}_i\) for each particle [cf. Vogelsberger et al. (2008), White and Vogelsberger (2009), but see also Habib and Ryne (1995) who derive a method to compute Lyapunov exponents based on the same equations]. Of particular interest is the \(\mathsf{\varvec {D}}_{\mathrm{xq}}\) sub-block of the Jacobian matrix, since it directly tracks the local (stream) density associated with each N-body particle through \(\delta _i+1 = \left| \det \mathsf{\varvec {D}}_{\mathrm{xq},i}\right| ^{-1}\). The equations of motion for the relevant tensors associated with particle i are

and are solved alongside the N-body equations of motion (31) by computing the tidal tensor \(\mathsf{\varvec {T}}\). One caveat with the GDE approach is that the evolution of \(\mathsf{\varvec {D}}_\mathrm{xq}\) is determined not by the force but by the tidal field—which contains one higher spatial derivative of the potential than the force—and therefore is significantly less regular than the force field (see the detailed discussion and analysis in Stücker et al. (2021c) who have also studied the stream density evolution in virialised halos, based on a novel low-noise force calculation). This approach thus requires larger softening to achieve converged answers than a usual N-body simulation, and possibly cannot be shown to converge in the limit of infinite density caustics.
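The following toy example shows how \(\mathsf{\varvec {D}}_{\mathrm{xq}}\) can be advanced alongside a particle with the same kick-drift-kick leapfrog, driven by the tidal field. Sign changes of \(\det \mathsf{\varvec {D}}_{\mathrm{xq}}\) count caustic crossings, while \(1/|\det \mathsf{\varvec {D}}_{\mathrm{xq}}|\) gives the stream density relative to the initial one. It is a 1D, unit-mass, non-expanding sketch with a hypothetical harmonic potential, for which \(\mathsf{\varvec {D}}_{\mathrm{xq}} = \cos \omega t\) exactly. No softening is needed here because the tidal field is constant. It does not reproduce the scheme of any particular code.

```python
import math


def leapfrog_with_gde(x0, p0, accel, tidal, dt, n_steps):
    """KDK leapfrog for a unit-mass 1D particle plus the (D_xq, D_pq) column of D.

    accel(x) = -dV/dx and tidal(x) = d^2V/dx^2. Returns the final x, p, D_xq,
    the number of caustic crossings (sign changes of D_xq) and the stream
    density 1/|D_xq| relative to the initial density.
    """
    x, p = x0, p0
    d_xq, d_pq = 1.0, 0.0        # D = identity at the initial time
    n_caustics = 0
    for _ in range(n_steps):
        # half kick: momentum by the force, D_pq by the tidal field
        p += 0.5 * dt * accel(x)
        d_pq -= 0.5 * dt * tidal(x) * d_xq
        # drift: position by the momentum, D_xq by D_pq
        x += dt * p
        d_new = d_xq + dt * d_pq
        if d_new * d_xq < 0.0:    # det D_xq changed sign: a caustic was crossed
            n_caustics += 1
        d_xq = d_new
        # second half kick at the new position
        p += 0.5 * dt * accel(x)
        d_pq -= 0.5 * dt * tidal(x) * d_xq
    density = 1.0 / abs(d_xq) if d_xq != 0.0 else math.inf
    return x, p, d_xq, n_caustics, density
```

For \(\omega = 1\) and \(t = 10\), the orbit passes through three caustics, at \(t = \pi /2, 3\pi /2, 5\pi /2\).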

Evolving \(\mathsf{\varvec {D}}_{\mathrm{xq}}\) provides additional information about cosmic structure that is not accessible to standard N-body simulations. For instance, solving the GDE enabled Vogelsberger et al. (2008) and Vogelsberger and White (2011) to estimate the number of caustics in dark matter haloes, which might boost the self-annihilation rate of CDM particles. It also allowed them to estimate the amount of chaos and mixing in haloes. A key result of Vogelsberger and White (2011) was that each particle nonetheless resides in a stream with a density not too different from the cosmic mean density, despite the large over-densities reached in collapsed structures. This is possible since haloes are built like pâte feuilletée, as a layered structure of many stretched and folded streams, as can be seen in panel a) of Fig. 5.

Images reproduced with permission (a, b) from Abel et al. (2012) and (c, d) from Stücker et al. (2020), copyright by the authors

a, b The density field obtained from the same set of N-body particles as a simple particle N-body density in (a) and in terms of a phase space sheet interpolation in terms of tetrahedral cells in (b). c, d The GDE method and the sheet tessellation method provide direct access to the stream density, which is shown in Lagrangian \(\varvec{q}\)-space for c the GDE approach and d the sheet tessellation approach

3.2.2 The dark matter sheet and phase space interpolation

A different idea to reconstruct the Lagrangian submanifold from existing N-body simulations was proposed by Abel et al. (2012) and Shandarin et al. (2012). They noted that a tessellation of Lagrangian space, constructed by using the initial positions of the N-body particles at very early times as vertices (i.e., the particles generate a simplicial complex on the Lagrangian submanifold), is topologically preserved under Hamiltonian evolution. This means that initially neighbouring particles can be connected into non-overlapping tetrahedra (in the case of a 3D submanifold of 6D phase space). The deformation of these tetrahedra, and their changes in position and volume, reflect the evolution of the phase-space distribution (and thus changes in the density field). A visual impression of the difference between an N-body density and this tessellation-based density is given in Fig. 4. No holes can appear through Hamiltonian dynamics. However, the divergence of initially neighbouring points depends on the specific dynamics (notably the Lyapunov exponents that describe the divergence of such trajectories), so the edge connecting two vertices can become a tangled curve due to the complex dynamics in bound systems, e.g., Laskar (1993), Habib and Ryne (1995). As long as the tetrahedral edges still approximate the submanifold well, the simplicial complex provides access to a vast amount of information about the distribution of matter in phase space in an evolved system, information that is difficult or even impossible to reconstruct from N-body simulations. Most notably, it yields an estimate of density that is local but defined everywhere in space, free of shot noise, and produces sharply delineated caustics of dark matter after shell-crossing (Abel et al. 2012). This has also led to new rendering techniques for 3D visualisation of the cosmic density field (Kähler et al. 2012; Igouchkine et al. 2016; Kaehler 2017), and very accurate estimators of the cosmic velocity field (Hahn et al. 2015; Buehlmann and Hahn 2019).
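The tetrahedral decomposition can be sketched as follows. Each cube of the initial Lagrangian lattice is split into six tetrahedra, here with the Kuhn/Freudenthal construction, which is one of several possible decompositions and is assumed for illustration. The density of a cell is its mass divided by its current volume. A change of sign of the signed volume relative to the Lagrangian one signals that the cell has been turned inside out by a caustic. Function names are hypothetical.

```python
import itertools

import numpy as np


def cube_tetrahedra():
    """Split the unit cube into 6 tetrahedra (Kuhn/Freudenthal construction).

    For each permutation of the axes, walk from corner (0,0,0) to (1,1,1) switching
    on one coordinate at a time; the 4 visited corners span one tetrahedron.
    Returns integer corner offsets of shape (6, 4, 3).
    """
    tets = []
    for perm in itertools.permutations(range(3)):
        corner = np.zeros(3, dtype=int)
        verts = [corner.copy()]
        for axis in perm:
            corner[axis] = 1
            verts.append(corner.copy())
        tets.append(verts)
    return np.array(tets)


def signed_volumes(tets):
    """Signed volumes of tetrahedra given as an array of shape (M, 4, 3)."""
    edges = tets[:, 1:, :] - tets[:, :1, :]      # three edge vectors per tetrahedron
    return np.linalg.det(edges) / 6.0


def tet_densities(lagrangian_tets, eulerian_tets, tet_mass):
    """Cell densities mass / |volume|, plus a flag for cells inverted by a caustic."""
    v_lag = signed_volumes(lagrangian_tets)
    v_eul = signed_volumes(eulerian_tets)
    inverted = np.sign(v_eul) != np.sign(v_lag)
    return tet_mass / np.abs(v_eul), inverted
```

In a simulation, the connectivity is built once from the Lagrangian lattice. At later times, the same vertex indices are simply looked up in the current particle positions, taking care of periodic wrapping.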

Since the density is well defined everywhere in space from the vertices alone, and reflects the anisotropic motions in gravitational collapse well, Hahn et al. (2013) proposed to use this density field as the source when solving Poisson’s equation as part of the dynamical evolution of the system. In the resulting method, a comparatively small number of N-body particles define the simplicial complex, which in turn determines the density field. This method solves the artificial fragmentation problem of the N-body method for WDM initial conditions (Hahn et al. 2013). The complex dynamics in late stages of collapse, however, limit the applicability of a method with a fixed number of vertices. This problem was later addressed in two ways (Hahn and Angulo 2016; Sousbie and Colombi 2016). The first allows for higher-order reconstructions of the Lagrangian submanifold from N-body particles, corresponding in some sense to non-constant-metric finite elements in Lagrangian space. The second uses dynamical refinement. For systems that exhibit strong mixing (phase mixing or even chaotic mixing, as in dark matter haloes), following the increasingly complex dynamics by inserting new vertices quickly becomes prohibitive. For example, Sousbie and Colombi (2016) and Colombi (2021) report an extremely rapid growth in the number of vertices over time in a cosmological simulation with only moderate force resolution. Stücker et al. (2020) compared the density estimated from phase-space interpolation with that obtained from the GDE and found excellent agreement between the two, except in the centres of haloes. The comparison between the two density estimates, shown in Lagrangian space, is reproduced in the bottom panels of Fig. 4.
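Dynamical refinement can be illustrated in the same 1+1D setting. The sketch below, assuming NumPy, bisects every sheet segment whose phase-space length exceeds a threshold. `refine_sheet` and `refine_until` are hypothetical helper names. For simplicity, each new vertex is placed by linear interpolation at the Lagrangian midpoint, whereas the methods cited above use higher-order reconstructions of the submanifold.

```python
import numpy as np

def refine_sheet(q, x, v, max_dist, v_scale=1.0):
    """One refinement pass: bisect every segment of an open 1D sheet whose
    phase-space length sqrt(dx^2 + (dv/v_scale)^2) exceeds max_dist.

    q, x, v are arrays ordered along the Lagrangian coordinate q. New vertices
    sit at the Lagrangian midpoint with linearly interpolated (x, v)."""
    dist = np.hypot(np.diff(x), np.diff(v) / v_scale)
    split = np.nonzero(dist > max_dist)[0]     # indices of segments to bisect
    if split.size == 0:
        return q, x, v

    def midpoint(a):
        # linear interpolation halfway between vertex k and k+1
        return 0.5 * (a[split] + a[split + 1])

    idx = split + 1                            # insert between vertex k and k+1
    return (np.insert(q, idx, midpoint(q)),
            np.insert(x, idx, midpoint(x)),
            np.insert(v, idx, midpoint(v)))

def refine_until(q, x, v, max_dist, v_scale=1.0, max_passes=30):
    # repeat passes until no segment is too long (or max_passes is reached)
    for _ in range(max_passes):
        n_before = q.size
        q, x, v = refine_sheet(q, x, v, max_dist, v_scale)
        if q.size == n_before:
            break
    return q, x, v

q, x, v = refine_until(np.array([0.0, 1.0]), np.array([0.0, 1.0]),
                       np.array([0.0, 0.0]), max_dist=0.3)
```

Each bisection adds one vertex, so the vertex count grows with the total length of the stretched sheet. This growth is what becomes prohibitive under strong mixing.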

The path forward in this direction likely lies in hybrid N-body/sheet methods that exploit the best of both worlds, as proposed by Stücker et al. (2020). Panel a) of Fig. 5 shows a 1+1D cut through 3+3D phase space for a CDM halo. It compares the result of a sheet-based simulation, where the cut yields a finite number of continuous lines (top), with the equivalent result for a thin slice from an N-body simulation (bottom). The general impact of the spurious phase-space diffusion introduced by the N-body method is still not well understood, and detailed comparisons between various solvers are under way (e.g., Halle et al. 2019; Stücker et al. 2020; Colombi 2021).

For hot distribution functions, such as that of neutrinos, the phase-space distribution of matter is not fully described by a single Lagrangian submanifold. A 6D tessellation is feasible in principle, but it has undesirable properties due to the inherent shearing along the momentum dimensions. Lagrangian submanifolds can nevertheless provide a foliation of general six-dimensional phase space. This is done by selecting multiple Lagrangian submanifolds that are initially offset from each other by constant momentum vectors, as proposed by Dupuy and Bernardeau (2014) and Kates-Harbeck et al. (2016).
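A one-dimensional sketch of such a foliation follows, assuming NumPy. The momentum distribution is taken to be Gaussian with dispersion `sigma_p`, which is a simplifying assumption since relic neutrinos follow a Fermi–Dirac distribution. Gauss–Hermite quadrature is one possible way to choose the constant momentum offsets and mass fractions of the cold sheets. All function names are illustrative.

```python
import numpy as np
from numpy.polynomial.hermite_e import hermegauss

def cold_sheet_offsets(sigma_p, n_sheets):
    """Momentum offsets and mass fractions of n_sheets cold sheets that jointly
    represent a Gaussian momentum distribution with dispersion sigma_p."""
    nodes, weights = hermegauss(n_sheets)   # Gauss-Hermite rule for weight exp(-u^2/2)
    return sigma_p * nodes, weights / weights.sum()

def initial_sheets(q, x_of_q, p_of_q, sigma_p, n_sheets):
    """One (x, p, mass_fraction) triple per sheet. Every sheet is a copy of the
    cold Lagrangian submanifold, shifted by a constant momentum offset."""
    offsets, fractions = cold_sheet_offsets(sigma_p, n_sheets)
    x = x_of_q(q)
    return [(x.copy(), p_of_q(q) + dp, w) for dp, w in zip(offsets, fractions)]

q = np.linspace(0.0, 1.0, 64, endpoint=False)
sheets = initial_sheets(q, lambda s: s, lambda s: 0.05 * np.sin(2 * np.pi * s),
                        sigma_p=0.3, n_sheets=7)
offsets, fractions = cold_sheet_offsets(2.0, 5)
```

An n-point rule reproduces the momentum moments of the Gaussian exactly up to order 2n-1, so even a handful of sheets carries the correct velocity dispersion.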

Comparison of evolved structures from N-body simulations with other discretisation approaches. a Comparison of a 1+1 dimensional phase-space cut from simulations of the three-dimensional collapse of a CDM halo using a sheet tessellation with refinement (top, cf. Sousbie and Colombi 2016), and a reference N-body particle-mesh simulation (bottom). The panels show an infinitely thin slice in the sheet case, and a projection of finite thickness in the N-body case. b Simulations of collapse in 1+1D phase space with a particle-mesh N-body method, the integer lattice method proposed by Mocz and Succi (2017), as well as two finite volume approaches, one where slabs in velocity space are allowed to continuously move against each other (‘moving mesh’). Images reproduced with permission from [a] Colombi (2021), copyright by the author; and [b] from Mocz and Succi (2017)

Another version of phase-space interpolation has been discussed in the context of collisionless dynamics by Colombi and Touma (2008, 2014). This is the so-called ‘waterbag’ method, which allows for general non-cold initial data but is restricted to 1+1 dimensional phase space. The approach exploits the fact that the value of the distribution function is conserved along characteristics. Tracing out isodensity contours of f in phase space at the initial time \(t_0\) yields a sequence of n closed curves. These are the level sets \(\left\{ (x,p)\;|\;f(x,p,t_0)=f_i\right\} \), with \(i=1\dots n\). Each curve can be approximated by a number of vertices and interpolation between them. Moving the vertices along characteristics then guarantees that they remain on the level set at all times, since phase-space density is conserved along characteristics. The number of vertices can be increased adaptively to maintain a high-quality representation of the contours at all times. The acceleration of the vertices can be conveniently expressed in terms of integrals over the contours (cf. Colombi and Touma 2014).
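The contour-tracking idea behind the waterbag method can be sketched as follows, assuming NumPy. To keep the example short, the vertices move in a fixed external potential \(\Phi(x)=x^4/4\), which is a hypothetical choice. The method itself uses self-gravity computed from contour integrals. New vertices are inserted by linear bisection of long edges. The leap-frog map is symplectic and bisection does not change the polygon, so the enclosed phase-space area should stay close to its initial value.

```python
import numpy as np

def accel(x):
    # hypothetical external potential Phi(x) = x**4 / 4, so a = -dPhi/dx
    return -x**3

def polygon_area(x, p):
    # shoelace formula; the last vertex connects back to the first
    return 0.5 * abs(np.dot(x, np.roll(p, -1)) - np.dot(p, np.roll(x, -1)))

def refine_closed(x, p, max_len):
    # bisect edges of the closed contour until none is longer than max_len
    while True:
        xn, pn = np.roll(x, -1), np.roll(p, -1)
        long_edges = np.nonzero(np.hypot(xn - x, pn - p) > max_len)[0]
        if long_edges.size == 0:
            return x, p
        idx = long_edges + 1             # idx == x.size appends after the last vertex
        x = np.insert(x, idx, 0.5 * (x[long_edges] + xn[long_edges]))
        p = np.insert(p, idx, 0.5 * (p[long_edges] + pn[long_edges]))

def evolve_contour(x, p, dt, n_steps, max_len):
    for _ in range(n_steps):
        # vertices follow characteristics, integrated with drift-kick-drift leap-frog
        x = x + 0.5 * dt * p
        p = p + dt * accel(x)
        x = x + 0.5 * dt * p
        x, p = refine_closed(x, p, max_len)
    return x, p

theta = np.linspace(0.0, 2.0 * np.pi, 64, endpoint=False)
x0, p0 = 1.0 + 0.2 * np.cos(theta), 0.2 * np.sin(theta)   # one level set f = f_i
x1, p1 = evolve_contour(x0, p0, dt=0.01, n_steps=300, max_len=0.02)
area0, area1 = polygon_area(x0, p0), polygon_area(x1, p1)
```

In the anharmonic potential, the orbital frequency depends on amplitude, so the contour is sheared and vertices are added over time. The area error that remains comes only from approximating the curved contour by straight edges.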

3.3 Full phase-space techniques

Approaches that directly solve the continuous problem for an incompressible fluid in phase space are almost as old as the N-body approach to gravitational Vlasov–Poisson dynamics (cf. Fujiwara 1981 for 1+1 dimensions). Phase space is discretised into cells of finite size in configuration and momentum space (\(\varDelta x\) and \(\varDelta p\), respectively). Standard finite-volume, finite-difference, or semi-Lagrangian techniques for incompressible flow can then be employed. The main disadvantage of this approach is that memory requirements can be prohibitive. Without adaptive techniques or additional sophistication, the memory needed to evolve a three-dimensional system scales as \(\mathcal {O}(N_x^3\times N_p^3)\) for a linear resolution of \(N_x\) cells per configuration-space dimension and \(N_p\) cells per momentum-space dimension. Only rather recently has this become possible at all, as demonstrated for gravitational interactions by Yoshikawa et al. (2013) and Tanaka et al. (2017). The limited resolution that can be afforded in 3+3 dimensions leads to non-negligible diffusion errors even with high-order methods. This direct approach is therefore arguably best suited for hot, mildly non-linear systems such as neutrinos (Yoshikawa et al. 2020, 2021), since the resolution required for colder systems is prohibitive. To reduce such errors, Colombi and Alard (2017) proposed a semi-Lagrangian ‘metric’ method that uses a generalisation of the ‘GDE’ deformation discussed above to improve the interpolation step and reduce the diffusion error in such schemes.
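The sketch below illustrates both points for a periodic 1+1D grid, assuming NumPy. A helper function evaluates the \(N_x^3\times N_p^3\) memory estimate. A second function performs one semi-Lagrangian advection step in configuration space: it traces each momentum column back along its characteristic and interpolates linearly. Linear interpolation is the simplest choice, and it is also what introduces the numerical diffusion discussed above. All names and the 4-byte cell size are assumptions.

```python
import numpy as np

def phase_space_memory_bytes(n_x, n_p, bytes_per_cell=4):
    # a full 3+3D grid stores n_x**3 * n_p**3 cells
    return n_x**3 * n_p**3 * bytes_per_cell

def advect_x_semi_lagrangian(f, p, dt, dx):
    """Semi-Lagrangian step for df/dt + p df/dx = 0 (unit mass) on a periodic grid.

    f has shape (n_x, n_p); p holds the n_p momenta of the columns. The new value
    at x_i is taken from the departure point x_i - p*dt, linearly interpolated.
    """
    out = np.empty_like(f)
    for j, pj in enumerate(p):
        shift = pj * dt / dx               # displacement in units of cells
        s = int(np.floor(shift))
        frac = shift - s
        # the departure point lies between cells i-s and i-s-1
        out[:, j] = (1.0 - frac) * np.roll(f[:, j], s) + frac * np.roll(f[:, j], s + 1)
    return out

rng = np.random.default_rng(0)
f = rng.random((32, 4))
p = np.array([0.0, 1.0, -1.0, 0.5])
g = advect_x_semi_lagrangian(f, p, dt=1.0, dx=1.0)
```

For example, \(N_x=N_p=256\) in single precision gives \(256^6\times 4 = 2^{50}\) bytes, roughly \(1.1\times 10^{15}\) bytes, for the distribution function alone. A column whose shift is a whole number of cells is transported exactly. A half-cell shift averages neighbouring cells and smears out sharp features.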

Integer lattice techniques offer another way to overcome the diffusion problem (cf. Earn and Tremaine 1992). They exploit the fact that if the time step is matched to the phase-space discretisation, i.e., \(\varDelta t = m (\varDelta x / \varDelta p)\), then the configuration-space advection is exact. A particle in the j-th momentum cell then moves by exactly j cells per step. A reversible Hamiltonian system can therefore be obtained for the lattice discretisation. This approach does not overcome the \(\mathcal {O}(N^6)\) memory scaling of a full phase-space discretisation. Recently, however, Mocz and Succi (2017) proposed important optimisations that might allow \(\approx \mathcal {O}(N^4)\) scaling by overcomputing. To our knowledge, these optimisations have not yet been demonstrated in 3+3 dimensional simulations. Results obtained by Mocz and Succi (2017) comparing the various techniques are shown in Fig. 5.
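On an integer lattice, the drift step becomes a pure index shift. The following is a minimal sketch, assuming NumPy. Column `col` of `f` holds momentum \(p_j = j\,\varDelta p\) with integer j, stored in the hypothetical array `j_of_p`, and \(\varDelta t = m\,\varDelta x/\varDelta p\). The kick step, which in the lattice scheme also has to map momenta onto the lattice, is not shown.

```python
import numpy as np

def lattice_drift(f, j_of_p):
    """Exact drift on an integer phase-space lattice (periodic box).

    f[:, col] is the distribution function at momentum p_j = j * dp, with the
    integer j = j_of_p[col]. With dt = m * dx / dp, every particle in that column
    moves by exactly j cells, so the drift is an integer shift.
    """
    out = np.empty_like(f)
    for col, j in enumerate(j_of_p):
        out[:, col] = np.roll(f[:, col], int(j))
    return out

rng = np.random.default_rng(1)
j_of_p = np.arange(-3, 4)                   # momenta -3 dp ... +3 dp
f0 = rng.random((16, j_of_p.size))
f1 = lattice_drift(f0, j_of_p)              # one drift step
f_back = lattice_drift(f1, -j_of_p)         # time-reversed drift
```

Because the shifts are integers, the drift is exactly reversible. Shifting back by \(-j\) recovers the original array bit for bit, without the diffusion of the interpolating schemes.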

3.4 Schrödinger–Poisson as a discretisation of Vlasov–Poisson

Widrow and Kaiser (1993) proposed an entirely different approach to discretising the Vlasov–Poisson system, which exploits the quantum-classical correspondence. Full information about the system, such as its density and velocity, can be recovered from the (complex) wave function. Phase space is discretised by a quantisation scale \(\hbar =2\varDelta x\varDelta p\), which here is tuneable rather than physical. The Schrödinger–Poisson system converges to Vlasov–Poisson in the limit \(\hbar \rightarrow 0\) (Zhang et al. 2002). It can therefore also serve as a UV-modified analogue model for classical dynamics, provided one restricts attention to (i.e., smooths over) scales larger than \(\hbar \). Importantly, the phase of the wave function is intimately related to the Lagrangian submanifold, since both are described by a single scalar degree of freedom. For this reason, the Schrödinger–Poisson analogue provides a full phase-space theory in which only a three-dimensional field (the wave function) needs to be evolved. Following the first implementation by Widrow and Kaiser (1993), this model has recently found renewed interest (Uhlemann et al. 2014; Schaller et al. 2014; Kopp et al. 2017; Eberhardt et al. 2020; Garny et al. 2020). In the absence of a self-interaction term, the underlying equations are identical to those of ‘fuzzy dark matter’ (FDM) models of ultralight axion-like particles, which we discuss in more detail in Sect. 7.4. In the case of FDM, the quantum scale \(\hbar /m_{\mathrm{FDM}}\) is set by the mass \(m_{\mathrm{FDM}}\) of the microscopic particle. It is therefore (likely) not a numerical discretisation scale dictated by finite memory.
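The sketch below advances a periodic 1D Schrödinger–Poisson system with a kick-drift-kick split-step Fourier scheme, illustrating that only a single field on configuration space is evolved. It assumes NumPy and writes \(\nu=\hbar/m\) for the tuneable scale. The system solved is \(i\,\partial_t\psi = -\tfrac{\nu}{2}\partial_x^2\psi + \Phi\psi/\nu\) with \(\partial_x^2\Phi = 4\pi G(|\psi|^2-\overline{|\psi|^2})\). The split-step scheme, the units (\(4\pi G=1\) by default) and the function name are our assumptions, not a specific published implementation.

```python
import numpy as np

def sp_step(psi, dt, L, nu, four_pi_G=1.0):
    """One kick-drift-kick step of the periodic 1D Schroedinger-Poisson system
        i dpsi/dt = -(nu/2) d2psi/dx2 + Phi psi / nu,
        d2Phi/dx2 = 4 pi G (|psi|^2 - mean(|psi|^2)),
    where nu = hbar/m acts as the phase-space resolution scale."""
    n = psi.size
    k = 2.0 * np.pi * np.fft.fftfreq(n, d=L / n)   # angular wavenumbers

    def potential(field):
        # solve the Poisson equation in Fourier space (zero mode removed)
        rho = np.abs(field) ** 2
        rho_k = np.fft.fft(rho - rho.mean())
        phi_k = np.zeros_like(rho_k)
        nonzero = k != 0
        phi_k[nonzero] = -four_pi_G * rho_k[nonzero] / k[nonzero] ** 2
        return np.fft.ifft(phi_k).real

    psi = psi * np.exp(-0.5j * dt * potential(psi) / nu)                   # half kick
    psi = np.fft.ifft(np.exp(-0.5j * nu * dt * k**2) * np.fft.fft(psi))    # full drift
    psi = psi * np.exp(-0.5j * dt * potential(psi) / nu)                   # half kick
    return psi

L, n = 1.0, 256
x = np.arange(n) * L / n
psi = np.sqrt(1.0 + 0.1 * np.cos(2.0 * np.pi * x / L)).astype(complex)
mass0 = np.sum(np.abs(psi) ** 2)
for _ in range(100):
    psi = sp_step(psi, dt=1e-3, L=L, nu=1e-2)
```

Both sub-steps only multiply by unit-modulus phase factors, in real or in Fourier space. The total mass \(\sum|\psi|^2\) is therefore conserved to round-off. Density and velocity follow from \(|\psi|^2\) and from \(\nu\) times the gradient of the phase of \(\psi\).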

4 Time evolution

As shown above, large-scale dark matter simulations have an underlying Hamiltonian structure, usually with a time-dependent Hamiltonian. Mathematically, such systems have a very rigid structure: the phase-space area spanned by canonically conjugate coordinates and momenta is conserved over time. Consequently, specific integration techniques exist for Hamiltonian dynamical systems that preserve this structure even in a numerical setting. For this reason, this section focuses almost exclusively on integration techniques for Hamiltonian systems as they arise in the context of cosmological simulations.
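The difference between a structure-preserving and a generic integrator can be checked numerically. The sketch below, assuming NumPy with illustrative names, estimates the Jacobian determinant of one step of the map \((x,p)\mapsto(x',p')\) by finite differences. Forward Euler for a harmonic oscillator gives \(\det J = 1+h^2\), so phase-space area grows with every step. The drift-kick-drift leap-frog derived in Sect. 4.1 below is a composition of shears and has \(\det J = 1\) for any potential.

```python
import numpy as np

def euler_step(x, p, h, accel):
    # forward Euler: not symplectic
    return x + h * p, p + h * accel(x)

def leapfrog_step(x, p, h, accel):
    # drift-kick-drift leap-frog for H = p^2/2 + V(x)
    x = x + 0.5 * h * p
    p = p + h * accel(x)
    x = x + 0.5 * h * p
    return x, p

def jacobian_det(step, x, p, h, accel, eps=1e-6):
    """Determinant of the one-step map (x, p) -> (x', p'), by central differences.
    A value of 1 means that the map preserves phase-space area."""
    z = np.array([x, p], dtype=float)
    J = np.empty((2, 2))
    for k in range(2):
        dz = np.zeros(2)
        dz[k] = eps
        plus = np.array(step(*(z + dz), h, accel))
        minus = np.array(step(*(z - dz), h, accel))
        J[:, k] = (plus - minus) / (2.0 * eps)
    return np.linalg.det(J)

def harmonic(x):
    return -x

def pendulum(x):
    return -np.sin(x)

det_euler = jacobian_det(euler_step, 0.3, 0.2, 0.1, harmonic)        # 1 + h^2 = 1.01
det_leapfrog = jacobian_det(leapfrog_step, 0.3, 0.2, 0.1, pendulum)  # 1 up to round-off
```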

4.1 Symplectic integration of cosmological Hamiltonian dynamics

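In the cosmological N-body problem, Hamiltonians arising in the Newtonian limit are typically of the non-autonomous but separable type, i.e., they can be written as

\[\mathscr {H} = \alpha (t) \sum _{i=1}^{N} \frac{\varvec{P}_i^2}{2m_i} + \beta (t)\, V(\varvec{X}_1,\dots ,\varvec{X}_N),\]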

where \(\varvec{X}_i\) and \(\varvec{P}_i\) are canonically conjugate, and \(\alpha (t)\) and \(\beta (t)\) are time-dependent functions that absorb all explicit time dependence (i.e., all factors of ‘a’ are pulled out of the Poisson equation for V). In cosmic time t one has \(\alpha =a(t)^{-2}\) and \(\beta =a(t)^{-1}\). This is not a convenient choice of time coordinate, because the time dependence then appears in both terms, which complicates higher-order symplectic integration schemes, as we discuss below. The best choice is to recall the relativistic origin of this Hamiltonian and to treat time as a coordinate in extended phase space (cf. Lanczos 1986). One then uses a parametric time \(\tilde{t}\) with \(\mathrm{d}\tilde{t} = a^{-2} \mathrm{d}t\), so that \(\alpha =1\) and \(\beta =a(t)\). This coincides with the “super-conformal time” first introduced by Doroshkevich et al. (1973) and extensively discussed by Martel and Shapiro (1998) under the name “super-comoving” coordinates.
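Converting between the expansion factor and super-conformal time requires \(\mathrm{d}\tilde{t} = a^{-2}\,\mathrm{d}t = \mathrm{d}a/(a^3 H(a))\). A minimal sketch follows, assuming NumPy, a flat \(\varLambda \)CDM expansion history without radiation, and units with \(H_0=1\). The trapezoidal rule on a logarithmic grid is simply a convenient choice.

```python
import numpy as np

def hubble(a, H0=1.0, omega_m=0.3):
    # flat LambdaCDM without radiation (an assumption of this sketch)
    return H0 * np.sqrt(omega_m * a**-3 + (1.0 - omega_m))

def superconformal_time(a_start, a_end, n=4097, **cosmo):
    """tilde t(a_end) - tilde t(a_start) = int_{a_start}^{a_end} da / (a^3 H(a))."""
    a = np.geomspace(a_start, a_end, n)
    integrand = 1.0 / (a**3 * hubble(a, **cosmo))
    # trapezoidal rule, written out to avoid depending on np.trapz / np.trapezoid
    return 0.5 * np.sum((integrand[1:] + integrand[:-1]) * np.diff(a))

dtt_lcdm = superconformal_time(0.02, 1.0)               # LambdaCDM with Omega_m = 0.3
dtt_eds = superconformal_time(0.01, 1.0, omega_m=1.0)   # Einstein-de Sitter
```

For an Einstein–de Sitter universe (\(\Omega _m=1\)) the integral is analytic, \(\tilde{t}(a_2)-\tilde{t}(a_1) = 2H_0^{-1}(a_1^{-1/2}-a_2^{-1/2})\), which gives 18 for the example above and serves as a simple check.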

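Grouping coordinates and momenta together as \(\varvec{\xi }_j:=(\varvec{X},\varvec{P})_j\), and recalling that the equations of motion can be written in terms of Poisson brackets as \(\dot{\varvec{P}}_j = \left\{ \varvec{P}_j,\,\mathscr {H} \right\} \) and \(\dot{\varvec{X}}_j = \left\{ \varvec{X}_j,\,\mathscr {H} \right\} \), one can write the canonical equations as a first-order operator equation

\[\dot{\varvec{\xi }}_j = \left\{ \varvec{\xi }_j,\,\mathscr {H} \right\} = \left[ \alpha (t)\,\hat{D} + \beta (t)\,\hat{K}\right] \varvec{\xi }_j =: \hat{\mathscr {H}}\,\varvec{\xi }_j, \qquad \hat{D} := \sum _{i}\frac{\varvec{P}_i}{m_i}\cdot \frac{\partial }{\partial \varvec{X}_i}, \qquad \hat{K} := -\sum _{i}\frac{\partial V}{\partial \varvec{X}_i}\cdot \frac{\partial }{\partial \varvec{P}_i},\]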

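which defines the drift and kick operators \(\hat{D}\) and \(\hat{K}\), respectively. This first-order operator equation has the formal solution

\[\varvec{\xi }_j(t) = \mathcal {T}\exp \left[ \int _{t_0}^{t}\hat{\mathscr {H}}(t')\,\mathrm{d}t'\right] \varvec{\xi }_j(t_0),\]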

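where Dyson’s time-ordering operator \(\mathcal {T}\) is needed because the operator \(\hat{\mathscr {H}}\) is time-dependent. The kick acts only on the momenta and depends only on V (and therefore on the positions). The drift acts only on the positions and depends only on the momenta. One can therefore seek time-explicit operator factorisations that split the coordinate and momentum updates in the form

\[\mathcal {T}\exp \left[ \int _{t}^{t+\epsilon }\hat{\mathscr {H}}(t')\,\mathrm{d}t'\right] = \exp \left[ \epsilon _1\hat{D}\right] \exp \left[ \epsilon _2\hat{K}\right] \exp \left[ \epsilon _3\hat{D}\right] \exp \left[ \epsilon _4\hat{K}\right] \cdots + \mathcal {O}\left( \epsilon ^{m}\right) , \tag{39}\]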

with appropriately chosen coefficients \(\epsilon _j\) that in general depend on (multiple) time integrals of \(\alpha \) and \(\beta \) (Magnus 1954; Oteo and Ros 1991; Blanes et al. 2009). For constant \(\alpha \) and \(\beta \), this reduces to the higher-order generalisations of the Baker–Campbell–Hausdorff (BCH) expansion (Yoshida 1990). The error exponent m on the right-hand side of Eq. (39) is determined by tuning the coefficients \(\epsilon _j\) so that commutators in the BCH expansion cancel. It is important to note that if both \(\alpha \) and \(\beta \) are time-dependent, the generalised BCH expansion contains unequal-time commutators and the error is typically at best \(\mathcal {O}(\epsilon ^3)\). It is therefore much simpler to carry out the integration in extended phase space in super-conformal time. There, no unequal-time commutators appear, and standard higher-order BCH expansion formulae can be used. Some N-body codes (e.g., Ramses, Teyssier 2002) use super-conformal time, but numerous other choices of integration time for second-order accurate integrators can be found in the literature (e.g., Quinn et al. 1997; Springel 2005). To allow for generalisations to higher orders, we discuss here how to construct an extended phase-space integrator. Consider the set of coordinates \((\varvec{X}_j,\,a)\), \(j=1\dots N\), which includes the cosmic expansion factor, with conjugate momenta \((\varvec{P}_j,\,p_a)\), together with the new extended phase-space Hamiltonian in super-conformal time

The second-order accurate “leap-frog” integrator is then obtained for \(\epsilon _1=\epsilon _3=\epsilon /2\) and \(\epsilon _2=\epsilon \) in Eq. (39), with all higher coefficients \(\epsilon _{4\dots n}=0\), after expanding the operator exponentials to first order in their generators, \(\exp [\epsilon \hat{D}]\simeq I + \epsilon \hat{D}\). The final integrator takes the form

or explicitly as it could be implemented in code