Abstract

In the real world, it is not uncommon to face an optimization problem with more than three objectives. Such problems, called many-objective optimization problems (MaOPs), pose great challenges to the area of evolutionary computation. The failure of conventional Pareto-based multi-objective evolutionary algorithms in dealing with MaOPs motivates various new approaches. However, in contrast to the rapid development of algorithm design, performance investigation and comparison of algorithms have received little attention. Several test problem suites which were designed for multi-objective optimization have still been dominantly used in many-objective optimization. In this paper, we carefully select (or modify) 15 test problems with diverse properties to construct a benchmark test suite, aiming to promote the research of evolutionary many-objective optimization (EMaO) via suggesting a set of test problems with a good representation of various real-world scenarios. Also, an open-source software platform with a user-friendly GUI is provided to facilitate the experimental execution and data observation.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

The field of evolutionary multi-objective optimization has developed rapidly over the last two decades, but the design of effective algorithms for addressing problems with more than three objectives (called many-objective optimization problems, MaOPs) remains a great challenge. First, the ineffectiveness of the Pareto dominance relation, which is the most important criterion in multi-objective optimization, results in the underperformance of traditional Pareto-based algorithms. Also, the aggravation of the conflict between convergence and diversity, along with increasing time or space requirement as well as parameter sensitivity, has become key barriers to the design of effective many-objective optimization algorithms. Furthermore, the infeasibility of solutions’ direct observation can lead to serious difficulties in algorithms’ performance investigation and comparison. All of these suggest the pressing need of new methodologies designed for dealing with MaOPs, new performance metrics and benchmark functions tailored for experimental and comparative studies of evolutionary many-objective optimization (EMaO) algorithms.

In recent years, a number of new algorithms have been proposed for dealing with MaOPs [1], including the convergence enhancement based algorithms such as the grid-dominance-based evolutionary algorithm (GrEA) [2], the knee point-driven evolutionary algorithm (KnEA) [3], the two-archive algorithm (Two_Arch2) [4]; the decomposition-based algorithms such as the NSGA-III [5], and the evolutionary algorithms based on both dominance and decomposition (MOEA/DD) [6], and the reference vector-guided evolutionary algorithm (RVEA) [7]; the performance indicator-based algorithms such as the fast hypervolume-based evolutionary algorithm (HypE) [8]. In spite of the various algorithms proposed for dealing with MaOPs, the literature still lacks a benchmark test suite for evolutionary many-objective optimization.

Benchmark functions play an important role in understanding the strengths and weaknesses of evolutionary algorithms. In many-objective optimization, several scalable continuous benchmark function suites, such as DTLZ [9] and WFG [10], have been commonly used. Recently, researchers have also designed/presented some problem suites specially for many-objective optimization [11,12,13,14,15,16]. However, all of these problem suites only represent one or several aspects of real-world scenarios. A set of benchmark functions with diverse properties for a systematic study of EMaO algorithms are not available in the area. On the other hand, existing benchmark functions typically have a “regular” Pareto front, overemphasize one specific property in a problem suite, or have some properties that appear rarely in real-world problems [17]. For example, the Pareto front of most of the DTLZ and WFG functions is similar to a simplex. This may be preferred by decomposition-based algorithms which often use a set of uniformly distributed weight vectors in a simplex to guide the search [7, 18]. This simplex-like shape of Pareto front also causes an unusual property that any subset of all objectives of the problem can reach optimality [17, 19]. This property can be very problematic in the context of objective reduction, since the Pareto front degenerates into only one point when omitting one objective [19]. Also for the DTLZ and WFG functions, there is no function having a convex Pareto front; however, a convex Pareto front may bring more difficulty (than a concave Pareto front) for decomposition-based algorithms in terms of solutions’ uniformity maintenance [20]. In addition, the DTLZ and WFG functions which are used as MaOPs with a degenerate Pareto front (i.e., DTLZ5, DTLZ6 and WFG3) have a nondegenerate part of the Pareto front when the number of objectives is larger than four [10, 21, 22]. This naturally affects the performance investigation of evolutionary algorithms on degenerate MaOPs.

This paper carefully selects/designs 15 test problems to construct a benchmark test suite for evolutionary many-objective optimization. The 15 benchmark problems are with diverse properties which cover a good representation of various real-world scenarios, such as being multimodal, disconnected, degenerate, and/or nonseparable, and having an irregular Pareto front shape, a complex Pareto set or a large number of decision variables (as summarized in Table 1). Our aim is to promote the research of evolutionary many-objective optimization via suggesting a set of benchmark functions with a good representation of various real-world scenarios. Also, an open-source software platform with a user-friendly GUI is provided to facilitate the experimental execution and data observation. In the following, Sect. “Function definitions” details the definitions of the 15 benchmark functions, and Sect. “Experimental setup” presents the experimental setup for benchmark studies, including general settings, performance indicators, and software platform.

Function definitions

-

D: number of decision variables

-

M: number of objectives

-

\(\mathbf {x} = (x_1, x_2, \ldots , x_D)\): decision vector

-

\(f_i\): ith objective function

MaF1 (modified inverted DTLZ1 [23])

with

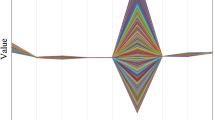

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). As shown in Fig. 1, this test problem has an inverted PF, while the PS is relatively simple. This test problem is used to assess whether EMaO algorithms are capable of dealing with inverted PFs. Parameter settings of this test problem are: \(\mathbf {x} \in [0, 1]^D\) and \(K = 10\) (Fig. 2).

MaF2 (DTLZ2BZ [19])

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). This test problem is modified from DTLZ2 to increase the difficulty of convergence. In original DTLZ2, it is very likely that the convergence can be achieved once the \(g(\mathbf {x}_M) = 0\) is satisfied; by contrast, for this modified version, all the objective have to be optimized simultaneously to reach the true PF. Therefore, this test problem is used to assess the whether and MOEA is able to perform concurrent convergence on different objectives. Parameter settings are: \(\mathbf {x} \in [0, 1]^D\) and \(K = 10\).

MaF3 (convex DTLZ3 [5])

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). As shown in Fig. 3, this test problem has a convex PF, and there a large number of local fronts. This test problem is mainly used to assess whether EMaO algorithms are capable of dealing with convex PFs. Parameter settings of this test problem are: \(\mathbf {x} \in [0, 1]^D\), \(K = 10\) (Fig. 4).

MaF4 (inverted badly scaled DTLZ3)

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). Parameter settings are \(a = 2\). Besides, the fitness landscape of this test problem is highly multimodal, containing a number of \((3^k - 1)\) local Pareto-optimal fronts. This test problem is used to assess whether EMaO algorithms are capable of dealing with badly scaled PFs, especially when the fitness landscape is highly multimodal. Parameter settings of this test problem are: \(\mathbf {x} \in [0, 1]^n\), \(K = 10\) and \(a = 2\).

MaF5 (convex badly scaled DTLZ4)

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). As shown in Fig. 5, this test problem has a badly scaled PF, where each objective function is scaled to a substantially different range. Besides, the PS of this test problem has a highly biased distribution, where the majority of Pareto optimal solutions are crowded in a small subregion. This test problem is used to assess whether EMaO algorithms are capable of dealing with badly scaled PFs/PSs. Parameter settings of this test problem are: \(\mathbf {x} \in [0, 1]^D\), \(\alpha = 100\) and \(a = 2\).

MaF6 (DTLZ5(I,M) [24])

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). As shown in Fig. 6, this test problem has a degenerate PF whose dimensionality is defined using parameter I. In other words, the PF of this test problem is always an I-dimensional manifold regardless of the specific number of decision variables. This test problem is used to assess whether EMaO algorithms are capable of dealing with degenerate PFs. Parameter settings are: \(\mathbf {x} \in [0, 1]^D\), \(I = 2\) and \(K = 10\).

MaF7 (DTLZ7 [9])

with

where the number of decision variable is \(D = M + K - 1\), and K denotes the size of \(\mathbf {x}_M\), namely \(K = |\mathbf {x}_M|\), with \(\mathbf {x}_M = (x_M,\ldots ,x_D)\). As shown in Fig. 7, this test problem has a disconnected PF where the number of disconnected segments is \(2^{M - 1}\). This test problem is used to assess whether EMaO algorithms are capable of dealing with disconnected PFs, especially when the number of disconnected segments is large in high-dimensional objective space. Parameter settings are: \(\mathbf {x} \in [0, 1]^n\) and \(K = 20\).

MaF8 (multi-point distance minimization problem [11, 12])

This function considers a two-dimensional decision space. As its name suggests, for any point \(\mathbf {x} = (x_1,x_2)\) MaF8 calculates the Euclidean distance from \(\mathbf {x}\) to a set of M target points \((A_1,A_2,\ldots ,A_M)\) of a given polygon. The goal of the problem is to optimize these M distance values simultaneously. It can be formulated as

where \(d(\mathbf {x},A_i)\) denotes the Euclidean distance from point \(\mathbf {x}\) to point \(A_i\).

One important characteristic of MaF8 is its Pareto optimal region in the decision space is typically a 2D manifold (regardless of the dimensionality of its objective vectors). This naturally allows a direct observation of the search behavior of EMaO algorithms, e.g., the convergence of their population to the Pareto optimal solutions and the coverage of the population over the optimal region.

In this test suite, the regular polygon is used (to unify with MaF9). The center coordinates of the regular polygon (i.e., Pareto optimal region) are (0, 0) and the radius of the polygon (i.e., the distance of the vertexes to the center) is 1.0. Parameter settings are: \(\mathbf {x}\in [-10{,}000, 10{,}000]^2\). Figure 8 shows the Pareto optimal regions of the three-objective and ten-objective MaF8.

MaF9 (multi-line distance minimization problem [25])

This function considers a two-dimensional decision space. For any point \(\mathbf {x} = (x_1,x_2)\), MaF9 calculates the Euclidean distance from \(\mathbf {x}\) to a set of M target straight lines, each of which passes through an edge of the given regular polygon with M vertexes \((A_1,A_2,\ldots ,A_M)\), where \(M \ge 3\). The goal of MaF9 is to optimize these M distance values simultaneously. It can be formulated as

where \(\overleftrightarrow {A_iA_j}\) is the target line passing through vertexes \(A_i\) and \(A_j\) of the regular polygon, and \(d(\mathbf {x},\overleftrightarrow {A_iA_j})\) denotes the Euclidean distance from point \(\mathbf {x}\) to line \(\overleftrightarrow {A_iA_j}\).

One key characteristic of MaF9 is that the points in the regular polygon (including the boundaries) and their objective images are similar in the sense of Euclidean geometry [25]. In other words, the ratio of the distance between any two points in the polygon to the distance between their corresponding objective vectors is a constant. This allows a straightforward understanding of the distribution of the objective vector set (e.g., its uniformity and coverage over the Pareto front) via observing the solution set in the two-dimensional decision space. In addition, for MaF9 with an even number of objectives (\(M = 2k\) where \(k \ge 2\)), there exist k pairs of parallel target lines. Any point (outside the regular polygon) residing between a pair of parallel target lines is dominated by only a line segment parallel to these two lines. This property can pose a great challenge for EMaO algorithms which use Pareto dominance as the sole selection criterion in terms of convergence, typically leading to their populations trapped between these parallel lines [14].

For MaF9, all points inside the polygon are the Pareto optimal solutions. However, these points may not be the sole Pareto optimal solutions of the problem. If two target lines intersect outside the regular polygon, there exist some areas whose points are nondominated with the interior points of the polygon. Apparently, such areas exist in the problem with five or more objectives in view of the convexity of the considered polygon. However, the geometric similarity holds only for the points inside the regular polygon. The Pareto optimal solutions that are located outside the polygon will affect this similarity property. So, we set some regions infeasible in the search space of the problem. Formally, consider an M-objective MaF9 with a regular polygon of vertexes \((A_1,A_2,\ldots ,A_M)\). For any two target lines \(\overleftrightarrow {A_{i-1}A_i}\) and \(\overleftrightarrow {A_{n}A_{n+1}}\) (without loss of generality, assuming \(i<n\)) that intersect one point (O) outside the considered regular polygon, we can construct a polygon (denoted as \(\Phi _{A_{i-1}A_iA_{n}A_{n+1}}\)) bounded by a set of \(2(n-i)+2\) line segments: \(\overline{A_iA_n'}, \overline{A_n'A_{n-1}'}, \ldots , \overline{A_{i+1}'A_i'}, \overline{A_i'A_n}, \overline{A_nA_{n-1}}, \ldots , \overline{A_{i+1}A_i}\), where points \(A_i', A_{i+1}',\ldots , A_{n-1}', A_n'\) are symmetric points of \(A_i, A_{i+1},\ldots A_{n-1}, A_n\) with respect to central point O. We constrain the search space of the problem outside such polygons (but not including the boundary). Now the points inside the regular polygon are the sole Pareto optimal solutions of the problem. In the implementation of the test problem, for newly produced individuals which are located in the constrained areas of the problem, we simply reproduce them within the given search space until they are feasible.

In this test suite, the center coordinates of the regular polygon (i.e., Pareto optimal region) are (0, 0) and the radius of the polygon (i.e., the distance of the vertexes to the center) is 1.0. Parameter settings are: \(\mathbf {x}\in [-10{,}000, 10{,}000]^2\). Figure 9 shows the Pareto optimal regions of the three-objective and ten-objective MaF9.

MaF10 (WFG1 [10])

with

where the number of decision variable is \(D=K+L\), with K denoting the number of position variables and L denoting the number of distance variables. As shown in Fig. 10, this test problem has a scaled PF containing both convex and concave segments. Besides, there are a lot of transformation functions correlating the decision variables and the objective functions. This test problem is used to assess whether EMaO algorithms are capable of dealing with PFs of complicated mixed geometries. Parameter settings are: \(\mathbf {x}\in \prod _{i=1}^{D}[0,2i]\), \(K=M-1\), and \(L=10\).

MaF11 (WFG2 [10])

with

where the number of decision variable is \(n=K+L\), with K denoting the number of position variables and L denoting the number of distance variables. As shown in Fig. 11, this test problem has a scaled disconnected PF. This test problem is used to assess whether EMaO algorithms are capable of dealing with scaled disconnected PFs. Parameter settings are: \(\mathbf {x}\in \prod _{i=1}^{D}[0,2i]\), \(K=M-1\), and \(L=10\).

MaF12 (WFG9 [10])

with

where the number of decision variable is \(D=K+L\), with K denoting the number of position variable and L denoting the number of distance variable. As shown in Fig. 12, this test problem has a scaled concave PF. Although the PF of this test problem is simple, its decision variables are nonseparably reduced, and its fitness landscape is highly multimodal. This test problem is used to assess whether EMaO algorithms are capable of dealing with scaled concave PFs together with complicated fitness landscapes. Parameter settings are: \(\mathbf {x}\in \prod _{i=1}^{D}[0,2i]\), \(K=M-1\), and \(L=10\).

MaF13 (PF7 [13])

with

where the number of decision variable is \(D=5\). As shown in Fig. 13, this test problem has a concave PF; in fact, the PF of this problem is always a unit sphere regardless of the number of objectives. Although this test problem has a simple PF, its decision variables are nonlinearly linked with the first and second decision variables, thus leading to difficulty in convergence. This test problem is used to assess whether EMaO algorithms are capable of dealing with degenerate PFs and complicated variable linkages. Parameter setting is: \(\mathbf {x}\in [0,1]^2\times [-2,2]^{D-2}\).

MaF14 (LSMOP3 [16])

with

where \(N_k\) denotes the number of variable subcomponent in each variable group \(\mathbf {x}^s_i\) with \(i = 1,\ldots ,M\), and \(u_i\) and \(l_i\) are the upper and lower boundaries of the ith decision variable in \(\mathbf {x}^s\). Although this test problem has a simple linear PF, its fitness landscape is complicated. First, the decision variables are non-uniformly correlated with different objectives; second, the decision variables have mixed separability, i.e., some of them are separable while others are not. This test problem is mainly used to assess whether EMaO algorithms are capable of dealing with complicated fitness landscape with mixed variable separability, especially in large-scale cases. Parameter settings are: \(N_k = 2\) and \(D = 20\times M\).

MaF15 (inverted LSMOP8 [16])

with

where \(N_k\) denotes the number of variable subcomponent in each variable group \(\mathbf {x}^s_i\) with \(i = 1,\ldots ,M\), and \(u_i\) and \(l_i\) are the upper and lower boundaries of the ith decision variable in \(\mathbf {x}^s\). Although this test problem has a simple convex PF, its fitness landscape is complicated. First, the decision variables are non-uniformly correlated with different objectives; second, the decision variables have mixed separability, i.e., some of them are separable while others are not. Different from MaF14, this test problem has non-linear (instead of linear) variable linkages on the PS, which further increases the difficulty. This test problem is mainly used to assess whether EMaO algorithms are capable of dealing with complicated fitness landscape with mixed variable separability, especially in large-scale cases. Parameter settings are: \(N_k = 2\) and \(D = 20\times M\) in Figs. 14 and 15.

Experimental setup

To conduct benchmark experiments using the proposed test suite, users may follow the experimental setup as given below.

General settings

-

Number of objectives (M) 5, 10, 15

-

Maximum population size Footnote 1 \(25\times M\)

-

Maximum number of fitness evaluations (FEs) Footnote 2 \(\max \{100000, 10000\times D\}\)

-

Number of independent runs 31

Performance metrics

-

Inverted generational distance (IGD) Let \(P^*\) be a set of uniformly distributed points on the Pareto front. Let P be an approximation to the Pareto front. The inverted generational distance between \(P^*\) and P can be defined as:

$$\begin{aligned} \mathrm{IGD}(P^*,P) = \frac{\sum _{\mathbf {v} \in P^*}d(v,P)}{|P^*|}, \end{aligned}$$(51)where \(d(\mathbf {v},P)\) is the minimum Euclidean distance from point \(\mathbf {v}\) to set P. The IGD metric is able to measure both diversity and convergence of P if \(|P^*|\) is large enough, and a smaller IGD value indicates a better performance. In this test suite, we suggest a number of 10,000 uniformly distributed reference points sampled on the true Pareto frontFootnote 3 for each test instance.

-

Hypervolume (HV) Let \(\mathbf {y}^* = (y_1^*, \ldots , y_m^*)\) be a reference point in the objective space that is dominated by all Pareto optimal solutions. Let P be the approximation to the Pareto front. The HV value of P (with regard to \(\mathbf {y}^*\)) is the volume of the region which is dominated by P and dominates \(\mathbf {y}^*\). In this test suite, the objective vectors in P are normalized using \(f^j_i = \frac{f^j_i}{1.1 \times y_i^{\mathrm{nadir}}}\), where \(f^j_i\) is the ith dimension of jth objective vector, and \(y_i^{\mathrm{nadir}}\) is the ith dimension of nadir point of the true Pareto front.Footnote 4 Then we use y* = (1,...,1) as the reference point for the normalized objective vectors in the HV calculation.

Software platform

All the benchmark functions have been implemented in MATLAB code and embedded in a recently developed software platform—PlatEMO.Footnote 5 PlatEMO is an open source MATLAB-based platform for evolutionary multi- and many-objective optimization, which currently includes more than 50 representative algorithms and more than 100 benchmark functions, along with a variety of widely used performance indicators. Moreover, PlatEMO provides a user-friendly graphical user interface (GUI), which enables users to easily perform experimental settings and algorithmic configurations, and obtain statistical experimental results by one-click operation.

In particular, as shown in Fig. 16, we have tailored a new GUI in PlatEMO for this test suite, such that participants are able to directly obtain tables and figures comprising the statistical experimental results for the test suite. To conduct the experiments, the only thing to be done by participants is to write the candidate algorithms in MATLAB and embed them into PlatEMO. The detailed introduction to PlatEMO regarding how to embed new algorithms can be referred to the users manual attached in the source code of PlatEMO [26]. Once a new algorithm is embedded in PlatEMO, the user will be able to select the new algorithm and execute it on the GUI shown in Fig. 16. Then the statistical results will be displayed in the figures and tables on the GUI, and the corresponding experimental result (i.e., final population and its performance indicator values) of each run will be saved to a .mat file.

Notes

The size of final population/archive must be smaller the given maximum population size, otherwise, a compulsory truncation will be operated in final statistics for fair comparisons.

Regardless of the number of objectives, every evaluation of the whole objective set is counted as one FE.

The specific number of reference points for IGD calculations can vary a bit due to the different geometries of the Pareto fronts. All reference point sets can be automatically generated using the software platform introduced in Sect. “Software platform”.

The nadir points can be automatically generated using the software platform introduced in Sect. “Software platform”.

PlatEMO can be downloaded at http://bimk.ahu.edu.cn/index.php?s=/Index/Software/index.html.

References

Li B, Li J, Tang K, Yao X (2015) Many-objective evolutionary algorithms: a survey. ACM Comput Surv 48(1):13

Yang S, Li M, Liu X, Zheng J (2013) A grid-based evolutionary algorithm for many-objective optimization. IEEE Trans Evol Comput 17(5):721–736

Zhang X, Tian Y, Jin Y (2015) A knee point driven evolutionary algorithm for many-objective optimization. IEEE Trans Evol Comput 19(6):761–776

Wang H, Jiao L, Yao X (2015) Two_arch2: an improved two-archive algorithm for many-objective optimization. IEEE Trans Evol Comput 19(4):524–541

Deb K, Jain H (2014) An evolutionary many-objective optimization algorithm using reference-point-based nondominated sorting approach, part I: solving problems with box constraints. IEEE Trans Evol Comput 18(4):577–601

Li K, Zhang Q, Kwong S (2015) An evolutionary many-objective optimization algorithm based on dominance and decomposition. IEEE Trans Evol Comput 19(5):694–716

Cheng R, Jin Y, Olhofer M, Sendhoff B (2016) A reference vector guided evolutionary algorithm for many-objective optimization. IEEE Trans Evol Comput 20(5):773–791

Bader J, Zitzler E (2011) HypE: an algorithm for fast hypervolume-based many-objective optimization. Evol Comput 19(1):45–76

Deb K, Thiele L, Laumanns M, Zitzler E (2005) Scalable test problems for evolutionary multiobjective optimization. In: Abraham A, Jain L, Goldberg R (eds) Evolutionary multiobjective optimization. Theoretical advances and applications, Springer, Berlin, pp 105–145

Huband S, Hingston P, Barone L, While L (2006) A review of multiobjective test problems and a scalable test problem toolkit. IEEE Trans Evol Comput 10(5):477–506

Köppen M, Yoshida K (2007) Substitute distance assignments in NSGA-II for handling many-objective optimization problems. In: Evolutionary multi-criterion optimization (EMO), pp 727–741

Ishibuchi H, Hitotsuyanagi Y, Tsukamoto N, Nojima Y (2010) Many-objective test problems to visually examine the behavior of multiobjective evolution in a decision space. In: International Conference on Parallel Problem Solving from Nature (PPSN), pp 91–100

Saxena D, Zhang Q, Duro J, Tiwari A (2011) Framework for many-objectivet test problems with both simple and complicated Pareto-set shapes. In: Evolutionary multi-criterion optimization (EMO), pp 197–211

Li M, Yang S, Liu X (2014) A test problem for visual investigation of high-dimensional multi-objective search. In: IEEE Congress on Evolutionary Computation (CEC), pp 2140–2147

Cheung Y-M, Gu F, Liu H-L (2016) Objective extraction for many-objective optimization problems: Algorithm and test problems. IEEE Trans Evol Comput 20(5):755–772

Cheng R, Jin Y, Olhofer M, Sendhoff B (2016) Test problems for large-scale multiobjective and many-objective optimization. IEEE Trans Cybern (in press)

Masuda H, Nojima Y, Ishibuchi H (2016) Common properties of scalable multiobjective problems and a new framework of test problems. In: IEEE Congress on Evolutionary Computation (CEC). IEEE, pp 3011–3018

Cheng R, Jin Y, Narukawa K (2015) Adaptive reference vector generation for inverse model based evolutionary multiobjective optimization with degenerate and disconnected pareto fronts. In: Proceedings of the International Conference on Evolutionary Multi-Criterion Optimization. Springer, New York, pp 127–140

Brockhoff D, Zitzler E (2009) Objective reduction in evolutionary multiobjective optimization: theory and applications. Evol Comput 17(2):135–166

Li M, Yang S, Liu X (2016) Pareto or non-Pareto: Bi-criterion evolution in multi-objective optimization. IEEE Trans Evol Comput 20(5):645–665

Saxena D, Duro J, Tiwari A, Deb K, Zhang Q (2013) Objective reduction in many-objective optimization: linear and nonlinear algorithms. IEEE Trans Evol Comput 17(1):77–99

Ishibuchi H, Masuda H, Nojima Y (2016) Pareto fronts of many-objective degenerate test problems. IEEE Trans Evol Comput 20(5):807–813

Jain H, Deb K (2014) An evolutionary many-objective optimization algorithm using reference-point based nondominated sorting approach, part II: handling constraints and extending to an adaptive approach. IEEE Trans Evol Comput 18(4):602–622

Deb K, Saxena DK (2006) Searching for Pareto-optimal solutions through dimensionality reduction for certain large-dimensional multi-objective optimization problems. In: IEEE Congress on Evolutionary Computation (CEC), pp 3353–3360

Li M, Grosan C, Yang S, Liu X, Yao X (2017) “Multi-line distance minimization: A visualized many-objective test problem suite. IEEE Trans Evol Comput (in press)

Tian Y, Cheng R, Zhang X, Jin Y (2016) Platemo: a matlab platform for evolutionary multi-objective optimization. IEEE Comput Intell Mag (under review)

Acknowledgements

This work was supported in part by the National Natural Science Foundation of China under Grant 61590922, the Engineering and Physical Sciences Research Council of U.K. under Grant EP/M017869/1, Grant EP/K001310/1 and Grant EP/K001523/1.

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Cheng, R., Li, M., Tian, Y. et al. A benchmark test suite for evolutionary many-objective optimization. Complex Intell. Syst. 3, 67–81 (2017). https://doi.org/10.1007/s40747-017-0039-7

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s40747-017-0039-7