Abstract

Stimuli with no specific biological relevance for the organism can acquire multiple functions through conditioning procedures. Conditioning procedures involving compound stimuli sometimes result in blocking, a phenomenon related to overshadowing. Blocking can affect the establishment of conditioned stimuli in classical conditioning and of discriminative stimuli in operant conditioning. The aim of the current experiment was to investigate, in rats, whether a standard blocking procedure might block the establishment of a stimulus as a conditioned reinforcer in addition to blocking discriminative control by that stimulus. We used successive discrimination training to establish a tone or a light as a discriminative stimulus for chain pulling, upon which an unconditioned reinforcer (water) was contingent. Next, we trained a tone–light compound stimulus the same way. Finally, we conducted two tests, one for stimulus control and one for a conditioned reinforcing effect on a new response. Little or no discriminative control was evident by the second stimulus, which was added to the previously established discriminative stimulus later during training. The subsequent test showed blocking of conditioned reinforcement in five of the seven rats. Procedures that generate blocking can have a practical impact on attempts to establish discriminative stimuli and/or conditioned reinforcers in applied settings and need careful attention.

If a compound stimulus comprising two elements, A and B, is repeatedly paired with an unconditioned stimulus, both elements will usually demonstrate stimulus control when tested separately. However, if only one of the elements (A) is paired with the unconditioned stimulus before the compound (A and B) is paired with it, stimulus control by B may be weak or absent during a test for stimulus control. This phenomenon is referred to as blocking (Williams, 1975).

Blocking was first described in studies of classical (or Pavlovian) conditioning (Kamin, 1968). For example, if a dog is repeatedly exposed to a tone (the first conditioned stimulus, CS1), together with food (the unconditioned stimulus, US), the dog salivates when the tone is presented (conditioned response, CR). After several consecutive conditioning trials, this time with the tone (CS1) and a light (CS2) together with the US, the dog does not salivate to the light (CS2) when tested separately later. Stimulus control by CS2 has then been blocked by the earlier pairing of CS1 with the US.

Varying degrees of blocking have been reported across blocking experiments. Some experiments have demonstrated complete blocking (Kamin, 1968, 1969), whereas others have produced more moderate effects (e.g., Bergen, 2002; Chase, 1966; Lyczak & Tighe, 1975; Mackintosh, 1975a) or no effects (e.g., LoLordo, Jacobs, & Foree, 1982; Maes et al., 2016). Blocking in classical conditioning has been widely demonstrated in both nonhumans (Kamin, 1969; Kim, Krupa, & Thompson, 1998; Palmer, 1988; Rescorla & Wagner, 1972) and humans (Arcediano, Matute, & Miller, 1997; Delgado, 2016).

Blocking has also been demonstrated in operant discrimination in both nonhumans (e.g., Feldman, 1971; Mackintosh, 1965; Williams, 1996, 1999) and humans (e.g., Bergen, 2002). For example, if a light is first established as a discriminative stimulus for key pecking in pigeons, and a tone–light compound is presented in the next stage, the tone alone might acquire little or no control of key pecking in later trials. Vom Saal and Jenkins (1970) conducted two experiments to isolate prior discrimination learning on cue A as the factor that blocks the subsequent establishment of stimulus control by cue B. First, one group of pigeons received single discrimination training on A1 (red) versus A2 (green), where only responding in the presence of A1 led to reinforcement. In the next phase, a second, redundant cue was added, so that A1 (red) plus B1 (tone) was presented versus A2 (green) plus B2 (noise), and training continued. Another group of pigeons received no training in the first phase but was given the same two-cue training (compound A1B1 versus compound A2B2) in the second phase as the first group. A third group received only reinforced A1 trials in the first phase before the same two-cue training in the second phase. A fourth group received intermittent reinforcement on A1 in the first phase prior to the two-cue training in the second phase. A test for stimulus control followed for all groups after the second phase was completed. The test evaluated the control exerted by each cue and showed that the added cue (B1) had less influence on the performance of the group that received discrimination training in the first phase than on the performance of the groups with no such prior discrimination training.

Through different conditioning procedures, a neutral stimulus can acquire eliciting, discriminative, and reinforcing functions. If, in the presence of a light, a water-deprived rat presses a lever and is immediately given water, the light may acquire all three functions. The light acquires an eliciting function when it leads to behavior typically elicited by water (e.g., sniffing, saliva production). The light acquires a discriminative function when it reliably occasions lever presses. Further, the light serves a reinforcing function if it increases the occurrence of a specific type of response, for example, pulling a chain, upon which it is contingently presented.

Although many experiments on blocking of both the eliciting and discriminative functions of stimuli in many different species have been published (e.g., Arcediano et al., 1997; Bergen, 2002; Blaisdell, Gunther, & Miller, 1999; Fowler, Goodman, & DeVito, 1977; Mackintosh, 1975b; Seraganian & vom Saal, 1969; Taylor, Joseph, Balsam, & Bitterman, 2008; vom Saal & Jenkins, 1970; Williams, 1975), demonstrations of blocking of conditioned reinforcing effects of stimuli are rare. Williams (1975) conducted two experiments to examine the blocking of reinforcement in a delayed reinforcement contingency in pigeons. However, the blocking in this experiment concerned the effect of an unconditioned reinforcer on a new response, and not the blocking of the establishment of a conditioned reinforcer. Palmer (1988) examined whether a blocking paradigm in a classical conditioning procedure with pigeons would produce blocking of conditioned reinforcement functions of a stimulus. Classical conditioning was used in the blocking procedure and then a test of conditioned reinforcing functions by contingent presentation of the putative conditioned reinforcers upon established responses was conducted. After pretraining key pecking in 24 pigeons, the experimental pigeons received repeated pairings of a tone or a light with food. Next, they received the same number of pairings of a compound stimulus (tone and light) and food. Eight control pigeons received independent light–food and tone–food pairings in this second phase, after exposure to the same procedure as the experimental pigeons in the first phase. Another eight control pigeons had no training in the first phase, and then the same training as the experimental pigeons in the second phase. Finally, all pigeons received a test where pecks on one key resulted in presentations of the light, and pecks on another key resulted in the presentation of the tone. 
The relative rate of key pecking measured the effectiveness of the two stimuli as conditioned reinforcers. The results showed that even though both stimuli had been repeatedly paired with an unconditioned reinforcer, both the discriminative and reinforcing function of the added stimuli in the compound were blocked.

To our knowledge, direct demonstrations of blocking of the conditioned reinforcing function of a stimulus using operant conditioning are sparse or nonexistent. However, it has been suggested that the effect of a stimulus as a conditioned reinforcer is correlated with its eliciting function (Donahoe, 2014; Donahoe, Crowley, Millard, & Stickney, 1982), and if the eliciting function can be blocked, the reinforcing function may be blocked as well (Palmer, 1988). Likewise, experiments have suggested that there is a correlation between the effect of a stimulus as a conditioned reinforcer and its function as a discriminative stimulus in an operant contingency (Holth, Vandbakk, Finstad, Grønnerud, & Sørensen, 2009; Lepper, Petursdottir, & Esch, 2013; Lovaas et al., 1966; Skinner, 1938/1991; Taylor-Santa, Sidener, Carr, & Reeve, 2014; Vandbakk, Olaff, & Holth, 2019).

The purpose of the present experiment was to investigate blocking of stimulus control in rats using an operant discrimination procedure (e.g., Seraganian & vom Saal, 1969; vom Saal & Jenkins, 1970). If blocking of stimulus control was demonstrated, the purpose was to investigate directly whether the blocking effect extends to the effect of those stimuli as conditioned reinforcers (Palmer, 1988). First, different visual or auditory stimuli were established as discriminative stimuli for pulling a chain, followed by unconditioned reinforcement. Second, a visual and an auditory stimulus were combined in a compound stimulus, which was trained the same way. Then, a test of stimulus control was conducted twice, before a final test of the conditioned reinforcing effect on a new response.

Method

Subjects

Seven Wistar albino male rats (Han Tac) were obtained from a commercial supplier (Charles River Breeding Centre, Germany). The rats were 42 days old with body weights ranging from 150 g to 162 g at the start of the experiment. The rats were housed separately in Tecniplast cages (1290D Eurostandard Type III from Scanbur) and placed in a ventilated holding rack (Camfil) with temperature kept stable at 20 ± 2°C and humidity at 55 ± 10%. The animal quarter was lit between 06:00 am and 06:00 pm. The cages were 42.5 × 26.6 × 15.5 (height) cm, with a floor area of 820 cm², and with a raised standard wire lid (Series-123, from Scanbur) that gave a total height of 21.5 cm. Each cage had aspen bedding and was enriched with a tinted polycarbonate tunnel (15.5 × 7.5 cm). The rats had free access to food (801002 RM1 (E) from Special Diet Services, UK).

After the initial sessions of habituation, and before the magazine training, the rats were deprived of water for 22½ hr and they had free access to water for 1 hr after each experimental session. Daily sessions were conducted from 09:00 am for 68 consecutive days and the session duration was 45–60 min. The study was preapproved by the National Animal Research Authority (NARA) and was carried out according to the Norwegian laws and regulations controlling experiments/procedures using live animals.

Apparatus

The experiment was conducted in identical standard Campden (8003) ventilated operant chambers, placed in sound-attenuating Campden (4120-2) isolation chambers, and each rat used the same chamber throughout all sessions. A 15 W domestic bulb located in the center of the ceiling illuminated the cage. The rats’ working space was 24.2 × 20.0 × 21.0 (height) cm. All chambers had clear Plexiglas front walls and ceilings; the side and back walls were aluminum. The floors were constructed of stainless-steel grids (0.5 cm in diameter). Each chamber was equipped with a removable chrome-plated pull chain (12 cm long and 0.3 cm in diameter) hanging down from the middle of the ceiling and two standard Campden (4460-M) retractable levers (placed 10.9 cm apart on one wall, and 5 cm above the grid floor). Both the chain and the levers required a force of at least 0.1 N to operate. The reinforcer was 0.04 ml of tap water, dispensed by a peristaltic pump into a tray located halfway between the levers. The tray opening (4.5 × 4.0 (height) cm) was covered by a hinged plastic flap door. Access to the tray required opening the flap door, which took a force of less than 0.1 N. An LED light in the tray turned on (lit for 2 s) simultaneously with the water delivery. The water pump operated for 1 s while producing a motor-humming sound. Nonconsumed water would remain in the tray.

A speaker mounted in the ceiling and connected to the computer could deliver two different prerecorded sounds as the auditory stimuli. A tone (1000 cps and 82 dB (re .0002 dyne/cm²)) served as stimulus A+ (S1+) and a noise (76-dB white noise) was stimulus A- (S1-). Two lights (15 W) positioned 2.6 cm above each of the levers served as the visual stimuli. The light above the left lever served as stimulus V+ (S1+) and the light above the right lever served as stimulus V- (S1-). The condition prevailing during intertrial intervals (ITI) when all of these visual and auditory stimuli were absent was referred to as “no stimulus presentation.”

Each chamber was connected by an interface (ADU208 USB Relay I/O) to a laptop (HP, Compaq nw 8440, with Microsoft Windows XP Professional 2002, Service pack 3, using custom-made software written in Microsoft Visual Basic 1.0 (rev. 141) 2010 Express) where the experimental conditions were administered. The presentation and removal of stimuli, flap door openings, and lever presses/chain pulls were recorded automatically and saved in separate files on the computer.

Procedure

The experiment followed a mixed design. To counterbalance the modality of the stimuli, three rats (rats 1, 2, and 3) were first exposed to auditory stimuli A+ (tone) and A- (noise), and four rats (rats 4, 5, 6, and 7) were first exposed to visual stimuli V+ (left-positioned light) and V- (right-positioned light), in a single-stimulus successive discrimination training (Phase 1). Only responses in the presence of A+ and V+ were reinforced. In a consecutive compound-stimulus successive discrimination training (Phase 2), the visual S+ and the auditory S- were added to the auditory S+ and the visual S- and formed two compound stimuli (AV+ and AV-) for all seven rats (see Table 1). Then two tests of stimulus control (Test 1a and 1b) followed, in which stimulus control was evaluated by the difference in response rate in the presence of the different stimulus presentations. Finally, a test for a conditioned reinforcer effect (Test 2) was conducted. Two operanda represented a choice between producing stimulus A+ or stimulus V+, counterbalanced across the rats (see Table 2).

Pretraining

All seven rats received two sessions of habituation to the experimental chamber, followed by 20 sessions of magazine training where water drops were delivered according to a variable time 20–variable time 40 (VT 20-VT 40) schedule. Next, prior to discrimination training, chain pulling was shaped and continuously reinforced.

Phase 1: Single-stimulus successive discrimination training

The duration of all stimulus presentations was fixed at 3 s. Chain pulling was reinforced only on the S+ trials, and chain pulling also terminated the S+ (visual or auditory, depending on the programmed conditions). Chain pulls during S- did not affect the S- duration, and no water was delivered. During Phase 1 and 2 sessions, S+ and S- were presented in alternation with a mean intertrial interval (ITI) of 30 s with a range from 10 to 50 s. A 10-s reset delay (RD) on chain pulling was programmed during the ITI to make it more likely that chain pulling eventually would come under control of the S+. The purpose of the RD was to prevent adventitious reinforcement of chain pulling (Iversen, 1992). Three rats received 30 presentations of stimulus A+ and 30 presentations of stimulus A- (both auditory stimuli) intermixed in each session for 18 consecutive days. Chain pulling in the presence of A+ led to immediate delivery of water. No programmed reinforcers were delivered in the presence of A-. The other four rats received the same training but with stimuli V+ and V- (both visual stimuli), where only chain pulls in the presence of V+ led to delivery of water. Chain pulls in the presence of V- were not reinforced.

During ITIs the house light remained on, but none of the specific auditory or visual stimuli (i.e., A+, A-, V+, or V-) used during discrimination training were present. Responses occurring in the ITIs or in the presence of the single S- had no programmed effect (except for producing a reset delay of 10 s, dependent on the remaining time to the next scheduled trial) and were never reinforced. See Table 2 for an overview of the reinforcement schedules and other arrangements in the different training phases.
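The reset-delay contingency can be illustrated with a short sketch. The text states only that a chain pull during the ITI produced a 10-s reset delay, dependent on the time remaining to the next scheduled trial; the rule used here (the next stimulus onset is postponed so that at least 10 s pass after the most recent ITI response) is an assumed reading, and the function name `next_trial_onset` is hypothetical rather than taken from the authors' software.

```python
def next_trial_onset(scheduled_onset, iti_response_times, reset_delay=10.0):
    """Return the time (s) at which the next stimulus may come on.

    Assumed rule (not confirmed by the source): a chain pull during the ITI
    postpones stimulus onset until at least `reset_delay` seconds have
    passed since that pull. A pull that leaves more than `reset_delay`
    seconds before the scheduled onset changes nothing.
    """
    onset = scheduled_onset
    for t in sorted(iti_response_times):
        if t >= onset:
            # The stimulus is already on, so this is no longer an ITI response.
            break
        onset = max(onset, t + reset_delay)
    return onset
```

Under this reading, a pull 5 s before a scheduled onset pushes the onset back by 5 s, whereas a pull 25 s before onset has no effect.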

From the sixth session, and for the rest of the phase, the reinforcement schedule changed from continuous reinforcement (FR 1) to a variable ratio 2 (VR 2) schedule. The rationale for thinning the schedule was to make responding more resistant to extinction during later tests. The discrimination index was calculated as the number of chain pulls in the presence of S+ divided by the total number of chain pulls in the presence of S+ and S- [S+ / (S+ plus S-)]. The criterion for stimulus control was a discrimination index of at least 0.75 over at least four successive sessions.
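As a minimal sketch of this criterion, the index and the four-session run could be computed as follows. The function names and the handling of sessions with no responses are assumptions made for illustration; the source specifies only the formula S+ / (S+ plus S-), the 0.75 threshold, and the requirement of four successive sessions.

```python
def discrimination_index(s_plus_responses, s_minus_responses):
    """Proportion of stimulus-period responses that occurred during S+."""
    total = s_plus_responses + s_minus_responses
    if total == 0:
        return None  # assumption: the index is undefined without responses
    return s_plus_responses / total


def meets_criterion(session_indices, threshold=0.75, run_length=4):
    """True if `run_length` successive sessions reach the threshold."""
    run = 0
    for index in session_indices:
        if index is not None and index >= threshold:
            run += 1
            if run >= run_length:
                return True
        else:
            run = 0  # a session below threshold breaks the run
    return False
```

For example, 30 responses during S+ and 10 during S- give an index of exactly 0.75, which sits on the criterion boundary.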

Phase 2: Compound-stimulus successive discrimination training

The training in this phase had a similar structure to that in Phase 1. All rats received daily 60-trial sessions for 18 consecutive days (30 S+ and 30 S- trials in a mixed sequence, as in Phase 1). Phase 2 stimuli for the rats were the same as in Phase 1, but with an added stimulus forming a compound stimulus. All compound S+ trials now consisted of A+/V+ (tone plus left-positioned light), and all compound S- trials consisted of A-/V- (noise plus right-positioned light). The ITI and RD were the same as in Phase 1. Chain pulling led to the delivery of water only in the presence of AV+, and responses occurring between trials or in the presence of AV- were never reinforced. The reinforcement schedule in AV+ was thinned to a VR 6 from sessions 50–55 and remained at VR 6, with a range from 4 to 8, for the rest of Phase 2. The discrimination index criterion for stimulus control was the same as in Phase 1.
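A variable-ratio schedule such as VR 6 with a range of 4 to 8 can be sketched as a list of response requirements, one per reinforcer. The source reports only the mean and the range, so the uniform draw over whole numbers used below is an assumption, and `vr_requirements` is a hypothetical helper rather than part of the authors' software.

```python
import random


def vr_requirements(mean_ratio, spread, n_reinforcers, seed=None):
    """Draw the number of responses required for each successive reinforcer.

    Assumption: requirements are whole numbers drawn uniformly from
    mean_ratio - spread to mean_ratio + spread, so their expected value
    equals the nominal VR value.
    """
    rng = random.Random(seed)
    low, high = mean_ratio - spread, mean_ratio + spread
    return [rng.randint(low, high) for _ in range(n_reinforcers)]


# VR 6 with a range of 4 to 8, as described for the later Phase 2 sessions.
phase2_requirements = vr_requirements(6, 2, 30, seed=1)
```

The same helper covers the VR 4 schedule with a range of 2 to 6 used in the reestablishment sessions by calling `vr_requirements(4, 2, n)`.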

Test 1a: Test of stimulus control

Then, in one session, all rats received the test for stimulus control in extinction. Trials were programmed as they had been previously, except that responding no longer interrupted the ITI or stimulus duration. The test session lasted for 60 min and consisted of 120 nonreinforced trials with 20 presentations of each of the stimuli in a planned mixed sequence, starting with: tone alone (A+), noise alone (A-), left-positioned light alone (V+), right-positioned light alone (V-), tone plus left-positioned light compound (AV+), and noise plus right-positioned light compound (AV-). The next sequence would start with A-, V-, AV+, AV-, A+, V+, and then with V+, AV+, AV-, A+, A-, V-, and so on until 20 presentations of each stimulus were completed (see Table 3). Chain pulls in the presence of any of the different stimuli and compounds were recorded, and the rate of responding in the presence of A+ relative to V+ served as the test for blocking of stimulus control. Any responses during the ITI (the “no-stimulus” condition) were also recorded. The ITI was 20 s during the test.
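The structure of the test sequence (20 blocks of six trials, each block containing every stimulus once) can be sketched as below. Only the first three block orders are given in the text; how the remaining orders were chosen is not stated (Table 3 is cited for the full sequence), so the random shuffle used for the later blocks is purely an assumption for illustration.

```python
import random

STIMULI = ["A+", "A-", "V+", "V-", "AV+", "AV-"]

# The three block orders spelled out in the text.
KNOWN_BLOCKS = [
    ["A+", "A-", "V+", "V-", "AV+", "AV-"],
    ["A-", "V-", "AV+", "AV-", "A+", "V+"],
    ["V+", "AV+", "AV-", "A+", "A-", "V-"],
]


def build_test_1a_sequence(presentations_per_stimulus=20, seed=None):
    """Return a flat trial list with each stimulus presented equally often."""
    rng = random.Random(seed)
    blocks = [list(b) for b in KNOWN_BLOCKS[:presentations_per_stimulus]]
    while len(blocks) < presentations_per_stimulus:
        block = list(STIMULI)
        rng.shuffle(block)  # assumed: order of the later blocks is not given
        blocks.append(block)
    return [stimulus for block in blocks for stimulus in block]
```

Building the sequence block by block guarantees that every stimulus appears exactly 20 times among the 120 trials, whatever order is used within the later blocks.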

Reestablishment of stimulus control (I)

To reestablish stimulus control, one session similar to the last one in Phase 2 was conducted prior to the second stimulus control test. Responding to the compound stimulus was reinforced on a VR 4, ranging from 2 to 6.

Test 1b: Retest of stimulus control

In this session, stimulus control was tested while responding in the presence of the compound S+ (AV+) continued to be reinforced. Reinforcers were delivered upon chain pulls during the compound AV+ to maintain overall responding during the test. The test was otherwise executed as Test 1a except that chain pulling now terminated the compound S+ presentation (as water was delivered). As in Test 1a, the response rate in the presence of A+ relative to V+ served as the test for blocking of stimulus control.

Reestablishment of stimulus control (II)

In order to reestablish stimulus control, three sessions identical to the last reestablishment session were conducted prior to the conditioned reinforcer test. Responding to the compound stimulus was reinforced on a VR 4, ranging from 2 to 6.

Test 2: Conditioned reinforcer test

Before the test session, the chain was removed, and the levers were concurrently available. Presses on the left lever led to a presentation of A+ (tone), and presses on the right lever led to a presentation of V+ (left-positioned light), according to a VR 4 (as in the reestablishing session); thereby the rat invoked the interstimulus interval. No water was delivered during the test session. Lever presses on the left and the right lever during this condition were interpreted as in favor of a conditioned reinforcer effect of the A+ or V+, respectively. The session started with a forced-choice procedure, in which only one lever was presented at a time, so that the rats were exposed to the consequences of pressing each of the levers once. Following the initial forced choice, quasi-randomly starting with either lever, both levers were accessible throughout the rest of the session. The test session lasted for 60 min.

Results

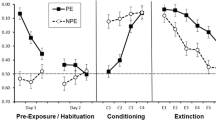

Figure 1 shows the discrimination between the stimuli during the successive discrimination training in Phase 1. All rats showed a significantly higher response rate in the presence of the S1+ (A+ or V+) and thus demonstrated discrimination between the S1+ and the S1- (A- or V-) (see Table 4 for the individual rats' response rates). The range of S1+ responses per minute was 19–37 (Rat 1), 25–50 (Rat 2), 38–51 (Rat 3), 22–43 (Rat 4), 27–44 (Rat 5), 21–47 (Rat 6), and 26–44 (Rat 7). The highest S1- response rate in this phase was 6 (Rat 1), 6 (Rat 2), 3 (Rat 3), 6 (Rat 4), 5 (Rat 5), 8 (Rat 6), and 8 (Rat 7). Figure 2 displays further discrimination between the compound stimuli during Phase 2 and shows that the response pattern seen in Phase 1 continued: the response rate was markedly higher in the presence of the compound S1+/S2+ (AV+) than in the presence of the compound S1-/S2- (AV-; see Table 5 for the individual response rates). The S1+/S2+ response rate per minute was 1–40 (Rat 1), 26–60 (Rat 2), 38–64 (Rat 3), 29–46 (Rat 4), 32–48 (Rat 5), 29–54 (Rat 6), and 25–60 (Rat 7). The highest S1-/S2- response rate per minute in this phase was 3 (Rat 1), 6 (Rat 2), 6 (Rat 3), 10 (Rat 4), 2 (Rat 5), 2 (Rat 6), and 6 (Rat 7). During Phase 1, the discrimination index criterion of 0.75 was met in sessions 40–43 for all the rats. In Phase 2, all sessions met this criterion for six out of seven rats; Rat 1, however, met the discrimination criterion in the third session of the phase.

In the first test of stimulus control by single and compound stimuli (Figure 3), all rats showed the highest response rates in the presence of the single S1+ and the second highest in the presence of the compound S1+/S2+, whereas the added stimulus (S2+) from Phase 2 occasioned only modest response rates. The whiskers in Figure 3 show that there is some overlap between the highest response rates in the presence of the compound S1+/S2+ and the lowest in the presence of the S1+, but the interquartile ranges do not overlap. Responding in the presence of the single S1- or the compound S1-/S2- was almost nonexistent in all rats (see also Table 6). In Figure 4, the subsequent retest of stimulus control during intermittent reinforcement displays a pattern similar to the first test, although with somewhat lower rates of responding for the auditory stimulus rats. The interquartile range overlap is greater in this test (see also Table 7). However, the response rates during this maintenance condition were similar to the response rates during the similar condition without water reinforcement in Test 1a.

Response Rate in the Presence of the Different Stimuli during Test 1a (extinction) for Blocking of Stimulus Control in All Rats. Note. S1+ is the trained SD (A+ or tone for the auditory stimulus rats and V+ or left light for the visual stimulus rats) in the first phase. S2+ is the added stimulus in the second phase (V+ for the auditory stimulus rats and A+ for the visual stimulus rats). S1+/S2+ is the compound AV+ for all rats. S1- is the trained Sdelta in the first phase, and S2- is the added Sdelta in the second phase, forming a compound S1-/S2- (AV- for all rats). “No” represents the ITI with no stimulus presentations.

Response Rate per Minute in the Presence of the Different Stimuli during Test 1b (with Maintenance of Intermittent Reinforcement on Chain Pulls in the Presence of the S1+/S2+ (the Compound AV+)) for Blocking of Stimulus Control in All Rats. Note. S1+ is the trained SD (A+ or tone for the auditory stimulus rats and V+ or left light for the visual stimulus rats) in the first phase. S2+ is the added stimulus in the second phase (V+ for the auditory stimulus rats and A+ for the visual stimulus rats). S1+/S2+ is the compound AV+ for all rats. S1- is the trained Sdelta in the first phase, and S2- is the added S in the second phase, forming a compound S1-/S2- (AV- for all rats). “No” represents the ITI with no stimulus presentations.

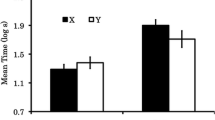

In the test for a conditioned reinforcer effect (Figure 5), six out of seven rats responded more on the lever that produced the stimulus from Phase 1. Rats 1, 2, and 3 responded more on the left lever, which produced the S1+ (the tone (A+) trained in Phase 1 for these rats), and rats 4, 6, and 7 had more responses on the right lever, which produced the S1+ (the left light (V+) trained in Phase 1 for these rats). Only Rat 5 showed more responding when producing the added stimulus from Phase 2. Rats 1 and 4 showed an exclusive preference for producing the stimulus from Phase 1. Relative reinforcer effect indices were 0.91 for Rat 3, 0.85 for Rat 6, 0.63 for Rat 7, and 0.57 for Rat 2.

The Relative Reinforcement Effect Index for All Rats in the Conditioned Reinforcer Test. Note. Responses on one lever produce the S+ (auditory (A+) for rats 1, 2, and 3, and visual (V+) for rats 4, 5, 6, and 7), and responses on the other lever produce the S- (A- and V- for the same respective rats). An index of 1.0 implies an exclusive preference for producing the S+, 0.5 indicates an equal distribution of lever presses, and 0 signifies an exclusive preference for producing the S-. Which lever produced the S+ and which produced the S- was counterbalanced across the rats.
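The anchor points given in the note (1.0 for exclusive responding on the S+ lever, 0.5 for an equal split, 0 for exclusive responding on the other lever) are consistent with a simple proportion of lever presses. The sketch below assumes that formula, which the text does not state explicitly, and uses a hypothetical function name.

```python
def relative_reinforcement_index(s_plus_lever_presses, other_lever_presses):
    """Share of all lever presses made on the lever producing the S+.

    Assumed formula: S+ presses / (S+ presses + presses on the other lever).
    """
    total = s_plus_lever_presses + other_lever_presses
    if total == 0:
        return None  # assumption: undefined when no lever was pressed
    return s_plus_lever_presses / total
```

With this definition, the exclusive preference reported for Rats 1 and 4 corresponds to an index of 1.0.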

Discussion

We first investigated whether blocking of stimulus control could be demonstrated in rats, using an operant discrimination procedure. The results showed that when blocking of stimulus control of the added stimulus in the compound was established, this effect extended to the function of that stimulus as a conditioned reinforcer for a new response as well. This correspondence between the effect of stimuli as discriminative and as conditioned reinforcers is an interesting one for a number of applied reasons.

Blocking of Stimulus Control

The results from Tests 1a and 1b showed blocking of stimulus control in all seven rats. In general, the results from the blocking of stimulus control in the present study are in line with results reported by previous studies using operant discrimination training, both in nonhumans (Mackintosh & Honig, 1970; Seraganian & vom Saal, 1969; vom Saal & Jenkins, 1970; Williams, 1996) and humans (e.g., Dittlinger & Lerman, 2011). In accord with the results of the present study, all these experiments demonstrated blocking of stimulus control by a second stimulus, which was added to a stimulus that had already acquired control. The added stimulus seemed to maintain its neutral status even when perfectly correlated with the presentation of a reinforcer, which was replicated in a second test of stimulus control.

Conditions in nonhuman animal experiments may differ from those in applied and clinical settings with humans in ways that make it difficult to translate and generalize even the most robust findings. Although there is an abundance of research demonstrating the phenomenon of blocking, there are also a number of studies showing the elusive nature of blocking, and that the phenomenon may be sensitive to even minor disturbances in the environment during acquisition or training (e.g., LoLordo et al., 1982; Maes et al., 2016). Lyczak and Tighe (1975) failed to demonstrate blocking of stimulus control in young children and suggested that disruptive incidents at the transition between experimental phases might explain the failure. Likewise, Bergen (2002) mentioned the possible effect of any discriminable phase transition as an inhibitor of blocking. However, such discriminable phase transitions were probably effectively eliminated in the present computer-controlled experiment in the animal lab.

Bergen (2002) discussed another variable that might counteract blocking, namely, a relatively short Phase 1 training with the single S+ before the Phase 2 training with the compound S+. The current experiment was based on a standard blocking procedure and thus involved the same number of trials in Phase 1 with the single S+ and in Phase 2 with the compound S+. Because the single S+ was also present together with the added stimulus in Phase 2, which included the same number of sessions as Phase 1, the total number of exposures to the original S+ was inevitably twice the number of exposures to the added stimulus. This unequal number of exposures to the two stimuli may have influenced the outcome in the present study. Nevertheless, the number of Phase 2 exposures to the compound S+ was set equal to the number of Phase 1 exposures to the single S+ to ensure that the rats had the same experience with the compound S+ as with the single S+. Maes et al. (2016) conducted a series of 15 experiments without being able to replicate a blocking effect, and they suggested that blocking has been overemphasized in theories of learning. It is possibly relevant to their negative finding that none of the 15 experiments involved operant discrimination training, whereas the test was concerned with the stimulus control of operant behavior. Also, the studies had relatively few training sessions as well as an uneven number of sessions in the first and the second phase. These are all matters that likely affect blocking.

A number of studies have shown a lack of equipotentiality of different stimuli despite efforts to avoid the problem (e.g., Barker et al., 2010; Foree & LoLordo, 1973; Keller, 1941; Mackintosh, 1975a; Seraganian & vom Saal, 1969). Seraganian and vom Saal (1969) showed in a typical blocking experiment with one group of pigeons that responding was suppressed by stimuli, such as noise and light, when no reinforcement was available in the presence of those stimuli. Mackintosh (1975a) revealed blocking of a tone by light but not the other way around in conditioned suppression in rats. In a series of experiments with pigeons, LoLordo et al. (1982) first found that when using food reinforcement, stimulus control was more quickly established with a visual than with an auditory stimulus. In avoidance training, however, control by the auditory stimulus was more quickly established. Hence, they described the visual stimulus as more relevant to the appetitive task, and auditory stimuli as more relevant to avoidance training. Next, they found that added but more relevant stimuli did acquire control after prior training with a less relevant stimulus, and they suggested that the relevant added stimulus overshadowed the less relevant stimulus in spite of the completed blocking procedure. In the present experiment we counterbalanced the modality of the stimuli and we found no such evidence of overshadowing.

Blocking of Conditioned Reinforcement

The results from Test 2 in the present experiment showed conspicuous blocking of conditioned reinforcement in four of seven rats, less clear blocking in one more rat, and none in the remaining two rats. One of these two rats (Rat 5) emitted slightly more lever presses that produced the added stimulus than presses that produced the original S+. The other (Rat 2) pressed both levers at approximately equal rates. We see no obvious explanation for the deviant results for these two rats. Research on blocking of a conditioned reinforcement effect is meager, but results from Palmer (1988) on blocking in classical conditioning are in accord with our findings of blocking in operant discrimination training. In that study, the relative rate of key pecking in pigeons measured the effectiveness of the paired stimulus (the first conditioned stimulus [CS1]) relative to the blocked stimulus (the second conditioned stimulus [CS2]). All 24 pigeons in that study increased their relative rate of key pecking when a conditioned reinforcement contingency was instituted. The results could be interpreted as a demonstration of a stronger conditioned reinforcer effect of the CS1, when contingent (though intermittently) upon key pecking, compared with the reinforcing effect of the intermittently presented CS2 contingent on pecking another key. In the present experiment, we found an increased rate of lever presses upon which the putative conditioned reinforcer (the S+) was contingent in five of seven rats. Because the levers had not been present before, there was no history of discriminative control of lever pressing. Therefore, the increased rate of lever presses can hardly be classified as a discriminative function of the stimuli, which would have confounded the role of the S+ as a reinforcer (e.g., Melching, 1954). Other researchers have reported an increase in overall responding, described as a general motivating effect (e.g., Donahoe & Wessels, 1980). We found no such overall increase in response rate, but five of the seven rats in the present experiment responded more frequently on the operandum that produced the single stimulus (S+) from the first phase than on the operandum that produced the stimulus added to the compound in the second phase. The higher number of responses on the S+ lever could potentially have indicated a difference in stimulus salience or a general preference for response positions, but lever position (left or right) as well as stimulus type (auditory or visual) were counterbalanced in the present experiment. In theory, a preference could also have resulted from the fact that any first response produced a potentially reinforcing stimulus, so that responses to the other lever never occurred. However, the initial forced-choice arrangement ensured responding on both levers, followed by exposure to both the initial stimulus (from Phase 1) and the added stimulus (from Phase 2).
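The comparison between the two levers can be illustrated with a relative-rate calculation. The following is a minimal sketch, not the analysis used in the present experiment: the press counts, the rat labels, and the 0.1 margin around indifference are all hypothetical values chosen for illustration only.

```python
from typing import Dict, Tuple


def s_plus_proportion(s_plus_presses: int, added_presses: int) -> float:
    """Proportion of all lever presses made on the lever producing the original S+."""
    total = s_plus_presses + added_presses
    if total == 0:
        raise ValueError("no responses recorded on either lever")
    return s_plus_presses / total


def classify(proportion: float, margin: float = 0.1) -> str:
    """Label a proportion relative to indifference (0.5).

    The margin is an arbitrary illustrative assumption, not a criterion
    taken from the study.
    """
    if proportion > 0.5 + margin:
        return "consistent with blocking"
    if proportion < 0.5 - margin:
        return "favors added stimulus"
    return "no clear preference"


# Hypothetical counts (S+ lever, added-stimulus lever); NOT data from the study.
hypothetical_counts: Dict[str, Tuple[int, int]] = {
    "Rat A": (30, 10),
    "Rat B": (12, 12),
    "Rat C": (9, 11),
}

for rat, (s_plus, added) in hypothetical_counts.items():
    p = s_plus_proportion(s_plus, added)
    print(f"{rat}: proportion on S+ lever = {p:.2f} ({classify(p)})")
```

A proportion well above 0.5 would be consistent with a stronger conditioned reinforcing effect of the original S+ than of the added stimulus, whereas values near 0.5 would correspond to roughly equal responding on both levers.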

Blocking in Applied Work

So far, we have shown that a procedure that produces blocking of stimulus control can also lead to blocking of a conditioned reinforcer effect of stimuli. If stimuli are established as discriminative, they will usually also function as conditioned reinforcers, although exceptions have been reported (e.g., Kelleher & Gollub, 1962). On the other hand, if the establishment of a new stimulus as a discriminative stimulus is blocked, it is reasonable to expect the reinforcing function of the stimulus to be blocked as well. For example, when a child already reliably approaches the trainer for an instruction every time the trainer fiddles with the candy bag, the learning of “come here” as a discriminative stimulus for the same behavior may be blocked. A test of the effectiveness of the instruction “come here” versus fiddling with the candy bag might then reveal that fiddling with the candy bag is the superior SD for the child’s approach.

Even though blocking might be adaptive when the organism responds only to the stimulus most reliably correlated with reinforcement, it can also prevent critical learning from taking place, such as when the environment is changing and control by new stimuli would be useful. Whether the purpose is to ensure learning in the presence of a stimulus and block disturbances from other stimuli, or to reduce the likelihood that prior learning will block the establishment of control by new stimuli, a clarification of the circumstances under which blocking may occur is needed. Prior discrimination training can block differential responding to new stimuli, affecting both the discriminative and the reinforcing functions of such stimuli. Further, discrimination training can prevent (or block) other random stimuli from being established as discriminative stimuli or as conditioned reinforcers, as may very well happen in a stimulus–stimulus pairing procedure, where no response requirement ensures control by the relevant stimulus. Yet, the pairing procedure is typically recommended for the establishment of conditioned reinforcers (e.g., Catania, 2007; Martin & Pear, 1996; Pryor, 1984; Schlinger, 1995). If instead the stimulus is established as a discriminative stimulus for a target response that leads to a reinforcer, control by relevant properties is implied even when the stimulus is not originally salient. Because the discriminative stimulus–response contingency might prevent accidental stimuli from becoming more salient and thereby overshadowing the programmed discriminative stimulus, one might expect blocking to be more easily demonstrated in operant discrimination training than in respondent conditioning. On the other hand, an operant discrimination contingency may also be an effective means to prevent blocking, because stimulus control by specific stimuli can be “forced,” or at least facilitated, by making reinforcement contingent upon responding in their presence.

Notes

Originally, eight rats were assigned to the experiment, but one rat had to be removed because of illness accompanied by extremely low response rates.

References

Arcediano, F., Matute, H., & Miller, R. R. (1997). Blocking of Pavlovian conditioning in humans. Learning & Motivation, 28(2), 188–199.

Barker, D. J., Sanabria, F., Lasswell, A., Thrailkill, E. A., Pawlak, A. P., & Killeen, P. R. (2010). Brief light as a practical aversive stimulus for the albino rat. Behavioural Brain Research, 214(2), 402–408.

Bergen, A. E. (2002). Blocking of stimulus control over human operant behavior (MQ76892) (Master’s thesis, University of Manitoba, Canada). Retrieved from http://search.proquest.com/docview/305461553?accountid=26439

Blaisdell, A. P., Gunther, L. M., & Miller, R. R. (1999). Recovery from blocking achieved by extinguishing the blocking CS. Animal Learning & Behavior, 27(1), 63–76. https://doi.org/10.3758/BF03199432.

Catania, A. C. (2007). Learning (4th interim ed.). New York, NY: Sloan Publishing.

Chase, S. (1966). The effects of discrimination training on the development of stimulus control by single dimensions of a stimulus compound. (Doctoral diss., City University of New York). Ann Arbor, MI: University Microfilms, No. 66-11-385.

Delgado, D. (2016). Blocking in humans: Logical reasoning versus contingency learning. The Psychological Record, 66(1), 31–40.

Dittlinger, L. H., & Lerman, D. C. (2011). Further analysis of picture interference when teaching word recognition to children with autism. Journal of Applied Behavior Analysis, 44(2), 341–349.

Donahoe, J. W. (2014). Evocation of behavioral change by the reinforcer is the critical event in both the classical and operant procedures. International Journal of Comparative Psychology, 27(4), 537–543.

Donahoe, J. W., Crowley, M. A., Millard, W. J., & Stickney, K. A. (1982). A unified principle of reinforcement. Quantitative Analyses of Behavior, 2, 493–521.

Donahoe, J. W., & Wessels, M. G. (1980). Language, learning, and memory. New York, NY: Harper & Row.

Feldman, J. M. (1971). Added cue control as a function of reinforcement predictability. Journal of Experimental Psychology, 91(2), 318–325. https://doi.org/10.1037/h0031888.

Foree, D. D., & LoLordo, V. M. (1973). Attention in the pigeon: Differential effects of food-getting versus shock-avoidance procedures. Journal of Comparative & Physiological Psychology, 85(3), 551.

Fowler, H., Goodman, J. H., & DeVito, P. L. (1977). Across-reinforcement blocking effects in a mediational test of the CS's general signaling property. Learning & Motivation, 8(4), 507–519. https://doi.org/10.1016/0023-9690(77)90048-0.

Holth, P., Vandbakk, M., Finstad, J., Grønnerud, E. M., & Sørensen, J. M. A. (2009). An operant analysis of joint attention and the establishment of conditioned social reinforcers. European Journal of Behavior Analysis, 10(2), 143–158.

Iversen, I. H. (1992). Skinner's early research: From reflexology to operant conditioning. American Psychologist, 47(11), 1318.

Kamin, L. J. (1968). Attention-like processes in classical conditioning. In M. R. Jones (Ed.), Miami symposium on the prediction of behavior: Aversive stimulation. Miami: University of Miami Press.

Kamin, L. J. (1969). Predictability, surprise, attention and conditioning. In B. A. Campbell & R. M. Church (Eds.), Punishment and aversive behavior (pp. 279–296). New York, NY: Appleton-Century-Crofts.

Kelleher, R. T., & Gollub, L. R. (1962). A review of positive conditioned reinforcement. Journal of the Experimental Analysis of Behavior, 5, 543–597.

Keller, F. S. (1941). Light-aversion in the white rat. The Psychological Record, 4(17), 235–250.

Kim, J. J., Krupa, D. J., & Thompson, R. F. (1998). Inhibitory cerebello-olivary projections and blocking effect in classical conditioning. Science, 279, 570–573. https://doi.org/10.1126/science.279.5350.570.

Lepper, T. L., Petursdottir, A. I., & Esch, B. E. (2013). Effects of operant discrimination training on the vocalizations of nonverbal children with autism. Journal of Applied Behavior Analysis, 46(3), 656–661.

LoLordo, V. M., Jacobs, W. J., & Foree, D. D. (1982). Failure to block control by a relevant stimulus. Animal Learning & Behavior, 10(2), 183–192.

Lovaas, O. I., Freitag, G., Kinder, M. I., Rubenstein, B. D., Schaeffer, B., & Simmons, J. Q. (1966). Establishment of social reinforcers in two schizophrenic children on the basis of food. Journal of Experimental Child Psychology, 4(2), 109–125.

Lyczak, R., & Tighe, T. (1975). Stimulus control in children under a blocking paradigm. Child Development, 46(1), 115–122.

Mackintosh, N. J. (1965). Selective attention in animal discrimination learning. Psychological Bulletin, 64(2), 124.

Mackintosh, N. J. (1975a). Blocking of conditioned suppression: Role of the first compound trial. Journal of Experimental Psychology: Animal Behavior Processes, 1(4), 335–345. https://doi.org/10.1037/0097-7403.1.4.335.

Mackintosh, N. J. (1975b). A theory of attention: Variations in the associability of stimuli with reinforcement. Psychological Review, 82(4), 276–298. https://doi.org/10.1037/h0076778.

Mackintosh, N. J., & Honig, W. K. (1970). Blocking and enhancement of stimulus control in pigeons. Journal of Comparative & Physiological Psychology, 73(1), 78–85. https://doi.org/10.1037/h0030021.

Maes, E., Boddez, Y., Alfei, J. M., Krypotos, A.-M., D’Hooge, R., De Houwer, J., & Beckers, T. (2016). The elusive nature of the blocking effect: 15 failures to replicate. Journal of Experimental Psychology: General, 145(9), e49–e71. https://doi.org/10.1037/xge0000200.

Martin, G., & Pear, J. (1996). Behavior modification: What it is and how to do it (5th ed.). Upper Saddle River, NJ: Prentice Hall.

Melching, W. H. (1954). The acquired reward value of an intermittently presented neutral stimulus. Journal of Comparative & Physiological Psychology, 47(5), 370.

Palmer, D. C. (1988). The blocking of conditioned reinforcement. (Doctoral diss., University of Massachusetts, Amherst, MA).

Pryor, K. (1984). Don’t shoot the dog! New York, NY: Bantam Books.

Rescorla, R. A., & Wagner, A. R. (1972). A theory of Pavlovian conditioning: Variations in the effectiveness of reinforcement and nonreinforcement. In A. H. Black & W. F. Prokasy (Eds.), Classical conditioning II: Current research and theory (pp. 64–99). New York, NY: Appleton-Century-Crofts.

Schlinger, H. D. (1995). A behavior analytic view of child behavior. New York, NY: Plenum Press.

Seraganian, P., & vom Saal, W. (1969). Blocking the development of stimulus control when stimuli indicate periods of nonreinforcement. Journal of the Experimental Analysis of Behavior, 12(5), 767–772. https://doi.org/10.1901/jeab.1969.12-767.

Skinner, B. F. (1938/1991). The behavior of organisms. Acton, MA: Copley (Original work published 1938).

Taylor, K. M., Joseph, V. T., Balsam, P. D., & Bitterman, M. E. (2008). Target-absent controls in blocking experiments with rats. Learning & Behavior, 36(2), 145–148. https://doi.org/10.3758/LB.36.2.145.

Taylor-Santa, C., Sidener, T. M., Carr, J. E., & Reeve, K. F. (2014). A discrimination training procedure to establish conditioned reinforcers for children with autism. Behavioral Interventions, 29(2), 157–176.

Vandbakk, M., Olaff, H. S., & Holth, P. (2019). Conditioned reinforcement: The effectiveness of stimulus–stimulus pairing and operant discrimination procedures. The Psychological Record, 69(1), 67–81.

vom Saal, W., & Jenkins, H. M. (1970). Blocking the development of stimulus control. Learning & Motivation, 1(1), 52–64. https://doi.org/10.1016/0023-9690(70)90128-1.

Williams, B. A. (1975). The blocking of reinforcement control. Journal of the Experimental Analysis of Behavior, 24(2), 215–225. https://doi.org/10.1901/jeab.1975.24-215.

Williams, B. A. (1996). Evidence that blocking is due to associative deficit: Blocking history affects the degree of subsequent associative competition. Psychonomic Bulletin & Review, 3(1), 71–74. https://doi.org/10.3758/BF03210742.

Williams, B. A. (1999). Associative competition in operant conditioning: Blocking the response-reinforcer association. Psychonomic Bulletin & Review, 6(4), 618–623. https://doi.org/10.3758/BF03212970.

Funding

Open Access funding provided by OsloMet - Oslo Metropolitan University.

Ethics declarations

Conflict of Interest

On behalf of all authors, the corresponding author states that there is no conflict of interest.

Ethical Approval

All applicable international, national, and institutional guidelines for the care and use of animals were followed. The study was preapproved by the National Animal Research Authority (NARA) and was carried out according to the Norwegian laws and regulations controlling experiments/procedures using live animals with the identification number: id8278. Some of the data were previously presented in a poster at the annual ABAI conference in Kyoto, Japan, in November 2015. This work has not been previously published; nor is this work under consideration for publication elsewhere.

Availability of Data and Materials

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Vandbakk, M., Olaff, H.S. & Holth, P. Blocking of Stimulus Control and Conditioned Reinforcement. Psychol Rec 70, 279–292 (2020). https://doi.org/10.1007/s40732-020-00393-3

DOI: https://doi.org/10.1007/s40732-020-00393-3