Abstract

In parametric problems in power systems, the influence of parameters on system states needs to be evaluated. These parameters could be renewable generation outputs, load factors, etc. Polynomial approximation has been applied to express the nonlinear relationship between system states and parameters, which is governed by nonlinear, implicit equations. Usually, sampling-based methods are applied, e.g., data fitting methods, sensitivity methods, etc. However, the accuracy and stability of these methods are not guaranteed. This paper proposes an innovative method based on the Galerkin method that provides a globally optimal approximation. Compared with traditional methods, it offers high accuracy and stability. The IEEE 9-bus system is used to illustrate its effectiveness, and two additional studies, including one on a 1648-bus system, are performed to show its applications to power system analysis.



1 Introduction

Parametric problems [1] are real-world problems that depend on parameters; they are modelled as a set of equations involving parameters, i.e., parametric equations. The parameters here refer to important variables that are analysed to evaluate or understand events, phenomena, or situations in practical problems. They could be inputs of physical models, measurable factors of systems, characteristics of projects, etc. In power systems, for example, the parameters could be generation outputs, load factors, wind power output, etc.

Many problems in power systems are formulated implicitly as nonlinear equations, e.g., the AC load flow equations [2, 3]. To evaluate the relationship between parameters and system states governed by such implicit nonlinear equations, two categories of methods are usually adopted:

1) Sampling methods repeatedly evaluate the states under different parameter values. The widely used Monte Carlo methods rely on random sampling to obtain numerical results and are simple and easy to implement.

2) Analytical methods express the states as explicit analytical formulas in terms of the parameters. Because many problems are modelled as sets of nonlinear equations, it is often hard to derive the states directly as explicit expressions, so approximation is often used.

Sampling methods are the most straightforward approaches, and their results are normally regarded as exact solutions for validating other approaches. Although sampling methods are simple, their drawbacks are obvious: ① a huge computational effort is required, particularly if the sample size is very large; ② they produce discrete rather than continuous results, so additional processing of the sampling results is necessary if a continuous relation is required. For example, sensitivity analysis cannot be carried out directly on the discrete results.

Among the analytical approaches, some use results from sampling methods to derive analytical approximations. The traditional data fitting method is applied in [4, 5]. Its accuracy is closely related to the number of samples and their choice, so overfitting may arise if the sampling points are too sparse or the target function is too complex. In addition, the collocation method [6, 7] has been used in many studies. This method finds polynomial functions that satisfy the original model at a number of points (called collocation points). However, the collocation points must be designed carefully to achieve high accuracy, and the stability of this method is not guaranteed.

Many other analytical approaches that do not rely on sampling results have also been proposed. One type simply uses linear models, so that the exact solution of each state with respect to the parameters can be obtained directly. For example, the active-power DC load flow model in this category has found many applications, e.g., in probabilistic load flow [8,9,10], contingency analysis [11, 12], transmission management [13], etc. Other approaches linearise the AC load flow equations at an operating point. Linear models are computationally efficient but neglect the nonlinearity of power systems, which may cause large errors in strongly nonlinear systems, e.g., distribution networks.

To handle the nonlinearity more effectively, a promising approach is to use a polynomial function as an approximate expression relating states to parameters. One direct approach is a Taylor expansion at a single local operating point, derived from sensitivities of different orders. In [14], voltage security region boundaries are investigated using second-order polynomial approximations built from first-order and second-order sensitivities. The sensitivities are normally calculated from differential equations derived from the load flow equations, i.e., using the Jacobian and Hessian matrices. However, deriving the sensitivity equations becomes very complicated for higher orders, and, moreover, the approximation is local rather than global: only information from the neighbourhood of the specific point is preserved.

The main purpose of this paper is to derive a globally optimal approximation that reflects the relationship governed by the original model as closely as possible. A predefined polynomial function with unknown coefficients, written in an orthogonal basis representation, is used to express the approximate solutions. The Galerkin method is then introduced to identify the unknown coefficients. The Galerkin equations are formed by orthogonal projection over the whole parameter domain, so the approximation uses global information from the whole domain rather than local information at a single point. A key property of orthogonal projection is that the optimal approximation can be obtained with high stability. Parametric load flow problems are investigated to illustrate the implementation of this algorithm.

The contributions of our work can be summarized as follows: ① the explicit expression facilitates analysis and gives further insight into power system performance; ② the globally optimal approximation reflects information over the whole parameter domain, which makes it well suited to region boundary problems.

The rest of this paper is organized as follows. Section 2 presents the problem to be resolved. In Section 3 the detailed solution method is introduced. In Section 4, the IEEE 9-bus system is used to demonstrate the properties of our approach, followed by two practical case studies in Section 5. The last section is the conclusion of this paper.

2 Problem formulation

2.1 Parametric load flow problems

The load flow model is usually expressed in either rectangular or polar form. We use the rectangular form, in which the model is a set of polynomial equations, because the integrals introduced later in this paper are easier to evaluate for polynomial equations. The voltage is written as \(V=e+{\text {j}}f\), where \(\text {j}\) is the imaginary unit.

The load flow equations are given as:

where \(N_E\) is the total number of buses; \(G_{mn}\) and \(B_{mn}\) are the entries of the nodal admittance matrix; \(P_m\), \(Q_m\), and \(V_m\) are the active power injection, reactive power injection and voltage magnitude of bus m, respectively.
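For reference, the standard rectangular-coordinate form of the load flow equations, written with the symbols above, is shown below; we assume (1) has this form up to arrangement. Conventionally, the P equation is written for PQ and PV buses, the Q equation for PQ buses, and the V equation for PV buses.

$$\begin{aligned} P_m &= e_m\sum_{n=1}^{N_E}(G_{mn}e_n-B_{mn}f_n)+f_m\sum_{n=1}^{N_E}(G_{mn}f_n+B_{mn}e_n)\\ Q_m &= f_m\sum_{n=1}^{N_E}(G_{mn}e_n-B_{mn}f_n)-e_m\sum_{n=1}^{N_E}(G_{mn}f_n+B_{mn}e_n)\\ V_m^2 &= e_m^2+f_m^2 \end{aligned}$$

The short check below uses a hypothetical 3-bus admittance matrix and arbitrary voltages (illustration only) to confirm that these polynomial expressions agree with the complex power injection \(S_m=V_m\overline{(\boldsymbol{Y}\boldsymbol{V})_m}\).

```python
import numpy as np

def rectangular_injections(G, B, e, f):
    """P, Q and V^2 at every bus from the rectangular load flow expressions."""
    a = G @ e - B @ f          # a_m = sum_n (G_mn e_n - B_mn f_n)
    b = G @ f + B @ e          # b_m = sum_n (G_mn f_n + B_mn e_n)
    P = e * a + f * b
    Q = f * a - e * b
    V2 = e**2 + f**2
    return P, Q, V2

# Hypothetical 3-bus network: series admittances of lines 1-2, 1-3 and 2-3
y12, y13, y23 = 1 / (0.02 + 0.2j), 1 / (0.01 + 0.1j), 1 / (0.03 + 0.25j)
Y = np.array([[y12 + y13, -y12, -y13],
              [-y12, y12 + y23, -y23],
              [-y13, -y23, y13 + y23]])
e = np.array([1.0, 0.98, 1.01])
f = np.array([0.0, -0.05, 0.03])

P, Q, V2 = rectangular_injections(Y.real, Y.imag, e, f)   # Y = G + jB
V = e + 1j * f
S = V * np.conj(Y @ V)        # complex injection S_m = V_m * conj(I_m)
print(np.max(np.abs(S - (P + 1j * Q))))
```

Because every term is a product of at most two voltage components, the equations are quadratic polynomials in \(e\) and \(f\), which is what makes the later integrals tractable.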

Here, the voltages \(e_m\) and \(f_m\) are the system states to be found. Besides the node voltages, the load flow analysis can be extended to other system states (e.g., line flows, netloss, etc.) by introducing the corresponding equations. For example, for problems involving the netloss \(P_{loss}\), the equation is formulated as \(\sum \limits _{m\in {\mathcal {G}}}{P}_{m}-\sum \limits _{m\in \mathcal {L}}{P}_{m}-P_{loss}=0\), where \({\mathcal {G}}\) is the set of generators and \({\mathcal {L}}\) is the set of loads.

To facilitate the following analysis, we use the general and abstract formulation:

where \(F=0\) denotes the load flow equations and \(L=0\) the additional equations; \(\varvec{u}=\{u_m\}\) denotes the state vector (usually the bus voltages and the states to be investigated) and \(\varvec{p}=\{p_i\}\) is the parameter vector, m = 1,2,…, M, i = 1,2,…, \(N_p\).
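The residual in (14) is written with an operator \(A(\cdot;\cdot)\). A compact reading of the abstract formulation that is consistent with that usage (our notation, assumed to correspond to (2)) stacks both sets of equations:

$$A(\varvec{u};\varvec{p})=\begin{bmatrix}F(\varvec{u};\varvec{p})\\ L(\varvec{u};\varvec{p})\end{bmatrix}=\varvec{0},\qquad A:\ {\mathbb R}^{M}\times\text{dom}(\varvec{p})\rightarrow{\mathbb R}^{M}$$

so that, for every fixed \(\varvec{p}\), there are as many equations as unknown states.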

2.2 Aim of our work

Because the equations are nonlinear and implicit, an explicit analytical expression \(\varvec{u}^*(\varvec{p})\) is hard to obtain (the superscript \(*\) denotes the solution). If an analytical function can be found that approximates the relationship between \(\varvec{u}\) and \(\varvec{p}\), the influence of the parameters on the system states can be evaluated directly from the approximation.

A common choice of analytical function for the approximation is a polynomial up to a certain degree. Then, \(\varvec{u}^*(\varvec{p})\) can be approximated by a polynomial function:

where N is the dimension of the polynomial function space, i.e., the number of basis terms. Identifying \(\varvec{u}^*_{N}(\varvec{p})\) is the main purpose of this paper.

It should be mentioned that a necessary condition for polynomial approximation is that the parametric problem be solvable for all \(\varvec{p}\in \text {dom}(\varvec{p})\). For further information, see [1, 15].

3 Methodology

In this section, some essential mathematical concepts related to our work are given briefly, and then the method and its application to load flow problems are introduced.

3.1 Polynomial basis representation

To obtain the approximate solutions in polynomial form, a polynomial basis representation is used. Consider a general real-valued function space \({\mathbb {R}}^{\mathcal {P}}\) with the inner product defined by:

where \({\mathcal {P}}\) is the support of the measure \(W(\varvec{p})\). If \(\{\varPhi _i\}\) is chosen as the set of basis functions for an N-dimensional subspace \({\mathbb {R}}_N^{{\mathcal {P}}}:=\text{ span }\{\varPhi _i\}\subset {\mathbb {R}}^{{\mathcal {P}}}\), i = 1,2,…, N, then every \(x\in {\mathbb {R}}_N^{\mathcal {P}}\) has a unique representation \(x=\sum \limits _{i=1}^{N}\hat{c}_i\varPhi _i\).
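In practice, the inner product (4) is an integral over the parameter domain. As a minimal sketch, assuming a single parameter on \([-1,1]\) with the uniform probability measure \(dW=dp/2\) (our choice for illustration), the integral can be evaluated exactly for polynomials with Gauss-Legendre quadrature, and the scaled Legendre polynomials \(\sqrt{2n+1}\,P_n(p)\) then form an orthonormal set:

```python
import numpy as np
from numpy.polynomial import legendre

def inner_product(x_fun, y_fun, n_quad=32):
    """<x, y> = integral of x(p) y(p) dW(p), with dW = dp/2 on [-1, 1] (assumed measure)."""
    nodes, weights = legendre.leggauss(n_quad)   # weights sum to 2 on [-1, 1]
    return np.sum(weights / 2.0 * x_fun(nodes) * y_fun(nodes))

def phi(n):
    """Orthonormal Legendre basis function sqrt(2n+1) * P_n(p)."""
    coeffs = np.zeros(n + 1)
    coeffs[n] = np.sqrt(2 * n + 1)
    return lambda p: legendre.legval(p, coeffs)

# Gram matrix of the first five basis functions; orthonormality means it is the identity
gram = np.array([[inner_product(phi(i), phi(j)) for j in range(5)] for i in range(5)])
print(np.round(gram, 12))
```

An n-point Gauss rule integrates polynomials up to degree 2n - 1 exactly, so for polynomial integrands the quadrature introduces no approximation error beyond rounding.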

In our approach, the basis functions are chosen from a polynomial sequence \(\{\varPsi _i(\varvec{p})\}\), where \(\varPsi _i(\varvec{p})\) is a polynomial of degree i. Then any polynomial in \(\varvec{p}\), \(x(\varvec{p})\in \text{ span }{\{\varPsi _i(\varvec{p})\}}\), can be expressed as a linear combination of the polynomial basis:

where \(x(\varvec{p})\) is a polynomial of degree \(N_d\), and \(\varPsi _{n}(p_{i_1},p_{i_2},\cdots ,p_{i_{n}})\) denotes the polynomial basis of degree n in the parameters \((p_{i_1},p_{i_2},\cdots ,p_{i_{n}})\). For notational convenience, a one-to-one correspondence between \(\varPsi\) and \(\varPhi\) is introduced, so that (5) can be rewritten more compactly:

where \(N=\binom{N_p+N_d}{N_p}\). Conventionally, \(\varPsi _0=\varPhi _0\), and \(\varPhi (\cdot )\) is ordered in graded lexicographic order [16].
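The count N and the ordering can be made concrete. Assuming, as is usual in generalized polynomial chaos, that each multivariate basis function is a product of univariate ones, \(\varPsi_{\alpha_1}(p_1)\cdots\varPsi_{\alpha_{N_p}}(p_{N_p})\), each basis function is labelled by an exponent vector \(\alpha\) with total degree at most \(N_d\). The sketch below (helper name is ours) enumerates these vectors, sorted first by total degree and then lexicographically, which is one common convention for graded lexicographic order. For the two-parameter, third-order case used in Section 4 it gives N = 10.

```python
from itertools import product
from math import comb

def graded_multi_indices(n_params, max_degree):
    """All exponent tuples with total degree <= max_degree, in graded lexicographic order."""
    indices = [a for a in product(range(max_degree + 1), repeat=n_params)
               if sum(a) <= max_degree]
    # sort by total degree first, then lexicographically with larger leading exponents first
    return sorted(indices, key=lambda a: (sum(a), [-x for x in a]))

idx = graded_multi_indices(2, 3)
print(len(idx), comb(2 + 3, 2))   # 10 10
print(idx[:6])                    # [(0, 0), (1, 0), (0, 1), (2, 0), (1, 1), (0, 2)]
```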

For the problem in Section 2.2, once a set of basis \(\{\varPhi _i\}_{i=1}^N\) is determined, the approximate solutions \(\varvec{u}^*_{N}(\varvec{p})\) can be represented by:

where \(\hat{\varvec{c}}_i\) is the coefficient to be determined.

3.2 Orthogonal polynomial basis and best approximation

In a general inner product space, an orthogonal polynomial basis is a set of polynomials that are mutually orthogonal under the inner product (4). If the basis is also normalized (orthonormal), then \(\langle \varPhi _i,\varPhi _j\rangle =\delta _{ij}\) holds for any two basis functions \(\varPhi _i\) and \(\varPhi _j\), where \(\delta _{ij}\) is the Kronecker delta.

A great advantage of orthogonality is that the unknown coefficients can be determined simply by taking inner products with the corresponding basis functions. For (7), if \(\{\varPhi _i\}\) is an orthonormal basis, then \(\hat{\varvec{c}}_k\) is obtained by taking the inner product with its corresponding basis function \(\varPhi _k\), that is,

Furthermore, orthogonality leads to an important approximation property: the best approximation of \(\varvec{u}^*(\varvec{p})\) can be expressed by

where the global error is minimized. Here, the error is defined by:

In other words, the orthogonal projection leads to the optimal approximation.
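The optimality statement can be checked numerically. As a minimal sketch, assuming the hypothetical target \(u(p)=e^{p}\) on \([-1,1]\) with \(dW=dp/2\), the code projects \(u\) onto the first four orthonormal Legendre functions (the degree-3 best approximation) and compares its global error with that of the degree-3 Taylor polynomial at \(p=0\), which is another degree-3 polynomial and therefore cannot do better. It also perturbs the projection coefficients to show that any other choice adds exactly the squared size of the perturbation to the squared error.

```python
import math
import numpy as np
from numpy.polynomial import legendre

nodes, weights = legendre.leggauss(40)
weights = weights / 2.0                       # uniform probability measure dp/2

def basis(p, deg):
    """Orthonormal Legendre functions sqrt(2n+1) P_n(p), columns n = 0..deg."""
    return legendre.legvander(p, deg) * np.sqrt(2 * np.arange(deg + 1) + 1)

def l2_error(values):
    """Global error in the norm induced by the inner product (4)."""
    return np.sqrt(np.sum(weights * values**2))

u = np.exp(nodes)                             # hypothetical smooth target u(p)
Phi = basis(nodes, 3)
c = Phi.T @ (weights * u)                     # projection coefficients c_k = <u, Phi_k>
projection = Phi @ c
taylor = sum(nodes**k / math.factorial(k) for k in range(4))

err_proj = l2_error(projection - u)
err_taylor = l2_error(taylor - u)
print(err_proj, err_taylor)                   # the projection error is the smaller one
```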

Proof

From Section 3.1, any polynomial of degree at most \(N_d\) in the space spanned by \(\{\varPhi _i\}\) can be expressed as a linear combination of the basis functions. Suppose there is another \(N_d\)th-order approximation of \(\varvec{u}^*\), denoted \(\varvec{v}^*_N=\sum \limits _{i=1}^{N} \hat{\varvec{c}}^{(v)}_i\varPhi _i\).

By the orthonormality, we have

which means \((\varvec{u}^*_{N} -\varvec{u}^*)\perp \varPhi _i\), for \(i=1,2,\cdots ,N\).

Note that \(\varvec{v}^*_N-\varvec{u}^*_N=\sum \limits _{i=1}^{N} (\hat{\varvec{c}}^{(v)}_i-\hat{\varvec{c}}_i)\varPhi _i\), then we have

Thus, \((\varvec{u}^*_{N}-\varvec{u}^*)\perp (\varvec{v}^*_N-\varvec{u}^*_N)\) holds.

For the global approximation error of \(\varvec{v}^*_N\) defined as (10),

Here, equality holds if and only if \(||\varvec{v}^*_{N}-\varvec{u}^*_{N}||^2=0\), i.e., \(\varvec{v}^*_{N}=\varvec{u}^*_{N}\).

Therefore, the optimal approximation is \(\varvec{u}^*_{N}\) defined as (9). This finishes the proof.
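The inequality used in the last step of the proof is the Pythagorean identity in the norm induced by (4). Written out (our expansion of the step), it follows directly from the orthogonality \((\varvec{u}^*_{N}-\varvec{u}^*)\perp (\varvec{v}^*_N-\varvec{u}^*_N)\) established above:

$$\begin{aligned} ||\varvec{v}^*_N-\varvec{u}^*||^2&=||(\varvec{v}^*_N-\varvec{u}^*_N)+(\varvec{u}^*_N-\varvec{u}^*)||^2\\ &=||\varvec{v}^*_N-\varvec{u}^*_N||^2+2\langle \varvec{v}^*_N-\varvec{u}^*_N,\varvec{u}^*_N-\varvec{u}^*\rangle +||\varvec{u}^*_N-\varvec{u}^*||^2\\ &=||\varvec{v}^*_N-\varvec{u}^*_N||^2+||\varvec{u}^*_N-\varvec{u}^*||^2\ \ge \ ||\varvec{u}^*_N-\varvec{u}^*||^2 \end{aligned}$$

The cross term vanishes because of the orthogonality, and the remaining extra term is nonnegative.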

Since orthogonal polynomial bases are very convenient to work with, many classic orthogonal polynomial sequences have been investigated, from which an orthogonal polynomial basis can be chosen. Table 1 gives three important types of orthogonal polynomial sequences. In early work by Xiu [17], generalized polynomial chaos (gPC) was introduced for solving stochastic differential equations, where each type of polynomial is associated with a particular probability distribution.

In fact, any parameter p can be mapped by a linear transformation to a parameter on \([-1,1]\), \((-\infty ,\infty )\) or \([0,\infty )\), depending on whether its range is bounded, unbounded, or semi-infinite. For example, if \(p\in [a,b]\), the transformation is \(p'=\frac{2p-a-b}{b-a}\), where \(p'\in [-1,1]\). Thus, in this paper the orthogonal polynomial sequences listed in Table 1 are used as the basis to represent the approximations in (7).
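The change of variables is simple to implement. The sketch below (function names are ours) maps a parameter on \([a,b]\) to \([-1,1]\) and back, and evaluates Legendre functions in the original parameter; the range \(P_{G2}\in [0,2]\) from Section 4 is used only as an illustration. With the uniform measure on \([a,b]\), the scaled Legendre functions remain orthonormal after the mapping.

```python
import numpy as np
from numpy.polynomial import legendre

def to_reference(p, a, b):
    """p in [a, b]  ->  p' = (2p - a - b) / (b - a) in [-1, 1]."""
    return (2.0 * p - a - b) / (b - a)

def from_reference(t, a, b):
    """Inverse map: p' in [-1, 1]  ->  p in [a, b]."""
    return 0.5 * (b - a) * t + 0.5 * (a + b)

def legendre_basis_on_interval(p, a, b, deg):
    """sqrt(2n+1) P_n(p'), orthonormal for the uniform probability measure on [a, b]."""
    t = to_reference(np.asarray(p, dtype=float), a, b)
    return legendre.legvander(t, deg) * np.sqrt(2 * np.arange(deg + 1) + 1)

p_g2 = np.array([0.0, 0.5, 1.0, 2.0])        # sample values of P_G2 in [0, 2]
print(to_reference(p_g2, 0.0, 2.0))          # maps to -1, -0.5, 0 and 1
```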

3.3 Galerkin method

The Galerkin method can be used to identify the coefficients in (7). Its key implementation steps are as follows:

1) A set of orthogonal polynomials is chosen as the basis \(\{\varPhi _i\}\), called the trial basis, and the approximate solutions are expressed as (7).

2) Substituting (7) into (2) produces a residual \(\varvec{R}\), which is in general nonzero:

$$\begin{aligned} \varvec{R}=A(\varvec{u}^*_{N}(\varvec{p});\varvec{p})=A\left(\sum _{i=1}^{N}{\hat{\varvec{c}}_i \varPhi _i(\varvec{p})};\varvec{p}\right) \end{aligned}$$ (14)

3) Since the coefficients should make the residual as small as possible, the projection of \(\varvec{R}\) onto each basis function in \(\{\varPhi _i\}\) (called the test basis) is set to zero:

$$\langle \varvec{R},\varPhi _k \rangle =0,\quad k=1,2,\cdots ,N$$ (15)

where the inner product \(\langle \cdot ,\cdot \rangle\) is defined as in (4). Equation (15) is called the Galerkin equations. Condition (15) makes the residual orthogonal to the function space spanned by \(\{\varPhi _i\}\) [17]. After the inner products are evaluated, the parameters are integrated out, so the Galerkin equations involve only the unknown coefficients.

4) Solve the Galerkin equations, then substitute the coefficients into (7) to obtain the approximate solutions \(\varvec{u}^*_{N}(\varvec{p})\).
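The four steps can be traced on a toy problem. The sketch below is our own illustration, not the paper's implementation: it takes the hypothetical scalar equation \(u^2-(1+p/2)=0\) for \(p\in [-1,1]\), whose positive root \(\sqrt{1+p/2}\) is known, uses orthonormal Legendre functions as both trial and test basis (step 1), evaluates the residual (step 2) and its projections (step 3) with Gauss-Legendre quadrature under the assumed measure \(dp/2\), and solves the Galerkin equations with the Newton-Raphson method (step 4).

```python
import numpy as np
from numpy.polynomial import legendre

def basis(x, deg):
    """Step 1: orthonormal Legendre basis sqrt(2n+1) P_n(x) for the measure dx/2 on [-1, 1]."""
    return legendre.legvander(x, deg) * np.sqrt(2 * np.arange(deg + 1) + 1)

def galerkin_sqrt(deg, tol=1e-12, max_iter=50):
    """Galerkin approximation of the positive root of u^2 - (1 + p/2) = 0 (toy problem)."""
    x, w = legendre.leggauss(2 * deg + 2)   # exact for the polynomial integrands below
    w = w / 2.0
    Phi = basis(x, deg)
    c = np.zeros(deg + 1)
    c[0] = 1.0                              # initial guess u_N(p) = 1
    for _ in range(max_iter):
        u = Phi @ c                         # trial expansion at the quadrature nodes
        R = u**2 - (1.0 + x / 2.0)          # step 2: residual
        G = Phi.T @ (w * R)                 # step 3: Galerkin equations <R, Phi_k>
        J = Phi.T @ ((2.0 * w * u)[:, None] * Phi)   # dG_k/dc_j = <2 u_N Phi_j, Phi_k>
        step = np.linalg.solve(J, -G)       # step 4: Newton-Raphson update
        c += step
        if np.max(np.abs(step)) < tol:
            break
    return c

p = np.linspace(-1.0, 1.0, 201)
for deg in (1, 3, 5):
    err = np.max(np.abs(basis(p, deg) @ galerkin_sqrt(deg) - np.sqrt(1.0 + p / 2.0)))
    print(deg, err)                         # the maximum error shrinks as deg grows
```

Because the residual is polynomial in the coefficients, the Galerkin integrals are evaluated exactly by the quadrature, and the parameter p disappears from the equations that Newton's method solves.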

In general, the main idea of the Galerkin method is that the approximate solution is represented as a basis expansion in the trial space spanned by the trial basis, and the unknown coefficients are determined by projecting the residual onto the test space spanned by the test basis. Usually, the trial basis and the test basis are taken from the same family of functions.

Regarding the trial and test bases, we prefer orthogonal bases, because orthogonality leads to the best approximation, as discussed in Section 3.2. This means that, for a required accuracy, fewer basis functions are needed with an orthogonal basis. When an orthogonal basis is applied, the optimal approximation can be obtained, which makes this approach advantageous over other methods [1].

Moreover, because the coefficients are determined from Galerkin equations formed by taking inner products over the whole parameter domain, the approximation reflects global information on the whole domain, which is an advantage over local approximations derived from sensitivity analysis at a single point. Compared with the data fitting method, which relies on sample results without regard to the governing equations, the Galerkin method has better stability, and error convergence is guaranteed as the polynomial order increases.

3.4 Implementation to parametric load flow problems

In this section, the Galerkin method is applied to the parametric load flow problems described in Section 2. Without loss of generality, the power injections are assumed to depend on the parameter \(\varvec{p}\), i.e., the active power injection in (1) is \(P_m(\varvec{p})\) and the reactive power injection is \(Q_m(\varvec{p})\). Besides the load flow equations \(F=0\), the additional equations \(L=0\) are formulated to include the extended system states. Note that the total number of unknown system states equals the number of equations, denoted M in our analysis.

3.4.1 Choose polynomial basis

As discussed in Section 3.2, after linear transformation, the classic orthogonal polynomial sequences can be used as polynomial basis.

Let the basis be \(\{\varPhi _i\}\). Then \(P_m(\varvec{p})\) and \(Q_m(\varvec{p})\) can be expressed as \(P_m=\sum \limits _{i=1}^{N}{c}_{i}^{(P_m)} \varPhi _i\) and \(Q_m=\sum \limits _{i=1}^{N}{c}_{i}^{(Q_m)} \varPhi _i\), where the coefficients \({c}_{i}^{(P_m)}\) and \({c}_{i}^{(Q_m)}\) can be readily determined by (8). The states \(e_m\) and \(f_m\) can be represented as:

where \(\hat{c}_{i}^{(e_m)}\) and \(\hat{c}_{i}^{(f_m)}\) are the unknown coefficients to be determined. Through the polynomial basis expansion, the relationship between states and parameters, which is complicated and usually nonlinear, is expressed in the function space spanned by the polynomial basis. The extended system states can also be represented in the form of (16). Each system state has N unknown coefficients, so the total number of unknown coefficients is \(M\times N\).

3.4.2 Form the residual

The residual of load flow equations, shown as (17), can be obtained by substituting the representations in (16) into (1). Here, \(R_m^P\), \(R_m^Q\), and \(R_m^V\) are the entries of \(\varvec{R}\) in (14). Similarly, substituting the approximation into \(L=0\) produces the residual, \(R^L\).

3.4.3 Form Galerkin equations

The residual \(\{R_m^P, R_m^Q, R_m^V, R^L\}^{\text{T}}\) is projected onto each basis function \(\varPhi _k\) to form the Galerkin equations as in (15). Their dimension is N times that of the original model, which equals the number of unknown coefficients. Evaluating the inner products eliminates the parameters, so the Galerkin equations involve only the coefficients to be determined.

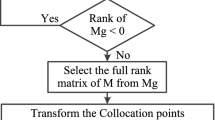

3.4.4 Solve Galerkin equations

The resulting Galerkin equations are polynomial in the unknown coefficients and are usually nonlinear and coupled. Because they involve only the unknown coefficients and their number equals the number of coefficients, standard numerical methods such as the Newton-Raphson method can be used to solve them. Once the coefficients are found, the approximate solutions are obtained by substituting them into (16).
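Sections 3.4.1 to 3.4.4 can be traced end to end on a very small case. The sketch below is our own illustration under stated assumptions, not the paper's Mathematica implementation: a hypothetical 2-bus system in which slack bus 1 (\(V_1=1+\text{j}0\)) supplies a PQ load \(k(P+\text{j}Q)\) at bus 2 through an assumed line impedance, with the load factor \(k\in [0,1]\) as the single parameter. \(e_2\) and \(f_2\) are expanded in orthonormal Legendre functions of the normalized parameter, the P and Q mismatches (computed in complex form, which is equivalent to the rectangular expressions) are projected onto each basis function, and the coupled Galerkin equations are solved by Newton-Raphson. Central differences are used for the Jacobian for brevity; they are exact up to rounding here because the Galerkin equations are quadratic in the coefficients. A pointwise fixed-point load flow serves as the benchmark.

```python
import numpy as np
from numpy.polynomial import legendre

# Hypothetical 2-bus system: slack bus 1 (V1 = 1 + j0) feeds a PQ load at bus 2
Z_LINE = 0.01 + 0.1j            # line impedance (p.u.), assumed
P_LOAD, Q_LOAD = 1.0, 0.3       # base load at bus 2 (p.u.), assumed
K_MIN, K_MAX = 0.0, 1.0         # parameter: load factor k in [K_MIN, K_MAX]

def basis(t, deg):
    """Orthonormal Legendre basis for the uniform measure dt/2 on [-1, 1]."""
    return legendre.legvander(t, deg) * np.sqrt(2 * np.arange(deg + 1) + 1)

def to_k(t):
    """Inverse of the linear map of Section 3.2: t in [-1, 1] -> k in [K_MIN, K_MAX]."""
    return 0.5 * (K_MAX - K_MIN) * t + 0.5 * (K_MAX + K_MIN)

def galerkin_equations(c, Phi, w, k):
    """Project the P and Q mismatches at bus 2 onto every basis function."""
    n = Phi.shape[1]
    v2 = Phi @ c[:n] + 1j * (Phi @ c[n:])      # e_2 + j f_2 at the quadrature nodes
    s2 = v2 * np.conj((v2 - 1.0) / Z_LINE)     # injection V2 * conj(I2), I2 = (V2 - V1) / Z
    r_p = s2.real + k * P_LOAD                 # residual R^P (injection = -load)
    r_q = s2.imag + k * Q_LOAD                 # residual R^Q
    return np.concatenate([Phi.T @ (w * r_p), Phi.T @ (w * r_q)])

def solve_galerkin(deg, tol=1e-10, max_iter=30, h=1e-6):
    t, w = legendre.leggauss(2 * deg + 2)
    w = w / 2.0
    Phi, k = basis(t, deg), to_k(t)
    m = 2 * (deg + 1)                          # unknowns: coefficients of e_2 and f_2
    c = np.zeros(m)
    c[0] = 1.0                                 # flat start: e_2 = 1, f_2 = 0
    for _ in range(max_iter):
        g = galerkin_equations(c, Phi, w, k)
        if np.max(np.abs(g)) < tol:
            break
        J = np.empty((m, m))
        for j in range(m):                     # central differences, exact for quadratic maps
            dc = np.zeros(m)
            dc[j] = h
            J[:, j] = (galerkin_equations(c + dc, Phi, w, k)
                       - galerkin_equations(c - dc, Phi, w, k)) / (2 * h)
        c = c - np.linalg.solve(J, g)          # Newton-Raphson update
    return c

def reference_v2(k, iters=200):
    """Pointwise benchmark: fixed-point iteration V2 = V1 - Z * conj(S_load / V2)."""
    s_load = k * (P_LOAD + 1j * Q_LOAD)
    v2 = np.ones_like(s_load)
    for _ in range(iters):
        v2 = 1.0 - Z_LINE * np.conj(s_load / v2)
    return v2

deg = 3
c = solve_galerkin(deg)
t_test = np.linspace(-1.0, 1.0, 5)
Phi_test = basis(t_test, deg)
v2_galerkin = Phi_test @ c[:deg + 1] + 1j * (Phi_test @ c[deg + 1:])
print(np.abs(v2_galerkin - reference_v2(to_k(t_test))).max())
```

Once `c` is known, evaluating the voltage for any new load factor is a single matrix-vector product, which is the practical payoff described in Section 4.1.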

Note, however, that the number of cross terms between parameters grows combinatorially with the number of parameters, and each cross effect requires its own term in the polynomial basis. Thus, a major difficulty of the Galerkin method is that the coupled Galerkin equations become very high-dimensional when the number of parameters is large.
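To make this growth concrete, the number of basis functions per state from Section 3.1 can be evaluated for a third-order expansion (\(N_d=3\)); the parameter counts below are chosen only for illustration:

$$N=\binom{N_p+3}{3}:\qquad N_p=2\ \Rightarrow \ N=10,\qquad N_p=10\ \Rightarrow \ N=286,\qquad N_p=50\ \Rightarrow \ N=23426$$

Since each of the M states carries N coefficients, the coupled Galerkin system has \(M\times N\) unknowns, e.g., 286M unknowns for ten parameters.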

4 Numerical simulation

To illustrate the accuracy, effectiveness, and convergence of the proposed method, the 9-bus system shown in Fig. 1 [18] is used. The active power outputs of the generators at bus-2 and bus-3, \(P_{G2}\in [0,2]\) and \(P_{G3}\in [0,1]\), are taken as parameters (bus-1 is the slack bus). The coefficients of the approximate solutions are found by the Newton-Raphson method with an iteration threshold of \(1\times 10^{-8}\). All computations are performed in Wolfram Mathematica.

4.1 Approximate results

Here, the polynomial basis is chosen from Legendre polynomials. Equation (18) gives the approximate solution for \(F_{7\rightarrow 5}\) (the power flow from bus-7 to bus-5) when \(N_d=3\). As shown, the system states can be expressed explicitly in terms of \(P_{G2}\) and \(P_{G3}\).

This is very beneficial when the states must be evaluated quickly for a new set of parameters: the results are obtained directly by substituting the values of \(P_{G2}\) and \(P_{G3}\) into the approximate solutions rather than solving the full load flow equations. Furthermore, the explicit formula provides additional insight into how the states depend on the parameters. For example, it can be seen directly that \(P_{G2}\) has a greater influence than \(P_{G3}\) on \(F_{7\rightarrow 5}\), because the coefficient of \(P_{G2}\) is much greater than any other coefficient, so a change in \(P_{G2}\) causes a larger change in \(F_{7\rightarrow 5}\) than changes in the other parameters.
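As a sketch of how such an explicit expansion might be used, suppose (hypothetically) that a state is stored as a Legendre series in the normalized parameters \(t_2\) and \(t_3\), obtained from \(P_{G2}\in [0,2]\) and \(P_{G3}\in [0,1]\) by the linear map of Section 3.2. NumPy's `legval2d` evaluates the series at new parameter values without solving a load flow, and `legder` differentiates it; its `scl` argument applies the chain-rule factor \(dt_2/dP_{G2}=2/(b-a)\) at each differentiation. The coefficient values are invented for illustration and are not those of (18).

```python
import numpy as np
from numpy.polynomial import legendre

# Hypothetical coefficients C[i, j] of L_i(t2) * L_j(t3), total degree <= 3 (not the paper's values)
C = np.array([[0.760, 0.210, -0.004, 0.0003],
              [0.630, 0.012, 0.0008, 0.0],
              [-0.020, 0.0015, 0.0, 0.0],
              [0.0009, 0.0, 0.0, 0.0]])
A2, B2 = 0.0, 2.0        # range of P_G2
A3, B3 = 0.0, 1.0        # range of P_G3

def to_ref(p, a, b):
    """Linear map from [a, b] to [-1, 1]."""
    return (2.0 * p - a - b) / (b - a)

def evaluate(pg2, pg3, coeffs=C):
    """Evaluate the explicit approximation at new parameter values."""
    return legendre.legval2d(to_ref(pg2, A2, B2), to_ref(pg3, A3, B3), coeffs)

# Second-order sensitivity with respect to P_G2: differentiate twice along axis 0
C_dd = legendre.legder(C, m=2, scl=2.0 / (B2 - A2), axis=0)

def second_sensitivity_pg2(pg2, pg3):
    return legendre.legval2d(to_ref(pg2, A2, B2), to_ref(pg3, A3, B3), C_dd)

print(evaluate(1.2, 0.4), second_sensitivity_pg2(1.2, 0.4))
```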

In addition, sensitivities can be calculated directly as derivatives of the explicit expressions. For example, the second-order sensitivity of \(F_{7\rightarrow 5}\) with respect to \(P_{G2}\) is

4.2 Accuracy

To demonstrate accuracy, the results of a sampling method are taken as the exact solutions for benchmarking: each of \(P_{G2}\) and \(P_{G3}\) is sampled at 21 equally spaced values from its lower bound to its upper bound, and a conventional load flow is solved repeatedly for the samples.

The root mean square error (RMSE) is used to measure accuracy. The RMSEs of e and f at all buses are given in Table 2 for \(N_d\) from 1 to 3. As \(N_d\) increases, the RMSE of every state decreases rapidly. The average RMSE over all states decreases roughly exponentially, from \(4.92\times 10^{-3}\) at \(N_d=1\) to \(5.16\times 10^{-5}\) at \(N_d=3\), indicating that the approximate solutions converge quickly to the exact solutions. The third-order approximation takes only 0.062 s to compute. From a practical point of view, therefore, third-degree polynomials already give very accurate approximations of the load flow solution in the 9-bus system.
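For reference, the RMSE of a state u over S benchmark samples \(\varvec{p}_s\) is taken here in its usual form (the definition is not spelled out in the text, so this is an assumption):

$$\text{RMSE}(u)=\sqrt{\frac{1}{S}\sum _{s=1}^{S}\left(u^*_N(\varvec{p}_s)-u^*(\varvec{p}_s)\right)^2}$$

With the reported averages, the reduction from \(N_d=1\) to \(N_d=3\) is \(4.92\times 10^{-3}/5.16\times 10^{-5}\approx 95\), i.e., roughly a factor of \(\sqrt{95}\approx 9.8\) per additional degree, which is the geometric trend described above.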

4.3 Convergence

In this subsection, the convergence of the coefficients with respect to the polynomial basis is investigated. To simplify the analysis, a single parameter \(P_{G2}\in [0,2]\) is considered, while \(P_{G3}\) is fixed at 0.5. Here, the iteration threshold of the Newton-Raphson method is set to \(1\times 10^{-15}\).

Fig. 2 shows the absolute values of the coefficients of \(e_5\) and \(f_5\) on a semi-log plot for \(N_d=14\). The coefficients decay exponentially, and the first few terms make the major contribution to the approximation, which means the neglected high-order terms have little influence on the approximation.
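The same behaviour can be reproduced on a known function. As a minimal sketch, the hypothetical target \(u(p)=1/(2-p)\) on \([-1,1]\) (a stand-in for a smooth load flow state, with the measure \(dp/2\) assumed) is projected onto orthonormal Legendre functions up to degree 14, matching the \(N_d\) of Fig. 2. For a function that is analytic on the interval, the coefficient magnitudes decay geometrically; a log-linear fit recovers a ratio close to \(1/(2+\sqrt{3})\approx 0.27\), the rate set by the distance of the singularity at \(p=2\) from the interval.

```python
import numpy as np
from numpy.polynomial import legendre

x, w = legendre.leggauss(60)
w = w / 2.0                                    # uniform probability measure dp/2
Phi = legendre.legvander(x, 14) * np.sqrt(2 * np.arange(15) + 1)   # N_d = 14, as in Fig. 2
u = 1.0 / (2.0 - x)                            # hypothetical smooth state u(p)
c = Phi.T @ (w * u)                            # projection coefficients c_k = <u, Phi_k>

# Fit log|c_k| = log A + k log r to estimate the geometric decay ratio r
k = np.arange(1, 13)
slope, intercept = np.polyfit(k, np.log(np.abs(c[1:13])), 1)
ratio = np.exp(slope)
print(ratio)
```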

4.4 Comparison with Taylor expansion method

In our analysis, the Taylor expansion is derived from the differential (sensitivity) equations of the load flow model and provides a local approximation. As shown in Table 2, when \(N_d=3\) the RMSE of \(e_5\) obtained by the Galerkin method is the largest among all states. Thus, the third-order approximation of \(e_5\) is used to compare the Taylor expansion with the Galerkin method, with the Taylor expansion taken at \(P_{G2}=1.0\) and \(P_{G3}=0.5\).

Figures 3 and 4 show the error of \(e_5\) for the Galerkin method and the Taylor expansion method, respectively. The error of the Taylor expansion grows as \(P_{G2}\) and \(P_{G3}\) move farther from the expansion point, while the error of the Galerkin method is distributed more uniformly. Moreover, the largest absolute error of \(e_5\) by the Galerkin method is less than 0.6‰, while that of the Taylor expansion exceeds 1.6‰. When the behaviour over the whole domain matters, a global approximation is therefore preferable to a local one.

4.5 Comparison with data fitting method

Here, the data fitting method uses the least-squares algorithm. To ensure accuracy, the theoretical number of sample points is \((N_d+1)^{N_p}\) according to [19]. Thus, two scenarios are considered: 9 sample points (the optimal number for \(N_d=2\)) and 16 sample points (the optimal number for \(N_d=3\)). The RMSEs of \(e_5\) obtained by the different methods are given in Table 3. All errors of the Galerkin method are smaller than those of the data fitting method, because the Galerkin method provides the optimal approximation. Moreover, in the data fitting method the error does not always decrease as \(N_d\) increases. With 9 sample points, the RMSE increases from \(4.53\times 10^{-4}\) to \(2.35\times 10^{-3}\) when \(N_d\) increases from 2 to 3, which can be attributed to overfitting: 9 samples are not enough to fit the 10 coefficients of a third-order polynomial in two parameters.
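The 9-sample case can be checked by counting. A third-order polynomial in two parameters has \(N=\binom{5}{2}=10\) coefficients, so a least-squares design matrix built from 9 samples has rank at most 9 and the fit is underdetermined. The sketch below assumes a 3 × 3 grid of samples on the normalized parameter square (our choice of layout); on this grid the rank is in fact 8, because \(t^3\) and \(t\) coincide at \(-1,0,1\). The same 9 samples fully determine the 6 coefficients of a second-order fit.

```python
import numpy as np
from numpy.polynomial import legendre

def design_matrix(t2, t3, max_degree):
    """Columns L_i(t2) L_j(t3) for all i + j <= max_degree."""
    V2 = legendre.legvander(t2, max_degree)
    V3 = legendre.legvander(t3, max_degree)
    cols = [V2[:, i] * V3[:, j]
            for i in range(max_degree + 1) for j in range(max_degree + 1 - i)]
    return np.column_stack(cols)

grid = np.array([-1.0, 0.0, 1.0])              # 3 x 3 grid = 9 samples
T2, T3 = np.meshgrid(grid, grid)
A3 = design_matrix(T2.ravel(), T3.ravel(), 3)  # N_d = 3: 10 unknown coefficients
A2 = design_matrix(T2.ravel(), T3.ravel(), 2)  # N_d = 2: 6 unknown coefficients
print(A3.shape, np.linalg.matrix_rank(A3))
print(A2.shape, np.linalg.matrix_rank(A2))
```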

In fact, the accuracy of the traditional data fitting method is closely related to the number of samples and to the complexity of the target function (i.e., the polynomial approximation to be determined). Because \((N_d+1)^{N_p}\) grows very fast with \(N_p\), processing that many samples at once becomes unaffordable when \(N_p\) is large. In practice, only part of the samples is used according to empirical rules, which compromises accuracy. By contrast, the proposed approximation is derived from the orthogonal projection of the governing equations, does not rely on sampling results, and its accuracy is guaranteed to improve as \(N_d\) increases.

4.6 Comparison with interpolation method

Spline interpolation is a widely used polynomial approximation approach in which the interpolant is a special type of piecewise polynomial called a spline. Three scenarios are considered: s1) 9 interpolation points placed at \(\{0\%,50\%,100\%\}\) of each parameter range, i.e., equally spaced from the lower to the upper bound; s2) 9 interpolation points placed at \(\{25\%,50\%,75\%\}\) of each parameter range; s3) the proposed method.

Figures 5 and 6 show the error distribution of \(e_5\) for s1 and s2, respectively. Compared with Fig. 3, the errors under s1 and s2 are much larger than those under s3; the largest absolute errors under s1 and s2 are 0.625‰ and 1.56‰, respectively. The errors at the interpolation points are zero, because interpolation finds a polynomial approximation that matches the sample results exactly at those points. This means that the approximation accuracy depends strongly on the choice of interpolation points, whereas the proposed method does not rely on sampling results. In terms of global error, the RMSEs of \(e_5\) under s1 and s2 are \(2.47467\times 10^{-4}\) and \(2.81343\times 10^{-4}\), respectively, i.e., 3.72 and 4.23 times that under s3.

4.7 Comparison with collocation method

For the collocation method, the choice of collocation points affects the approximation accuracy. Two selection strategies are considered here: the Gaussian quadrature rule [20] and the Chebychev rule [21].

Figures 7 and 8 show the error distribution of \(e_5\) under the Gaussian quadrature and Chebychev rules, respectively. Compared with Fig. 3, the errors of the collocation method are much larger than those of the proposed method; the largest absolute errors under the Gaussian quadrature and Chebychev rules are 1.29‰ and 1.31‰, respectively. In terms of global error, the RMSEs of \(e_5\) under the two rules are \(1.809\times 10^{-4}\) and \(1.777\times 10^{-4}\), respectively, i.e., 2.87 and 2.82 times that of the proposed method.

5 Applications to the region boundary problems

In many problems, region boundaries are useful for distinguishing domains with different properties. For example, static voltage stability region boundaries describe the critical condition of static voltage stability [22]. In this section, two examples show how the Galerkin method can be applied to region boundary problems.

5.1 Example 1

The first example is the second-order condition boundary of convexity in power systems. It is currently hard to decide whether the hypersurface of the extended system states determined by the load flow equations is convex. One possible way is to use sampling methods, investigating the convexity at each sample point independently. The Galerkin method introduced in this paper provides a new approach to investigating convexity.

Here, the 9-bus system of Section 4 is used and the convexity of the netloss is investigated. To exhibit nonconvexity, the data of two transmission lines are modified as listed in Table 4.

From [23], if \(P_{loss}\) is convex on \({\text{dom}}(P_{loss})\), a necessary condition is that its Hessian matrix \(\nabla ^2 P_{loss}\) is positive semidefinite, i.e., all its eigenvalues are nonnegative. This is also called the second-order condition of convexity. The second-order condition boundary separates the domain where the second-order condition holds from the domain where it does not, so \(\nabla ^2 P_{loss}\) has a zero eigenvalue at the boundary. Therefore, we have:

…where \(\varvec{\xi }=\{\xi _1,\xi _2\}^\text{T}\) is the right unit eigenvector of \(\nabla ^2 P_{loss}\). In this case, the dimension of (20) is 3.
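From the description above (a zero eigenvalue of the Hessian with unit eigenvector \(\varvec{\xi }\), and a system of dimension 3), one system consistent with (20), written in our notation with the Hessian taken with respect to \((P_{G2},P_{G3})\), is

$$\nabla ^2 P_{loss}(P_{G2},P_{G3})\,\varvec{\xi }=\varvec{0},\qquad \varvec{\xi }^{\text{T}}\varvec{\xi }-1=0$$

The matrix equation gives two scalar equations and the normalization gives the third; the unknowns are \(\xi _1\), \(\xi _2\), and the boundary value of \(P_{G2}\) for a given \(P_{G3}\), each of which is expanded with N coefficients.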

By applying the basis representation, we have \(\xi _1=\sum _{i=1}^N\hat{c}_i^{(\xi _1)}\varPhi _i(P_{G3})\) and \(\xi _2=\sum _{i=1}^N\hat{c}_i^{(\xi _2)}\varPhi _i(P_{G3})\), where \(\hat{c}_i^{(\xi _1)}\) and \(\hat{c}_i^{(\xi _2)}\) are the unknown coefficients.

The second-order condition boundary of \(P_{loss}\) can be described as \({\mathcal {B}}(P_{G2},P_{G3})=0\), from which \(P_{G2}\) can be approximated in terms of \(P_{G3}\):

where \(\hat{c}_{i}^{(P_{G2})}\) is the coefficient to be determined. Thus, the total number of unknown coefficients in (20) and (21) is 3N.

Substituting (21) into (20) results in the residual \(\varvec{R}^{cvex}\), which involves only the unknown coefficients and \(P_{G3}\). The Galerkin equations are then formed by projecting \(\varvec{R}^{cvex}\) onto each basis function in \(\{\varPhi _i\}\). The dimension of the Galerkin equations is 3N, equal to the number of unknown coefficients. The coefficients are found by solving the Galerkin equations, and the boundary is then obtained by substituting them into (21).

The hatched area in Fig. 9 shows the region where \(P_{loss}\) satisfies the second-order condition; there, the graph of \(P_{loss}\) has nonnegative (upward) curvature.

5.2 Example 2

The second example uses a practical 1648-bus system [24] to investigate how different load levels affect the voltage control types of buses. The load factors of area-1 and area-2 are denoted by \(k_1\) and \(k_2\), respectively; the original loads in each area are multiplied by the corresponding load factor. For this large-scale system, computing the third-order approximation takes about 1.354 s.

A bus switches between PV and PQ types when its reactive power injection reaches its limit. Bus-18, located at the boundary between load area-1 and area-2, is selected to investigate the impact of load variation. To capture the impact on the reactive power reserve of bus-18, the extended equation \(L=0\) is:

where \(\bar{Q}_{18}\) is the maximum reactive power injection at bus-18.
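The switching logic can be sketched with a hypothetical surrogate `q18_required(k1, k2)` for the reactive power bus-18 must inject to hold its voltage; in the actual study this value comes from solving the 1648-bus load flow. The sketch further assumes that the extended equation takes the form \(L=Q_{18}(k_1,k_2)-\bar{Q}_{18}\), and the limit \(\bar{Q}_{18}=1.2\) p.u. is an illustrative value only.

```python
import numpy as np

Q18_MAX = 1.2  # hypothetical maximum reactive injection Qbar_18, p.u.


def q18_required(k1, k2):
    """Hypothetical smooth surrogate for the reactive power bus-18 must inject
    to hold its voltage setpoint at load factors (k1, k2); NOT the 1648-bus model."""
    return 0.3 + 0.4 * k1**2 + 0.3 * k2**2 + 0.2 * k1 * k2


def extended_equation(k1, k2):
    """Assumed form L = Q_18 - Qbar_18: <= 0 in the PV region, 0 on the switch boundary."""
    return q18_required(k1, k2) - Q18_MAX


def bus_type(k1, k2):
    """PV while the required injection is within the limit; otherwise Q is held
    at Qbar_18, the voltage is no longer regulated, and the bus behaves as PQ."""
    return "PV" if extended_equation(k1, k2) <= 0.0 else "PQ"


# Map of bus-18 types over a grid of load factors (cf. the PV/PQ areas in Fig. 10)
k1_grid, k2_grid = np.meshgrid(np.linspace(0.6, 1.4, 5), np.linspace(0.6, 1.4, 5))
is_pv = extended_equation(k1_grid, k2_grid) <= 0.0
```

Classifying a grid like this is again a sampling approach; the boundary between the two regions is what the Galerkin formulation approximates directly.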

Let the switching condition be \({\mathcal {S}}(k_1,k_2)=0\). Then \(k_{1}\) can be expressed in terms of \(k_2\) as:

where \(\hat{c}_{i}^{(k_{1})}\) is the coefficient to be determined.

Substituting (23) into the load flow equations and (22) yields a residual in terms of \(k_2\), denoted by \(\varvec{R}^{QV}\). Projecting \(\varvec{R}^{QV}\) onto each basis function in \(\{\varPhi _i\}\) gives the Galerkin equations, whose solution provides the unknown coefficients.
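Using the same kind of hypothetical surrogate in place of the load flow equations, the switching boundary \(k_1(k_2)\) can be approximated by Galerkin projection of the scalar residual \({\mathcal {S}}(k_1,k_2)=Q_{18}(k_1,k_2)-\bar{Q}_{18}\) (an assumed form) onto N Legendre polynomials in the mapped \(k_2\) variable. The \(k_2\) range [0.6, 1.4] is also an assumption, and the pointwise `brentq` solves at the end play the role of a sampling method for comparison.

```python
import numpy as np
from numpy.polynomial.legendre import leggauss, legvander
from scipy.optimize import brentq, fsolve

Q18_MAX = 1.2                    # hypothetical Qbar_18, p.u.
K2_LO, K2_HI = 0.6, 1.4          # hypothetical range of the area-2 load factor


def q18_required(k1, k2):
    """Hypothetical surrogate for bus-18 reactive demand; NOT the 1648-bus model."""
    return 0.3 + 0.4 * k1**2 + 0.3 * k2**2 + 0.2 * k1 * k2


def to_k2(t):
    """Affine map from the Legendre domain [-1, 1] onto [K2_LO, K2_HI]."""
    return 0.5 * (K2_HI + K2_LO) + 0.5 * (K2_HI - K2_LO) * t


N = 8                            # number of basis functions for k1(k2)
t, w = leggauss(2 * N + 2)       # quadrature exact for the projected residual
Phi = legvander(t, N - 1)        # Phi[q, i] = P_i(t_q)


def galerkin_equations(c):
    """Project S(k1(k2), k2) onto each basis function -> N equations."""
    k1 = Phi @ c                                  # k1(k2) at the nodes
    r = q18_required(k1, to_k2(t)) - Q18_MAX      # switching-condition residual
    return Phi.T @ (w * r)


c0 = np.zeros(N)
c0[0] = 1.0                      # constant initial guess k1 = 1
c = fsolve(galerkin_equations, c0)


def k1_boundary(k2):
    """Evaluate the polynomial boundary k1(k2) for k2 in [K2_LO, K2_HI]."""
    t_k2 = (2.0 * np.asarray(k2) - (K2_HI + K2_LO)) / (K2_HI - K2_LO)
    return legvander(t_k2, N - 1) @ c


# Sampling-style check: solve S(k1, k2) = 0 for k1 independently at a few k2 values
for k2 in (0.6, 1.0, 1.4):
    k1_ref = brentq(lambda k1, k2=k2: q18_required(k1, k2) - Q18_MAX, 0.0, 3.0)
    print(f"k2={k2:.1f}  Galerkin k1={float(k1_boundary(k2)):.6f}  pointwise k1={k1_ref:.6f}")
```

For this surrogate the boundary also has the closed form \(k_1=(-0.2k_2+\sqrt{1.44-0.44k_2^2})/0.8\), which gives an independent accuracy check.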

Figure 10 shows the PV and PQ regions of bus-18 for different \(k_1\) and \(k_2\). In the blue region, the reactive power capacity is sufficient to hold the voltage at its specified value. As the load factors increase, bus-18 switches to PQ type upon crossing the black line separating the two regions.

6 Conclusion

This paper proposes a new method that provides a globally optimal polynomial approximation using an orthogonal polynomial basis and the Galerkin method. Numerical results show that the method achieves better accuracy and stability than Taylor expansion and data fitting. One important application is obtaining the boundaries of regions that satisfy given critical conditions, and two additional case studies demonstrate its effectiveness.

The main implementation difficulty is that, when the number of parameters is very large, the resulting high-dimensional Galerkin equations are hard to solve. Possible remedies include clustering similar parameters into one equivalent parameter and partitioning the network so that only a few parameters are analysed at a time. Our future work will be dedicated to this topic.

References

Giraldi L, Litvinenko A, Liu D et al (2014) To be or not to be intrusive? The solution of parametric and stochastic equations—the Plain Vanilla Galerkin case. SIAM J Sci Comput 36(6):2720–2744

Mehta D, Molzahn DK, Turitsyn K (2016) Recent advances in computational methods for the power flow equations. In: Proceedings of 2016 American control conference, Boston, USA, 6–8 July 2016, 13 pp

An T, Han C, Wu Y et al (2017) HVDC grid test models for different application scenarios and load flow studies. J Mod Power Syst Clean Energy 5(2):262–274

Jazayeri P, Rosehart W, Westwick DT (2007) A multistage algorithm for identification of nonlinear aggregate power system loads. IEEE Trans Power Syst 22(3):1072–1079

Tan T, Chen W, Wang D et al (2012) Wind power prediction based on wind farm output power characteristics using polynomial fitting. In: Proceedings of Asia-Pacific power and energy engineering conference, Shanghai, China, 27–29 March 2012, 4 pp

Wang K, Li G, Jiang X (2013) Applying probabilistic collocation method to power flow analysis in networks with wind farms. In: Proceedings of IEEE PES general meeting, Vancouver, Canada, 21–25 July 2013, 5 pp

Dong H, Jin M (2007) Effect of uncertainties in parameters of load model on dynamic stability based on probabilistic collocation method. In: Proceedings of IEEE Lausanne power tech, Lausanne, Switzerland, 1–5 July 2007, 5 pp

Borkowska B (1974) Probabilistic load flow. IEEE Trans Power Appar Syst PAS-93(3):752–759

Zhang P, Lee S (2004) Probabilistic load flow computation using the method of combined cumulants and Gram–Charlier expansion. IEEE Trans Power Syst 19(1):676–682

Qiu J, Dong Z, Zhao J et al (2015) A low-carbon oriented probabilistic approach for transmission expansion planning. J Mod Power Syst Clean Energy 3(1):14–23

Lauby M (1988) Evaluation of a local DC load flow screening method for branch contingency selection of overloads. IEEE Trans Power Syst 3(3):923–928

Miao L, Fang J, Wen J et al (2013) Transient stability risk assessment of power systems incorporating wind farms. J Mod Power Syst Clean Energy 1(2):134–141

Bakirtzis A, Biskas P (2002) Decentralised DC load flow and applications to transmission management. IEE Proc Gener Transm Distrib 149(5):600–606

Yang S, Liu F, Zhang D et al (2011) Polynomial approximation of the damping-ratio-based small-signal security region boundaries of power systems. In: Proceedings of IEEE PES general meeting, Detroit, USA, 24–29 July 2011, 8 pp

Kreyszig E (1978) Introductory functional analysis with applications. Wiley, New York

Cox DA, Little J, O’Shea D (1997) Ideals, varieties, and algorithms: an introduction to computational algebraic geometry and commutative algebra, 2nd edn. Springer, New York

Xiu D, Karniadakis GE (2002) The Wiener–Askey polynomial chaos for stochastic differential equations. SIAM J Sci Comput 24(2):619–644

Anderson PM, Fouad AA (2008) Power system control and stability. Wiley, New York

Sudret B (2008) Global sensitivity analysis using polynomial chaos expansions. Reliab Eng Syst Saf 93(7):964–979

Yin H, Zivanovic R (2017) Using probabilistic collocation method for neighbouring wind farms modelling and power flow computation of South Australia grid. IET Gener Transm Distrib 11(14):3568–3575

Gao F, Hu J, Guo Q et al (2017) Distributed combined cooling heating and power optimization in a microgrid based on collocation method. In: Proceedings of China international electrical and energy conference, Beijing, China, 25–27 October 2017, 6 pp

Qiu Y, Wu H, Zhou Y et al (2017) Global parametric polynomial approximation of static voltage stability region boundaries. IEEE Trans Power Syst 32(3):2362–2371

Boyd S, Vandenberghe L (2004) Convex optimization. Cambridge University Press, Cambridge

Siemens Power Technologies International (2018) The 1648 bus system provided by PSS/E software. IEEE Dataport. https://doi.org/10.21227/3j5k-6191

Acknowledgements

This work was supported by the National Natural Science Foundation of China (No. 51777184).

Additional information

CrossCheck date: 12 September 2018

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

ZHOU, Y., WU, H., GU, C. et al. Global optimal polynomial approximation for parametric problems in power systems. J. Mod. Power Syst. Clean Energy 7, 500–511 (2019). https://doi.org/10.1007/s40565-018-0469-2

DOI: https://doi.org/10.1007/s40565-018-0469-2