Abstract

This paper deals with linear models for a time-dependent response and explanatory variables in a high-dimensional setting. We account for the time dependency in the data by explicitly adding autoregressive terms to the response variable in the model together with an autoregressive process for the residuals. We present a penalized likelihood approach for parameter estimation and discuss its theoretical properties. Finally, we show the successful application of the proposed methodology on simulated data and on two real applications, where we model air pollution and stock market indices, respectively. We provide an implementation of the method in the R package DREGAR, freely available on CRAN, http://CRAN.R-project.org/package=DREGAR.


1 Introduction

This paper deals with fitting a time series-regression model using \(l_1\) regularized inference. In the context of linear models, \(l_1\) penalized approaches have received great interest in recent years as they allow performing variable selection and parameter estimation simultaneously for any data, including high-dimensional datasets, where classical approaches for parameter estimation break down, e.g. [9, 13, 19, 21, 25]. In [25], it is shown that a model where penalties are adapted to each individual regressor enjoys oracle properties. Most of the advances in regularized regression models have been for the case of independent and identically distributed data. A recent line of research has concentrated on regularized models in time dependent frameworks. Amongst these, [22] showed the successful application of \(l_1\) penalised inference in the context of autocorrelated residuals for a fixed order, by proposing the model

and studied its asymptotic properties. We refer later to this model as REGAR(q). Nardi and Rinaldo [15] studied the theoretical properties of a regularized autoregressive process on \(Y_t\) for both low and high dimensional cases, whereas [1, 14, 20] studied the \(l_1\) estimation of vector autoregressive models. Recently, [6] proposed an alternative to \(l_1\) penalisation for vector autoregressive (VAR) models in the high-dimensional framework. In [1, 6, 15, 20], no exogenous variables are included in the model. In contrast to this, [12] studied the asymptotic properties of adaptive lasso in high dimensional time series models when the number of exogenous variables increases as a function of the number of observations and [17] considered the case of VAR models with exogenous variables. While both models cover a lagged regression in the presence of exogenous variables, they do not consider autocorrelated residuals. Recently, [23] proposed an extension of the model in [22] by adding a moving average term as follows

We refer later to this model as REGARMA(p,q). Similar to [22], they proved the consistency of the model in low-dimensional cases. Despite the generality of this model, considering an ARMA process for the errors results in a complex model with a challenging implementation.

In this paper, we propose to account for the time dependency in the data by explicitly adding autoregressive terms of the response variable in the linear model, as in [15], as well as an autocorrelated process for residuals, as in [22], in order to capture complex dynamics parsimoniously. In particular, given fixed orders p and q, we propose the model

We name the resulting model DREGAR(p,q). The model is essentially a double autoregressive model with unbalanced weights for response and explanatory variables. To show this, we rewrite the model in (1), the REGARMA model of [23] and the REGAR model of [22] using the backward shift operator L:

where \(L(\cdot )\) represents a stationary polynomial of L and L(\(\theta \))L(\(\phi \)) represents a special case of an \(AR(p+q)\) process. From these equations, one can see how REGAR and REGARMA impose the same autoregressive structure on both response and covariates, whereas DREGAR assumes different structures on each of them. We found this aspect to be particularly advantageous in a number of analyses of real datasets, where DREGAR fits the data better than competing models, with two of these examples reported in Sect. 6. In contrast to REGAR and DREGAR, REGARMA contains a moving average process on the errors. The MA component, however, induces a higher level of complexity in the parameter estimation and in the proofs of the theoretical results.
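As a hedged sketch of how such operator forms arise, assume the DREGAR(p,q) model has the form used in the simulation of Sect. 5, namely \(y_t=x'_t\beta +\sum _{i=1}^{p}\phi _iy_{t-i}+\epsilon _t\) with \(\epsilon _t=\sum _{j=1}^{q}\theta _j\epsilon _{t-j}+e_t\), and write \(L(\phi )=1-\sum _{i=1}^{p}\phi _iL^i\) and \(L(\theta )=1-\sum _{j=1}^{q}\theta _jL^j\). This notation is an assumption based on the stationarity conditions of Sect. 2. The REGARMA form is omitted because its moving average notation cannot be recovered from the text.

$$\begin{aligned} \text {DREGAR:}\quad & \epsilon _t = L(\phi )y_t - x'_t\beta ,\quad L(\theta )\epsilon _t = e_t \;\Longrightarrow \; L(\theta )L(\phi )\,y_t = L(\theta )\,x'_t\beta + e_t,\\ \text {REGAR:}\quad & \epsilon _t = y_t - x'_t\beta ,\quad L(\theta )\epsilon _t = e_t \;\Longrightarrow \; L(\theta )\,y_t = L(\theta )\,x'_t\beta + e_t. \end{aligned}$$

In the REGAR form the same polynomial \(L(\theta )\) filters the response and the covariates, whereas in the DREGAR form the response is filtered by the product \(L(\theta )L(\phi )\) and the covariates by \(L(\theta )\) only.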

All three models belong to the general framework of ARMAX [11], which is common in the system identification and signal processing literature [16] where inference is typically performed in the low-dimensional case. The focus of this paper is on statistical inference for the DREGAR model, in particular for the high-dimensional case where maximum likelihood estimation fails. Specifically, we devise a penalised likelihood approach for parameter estimation, in the same spirit as the REGAR and REGARMA contributions. In Sect. 2, we formulate the model and present an \(l_1\) penalized likelihood approach for the estimation of the parameters. In Sect. 3, we prove the asymptotic properties of the model DREGAR(p,0). In Sect. 4, we discuss the implementation of DREGAR. A simulation study, given in Sect. 5, accompanies the theoretical results. In Sect. 6 we apply the model to two real datasets, one on air pollution and another on the stock market, and compare the fit of the model with REGARMA and REGAR models. Finally, we draw some conclusions in Sect. 7.

2 \(L_1\) penalised estimation

The general form of DREGAR consists of a lagged response variable, covariates and autocorrelated residuals. Consider the following Gaussian DREGAR model of order p and q,

where \(x'_t\) is the tth row of the design matrix containing r predictors, \(X'_{T\times r}\); \(\{y_t\}\) and \(\{\epsilon _t\}\) follow stationary time series processes, that is, all roots of the polynomials \(1-\sum _{i=1}^{p}\phi _i L^i\) and \(1-\sum _{i=1}^{q}\theta _i L^i\) are unequal and outside the unit circle; \(e_t,\,t=1,\ldots ,T\) are independent and identically normally distributed noise terms with mean zero and finite fourth moments, and \(p+q<T\). Moreover, we assume that the error and explanatory variables are mutually independent for all time points. To remove the constants from the model we follow the literature on regularized models, e.g. [7, 21], and standardize the covariates to have zero mean and unit variance and the response to have zero mean.
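For reference, a form of the Gaussian DREGAR(p,q) model that is consistent with the simulation design of Sect. 5 and with the matrix representation of Sect. 2.1 is sketched below. The summation limits and the layout are assumptions.

$$\begin{aligned} y_t&= x'_t\beta + \sum _{i=1}^{p}\phi _i y_{t-i} + \epsilon _t,\\ \epsilon _t&= \sum _{j=1}^{q}\theta _j \epsilon _{t-j} + e_t, \qquad e_t \sim N(0,\sigma ^2). \end{aligned}$$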

Given the first \(T_\circ =p+q\) observations, maximizing the \(l_1\) penalized conditional likelihood of the model is equivalent to minimizing

where \(\lambda ,\gamma ,\tau \) are tuning parameters and \(\varTheta =(\beta ,\phi ,\theta )\) is the vector of unknown parameters. Following the literature, and given the superior properties of adaptive lasso [25], we also propose an adaptive version of the likelihood

where \(\lambda ^*_i,\gamma ^*_j,\tau ^*_k, i=1,2,\ldots , r; j=1,2,\ldots , p; k=1,2,\ldots , q\) are tuning parameters.
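A hedged sketch of the two objective functions follows. It assumes that the conditional Gaussian likelihood reduces to a residual sum of squares over \(t=T_\circ +1,\ldots ,T\), with \(n=T-T_\circ \), and that the penalties are scaled by n. The scaling is inferred from the rate conditions of Sect. 3 (for example \(\lambda _n\sqrt{n}\rightarrow \lambda _\circ \)) and is not stated in this section.

$$\begin{aligned} Q_n(\varTheta )&= \sum _{t=T_\circ +1}^{T}\Big (y_t - x'_t\beta - \sum _{i=1}^{p}\phi _i y_{t-i} - \sum _{j=1}^{q}\theta _j\epsilon _{t-j}\Big )^2 + n\lambda \sum _{i=1}^{r}|\beta _i| + n\gamma \sum _{j=1}^{p}|\phi _j| + n\tau \sum _{k=1}^{q}|\theta _k|,\\ Q^*_n(\varTheta )&= \sum _{t=T_\circ +1}^{T}\Big (y_t - x'_t\beta - \sum _{i=1}^{p}\phi _i y_{t-i} - \sum _{j=1}^{q}\theta _j\epsilon _{t-j}\Big )^2 + n\sum _{i=1}^{r}\lambda ^*_i|\beta _i| + n\sum _{j=1}^{p}\gamma ^*_j|\phi _j| + n\sum _{k=1}^{q}\tau ^*_k|\theta _k|. \end{aligned}$$

The only difference between the two is that the adaptive version attaches a separate tuning parameter to each coefficient.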

2.1 Matrix representation of the model

For convenience, we write the model in matrix representation. Let \(H'=(H_{(p)},H_{(q)},X')\) be an \(n\times (p+q+r)\) matrix including lags of response (\(H_{(p)}\)), residuals (\(H_{(q)}\)), and explanatory variables (\(X'\)). Let \(\varTheta =(\phi ,\theta ,\beta )'\) denote the vector of corresponding parameters, \(e'=(e_{T_\circ +1},e_{T_\circ +2}, \ldots ,e_T)\) be the vector of errors, \(T_\circ =p+q\) and \(n=T-T_\circ \), as previously defined. Then, in matrix form, the model can be written as \(Y=H'\varTheta +e\) and the \(l_1\) penalized conditional likelihood given the first \(T_\circ \) observations is equivalent to

where \(L(\varTheta )=e'e, \lambda '=\{\lambda \}_{1 \times r}, \gamma '=\{\gamma \}_{1 \times p}, \tau '=\{\tau \}_{1 \times q}\). Similarly, the adaptive form of the model is given by

where the parameters are given by \(\lambda ^{*'} =(\lambda ^*_1,\lambda ^*_2, \ldots , \lambda ^*_{r} )\), \(\gamma ^{*'} =(\gamma ^*_1,\gamma ^*_2, \ldots , \gamma ^*_p )\), \(\tau ^{*'} =(\tau ^*_1,\tau ^*_2, \ldots , \tau ^*_q )\), \(\varTheta =(\beta ,\phi ,\theta )'\).
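The matrix \(H'=(H_{(p)},H_{(q)},X')\) of this section can be assembled as in the following minimal sketch. The function name `build_design` and the plain-list data layout are illustrative assumptions, and the residual series `eps` is taken as given (in practice it has to be estimated).

```python
def build_design(y, x, eps, p, q):
    """Return (Y, H) for t = T0+1, ..., T with T0 = p + q.

    Each row of H is (y_{t-1}, ..., y_{t-p}, eps_{t-1}, ..., eps_{t-q}, x_t),
    so the columns line up with Theta = (phi, theta, beta)'.
    """
    T = len(y)
    if len(x) != T or len(eps) != T:
        raise ValueError("y, x and eps must have the same length")
    t0 = p + q
    Y, H = [], []
    for t in range(t0, T):  # 0-based index t is time point t + 1
        lag_y = [y[t - i] for i in range(1, p + 1)]
        lag_eps = [eps[t - j] for j in range(1, q + 1)]
        H.append(lag_y + lag_eps + list(x[t]))
        Y.append(y[t])
    return Y, H
```

The result has \(n=T-T_\circ \) rows and \(p+q+r\) columns, matching the dimensions given above.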

3 Theoretical properties of the model

In order to study the theoretical properties of DREGAR and adaptive-DREGAR, we define the true coefficients by \(\varTheta ^\circ =(\beta ^{\circ },\phi ^{\circ },\theta ^{\circ })'\) and assume that some of the coefficients are zero. The indices of non-zero coefficients in each group of coefficients, \(\beta ,\phi \) and \(\theta \), are denoted by \(s_1,s_2\) and \(s_3\) respectively, whereas \(s^c_1,s^c_2,s^c_3 \) are the complementary sets and contain the indices of zero coefficients. We denote the non-zero true coefficients by \(\beta ^\circ _{s_1},\phi ^\circ _{s_2},\theta ^\circ _{s_3}\) and their corresponding DREGAR estimates by \(\hat{\beta }_{s_1},\hat{\phi }_{s_2},\hat{\theta }_{s_3}\). Similarly, adaptive-DREGAR estimates are denoted by \(\hat{\beta }^*_{s_1},\hat{\phi }^*_{s_2},\hat{\theta }^*_{s_3}\). Finally, different combinations of model parameters are going to be used, with obvious meaning, in particular \(\varTheta _1^\circ =(\beta ^{\circ }_{s_1},\phi ^{\circ }_{s_2} ,\theta ^{\circ }_{s_3})'\), \(\varTheta _2^\circ =(\beta ^{\circ }_{s^c_1},\phi ^{\circ }_{s^c_2} ,\theta ^{\circ }_{s^c_3})'\), \(\hat{\varTheta }_1=(\hat{\beta }_{s_1},\hat{\phi }{_{s_2}},\hat{\theta }_{s_3})'\), \(\hat{\varTheta }_2=(\hat{\beta }_{s^c_1},\hat{\phi }_{s^c_2},\hat{\theta }_{s^c_3})'\), \( \hat{\varTheta }_1^*=(\hat{\beta }^{*}_{s_1},\hat{\phi }^{*}_{s_2},\hat{\theta }^{*}_{s_3})'\), \(\hat{\varTheta }_2^*=(\hat{\beta }^{*}_{s^c_1},\hat{\phi }^{*}_{s^c_2},\hat{\theta }^{*}_{s^c_3})'\).

3.1 Assumptions

To prove the theoretical properties of the estimators, in line with the literature, we make use of the following assumptions:

(a) The response variable is assumed to be stationary and ergodic with finite second order moment. Further, we assume that the two polynomials \(1-\sum _{i=1}^{p}\phi _iL^i\) and \(1-\sum _{i=1}^{q}\theta _i L^i\) have all the roots unequal and outside the unit circle.

(b) Covariates are assumed to be mutually independent of each other and of the error term. Additionally, we assume that \(x_{.s},s=1,\ldots ,r\) are generated from stationary and ergodic processes.

(c) \(e_t\)s are i.i.d Gaussian random variables with finite fourth moments.

(d) \(\frac{1}{n}X'X \overset{a.s}{\rightarrow } \mathbb {E}(X'X)<\infty \) and \(\max _{1 \le i \le r} x_ix'_i<\infty \).

The first three assumptions guarantee that the mean and variance of the whole system remain unchanged over time. The last assumption guarantees the existence and convergence of the sample moments.

3.2 Theoretical properties of \(l_1\) penalized DREGAR(p,0)

In this section we focus on the theoretical properties of \(l_1\) penalized DREGAR estimators. In particular, we concentrate on the theoretical properties of DREGAR(p,0) as we can prove that there is asymptotically no bias in this model. This model differs from REGAR(p) [22] as it imposes an autoregressive process on the response whereas REGAR(p) considers the case of autocorrelated residuals (i.e. similar to a DREGAR(0,q) model). In the next two sections, we distinguish the cases of DREGAR(p,0) and adaptive-DREGAR(p,0), respectively.

3.3 Asymptotic properties of DREGAR(p,0)

Theorem 1

Under assumptions \([\text {a}{-}\text {d}]\) and assuming \(\lambda _n\sqrt{n} \rightarrow \lambda _\circ ,\) \(\gamma _n \sqrt{n} \rightarrow \gamma _\circ ,\) and \(\lambda _\circ ,\gamma _\circ \ge 0,\) it follows that \(\sqrt{n}(\hat{\varTheta }-\varTheta ^\circ ) \overset{d}{\rightarrow } \arg \min {k(\delta )} \) where

and \(\delta =(u',v') \text { is a vector of parameters in }\) \(\mathbb {R}^{(r+p)},\) \(W \sim \,\mathrm{{MVN}}\,(O,\sigma ^2U_B)\) and \(U_B=\mathbb {C}ov(X,H_{(p)})\).

Proof

Let

Note that \(k_n\) reaches the minimum at \(\sqrt{n}(\hat{\varTheta }-\varTheta ^\circ )\). From (2),

The last two terms have limits:

As for the first term:

Setting \(A=(X',H_{(p)})\) and \(e=y -H_{(p)}\phi ^\circ -X'\beta ^\circ \),

which is equivalent to

From left to right, we prove that the first term in (7) is bounded and the next two terms follow (asymptotically) normal distributions:

Similar calculations to [5] show that (8) tends to \(\delta 'U_B\delta \) where \(U_B\) is the covariance matrix of \((X',H_{(p)})\), which is bounded (O(1)). Defining \(S_n\) as a function of n,

and using assumptions \([\text {a}{-}\text {d}]\) and the central limit theorem for martingales result in

where \(\delta =(u',v')\) and \(W\sim \,\mathrm{{MVN}}\,(O,\sigma ^{2} U_B)\). Then

Substituting all results in Eq. (5),

Up to now, we have proved \(k_n(\delta )\overset{d}{\rightarrow }k(\delta )\). To show that \(\arg \min k_n(\delta )=\sqrt{n}(\hat{\varTheta }-\varTheta ^\circ )\overset{d}{\rightarrow }\arg \min k(\delta )\) it is enough to prove that \(\arg \min \{k_n(\delta )\}= O_p(1)\) [8, 9]. This follows from

where \(\epsilon _\circ >0\) is a vector of positive constants. The fourth term in \(k^*_n(\delta )\), for example, comes from the fact that \(\forall \epsilon _\circ >0,\exists N, \text {\textit{if}}\, n\ge N,|\lambda _\circ -\sqrt{n}\lambda _n|<\epsilon _\circ \). Then, \(\sqrt{n}\lambda _n<\lambda _\circ +\epsilon _\circ \). In addition, \(k_n(0)=k^*_n(0)\) and \(f_n(\delta )=o_p(1)\). As a result \(\arg \min \{k^*_n(\delta )\}=O_p(1)\) and \(\arg \min \{k_n(\delta )\}=O_p(1)\). The proof of the theorem is completed. \(\square \)

This theorem shows that the DREGAR estimator has the Knight and Fu [9] asymptotic property and it implies that the tuning parameters in \(Q_n(\varTheta )\) do not shrink to zero at a speed faster than \(n^{-1/2}\). The proof of Theorem 1 requires the errors to be independent and identically distributed but does not use a specific distributional form, since the central limit theorem for martingales guarantees the convergence to the normal distribution.

As shown in [9], minimizing the \(l_1\) penalized likelihood in the linear model leads to unavoidable bias in the estimates of the non-zero parameters. In the following result, we show this also in the context of the DREGAR model.

Theorem 2

Taking a special case where \(\beta ^\circ _i>0, \quad 1 \le (\forall i\in \mathbb {N}) \le r\) and \(\phi ^\circ _{j_2}=0\) for \(1 \le {j_1 }\le q,\, 1\le j_2 \le p,\,j_1,\,j_2 \in \mathbb {N},\) assuming that there are enough observations and that the minimizer of \(k(\delta )\) correctly identifies the coefficients, that is, \(u \ne 0\) and \(v=0,\) then, \( k(\delta )\) must satisfy

where \(U_{B_{1:r}}\) is the first r rows of \(U_B\) corresponding to the r covariates. From the final equation, DREGAR(p,0) suffers an asymptotic bias, provided the tuning parameter is positive. In other words, lasso regularization of DREGAR(p,0) is not asymptotically consistent. In the next section we discuss the adaptive-DREGAR(p,0) where a fixed level penalty term is replaced by a weighted (adaptive) one. We show that under certain conditions adaptive-DREGAR(p,0) is consistent and enjoys the oracle property.

3.3.1 Adaptive DREGAR(p,0) model

Recall from Eq. (3) that parameter estimation in adaptive-DREGAR(p,q) involves the minimization of

where \(\lambda ^*_i,\gamma ^*_j,\tau ^*_k\) are tuning parameters and \(\varTheta =(\beta ,\phi ,\theta )'\) is the parameter vector.

To prove the asymptotic property of adaptive-DREGAR(p,0) we follow [9, 22] and define,

where \(a_n\) and \(b_n\) are maximum and minimum penalties for significant and insignificant coefficients respectively.

Theorem 3

Let \(a_n = o(1)\) as \(n \rightarrow \infty \). Then under assumptions \([\text {a}{-}\text {d}]\) there is a local minimiser \(\hat{\varTheta }^*\) of \(Q_n^*(\varTheta )\) such that

Proof

Let \(\alpha _n=n^{-1/2}+a_n\), and \(\{\varTheta ^\circ +\alpha _n\delta :\Vert \delta \Vert \le d,\delta =(u,v)'\}\) be a ball around \(\varTheta ^\circ \). Then for \(\Vert \delta \Vert =d\) we have

where

The last equation holds because of the decreasing speed of \(\alpha _n\). On the other hand, similar calculations to Theorem 1 result in

Because (11) dominates (10), then for any given \(\eta >0\), there is a large enough constant d such that

This result shows that with probability at least \(1-\eta \), there is a local minimiser in the ball \(\{\varTheta ^\circ +\alpha _n\delta :\Vert \delta \Vert \le d\}\) and, as a result, a minimiser of \(Q^*_n(\varTheta )\) such that \(\Vert \hat{\varTheta }^*-\varTheta ^\circ \Vert =O_p(\alpha _n)\) (see [22, Lemma 1], [5]).

The proof is completed. \(\square \)

Theorem 3 implies that there exists a \(\sqrt{n}\text {-consistent}\) local minimiser of \(Q_n^*(\varTheta )\) when the tuning parameters for significant variables in DREGAR(p,0) converge to zero at a speed faster than \(n^{-1/2}\).

In the next step we prove that when the tuning parameters associated with insignificant variables in DREGAR(p,0) shrink to zero at a speed slower than \({n^{-1/2}}\), their associated coefficients are estimated as exactly zero with probability tending to one.

Theorem 4

Let \(b_n\sqrt{n} \rightarrow \infty \) and \(\Vert \hat{\varTheta }^* -\varTheta ^\circ \Vert =O_p(n^{-1/2})\) then

Proof

This proof follows from the fact that \(Q_n^*(\hat{\varTheta }^*)\) must satisfy

where \(U_{{i}}\) is the ith row of \(U_B\) and \(i \in s_1^c\). The second term in (12) is a direct result of adding \(\pm X'\beta ,\pm H_{(p)}\phi \) to \(L_n(\hat{\varTheta }^*)\). By the central limit theorem, the first term in Eq. (12), \(\sum _{t}e_tx'_{ti}\), is of order \(O_p(n^{1/2})\) and the second term is \(O_p(n^{1/2})\). Furthermore, both terms are dominated by \(n\lambda ^*_i\) since \(b_n \sqrt{n} \rightarrow \infty \) (expansion of [9, 22]). Then the sign of \(\dfrac{\partial Q^*_n(\hat{\varTheta }^*)}{\partial \beta _i}\) is dominated by the sign of \(\hat{\beta }^*_i\), from which \(\hat{\beta }^*_i=0\) in probability. Analogously, we can show that \(Pr(\hat{\phi }^*_{s_2^c}=0) \rightarrow 1\).

The proof is completed. \(\square \)

Theorem 4 shows that adaptive-DREGAR(p,0) is capable of producing sparse solutions. Theorems 3 and 4 indicate that a \(\sqrt{n}\)-consistent estimator \(\hat{\varTheta }^*\) must satisfy \(Pr(\hat{\varTheta }^*_2=0) \rightarrow 1\). Then, adaptive-DREGAR(p,0) is a sparse model.

Theorem 5

Assume \(a_n\sqrt{n} \rightarrow 0\) and \(b_n\sqrt{n} \rightarrow \infty \). Then, under assumptions \([\text {a}{-}\text {d}]\) we have

where \(U_0\) is the sub-matrix of \(U_B\) corresponding to \(\varTheta _1^\circ ,\) and \(\hat{\varTheta }_1^*\) corresponds to the non-zero elements of \(\hat{\varTheta }^*.\)

Proof

From Theorems 3 and 4, one can conclude that \(Pr(\hat{\varTheta }_2^*=0) \overset{p}{\rightarrow }1\). Thus, the minimiser \(Q_n^*(\varTheta ) {\xrightarrow {with \quad pr \rightarrow 1}}Q^*_n(\varTheta _1)\). This implies that the lasso estimator \(\hat{\varTheta }^*_1\) satisfies the following equation

From Theorem 3, \(\hat{\varTheta }_1^*\) is a \(\sqrt{n}\)-consistent estimator. Thus a Taylor expansion of the above equation yields

where p() is the tuning function

For n sufficiently large \(p(\hat{\varTheta }_1^*)=p(\varTheta _1^\circ )\). Thus,

The proof is completed. \(\square \)

Theorem 5 implies that adaptive-DREGAR(p,0) is asymptotically an oracle estimator provided \(a_n\) tends to zero at a speed faster than \(n^{-1/2}\) (that is, \(a_n \sqrt{n} \rightarrow 0\)) and, simultaneously, \(b_n\) shrinks to zero at a speed slower than \(n^{-1/2}\), if at all (that is, \(b_n\sqrt{n} \rightarrow \infty \)).

4 Implementation

The formulation of the model lends itself naturally to its implementation, in contrast to other time series models such as [23]. As the model contains residuals, which are unknown, we apply a two-step optimization procedure

Repeating steps 1 and 2 iteratively provides the solution to DREGAR.
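The two steps are not listed in this text, so the sketch below is an assumption about one natural split rather than the algorithm of the DREGAR package. Step 1 fits an \(l_1\) penalised regression on lagged responses, lagged residual estimates and covariates; step 2 recomputes the residuals as \(\hat{\epsilon }_t=y_t-\sum _i\hat{\phi }_iy_{t-i}-x'_t\hat{\beta }\). The function names, the coordinate-descent solver, the zero initialisation of the residuals and the n-scaled penalties are all illustrative choices.

```python
def soft_threshold(z, t):
    """Soft-thresholding operator: sign(z) * max(|z| - t, 0)."""
    if z > t:
        return z - t
    if z < -t:
        return z + t
    return 0.0


def lasso_cd(H, Y, penalties, sweeps=200):
    """Coordinate descent for sum_i (Y_i - H_i b)^2 + n * sum_j penalties[j] * |b_j|."""
    n, m = len(Y), len(H[0])
    b = [0.0] * m
    resid = list(Y)  # residuals at b = 0
    for _ in range(sweeps):
        for j in range(m):
            col = [row[j] for row in H]
            z = sum(c * c for c in col)
            if z == 0.0:
                continue  # an all-zero column carries no information
            rho = sum(c * (r + c * b[j]) for c, r in zip(col, resid))
            new = soft_threshold(rho, 0.5 * n * penalties[j]) / z
            if new != b[j]:
                resid = [r - c * (new - b[j]) for c, r in zip(col, resid)]
                b[j] = new
    return b


def dregar_two_step(y, x, p, q, lam, gam, tau, iters=10):
    """Alternate a penalised fit given residual estimates with a residual update."""
    T, r = len(y), len(x[0])
    t0 = p + q
    eps = [0.0] * T  # assumption: residual estimates start at zero
    pens = [gam] * p + [tau] * q + [lam] * r
    phi, theta, beta = [0.0] * p, [0.0] * q, [0.0] * r
    for _ in range(iters):
        # Step 1: lasso on rows (lags of y, lags of eps, x_t)
        H = [[y[t - i] for i in range(1, p + 1)]
             + [eps[t - j] for j in range(1, q + 1)]
             + list(x[t]) for t in range(t0, T)]
        coef = lasso_cd(H, y[t0:], pens)
        phi, theta, beta = coef[:p], coef[p:p + q], coef[p + q:]
        # Step 2: eps_t = y_t - sum_i phi_i * y_{t-i} - x_t' beta
        for t in range(p, T):
            eps[t] = (y[t] - sum(phi[i] * y[t - 1 - i] for i in range(p))
                      - sum(bk * xk for bk, xk in zip(beta, x[t])))
    return phi, theta, beta
```

A call such as `dregar_two_step(y, x, p=2, q=2, lam=0.1, gam=0.1, tau=0.1)` returns the three coefficient groups as lists. In the adaptive variant the three scalars would be replaced by per-coefficient penalties.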

The tuning parameters \(\lambda \), \(\gamma \) and \(\tau \) can be chosen by K-fold cross-validation or by an information criterion such as AIC, BIC or eBIC. For our model these are given by:

where \(\mathrm{par}\) is the number of non-zero estimated parameters. For the simulation and real data analyses, we use eBIC, which was found to perform well by [4]. For adaptive-DREGAR, we use \(\lambda ^*=\omega / |\tilde{\beta }|\), \(\gamma ^*=\omega / |\tilde{\phi }|\) and \(\tau ^*=\omega / |\tilde{\theta }|\), with \(\tilde{\beta }\), \(\tilde{\phi }\) and \(\tilde{\theta }\) the unpenalized or lasso estimates of the parameters. We assume the same \(\omega \) for all three terms, so that we can simplify the problem to the ordinary adaptive-lasso problem, and select this tuning parameter by one of the criteria mentioned above.
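As a hedged illustration of how such criteria and the adaptive weights can be computed, the sketch below uses the common Gaussian forms \(n\log (\mathrm{RSS}/n)\) plus a complexity term, with the additional eBIC term of [4]. These forms, the function names, the default eBIC constant and the small floor in the weights are assumptions and may differ from the expressions used in the DREGAR package.

```python
import math


def log_binom(n_total, k):
    """Logarithm of the binomial coefficient C(n_total, k)."""
    return (math.lgamma(n_total + 1) - math.lgamma(k + 1)
            - math.lgamma(n_total - k + 1))


def information_criteria(rss, n, par, n_candidates, ebic_gamma=1.0):
    """AIC, BIC and eBIC for a Gaussian fit, up to an additive constant.

    rss: residual sum of squares, n: number of fitted time points,
    par: number of non-zero estimates, n_candidates: p + q + r.
    """
    fit = n * math.log(rss / n)
    bic = fit + par * math.log(n)
    return {
        "AIC": fit + 2 * par,
        "BIC": bic,
        "eBIC": bic + 2 * ebic_gamma * log_binom(n_candidates, par),
    }


def adaptive_weights(omega, initial, floor=1e-8):
    """Weights omega / |initial estimate|; the floor avoids division by zero."""
    return [omega / max(abs(b), floor) for b in initial]
```

Coefficients whose initial estimate is close to zero receive very large weights, which is what drives them to exact zeros in the adaptive fit.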

A final choice for model selection is setting the orders p and q. We propose two general approaches to choose the optimal orders: (a) setting an upper bound P and Q and choosing the model that achieves the minimum eBIC inside the grid, (b) setting an upper bound P and Q and letting the model choose the optimal orders by keeping or eliminating the coefficients under \(l_1\) sparsity constraints. In the second approach, the fitting is based on \(n=T-(P+Q)\) time points, whereas in the first approach, the number of time points depends on the orders, p and q. Then a rule of thumb is to use the first approach when the number of observations is low and choose the second approach when there are enough observations.

The method is implemented in the R package DREGAR, available on CRAN at http://CRAN.R-project.org/package=DREGAR.

5 Simulation study

We design a simulation study to compare the (adaptive) DREGAR with (adaptive) REGAR [22] and (adaptive) lasso [25]. To this end we propose the following configuration:

1. Generate the design matrix, X, using a stationary Gaussian process with \(r=100\) and \(T=50,100,1000\). That is, high-dimensionality is considered in terms of the number of exogenous variables.

2. Set 90% of \(\beta \) coefficients to zero. Assign unequal random numbers in \((-1,1)\) to the non-zero coefficients.

3. Generate data from the DREGAR(2,2) model

$$\begin{aligned} y_{t}= & {} 0.5y_{t-1}+0.2y_{t-2}+x'_t\beta + \epsilon _{t}\\ \epsilon _{t}= & {} 0.3\epsilon _{t-1} -0.1\epsilon _{t-2} +e_t\\ e_t\sim & {} N(0,\sigma ^2), \end{aligned}$$

with \(\sigma ^2=0.5,\,1\) and 1.5.

4. Sample 1500 data points from the above model so that the first 50, 100 or 1000 observations are used for parameter estimation (training set) and the remaining \(n=1500-T\) points are left for evaluating the model performance (test set).

5. Select tuning parameters by minimizing eBIC and fix the maximum orders P and Q to 3 (i.e. allowing also for variable selection for \(\phi \) and \(\theta \)).
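The data-generating steps above can be sketched as follows. The covariates are drawn as independent standard normal variables, which is only one simple instance of a stationary Gaussian process, and the burn-in length, seeds and function names are assumptions made for illustration.

```python
import math
import random


def sparse_beta(r, rng, zero_fraction=0.9):
    """Coefficients with a given share of exact zeros, the rest uniform on (-1, 1)."""
    n_nonzero = r - int(round(zero_fraction * r))
    beta = [0.0] * r
    for idx in rng.sample(range(r), n_nonzero):
        beta[idx] = rng.uniform(-1.0, 1.0)
    return beta


def simulate_dregar22(n_obs, beta, sigma2, seed=0, burn_in=200):
    """Simulate y_t = 0.5 y_{t-1} + 0.2 y_{t-2} + x_t' beta + eps_t,
    with eps_t = 0.3 eps_{t-1} - 0.1 eps_{t-2} + e_t and e_t ~ N(0, sigma2)."""
    rng = random.Random(seed)
    phi, theta = (0.5, 0.2), (0.3, -0.1)
    sd = math.sqrt(sigma2)
    y, eps, X = [0.0, 0.0], [0.0, 0.0], []
    for _ in range(n_obs + burn_in):
        x_t = [rng.gauss(0.0, 1.0) for _ in beta]  # assumption: i.i.d. N(0, 1)
        eps_t = theta[0] * eps[-1] + theta[1] * eps[-2] + rng.gauss(0.0, sd)
        y_t = (phi[0] * y[-1] + phi[1] * y[-2]
               + sum(b * v for b, v in zip(beta, x_t)) + eps_t)
        X.append(x_t)
        eps.append(eps_t)
        y.append(y_t)
    return y[-n_obs:], X[-n_obs:]  # drop the burn-in period


beta = sparse_beta(100, random.Random(1))
y, X = simulate_dregar22(1500, beta, sigma2=1.0, seed=2)
T_train = 50
y_train, X_train = y[:T_train], X[:T_train]  # training set
y_test, X_test = y[T_train:], X[T_train:]    # test set of size 1500 - T
```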

We repeat each combination of models 100 times and calculate mean squared error of \(\hat{\beta }\) and the prediction mean squared error, defined by

where \(\hat{y}_\mathrm{test}\) is calculated using the two steps discussed in the implementation. We compare DREGAR(3,3) with lasso and with a DREGAR(0,6) model, which has the same number of parameters as DREGAR(3,3) and is the closest in the DREGAR family to a REGAR model [22].
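A minimal sketch of the two error measures is given below, assuming the usual averaged squared-difference forms; the exact normalisation used in the reported results is not recoverable from the text, and the function names are illustrative.

```python
def pmse(y_test, y_hat):
    """Prediction mean squared error: average of (y_test - y_hat)^2."""
    if len(y_test) != len(y_hat) or not y_test:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((a - b) ** 2 for a, b in zip(y_test, y_hat)) / len(y_test)


def coefficient_mse(beta_hat, beta_true):
    """Mean squared error of the estimated regression coefficients."""
    return pmse(beta_true, beta_hat)
```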

Figures 1 and 2 show overall how adaptive DREGAR dominates lasso and REGAR for low and high-dimensional problems in terms of both prediction error and MSE of \(\hat{\beta }\). Table 1 shows the full set of results for PMSE with a better performance of DREGAR across the range of simulations.

6 Real data illustration

6.1 Analysis of air pollution data

In this section, we show the performance of the model on the National Mortality, Morbidity and Air Pollution Study (NMMAPS) dataset. This dataset is publicly available from http://www.ihapss.jhsph.edu/data/NMMAPS/ and contains daily mortality, air pollution, and weather data for 108 cities in the US from January 1, 1987 to December 31, 2000. The variables include six indicators for mortality (total non-accidental, cardiovascular disease, respiratory, pneumonia, chronic obstructive pulmonary disease, accidental), six indicators of air pollution (respirable particulates (PM10 and PM2.5), carbon monoxide (CO), ozone (\(\hbox {O}_3\)), sulphur dioxide (\(\hbox {SO}_2\)), nitrogen dioxide (\(\hbox {NO}_2\))) as well as three indicators of weather (temperature (T), dew point temperature (D) and relative humidity (H)). Similar to [23] we study the relationship between the ground level of ozone and indicators of air pollution and weather conditions in Chicago in 1995. Differently from [23], we take the effect of carbon monoxide (CO) into account. The covariates in the model consist of \(\hbox {NO}_2\), \(\hbox {SO}_2\), CO, PM10, temperature and relative humidity as well as all two-way interactions. We denote the interactions by initials, for instance NS represents the interaction between \(\hbox {NO}_2\) and \(\hbox {SO}_2\). A total of 365 observations and 21 covariates are included in the analysis. All covariates and the response are normalized to zero mean and unit variance.
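The count of 21 covariates follows from 6 main effects plus all 15 two-way interactions. The sketch below builds such a set; the single-letter labels and their ordering are assumptions chosen so that the generated names match the labels used in the text (NS, CS, CT, ST, SH, PT).

```python
from itertools import combinations


def add_two_way_interactions(rows, names):
    """Append the product of every pair of columns to each row."""
    pairs = list(combinations(range(len(names)), 2))
    new_names = list(names) + [names[i] + names[j] for i, j in pairs]
    new_rows = [list(row) + [row[i] * row[j] for i, j in pairs] for row in rows]
    return new_rows, new_names


# N = NO2, C = CO, S = SO2, P = PM10, T = temperature, H = relative humidity
main_effects = ["N", "C", "S", "P", "T", "H"]
rows, names = add_two_way_interactions([[1, 2, 3, 4, 5, 6]], main_effects)
```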

Figure 3 shows a difficult choice for the maximum orders P and Q. We therefore follow the second approach in Sect. 4 and propose \(P=5\) and \(Q=5\) and let the model’s parameter inference guide the best orders. The parameters are estimated using the adaptive algorithm in Sect. 4, setting a maximum of 50 iterations and selecting the tuning parameters by minimizing eBIC.

We compare the optimal DREGAR(p,q) model with alternative models of similar complexity or natural subclasses. In particular, we consider DREGAR(p+q,0), DREGAR(p,0), DREGAR(0,q+p) and DREGAR(0,q). Note that the last two are the closest models to [22] in the DREGAR family. In addition, we consider standard non-dynamic models, namely adaptive-lasso and elastic-net. For the latter, we choose the optimal proportion of norms \(\alpha \) over a range of 100 values. We compare the models on the basis of a number of commonly used criteria: eBIC, AIC, Quasi-likelihood Information Criteria (QIC) [18] and Consistent AIC (CAIC) [3].

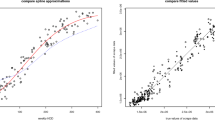

Table 2 provides a detailed illustration of the parameter estimates as well as information on the comparison of the models. The time series coefficients in the lower-middle part of the table suggest orders of four and three for DREGAR, as well as DREGAR(1,0) and DREGAR(0,3) for the other models, suggesting that the maximum order of 5 for p and q is sufficient. DREGAR(4,3) shows better results than the DREGAR(p,0) and DREGAR(0,q) models with respect to model performance, as shown in the top panel of the table. In line with [23], our results show several significant interactions, especially those of sulphur dioxide with temperature (ST) and with humidity (SH), as well as between particulates and temperature (PT). However, we should stress that the two analyses are not directly comparable, since we consider an additional variable, CO, which shows a significant effect on the ozone ground level and a non-zero effect for the interaction with weather indicators, namely CT and CS. We further report the Ljung–Box test [2] statistics at the bottom of Table 2. With the exception of lasso, elastic-net, DREGAR(5,0) and DREGAR(10,0), all other models show a good fit, i.e. no evidence against the white noise assumption. Figure 4 displays the scatterplot of fitted versus observed response for lasso and DREGAR(4,3), the residuals from the DREGAR(4,3) model and the corresponding sample ACF and PACF. The small curvature in the scatterplot, mentioned also by [23], can be an indication of a particular weather condition that results in an interaction between primary pollutants. The residuals’ ACF and PACF suggest that the residuals are white noise, as confirmed also by the p-value of the Ljung–Box test (0.61).
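For readers who wish to reproduce this kind of residual check, a minimal sketch of the Ljung–Box statistic \(Q=n(n+2)\sum _{k=1}^{h}\hat{\rho }_k^2/(n-k)\) is given below. The function names and the choice of the number of lags h are assumptions; the p-value additionally requires a chi-square reference distribution, which is not computed here.

```python
def sample_acf(x, max_lag):
    """Sample autocorrelations at lags 1, ..., max_lag."""
    n = len(x)
    mean = sum(x) / n
    d = [v - mean for v in x]
    c0 = sum(v * v for v in d)
    return [sum(d[t] * d[t - k] for t in range(k, n)) / c0
            for k in range(1, max_lag + 1)]


def ljung_box(x, max_lag):
    """Ljung-Box statistic based on the first max_lag sample autocorrelations."""
    n = len(x)
    acf = sample_acf(x, max_lag)
    return n * (n + 2) * sum(rho ** 2 / (n - k)
                             for k, rho in enumerate(acf, start=1))
```

Large values of the statistic indicate remaining autocorrelation in the residuals, whereas values that are small relative to the chi-square reference are compatible with white noise.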

Finally, we have also compared the fit of the best DREGAR model, DREGAR(4,3), with a DREGAR(0,7) model (the closest to a REGAR(7) model), in order to assess the benefit of having different autoregressive structures for the response and the predictors, a unique feature of the model that we propose in this paper. Without penalising the coefficients, the maximised log-likelihood for DREGAR(4,3) is −1106.884 and that of DREGAR(0,7) is −1110.832, suggesting an improved fit for the DREGAR(4,3) model.

6.2 Analysis of stock market data

For the second real application we take an example from the stock market. Data are collected from Yahoo Finance (https://finance.yahoo.com) and contain 251 closing prices for 30 indices in the Dow Jones market in 2015. We take the IBM index as the response and the remaining 29 indices as the covariates and study their correlations via the DREGAR family of models. The variables are listed as follows: 3M (MMM), American Express (AXP), Alcoa (AA), AT&T (T), Bank of America (BAC), Boeing (BA), Caterpillar (CAT), Chevron (CVX), Cisco Systems (C), Coca-Cola (KO), DuPont (DD), ExxonMobil (XOM), General Electric (GE), Hewlett-Packard (HPQ), The Home Depot (HD), Intel (INTC), IBM (IBM), Johnson & Johnson (JNJ), JPMorgan Chase (JPM), Kraft (KRFT), McDonald’s (MCD), Merck (MRK), Microsoft (MSFT), Pfizer (PFE), Procter & Gamble (PG), General Motors (GM), United Technologies (UTX), Verizon (VZ), Wal-Mart (WMT), Walt Disney (DIS).

We apply first differences of the log-prices to get stationary returns [10]. Figure 5 shows low orders of auto-correlations in the residuals, as is typical of financial data.
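The transformation to returns can be sketched as follows; the function name is illustrative. A series of 251 closing prices yields 250 log-returns.

```python
import math


def log_returns(prices):
    """First differences of log-prices: log(P_t) - log(P_{t-1})."""
    if any(p <= 0 for p in prices):
        raise ValueError("prices must be strictly positive")
    return [math.log(b) - math.log(a) for a, b in zip(prices, prices[1:])]
```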

Adaptive DREGAR(5,5), DREGAR(10,0), DREGAR(0,10), DREGAR(5,0) and DREGAR(0,5) are applied to the data and the tuning parameters are selected using eBIC. In addition, we consider adaptive-lasso and elastic net as well as GARCH, which is typically used for financial data. For GARCH, we use the R package rugarch and choose the optimal model by searching among all models with maximum orders (2,2). The models are compared on the basis of eBIC, AIC, CAIC, QIC, Ljung–Box statistic and sparsity.

Table 3 shows that DREGAR(5,5) performs very well compared to other methods with respect to eBIC, AIC and CAIC as well as sparsity. Fitting adaptive DREGAR(5,5) to data results in an order of 3 for the dynamic term and an order of 4 for the residuals. So the final selected model is DREGAR(3,4), suggesting that a maximum order of 5 for p and q is adequate also for this dataset. Amongst the top selected predictors, there are: MSFT (coefficient 0.3), HPQ (0.23), VZ (0.20), MMM (0.14), MRK (0.13) and CVX (0.10). Figure 6(top) shows observed versus fitted response for lasso and DREGAR(3,4). From this figure, DREGAR(3,4) has a better fit compared to lasso in terms of the correlation between the observed and fitted values (\(\rho _{{\textit{DREGAR}}(3,4)}=0.811\), \(\rho _{{\textit{lasso}}}=0.804\)). Finally, the sample ACF and PACF at the bottom of Fig. 6 confirm the results from the Ljung–Box statistic, showing that the residuals from DREGAR(3,4) behave like white noise.

Similarly to the previous example, we compare the fit of the best model, DREGAR(3,4), with a DREGAR(0,7) model. Without penalising the coefficients, the maximised log-likelihood for DREGAR(3,4) is \(-243.98\) and that of DREGAR(0,7) is \(-251.41\), suggesting an improved fit for the DREGAR(3,4) model.

7 Conclusion

This paper addressed the problem of dynamic regression in the presence of autocorrelated residuals by proposing an extension of the regression model of [22] with the inclusion of lags of the response. We showed that adding this dynamic term results in a structure more similar to a general ARMAX model than REGAR [22] and REGARMA [23], and with fewer difficulties in parameter estimation than REGARMA. Further, we proposed an \(l_1\) penalized likelihood approach for variable selection for both regression and time-dependent coefficients and studied its theoretical properties. We proposed two iterative algorithms for parameter estimation and provided an R package that implements them and allows simulation from the model. Finally, we showed the applicability of the model and compared it with existing approaches in a simulation study as well as in two real data applications.

Future work could extend the methods presented in this paper by estimating DREGAR coefficients using penalties that strike a trade-off between \(l_1\) and \(l_2\) norms, such as elastic net. We expect these methods to work well, as the \(l_2\) penalty imposes less weight on small coefficients compared to the \(l_1\) penalty. Such an extension is also expected to work well in the presence of correlation among the predictors. Moreover, it would be interesting to add GARCH-type errors to the model, similar to a recent contribution to the literature for the REGARMA model [24]. Finally, it would be of interest to extend the methodology to non-linear and non-stationary cases.
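A minimal sketch of such a combined penalty is given below. It uses one common elastic net parametrisation, \(\lambda \{\alpha \Vert \beta \Vert _1 + (1-\alpha )\Vert \beta \Vert _2^2\}\). Both this parametrisation and the function name are assumptions made for illustration; neither is part of the DREGAR package.

```python
def elastic_net_penalty(coefs, lam, alpha):
    """Illustrative penalty: lam * (alpha * ||b||_1 + (1 - alpha) * ||b||_2^2)."""
    l1 = sum(abs(b) for b in coefs)
    l2_squared = sum(b * b for b in coefs)
    return lam * (alpha * l1 + (1.0 - alpha) * l2_squared)


coefs = [0.1, 2.0]  # one small and one large coefficient (made-up values)

# Per-coefficient contributions: the squared l2 term charges less than the
# l1 term when |b| < 1 and more when |b| > 1.
l1_terms = [abs(b) for b in coefs]
l2_terms = [b * b for b in coefs]

lasso_only = elastic_net_penalty(coefs, lam=1.0, alpha=1.0)
ridge_only = elastic_net_penalty(coefs, lam=1.0, alpha=0.0)
mixed = elastic_net_penalty(coefs, lam=1.0, alpha=0.5)
print(l1_terms, l2_terms, lasso_only, ridge_only, mixed)
```

Setting `alpha=1.0` recovers the \(l_1\) penalty and `alpha=0.0` a pure ridge-type penalty. Intermediate values trade sparsity against the milder shrinkage of small coefficients.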

References

Basu, S., Michailidis, G.: Regularized estimation in sparse high-dimensional time series models. Ann. Stat. 43(4), 1535–1567 (2015)

Box, G.E., Pierce, D.A.: Distribution of residual autocorrelations in autoregressive-integrated moving average time series models. J. Am. Stat. Assoc. 65(332), 1509–1526 (1970)

Bozdogan, H.: Model selection and Akaike’s information criterion (AIC): the general theory and its analytical extensions. Psychometrika 52(3), 345–370 (1987)

Chen, J., Chen, Z.: Extended Bayesian information criteria for model selection with large model spaces. Biometrika 95(3), 759–771 (2008). doi:10.1093/biomet/asn034

Fan, J., Li, R.: Variable selection via penalized likelihood and its oracle properties. J. Am. Stat. Assoc. 96(456), 1348–1360 (2001)

Han, F., Lu, H., Liu, H.: A direct estimation of high dimensional stationary vector autoregressions. J. Mach. Learn. Res. 16, 3115–3150 (2015). http://jmlr.org/papers/v16/han15a.html

Huang, J., Ma, S., Zhang, C.H.: Adaptive lasso for sparse high-dimensional regression models. Stat. Sin. 18(4), 1603 (2008)

Kim, J., Pollard, D.: Cube root asymptotics. Ann. Stat. 18(1), 191–219 (1990)

Knight, K., Fu, W.: Asymptotics for lasso-type estimators. Ann. Stat. 28(5), 1356–1378 (2000)

Kwiatkowski, D., Phillips, P.C., Schmidt, P., Shin, Y.: Testing the null hypothesis of stationarity against the alternative of a unit root. J. Econom. 54(1), 159–178 (1992)

Ljung, L.: System Identification: Theory for the User. Pearson Education, Englewood Cliffs (1998)

Medeiros, M.C., Mendes, E.F.: Estimating high-dimensional time series models. Technical report (2012)

Meinshausen, N., Bühlmann, P.: High-dimensional graphs and variable selection with the lasso. Ann. Stat. 34(3), 1436–1462 (2006)

Melnyk, I., Banerjee, A.: Estimating structured vector autoregressive models. In: Balcan, M.F., Weinberger, K.Q. (eds.) Proceedings of the 33rd International Conference on Machine Learning: Proceedings of Machine Learning Research, vol. 48. PMLR, New York, pp. 830–839 (2016)

Nardi, Y., Rinaldo, A.: Autoregressive process modeling via the lasso procedure. J. Multivar. Anal. 102(3), 528–549 (2011)

Nelles, O.: Nonlinear System Identification: From Classical Approaches to Neural Networks and Fuzzy Models. Springer, Berlin (2013)

Nicholson, W.B., Matteson, D.S., Bien, J.: VARX-L: structured regularization for large vector autoregressions with exogenous variables. Int. J. Forecast. 33(3), 627–651 (2017)

Pan, W.: Akaike’s information criterion in generalized estimating equations. Biometrics 57(1), 120–125 (2001)

Park, T., Casella, G.: The Bayesian lasso. J. Am. Stat. Assoc. 103(482), 681–686 (2008)

Song, S., Bickel, P.: Large vector auto regressions (2011). arXiv:1106.3915

Tibshirani, R.: Regression shrinkage and selection via the lasso. J. R. Stat. Soc. Ser. B 58(1), 267–288 (1996)

Wang, H., Li, G., Tsai, C.: Regression coefficient and autoregressive order shrinkage and selection via the lasso. J. R. Stat. Soc. Ser. B 69(1), 63–78 (2007)

Wu, R., Wang, Q.: Shrinkage estimation for linear regression with ARMA errors. J. Stat. Plan. Inference 142(7), 2136–2148 (2012)

Yoon, Y.J., Lee, S., Lee, T.: Adaptive lasso for linear regression models with ARMA-GARCH errors. Commun. Stat. Simul. Comput. 46(5), 3479–3490 (2017)

Zou, H.: The adaptive lasso and its oracle properties. J. Am. Stat. Assoc. 101(476), 1418–1429 (2006)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Haselimashhadi, H., Vinciotti, V. Penalised inference for lagged dependent regression in the presence of autocorrelated residuals. METRON 76, 49–68 (2018). https://doi.org/10.1007/s40300-017-0121-3
