Abstract

Space exploration is increasingly moving towards small platforms that deliver high scientific return at significantly lower cost. However, miniaturized spacecraft pose challenges from both the technological and the mission analysis point of view. While the former is in constant evolution thanks to manufacturers, the latter is an open point, since it is still based on a traditional approach that cannot cope with the peculiarities of the new platforms. In this work, a revised preliminary mission analysis approach, merging the nominal trajectory optimization with a complete navigation assessment, is formulated in a general form, and its three main building blocks are identified. Then, the integrated approach is specialized for a cislunar test case scenario, namely the transfer trajectory of the CubeSat LUMIO from a low lunar orbit to a halo orbit, and each block is modeled mathematically. Finally, optimal solutions minimizing the total cost are sought, showing the benefits of an integrated approach.

1 Introduction

Since the beginning of the space era, spacecraft have always been equipped with chemical propulsion engines, characterized by a high thrust level and good control authority. For traditional spacecraft, nominal trajectories are designed and optimized only to satisfy scientific requirements and to comply with system constraints. The nominal path is unlikely to be followed by the spacecraft in real-life scenarios, due to uncertainty in the dynamic model (e.g., gravitational parameters or noisy radiation pressure profiles), navigation (i.e., imperfect state knowledge or approximations in the measurement model), and command actuation (i.e., errors in thrust magnitude and pointing angles) [1]. Nevertheless, the correction maneuvers needed to compensate for deviations are considered a minor problem, since changing the trajectory is relatively easy with a single, short burn. Robustness and feasibility of the nominal trajectory against uncertainty are assessed a posteriori through a navigation analysis, which performs a covariance analysis to compute the achievable state knowledge and estimates the correction maneuvers. Thus, the nominal trajectory and the uncertainty assessment are decoupled, and their analysis and optimization are done in two separate phases. This approach can lead to sub-optimal solutions. For large spacecraft, this procedure is acceptable since they can produce high thrust levels and store large propellant quantities; hence, sub-optimal trajectories are not critical.

However, in recent times, space exploration has been moving towards small platforms, such as SmallSats or CubeSats, in order to obtain scientific and technological return at significantly lower cost [3, 4]. These platforms are characterized by: (1) limited control authority (due to low thrust levels and reduced propellant budget), (2) large uncertainties in the state knowledge (due to novel navigation techniques [2] or limited access to on-ground facilities), and (3) large errors in command actuation (due to low-maturity components). In this kind of probe, the low control authority makes maneuvering challenging, since a long burn is needed even for small deviations. Therefore, orbit determination and the subsequent correction maneuvers cannot be considered a minor problem and preliminary trajectory design should take them into account, since the classical approach can lead to trajectories requiring an unnecessarily large amount of propellant.

A clear indication of this phenomenon can already be found in some studies about missions having the characteristics summarized above. The proposed mission extension of LISA Pathfinder (LPF) is a notable example. LPF was a technology demonstrator for the gravitational wave observatory LISA, launched by ESA in 2015. A number of works [5,6,7] studied the possibility of extending LPF beyond its nominal mission, maneuvering the spacecraft so as to pass through a peculiar point of the solar system, the Earth–Sun Saddle Point, where the net gravitational acceleration is almost null, to collect data for a possible confirmation of Modified Newtonian Dynamics (MoND). Although the on-board instruments were capable of detecting anomalous MoND gradients near the Saddle Point, ESA chose not to pursue this option due to the high risks, and thus the disposal of LPF was executed in April 2017. At the end of the nominal mission, LPF had a small residual control capacity, estimated as a \(\Delta v\) budget of approximately \({1}\,\mathrm{m/s}\), that could be provided using cold-gas thrusters with a maximum thrust of 100 \(\upmu\)N. Thus, LPF was a spacecraft with very limited control authority in a highly unstable environment, and applying velocity changes could be very challenging. Several nominal solutions were found that satisfy the propulsion constraints [8]. However, if the stochastic cost is taken into account, the \(\Delta v\) required to accomplish the mission increases significantly and the feasibility of the mission extension is no longer guaranteed [5]. For example, the best solution in the Pareto sense misses the target by a distance within 10 km and requires a deterministic \(\Delta v = {0.657}\,\mathrm{m/s}\). However, the navigation \(\Delta v\) distribution for the given trajectory gives a value of \({4.533}\,\mathrm{m/s}\) at a 95% confidence level in order to counteract errors in model, navigation, and control. This figure is almost one order of magnitude higher than the deterministic cost, endangering the mission feasibility. A similar behavior can also be found in the LUMIO Phase 0 study [9]. For this mission, a transfer from a low lunar orbit to a halo orbit about Earth–Moon \(\text {L}_2\) is foreseen. In this case, the deterministic cost for the transfer amounts to \({89.97}\,\mathrm{m/s}\), while the \(3\sigma\) stochastic cost sums up to \({97.9}\,\mathrm{m/s}\). Hence, the nominal and navigation \(\Delta v\)s have the same order of magnitude. In such cases, a procedure embedding uncertainty in the preliminary mission design can be useful in cutting down the overall mission costs and producing more robust and feasible solutions.
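As a quick check on the figures quoted above, the ratio of navigation to deterministic cost in the two cases is

$$\begin{aligned} \frac{4.533}{0.657}\approx 6.9 \quad \text {(LPF extension)},\qquad \frac{97.9}{89.97}\approx 1.09 \quad \text {(LUMIO transfer)} \end{aligned}$$

so that, for LUMIO, navigation accounts for roughly half of the combined budget (\(97.9\) out of \(187.87\,\mathrm{m/s}\), about 52%). The two stochastic figures refer to different statistics (a 95% confidence level and a \(3\sigma\) value, respectively), so the ratios should be read as indicative only.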

In the last decades, optimal control and optimization theory have been extensively exploited for the nominal design of space trajectories [10, 11]. However, only in the last ten years have some stochastic-optimal approaches, embedding uncertainty in their core, been developed for diverse problems. In the early 2000s, [12] proposed a statistical targeting algorithm, able to incorporate statistical information directly in the trajectory design. While the usual targeting method solves a deterministic boundary value problem for the nominal trajectory, this algorithm searches for a statistically correct trajectory, i.e., a trajectory able to reach the target state in a stochastic sense. However, this approach fails whenever the envelope of stochastic trajectories cannot be described as a quasi-Gaussian distribution.

The uncertain Lambert’s problem has likewise been investigated in several papers [13,14,15] by exploiting Taylor differential algebra. An alternative approach, characterizing the stochastic error by means of the first-order variational equations, is presented in [16]. This approach has been extended, first by considering the explicit partial derivatives of the transfer velocities [17] and later by implementing a derivative-free numerical method that exploits advances in uncertainty quantification [18]. The uncertain Lambert’s problem with differential algebra was also exploited in the gravity assist space pruning algorithm presented in [19].

Similarly, approaches to tackle the rendezvous problem were conceived. A multi-objective optimization method, considering a robust performance index based on final uncertainties, was devised for the linear rendezvous problem [20], taking into account both navigation and control errors. A relation among the performance index, the rendezvous time, and the propellant cost was found for short-duration missions. A nonlinear rendezvous model and the possibility of handling long-duration phases were later addressed [21]. Asteroid rendezvous in a stochastic sense was also investigated, considering the state uncertainty of both the spacecraft and the target [22], together with the optimization of correction maneuvers under the conditions of Lambert’s problem.

Recently, general procedures for trajectory optimization under uncertainty were developed. A method transcribing the stochastic trajectory optimization into a deterministic problem by means of Polynomial Chaos Expansion and an adaptive pseudospectral collocation method was introduced by [23], while [24] presented a novel approach based on a Belief Markov Decision Process model and applied it to the robust optimization of a flyby trajectory of the Europa Clipper mission in a scenario characterized by knowledge, execution and observation errors. Stochastic Differential Dynamic Programming has been investigated to design robust Earth–Mars transfers, using the unscented transform to propagate uncertainties [25]. This methodology has subsequently been extended with a hybrid multiple-shooting technique to overcome the limitations of Differential Dynamic Programming [26]. More recently, a combination of convex optimization and covariance steering has been introduced to solve different classes of robust continuous control problems in astrodynamics, such as interplanetary transfer [27] and reentry [28]. Finally, Reinforcement Learning has proven effective in solving robust deep-space transfers considering different sources of error [29, 30].

The idea of considering both deterministic and stochastic propellant cost has also been employed in the nominal trajectory design for EQUULEUS [31, 32], a 6U CubeSat developed by the University of Tokyo and JAXA, planned to be inserted in a cislunar environment by NASA's Artemis 1 mission and then brought to a halo orbit about the Earth–Moon \(\text {L}_2\) point. However, in this last case, only the transfer cost and the annual station-keeping cost are optimized, without considering any navigation cost during the transfer phase.

Although recent works consider uncertainties in the early stages of trajectory design to devise robust optimal trajectories, an integrated approach that treats the navigation assessment as part of the trajectory design and optimization, using classical techniques, is still missing. The paradigm shift proposed in this work can be beneficial in terms of propellant mass consumption. Indeed, it can overcome the inherent sub-optimality of the traditional approach by seeking solutions with lower dispersion and better stochastic properties, thus reducing both the navigation costs and the final state scattering with respect to the target. Hence, robust low-cost trajectories in the preliminary mission analysis can increase the scientific return of limited-capability satellites, either by giving access to mission profiles that are currently impossible or by extending the nominal operative life. On the other hand, even large traditional probes can benefit from a holistic approach, since it can increase the spacecraft performance while reducing the design steps.

This paper is organized as follows. In Sect. 2, the general problem statement of the preliminary mission analysis is presented. Alongside the traditional approach, the revised approach, which embeds deterministic and stochastic considerations in a single step, is introduced. Then, a test case scenario, based on the transfer from a low lunar orbit to a halo orbit, is presented (Sect. 3). The mathematical formulation of the relevant building blocks is shown in Sect. 4. In this section, a novel technique, exploiting the conjugated unscented transformation to solve the stochastic integral associated with the non-intrusive polynomial chaos expansion, is devised in order to efficiently propagate the trajectory under uncertainties. The combination of this technique with kernel estimators to evaluate state statistics and with an ad hoc implementation of the ensemble square root filter to quickly determine the spacecraft state knowledge is formulated for the first time in the remainder of that section. In Sect. 5, the revised approach is adapted to the test case scenario. Finally, results are given and a critical assessment is provided in Sect. 6.

2 Problem Statement

2.1 Sequential Approach

In this work, the approach followed nowadays to compute a nominal trajectory, evaluate its statistical properties and retrieve the navigation costs is labeled as sequential or traditional approach. Detailed information about this process can be found in several sources [5, 9]. In this case, the whole procedure is subdivided into two sequential and independent steps (Fig. 1):

1. Trajectory Design and Optimization: a nominal trajectory connecting the initial point to the target while minimizing the propellant mass is sought (Fig. 1a). Thus, generally speaking, an optimal control problem is set up with the aim of determining the state \({{\textbf {x}}}(t)\), the control \({{\textbf {u}}}(t)\) and, possibly, the initial and final times, \(t_0\) and \(t_f\), that minimize the total control effort

$$\begin{aligned} J=\int _{t_0}^{t_f} \left\| {{\textbf {u}}}\right\| \, \text {d}t \end{aligned}$$

subject to the ordinary differential equation

$$\begin{aligned} \dot{{{\textbf {x}}}}={{\textbf {f}}}({{\textbf {x}}}, {{\textbf {u}}}, t) \end{aligned}$$

and to the boundary constraints

$$\begin{aligned} {{\textbf {x}}}(t_0)={{\textbf {x}}}_0\\ {{\textbf {x}}}(t_f)={{\textbf {x}}}_f \end{aligned}$$

The function \({{\textbf {f}}}\) represents the acceleration vector field associated with the spacecraft dynamics. Additional terminal and path constraints are normally added, depending on the characteristics of the specific orbital problem. Several techniques can be exploited to solve the optimization problem. Classical approaches are subdivided into two classes, direct methods and indirect methods [33], and the choice of the most suitable method is based mainly on the mission profile, the spacecraft characteristics, and the desired accuracy. This step is usually time- and effort-consuming, due to the large search space. For this reason, a preliminary trade-off and/or pruning may be required in order to relieve the total burden.

2. Navigation Assessment: the nominal trajectory feasibility in a real scenario is evaluated by simulating the orbit determination (OD) process and estimating the trajectory correction maneuvers (TCM) along the whole mission. Thus, the Navigation Assessment can be split into two (independent) sub-phases:

   i. Knowledge analysis: a covariance analysis is performed to estimate the achievable level of accuracy in the spacecraft state knowledge, i.e., the deviation of the estimated spacecraft state with respect to the true one (Fig. 1b), along the entire trajectory. The initial knowledge, usually represented as a Gaussian distribution with a given covariance \(P_0\) centered on the initial nominal state \({{\textbf {x}}}_0\), is propagated forward in time. Due to its nature, the knowledge covariance increases in time [12]. Indeed, uncertainties affect the knowledge by enlarging the possible state space. In order to improve the accuracy of the estimated state with respect to the real one, an OD process is implemented: multiple indirect measurements of the state are collected in a prescribed time interval and provided to a filtering algorithm that reconstructs the spacecraft’s position and velocity, thereby reducing the knowledge covariance. Thus, the knowledge covariance increases during propagation and can be reduced only during the OD phase.

   ii. Navigation cost estimation: a stochastic analysis is performed in order to estimate the navigation cost needed to allow the spacecraft to reach the target (Fig. 1c). First, a guidance cycle is defined. The guidance cycle refers to the epochs at which correction maneuvers are performed. Typically, this can change from one mission to another, or from one phase to another within the same mission. Usually, a guidance cycle with one correction maneuver per week is assumed as the baseline strategy in order to ease on-ground operations. Indeed, navigation maneuvers are currently computed on ground and then sent to the spacecraft. For this reason, a guidance cycle following the working-week pattern reduces operational complexity and costs. Then, a guidance law is selected in order to compute the correcting impulse \(\Delta v\), starting from the deviation from the nominal trajectory \(\delta x\). Finally, a statistical analysis is performed to give a measure of the needed navigation propellant. Moreover, the trajectory dispersion, i.e., the deviation of the true spacecraft state with respect to the nominal one, can be retrieved.

Knowledge analysis and navigation cost estimation are usually performed independently in the preliminary mission analysis. However, in principle, they cannot be considered totally separate: both sub-phases should share a common timeline, and a minimum time interval, the cut-off time, should be considered between the end of the OD phase and the subsequent correction maneuver. This cut-off time is needed by the flight dynamics team to complete the orbit acquisition process, to compute the correction maneuver and to generate the commands.
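The behaviour described for the knowledge analysis (covariance growing during propagation and shrinking only during OD) can be illustrated with a minimal linear covariance sketch. This is not the filter used in this work: the 1-D position/velocity model, the step size, the process noise `Q`, the position-only measurement `H` and its noise `R` are all hypothetical values chosen only for illustration.

```python
import numpy as np

def propagate(P, Phi, Q):
    """Propagate the knowledge covariance over one step: P <- Phi P Phi^T + Q."""
    return Phi @ P @ Phi.T + Q

def measurement_update(P, H, R):
    """Covariance update of a linear Kalman filter (Joseph form)."""
    S = H @ P @ H.T + R                  # innovation covariance
    K = P @ H.T @ np.linalg.inv(S)       # Kalman gain
    I_KH = np.eye(P.shape[0]) - K @ H
    return I_KH @ P @ I_KH.T + K @ R @ K.T

# Hypothetical 1-D toy model: state = [position (km), velocity (km/s)]
dt = 60.0                                  # step size, s
Phi = np.array([[1.0, dt], [0.0, 1.0]])    # state transition matrix
Q = np.diag([1e-6, 1e-8])                  # assumed process noise
H = np.array([[1.0, 0.0]])                 # position-only measurement
R = np.array([[1e-4]])                     # assumed measurement noise

P0 = np.diag([1.0, 1e-4])                  # initial knowledge covariance

# Pure propagation: the knowledge covariance grows
P_coast = P0.copy()
for _ in range(10):
    P_coast = propagate(P_coast, Phi, Q)

# OD phase: propagation interleaved with measurements shrinks it
P_od = P_coast.copy()
for _ in range(10):
    P_od = propagate(P_od, Phi, Q)
    P_od = measurement_update(P_od, H, R)

print("position variance: initial, after coast, after OD")
print(P0[0, 0], P_coast[0, 0], P_od[0, 0])
```

In a preliminary analysis the same two operations would be applied along the nominal trajectory, with the OD windows and the cut-off time placed on the common timeline discussed above.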

Traditional approach for preliminary mission analysis: (a) Trajectory design and optimization; (b) Knowledge analysis; (c) Navigation cost evaluation. The nominal trajectory is indicated with a black line, the true trajectory with an orange line, and the OD with a thick grey line. Ellipses represent the instantaneous knowledge (b) or dispersion (c). Steps (b)–(c) form the navigation assessment (Color figure online)

Figure 2 shows the general architecture of the traditional approach. This two-step approach can lead to sub-optimal solutions that require an unnecessarily large amount of propellant. This behavior is taken to extremes when small satellites are considered, due to maneuver complexity and limited propellant mass. As a matter of fact, some trajectories can be wrongly tagged as infeasible using this approach. An integrated approach is needed to mitigate this effect.

2.2 Integrated Approach

A procedure able to encompass the whole navigation assessment inside the optimization process has to be designed. This method will be referred to as the integrated or revised approach. Its aims are to (1) evaluate and minimize the deterministic and stochastic costs, (2) estimate the knowledge, and (3) compute the dispersion, all at the same time. In order to achieve these objectives, the approach depicted in Fig. 3 is devised. The initial nominal state is given together with the associated initial dispersion. For each state belonging to the initial dispersion, an initial knowledge is considered. These three quantities (nominal state, knowledge and dispersion) are propagated forward. At some prescribed times, an OD process is performed in order to estimate the true trajectory and reduce the knowledge covariance. The estimated trajectory is then used to feed a guidance scheme, compute the correction maneuver and reduce the dispersion. At the end, the final nominal state and the final dispersion can be retrieved. For the sake of simplicity, and assuming a Monte Carlo-like procedure, the revised approach can be summarized as:

For each step of the optimization algorithm:

1. An initial nominal state \({{\textbf {x}}}_0\) (blue dot in Fig. 3) and initial dispersion (blue ellipse) are given;

2. The initial state is propagated up to the final time, in order to generate the nominal trajectory (black line) and compute the deterministic cost (i.e., the impulses on the nominal trajectory that do not depend on the uncertainties, indicated as blue arrows);

3. A number of samples in the initial dispersion \({{\textbf {x}}}_0^i\) (orange dot) are generated, representing the initial state of the possible true trajectories;

4. For each sample:

   (a) The initial state \({{\textbf {x}}}_0^i\) and the associated initial knowledge are propagated forward (orange line) up to the first OD time;

   (b) In a given time span \(t\in \left[ t^{OD}_0, t^{OD}_f\right]\), the OD is performed (gray thick line) to improve the knowledge (black ellipses);

   (c) An estimated state of the true trajectory (purple dot) is retrieved at the end of the OD and pushed forward in time (purple line), in order to compute the TCM (green arrow) through a guidance law;

   (d) The real trajectory is propagated up to the correction maneuver time \(t_{TCM}\), when the navigation impulse is applied;

   (e) Steps 4a–4d are repeated for each OD and correction maneuver time up to the final time \(t_f\).

5. From the Monte Carlo-like simulation, statistics for the navigation cost (i.e., the maneuvers needed to control the dispersion) can be computed and the final dispersion (red ellipse) can be estimated.

6. The total propellant mass, given by deterministic plus stochastic costs, is optimized, while imposing a constraint on the final state.

Revised approach for the preliminary mission analysis. Nominal trajectory is indicated with a black line, a true possible trajectory with an orange line, estimated trajectory with a purple line. The OD process is the gray thick line. Black ellipses represent the instantaneous knowledge; colored ellipses represent the dispersion (Color figure online)

Even though Fig. 3 shows only one nominal maneuver and one OD window, the approach can easily be extended to multiple deterministic impulses or to a different concept of operations.
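The inner Monte Carlo-like loop of the revised approach (steps 1 to 5) can be sketched on a toy problem. Everything below is an assumption made for illustration: a 1-D ballistic model replaces the real dynamics, the OD is collapsed into a fixed post-OD knowledge error, the guidance law is a simple fixed-time-of-arrival position targeting, the stochastic cost is taken as the 95% quantile of the TCM magnitude, and the outer optimization of step 6 is omitted.

```python
import numpy as np

rng = np.random.default_rng(0)

def coast(x, dt):
    """Ballistic propagation of [position, velocity] in a 1-D toy model."""
    return np.array([x[0] + x[1] * dt, x[1]])

# Hypothetical timeline (arbitrary time units) and uncertainty levels
t_od_end, t_tcm, t_f = 1.0, 2.0, 10.0
sigma_disp = np.array([1e-2, 1e-3])   # initial dispersion (1-sigma)
sigma_know = np.array([1e-3, 1e-4])   # knowledge after the OD (1-sigma)

# Steps 1-2: nominal state, deterministic impulse and nominal trajectory
x0 = np.array([0.0, 0.0])
dv_nominal = 1.0
x_nom0 = x0 + np.array([0.0, dv_nominal])
x_nom_f = coast(x_nom0, t_f)
deterministic_cost = abs(dv_nominal)

n_samples = 2000
nav_cost = np.empty(n_samples)
final_miss = np.empty(n_samples)
for i in range(n_samples):
    # Step 3: sample a possible true initial state
    x_true = x_nom0 + sigma_disp * rng.standard_normal(2)
    # Steps 4a-4b: propagate to the end of the OD and estimate the state
    x_true = coast(x_true, t_od_end)
    x_est = x_true + sigma_know * rng.standard_normal(2)
    # Step 4c: push the estimate to the TCM epoch and apply the guidance law
    dx = coast(x_est, t_tcm - t_od_end) - coast(x_nom0, t_tcm)
    dv_tcm = -(dx[0] / (t_f - t_tcm) + dx[1])
    # Step 4d: propagate the true state, apply the TCM, reach the final time
    x_true = coast(x_true, t_tcm - t_od_end) + np.array([0.0, dv_tcm])
    x_true = coast(x_true, t_f - t_tcm)
    nav_cost[i] = abs(dv_tcm)
    final_miss[i] = x_true[0] - x_nom_f[0]

# Step 5: statistics of the navigation cost and of the final dispersion
stochastic_cost = np.quantile(nav_cost, 0.95)
total_cost = deterministic_cost + stochastic_cost
print(total_cost, np.std(final_miss))
```

In the integrated approach, `total_cost` would be the quantity returned to the optimizer at every iteration, together with a measure of the final dispersion used in the terminal constraint.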

Moreover, this general approach can be modified by means of simplifying assumptions to reduce the computational burden, if needed by the given mission scenario, e.g., by performing the knowledge analysis only on the nominal trajectory and using its results on the real trajectories. Additionally, it is important to stress a significant difference between this concept and the traditional approach: the final state is no longer deterministic, but is more coherently represented in a stochastic way by evaluating the dispersion at the final time. Hence, it is convenient to implement the final constraint as a stochastic constraint, i.e., the distribution of the final points should be relatively close to the target point.
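One possible way to express such a stochastic final constraint numerically is to require that a given fraction of the final points fall inside a desired ellipsoid centred on the target. The sketch below is only an assumed illustration: the function name `fraction_inside`, the shape matrix `P_desired`, the scale `k` and the sample sets are hypothetical and are not the formulation adopted in this work.

```python
import numpy as np

def fraction_inside(samples, target, P_desired, k=3.0):
    """Fraction of final states inside the k-sigma ellipsoid of P_desired
    centred on the target point."""
    d = samples - target
    # Squared Mahalanobis distance of each sample w.r.t. the desired ellipsoid
    m2 = np.einsum("ij,jk,ik->i", d, np.linalg.inv(P_desired), d)
    return np.mean(m2 <= k**2)

rng = np.random.default_rng(1)
target = np.array([1.0, 0.0])
P_desired = np.diag([1e-2, 1e-2])      # hypothetical desired ellipsoid

# Two hypothetical final dispersions: a tight one and a loose one
tight = target + 0.02 * rng.standard_normal((5000, 2))
loose = target + 0.5 * rng.standard_normal((5000, 2))

print(fraction_inside(tight, target, P_desired))
print(fraction_inside(loose, target, P_desired))
```

A constraint of the form `fraction_inside(...) >= p_min`, with `p_min` a chosen confidence level, could then be passed to the optimizer in place of a deterministic terminal condition.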

In conclusion, the general fuel-optimal problem of a spacecraft flying in a perturbed environment under the revised approach can be formalized as:

Problem 1

(Fuel-Optimal General Problem) Find the nominal state \({{\textbf {x}}}^*(t)\), the nominal control history \({{\textbf {u}}}^*(t)\) and, possibly, the initial and final times, \(t_0\) and \(t_f\), such that

with \(Q(\Delta v^s)\) a measure of the stochastic cost, is minimized, while the state is subjected to a simplified Itô stochastic differential equation [34]

with \({{\textbf {f}}}\) being the deterministic part of the dynamics and \(\varvec{\omega }\) the process noise associated to uncertainty in dynamics and in maneuver execution.

Moreover, the state is subjected to initial constraints on dispersion

and on knowledge

and a final constraint

with \({\mathcal {E}}\) indicating a generalized uncertainty ellipsoid and \(\hat{{\mathcal {E}}}_\delta\) the desired ellipsoid.

The navigation costs are estimated through a guidance law, fed by the OD scheme. It means

and

with \(\texttt{GL}\) and \(\texttt{OD}\) being the guidance law and orbit determination procedures respectively, \(\hat{{{\textbf {x}}}}\) is the estimated state, \({{\textbf {x}}}\) the real state and \({{\textbf {x}}}^*\) is the nominal state.

Generally speaking, three main building blocks can be identified for the integrated approach: (1) a procedure to propagate uncertainty and to evaluate the stochastic measures, (2) an OD scheme, and (3) a guidance law. These blocks can vary and should be selected properly, depending on the analyzed scenario.

3 Test Case Scenario

A comprehensive method for robust stochastic mission analysis seems to be unfeasible: deep-space exploration missions have diverse characteristics, and mission profiles vary so widely that a single technique will never be able to produce a good solution for every situation. In fact, Problem 1 provides a general framework whose building blocks should be adapted to the scenario of interest. In this work, the LUMIO transfer phase, from a low lunar orbit (LLO) to a halo orbit, is considered. As already presented in Sect. 1, this scenario provides a relevant environment to test the revised approach and assess its performance. Moreover, there is an increasing interest in the community in spacecraft flying towards Lagrange point orbits. Numerous space missions will be launched in the time frame 2021–2025, using both traditional spacecraft (e.g., the James Webb Space Telescope by NASA [35] or ESA’s Euclid [36]) and CubeSats (e.g., EQUULEUS by JAXA [31] or LUMIO [9]). The Lunar Gateway, a small station in the lunar environment, will fly on a near-rectilinear halo orbit as well and, of course, several servicing missions are planned to exploit similar transfers [37].

The Lunar Meteoroid Impact Observer (LUMIO) [38] was one of the proposals submitted to ESA’s SysNova Competition LUnar CubeSats for Exploration (LUCE). LUMIO was selected as one of the four concurrent studies run under ESA contract, and it won the challenge ex aequo. In 2020, ESA considered the mission for further implementation. After a successful Preliminary Requirements Review (PRR), LUMIO entered Phase B in September 2022 (Fig. 4).

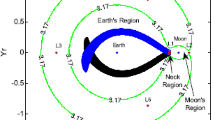

The LUMIO space segment is composed of a 12U form-factor CubeSat, whose aim is to observe, quantify, and characterize meteoroid impacts on the lunar farside by detecting their flashes, to provide global information on the lunar meteoroid environment and to contribute to lunar situational awareness. LUMIO will be released by the carrier on a 600 km × 20000 km low lunar orbit with free angular parameters, and must reach its designated operative orbit, a quasi-periodic halo orbit about Earth–Moon \(L_{2}\) characterized by a Jacobi constant \(C_j = 3.09\) [9], depicted in Fig. 5.

The transfer phase, in which LUMIO is brought from the low lunar orbit to the operative orbit, is the main topic of this work and is analyzed in detail in the remainder of this section.

The whole mission profile is summarized in Fig. 4.

LUMIO mission profile (from [9])

During the transfer phase, free transport mechanisms are leveraged to reach the target halo. Specifically, an intersection in the configuration space has to be sought between the halo stable manifolds and a selenocentric transition orbit. Since the sought intersection occurs only position-wise, a maneuver is necessary for orbital continuity. This maneuver places the spacecraft on the stable manifold of the target halo; it is thus called stable manifold injection maneuver (SMIM) and is indicated with \(\Delta v_{\text {SMIM}}\). After the transfer, the halo injection maneuver (HIM), \(\Delta v_{\text {HIM}}\), injects the CubeSat into the final operative orbit. A detailed study of the TCM problem for several LPOs, exploiting simple dynamical systems concepts, has shown that two TCMs provide sufficient degrees of freedom [39]. Thus, two TCMs are scheduled to occur during the transfer along the stable manifold in order to compensate for trajectory deviations related to control and dynamics uncertainties. In order to correctly estimate their magnitude, an OD phase is foreseen before each TCM, with the first allocated just after the SMIM and the second scheduled to start after 6 days. Nominally, the first maneuver has to occur at least two days after the SMIM, while the second occurs 8 days after \(\Delta v_{\text {SMIM}}\). The maneuver time is selected in order to give the ground segment enough time to perform orbit determination, compute correction maneuvers and send commands to the spacecraft. Indeed, at least one day for the OD and a one-day cut-off time between the end of the OD phase and the application of the TCM should be considered in order to be compliant with ESOC guidelines. A timeline for the transfer phase is given in Fig. 6. Usually, the nominal trajectory does not have impulses when the correction maneuvers are applied. However, a non-null maneuver can be foreseen at each TCM time in order to broaden the set of feasible transfer trajectories.

In conclusion, LUMIO transfer phase, as presented in Fig. 6, can be subdivided into three sub-phases:

1. OD phase (between \(t_{ODi}^0\) and \(t_{ODi}^f\)): during this phase, a visibility window is identified (see Sect. 4.2), and the OD algorithm is exploited within it. Nominally, OD phases are placed between days 0 and 1 and between days 6 and 7 after \(t_0\);

2. Cut-off phase (between \(t_{ODi}^f\) and \(t_{TCMi}\)): in this phase the Differential Guidance (Sect. 4.3) is exploited to compute the correction maneuver, which is applied at the end of the phase;

3. Ballistic phase (between \(t_{TCM1}\) and \(t_{OD2}^0\), and between \(t_{TCM2}\) and \(t_f\)): in this phase, the spacecraft undergoes a ballistic flight.
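The nominal timeline above can be encoded and checked against the stated constraints (at least one day of OD and at least one day of cut-off before each TCM). This is a minimal sketch: the class and function names are hypothetical, the TCM epochs are set to the nominal 2 and 8 days after the SMIM, and the final time of 14 days is an assumed placeholder, since the transfer duration is not fixed here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class NavCycle:
    """One OD + TCM cycle; all epochs in days after the SMIM."""
    od_start: float
    od_end: float
    tcm: float

def is_compliant(cycle, min_od=1.0, min_cutoff=1.0):
    """Check the minimum OD duration and the minimum cut-off time."""
    long_enough_od = cycle.od_end - cycle.od_start >= min_od
    long_enough_cutoff = cycle.tcm - cycle.od_end >= min_cutoff
    return long_enough_od and long_enough_cutoff

def phases(cycles, t_f):
    """List (label, start, end) for the OD, cut-off and ballistic phases."""
    out = []
    for i, c in enumerate(cycles, start=1):
        out.append((f"OD{i}", c.od_start, c.od_end))
        out.append((f"cut-off{i}", c.od_end, c.tcm))
        # The ballistic arc ends at the next OD start, or at the final time
        end = cycles[i].od_start if i < len(cycles) else t_f
        out.append((f"ballistic{i}", c.tcm, end))
    return out

# Nominal cycles from the text: OD on days 0-1 and 6-7, TCMs on days 2 and 8
nominal = [NavCycle(0.0, 1.0, 2.0), NavCycle(6.0, 7.0, 8.0)]
T_F = 14.0  # assumed final time (days), for illustration only

for label, start, end in phases(nominal, T_F):
    print(f"{label:<11s} {start:5.1f} -> {end:5.1f} days")
print("compliant:", all(is_compliant(c) for c in nominal))
```

Such a check is useful when the OD and TCM epochs become optimization variables, since any candidate timeline violating the minimum OD or cut-off durations can be rejected before propagation.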

3.1 Dynamics

The motion of the CubeSat in the transfer phase can be described using the roto-pulsating restricted n-body problem (RPRnBP) [8], a high-fidelity dynamical model able to correctly represent the highly non-linear trajectory of LUMIO. The use of an adimensional roto-pulsating frame (RPF) eases the motion description both for the transfer trajectory and for the operative orbit, since they are generalizations of trajectories existing in the restricted 3-body problem.

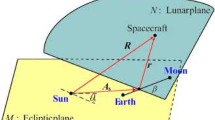

Thus, a non-uniformly rotating, barycentric, adimensional reference frame (\({\hat{\xi }}\), \({\hat{\eta }}\), \({\hat{h}}\)), called synodic frame, is defined in order to write the equations of motion. The center of this system is placed at the primaries’ barycenter (i.e., the Earth–Moon barycenter); the \({\hat{\xi }}\) axis is aligned with the two primaries, with \({\hat{h}}\) orthogonal to the plane of motion. Distances are normalized according to the instantaneous distance between the primaries. The unit distance can be defined as

$$\begin{aligned} k=\left\| {{\textbf {r}}}_M-{{\textbf {r}}}_E\right\| \end{aligned}$$

where \({{\textbf {r}}}_E\) and \({{\textbf {r}}}_M\) are the primaries’ positions in J2000. Therefore, k varies in time according to the mutual position of the two primaries, thus creating a pulsating reference system. Moreover, time is adimensionalized such that the mean motion of the primaries about their common barycenter, \(\omega =\sqrt{\frac{G\left( m_{E} +m_{M}\right) }{{\tilde{a}}^3}}\), is set to unity, with \(m_{E}\) and \(m_{M}\) the Earth and Moon masses, respectively, and \({\tilde{a}}\) the mean semi-major axis. By choosing a constant mean motion, the average revolution period of the primaries is \(2\pi\). In this framework, the Earth and the Moon have fixed positions, \([-\mu , 0, 0]^T\) and \([1-\mu , 0, 0]^T\) respectively, with \(\mu ={m_{M}}/{\left( m_{E} +m_{M}\right) }\) being the mass parameter of the system (Fig. 7).
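The normalization just described can be sketched numerically. The gravitational parameters and the mean semi-major axis below are commonly used approximate values assumed for illustration, not the ones adopted in this work, and the primaries’ states are arbitrary hypothetical vectors rather than ephemeris data. The function names and the row-wise arrangement of the frame axes are also choices made here.

```python
import numpy as np

# Assumed constants (approximate, for illustration only)
GM_EARTH = 398600.4418   # km^3/s^2
GM_MOON = 4902.800       # km^3/s^2
A_MEAN = 384400.0        # km, mean Earth-Moon semi-major axis

mu = GM_MOON / (GM_EARTH + GM_MOON)                  # mass parameter
omega = np.sqrt((GM_EARTH + GM_MOON) / A_MEAN**3)    # mean motion, rad/s
period_days = 2.0 * np.pi / omega / 86400.0          # revolution period

def unit_distance(r_E, r_M):
    """Instantaneous Earth-Moon distance, used as the pulsating length unit."""
    return np.linalg.norm(r_M - r_E)

def synodic_axes(r_E, v_E, r_M, v_M):
    """Rows are the xi, eta, h unit vectors expressed in the inertial frame."""
    r_rel, v_rel = r_M - r_E, v_M - v_E
    xi = r_rel / np.linalg.norm(r_rel)       # along the primaries
    h = np.cross(r_rel, v_rel)
    h = h / np.linalg.norm(h)                # normal to the plane of motion
    eta = np.cross(h, xi)                    # completes the right-handed triad
    return np.vstack((xi, eta, h))

def to_rpf_position(r, r_E, v_E, r_M, v_M):
    """Inertial position -> adimensional roto-pulsating position."""
    b = (1.0 - mu) * r_E + mu * r_M          # Earth-Moon barycenter
    axes = synodic_axes(r_E, v_E, r_M, v_M)
    return axes @ (r - b) / unit_distance(r_E, r_M)

# Hypothetical primaries' states (km, km/s)
r_E = np.array([1000.0, 2000.0, -500.0])
v_E = np.array([0.01, -0.02, 0.0])
r_M = r_E + np.array([300000.0, 200000.0, 50000.0])
v_M = v_E + np.array([-0.5, 0.8, 0.1])

earth_rpf = to_rpf_position(r_E, r_E, v_E, r_M, v_M)
moon_rpf = to_rpf_position(r_M, r_E, v_E, r_M, v_M)
print(mu, period_days)
print(earth_rpf, moon_rpf)
```

Whatever the instantaneous geometry, the two primaries map to \([-\mu , 0, 0]^T\) and \([1-\mu , 0, 0]^T\), which is the property that makes the frame convenient for describing halo orbits and their manifolds.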

The equations of motion for the RPRnBP read [40]

where \(\varvec{\rho }\) is the spacecraft position in the RPF, primes denote derivatives with respect to the adimensional time \(\tau\), dots indicate derivatives with respect to the dimensional time and \(\nabla \Omega =\partial \Omega /\partial \varvec{\rho }\) is the gradient of the pseudopotential

where \({\mathcal {S}}\) is the set of bodies containing the primaries, each body j being characterized by the adimensional gravitational parameter \({\hat{\mu }}_j=m_j/(m_{E}+m_{M})\), the second harmonic coefficient \(J_{2_{j}}\) related to the non-spherical gravitational distribution and the equatorial radius \(R_{B_j}\), \(M=C^T I_z C\), while \(\varvec{\delta }_j=\varvec{\rho }-\varvec{\rho }_j\), and \(\delta _j\) is its magnitude. The adimensionalized solar radiation pressure (SRP) acceleration in Eq. (9) can be expressed, using the cannonball model, as

where \(\varvec{\delta }_S\) is the Sun position and \(\gamma _0\) is the SRP parameter, defined as

with \(c_r\) the spacecraft reflectivity coefficient, A/m its area-to-mass ratio and c the speed of light in the vacuum.

Mixed derivative notation in Eq. (9) acknowledges that ephemeris data are numeric, discrete, and provided for regular dimensional time. Indeed, the planets' positions \({{\textbf {r}}}_j\) in J2000, as well as the physical constants, are retrieved by using SPICE [41, 42]. The transformations between the solar barycentric inertial frame of reference (i.e., J2000) and the RPF are

with \({{\textbf {b}}}\) the Earth–Moon barycenter position

and C the cosine angle matrix between J2000 and the RPF

with

In Table 1, values for the most useful parameters in the dynamics are presented.

3.1.1 Variational Equations

In order to compute the trajectory correction maneuvers and the derivatives of the spacecraft state, useful in the optimization process, the variational equations are required. Being \(\varvec{\chi }\) the spacecraft state in the RPF, the state-space representation of Eq. (9) is

with \(\varvec{\nu }\) the spacecraft velocity.

The state transition matrix (STM) can be computed by integrating the variational equation

with

being the Jacobian of the dynamics right-hand side, where [8]

while

3.1.2 Coordinates Transformation

The initial LLO is provided using Keplerian elements, i.e., a constant semi-major axis \(a={12037.1\,\mathrm{\text {k}\text {m}}}\) and a constant eccentricity \(e=0.65848\) plus a set of free angular parameters \(\varvec{\alpha }_0=\left[ i_0, \Omega _0, \omega _0, \theta _0\right]\), containing the inclination \(i_0\), the right ascension of the ascending node \(\Omega _0\), the argument of the pericenter \(\omega _0\) and the true anomaly \(\theta _0\). Keplerian parameters are given in the Moon-centered Moon-equatorial at date (MCME2000) reference frame. In this frame, the z-axis is aligned with the Moon’s spin axis on January 01, 2000, the x-axis is aligned with the Earth mean equinox (First point of Aries) and the y-axis completes the right-handed reference frame. Thus, an additional transformation is needed to go from the MCME2000 Keplerian elements to the Cartesian coordinates in the J2000 reference frame, before conversion to the RPF. Indeed, the Keplerian elements are converted into Cartesian coordinates \({{\textbf {x}}}_{\text {MCME}}\) [43]

with \(p=a\left( 1-e^2\right)\) the semi-latus rectum, where the matrix \(T_1=R_z\left( \omega _0\right) R_x\left( i_0\right) R_z\left( \Omega _0\right)\) is defined through 3-dimensional rotation matrices. Then, the state is rotated into the Moon-centered J2000 frame

with

where \(i_M={24\,\mathrm{\deg }}\) is the lunar axial tilt with respect to the Earth’s equator [44]. Eventually, the position on the LLO is written in the solar barycentric J2000 frame by translating the origin from the Moon to the Solar System Barycenter, i.e.

Then, the J2000 initial state \({{\textbf {x}}}_0\) is converted into the RPF state \(\varvec{\chi }_0\) by applying Eqs. (13, 14). The SMIM is applied on top of this initial state.
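The first step of this chain, from Keplerian elements to Cartesian coordinates in the frame where the elements are defined, can be sketched as follows. This is a minimal example under stated assumptions: the Moon gravitational parameter is an illustrative value, the rotation matrices are active rotations (so the perifocal-to-reference matrix is \(R_z(\Omega )R_x(i)R_z(\omega )\), which may be the transpose of the matrix used in the text depending on the convention adopted there), and the standard semi-latus rectum \(p=a(1-e^2)\) is used. The subsequent rotation to J2000 and the translation to the Solar System Barycenter are not included.

```python
import numpy as np

GM_MOON = 4902.8001  # km^3/s^2, assumed illustrative value


def rot_z(angle):
    """Active rotation about the z-axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def rot_x(angle):
    """Active rotation about the x-axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]])


def kep_to_cart(a, e, inc, raan, argp, theta, gm=GM_MOON):
    """Keplerian elements (km, rad) to position (km) and velocity (km/s)."""
    p = a * (1.0 - e**2)                      # semi-latus rectum
    r = p / (1.0 + e * np.cos(theta))         # orbital radius
    # State in the perifocal frame (x toward pericenter, z along angular momentum).
    r_pf = r * np.array([np.cos(theta), np.sin(theta), 0.0])
    v_pf = np.sqrt(gm / p) * np.array([-np.sin(theta), e + np.cos(theta), 0.0])
    # Perifocal-to-reference rotation (active convention, assumed here).
    T = rot_z(raan) @ rot_x(inc) @ rot_z(argp)
    return T @ r_pf, T @ v_pf


# Usage with the LLO size and shape quoted in the text and arbitrary angles.
a_llo, e_llo = 12037.1, 0.65848
r0, v0 = kep_to_cart(a_llo, e_llo, np.radians(60.0), np.radians(30.0),
                     np.radians(45.0), 0.0)
```

At \(\theta _0=0\) the returned position has norm \(a(1-e)\), and the specific orbital energy equals \(-GM/(2a)\) for any true anomaly, which gives two simple sanity checks.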

3.2 Uncertainty

In this test case, uncertainties are considered to be related only to the navigation and command errors. Errors generated by uncertainties in the dynamic model (e.g., solar radiation pressure or residual accelerations) affect the transfer trajectory to a limited extent, due to the short propagation time, and are dominated by the other errors. Thus, they are not considered in the model.

Navigation errors are taken into account as measurement model deviations in the OD phase and through an imperfect state knowledge at the initial time. The latter leads the initial state to be modeled as a Gaussian random variable with mean as the nominal initial state, i.e.

where \(P_{\varvec{\chi }}={{\,\textrm{diag}\,}}\left( \left[ \sigma ^2_{\varvec{\rho }}I_3, \, \sigma ^2_{\varvec{\nu }}I_3\right] \right)\) is the 6-dimensional diagonal covariance matrix, with \(\sigma ^2_{\varvec{\rho }}\) and \(\sigma ^2_{\varvec{\nu }}\) the initial position and velocity variances, respectively.

Moreover, in order to account for differences between the physical model and the real world, command actuation errors in the nominal impulses are considered, while TCMs are assumed free from uncertainties. Since uncertainty in the HIM does not affect the transfer phase and can be compensated with the station keeping algorithm foreseen in the operative orbit, the only significant uncertain maneuver is the SMIM. Thrust magnitude and direction are both modeled as Gaussian variables with standard deviations \(\sigma _{\Delta v}\) in magnitude and \(\sigma _\delta\) in pointing angle. The magnitude error is defined as a fraction of the nominal value, i.e. \(\sigma _{\Delta v}=u\Delta v_{\text {SMIM}}\), with \(u\ll 1\). The covariance matrix computation for the uncertainty on the SMIM requires retrieving the SMIM vector in spherical coordinates, thus

where \(\Delta v\) is the magnitude, and \(\alpha\) and \(\epsilon\) are the Azimuth and Elevation respectively. Then, the associated spherical covariance, i.e., \(P_{\Delta v}^s={{\,\textrm{diag}\,}}\left( \sigma _{\Delta v}^2, \sigma _\delta ^2, \sigma _\delta ^2\right)\), is transformed in Cartesian coordinates

with J the Jacobian matrix of the cartesian-to-spherical conversion

The total initial covariance can be computed as a combination of the initial state error, plus the maneuver error

In doing so, the number of random variables can be reduced from 9, i.e. the 6-dimensional initial state plus the 3 SMIM components, to only 6 stochastic states, reducing the probabilistic space. Characteristics of the random variables are reported in Table 2.
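The construction of the maneuver covariance and its merging with the initial state covariance can be sketched as below. Several choices here are assumptions made for illustration only: the azimuth/elevation convention \(\Delta {{\textbf {v}}}=\Delta v\,[\cos \epsilon \cos \alpha ,\ \cos \epsilon \sin \alpha ,\ \sin \epsilon ]^T\), the use of the Jacobian of the Cartesian components with respect to the spherical ones to map the covariance, and the additive combination on the velocity block of the state covariance.

```python
import numpy as np


def spherical_jacobian(dv, az, el):
    """Jacobian of [x, y, z] with respect to [dv, az, el] (assumed convention)."""
    ca, sa, ce, se = np.cos(az), np.sin(az), np.cos(el), np.sin(el)
    return np.array([
        [ce * ca, -dv * ce * sa, -dv * se * ca],
        [ce * sa,  dv * ce * ca, -dv * se * sa],
        [se,       0.0,           dv * ce],
    ])


def maneuver_covariance(dv_vec, u, sigma_delta):
    """Cartesian covariance of an impulse with magnitude and pointing errors."""
    dv = np.linalg.norm(dv_vec)
    az = np.arctan2(dv_vec[1], dv_vec[0])
    el = np.arcsin(dv_vec[2] / dv)
    # Spherical covariance: magnitude error is a fraction u of the nominal value.
    P_s = np.diag([(u * dv) ** 2, sigma_delta**2, sigma_delta**2])
    J = spherical_jacobian(dv, az, el)
    return J @ P_s @ J.T


def total_initial_covariance(sigma_r, sigma_v, P_dv):
    """Initial state covariance plus maneuver covariance on the velocity block."""
    P = np.diag([sigma_r**2] * 3 + [sigma_v**2] * 3)
    P[3:, 3:] += P_dv  # assumed additive combination (independent errors)
    return P


# Usage with hypothetical numbers (not those of Table 2).
P_dv = maneuver_covariance(np.array([2.0, 0.0, 0.0]), u=0.01, sigma_delta=0.02)
P0 = total_initial_covariance(1e-3, 1e-4, P_dv)
```

For an impulse along the x-axis the result is diagonal, with variance \((u\Delta v)^2\) along the thrust direction and \((\Delta v\,\sigma _\delta )^2\) across it, which matches the intuition that pointing errors act transversally.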

4 Methodology

In order to deal with the revised approach for the LUMIO transfer phase case, a proper methodology should be devised, taking into account its peculiarities. It is of paramount importance to clarify: (i) which method is used for the uncertainty propagation and how stochastic variables are estimated, (ii) how the trajectory correction maneuvers are computed, and (iii) how the orbit determination is performed. Moreover, the simplifying assumptions are presented as a preliminary for the optimization problem statement.

4.1 Uncertainty Propagation

In order to select an appropriate uncertainty quantification (UQ) scheme, four qualitative criteria are considered: (i) Accuracy: how accurately the method estimates the propagated uncertainty; (ii) Feasibility: whether the hypotheses of the method are compatible with the problem; (iii) Computational time: a qualitative measure of the computational burden required by the method; (iv) Suitability: how well the method fits inside an optimization algorithm. The test case scenario is characterized by a limited number of thrust impulses, corresponding to few uncertainties, but the spacecraft is flying in a highly perturbed environment. Indeed, a highly nonlinear solution is expected; however, the number of random variables is low. For this reason, a nonlinear uncertainty quantification method is needed [45] and, since the uncertainty vector is small, the curse of dimensionality has a limited impact. Starting from these considerations, Polynomial Chaos Expansion, specifically in its non-intrusive form, seems to offer the best balance among the criteria.

Polynomial Chaos Expansion (PCE) is a nonlinear UQ technique, in which the input uncertainties and the solution are approximated using a series expansion based on orthogonal polynomials; thus, the approximated solution can be written as [46]

where \(\Lambda _{p,d}\) is the set of multi-indices of size d and order p defined on nonnegative integers, \(\varvec{\xi }=\left[ \xi _1,\dots ,\xi _d\right]\) is the vector of input random variables, in which each element \(\xi _i\) is an independent, identically distributed variable. The basis functions \(\left\{ \psi _{\varvec{\alpha }}(\varvec{\xi })\right\}\) are multidimensional spectral polynomials, orthonormal with respect to the joint probability measure \(\rho \left( \varvec{\xi }\right)\) of the vector \(\varvec{\xi }\)

with \(\Gamma ^d\) representing the d-dimensional hypercube where the random variables \(\varvec{\xi }\) are defined and \(\delta _{\varvec{\alpha }\varvec{\beta }}\) the Kronecker delta function. Thus, the choice of the basis functions depends only on \(\rho \left( \varvec{\xi }\right)\). For instance, Hermite polynomials are the basis for normal random variables, while Legendre polynomials are the basis for the uniform distribution [47].

Generation of a PCE means computing the generalized Fourier coefficients \({{\textbf {c}}}_{\varvec{\alpha }}(t)\) by projection of the exact solution \({{{\textbf {x}}}}(t,\varvec{\xi })\) onto each basis function \(\psi _{\varvec{\alpha }}(\varvec{\xi })\), truncated at the total order p

The statistics of \({{\textbf {x}}}(t,\varvec{\xi })\) can be approximated by those of \(\hat{{{\textbf {x}}}}(t,\varvec{\xi })\) from the coefficients \({{\textbf {c}}}_{\varvec{\alpha }}(t)\) [48]. In fact, the mean is given by

because \(\psi _0=1\) and \(E[\psi _{\varvec{\alpha }}]=0\) for \(\varvec{\alpha }\ne {{\textbf {0}}}\). The covariance can be computed as

where the orthonormality of the polynomial basis is exploited.
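The way mean and covariance follow from the coefficients can be illustrated with a short sketch. It assumes that the coefficients are stored row-wise in an array whose first row corresponds to the zero multi-index and that the basis is orthonormal; the array layout and the function name are choices made here for illustration.

```python
import numpy as np


def pce_mean_cov(coeffs):
    """Mean and covariance from PCE coefficients in an orthonormal basis.

    coeffs has shape (n_terms, n_state); row 0 is the zero multi-index term.
    """
    mean = coeffs[0]
    higher = coeffs[1:]
    # Sum over alpha != 0 of c_alpha c_alpha^T, thanks to orthonormality.
    cov = higher.T @ higher
    return mean, cov


# Example: x = 1 + 2*xi and y = 3 - xi with xi ~ N(0, 1),
# using psi_0 = 1 and psi_1 = xi (orthonormal Hermite polynomials).
coeffs = np.array([[1.0, 3.0],
                   [2.0, -1.0]])
mean, cov = pce_mean_cov(coeffs)
```

In this example the variances are 4 and 1 and the cross-covariance is -2, as can be verified directly from the definitions of x and y.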

PCE coefficients can be estimated by performing a Galerkin projection of the governing stochastic equations onto the \(\left\{ \psi _{\varvec{\alpha }}(\varvec{\xi })\right\}\) subspace (the so-called intrusive method), or by solving a least-squares regression or a pseudospectral collocation problem (the so-called non-intrusive methods) [48].

In the selected scenario, pseudospectral collocation is exploited due to its flexibility and reduced computational burden. Pseudospectral collocation is based on the numerical integration of Eq. (34) by collocating \({{\textbf {x}}}(t,\varvec{\xi })\) on certain quadrature nodes defined on \(\Gamma ^d\) in order to find \({{\textbf {c}}}_{\varvec{\alpha }}(t)\). This means

where \(\varvec{\xi }_q\) is the set of quadrature nodes, \(\omega _q\) are the quadrature weights, and \({\mathcal {Q}}\) is the quadrature scheme. Generally speaking, for a d-dimensional multivariate case, the quadrature formula can be written as

A total of \(M=\prod _{i=1}^{d}m_i\) points for the function evaluation is needed. If the same number m of points is taken along all the dimensions of \(\xi _i\), i.e., \(m_1=\dots =m_d=m\), the approximation error for the quadrature formula in Eq. (38) is in the order of \({\mathcal {O}}(M^{-(2s-1)/d})\) for a \({\mathcal {C}}^s\) function \({{\textbf {f}}}(\varvec{\xi })\) [48]. Keeping the error constant, the number of function evaluations M grows exponentially fast with respect to the dimensionality d of the random inputs. This issue is the so-called curse of dimensionality. In order to relieve the computational burden, higher-order interactions can be neglected, so as to reduce the number of grid points. Sparse grids, such as Smolyak’s grid, are designed to serve this purpose.
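A minimal sketch of pseudospectral projection on a full tensor-product grid is given below for standard normal inputs. It relies on Gauss-Hermite nodes from NumPy and on orthonormalized probabilists' Hermite polynomials; the function names and the test function are hypothetical, and the sketch is meant only to show the mechanics and the \(m^d\) growth of the grid, not the CUT-based scheme adopted in this work.

```python
import itertools
import math

import numpy as np
from numpy.polynomial.hermite_e import hermegauss, hermeval


def psi_1d(n, x):
    """Orthonormal probabilists' Hermite polynomial of degree n."""
    c = np.zeros(n + 1)
    c[n] = 1.0
    return hermeval(x, c) / math.sqrt(math.factorial(n))


def tensor_grid(m, d):
    """Full tensor Gauss-Hermite grid: m**d nodes and normalized weights."""
    x, w = hermegauss(m)
    w = w / math.sqrt(2.0 * math.pi)  # weights now integrate the N(0,1) density
    nodes = np.array(list(itertools.product(x, repeat=d)))
    weights = np.array([np.prod(c) for c in itertools.product(w, repeat=d)])
    return nodes, weights


def project(f, m, d, p):
    """PCE coefficients c_alpha = sum_q w_q f(xi_q) psi_alpha(xi_q), |alpha| <= p."""
    nodes, weights = tensor_grid(m, d)
    fvals = np.array([f(xi) for xi in nodes])
    coeffs = {}
    for alpha in itertools.product(range(p + 1), repeat=d):
        if sum(alpha) > p:
            continue
        basis = np.prod([psi_1d(alpha[i], nodes[:, i]) for i in range(d)], axis=0)
        coeffs[alpha] = float(np.sum(weights * fvals * basis))
    return coeffs


# Hypothetical test function with known coefficients: 1, 2 and 1.
coeffs = project(lambda xi: 1.0 + 2.0 * xi[0] + xi[0] * xi[1], m=3, d=2, p=2)
n_full_grid_6d = len(tensor_grid(3, 6)[1])  # 3**6 nodes in six dimensions
```

With three nodes per dimension the two-dimensional grid has 9 points, while the same rule in six dimensions already needs 729 evaluations, which is the growth that sparse grids and CUT aim to mitigate.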

The number of points can be further reduced using the so-called conjugate unscented transformation (CUT) [49]. CUT is the natural extension of the unscented transformation but, instead of employing only sigma-points on the principal axes of the initial distribution function, it propagates sigma-points chosen on some peculiar non-principal axes, giving the possibility to correctly estimate higher-order moments of stochastic integrals [50]. Thus, it can be used to efficiently compute the generalized Fourier PCE coefficients exploiting Eq. (34). So, CUT can be seen as just another way to compute the stochastic integral given in Eq. (37). This hybrid technique, using CUT to estimate the PCE coefficients, is unimaginatively labeled PCE-CUT. This approach exhibits several advantages over the standard sparse grid interpolation techniques, such as positive quadrature weights and fewer quadrature points.

The conjugate unscented transformation provides high-order quadrature rules by satisfying the so-called moment constraint equations (MCE). They are a set of equations that can be found by comparing the definition of a stochastic integral [Eq. (34)] with its quadrature approximation [Eq. (37)]. For the LUMIO transfer, quantities of interest are considered to be correctly represented using quadrature points that completely satisfy the MCE up to the \(4^{\textrm{th}}\) order. In this case, the CUT solution can be found by selecting the axes listed in Table 3, with the numerical values of the parameters shown in Table 4 [50].

The use of PCE-CUT4 requires the propagation of 77 samples in order to compute the quantity of interest. The equivalent full tensor-product grid would require \(3^6=729\) samples, while Smolyak’s grid needs 85 points. Thus, a saving of about 10% in computational time is expected with respect to Smolyak’s grid. Moreover, the positive quadrature weights improve the numerical stability, giving more accurate and faster results [51].

CUT4 results are computed by considering normalized Gaussian variables. If the random variables are represented by a generic multivariate Gaussian distribution with mean \(\bar{\varvec{\chi }}\) and covariance matrix P, the generic quadrature point \(\varvec{\zeta }_q\) can be retrieved by exploiting the affine transformation

with S being the Cholesky decomposition of P, i.e., \(P=S^TS\).
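The affine mapping of normalized quadrature points to a generic Gaussian can be sketched as follows. Note that `numpy.linalg.cholesky` returns a lower-triangular factor L with \(P=LL^T\), so, with the convention \(P=S^TS\) used in the text, \(S=L^T\). The small set of unscented-like points used in the example is a stand-in chosen for brevity and is not the CUT4 point set of Tables 3 and 4.

```python
import numpy as np


def map_quadrature_points(xi, mean, P):
    """Map nodes of a standard normal, shape (n_pts, d), to N(mean, P)."""
    L = np.linalg.cholesky(P)  # lower triangular, P = L @ L.T (so S = L.T)
    # Row-wise version of zeta_q = mean + S^T xi_q = mean + L xi_q.
    return mean + xi @ L.T


# Stand-in point set in 2D: points on the principal axes with equal weights.
d = 2
xi = np.sqrt(d) * np.vstack([np.eye(d), -np.eye(d)])
w = np.full(2 * d, 1.0 / (2 * d))

mean = np.array([1.0, -2.0])
P = np.array([[4.0, 1.2], [1.2, 1.0]])
zeta = map_quadrature_points(xi, mean, P)

# Weighted statistics of the mapped points reproduce mean and covariance.
mapped_mean = w @ zeta
mapped_cov = (zeta - mapped_mean).T @ ((zeta - mapped_mean) * w[:, None])
```

Since the transformation is affine, the quadrature weights are unchanged and only the nodes are moved.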

In order to assess the convergence accuracy of PCE-CUT4 in the LUMIO transfer phase case, the ratio between the i-th PCE coefficient and the first one, \({{\textbf {c}}}_{{\textbf {0}}}\), is evaluated. Indeed, the faster this ratio decays, the more accurate the expansion. Moreover, there is a direct connection between this value and the digit precision [48]. Figure 8 illustrates the convergence accuracy for two representative components of position and velocity, showing that the PCE converges quickly, with 5-digit precision in (adimensionalized) position and 4-digit precision in (adimensionalized) velocity. An alternative assessment can be given by comparing the standard deviation obtained from a Monte Carlo (MC) simulation with that of the proposed technique. Figure 9 shows that the final values are similar for both MC and PCE. However, the PCE-CUT4 convergence rate exceeds the MC one, i.e., more Monte Carlo samples are needed to achieve the accuracy given by the PCE.

4.1.1 Stochastic Variables Estimation

Once the PCE coefficients \({{\textbf {c}}}_{\varvec{\alpha }}\) at a given time \(\tau\) are retrieved by means of the 4th-order CUT, the stochastic state at a given time can be estimated as [Eq. (32)]

This solution is expected to be strongly non-Gaussian. For this reason, the final stochastic state and the functions depending on it cannot be described employing only mean and covariance, but the full probability density function (PDF) has to be estimated and then used to evaluate probabilities. In order to do that, kernel density estimation (KDE) [52] is used. In this technique, the surrogate model is exploited to inexpensively produce a number n of samples of the quantity of interest \(q_j=q\left( \varvec{\chi }(\tau ,\varvec{\xi }_j)\right)\), evaluated at n random realizations \(\varvec{\xi }_j\), with \(j=\{1, \dots , n\}\). Then they are used to estimate the PDF as

where h is the bandwidth, and K is the kernel function. The kernel function is selected as the Gaussian PDF, i.e., \(K(z)=\frac{1}{\sqrt{2\pi }}\exp \left[ -\frac{z^2}{2}\right]\). The cumulative distribution function (CDF) can be computed as

where \(G(q)=\int _{-\infty }^{q}K\left( z\right) \, \text {d}z\). In the case of a Gaussian kernel, \(G(q) = \frac{1}{2}\left[ 1+{{\,\textrm{erf}\,}}\left( \frac{q}{\sqrt{2}}\right) \right]\).

The selection of the bandwidth is tricky and different algorithms exist. In this work, Silverman’s rule of thumb [53] is considered: the value of h is selected as the bandwidth minimizing the mean integrated squared error for a Gaussian distribution. In this case,

where \({{\hat{\sigma }}}\) is the standard deviation of the n samples. Using KDE is preferred with respect to a simple counting method, since a smooth \({\mathcal {C}}^\infty\)-class CDF is obtained and this is helpful in the optimization procedure.
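A compact sketch of Gaussian-kernel density and distribution estimates with a Silverman-type bandwidth is given below. The bandwidth is written in its usual closed form for a Gaussian reference distribution, \(h=\left( 4/(3n)\right) ^{1/5}{\hat{\sigma }}\approx 1.06\,{\hat{\sigma }}\,n^{-1/5}\); function names are illustrative, and the synthetic Gaussian samples stand in for the surrogate-model outputs.

```python
import math

import numpy as np


def silverman_bandwidth(samples):
    """Rule-of-thumb bandwidth for a Gaussian kernel and Gaussian reference."""
    n = samples.size
    sigma = np.std(samples, ddof=1)
    return (4.0 / (3.0 * n)) ** 0.2 * sigma


def kde_pdf(q, samples, h):
    """Kernel density estimate at q: (1/(n h)) * sum_j K((q - q_j)/h)."""
    z = (q - samples) / h
    return float(np.mean(np.exp(-0.5 * z**2)) / (h * math.sqrt(2.0 * math.pi)))


def kde_cdf(q, samples, h):
    """Smooth CDF estimate at q: (1/n) * sum_j G((q - q_j)/h)."""
    z = (q - samples) / h
    return float(np.mean([0.5 * (1.0 + math.erf(zi / math.sqrt(2.0))) for zi in z]))


# Synthetic stand-in for samples of the quantity of interest.
rng = np.random.default_rng(0)
samples = rng.standard_normal(2000)
h = silverman_bandwidth(samples)
prob_below_one = kde_cdf(1.0, samples, h)
```

Unlike an empirical counting estimate, `kde_cdf` is a smooth function of q, which is the property exploited when derivatives of a probabilistic constraint are needed.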

In order to estimate the population quantiles, a similar technique called kernel quantile estimation (KQE) is employed. The quantile function is the left-continuous inverse of the CDF

i.e., the function returning the threshold value of q such that the probability of the variable being less than or equal to that value equals the given probability p. Using the KQE, the quantile function can be computed as [54]

where \({\tilde{q}}_j, \, j=\{1,\dots n\}\) is the sorted set of \(q_j\) and K is the kernel function. The use of this linear KQE formula gives the possibility to obtain a reliable estimate of the desired quantile value, while having a \({\mathcal {C}}^\infty\)-class function.
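One common form of kernel quantile estimator is a kernel-weighted average of the sorted samples, with weights centered on the desired probability level. The sketch below follows this idea with a Gaussian kernel; the plotting positions \((j-1/2)/n\) and the normalization of the weights are assumptions made here and may differ from the exact variant used in the equation above.

```python
import numpy as np


def kernel_quantile(samples, p, h):
    """Kernel-weighted quantile estimate at probability level p."""
    q_sorted = np.sort(samples)
    n = q_sorted.size
    # Probability level associated with each order statistic (assumed choice).
    levels = (np.arange(1, n + 1) - 0.5) / n
    # Gaussian kernel weights centered at p with bandwidth h.
    w = np.exp(-0.5 * ((levels - p) / h) ** 2)
    # Normalizing the weights limits the bias when p is close to 0 or 1.
    return float(np.sum(w * q_sorted) / np.sum(w))


# Synthetic stand-in samples; the bandwidth is a hypothetical value.
rng = np.random.default_rng(1)
samples = rng.standard_normal(5000)
q50 = kernel_quantile(samples, 0.50, h=0.02)
q90 = kernel_quantile(samples, 0.90, h=0.02)
```

Because the estimate is a smooth function of the samples (no single order statistic is selected), it can be differentiated with respect to the decision variables through the samples themselves.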

4.2 Orbit Determination Process

In order to determine the spacecraft state knowledge along the transfer phase, a covariance analysis is performed and the knowledge is estimated by means of an orbit determination algorithm.

In this scenario, radiometric tracking is selected as navigation technique. Thus, the spacecraft state is estimated by means of radiometric data processed by a ground station. Radiometric data for range and range-rate are simulated, generating pseudo-measurements as

where \(\gamma\) is the range, \({\dot{\gamma }}\) is the range rate, \(\varvec{\gamma }={{\textbf {r}}}-{{\textbf {r}}}_{GS}\) is the relative position of LUMIO with respect to the ground station, while \(\varvec{\eta }={{\textbf {v}}}-{{\textbf {v}}}_{GS}\) is the relative velocity. Pseudo-measurements can be performed only if a link between the spacecraft and the selected ground station can be established. Thus, for each OD phase, a visibility window is identified. Visibility windows are portions of the trajectory inside the OD phases where some geometric conditions are verified. With reference to Fig. 10, the requirements are:

- The Sun exclusion angle \(\phi\) should be greater than 0.5 deg in order to avoid degradation in the radiometric observable and, in turn, in the trajectory knowledge [55];

- The spacecraft elevation El above the horizon at the ground station location should be higher than a minimum value \(El_{\min }\) in order to avoid low-quality data related to the atmospheric extinction of the radiometric signal and to cope with the mounting constraints of the ground station sensor.
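The pseudo-measurement generation and the two visibility conditions can be sketched as below. The range rate is computed as the projection of the relative velocity on the line of sight. The geometry used for the checks is an assumption: the elevation is measured from the local horizon defined by a given zenith direction, and the Sun exclusion angle is taken as the angle, seen from the ground station, between the spacecraft and the Sun directions; the definition in Fig. 10 may differ.

```python
import numpy as np


def range_and_range_rate(r_sc, v_sc, r_gs, v_gs):
    """Range and range rate between spacecraft and ground station."""
    gamma_vec = r_sc - r_gs        # relative position
    eta_vec = v_sc - v_gs          # relative velocity
    gamma = np.linalg.norm(gamma_vec)
    gamma_dot = gamma_vec @ eta_vec / gamma
    return gamma, gamma_dot


def angle_deg(a, b):
    """Angle between two vectors, in degrees."""
    c = a @ b / (np.linalg.norm(a) * np.linalg.norm(b))
    return float(np.degrees(np.arccos(np.clip(c, -1.0, 1.0))))


def is_visible(r_sc, r_gs, r_sun, zenith, el_min_deg, sun_excl_min_deg=0.5):
    """Check minimum elevation and Sun exclusion angle (assumed geometry)."""
    los = r_sc - r_gs
    elevation = 90.0 - angle_deg(los, zenith)
    sun_angle = angle_deg(los, r_sun - r_gs)
    return bool(elevation > el_min_deg and sun_angle > sun_excl_min_deg)


# Hypothetical geometry in km and km/s, all vectors in the same inertial frame.
r_gs = np.array([6378.0, 0.0, 0.0])
v_gs = np.zeros(3)
r_sc = np.array([4.0e5, 0.0, 0.0])
rng_km, rng_rate = range_and_range_rate(r_sc, np.array([1.0, 0.5, 0.0]), r_gs, v_gs)
visible = is_visible(r_sc, r_gs, np.array([0.0, 1.5e8, 0.0]),
                     zenith=np.array([1.0, 0.0, 0.0]), el_min_deg=10.0)
```

In an OD simulation, measurement noise would be added to `rng_km` and `rng_rate` and the pair would be generated only at epochs for which `is_visible` returns `True`.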

For the LUMIO case, the Sardinia Deep Space Antenna (SDSA) scientific unit (64 m) located in San Basilio, Cagliari, is assumed as the baseline reception option for ground communications. Currently, SDSA has X-band reception capability. In the future, reception in the Ka-band and transmission in the X- and Ka-bands will be made available (Footnote 2). SDSA performances are summarized in Table 5.

In order to estimate the state, a navigation filter exploiting the pseudo-measurements is needed. The filter embedded in the orbit determination process is an ensemble square-root filter (EnSRF) [56, 57]. This method exploits the capability of PCE to inexpensively generate huge ensembles of samples. Moreover, the EnSRF does not require perturbed observations; thus, no sampling error is introduced in the Kalman gain matrix, improving the accuracy of the filter.

Inside the visibility window of each OD phase, the time is discretized in evenly spaced intervals following the measurement frequency imposed by the ground station. At these points, the pseudo-measurements are generated based on the current state and then used to feed the EnSRF. Between two consecutive measurement times, the estimated and the real state are propagated forward, together with the associated CUT samples, useful to compute the needed PCE coefficients. Hence,

where subscript k and \(k+1\) are referred to the measurement times \(\tau _k\) and \(\tau _{k+1}\) respectively, \(\varvec{\chi }\) is the real trajectory, \(\hat{\varvec{\chi }}\) is the estimated trajectory, while \(\varvec{\chi }^q\) are the CUT samples. A generic perturbed nonlinear measurement model is considered

with \({{\textbf {z}}}\) the measurement and \(\varvec{\varepsilon }\) is the measurement error. A linear measurement operator is therefore defined as \(H=\frac{\partial {{\textbf {h}}}\left( \varvec{\chi }\right) }{\partial \varvec{\chi }}\bigg |_{\varvec{\chi }=\hat{\varvec{\chi }}}\).

At each \(\tau _k\), within the EnSRF embedded in the OD, the PCE coefficients for the real and estimated states are retrieved

with − indicating the variables before the filter correction. The mean and covariance are estimated exploiting the PCE properties [Eqs. (35, 36)]

together with the real state mean

Measurements are obtained for both the forecast (estimated) mean and propagated (real) mean as

Then, n realizations of \(\varvec{\xi }_i\) are generated and the associated basis functions are evaluated, leading to \(\psi _{\varvec{\alpha }}^{i}=\psi _{\varvec{\alpha }}(\varvec{\xi }_i)\). An ensemble of n forecast states is then computed

The EnSRF Kalman gains are computed

with \(R=E\left[ \varvec{\varepsilon }\varvec{\varepsilon }^T\right]\) the measurement error covariance matrix.

In the EnSRF, mean and deviations are updated separately. Thus, firstly the deviation of forecast states with respect to the mean is computed

and secondly, mean and deviations are updated as

Eventually, an ensemble of n corrected states is computed from the mean and the deviations

and then they are used to update the PCE coefficients by using an inexpensive least-square regression [48]

where \(X_k^+\) is the corrected state matrix, containing \(\hat{\varvec{\chi }}_{k,+}^i\), and \(\varvec{\Psi }\) is the measurement matrix.

As last point, the new cubature points for the CUT are retrieved, by computing the corrected state statistics

and then projecting it on the generalized conjugated axes space (Eq. (39))

with S being the Cholesky decomposition of \({\hat{P}}_k^+\), i.e. \({\hat{P}}_k^+=S^TS\).
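The separate update of mean and deviations that characterizes the EnSRF can be sketched for a single scalar observation, following the serial square-root form in which the deviations are updated with a reduced gain \(\alpha K\), with \(\alpha =\left[ 1+\sqrt{R/(HPH^T+R)}\right] ^{-1}\). This is a minimal sketch under stated assumptions: the measurement operator is taken as linear (the row vector H), the observation is scalar, and the ensemble is drawn from a random generator rather than from a PCE surrogate. The PCE regression and the regeneration of the CUT points are not included.

```python
import numpy as np


def ensrf_update_scalar(ensemble, z, H, R):
    """EnSRF update for one scalar observation.

    ensemble: (n, d) forecast states; z: measurement; H: (d,) linearized
    measurement operator; R: measurement noise variance.
    """
    n = ensemble.shape[0]
    mean = ensemble.mean(axis=0)
    dev = ensemble - mean                      # forecast deviations
    PHt = dev.T @ (dev @ H) / (n - 1)          # P H^T from the ensemble
    HPHt = H @ PHt
    K = PHt / (HPHt + R)                       # Kalman gain
    alpha = 1.0 / (1.0 + np.sqrt(R / (HPHt + R)))
    mean_new = mean + K * (z - H @ mean)       # mean updated with full gain
    dev_new = dev - np.outer(dev @ H, alpha * K)  # deviations with reduced gain
    return mean_new + dev_new


# Hypothetical two-dimensional example.
rng = np.random.default_rng(0)
ens = rng.standard_normal((200, 2)) @ np.array([[1.0, 0.3], [0.0, 0.5]])
H = np.array([1.0, 0.5])
R = 0.1
updated = ensrf_update_scalar(ens, z=1.0, H=H, R=R)
```

No perturbed observations are drawn: the updated sample covariance coincides with \((I-KH)P\) up to round-off, which is the property mentioned above in connection with the absence of sampling error in the gain.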

4.3 Guidance Law

An algorithm able to compute tailored impulses in order to let the spacecraft fly the nominal path is needed. Control maneuvers reduce the dispersion with little propellant effort. In order to estimate the trajectory control maneuvers, a dedicated strategy is implemented. In the literature, these techniques are usually subdivided into two main groups: (a) Closed-loop control, if control impulses are given to track the reference guidance, or (b) Closed-loop guidance, if control impulses are given to update the whole spacecraft trajectory in order to satisfy the mission objectives. Several different guidance and control laws exist, and the choice of the most suitable method is based essentially on the mission profile, the spacecraft characteristics and the general scenario. In this work, only closed-loop control, i.e., nominal trajectory tracking, is used as control strategy. However, the algorithm computing navigation maneuvers will always be referred to as guidance algorithm. Maneuvers are computed at a prescribed time, in order to comply with on-ground segment requirements. The differential guidance (DG) is a commonly used guidance method for deep-space missions [58]. DG aims at canceling the final state deviation using two maneuvers, one at the beginning and the other at the end of the considered leg, even though usually the second control maneuver is not applied, since at the final leg time a new maneuver can be computed and the whole algorithm can be repeated in a receding horizon approach. Since the time interval between navigation maneuvers is relatively short, a first-order approximation can be used to relate the initial and final deviations, and the TCM can be computed as [5]

where \(\Phi _{rr}\), \(\Phi _{rv}\), \(\Phi _{vr}\), and \(\Phi _{vv}\) are the 3-by-3 blocks of \(\Phi (t_j, t_{j+1})\), i.e., the STM associated to the nominal trajectory between two consecutive TCM times \(t_j\) and \(t_{j+1}\), while \(\delta {{\textbf {r}}}\) and \(\delta {{\textbf {v}}}\) are the deviations with respect to the nominal trajectory. If the OD is considered in the loop, deviations are taken from the estimated trajectory

The parameter q is used either to adjust dimensions or to change the guidance algorithm behavior, favoring position deviation at the expense of velocity deviation and vice versa. In this work, \(q=0.01\) has been selected after some numerical experiments.

In this work, the control impulse in Eq. (65) is applied at each TCM time.
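A possible implementation of a weighted differential-guidance impulse is sketched below. It is obtained here by minimizing \(\left\| \delta {{\textbf {r}}}_f\right\| ^2+q\left\| \delta {{\textbf {v}}}_f\right\| ^2\) under the linear mapping given by the STM blocks, which is consistent with the role of q described above; it is an assumption that this coincides with the exact expression of Eq. (65).

```python
import numpy as np


def differential_guidance(stm, dr, dv, q=0.01):
    """Impulse minimizing |dr_f|^2 + q |dv_f|^2 to first order.

    stm: 6x6 state transition matrix between two TCM times;
    dr, dv: position and velocity deviations at the maneuver time.
    """
    Prr, Prv = stm[:3, :3], stm[:3, 3:]
    Pvr, Pvv = stm[3:, :3], stm[3:, 3:]
    A = Prv.T @ Prv + q * Pvv.T @ Pvv
    B = Prv.T @ Prr + q * Pvv.T @ Pvr
    # Post-maneuver velocity deviation w = dv + dv_tcm solves A w = -B dr.
    return -np.linalg.solve(A, B @ dr) - dv


# Check on a force-free drift of duration T, where Phi = [[I, T I], [0, I]].
T = 2.0
stm = np.block([[np.eye(3), T * np.eye(3)], [np.zeros((3, 3)), np.eye(3)]])
dr = np.array([1.0, 0.0, 0.0])
dv = np.array([0.1, 0.0, 0.0])
dv_tcm = differential_guidance(stm, dr, dv, q=0.0)
final_pos_dev = dr + T * (dv + dv_tcm)
```

With \(q=0\) the impulse cancels the final position deviation exactly in this drift example; a positive q trades part of that accuracy for a smaller final velocity deviation.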

4.3.1 Simplifying Assumptions

Once the foundational blocks are modeled, combining the timeline (Fig. 6) with the methodology illustrated in this Section, a comprehensive method able to retrace the revised approach and to provide knowledge analysis, final dispersion and an estimation of the navigation costs in a single shot can be devised. However, an algorithm using PCE-CUT on each real orbit to estimate both knowledge and dispersion, and using the OD results to perturb randomly the real state at the TCM location, requires an excessively large amount of computational effort. The number of stochastic variables (being 18, 6 from the uncertain states and 12 from the estimated state errors) leads to a huge number of samples to be propagated to obtain the sought results, and thus to the curse of dimensionality. In order to reduce the computational burden, some simplifying assumptions can be made.

First of all, the knowledge analysis is performed only on the nominal trajectory and its results are used also on the real orbits stemming from the initial dispersion. This assumption is valid whenever the real trajectory does not deviate too much from the nominal one, relatively to the Spacecraft-to-Ground Station distance. In this case, the pseudo-measurements on the nominal trajectory are similar to the real trajectory ones and the outputs from the EnSRF are comparable.

Secondly, the estimated state error at the end of the OD phase is not picked randomly from the knowledge distribution at that time, but only the average error is considered to assess the estimated state for all the real trajectories. This strong assumption reduces the number of random variables and, thus, lessens the problem dimensionality. Figure 11 shows the stochastic costs for the LUMIO trajectory both (a) if the navigation error at the orbit determination time is a Gaussian random variable with mean and covariance given by the filter output and (b) if the navigation error is taken as a deterministic value equal to the mean only. The navigation costs and the final dispersion have similar distributions in both cases. Hence, for this scenario, this last assumption is valid.

5 Statement of the Problem

Once the building blocks are established, Problem 1 has to be adapted to cope with the test case scenario, represented by LUMIO transfer phase and the general optimal control problem is converted into a non-linear programming problem. The optimization problem for the test case scenario under the revised approach can be stated as

Problem 2

(Fuel-Optimal Problem) Find the initial and final time, \(\tau _0\) and \(\tau _f\), the two TCM times, \(\tau _{TCM_1}\) and \(\tau _{TCM_2}\), the angular parameter vector \(\varvec{\alpha }_0\), the SMIM vector \(\Delta v_{\text {SMIM}}\), and the nominal trajectory impulse at TCM times, \(\Delta v_{\text {TCM}_{\textrm{1}}}\) and \(\Delta v_{\text {TCM}_{\textrm{2}}}\), such that

with \(Q(0.99, \, \Delta v^s_j)\) representing the 99-percentile of the stochastic cost computed through Eq. (45), is minimized, while the state is subjected to the dynamics illustrated in Eq. (19)

The HIM is computed as

with \(\varvec{\nu }^*\) the nominal velocity and \(\varvec{\rho }_\delta\) is the target halo velocity.

The state is subjected to initial constraints

and

and a final constraint

with \(d=\left\| \varvec{\rho }\left( \tau _f\right) -\varvec{\rho }_\delta \left( \tau _f\right) \right\|\), being a measure of the distance of the real trajectories from the halo at \(\tau _f\), where \(\varvec{\rho }_\delta\) is the target halo.

In order to be compliant with on-ground operation requirements, some linear constraints are added

The navigation costs and the final dispersion are estimated through the comprehensive navigation assessment. It means

and

with \(\texttt{GL}\) and \(\texttt{OD}\) being the Differential Guidance Law (Eq. (65)) and orbit determination processes (Sect. 4.2) on the nominal trajectory respectively, \(\hat{\varvec{\chi }}\) is the estimated state, \(\varvec{\chi }\) the real state and \(\varvec{\chi }^*\) is the nominal state.

The quantile value for the j-th TCM exploiting the KQE (Eq. (45)) is

where \(\widetilde{\Delta v}_j^{s, i}\) are the sorted values of \(\left\| {\Delta v}_j^{s, i}\right\|\). The 100,000 samples \({\Delta v}_j^{s, i}\) are obtained through an inexpensive Monte Carlo exploiting the PCE-CUT technique. The CUT samples \({\Delta v}_j^{s, q}\) for each TCM are obtained by exploiting the DG algorithm (Eq. (65))

with

being the estimated error at k-th TCM time, and where

is the flow of the estimated state, for each CUT sample, propagated from the end of the OD phase up to the next TCM time. As per Sect. 4.3.1,

with the mean final error obtained through Eq. (63).

The PCE coefficients can be retrieved as

and then used to obtain the samples

where \(\psi _{\varvec{\alpha }}^{i}\) are the basis functions evaluated at a randomly picked value \(\varvec{\xi }_i\). In order to simplify the problem, the bandwidth is considered constant with \(h=0.001\).

The value in Eq. (72) is obtained in the same way, exploiting the KDE as in Eq. (42) with a constant bandwidth \(h=0.0622\).

In summary, the decision variable vector is defined as \({{\textbf {y}}}=\left[ \tau _0, \tau _f, \tau _{TCM_1}, \tau _{TCM_2}, \varvec{\alpha }_0, \Delta v_{\text {SMIM}}, \Delta v_{\text {TCM}_1}, \Delta v_{\text {TCM}_2}\right] ^T\) and its bounds are summarized in Table 6. No bounds are placed on LLO angular parameters.

The procedure used to estimate the cost function in Eq. (67) and the nonlinear constraint in Eq. (72) is summarized in Algorithm 1.

Problem 2 is solved by exploiting a simple shooting technique [59]. This method is selected as the most suitable to solve the optimization problem, since (i) the trajectory lasts only a few days and nominally no middle correction is enforced, so low numerical noise is expected in the derivatives, (ii) the number of variables is strongly reduced, and (iii) Algorithm 1 can be implemented straightforwardly. In order to speed up the NLP solution, the Jacobian, both for the objective function and the nonlinear constraint, can be provided. Details on the Jacobian construction are given in Appendix A.

5.1 First Guess

An educated guess is required to solve the optimization problem, in order to reduce the wide search space represented by all the possible parking low lunar orbits, the stable manifold insertion time, and the arrival state on the halo, as well as to cope with the use of a local optimization scheme. In order to compute these first guesses, a generation mechanism exploiting a patching process is devised: (1) the state is propagated backward from the operative orbit to the first lunar pericenter; (2) a grid generation is used to create all the possible LLO pericenters at a given time; (3) a patching process selects the solutions fulfilling some tolerances in time and space at their pericenter; (4) eventually, the solutions of the patching process are used to feed an optimization algorithm, able to close the gap between the LLO and the stable manifold, while minimizing the needed propellant. The least expensive trajectories coming from the optimization algorithm are then used as first guesses for the optimization problem stated in Problem 2. The algorithm is summarized in Fig. 12.

5.1.1 Backward Propagation

In the backward leg, the spacecraft trajectory is propagated backward in time from the halo target orbit for 30 days in the high-fidelity restricted roto-pulsating n-body problem. The epoch and the state of the first lunar pericenter pass are saved to be used for patching purposes. Initial points on the halo are drawn from an equally spaced grid with a 6 h step, starting from February 1, 2024 and ending on March 1, 2024. This choice reflects the requirement to start the science operations on March 21, 2024 at the latest and to have at least 1 week of commissioning and calibration in the operative orbit. In order to escape as fast as possible, a \({1}\,\mathrm{m/s}\) \(\Delta v\) is applied along the minimum stretching direction. Because in the high-fidelity model the operative orbit is no longer periodic, the minimum stretching direction \(\varvec{\delta }\) is defined as the direction along which, for a given perturbation \(\delta \varvec{\chi }_0\), the Euclidean norm of the final perturbation is minimized, i.e.,

where \(C=\Phi ^T_{\tau _0, \tau _f}\Phi _{\tau _0, \tau _f}\) is the Cauchy–Green tensor, defined by exploiting the STM from \(\tau _0\) to \(\tau _f\). Starting from Eq. (84), the minimum stretching direction corresponds to the (unit) eigenvector associated with the minimum eigenvalue of the Cauchy–Green tensor. The trajectories computed in this way can be seen as an extension of the CRTBP stable manifolds. Figure 13 shows some trajectories propagated backward from the halo orbit.
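For a unit-norm initial perturbation, the squared norm of the final perturbation is \(\delta \varvec{\chi }_0^T C\, \delta \varvec{\chi }_0\), so the minimization reduces to a symmetric eigenvalue problem. The sketch below shows how this could be computed once the STM is available; the function name `minimum_stretching_direction` and the 2×2 matrix are hypothetical and serve only to exercise the computation.

```python
import numpy as np

def minimum_stretching_direction(stm):
    """Unit vector whose image under the STM has the smallest Euclidean norm."""
    # Cauchy-Green tensor C = Phi^T Phi (symmetric, positive semi-definite).
    cauchy_green = stm.T @ stm
    # eigh returns the eigenvalues of a symmetric matrix in ascending order,
    # so column 0 is the eigenvector of the minimum eigenvalue.
    eigenvalues, eigenvectors = np.linalg.eigh(cauchy_green)
    direction = eigenvectors[:, 0]
    return direction / np.linalg.norm(direction), eigenvalues[0]

# Hypothetical STM (illustration only, not taken from the LUMIO scenario).
phi = np.array([[2.0, 0.0],
                [0.0, 0.5]])
delta, lambda_min = minimum_stretching_direction(phi)
```

The sign of the returned eigenvector is arbitrary, so the sense in which the perturbation is applied still has to be chosen separately.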

5.1.2 Grid Generation

At each of the pericenter epochs computed in the backward propagation, a grid of LLO pericenter points is computed, converted to the RPF and then saved. The Keplerian parameters used to build the grid are presented in Table 7.
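A grid of this kind can be sketched as a Cartesian product over Keplerian parameters, each combination being converted to a pericenter state. The example below is a minimal illustration under stated assumptions: the grid values are hypothetical (they are not those of Table 7), the states are expressed in a Moon-centred inertial frame, and the subsequent conversion to the RPF is not shown. The names `pericenter_state` and `build_grid` are introduced here for illustration only.

```python
import itertools
import math

MU_MOON = 4902.8   # km^3/s^2, lunar gravitational parameter
R_MOON = 1737.4    # km, mean lunar radius

def pericenter_state(rp, ecc, inc, raan, argp, mu=MU_MOON):
    """Position (km) and velocity (km/s) at pericenter, angles in radians."""
    c_o, s_o = math.cos(raan), math.sin(raan)
    c_i, s_i = math.cos(inc), math.sin(inc)
    c_w, s_w = math.cos(argp), math.sin(argp)
    # Perifocal unit vectors: P points to pericenter, Q along pericenter velocity.
    p_hat = (c_o * c_w - s_o * s_w * c_i, s_o * c_w + c_o * s_w * c_i, s_w * s_i)
    q_hat = (-c_o * s_w - s_o * c_w * c_i, -s_o * s_w + c_o * c_w * c_i, c_w * s_i)
    # Vis-viva at pericenter: v_p^2 = mu (1 + e) / r_p.
    vp = math.sqrt(mu * (1.0 + ecc) / rp)
    r = tuple(rp * c for c in p_hat)
    v = tuple(vp * c for c in q_hat)
    return r, v

def build_grid(altitudes_km, eccs, incs_deg, raans_deg, argps_deg):
    """Pericenter states for every combination of the given parameters."""
    grid = []
    for h, e, i, o, w in itertools.product(
            altitudes_km, eccs, incs_deg, raans_deg, argps_deg):
        grid.append(pericenter_state(
            R_MOON + h, e, math.radians(i), math.radians(o), math.radians(w)))
    return grid

# Hypothetical grid values, chosen only to exercise the functions.
grid = build_grid([100.0, 200.0], [0.0], [60.0, 90.0],
                  [0.0, 120.0, 240.0], [0.0, 180.0])
```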

5.1.3 Patching Process

The patching process joins the backward-propagated orbits and the LLO grid points at the periselene. Since by design the pericenter epoch is exactly the same, only the distance between the LLO points and the stable manifold pericenter is used as the patching criterion. Figure 14 shows the results of the patching process. Starting from the 321 initial guesses, only 9 have a pericenter distance lower than 250 km.
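Since the epochs coincide by construction, the patching step reduces to a distance filter. A minimal sketch follows, assuming that the LLO grid points are stored per pericenter epoch; the function name `patch`, the data layout, and the sample coordinates are hypothetical, while the 250 km tolerance is the one quoted in the text.

```python
import math

def patch(manifold_pericenters, llo_points, tol_km=250.0):
    """Pair each backward-propagated pericenter with nearby LLO grid points.

    manifold_pericenters[i] is a position (km); llo_points[i] is the list of
    LLO pericenter positions generated at the same epoch.
    """
    matches = []
    for i, r_sm in enumerate(manifold_pericenters):
        for j, r_llo in enumerate(llo_points[i]):
            distance = math.dist(r_sm, r_llo)
            if distance < tol_km:
                matches.append((i, j, distance))
    # Closest pairs first, so the most promising guesses are processed first.
    return sorted(matches, key=lambda m: m[2])

# Hypothetical positions (km), for illustration only.
manifold_pericenters = [(1900.0, 0.0, 0.0), (0.0, 2000.0, 0.0)]
llo_points = [
    [(1837.4, 0.0, 0.0), (0.0, 1837.4, 0.0)],
    [(0.0, 1837.4, 0.0), (0.0, 0.0, 1837.4)],
]
candidates = patch(manifold_pericenters, llo_points)
```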

5.1.4 Optimization

The most promising solutions coming from the patching process (i.e., those with pericenter distance \(d<{250}\,\mathrm{km}\)) are used to feed an optimization algorithm, whose aim is to find the intersection between the LLO and the operative orbit stable manifold, while minimizing the propellant mass. Thus, the objective is to determine the initial and final times, \(\tau _0\) and \(\tau _f\), the LLO Keplerian parameters \(\varvec{\alpha }_0\), and the \(\Delta v_{\text {HIM}}\) such that

is minimized. Moreover, the state is subjected to the constraint

where \(\varvec{\rho }_{SM}\) is the position of the stable manifold at \(\tau _0\), i.e., the first three components of the backward-integrated flow \(\varvec{\varphi }\left( \varvec{\chi }_f, \tau _f; \tau _0\right)\), having as initial state \(\varvec{\chi }_f=\left( \varvec{\chi }_H\left( \tau _f\right) -\left[ {{\textbf {0}}}_3 \, \Delta v_{\text {HIM}}\right] ^T\right)\), that is, the halo state at \(\tau _f\) perturbed by the HIM. The stable manifold injection maneuver is computed as

with the state on the LLO computed through Eq. (26).

Figure 14 shows the results after the optimization. Of the 9 solutions that survived the patching filter, only 4 have a \(\Delta v<{120}\,\mathrm{m/s}\), which is compatible with LUMIO requirements. The characteristics of these trajectories are listed in Table 8. Although the time of flight is similar for the four optimal solutions, the required \(\Delta v\) varies widely, from \({67.33}\,\mathrm{m/s}\) to \({83.11}\,\mathrm{m/s}\), the latter being about 23% higher than the minimum.

Pericenter patching process and optimization results. Red asterisks show solutions with \(d<{250}\,\mathrm{km}\). Black diamonds are optimized solutions with \(\Delta v_{\text {SMIM}}<{120}\,\mathrm{m/s}\). Numbers refer to solutions from Table 8 (Color figure online)

6 Results

The trajectories listed in Table 8 are used as first guesses for Problem 2. The NLP is solved for each of them. The average computational time for the optimization algorithm on a quad-core Intel i7 2.80 GHz processor is about 20 min. Since CUT samples can be propagated forward independently, the runtime can be easily reduced by exploiting parallelization on a multi-core workstation.

Results are summarized in Table 9. Surprisingly, the solution with the best deterministic value (i.e., #64) is not the one with the best performance when stochastic costs are considered, in either the non-optimized or the optimized case. Selecting the nominal trajectory on deterministic cost alone would therefore be sub-optimal and could lead to an unnecessary waste of propellant mass. Indeed, Solution #53 needs less propellant in the first-guess comprehensive approach, saving about 6% of fuel. This figure increases to 8% in the optimized solution under the integrated approach.

This feature is mainly related to the possibility of flying a lower-dispersion trajectory. Indeed, although the position dispersion (Fig. 15) shows a similar trend for both Solution #53 and #64, the velocity dispersion (Fig. 16) is lower for Solution #53, which leads to smaller navigation costs. Moreover, a lower final dispersion is beneficial, since the final constraint can be satisfied with less effort.

Solution #53 has the minimum overall \(\Delta v\) for both the first guess and the optimized trajectory. Thus, Solution #53 would have been selected as the best-performing solution even in the sequential approach. However, the results for Solution #289 show that a large improvement (about 25%) can be obtained under the stochastic optimization. This indicates that, for a different time frame or a different operational orbit, the sequential and the integrated approaches could give different results, leading to a wrong choice of the nominal orbit if the stochastic optimization is not performed.

The decision variables of Problem 2 for Solution #53 are listed in Table 10, while the maneuver magnitudes are provided in Table 11. It can be noted that some propellant is saved even if only the deterministic costs are considered. This behavior is related to the different implementation of the final constraint: in Problem 2 it is enforced with a stochastic formulation. This change modifies the structure of the optimization problem, allowing also a different deterministic solution. Figure 17 shows the trajectory from the LLO to the halo target orbit, with a close-up on the final dispersion. The final dispersion is fully enclosed in the desired 30 km sphere. An analysis of the cumulative distribution function for the two TCMs is given in Fig. 18.
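As a generic illustration of how a cumulative distribution function can be built from maneuver-magnitude samples, the sketch below computes an empirical CDF and an associated quantile. It does not reproduce the procedure behind Fig. 18: the sample values are hypothetical, and the function names `empirical_cdf` and `quantile` are introduced here only for the example.

```python
import bisect
import math

def empirical_cdf(samples):
    """Return F(x), the fraction of samples that are less than or equal to x."""
    ordered = sorted(samples)
    n = len(ordered)

    def cdf(x):
        return bisect.bisect_right(ordered, x) / n

    return cdf

def quantile(samples, p):
    """Smallest sample whose empirical CDF value is at least p (0 < p <= 1)."""
    ordered = sorted(samples)
    index = max(0, math.ceil(p * len(ordered)) - 1)
    return ordered[index]

# Hypothetical TCM magnitudes in m/s (illustration only).
tcm_samples = [0.8, 1.1, 0.9, 1.4, 1.0, 1.2, 0.7, 1.3]
cdf = empirical_cdf(tcm_samples)
median_dv = quantile(tcm_samples, 0.5)
```

A high quantile of such a distribution is one common way to budget a stochastic maneuver, although the exact definition adopted for the stochastic cost is the one given in the problem statement.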

For the sake of completeness, the decision variables for Solution #64 are listed in Table 12, while the associated maneuvers are given in Table 13.

7 Conclusions

In this work, an integrated approach for preliminary mission analysis is devised. This technique aims to reduce the propellant mass needed to fly a trajectory by embedding the navigation assessment and the associated stochastic costs directly in the trajectory design and optimization. This method can be fundamental in future space missions exploiting limited-capability small spacecraft, where high navigation costs may jeopardize the mission feasibility. In order to assess its performance against the traditional technique, the revised approach has been applied to a test case scenario, representing the transfer from a low lunar orbit to the operational halo orbit of the CubeSat LUMIO. For this scenario, a new technique, labeled PCE-CUT, which uses the conjugate unscented transformation to compute the polynomial chaos expansion coefficients, is devised and used to propagate the uncertainties and estimate both the dispersion and the stochastic costs, while the knowledge analysis is performed by combining this technique with an ensemble square-root filter. This method is inserted in the optimization scheme. Four trajectories, coming from a grid search algorithm, are used as educated initial guesses. After the optimization, it is found that the solution with the best deterministic value is not the one with the minimum overall cost, and that 8% of the propellant mass is saved by the optimal solution of the integrated approach (Fig. 19).

Data availability

Data generated during the current study are available from the corresponding author on request.

Notes

MoND is a theory, alternative to the theory of dark matter, proposing a modification of the classical Newton’s law to account for the observed motion of the galaxies.

https://www.asi.it/en/the-agency/the-space-centers/sardina-deep-space-antenna-sdsa/ (Last accessed on January 13, 2022)

References

Fehse, W.: Automated Rendezvous and Docking of Spacecraft. Cambridge Aerospace Series, vol. 16. Cambridge University Press, Cambridge (2003). https://doi.org/10.1017/CBO9780511543388

Franzese, V., Topputo, F.: Optimal beacons selection for deep-space optical navigation. J. Astronaut. Sci. 67, 1775–1792 (2020). https://doi.org/10.1007/s40295-020-00242-z

Poghosyan, A., Golkar, A.: CubeSat evolution: analyzing CubeSat capabilities for conducting science missions. Prog. Aerosp. Sci. 88, 59–83 (2017). https://doi.org/10.1016/j.paerosci.2016.11.002

Walker, R., Binns, D., Bramanti, C., et al.: Deep-space CubeSats: thinking inside the box. Astron. Geophys. 59(5), 24–30 (2018). https://doi.org/10.1093/astrogeo/aty232

Dei Tos, D.A., Rasotto, M., Renk, F., et al.: LISA Pathfinder mission extension: a feasibility analysis. Adv. Space Res. 63(12), 3863–3883 (2019). https://doi.org/10.1016/j.asr.2019.02.035

Trenkel, C., Kemble, S., Bevis, N., et al.: Testing Modified Newtonian Dynamics with LISA Pathfinder. Adv. Space Res. 50(11), 1570–1580 (2012). https://doi.org/10.1016/j.asr.2012.07.024

Fabacher, E., Kemble, S., Trenkel, C., et al.: Multiple Sun–Earth saddle point flybys for LISA Pathfinder. Adv. Space Res. 52(1), 105–116 (2013). https://doi.org/10.1016/j.asr.2013.02.005

Dei Tos, D.A., Topputo, F.: High-fidelity trajectory optimization with application to saddle-point transfers. J. Guid. Control Dyn. 42(6), 1343–1352 (2019). https://doi.org/10.2514/1.G003838

Cipriano, A.M., Dei Tos, D.A., Topputo, F.: Orbit design for LUMIO: the lunar meteoroid impacts observer. Front. Astron. Space Sci. 5, 29 (2018). https://doi.org/10.3389/fspas.2018.00029

Longuski, J.M., Guzmán, J.J., Prussing, J.E.: Optimal Control with Aerospace Applications. Space Technology Library, vol. 32. Springer, New York (2014). https://doi.org/10.1007/978-1-4614-8945-0

Ross, I.M.: A Historical Introduction to the Covector Mapping Principle. In: Proceedings of Astrodynamics Specialists Conference, AAS 05-332 (2005)

Park, R.S., Scheeres, D.J.: Nonlinear mapping of Gaussian statistics: theory and applications to spacecraft trajectory design. J. Guid. Control Dyn. 29(6), 1367–1375 (2006). https://doi.org/10.2514/1.20177

Di Lizia, P., Armellin, R., Ercoli-Finzi, A., et al.: High-order robust guidance of interplanetary trajectories based on differential algebra. J. Aerosp. Eng. Sci. Appl. 1(1), 43–57 (2008)

Di Lizia, P., Armellin, R., Bernelli-Zazzera, F., et al.: High order optimal control of space trajectories with uncertain boundary conditions. Acta Astronaut. 93, 217–229 (2014). https://doi.org/10.1016/j.actaastro.2013.07.007

Di Lizia, P., Armellin, R., Morselli, A., et al.: High order optimal feedback control of space trajectories with bounded control. Acta Astronaut. 94(1), 383–394 (2014). https://doi.org/10.1016/j.actaastro.2013.02.011

Schumacher, P.W., Jr., Sabol, C., Higginson, C.C., et al.: Uncertain Lambert Problem. J. Guid. Control Dyn. 38(9), 1573–1584 (2015). https://doi.org/10.2514/1.G001019

Zhang, G., Zhou, D., Mortari, D., et al.: Covariance analysis of Lambert’s problem via Lagrange’s transfer-time formulation. Aerosp. Sci. Technol. 77, 765–773 (2018). https://doi.org/10.1016/j.ast.2018.03.039

Adurthi, N., Majji, M.: Uncertain Lambert problem: a probabilistic approach. J. Astronaut. Sci. 67, 361–386 (2020). https://doi.org/10.1007/s40295-019-00205-z

Armellin, R., Di Lizia, P., Topputo, F., et al.: Gravity assist space pruning based on differential algebra. Celest. Mech. Dyn. Astron. 106(1), 1–24 (2010). https://doi.org/10.1007/s10569-009-9235-0

Li, H.Y., Luo, Y.Z., Tang, G.J., et al.: Optimal multi-objective linearized impulsive rendezvous under uncertainty. Acta Astronaut. 66(3–4), 439–445 (2010). https://doi.org/10.1016/j.actaastro.2009.06.019

Yang, Z., Luo, Y.Z., Zhang, J.: Robust planning of nonlinear rendezvous with uncertainty. J. Guid. Control Dyn. 40(8), 1954–1967 (2017). https://doi.org/10.2514/1.G002319

Balducci, M., Jones, B.A.: Asteroid rendezvous maneuver design considering uncertainty. In: Spaceflight Mechanics 2019. Advances in the Astronautical Sciences, vol. 168, pp. 2951–2967 (2019)

Xiong, F., Xiong, Y., Xue, B.: Trajectory Optimization under Uncertainty based on Polynomial Chaos Expansion. In: AIAA Guidance, Navigation, and Control Conference, p. 1761 (2015). https://doi.org/10.2514/6.2015-1761

Greco, C., Campagnola, S., Vasile, M.: Robust space trajectory design using belief optimal control. J. Guid. Control Dyn. 45(6), 1060–1077 (2022). https://doi.org/10.2514/1.G005704

Ozaki, N., Campagnola, S., Funase, R.: Tube stochastic optimal control for nonlinear constrained trajectory optimization problems. J. Guid. Control Dyn. 43(4), 645–655 (2020). https://doi.org/10.2514/1.G004363

Marmo, N., Zavoli, A., Ozaki, N., et al.: A hybrid multiple-shooting approach for covariance control of interplanetary missions with navigation errors. In: 33rd AAS/AIAA Space Flight Mechanics Meeting, Austin, TX (2023)

Benedikter, B., Zavoli, A., Wang, Z., et al.: Convex approach to covariance control with application to stochastic low-thrust trajectory optimization. J. Guid. Control Dyn. 45(11), 2061–2075 (2022). https://doi.org/10.2514/1.G006806