Abstract

Background

Coaches, sport scientists, clinicians and medical personnel face a constant challenge to prescribe sufficient training load to produce training adaptation while minimising fatigue, performance inhibition and risk of injury/illness.

Objective

The aim of this review was to investigate the relationship between injury and illness and longitudinal training load and fatigue markers in sporting populations.

Methods

Systematic searches of the Web of Science and PubMed online databases to August 2015 were conducted for articles reporting relationships between training load/fatigue measures and injury/illness in athlete populations.

Results

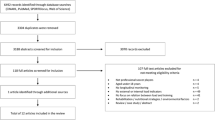

From the initial 5943 articles identified, 2863 duplicates were removed, followed by a further 2833 articles at the title and abstract screening stage. Manual searching of the reference lists of the remaining 247 articles, together with the Google Scholar ‘cited by’ tool, yielded 205 additional articles for assessment. Sixty-eight studies were subsequently selected for inclusion in this review, of which 45 investigated injury only, 17 investigated illness only, and 6 investigated both injury and illness. This systematic review highlighted a number of key findings, including inconsistency within the literature in the use of terms such as training load, fatigue, injury and illness. Athletes are at an increased risk of injury/illness at key stages of training and competition, including periods of training load intensification and periods of accumulated training load.

Conclusions

Further investigation of individual athlete characteristics is required due to their impact on internal training load and, therefore, susceptibility to injury/illness.



Athletes’ training load and fatigue should be monitored and modified appropriately during key stages of training and competition, such as periods of training load intensification, accumulated training load and changes in acute training load; otherwise, there is a significant risk of injury.

Immunosuppression occurs following a rapid increase in training load. Athletes who do not return to baseline levels within the latency period (7–21 days) are at higher risk of illness during this period.

Individual characteristics such as fitness, body composition, playing level, injury history and age have a significant impact on the internal training load placed on the athlete. Longitudinal management is therefore recommended to reduce the risk of injury and illness.

1 Introduction

Previous research has demonstrated that training and competition stress result in temporary decrements in physical performance and significant levels of fatigue post-competition [1–3]. These decrements typically arise from increased muscle damage [3, 4], impairment of the immune system [1], imbalances in anabolic–catabolic homeostasis [5], alterations in mood [6, 7] and reductions in neuromuscular function (NMF) [2, 7, 8]. These variables can take up to 5 days to return to baseline values post-competition [5], and sports with frequent competition (e.g. weekly matches in team sports) also induce cumulative fatigue over time [9]. In addition to the significant fatigue induced by competition, many athletes experience fatigue as a result of the work required to develop the wide variety of physical qualities that contribute to performance. For example, in both team and individual sports, speed, strength, power and endurance are required in addition to technical and tactical skills [10]. To achieve optimal development and performance, these physical qualities must be trained, which, irrespective of the training loads used, may induce further fatigue [10, 11].

1.1 Training Load, Fatigue, Injury and Illness Definitions

Training load, fatigue, injury and illness have become widely used terms within exercise science and in sports such as soccer and the various rugby codes; however, these terms have not been defined or used consistently. When describing load/workload throughout this paper, unless otherwise stated, load refers to training load, defined as the stress placed on the body by the performed activity [12]. Training load comprises internal and external workload: internal training load quantifies the physical loading experienced by the athlete, whereas external training load quantifies the work performed external to the athlete [13]. Fatigue can be defined as a decrease from the pre-match/baseline psychological and physiological function of the athlete [14]. An accumulation of fatigue can result in overtraining, which has a significant negative impact on performance [15]. For example, Johnston et al. [16], investigating the physiological responses to an intensified 5-day period of rugby league competition, found that cumulative fatigue appeared to compromise high-intensity running, maximal accelerations and defensive performance in the final game. This suggests that when athletes do not receive adequate time to recover between training and competition, fatigue accumulates, compromising key aspects of performance and increasing the athlete’s risk of injury and illness [1, 15–17]. The definition of injury has recently been realigned with the notion of impairment used by the World Health Organization [18, 19]. As a result, injury can be categorised into three domains according to the Injury Definitions Concept Framework (IDCF): clinical examination reports, athlete self-reports and sports performance [18, 19].

1.2 Monitoring Tools

Due to the highly complex nature of fatigue [9, 20], as well as individualised responses to similar training loads [21, 22], it is important to monitor global athlete fatigue levels (i.e. mental, physical and emotional) in response to prescribed training loads in order to minimise injury and illness [23]. Given that the link between training load and injury incidence is now established, measures aimed at controlling and reducing the risk factors for sports injury are critical to primary, secondary and tertiary injury prevention [149]. Monitoring tools are used extensively in elite sport as valid indicators of the athlete’s recovery status [17] and to inform support staff decisions on balancing training against recovery/rest so that performance is optimised and injury/illness minimised. Various aspects of global training load and fatigue that affect the day-to-day readiness of the athlete can be measured [17], with a range of subjective and objective measures adopted to monitor both load (e.g. training volume/duration/exposure, number of skill repetitions, rating of perceived exertion [RPE], session RPE [sRPE], global positioning systems [GPS]) and fatigue (e.g. perceptual wellness scales, neuromuscular fatigue, biochemical markers, immunological markers and sleep quantity/quality) [17].
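Session RPE (sRPE), one of the load measures listed above, is conventionally calculated as the athlete’s rating of perceived exertion on the CR-10 scale multiplied by session duration in minutes, giving a load in arbitrary units (AU). The following minimal sketch shows that calculation and a weekly total; the `(week, rpe, minutes)` record layout, the function names and the data are hypothetical, chosen only for illustration.

```python
from collections import defaultdict


def session_rpe_load(rpe: float, duration_min: float) -> float:
    """Session-RPE load in arbitrary units (AU): CR-10 rating x minutes."""
    if not 0 <= rpe <= 10:
        raise ValueError("RPE is assumed to be on the CR-10 scale (0-10)")
    if duration_min < 0:
        raise ValueError("session duration must be non-negative")
    return rpe * duration_min


def weekly_totals(sessions):
    """Sum sRPE loads per training week.

    `sessions` is an iterable of (week, rpe, duration_min) tuples; this
    record layout is hypothetical and used only for illustration.
    """
    totals = defaultdict(float)
    for week, rpe, minutes in sessions:
        totals[week] += session_rpe_load(rpe, minutes)
    return dict(sorted(totals.items()))


# Hypothetical log: three sessions in week 1, two in week 2.
log = [(1, 6, 60), (1, 4, 45), (1, 8, 80), (2, 5, 70), (2, 7, 90)]
print(weekly_totals(log))  # {1: 1180.0, 2: 980.0}
```

Weekly totals of this kind are the unit in which the internal loads discussed later (e.g. 3000–5000 AU per week) are expressed.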

1.3 The Relationship Between Training Load and Fatigue Markers and Injury and Illness

The majority of training load/fatigue monitoring research has focused on acute responses, measuring the recovery of performance variables and the acceleration of this process through recovery modalities [8, 24, 25]. In contrast, fewer studies have monitored acute and/or cumulative load and fatigue variables longitudinally to determine their association with injury/illness. Longitudinal monitoring refers to the investigation of how change or accumulation in training load/fatigue is associated with injury/illness over time. Long-term monitoring of training load and fatigue variables allows injury/illness trends to be identified, providing practitioners with objective data for planning training over multiple blocks, rather than relying solely on anecdotal evidence, with the aim of reducing overtraining and injury/illness [17, 26]. Any subsequent reduction in injury and illness is likely to have a significant impact on team performance, given that approximately 25 % of team-sport training squads are injured at any one time [27] and that the number of injuries is associated with the number of matches won [28, 29]. Recent reviews have summarised the methods available to monitor athlete load and fatigue [17], the relationship between training load and injury in throwing-dominant sports [144], training load and injury, illness and soreness [13], and the relationship between workloads, physical performance, injury and illness in adolescent male football players [150]. However, they have not detailed or critiqued the specific relationship between longitudinal training load, fatigue markers, and subsequent injury and illness. Additionally, previous reviews have adopted strict inclusion criteria, resulting in fewer studies being considered.

1.4 Objectives

The objective of this study was to perform a systematic review and evaluate the association between longitudinally monitored training load, markers of fatigue, and injury/illness in sporting populations. In doing so, this review gives recommendations regarding appropriate variables to measure training load, and suggestions for further studies investigating longitudinal monitoring and fatigue markers and their relationship with injury and illness.

2 Methods

2.1 Literature Search Methodology

A Cochrane Collaboration [30] review methodology (literature search, assessment of study quality, data collection of study characteristics, analysis and interpretation of results, and recommendations for practice and further research) was used to identify relationships between long-term training load, fatigue markers, injury and illness.

2.2 Search Parameters and Criteria

We searched the Web of Science and PubMed online databases until August 2015 using combinations of the following terms linked with the Boolean operators ‘AND’ and ‘OR’: ‘athlete’, ‘distance’, ‘fatigue’, ‘illness’, ‘injury*’, ‘match’, ‘monitor*’, ‘monitoring’, ‘neuromuscular’, ‘performance’, ‘training’, and ‘wellness’. Articles were first selected by title content, then abstract content, and then full article content. Manual searches were then conducted for the articles retained for the ‘full article content’ stage, using their reference lists and the Google Scholar ‘cited by’ tool. Exclusion criteria included studies that were (i) unavailable in English; (ii) review papers; (iii) purely epidemiological; (iv) studying non-athlete, chronically sick and/or already injured/ill populations; (v) shorter than 2 weeks; or (vi) acute studies not investigating how change or accumulation in load/fatigue is associated with injury/illness over time (Fig. 1 shows the flow of information through the systematic review process). Of the initial 5943 articles identified through online database searching, 2863 were discarded as duplicates, 2558 on the basis of title content and 275 on the basis of abstract content. Manual searching yielded 205 additional articles that were also assessed for inclusion. Finally, 384 articles were discarded upon assessment of their full-text content, leaving 68 studies for inclusion in the final review (injury, n = 45; illness, n = 17; injury and illness, n = 6).
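The record counts reported above can be checked with simple arithmetic. This minimal sketch (the function name is hypothetical) walks the screening stages in order and confirms that the flow ends at the 68 included studies, passing through the 247 articles retained after abstract screening.

```python
def screening_flow(identified, duplicates, title_excluded,
                   abstract_excluded, manual_added, full_text_excluded):
    """Records remaining after each stage of the screening process."""
    after_duplicates = identified - duplicates
    after_title = after_duplicates - title_excluded
    after_abstract = after_title - abstract_excluded
    full_text_assessed = after_abstract + manual_added
    included = full_text_assessed - full_text_excluded
    return {
        "after_duplicates": after_duplicates,
        "after_title": after_title,
        "after_abstract": after_abstract,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }


flow = screening_flow(5943, 2863, 2558, 275, 205, 384)
print(flow)
# {'after_duplicates': 3080, 'after_title': 522, 'after_abstract': 247,
#  'full_text_assessed': 452, 'included': 68}

# The included studies should match the injury/illness split (45 + 17 + 6).
assert flow["included"] == 45 + 17 + 6
```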

2.3 Assessment of Study Quality

As noted in previous systematic reviews [31], the usual method of quality evaluation uses tools such as the Delphi [32] or PEDro (Physiotherapy Evidence Database) [33] scales, whose criteria are often not relevant to specific review types, including the present review. For example, similar to Hume et al. [31], 5 of the 11 PEDro scale criteria (concealed allocation, subject blinding, therapist blinding, assessor blinding and intention-to-treat analysis) were not met by any study in this review. Therefore, given the unsuitability of scales such as Delphi and PEDro for the literature in this review, and to reduce the risk of bias, two authors independently evaluated each included article using a custom 9-item methodological quality assessment scale, with each item scored from 0 to 2 (total score out of 18). The nine items were (1) study design (0 = retrospective, 1 = prospective cohort, 2 = experimental, e.g. intervention or case/control); (2) injury and/or illness inclusion (1 = one of either, 2 = both); (3) injury/illness definition (0 = not stated, 1 = no distinction between performance, self-reported or clinical, 2 = clearly defining whether injury was sports performance, self-reported or clinical examination); (4) sporting level (0 = less than sub-elite, 1 = sub-elite, 2 = elite); (5) fatigue and/or load inclusion (1 = one of either, 2 = both); (6) number of fatigue and/or load variables (0 = 1, 1 = 2–3, 2 = more than 3); (7) statistics used (0 = subjective/visual analysis or no direct comparative analysis for fatigue/load and injury/illness associations, 1 = objective statistics for fatigue/load and injury/illness associations, 2 = objective statistics with (i) adjustments for fatigue/load interactions or (ii) quantification of injury/illness prediction success); (8) study length (0 = less than 6 weeks, 1 = 6 weeks to 1 year, 2 = more than 1 year); and (9) fatigue and/or load monitoring frequency (0 = less than monthly, 1 = weekly to monthly, 2 = more than weekly). Item 4 (sporting level) categories were defined as follows: less than sub-elite: unpaid novices or recreational athletes (e.g. a first-time runner [35] or an amateur rugby league player who trains once or twice a week and plays weekly matches [36]); sub-elite: experienced athletes who train regularly with a performance focus (e.g. a lower-league soccer player who trains two to three times a week [37]); and elite: athletes competing and/or training at national or international level. Item 6 (load/fatigue variables) refers to the number of distinct kinds of variable; for example, a study including three immunological markers and five perceptual wellness factors would be registered as having two variables, not eight. A positive approach was taken for items 6 and 9, i.e. the variable that scored highest on the item scale determined the final score. For example, if one variable was monitored twice a week and another monthly, the final score for item 9 would be 2. The mean ± standard deviation (SD) study quality score was 11 ± 2 (range 7–15).
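The counting rules for items 6 and 9, and the 0–18 total, can be expressed as a short sketch. It assumes that a month is approximated as 4 weeks when converting monitoring frequencies, which is an assumption rather than a rule stated in the review; the function names are hypothetical.

```python
def item6_score(variable_kinds):
    """Item 6: number of distinct kinds of load/fatigue variable.

    Each entry names the kind of one measured variable, so several
    markers of the same kind count once (0 = 1 kind, 1 = 2-3, 2 = >3).
    """
    n_kinds = len(set(variable_kinds))
    if n_kinds <= 1:
        return 0
    if n_kinds <= 3:
        return 1
    return 2


def item9_score(measurements_per_4_weeks):
    """Item 9: monitoring frequency of the most frequently monitored variable.

    Assumption: one month is approximated as 4 weeks, so 'weekly to monthly'
    means 1-4 measurements per 4 weeks (0 = <1, 1 = 1-4, 2 = >4).
    """
    best = max(measurements_per_4_weeks)  # 'positive approach': keep the best
    if best > 4:
        return 2
    if best >= 1:
        return 1
    return 0


def total_quality_score(item_scores):
    """Sum the nine item scores (each 0-2) to give a total out of 18."""
    if len(item_scores) != 9 or any(s not in (0, 1, 2) for s in item_scores):
        raise ValueError("expected nine item scores, each 0, 1 or 2")
    return sum(item_scores)


# 3 immunological markers + 5 wellness factors = 2 kinds of variable.
print(item6_score(["immunological"] * 3 + ["wellness"] * 5))  # 1
# One variable twice a week (8 per 4 weeks), another monthly (1 per 4 weeks).
print(item9_score([8, 1]))  # 2
```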

2.4 Data Extraction and Analysis

For each article, the year of publication, quality score, sex, sporting level, sample size, injury/illness definition and type, fatigue/load variables, and a summary of findings were extracted and are included in Tables 1, 2, 3 and 4. Only the fatigue/load variables that were associated with injury/illness in each study were included. As much data as possible were included in the summary of findings; however, if large amounts of data were reported in an article, only significant/clear results were used. The magnitude of effects was reported in the following descending order of priority: (i) objective statistics such as risk ratios or mean differences; and (ii) visual trends or descriptive results with no statistical test. The probability of effects in the summary of findings was reported in the following descending order of priority: (i) exact p values; (ii) significance levels (e.g. p < 0.05, p < 0.01); and (iii) 95 % confidence intervals (CIs). To save table space, differences in group means were reported rather than the raw values for each group comparison (e.g. +15 rather than 25 versus 10). Three studies provided no direct statistics to assess load/fatigue–injury associations [36, 38, 39]; for these, the raw group load/fatigue–injury data were analysed using Pearson correlations. Levels of evidence were evaluated a priori using the van Tulder et al. method [142] and were defined as strong (consistent findings among multiple high-quality randomised controlled trials [RCTs]), moderate (consistent findings among multiple low-quality RCTs and/or non-RCTs, clinical controlled trials [CCTs], and/or one high-quality RCT), limited (one low-quality RCT and/or CCT), conflicting (inconsistent findings among multiple RCTs and/or CCTs) and no evidence from trials (no RCTs or CCTs) [142]. The van Tulder et al. method is an accepted method of rating the strength of evidence [13, 142].
The Oxford Centre for Evidence-Based Medicine Levels of Evidence [151] were used to determine the hierarchical level of evidence, whereby the highest level of evidence pertained to a systematic review of RCTs, and the lowest to expert opinion without critical appraisal or based on physiology, bench research or ‘first principles’ [13, 151]. The levels of evidence of each study are presented in Tables 1, 2, 3 and 4.
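For the three studies without direct statistics, a Pearson correlation between paired group-level load/fatigue and injury values can be computed as below. This is a plain implementation of the standard formula, r = Σ(x − x̄)(y − ȳ) / √(Σ(x − x̄)² · Σ(y − ȳ)²); the sample data and the units shown are hypothetical and are not taken from the cited studies.

```python
import math


def pearson_r(x, y):
    """Pearson product-moment correlation of two paired sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length (n >= 2)")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return sxy / math.sqrt(sxx * syy)


# Hypothetical group-level data: mean weekly load (AU) and injury incidence
# (injuries per 1000 h) for five load groups.
load = [1500, 2000, 2500, 3000, 3500]
incidence = [2.1, 2.9, 3.2, 4.8, 5.0]
print(round(pearson_r(load, incidence), 3))
```

On Python 3.10 or later, `statistics.correlation` from the standard library returns the same coefficient; the explicit version is shown so that each term of the formula is visible.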

2.5 Definitions of Key Terms

Training load, fatigue, injury and illness have previously been defined (see Sect. 1.1). The latency period is defined as the period between training load and the onset of injury or illness [13]. Finally, we used the term ‘exposure’ to refer to time spent participating in a particular training/competition activity.

3 Results

3.1 Retention of Studies

Overall, 68 studies were retained for inclusion in the final review (Fig. 1), of which 45 (66 %) investigated injury only, 17 (25 %) investigated illness only, and 6 (8 %) investigated both injury and illness. In addition, 42 (61 %) articles focused on load–injury/illness relationships, 11 (16 %) focused on fatigue–injury/illness relationships only, and 15 (22 %) included both load and fatigue variables. In the 57 studies that investigated load–injury/illness relationships, many different load measures were used, including training exposure (n = 14, 24 %) [35, 40–52]; number of sessions/matches (n = 5, 8 %) [46, 47, 53–55], number of skill repetitions [e.g. number of deliveries bowled for cricketers] (n = 6, 10 %) [56–61]; days between/frequency of matches (n = 8, 14 %) [53, 55, 56, 62–66]; heart rate (n = 4, 7 %) [48, 55, 67, 68]; RPE (n = 2, 3 %) [69, 70]; sRPE (n = 21, 36 %) [26, 36, 40, 54, 57, 68, 70–84]; number/intensity of collisions (n = 2, 3 %) [64, 65]; distance [both self-reported and GPS derived] (n = 6, 10 %) [34, 49, 68, 69, 85, 86]; velocity/acceleration GPS-derived measures (n = 2, 3 %) [38, 85]; metabolic equivalents [MET] (n = 1, 1 %) [87]; the Baecke Physical Activity Questionnaire [88] (n = 1, 1 %) [89]; and a combined volume and intensity ranking [1–5 scale] (n = 1, 1 %) [90]. A number of fatigue measures were also used in the 26 studies that investigated fatigue–injury/illness relationships, including perceptual wellness scales (n = 13, 50 %) [37, 39, 48–50, 75, 80, 81, 91–95]; sleep quantity/quality (n = 6, 23 %) [39, 48, 71, 80, 95, 96]; immunological markers (n = 12, 46 %) [49, 54, 73, 82, 83, 87, 89, 90, 97–100]; and stress hormone levels (n = 6, 23 %) [75, 81–83, 100, 101].

3.2 Definitions of Key Terms

Thirty-seven (54 %) studies defined injury/illness as ‘sports incapacity’ events [102, 109] (i.e. the injury/illness caused time-loss from, or an alteration in, the normal training schedule), whereas 26 studies (38 %) did not distinguish the category from which the injury/illness originated, instead defining injury/illness by measures such as the ‘Wisconsin Upper Respiratory Symptom Survey’ [104] for upper respiratory illness (URI), or as any pain or disability experienced by a player during a match or training session [105] for injury. Only two studies did not clarify which type of injury/illness definition was used [42, 74].

3.3 Statistical Analysis Methods

A range of statistical analysis methods was also used, including Pearson correlations [68], mean differences in load/fatigue between injured and non-injured groups [86], a Cox proportional hazards regression frailty model [34], logistic regression with a binomial distribution [26], linear regression [78] and multinomial regression [71]. Only one study adjusted for interactions between load and fatigue measures [50]: Main et al. used linear mixed modelling to assess the interactive associations of training exposure and psychological stressors with signs and symptoms of illness in 30 sub-elite triathletes [50]. In addition, only two studies provided an indication of how successfully load predicted injury/illness: Gabbett [26] used a sensitivity and specificity analysis, while Foster [74] reported the percentage of excursions beyond the derived load thresholds that did not result in illness.
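Sensitivity and specificity follow from a 2 × 2 cross-tabulation of predicted outcome (load above or below a threshold) against observed outcome (injury or no injury). The minimal sketch below treats each athlete-week as one observation and ‘load above threshold’ as a positive prediction, and also returns the share of threshold excursions not followed by the outcome, analogous to the percentage described for Foster [74]. This framing, the data and the function name are assumptions for illustration; it does not reproduce either study’s analysis.

```python
def threshold_performance(loads, injured, threshold):
    """Compare 'load > threshold' (positive prediction) with observed injury.

    Returns sensitivity, specificity and the share of threshold excursions
    that were NOT followed by injury (false alarms among positives).
    """
    if len(loads) != len(injured):
        raise ValueError("loads and injured must have the same length")
    pairs = list(zip(loads, injured))
    tp = sum(1 for load, inj in pairs if load > threshold and inj)
    fp = sum(1 for load, inj in pairs if load > threshold and not inj)
    fn = sum(1 for load, inj in pairs if load <= threshold and inj)
    tn = sum(1 for load, inj in pairs if load <= threshold and not inj)
    nan = float("nan")
    return {
        "sensitivity": tp / (tp + fn) if tp + fn else nan,
        "specificity": tn / (tn + fp) if tn + fp else nan,
        "excursions_without_outcome": fp / (tp + fp) if tp + fp else nan,
    }


# Hypothetical athlete-weeks: weekly sRPE load (AU) and whether injury followed.
loads = [2500, 3200, 4100, 2800, 3600, 1900, 4500, 3000]
injured = [False, True, True, False, False, False, True, False]
print(threshold_performance(loads, injured, threshold=3000))
# {'sensitivity': 1.0, 'specificity': 0.8, 'excursions_without_outcome': 0.25}
```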

3.4 Sporting Populations

A number of different sporting populations were represented, from recreational to elite level; namely, American football (n = 1) [89]; Australian Football [AF] (n = 6) [68, 70, 84, 85, 94, 96]; basketball (n = 2) [81, 106]; cricket [fast bowlers] (n = 5) [56–58, 60, 61]; futsal (n = 1) [82]; soccer (n = 21) [37, 38, 40, 43–47, 53, 55, 62, 63, 67, 71, 75, 83, 92, 93, 95, 100, 101]; road cycling (n = 1) [73]; rugby league (n = 13) [26, 34, 36, 39, 64–66, 76–80, 94]; rugby union (n = 5) [41, 52, 54, 72, 94]; running (n = 4) [35, 42, 69, 86]; swimming (n = 4) [49, 74, 97, 98]; triathlon (n = 2) [48, 50]; wheelchair rugby (n = 1) [99]; and yacht racing (n = 1) [90]. Two studies used a mix of sports [51, 87]. The majority of studies included only male participants (n = 52), with 11 studies including both males and females [35, 49, 50, 74, 86, 87, 92, 93, 97–99] and three including females only [42, 83, 106]. Three studies used intervention designs [35, 48, 77] and three used case-control designs [83, 89, 105], while nine studies [38, 41, 45–47, 67, 75, 77, 82] investigated injury/illness severity in addition to injury/illness incidence.

4 Discussion

The aim of this systematic review was to investigate the literature examining the longitudinal monitoring of training load and fatigue data, and its relationship with injury/illness in sporting populations. Although a number of common findings were identified across the 68 studies, the literature shows a clear lack of consistency, and conflicting views, regarding the definition of key terms, the relationship between training load and injury and illness, and the relationship between fatigue markers and injury and illness.

4.1 Reporting of Terms

This review has identified inconsistency in the definitions of several key terms used to longitudinally monitor the athlete, including training load, fatigue, injury and illness, and in their subsequent measures. The use of multiple definitions within the literature to describe a single term may cause confusion and misuse at both a conceptual and a practical level, leading to inadequate and inconsistent criteria for defining samples and subsequent difficulty in comparing one study with another [77].

4.1.1 Training Load and Fatigue

The terms training load and fatigue showed the greatest misinterpretation within this review, primarily because they were used interchangeably in the literature. For example, a recent study by Hulin et al. [57] applying Banister’s fitness–fatigue model [107] to training stress balance (the acute:chronic workload ratio) described fatigue as the acute training load (weekly training load total) and fitness as the chronic load (average weekly total over the previous 4 weeks). Even though this method of monitoring training load [57] has enabled a greater understanding of the relationship between training load and injury [108, 131], applying fitness and fatigue terminology to represent training workload has added further confusion. For this very reason, several recent studies have readdressed the issue, replacing the fitness–fatigue model (formerly training stress balance) with the term acute:chronic workload ratio [108, 131, 143, 145, 146, 148]. The key implication for researchers and practitioners is that, when training load and fatigue are used as terms, they should be clearly defined and described as separate entities.
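The acute:chronic workload ratio described above divides the most recent weekly load by an average weekly load over a 4-week window. Whether that window includes the acute week is not specified here, so this minimal sketch exposes both variants through a hypothetical `include_acute` flag; the function name and data are also hypothetical.

```python
def acute_chronic_ratio(weekly_loads, chronic_weeks=4, include_acute=True):
    """Acute:chronic workload ratio for the most recent week.

    acute   = load in the most recent week
    chronic = mean weekly load over `chronic_weeks` weeks, either including the
              acute week (include_acute=True) or the weeks before it (False).
    """
    needed = chronic_weeks if include_acute else chronic_weeks + 1
    if len(weekly_loads) < needed:
        raise ValueError(f"need at least {needed} weeks of data")
    acute = weekly_loads[-1]
    window = (weekly_loads[-chronic_weeks:] if include_acute
              else weekly_loads[-chronic_weeks - 1:-1])
    chronic = sum(window) / chronic_weeks
    if chronic == 0:
        raise ValueError("chronic load is zero; the ratio is undefined")
    return acute / chronic


weeks = [1000, 1000, 1000, 1000, 1500]  # hypothetical weekly sRPE totals (AU)
print(acute_chronic_ratio(weeks))                       # 1500 / 1125, about 1.33
print(acute_chronic_ratio(weeks, include_acute=False))  # 1500 / 1000 = 1.5
```

Including the acute week in the chronic window dampens the ratio, because a spike raises the denominator as well as the numerator; this is one reason why the two variants should not be compared directly.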

4.1.2 Injury and Illness

Whereas 37 studies used time-loss injury/illness definitions, 26 studies simply reported ‘injury’ or ‘illness’ when summarising key findings, without distinguishing between categories. Distinguishing between injury/illness categories is an important practical consideration. For example, Brink et al. [71] noted differences between traumatic injury, overuse injury and illness in their associations with training load, while Orchard et al. [59] reported training load-related differences for joint, bone, tendon and muscle injuries. Standardised reporting of injury/illness incidence will further aid comparison between studies, as well as the generation of any subsequent meta-analyses [111].

4.1.3 Exposure

Three terms were also used to describe the time spent participating in a particular training/competition activity; namely, duration [71], volume [41, 52] and exposure [44]. However, ‘volume’ was used as a term in only two studies and, in Brooks et al. [41], it was included in the study title and was used interchangeably with ‘exposure’ in the article text. Several studies, such as Buist et al. [35] and Main et al. [50], also used ‘exposure’ in the article text but did not include it in their titles or keywords.

4.1.4 Perceptual Wellness

It should also be noted that the term ‘perceptual wellness scales’ covers a range of inventories that attempt to assess how individuals perceive particular physical and psychological states. The measures used in the studies included in this review ranged from simple 1–5 Likert scale questionnaires for factors such as energy, sleep quality and mood [80], to more detailed and longer multi-question tools such as the Recovery-Stress Questionnaire for Athletes (REST-Q) [39] or the Daily Analysis of Life Demands for Athletes Questionnaire (DALDA) [81].

4.2 Training Load and Injury

Monitoring of training load accounted for 33 of the 68 studies in this review, with the majority from team sports (90 %), predominantly soccer and rugby league; the remaining 10 % came from three running studies [35, 42, 86] and one study of a mixed sporting group [51] (Table 1). The most common measure of internal training load was sRPE (n = 21), and the most common measure of external load was exposure (n = 15). The following section discusses the emerging moderate evidence for the relationship between training load and key stages of training and competition at which athletes appeared to be at increased risk of injury [26, 41, 44, 84, 85].

4.2.1 Periods of Training Load Intensification

Periods of training load intensification, such as preseason, periods of increased competition, and the return of injured players to full training, were found to increase the risk of injury. For example, athletes returning for preseason are at significantly greater risk of injury, potentially because of the intensification in training workload and the detraining effect of the offseason [26, 84]. As a result, the athlete may be unable to tolerate the external/internal training load placed on them. Gabbett [26] also reported that the likelihood of non-contact soft tissue injury was 50–80 % (95 % CI) in a rugby league preseason when weekly internal training load (sRPE) was between 3000 and 5000 AU, compared with lower weekly training loads, and that increased loads elevated the injury rate at a group level during the preseason but not during the in-season [105]. Along with high preseason training loads, periods of training and match load intensification, such as congested scheduling, have also been investigated [7, 15, 16]. However, the six studies investigating associations between shortened recovery cycles and injury provided conflicting evidence: two found shortened recovery to be related to increased injury [53, 63], one to decreased injury [64], one found moderate recovery cycles to carry the highest injury risk [65], one reported different findings depending on injury type [66], and one found no significant associations [62].

4.2.2 Changes in Acute Training Load

Another facet of this review was how acute (week-to-week) change in training load is associated with injury risk. Piggott et al. [68] identified that increases of more than 10 % in weekly internal training load explained 40 % of injuries in the subsequent 7 days. The other two studies assessing acute changes in training load both found a positive linear relationship between increased acute internal training load (1245–1250 AU) relative to the previous week and injury rate in elite contact-sport athletes [72, 84]. In contrast, Buist et al. [35], investigating injury incidence among novice runners following a graded training programme (running minutes increased by 10.5 %/week) versus a control group (running minutes increased by 23.7 %/week), found no difference between groups in running-related injury (RRI) rate (odds ratio 0.8, 95 % CI 0.6–1.3), despite the greater increase in acute weekly training minutes in the control group. This finding agrees with the 10-week prospective study by Nielsen et al. [86] of RRI development in novice runners (n = 60): those who sustained an RRI showed an increase in weekly training load of 31.6 %/week compared with 22.1 %/week among healthy participants, but this difference was not significant (p = 0.07). The absence of an injury increase with increased acute training load in these studies may be explained, first, by the fact that they measured only external load, whereas all of the studies that found relationships used internal training load measures. Second, novice runners may be able to improve at a greater rate than experienced athletes [113] and may therefore tolerate large relative increases in external training load because their absolute external and internal training loads are low.
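A week-to-week percentage-change rule, such as the 10 % increase in weekly internal load reported in [68], can be applied to a series of weekly totals as follows. This is a minimal sketch: the function names and data are hypothetical, and the 10 % default simply mirrors the figure reported above rather than a validated threshold.

```python
def weekly_changes(weekly_loads):
    """Percentage change in load from each week to the following week."""
    changes = []
    for prev, curr in zip(weekly_loads, weekly_loads[1:]):
        if prev == 0:
            # Any rise from zero is treated as an unbounded increase.
            changes.append(float("inf") if curr > 0 else 0.0)
        else:
            changes.append(100.0 * (curr - prev) / prev)
    return changes


def flag_spikes(weekly_loads, limit_pct=10.0):
    """Indices of weeks whose load rose by more than `limit_pct` per cent
    relative to the previous week (week 0 cannot be flagged)."""
    return [week + 1
            for week, change in enumerate(weekly_changes(weekly_loads))
            if change > limit_pct]


loads = [2000, 2150, 2600, 2500, 2800]  # hypothetical weekly sRPE totals (AU)
print(flag_spikes(loads))  # [2, 4]: rises of about 20.9 % and 12.0 %
```

As the novice-runner studies above suggest, the same relative rule may behave differently depending on whether it is applied to internal or external load and on the athlete’s absolute load level.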

4.2.3 Accumulated Training Load

Another key stage of training and competition highlighted was the effect of long-term accumulated training load (chronic workload) on injury incidence. For example, Ekstrand et al. [44] compared external load (training/match exposures) and injury rates between elite soccer players who participated in the international World Cup after the domestic season (World-Cup players) and those who did not (non-World-Cup players). During the domestic season, World-Cup players had greater match exposure and total (training plus match) exposure than non-World-Cup players (46 vs. 33 matches). Ekstrand et al. [44] then found that 32 % of this high-exposure group experienced a drop in performance, with 29 % going on to sustain an injury during the World Cup. Moreover, 60 % of players who had played more than one match per week in the 10 weeks prior to the World Cup also sustained an injury. aus der Fünten et al. [45] investigated the effect of a reduced winter break (from 6.5 to 3.5 weeks) by comparing training exposure and injury rates between the two seasons immediately before and after the change. Although athletes had lower training and match exposures after the winter break was reduced, injury rates were higher, particularly in training. These studies suggest that the way coaches and support staff manage key stages of training and competition (e.g. the periodisation of starting players, the length of offseason/midseason breaks) has significant implications for maintaining performance and reducing injury. A specific example is managing the training/competition load of team-sport athletes during the domestic season while taking into account international and/or club competitions towards the end of that season [112].

4.2.4 Training–Injury Prevention Paradox

The results of this review support recent publications on the training–injury prevention paradox [103, 108, 131, 143, 145–148]: moderate relationships were identified between training load and injury, yet the direction of these relationships differed between studies (i.e. whether increased training load was associated with decreased or increased injury). For example, Brooks et al. [41] found that although higher external acute training volumes (low <6.3 h/week vs. high >9.1 h/week) did not necessarily increase match injury incidence in elite rugby union, they were associated with greater severity of all injuries, especially during the latter part of the season and the second half of matches. Linear increases in acute internal training load (1245 AU) were associated with increased injury risk in a group of elite rugby union players [72] but decreased injury risk in 28 elite cricketers [57]. As well as linear training load–injury relationships, ‘U-shaped’ relationships (a phenomenon described in other scientific fields [114, 115]) were evident in several studies. For example, Dennis et al. [56] showed that bowling between 123 and 188 balls carried a lower injury risk than bowling fewer than 123 or more than 188 balls. This U-shaped relationship may arise because low training loads fail to provide sufficient stimulus for attaining ‘acquired resistance’ to injury [56], whereas high training loads fatigue athletes to the point where musculoskeletal tissue is less able to cope with the forces it encounters during activity [116, 117]. Alongside negative and positive linear training load–injury relationships, inverted-U-shaped patterns [118, 119] were also observed. For example, Arnason et al. [40] found higher injury rates with moderate acute match and training exposures than with low and high exposures in elite soccer players.
Collision injury rates were also higher with moderate-length recovery cycles (8 days) than with short (<8 days) and long (>8 days) recovery cycles in 51 elite rugby league players.

Another potential reason for the disparate findings on the relationship between training load and injury (negative/positive linear and U/inverted-U patterns) is that most studies report the magnitude of external load (e.g. distance or duration) but not its intensity. Increased external intensity (e.g. running velocity and load lifted) and internal intensity (e.g. RPE and heart rate) increase the stress placed on the body and therefore potentially increase injury risk [116, 120, 121]. For example, both Owen et al. [67] and Mallo and Dellal [55] showed that increases in training intensity, measured via heart rate, were associated with increased injury. Gabbett and Ullah [34] also found that performing >9 m of sprinting (>7 m/s) per session resulted in a 2.7-fold greater relative risk of sports performance non-contact, soft-tissue injury in elite rugby league players, compared with <9 m. In contrast, sessions with greater distances covered at very-low (0–1 m/s) and low (1–3 m/s) running velocities were associated with a reduced risk of time-loss non-contact injury. Low training intensity, such as that reported by Buist et al. [35] (i.e. “All were advised to run at a comfortable pace at which they could converse without losing breath”), may also explain why increases in external training load of 23.7 %/week were not associated with more injury than increases of 10.5 %/week. The lower intensities reported by Buist et al. and Gabbett and Ullah may have provided a recovery stimulus [8, 122] or allowed adaptation to occur without fatiguing athletes to the extent that injury risk increased [116, 120].
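The distinction between volume and intensity can be made concrete by splitting a session's distance into velocity bands. The sketch below assumes speed samples (m/s) recorded at a fixed interval, for example a hypothetical 10 Hz GPS trace (dt = 0.1 s). The 0–1, 1–3 and >7 m/s bands and the 9 m sprint threshold come from the Gabbett and Ullah findings above; the 3–7 m/s catch-all band, the boundary handling and the function names are assumptions.

```python
from typing import Dict, Sequence


def velocity_band(speed: float) -> str:
    """Assign a speed (m/s) to a band: very low 0-1, low 1-3, sprint >7.

    The 3-7 m/s 'moderate_to_high' band is a catch-all added for completeness.
    """
    if speed < 0:
        raise ValueError("speed cannot be negative")
    if speed < 1.0:
        return "very_low"
    if speed < 3.0:
        return "low"
    if speed <= 7.0:
        return "moderate_to_high"
    return "sprint"


def distance_by_band(speeds: Sequence[float], dt: float) -> Dict[str, float]:
    """Distance (m) covered in each band from speed samples taken every dt s.

    Each sample contributes speed * dt metres (a rectangle-rule approximation).
    """
    totals = {"very_low": 0.0, "low": 0.0, "moderate_to_high": 0.0, "sprint": 0.0}
    for speed in speeds:
        totals[velocity_band(speed)] += speed * dt
    return totals


def exceeds_sprint_threshold(speeds: Sequence[float], dt: float,
                             threshold_m: float = 9.0) -> bool:
    """True when the session's sprint distance (>7 m/s) exceeds threshold_m."""
    return distance_by_band(speeds, dt)["sprint"] > threshold_m
```

Fifteen samples at 8 m/s (1.5 s of sprinting at 10 Hz) give about 12 m of sprint distance, which exceeds the 9 m threshold; ten such samples give about 8 m, which does not.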

4.2.5 Other Measures of Training Load

In addition to acute training load, other variables such as chronic load (previous 3- or 4-week total or average load) [57, 85], monotony (mean daily load/SD of daily load) [74], strain (monotony × total weekly load) [74] and the acute:chronic workload ratio [57] may be more robust predictors of injury, as they objectively account for the accumulation of, and variability in, training load over time. As with acute load, U-shaped and inverted-U-shaped relationships were present for cumulative load, along with positive and negative linear relationships. For example, Hulin et al. [57] showed a linear protective effect of high chronic external load (previous 4-week average), whereas Orchard et al. [58] showed that higher 17-day external bowling loads (>100 overs) increased injury risk 1.8-fold. Cross et al. [72] also noted a U-shaped relationship with 4-week cumulative internal load, with an apparent increase in risk at higher internal loads (>8651 AU). In contrast, Colby et al. [85] found an inverted-U external load–injury relationship using 3-weekly total running distance: between 73 and 87 km was associated with 5.5-fold greater intrinsic (non-contact) injury risk in elite AF players compared with low (<73 km) and high (>87 km) distances. These differing patterns may be specific to injury type, as shown by Orchard et al. [59] in their analysis of cumulative load in 235 elite cricket fast bowlers over the longest period of study in the current literature (15 years). Load over the previous 3 months was protective against tendon injury but increased the risk of bone-stress injury. Increased previous-season load was also associated with increased joint injuries but was protective against muscle injuries. Only one study found associations between illness and monotony and strain [74].
‘Spikes’ in training monotony (>2.0) and strain were associated with 77 and 89 % of illnesses, respectively [74]; however, no other studies reported any associations between injury/illness and monotony or strain [73, 106]. The results of our review therefore highlight conflicting evidence for the use of monotony and strain. The weight of evidence favouring other metrics, such as change in acute training load [57, 72, 84, 87] and chronic training load [44, 45, 59], indicates that the role of monotony and strain in monitoring and injury prevention is not currently supported by the literature. A potential improvement on using acute and chronic load in isolation to predict injury is the acute:chronic workload ratio, which takes into account both acute and cumulative workload by expressing acute load relative to the cumulative load to which athletes are accustomed [57, 107]. The only study in this review to use the acute:chronic workload ratio found that a ratio of 2.0, compared with 0.5–0.99, for internal and external training load was associated with a 3.3- to 4.5-fold increased risk of non-contact injury in elite cricket fast bowlers [57].
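The load metrics discussed above can be sketched as follows. This assumes loads in AU, monotony calculated as the mean daily load divided by the SD of daily load using the population SD of seven daily values (studies may instead use the sample SD), and a "coupled" chronic load equal to the rolling 4-week average including the current week. The function names are hypothetical, and the sketch is not the exact procedure of any study cited here.

```python
import statistics
from typing import List, Optional, Sequence, Tuple


def monotony_and_strain(daily_loads: Sequence[float]) -> Tuple[float, float]:
    """Weekly monotony and strain from seven daily loads (AU).

    monotony = mean daily load / SD of daily load
    strain   = monotony * total weekly load
    Rest days are entered as 0 so that the week always has seven values.
    """
    if len(daily_loads) != 7:
        raise ValueError("expected seven daily loads, with rest days as 0")
    sd = statistics.pstdev(daily_loads)  # population SD: an assumption
    if sd == 0:
        raise ValueError("monotony is undefined when every day has the same load")
    monotony = statistics.fmean(daily_loads) / sd
    return monotony, monotony * sum(daily_loads)


def acute_chronic_ratios(weekly_loads: Sequence[float],
                         chronic_weeks: int = 4) -> List[Optional[float]]:
    """Acute (current-week) load divided by the rolling average of the most
    recent chronic_weeks weeks, including the current week.

    Weeks without enough history, or with zero chronic load, give None.
    """
    ratios: List[Optional[float]] = []
    for i, acute in enumerate(weekly_loads):
        if i + 1 < chronic_weeks:
            ratios.append(None)  # not enough history yet
            continue
        window = weekly_loads[i + 1 - chronic_weeks: i + 1]
        chronic = sum(window) / chronic_weeks
        ratios.append(acute / chronic if chronic > 0 else None)
    return ratios
```

A week of six 300 AU days and one rest day gives a monotony of √6 ≈ 2.45, above the >2.0 level quoted above, while spreading the same 1800 AU as 600, 0, 600, 0, 300, 0 and 300 AU gives a monotony of about 1.03. Weekly loads of 1000, 1000, 1000, 1000 and 3000 AU give ratios of `[None, None, None, 1.0, 2.0]`.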

4.3 Fatigue Markers and Injury

Only nine studies investigated fatigue–injury relationships, seven of which used perceptual wellness scales [37, 39, 80, 92–95]. Three studies used the Hassles and Uplifts Scale (HUS) [123] and showed greater daily hassles to be associated with increased injury in soccer players [37, 92, 93]. Findings from Kinchington et al. [94] support the notion that increased perceptual fatigue is related to increased injury, as ‘poor’ scores on the Lower-Limb Comfort Index (LLCI) [124] (i.e. increased perceptual fatigue) were related to increased lower-limb injury (r = 0.88; p < 0.001) in elite contact-sport athletes. Laux et al. [95] further support this positive perceptual fatigue–injury relationship, reporting that increased fatigue and disturbed breaks, as well as decreased sleep-quality ratings, were related to increased injury. In contrast, Killen et al. [80] found that increased perceptual fatigue (measured via worse ratings of sleep, food, energy, mood and stress) was associated with decreased training injury during an elite rugby league preseason (r = 0.71; p = 0.08). Similarly, King et al. [39] showed that increased perceptual fatigue (measured via various REST-Q factors) was associated with decreased sports performance training injuries and time-loss match injuries. These unexpected findings may arise because players who perceive themselves to be less fatigued may train or play at higher intensities, increasing the likelihood of injury [80]. Of the seven studies mentioned above, six used perceptual wellness scales that take approximately 1–4 min to complete. Shorter wellness scales with <1 min completion time, such as the 1–10 ratings used by Killen et al. [80], may be easier to implement [125]; their association with injury is therefore of great practical significance.
However, differences in the level of validation evidence between psychometric tools and ‘bespoke’ wellness questionnaires are important to consider when choosing a tool for an applied setting. The benefit of using REST-Q rather than shorter wellness questionnaires is that REST-Q has undergone extensive validation testing, whereas the latter are less well validated [39, 139]. Examination of subjective fatigue markers also indicates that current self-report measures fare better than their commonly used objective counterparts [139]. In particular, subjective well-being typically worsened with acute increases in training load and with chronic training load, and improved when acute training load decreased [139]. Sleep is a vital part of the body’s recovery process [126, 127]; it was therefore surprising that only four studies investigated its relationship with injury [39, 80, 95, 96]. Three of these assessed sleep–injury relationships via sleep-quality ratings [39, 80, 95], with only Dennis et al. [96] investigating objective measures of sleep quality and quantity in relation to injury; they reported no significant differences in sleep duration or efficiency between the week of injury and the 2 weeks prior to injury.

4.4 Individual Characteristics and Injury

An important finding from this review is that the individual characteristics of the athlete [36, 128] significantly affect the internal load and stress placed on the body and thus the athlete’s susceptibility to injury. For example, an athlete’s aerobic fitness level will affect the internal load of a given session. A recent study in AF players reported that for every 1 s slower in 2-km time-trial performance, sRPE increased by 0.2; therefore, the better an individual's time-trial performance, the easier they rated sessions of the same distance [129]. Furthermore, older athletes and athletes with a previous injury are at significantly greater risk of injury than other members of their population [27, 40, 84, 112, 130]. A potential reason for this finding is that older players are likely to have experienced more injuries over the course of their careers than less experienced younger players [131]. Other individual characteristics, such as body composition, also influence injury risk. Zwerver et al. [128] reported a higher risk of RRI among persons with a body mass index (BMI) above 25 kg/m², in agreement with Buist et al. [35], who reported higher BMI in injured than in non-injured runners (27.6 vs. 24.8 kg/m²; p = 0.03). In addition, decreases in aerobic and muscular power, together with increases in skinfold thickness towards the end of the playing season, have been reported alongside increased match injury rates in recreational rugby league players [36].
Collectively, these findings suggest that the load/fatigue–injury associations described in this review are significantly influenced by the individual characteristics of the athlete, such as strength, fitness, body composition, playing level, age and injury history, as they determine the amount of internal workload and stress placed on the body and therefore the subsequent reduction or increase in the risk of injury [27, 35, 36, 40, 84, 112, 128–130].
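Read as a linear relationship, the time-trial finding reported in [129] implies the following difference in sRPE between two players completing the same session. The 30 s gap is a hypothetical example, and linearity over that range is an assumption.

$$
\Delta \mathrm{sRPE} \approx 0.2 \times \Delta t_{2\,\mathrm{km}}, \qquad \Delta t_{2\,\mathrm{km}} = 30\ \mathrm{s} \;\Rightarrow\; \Delta \mathrm{sRPE} \approx 0.2 \times 30 = 6
$$

Under that assumption, the slower player would be expected to rate the same session about 6 units higher.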

4.5 Training Load and Illness

Studies of training load and illness accounted for 17 of the 68 studies in this review, with the majority (n = 13) measuring salivary immunoglobulin (Ig) A (s-IgA) and/or cortisol as markers of immune function. The following sections discuss the relationship between training load and the key phases identified as increasing the athlete's susceptibility to illness.

4.5.1 Intensification of Training Load and Illness

Internal training load, measured via sRPE, explained between 77 and 89 % of illness prevalence over a period of 6 months to 3 years in a mixed-ability group of swimmers [74]. Piggott et al. [68] identified that if weekly internal training load was increased by more than 10 %, this explained 40 % of illness and injury in the subsequent 7 days. This may reflect elevated psychological stressors arising from increased internal training load, factors that were significantly associated with signs and symptoms of injury and illness [50]. Cunniffe et al. [54] reported that periods of increased training intensity and reduced game activity just prior to competition resulted in peaks in upper respiratory tract infection (URTI) in elite rugby union players. Despite a consensus that periods of intensified load or reduced game/training activity increase URTI, the literature is contradictory as to whether increases in external load and changes in markers of immune function are associated with the risk of illness [69, 97–99]. For example, Fricker et al. [69] found no significant differences in mean weekly and monthly running distances between elite male distance runners who self-reported illness and those who did not. With the exception of Fricker et al. [69] and Veugelers et al. [70], most studies that found no association between load and illness used mixed-ability or disability populations, measured only external load, and relied on self-reported illness. Because individual responses to load vary markedly with training level, the populations studied may have influenced the illness rates observed.
Furthermore, having athletes self-report illness rather than having it diagnosed by a team doctor, and self-report load rather than having external load measured objectively, may significantly affect the results, depending on each individual’s perception of what constitutes illness and on potential over- or underreporting of training exposure.

4.6 Fatigue and Illness

Mackinnon and Hooper [49] found the incidence of URTI to be lower in athletes who reported increased perceptual fatigue on 1–7 wellness rating scales (sleep quality, stress and feelings of fatigue). This concurs with Killen et al. [80], who found lower injury rates in rugby league athletes who reported increased perceptual fatigue on 1–10 perceptual wellness scales. It is further supported by Veugelers et al. [70], who found that increased perceptual fatigue in their elite AF high internal training load group was associated with a protective effect against non-contact injury and illness compared with the low internal training load group. This reduction in injury/illness may be due to greater fatigue leading athletes to reduce their intensity, as a result of the physiological and psychological stress placed on the body. Another possibility is that the high internal training load group adapted to the load and was therefore able to tolerate higher internal loads at a reduced risk of injury/illness. Finally, the lower training load associated with illness may reflect athletes having had their training load modified because they had been ill earlier in the week.

4.6.1 Markers of Immune Function and Illness

A primary finding was the association between reduced s-IgA and increased salivary cortisol during periods of greater training intensity or reduced game/training activity (preseason, deload weeks), which resulted in significant increases in URTI [54, 81, 83, 87, 90, 98, 101]. For example, rugby players who sustained a URTI showed a 15 % reduction in s-IgA compared with players who did not [54]. However, the literature is contradictory as to whether reductions in s-IgA are linked with URTI: Ferrari et al. [73] found no significant association between training load phase, s-IgA and URTI in sub-elite male road cyclists. This is in agreement with Leicht et al. [99], who found that s-IgA responses, including secretion rate, had no significant relationship with the subsequent occurrence of upper respiratory symptoms in elite wheelchair rugby athletes.

4.7 The Latent Period of Illness

A key phase identified for illness was the latency period, which can be defined as the time interval between a stimulus and a reaction [13]. Following a stimulus such as a spike in training load, s-IgA can remain reduced, or salivary cortisol elevated, for an extended period of 7–21 days. Failure of these markers to return to baseline within this period was associated with a 50 % increase in the risk of URTI [68, 90, 100], which may explain why most of the illnesses reported in this review occurred during or after week 4 of intensified training [81, 83, 90, 100, 101]. Athletes who do not recover from the initial spike in training load experience prolonged suppression of immune function, placing them at significant risk of illness. This finding has two implications for practitioners: first, to avoid unplanned spikes in training load; and second, to adjust training load when an athlete is immunosuppressed so that markers of immune function can return to baseline.
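A minimal sketch of tracking the latent period for a marker that falls after a load spike, such as s-IgA, assuming daily values are compared against the athlete's own baseline. The 10 % tolerance used to define "back to baseline" is a hypothetical choice, the 21-day window reflects the upper end of the 7–21 day range above, and the function names are illustrative. For a marker that rises, such as cortisol, the comparison would be reversed.

```python
from typing import Optional, Sequence


def percent_change(baseline: float, value: float) -> float:
    """Change from baseline as a percentage (negative = reduction)."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return 100.0 * (value - baseline) / baseline


def day_returned_to_baseline(baseline: float, daily_values: Sequence[float],
                             tolerance_percent: float = 10.0) -> Optional[int]:
    """First day (1-based) on which a suppressed marker is back within
    tolerance_percent of baseline, or None if it never recovers."""
    for day, value in enumerate(daily_values, start=1):
        if percent_change(baseline, value) >= -tolerance_percent:
            return day
    return None


def prolonged_suppression(baseline: float, daily_values: Sequence[float],
                          window_days: int = 21,
                          tolerance_percent: float = 10.0) -> bool:
    """True if the marker has not recovered within window_days of the spike."""
    recovered = day_returned_to_baseline(baseline, daily_values[:window_days],
                                         tolerance_percent)
    return recovered is None
```

With a baseline of 100 and daily values of 80, 82, 85, 88 and 91, the marker is first within 10 % of baseline on day 5; a value that stays at 80 for the whole 21-day window is flagged as prolonged suppression.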

4.8 Limitations

Of the 68 studies included in this review, only 39 were identified through the database search; the remaining 29 were found by searching the reference lists of identified studies, which suggests that the search strategy may have missed relevant studies. Furthermore, a number of key studies and reviews that would have satisfied the inclusion criteria and provided the most up-to-date research were published during the manuscript review process [13, 131, 144, 146, 148]. For example, several papers readdressed terminology issues relating to the training stress balance measure and defined it as the acute:chronic workload ratio [108, 143, 145, 146, 148].

4.9 Directions for Future Research

4.9.1 Definition of Load, Fatigue, Injury and Soreness

Even with the relatively small amount of research undertaken regarding longitudinal monitoring, the research detailed in this review clearly highlights that relationships exist between longitudinally monitored training load and fatigue variables and injury or illness. Further research is now required to establish a common language for load, fatigue, injury and illness, as well as exploring these relationships within more specialised populations, and with a wider range of load, fatigue, injury and illness measures.

4.9.2 Training Load and Fatigue Interactions

A clear gap identified in the literature from the current review is the lack of assessment of load–fatigue interactions in association with injury/illness, as the fatigue state of an individual will essentially define the load they can tolerate before injury/illness risk increases [121, 132]. For example, a case study on a female masters track and field athlete found that, despite no increase in load, signs of overreaching increased significantly due to external psychological stress [133]. Therefore, further study is needed combining both load and fatigue in analyses, as per the study by Main et al. [50].

4.9.3 Monitoring of Neuromuscular Function

The lack of monitoring of NMF variables in relation to injury and illness (none of the 68 studies included in this review) was highly surprising, given the strong theoretical rationale for an association with injury risk [120, 134] and the common use of NMF in the recovery and acute monitoring literature [7, 135, 136], owing to its strong association with performance variables such as speed [137]. Future research should investigate the relationship between NMF variables and training load, and their subsequent associations with injury and illness; at present, however, there is no high-level evidence to support NMF monitoring for this purpose, as mechanism-based reasoning represents the lowest level of evidence [151].

4.9.4 Perceptual Wellness

Of the 13 studies that used perceptual wellness measures, 11 adopted inventories that take approximately 1–4 min to complete. In a busy elite athlete environment or, conversely, a recreational/sub-elite environment where resources are stretched, inventories of such length may be impractical to implement [125]. Shorter wellness scales with <1 min completion time, such as the 1–10 rating scales used by Killen et al. [80] and the 1–7 rating scales used by Mackinnon and Hooper [49], may be easier to implement [125]. Consequently, further investigation of shortened perceptual wellness scales is needed, given the practical significance of any association with injury; however, these scales should be suitably validated to ensure that they rigorously capture the intended domains and constructs.
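Scoring a short scale of this kind takes very little effort. The sketch below assumes five 1–10 items (the sleep, food, energy, mood and stress ratings described for Killen et al. [80]), treats 10 as the best rating for every item, and flags a score more than 1 SD below the athlete's own previous scores. The scoring direction, the flagging rule and the function names are assumptions for illustration, not a validated procedure.

```python
import statistics
from typing import Mapping, Sequence

# Hypothetical item set based on the ratings described for Killen et al. [80].
ITEMS = ("sleep", "food", "energy", "mood", "stress")


def wellness_score(ratings: Mapping[str, int]) -> int:
    """Sum of the five 1-10 item ratings (assumes 10 = best for every item)."""
    total = 0
    for item in ITEMS:
        value = ratings[item]
        if not 1 <= value <= 10:
            raise ValueError(f"{item} rating out of range: {value}")
        total += value
    return total


def below_personal_baseline(history: Sequence[float], today: float,
                            sd_units: float = 1.0) -> bool:
    """True if today's score is more than sd_units SDs below the athlete's mean."""
    if len(history) < 2:
        raise ValueError("need at least two previous scores")
    mean = statistics.fmean(history)
    sd = statistics.stdev(history)  # sample SD of the athlete's previous scores
    return today < mean - sd_units * sd
```

Ratings of 7, 8, 6, 7 and 5 sum to 33; against previous scores of 35, 36, 34, 35 and 36 (mean 35.2, SD ≈ 0.84), that score would be flagged, whereas a score of 35 would not.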

4.9.5 Latent Period of Injury

This review highlighted a lack of studies reporting the latency period of injury. Future studies evaluating the relationship between training load and time frame of injury response will provide information that allows practitioners to adjust training loads during the injury time frame as an injury prevention measure [149].

4.9.6 Injury and Illness

Studies monitoring load/fatigue alongside both injury and illness accounted for only 6 of the 68 studies in this review. Although these made up <10 % of the research reviewed, most (five of six) scored above the average quality score (13 vs. 11) (Table 3). Future research should therefore look to measure both injury and illness, not only because studies that did so received higher quality scores in this review, but also because of the different relationships this may reveal between load and fatigue markers and subsequent injury risk and performance outcomes [29, 110, 138].

4.9.7 Monitoring of Female Athletes

This review has highlighted a lack of studies with female athletes (13 of 68 studies). Further research is essential to understand how the hormonal fluctuations during various stages of the menstrual cycle may influence tolerance to training load, and the subsequent effects on markers of immune function and fatigue markers. This will provide valuable information on load and fatigue and inform the periodization of female athletes to help reduce the risk of injury/illness during periods of greater susceptibility.

4.9.8 Monitoring of Adolescent Athletes

Although this review has highlighted a lack of studies in adolescents, a recent review found that the relationship between workload, physical performance, injury and illness in adolescent male football players was non-linear and that the individual response to a given workload is highly variable [150]. Further investigation of the effects of maturation and training load, and of their relationships with performance, injury and illness, would be invaluable for practitioners working with pediatric athletes.

4.9.9 Session Rate of Perceived Exertion

The widespread use of sRPE as a measure of internal training load is most likely due to its relative ease of implementation compared with other internal load measures, such as heart rate, or external load measures from GPS systems [17]. Indeed, a recent review has highlighted that current self-report measures fare better than their commonly used objective counterparts [139]. To advance the use of self-reported measures, splitting sRPE into internal respiratory and muscular load is also warranted, to observe how differences between the two affect injury/illness [140, 141]. Because injury/illness mechanisms differ between these two systems, such differential measurement of internal load would provide more specific information for prevention and recovery [140].
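For reference, a session's sRPE load is commonly calculated as the session rating multiplied by the session duration in minutes, giving arbitrary units (AU). The sketch below applies the same calculation separately to hypothetical respiratory and muscular ratings, assuming all three are collected on a 0–10 scale; the class and field names are illustrative, and studies of differential ratings may use other scales.

```python
from dataclasses import dataclass
from typing import Dict


def session_load(duration_min: float, rating: float) -> float:
    """Session load in arbitrary units (AU): rating x duration in minutes."""
    if duration_min < 0:
        raise ValueError("duration cannot be negative")
    if not 0 <= rating <= 10:
        raise ValueError("rating must be on a 0-10 scale")
    return rating * duration_min


@dataclass
class SessionReport:
    duration_min: float
    rpe: float         # overall session rating
    rpe_breath: float  # hypothetical respiratory (breathlessness) rating
    rpe_muscle: float  # hypothetical muscular (leg-muscle) rating


def split_loads(report: SessionReport) -> Dict[str, float]:
    """Overall, respiratory and muscular loads (AU) for one session."""
    return {
        "overall": session_load(report.duration_min, report.rpe),
        "respiratory": session_load(report.duration_min, report.rpe_breath),
        "muscular": session_load(report.duration_min, report.rpe_muscle),
    }
```

A 60 min session rated 7 overall, 6 for breathlessness and 8 for leg muscles gives loads of 420, 360 and 480 AU, respectively; in this example the muscular load exceeds the respiratory load, which is the kind of discrepancy the split is intended to expose.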

4.9.10 Severity of Injury

Only a small number of studies in this review investigated the severity of injury [38, 41, 45–47, 67, 77] and illness [75, 82], with only two studies quantifying the contact elements of training/competition [64, 65]. Given contact injuries are often more severe than non-contact injuries [41], and the amount of time lost from training/competition is one of the major negative impacts of injury/illness, more studies are needed detailing load/fatigue–injury/illness severity and the contact aspects of training/competition. Information on injury/illness severity relationships will allow coaches and support staff to make even more informed decisions about the risk of going beyond thresholds of load/fatigue, such that they may accept an increased risk of sports performance or low-severity injuries, but not accept increases in the risk of more severe injuries.

5 Conclusions

This paper provides a comprehensive review of the literature that has reported the monitoring of longitudinal training load and fatigue and its relationship with injury and illness. The current findings highlight disparity in the terms used to define training load, fatigue, injury and illness, as well as a lack of investigation of interactions between fatigue and training load. Key stages of training and competition at which athletes are at increased risk of injury/illness were identified: periods of training load intensification, accumulation of training load, and acute change in load. Modifying training load during these periods may help reduce the potential for injury and illness. Measures such as acute change in training load, cumulative training load, monotony, strain and the acute:chronic workload ratio may predict injury/illness better than acute training load alone. Acute change in training load showed a clear positive relationship with injury, whereas other load/fatigue measures, particularly acute and cumulative training load, produced mixed associations. The measure most clearly associated with illness was s-IgA, while relationships for acute training load, monotony, strain and perceptual wellness were mixed. The prescription of training load intensity and individual characteristics (e.g. fitness, body composition, playing level, injury history and age) may account for the mixed findings reported, as they affect the internal training load placed on the athlete’s body and, therefore, susceptibility to injury/illness.

References

Cunniffe B, Hore AJ, Whitcombe DM, et al. Time course of changes in immuneoendocrine markers following an international rugby game. Eur J Appl Physiol. 2010;108(1):113–22.

McLellan CP, Lovell DI, Gass GC. Markers of postmatch fatigue in professional rugby league players. J Strength Cond Res. 2011;25(4):1030–9.

Takarada Y. Evaluation of muscle damage after a rugby match with special reference to tackle plays. Br J Sports Med. 2003;37(5):416–9.

McLellan CP, Lovell DI, Gass GC. Biochemical and endocrine responses to impact and collision during elite rugby league match play. J Strength Cond Res. 2011;25(6):1553–62.

McLellan CP, Lovell DI, Gass GC. Creatine kinase and endocrine responses of elite players pre, during, and post rugby league match play. J Strength Cond Res. 2010;24(11):2908–19.

Cunniffe B, Proctor W, Baker JS, et al. An evaluation of the physiological demands of elite rugby union using global positioning system tracking software. J Strength Cond Res. 2009;23(4):1195–203.

West DJ, Finn CV, Cunningham DJ, et al. Neuromuscular function, hormonal, and mood responses to a professional rugby union match. J Strength Cond Res. 2014;28(1):194–200.

Gill ND, Beaven C, Cook C. Effectiveness of post-match recovery strategies in rugby players. Br J Sports Med. 2006;40(3):260–3.

Chiu LZ, Barnes JL. The fitness-fatigue model revisited: implications for planning short- and long-term training. Strength Cond J. 2003;25(6):42–51.

Gamble P. Physical preparation for elite-level rugby union football. Strength Cond J. 2004;26(4):10–23.

Gamble P. Periodization of training for team sports athletes. Strength Cond J. 2006;28(5):56–66.

Impellizzeri FM, Rampinini E, Marcora SM. Physiological assessment of aerobic training in soccer. J Sports Sci. 2005;23(6):583–92.

Drew MK, Finch CF. The relationship between training load and injury, illness and soreness: a systematic and literature review. Sports Med. 2016;46(6):861–83.

Allen DG, Lamb GD, Westerblad H. Skeletal muscle fatigue: cellular mechanisms. Physiol Rev. 2008;88(1):287–332.

Meeusen R, Duclos M, Foster C, et al. Prevention, diagnosis, and treatment of the overtraining syndrome: joint consensus statement of the European College of Sport Science and the American College of Sports Medicine. Med Sci Sports Exerc. 2013;45(1):186–205.

Johnston RD, Gabbett TJ, Jenkins DG. Influence of an intensified competition on fatigue and match performance in junior rugby league players. J Sci Med Sport. 2013;16(5):460–5.

Halson SL. Monitoring training load to understand fatigue in athletes. Sports Med. 2014;44(Suppl 2):S139–47.

Timpka T, Jacobsson J, Bickenbach J, et al. What is a sports injury? Sports Med. 2014;44(4):423–8.

Timpka T, Jacobsson J, Ekberg J, et al. Meta-narrative analysis of sports injury reporting practices based on the Injury Definitions Concept Framework (IDCF): a review of consensus statements and epidemiological studies in athletics (track and field). J Sci Med Sport. 2015;18(6):643–50.

Noakes TD. Fatigue is a brain-derived emotion that regulates the exercise behavior to ensure the protection of whole body homeostasis. Front Physiol. 2012;3:82.

Mann TN, Lamberts RP, Lambert MI. High responders and low responders: Factors associated with individual variation in response to standardized training. Sports Med. 2014;44(8):1113–24.

McLean BD, Petrucelli C, Coyle EF. Maximal power output and perceptual fatigue responses during a Division I female collegiate soccer season. J Strength Cond Res. 2012;26(12):3189–96.

Williams S, Trewartha G, Kemp S, et al. A meta-analysis of injuries in senior men’s professional rugby union. Sports Med. 2013;43(10):1043–55.

Dupont G, McCall A, Prieur F, et al. Faster oxygen uptake kinetics during recovery is related to better repeated sprinting ability. Eur J Appl Physiol. 2010;110(3):627–34.

Taylor T, West DJ, Howatson G, et al. The impact of neuromuscular electrical stimulation on recovery after intensive, muscle damaging, maximal speed training in professional team sports players. J Sci Med Sport. 2015;18(3):328–32.

Gabbett TJ. The development and application of an injury prediction model for noncontact, soft-tissue injuries in elite collision sport athletes. J Strength Cond Res. 2010;24(10):2593–603.

Brooks JH, Fuller C, Kemp S, et al. Epidemiology of injuries in English professional rugby union: part 1 match injuries. Br J Sports Med. 2005;39(10):757–66.

Podlog L, Buhler CF, Pollack H, et al. Time trends for injuries and illness, and their relation to performance in the National Basketball Association. J Sci Med Sport. 2015;18(3):278–82.

Raysmith BP, Drew MK. Performance success or failure is influenced by weeks lost to injury and illness in elite Australian Track and Field athletes: a 5-year prospective study. J Sci Med Sport. 2016 (Epub 7 Jan 2016).

Higgins JP, Green S. Cochrane handbook for systematic reviews of interventions. Chichester: The Cochrane Library; 2006.

Hume PA, Lorimer A, Griffiths PC, et al. Recreational snow-sports injury risk factors and countermeasures: a meta-analysis review and Haddon matrix evaluation. Sports Med. 2015;45:1175–90.

Bizzini M, Childs JD, Piva SR, et al. Systematic review of the quality of randomized controlled trials for patellofemoral pain syndrome. J Orthop Sports Phys Ther. 2003;33(1):4–20.

Maher CG, Sherrington C, Herbert RD, et al. Reliability of the PEDro scale for rating quality of randomized controlled trials. Phys Ther. 2003;83(8):713–21.

Gabbett TJ, Ullah S. Relationship between running loads and soft-tissue injury in elite team sport athletes. J Strength Cond Res. 2012;26(4):953–60.

Buist I, Bredeweg SW, Van Mechelen W, et al. No effect of a graded training program on the number of running-related injuries in novice runners: a randomized controlled trial. Am J Sports Med. 2008;36(1):33–9.

Gabbett TJ. Changes in physiological and anthropometric characteristics of rugby league players during a competitive season. J Strength Cond Res. 2005;19(2):400–8.

Ivarsson A, Johnson U. Psychological factors as predictors of injuries among senior soccer players: a prospective study. J Sports Sci Med. 2010;9(2):347.

Carling C, Le Gall F, McCall A, et al. Squad management, injury and match performance in a professional soccer team over a championship-winning season. Eur J Sport Sci. 2015;15(7):573–81.

King D, Clark T, Kellmann M. Changes in stress and recovery as a result of participating in a premier rugby league representative competition. Int J Sports Sci Coach. 2010;5(2):223–37.

Arnason A, Sigurdsson SB, Gudmundsson A, et al. Risk factors for injuries in football. Am J Sports Med. 2004;32(1 Suppl):5S–16S.

Brooks JH, Fuller CW, Kemp SP, et al. An assessment of training volume in professional rugby union and its impact on the incidence, severity, and nature of match and training injuries. J Sports Sci. 2008;26(8):863–73.

Duckham RL, Brooke-Wavell K, Summers GD, et al. Stress fracture injury in female endurance athletes in the United Kingdom: a 12-month prospective study. Scand J Med Sci Sports. 2015;25(6):854–9.

Dvorak J, Junge A, Chomiak J, et al. Risk factor analysis for injuries in football players: possibilities for a prevention program. Am J Sports Med. 2000;28(5):S69–74.

Ekstrand J, Waldén M, Hägglund M. A congested football calendar and the wellbeing of players: correlation between match exposure of European footballers before the World Cup 2002 and their injuries and performances during that World Cup. Br J Sports Med. 2004;38(4):493–7.

aus der Fünten K, Faude O, Lensch J, et al. Injury characteristics in the German professional male soccer leagues after a shortened winter break. J Athl Train. 2014;49(6):786–93.

Hägglund M, Waldén M, Ekstrand J. Exposure and injury risk in Swedish elite football: a comparison between seasons 1982 and 2001. Scand J Med Sci Sports. 2003;13(6):364–70.

Hägglund M, Waldén M, Ekstrand J. Injury incidence and distribution in elite football: a prospective study of the Danish and the Swedish top divisions. Scand J Med Sci Sports. 2005;15(1):21–8.

Hausswirth C, Louis J, Aubry A, et al. Evidence of disturbed sleep and increased illness in overreached endurance athletes. Med Sci Sports Exerc. 2014;46(5):1036–45.

Mackinnon LT, Hooper S. Plasma glutamine and upper respiratory tract infection during intensified training in swimmers. Med Sci Sports Exerc. 1996;28(3):285–90.

Main L, Landers G, Grove J, et al. Training patterns and negative health outcomes in triathlon: longitudinal observations across a full competitive season. J Sports Med Phys Fitness. 2010;50(4):475–85.

van Mechelen W, Twisk J, Molendijk A, et al. Subject-related risk factors for sports injuries: a 1-yr prospective study in young adults. Med Sci Sports Exerc. 1996;28(9):1171–9.

Viljoen W, Saunders CJ, Hechter GD, et al. Training volume and injury incidence in a professional rugby union team. S Afr J Sports Med. 2009;21(3):97–101.

Bengtsson H, Ekstrand J, Hägglund M. Muscle injury rates in professional football increase with fixture congestion: an 11-year follow-up of the UEFA Champions League injury study. Br J Sports Med. 2013;47(12):743–7.

Cunniffe B, Griffiths H, Proctor W, et al. Mucosal immunity and illness incidence in elite rugby union players across a season. Med Sci Sports Exerc. 2011;43(3):388–97.

Mallo J, Dellal A. Injury risk in professional football players with special reference to the playing position and training periodization. J Sports Med Phys Fitness. 2012;52(6):631–8.

Dennis R, Farhart R, Goumas C, et al. Bowling workload and the risk of injury in elite cricket fast bowlers. J Sci Med Sport. 2003;6(3):359–67.

Hulin BT, Gabbett TJ, Blanch P, et al. Spikes in acute workload are associated with increased injury risk in elite cricket fast bowlers. Br J Sports Med. 2014;48(8):708–12.

Orchard JW, Blanch P, Paoloni J, et al. Fast bowling match workloads over 5–26 days and risk of injury in the following month. J Sci Med Sport. 2015;18(1):26–30.

Orchard JW, Blanch P, Paoloni J, et al. Cricket fast bowling workload patterns as risk factors for tendon, muscle, bone and joint injuries. Br J Sports Med. 2015;49(16):1064–8.

Orchard JW, James T, Portus M, et al. Fast bowlers in cricket demonstrate up to 3- to 4-week delay between high workloads and increased risk of injury. Am J Sports Med. 2009;37(6):1186–92.

Saw R, Dennis RJ, Bentley D, et al. Throwing workload and injury risk in elite cricketers. Br J Sports Med. 2011;45:805–8.

Carling C, Le Gall F, Dupont G. Are physical performance and injury risk in a professional soccer team in match-play affected over a prolonged period of fixture congestion? Int J Sports Med. 2012;33(1):36–42.

Dellal A, Lago-Peñas C, Rey E, et al. The effects of a congested fixture period on physical performance, technical activity and injury rate during matches in a professional soccer team. Br J Sports Med. 2015;49(6):390–4.

Gabbett TJ, Jenkins D, Abernethy B. Physical collisions and injury during professional rugby league skills training. J Sci Med Sport. 2010;13(6):578–83.

Gabbett TJ, Jenkins DG, Abernethy B. Physical collisions and injury in professional rugby league match-play. J Sci Med Sport. 2011;14(3):210–5.

Murray NB, Gabbett TJ, Chamari K. Effect of different between-match recovery times on the activity profiles and injury rates of national rugby league players. J Strength Cond Res. 2014;28(12):3476–83.

Owen AL, Forsyth JJ, Wong del P, et al. Heart rate-based training intensity and its impact on injury incidence among elite-level professional soccer players. J Strength Cond Res. 2015;29(6):1705–12.

Piggott B, Newton M, McGuigan M. The relationship between training load and incidence of injury and illness over a pre-season at an Australian football league club. J Austr Strength Cond Res. 2008;17(3):4–17.

Fricker PA, Pyne DB, Saunders PU, et al. Influence of training loads on patterns of illness in elite distance runners. Clin J Sport Med. 2005;15(4):246–52.

Veugelers KR, Young WB, Fahrner B, et al. Different methods of training load quantification and their relationship to injury and illness in elite Australian football. J Sci Med Sport. 2016;19(1):24–8.

Brink M, Visscher C, Arends S, et al. Monitoring stress and recovery: new insights for the prevention of injuries and illnesses in elite youth soccer players. Br J Sports Med. 2010;44:809–15.

Cross MJ, Williams S, Trewartha G, et al. The influence of in-season training loads on injury risk in professional rugby union. Int J Sports Physiol Perform. 2016;11(3):350–5.

Ferrari HG, Gobatto CA, Manchado-Gobatto FB. Training load, immune system, upper respiratory symptoms and performance in well-trained cyclists throughout a competitive season. Biol Sport. 2013;30(4):289.

Foster C. Monitoring training in athletes with reference to overtraining syndrome. Med Sci Sports Exerc. 1998;30(7):1164–8.

Freitas CG, Aoki MS, Franciscon CA, et al. Psychophysiological responses to overloading and tapering phases in elite young soccer players. Pediatr Exerc Sci. 2014;26(2):195–202.

Gabbett TJ. Influence of training and match intensity on injuries in rugby league. J Sports Sci. 2004;22(5):409–17.

Gabbett TJ. Reductions in pre-season training loads reduce training injury rates in rugby league players. Br J Sports Med. 2004;38(6):743–9.

Gabbett TJ, Domrow N. Relationships between training load, injury, and fitness in sub-elite collision sport athletes. J Sports Sci. 2007;25(13):1507–19.

Gabbett TJ, Jenkins DG. Relationship between training load and injury in professional rugby league players. J Sci Med Sport. 2011;14(3):204–9.

Killen NM, Gabbett TJ, Jenkins DG. Training loads and incidence of injury during the preseason in professional rugby league players. J Strength Cond Res. 2010;24(8):2079–84.

Moreira A, Arsati F, de Oliveira Lima-Arsati YB, et al. Monitoring stress tolerance and occurrences of upper respiratory illness in basketball players by means of psychometric tools and salivary biomarkers. Stress Health. 2011;27(3):166–72.

Moreira A, de Moura NR, Coutts A, et al. Monitoring internal training load and mucosal immune responses in futsal athletes. J Strength Cond Res. 2013;27(5):1253–9.

Putlur P, Foster C, Miskowski JA, et al. Alteration of immune function in women collegiate soccer players and college students. J Sports Sci Med. 2004;3(4):234.

Rogalski B, Dawson B, Heasman J, et al. Training and game loads and injury risk in elite Australian footballers. J Sci Med Sport. 2013;16(6):499–503.

Colby M, Dawson B, Heasman J, et al. Accelerometer and GPS-derived running loads and injury risk in elite Australian footballers. J Strength Cond Res. 2014;28(8):2244–52.

Nielsen RO, Cederholm P, Buist I, et al. Can GPS be used to detect deleterious progression in training volume among runners? J Strength Cond Res. 2013;27(6):1471–8.

Gleeson M, Bishop N, Oliveira M, et al. Respiratory infection risk in athletes: association with antigen-stimulated IL-10 production and salivary IgA secretion. Scand J Med Sci Sports. 2012;22(3):410–7.

Baecke J, Burema J, Frijters J. A short questionnaire for the measurement of habitual physical activity in epidemiological studies. Am J Clin Nutr. 1982;36(5):936–42.

Fahlman MM, Engels H-J. Mucosal IgA and URTI in American college football players: a year longitudinal study. Med Sci Sports Exerc. 2005;37(3):374–80.

Neville V, Gleeson M, Folland JP. Salivary IgA as a risk factor for upper respiratory infections in elite professional athletes. Med Sci Sports Exerc. 2008;40(7):1228–36.

Brink M, Visscher C, Coutts A, et al. Changes in perceived stress and recovery in overreached young elite soccer players. Scand J Med Sci Sports. 2012;22(2):285–92.

Ivarsson A, Johnson U, Podlog L. Psychological predictors of injury occurrence: a prospective investigation of professional Swedish soccer players. J Sport Rehabil. 2013;22(1):19–26.

Ivarsson A, Johnson U, Lindwall M, et al. Psychosocial stress as a predictor of injury in elite junior soccer: a latent growth curve analysis. J Sci Med Sport. 2014;17(4):366–70.

Kinchington M, Ball K, Naughton G. Monitoring of lower limb comfort and injury in elite football. J Sports Sci Med. 2010;9(4):652.

Laux P, Krumm B, Diers M, et al. Recovery-stress balance and injury risk in professional football players: a prospective study. J Sports Sci. 2015;33(20):2140–8.

Dennis J, Dawson B, Heasman J, et al. Sleep patterns and injury occurrence in elite Australian footballers. J Sci Med Sport. 2015;19(2):113–6.

Gleeson M, Hall ST, McDonald WA, et al. Salivary IgA subclasses and infection risk in elite swimmers. Immunol Cell Biol. 1999;77(4):351–5.

Gleeson M, McDonald W, Pyne D, et al. Immune status and respiratory illness for elite swimmers during a 12-week training cycle. Int J Sports Med. 2000;21(4):302–7.

Leicht C, Bishop N, Paulson TA, et al. Salivary immunoglobulin A and upper respiratory symptoms during 5 months of training in elite tetraplegic athletes. Int J Sports Physiol Perform. 2012;7:210–7.

Moreira A, Mortatti AL, Arruda AF, et al. Salivary IgA response and upper respiratory tract infection symptoms during a 21-week competitive season in young soccer players. J Strength Cond Res. 2014;28(2):467–73.

Mortatti AL, Moreira A, Aoki MS, et al. Effect of competition on salivary cortisol, immunoglobulin A, and upper respiratory tract infections in elite young soccer players. J Strength Cond Res. 2012;26(5):1396–401.

Fuller CW, Molloy MG, Bagate C, et al. Consensus statement on injury definitions and data collection procedures for studies of injuries in rugby union. Br J Sports Med. 2007;41(5):328–31.

Gabbett TJ, Ullah S, Finch CF. Identifying risk factors for contact injury in professional rugby league players: application of a frailty model for recurrent injury. J Sci Med Sport. 2012;15(6):496–504.

Barrett B, Brown R, Mundt M, et al. The Wisconsin Upper Respiratory Symptom Survey is responsive, reliable and valid. J Clin Epidemiol. 2005;58(6):609–17.

Gabbett TJ, Domrow N. Risk factors for injury in subelite rugby league players. Am J Sports Med. 2005;33(3):428–34.

Anderson L, Triplett-McBride T, Foster C, et al. Impact of training patterns on incidence of illness and injury during a women’s collegiate basketball season. J Strength Cond Res. 2003;17(4):734–8.

Banister E, Calvert T, Savage M, et al. A systems model of training for athletic performance. Aust J Sports Med. 1975;7(3):57–61.

Blanch P, Gabbett TJ. Has the athlete trained enough to return to play safely? The acute:chronic workload ratio permits clinicians to quantify a player’s risk of subsequent injury. Br J Sports Med. 2016;50(8):471–5.

Orchard J, Hoskins W. For debate: consensus injury definitions in team sports should focus on missed playing time. Clin J Sport Med. 2007;17(3):192–6.

Finch CF, Cook J. Categorising sports injuries in epidemiological studies: the subsequent injury categorisation (SIC) model to address multiple, recurrent and exacerbation of injuries. Br J Sports Med. 2014;48(17):1276–80.

Balazs L, George C, Brelin C, et al. Variation in injury incidence rate reporting: the case for standardization between American and non-American researchers. Curr Orthop Pract. 2015;26(4):395–402.

Ekstrand J, Hägglund M, Waldén M. Epidemiology of muscle injuries in professional football (soccer). Am J Sports Med. 2011;39(6):1226–32.

Häkkinen K. Neuromuscular adaptation during strength training, aging, detraining, and immobilization. Crit Rev Phys Rehabil Med. 1994;6(3):161–98.

Frijters P, Beatton T. The mystery of the U-shaped relationship between happiness and age. J Econ Behav Organ. 2012;82(2):525–42.

Shaper AG, Wannamethee G, Walker M. Alcohol and mortality in British men: explaining the U-shaped curve. Lancet. 1988;332(8623):1267–73.