Abstract

Trial-based economic evaluations are increasingly being conducted to support healthcare decision-making. When analysing trial-based economic evaluation data, different methodological challenges may be encountered, including (i) missing data, (ii) correlated costs and effects, (iii) baseline imbalances and (iv) skewness of costs and/or effects. Despite the broad range of methods available to account for these methodological challenges in effectiveness studies, they may not always be directly applicable in trial-based economic evaluations where costs and effects are analysed jointly, and more than one methodological challenge typically needs to be addressed simultaneously. The use of inappropriate methods can bias results and conclusions regarding the cost-effectiveness of healthcare interventions. Eventually, such low-quality evidence can hamper healthcare decision-making, which may in turn result in a waste of already scarce healthcare resources. Therefore, this tutorial aims to provide step-by-step guidance on how to combine appropriate statistical methods for handling the abovementioned methodological challenges using a ready-to-use R script. The theoretical background of the described methods is provided, and their application is illustrated using a simulated trial-based economic evaluation.

Various methodological challenges may exist when conducting trial-based economic evaluations, including missing data, correlated costs and effects, baseline imbalances, and skewness of costs and/or effects.

Using inappropriate methods to handle the aforementioned methodological challenges can bias results and mislead healthcare decision-making.

This tutorial provides step-by-step guidance on how to combine appropriate statistical methods for handling the aforementioned methodological challenges in trial-based economic evaluations using a ready-to-use R script.

1 Introduction

As healthcare costs are increasing, healthcare decision-makers around the world face tough decisions regarding the allocation of already scarce healthcare resources [1]. To inform such allocation decisions, healthcare decision-makers more and more call upon researchers to demonstrate that healthcare interventions are not only effective but also cost-effective. In other words, researchers are asked to conduct economic evaluations to assess whether a healthcare intervention provides ‘good value for money’ compared with other interventions that could be reimbursed with the same resources. As a consequence, many grant organizations (e.g. the National Institute for Health and Care Research (NIHR) in the United Kingdom and ZonMw in the Netherlands) require that economic evaluations are conducted along with clinical studies that they fund [2, 3].

Economic evaluations can either be conducted on the basis of decision-analytic models or alongside clinical trials. Model-based economic evaluations are conducted on the basis of multiple sources of information from the literature (e.g. clinical trials, cohorts, systematic reviews and meta-analyses). In this tutorial, we focus on trial-based economic evaluations, which are economic evaluations conducted alongside randomized controlled trials (RCTs), also referred to as ‘piggyback’ studies [4,5,6]. Within such studies, the additional costs and effects of a new healthcare intervention are compared with those of a control condition or usual care, which can also be an active intervention [4, 5]. An important advantage of trial-based economic evaluations is that random allocation increases the internal validity of results, which allows for making inferences about the cost-effectiveness of healthcare interventions [7,8,9]. To increase the external validity of results, pragmatic trial-based economic evaluations are preferably conducted, meaning that they resemble daily practice as much as possible. Moreover, the marginal cost of conducting an economic evaluation alongside an already funded clinical trial is relatively low, thereby increasing research efficiency.

Several studies indicate, however, that the methodological quality of published trial-based economic evaluations is generally suboptimal [9,10,11,12,13]. Poor methodological quality may lead to biased results, wrong conclusions and, eventually, a waste of already scarce healthcare resources. Therefore, improvement in the statistical analysis of trial-based economic evaluations is much needed. When analysing trial-based economic evaluation data, different methodological challenges may be encountered, including (i) missing data [14, 15], (ii) correlated costs and effects [16, 17], (iii) baseline imbalances [18,19,20], and (iv) skewness of costs and/or effects [21, 22]. Although well-established statistical methods are available in the literature for handling these challenges in effectiveness studies, they may not always be directly applicable in trial-based economic evaluations in which costs and effects are analysed jointly, and more than one methodological challenge typically needs to be addressed simultaneously.

Therefore, this tutorial aims to provide step-by-step guidance on how to combine statistical methods available in the literature to handle the abovementioned methodological challenges in the context of trial-based economic evaluations using a ready-to-use R script. It is presented in two parts. In Part 1, the reasoning behind the choices made regarding the statistical methods is explained. In Part 2, step-by-step guidance is provided to illustrate how to implement the discussed statistical methods in R using a simulated trial-based economic evaluation.

2 Part 1: Statistical Methods to Handle Methodological Challenges

2.1 Missing Data

Missing data are oftentimes unavoidable in clinical research and are a particular concern in trial-based economic evaluations [15]. This is because total costs and quality-adjusted life years (QALYs) are typically the sum of numerous cost components and utility values, respectively [15]. Hence, one missing cost component or utility value will already result in an incomplete case. A distinct type of missing data is censoring, which occurs when time-to-event outcomes, such as survival time, are only partially observed [23]; for example, we know that a participant survived until 9 months follow-up, but due to missing data at 12 months, we do not know whether they died between 9 months and 12 months.

Simply excluding incomplete cases from the analysis can produce invalid cost-effect estimates if excluded cases are systematically different from the included ones [15, 24]. Naïve methods, such as mean imputation of missing values and last observation carried forward, are discouraged because they do not account for the uncertainty in the imputed observations [15]. More robust methods for handling missing and/or censored data are multiple imputation (MI), inverse probability weighting (IPW), likelihood-based models and Bayesian models [25]. Of these, MI is most frequently used [25, 26] and is a valid method in economic evaluations when the missingness of data is related to observed data (i.e. missing at random, MAR) [27,28,29].

In this tutorial, we opted for MI for a number of reasons. First, MI allows for a separate specification of the imputation and analysis models [14]. Such a separate specification avoids the inclusion of unnecessary variables in both models, thereby providing more precise imputed values and cost-effectiveness estimates [30]. Another reason is that MI allows for missing data to be imputed at a disaggregate level (e.g. resources consumed, utility value), avoiding loss of information that may occur when data are imputed and/or analysed at the total cost and QALY level [14]. To illustrate, MI allows for the inclusion of partially missing cases in the analysis model. When using IPW, by contrast, partially observed cases are discarded after the weighting, which leads to a loss of information and power [24]. For this reason, MI is generally more efficient than IPW [24]. Moreover, although MI has been shown to be unnecessary when using likelihood-based models for analysing effects [31], it has been shown to produce more accurate estimates when analysing costs [32]. A detailed description of the MI procedure is available in ESM_1.

2.2 Skewness of Costs and Effects

Cost data are typically heavily right-skewed because costs are naturally bounded by zero and there are typically relatively few patients with (very) high costs. QALY values can also be skewed; for example, when there are relatively few patients with a (very) low health-related quality of life. Consequently, the distributional assumptions of standard parametric tests are not met in smaller samples, while in larger samples statistical efficiency may be negatively impacted. In a recent scoping review, various methods were identified as appropriate for handling skewed cost and/or effect data, including non-parametric bootstrapping, generalized linear models (GLM), hurdle models and Bayesian models with a gamma distribution [25]. In this tutorial, we opted for non-parametric bootstrapping as it allows for comparing arithmetic means without making any distributional assumptions while accounting for the correlation between costs and effects [33, 34]. Advantages of bootstrapping are that it retains the correlation between costs and effects even when separate models are used for analysing costs and effects and it can be nested within a MI procedure to get a valid estimate of uncertainty around cost-effectiveness estimates [33, 35]. A detailed description of the non-parametric bootstrapping procedure is available in ESM_1.
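The idea of resampling participants, so that each participant's costs and effects stay together, can be sketched in a few lines of base R. The snippet below is a minimal illustration on simulated data and is not part of the tutorial's R script; the variable names (cost, effect, boot_diffs) and the simulation settings are assumptions made for this example only.

```r
set.seed(2023)
n   <- 200
dat <- data.frame(Tr = rep(0:1, each = n / 2))
dat$effect <- rbeta(n, 8, 2)                       # bounded, left-skewed QALY-like values
dat$cost   <- rgamma(n, shape = 1.5, scale = 600) +
  1000 * (1 - dat$effect)                          # right-skewed costs, related to effects

# Difference in arithmetic means (intervention minus control) for costs and effects
mean_diffs <- function(d) {
  c(cost   = mean(d$cost[d$Tr == 1])   - mean(d$cost[d$Tr == 0]),
    effect = mean(d$effect[d$Tr == 1]) - mean(d$effect[d$Tr == 0]))
}

# Resample whole rows (participants) with replacement, so cost-effect pairs are kept intact
B <- 2000
boot_diffs <- t(replicate(B, mean_diffs(dat[sample(n, replace = TRUE), ])))

colMeans(boot_diffs)                                  # bootstrap means of both differences
cor(boot_diffs[, "cost"], boot_diffs[, "effect"])     # correlation retained across replicates
```

Because rows rather than single columns are resampled, the joint distribution of the two differences reflects the correlation between costs and effects in the data, without any distributional assumption.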

2.3 Correlated Costs and Effects

Costs and effects are typically correlated, because, depending on the disease and/or intervention under study, better health outcomes can be associated with higher costs, or vice versa. In a recent scoping review, various methods were identified as appropriate for handling this correlation between costs and effects, including seemingly unrelated regressions (SUR), bootstrapping costs and effects in pairs, and Bayesian bivariate models [25]. We opted for SUR combined with bootstrapping costs and effects in pairs because both account for the correlation between costs and effects, and bootstrapping additionally provides a valid estimate of uncertainty around cost-effect estimates [33, 35]. The two SUR regression equations (i.e. one for costs and one for effects) can be run simultaneously in combination with bootstrapping, which makes the analysis more efficient than one in which two separate regression equations need to be run [e.g. when using ordinary least squares (OLS) regressions or GLMs] [16, 36]. Other options to analyse cost-effect data include combining SUR and IPW, which would account for the correlation between costs and effects and for missing data and/or censoring simultaneously without the need for bootstrapping [36]. In that case, however, the uncertainty around cost-effect estimates would not be considered because bootstrapping is not applied [33, 35], and information and/or power would be lost because MI is not applied [24]. This tutorial, therefore, focuses on applying SUR in combination with bootstrapping and MI to analyse cost-effect data.
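Written out, a two-equation SUR model of the kind described above could take the following form. The notation is an assumption chosen to match the coefficients \({\beta }_{1c}\) and \({\beta }_{1e}\) used in Part 2; \(C_i\) and \(E_i\) denote the total costs and effects of participant \(i\), \(Tr_i\) the treatment indicator, and \(x_{ci}\) and \(x_{ei}\) the (possibly different) sets of additional covariates in each equation.

\[
\begin{aligned}
C_i &= \beta_{0c} + \beta_{1c}\,Tr_i + \gamma_c^{\top} x_{ci} + \varepsilon_{ci} \\
E_i &= \beta_{0e} + \beta_{1e}\,Tr_i + \gamma_e^{\top} x_{ei} + \varepsilon_{ei}
\end{aligned}
\qquad
\operatorname{Cov}\begin{pmatrix} \varepsilon_{ci} \\ \varepsilon_{ei} \end{pmatrix}
= \begin{pmatrix} \sigma_c^{2} & \sigma_{ce} \\ \sigma_{ce} & \sigma_e^{2} \end{pmatrix}
\]

The two equations are linked only through the error covariance \(\sigma_{ce}\); allowing \(\sigma_{ce} \neq 0\) is what lets SUR account for the correlation between costs and effects.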

2.4 Baseline Imbalances

In economic evaluations alongside RCTs, the random allocation of participants across study groups theoretically ensures that patient characteristics are similar in both groups. As RCTs do not have infinite samples, however, patient characteristics might still be imbalanced. If these patient characteristics are associated with costs and/or effects, trial-based economic evaluation results may be imprecise and biased if the characteristics are not accounted for in the analysis model. In a recent scoping review, various methods were identified as appropriate for handling such baseline imbalances, including regression-based adjustment, propensity score adjustment and matching [25]. Of them, we opted for regression-based adjustment, as it is easiest to combine with SUR, MI and non-parametric bootstrapping.

3 Part 2: Implementation in R: An Illustrative Example

In this section, we demonstrate how to implement the statistical methods described above in R. The steps of the tutorial are illustrated using data from a simulated hypothetical trial-based economic evaluation. To run this illustrative example on your computer, please follow the steps provided in ESM_2. All materials used in this tutorial can be downloaded from https://github.com/angelajben/R-Tutorial.

3.1 Trial-Based Economic Evaluation Data

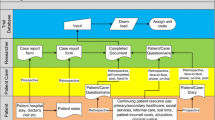

A hypothetical trial-based economic evaluation was simulated using RStudio (dataset.xlsx can be found in ESM_3). The dataset.xlsx includes 200 participants (106 in the control group, 94 in the intervention group), and several variables at baseline and follow-up. Baseline variables include age, sex, utilities (E) and costs (C). Treatment allocation is represented by variable Tr (Tr = 0 if a participant was randomized to the control group and Tr = 1 if randomized to the intervention group). Follow-up variables include utilities and costs at four time points (3 months, 6 months, 9 months and 12 months). We simulated missing values according to the MAR mechanism [15], that is, missing values were introduced into the follow-up variables conditionally on the baseline variables age and sex. An overview of the variables included in the dataset.xlsx is provided in Table 1.

3.2 Multiple Imputation Procedure

Prior to imputing missing values, a descriptive analysis of missing data is usually carried out to identify whether the proportion of missing data is evenly distributed between treatment groups, and whether the missingness of data is associated with baseline variables and/or observed outcomes [14]. On the basis of this information, an imputation model is specified. Detailed information on how to specify an imputation model can be found elsewhere [14, 37, 38]. For this illustrative example, we assumed that a missing data analysis was performed, indicating that missingness in the outcomes was related to two baseline variables, namely age and sex.
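A descriptive missing data analysis of this kind might look as follows in base R. This is a minimal sketch on simulated data and is not taken from the tutorial's script: the follow-up variable name C_M12 and the simulation settings are hypothetical, while Tr, age and sex follow the variable names used in the text.

```r
set.seed(11)
n   <- 200
dat <- data.frame(
  Tr  = rbinom(n, 1, 0.5),
  age = round(rnorm(n, 55, 10)),
  sex = rbinom(n, 1, 0.5)
)
dat$C_M12 <- rgamma(n, shape = 2, scale = 500)     # hypothetical 12-month costs

# MAR mechanism: the probability of missingness depends on observed age and sex only
p_miss <- plogis(-3 + 0.03 * dat$age + 0.5 * dat$sex)
dat$C_M12[runif(n) < p_miss] <- NA

# (1) Is the proportion of missing data similar in both treatment groups?
dat$miss    <- as.integer(is.na(dat$C_M12))
prop_by_arm <- tapply(dat$miss, dat$Tr, mean)
prop_by_arm

# (2) Is missingness associated with baseline variables?
fit_miss <- glm(miss ~ Tr + age + sex, family = binomial, data = dat)
round(summary(fit_miss)$coefficients, 3)
```

Variables that predict missingness in such a logistic regression are candidates for inclusion in the imputation model.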

For the implementation of the MI procedure, the mice function provided by the mice R package [39] is used (Box 1). The first step is to split the dataset by Tr (#1) to ensure that data are imputed separately by treatment group, as recommended by Faria et al. [14]. Subsequently, a predictor matrix, including all variables of the dataset, is automatically generated by the make.predictorMatrix function and stored in the predMat object (#2). In the predictor matrix, variables in the columns are used to impute missing values of the variables in the rows [38, 39]. A 0 indicates that a certain predictor is not included in the imputation model, while a 1 indicates that it is. In the illustrative example, we attributed zeros to Tr (predMat[,'Tr'] <- 0) because the MI procedure is already stratified by treatment group (#2). Additional information on how to customize an imputation model can be found in Heymans and Eekhout [38].

Box 1 (#3) also shows how to implement the imputation procedure in R. Using the mice function, the predictive mean matching (PMM) procedure (i.e. method = "pmm"), and the predictor matrix (i.e. predictorMatrix = predMat), five imputed datasets are generated (i.e. m = 5). A seed, that is, a specific starting point for the procedure, is specified to ensure that the exact MI procedure can be replicated. The default number of complete donor observations in the mice function is used (i.e. 5) [37].

The rbind function is then used to combine the imputed datasets, after which the complete function stacks all imputed datasets under each other in a data frame format, which is then stored in the impdat object (#4). We also extract the number of imputations, because this information is required at a later stage of the analysis process to pool the results over the imputed datasets using Rubin’s rules (#5).
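The steps described so far (#1 to #5) can be sketched as follows. This is an illustrative reconstruction on simulated data rather than a copy of Box 1: the follow-up variable names E_M3 and C_M3, the sample size and the seed are assumptions, and only two follow-up variables are imputed to keep the example short.

```r
library(mice)

set.seed(1)
n   <- 100
dat <- data.frame(
  Tr  = rep(0:1, each = n / 2),
  age = round(rnorm(n, 55, 10)),
  sex = rbinom(n, 1, 0.5),
  E   = runif(n, 0.4, 0.9),                    # baseline utility
  C   = rgamma(n, shape = 2, scale = 500)      # baseline costs
)
dat$E_M3 <- pmin(1, dat$E + rnorm(n, 0.05, 0.05))   # hypothetical 3-month utility
dat$C_M3 <- rgamma(n, shape = 2, scale = 400)       # hypothetical 3-month costs
dat$E_M3[sample(n, 15)] <- NA
dat$C_M3[sample(n, 15)] <- NA

# 1: split by treatment group so that each group is imputed separately
by_arm <- split(dat, dat$Tr)

# 2: predictor matrix; Tr is switched off because imputation is stratified by Tr
predMat <- make.predictorMatrix(dat)
predMat[, "Tr"] <- 0

# 3: predictive mean matching, five imputed datasets, fixed seed for reproducibility
imp0 <- mice(by_arm[["0"]], m = 5, method = "pmm",
             predictorMatrix = predMat, seed = 1234, printFlag = FALSE)
imp1 <- mice(by_arm[["1"]], m = 5, method = "pmm",
             predictorMatrix = predMat, seed = 1234, printFlag = FALSE)

# 4: combine both groups and stack the imputed datasets in long format
imp    <- rbind(imp0, imp1)
impdat <- complete(imp, action = "long", include = FALSE)

# 5: number of imputations, needed later for Rubin's rules
M <- imp$m
```

The stacked data frame contains an .imp column that identifies the imputed dataset to which each row belongs, which is what allows the datasets to be separated again in later steps.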

Total costs (Tcosts) are then calculated as the sum of the costs per time point using all imputed data stored in the impdat object (#6). Similarly, QALYs are calculated using the linear interpolation method (#7) [40], meaning that the mean utility value of two consecutive time points is multiplied by the time between these two time points expressed in years, after which the results of all periods are summed to calculate total QALYs [19, 40]. Lastly, all imputed datasets are stored separately in a list (#8) to allow for simultaneously applying the non-parametric bootstrap procedure and SUR analysis to each imputed dataset in the next steps.
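As a worked example of these two calculations, consider two hypothetical participants with utilities measured at baseline and at 3, 6, 9 and 12 months. The follow-up column names (E_M3, C_M3, and so on) are assumptions made for this sketch; baseline costs are treated as a covariate and are therefore not included in total costs.

```r
dat <- data.frame(
  E     = c(0.60, 0.70),   # baseline utility
  E_M3  = c(0.65, 0.70),
  E_M6  = c(0.70, 0.60),
  E_M9  = c(0.75, 0.65),
  E_M12 = c(0.80, 0.70),
  C_M3  = c(500, 200),
  C_M6  = c(300,   0),
  C_M9  = c(100, 150),
  C_M12 = c(100,  50)
)

# Total costs: sum of the costs at all follow-up time points
dat$Tcosts <- rowSums(dat[, c("C_M3", "C_M6", "C_M9", "C_M12")])

# QALYs: mean utility of two consecutive time points times the interval length in years
util  <- as.matrix(dat[, c("E", "E_M3", "E_M6", "E_M9", "E_M12")])
times <- c(0, 3, 6, 9, 12) / 12
dat$QALY <- as.vector(((util[, -ncol(util)] + util[, -1]) / 2) %*% diff(times))

dat[, c("Tcosts", "QALY")]
# Tcosts: 1000 and 400; QALY: 0.70 and 0.6625
```

For the first participant, the four interval means are 0.625, 0.675, 0.725 and 0.775; each is multiplied by 0.25 years, giving 0.70 QALYs.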

3.3 Cost-effectiveness Analysis

For the implementation of the non-parametric bootstrapping procedure (Box 2), we use the boot function provided by the boot R Package [41]. The boot function is used to resample the data, after which a SUR model is fitted on each bootstrap sample using the systemfit function [42]. In doing so, we first need to define the function fsur (#9). In the example, Tcosts are regressed upon Tr, C, age and sex (r1), while QALYs are regressed upon Tr, E, age and sex (r2). Subsequently, the boot function (#10) is used to fit the SUR model on each bootstrap sample and to return the estimated adjusted regression coefficients of the independent variable Tr, namely \({\beta }_{1c}\) and \({\beta }_{1e}\). This is done for each of the five imputed datasets stored in the list impdta. The non-parametric bootstrapping procedure is set to result in 5000 bootstrap samples per imputed dataset, after which the statistics of interest estimated per bootstrap sample are stored in the object bootce (#10).
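The combination of boot and systemfit described above could be sketched as follows. The code is an illustration under stated assumptions and not a copy of Box 2: the data are simulated, two simulated datasets stand in for the five imputed datasets, the equation labels costreg and effectreg are chosen for this example, and only 200 bootstrap samples are drawn to keep the run time short (the tutorial uses 5000).

```r
library(boot)
library(systemfit)

set.seed(42)
simulate_trial <- function(n = 200) {          # hypothetical stand-in for an imputed dataset
  d <- data.frame(
    Tr  = rep(0:1, length.out = n),
    age = round(rnorm(n, 55, 10)),
    sex = rbinom(n, 1, 0.5),
    E   = runif(n, 0.4, 0.9),
    C   = rgamma(n, shape = 2, scale = 400)
  )
  d$Tcosts <- 500 + 0.9 * d$C + 250 * d$Tr + rgamma(n, shape = 2, scale = 300)
  d$QALY   <- 0.1 + 0.8 * d$E + 0.03 * d$Tr + rnorm(n, 0, 0.05)
  d
}
imp_list <- list(simulate_trial(), simulate_trial())

# Statistic for boot(): fit the SUR model on the resampled rows and return the
# adjusted coefficients of Tr in the cost and effect equations
fsur <- function(x, i) {
  dataset <- x[i, ]
  r1 <- Tcosts ~ Tr + C + age + sex
  r2 <- QALY   ~ Tr + E + age + sex
  fitsur <- systemfit(list(costreg = r1, effectreg = r2),
                      method = "SUR", data = dataset)
  betas <- coef(fitsur)
  c(betas[["costreg_Tr"]], betas[["effectreg_Tr"]])
}

# Bootstrap each dataset separately
bootce <- lapply(imp_list, function(d) boot(data = d, statistic = fsur, R = 200))

bootce[[1]]$t0        # estimates in the first dataset before bootstrapping
head(bootce[[1]]$t)   # bootstrapped cost (column 1) and effect (column 2) differences
```

In each element of the resulting list, t0 holds the coefficients estimated on the original (non-resampled) dataset and t holds one row of coefficients per bootstrap sample.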

In Box 3, we illustrate how to extract statistics of interest stored in the bootce object. The lapply function is used to extract \({\beta }_{1c}\) (cost_diff) and \({\beta }_{1e}\) (effect_diff) from each of the five imputed datasets before bootstrapping, and subsequently store these coefficients in a new list named imputed (#11). We also extract the 5000 bootstrapped regression coefficients for costs (bootcost_diff) and effects (booteffect_diff) from each of the five imputed datasets and store these in a new list named postboot (#12).

In Box 4, we illustrate how Rubin’s rules are applied to pool \({\beta }_{1c}\) and \({\beta }_{1e}\) over the imputed datasets. The pooled \({\beta }_{1c}\) and \({\beta }_{1e}\) are calculated as the mean of \({\beta }_{1c}\) and \({\beta }_{1e}\) in each imputed dataset before bootstrapping (#13). The incremental cost-effectiveness ratio (ICER) is then calculated by dividing the pooled \({\beta }_{1c}\) by the pooled \({\beta }_{1e}\).
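With made-up numbers, the pooling of the point estimates and the calculation of the ICER reduce to the following. The five values per outcome are hypothetical and serve only to make the arithmetic concrete.

```r
# Hypothetical Tr coefficients estimated in M = 5 imputed datasets
cost_diff   <- c(250, 270, 240, 260, 255)
effect_diff <- c(0.030, 0.028, 0.032, 0.031, 0.029)

# Rubin's rules for point estimates: the mean over the imputed datasets
pooled_cost   <- mean(cost_diff)      # 255
pooled_effect <- mean(effect_diff)    # 0.03

# ICER: pooled cost difference divided by pooled effect difference
ICER <- pooled_cost / pooled_effect   # 8500 per QALY gained
```

Note that the ICER is computed from the pooled differences, not as the mean of the ICERs of the individual imputed datasets.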

Subsequently, a covariance matrix per imputed dataset is computed, including variances and covariances between bootstrapped samples for the parameters of interest stored in the postboot list (#14). The covariance matrix is stored per imputed dataset in a list named cov. Variances stored in cov are pooled using Rubin’s rules (#15 and #16). The pooled covariance is estimated on the basis of the within- and between-imputation variances and stored in a matrix named cov_pooled (#17). Pooled variances stored in cov_pooled are then used to compute the pooled lower and upper limits of the 95% confidence intervals (CIs) for costs and effects (#18). The fraction of missing information (FMI) for costs and effects is also calculated using their respective within- and between-imputation variances and used to estimate the loss of efficiency (#19). In the illustrative example, the losses of efficiency for both costs and effects are below 5%.
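The pooling of the covariance matrices can be illustrated with base R as follows. This is a sketch under assumptions and may differ in detail from Box 4: the inputs are simulated, the confidence intervals use the normal quantile, the FMI is computed with the large-sample approximation \((1 + 1/M)B/T\), and the loss of efficiency is taken as one minus Rubin's relative efficiency \(1/(1 + FMI/M)\).

```r
set.seed(7)
M <- 5

# Hypothetical point estimates per imputed dataset (before bootstrapping)
imputed <- cbind(cost_diff   = c(250, 270, 240, 260, 255),
                 effect_diff = c(0.030, 0.028, 0.032, 0.031, 0.029))

# Hypothetical bootstrapped differences: one 1000 x 2 matrix per imputed dataset
postboot <- lapply(seq_len(M), function(m) {
  cbind(bootcost_diff   = rnorm(1000, imputed[m, "cost_diff"], 120),
        booteffect_diff = rnorm(1000, imputed[m, "effect_diff"], 0.015))
})

# Within-imputation covariance: mean of the bootstrap covariance matrices
cov_list   <- lapply(postboot, cov)
cov_within <- Reduce(`+`, cov_list) / M

# Between-imputation covariance: covariance of the M point estimates
cov_between <- cov(imputed)

# Rubin's rules: total = within + (1 + 1/M) * between
cov_pooled <- cov_within + (1 + 1 / M) * cov_between

# Normal-based 95% confidence intervals around the pooled estimates
pooled <- colMeans(imputed)
se     <- sqrt(diag(cov_pooled))
ci     <- cbind(lower = pooled - qnorm(0.975) * se,
                upper = pooled + qnorm(0.975) * se)

# Fraction of missing information and loss of efficiency (approximations, see lead-in)
fmi  <- (1 + 1 / M) * diag(cov_between) / diag(cov_pooled)
loss <- 1 - 1 / (1 + fmi / M)
```

The off-diagonal element of the pooled matrix is the pooled covariance between the cost and effect differences, which is needed when the uncertainty around the net benefit is computed.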

3.4 Cost-effectiveness Plane

The cost-effectiveness plane (CE-plane) shows the statistical uncertainty surrounding the ICER. Box 5 shows how the CE-plane is plotted using the ggplot function provided by the tidyverse R package (#20) [43]. The bootstrapped cost differences (\({\beta }_{1c}\)) are plotted on the y-axis and the bootstrapped effect differences (\({\beta }_{1e}\)) on the x-axis, that is, the blue dots in the plot (Fig. 1). The point estimate representing the pooled cost and effect differences (\({\beta }_{1c}\) and \({\beta }_{1e}\)) is shown as a red dot (i.e. the ICER) (Fig. 1). The axes of the CE-plane can be customized by changing the values specified in the arguments of geom_vline, geom_hline, scale_x_continuous, and scale_y_continuous.
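A CE-plane of this kind could be drawn along the following lines. The sketch uses simulated bootstrap replicates and a hypothetical pooled point estimate, and loads ggplot2 directly (it is part of the tidyverse); axis labels and styling are illustrative choices rather than those of Box 5.

```r
library(ggplot2)

set.seed(3)
# Hypothetical bootstrapped differences and pooled point estimate
boot_df <- data.frame(bootcost_diff   = rnorm(1000, 250, 120),
                      booteffect_diff = rnorm(1000, 0.03, 0.015))
point   <- data.frame(cost_diff = 255, effect_diff = 0.03)

ce_plane <- ggplot(boot_df, aes(x = booteffect_diff, y = bootcost_diff)) +
  geom_point(colour = "blue", alpha = 0.3, size = 1) +          # bootstrapped pairs
  geom_point(data = point, aes(x = effect_diff, y = cost_diff),
             colour = "red", size = 3) +                        # pooled point estimate
  geom_hline(yintercept = 0) +                                  # horizontal axis through zero
  geom_vline(xintercept = 0) +                                  # vertical axis through zero
  scale_x_continuous(name = "Difference in QALYs") +
  scale_y_continuous(name = "Difference in costs") +
  theme_minimal()

ce_plane
```

The share of dots in each quadrant indicates how often the intervention was more or less costly and more or less effective than control across the bootstrap samples.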

3.5 Cost-effectiveness Acceptability Curve (CEAC)

In Box 6, the net benefit approach is used to estimate the probability of an intervention being cost-effective given a willingness-to-pay (WTP) per additional QALY gained through the intervention [44,45,46]. In the example, WTPs are specified as a sequence from €0 to €80,000 per additional QALY gained, with increments of €1000 (#21).

The net benefit (NB) is defined as \(NB=\lambda \times {\beta }_{1e}-{\beta }_{1c}\), where λ is the WTP and \({\beta }_{1e}\), \({\beta }_{1c}\) are the differences in effects and costs between the intervention and control, respectively. We can then use the pooled variance stored in the covariance matrix (#14) to estimate the probability of the NB being positive conditional on \(\lambda\) using the formula of Löthgren and Zethraeus [47]. Probabilities of cost-effectiveness for each \(\lambda\) stored in CEAC are then used to plot the cost-effectiveness acceptability curve (#22) (Fig. 2). Of note, an NB > 0 indicates that the intervention is cost-effective at the given WTP.
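Under a normal approximation, the probability that the NB is positive at a given \(\lambda\) follows from the pooled differences and their pooled covariance matrix. The sketch below illustrates this calculation with hypothetical inputs; it assumes a parametric formula of the form \(\Phi \left(NB/\sqrt{Var(NB)}\right)\) with \(Var(NB)={\lambda }^{2}{\sigma }_{e}^{2}+{\sigma }_{c}^{2}-2\lambda {\sigma }_{ce}\), and is not a copy of Box 6.

```r
# Hypothetical pooled estimates and pooled covariance matrix (costs first, effects second)
pooled_cost   <- 255
pooled_effect <- 0.03
cov_pooled <- matrix(c(15000, 0.5,
                       0.5,   0.0003), nrow = 2, byrow = TRUE,
                     dimnames = list(c("cost", "effect"), c("cost", "effect")))

# Willingness-to-pay values from 0 to 80,000 in steps of 1000
wtp <- seq(0, 80000, by = 1000)

# Mean and variance of the net benefit at each willingness-to-pay value
nb_mean <- wtp * pooled_effect - pooled_cost
nb_var  <- wtp^2 * cov_pooled["effect", "effect"] +
  cov_pooled["cost", "cost"] -
  2 * wtp * cov_pooled["cost", "effect"]

# Probability that the net benefit is positive
CEAC <- data.frame(wtp = wtp, prob = pnorm(nb_mean / sqrt(nb_var)))
head(CEAC)
```

Plotting prob against wtp (for example with plot(CEAC$wtp, CEAC$prob, type = "l")) gives the acceptability curve; the probability crosses 0.5 where \(\lambda\) equals the ICER, here between 8000 and 9000.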

4 Discussion

This tutorial illustrates how to implement available statistical methods to simultaneously handle four methodological challenges that are typically encountered in trial-based economic evaluations using a step-by-step R script. We used MI combined with non-parametric bootstrapping and an adjusted SUR to account for missing data, correlated costs and effects, baseline imbalances, and skewed costs and/or effects. Although we expect that the provided R script is suitable for use in most trial-based cost-effectiveness analyses, there may be specific methodological challenges that need to be added. We will discuss some of these extensions here.

4.1 Linear Mixed Model

The R script can be adapted to implement other statistical models, for example, a linear mixed model (LMM) [48]. This can be done by replacing the SUR equations by LMM equations using R packages such as lme4 [49] or nlme [50]. LMMs are recommended in situations in which observations are clustered, such as in cluster-randomized clinical trials, where patients are randomized at the cluster level (e.g. a hospital) rather than at the individual level [51, 52]. Unlike SUR, however, the LMM must be implemented separately for costs and effects [53]. As a consequence, it does not explicitly account for the correlation between costs and effects when estimating \({\beta }_{1c}\) and \({\beta }_{1e}\), while SUR does. However, if the LMM is combined with bootstrapping costs and effects in pairs, the correlation is maintained. LMMs can also be used to analyse cost and effect data longitudinally, with time (i.e. measurement points) clustered within individuals. An advantage of such an approach is that it allows for the estimation of cost and effect differences per measurement point, and hence total costs and QALYs do not need to be estimated prior to the analyses. This effectively reduces the amount of missing data, since partially observed costs and QALYs over the course of the study’s follow-up can still be used [53].
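For a cluster-randomized design, the cost equation could, for instance, be fitted with a random intercept per cluster. The following sketch uses nlme on simulated data; the cluster variable, the sample sizes and the covariate set are assumptions, and the effect equation would be fitted separately in the same way.

```r
library(nlme)

set.seed(5)
n_clusters <- 20
n_per      <- 15
dat <- data.frame(cluster = factor(rep(seq_len(n_clusters), each = n_per)))

# Treatment is allocated at cluster level (alternating here for simplicity)
dat$Tr <- rep(rep(0:1, length.out = n_clusters), each = n_per)
dat$C  <- rgamma(nrow(dat), shape = 2, scale = 400)      # baseline costs

# Total costs with a cluster-level random effect
u <- rnorm(n_clusters, 0, 150)
dat$Tcosts <- 500 + 0.9 * dat$C + 250 * dat$Tr +
  u[as.integer(dat$cluster)] + rnorm(nrow(dat), 0, 300)

# Linear mixed model with a random intercept for cluster
fit_cost <- lme(Tcosts ~ Tr + C, random = ~ 1 | cluster, data = dat)
beta_1c  <- fixef(fit_cost)[["Tr"]]    # adjusted cost difference
beta_1c
```

To retain the correlation between costs and effects and the clustered structure, such a model would typically be placed inside a bootstrap that resamples clusters rather than individual participants.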

4.2 Multi-arm Trials

The R script can also be adapted for situations in which more than two study arms are being compared. Suppose that the variable Tr has three different treatment arms (0 = A, 1 = B, 2 = C), then three pairwise comparisons are required (i.e. A versus B, A versus C, and B versus C) [54, 55]. These comparisons can be implemented in the R script by generating dummy variables for the treatment variable and adding those to the SUR regressions or to the LMM as covariates.
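Generating the dummy variables and deriving the three pairwise comparisons might look as follows. For brevity, this sketch uses a single unadjusted lm on simulated cost data in place of the SUR equations; the arm labels follow the text, and all other names and values are hypothetical.

```r
set.seed(9)
n   <- 150
dat <- data.frame(Tr = sample(0:2, n, replace = TRUE))
dat$arm    <- factor(dat$Tr, levels = 0:2, labels = c("A", "B", "C"))
dat$Tcosts <- 1000 + 200 * (dat$arm == "B") + 350 * (dat$arm == "C") +
  rgamma(n, shape = 2, scale = 300)

# Dummy variables for arms B and C, with A as the reference category
dummies <- model.matrix(~ arm, data = dat)[, -1]
head(dummies)

# Using the factor in a model formula creates the same dummies internally
fit <- lm(Tcosts ~ arm, data = dat)
b   <- coef(fit)

# Pairwise cost differences; C versus B is the difference of the two dummy coefficients
contrasts_cost <- c(B_vs_A = b[["armB"]],
                    C_vs_A = b[["armC"]],
                    C_vs_B = b[["armC"]] - b[["armB"]])
contrasts_cost
```

Alternatively, the reference category can be changed (e.g. with relevel) and the model refitted to obtain the remaining comparison directly.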

4.3 Limitations

4.3.1 Pooling Estimates

In this tutorial, MI is performed first, after which the M complete datasets are bootstrapped (i.e. bootstrapping nested in MI) to handle skewed and missing data simultaneously [35, 56]. Alternative approaches are bootstrapping first and then performing MI (MI nested in bootstrapping) and bootstrapping first followed by single imputation (SI nested in bootstrapping). However, it is still not clear in the literature what the best approach is to combine both statistical methods and pool the results to produce valid confidence intervals [35, 56].

A second limitation is that we used a normal-based approach to estimate pooled 95% confidence intervals. We judged this to be sound as the sampling distributions of the bootstrapped parameter estimates are approximately normal in our case. However, this might not always be the case, particularly with very small datasets. Alternative options are the percentile method [56] and the bias-corrected and accelerated (BCa) percentile method, which do not rely on the normality assumption [57]. However, the main drawback of these approaches is that it is not clear how the variance between imputed datasets should be pooled.
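The difference between a normal-based and a percentile interval can be seen with a deliberately skewed set of bootstrap replicates. The values below are simulated for a single dataset and are hypothetical; the sketch does not address how percentile intervals should be pooled over imputed datasets.

```r
set.seed(21)
# Hypothetical, right-skewed bootstrap distribution of a cost difference
boot_cost_diff <- rgamma(5000, shape = 4, scale = 60) - 20

# Normal-based interval: symmetric around the mean of the replicates
ci_normal <- mean(boot_cost_diff) + c(-1, 1) * qnorm(0.975) * sd(boot_cost_diff)

# Percentile interval: 2.5th and 97.5th percentiles of the replicates
ci_percentile <- quantile(boot_cost_diff, probs = c(0.025, 0.975))

rbind(normal = ci_normal, percentile = unname(ci_percentile))
```

When the bootstrap distribution is close to normal, the two intervals nearly coincide; the more skewed it is, the more the percentile interval shifts towards the long tail.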

4.3.2 Bayesian Framework

Literature suggests that Bayesian models might be even more flexible than the frequentist methods presented in this R tutorial [58]. For example, a Bayesian bivariate mixed model can account for missing data, correlated costs and effects, baseline imbalances and skewed costs and/or effects, and can more easily be extended to also account for multilevel and longitudinal data than frequentist models [59]. It is important to realize, however, that when researchers rely on the same underlying model of the data, a Bayesian analysis with non-informative priors results in similar estimates as a frequentist analysis. Moreover, the use of Bayesian methods requires a good understanding of probability theory and statistical modelling, since models must often be specifically tailored to the analysis at hand and cannot be implemented in standard statistical software. The R script provided in this tutorial cannot be adapted to include the Bayesian framework. For this, the reader is referred elsewhere [60].

4.3.3 Handling of Skewed Costs and/or Effects

Previous simulation experiments suggest that when the appropriate (skewed) form of cost and/or effect data is known, a degree of efficiency can be gained by using the estimator appropriate for that distribution [33]. This can, for example, be done using a GLM, where various link functions (e.g. logit, identity) and distributions (e.g. gamma, Gaussian) can be specified [61]. Research suggests, however, that even with moderately sized samples drawn from known distributions, the form of the distribution can often not reliably be determined from the data alone, and that estimates based on incorrect distributional assumptions can lead to misleading conclusions [33]. We therefore opted for using non-parametric bootstrapping instead, as it allows for comparing arithmetic means without making any distributional assumptions while accounting for the correlation between costs and effects. If researchers prefer to use a GLM, they can use the codes provided in ESM_2, in which a GLM with a gamma distribution and a log link is combined with MI, regression-based adjustment and non-parametric bootstrapping to get a valid estimate of uncertainty around the model estimates.
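For orientation, a gamma GLM with a log link for costs could be specified in base R as shown below. This is a simplified sketch on simulated data and not the code provided in ESM_2: MI and bootstrapping are omitted, and the covariates and coefficients are hypothetical.

```r
set.seed(17)
n   <- 300
dat <- data.frame(Tr = rbinom(n, 1, 0.5),
                  C  = rgamma(n, shape = 2, scale = 400))   # baseline costs
mu  <- exp(6.5 + 0.2 * dat$Tr + 0.0004 * dat$C)
dat$Tcosts <- rgamma(n, shape = 2, scale = mu / 2)          # gamma costs with mean mu

# Gamma GLM with log link, adjusted for baseline costs
fit_glm <- glm(Tcosts ~ Tr + C, family = Gamma(link = "log"), data = dat)

# With a log link, exp(coefficient) is a ratio of mean costs, not a difference
exp(coef(fit_glm)[["Tr"]])

# A difference in mean costs is obtained by predicting costs for every participant
# under both treatment options and averaging the individual differences
p1 <- predict(fit_glm, newdata = transform(dat, Tr = 1), type = "response")
p0 <- predict(fit_glm, newdata = transform(dat, Tr = 0), type = "response")
cost_diff <- mean(p1 - p0)
cost_diff
```

The extra prediction step is needed because cost-effectiveness analyses require differences in arithmetic mean costs on the original monetary scale.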

5 Conclusion

This tutorial provided step-by-step guidance on how to combine statistical methods available in the literature to handle methodological challenges inherent to trial-based economic evaluations using a ready-to-use R script. We explained the theoretical background of the described methods and illustrated them using a simulated trial-based economic evaluation. Additionally, we presented possible ways to adapt the provided annotated R code and discussed the limitations of the approach chosen in this tutorial.

References

Eurostat. Healthcare expenditure statistics [Internet]. 2021. https://ec.europa.eu/eurostat/statistics-explained/index.php?title=Healthcare_expenditure_statistics. Accessed 12 Feb 2022.

NIHR. National Institute for Health and Care Research [Internet]. National Institute for Health and Care Research. 2022. https://www.nihr.ac.uk/. Accessed 18 Jun 2022.

ZonMw. ZonMw [Internet]. 2022. https://www.zonmw.nl/nl/. Accessed 18 Jun 2022.

Drummond MF, Sculpher MJ, Torrance GW. Methods for the economic evaluation of health care programmes. 3rd ed. Oxford: Oxford University Press; 2005.

Petrou S, Gray A. Economic evaluation alongside randomised controlled trials: design, conduct, analysis, and reporting. BMJ [Internet]. 2011;342:d1548.

O’Sullivan AK, Thompson D, Drummond MF. Collection of health-economic data alongside clinical trials: is there a future for piggyback evaluations? Value Health. 2005;8:67–79.

Gray AM. Cost-effectiveness analyses alongside randomised clinical trials. Clin Trials. 2006;3:538–42.

Willan AR. Statistical analysis of cost-effectiveness data from randomized clinical trials. Expert Rev Pharmacoecon Outcomes Res. 2006;6:337–46.

Hughes D, Charles J, Dawoud D, Edwards RT, Holmes E, Jones C, et al. Conducting economic evaluations alongside randomised trials: current methodological issues and novel approaches. Pharmacoeconomics. 2016;34:447–61.

Ramsey SD, Willke RJ, Glick H, Reed SD, Augustovski F, Jonsson B, et al. Cost-effectiveness analysis alongside clinical trials II—An ISPOR Good Research Practices Task Force report. Value Health. 2015;18:161–72.

El Alili M, van Dongen JM, Huirne JAF, van Tulder MW, Bosmans JE. Reporting and analysis of trial-based cost-effectiveness evaluations in obstetrics and gynaecology. Pharmacoeconomics. 2017;35:1007–33.

Montané E, Vallano A, Vidal X, Aguilera C, Laporte J-R. Reporting randomised clinical trials of analgesics after traumatic or orthopaedic surgery is inadequate: a systematic review. BMC Clin Pharmacol. 2010;10:2.

van Dongen JM, Alili ME, Varga AN, Morel AEG, Ben ÂJ, Khorrami M, et al. What do national pharmacoeconomic guidelines recommend regarding the statistical analysis of trial-based economic evaluations? Expert Rev Pharmacoecon Outcomes Res. 2020;20:27–37.

Faria R, Gomes M, Epstein D, White IR. A guide to handling missing data in cost-effectiveness analysis conducted within randomised controlled trials. Pharmacoeconomics. 2014;32:1157.

Gabrio A, Mason AJ, Baio G. Handling missing data in within-trial cost-effectiveness analysis: a review with future recommendations. PharmacoEconomics Open. 2017;1:79–97.

Willan AR, Briggs AH, Hoch JS. Regression methods for covariate adjustment and subgroup analysis for non-censored cost-effectiveness data. Health Econ. 2004;13:461–75.

DiazOrdaz K, Franchini AJ, Grieve R. Methods for estimating complier average causal effects for cost-effectiveness analysis. J R Stat Soc A Stat Soc. 2018;181:277–97.

van Asselt ADI, van Mastrigt GAPG, Dirksen CD, Arntz A, Severens JL, Kessels AGH. How to deal with cost differences at baseline. Pharmacoeconomics. 2009;27:519–28.

Manca A, Hawkins N, Sculpher MJ. Estimating mean QALYs in trial-based cost-effectiveness analysis: the importance of controlling for baseline utility. Health Econ. 2005;14:487–96.

Nixon RM, Thompson SG. Methods for incorporating covariate adjustment, subgroup analysis and between-centre differences into cost-effectiveness evaluations. Health Econ. 2005;14:1217–29.

Thompson SG, Nixon RM. How sensitive are cost-effectiveness analyses to choice of parametric distributions? Med Decision Mak. 2005;25:416–23.

Nixon RM, Wonderling D, Grieve RD. Non-parametric methods for cost-effectiveness analysis: the central limit theorem and the bootstrap compared. Health Econ. 2010;19:316–33.

Fenwick E, Marshall DA, Blackhouse G, Vidaillet H, Slee A, Shemanski L, et al. Assessing the impact of censoring of costs and effects on health-care decision-making: an example using the Atrial Fibrillation Follow-up Investigation of Rhythm Management (AFFIRM) study. Value Health. 2008;11:365–75.

Seaman SR, White IR. Review of inverse probability weighting for dealing with missing data. Stat Methods Med Res. 2013;22:278–95.

El Alili M, van Dongen JM, Esser JL, Heymans MW, van Tulder MW, Bosmans JE. A scoping review of statistical methods for trial-based economic evaluations: the current state of play. Health Econ. 2022;31:2680–99.

Ling X, Gabrio A, Mason A, Baio G. A scoping review of item-level missing data in within-trial cost-effectiveness analysis. Value Health. 2022.

MacNeil Vroomen J, Eekhout I, Dijkgraaf MG, van Hout H, de Rooij SE, Heymans MW, et al. Multiple imputation strategies for zero-inflated cost data in economic evaluations: which method works best? Eur J Health Econ. 2016;17:939–50.

Burton A, Billingham LJ, Bryan S. Cost-effectiveness in clinical trials: using multiple imputation to deal with incomplete cost data. Clin Trials. 2007;4:154–61.

Leurent B, Gomes M, Cro S, Wiles N, Carpenter JR. Reference-based multiple imputation for missing data sensitivity analyses in trial-based cost-effectiveness analysis. Health Econ. 2020;29:171–84.

White IR, Royston P, Wood AM. Multiple imputation using chained equations: issues and guidance for practice. Stat Med. 2011;30:377–99.

Twisk J, de Boer M, de Vente W, Heymans M. Multiple imputation of missing values was not necessary before performing a longitudinal mixed-model analysis. J Clin Epidemiol. 2013;66:1022–8.

Ben ÂJ, van Dongen JM, Alili ME, Heymans MW, Twisk JWR, MacNeil-Vroomen JL, et al. The handling of missing data in trial-based economic evaluations: should data be multiply imputed prior to longitudinal linear mixed-model analyses? Eur J Health Econ. 2022;24:951–65.

Briggs A, Nixon R, Dixon S, Thompson S. Parametric modelling of cost data: some simulation evidence. Health Econ. 2005;14:421–8.

Desgagné A, Castilloux A-M, Angers J-F, Le Lorier J. The use of the bootstrap statistical method for the pharmacoeconomic cost analysis of skewed data. Pharmacoeconomics. 1998;13:487–97.

Brand J, van Buuren S, le Cessie S, van den Hout W. Combining multiple imputation and bootstrap in the analysis of cost-effectiveness trial data. Stat Med. 2019;38:210–20.

Willan AR, Lin DY, Manca A. Regression methods for cost-effectiveness analysis with censored data. Stat Med. 2005;24:131–45.

van Buuren S. Flexible imputation of missing data [Internet]. Taylor & Francis Group. Chapman & Hall/CRC; 2018. https://stefvanbuuren.name/fimd/. Accessed 14 Jul 2020.

Heymans MW, Eekhout I. Applied missing data analysis [Internet]. 2019. https://bookdown.org/mwheymans/bookmi/. Accessed 11 Apr 2022.

van Buuren S, Groothuis-Oudshoorn K. mice: Multivariate Imputation by Chained Equations in R. J Stat Softw. 2011;45:1–67.

Whitehead SJ, Ali S. Health outcomes in economic evaluation: the QALY and utilities. Br Med Bull. 2010;96:5–21.

Canty A, Ripley B. boot: Bootstrap functions [Internet]. 2021. https://CRAN.R-project.org/package=boot. Accessed 11 Apr 2022.

Henningsen A, Hamann JD. systemfit: a package for estimating systems of simultaneous equations in R. J Stat Softw. 2008;23:1–40.

Wickham H. ggplot2: Elegant graphics for data analysis [Internet]. Springer-Verlag New York; 2016. https://ggplot2.tidyverse.org. Accessed 11 Apr 2022.

Hoch JS, Dewa CS. Advantages of the net benefit regression framework for economic evaluations of interventions in the workplace: a case study of the cost-effectiveness of a collaborative mental health care program for people receiving short-term disability benefits for psychiatric disorders. J Occup Environ Med. 2014;56:441–5.

Zethraeus N, Johannesson M, Jönsson B, Löthgren M, Tambour M. Advantages of using the net-benefit approach for analysing uncertainty in economic evaluation studies. Pharmacoeconomics. 2003;21:39–48.

Briggs AH. A Bayesian approach to stochastic cost-effectiveness analysis. Health Econ. 1999;8:257–61.

Löthgren M, Zethraeus N. Definition, interpretation and calculation of cost-effectiveness acceptability curves. Health Econ. 2000;9:623–30.

Twisk JWR. Applied longitudinal data analysis for epidemiology: a practical guide. Cambridge: Cambridge University Press; 2013.

Bates D, Mächler M, Bolker B, Walker S. Fitting linear mixed-effects models using lme4. J Stat Softw. 2015;67:1–48.

Pinheiro J, Bates D, DebRoy S, Sarkar D, EISPACK authors, Heisterkamp S, et al. nlme: Linear and nonlinear mixed effects models [Internet]. 2022. https://CRAN.R-project.org/package=nlme. Accessed 1 May 2022.

Gomes M, Ng ES-W, Grieve R, Nixon R, Carpenter J, Thompson SG. Developing appropriate methods for cost-effectiveness analysis of cluster randomized trials. Med Decision Mak. 2012;32:350–61.

El Alili M, van Dongen JM, Goldfeld KS, Heymans MW, van Tulder MW, Bosmans JE. Taking the analysis of trial-based economic evaluations to the next level: the importance of accounting for clustering. PharmacoEconomics [Internet]. 2020. https://doi.org/10.1007/s40273-020-00946-y.

Gabrio A, Plumpton C, Banerjee S, Leurent B. Linear mixed models to handle missing at random data in trial-based economic evaluations. Health Econ. 2022;31:1276–87.

Juszczak E, Altman DG, Hopewell S, Schulz K. Reporting of multi-arm parallel-group randomized trials: extension of the CONSORT 2010 Statement. JAMA. 2019;321:1610–20.

Twisk JWR. Analysis of data from a stepped wedge trial. In: Twisk JWR, editor. Analysis of data from randomized controlled trials: a practical guide [Internet]. Cham: Springer International Publishing; 2021. p. 73–105. https://doi.org/10.1007/978-3-030-81865-4_6.

Schomaker M, Heumann C. Bootstrap inference when using multiple imputation. Stat Med. 2018;37:2252–66.

Briggs AH, Wonderling DE, Mooney CZ. Pulling cost-effectiveness analysis up by its bootstraps: a non-parametric approach to confidence interval estimation. Health Econ. 1997;6:327–40.

Baio G. Bayesian methods in health economics. New York: Chapman and Hall/CRC; 2013.

Gelman A, Carlin JB, Stern HS, Dunson DB, Vehtari A, Rubin DB. Bayesian data analysis. 3rd ed. New York: Chapman and Hall/CRC; 2015.

Gabrio A, Mason AJ, Baio G. A full Bayesian model to handle structural ones and missingness in economic evaluations from individual-level data. Stat Med. 2019;38:1399–420.

Barber J, Thompson S. Multiple regression of cost data: use of generalised linear models. J Health Serv Res Policy. 2004;9:197–204.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Funding

Financial support for this study was provided entirely by a contract with Vrije Universiteit Amsterdam. The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing and publishing the report.

Data availability

The dataset generated during and/or analysed during the current study is available in the supplementary material. All materials used in this tutorial are available at https://github.com/angelajben/R-Tutorial

Author contributions

Concept and design: ÂJB, JMvD, and JEB. Acquisition of data: not applicable. This tutorial did not involve data from human participants. It includes solely simulated data for the demonstration of statistical methods application. Analysis and interpretation of data: ÂJB, JMvD, and MEA; JLE, HMB, and JEB. Drafting of the manuscript: ÂJB, JMvD, and JEB. Critical revision of the paper for important intellectual content: ÂJB, JMvD, and MEA; JLE, HMB, and JEB. Statistical analysis: ÂJB, JMvD, and MEA; JLE and JEB. Provision of study materials or patients: JMvD and JEB. Obtaining funding: JEB. Administrative, technical, or logistic support: ÂJB, JMvD, and JEB. Supervision: JMvD and JEB.

Ethics approval

This tutorial did not involve data from human participants. It includes solely simulated data for the demonstration of statistical methods application.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Code availability

R scripts and simulated data used in this tutorial are available at https://github.com/angelajben/RTutorial.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License, which permits any non-commercial use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc/4.0/.

About this article

Cite this article

Ben, Â.J., van Dongen, J.M., El Alili, M. et al. Conducting Trial-Based Economic Evaluations Using R: A Tutorial. PharmacoEconomics 41, 1403–1413 (2023). https://doi.org/10.1007/s40273-023-01301-7

DOI: https://doi.org/10.1007/s40273-023-01301-7