Abstract

Introduction

In 2018, we published the MOdified NARanjo Causality Scale for Individual Case Safety Reports (MONARCSi), an algorithmic decision support tool. In that study it showed high inter-rater agreement, moderate sensitivity, and high specificity compared with drug-event pairs (DEPs) previously reviewed using current, industry-established approaches. Following publication, MONARCSi was implemented as a prototype system to facilitate medical review of individual case safety reports (ICSRs). This paper presents a subsequent evaluation of MONARCSi-supported causality assessments against an independent, best-achievable reference standard.

Objective

This paper describes the development of an independent reference standard (i.e., reference comparator) from a sample of DEPs evaluated by Roche subject matter experts (SMEs), and the subsequent performance analysis of both the reference standard and MONARCSi.

Methods

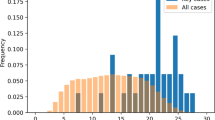

Roche collected a random sample of 131 DEPs that an external vendor had evaluated with the MONARCSi prototype during 2020; these are collectively referred to as the VMON (Vendor using the MONARCSi system for medical review) dataset. An internal group of causality SMEs (CAUSMET) was recruited and trained to assess the same DEPs independently, using the MONARCSi structure with Global Introspection to reach their individual causality assessments. The final CAUSMET causality for each DEP was determined using a majority voting rule.
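The majority voting step can be illustrated with a minimal sketch. It assumes that each SME gives one categorical causality label per DEP and that "majority" means more than half of the panel. The abstract states neither the panel size nor how ties were handled, so returning None on a tie is an assumption. The function name, DEP identifiers and labels are hypothetical.

```python
from collections import Counter


def majority_vote(assessments):
    """Return the label chosen by a strict majority of raters, else None.

    assessments: list of per-SME causality labels for one DEP
    (hypothetical labels such as "related" / "not related").
    """
    if not assessments:
        raise ValueError("need at least one assessment")
    label, votes = Counter(assessments).most_common(1)[0]
    # Strict majority (assumption): more than half of all raters must agree.
    if votes * 2 > len(assessments):
        return label
    return None  # no strict majority, e.g. a tie; tie handling is assumed


# Hypothetical three-SME panel rating two DEPs
panel = {
    "DEP-001": ["related", "related", "not related"],
    "DEP-002": ["not related", "not related", "not related"],
}
final = {dep: majority_vote(votes) for dep, votes in panel.items()}
print(final)
```

With binary labels and an odd number of raters, a strict majority always exists, so the tie branch only matters for even-sized panels.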

Results

Binary comparison of the aggregate results showed substantial agreement (Gwet's kappa = 0.81) between the VMON and reference standard CAUSMET assessments. Bayesian latent class modeling showed that the reference standard and VMON assessments had similarly high posterior mean sensitivity and specificity (CAUSMET: 89% and 93%, respectively; VMON: 87% and 94%, respectively). Finally, comparison of sensitivity and specificity suggested no obvious difference between the two groups.
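The agreement figure above is reported as "Gwet kappa". For two raters, Gwet's chance-corrected agreement coefficient is usually computed as AC1, AC1 = (p_a − p_e)/(1 − p_e). Here p_a is the observed agreement and p_e = (1/(Q − 1)) Σ_q π_q(1 − π_q), where π_q is the average of the two raters' marginal proportions for category q. The sketch below assumes AC1 is the statistic meant, which the abstract does not confirm. The ratings are hypothetical, not study data.

```python
def gwet_ac1(rater_a, rater_b):
    """Gwet's AC1 for two raters assigning nominal labels to the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and paired")
    n = len(rater_a)
    categories = sorted(set(rater_a) | set(rater_b))
    q = len(categories)
    # Observed agreement: share of items on which both raters agree.
    p_a = sum(x == y for x, y in zip(rater_a, rater_b)) / n
    if q < 2:
        return 1.0  # only one label ever used: perfect agreement
    # Chance agreement from the average marginal share of each category.
    p_e = 0.0
    for c in categories:
        pi_c = (rater_a.count(c) + rater_b.count(c)) / (2 * n)
        p_e += pi_c * (1 - pi_c)
    p_e /= q - 1
    return (p_a - p_e) / (1 - p_e)


# Hypothetical binary calls for 10 DEPs (1 = related, 0 = not related)
vendor_calls = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]
sme_calls = [1, 1, 1, 0, 0, 0, 0, 0, 0, 1]
print(round(gwet_ac1(vendor_calls, sme_calls), 3))  # → 0.802
```

AC1 is often preferred over Cohen's kappa when one category dominates, because its chance-agreement term is less sensitive to skewed prevalence.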

Conclusion

Analysis of causality assessments made by independent internal SMEs using MONARCSi shows no obvious difference in performance, as measured by sensitivity and specificity, between the aggregate CAUSMET and VMON assessments. These results further support the use of MONARCSi as a decision support tool for evaluating drug-event causality in a consistent and documentable manner.
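The sensitivity and specificity comparison in the Results comes from Bayesian latent class modeling. This approach estimates accuracy when neither assessment is a perfect gold standard. The abstract does not give the model specification, although the reference list includes Joseph et al. (1995) and the web application of Lim et al. (2013). The following minimal Gibbs sampler is written in the spirit of Joseph et al. It assumes two binary assessments that are conditionally independent given true causality, and it uses hypothetical Beta priors and hypothetical counts rather than the study data. With one population and two binary assessments the model is not identifiable from the data alone, so the informative priors carry real weight.

```python
import random
from statistics import mean


def binomial(rng, n, p):
    # Binomial draw as a sum of Bernoulli trials (adequate for small n)
    return sum(rng.random() < p for _ in range(n))


def latent_class_gibbs(counts, priors, n_iter=4000, burn_in=1000, seed=1):
    """Gibbs sampler for two conditionally independent binary assessments.

    counts: {(t1, t2): n} with t1, t2 in {0, 1}; 1 = "causally related".
    priors: Beta (a, b) for keys "prev", "se1", "sp1", "se2", "sp2".
    Returns post-burn-in draws for each parameter.
    """
    rng = random.Random(seed)
    theta = {k: a / (a + b) for k, (a, b) in priors.items()}  # prior means
    draws = {k: [] for k in priors}
    total = sum(counts.values())
    for it in range(n_iter):
        # 1) Impute how many DEPs in each cell are truly related.
        y = {}
        for (t1, t2), n in counts.items():
            pos = (theta["prev"]
                   * (theta["se1"] if t1 else 1 - theta["se1"])
                   * (theta["se2"] if t2 else 1 - theta["se2"]))
            neg = ((1 - theta["prev"])
                   * (1 - theta["sp1"] if t1 else theta["sp1"])
                   * (1 - theta["sp2"] if t2 else theta["sp2"]))
            y[(t1, t2)] = binomial(rng, n, pos / (pos + neg))
        # 2) Conjugate Beta updates given the imputed true status.
        related = sum(y.values())
        unrelated = total - related
        tp1 = sum(v for (t1, _), v in y.items() if t1)
        tp2 = sum(v for (_, t2), v in y.items() if t2)
        tn1 = sum(counts[c] - y[c] for c in counts if not c[0])
        tn2 = sum(counts[c] - y[c] for c in counts if not c[1])
        updates = {
            "prev": (related, unrelated),
            "se1": (tp1, related - tp1),
            "sp1": (tn1, unrelated - tn1),
            "se2": (tp2, related - tp2),
            "sp2": (tn2, unrelated - tn2),
        }
        for k, (succ, fail) in updates.items():
            a, b = priors[k]
            theta[k] = rng.betavariate(a + succ, b + fail)
        if it >= burn_in:
            for k in theta:
                draws[k].append(theta[k])
    return draws


# Hypothetical cross-tabulation (assessment 1, assessment 2) -> count
counts = {(1, 1): 45, (1, 0): 5, (0, 1): 4, (0, 0): 66}
priors = {"prev": (1, 1), "se1": (8, 2), "sp1": (8, 2),
          "se2": (8, 2), "sp2": (8, 2)}
draws = latent_class_gibbs(counts, priors)
for k, v in draws.items():
    print(k, round(mean(v), 2))
# 95% credible interval for the sensitivity difference between groups
diff = sorted(a - b for a, b in zip(draws["se1"], draws["se2"]))
lo, hi = diff[int(0.025 * len(diff))], diff[int(0.975 * len(diff)) - 1]
print("Se1 - Se2 95% CrI:", round(lo, 3), round(hi, 3))
```

A credible interval for the difference that spans zero is one way to express "no obvious difference". This is an illustrative reading, not necessarily the criterion the authors used.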

References

Agbabiaka TB, Savović J, Ernst E. Methods for causality assessment of adverse drug reactions: a systematic review. Drug Saf. 2008;31(1):21–37. https://doi.org/10.2165/00002018-200831010-00003.

Khan LM, Al-Harthi SE, Osman AM, Sattar MA, Ali AS. Dilemmas of the causality assessment tools in the diagnosis of adverse drug reactions. Saudi Pharm J. 2016;24(4):485–93. https://doi.org/10.1016/j.jsps.2015.01.010.

Théophile H, Arimone Y, Miremont-Salamé G, et al. Comparison of three methods (consensual expert judgement, algorithmic and probabilistic approaches) of causality assessment of adverse drug reactions: an assessment using reports made to a French pharmacovigilance centre. Drug Saf. 2010;33(11):1045–54. https://doi.org/10.2165/11537780-000000000-00000.

Miremont G, Haramburu F, Bégaud B, Péré JC, Dangoumau J. Adverse drug reactions: physicians’ opinions versus a causality assessment method. Eur J Clin Pharmacol. 1994;46(4):285–9. https://doi.org/10.1007/BF00194392.

Macedo AF, Marques FB, Ribeiro CF, Teixeira F. Causality assessment of adverse drug reactions: comparison of the results obtained from published decisional algorithms and from the evaluations of an expert panel, according to different levels of imputability. J Clin Pharm Ther. 2003;28(2):137–43. https://doi.org/10.1046/j.1365-2710.2003.00475.x.

Macedo AF, Marques FB, Ribeiro CF, Teixeira F. Causality assessment of adverse drug reactions: comparison of the results obtained from published decisional algorithms and from the evaluations of an expert panel. Pharmacoepidemiol Drug Saf. 2005;14(12):885–90. https://doi.org/10.1002/pds.1138.

Doherty MJ. Algorithms for assessing the probability of an adverse drug reaction. Respir Med CME. 2009;2:63–7. https://doi.org/10.1016/j.rmedc.2009.01.004.

Naranjo CA, Busto U, Sellers EM, et al. A method for estimating the probability of adverse drug reactions. Clin Pharmacol Ther. 1981;30(2):239–45. https://doi.org/10.1038/clpt.1981.154.

Meyboom RH, Hekster YA, Egberts AC, Gribnau FW, Edwards IR. Causal or casual? The role of causality assessment in pharmacovigilance. Drug Saf. 1997;17(6):374–89. https://doi.org/10.2165/00002018-199717060-00004.

Michel DJ, Knodel LC. Comparison of three algorithms used to evaluate adverse drug reactions. Am J Hosp Pharm. 1986;43(7):1709–14.

Kane-Gill SL, Forsberg EA, Verrico MM, Handler SM. Comparison of three pharmacovigilance algorithms in the ICU setting: a retrospective and prospective evaluation of ADRs. Drug Saf. 2012;35(8):645–53. https://doi.org/10.1007/BF03261961.

Macedo AF, Marques FB, Ribeiro CF. Can decisional algorithms replace global introspection in the individual causality assessment of spontaneously reported ADRs? Drug Saf. 2006;29(8):697–702. https://doi.org/10.2165/00002018-200629080-00006.

Koch-Weser J, Sellers EM, Zacest R. The ambiguity of adverse drug reactions. Eur J Clin Pharmacol. 1977;11(2):75–8. https://doi.org/10.1007/BF00562895.

Arimone Y, Bégaud B, Miremont-Salamé G, et al. Agreement of expert judgment in causality assessment of adverse drug reactions. Eur J Clin Pharmacol. 2005;61(3):169–73. https://doi.org/10.1007/s00228-004-0869-2.

Arimone Y, Miremont-Salamé G, Haramburu F, et al. Inter-expert agreement of seven criteria in causality assessment of adverse drug reactions. Br J Clin Pharmacol. 2007;64(4):482–8. https://doi.org/10.1111/j.1365-2125.2007.02937.x.

Kosov M, Maximovich A, Riefler J, Dignani MC, Belotserkovskiy M, Batson E. Interexpert agreement on adverse events’ evaluation. Applied Clinical Trials Online; 2016. Available at: http://www.appliedclinicaltrialsonline.com/interexpert-agreement-adverse-events-evaluation. Accessed 21 June 2017.

Meehl PE. Clinical versus statistical prediction: a theoretical analysis and a review of the evidence. Minneapolis: University of Minnesota Press; 1954.

Grove WM, Zald DH, Lebow BS, Snitz BE, Nelson C. Clinical versus mechanical prediction: a meta-analysis. Psychol Assess. 2000;12:19–30.

Grove WM, Lloyd M. Meehl’s contributions to clinical versus statistical prediction. J Abnorm Psychol. 2006;115(2):192–4. https://doi.org/10.1037/0021-843X.115.2.192.

Comfort S, Dorrell D, Meireis S, Fine J. Modified NARanjo Causality Scale for ICSRs (MONARCSi): a decision support tool for safety scientists. Drug Saf. 2018;41(11):1073–85. https://doi.org/10.1007/s40264-018-0690-y.

Bass I. Six sigma statistics with EXCEL and MINITAB. 1st ed. McGraw Hill Companies Inc; 2007.

Lim C, Wannapinij P, White L, Day NPJ, Cooper BS, Peacock SJ, Limmathurotsakul D. Using a web-based application to define the accuracy of diagnostic tests when the gold standard is imperfect. PLoS One. 2013;8(11):e79489. https://doi.org/10.1371/journal.pone.0079489.

Joseph L, Gyorkos TW, Coupal L. Bayesian estimation of disease prevalence and the parameters of diagnostic tests in the absence of a gold standard. Am J Epidemiol. 1995;141(3):263–72. https://doi.org/10.1093/oxfordjournals.aje.a117428.

Bolstad WM. Introduction to Bayesian statistics. 2nd ed. New York: Wiley; 2007.

Viera AJ, Garrett JM. Understanding interobserver agreement: the kappa statistic. Fam Med. 2005;37:360–3.

Davies EC, Rowe PH, James S, Nickless G, Ganguli A, Danjuma M, et al. An investigation of disagreement in causality assessment of adverse drug reactions. Pharm Med. 2011;25:17–24.

CIOMS Working Group VI. Management of safety information from clinical trials: report of CIOMS Working Group VI. 1st ed. Geneva: CIOMS; 2005.

Ethics declarations

Funding

Funding for this study was supplied by Genentech, a Member of the Roche Group.

Conflict of interest

Shaun Comfort, Darren Dorrell, Sunita Dhar, Chris Eden and Francis Donaldson were employed by Roche at the time this research was completed. Bruce Donzanti was an independent consultant with Donzanti PV Services, LLC at the time this research was completed.

Ethics approval

Not applicable, as all human subject data used in this analysis were de-identified.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Availability of data and material (data transparency)

The de-identified CAUSMET dataset is provided as electronic supplementary material (ESM) in Microsoft Excel format.

Code availability

Not applicable.

Author contributions

All co-authors contributed to the conceptualization of this work. SC and DD performed the data analysis, and BD was involved in drafting the manuscript. All co-authors revised, edited, and approved the manuscript. All authors read and approved the final version.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Comfort, S.M., Donzanti, B., Dorrell, D. et al. Comparison of the MOdified NARanjo Causality Scale (MONARCSi) for Individual Case Safety Reports vs. a Reference Standard. Drug Saf 45, 1529–1538 (2022). https://doi.org/10.1007/s40264-022-01245-5

DOI: https://doi.org/10.1007/s40264-022-01245-5