Abstract

Introduction

The management of acute postoperative pain remains a significant challenge for physicians. Poorly controlled postoperative pain is associated with poorer overall outcomes.

Methods

Between April and May 2017, physicians from an online database who regularly prescribe intravenous (IV) medications for acute postoperative pain completed a 47-question survey on topics such as patient demographics, IV analgesia preferences, factors that influence prescribing decisions, and the challenges and unmet needs for the treatment of acute postoperative pain.

Results

Of 501 surveyed physicians, 55% practiced in community hospitals, 60% had been in practice for > 10 years, and 60% were surgeons. The three categories of IV pain medications most likely to be prescribed to patients with moderate-to-severe pain immediately after surgery were opioids (morphine, hydromorphone, or fentanyl; 95.8% of respondents), COX-2 inhibitors or nonsteroidal anti-inflammatory drugs (73.7%), and acetaminophen (60.5%). Past clinical experience (81.6%), surgery type (78.2%), and onset of analgesia (67.1%) were the practice-related factors that most often determined medication choice. Key patient-related factors, including patient risk factors, postsurgical mobility, and avoidance of medication-related adverse events (AEs), each influenced prescribing decisions in > 75% of physicians. Nausea and vomiting were among the most common challenges associated with postoperative pain management (76.2% and 60.3%, respectively). Physicians reported that the top unmet need for acute pain management in patients experiencing moderate-to-severe postoperative pain was more medications with fewer side effects (e.g., nausea, vomiting, and respiratory depression; 80.7%).

Conclusions

Opioids remain an integral component of multimodal analgesic therapy for acute postoperative pain in hospitalized patients. The use of all IV analgesic medications is limited by concerns over AEs, particularly with opioids and in high-risk patients. There remains a key unmet need for effective analgesic medications that are associated with a lower risk of AEs.

Funding

Trevena, Inc.


Introduction

Nearly 86% of patients undergoing surgery report postoperative pain, which is often moderate to severe in intensity [1]. Managing postoperative pain can be challenging for the physician, with many patients experiencing poorly controlled pain or analgesic medication-related adverse events (AEs) [1, 2]. Poorly controlled pain has a number of negative consequences for the patient, including a delay in hospital discharge, a delay in functional recovery, and an increased risk of chronic pain [3,4,5,6,7,8,9,10,11]. Although a number of analgesic therapies are available, the high incidence of postoperative pain among patients indicates that there are still significant treatment challenges.

To prevent poorly controlled pain and limit analgesic medication-related AEs, clinical guidelines on multimodal analgesic strategies have been developed for different types of surgery [12]. These strategies simultaneously employ several therapies, often with different targets or mechanisms of action, to achieve an additive or synergistic effect. A key benefit of multimodal strategies is their ability to limit the AEs that may occur with high dosages of individual analgesics [13].

Intravenous (IV) opioids remain an integral component of a multimodal approach to postoperative pain, particularly for invasive procedures and the treatment of deep visceral pain. Other important components include IV nonsteroidal anti-inflammatory drugs (NSAIDs), acetaminophen, and local anesthetics. Adequate physician knowledge and a patient-centric approach to treatment are the prerequisites for the effective management of acute postoperative pain and the optimization of patient outcomes.

Despite their proven efficacy, the use of IV opioids is constrained by dose-limiting opioid-related adverse events (ORAEs), such as respiratory depression and postoperative nausea, vomiting, and ileus [2, 12, 14]. Specific patient characteristics increase the risk of specific ORAEs, and this knowledge may guide the use of opioids in individual cases [15,16,17,18,19]. Patients who experience ORAEs can have delayed postoperative recovery and discharge, as well as higher total healthcare costs, compared with those who do not [20,21,22].

This survey of treating physicians was conducted to evaluate the current practice patterns and challenges associated with the management of acute postoperative pain. The key outcome was to identify physicians’ perspectives on IV pain medications, such as the factors that determine their selection and which ones they ultimately prescribe for their patients. Supportive outcomes from the survey were the identification of factors that influence practice patterns and the most pressing unmet needs for physicians who manage the care of patients with acute postoperative pain. These findings can be used to determine where efforts to improve current practice should be focused.

Methods

This survey (available as Supplemental Material) was jointly designed by HealthiVibe, LLC (Arlington, VA, USA), Epstein Health, Trevena, Inc. (the study sponsor), and investigators with content expertise (PGW and TJG). The survey received institutional review board approval and is reported in a manner consistent with the CHERRIES guidelines [23, 24]. This article does not contain any studies with human participants or animals.

Recruitment

US-based physicians participated in an online survey using a Health Insurance Portability and Accountability Act–compliant web survey platform (Amazon Web Services). Participants were identified from an opt-in healthcare database built from physician communities, continuing medical education sites, and clinical content portals. Invitations to participate were sent via e-mail, which included a link to the survey website with a disclaimer that the results would be kept confidential. To achieve the desired response rate, the first invitation e-mail was followed by a second invitation, which was sent within 7 days of the first. Before participating in the survey, all participants provided consent via completion of a screening item asking for their agreement to participate. Each respondent could submit only one completed survey. Upon completion, survey respondents received a confirmatory “thank you” note via e-mail. Respondents also received modest compensation for completing the survey in its entirety. This was considered necessary for participant recruitment and to encourage completion of the entire survey. Neither the survey nor the invitation included any reference to the sponsor.

Screening

There was a short screening process, expected to take no more than 5 min to complete, during which physicians answered questions confirming their willingness to participate in the core survey and their eligibility according to the inclusion/exclusion criteria. To be eligible, physicians must have practiced within one of the required medical specialties: surgery, anesthesiology, critical care/emergency medicine, or hospitalist. Surgeons must have performed ≥ 1 of 27 listed surgical procedures in six surgical categories (general or bariatric, cardiothoracic, colorectal, orthopedic or neurosurgery, plastic or cosmetic, or vascular). At the time of the survey, all physicians must have been practicing in an academic medical center, community hospital, or ambulatory care setting, and been involved in one or more surgeries per month (e.g., surgeons must have been performing surgery, and anesthesiologists must have been managing the administration of IV pain medications). Surgical categories were chosen based on a clinical assessment of the patient types and surgical procedures most likely to lead to moderate-to-severe pain requiring IV analgesic medications after surgery.

Full Survey

The 47-question survey was designed to take an estimated 12–15 min to complete, incorporating screening, demographic, and core survey questions. The survey contained 40 screens: a landing page, 38 individual question screens, and a “thank you” page. After each answer, the respondent was directed to the next question. Respondents were unable to go back and change answers, to avoid introducing bias (later questions could otherwise prompt respondents to revise earlier answers). Respondents could not move forward in the survey without answering each question, so no item could be left unanswered.

The survey contained questions elucidating the types of medication that physicians prescribe to manage acute postoperative pain, the factors that influence their postsurgical pain medication regimens, the challenges they face when treating acute postoperative pain, and their perceptions of unmet needs regarding current pain therapies. The survey also included demographic questions including physicians’ primary practice settings, years in clinical practice, and geographic locations.

The survey questions were presented in the following formats: questions that asked the respondent to choose one response from a defined list of possible statements; questions that asked the respondent to choose multiple responses (e.g., “Select all that apply”); statements where the respondent was asked to indicate agreement or disagreement using a Likert scale of 1–5 (1 = “Strongly disagree” to 5 = “Strongly agree”); dichotomous questions (yes/no); and statements where the respondent was asked to indicate the frequency with which they engage in an activity (1 = Very often, 2 = Often, 3 = Sometimes, 4 = Never, 5 = Not sure/not applicable). The order of response statements within a question set was rotated in an attempt to reduce bias.

Outcomes

Each survey question was designed to provide a specific insight, contributing to an overall understanding of current practice patterns and of the treatment challenges experienced by physicians managing patients’ acute postoperative pain.

When designing the study, the key outcome of interest was the identification of factors influencing physicians’ choice of IV pain medications. This was specifically addressed in two survey items (see Supplemental Material)—“Please select the top three IV pain medications that you are most likely to prescribe for your patients who experience moderate-to-severe pain immediately following surgery” and “What clinical practice-related factors most determine which IV pain medications you prescribe for postsurgical patients you care for during their hospitalization?”

The supportive outcomes of the study were to determine the factors that influenced practice patterns and the most pressing unmet needs for physicians managing acute postoperative pain, which were addressed with several questions.

Data Handling

Personally identifiable information was not collected as part of the survey, and all survey data were collected, de-identified, and stored by the third-party platform. The host platform passed an internal identifier (along with security tokens) to the survey. Once a survey was completed, this status and the internal identifier were communicated to the panel platform. Similarly, if a respondent was disqualified from the survey, this was also communicated to the panel platform. Access to the survey was therefore restricted at the platform level. The platform identifier was stored with the survey results while the survey was live, and was used to verify that no duplicate completions existed in the data.
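The duplicate-completion check described above can be sketched as a simple count of completed records per platform identifier. This is a minimal illustration only: the record layout (dicts with hypothetical `platform_id` and `status` fields) and the function name are assumptions, not the platform's actual export format or tooling.

```python
from collections import Counter


def find_duplicate_completions(records):
    """Return platform identifiers that appear on more than one completed survey.

    Hypothetical record layout: each record is a dict with a 'platform_id' key
    and a 'status' key ('complete' or 'disqualified').
    """
    # Count only completed surveys; disqualified attempts are not completions.
    counts = Counter(r["platform_id"] for r in records if r["status"] == "complete")
    return sorted(pid for pid, n in counts.items() if n > 1)


# Toy records for illustration only (not survey data).
records = [
    {"platform_id": "A17", "status": "complete"},
    {"platform_id": "B02", "status": "complete"},
    {"platform_id": "A17", "status": "complete"},
    {"platform_id": "C33", "status": "disqualified"},
]
print(find_duplicate_completions(records))  # ['A17']
```

An empty result would indicate that each identifier is linked to at most one completed survey.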

The data cleaning tool within the survey platform was used to identify “speedy” responses (≤ 3 s per question), which were quarantined and excluded from the sample. The host platform only recorded visitors who completed ≥ 1 question and did not provide data to calculate the view rate, participation rate, or completion rate.
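The “speedy” response rule can be sketched as follows. The text gives only the threshold (≤ 3 s per question); this sketch assumes the rule is applied to the mean time per answered question, and the field names (`seconds`, `n_questions`) and functions are hypothetical rather than the platform's data cleaning tool.

```python
SPEEDY_THRESHOLD_S = 3.0  # from the text: responses at <= 3 s per question were flagged


def is_speedy(total_seconds, n_questions, threshold=SPEEDY_THRESHOLD_S):
    """Flag a response whose mean time per question is at or below the threshold.

    Assumption: the rule uses the mean time per answered question.
    """
    if n_questions <= 0:
        raise ValueError("n_questions must be positive")
    return total_seconds / n_questions <= threshold


def split_speedy(responses):
    """Partition responses into (kept, quarantined) lists."""
    kept, quarantined = [], []
    for r in responses:
        target = quarantined if is_speedy(r["seconds"], r["n_questions"]) else kept
        target.append(r)
    return kept, quarantined


# Toy responses for illustration only (not survey data).
responses = [
    {"id": 1, "seconds": 720, "n_questions": 47},  # ~15.3 s per question: kept
    {"id": 2, "seconds": 120, "n_questions": 47},  # ~2.6 s per question: quarantined
]
kept, quarantined = split_speedy(responses)
print([r["id"] for r in kept], [r["id"] for r in quarantined])  # [1] [2]
```

Quarantined responses are set aside rather than deleted, mirroring the description of speedy responses being quarantined and then excluded from the sample.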

Statistical Analysis

The inclusion of approximately 500 physicians was considered a feasible and adequate sample size that could accurately reflect the current views among treating physicians. During the recruitment process, invitations targeted surgeons, anesthesiologists, and critical care/emergency medicine physicians or hospitalists, at proportions of 6:3:1. This reflected the breadth of physicians who commonly participate in the management of moderate-to-severe acute postoperative pain requiring IV analgesic medications. The eligibility of the respondents was checked at the time of initial screening, so that only those meeting the criteria could complete the survey.
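As a worked illustration of the 6:3:1 targeting, the sketch below converts a target sample size into whole-number quotas per specialty group. The largest-remainder (Hamilton) rounding method is an assumption chosen here so that quotas always sum to the target; the text does not state how, or whether, fractional quotas were rounded.

```python
def allocate_quotas(total, ratio):
    """Split `total` across groups in proportion to `ratio` using the
    largest-remainder (Hamilton) method, so quotas sum exactly to `total`."""
    weight = sum(ratio.values())
    # Integer floor of each exact share, and the leftover numerator used for ranking.
    floors = {g: total * w // weight for g, w in ratio.items()}
    remainders = {g: total * w % weight for g, w in ratio.items()}
    leftover = total - sum(floors.values())
    # Give the remaining units to the groups with the largest fractional parts.
    for g in sorted(ratio, key=lambda g: remainders[g], reverse=True)[:leftover]:
        floors[g] += 1
    return floors


# 6:3:1 targeting ratio from the text; group labels are illustrative.
ratio = {"surgery": 6, "anesthesiology": 3, "critical care/EM or hospitalist": 1}
print(allocate_quotas(500, ratio))
# {'surgery': 300, 'anesthesiology': 150, 'critical care/EM or hospitalist': 50}
```

For a target of approximately 500, the ratio corresponds to roughly 300 surgeons, 150 anesthesiologists, and 50 critical care/emergency medicine physicians or hospitalists.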

The aim of this study was to identify the current practice patterns and treatment challenges. Consequently, descriptive statistics were used to present and interpret the data.
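For the “select the top three” and “select all that apply” items, the descriptive statistic reported in the Results is the percentage of respondents who chose each option. A minimal sketch of that tabulation, assuming each respondent's answer is stored as a set of option labels (a hypothetical layout), is shown below. Because each respondent can choose several options, the percentages can sum to more than 100%.

```python
from collections import Counter


def selection_percentages(selections, n_respondents):
    """Percentage of respondents who selected each option (one decimal place).

    Each element of `selections` is one respondent's collection of chosen options;
    duplicates within a respondent are counted once.
    """
    counts = Counter(option for chosen in selections for option in set(chosen))
    return {opt: round(100 * n / n_respondents, 1) for opt, n in counts.most_common()}


# Hypothetical toy data for four respondents (not survey results).
selections = [
    {"opioid", "NSAID", "acetaminophen"},
    {"opioid", "NSAID", "local anesthetic"},
    {"opioid", "acetaminophen", "local anesthetic"},
    {"opioid", "NSAID", "acetaminophen"},
]
print(selection_percentages(selections, len(selections)))
```

In this toy example, "opioid" is selected by 100.0% of respondents, "NSAID" and "acetaminophen" by 75.0% each, and "local anesthetic" by 50.0%; the same calculation gives 3.6% for 18 of 501 respondents.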

Results

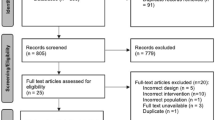

The survey was open from April to May 2017, and was closed shortly after the target number of responses had been received. In total, 3803 e-mail invitations were sent.

Of 501 physicians who completed the survey, 54.9% practiced in community hospitals and 59.7% had been in practice for > 10 years. Sixty percent of respondents were surgeons, 29.9% were anesthesiologists, and 10.0% were critical care/emergency room physicians. No hospitalists completed the survey. Most surgeons specialized in general/bariatric surgery (51.8%) or plastic/cosmetic surgery (17.3%; Table 1).

When asked which individuals played a role in creating postoperative pain management plans for elective surgery patients, most physicians agreed that surgeons primarily fulfilled this role, which included responsibilities such as educating the patient about pain management before (82.2%) and after surgery (77.0%), as well as making analgesic medication prescription decisions following surgery (89.4%).

When asked to select the three IV medications that they were most likely to prescribe to patients with moderate-to-severe pain immediately following surgery, physicians most commonly selected opioids, including morphine, hydromorphone, and fentanyl (95.8%); COX-2 inhibitors or NSAIDs, such as ketorolac or ibuprofen (73.7%); and IV acetaminophen (60.5%). Local anesthetics such as bupivacaine, chloroprocaine, or lidocaine were among the top three medications selected by 45.5% of respondents. Other drug classes were each selected by < 9.0% of physicians. Past clinical experience (81.6%), surgery type (78.2%), and onset of analgesic effect (67.1%) were the top clinical practice-related factors that most influenced physicians’ choice of pain medications during postsurgical hospitalizations (Table 2).

The patient-related factors that most commonly affected physicians’ IV pain medication prescription decisions during the hospitalization of postsurgical patients were risk factors such as age, comorbidities, or prior surgeries; postsurgical mobility; and avoidance of pain medication-related AEs, including nausea, vomiting, and respiratory depression; each of these categories was selected by > 75% of physicians (Table 3). Interestingly, patients’ risk of addiction and abuse was selected as a factor by only 45% of physicians, suggesting that, despite the societal problems of opioid abuse in the community, this is a relatively low-ranking concern for the acute postsurgical use of IV opioids in an inpatient setting.

Physicians considered respiratory depression, chronic opioid use, age > 75 years, and respiratory comorbidities to be the main patient characteristics of concern when using IV opioid pain medications in the hospital following surgery (Table 4).

According to physician respondents, the three most important factors affecting patients’ postoperative recovery process were well-controlled pain (61.3%); postsurgical mobility (50.9%); and patients having realistic expectations for the recovery process (48.7%). Other commonly selected factors (i.e., in the top three for > 30% of respondents) were patient satisfaction (39.7%) and few to no comorbidities that complicated recovery following surgery (31.5%).

Nausea and vomiting were among the most common challenges observed or encountered by physicians prescribing analgesic medications during the postoperative period (76.2 and 60.3%, respectively; Table 5). When asked about the top three categories of non-analgesic medications prescribed to postoperative patients with moderate-to-severe pain, antiemetics were the most common and were listed in the top three for 84.2% of physicians.

Only 3.6% (18/501) of physicians reported any dissatisfaction with patients’ pain responses to postoperative analgesics while hospitalized, with the majority (13/18) reporting dissatisfaction with the trade-off between pain control and drug-induced side effects such as nausea, vomiting, or respiratory depression. The small number of physicians reporting dissatisfaction limits our ability to evaluate the underlying reasons.

Physicians reported that the top unmet needs for acute pain management of patients experiencing moderate-to-severe postoperative pain were more medications associated with fewer side effects, such as nausea, vomiting, and respiratory depression (80.7%); an ability to avoid or reduce unwanted pain medication-related AEs (64.9%); not being able to use some pain medications due to unavailability or costs (56.5%); and difficulties patients had understanding their role in acute pain management (e.g., how to communicate location and intensity of pain; 55.1%). Other unmet needs were selected by < 50% of respondents.

As IV opioids were expected to be commonly prescribed in these situations, physicians were also asked to rate various characteristics of three widely prescribed parenteral opioids (fentanyl, hydromorphone, and morphine) on a scale of 1 (least preferred) to 10 (most preferred). No opioid was rated above 7.7 for any characteristic. Among the lowest-scoring drug characteristics (i.e., those indicating the greatest room for improvement) were the occurrence of AEs and concerns regarding use in high-risk patients (Table 6).

Discussion

This survey was conducted to better understand physicians’ perceptions and experiences treating patients’ acute postoperative pain in the inpatient setting. Participants in the online survey were mostly surgeons (60%) or anesthesiologists (30%) from all US geographic regions. Most had been in practice for > 10 years.

The key outcome of the survey was to characterize physicians’ perspectives on IV analgesic medications. Findings confirmed our expectation that opioids are an integral component of multimodal postoperative analgesic therapy for invasive or moderately to severely painful procedures. The most important factors influencing prescribing decisions were past clinical experience, surgery type, and onset of analgesic effect. Overall, the responses demonstrated that the predictability and reliability of pain relief were important factors for physicians choosing an IV analgesic therapy.

Physician responses provided meaningful insights into the factors that influenced practice patterns and identified the most pressing unmet needs for the management of acute postoperative pain. Responses suggested that physicians were very aware of the potential for AEs associated with IV analgesic medications, particularly ORAEs. Patient-related risk factors, such as age, comorbidities, or prior surgeries, as well as the reduction or avoidance of analgesic medication-related AEs, were important considerations affecting physicians’ approach to postoperative pain management. Furthermore, patient characteristics that are known to increase the risk of AEs, including respiratory comorbidities, chronic opioid use, and older age, were rated as of “very much concern” for patients receiving IV opioid therapy. Overall, 80.7% of physicians identified medications associated with fewer side effects (such as nausea, vomiting, and respiratory depression) as a top unmet need for the management of acute postoperative pain.

The three most pressing concerns regarding postoperative pain management were related to AEs, namely nausea (76.2%), constipation (67.3%), and vomiting (60.3%). These findings are in agreement with a previous survey of physicians writing > 20 opioid prescriptions per month for either chronic or acute pain, in which 58% reported that the reduction of AEs was an unmet need [25]. Other responses highlighted physicians’ concern about uncontrolled postoperative pain; for instance, 40.5% noted that delays in hospital discharge due to uncontrolled pain were among their most frequently encountered challenges of postoperative pain management. Other related issues included delays in rehabilitation or in discharge from the post-anesthesia care unit, chronic pain, and the risk of re-hospitalization due to pain or pain-related complications. Poorly controlled postoperative pain is a well-recognized unmet clinical need, especially for patients experiencing moderate-to-severe pain [1, 26]. Poorly controlled pain can delay recovery after surgery, in part by interrupting sleep [3, 4]. It can also delay patient discharge and is a risk factor for developing chronic pain [5,6,7,8,9]. Improving the efficacy of acute pain management may reduce the incidence of chronic pain, as demonstrated in a postoperative study in which the risk decreased by 10% for every unit of improvement on the 11-point Quality of Acute Postoperative Pain Management Scale [11].

Despite high levels of patient satisfaction with postoperative analgesic regimens, the frequency and severity of pain support the conclusion that postoperative pain is often sub-optimally controlled [1, 26,27,28]. This is consistent with the reported preferences for patient-controlled analgesia [29] and with the results of a recent survey of 296 maternity and orthopedic patients in tertiary care hospitals in Lebanon, in which the perceived timeliness of pain medication receipt was significantly associated with patients’ overall satisfaction with pain management [28]. Notably, fear of adverse reactions was reported by ~ 50% of patients in that study, demonstrating that this remains a common barrier to the use of pain medications [28].

Although opioids including fentanyl, hydromorphone, and morphine were the most commonly prescribed IV analgesic medications in the present survey, results suggest that their clinical utility is limited by the risk of ORAEs. This was particularly the case for high-risk patients, such as those with a history of chronic opioid use, respiratory comorbidities, older age, obesity, or renal impairment. There are also ongoing concerns regarding the abuse potential of opioids, which warrant expert physician knowledge, careful prescribing patterns, and a patient-centric approach to longer-term opioid use [30,31,32,33,34,35]. Despite these clear reservations, our findings are consistent with other studies that have found opioids to be the most prescribed type of medication for the treatment of acute postoperative pain, with up to 95% of surgical patients receiving opioids or opioid combination drugs [1, 26, 30, 36]. These findings from practicing physicians confirm that finding safer analgesic medications that are as efficacious as opioids remains an important unmet need.

Shortcomings of survey studies include low response rates, the possibility that respondents do not accurately recall their past experiences due to the number or diversity of patients they treat, and the possibility that respondents do not fully represent the target sample (in this case, physicians who treat postoperative pain in hospitals). Our study used a convenience (non-randomized) sample of 501 physicians that may not be wholly representative of the intended population. This is a small proportion of all physicians who are involved in surgery and manage postoperative pain. The applicability of our results to all surgeons may be affected by the fact that most respondents were general or bariatric surgeons; however, these physicians are among the most likely to directly manage patients experiencing postoperative pain. Additionally, although invitations were extended to hospitalists, none completed the survey. The responses in our survey were also somewhat restricted by the fixed response options. This was partially overcome by adding extensive choices, ranking questions, and “other” options, but the format did preclude physicians from providing further details or adding unanticipated responses. Furthermore, it was not within the scope of this survey to evaluate the patient’s perspective of post-surgical analgesia, which is an important component of the overall success of analgesia and may highlight other unmet needs.

Conclusions

Despite the limitations outlined above, this study offers some key insights into the experiences, concerns, and challenges of physicians treating acute postoperative pain in the inpatient setting. Specifically, the results show that the incidence of AEs is a key concern for physicians. Although opioids are the most commonly used IV therapy for moderate-to-severe acute postoperative pain, physicians still feel that there is significant room for improvement in the therapeutic and safety profiles of these medications. More medications with fewer side effects, such as nausea, vomiting, and respiratory depression, and the ability to avoid or reduce the incidence of these medication-related AEs, particularly in high-risk patients, were the top unmet needs. Overall, these findings highlight the need for more effective analgesic medications that are associated with a lower risk of AEs.

References

Gan TJ, Habib AS, Miller TE, White W, Apfelbaum JL. Incidence, patient satisfaction, and perceptions of post-surgical pain: results from a US national survey. Curr Med Res Opin. 2014;30:149–60.

Wheeler M, Oderda GM, Ashburn MA, Lipman AG. Adverse events associated with postoperative opioid analgesia: a systematic review. J Pain. 2002;3:159–80.

Wylde V, Rooker J, Halliday L, Blom A. Acute postoperative pain at rest after hip and knee arthroplasty: severity, sensory qualities and impact on sleep. Orthop Traumatol Surg Res. 2011;97:139–44.

Strassels SA, Chen C, Carr DB. Postoperative analgesia: economics, resource use, and patient satisfaction in an urban teaching hospital. Anesth Analg. 2002;94:130–7.

Pavlin DJ, Chen C, Penaloza DA, Polissar NL, Buckley FP. Pain as a factor complicating recovery and discharge after ambulatory surgery. Anesth Analg. 2002;95:627–34.

Sommer M, de Rijke JM, van Kleef M, et al. The prevalence of postoperative pain in a sample of 1490 surgical inpatients. Eur J Anaesthesiol. 2008;25:267–74.

Kehlet H, Jensen TS, Woolf CJ. Persistent postsurgical pain: risk factors and prevention. Lancet. 2006;367:1618–25.

Perkins FM, Kehlet H. Chronic pain as an outcome of surgery. A review of predictive factors. Anesthesiology. 2000;93:1123–33.

Peters ML, Sommer M, de Rijke JM, et al. Somatic and psychologic predictors of long-term unfavorable outcome after surgical intervention. Ann Surg. 2007;245:487–94.

Tasmuth T, Estlander AM, Kalso E. Effect of present pain and mood on the memory of past postoperative pain in women treated surgically for breast cancer. Pain. 1996;68:343–7.

Liu SS, Buvanendran A, Rathmell JP, et al. A cross-sectional survey on prevalence and risk factors for persistent postsurgical pain 1 year after total hip and knee replacement. Reg Anesth Pain Med. 2012;37:415–22.

Chou R, Gordon DB, de Leon-Casasola OA, et al. Management of postoperative pain: a clinical practice guideline from the American Pain Society, the American Society of Regional Anesthesia and Pain Medicine, and the American Society of Anesthesiologists’ Committee on Regional Anesthesia, Executive Committee, and Administrative Council. J Pain. 2016;17:131–57.

Buvanendran A, Kroin JS. Multimodal analgesia for controlling acute postoperative pain. Curr Opin Anaesthesiol. 2009;22:588–93.

Roberts GW, Bekker TB, Carlsen HH, Moffatt CH, Slattery PJ, McClure AF. Postoperative nausea and vomiting are strongly influenced by postoperative opioid use in a dose-related manner. Anesth Analg. 2005;101:1343–8.

Apfel CC, Läärä E, Koivuranta M, Greim CA, Roewer N. A simplified risk score for predicting postoperative nausea and vomiting: conclusions from cross-validations between two centers. Anesthesiology. 1999;91:693–700.

Gan TJ, Diemunsch P, Habib AS, et al. Consensus guidelines for the management of postoperative nausea and vomiting. Anesth Analg. 2014;118:85–113.

Overdyk FJ, Dowling O, Marino J, et al. Association of opioids and sedatives with increased risk of in-hospital cardiopulmonary arrest from an administrative database. PLoS One. 2016;11:e0150214.

Taylor S, Kirton OC, Staff I, Kozol RA. Postoperative day one: a high risk period for respiratory events. Am J Surg. 2005;190:752–6.

Jarzyna D, Jungquist CR, Pasero C, et al. American Society for Pain Management Nursing guidelines on monitoring for opioid-induced sedation and respiratory depression. Pain Manag Nurs. 2011;12:118–45.e10.

Kessler ER, Shah M, Gruschkus SK, Raju A. Cost and quality implications of opioid-based postsurgical pain control using administrative claims data from a large health system: opioid-related adverse events and their impact on clinical and economic outcomes. Pharmacotherapy. 2013;33:383–91.

Oderda GM, Gan TJ, Johnson BH, Robinson SB. Effect of opioid-related adverse events on outcomes in selected surgical patients. J Pain Palliat Care Pharmacother. 2013;27:62–70.

Pizzi LT, Toner R, Foley K, et al. Relationship between potential opioid-related adverse effects and hospital length of stay in patients receiving opioids after orthopedic surgery. Pharmacotherapy. 2012;32:502–14.

Eysenbach G. Improving the quality of web surveys: the Checklist for Reporting Results of Internet E-Surveys (CHERRIES). J Med Internet Res. 2004;6:e34.

Eysenbach G. Correction: Improving the quality of web surveys: the Checklist for Reporting Results of Internet E-Surveys (CHERRIES). J Med Internet Res. 2012;14:e8.

Gregorian RS Jr, Gasik A, Kwong WJ, Voeller S, Kavanagh S. Importance of side effects in opioid treatment: a trade-off analysis with patients and physicians. J Pain. 2010;11:1095–108.

Apfelbaum JL, Chen C, Mehta SS, Gan TJ. Postoperative pain experience: results from a national survey suggest postoperative pain continues to be undermanaged. Anesth Analg. 2003;97:534–40.

Meissner W, Coluzzi F, Fletcher D, et al. Improving the management of post-operative acute pain: priorities for change. Curr Med Res Opin. 2015;31:2131–43.

Ramia E, Nasser SC, Salameh P, Saad AH. Patient perception of acute pain management: data from three tertiary care hospitals. Pain Res Manag. 2017;2017:7459360.

Ballantyne JC, Carr DB, Chalmers TC, Dear KB, Angelillo IF, Mosteller F. Postoperative patient-controlled analgesia: meta-analyses of initial randomized control trials. J Clin Anesth. 1993;5:182–93.

Rodgers J, Cunningham K, Fitzgerald K, Finnerty E. Opioid consumption following outpatient upper extremity surgery. J Hand Surg Am. 2012;37:645–50.

Bates C, Laciak R, Southwick A, Bishoff J. Overprescription of postoperative narcotics: a look at postoperative pain medication delivery, consumption and disposal in urological practice. J Urol. 2011;185:551–5.

Harris K, Curtis J, Larsen B, et al. Opioid pain medication use after dermatologic surgery: a prospective observational study of 212 dermatologic surgery patients. JAMA Dermatol. 2013;149:317–21.

Warner M, Chen LH, Makuc DM. Increase in fatal poisonings involving opioid analgesics in the United States, 1999–2006. NCHS data brief, no 22. Hyattsville, MD: National Center for Health Statistics; 2009. https://www.cdc.gov/nchs/data/databriefs/db22.pdf.

Volkow ND, McLellan AT, Cotto JH, Karithanom M, Weiss SR. Characteristics of opioid prescriptions in 2009. JAMA. 2011;305:1299–301.

Centers for Disease Control and Prevention. Vital signs: overdoses of prescription opioid pain relievers—United States, 1999–2008. MMWR Morb Mortal Wkly Rep. 2011;60:1487–92.

Wunsch H, Wijeysundera DN, Passarella MA, Neuman MD. Opioids prescribed after low-risk surgical procedures in the United States, 2004–2012. JAMA. 2016;315:1654–7.

Acknowledgements

The authors would like to thank the physicians who took part in the survey; Anne Lewis, MS, Project Director at HealthiVibe, LLC, for project management; and Barbara Menzel, of Epstein Health, who helped to design the survey.

Funding

This survey was sponsored by Trevena, Inc., which also funded the journal’s article processing charges.

Medical Writing Assistance

Medical writing support was provided by Jennifer Bodkin, PhD, CMPP, of Engage Scientific Solutions, and was funded by Trevena, Inc.

Authorship

All authors had full access to all of the data in this study and take complete responsibility for the integrity of the data and accuracy of the data analysis. All named authors meet the International Committee of Medical Journal Editors criteria for authorship for this article, take responsibility for the integrity of the work as a whole, and have given their approval for this version to be published.

Disclosures

Sheikh Usman Iqbal and Tehseen Salimi were employees of Trevena, Inc. at the time the research was conducted. Tong Joo Gan, Robert S. Epstein, and Peter G. Whang have served as consultants to Trevena, Inc. Megan L. Leone-Perkins is a consultant to HealthiVibe, LLC, and received compensation for her time and support associated with the research.

Compliance with Ethics Guidelines

This article does not contain any studies with human participants or animals.

Data Availability

The datasets generated during the current study are available from the corresponding author on reasonable request.

Open Access

This article is distributed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

Additional information

Enhanced Digital Features

To view enhanced digital features for this article go to https://doi.org/10.6084/m9.figshare.7172843.

About this article

Cite this article

Gan, T.J., Epstein, R.S., Leone-Perkins, M.L. et al. Practice Patterns and Treatment Challenges in Acute Postoperative Pain Management: A Survey of Practicing Physicians. Pain Ther 7, 205–216 (2018). https://doi.org/10.1007/s40122-018-0106-9

DOI: https://doi.org/10.1007/s40122-018-0106-9