Abstract

Clostridium difficile infection (CDI) is a leading cause of healthcare-associated infections, accounting for significant disease burden and mortality. The clinical spectrum of C. difficile ranges from asymptomatic colonization to toxic megacolon and fulminant colitis. CDI is characterized by new onset of ≥ 3 unformed stools in 24 h and is confirmed by laboratory test for the presence of toxigenic C. difficile. Currently, laboratory tests to diagnose CDI include toxigenic culture, glutamate dehydrogenase (GDH), nucleic acid amplification test (NAAT), and toxins A/B enzyme immunoassay (EIA). The sensitivities of these tests are variable with toxin EIA ranging from 53 to 60% and with NAAT at about 95%. Overall, the specificity is > 90% for these methods. However, the positive predictive value (PPV) depends on the disease prevalence with lower CDI rates associated with lower PPVs.

Notably, the widespread use of the highly sensitive NAAT and its relatively lower clinical specificity have led to overdiagnosis of C. difficile by identifying carriers when NAAT is used as the sole diagnostic method. Overdiagnosis of C. difficile has resulted in unwarranted treatment, possibly contributing to resistance to metronidazole and vancomycin, increased risk for overgrowth of vancomycin-resistant enterococci strains in stool specimens, and increased hospitalization, thereby impacting patient safety and healthcare costs.

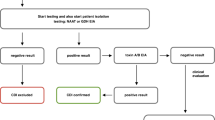

Strategies to optimize the clinical sensitivity and specificity of current laboratory tests are critical to differentiate the clinical CDI from colonization. To achieve high diagnostic yield, if preagreed institutional criteria for stool submission are not used, a multistep approach to CDI diagnosis is recommended, such as either GDH or NAAT followed by toxins A/B EIA in conjunction with laboratory stewardship by evaluating C. difficile test orders for appropriateness and providing feedback. Furthermore, antimicrobial stewardship, along with provider education on appropriate testing for C. difficile, is vital to differentiate CDI from colonization.


C. difficile NAAT testing cannot distinguish between colonization and infection and can result in overdiagnosis and inappropriate treatment, especially when ordered in patients with low pretest probability.

Clinical assessment for CDI is critical to appropriate diagnosis and interpretation of laboratory findings.

Inappropriate treatment of patients colonized with C. difficile without actual clinical infection can increase the risk of multidrug-resistant pathogens such as VRE, recurrent C. difficile, hospital readmissions, and healthcare costs.

We recommend utilizing a multistep testing algorithm to maximize the sensitivity and specificity of available C. difficile tests and avoid the diagnosis of asymptomatic colonizers. Avoid retesting within 7 days of a negative test or as a test of cure after successful treatment.

We recommend involvement of the antimicrobial stewardship programs to provide oversight of antibacterial use and to guide C. difficile testing.

Digital Features

This article is published with digital features, including a summary slide, to facilitate understanding of the article. To view digital features for this article go to https://doi.org/10.6084/m9.figshare.14035649

Introduction

Clostridium difficile infection (CDI) is a significant contributor to the morbidity and mortality of healthcare-associated infections in the USA. According to the Centers for Disease Control and Prevention (CDC), the US burden of CDI is approximately 224,000 infections, contributing up to 13,000 deaths with 1 billion dollars of attributable healthcare costs in 2017 [10]. Relative to the estimate of 272,000 CDI cases in 2015, the disease burden decreased; however, CDI is still the most common healthcare-associated infection [20, 29, 57]. Therefore, accurate diagnosis and prevention of CDI are of paramount importance.

The presentation of C. difficile ranges from asymptomatic colonization through mild, self-limiting diarrhea to fulminant colitis characterized by hypotension, shock, megacolon, or ileus [32]. For C. difficile to cause disease, a person must have sufficient contact with the spores of a toxin-producing strain of C. difficile to permit the pathogen to reside in the host, followed by overgrowth in the colon, most commonly occurring because of alteration of normal colonic microbiota [8]. The fact that asymptomatic C. difficile carriage can occur in 3.4–8.1% of patients upon hospital admission presents a further challenge to CDI diagnosis and emphasizes the importance of clinical correlation before ordering C. difficile diagnostic tests [30, 34, 55]. Exposure to antibacterial agents with a spectrum of activity sparing anaerobic pathogens such as C. difficile is the most critical contributor to gut microbiota alteration and allows for a proliferation of C. difficile [39]. Intravenous antibiotics have been correlated with a two-fold higher risk for antibacterial-associated diarrhea and development of CDI compared to oral antibiotics [21]. Although most antibacterial agents have been associated with an increased risk of CDI, third- or fourth-generation cephalosporins, carbapenems, fluoroquinolones, and clindamycin have been found to pose the highest risk [23, 42]. Two meta-analyses of community-associated CDI identified clindamycin, fluoroquinolones, and cephalosporins as high-risk antibiotics, while macrolides, sulfonamides, and penicillins were assessed as lower-risk antibiotics [7, 13]. Third-generation cephalosporins, clindamycin, second-generation cephalosporins, and fourth-generation cephalosporins had the strongest associations with hospital-acquired CDI [47]. Additionally, the use of fluoroquinolones has been associated with a significant increase in a hypervirulent strain of C. difficile (ribotype 027) that can cause more severe infection [44]. Therefore, antibiotics with high risk for CDI must be used judiciously to minimize the likelihood of antibiotic-associated CDI [3].

Progression from colonization to CDI is diagnosed by the presence of abdominal symptoms, usually watery diarrhea (i.e., ≥ 3 loose stools in 24 h), together with either a stool test positive for C. difficile toxins or detection of toxigenic C. difficile, or colonoscopic or histopathologic findings revealing pseudomembranous colitis [32]. Several diagnostic tools are available for CDI, which vary in sensitivity and specificity (Table 1) [5, 9, 12, 32]. Currently, there is no consensus on the most appropriate laboratory diagnostic method for CDI. Careful consideration of the testing method is critical because detection of C. difficile does not always equate to clinical infection that requires treatment; it may instead reflect colonization, which does not. Variation in the ability of diagnostic tests to distinguish colonization from infection with toxin production, coupled with controversy over the optimal testing methodology, continues to be a barrier to accurate diagnosis of patients with clinical CDI. Furthermore, in a single-center retrospective study, only 19.6% of C. difficile testing was found to be appropriate, with indeterminate and inappropriate testing rates of 65.5% and 14.8%, respectively [26].

In this article, we will evaluate the current status of C. difficile testing, the impact of C. difficile over-testing, and its effect on overdiagnosis of colonization and provide recommendations to improve the detection of clinically significant CDI. This review will discuss implications of testing results on treatment but will not review clinical treatment and management, as practice guidelines for treatment in adults and children are available from the Infectious Diseases Society of America (IDSA) and the Society for Healthcare Epidemiology of America [32]. This article is based on previously conducted studies and does not contain any studies with human participants or animals performed by any of the authors.

Review of C. difficile Diagnostic Tests

The current IDSA Guidelines on C. difficile updated in 2018 recommend testing for CDI for patients with unexplained and new onset of ≥ 3 unformed stools in 24 h [32]. The recommended diagnostic methods depend on the presence or absence of preagreed institutional criteria for patient stool submission, such as not submitting stool specimens from patients receiving laxatives or with other known potential causes of diarrhea and submitting stool specimens only from patients with unexplained and new onset of ≥ 3 unformed stools in 24 h [32]. For instance, if preagreed criteria for stool submission are present, a NAAT alone or a multistep algorithm that includes a stool toxin test is recommended. However, if preagreed criteria for stool submission are not used, a stool toxin test as part of a multistep algorithm rather than NAAT alone is recommended [32]. Historically, the laboratory gold standard for diagnosing C. difficile was toxigenic culture (Table 1) [8]. Toxigenic culture (TC) requires culture of C. difficile from stool, followed by testing of the isolates to determine their ability to produce toxins. Although this process has a high sensitivity of > 95% for detecting C. difficile, its utility is limited by the slow turnaround time of 3–5 days, making it unsuitable for routine diagnostic testing [5, 6]. In addition, toxigenic culture alone is often associated with false-positive results because of the presence of non-toxigenic strains [5]. Based on these limitations, toxigenic culture is typically used as a reference method rather than a diagnostic method.

Prior to 2009, the primary method of laboratory testing for C. difficile was a two-step process of glutamate dehydrogenase (GDH) antigen detection followed by an enzyme immunoassay (EIA) for toxins A and B (Table 1) [8]. A GDH EIA can detect the highly conserved metabolic enzyme present in all C. difficile isolates. However, GDH is present in both toxigenic and non-toxigenic strains of C. difficile. Therefore, while GDH EIAs have a high sensitivity of > 90%, they are unable to differentiate CDI from asymptomatic colonization or the presence of non-toxigenic strains, resulting in a low testing specificity of about 70% [5, 9, 12]. To account for this limitation, a positive GDH EIA can serve as a screening tool and be followed by toxins A/B EIA, which has lower sensitivity (53%–60%) but higher specificity (97%–100%) according to the pooled analysis by Crobach et al. [12]. The reported sensitivity of toxins A/B EIA is variable, and the historical standard of care was to order C. difficile EIA three times; the average sensitivity is about 60% compared with toxigenic culture but higher, at about 83%, compared with the cell cytotoxicity neutralization assay (CCNA) [6, 12]. Together, GDH EIA screening followed by toxins A/B EIA allows for a sensitive, specific, and practical method for diagnosing CDI.

Subsequently, in 2009, the US Food and Drug Administration approved the first nucleic acid amplification test (NAAT) for C. difficile (Table 1) [5, 8, 12]. Amplification of the C. difficile DNA is performed via polymerase chain reaction (PCR), which detects the genes encoding the toxins, tcdA for toxin A gene and tcdB for toxin B gene; however, it cannot distinguish between pathogen presence versus active toxin production or infection. Although the NAAT assay is more expensive than the other methods, it has been widely adopted as the preferred laboratory diagnostic tool because of its high sensitivity of up to 100%, rapid turnaround time, and one-step strategy [12].

After many institutions adopted NAAT as the sole method of diagnosing CDI, hospitals in the US began observing significant increases in C. difficile cases and concomitant increases in anti-C. difficile antibacterial use. Retrospective studies comparing the overall incidence of CDI before and after NAAT implementation found a > 50% increase in the healthcare facility-associated CDI rate [36]. Further analysis of the NAAT method found that while it is highly sensitive in detecting the presence of toxigenic C. difficile, it is not specific enough to differentiate C. difficile colonization from active infection with toxin production. For instance, Polage et al. found that only 44.7% of hospitalized adults with positive C. difficile PCR had toxins detected. Upon further analysis of these patient groups, nearly all CDI-related complications or deaths within 30 days occurred in patients with toxin-positive results. Patients with a positive PCR and a negative toxin test result had outcomes similar to patients without C. difficile infection, raising the concern for overdiagnosis with exclusive PCR use [40]. The negative predictive value of NAAT is remarkably high at > 96% at various CDI prevalence rates, helping clinicians to rule out CDI [12]. However, the positive predictive value varies significantly depending on the disease prevalence. For instance, at a hypothetical CDI prevalence of 5%, the positive predictive value of the NAAT alone is estimated to be as low as 46% [12]. Therefore, the use of NAAT alone, like GDH EIA alone, has the potential to overdiagnose CDI by identifying asymptomatic carriers of C. difficile. Moreover, the NAAT can remain positive in > 50% of patients after completion of appropriate treatment courses for CDI, augmenting the challenge of interpreting results in patients with a prior infection [1]. Because of the imperfect clinical specificity and low positive predictive value of the NAAT, there has been a substantial increase in the diagnosis of C. difficile colonization, leading to several notable implications.
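The dependence of predictive values on prevalence follows directly from Bayes' theorem. The sketch below is illustrative only: the sensitivity of 0.95 echoes the approximate NAAT figure quoted in the abstract, while the clinical specificity of 0.94 is an assumed value chosen so that the result at 5% prevalence lands near the 46% estimate cited above. Neither number is taken from a specific assay, and the function name `predictive_values` is hypothetical.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied at a given disease prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    true_neg = specificity * (1.0 - prevalence)
    false_neg = (1.0 - sensitivity) * prevalence
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Assumed, illustrative test characteristics (not from a specific assay).
ASSUMED_SENSITIVITY = 0.95
ASSUMED_SPECIFICITY = 0.94

for prevalence in (0.05, 0.10, 0.20):
    ppv, npv = predictive_values(ASSUMED_SENSITIVITY, ASSUMED_SPECIFICITY, prevalence)
    print(f"prevalence={prevalence:.0%}  PPV={ppv:.1%}  NPV={npv:.1%}")
```

Under these assumptions the PPV rises steeply as prevalence increases while the NPV stays high throughout, which is why restricting testing to patients with a meaningful pretest probability improves the interpretability of a positive result.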

Impact of Overdiagnosis

The increase in the incidence of CDI is likely multifactorial, stemming from decades of overuse of broad-spectrum antibacterial agents and the emergence of more virulent C. difficile strains. However, the challenges posed by testing methodologies incapable of accurately distinguishing clinical infection with C. difficile from colonization have likely led to overdiagnosis in an era of high-sensitivity molecular testing such as NAAT. Regardless of the cause, increased diagnosis of CDI has led to several noteworthy implications, both clinically and financially.

One of the most concerning consequences of conflating CDI with colonization and subsequent overdiagnosis is the resultant unnecessary use of antibacterial agents for CDI treatment. This has signaled a new era of increasing resistance against commonly used agents for C. difficile. Previously, metronidazole was considered a first-line agent for the treatment of CDI. Before 2000, several randomized controlled trials comparing oral metronidazole to oral vancomycin found no difference in outcomes [52, 54]. Unfortunately, with the rise of CDI cases and treatment, metronidazole has resulted in clinical cure rates lower than those of vancomycin by 13%–20%, with reports of C. difficile resistance to metronidazole [25, 56]. While susceptibility testing of C. difficile against metronidazole or vancomycin is not routinely performed, surveillance data are available. A national survey of C. difficile in Israel found that 18.3% of 208 isolates tested were resistant to metronidazole using the EUCAST breakpoint susceptibility cut-off < 2 mcg/ml [2]. Similarly, a study of an integrated human and swine population in the US reported 13.3% of 271 C. difficile isolates as resistant to metronidazole [38]. Although the surveillance data on C. difficile resistance from outside the US have reported a significant increase in metronidazole resistance, the most recent US surveillance data reported that metronidazole resistance is low at 3.6% and 1.3% for 2011–2012 and 2013–2016, respectively [48, 53]. Due to the emergence of data reporting inferior clinical response of metronidazole against C. difficile relative to vancomycin, the IDSA clinical guidelines no longer recommend metronidazole as a first-line agent for CDI of any severity [32]. With only a few treatment options available for CDI, metronidazole resistance raises significant concern for overall management of CDI and must be monitored closely.

Similarly, there has also been an emergence of vancomycin non-susceptible C. difficile isolates. A ribotype 027 C. difficile strain with reduced susceptibility to vancomycin has been reported in Israel and is the most common clinical strain isolated [2]. The US-based national sentinel surveillance study revealed that when comparing isolates from 1984–2003 to 2011–2012, the minimum inhibitory concentration required to inhibit the growth of 90% of isolates (MIC90) for vancomycin increased from 1 mcg/ml to 4 mcg/ml with a resistance rate of 17.9% using EUCAST breakpoints [48]. These data raised an alarm that C. difficile resistance to vancomycin was emerging. However, the subsequent US-based national surveillance data of nearly 1900 C. difficile isolates from 2013 to 2016 reported a lower vancomycin resistance rate of 6.5% with an MIC90 of 2 mcg/ml [53]. Despite the apparent decrease in vancomycin resistance, the fluctuation of the resistance rate and MIC90 highlights the critical need for testing strategies that clearly distinguish clinically significant infection from colonization with C. difficile.

Another major concern for oral vancomycin use is the acquisition of vancomycin-resistant enterococcus (VRE) colonization and, to a lesser extent, infections. Shay et al. observed a marginal association between oral vancomycin and VRE bloodstream infections with an odds ratio of 3.4 (95% CI 0.9–15) [46]. In contrast, the restriction of oral vancomycin was temporally associated with a decrease in VRE colonization or infection during a period of high intravenous vancomycin use [31]. Furthermore, oral vancomycin therapy was found to promote VRE overgrowth in stool, and new detection of VRE stool colonization was observed in 8–31% of patients [3, 31, 37]. Use of oral vancomycin for the prevention of CDI also carries the potential to increase risk of VRE overgrowth. Although a recent meta-analysis found no significant increase in risk of VRE infection after oral vancomycin prophylaxis, this finding is yet to be validated by prospective blinded randomized controlled trials [4]. Similarly, metronidazole receipt was reported to be independently associated with new or incident VRE colonization after controlling for clinical covariates [33]. VRE colonization has been noted to precede VRE infection. In particular, among immunocompromised patients, including hematopoietic stem cell transplant recipients, not only is VRE colonization hard to eradicate once established, but recolonization also occurs in 29% of patients, further raising the risk of VRE infection in this vulnerable patient population [17, 22, 24]. Given the data linking oral vancomycin and metronidazole to new VRE stool colonization, a focus on diagnostic stewardship and efforts to discontinue therapy in patients without CDI should be prioritized to mitigate the risk of developing VRE colonization or infection.

Due to the reported resistance to metronidazole and vancomycin combined with a concern for recurrence, the IDSA clinical guidelines currently recommend fidaxomicin as one of the first-line treatment options [32]. Based on the selection pressure of antibiotic use, there is also concern that increased use of fidaxomicin may promote a selection of fidaxomicin-resistant C. difficile strains. Nonetheless, the phase 3 fidaxomicin trial and the systematic review assessing > 8000 isolates through 2017 found no resistance to fidaxomicin [19, 45]. Of note, because fidaxomicin has been reported to have activity against VRE, there is potentially lower risk of VRE acquisition, yet it has the potential to promote selection of preexisting subpopulations of VRE with elevated fidaxomicin MICs [37]. Considering the risks for selection of fidaxomicin-resistant C. difficile and VRE strains, it is imperative to employ stewardship efforts to prevent overdiagnosis of C. difficile.

Moreover, the overdiagnosis of CDI has substantial financial implications. Hospital-acquired (HA) CDI has been associated with an increase in hospital length of stay of up to 5 additional days [15]. This is problematic as the treatment of inappropriately diagnosed CDI will increase hospitalization costs and resource utilization. One study estimated that the excess acute care inpatient costs attributable to CDI alone can add up to $4.8 billion annually [50]. According to a retrospective study of patients discharged from California hospitals between 2009 and 2011, 28% of all 30-day readmissions were infection related, of which CDI comprised 4.2% [18]. Furthermore, in a sample of Medicare claims between 2009 and 2011 for hospitalizations with primary or secondary diagnosis of CDI, 13% of patients were readmitted within 90 days with CDI, increasing the overall burden to healthcare systems [41]. Therefore, it would be prudent to avoid overdiagnosis of CDI to prevent unnecessary expense to patients and healthcare systems.

Mitigation Strategies

Several important strategies may be employed to improve appropriate testing of C. difficile and to differentiate colonization from clinical CDI. One of the most essential interventions includes the proper use and interpretation of diagnostic tests for CDI, or diagnostic stewardship. Patients should only be tested for C. difficile if they present with clinical signs and symptoms of actual infection, for example, unexplained and new-onset watery diarrhea (≥ 3 liquid stools within 24 h) without alternate explanations such as laxative use or antibacterial/chemotherapy-induced diarrhea. At present, the recommended diagnostic methods include a 2–3 step algorithm to maximize sensitivity and specificity. Acceptable options include either GDH detection or NAAT followed by toxins A/B EIA. Patients should only be considered for treatment if they have positive toxin EIA results, indicating they have clinical CDI rather than C. difficile colonization. In general, the utilization of NAAT alone is not recommended because of its low positive predictive value especially in the absence of predefined testing criteria [12]. For example, enhancement of electronic medical records combining prescriber attestation for CDI-related symptoms and auto-population of objective C. difficile parameters such as laxative use and previous CDI test can successfully reduce inappropriate testing by > 60% [43]. Dunn et al. recently published a meta-analysis of 11 retrospective non-randomized trials to determine whether the clinical decision support alerts related to CDI diagnostic stewardship are effective in reducing inappropriate testing and CDI rates among hospitalized adult patients [16]. Six of these studies reported a significant decrease in CDI testing volume, and three studies showed an approximately 15% absolute increase in appropriate testing rates ranging from 50 to 98% with the clinical decision support alerts. Most studies showed a significant decrease in the CDI rate, which mirrored the reduction in CDI testing volume. Therefore, electronic alerts and clinical decision tools appear to be valuable in reducing inappropriate CDI testing and overdiagnosis. Prospective trials evaluating additional effects of alerts such as alert fatigue or durability of changes in testing practices would further enhance the understanding of the impact of the alerts and diagnostic stewardship.
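The multistep approach described above (a GDH or NAAT screen followed by toxins A/B EIA) can be sketched as a small decision function. This is an illustration of the reporting logic only, not a validated clinical tool. The names `Interpretation` and `interpret_multistep` and the result labels are hypothetical, and reading a screen-positive, toxin-negative result as possible colonization follows the reasoning of this review rather than any particular laboratory's reporting policy.

```python
from enum import Enum
from typing import Optional


class Interpretation(Enum):
    NOT_TESTED = "testing not indicated"
    NEGATIVE = "C. difficile not detected"
    POSSIBLE_COLONIZATION = "organism detected, toxin negative: possible colonization"
    TOXIN_POSITIVE = "toxin positive: consistent with clinical CDI"


def interpret_multistep(meets_stool_criteria: bool,
                        screen_positive: bool,
                        toxin_eia_positive: Optional[bool] = None) -> Interpretation:
    """Combine a GDH or NAAT screen with a toxins A/B EIA result."""
    # Step 0: only specimens meeting submission criteria are tested at all.
    if not meets_stool_criteria:
        return Interpretation.NOT_TESTED
    # Step 1: a negative screen ends the algorithm.
    if not screen_positive:
        return Interpretation.NEGATIVE
    # Step 2: a positive screen must be followed by a toxin EIA.
    if toxin_eia_positive is None:
        raise ValueError("toxin EIA result is required after a positive screen")
    if toxin_eia_positive:
        return Interpretation.TOXIN_POSITIVE
    return Interpretation.POSSIBLE_COLONIZATION


print(interpret_multistep(True, True, False).value)
```

Keeping the stool-submission check as the first step mirrors the point made in the text: the laboratory result is only interpretable when the clinical criteria for testing were met in the first place.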

Repeat testing for CDI should be avoided within 7 days of a negative result because of low diagnostic yield at about 2%, and patients with successful treatment should not be tested as a test of cure because > 60% of patients may remain positive [32]. Repeat testing within 7 days during the same episode of diarrhea after a negative result is indicated only if clear changes in the character of diarrhea or new supporting clinical evidence of CDI is present [32]. The use of a laxative or stool softeners, oral contrast administration in the previous 48 h, tube-feeding initiation, gastrointestinal bleeding, and other infectious causes of diarrhea must be evaluated first before ordering a C. difficile test. It is critical to recognize that the diagnosis of CDI is a clinical and laboratory diagnosis. In a study of 111 patients tested for C. difficile by EIA, none of the patients with low pretest probability had a positive EIA or developed CDI within 30 days after the initial test [27]. Thus, the C. difficile test should be ordered primarily for those patients with moderate to high pretest probability for CDI.
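The ordering criteria above lend themselves to a simple pre-order check of the kind a clinical decision support alert might run. The following is a minimal sketch under stated assumptions: the class `StoolTestOrder`, its field names, and the function `review_flags` are hypothetical, only a subset of the alternative causes of diarrhea is modeled, and "within 7 days" is interpreted here as fewer than 7 days between the negative result and the new order.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class StoolTestOrder:
    order_date: date
    unformed_stools_24h: int
    recent_laxative_or_stool_softener: bool = False
    oral_contrast_within_48h: bool = False
    last_negative_test: Optional[date] = None
    new_clinical_evidence: bool = False
    is_test_of_cure: bool = False


def review_flags(order: StoolTestOrder) -> List[str]:
    """Return reasons an order should be reviewed before testing (empty if none)."""
    flags = []
    if order.unformed_stools_24h < 3:
        flags.append("fewer than 3 unformed stools in 24 h")
    if order.recent_laxative_or_stool_softener:
        flags.append("laxative or stool softener use")
    if order.oral_contrast_within_48h:
        flags.append("oral contrast in previous 48 h")
    if order.is_test_of_cure:
        flags.append("test of cure not recommended")
    if order.last_negative_test is not None:
        days_since_negative = (order.order_date - order.last_negative_test).days
        # Assumption: "within 7 days" means fewer than 7 days since the negative test.
        if 0 <= days_since_negative < 7 and not order.new_clinical_evidence:
            flags.append("negative test within previous 7 days")
    return flags


order = StoolTestOrder(order_date=date(2021, 3, 10), unformed_stools_24h=2,
                       last_negative_test=date(2021, 3, 7))
print(review_flags(order))
```

Returning a list of reasons rather than a yes/no answer leaves the final decision with the clinician, consistent with the emphasis on CDI being both a clinical and a laboratory diagnosis.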

Likewise, antimicrobial stewardship is one of the most important approaches to prevent CDI. Several strategies can be implemented to minimize the frequency and duration of high-risk antibacterial therapy and the number of antibacterial agents prescribed. To reduce the overall antibacterial exposure, prescribers are encouraged to specify the duration of therapy for antibacterial orders to prevent unnecessarily prolonged therapy. Alternatively, antibacterial orders exceeding a pre-defined length of therapy (e.g., 7 days) should be evaluated by the antimicrobial stewardship program (ASP) to assess the appropriateness of continued treatment. The majority of infections except for endovascular or central nervous system infections have a recommended duration of up to 7 days; hence, interventions aimed at discontinuing antimicrobials at this time would be beneficial [35, 49, 51]. Furthermore, depending on local epidemiology and the C. difficile prevalence, restricting the use of fluoroquinolones, clindamycin, carbapenems, and third- or fourth-generation cephalosporins can be considered to ensure judicious use of broad-spectrum antibacterial agents. For example, diagnostic stewardship consisting of ASP preauthorization with education was reported to have reduced the hospital-wide CDI incidence rate from 8.5 per 10,000 patient days to 6.5 per 10,000 patient days [11]. These recommendations are supported by the IDSA guidelines, which recommend the involvement of ASPs to contain CDI.

Several of these stewardship interventions have been evaluated and linked to significant reductions in the overdiagnosis and treatment of C. difficile. For example, following implementation of several national control policies, the incidence of C. difficile in England has declined by nearly 80% since 2006, with fluoroquinolone restriction identified as the most effective intervention [14]. Similarly, a study in Scotland evaluated the effect on C. difficile infection of restricting the use of high-risk antibiotics including fluoroquinolones, clindamycin, amoxicillin-clavulanate, and cephalosporins [28]. Following a 50% reduction in high-risk antibiotics, C. difficile infection rates declined by an estimated 68%. These studies highlight the importance of antimicrobial stewardship efforts in combination with diagnostic stewardship to reduce the incidence and inappropriate treatment of C. difficile infection.

Conclusion

CDI remains an urgent health threat throughout the nation. Recognition of clinical CDI and distinguishing this entity from asymptomatic carriage or colonization are crucial to achieve the dual goals of reducing CDI rates and the risk for antibacterial-resistant C. difficile strains. Diagnostic and antimicrobial stewardship must be coupled with provider education on appropriate testing for C. difficile; together, they are instrumental in decreasing the incidence of CDI due to broad-spectrum antibacterial use and in reducing the risk of inappropriate treatment of C. difficile colonization.

References

Abujamel T, et al. Defining the vulnerable period for re-establishment of Clostridium difficile colonization after treatment of C. difficile infection with oral vancomycin or metronidazole. PLoS ONE. 2013;8(10):e76269.

Adler A, et al. A national survey of the molecular epidemiology of Clostridium difficile in Israel: the dissemination of the ribotype 027 strain with reduced susceptibility to vancomycin and metronidazole. Diagn Microbiol Infect Dis. 2015;83(1):21–4.

Al-Nassir WN, et al. Both oral metronidazole and oral vancomycin promote persistent overgrowth of vancomycin-resistant enterococci during treatment of Clostridium difficile-associated disease. Antimicrob Agents Chemother. 2008;52(7):2403–6.

Babar S, et al. Oral vancomycin prophylaxis for the prevention of. Infect Control Hosp Epidemiol. 2020;41(11):1302–9.

Bartlett JG. Detection of Clostridium difficile infection. Infect Control Hosp Epidemiol. 2010;31(Suppl 1):S35-37.

Brecher SM, et al. Laboratory diagnosis of Clostridium difficile infections: there is light at the end of the colon. Clin Infect Dis. 2013;57(8):1175–81.

Brown KA, et al. Meta-analysis of antibiotics and the risk of community-associated Clostridium difficile infection. Antimicrob Agents Chemother. 2013;57(5):2326–32.

Burnham CA, Carroll KC. Diagnosis of Clostridium difficile infection: an ongoing conundrum for clinicians and for clinical laboratories. Clin Microbiol Rev. 2013;26(3):604–30.

Carroll KC, Mizusawa M. Laboratory Tests for the Diagnosis of Clostridium difficile. Clin Colon Rectal Surg. 2020;33(2):73–81.

CDC. Antibiotic resistance threats in the United States, 2019. Atlanta, GA: U.S. Department of Health and Human Services, CDC; 2019.

Christensen AB, et al. Diagnostic stewardship of C. difficile testing: a quasi-experimental antimicrobial stewardship study. Infect Control Hosp Epidemiol. 2019;40(3):269–75.

Crobach MJ, et al. European Society of Clinical Microbiology and Infectious Diseases: update of the diagnostic guidance document for Clostridium difficile infection. Clin Microbiol Infect. 2016;22(Suppl 4):S63-81.

Deshpande A, et al. Community-associated Clostridium difficile infection and antibiotics: a meta-analysis. J Antimicrob Chemother. 2013;68(9):1951–61.

Dingle KE, et al. Effects of control interventions on Clostridium difficile infection in England: an observational study. Lancet Infect Dis. 2017;17(4):411–21.

Dubberke ER, Olsen MA. Burden of Clostridium difficile on the healthcare system. Clin Infect Dis. 2012;55(Suppl 2):S88-92.

Dunn AN, et al. The impact of clinical decision support alerts on Clostridioides difficile testing: a systematic review. Clin Infect Dis. 2020.

Ford CD, et al. Vancomycin-resistant enterococcus colonization and bacteremia and hematopoietic stem cell transplantation outcomes. Biol Blood Marrow Transplant. 2017;23(2):340–6.

Gohil SK, et al. Impact of hospital population case-mix, including poverty, on hospital all-cause and infection-related 30-Day readmission rates. Clin Infect Dis. 2015;61(8):1235–43.

Goldstein EJ, et al. Comparative susceptibilities to fidaxomicin (OPT-80) of isolates collected at baseline, recurrence, and failure from patients in two phase III trials of fidaxomicin against Clostridium difficile infection. Antimicrob Agents Chemother. 2011;55(11):5194–9.

Guh AY, et al. Trends in U.S. Burden of. N Engl J Med. 2020;382(14):1320–30.

Haran JP, et al. Factors influencing the development of antibiotic associated diarrhea in ED patients discharged home risk of administering IV antibiotics. Am J Emerg Med. 2014;32(10):1195–9.

Hefazi M, et al. Vancomycin-resistant Enterococcus colonization and bloodstream infection: prevalence, risk factors, and the impact on early outcomes after allogeneic hematopoietic cell transplantation in patients with acute myeloid leukemia. Transpl Infect Dis. 2016;18(6):913–20.

Hensgens MP, et al. Time interval of increased risk for Clostridium difficile infection after exposure to antibiotics. J Antimicrob Chemother. 2012;67(3):742–8.

Hughes HY, et al. A retrospective cohort study of antibiotic exposure and vancomycin-resistant Enterococcus recolonization. Infect Control Hosp Epidemiol. 2019;40(4):414–9.

Johnson S, et al. Vancomycin, metronidazole, or tolevamer for Clostridium difficile infection: results from two multinational, randomized, controlled trials. Clin Infect Dis. 2014;59(3):345–54.

Kelly SG, et al. Inappropriate Clostridium difficile testing and consequent overtreatment and inaccurate publicly reported metrics. Infect Control Hosp Epidemiol. 2016;37(12):1395–400.

Kwon JH, et al. Evaluation of correlation between pretest probability for Clostridium difficile infection and Clostridium difficile enzyme immunoassay results. J Clin Microbiol. 2017;55(2):596–605.

Lawes T, et al. Effect of a national 4C antibiotic stewardship intervention on the clinical and molecular epidemiology of Clostridium difficile infections in a region of Scotland: a non-linear time-series analysis. Lancet Infect Dis. 2017;17(2):194–206.

Lessa FC, et al. Burden of Clostridium difficile infection in the United States. N Engl J Med. 2015;372(24):2369–70.

Longtin Y, et al. Effect of detecting and isolating Clostridium difficile carriers at hospital admission on the incidence of C difficile infections: a quasi-experimental controlled study. JAMA Intern Med. 2016;176(6):796–804.

Luber AD, et al. Relative importance of oral versus intravenous vancomycin exposure in the development of vancomycin-resistant enterococci. J Infect Dis. 1996;173(5):1292–4.

McDonald LC, et al. Clinical practice guidelines for Clostridium difficile infection in adults and children: 2017 update by the Infectious Diseases Society of America (IDSA) and Society for Healthcare Epidemiology of America (SHEA). Clin Infect Dis. 2018;66(7):e1–48.

McKinnell JA, et al. Patient-level analysis of incident vancomycin-resistant enterococci colonization and antibiotic days of therapy. Epidemiol Infect. 2016;144(8):1748–55.

Meltzer E, et al. Universal screening for Clostridioides difficile in a tertiary hospital: risk factors for carriage and clinical disease. Clin Microbiol Infect. 2019;25(9):1127–32.

Metlay JP, et al. Diagnosis and treatment of adults with community-acquired pneumonia. An official clinical practice guideline of the American Thoracic Society and Infectious Diseases Society of America. Am J Respir Crit Care Med. 2019;200(7):e45–67.

Moehring RW, et al. Impact of change to molecular testing for Clostridium difficile infection on healthcare facility-associated incidence rates. Infect Control Hosp Epidemiol. 2013;34(10):1055–61.

Nerandzic MM, et al. Reduced acquisition and overgrowth of vancomycin-resistant enterococci and Candida species in patients treated with fidaxomicin versus vancomycin for Clostridium difficile infection. Clin Infect Dis. 2012;55(Suppl 2):S121-126.

Norman KN, et al. Comparison of antimicrobial susceptibility among Clostridium difficile isolated from an integrated human and swine population in Texas. Foodborne Pathog Dis. 2014;11(4):257–64.

Peng Z, et al. Update on antimicrobial resistance in Clostridium difficile: resistance mechanisms and antimicrobial susceptibility testing. J Clin Microbiol. 2017;55(7):1998–2008.

Polage CR, et al. Overdiagnosis of Clostridium difficile infection in the molecular test era. JAMA Intern Med. 2015;175(11):1792–801.

Psoinos CM, et al. Post-hospitalization treatment regimen and readmission for C. difficile colitis in Medicare beneficiaries. World J Surg. 2018;42(1):246–53.

Pépin J, et al. Emergence of fluoroquinolones as the predominant risk factor for Clostridium difficile-associated diarrhea: a cohort study during an epidemic in Quebec. Clin Infect Dis. 2005;41(9):1254–60.

Quan KA, et al. Reductions in Clostridium difficile infection (CDI) rates using real-time automated clinical criteria verification to enforce appropriate testing. Infect Control Hosp Epidemiol. 2018;39(5):625–7.

Redmond SN, et al. Impact of reduced fluoroquinolone use on. Pathog Immun. 2019;4(2):251–9.

Saha S, et al. Increasing antibiotic resistance in Clostridioides difficile: a systematic review and meta-analysis. Anaerobe. 2019;58:35–46.

Shay DK, et al. Epidemiology and mortality risk of vancomycin-resistant enterococcal bloodstream infections. J Infect Dis. 1995;172(4):993–1000.

Slimings C, Riley TV. Antibiotics and hospital-acquired Clostridium difficile infection: update of systematic review and meta-analysis. J Antimicrob Chemother. 2014;69(4):881–91.

Snydman DR, et al. U.S.-based national sentinel surveillance study for the epidemiology of Clostridium difficile-associated diarrheal isolates and their susceptibility to fidaxomicin. Antimicrob Agents Chemother. 2015;59(10):6437–43.

Solomkin JS, et al. Diagnosis and management of complicated intra-abdominal infection in adults and children: guidelines by the Surgical Infection Society and the Infectious Diseases Society of America. Clin Infect Dis. 2010;50(2):133–64.

Song X, et al. Rising economic impact of Clostridium difficile-associated disease in adult hospitalized patient population. Infect Control Hosp Epidemiol. 2008;29(9):823–8.

Stevens DL, et al. Practice guidelines for the diagnosis and management of skin and soft tissue infections: 2014 update by the Infectious Diseases Society of America. Clin Infect Dis. 2014;59(2):e10-52.

Teasley DG, et al. Prospective randomised trial of metronidazole versus vancomycin for Clostridium-difficile-associated diarrhoea and colitis. Lancet. 1983;2(8358):1043–6.

Thorpe CM, et al. U.S.-based national surveillance for fidaxomicin susceptibility of Clostridioides difficile-associated diarrheal isolates from 2013 to 2016. Antimicrob Agents Chemother. 2019;63(7).

Wenisch C, et al. Comparison of vancomycin, teicoplanin, metronidazole, and fusidic acid for the treatment of Clostridium difficile-associated diarrhea. Clin Infect Dis. 1996;22(5):813–8.

Zacharioudakis IM, et al. Colonization with toxinogenic C. difficile upon hospital admission, and risk of infection: a systematic review and meta-analysis. Am J Gastroenterol. 2015;110(3):381–90.

Zar FA, et al. A comparison of vancomycin and metronidazole for the treatment of Clostridium difficile-associated diarrhea, stratified by disease severity. Clin Infect Dis. 2007;45(3):302–7.

Zhang S, et al. Cost of hospital management of Clostridium difficile infection in United States-a meta-analysis and modelling study. BMC Infect Dis. 2016;16(1):447.

Acknowledgements

Funding

No funding or sponsorship was received for this study or publication of this article.

Authorship

All named authors meet the International Committee of Medical Journal Editors (ICMJE) criteria for authorship for this article, take responsibility for the integrity of the work as a whole, and have given their approval for this version to be published.

Compliance with Ethics Guidelines

This article is based on previously conducted studies and does not contain any studies with human participants or animals performed by any of the authors.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution-NonCommercial 4.0 International License, which permits any non-commercial use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by-nc/4.0/.

About this article

Cite this article

Lee, H.S., Plechot, K., Gohil, S. et al. Clostridium difficile: Diagnosis and the Consequence of Over Diagnosis. Infect Dis Ther 10, 687–697 (2021). https://doi.org/10.1007/s40121-021-00417-7

DOI: https://doi.org/10.1007/s40121-021-00417-7