Abstract

Multicollinearity among predictor variables is a frequent issue in longitudinal data analysis. In this context, this paper proposes a mixed ridge regression model, based on shrinkage methods, to analyze such data. Furthermore, to obtain more efficient estimators, we propose preliminary test and Stein-type estimators that use prior information on the fixed-effects parameters. The model parameters are estimated via the EM algorithm. A simulation study is presented to assess the performance of the estimators under different estimation methods. An application to HIV data is also presented.

Introduction

In a longitudinal data setup, repeated measures of some variables of interest are collected over a specified time period for different independent subjects or individuals. Such data are commonly encountered in medical research, where the responses are subject to various time-dependent and time-constant effects such as pre- and post-treatment types, gender effect and baseline measures, among others (see Mamode Khan et al. [1], Yuan et al. [2], Verbeke et al. [3], Temesgen and Kebede [4], Seyoum et al. [5] and the references therein). In these examples, the repeated measures naturally exhibit some form of dependence that may result from serial or random effects, as outlined by Zeger and Liang [6], Thall and Vail [7], Laird and Ware [8], Sutradhar [9] and Sutradhar and Jowaheer [10]. Thus, the main purpose of longitudinal studies is to estimate the effects of the various parameters and determine their significance, while the estimation of the dependence is treated as secondary. In this context, FitzMaurice and Laird [11] and Sutradhar et al. [12] have proposed various likelihood-based and pseudo-likelihood-based procedures to estimate the regression effects, but the efficiency of the resulting estimators may be questionable, in particular under multi-collinearity among the predictor variables, as considered by Eliot et al. [13], Hossain et al. [14] and Saleh et al. [15].

Since longitudinal data mostly arise from clinical studies, expert knowledge about the parameters has a vital impact on the output and is thus an important component in the estimation of model parameters. Preliminary test and shrinkage techniques are the most commonly used mechanisms by which prior knowledge can be included at the estimation stage (see Ali and Saleh [16], Ahmed and Fallahpour [17], Roozbeh and Arashi [18], Yuzbasi and Ahmed [19], Yuzbasi et al. [20], Asar [21] and the references therein). In this paper, we develop preliminary test and shrinkage estimation methods for the analysis of longitudinal data in the ridge regression context, where some parameters are subject to certain or uncertain restrictions. In this way, we improve the estimation technique in both the mean squared error (MSE) and mean prediction error (MPE) senses.

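We begin with the linear mixed effects (LM) model given by

\[{\mathbf{y}}_i={\mathbf{x}}_i{\varvec{\beta }}+{\mathbf{z}}_i{\mathbf{b}}_i+{\varvec{\varepsilon }}_i, \tag{1}\]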

where \(i=1,\ldots ,n\) indexes the individuals, \({\mathbf{y}}_i=(y_{i1},y_{i2},\ldots ,y_{in_i})^{\mathrm{T}}\) is a vector of \(n_i\) observations for individual i, and \({\mathbf{x}}_i\) is the corresponding \(n_i\times p\) design matrix of fixed-effect covariates. We further assume that \({\mathbf{b}}_i\sim N_q(0,{\mathbf{D}})\) is the vector of person-specific random effects, \({\mathbf{z}}_i\) is the corresponding random-effects design matrix and \({\varvec{\varepsilon }}_i\sim N_{n_i}(0,\sigma ^2{\mathbf{I}}_{n_i\times n_i})\). Let \({\mathbf{Y}}, {\mathbf{X}}\) and \({\mathbf{Z}}\) be appropriately defined matrices representing the concatenation of the corresponding variables over all individuals i. Then, the LM model in matrix notation has the form

\[{\mathbf{Y}}={\varvec{\mu }}+{\varvec{\varepsilon }},\qquad {\varvec{\mu }}={\mathbf{X}}{\varvec{\beta }}+{\mathbf{Z}}{\mathbf{b}}, \tag{2}\]

where \({\varvec{\mu}}\): \(N\times 1\), \({\mathbf{X}}\): \(N\times p\), \({\varvec{\beta}}\): \(p\times 1\), \({\mathbf{Z}}\): \(N\times nq\) and \({\mathbf{b}}\): \(nq\times 1\). Here, \(N=\sum ^n_{i=1}n_i\).

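The log-likelihood function of \({\mathbf{Y}}\) based on this model is given by

\[\ell ({\varvec{\beta }},{\mathbf{D}},\sigma ^2)=-\frac{N}{2}\log (2\pi )-\frac{1}{2}\sum _{i=1}^{n}\log |{\mathbf{V}}_i|-\frac{1}{2}\sum _{i=1}^{n}({\mathbf{y}}_i-{\mathbf{x}}_i{\varvec{\beta }})^{\mathrm{T}}{\mathbf{V}}_i^{-1}({\mathbf{y}}_i-{\mathbf{x}}_i{\varvec{\beta }}), \tag{3}\]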

where \({\mathbf{V}}={\text{Var}}({\mathbf{Y}})={\mathbf{Z}}{\mathbf{D}}{\mathbf{Z}}^{\mathrm{T}}+\sigma ^2{\mathbf{I}}\) and \({\mathbf{V}}_i\) is the variance component corresponding to individual i. Maximizing (3) with respect to the fixed-effects parameter vector, \({\varvec{\beta }}\), in the non-penalized setting is equivalent to minimizing the (generalized) least squares objective function, which gives the estimate of \({\varvec{\beta }}\) as

\[{\hat{{\varvec{\beta }}}}=({\mathbf{X}}^{\mathrm{T}}{\mathbf{V}}^{-1}{\mathbf{X}})^{-1}{\mathbf{X}}^{\mathrm{T}}{\mathbf{V}}^{-1}{\mathbf{Y}}.\]

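Consider a situation in which the multi-collinearity problem is present. The ridge regression approach, designed specifically to handle correlated predictors, introduces a shrinkage penalty k into the least squares criterion and then solves for the value of \({\varvec{\beta }}\) such that

\[{\hat{{\varvec{\beta }}}}_\mathrm{MR}=\mathop {\arg \min }_{{\varvec{\beta }}}\left\{ ({\mathbf{Y}}-{\mathbf{X}}{\varvec{\beta }})^{\mathrm{T}}{\mathbf{V}}^{-1}({\mathbf{Y}}-{\mathbf{X}}{\varvec{\beta }})+k{\varvec{\beta }}^{\mathrm{T}}{\varvec{\beta }}\right\} =({\mathbf{X}}^{\mathrm{T}}{\mathbf{V}}^{-1}{\mathbf{X}}+k{\mathbf{I}}_p)^{-1}{\mathbf{X}}^{\mathrm{T}}{\mathbf{V}}^{-1}{\mathbf{Y}}.\]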
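As a minimal sketch of the difference between the unpenalized and the ridge-penalized fixed-effects estimates, the following Python snippet computes both for a known covariance matrix \({\mathbf{V}}\). The function names, the toy data and the value of k are illustrative assumptions and are not taken from the paper; in practice \({\mathbf{V}}\) is unknown and has to be estimated.

```python
import numpy as np

def gls_estimate(X, y, V):
    """Unpenalized estimate (X' V^-1 X)^-1 X' V^-1 y."""
    Vinv_X = np.linalg.solve(V, X)            # V^-1 X, without forming V^-1
    return np.linalg.solve(X.T @ Vinv_X, Vinv_X.T @ y)

def mixed_ridge_estimate(X, y, V, k):
    """Ridge-penalized estimate (X' V^-1 X + k I)^-1 X' V^-1 y."""
    Vinv_X = np.linalg.solve(V, X)
    A = X.T @ Vinv_X + k * np.eye(X.shape[1])
    return np.linalg.solve(A, Vinv_X.T @ y)

# Toy data (all values hypothetical): 10 subjects with 4 visits each,
# a random intercept with variance 0.6 and unit error variance.
rng = np.random.default_rng(0)
n, n_i, p = 10, 4, 3
X = rng.normal(size=(n * n_i, p))
X[:, 2] = X[:, 1] + 0.05 * rng.normal(size=n * n_i)   # nearly collinear pair
V = np.kron(np.eye(n), 0.6 * np.ones((n_i, n_i)) + np.eye(n_i))
y = X @ np.array([0.5, 1.0, -1.0]) + rng.multivariate_normal(np.zeros(n * n_i), V)

beta_gls = gls_estimate(X, y, V)
beta_mr = mixed_ridge_estimate(X, y, V, k=1.0)
print("GLS estimate:        ", beta_gls)
print("mixed ridge estimate:", beta_mr)
```

For any k > 0 the ridge estimate has a smaller Euclidean norm than the unpenalized one, which is the shrinkage effect that stabilizes the coefficients of the nearly collinear columns.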

The paper is organized as follows. In the “Shrinkage approach” section, the novel estimation approach based on the shrinkage method is proposed, together with some computational aspects and the EM algorithm. The “Simulation study” section provides the Monte Carlo simulation experiments, followed by the section on the application of the new techniques to the HIV data, where a comparative study is also presented. The conclusion is presented in the final section.

Shrinkage approach

In this section, we consider the mixed ridge (MR) model (2) and develop preliminary test and Stein-type estimation of the fixed-effects parameter \({\varvec{\beta }}\) when it is a priori suspected that \({\varvec{\beta }}={\varvec{\beta }}_0\). Usually, such a restriction comes from different sources: (1) a fact known from theoretical or experimental considerations, (2) a hypothesis that may have to be tested or (3) an artificially imposed condition to reduce redundancy in the description of the model. Here, we interpret it as expert knowledge.

Incorporating expert knowledge

In the statistical literature, preliminary test estimation of parameters was introduced by Bancroft [22] to estimate the parameters of a model when it is suspected that some “uncertain prior information” (UPI) on the parameter of interest is available. The method involves a statistical test of the UPI based on an appropriate statistic and a decision on whether the model-based sample estimate or the prior information-based estimate of the model parameters should be taken.

In our case, if we suspect \({\varvec{\beta }}\) to be \({\varvec{\beta }}_{0},\) then \({\varvec{\beta }}_{0}\) is termed the restricted estimator (RE), which must be incorporated in the analysis. Otherwise, \({\hat{{\varvec{\beta }}}}_\mathrm{MR}\) is used. In practice, the prior information that \({\varvec{\beta }}={\varvec{\beta }}_0\) is uncertain. The doubt about this prior information can be removed by testing the following hypothesis:

\[{\mathbf{H}}_{0}:{\varvec{\beta }}={\varvec{\beta }}_0\quad \text{versus}\quad {\mathbf{H}}_{1}:{\varvec{\beta }}\ne {\varvec{\beta }}_0.\]

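Depending on whether \({\mathbf{H}}_{0}\) is accepted or rejected, we choose either \({\varvec{\beta }}_0\) or \({\hat{{\varvec{\beta }}}}_\mathrm{MR}\). Accordingly, we develop the estimator

\[{\hat{{\varvec{\beta }}}}_\mathrm{MR}^{\rm PT}={\left\{ \begin{array}{ll} {\varvec{\beta }}_0, &amp; \text{if } {\mathbf{H}}_{0} \text{ is accepted},\\ {\hat{{\varvec{\beta }}}}_\mathrm{MR}, &amp; \text{if } {\mathbf{H}}_{0} \text{ is rejected}, \end{array}\right. }\]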

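called the preliminary test MR (PTMR) estimator. Using the indicator function, this estimator can be rewritten as

\[{\hat{{\varvec{\beta }}}}_\mathrm{MR}^{\rm PT}={\hat{{\varvec{\beta }}}}_\mathrm{MR}-({\hat{{\varvec{\beta }}}}_\mathrm{MR}-{\varvec{\beta }}_0)\,I(L_n<L_n(\alpha )), \tag{4}\]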

where \(I(A)\) is the indicator function of the set A, \(L_n\) is the Wald statistic given by \(L_n=( {\hat{{\varvec{\beta }}}} - {\varvec{\beta }}_0)^{\mathrm{T}} ({\mathbf{X}}^{\mathrm{T}} {\mathbf{V}}^{-1} {\mathbf{X}}) ( {\hat{{\varvec{\beta }}}} - {\varvec{\beta }}_0)\) and \(L_n(\alpha )\) is the upper \(\alpha \)-level critical value of the \(\chi ^2\)-distribution with p d.f.

The PTMR estimator depends heavily on the level of significance \(\alpha \) and is discrete in nature: according to the outcome of the test, it reduces to one of the two extremes \({\hat{\varvec{\beta }}}_\mathrm{MR}\) and \({\varvec{\beta }}_0\). In this respect, a continuous and \(\alpha \)-free estimator may make more sense.

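Stein-type estimation was introduced by Stein [23] and James and Stein [24] in the statistical literature. It combines the UPI on the parameters of interest with the sample observations from the statistical model. In the context of the LM model, using the same approach as in Saleh et al. [15], the Stein-type estimator of \({\varvec{\beta }}\) has the form

\[{\hat{{\varvec{\beta }}}}_\mathrm{MR}^{S}={\hat{{\varvec{\beta }}}}_\mathrm{MR}-cL_n^{-1}({\hat{{\varvec{\beta }}}}_\mathrm{MR}-{\varvec{\beta }}_0), \tag{5}\]

where c is a shrinkage constant.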

Unlike the PTMR estimator, the Stein-type estimator is a smooth function and independent of the level of significance \(\alpha \).

Notice that the forms of \({\hat{{\varvec{\beta }}}}_\mathrm{MR}^S\) and \({\hat{{\varvec{\beta }}}}_\mathrm{MR}^{\rm PT}\) are similar: Eq. (5) is obtained from Eq. (4) by replacing \(I(L_n < L_n(\alpha ))\) with \(cL_n^{-1}\), which makes it independent of \(\alpha \).
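The two rules can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than the authors' implementation: the function names are hypothetical, the toy design matrix is chosen only to make the arithmetic easy to follow, the critical value 11.0705 is the upper 5% point of the chi-square distribution with 5 d.f., and the choice c = p - 2 is the classical James–Stein constant, which the text does not specify.

```python
import numpy as np

def wald_statistic(beta_hat, beta0, X, V):
    """L_n = (beta_hat - beta0)' (X' V^-1 X) (beta_hat - beta0)."""
    d = beta_hat - beta0
    return float(d @ (X.T @ np.linalg.solve(V, X)) @ d)

def preliminary_test_estimator(beta_mr, beta0, L_n, crit):
    """Return beta0 when the test does not reject (L_n < crit), else beta_mr."""
    indicator = 1.0 if L_n < crit else 0.0
    return beta_mr - (beta_mr - beta0) * indicator

def stein_type_estimator(beta_mr, beta0, L_n, c):
    """Smooth shrinkage of beta_mr toward beta0 with weight c / L_n."""
    return beta_mr - (c / L_n) * (beta_mr - beta0)

# Toy inputs (hypothetical): prior guess beta0 = 0 and a given MR estimate.
beta0 = np.zeros(5)
beta_mr = np.array([0.0, 0.1, 0.2, 0.4, 0.8])
X = 2.0 * np.eye(5)          # gives X' V^-1 X = 4 I for V = I
V = np.eye(5)
CHI2_95_DF5 = 11.0705        # upper 5% point of chi-square with 5 d.f.
c = 5 - 2                    # assumed shrinkage constant p - 2

L_n = wald_statistic(beta_mr, beta0, X, V)       # 4 * 0.85 = 3.4
beta_pt = preliminary_test_estimator(beta_mr, beta0, L_n, CHI2_95_DF5)
beta_s = stein_type_estimator(beta_mr, beta0, L_n, c)
print(L_n, beta_pt, beta_s)
```

Here the test does not reject, so the preliminary test rule jumps all the way to the prior value, whereas the Stein-type rule moves only part of the way toward it. When \(L_n\) is smaller than c the Stein-type weight exceeds one and the estimate overshoots the prior value, which is why positive-part variants are often used in practice.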

Computational aspects

Consider the setting in which the variance parameters \(\varvec{\theta }=({\mathbf{D}},\sigma ^2)\) are unknown. Eliot et al. [13] proposed an extension of the expectation–maximization (EM) algorithm described by Laird and Ware [8] that includes an additional step for estimation of the ridge component. We refine their procedure to evaluate shrinkage estimators as well.
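To make the idea of an EM iteration with a ridge step concrete, the sketch below alternates a ridge-penalized update of \({\varvec{\beta }}\) with the standard Laird–Ware updates of \({\mathbf{D}}\) and \(\sigma ^2\). It is a simplified illustration and not the refined algorithm of this paper or the exact procedure of Eliot et al. [13]: the ridge parameter k is held fixed instead of being re-estimated, the starting values are arbitrary, and the function name and the simulated data are assumptions.

```python
import numpy as np

def em_mixed_ridge(y_list, x_list, z_list, k, n_iter=100):
    """EM-type iterations for y_i = x_i beta + z_i b_i + e_i with fixed ridge penalty k."""
    p, q = x_list[0].shape[1], z_list[0].shape[1]
    n = len(y_list)
    N = sum(len(y) for y in y_list)
    beta, D, sigma2 = np.zeros(p), np.eye(q), 1.0    # arbitrary starting values
    for _ in range(n_iter):
        # Ridge-penalized GLS step for beta given the current (D, sigma2).
        A, rhs, V_inv = k * np.eye(p), np.zeros(p), []
        for y, x, z in zip(y_list, x_list, z_list):
            Vi_inv = np.linalg.inv(z @ D @ z.T + sigma2 * np.eye(len(y)))
            V_inv.append(Vi_inv)
            A += x.T @ Vi_inv @ x
            rhs += x.T @ Vi_inv @ y
        beta = np.linalg.solve(A, rhs)
        # E-step moments of b_i and e_i, then M-step updates of D and sigma2.
        D_new, ss = np.zeros((q, q)), 0.0
        for y, x, z, Vi_inv in zip(y_list, x_list, z_list, V_inv):
            r = y - x @ beta
            b_hat = D @ z.T @ Vi_inv @ r                  # E[b_i | y_i]
            b_var = D - D @ z.T @ Vi_inv @ z @ D          # Var[b_i | y_i]
            D_new += np.outer(b_hat, b_hat) + b_var
            e_hat = r - z @ b_hat                         # E[e_i | y_i]
            # trace of Var[e_i | y_i] = sigma2 * (n_i - sigma2 * tr(V_i^-1))
            ss += e_hat @ e_hat + sigma2 * (len(y) - sigma2 * np.trace(Vi_inv))
        D, sigma2 = D_new / n, ss / N
    return beta, D, sigma2

# Simulated random-intercept data (hypothetical settings).
rng = np.random.default_rng(1)
n, n_i, p = 40, 4, 5
beta_true = np.array([0.0, 0.1, 0.2, 0.4, 0.8])
x_list = [rng.normal(size=(n_i, p)) for _ in range(n)]
z_list = [np.ones((n_i, 1)) for _ in range(n)]
y_list = [x @ beta_true + rng.normal(0.0, np.sqrt(0.6)) + rng.normal(size=n_i)
          for x in x_list]

beta_em, D_em, sigma2_em = em_mixed_ridge(y_list, x_list, z_list, k=0.5)
print(beta_em, D_em, sigma2_em)
```

The resulting `beta_em` plays the role of \({\hat{{\varvec{\beta }}}}_\mathrm{MR}\); a preliminary test or Stein-type estimate could then be formed from it once the variance components have converged.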

Further, for ease of computation, we use the estimate of Hoerl and Kennard [25] for the ridge parameter as the initial value. It is given by

\[{\hat{k}}=\frac{{\hat{\sigma }}^2}{{\hat{\alpha }}^2_{\max }},\]

where \({\hat{\alpha }}_{\max }=\max ({\hat{\alpha }}_{1}, \ldots ,{\hat{\alpha }}_{p})\) in which \({\hat{{\varvec{\alpha }}}}={\varvec{\Gamma }}^{\mathrm{T}} {\hat{{\varvec{\beta }}}}\) and \({\varvec{\Gamma }}\) is the orthogonal matrix of eigenvectors in the spectral decomposition of \({\mathbf{X}}^{\mathrm{T}}{\mathbf{X}}\), i.e., \({\mathbf{X}}^{\mathrm{T}}{\mathbf{X}}={\varvec{\Gamma }} {\varvec{\Lambda }} {\varvec{\Gamma }}^{\mathrm{T}}\), \({\varvec{\Lambda }}={\text{diag}}(\lambda _1, \ldots ,\lambda _p)\), where \(\lambda _i\) is the ith eigenvalue of \({\mathbf{X}}^{\mathrm{T}}{\mathbf{X}}\). In what follows, we propose the refined EM algorithm.
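A small sketch of how such an initial ridge parameter could be computed is given below. It assumes the usual Hoerl–Kennard form, the ratio of the estimated error variance to the largest squared canonical coefficient. The function name and the toy inputs are hypothetical. Because the sign of each eigenvector is arbitrary, the code takes the maximum of the squared coefficients, which does not depend on that sign.

```python
import numpy as np

def hoerl_kennard_k(X, beta_hat, sigma2_hat):
    """Initial ridge parameter sigma2_hat / max_j alpha_hat_j^2."""
    _, Gamma = np.linalg.eigh(X.T @ X)      # columns are eigenvectors of X'X
    alpha_hat = Gamma.T @ beta_hat          # canonical coefficients
    return sigma2_hat / np.max(alpha_hat ** 2)

# Toy inputs (hypothetical): X'X = diag(1, 4, 9), so alpha_hat equals
# beta_hat up to sign and ordering.
X = np.diag([1.0, 2.0, 3.0])
beta_hat = np.array([0.5, -2.0, 1.0])
k0 = hoerl_kennard_k(X, beta_hat, sigma2_hat=2.0)
print(k0)   # 2.0 / 4.0 = 0.5
```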

Simulation study

A Monte Carlo simulation study is conducted to evaluate the performance of the proposed PTMR and shrinkage estimators compared to the MR estimator of Eliot et al. [13]. In our simulation scheme, we fix \(n_i = 4\) measurements for each subject i and generate data according to model (2), where \({\varvec{\beta }} = (0.0,0.1,0.2,0.4,0.8)\), \(b_{ijk} \sim N(0,0.6)\) and \({\varvec{\varepsilon }}_{ijk}\sim N(0,1)\). To generate multicollinear data, using the “EnvStats” package in R, each predictor variable is assumed to arise from N(5, 1), and the correlation \(\rho \) between predictor variables is taken from the set \(\left\{ 0.0, 0.2, 0.5, 0.7, 0.9 \right\} \). Initial values of the variance components are set to the estimates obtained from fitting a mixed model with no ridge component. In total, \(B = 100\) simulations are conducted for each value of \(\rho \), based on \(n = 40\) individuals. We further assume that the expert prior knowledge is \({\varvec{\beta }} =\mathbf{0 }\) for the computation of the PTMR and Stein-type estimators. Simulation results are summarized in Tables 1 and 2. From Table 2, it is clear that the estimation depends on the level of significance \(\alpha \).
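One replication of such a design could be generated along the following lines. This is a sketch in Python with NumPy rather than the R code used in the study, and it relies on several assumptions that the text leaves open: the predictors are drawn jointly from a multivariate normal distribution with common pairwise correlation \(\rho \), the random effect is a single random intercept per subject, and the second arguments of N(0, 0.6) and N(0, 1) are read as variances. The function name is hypothetical.

```python
import numpy as np

def simulate_longitudinal(n=40, n_i=4, rho=0.9,
                          beta=(0.0, 0.1, 0.2, 0.4, 0.8),
                          var_b=0.6, var_e=1.0, seed=0):
    """Generate one dataset with equicorrelated N(5, 1) predictors."""
    rng = np.random.default_rng(seed)
    beta = np.asarray(beta, dtype=float)
    p, N = beta.size, n * n_i
    Sigma = np.full((p, p), rho)
    np.fill_diagonal(Sigma, 1.0)                      # unit variances
    X = rng.multivariate_normal(np.full(p, 5.0), Sigma, size=N)
    subject = np.repeat(np.arange(n), n_i)            # subject label per row
    b = rng.normal(0.0, np.sqrt(var_b), size=n)       # random intercepts
    eps = rng.normal(0.0, np.sqrt(var_e), size=N)
    y = X @ beta + b[subject] + eps
    return X, y, subject

X, y, subject = simulate_longitudinal(rho=0.9)
print(X.shape, y.shape, np.corrcoef(X[:, 0], X[:, 1])[0, 1])
```

Repeating the call with different seeds for each value of \(\rho \) gives the B replications over which the MSE of each estimator can be averaged.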

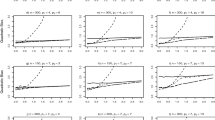

From Table 1, it is apparent that the shrinkage mixed ridge (shrinkage MR) estimator has the smallest MSE and standard error (sd). Hence, the shrinkage MR estimator is the best among all competitors; i.e., it performs better than the mixed (M), MR and shrinkage mixed (shrinkage M) estimators. Knowing this, the preliminary test approach is only applied to the mixed ridge estimator, giving rise to the PTMR estimator. According to the results of Table 2, as the level of significance increases, the MSE increases. The graphs of the MSE against the different values of \(\rho \) are also shown in Fig. 1.

Although the MSE values increase with the level of multi-collinearity, the proposed PTMR estimator has the smallest MSE among all estimators. Further, the PTMR and shrinkage MR estimators perform better than the M and MR estimators in multi-collinear situations.

HIV data analysis

In a framework similar to that of Temesgen and Kebede [4], this section focuses on analyzing HIV data using the linear mixed model. In particular, we analyze the performance of the proposed estimators using the aids dataset from the “JMbayes” package in R. The dataset consists of seven covariates for each of the \(n=467\) patients. The response variable is the CD4 count, and we consider the gender and prevOI variables as the random effects in our study. The data were collected in a randomized clinical trial of didanosine versus zalcitabine in HIV patients, designed to compare the efficacy and safety of the two antiretroviral drugs in treating patients who had failed or were intolerant of zidovudine (AZT) therapy. Table 3 introduces the variables of this study.

The variables have been measured \(n_i\) times for each individual, so we have a longitudinal dataset. Some of the variables in this set, such as \({\text{gender}}\), place the subjects in specific groups, so these variables can be treated as random effects and mixed models can be used to analyze the dataset. However, because the variables are measured several times, a high degree of correlation among the predictors is expected, i.e., the data are multi-collinear. To combat this difficulty, we can use mixed ridge regression (see Eliot et al. [13]). Information on the important variables is summarized in Table 4.

In addition, we use shrinkage methods to increase estimation efficiency. To apply the mixed model of the “Shrinkage approach” section, the log transform was applied to the CD4 counts.

Table 5 shows the mixed, MR, shrinkage M, shrinkage MR and PTMR estimators, respectively, denoted by \({\hat{\varvec{\beta }}}_M\), \({\hat{\varvec{\beta }}}_\mathrm{MR}\), \({\hat{\varvec{\beta }}}_M^S\), \({\hat{\varvec{\beta }}}_\mathrm{MR}^S\) and \({\hat{\varvec{\beta }}}_\mathrm{MR}^{\rm PT}\). From Table 6, it is clear that the drug ddI is more effective in curing the infected cells than ddC. In fact, the rate of improvement through using ddI increases by 63.41% as compared to using ddC. This is consistent with the negative sign of the parameter “obstime”, which indicates that the proliferation of the HIV virus is brought under control as time increases, at an approximate rate of 5.715\(\%\). The negative sign of the “death” parameter also indicates that, using ddC, the rate of deaths and the time to survival improve by 47.83\(\%\) and 8.36\(\%\), respectively. Besides, the standard deviation estimates indicate that these explanatory variables are significant, and hence, based on the above dataset, the drug ddI (didanosine) can be recommended as a remedy.

From the medical point of view as well, ddI has been shown to yield better treatment in controlling the growth of the HIV virus in the human body (see Molina et al. [26]), while the use of the drug ddC (zalcitabine) has been strongly discouraged due to its adverse effects, as discussed in the book “Clinical Neurotoxicology” by Dobbs [27] and in Bilgrami and O’Keefe [28].

To assess the performance of the shrinkage MR estimator, we evaluate the MPE; the smaller, the better. In what follows, we describe the scheme used to compute the MPE. A K-fold cross-validation is used to obtain an estimate of the prediction errors of the model. In a K-fold cross-validation, the dataset is randomly divided into K subsets of roughly equal size. One subset, \(\{({\mathbf{X}}^{\rm test},\mathbf{y }^{\rm test})\}\), termed the test set, is left aside, while the remaining \(K-1\) subsets, called the training set, are used to fit the model. The resulting estimator is denoted by \({\hat{{\varvec{\beta }}}}^{\rm train}\). The fitted model is then used to predict the responses of the test set. Finally, prediction errors are obtained by taking the squared deviation of the observed and predicted values in the test set, i.e.,

\[{\text{PE}}^k=({\mathbf{y}}^{\rm test}_k-{\hat{\mathbf{y}}}^{\rm test}_k)^{\mathrm{T}}({\mathbf{y}}^{\rm test}_k-{\hat{\mathbf{y}}}^{\rm test}_k),\]

where \({\hat{\mathbf{y}}}^{\rm test}_k = {\mathbf{X}}^{\rm test}_k {\hat{{\varvec{\beta }}}}^{\rm train}_k\). The process is repeated for all K subsets, and the prediction errors are combined. To account for the random variation of the cross-validation, the process is repeated N times and the average prediction error is estimated, which is given by

\[{\text{MPE}}=\frac{1}{N}\sum _{i=1}^{N}\sum _{k=1}^{K}{\text{PE}}^k_i,\]

where \({\text{PE}}^k_i\) is the prediction error for the kth test set in the ith iteration. An estimator with a smaller MPE is preferred.
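The cross-validation scheme just described can be sketched as follows. The snippet is only an illustration under explicit assumptions: the prediction errors of the K folds are summed within each repetition and then averaged over the repetitions, an ordinary ridge fit stands in for the mixed ridge estimator, observations are split at random rather than by subject, and all names and data are hypothetical.

```python
import numpy as np

def ridge_fit(X, y, k):
    """Stand-in estimator: ordinary ridge, ignoring the random effects."""
    return np.linalg.solve(X.T @ X + k * np.eye(X.shape[1]), X.T @ y)

def mean_prediction_error(X, y, fit, K=5, n_rep=10, seed=0):
    """Average over n_rep repetitions of the summed K-fold prediction errors."""
    rng = np.random.default_rng(seed)
    total = 0.0
    for _ in range(n_rep):
        folds = np.array_split(rng.permutation(len(y)), K)
        for test_idx in folds:
            train = np.ones(len(y), dtype=bool)
            train[test_idx] = False                   # hold out the k-th fold
            beta_train = fit(X[train], y[train])
            resid = y[test_idx] - X[test_idx] @ beta_train
            total += resid @ resid                    # PE of this fold
    return total / n_rep

# Toy check (hypothetical data): with noise-free responses and no penalty,
# the prediction error is numerically zero, and heavy shrinkage inflates it.
rng = np.random.default_rng(3)
X = rng.normal(size=(60, 3))
y = X @ np.array([1.0, -2.0, 0.5])
mpe_exact = mean_prediction_error(X, y, lambda A, b: ridge_fit(A, b, 0.0))
mpe_heavy = mean_prediction_error(X, y, lambda A, b: ridge_fit(A, b, 1e3))
print(mpe_exact, mpe_heavy)
```

For longitudinal data it is usually preferable to assign whole subjects to folds, so that repeated measures from the same individual do not appear in both the training and the test set; the random split above is kept only for brevity.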

Our results are based on \(N=500\) case-resampled bootstrap samples. In Table 5, we report the estimates and MPE values. Based on the results, the proposed shrinkage MR estimator performs better than the others in the MPE sense. Further, the absolute values of the shrinkage MR estimates are smaller than those of the other estimates. The box plots of the PE are shown in Fig. 2.

From the results in the estimation table, it could be deduced that the didanosine drug provides a better treatment.

Conclusion

In this paper, we developed preliminary test and Stein-type ridge regression estimation in the linear mixed model for longitudinal data analysis. To this end, we considered a penalized likelihood approach and proposed the shrinkage mixed ridge estimator for the vector of regression coefficients. An EM algorithm was also presented to solve the penalized likelihood for the unknown parameters. Simulation studies demonstrated the good performance of the proposed estimator in multicollinear situations compared to the maximum likelihood estimator. In addition, the above model helps to justify the use of didanosine in improving the health status of HIV patients, as stated in various biomedical studies. Hence, such a model and its shrinkage-based estimation are well suited to medical studies of this kind.

References

1. Mamode Khan, N., Jowaheer, V., Sunecher, Y., Bourgignon, M.: Modeling longitudinal INMA(1) with COM–Poisson innovation under non-stationarity: application to medical data. Comput. Appl. Math. 6(17), 1–22 (2018)

2. Yuan, S., Zhang, H.H., Davidian, M.: Variable selection for covariate-adjusted semiparametric inference in randomized clinical trials. Stat. Med. 31, 3789–3804 (2012)

3. Verbeke, G., Fieuws, S., Molenberghs, G., Davidian, M.: The analysis of multivariate longitudinal data: a review. Stat. Methods Med. Res. 23(1), 42–59 (2014)

4. Temesgen, A., Kebede, T.: Joint modeling of longitudinal CD4 count and weight measurements of HIV/tuberculosis co-infected patients at Jimma University specialized hospital. Ann. Data Sci. 3(3), 321–338 (2016)

5. Seyoum, A., Ndlovu, P., Temesgen, Z.: Joint longitudinal data analysis in detecting determinants of CD4 cell count change and adherence to highly active antiretroviral therapy at Felege Hiwot Teaching and Specialized Hospital, Northwest Ethiopia (Amhara Region). AIDS Res. Ther. 14(14), 1–13 (2017)

6. Zeger, S.L., Liang, K.Y.: Longitudinal data analysis for discrete and continuous outcomes. Biometrics 42(1), 121–130 (1986)

7. Thall, P., Vail, S.: Some covariance models for longitudinal count data with overdispersion. Biometrics 46(3), 657–671 (1990)

8. Laird, N., Ware, J.: Random-effects models for longitudinal data. Biometrics 38, 963–974 (1982)

9. Sutradhar, B.C.: An overview on regression models for discrete longitudinal responses. Stat. Sci. 18(3), 377–393 (2003)

10. Sutradhar, B.C., Jowaheer, V.: Analyzing longitudinal count data from adaptive clinical trials: a weighted generalized quasi-likelihood approach. J. Stat. Comput. Simul. 76(12), 1079–1093 (2006)

11. FitzMaurice, G.M., Laird, N.M.: A likelihood-based method for analysing longitudinal binary responses. Biometrika 80(1), 141–151 (1993)

12. Sutradhar, B.C., Jowaheer, V., Rao, P.: Remarks on asymptotic efficient estimation for regression effects in stationary and non-stationary models for panel count data. Braz. J. Prob. Stat. 28(2), 241–254 (2014)

13. Eliot, M., Ferguson, J., Reilly, M.P., Foulkes, A.S.: Ridge regression for longitudinal biomarker data. Biostatistics 7, 1–11 (2011)

14. Hossain, S., Thomson, T., Ahmed, E.: Shrinkage estimation in linear mixed models for longitudinal data. Metrika 81(5), 569–586 (2018)

15. Saleh, A.K.E., Arashi, M., Tabatabaey, S.M.M.: Statistical Inference for Models with Multivariate t-Distributed Errors. Wiley, New Jersey (2014)

16. Ali, A.M., Saleh, A.K.E.: Estimation of the mean vector of a multivariate normal distribution under symmetry. J. Stat. Comput. Simul. 35, 209–226 (1990)

17. Ahmed, S.E., Fallahpour, S.: Shrinkage estimation strategy in quasi-likelihood models. Stat. Probab. Lett. 82, 2170–2179 (2012)

18. Roozbeh, M., Arashi, M.: Shrinkage ridge regression in partial linear models. Commun. Stat. Theory Methods 45(20), 6022–6044 (2016)

19. Yuzbasi, B., Ahmed, S.E.: Shrinkage and penalized estimation in semi-parametric models with multicollinear data. J. Stat. Comput. Simul. 86(17), 3543–3561 (2016)

20. Yuzbasi, B., Ahmed, S.E., Gungor, M.: Improved penalty strategies in linear regression models. REVSTAT Stat. J. 15(2), 251–276 (2017)

21. Asar, Y.: Some new methods to solve multicollinearity in logistic regression. J. Commun. Stat. Simul. Comput. 46(4), 2576–2586 (2017)

22. Bancroft, T.A.: On biases in estimation due to the use of preliminary tests of significance. Ann. Math. Stat. 15, 190–204 (1944)

23. Stein, C.: Inadmissibility of the usual estimator for the mean of a multivariate normal distribution. In: Proceedings of the Third Berkeley Symposium on Mathematical Statistics and Probability, vol. 1, pp. 197–206 (1956)

24. James, W., Stein, C.: Estimation with quadratic loss. In: Proceedings of the Fourth Berkeley Symposium on Mathematical Statistics and Probability, vol. 1, pp. 361–379 (1961)

25. Hoerl, A., Kennard, R.: Ridge regression: applications to nonorthogonal problems. Technometrics 12, 69–82 (1970)

26. Molina, J.M., Marcelin, A.G., Pavie, J., Heripret, L., Boever, C.M.D., Troccaz, M., Leleu, G.: Didanosine in HIV-1-infected patients experiencing failure of antiretroviral therapy: a randomized placebo-controlled trial. J. Infect. Dis. 191(6), 840–847 (2005)

27. Dobbs, M.R.: Clinical Neurotoxicology: Syndromes, Substances, Environments. Elsevier, Amsterdam (2009)

28. Bilgrami, M., O’Keefe, P.: Neurologic diseases in HIV-infected patients. Handb. Clin. Neurol. 121, 1321–1344 (2014)

Acknowledgements

We would like to thank the referees for their constructive comments, which greatly improved the presentation of the paper.

Author information

Contributions

All authors have contributed equally to the entire work, from writing the draft until the last revision.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Rahmani, M., Arashi, M., Mamode Khan, N. et al. Improved mixed model for longitudinal data analysis using shrinkage method. Math Sci 12, 305–312 (2018). https://doi.org/10.1007/s40096-018-0270-4

DOI: https://doi.org/10.1007/s40096-018-0270-4