Abstract

The public has access to a range of mobile applications (apps) for disasters. However, there has been limited academic research conducted on disaster apps and how the public perceives their usability. This study explores end-users’ perceptions of the usability of disaster apps. It proposes a conceptual framework based on insights gathered from thematically analyzing online reviews. The study identifies new usability concerns particular to the use of disaster apps: (1) content relevance depends on the app’s purpose and the proximate significance of the information to the hazard event’s time and location; (2) app dependability affects users’ perceptions of usability due to the life-safety association of disaster apps; (3) users perceive advertisements to contribute to their cognitive load; (4) users expect apps to work efficiently without unnecessary consumption of critical phone resources; (5) an appropriate audio interface can improve usability, as sounds can boost an app’s alerting aspect; and, finally, (6) in-app browsing may enhance users’ impression of the structure of a disaster app. As a result, this study argues for focussed research and development on public-facing disaster apps. Future research should consider the conceptual framework and concerns presented in this study when building design guidelines and theories for disaster apps.

1 Introduction

Populations are increasingly using mobile apps as communication tools to get information during disasters (Reuter and Spielhofer 2017). Apps used by the public during disasters can be either general apps, which are familiar and popular apps (for example, social media apps like Twitter and Facebook), or disaster apps, which are designed specifically for use during crises (Tan et al. 2017). This study investigates the latter. Disaster apps are defined in this study as mobile apps that help members of the public retrieve, understand, and use time- and location-critical information to enhance their decision-making process during a disaster.

Using social media apps has been shown to provide benefits for communication during disasters (Houston et al. 2015). However, social media has limitations: many have raised concerns about distrust due to misinformation, privacy, and the quality and timeliness of information (Schimak et al. 2015; Fallou et al. 2019). Appleby-Arnold et al.’s (2019) study found that the public perceives disaster apps to be more reliable than social media as a communication platform between citizens and authorities.

While disaster apps have the potential to improve the public’s preparedness and response to disasters, the usability of these apps from the perspective of the public as end-users is an understudied topic (Tan et al. 2017). Usability is “the degree to which something is able or fit to be used” (Oxford Dictionary 2019). The importance of usability is acknowledged in the field of safety-critical systems, as a lack of usability can lead to product discontent and compromised safety (Kwee-Meier et al. 2017). Well-designed technological systems can aid users in making informed decisions in times of crisis. However, technologies can also hinder users if they are not designed with the context of their end-users in mind. A disaster app will be of little or no value if a user finds it unusable when interacting with it during a crisis (Appleby-Arnold et al. 2019; Bopp et al. 2019).

The conditions in which disaster apps are used may be different from those of apps for ordinary everyday use. Two contexts differentiate the use of disaster apps. (1) Low frequency of use: users do not expect to use disaster apps often and perceive disasters to be low-likelihood events (Reuter et al. 2017). (2) Acute scenario of use: disaster apps, as designed, will be most useful when there are disaster events. However, when used during a disaster situation, users may find themselves in a stressful environment where their information-processing capabilities may be impaired (Stratmann and Boll 2016). Given these contexts, the study asks the question: What usability concerns are particular to disaster apps? By answering this question, the study contributes to the literature by contextualizing usability for the disaster apps domain, particularly by investigating it from the perspectives of the end-users.

This article is structured as follows. It starts by providing an overview of past studies on disaster apps and usability, and then explains the method employed to acquire insights on usability from disaster app users. The article then presents the modified conceptual framework, highlighting new insights from the user reviews. Next, the article answers the research question by detailing the usability concerns particular to disaster apps. Finally, the article concludes by discussing the relevance and limitations of the study and areas for future research.

2 Disaster Apps and Usability

Studies on disaster apps mostly focus on evaluating operational capabilities with only a few assessing usability (Tan et al. 2017). Several studies (Gómez et al. 2013; Bachmann et al. 2015) have conducted investigations into large numbers of disaster apps available in the markets. These provided insightful typologies and feature-descriptions of the apps, but they did not cover the apps’ usability. Only a few academic studies have investigated the usability of disaster apps from the perspective of the public as end-users.

The few studies that do focus on usability concentrate on one or two use-cases or proofs-of-concept (Estuar et al. 2014; Sarshar et al. 2015). The usability study on the eBayanihan app by Estuar et al. (2014) highlights that designing disaster apps requires both an understanding of the functional requirements as well as of the user. Similarly, the evaluation of the apps GDACSmobile and SmartRescue emphasizes the need to consider human–computer interaction when designing disaster apps and the need for future research to explore various ways to gauge usability (Sarshar et al. 2015). Beyond the studies about technical and functional aspects of the apps, research should look into the use and usability of these apps (Karl et al. 2015; Reuter et al. 2017).

2.1 Usability of Mobile Applications

Mobile application usability theory is anchored in general usability literature, which is heavily based on software and website contexts (Hoehle and Venkatesh 2015). The concepts are primarily defined by the International Organization for Standardization (ISO) and Nielsen (1994) (Coursaris and Kim 2011; Zahra et al. 2017). ISO (1998) describes usability in three parts: effectiveness, efficiency, and satisfaction. Nielsen (1994) identifies five attributes of usability: efficiency, satisfaction, learnability, memorability, and errors.

However, software- or website-based usability models may be insufficient for the context of mobile application use, as issues such as mobility and limited screen size are often neglected (Zhang and Adipat 2005; Harrison et al. 2013). Addressing these mobile app context issues, multiple studies have reconceptualized mobile application usability by combining and extending the usability dimensions from ISO’s and Nielsen’s concepts. Table 1 lists examples of mobile application usability models that have combined and added dimensions to address the context of mobile application use. Although these mobile application usability models (Zhang and Adipat 2005; Coursaris and Kim 2011; Harrison et al. 2013) have consistently used traditional usability dimensions, such as effectiveness, efficiency, and satisfaction, there is no consensus on what constitutes mobile application usability.

At present, standard usability evaluation measurements, such as task completion, error rate, and time, are the most commonly used to measure efficiency and effectiveness as parts of usability (Zhang and Adipat 2005; Balapour and Walton 2017). Although these standard metrics provide useful means to understand the interaction between the user, technology, task, and context, there is a limited understanding of the factors that contribute to the desired result of usability (Balapour and Walton 2017). The current usability models do not sufficiently provide dimensions that will help guide the development of usable mobile application designs and interfaces (Zahra et al. 2017).

A holistic conceptualization of mobile app usability developed by Hoehle and Venkatesh (2015) tries to address the gap in the literature by introducing a model that moves away from the website–software usability stream. Furthermore, their model includes antecedents of usability that can guide the development of apps. The model presents six constructs that represent mobile application usability: app design, app utility, user interface (UI) graphics, UI output, UI input, and UI structure. However, one limitation of Hoehle and Venkatesh's (2015) model is that it has only been applied to social media mobile applications. Further research on their usability conceptualization is encouraged to see whether the model translates to other types of applications, such as disaster apps.

2.2 End-Users’ Perspectives

Disaster management tools can lead to negative consequences if they lack usability, and it is essential to consider the target audience’s perspectives when building interventions for emergency management (Cosgrove 2018). This study considers usability from a “perceived usability” standpoint where the usability of a system is taken from the experience of its users (Hertzum 2010). End-users can provide valuable insights into their behavior of use (McCurdie et al. 2012). Perceived usability has been traditionally solicited from users through questionnaires, interviews, and observations, but it can also be gathered through analyzing user-generated content (Nielsen 1994; Balapour and Walton 2017). For example, interpreting user reviews from online systems can help unfold the experience of the users of real-time applications, especially in capturing aspects that are important to users but might otherwise be missed through solicited means (Gebauer et al. 2008).

3 Method

This study aimed to gain insights into what comprises usability for disaster apps from the perspectives of their end-users. We acquired the end-users’ viewpoint through an app store analysis approach. This study investigates feedback from users of disaster apps that can be found in the two most prominent app markets: the iOS App Store (Apple Inc. 2016) and Google Play (Google 2016). The app stores provide convenient platforms for users to freely convey their experiences through writing reviews (McIlroy et al. 2015). Analyzing large volumes of self-reports, such as user reviews, makes it possible to draw inferences regarding usability that otherwise may not be gathered through structured surveys (Gebauer et al. 2008; Hedegaard and Simonsen 2013). This study analyzed app store data, specifically user reviews, to infer usability aspects that are important to disaster apps users. The app store analysis process involves (1) selecting the appropriate apps; (2) collecting the user reviews from the selected apps; and (3) analyzing the collected data.

3.1 App Selection

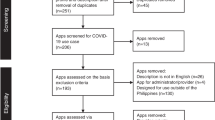

The research process started with selecting the disaster apps available from the iOS App Store and Google Play markets. Our search strategy employed various iterations of keywords. First, the search used the base keywords: “disaster,” “emergency,” and “hazards.” The succeeding searches attached extended words to the base words, such as “management,” “tools,” and “utilities.” Iterations of the base words and extended words were employed such that the combined results from both stores produced a total of 4024 app results (1033 from iOS App Store and 2991 from Google Play). Duplication removal reduced the number to 3003 apps in total. We employed three rounds of inclusion–exclusion criteria to filter relevant apps:

(1) We only considered apps using the English language;

(2) We included apps purposefully designed for the public to use in the context of disaster preparedness and response. We excluded the following categories: games, references and study guides, lifestyle and entertainment, legal services, insurance services, business continuity apps, and radio scanners; and

(3) We excluded tools that can be used during emergencies but do not provide information on the impending or ongoing event. Examples of such apps would be light and sound makers, apps that only provide checklists for emergencies, apps that store vital information on a user’s phone, and apps that allow easy dial access to emergency numbers.

After the exclusions, 353 apps remained. Among these, only a few apps had significant analyzable content. Since the purpose of this study was to gather insights from the users, significant user content was needed. Some apps had fewer than 1000 downloads and these often had no reviews. We observed that the apps that had at least 35 user reviews had a minimum download count of 1000, with most having download counts in the range of 10,000–50,000. For the purpose of this study, we only included apps that had considerable traction with users. Thus, we set a threshold of reviewing apps that had at least 35 user reviews. Fifty-eight apps met this requirement.

Included in these 58 (see Table 2) are apps that provide warnings and information on hazard threats. Some apps cater to specific hazards such as earthquakes (for example, Earthquake Alert), extreme weather (for example, Tornado by American Red Cross), and wildfires (for example, FireReady), while others offer information on multiple hazards (for example, FEMA). Although the 58 apps differ in the scope of the hazards, they share the common purpose of providing the public with information on potential hazards at the onset of, during, and after an event.

3.2 Data Collection

The purpose of this study is to draw qualitative concepts of usability as experienced by the users inferred through their reviews rather than to focus on frequencies or to claim statistical significance. Analysis of user reviews reveals recurrent issues as well as extreme sentiments that provide valuable information on instances where the products perform well or poorly (Hedegaard and Simonsen 2013). Negative comments help identify red flags while positive comments identify good practices in usability.

We scraped the app stores and collected user reviews from the 58 apps. Non-proportional quota sampling of 35 reviews from each of the 58 apps resulted in 2030 reviews. We used non-proportional quota sampling (the quota size is not proportional to the size of the entire set of reviews for a given app) because we wanted the corpus of reviews to represent the sentiments from all the 58 apps adequately. When sampling for data mining, non-proportional strategies become appropriate when the primary concern of the study is not the precision of estimates, but having “enough to assure that we will be able to talk about even small groups in the population” (Gu et al. 2000, p. 11). By using non-proportional sampling, we avoid the bias of reflecting just the reviews from popular apps, and ensure that the sentiments of users from the less popular apps are adequately represented.
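The sampling step can be illustrated with a minimal Python sketch. It assumes that the 35 reviews per app are drawn at random without replacement, which the study does not specify; the function name `quota_sample`, the seed, and the synthetic corpus are hypothetical and serve only to show that a fixed quota gives every app equal weight regardless of how many reviews it has.

```python
import random


def quota_sample(reviews_by_app, quota=35, seed=0):
    """Draw the same number of reviews from every app (non-proportional quota).

    Random selection within each app is an assumption of this sketch.
    """
    rng = random.Random(seed)
    sample = {}
    for app, reviews in reviews_by_app.items():
        if len(reviews) < quota:
            raise ValueError(f"{app} has fewer than {quota} reviews")
        # The quota is fixed, so popular apps do not dominate the corpus.
        sample[app] = rng.sample(reviews, quota)
    return sample


# Hypothetical corpus: 58 apps with very different review counts.
corpus = {
    f"app_{i}": [f"review {j}" for j in range(35 + 10 * i)]
    for i in range(58)
}
sample = quota_sample(corpus)
total = sum(len(reviews) for reviews in sample.values())
print(total)  # 58 apps x 35 reviews = 2030
```

Under a proportional scheme, the largest app in this synthetic corpus would contribute many times more reviews than the smallest; the fixed quota removes that imbalance.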

We appraised the 2030 user reviews to determine whether they contained valuable content. The reviews had an average length of 132 characters and ranged between 1 and 1579 characters. Reviews containing fewer than nine characters were eliminated as they did not contain sufficient content to be analyzed. For example, comments such as “ok,” “good,” or “great” do not provide valuable insights. We also removed reviews of nine characters or more that did not provide useful content, namely remarks of general praise, general complaint, sarcasm, or mockery. In total, we excluded 625 user reviews, resulting in 1405 user reviews with meaningful content.
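The length rule described above can be sketched in a few lines of Python. This is an illustrative sketch only: the helper `has_enough_text` and the sample reviews are hypothetical, stripping surrounding whitespace before counting is an assumption, and the judgment-based exclusions (general praise, complaint, sarcasm, mockery) were made by reading the reviews and are not reproduced here.

```python
MIN_CHARS = 9  # reviews with fewer characters than this were eliminated


def has_enough_text(review, min_chars=MIN_CHARS):
    """Return True when a review is long enough to be considered for coding."""
    # Ignoring leading and trailing whitespace is an assumption of this sketch.
    return len(review.strip()) >= min_chars


# Hypothetical reviews; the first three mirror the examples given in the text.
reviews = ["ok", "good", "great", "Crashes every time I open the map."]
kept = [r for r in reviews if has_enough_text(r)]

# Corpus arithmetic reported in the study: collected minus excluded reviews.
remaining = 2030 - 625
```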

The review system in app markets provides an avenue for users to provide feedback without any predefined structure (Palomba et al. 2015). Users give reviews in app stores in an open-ended format where they can share many commendations or complaints within one entry. The user reviews we found in this study contained one to eight separate comments. To systematize the analysis, we broke each of the reviews into further coding units. The coding units are the various comments contained within user reviews. The 1405 user reviews yielded 2082 comments (coding units) for data analysis.

3.3 Data Analysis

We used thematic analysis to examine the 2082 comments. Thematic analysis is a “method for identifying, analyzing and reporting patterns within data” (Braun and Clarke 2006, p. 80), which begins with familiarization of the data. For this research, we initiated familiarization through the app selection and data collection process described in the preceding sections.

The initial coding of the comments was theoretically driven using Hoehle and Venkatesh's (2015) model. The model was chosen as the basis of initial coding because it provides a holistic picture that captures the context of mobile applications. It includes antecedents for usability that can help guide the development of usable mobile app designs and interfaces. The model defines mobile application usability with six higher level constructs, and each of these constructs is detailed further with respective formative lower level constructs (see Fig. 1). “App design” is formed by branding, data preservation, instant start, and orientation. “App utility” is formed by collaboration, content relevance, and search. “UI graphics” is formed by aesthetic graphics, realism, and subtle animation. “UI input” is formed by control obviousness, de-emphasis on settings, effort minimization, and fingertip size controls. “UI output” is formed by concise language, standardized UI element, and user-centric terminology. “UI structure” is formed by logical path and top-to-bottom structure.

Fig. 1 Mobile app usability conceptualization by Hoehle and Venkatesh (2015)

The initial coding involved categorizing the comments based on the lower level constructs of Hoehle and Venkatesh's (2015) model. Through the coding process, we found comments that did not fall within the existing constructs, and we coded these inductively to form new themes. From this point forward, the term “themes” refers to newly formed concepts and the term “constructs” refers to the items from Hoehle and Venkatesh's (2015) model.

Each succeeding comment analyzed was compared to the existing constructs and newly developed themes. Themes were subsequently developed and refined as more comments were interpreted. After coding the 2082 comments, we looked at the patterns from the constructs and themes. We analyzed and reorganized the groupings to ensure that the higher level constructs reflect a consistent essence. As a result of these steps, we built a conceptual framework for disaster app usability. Figure 2 shows a visual summary of the coding process from the user reviews to yield the conceptual framework.

3.4 Validity

Investigator triangulation was applied, and the entire coding process was documented. The primary coder continuously updated a coding manual. Furthermore, an inter-rater reliability test was conducted. A research assistant independently coded a sample dataset (n = 247) to check the validity of the coding. We calculated Cohen’s kappa to determine the level of agreement between the primary coder and the secondary coder: κ = 0.613 (95% confidence interval: 0.546–0.630), p < 0.0005. A kappa greater than 0.60 indicates substantial agreement between the two coders’ judgements (Landis and Koch 1977).
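For readers unfamiliar with the statistic, the following Python sketch computes Cohen's kappa from two coders' labels using the standard definition, κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e is the agreement expected by chance. It is not the software used in the study, and the four example labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two raters who labelled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty item set")
    n = len(labels_a)
    # Observed agreement: share of items given the same label by both raters.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of the raters' marginal label proportions.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two coders to four comments.
coder_a = ["content relevance", "error-free operation",
           "content relevance", "audio output"]
coder_b = ["content relevance", "error-free operation",
           "audio output", "audio output"]
kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3))  # 0.636
```

In this toy example the coders agree on three of four comments (p_o = 0.75) while chance agreement is p_e = 0.3125, so kappa is 7/11, which would fall just inside the "substantial" band of Landis and Koch (1977).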

4 Modified Usability Framework for Disaster Apps

This study aimed to capture end-user perspectives on the usability of disaster apps through the analysis of comments made in the app store markets. Figure 3 shows the observed frequency counts of comments per construct and theme. All the lower level constructs from Hoehle and Venkatesh’s (2015) model, except for subtle animation and realism, were observed in the comments. Notably, “content relevance” had the largest proportion of observations with 897 comments.

We acknowledge that there were no observations for the constructs “subtle animation” and “realism.” Although unreported issues cannot be interpreted in qualitative data, their absence does not indicate that these constructs are unimportant (Braun and Clarke 2013). Because these constructs are relatable yet subtle by nature, users are less likely to comment on them. “Subtle animation” is about using effects subtly but effectively to aid users in transitions and actions (Hoehle and Venkatesh 2015). The construct “realism,” on the other hand, is about incorporating realistic icons or pictures, such as the rubbish-bin symbolizing the delete action (Hoehle and Venkatesh 2015). We retain these two constructs in the conceptual framework to be further validated in future studies.

More importantly, 21% of the user comments (434 of 2082) are new observations that are not yet clearly captured by the Hoehle and Venkatesh (2015) mobile app usability framework. The observations formed six new lower-level themes.

4.1 Six New Observations Relating to Usability

The following six new themes were each observed in reviews across multiple apps in the set of 58; none was confined to a single app. We found the theme “error-free operation” in 47 of 58 apps and the theme “phone resource usage” in 29 of 58 apps. Even the themes with lower frequency were observed across several apps: “audio output” (18 of 58 apps), “minimal advertisements” (16 of 58 apps), “minimal external links” (11 of 58 apps), and “clean exit” (4 of 58 apps). Below, we discuss the insights gathered from the users’ comments on these new themes.

- Error-free operation: There were 112 comments that discussed the reliability of the app. The comments narrated the users’ experiences of crashes and errors. Some of the users articulated dissatisfaction and lack of trust when the apps exhibited critical errors. There is an expectation of seamless operation for disaster apps to support use, especially when a crisis arises.

- Phone resource usage: Users expect the app to be designed efficiently. Sixty-two comments mentioned the amount of battery power consumed by the app or interference of the app with other essential functionalities of the phone. Given that the apps are designed for use during emergencies, there is an expectation that they should be able to achieve their purpose without using too much battery power or memory space.

- Audio output: Thirty-two comments highlighted the importance of the audio interface, particularly for alerting. The comments paid particular attention to how the notifications sounded. The users commented on the notifications being too loud, too quiet, too frequent, or too similar to sound effects used by other installed apps (for example, text messaging sounds).

- Minimal advertisements: Twenty-seven comments expressed negative views regarding the inclusion of advertisements in the apps. The comments raised users’ concerns about advertisements interfering with the actual use of the app.

- Minimal external links: Seventeen comments emphasized the need to reduce external browsing. Aside from page layout and inter-page navigation, users also remarked on the tendency for an app to direct users to read the content using an external app or browser. Users questioned the app’s usefulness if it does not provide essential information internally and forces the user to seek content elsewhere.

- Clean exit: Many comments described the app’s ability to start instantly, but a few users also commented on how the app exits. Six comments expressed the expectation that the app should close seamlessly without interfering with the phone’s other functions.

Some uncategorized comments (178 observations) did not relate to usability directly. For example, some comments pertained to the costs of purchasing the app, the developer’s response to the reviews, and the apps’ susceptibility to viruses/malware.

4.2 The Conceptual Framework

After identifying the six new themes, we looked at patterns on how the six fit into the existing model. We refined the groupings, developing one higher level theme and integrating the six into the lower level to form the conceptual framework. Figure 4 shows the proposed conceptual framework, highlighting the new themes juxtaposed with the original model.

In this conceptual framework, we propose a new higher level theme, “app dependability.” Patterns from the comments showed that four newly identified themes, along with four existing constructs at the lower level, form two distinct thematic groups: “app dependability” and “app design” (Fig. 5).

We observed that the new themes, “error-free operation” and “clean exit,” correspond with the existing constructs, “instant start” and “data preservation,” to form a thematic group. These four themes relate to users’ distinct perception of operational dependability through a usage lifecycle. Overall, the group covers users’ expectation that the app will start instantly, will preserve data automatically, will function dependably without crashing, and will close without any problems. “App dependability” forms an overarching theme that unites “instant start,” “data preservation,” “error-free operation,” and “clean exit.” “App dependability” is defined as the degree to which a user perceives that an app can operate dependably from start to end of a usage session.

“App design” maintains its definition as “the degree to which a user perceives that a mobile application is generally designed well” (Hoehle and Venkatesh 2015, p. 447). Existing constructs “branding” and “orientation” only had a few mentions but were observed as concerns for users (2 and 10 observations, respectively). However, the comments raised two new distinctive concerns on “app design”: on “minimal advertisements” and “phone resource usage.” Twenty-seven comments challenged the design decision on incorporating advertisements in disaster apps, and 62 comments narrated frustration with an app’s design when users experienced exhaustion of their phone’s resources (for example, battery and memory). Grouping these two new themes with “branding” and “orientation” frames the concept of “app design” for disaster apps. A disaster app’s overall design should consider branding, design for horizontal/vertical orientation, design decisions on including advertisements, and design resolutions affecting phone resource usage.

5 Relevant Usability Concerns

Drawing on insights from the app markets, we have presented a conceptual framework contextualizing usability to the disaster apps domain. In the process, we highlighted end-users’ usability concerns for disaster apps. In this section, we answer the research question: What usability concerns are particular to disaster apps? We discuss six items. First, we look into the construct that had the highest frequency of observations to reveal what “content relevance” means to disaster app users. Second, we discuss the applicability of the new higher-level theme “app dependability” to disaster apps because of life-safety associations. The last four items discuss new themes at the lower level of the framework: “minimal advertisements,” “phone resource usage,” “audio output,” and “minimal external links.” Some quotes from the user reviews are cited to illustrate user opinion. We also align the discussion with existing research on other technologies, drawing on the Web2.0, website, mHealth, and mobile device literature.

5.1 Content Relevance for Disaster App Users

The highest percentage of comments (43% of total) in the review was on content relevance. Hoehle and Venkatesh (2015, p. 447) loosely defined content relevance as “the degree to which a user perceives that the app focusses on the most relevant content.” The reviews provided deeper insights as to what relevance meant for disaster app users, who demand three forms of relevance from their disaster apps:

(1) Spatial proximity: Disaster app users seek information that is most relevant to their location. The comments showed that users expect the apps to reduce information overload by showing content related to their current location or a particular selected location. One comment reads:

Need to be able to set a relevance radius—otherwise information overload. (App# 28, RSOE EDIS Notifier Lite. Date: 27/08/2013)

(2) Temporal proximity: Content should also portray relevance to time. A few of the comments expressed the expectation that disaster apps should provide the most recent information. Some comments voiced dissatisfaction and viewed the apps to be unusable when the information provided was not timely.

The app gives you warnings about the weather in your area, but they’re always 2–3 h late! It’s completely useless. (App#56, Tornado by American Red Cross. Date: 15/06/2015)

(3) Purpose proximity (significance to the app’s purpose): Relevance also means that the app provides content that matches the user’s expectations of the app’s purpose. Comments from the users often described whether they viewed the app capable of fulfilling its primary purpose (for example, alert, information, and so on). Also, the comments provided insights into the form of content users expect (for example, level of detail, the frequency of alert, sound effects, information through images or maps, and so on). One comment expressed this sentiment:

I really like that you can have all the info concerning weather and traffic in one place. Very helpful when travelling. I would like to see the developers put an optional warning system that would pop up when there was a warning in your area. (App#27 ReadyTN. Date: 26/02/2012)

5.2 App Dependability

The conceptual framework shows that app dependability is an essential aspect of how users perceive the usability of disaster apps. This finding matches earlier studies on technologies for disasters. Mills et al.'s (2009) study on Web2.0 and disasters notes that a crash in the system would be critical, prohibiting the use of the technology entirely. When users encounter errors during the usage lifecycle, they can lose confidence that the app can dependably deliver. As we found from the comments in this study, users tend to gain confidence in the app when they do not encounter errors. The following sample comment showcases this sentiment:

App has got better and better over the last year with updates making the app more and more easy to use and adding more and more features. […] Never crashes and does as it says. (App#18, GeoNet. Date: 26/01/2015)

Users also lose confidence when the disaster app does not operate as expected. Improved perception of usability may be a result of avoiding negative experiences, such as crashes (Ding and Chai 2015). The users’ perception of usability is not only influenced by the look and feel of the interface but also by dependability. A comment from a user highlights this:

I think, in principle, this app is a good idea. It needs some solid makeovers though. Nice user interface but crashes after 40 s. That doesn’t sound so useful. (App#56, Tornado by American Red Cross. Date: 25/05/2015)

The finding of “app dependability” as a new theme is important as the consequences of errors may raise life-safety concerns. In mobile health (mHealth) literature, a study raises concerns on the possible harm an app could cause if it had usability problems (Ettinger et al. 2016). For mHealth apps, clinician users must be able to trust that the app will perform reliably to help them with their jobs (Ettinger et al. 2016). Similarly, disaster app users must be able to perceive that the app will operate dependably during the entire duration of use, especially in the context of critical situations.

5.3 Advertisements in Disaster Apps

Past literature on web usability shows that advertisement integration contributes to the overall perception of the site’s usability (Brajnik and Gabrielli 2010; Dou et al. 2010). In web design, and similarly in app design, this involves subtle advertising or strategic product positioning (Dou et al. 2010). However, usability problems can occur due to the presence of advertising. Adverse effects of advertising include reduced usability that affects reading and information seeking tasks (Brajnik and Gabrielli 2010). Users of disaster apps, as observed in the analysis of reviews, can also find advertisements a hindrance to the app’s usability. An extracted comment shows this:

Useful but far too many advertisements are appearing. If they continue, I’ll uninstall. (App#4, Brisbane & Queensland Alert. Date: 9/10/2015)

The possible negative impact of advertisements on users’ information processing capabilities must be considered for disaster apps. Advertisements could contribute to information overload when users are trying to retrieve critical details about a hazard event. Crisis information systems must focus on designing human processes and information systems in an optimal configuration for human cognition (Hiltz et al. 2010). Disaster app design, therefore, should reduce cognitive load so that users can process information quickly when life safety is at stake. Advertisements are counterproductive for disaster apps. The objective, considering life-safety, should be to minimize the use of advertisements so as not to add to users’ cognitive load when receiving information.

5.4 Phone Resource Usage Efficiency

For disaster app usability, it is critical to consider the app’s performance within the smartphone itself. Resources, such as battery life and memory, are necessary for the phone and its apps to work during disasters. In an emergency, the smartphone could be used in a variety of ways aside from the use of the disaster app itself (for example, for making phone calls, SMS messaging, lights and sounds, and so on). The minimum expectation is that the app operates efficiently, without draining so much of the phone’s capacity that the user cannot perform these other functions. Sample user comments from the analysis mention the practicality of the disaster app concerning phone resource use:

Had to uninstall it because it drained my battery—couldn’t make it through a day without charging. (App#38, CodeRED Mobile Alert iOS. Date: 26/01/2014)

I don’t care how good it is—it isn’t worth 6 MB of system memory. (App#5, CodeRED Mobile Alert Android. Date: 5/09/2012)

Unintentional software design decisions can make apps power-hungry and reliant on the operating system for power management (Ferreira et al. 2011). Users take steps to preserve the battery life of their devices, and battery consumption is a critical usability issue for app usage (Ferreira et al. 2011). Other resource limitations to consider for mobile devices are disk space and memory. For a disaster app to be considered usable, it has to operate efficiently in the possible context of use during disasters, and needs to deliver its purpose without draining the phone’s resources. An app that overuses resources could prevent the user from using other smartphone functions during emergencies. Given that disaster apps are likely to be used infrequently, they risk being uninstalled if users perceive them to be too resource hungry.
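One way to read this expectation in practice is that an app's background activity should back off when the phone is low on power and no hazard event is in progress. The sketch below is illustrative only: the thresholds, the intervals, and the function name `polling_interval_seconds` are assumptions made for this example, not values reported in the reviews or in Ferreira et al. (2011).

```python
def polling_interval_seconds(battery_fraction: float, active_event: bool) -> int:
    """Return how long to wait before the next background update.

    battery_fraction: remaining battery, from 0.0 (empty) to 1.0 (full).
    active_event: True when a hazard event affects the user's area.
    All thresholds and intervals below are hypothetical.
    """
    if not 0.0 <= battery_fraction <= 1.0:
        raise ValueError("battery_fraction must be between 0.0 and 1.0")

    low_battery = battery_fraction <= 0.2  # assumed low-power threshold

    if active_event:
        # Stay responsive during an event, but slow down on low battery
        # so the phone remains available for calls and messaging.
        return 300 if low_battery else 60

    # No event in progress: check rarely, and even more rarely on low battery.
    return 3600 if low_battery else 900
```

For instance, `polling_interval_seconds(0.15, active_event=False)` yields an hourly check, which leaves most of the remaining battery for other smartphone functions.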

5.5 Auditory Output for Disaster Apps

UI output refers to “the degree to which a user perceives that the app presents content effectively” (Hoehle and Venkatesh 2015, p. 450). Most of the comments on UI output focussed on the visualization aspect of the apps. However, we found 32 comments that highlighted the importance of the audio interface in disaster apps, particularly for alerting.

Often, users of general apps disable or mute audio as they may not deem it useful (Korhonen et al. 2007). However, for disaster app users, audio UI adds value to the app’s usability. Sounds can act as prompts that draw the user’s attention to an alert for an oncoming, recently occurred, or an ongoing situation. Some comments from the users express the opinion that the notification sound coming from the disaster app should be distinct from other apps’ sounds. For example, one comment indicates that the audio effect should be distinguishable when heard:

It’s missing one critical option: notification sound. Being able to set a different sound for each type of alert would be ideal. You should be able to tell whether that was a tornado warning or if it’s your turn in a game […] without having to look at your phone. (App#2, Alabama SAF-T-Net. Date: 22/05/2013)

On user acceptance of audio notifications, Westermann (2017) affirms that the content of the application dictates the sound settings users anticipate. Users may want the most noticeable sound for thunderstorm alerts and less so for notifications from apps that contain less critical information, such as games.

Consequently, setting the default volume on disaster apps stands out as one of the significant challenges in audio UI. App notifications are designed to make users aware of an event. However, excessiveness can cause disruption or annoyance that could lead users to uninstall an app or to ignore notifications (Felt et al. 2012). The comments on audio UI show that users appreciate high volume audibility when messages contain critical information. However, users can find the app irritating if the sounds are too loud for warnings that are not imminent or significant. One comment expresses this annoyance:

Can’t change the alert sound or volume […] The alert component still seems to run, though; which results in the app waking me up with an annoyingly loud noise in the middle of the night to [only] let me know it’s raining or windy. Pity. Uninstalled. (App#19, Hazards-Red Cross. Date: 28/07/2016)

Audio UI influences the disaster app user experience. The comments expressed the view that for audio UI to positively affect usability, it must be explicitly designed to have a distinctive sound as well as have appropriate volume settings. Users’ volume inclinations are constantly changing, which provides a challenge in setting audio preferences for apps (Rosenthal et al. 2011). The audio interface of disaster apps is an important area for potential future research.
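As an illustration only, the two requirements that emerge from these comments (a distinctive sound per alert type, and a volume that matches the severity of the message while remaining under the user's control) could be sketched as follows. The severity scale, the sound file names, the default volumes, and the function `notification_profile` are all hypothetical and are not drawn from any of the reviewed apps.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Optional


class Severity(IntEnum):
    """Hypothetical severity scale, assumed for this example."""
    ADVISORY = 1  # for example, rain or wind information
    WATCH = 2     # a hazard is possible
    WARNING = 3   # a hazard is imminent or occurring


@dataclass(frozen=True)
class NotificationProfile:
    sound: str     # name of the audio file to play
    volume: float  # 0.0 (silent) to 1.0 (maximum)


# Assumed defaults: each alert type has its own distinguishable sound.
DEFAULT_SOUNDS = {
    "tornado": "tornado_siren.wav",
    "flood": "flood_chime.wav",
    "weather": "soft_ping.wav",
}

# Assumed defaults: volume increases with severity.
DEFAULT_VOLUMES = {
    Severity.ADVISORY: 0.2,
    Severity.WATCH: 0.6,
    Severity.WARNING: 1.0,
}


def notification_profile(
    alert_type: str,
    severity: Severity,
    user_volume: Optional[float] = None,
    quiet_hours: bool = False,
) -> NotificationProfile:
    """Choose a sound and volume for an alert.

    A user-set volume overrides the severity default. During quiet hours,
    anything below WARNING is silenced.
    """
    sound = DEFAULT_SOUNDS.get(alert_type, "generic_alert.wav")
    volume = DEFAULT_VOLUMES[severity] if user_volume is None else user_volume
    if quiet_hours and severity < Severity.WARNING:
        volume = 0.0
    # Clamp to the valid range in case of an out-of-range user setting.
    return NotificationProfile(sound, max(0.0, min(1.0, volume)))
```

Under these assumptions, a night-time rain advisory would be silent, whereas a tornado warning would still sound at full volume with its own siren.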

Aside from audio UI, the use of haptic cues may be an area for further investigation for disaster apps. In this study, none of the user reviews mentioned vibration. However, it is worth noting that in other studies on notifications and disruptions, the auditory modality often goes hand-in-hand with other sensory outputs (Mashhadi et al. 2014). A study by Westermann (2017) shows that users associate audio and vibration cues with critical messages. Furthermore, the use of vibration as sensory cues may influence users to see notifications earlier. The overall usability of an app can be improved by enhancing audio UI, and possibly other sensory UI.

5.6 External Browsing

UI structure is defined as “the degree to which a user perceives that the app is structured effectively” (Hoehle and Venkatesh 2015, p. 450). From the user comments regarding disaster apps, whether or not apps are perceived to be effective structurally mostly depends on the page layout (top-to-bottom structure) and navigation between pages (logical path).

Furthermore, 17 comments emphasized a new theme relating to interface structure: minimal external links. Aside from page layout and inter-page navigation, users also remarked on the tendency for an app to direct users to read content on an external source (that is, through another app or web browser).

Usually, criticism arises when the content of the app is insufficient, prompting users to seek more information. Users want to see information within the app rather than having to find it elsewhere. For example, a user can find an app useless if it diverts them to a different source:

Real time alerts replaced with links to other sites… this is an improvement… seriously? ~ Uninstalling. (App#34, Wildfire—American Red Cross. Date: 21/08/2013)

Some of the comments convey the preference to have access to the supplementary information within the app:

Also, I like the capability to automatically surf over to the USGS web page without leaving the app. […] The app […] takes you to the website and gives the ability to email the page to others. I use this app every day—is tremendous with great potential. (App#49, FloodWatch. Date: 3/05/2011)

Improving usability means that disaster apps should minimize the need to open another app or browser to display content. Recent trends in popular apps, such as Facebook and Twitter, show increased use of in-app browsing, in which the browsing of external content occurs within the app, taking away the need to open a separate browser. For disaster app users, this may provide added value, allowing the app to display more content while staying within the app. It may reduce the stress of opening and relying on a separate browser to deliver pertinent information. In-app browsing has the potential to contribute to usability. It can also help the perceived structure of the user interface. However, further investigation is needed to make this finding conclusive.

6 Discussion and Limitations

Even though the broader field of safety-critical systems sees the topic of usability as an essential area of research, only a small number of academic studies have investigated the usability of disaster apps that are accessible for public use. The contexts in which disaster apps are used differ from those of general apps. Disaster apps may be used less regularly (Reuter et al. 2017) and, when used, the users may be in a high-risk environment (Sarshar et al. 2015). This study contributes to the body of knowledge on usability research by modifying a usability framework so that it captures the contexts of disaster app use.

Insights drawn from the user reviews in the app market revealed new themes, showing that there are particular considerations for disaster apps. For example, the identification of app dependability reflects users’ need to perceive that an app can operate dependably during critical scenarios. This indicates that users have life-safety concerns associated with disaster apps. Users need to perceive that a disaster app can dependably deliver information when the situation arises. Future research should consider the usability of disaster apps with a life-safety lens and develop specific usability guidelines accordingly.

Aside from app dependability, other new insights have been identified, such as users’ expectations regarding content relevance, minimal advertisements, phone resource usage, audio output, and minimal external links. The existence of such new themes shows that there are particular considerations for disaster apps that were not captured in the previous Hoehle and Venkatesh (2015) model. The new insights provide an argument that the approach to the research and development of disaster apps needs to be specialized, and should differ from that for general everyday use apps. Further discourse on disaster app usability is encouraged. The modified conceptual framework proposed in this study can be used as a starting point to build a robust model for disaster app usability and to develop design guidelines and evaluation tools for disaster apps.

We acknowledge that this study is exploratory in nature, as it aimed to gain an understanding of usability through the lens of user reviews from disaster apps. Future research planned by the authors will look to strengthen this conceptual framework through quantitative validation and actual engagement with users. It would also be beneficial to investigate how this conceptual framework can translate into a set of guidelines for developing and evaluating disaster apps.

Moreover, the new themes resulting from this analysis may potentially apply to different typologies of apps outside the disaster context. However, since the insights were drawn from the analysis of reviews from disaster apps, we can only relate the discussion of these concerns to the domain of disaster apps. It would be worth exploring in future studies whether the new usability themes can apply to any other app typologies. We recommend that researchers, designers, and developers of disaster apps consider these emerging insights for future disaster app projects.

7 Conclusion

This study investigated user reviews and identified new usability concerns particular to disaster apps. It defined a new usability factor, app dependability, relating to the life-safety context of disaster apps. It also highlighted users’ opinions of other themes such as the relevance of content and phone resource usage, among others. Researchers, designers, and developers should make an effort to consider the new themes, along with other existing usability constructs, when conceptualizing, building, and evaluating disaster apps.

References

Apple Inc. 2016. The app store. https://www.apple.com/nz/ios/app-store/. Accessed 9 Aug 2019.

Appleby-Arnold, S., N. Brockdorff, L. Fallou, and R. Bossu. 2019. Truth, trust, and civic duty: Cultural factors in citizens’ perceptions of mobile phone apps and social media in disasters. Journal of Contingencies and Crisis Management 27(4): 293–305.

Bachmann, D.J., N.K. Jamison, A. Martin, J. Delgado, and N.E. Kman. 2015. Emergency preparedness and disaster response: There’s an app for that. Prehospital and Disaster Medicine 30(5): 486–490.

Balapour, A., and S.M. Walton. 2017. Usability of apps and websites: A meta-regression study. In Proceedings of the Twenty-third Americas Conference on Information Systems, 10–12 August 2017, Boston, MA, USA, ed. AMCIS 2017 organization, 1–10. Atlanta, GA: AIS.

Bopp, E., J. Douvinet, and D. Serre. 2019. Sorting the good from the bad smartphone applications to alert residents facing disasters—Experiments in France. In Proceedings of the 16th International Conference on Information Systems for Crisis Response and Management (ISCRAM), ed. Z. Franco, J.J. Gonzales, and J.H. Canós, 435–449. València: ISCRAM.

Brajnik, G., and S. Gabrielli. 2010. A review of online advertising effects on the user experience. International Journal of Human-Computer Interaction 26(10): 971–997.

Braun, V., and V. Clarke. 2006. Using thematic analysis in psychology. Qualitative Research in Psychology 3(2): 77–101.

Braun, V., and V. Clarke. 2013. Moving towards analysis. In Successful qualitative research: A practical guide for beginners, ed. V. Braun, and V. Clarke, 173–200. London: SAGE.

Cosgrove, S. 2018. Exploring usability and user-centered design through emergency management websites. Communication Design Quarterly Review 6(2): 93–102.

Coursaris, C., and D. Kim. 2011. A meta-analytical review of empirical mobile usability studies. Journal of Usability Studies 6(3): 117–171.

Ding, Y., and K.H. Chai. 2015. Emotions and continued usage of mobile applications. Industrial Management & Data Systems 115(5): 833–852.

Dou, W., K.H. Lim, C. Su, N. Zhou, and N. Cui. 2010. Brand positioning strategy using search engine marketing. MIS Quarterly 34(2): 261–279.

Estuar, M.R.J., M. De Leon, M.D. Santos, J.O. Ilagan, and B.A. May. 2014. Validating UI through UX in the context of a mobile-web crowdsourcing disaster management application. In Proceedings of 2014 International Conference on IT Convergence and Security (ICITCS), 28–30 October 2014, Beijing, China, ed. D. Lee, F. He, and H. Kim, 1–4. Red Hook, NY: IEEE.

Ettinger, K.M., H. Pharaoh, R.Y. Buckman, H. Conradie, and W. Karlen. 2016. Building quality mHealth for low resource settings. Journal of Medical Engineering & Technology 40(7–8): 431–443.

Fallou, L., L. Petersen, and F. Roussel. 2019. Efficiently allocating safety tips after an earthquake—Lessons learned from the smartphone application LastQuake. In Proceedings of the 16th International Conference on Information Systems for Crisis Response and Management (ISCRAM), ed. Z. Franco, J.J. Gonzales, and J.H. Canós, 263–275. València: ISCRAM.

Felt, A.P., S. Egelman, and D. Wagner. 2012. I’ve got 99 problems, but vibration ain’t one: A survey of smartphone users’ concerns. In Proceedings of the Second ACM Workshop on Security and Privacy in Smartphones and Mobile Device, ed. T. Yu, W. Enck, and X. Jiang, 33–44. Raleigh: ACM.

Ferreira, D., A.K. Dey, and V. Kostakos. 2011. Understanding human-smartphone concerns: A study of battery life. In International Conference on Pervasive Computing, ed. K. Lyons, J. Hightower, and E.M. Huang, 19–33. Berlin, Heidelberg: Springer.

Gebauer, J., Y. Tang, and C. Baimai. 2008. User requirements of mobile technology: Results from a content analysis of user reviews. Information Systems and E-Business Management 6(4): 361–384.

Gómez, D., A.M. Bernardos, J.I. Portillo, P. Tarrío, and J.R. Casar. 2013. A review on mobile applications for citizen emergency management. In Proceedings of International Conference on Practical Applications of Agents and Multi-Agent Systems–PAAMS, ed. J.M. Corchado, J. Bajo, J. Kozlak, P. Pawlewski, J.M. Molina, V. Julian, R.A. Silveira, R. Unland, and S. Giroux, 190–201. Berlin: Springer.

Google. 2016. Google play. https://play.google.com/store/apps?hl=en. Accessed 9 Aug 2019.

Gu, B., F. Hu, and H. Liu. 2000. Sampling and its application in data mining: A survey. Singapore: National University of Singapore.

Harrison, R., D. Flood, and D. Duce. 2013. Usability of mobile applications: Literature review and rationale for a new usability model. Journal of Interaction Science 1: Article 1.

Hedegaard, S., and J.G. Simonsen. 2013. Extracting usability and user experience information from online user reviews. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems—CHI’13, ed. W.E. Mackay, S. Brewster, and S. Bødker, 2089–2098. New York: Association for Computing Machinery.

Hertzum, M. 2010. Images of usability. International Journal of Human-Computer Interaction 26(6): 567–600.

Hiltz, S.R., B.V. de Walle, and M. Turoff. 2010. The domain of emergency management information. In Information systems for emergency management, ed. B.V. de Walle, M. Turoff, and S.R. Hiltz, 3–20. New York: Routledge.

Hoehle, H., and V. Venkatesh. 2015. Mobile application usability: Conceptualization and instrument development. MIS Quarterly 39(2): 435–472.

Houston, J.B., J. Hawthorne, M.F. Perreault, E.H. Park, M.G. Hode, M.R. Halliwell, S.E.T. McGowen, R. Davis, et al. 2015. Social media and disasters: A functional framework for social media use in disaster planning, response, and research. Disasters 39(1): 1–22.

ISO (International Organization for Standardization). 1998. ISO 9241-11:1998 Ergonomic requirements for office work with visual display terminals (VDTs)—Part 11: Guidance on usability. Geneva: ISO.

Karl, I., K. Rother, and S. Nestler. 2015. Crisis-related apps: Assistance for critical and emergency situations. International Journal of Information Systems for Crisis Response and Management 7(2): 19–35.

Korhonen, H., J. Holm, and M. Heikkinen. 2007. Utilizing sound effects in mobile user interface design. In Human-Computer Interaction–INTERACT 2007: Proceedings of the 11th IFIP TC 13 International Conference, Rio de Janeiro, Brazil, 10–14 September 2007, ed. C. Baranauskas, P. Palanque, J. Abascal, S.D.J. Borbosa, 283–296. Berlin: Springer.

Kwee-Meier, S.T., M. Wiessmann, and A. Mertens. 2017. Integrated information visualization and usability of user interfaces for safety-critical contexts. In Lecture notes in computer science, ed. D. Harris, 71–85. Berlin: Springer.

Landis, J.R., and G.G. Koch. 1977. The measurement of observer agreement for categorical data. International Biometric Society 33(1): 159–174.

Mashhadi, A., A. Mathur, and F. Kawsar. 2014. The myth of subtle notifications. In Proceedings of the 2014 ACM International Joint Conference on Pervasive and Ubiquitous Computing: Adjunct publication, ed. A.J. Brush, and A. Friday, 111–114. New York: Association for Computing Machinery.

McCurdie, T., S. Taneva, M. Casselman, M. Yeung, C. Mcdaniel, W. Ho, and J. Cafazzo. 2012. mHealth consumer apps: The case for user-centered design. Biomedical Instrumentation & Technology: Mobile Health 46(s2): 49–56.

McIlroy, S., N. Ali, H. Khalid, and A.E. Hassan. 2015. Analyzing and automatically labelling the types of user issues that are raised in mobile app reviews. Empirical Software Engineering 21(3): 1067–1106.

Mills, A., R. Chen, J. Lee, and H.R. Rao. 2009. Web 2.0 emergency applications: How useful can Twitter be for emergency response? Journal of Information Privacy & Security 5(3): 3–26.

Nielsen, J. 1994. Usability engineering. Amsterdam: Elsevier.

Oxford Dictionary. 2019. Definition of usability in English. Oxford: Oxford University Press.

Palomba, F., M. Linares-Vasquez, G. Bavota, R. Oliveto, M.D. Penta, D. Poshyvanyk, and A. De Lucia. 2015. User reviews matter! Tracking crowdsourced reviews to support evolution of successful apps. In Proceedings of IEEE 31st International Conference on Software Maintenance and Evolution, 29 September–1 October 2015, Bremen, Germany, ed. R. Koschke, J. Krinke, and M. Robillard, 291–300. Bassau: IEEE.

Reuter, C., and T. Spielhofer. 2017. Towards social resilience: A quantitative and qualitative survey on citizens’ perception of social media in emergencies in Europe. Technological Forecasting and Social Change 121: 168–180.

Reuter, C., M.-A. Kaufhold, T. Spielhofer, and A.S. Hahne. 2017. Social media in emergencies: A representative study on citizens’ perception in Germany. PACM on Human-Computer Interaction 1(2): Article 90.

Rosenthal, S., A.K. Dey, and M. Veloso. 2011. Using decision-theoretic experience sampling to build personalized mobile phone interruption models. In Proceedings of the 9th International Conference on Pervasive Computing, 12–15 June 2011, San Francisco, CA, USA, ed. K. Lyons, J. Hightower, and E.M. Huang, 170–187. Berlin: Springer.

Sarshar, P., V. Nunavath, and J. Radianti. 2015. On the usability of smartphone apps in emergencies: An HCI analysis of GDACSmobile and SmartRescue apps. In Human-computer interaction: Interaction technologies, ed. M. Kurosu, 765–774. Cham: Springer.

Schimak, G., D. Havlik, and J. Pielorz. 2015. Crowdsourcing in crisis and disaster management—Challenges and considerations. In Environmental software systems. Infrastructures, services and applications, ed. R. Denzer, R.M. Argent, G. Schimak, and J. Hřebíček, 56–70. Cham: Springer.

Stratmann, T.C., and S. Boll. 2016. Demon hunt—The role of Endsley’s demons of situation awareness in maritime accidents. In Human-centered and error-resilient systems development, ed. C. Bogdan, J. Gulliksen, S. Sauer, P. Forbrig, M. Winckler, C. Johnson, P. Palanque, R. Bernhaupt, and F. Kis, 203–212. Berlin: Springer.

Tan, M.L., R. Prasanna, K. Stock, E. Hudson-Doyle, G. Leonard, and D. Johnston. 2017. Mobile applications in crisis informatics literature: A systematic review. International Journal of Disaster Risk Reduction 24: 297–311.

Westermann, T. 2017. Permission requests and notification settings. In User acceptance of mobile notifications, ed. T. Westermann, 81–102. Singapore: Springer.

Zahra, F., A. Hussain, and H. Mohd. 2017. Usability evaluation of mobile applications; where do we stand? In Proceedings of the 2nd International Conference on Applied Science and Technology, ed. F.A.A. Nifa, K.L. Chong, and A. Hussain, Vol. 1891, Article 020056. Melville, NY: AIP Publishing.

Zhang, D., and B. Adipat. 2005. Challenges, methodologies, and issues in the usability testing of mobile applications. International Journal of Human-Computer Interaction 18(3): 293–308.

Acknowledgements

The authors would like to acknowledge the financial support of Education New Zealand, Massey University–College of Humanities and Social Sciences (CoHSS), and Resilience to Nature’s Challenges (RNC). The authors would like to thank Jessica Geronimo, Thadeus Tayona, and Prince Domer for their assistance to the project.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Tan, M.L., Prasanna, R., Stock, K. et al. Modified Usability Framework for Disaster Apps: A Qualitative Thematic Analysis of User Reviews. Int J Disaster Risk Sci 11, 615–629 (2020). https://doi.org/10.1007/s13753-020-00282-x

DOI: https://doi.org/10.1007/s13753-020-00282-x