Abstract

This paper illustrates how years 1 and 2 students were guided to engage in data modelling and statistical reasoning through interdisciplinary mathematics and science investigations drawn from an Australian 3-year longitudinal study: Interdisciplinary Mathematics and Science Learning (https://imslearning.org/). The project developed learning sequences for 12 inquiry-based investigations involving 35 teachers and cohorts of between 25 and 70 students across years 1 through 6. The research used a design-based methodology to develop, implement, and refine a 4-stage pedagogical cycle based on students’ problem posing, data generation, organisation, interpretation, and reasoning about data. Across the stages of the IMS cycle, students generated increasingly sophisticated representations of data and made decisions about whether these supported their explanations, claims about, and solutions to scientific problems. The teacher’s role in supporting students’ statistical reasoning was analysed across two learning sequences: Ecology in year 1 and Paper Helicopters in year 2 involving the same cohort of students. An explicit focus on data modelling and meta-representational practices enabled the year 1 students to form statistical ideas, such as distribution, sampling, and aggregation, and to construct a range of data representations. In year 2, students engaged in tasks that focused on ordering and aggregating data, measures of central tendency, inferential reasoning, and, in some cases, informal ideas of variability. The study explores how a representation-focused interdisciplinary pedagogy can support the development of data modelling and statistical thinking from an early age.


Interdisciplinary approaches to mathematics and science learning from early childhood through to secondary school typically engage students in inquiry-led investigations that reflect the way these disciplines contribute to problem solving in authentic contexts. Lehrer (2021) maintains that such interdisciplinary work opens up possibilities of knowledge transfer between disciplines as science and mathematics interact, and emphasises disciplinary knowledge as relevant to solving important problems. This approach builds the connected and structured knowledge systems that expert Science, Technology, Engineering, and Mathematics (STEM) practitioners display. Data modelling gives rise to statistical reasoning, integral to interdisciplinary mathematics and science learning (Lehrer & English, 2018; Watson et al., 2022). The foundations of statistics essentially begin with data modelling whereby students pose questions to solve authentic problems, decide what is worth noticing (i.e., identifying attributes of the phenomena), and organise, structure, visualise, and represent data (Lehrer & Schauble, 2012; Makar, 2014, 2016). Statistical reasoning involves posing questions, collecting data, comparing groups, making predictions, representing and making inferences from data, and understanding variability—critical to statistical literacy (Lehrer & English, 2018; Makar, 2014, 2016; Makar & Rubin, 2018; Pfannkuch, 2018; Watson et al., 2018, 2020).

Although the need to preserve the integrity of disciplinary knowledge in mathematics and science is well recognised, insufficient attention has been paid to the central role of data modelling and statistics, particularly for young students. Inquiry-based authentic investigations, often focused on scientific problems, support the development of statistical knowledge and decision-making and are well recognised in research and practice (e.g., English, 2012, 2013; Fielding-Wells, 2018a; Fielding-Wells & Makar, 2015; Fielding & Makar, 2022; Leavy & Hourigan, 2018; Watson et al., 2018). Such studies with young students have illustrated their engagement in data exploration through problem posing, categorising and ordering data, and representational forms such as pictorial icons, tallies, simple tables, and self-constructed data displays (Chick, 2003; English, 2012; Estrella, 2018; Frischemeier, 2018; Mulligan, 2015; Suh et al., 2021). Moreover, young students can be engaged in the practice of statistics where they develop, through experience, concepts such as distribution, sampling and aggregation, and predictive and inferential reasoning (Ben-Zvi & Sharett-Amir, 2005; diSessa, 2004; Makar & Rubin, 2018; Oslington et al., 2020). By engaging in meta-representational practices, students can be guided to explore the interrelationships between these practices which support their conceptual knowledge and statistical reasoning.

However, such statistical practices are not necessarily integrated with or prioritised in the teaching and learning of mathematics or science or other STEM initiatives, particularly in the early years. One explanation is that statistics is considered developmentally inappropriate or unnecessary, with limited reference in primary mathematics or science curricula (Australian Curriculum, Assessment and Reporting Authority [ACARA], 2022). Teachers also face many challenges in the practical implementation of investigations and in accessing the statistical content knowledge required to support students’ learning (Callingham & Watson, 2011; Fielding-Wells, 2018a). Studies that focus on how teachers engage and support young students’ statistical meaning-making through interdisciplinary investigations can provide new insights into the effectiveness and appropriateness of particular pedagogies and models (e.g., Leavy, 2008; Lehrer, 2009; Wild & Pfannkuch, 1999). Few longitudinal studies have focused on the collaboration of researchers, teachers, and students to plan and engage in pedagogical cycles or ‘moves’ that centre on the interdisciplinary nature of data modelling leading to statistical inquiry. Furthermore, ascertaining the effectiveness and efficacy of designing tasks and relevant problems across the primary school, adopting an interdisciplinary approach, can contribute to a more coherent view about the critical role of data modelling and statistical reasoning in mathematical and scientific learning. We assert that teacher-guided interrelated scientific and mathematical inquiry can contextualise and support the emergence of early statistical ideas. These foundational experiences can contribute to the development of statistical literacy and critical thinking (Lehrer & English, 2018; Pfannkuch, 2018; Watson et al., 2020).

The IMS project was conceptualised and designed to implement an interdisciplinary pedagogical approach with a range of authentic problems across years 1 through 6. A pedagogical model underpinning IMS focused on the invention, evaluation, refinement, and coordination of representational systems in both science and mathematics (Tytler et al., 2022). Data modelling and statistical reasoning were integral to the development and implementation of learning sequences. In this paper, we investigate a key research question:

How does teacher implementation of the IMS pedagogical cycle support the development and application of statistical reasoning through interdisciplinary mathematical and scientific investigations?

In the context of the IMS longitudinal study, this paper addresses our question with a sample of 32 year 1 students followed through to year 2, illustrated by two investigations, Ecology and Paper Helicopters, respectively.

Background literature

Research on how statistical reasoning can be developed in early childhood and in the primary school has gained momentum, given growing evidence that students can acquire and apply statistical concepts through inquiry-based investigations. Such investigations are common to mathematics and science classrooms (Fielding & Makar, 2022; Lehrer, 2021; Mulligan et al., 2022; Tytler et al., 2021; Watson et al., 2020). They include data modelling (Chick, 2003; English, 2012, 2013; Watson et al., 2018), ideas about sampling (Lehrer & Schauble, 2017), distribution (Ben-Zvi & Sharett-Amir, 2005; diSessa, 2004), and variability (Chick et al., 2018; Lehrer & English, 2018; Watson et al., 2022). Other studies highlight students’ ability to develop emergent inferential practices (Makar & Fielding-Wells, 2011; Makar & Rubin, 2018), predictive reasoning (Kinnear & Clark, 2014; Oslington et al., 2020), and meta-representational competence (Estrella, 2018; Leavy & Hourigan, 2018; MacGillivray & Pereira-Mendoza, 2011). Student-constructed representations are well recognised as central to mathematical problem solving. Studies focused on data modelling with young students have described the development of statistical thinking in constructing and interpreting graphs from data collected, for example, in investigations of ice melting, growth of plants, and measures of change in the growth pattern of chickens (Mulligan, 2015). Watson and Moritz (2001), English (2012), and Kinnear (2018) also describe predictive strategies used by young students, which included seeking missing or unused numbers. Picture books have been used as a stimulus for data modelling (English, 2012; Kinnear, 2018; Leavy, 2008; Leavy & Hourigan, 2018), and other studies have, for example, investigated students’ eye colour (Makar, 2018) or shoe size (Fielding-Wells, 2018a; Makar, 2016).

In studies of statistical reasoning, students as young as year 3 have been found to use predictive reasoning to interpret the aggregate properties and variability of a ‘real-world’ data set comprising monthly maximum temperatures over time (Oslington et al., 2020; in press). Other studies indicate that year 3 students can be guided to develop a concept of average which included middle or representative values as well as outliers, the construction of a reference population, and to consider variability between samples (Makar, 2014; Makar & Rubin, 2018). Young students’ informal understanding of variation has also been found in other studies (Chick et al., 2018). Studies with older students, for example, year 5, used sampling to infer aggregate properties of a population (Aridor & Ben-Zvi, 2017), and in another study, students used generalised models to compare two data sets (Doerr et al., 2017).

Meta-representational competence

The construction of student representations is particularly productive for interpreting data because it necessitates the visualisation of distributions and allows relationships between variables to be observed, analysed, and revised. Different types of representation can prompt alternative interpretations of a data set (Gattuso & Ottaviani, 2011). Transnumeration, in turn, is the process of forming and refining a data representation to better understand the data (Wild & Pfannkuch, 1999). It is a critical process necessary for young students to engage in informal inferential reasoning and making predictions (Makar, 2014, 2016), data tracking (Leavy & Hourigan, 2018; Makar, 2018), and the separation of qualitative and quantitative variables (Estrella, 2018). An understanding of graphing conventions supports the development of structural components such as collinearity, equal spacing, data sequencing, and coordination of bivariate data (Mulligan, 2015; Tytler et al., 2022). With this development, students can also engage in the organisation of other transnumerative steps that may precede graphing, including collating data frequencies, calculating means, or constructing data tables (Chick, 2003).

In practising how to visualise, experiment with, and analyse their own data representations, students can be guided to learn about the purposes, suitability, limitations, and effective affordances of different disciplinary representations, such as the use of diagrams in science and timelines in mathematics. To learn to model data successfully in either subject, students need to reason about how these representations are organised and interpreted. In this way, reasoning and meta-representational competence are mutually necessary as students are taught disciplinary practices and come to understand the bases for key concepts. Student-constructed representations can in turn support the development of inferential reasoning when students interpret further what they have represented (Lehrer, 2009; Makar, 2014; Mulligan, 2015; Oslington et al., 2020; Prain & Tytler, 2012, 2021).

Progression in statistical reasoning

Teacher planning for, and assessment of, student development of meta-representational competence and statistical reasoning through engaging with authentic, interdisciplinary problems has given rise to the notion of developmental models or constructs proposed as ‘progressions’ or ‘stages’ of growth. Konold et al. (2015) describe statistical understanding as a ‘loose hierarchy’, moving from a focus on observations unrelated to data towards a rich and multi-faceted concept of data including noticing the range in data values, aggregate properties, modal clumps, and variability. These fundamentally different ways in which students may perceive data are described as four distinct ‘lenses’, as follows:

1. Pointer: The student makes no clear distinction between the data and the event: rather, the data ‘points’ to the event in which the data was collected. There is no clear perceptual unit.

2. Case value: The student focuses on an individual data point or points only and does not consider the broader pattern. The individual data point is the perceptual unit.

3. Classifier: The student focuses upon similarities between observations (e.g., by identifying a modal clump). There is a group perceptual unit.

4. Aggregate: The student interprets the data set holistically, identifying properties of aggregation as well as variation between individual data points. The perceptual unit is the entire set.

The ‘data lenses’ approach is advantageous in analysing students’ written and verbal reflections of their data displays and representational processes, particularly for students in the middle primary years. This approach enables researchers and teachers to identify developmental markers in students’ reasoning but leaves open the questions of disciplinary purposes and intended learning outcomes served by this statistical knowledge and these processes. Complementary to Konold’s approach, Lehrer (2022) describes key constructs and progressions in data modelling that can inform how teachers support student learning in science and mathematics (https://datamodeling.app.vanderbilt.edu/). The development of statistical concepts occurs by teachers engaging students in models of measuring variability (Lehrer et al., 2014). Early development in data modelling may involve the teacher or student in posing a question or making statements about a potentially measurable object of interest and in identifying measurable attributes (qualities). Students’ statistical reasoning development is evident when they can explain, justify, and demonstrate the use of particular properties of a unit of measure. Students’ perceptions of data, particularly the ways they might construct or interpret a display (e.g., a graph), provide a means to better understand the phenomenon in question.

Lehrer et al. (2014) describe five increasing levels of abstraction in the development of data displays, as follows:

1. Create or interpret data displays without relating them to the goals of the inquiry

2. Interpret and/or produce data displays as collections of individual cases

3. Notice or construct groups of similar values

4. Recognise or apply scale properties to the data

5. Consider the data in aggregate when interpreting or creating a display

Meta-representational competence encompasses the creation, interpretation, and comparison of various forms and functions of data displays. At early levels, students display emerging and elementary representational competencies, followed by the capacity to articulate how features of the display reveal something about the structure of the data. We consider that these levels provide explicit indicators of the broad categories of student growth in statistical reasoning. In this paper, we present a descriptive, interpretive analysis of how teachers enacted the IMS pedagogical model to guide young students to develop elementary ideas about statistics through generating and refining representational tools and conventions related to data modelling in science and mathematics.

Theoretical approach

Our theoretical approach draws on Peirce’s (1955) foundational theory of meaning-making and on the sociocultural theory of disciplines as cultural practices with particular assumptions, goals, methods, and procedures for making, testing, and confirming or proving knowledge claims (Lemke, 1998). For Peirce (1931–1958; 1955), meaning-making at the most fundamental level entails the abstracting process of having signs or representations stand in for referents or other signs, thus enabling signs to function as tools for communication, reasoning, and further sign- and meaning-making. Learning to model data thus entails students engaging in multiple abstraction processes with and from signs. From a sociocultural perspective, to learn and practice a discipline, students need to use particular representational practices for meaning-making in this discipline and understand their underlying logic. While science, mathematics, and statistics as cultural practices entail collecting, ordering, modelling, and analysing data, they proceed from different assumptions, draw on different logics, and address contrasting purposes. To develop disciplinary reasoning in science and mathematics, students need to learn how and why we collect data, sample adequately for particular purposes, choose effective measures and representations, interpret data variability, track growth over time, and recognise differences between categorical and continuous data. Statistical reasoning entails concepts and principles that can be adapted to varied purposes and warrant conclusions in different disciplines and is therefore a crucial component in making and justifying claims in science and mathematics.

The IMS approach to interdisciplinarity assumes that inquiry-based designs involve new learning in each of the science and mathematical disciplines and that the two are mutually reinforcing. We built on Lehrer’s (2021) principle of focusing on concepts common to science and mathematics but treated distinctively in the two disciplines. The sequences involved, to different degrees, an explicit focus on statistical reasoning, including reasoning about measurement and data modelling. We argue that, while the project was not conceived of as focusing exclusively on statistical reasoning, students’ data modelling and statistical reasoning are developed and enriched, driven by deep engagement with science-related questions that are meaningful to students. In turn, strategic, considered generation and analysis of data enriches the pursuit of scientific questions. In that spirit, the current paper focuses on the ways in which each stage of the IMS pedagogical model strategically contributes to the development of students’ statistical reasoning, analysing the teacher-student interactions that drive this process, and illustrating the student representation constructions and refinements that occur.

The IMS pedagogical model

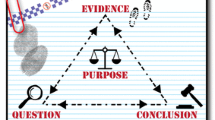

Several pedagogical models focused on statistical investigations have been developed for teaching and learning contexts. A widely utilised model developed by Wild and Pfannkuch (1999) is based on a five-stage cycle that progresses through problem posing, planning, data collection and analysis, making claims, and drawing conclusions based on the data. This PPDAC model (Problem, Plan, Data, Analysis, Conclusion) highlights planning in the investigation cycle. The IMS pedagogical model reflects the PPDAC model in the Orienting stage but emphasises representation construction of data and refinement of representations in order to build consensus. We drew on the work of Lehrer and colleagues who describe their approach as establishing the need to engage in data exploration, to create representations, explore what they reveal, make decisions about appropriate representations, and engage with an expanded set of representational tools (Lehrer & Schauble, 2020). Thus, the IMS model (Fig. 1) enables students to develop scientific and mathematical concepts and tools that could be applied across a range of contexts or problems. The co-design process driving the project led to the refinement of the pedagogical model, developed over the first year of the project based on analysis of teachers’ practice involving video capture of teacher-student interactions, students’ representations and assessment data, and teacher and student interviews. The model consists of four stages, each of which involves distinctive teacher-student negotiation of concepts and practices: Orienting; Posing Representational Challenges; Building Consensus; and Applying and Extending Conceptual Understanding (Fig. 1).

Fig. 1 The Interdisciplinary Mathematics and Science (IMS) pedagogical model (from Tytler et al., 2021)

In some sequences, an iterative process involved more than one cycle of stages focused on the refinement of the same concept (e.g., motion and force or variability) or developing a sequence of concepts (shadow patterns leading to modelling of earth’s rotation).

Learning sequences: a framework for connecting science, mathematics, and statistics

Table 1 provides an overview of seven of the 12 learning sequences where science, mathematical, and statistical concepts and meta-representational processes are aligned. Although they appear to be segregated in the table, interdisciplinary learning is interrelated in the pedagogical process.

Methodology

The project overall adopted a design-research approach (Bakker, 2018; Cobb et al., 2003) employing a cycle of collaborative planning, trialling, data generation and analyses, review and refinement in four schools and across years 1 through 6. The project was implemented over a 3-year period tracking a subset of students and teachers across years 1 through 3 and years 4 through 6. The analysis adopted an interpretive and qualitative approach (Creswell, 2013) using an ethnographic methodology drawing on classroom video capture, student and teacher interviews, pre-post assessment, and artefact collection (Tytler et al., 2021). The data generation and analyses primarily focused on the teacher’s role in guiding students through the four stages of the IMS model and on the development of students’ mathematical and scientific knowledge and meta-representational competence. In this paper, we use an ethnographic approach to illustrate how the IMS cycle supported year 1 and year 2 students’ engagement in data modelling and statistical reasoning through two learning sequences, Ecology and Paper Helicopters, respectively.

Context and participants

The study reported in this paper was conducted in two metropolitan state government primary schools in Victoria, Australia. The schools were located in middle socio-economic areas, with students drawn from diverse cultural backgrounds. In consultation with school principals, the schools were selected on the basis of their previous collaborative partnerships with the research team and on their willingness and capacity to undertake a new, longitudinal project. Mathematics and science teaching programmes in these schools were consistent with the aims and outcomes of the Victorian mathematics and science syllabi (Victorian Curriculum and Assessment Authority [VCAA], 2022), which are consistent with the Australian Curriculum (ACARA, 2022). Mathematics programmes in the schools embedded Statistics and Probability syllabus content, but this was limited to basic activities in data interpretation of tables and pictographs. Science programmes were limited to a weekly timeslot of between 1 and 2 h, with topics typically allocated across year levels and often utilising the inquiry-based modules of Primary Connections (Australian Academy of Science, 2020). The two schools supported an inquiry-based approach to teaching science, but data modelling and statistics were not adequately represented in the mathematics programmes or explicitly integrated with scientific inquiry.

In this paper, we draw on the pedagogical practice of four year 1 teachers, Anna, Kylie, Colin, and Vanessa (pseudonyms) in the Ecology sequence, and two year 2 teachers, Cerise and Emily (pseudonyms) in the Paper Helicopters sequence. Colin and Vanessa, Anna and Kylie, and Cerise and Emily collaborated as teaching partners (pairs) in their respective schools (two schools). There were 15 and 17 ‘case study’ students as a subset of each cohort at each school, respectively, selected on the basis of teacher judgement to represent a range of abilities, and in consideration of prior classroom-based assessment of the students’ mathematics and science learning. Students were aged between 5 years 4 months and 6 years 3 months (year 1) and between 6 years 5 months and 7 years 2 months (year 2). These ‘case study’ students were interviewed after each sequence, and their work was collected for all phases of the IMS pedagogical cycle. The Ecology and Paper Helicopters sequences were enacted in the first and second years, respectively, of the study, involving the same cohort of students.

Professional learning, planning, and review

The teachers collaborated with the research team in the development of the learning sequences, engaged in pre- and post-lesson review and refinement, and were interviewed following each learning sequence (for details, see Tytler et al., 2021). Professional learning workshops afforded opportunities for teachers to engage in data modelling and raise questions about the mathematical and scientific disciplinary knowledge and statistical ideas inherent in the investigations. For each learning sequence, detailed plans were developed by the research team in consultation with the teachers. Guidelines included aims and links with syllabus outcomes, lesson plans providing inquiry-based questions, and tasks and resources aligned with each stage of the pedagogical model (see learning sequences at https://imslearning.org/resources/). Samples of student work from comparable cohorts were included in the guidelines where possible. The sharing of teaching practice and discussion about students’ representations between the teachers and the research team encouraged them to review and refine their practice and extend the interdisciplinary inquiry to meet the needs and interests of their students. Professional planning and review meetings with participating teachers were also conducted by the research team where the learning sequences were evaluated and refined.

Implementation of learning sequences: procedures for collecting data

Ecology and Paper Helicopters were implemented in the first and second years of the project, in term 2 in each case. Members of the research team provided support to the teachers and students during the lessons. The researcher-observers maintained their view of the classroom by situating themselves at various locations and circulating among students during the exploratory and representational phases of the lessons. Video capture of the lessons, which focused on teacher-student interactions, utilised an iPad mounted on a Swivl robot. As the teacher produced class displays, and when students drew their representations and wrote descriptions or explanations, the researcher-observer took photographs of the work and later collected these work samples. The data was recorded in a workbook dedicated to the IMS project.

Data sources

For the teachers and students, data sources included video capture and field notes from a sample of lessons, teacher and student interviews, work samples, and pre- and post-assessment data. (For further details of data sources and collection procedures, see Tytler et al., 2021, 2022). In this paper, we draw on data from four lessons on Ecology from year 1 in each of two schools, and three lessons from the year 2 learning sequence on Paper Helicopters.

Data analysis

Our main aim in this paper is to illustrate how the IMS pedagogical model supported students’ data modelling and statistical reasoning across the two learning sequences. In pursuing this aim, we recognise the necessary interaction between the theory underpinning the pedagogical model, the IMS model stages and teacher discursive moves on the one hand, and student reasoning as judged by engaging with whole-class reasoning, creating and refining representations, and demonstrating statistical reasoning in interview tasks. The data generation draws on all these sources. The analysis process required a review of the primary analyses of data which focused on the pedagogy supporting scientific inquiry more broadly. In the secondary analysis reported here, we focused explicitly on students’ increasing sophistication in organising and structuring data, constructing and interpreting various data displays, and growth in meta-representational competence and reasoning about the data. The team engaged in multiple independent viewings of students’ representations and, through repeated comparison and discussion, reached group consensus on descriptors of students’ representations as they developed across the lesson sequences. Descriptors of these statistical concepts and processes are presented in Table 1. In the second round of our interpretation of students’ representations, we aligned them with Lehrer’s levels of data modelling described earlier (Lehrer et al., 2014). This was followed by sorting and categorising representations indicative of students’ levels of statistical thinking at different stages of the learning sequences. This process then instigated a search for supporting evidence from video, field notes, interviews and assessment data. Video transcripts were annotated by the researcher who had observed the lessons, and these were reviewed by the other members of the research team.

A content analysis of each stage of the pedagogical cycle, e.g., Building Consensus, was included in the annotations. Field notes were discussed and aligned with excerpts of video transcript to support our interpretation of the teacher-student interactions and student learning. Salient student and teacher interviews were transcribed for more detailed interpretation, providing deeper insights into students’ emerging concepts and teachers’ views of student learning. Data from these sources were then coordinated to illustrate students’ developing statistical reasoning. The research team compared these data for the two learning sequences, to search for distinctive features of teacher-student engagement with statistical concepts at the two developmental stages of learning.

Results: illustrations of data modelling and statistical reasoning

Ecology: year 1

Ecology engaged year 1 students in identifying the distribution of living things in the schoolground. In this sequence, we see the emergence of students’ understanding of distribution and sampling, growth in meta-representational forms, and refinement through comparative evaluation and consensus.

Orienting

In the Orienting stage, the teachers involved students in discussion about the classification of living/non-living things and students’ predictions about the type and number of living things they would find. Students reasoned why they would need to identify living things from a number of plots distributed across different areas of the schoolground. Students worked in small groups where they devised methods of counting, recording, categorising, and representing the distribution of living things in one plot and contributed their data to form a class display.

Posing Representational Challenges

In the Posing Representational Challenges stage, students were supported to think about how they would represent their findings of living things in the schoolground, with a focus on counts and location. Teachers differed in the ways they supported students. In Colin’s class, students had discussed tally processes previously in mathematics, and he offered a strategic reminder of this in a discussion on how they would identify and count living things in plots. In the schoolground, in pointing out the different sample plots students would be exploring, Colin raised the issue of what the ‘samples’ represented, based on the practicality of investigating the whole schoolground space:

Colin: (Indicating the large area of the schoolground) we’d find so many living things it would take so long. So that’s why we’re going to get samples from our plots (pointing to the particular plot location).

Students’ counting and recording methods mostly used tallies but ranged from pictorial representations without quantification, to simple unordered tallies, and to more organised recording using tallies and a table. Figure 2 is an example of a student’s pictorial representation of the plot showing both these features, although the tallies are not organised in a manner that clearly advances the data from localised counting to an ordered display of the distribution within the plot.

In this data generation phase, teachers circulated among students and encouraged them to refine their representations (see Tytler & Prain, 2022). The teachers’ focus, then, in this early representation challenge phase, was on shifting students’ attention from a focus on individual living things to the idea of the distribution of living things in a sample plot. We interpreted this type of thinking as consistent with Lehrer’s level 2 ‘interpret and/or produce data displays as collections of individual cases’ (Lehrer et al., 2014).

Posing Representational Challenges (second challenge)

Back in the classroom, groups were challenged to construct a common class display which required negotiation of an appropriate way to represent the type and number of living things.

Figure 3 shows a group’s display of their collated data representing their combined findings, displaying three features of informal data representation: icons drawn as an initial view of the data, categorisation of type and frequency of living things using tallies and recorded as a list, and classification of living (L) and non-living (NL) things using symbols. An important distinction can be drawn here—the students did not classify the L and NL things first—the categories were assigned after the list had been compiled. Early indications of the need to organise and represent data in a systematic way began to emerge through negotiation within the group and guiding questions by the teacher. One group, for instance, engaged in a discussion about whether it was reasonable to add the numbers of living things observed by individual group members, whether this would result in double counting, and how they could decide. Validity of measure was thus an issue that students grappled with. The shift between Figs. 2 and 3 represents a move from Lehrer’s level 2 ‘interpret and/or produce data displays as collections of individual cases’ to a level 3 focus on ‘organising data into ordered groups’ (Lehrer et al., 2014).

In a further Posing Representational Challenges task, students were challenged to re-represent their data in graphical form. The teacher (Vanessa) discussed the task of representing the data in more structured ways. Vanessa’s students were new to graphing, and she allowed time for them to try a range of approaches, gathering the class together regularly to compare and evaluate their representations. Individual students, with support from the teacher and their peers, explored different approaches to representing scale.

Figure 4 shows the distribution of living things for one plot. The group collected and recorded their data for one plot initially as a list with tallies. Vanessa strategically supported the task by using a grid to model the data. One-to-one correspondence was highlighted to help the student coordinate the frequency and category of living things. Encouraging the students to label their graph supported their understanding of the purpose of the graph. We can see here the imposition of a visual order and scale on the previous tally representation. Students were then encouraged to invent ways of constructing their own graphical representation freehand without the use of grid paper. The teacher supported students’ development of a scale to represent the count. Figure 5 shows a student’s representation of their group’s plot data previously recorded as tallies. It shows the distribution of eight types of living things as an informal column graph, representing the number of each living thing as a dot. Empty segments in each column are superfluous and drawn irregularly.

Here, students seem to treat the graphical columns as unitary counts of ‘individual cases’ without showing, or recognising the need for, scale properties. This was consistent with Lehrer’s level 2. We can see in Fig. 5 the student’s attempts to construct multiple vertical scales, although they lack the structure of the organised bar chart shown in Fig. 4. These staged representations can be interpreted as a bridging device linking the material ‘living thing’ counting experience to the more formal graphical convention, and then linked iconically in Peircean terms through a sequenced structural resemblance (Cripps Clark & Ferguson, 2022).

Figure 6 is more structured than the representation shown in Fig. 5. The student initially draws a grid freehand to organise and represent data. This is an advance on using grid paper because the student establishes links between the vertical and horizontal axes. The scale is limited to 10, although some data is represented beyond this. At this stage, the student does not see the need to remove the vertical lines because these help the student link the axes. The more formal attention to numbers of organisms of different types represented in these graphs reflects Lehrer’s level 3 ‘notice or construct groups of similar values’ (Lehrer et al., 2014).
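
The move from tallies to a column-style display described in this stage can be sketched computationally. The short Python example below is an illustration only and is not part of the study's method or data: the observation list and the function names `tally` and `mark_rows` are hypothetical placeholders. It shows the one-to-one correspondence between each recorded case and one mark in the display, laid out as rows rather than columns for simplicity.

```python
from collections import Counter

# Hypothetical observations from one plot (placeholder values, NOT study data).
observations = ["ant", "worm", "ant", "beetle", "ant", "worm"]


def tally(items):
    """Count how many times each category was recorded."""
    return Counter(items)


def mark_rows(counts):
    """Build one text row per category, with one mark per recorded case."""
    width = max(len(name) for name in counts)
    return [f"{name.ljust(width)} {'*' * n}" for name, n in sorted(counts.items())]


counts = tally(observations)
rows = mark_rows(counts)
print("\n".join(rows))
```

Because every mark stands for a single case, the display has no scale: a design choice that mirrors the 'individual cases' reading of the students' early graphs rather than a display with interval properties.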

Building Consensus—review and refinement

In the Building Consensus stage of the pedagogy, teachers guided students to evaluate and refine their representations and move them towards recognition, in this case, of the formal conventions of graphical work and the statistical reasoning that underpins this. In lesson 4, students compared and collated individual/group findings and shared their ‘way of representing’ using various graphical forms. As a whole class and with individuals, teachers encouraged students to make meaning from others’ representations and discussed what makes some representations effective.

Interviewer A: So how did the Building Consensus phase, where students compared their own and other students’ data recording, impact on the learning process?

Colin: I find that they learn best when they look at someone else's graph who is on the right track ... they learned more by peer feedback, so it was an important process.

Vanessa: We talked about why this is a good graph, how could this be better, what is missing here? All those discussions led to the children tucking it into the back of their minds for the next time they do it.

Another teacher (Anna), through a comparison of different students’ representations, pointed out the value of having a scale clearly and evenly spaced, highlighting this as an important structural feature (see Fig. 7). At this stage, we see students recognising the importance of intervals and scale, moving towards Lehrer’s level 4 ‘recognise or apply scale properties to the data’, and opening up discussion towards level 5 ‘capacity to articulate how features of display reveal something about the structure of the data’ (Lehrer et al., 2014).

At the next stage of the pedagogical cycle, applying and extending conceptual understanding, students were challenged to notice and interpret the distribution of particular animals across the different plots. This stage was not completed by all teachers, given time constraints, and in some classes was restricted to more confident students while others engaged with refining their first set of graphs such as in Fig. 7. Teachers orchestrated the entry of data from each group into a class table, and each group was assigned at least one animal, and then challenged to systematically organise, record, and represent (aggregate) the data across the sample plots.

Figure 8 shows Vanessa’s construction of a class data display prior to individual students representing the variation of particular animals across the sample plots.

During this process of re-configuring the class data, students generally progressed to more confident graphical representations of the distribution of their chosen animal, coordinating the vertical and horizontal axes. In Fig. 9, the student uses a ruler as a tool to align the data across four plots. They understand that the axes must be coordinated and the columns of equal width and interval. The gradations of the scale became decreasingly spaced because the paper height limited the drawing of the graph. This growing attention to scale corresponds to Lehrer’s level 4 ‘recognise or apply scale properties to the data’ (Lehrer et al., 2014).

Drawing on these transformed data representations showing the distribution of living things across different plots, teachers used guided questioning to establish the conditions in the different plots that could explain the variation in type and number of living things.

Anna: Why do you think we found different living things in the five plots?

Student: It wasn't the same in each plot because there were lots of plots and in different areas, for different habitats for different living things because of the soil and the sun and the shade.

In the class discussion, Anna drew attention to the data as evidence for students’ justifications. She constructed with her class a list of features of each of the sample plots (sunny or in shade, type of vegetation, moist soil, mulch, etc.), and then challenged students to interpret the patterns of data—which plot had the most living things, and which plot had the most different living things—in terms of plot features (see, Tytler et al., 2022). At this stage, students viewed the data as aggregated when creating and interpreting displays, Lehrer’s level 4 (Lehrer et al., 2014). Students’ reasoning about these relationships between the type and distribution of living things and different plot features represents an informal idea of sampling, with the common features of habitats suitable for supporting particular living things, generalised, albeit in an emergent way.

Paper Helicopters: year 2

The learning sequence Paper Helicopters engaged the same students one year later, where they reasoned about the method of recording data and the variation in drop-times, followed by comparison of different weight and wing conditions. The students now grappled with ideas of aggregating the data, graphing using an interval scale, measures of central tendency, and interpreting variability.

This 3-lesson sequence began with students constructing a paper helicopter. In the Orientation stage of the first lesson, the question was posed: ‘What affects how long a helicopter takes to fall to the ground?’ Teachers organised group trials of standardised helicopters with stopwatches, discussing how a test of drop-time can be made fair. This focus on measurement variation required attention to protocols ensuring the generation of reliable data.

In Fig. 10, the teacher (Emily) recorded on the board the drop-times as they occurred. She then discussed with students what they noticed in the data, prompting them to identify the shortest and longest times, and to re-order the data from fastest to slowest (1.34 to 2.02 s).

The following excerpt shows a student’s response to the question of ‘common time’, supported by the ordered record.

Teacher: What is the data telling you?

Student: It is telling me that 1.34 is the lowest and 2.02 is the biggest number on my number line.

Teacher: What is the common time?

Student: The most common is 1.92 and 1.99 is common of all the groups (referring to the frequencies of drop-times).

In this representation challenge stage of the lesson, another teacher (Cerise) questioned students as to whether a horizontal scale (referred to as a number line) might be useful to display the data and what it might look like. One student volunteered a horizontal, scaled number line with arrows on both ends ‘because it keeps going’. Figure 11 shows a student subsequently structuring a scale for their trial to accurately indicate drop-times in seconds: 1.22, 1.25, 1.3, 1.39, 1.49, and 1.5.

In both Cerise’s and Emily’s classes, statistical ideas were developed using a co-construction process of question and answer supported by students’ representations. The teachers discussed the transformation of the data set, encouraging students to notice clumps of numbers. In the Building Consensus class discussion, students first noted the modal value as a possible representative value, but the counter-suggestion of the middle value (median) was then adopted as appropriate (see Fig. 12). In the second lesson, which focused on Applying and Extending Conceptual Understanding, the effect of weight on helicopter drop-time was investigated, using a series of tests for each of 0, 1, 2 and 3 paper clips. Groups constructed their own data sets, and a class data set was also compiled and displayed.
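
The ordering, ‘most common’, and ‘middle value’ ideas discussed in these lessons can be illustrated with a minimal Python sketch. It is offered as an illustration only and is not part of the study's analysis. It assumes the six drop-times listed for the student's scale in Fig. 11 as input, and the variable names are our own.

```python
from statistics import median, multimode

# Drop-times in seconds, as listed for the student's scale in Fig. 11.
drop_times = [1.22, 1.25, 1.3, 1.39, 1.49, 1.5]

# Ordering the record makes the shortest and longest times easy to read off.
ordered = sorted(drop_times)
shortest, longest = ordered[0], ordered[-1]

# With an even number of values, the median is the mean of the two central values.
middle = median(ordered)

# multimode returns every value tied for most frequent; when no value repeats,
# that is the whole list, so the mode is uninformative for this small set.
most_common = multimode(ordered)

print(shortest, longest, middle, most_common)
```

In a set like this one, where no drop-time repeats, the mode does not single out a representative value, whereas the median still does. This is one possible reading of why a ‘middle’ value can be the more useful summary for small sets of measurements.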

The following interview excerpt illustrates how a student reasons about the class data set, by taking a holistic view.

Interviewer: With the class data, you said you did see the class data. What did the class data tell you or not tell you?

Student: It told me that more paper clips make the whirlybird go faster and less make it go slower.

Figure 12 shows a class data set displayed as a table. Students were directed to look for patterns in the data: more weight resulted in drop-time decreasing from 2.1 s (no paperclips) to 0.9 s (3 paperclips). Students engaged in teacher-led discussion about the most common time (mode) and the time that was ‘about in the middle’ (median) for each trial depending on the number of paper clips.

In the final lesson, Application and Extension of Conceptual Understanding, groups were challenged to adapt the wing design of their helicopters to maximise the drop time. Following the re-design, students tested their helicopter and compared their drop-times with other students’ results (see Fig. 13). A class table was constructed from recording 3 drop-times for each design, with the median values displayed on a number line. The class discussion centred on comparing new design features of the slowest and fastest helicopters.

At the end of the three lessons, after orientation to the need for multiple measures, the students had engaged with and refined data using tables and ordered sets of numbers, as a whole class and individually. The representational challenge phase had opened up multiple data representations for discussion. The Building Consensus phase extended this to agreement on measures of a ‘common time’.

Post lesson sequence: semi-structured interview and assessment

Following the last stage of the pedagogical cycle, students were assessed through a written post-assessment, followed by a semi-structured interview (with a member of the research team). We were able to probe students’ statistical reasoning alongside their mathematical and scientific conceptual understandings (see Tytler et al., 2021). In a post-assessment task, the majority of students represented unfamiliar data sets, generated by fictitious characters ‘Ben’ and ‘Tamara’, of five sample drop-times and could order and plot these values on an interval scale. Questions were designed to probe students’ understanding of the need to collect data for repeat trials and explain variation in drop-times:

Why did Ben and Tamara measure the drop five times instead of once?

Why did the times vary from trial to trial?

In interview, students reasoned about the need for repeat measures to provide comparisons, such as ‘you can tell if it’s the same, different or changed’, or for accuracy purposes, ‘to be more accurate’ or ‘to make sure’, indicative of Lehrer’s level 5 involving ‘recognition of the value of aggregated data’ (Lehrer et al., 2014). Two thirds of students were explicit about the inevitability of variation in measure or the need to check and compare. They reasoned that possible causes of variation in measure were variations in helicopter wing design and weight, or differences in drop height. Only one student alluded to the notion of variability in terms of timing error. Students were able to make choices about the most appropriate form of representation to interpret the data. Further assessment questions probed students’ predictive reasoning by asking for estimates (‘best guess’) of drop-times for further trials:

If the students repeated this experiment what drop-times are possible?

Each of the 15 students interviewed provided a different series of drop-times, but these were distributed similarly to the data set provided in the first question. The following explanation demonstrates one student’s understanding of the measures being samples of a wider possible set, aligned with Lehrer’s level 5 ‘consider the data in aggregate when interpreting or creating displays’ (Lehrer et al., 2014):

I didn’t want to choose them the same because it’s really unlikely that they’re going to be the same. So, I chose different ones because I think that some of them are going to be slow and some of them would be a bit fast.

Discussion

Our illustrations of the IMS pedagogical cycle revealed the challenges and affordances of engaging students in mathematical and scientific inquiry through data modelling. In the inquiry process, students needed to reason about the meaning of their data in solving authentic problems. This process saw these students draw upon, and grapple with scientific and mathematical concepts as well as grasping statistical ideas and new meta-representational skills that were novel or intuitively formed. We draw on the analysis of the two sequences to respond to our research question:

How does teacher implementation of the IMS pedagogical cycle support the development and application of statistical reasoning through interdisciplinary mathematical and scientific investigations?

Development and application of statistical reasoning

In Ecology, we observed how year 1 students applied informal statistical methods to develop concepts of sampling, distribution, and adaptive features of habitat. While not all students could use their spatial and numerical skills to develop early ideas of distribution and sampling, we did see impressive attempts by students to predict that similar types of living things would be found in plots having the same living conditions. We consider this an emergent form of generalisation. In Paper Helicopters, year 2 students engaged in experimental methods exploring concepts of gravity, force and motion, and flight and air flow. Teachers encouraged students to grapple with speed/time/distance relationships and justify the importance of repeated trials and accuracy in measuring height and time. They guided students to notice patterns in the data leading to agreement on measures of central tendency. This structured guidance through the explicit stages of the IMS pedagogical model contrasts with direct instruction traditionally favoured to establish scientific and statistical concepts.

Our findings support those of other studies indicating that young students can develop informal statistical concepts from as early as 5 years of age. For example, our study showed that year 1 students developed, represented, and explained ideas about sampling and distribution, supporting the seminal work of Ben-Zvi and Sharett-Amir (2005). In both years, our students drew increasingly sophisticated graphical representations of their data from which they made inferences about their findings that supported their emerging scientific concepts (for discussion of informal inference, see Fielding-Wells, 2018b; Makar, 2014, 2016; Makar & Rubin, 2018). Consistent with other studies (Chick, 2003; English, 2012, 2013; Kinnear, 2018; Mulligan, 2015), our students recognised common values and the range of values in small data tables, constructed their own data sets as well as represented the data in informal ways and extended representations to bar and column graphs. From the Paper Helicopters’ trial data, students made increasingly accurate predictions showing that predictive reasoning and emergent notions of variability may develop even earlier than previously found (consistent with the findings of Tytler et al., 2021; Watson et al., 2022). The IMS study is longitudinal, cross-sectional (years 1 through 6), and broader in scope than studies focused on particular statistical concepts with one age group. Across the stages of the IMS cycle for each learning sequence, students generated increasingly sophisticated representations of data and made decisions about whether these supported their explanations, claims about, and solutions to scientific problems. Young students in this study engaged in this process from years 1 through 2.

Progression in data modelling

Our illustrative examples provide fresh insights into fine-grained features of the data-modelling process supporting statistical reasoning (Lehrer et al., 2014). We identified a progression in students’ representations and their reasoning aligned with Lehrer’s levels of data modelling and the emergence of statistical ideas. However, individual students developed different aspects of data modelling in a variety of ways depending on the learning context and the IMS pedagogical stage of engagement. In turn, our data does not necessarily reflect a neat developmental progression. In Ecology, the year 1 students were supported to move beyond the identification and iconic representation of different individual living things, to an understanding of the distribution of living things across plots. The focus increasingly shifted towards an aggregate view of the data, first within the sample plot and then across the plots. Students noticed similarities (the habitat features-in-common of different plots) and variation (across plots due to different conditions). They progressed to increasingly abstract representational forms such as tallying, constructing a table, bar chart, or column graphs, and eventually using an informal scale. In Paper Helicopters, year 2 students transformed individual data to an ordered pattern, arranged the times on an interval scale, considered time as representative of the aggregated data and as measures of central tendency. This allowed the comparison of data sets for different experimental conditions and noticing variability between times due to drop conditions and measurement imprecision. However, the notion of variability did not extend to an understanding of natural variation in measure. In view of Lehrer’s description of meta-representational competence, students showed a capacity to articulate how features of display reveal something about the structure of the data (Lehrer et al., 2014). 
The focus of class discussions was on the clarity of graphical displays of living things, and of what a horizontal scale (timeline) offered that ordered sets of numbers did not. While in neither sequence can we claim that students operated at Lehrer’s level 4, most students were able to ‘consider the data in aggregate when interpreting or creating displays’ (Lehrer et al., 2014).

The IMS pedagogical cycle

In the learning sequences, we traced the way that students’ data modelling, their representations, and reasoning were supported by teachers using particular discursive moves associated with the different stages of the pedagogical model. This involved (i) engaging students with an authentic inquiry focus and establishing what patterns could be noticed and what data should be gathered, and how; (ii) challenging students to represent the data, moving them through guided questioning towards evaluating and refining their representations and reasoning; (iii) through guided comparison, establishing consensus about principles for representing data sets; and (iv) opening up opportunities for extending these representations to new situations. In both cases, the teachers crafted the tasks and the pedagogical process to draw attention to similarities and differences, and patterns in the data, and to make inferences from the data to engage with the scientific ideas. This resulted in a shift towards students’ appreciation and representation of more sophisticated ideas such as aggregation, measures of central tendency, and variability. Acquiring disciplinary knowledge in both learning sequences involved carefully designed pedagogical moves and validation of students’ emerging meta-representational competencies. Not all students progressed through the pedagogical cycle in the same way or at the same pace. Teachers synchronised their pedagogical moves with the students’ existing and emerging knowledge to elicit students’ sense-making. This involved establishing meaning across increasingly abstract sign systems, involving iconic transformations where students’ attention is drawn to structural resemblances. This process is akin to transnumeration and the development of increasing structural features in students’ representations (Estrella, 2018; Lehrer et al., 2009; Oslington et al., 2020; Wild & Pfannkuch, 1999). 
Through the pedagogy, students made meaningful links between the structural features of the successively abstracted graphical or tabular forms back through tallies or timelines, through categorisation of data, to the systematic recording of individual living things, or of helicopter drop-times (Tytler et al., 2022; Watson & Moritz, 2001). Teachers’ expectations of, and capacity to engage with, statistical reasoning were dependent on curriculum expectations for the year level, such that the higher-level reasoning around aggregated data and measures of central tendency was challenging for years 1 and 2 students. Nevertheless, students were able to productively engage with data modelling and statistical reasoning at both year levels, well in advance of teacher expectations, demonstrating that the introduction of data modelling and statistical concepts in the early years of primary school is both possible and productive.

Limitations

In this paper, our analysis of how the IMS pedagogical model supports statistical reasoning is limited to 6 teachers and their ‘case study’ students for one iteration of two learning sequences. Other learning sequences also provided evidence of young students’ impressive statistical thinking (e.g., see Table 1). However, our data, which consisted of evidence from students’ engagement with productive classroom discourse around statistical representational work, individual representational generation, and explanations and justifications elicited in interview, were limited to performance during two learning sequences and small student samples. What we do not know is how the students transferred their meta-representational competence to other situations between learning sequences. Further, we cannot claim that our findings can be generalised to primary school students more broadly or to a more diverse range of participants and learning contexts. What we can say is that our project provides unique opportunities and supporting evidence that young students can be guided to engage in data modelling and statistical thinking beyond curriculum expectations through an interdisciplinary pedagogical model.

Conclusions and implications

This paper contributes to the emerging field of research on the design and efficacy of pedagogical approaches that support data modelling and the development of statistical reasoning in the early years of schooling. It also indicates how Lehrer’s model (2021) of progression in data modelling can articulate important milestones in interpreting students’ representations and reasoning. Through adopting an interdisciplinary pedagogical approach to data modelling, we have illustrated how teachers used an explicit representational focus to support young students in their development of statistical ideas not usually expected at such an early age, such as distribution, sampling, range, aggregation, measures of central tendency, and variability when embedded in scientific inquiry. The IMS approach offers an explicit practical focus responding to the challenge identified by Wild and Pfannkuch (1999) of the importance of transnumeration to support statistical reasoning. Subtle and fine-grained progressions in young students’ thinking and their invented ways of representing and interpreting data need to be noticed, valued, and encouraged. This is relevant for each of the domains of statistics, mathematics education, and science education. Another challenge is promoting the important role of data modelling and statistical reasoning in the Australian Curriculum (ACARA, 2022). Lifting our expectations of the statistical capacities of young students and providing opportunities for them to be active participants in data collection and interpretation is critical to advancing statistical literacy. Without well-coordinated, systematic interdisciplinary research involving data modelling, convincing evidence of the beneficial long-term outcomes for students’ mathematics learning remains a challenge for future education policy, curricula, and practice.

References

Aridor, K., & Ben-Zvi, D. (2017). The co-emergence of aggregate and modelling reasoning. Statistics Education Research Journal, 16(2), 38–63. https://iase-web.org/Publications.php?p=SERJ

Australian Academy of Science. (2020). Primary connections: Linking science with literacy. Resources and pedagogies. https://primaryconnections.org.au/resources-and-pedagogies

Australian Curriculum, Assessment and Reporting Authority [ACARA]. (2022). Australian Curriculum. https://www.australiancurriculum.edu.au/

Bakker, A. (2018). Design research in education: A practical guide for early career researchers. Routledge.

Ben-Zvi, D., & Sharett-Amir, Y. (2005). How do primary school students begin to reason about distributions? Reasoning about distributions: A collection of recent research studies. Proceedings of the Fourth International Research Forum for Statistical Reasoning, Thinking and Literacy (SRTL-4). University of Auckland (New Zealand). https://www.academia.edu/976792/How_do_primary_school_students_begin_to_reason_about_distributions

Callingham, R., & Watson, J. (2011). Measuring levels of statistical pedagogical content knowledge. In C. Batanero, G. Burrill, & C. Reading (Eds.), Teaching statistics in school mathematics-challenges for teaching and teacher education. ICMI Study Series Vol. 14. (pp. 283–293). Springer.

Chick, H. (2003). Tools for transnumeration: Early stages in the art of data representation. In L. Bragg, C. Campbell, G. Herbert, & J. Mousley (Eds.), Mathematics education research: Innovation, networking, opportunity (Proceedings of the 26th annual conference of the Mathematics Education Research Group of Australasia) (pp. 167–174). Geelong: Mathematics Education Research Group of Australasia.

Chick, H., Watson, J., & Fitzallen, N. (2018). “Plot 1 is all spread out and Plot 2 is all squished together”: Exemplifying statistical variation with young students. In J. Hunter, P. Perger, & L. Darragh (Eds.), Making waves, opening spaces (Proceedings of the 41st annual conference of the Mathematics Education Research Group of Australasia) (pp. 218–225). Auckland: Mathematics Education Research Group of Australasia.

Cobb, P., Confrey, J., DiSessa, A., Lehrer, R., & Schauble, L. (2003). Design experiments in educational research. Educational Researcher, 32(1), 9–13. https://doi.org/10.3102/0013189X032001009

Creswell, J. W. (2013). Research design: Quantitative, qualitative and mixed method approaches (2nd ed.). SAGE.

Cripps Clark, J., & Ferguson, J. (2022). Analysing student graphing in mathematics and science: The methodological utility of a semiotic framework. In P. White, R. Tytler, J. Ferguson, & J. Cripps Clark (Eds.), Methodological approaches to STEM education research, Vol. 3 (pp. 308–325). Cambridge Scholars Publishing.

Doerr, H., Delmas, R., & Makar, K. (2017). A modeling approach to the development of students’ informal inferential reasoning. Statistics Education Research Journal, 16(2), 86–115.

diSessa, A. (2004). Metarepresentation: Native competence and targets for instruction. Cognition and Instruction, 22(3), 293–331.

English, L. D. (2012). Data modelling with first-grade students. Educational Studies in Mathematics, 81(1), 15–30. https://doi.org/10.1007/s10649-011-9377-3

English, L. D. (2013). Reconceptualizing statistical learning in the early years. In L. English, & J. Mulligan (Eds.), Reconceptualizing early mathematics learning (pp. 67–82). Springer. https://doi.org/10.1007/978-94-007-6440-8_5

Estrella, S. (2018). Data representations in early statistics: Data sense, meta-representational competence and transnumeration. In A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 239–256). Springer.

Fielding-Wells, J. (2018a). Scaffolding statistical inquiries for young children. In A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 109–127). Springer.

Fielding-Wells, J. (2018b). Dot plots and hat plots: Supporting young students emerging understandings of distribution, center and variability through modeling. ZDM, 50(7), 1125–1138.

Fielding-Wells, J., & Makar, K. (2015). Inferring to a model: Using inquiry-based argumentation to challenge young children’s expectations of equally-likely outcomes. In S. Zieffler & E. Fry (Eds.), Reasoning about uncertainty: Learning and teaching informal inferential reasoning (pp. 1–28). Catalyst Press.

Fielding, J., & Makar, K. (2022). Challenging conceptual understanding in a complex system: Supporting young students to address extended mathematical inquiry problems. Instructional Science, 50, 35–61. https://doi.org/10.1007/s11251-021-09564-3

Frischemeier, D. (2018). Design, implementation, and evaluation of an instructional sequence to lead primary school students to comparing groups in statistical projects. In A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 217–238). Springer.

Gattuso, L., & Ottaviani, M. G. (2011). Complementing mathematical thinking and statistical thinking in school mathematics. In C. Batanero, G. Burrill, & C. Reading (Eds.), Teaching statistics in school mathematics-challenges for teaching and teacher education. ICMI Study Series Vol. 14. (pp. 121–132). Springer.

Kinnear, V. (2018). Initiating interest in statistical problems: The role of picture storybooks. In A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 183–199). Springer.

Kinnear, V., & Clark, J. (2014). Probabilistic reasoning and prediction with young children. In I. Anderson, M. Cavanagh, & A. Prescott (Eds.), Curriculum in focus: Research guided practice (Proceedings of the 37th annual conference of the Mathematics Education Research Group of Australasia) (pp. 335–342). Sydney: Mathematics Education Research Group of Australasia.

Konold, C., Higgins, T., Russell, S. J., & Khalil, K. (2015). Data seen through different lenses. Educational Studies in Mathematics, 88(3), 305–325. https://doi.org/10.1007/s10649-013-9529-8

Leavy, A. (2008). An examination of the role of statistical investigation in supporting the development of young children’s statistical reasoning. In O. N. Saracho & B. Spodek (Eds.), Contemporary perspectives on mathematics in early childhood education (pp. 215–232). Information Age Publishing.

Leavy, A., & Hourigan, M. (2018). Inscriptional capacities and representations of young children engaged in data collection during a statistical investigation. In A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 89–108). Springer.

Lehrer, R. (2009). Designing to develop disciplinary dispositions: Modelling natural systems. American Psychologist, 64(8), 759–771.

Lehrer, R. (2021). Promoting transdisciplinary epistemic dialogue. In M-C. Shanahan, B. Kim, K. Koh, P. Preciado-Babb, & M.A. Takeuchi (Eds.), The learning sciences in conversation: Theories, methodologies, and boundary spaces. Routledge.

Lehrer, R. (2022, October 16). Data modelling. Retrieved October 16, 2022 from https://datamodeling.app.vanderbilt.edu/

Lehrer, R., & English, L. (2018). Introducing children to modelling variability. In D. Ben-Zvi, K. Makar, & J. Garfield (Eds.), International handbook of research in statistics education (pp. 229–260). Springer. https://doi.org/10.1007/978-3-319-66195-7_7

Lehrer, R., Kim, M-J., Ayers, E., & Wilson, M. (2014). Toward establishing a learning progression to support the development of statistical reasoning. In J. Confrey, A. P. Maloney, & K. H. Nguyen (Eds.), Learning over time: Learning trajectories in mathematics education (pp. 31–60). Information Age Publishing.

Lehrer, R., & Schauble, L. (2012). Seeding evolutionary thinking by engaging children in modelling its foundations. Science Education, 96(4), 701–724.

Lehrer, R., & Schauble, L. (2017). Children’s conceptions of sampling in local ecosystems investigations. Science Education, 101(6), 968–984. https://doi.org/10.1002/sce.21297

Lehrer, R., & Schauble, L. (2020). Stepping carefully: Thinking through the potential pitfalls of integrated STEM. Journal for STEM Education Research, 4, 1–26.

Lemke, J. (1998). Multiplying meaning: Visual and verbal semiotics in scientific text. In J. R. Martin & R. Veel (Eds.), Reading science (pp. 87–113). Routledge.

MacGillivray, H., & Pereira-Mendoza, L. (2011). Teaching statistical thinking through investigative projects. In C. Batanero, G. Burrill, & C. Reading (Eds.), Teaching statistics in school mathematics-challenges for teaching and teacher education. ICMI Study Series Vol. 14. (pp. 109–120). Springer. https://doi.org/10.1007/978-94-007-1131-0_14

Makar, K. (2014). Young children’s explorations of average through informal inferential reasoning. Educational Studies in Mathematics, 86(1), 61–78. https://doi.org/10.1007/s10649-013-9526-y

Makar, K. (2016). Developing young children’s emergent inferential practices in statistics. Mathematical Thinking and Learning, 18(1), 1–24. https://doi.org/10.1080/10986065.2016.1107820

Makar, K. (2018). Theorising links between context and structure to introduce powerful statistical ideas in the early years. In L. English, A. Leavy, M. Meletiou-Mavrotheris, & E. Paparistodemou (Eds.), Statistics in early childhood and primary education: Supporting early statistical and probabilistic thinking (pp. 3–20). Springer.

Makar, K., & Fielding-Wells, J. (2011). Teaching teachers to teach statistical investigations. In C. Batanero, G. Burrill, & C. Reading (Eds.), Teaching statistics in school mathematics-challenges for teaching and teacher education. ICMI Study Series Vol. 14. (pp. 347–358). Springer. https://doi.org/10.1007/978-94-007-1131-0_33

Makar, K., & Rubin, A. (2018). Learning about statistical inference. In D. Ben-Zvi, K. Makar, & J. Garfield (Eds.), International handbook of research in statistics education (pp. 261–294). Springer. https://doi.org/10.1007/978-3-319-66195-7_8

Mulligan, J. (2015). Moving beyond basic numeracy: Data modeling in the early years of schooling. ZDM, 47(4), 653–663.

Mulligan, J., Kirk, M., Tytler, R., White, P., & Capsalis, M. (2022). Investigating students’ heights through a data-modelling approach. Australian Primary Mathematics Classroom, 27(1), 17–21.

Oslington, G., Mulligan, J. T., & van Bergen, P. (2020). Developing third graders’ predictive reasoning. Educational Studies in Mathematics, 104(1), 5–24. https://doi.org/10.1007/s10649-020-09949-0

Oslington, G., Mulligan, J. T., & van Bergen, P. (in press). Shifts in students’ predictive reasoning from data tables in Years 3 and 4. Mathematics Education Research Journal.

Peirce, C. S. (1931/1958). Collected papers of Charles Sanders Peirce. In C. Hartshorne, P. Weiss & A. W. Burks (Eds.), (Vol. 1–6), & A. W. Burks (Ed.), (Vol. 7–8). Harvard University Press.

Peirce, C. S. (1955). Logic as semiotic: The theory of signs. In J. Buchler (Ed.), Philosophical writings of Peirce. Dover.

Pfannkuch, M. (2018). Reimagining curriculum approaches. In D. Ben-Zvi, K. Makar, & J. Garfield (Eds.), International handbook of research in statistics education (pp. 387–412). Springer. https://doi.org/10.1007/978-3-319-66195-7_12

Prain, V., & Tytler, R. (2012). Learning through constructing representations in science: A framework of representational construction affordances. International Journal of Science Education, 34(17), 2751–2773. https://doi.org/10.1080/09500693.2011.626462

Prain, V., & Tytler, R. (2021). Theorising learning in science through integrating multimodal representations. Research in Science Education, 52, 805–817. https://doi.org/10.1007/s11165-021-10025-7

Suh, J. M., Wickstrom, M., & English, L. D. (Eds.). (2021). Exploring mathematical modeling with young learners. Springer.

Tytler, R., Mulligan, J., Prain, V., White, P., Xu, L., Kirk, M., Nielsen, C., & Speldewinde, C. (2021). An interdisciplinary approach to primary school mathematics and science learning. International Journal of Science Education, 43, 1926–1949. https://doi.org/10.1080/09500693.2021.1946727

Tytler, R., & Prain, V. (2022). Supporting student transduction of meanings across modes in primary school astronomy. Frontiers in Communication. https://doi.org/10.3389/fcomm.2022.863591

Tytler, R., Prain, V., Kirk, M., Mulligan, J., Nielsen, C., Speldewinde, C., White, P., & Xu, L. (2022). Characterising a representation construction pedagogy for integrating science and mathematics in the primary school. International Journal of Science and Mathematics Education. https://doi.org/10.1007/s10763-022-10284-4

Victorian Curriculum and Assessment Authority [VCAA]. (2022). Victorian Curriculum Foundation–10. https://victoriancurriculum.vcaa.vic.edu.au

Watson, J., & Moritz, J. (2001). Development of reasoning associated with pictographs: Representing, interpreting, and predicting. Educational Studies in Mathematics, 48(1), 47–81.

Watson, J., Fitzallen, N., Fielding-Wells, J., & Madden, S. (2018). The practice of statistics. In D. Ben-Zvi, K. Makar, & J. Garfield (Eds.), International handbook of research in statistics education (pp. 105–137). Springer.

Watson, J., Fitzallen, N., & Chick, H. (2020). What is the role of statistics in integrating STEM education? In J. Anderson, & Y. Li (Eds.), Integrated approaches to STEM education. Advances in STEM education. Springer. https://doi.org/10.1007/978-3-030-52229-2_6

Watson, J., Wright, S., Fitzallen, N., & Kelly, B. (2022). Consolidating understanding of variation as part of STEM: Experimenting with plant growth. Mathematics Education Research Journal. https://doi.org/10.1007/s13394-022-00421-1

Wild, C., & Pfannkuch, M. (1999). Statistical thinking in empirical enquiry. International Statistical Review, 67, 223–265.

Acknowledgements

The authors acknowledge the assistance of the research team: Russell Tytler (Deakin University), Joanne Mulligan (Macquarie University), Peta White, Lihua Xu, Vaughan Prain, Chris Nielsen, Melinda Kirk, Chris Speldewinde (Deakin University), Richard Lehrer, Leona Schauble (Vanderbilt University). Particular thanks to Richard Lehrer for his advice and commentary on data modelling in this project. Our thanks extend to the Department of Education and Training Victoria, schools, teachers, and students.

Funding

Open Access funding enabled and organized by CAUL and its Member Institutions. This study was funded as part of an Australian Research Council (ARC) Discovery Project (DP180102333), Enriching mathematics and science learning: An interdisciplinary approach. Any opinions, findings, conclusions, or recommendations reported are those of the authors and do not necessarily reflect the views of the ARC.

Ethics declarations

Ethical approval

The research was completed in a manner consistent with the research ethics principles of the American Psychological Association and was approved by the Deakin University Human Research Ethics Committee (HAE-18053).

Informed consent

Principals, teachers, and parents of participants provided informed consent. The 32 students whose data were used in the study reported in this paper had written parental permission for their data to be used.

Conflict of interest

The authors declare no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.
