Abstract

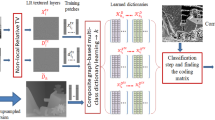

Limited spatial resolution and various degradations are the main restrictions of depth maps captured by today’s active 3D sensing devices. These restrictions limit the direct use of the obtained depth maps in most 3D applications. In this paper, we present a single depth map upsampling approach, in contrast to the common practice of using the accompanying color image to guide the upsampling process. The proposed approach employs a multi-level decomposition to convert depth upsampling into a classification-based problem, which is solved by a multi-level classification-based learning algorithm. Hence, the lost high-frequency details can be better preserved at different levels. The adopted multi-level decomposition algorithm combines \(l_{1}\) and \(l_{0}\) sparse regularizations with total-variation regularization to achieve structure- and edge-preserving smoothing that is robust to noisy degradations. In addition, the proposed classification-based learning algorithm improves discrimination accuracy by learning discriminative dictionaries that carry the particular features of each class, together with shared dictionaries that represent the features common to the classes. The proposed algorithm has been validated through experiments under a variety of degradations, using datasets from different sensing devices. The results show superiority over the state of the art, especially when upsampling noisy low-resolution depth maps.
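To make the idea of a multi-level decomposition concrete, here is a minimal sketch. It is not the paper's hybrid \(l_1\)/\(l_0\) model: it swaps in plain ROF total-variation smoothing (solved with Chambolle's projection algorithm) and peels off one detail layer per smoothing level. The function names and the `lams` schedule are hypothetical choices for illustration.

```python
import numpy as np


def _grad(u):
    """Forward differences; the last row/column gradient is zero (Neumann boundary)."""
    gx = np.zeros_like(u)
    gy = np.zeros_like(u)
    gx[:-1, :] = u[1:, :] - u[:-1, :]
    gy[:, :-1] = u[:, 1:] - u[:, :-1]
    return gx, gy


def _div(px, py):
    """Discrete divergence, the negative adjoint of _grad."""
    dx = np.zeros_like(px)
    dy = np.zeros_like(py)
    dx[0, :] = px[0, :]
    dx[1:-1, :] = px[1:-1, :] - px[:-2, :]
    dx[-1, :] = -px[-2, :]
    dy[:, 0] = py[:, 0]
    dy[:, 1:-1] = py[:, 1:-1] - py[:, :-2]
    dy[:, -1] = -py[:, -2]
    return dx + dy


def tv_smooth(f, lam, n_iter=100, tau=0.125):
    """Chambolle's projection algorithm for min_u TV(u) + ||u - f||^2 / (2 * lam)."""
    px = np.zeros_like(f)
    py = np.zeros_like(f)
    for _ in range(n_iter):
        gx, gy = _grad(_div(px, py) - f / lam)
        norm = np.sqrt(gx ** 2 + gy ** 2)
        px = (px + tau * gx) / (1.0 + tau * norm)
        py = (py + tau * gy) / (1.0 + tau * norm)
    return f - lam * _div(px, py)


def multilevel_decompose(depth, lams=(0.05, 0.2, 0.8)):
    """Split a depth map into a coarse base layer plus one detail layer per level."""
    details = []
    current = np.asarray(depth, dtype=float)
    for lam in lams:  # increasing lam -> progressively coarser base layers
        smoother = tv_smooth(current, lam)
        details.append(current - smoother)  # detail removed at this level
        current = smoother
    return current, details  # depth == base + sum(details)
```

Because each detail layer is the difference between consecutive base layers, the decomposition is exactly invertible, so any processing applied per level can be recombined by simple summation.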


References

Altantawy, D. A., Saleh, A. I., & Kishk, S. (2017). Single depth map super-resolution: A self-structured sparsity representation with non-local total variation technique. In Proceedings of the IEEE conference on intelligent computing and information systems (ICICIS) (pp. 43–50).

Aujol, J. F., Gilboa, G., Chan, T., & Osher, S. (2006). Structure-texture image decomposition—Modeling, algorithms, and parameter selection. International Journal of Computer Vision, 67(1), 111–136.

Boyd, S., Parikh, N., Chu, E., Peleato, B., & Eckstein, J. (2011). Distributed optimization and statistical learning via the alternating direction method of multipliers. Foundations and Trends® in Machine Learning, 3(1), 1–122.

Dong, C., Loy, C. C., He, K., & Tang, X. (2016). Image super-resolution using deep convolutional networks. IEEE Transactions on Pattern Analysis and Machine Intelligence, 38(2), 295–307.

Diebel, J., & Thrun, S. (2006). An application of Markov random fields to range sensing. In Advances in neural information processing systems (pp. 291–298).

Eichhardt, I., Chetverikov, D., & Jankó, Z. (2017). Image-guided ToF depth upsampling: A survey. Machine Vision and Applications, 28(3–4), 267–282.

Ferstl, D., Ruther, M., & Bischof, H. (2015). Variational depth superresolution using example-based edge representations. In Proceedings of the IEEE international conference on computer vision (ICCV) (pp. 513–521).

Ferstl, D., Reinbacher, C., Ranftl, R., Rüther, M., & Bischof, H. (2013). Image guided depth upsampling using anisotropic total generalized variation. In Proceedings of the IEEE international conference on computer vision (ICCV) (pp. 993–1000).

Freeman, W. T., Jones, T. R., & Pasztor, E. C. (2002). Example-based super-resolution. IEEE Computer Graphics and Applications, 22(2), 56–65.

Gong, X., Ren, J., Lai, B., Yan, C., & Qian, H. (2014). Guided depth upsampling via a cosparse analysis model. In Proceedings of the IEEE conference on computer vision and pattern recognition workshops (pp. 724–731).

Gilboa, G., Sochen, N., & Zeevi, Y. Y. (2004). Image enhancement and denoising by complex diffusion processes. IEEE Transactions on Pattern Analysis and Machine Intelligence, 26(8), 1020–1036.

Hui, T. W., Loy, C. C., & Tang, X. (2016). Depth map super-resolution by deep multi-scale guidance. In European conference on computer vision (pp. 353–369). Cham: Springer.

Huang, J. B., Singh, A., & Ahuja, N. (2015). Single image super-resolution from transformed self-exemplars. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 5197–5206).

He, K., Sun, J., & Tang, X. (2013). Guided image filtering. IEEE Transactions on Pattern Analysis and Machine Intelligence, 35(6), 1397–1409.

Hornácek, M., Rhemann, C., Gelautz, M., & Rother, C. (2013). Depth super resolution by rigid body self-similarity in 3d. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 1123–1130).

Kong, S., & Wang, D. (2012). A dictionary learning approach for classification: Separating the particularity and the commonality. In European conference on computer vision (pp. 186–199). Berlin: Springer.

Kopf, J., Cohen, M. F., Lischinski, D., & Uyttendaele, M. (2007). Joint bilateral upsampling. ACM Transactions on Graphics (ToG), 26(3), 96.

Liang, Z., Xu, J., Zhang, D., Cao, Z., & Zhang, L. (2018). A hybrid l1-l0 layer decomposition model for tone mapping. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 4758–4766).

Liu, W., Chen, X., Yang, J., & Wu, Q. (2017). Robust color guided depth map restoration. IEEE Transactions on Image Processing, 26(1), 315–327.

Lu, X., Guo, Y., Liu, N., Wan, L., & Fang, T. (2017). Non-convex joint bilateral guided depth upsampling. Multimedia Tools and Applications, 77(12), 15521–15544.

Liu, W., & Li, S. (2014). Sparse representation with morphologic regularizations for single image super-resolution. Signal Processing, 98, 410–422.

Liu, M. Y., Tuzel, O., & Taguchi, Y. (2013). Joint geodesic upsampling of depth images. In Proceedings of the IEEE conference on computer vision and pattern recognition (pp. 169–176).

Li, Y., Xue, T., Sun, L., & Liu, J. (2012). Joint example-based depth map super-resolution. In Proceedings of the IEEE international conference on multimedia and expo (ICME) (pp. 152–157).

Lijun, S., ZhiYun, X., & Hua, H. (2010). Image super-resolution based on MCA and wavelet-domain HMT. IEEE International Forum on Information Technology and Applications (IFITA), 2, 264–269.

Middlebury datasets: http://vision.middlebury.edu/stereo/data/. Accessed November 12, 2018.

Mac Aodha, O., Campbell, N. D., Nair, A., & Brostow, G. J. (2012). Rigid synthesis for single depth image super-resolution. In European conference on computer vision (pp. 71–84). Berlin: Springer.

Nguyen, R. M., & Brown, M. S. (2015). Fast and effective L0 gradient minimization by region fusion. In Proceedings of the IEEE international conference on computer vision (pp. 208–216).

Park, J., Kim, H., Tai, Y. W., Brown, M. S., & Kweon, I. (2011). High quality depth map upsampling for 3d-tof cameras. In Proceedings of the IEEE international conference on computer vision (ICCV) (pp. 1623–1630).

Riegler, G., Rüther, M., & Bischof, H. (2016). Atgv-net: Accurate depth super-resolution. In European conference on computer vision (pp. 268–284). Cham: Springer.

Shabaninia, E., Naghsh-Nilchi, A. R., & Kasaei, S. (2017). High-order Markov random field for single depth image super-resolution. IET Computer Vision, 11(8), 683–690.

Song, X., Dai, Y., & Qin, X. (2016). Deep depth super-resolution: Learning depth super-resolution using deep convolutional neural network. In Asian conference on computer vision (pp. 360–376). Cham: Springer.

Timofte, R., De Smet, V., & Van Gool, L. (2013). Anchored neighborhood regression for fast example-based super-resolution. In Proceedings of the IEEE international conference on computer vision (ICCV) (pp. 1920–1927).

Unger, M., Pock, T., Werlberger, M., & Bischof, H. (2010). A convex approach for variational super-resolution. In Joint pattern recognition symposium (pp. 313–322). Berlin: Springer.

Xie, J., Feris, R. S., & Sun, M. T. (2016). Edge-guided single depth image super resolution. IEEE Transactions on Image Processing, 25(1), 428–438.

Xie, J., Chou, C. C., Feris, R., & Sun, M. T. (2014). Single depth image super resolution and denoising via coupled dictionary learning with local constraints and shock filtering. In Proceedings of the IEEE international conference on multimedia and expo (ICME) (pp. 1–6).

Xu, L., Lu, C., Xu, Y., & Jia, J. (2011). Image smoothing via L0 gradient minimization. ACM Transactions on Graphics (TOG), 30(6), 174.

Yang, J., Ye, X., Li, K., Hou, C., & Wang, Y. (2014). Color-guided depth recovery from RGB-D data using an adaptive autoregressive model. IEEE Transactions on Image Processing, 23(8), 3443–3458.

Yang, J., Wright, J., Huang, T. S., & Ma, Y. (2010). Image super-resolution via sparse representation. IEEE Transactions on Image Processing, 19(11), 2861–2873.

Yang, M., Zhang, L., Yang, J., & Zhang, D. (2010). Metaface learning for sparse representation based face recognition. In 17th IEEE international conference on image processing (ICIP), 2010 (pp. 1601–1604). IEEE.

Zhao, F., Cao, Z., Xiao, Y., Zhang, X., Xian, K., & Li, R. (2017). Depth image super-resolution via semi self-taught learning framework. In Videometrics, range imaging, and applications XIV, 10332: 103320R. International Society for Optics and Photonics.

Zhou, W., Li, X., & Reynolds, D. (2017). Guided deep network for depth map super-resolution: How much can color help? In Proceedings of the IEEE international conference on acoustics, speech and signal processing (ICASSP) (pp. 1457–1461).

Zhang, Y., Zhang, Y., & Dai, Q. (2015). Single depth image super resolution via a dual sparsity model. In Proceedings of the IEEE international conference on multimedia & expo workshops (ICMEW) (pp. 1–6).

Zhang, Z., Xu, Y., Yang, J., Li, X., & Zhang, D. (2015). A survey of sparse representation: Algorithms and applications. IEEE Access, 3, 490–530.

Zeyde, R., Elad, M., & Protter, M. (2010). On single image scale-up using sparse-representations. In International conference on curves and surfaces (pp. 711–730). Berlin: Springer.


Ethics declarations

Conflict of interest

The authors certify that they have NO affiliations with or involvement in any organization or entity with any financial interest (such as honoraria; educational grants; participation in speakers’ bureaus; membership, employment, consultancies, stock ownership, or other equity interest; and expert testimony or patent-licensing arrangements), or non-financial interest (such as personal or professional relationships, affiliations, knowledge or beliefs) in the subject matter or materials discussed in this manuscript.

Appendices

Appendix 1

To solve the proposed learning model in Eq. 19, an alternating optimization process is followed: each target variable is updated while all other variables are kept fixed. In this procedure, we update the overall structured dictionary, which comprises both the shared and the main dictionaries, as well as the coding matrix \(S\), which comprises both the shared and the main codes.

Updating the Dictionaries

The overall composite dictionary \(\mathcal{A}\) is expressed as

\[
\begin{aligned}
\mathcal{A} &= \left[\dot{A}_{B_{2}}^{HL}, \dot{A}_{B_{2},Q}^{HL}, \dot{A}_{D_{1}}^{HL}, \dot{A}_{D_{1},Q}^{HL}, \dot{A}_{D_{2}}^{HL}, \dot{A}_{D_{2},Q}^{HL}, \dot{A}_{Q}^{HL}\right] \\
&= \left[\dot{A}_{B_{2},1}^{HL}, \ldots, \dot{A}_{B_{2},C_{B_{2}}}^{HL}, \dot{A}_{B_{2},Q}^{HL}, \dot{A}_{D_{1},1}^{HL}, \ldots, \dot{A}_{D_{1},C_{D_{1}}}^{HL}, \dot{A}_{D_{1},Q}^{HL}, \dot{A}_{D_{2},1}^{HL}, \ldots, \dot{A}_{D_{2},C_{D_{2}}}^{HL}, \dot{A}_{D_{2},Q}^{HL}, \dot{A}_{Q}^{HL}\right].
\end{aligned}
\]

There are two types of dictionaries: the main (particular) dictionaries \(\dot{A}_{i}^{HL}\), and the common dictionaries \(\dot{A}_{i,Q}^{HL}\) and \(\dot{A}_{Q}^{HL}\). Hence, at each step only one kind of dictionary is updated, while the other dictionaries and all coding matrices are kept fixed.
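The bracket notation for \(\mathcal{A}\) amounts to a column-wise concatenation of sub-dictionaries, with the coding matrix stacked row-wise in the same order. The sketch below shows that bookkeeping under the assumption that each block is stored as a NumPy array; the block labels such as `"B2,1"` or `"Q"` and both helper names are hypothetical.

```python
import numpy as np


def assemble_composite(blocks):
    """Concatenate dictionary blocks column-wise and record each block's column range.

    blocks: list of (name, A) pairs in composite order, e.g.
    [("B2,1", A), ..., ("B2,Q", A), ..., ("Q", A)] (labels are hypothetical).
    """
    index = {}
    start = 0
    for name, A in blocks:
        index[name] = slice(start, start + A.shape[1])
        start += A.shape[1]
    composite = np.hstack([A for _, A in blocks])
    return composite, index


def split_codes(S, index):
    """Slice the stacked coding matrix into the rows that belong to each block."""
    return {name: S[sl, :] for name, sl in index.items()}
```

With this layout, \(\mathcal{A} S\) equals the sum of the per-block products, which is what allows a single block to be isolated while all others are held fixed.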

2.1 Updating \(\dot{A}_{i}^{HL}\)

\(\dot{A}_{i}^{HL}\) is updated subclass by subclass: when the subclass dictionary \(\dot{A}_{i,j}^{HL}\) is updated, all other subclass dictionaries \(\dot{A}_{a,b}^{HL}\), \((a,b) \ne (i,j)\), are kept fixed. The energy minimization problem in Eq. 19 then becomes

where \(\sum_{v=1, v \ne j}^{C_{i}+1} \left\| \left(\dot{A}_{i,j}^{HL}\right)^{T} \dot{A}_{i,v}^{HL} \right\|_{2}^{2}\) is an incoherence term between \(\dot{A}_{i,j}^{HL}\) and the dictionaries of all other subclasses. Eq. (19.1) can then be rewritten in terms of the target \(\dot{A}_{i,j}^{HL}\) as Eq. (19.2):
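The incoherence term can be evaluated, and differentiated, directly. In the sketch below the matrix norm \(\|\cdot\|_2^2\) is assumed to be the squared Frobenius norm, and the gradient \(2\sum_v \dot{A}_{i,v}\dot{A}_{i,v}^{T}\dot{A}_{i,j}\) is what a gradient-based or linear-system update of the target block would use. The function names are hypothetical.

```python
import numpy as np


def incoherence_penalty(A_target, others):
    """sum_v ||A_target^T A_v||_F^2 over the other dictionary blocks A_v.

    Smaller values mean the target atoms are less correlated with the others.
    """
    return sum(np.linalg.norm(A_target.T @ A_v, "fro") ** 2 for A_v in others)


def incoherence_grad(A_target, others):
    """Gradient of incoherence_penalty w.r.t. A_target: 2 * sum_v A_v A_v^T A_target."""
    G = np.zeros_like(A_target)
    for A_v in others:
        G += 2.0 * A_v @ (A_v.T @ A_target)
    return G
```

Mutually orthogonal blocks give a zero penalty, which is the ideal the term pushes the subclass dictionaries toward.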

which is then simplified to

where \(\breve{\mathcal{M}} = \mathcal{M} - \sum_{a=1, a \ne i}^{\dot{C}} \sum_{b=1, b \ne j}^{\dot{C}_{a}+1} \dot{A}_{a,b}^{HL} S_{a,b}^{HL} - \dot{A}_{Q}^{HL} S_{Q}^{HL}\) and \(\breve{\dot{M}}_{i}^{HL} = \dot{M}_{i}^{HL} - \sum_{p=1}^{\dot{C}_{i}+1} \dot{A}_{i,p}^{HL} S_{i,p}^{HL} - \dot{A}_{Q}^{HL} S_{Q}^{HL}\). Equation (19.3) is a quadratic programming problem that can be solved by updating \(\dot{A}_{i,j}^{HL}\) atom by atom [39].
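A generic atom-by-atom update can be sketched as follows. This is an assumption-laden illustration, not the exact solver of Eq. (19.3): it keeps only the fidelity term \(\|Y - A S\|_F^2\) with unit-norm atoms and deliberately omits the incoherence term. Under that constraint each atom has the closed-form minimizer \(a_k = R_k s_k^{T}/\|R_k s_k^{T}\|_2\), where \(R_k\) is the residual with atom \(k\) removed. Here `Y` stands in for the residual targets defined above, and `update_atoms` is a hypothetical name.

```python
import numpy as np


def update_atoms(Y, A, S):
    """One sweep of atom-by-atom updates for min_A ||Y - A S||_F^2 s.t. ||a_k||_2 = 1.

    Y: (d, n) target residual; A: (d, K) dictionary with unit-norm columns;
    S: (K, n) codes, held fixed. The incoherence term is NOT included here.
    """
    A = A.copy()
    R = Y - A @ S  # full residual, refreshed after each atom
    for k in range(A.shape[1]):
        s_k = S[k, :]
        R_k = R + np.outer(A[:, k], s_k)  # residual with atom k removed
        v = R_k @ s_k
        norm = np.linalg.norm(v)
        if norm > 0:  # an unused atom (all-zero codes) is left unchanged
            A[:, k] = v / norm
        R = R_k - np.outer(A[:, k], s_k)
    return A
```

Since each atom is replaced by the exact minimizer over the unit sphere while the others are fixed, one sweep never increases the fidelity term.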

2.2 Updating the Common-Feature Dictionary of the Subclasses \(\dot{A}_{i,Q}^{HL}\)

In terms of \(\dot{A}_{i,Q}^{HL}\), the energy minimization problem in Eq. 19 becomes

which can be rewritten as

where \(\overline{\overline{\mathcal{M}}} = \mathcal{M} - \sum_{i=1}^{C} \sum_{j=1}^{C_{i}} \dot{A}_{i,j}^{HL} S_{i,j}^{HL} - \dot{A}_{Q}^{HL} S_{Q}^{HL}\) and \(\overline{\overline{\dot{M}}}_{i,j}^{HL} = \dot{M}_{i,j}^{HL} - \dot{A}_{i,j}^{HL} S_{i,j}^{HL}\). Similar to Eq. (19.3), Eq. (19.6) is another quadratic problem that can be solved by updating \(\dot{A}_{i,Q}^{HL}\) atom by atom [39].
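The residual targets in these derivations are formed by subtracting every fixed dictionary-times-code contribution except the ones being updated. For \(\overline{\overline{\mathcal{M}}}\), that means removing all particular subclass terms and the global common term \(\dot{A}_{Q}^{HL} S_{Q}^{HL}\), leaving what the shared subclass dictionaries must explain. A minimal sketch, assuming the blocks are kept in a dictionary keyed by hypothetical labels:

```python
import numpy as np


def residual_without(M, terms, keep):
    """Subtract every fixed A @ S contribution except the blocks named in `keep`.

    terms: dict mapping a (hypothetical) block label to its (A, S) pair.
    Keeping only the shared subclass blocks, e.g. {"B2,Q", "D1,Q", "D2,Q"},
    yields the analogue of the double-barred M in the text.
    """
    R = np.array(M, dtype=float, copy=True)
    for name, (A, S) in terms.items():
        if name not in keep:
            R -= A @ S
    return R
```

The same helper, with a different `keep` set, produces the residuals used for the other dictionary updates.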

2.3 Updating the Common Dictionary \(\dot{A}_{Q}^{HL}\)

In terms of \(\dot{A}_{Q}^{HL}\), the energy minimization of Eq. 19 becomes

which can be turned into

which can be simplified to

where \(\bar{\mathcal{M}} = \mathcal{M} - \sum_{i=1}^{\dot{C}} \left(\dot{A}_{i}^{HL} S_{i}^{HL} + \dot{A}_{i,Q}^{HL} S_{i,Q}^{HL}\right)\) and \(\bar{\dot{M}}_{i}^{HL} = \dot{M}_{i}^{HL} - \dot{A}_{i}^{HL} S_{i}^{HL}\). Equation (19.9) is again a quadratic problem that can be solved by updating \(\dot{A}_{Q}^{HL}\) atom by atom [39].

Updating the Coding Coefficients

In this step, all dictionaries are kept fixed while any type of coding matrix is updated.

3.1 Updating the Main Coding Coefficients \(S_{i}^{HL}\)

The main coding coefficient matrix is updated through the coding coefficients of its subclasses, \(S_{i,j}^{HL}\), as

which can be turned into

which can be simplified to

which is a LASSO problem that can be solved using the feature-sign search algorithm [43].
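The text cites the feature-sign search algorithm for these LASSO subproblems. The sketch below swaps in the simpler iterative shrinkage-thresholding algorithm (ISTA), which solves the same kind of problem. The \(\tfrac{1}{2}\) scaling of the fidelity term and the weight `lam` are assumptions, since the exact coding objectives are not reproduced here; `lasso_ista` is a hypothetical name.

```python
import numpy as np


def soft_threshold(x, t):
    """Elementwise proximal operator of t * ||x||_1."""
    return np.sign(x) * np.maximum(np.abs(x) - t, 0.0)


def lasso_ista(Y, A, lam, n_iter=200):
    """ISTA for min_S 0.5 * ||Y - A S||_F^2 + lam * ||S||_1 (all columns jointly)."""
    L = np.linalg.norm(A, 2) ** 2  # Lipschitz constant of the smooth term's gradient
    S = np.zeros((A.shape[1], Y.shape[1]))
    for _ in range(n_iter):
        grad = A.T @ (A @ S - Y)
        S = soft_threshold(S - grad / L, lam / L)
    return S
```

For an orthonormal dictionary, the LASSO solution is simply the soft-thresholded projection \(A^{T}Y\), which gives an easy sanity check. The same routine applies unchanged to the shared coding matrices in the following subsections.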

3.2 Updating the Shared Subclass Coding Matrix \(S_{i,Q}^{HL}\)

To update \(S_{i,Q}^{HL}\), the energy minimization problem of Eq. 19 is converted to

which can be turned into

which is another LASSO problem that can be solved using the feature-sign search algorithm [43].

3.3 Updating the Class-Shared Coding Matrix \(S_{Q}^{HL}\)

To update \(S_{Q}^{HL}\), the energy minimization problem of Eq. 19 is converted to

which can be simplified as

which is another LASSO problem that can be solved using the feature-sign search algorithm [43].


Cite this article

Altantawy, D.A., Saleh, A.I. & Kishk, S.S. Hybrid Multi-level Regularizations with Sparse Representation for Single Depth Map Super-Resolution. 3D Res 9, 58 (2018). https://doi.org/10.1007/s13319-018-0208-5


DOI: https://doi.org/10.1007/s13319-018-0208-5