Abstract

Objective

To review knowledge of computed tomography (CT) parameters and their influence on patient dose and image quality amongst a cohort of clinical specialist radiographers (CSRs) and examining radiologists.

Methods

A questionnaire survey was devised and distributed to a cohort of 65 examining radiologists attending the American Board of Radiology exam in Kentucky in November 2011. The questionnaire was later distributed by post to a matching cohort of Irish CT CSRs. Each questionnaire contained 40 questions concerning CT parameters and their influence on both patient dose and image quality.

Results

Response rates of 22 % (radiologists) and 32 % (CSRs) were achieved. No difference in mean scores was detected between the two groups (27.8 ± 4 vs 28.1 ± 4, P = 0.87), although large ranges were noted (18–36). Considerable variations in understanding of CT parameters were identified, especially regarding operation of automatic exposure control and the influence of kilovoltage and tube current on patient dose and image quality. The majority of radiologists were unaware of recommended diagnostic reference levels. Members of both cohorts expressed concern regarding CT doses in their departments.

Conclusions

CT parameters were well understood by both groups. However, a number of deficiencies were noted which may have a considerable impact on patient doses and limit the potential for optimisation in clinical practice.

Key points

• CT users must adapt parameters to optimise patient dose and image quality.

• The influence of some parameters is not well understood.

• A need for ongoing education in dose optimisation is identified.

Introduction

Computed tomography (CT) has revolutionised modern medicine, allowing physicians to non-invasively visualise the internal structures of the body, thus facilitating rapid diagnosis and monitoring of disease processes. Such an advance has inevitably improved the standard of care for patients and made CT the imaging modality of choice for a host of medical indications. However, CT is also associated with some of the highest radiation doses in diagnostic radiology and, given its increasing use worldwide [1], is fast becoming the largest contributor to population dose from medical exposures [2]. Concern is increasingly being raised regarding the potential detriment that CT may have on both populations [3, 4] and individuals [5, 6] especially if used inappropriately, given its carcinogenic potential [7].

Once justified, all CT examinations must obey the “as low as reasonably achievable” (ALARA) principle, whereby doses delivered to patients must be kept as low as possible to ensure that the benefit to patients always outweighs the potential risks involved [8]. Vital to such optimisation is a sound knowledge of the parameters that control the radiation output in CT and their impact on CT image quality, such as the peak kilovoltage (kVp), tube current–time product (mAs), pitch and slice thickness. Image quality in CT depends strongly on the amount of radiation used; it is therefore vital to use sufficient quantities to ensure diagnostic yield, while avoiding excessive amounts which only add to the patient’s risk. There is a vast number of combinations of CT parameters for users to choose from, which produce varying blends of image quality and dose, some of which may be manufacturer specific. However, default settings and manufacturer-recommended protocols may be designed to optimise image quality rather than patient dose [9]. To ensure optimisation, users must tailor CT parameters to match the presenting indication, region being scanned and patient size, as not all examinations require the highest level of detail. This does, however, require a specialist understanding of CT along with a time input which is not insignificant within busy departments.

The literature reports large variations in dose between sites and across countries, even for similar-sized patients [10], which may be attributed to differences in CT equipment and to local scan protocols. Such dose discrepancies may also point to a lack of understanding or manipulation of parameters, especially on an individual basis. Work has already shown that up to 25 % of radiologists are unaware of specific CT parameters used for their routine examinations [11]. As CT technology has undergone numerous recent advancements, there may also be difficulties for CT users to acquaint themselves with all the features of their particular system, especially if operating multiple scanner models from various manufacturers. The recent development of automated tube current modulation (ATCM) has greatly assisted users when individualising patient doses, and its success [12] has meant that further automated systems are also being introduced [13]. However, a concrete understanding of such software is required to ensure correct operation and use [14, 15]. This work sets out to examine current knowledge amongst a select cohort of radiologists and radiographers and to identify any potential deficits that require attention.

Materials and methods

Ethical exemption was first obtained from the local institution. Full ethics review was not deemed necessary as the survey population did not include any at-risk groups and anonymity was assured to all respondents. A questionnaire was developed containing 47 questions within two sections (Appendix). The first section collected basic demographic information and opinions on CT doses, while the second contained questions on specific CT scan protocols, parameters and diagnostic reference levels. Questions were mostly in true/false and multiple choice tick-box format, as well as some open-ended questions. Correct answers to the questions on specific CT parameters (n = 40) were given a score of 1, while incorrect or incomplete answers were given a score of 0. All questionnaires were distributed with a cover letter explaining the survey and instructions for those completing it, with the added assurance that all responses would be anonymous. The questionnaire was first piloted with three persons: one radiologist and two university CT lecturers. This resulted in a small number of formatting changes as well as rewording of some questions to improve clarity in the questionnaire.
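The scoring rule described above (1 for a correct answer, 0 for an incorrect or incomplete one) can be illustrated with a minimal sketch. The question identifiers, the answer key and the function name `score_questionnaire` are hypothetical and are not taken from the actual questionnaire.

```python
def score_questionnaire(responses, answer_key):
    """Return the total score: 1 per correct answer, 0 if incorrect or missing."""
    total = 0
    for question_id, correct in answer_key.items():
        given = responses.get(question_id)  # None when the question was left blank
        if given is not None and given == correct:
            total += 1
    return total


# Hypothetical three-question key and one respondent's answers.
answer_key = {"q1": True, "q2": "b", "q3": False}
responses = {"q1": True, "q2": "c"}  # q2 is wrong, q3 was left blank

print(score_questionnaire(responses, answer_key))  # 1
```

With the 40 parameter questions used in the survey, the same rule would give each respondent a total between 0 and 40.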

Recruitment involved convenience sampling, initially for a cohort of radiologists: examining radiologists at the November 2011 American Board of Radiology examinations in Louisville, Kentucky were invited to complete the questionnaire. Questionnaires were distributed to each radiologist on the first day of examinations and collection boxes were made available at multiple break-out rooms over the following 3 days. A reminder request for completion was also distributed on the penultimate day of examinations. It was planned to compare American and Irish radiologists by recruiting a similar number of Irish radiologists, but when the questionnaire was piloted in Ireland, feedback indicated that Irish radiologists had much less input into, or experience with, specific CT scan parameters, as these tend to be under the operational control of radiographers and, in particular, the CT clinical specialist radiographers (CSRs). To more accurately represent Irish practices, it was therefore decided to survey the CSR cohort instead.

There are currently 65 CT CSRs in Ireland [16] and each was contacted in advance of distribution. Questionnaires were then sent via post, along with stamped addressed envelopes to encourage completion [17]. While electronic surveys are cheaper and allow easier analysis, research has shown that postal surveys produce higher response rates [18]. All CSRs were asked to return the questionnaire no later than 3 weeks from the date of receipt. Follow-up emails were subsequently sent to remind all recipients of the return date. Answers to the questions assessing CT parameter knowledge were compared with recently published CT textbooks [19, 20].

Statistical analysis

Statistical analysis was performed using SPSS version 18 and a P value of <0.05 was considered significant. Descriptive statistics were used to analyse questions and, depending on the results of Kolmogorov–Smirnov normality testing, either the Mann–Whitney U test or the independent samples t-test was used to compare the two groups. Open-ended questions were analysed using thematic analysis to identify common threads.

Results

In total, 65 questionnaires were distributed to each of the radiologist and CSR cohorts, with 14 (22 %) and 21 (32 %) being returned, respectively. The radiologist cohort had a median of greater than 25 years’ CT experience in comparison to 12–15 years for the CSR group.

Concerns regarding CT doses

Forty percent of CSRs stated concerns about the doses within their departments compared with over 64 % of radiologists. While radiologists did not provide further comments, CSRs identified individual exams of concern and mentioned “the increased number of repeat studies, i.e. abdomen/pelvis for collections and thorax-abdomen-pelvis examinations for oncology every 6 weeks, increased number of multiphase studies” as well as “higher doses involved with thin-slice scanning”. Three respondents without concerns did comment on their use of a regular audit, which provided reassurance.

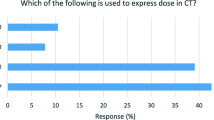

CT protocols

When asked about CT protocols, 50 % of radiologists reported that they alone decide on the routine scan protocols selected, the remainder doing so in cooperation with a physicist (14 %), a physicist and radiographer (14 %), an applications specialist (7 %), a physicist and applications specialist (7 %) or with a combination of all three (7 %). In comparison, only 14 % of Irish departments reported that the radiologist alone decides on the routine CT protocols. Instead, the majority of CSRs reported that protocols were decided on by a combination of the radiologist and CSR (52 %). In four departments (19 %) an applications specialist was also involved, while in one (5 %) the CSR, radiologist and physicist all contributed.

Both groups were asked on which of four factors (patient size, anatomical region, study indication and patient age) they would alter the routine CT scanning parameters. A difference emerged between the two groups, with 86 % (12/14) of radiologists varying protocols based on all four factors, while only 30 % of CSRs did likewise. Instead, 19 % (4/21) of CSRs vary protocols based on size alone, while only 43 % (9/21) of CSR respondents considered the anatomical region and 52 % (11/21) the study indication.

CT parameters

Respondents were asked to rate their confidence in altering CT parameters correctly, considering image quality and radiation dose (1 = excellent, 5 = poor). The CSR group recorded a median value of 2 while the ABR radiologists had a median of 3, although this difference was not statistically significant (P = 0.716) when examined using the Mann–Whitney U test. Overall mean scores and descriptive statistics for both groups are given in Table 1.

Mean scores for both groups were compared using independent samples t-test and the difference was not significant (P = 0.87). The majority of both radiologists (79 %) and CSRs (86 %) stated that further education in the area of optimisation of CT scan parameters would be beneficial.
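As a rough plausibility check of this comparison, the t statistic can be recomputed from the rounded summary values quoted in the abstract. The sketch below assumes that "± 4" denotes a standard deviation, that the first mean (27.8) belongs to the 14 radiologists and the second (28.1) to the 21 CSRs, and that a pooled-variance (Student) test was used; none of these details is confirmed by the text. It returns only the statistic and degrees of freedom, not the P value, which in the study came from SPSS.

```python
import math


def pooled_t_statistic(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Student's t statistic and degrees of freedom from summary statistics."""
    df = n_a + n_b - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df
    std_err = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean_a - mean_b) / std_err, df


# Rounded values from the abstract; group assignment is an assumption.
t, df = pooled_t_statistic(27.8, 4.0, 14, 28.1, 4.0, 21)
print(round(t, 2), df)  # -0.22 33
```

A statistic this close to zero on 33 degrees of freedom is consistent with the reported absence of a significant difference, although the rounded inputs mean the exact published P value should not be expected to be reproduced.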

ATCM operation

Both groups were asked to indicate their agreement with a series of questions related to automated tube current modulation (ATCM) operation. Results are shown in Table 2.

Peak kilovoltage (kVp)

When asked about the effects of decreasing the tube voltage from 120 to 100 kVp, a small number of CSRs (14 %) believed there is no reduction in patient dose, 38 % felt that image noise does not increase, while 48 % replied that vessel enhancement does not increase during contrast examinations. This compares with 7 %, 21 % and 36 % of radiologists, respectively.

Tube Current (mA)

Regarding the relationship between tube current and dose, 60 % of radiologists and 76 % of CSRs believed that tube current has a linear relationship with dose, while 54 % and 55 %, respectively, thought that tube current has a linear relationship with noise, stating that a 50 % reduction in tube current results in a twofold increase in noise.

Image noise

Respondents were all asked to indicate the CT parameters that influence image noise. While 93 % of radiologists believed that kVp selection influences noise in CT, only 67 % of CSRs did likewise. One half of radiologist respondents (7/14) felt image noise was influenced by the window width setting and 71 % by the reconstruction algorithm, compared with 62 % and 86 % of CSRs, respectively.

Awareness of diagnostic reference levels

Both groups were questioned regarding their knowledge of national diagnostic reference levels (DRLs) and a clear difference between them was noted. For CSRs, a majority answered that they knew of DRLs for the brain, sinus, chest, HRCT and abdomen examinations. However, when asked to quote the specific value, a proportion of the respondents in each category quoted incorrect values (Table 3). The majority of ABR radiologists (86 %, 12/14) were not aware of American College of Radiology (ACR) DRLs for the three specified examinations (brain, abdomen and paediatric abdomen), and of the two radiologists who answered in the affirmative, just one correctly quoted all three values.

Discussion

Demographics

There was an almost twofold difference in the median number of years of experience between the radiologists and the CSR group, which is attributable to the radiologist cohort included here consisting of senior examining radiologists rather than a representative cross-section. Despite the gap in experience levels, there was no statistically significant difference between the two groups in the mean number of questions answered correctly. The experience gap in years may potentially have been offset by the fact that CSRs spend the majority of their time working in CT, manipulating parameters on a daily basis, in comparison to radiologists who may spend their time across a number of different modalities. However, there was quite a large range of scores evident amongst both groups, suggesting varying levels of understanding of parameters. Given the stated desire for further education amongst both cohorts in this area, a refresher course or updates for both professions would seem a worthwhile endeavour.

CT protocols

The ACR recommends that the lead radiologist, lead CT technologist and medical physicist should collaborate to design all new or modified protocol settings [21], to ensure both radiation dose and image quality are appropriate. However, it is clear from this work that compliance amongst both groups here is low. Half of the surveyed radiologists (50 %) still perform this task single-handedly, with only 14 % of those surveyed reporting to be in compliance with ACR recommendations. In Ireland, while the majority of respondents report that such decisions are made by a combination of the radiologist and CSR (52 %), only one site (5 %) also includes physicists in this process. It would seem an obvious benefit to include representatives from each of the three professions during such a process to safeguard patients and also improve quality processes.

Worryingly, a significant number of responding CSRs do not alter CT parameters based on either anatomical region (57 %) or study indication (48 %), indicating that patients may potentially be exposed to higher doses than necessary contrary to the ALARA principle. Varying parameters according to study indication has already been demonstrated to permit significant reductions in dose, especially for examinations with high inherent contrast where high image quality is not required, such as renal stone protocols [22, 23], lung nodule follow-up [24] or sinus examinations [25]. Likewise each anatomical region has differing levels of contrast between the various organs, so naturally distinct CT protocols are required to optimise image quality in each.

A larger proportion of the radiologists were identified as having concerns about CT doses in comparison to Irish CSRs, possibly as a result of recent literature and cases in the USA where radiation incidents have received considerable academic and public media exposure. Although no radiologist respondents commented further on this, CSRs did reference the increased use of multiple and repeat scans and the higher doses involved with thinner slice scanning. A number of CSRs had no concerns regarding their doses, largely due to recent audits that were carried out. This emphasises the value of regular QA programmes, which can promote high standards and ensure patient safety as well as instilling confidence in CT users.

ATCM operation

Dose reductions of between 35 and 60 % [26] have already been reported in various studies when ATCM is used in comparison to fixed tube current settings. However, such reductions are dependent on operators both correctly positioning the patient within the gantry and selecting an appropriate image quality metric for the examination. Encouragingly, the majority of both cohorts (85 % and 90 %) were well aware that ATCM can be influenced by the centring of patients within the gantry, especially as reports suggest dose variations of up to 41 % can be associated with incorrect use [14]. Image quality measures must also be specified by operators when using ATCM, usually in the form of either a noise index setting or quality reference tube current. It is of concern that almost a third of CSRs here felt that the non-contrast phase of an abdominal examination requires the same noise setting as the contrast phase. Conducting multiphase studies can involve considerable patient doses [27] and an effective method of optimising these is by reducing the image quality requirements of non-contrast or delayed scans, as the same level of image quality is not necessarily required in each [28].

There was a distinct lack of consensus amongst both groups regarding the use of ATCM in the presence of metallic implants, with more than half of CSRs and three quarters of radiologists surveyed believing that ATCM should not be used in such instances. As such software operates by measuring the patient attenuation and adjusting the tube current accordingly, theoretically the increased attenuation caused by implants or prostheses could result in dramatic increases in tube current and consequently patient doses. This may also occur despite the fact that increasing the tube current has little if any effect on the beam hardening and streak artefacts created by such metal and merely contributes to additional patient dose [29]. However, studies have shown that while the tube current may increase over the region of the implant, overall ATCM still provides a net reduction in tube current when compared with fixed tube current settings [30]. Also, more recent versions of ATCM software can recognise the presence of such implants during the initial scanogram and disregard the increased attenuation from the tube current calculation [31], thus avoiding unnecessary increases altogether. However, the results here would indicate that patients may not be fully benefitting from ATCM technology, emphasising the importance of CT users keeping abreast of developments in the modality to ensure doses are optimised. This is especially important given the continued development in the area with tube potential modulation [32] now being offered by some manufacturers.

Peak kilovoltage (kVp)

The peak kilovoltage controls the overall energy of the X-ray photons, so any change will influence the number of photons penetrating the body tissue, with a resultant effect on both radiation dose and image noise [20]. While most CT systems operate at a standard 120 kVp, alternative values from 80 to 140 kVp are increasingly available. Studies [33–35] already highlight the optimisation potential of appropriate kVp selection, especially for patients below a certain size and also during angiographic studies, given the added advantage of increased vessel attenuation with lower tube voltages [36]. Almost 40 % of CSRs did not associate reductions in kVp with image noise increases, which is of concern, although this may be due to the belief that ATCM systems will automatically increase the tube current to compensate for any change in kVp to ensure the image noise is maintained at a constant level. However, ATCM systems operate differently for each manufacturer, so users need to be familiar with their own particular system to understand how variations in kVp selection can affect CT image quality [37]. Only 52 % of CSRs and 64 % of radiologist respondents agreed that lower tube voltages result in increased vessel enhancement during angiographic examinations. It is this increased vessel attenuation and contrast-to-noise ratio that facilitates dose reductions of up to 56 % during such studies [38], and a lack of understanding of this parameter will limit the potential for optimisation within such studies.

Tube current (mA)

While the majority of both groups of respondents correctly agreed that tube current has a linear relationship with dose (71 % of radiologists and 80 % of CSRs), some confusion existed regarding the impact of tube current on noise levels, with a majority of both cohorts incorrectly believing that tube current has a linear relationship with image noise, whereas in fact noise is approximately inversely proportional to the square root of the tube current [19, 39]. This has considerable relevance as noise is likely the single largest determinant of acceptable image quality within CT and, if attempting to optimise patient doses, users have to understand its relationship with each of the influencing CT parameters, should they wish to maximise the optimisation potential. Noise reduces the low contrast detectability within CT images and may hence obscure important anatomical findings [40]. Window width and reconstruction filters [20, 40] also influence the perception of noise within CT images, but again a considerable number from each cohort were not in agreement. Appropriate manipulation of both of these parameters can significantly alter the visibility of noise within images and, coupled with optimisation of the above-mentioned CT parameters, can assist the lowering of radiation doses within CT.
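The two relationships discussed above can be made concrete with a short sketch. It assumes that all other parameters are held fixed, that dose scales linearly with tube current, and that noise follows the approximate inverse square-root rule; the function names are illustrative only.

```python
import math


def relative_dose(current_ratio):
    """Dose relative to baseline; linear in tube current, all else fixed."""
    return current_ratio


def relative_noise(current_ratio):
    """Noise relative to baseline; approximately 1 / sqrt(tube current)."""
    return 1.0 / math.sqrt(current_ratio)


# current_ratio = new tube current / original tube current
for ratio in (1.0, 0.5, 0.25):
    print(ratio, relative_dose(ratio), round(relative_noise(ratio), 2))
```

Under these assumptions, halving the tube current halves the dose but raises noise by a factor of about 1.41 rather than 2; the tube current would have to fall to one quarter before noise doubled.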

Awareness of DRLs

All CT users need to be aware of indicators of overexposure such as through the adoption and use of diagnostic reference levels (DRLs). Overexposure in CT is quite difficult to recognise otherwise, given the wide latitude of the modality, in which excessive exposures do not adversely affect image quality. Already, incidents of CT overexposure have attracted widespread academic and media interest in recent years [5, 6] and, given the large doses involved in CT, the importance of DRLs cannot be stressed enough. It is clear that the majority of radiologists here are unaware of recommended DRL values, which increases the possibility for excessive doses to go undetected. Although not compulsory, monitoring of patient doses and comparisons to national values are routinely recommended [41] and perhaps mandatory reporting of CT doses within the diagnostic report would increase the use of this important optimisation tool. Self-reported knowledge of DRLs amongst the CSR cohort is much higher with up to 86 % of respondents reporting that they knew specific values for the five different examinations asked, although this higher response rate may have been influenced by the longer time period CSRs had to complete the questionnaire. CSRs were most familiar with values for the brain (86 %), thorax (86 %) and abdomen (81 %) examinations, which is important given the current prevalence of these examinations, which constitute approximately 80 % of the total number of CT examinations annually in Ireland [10]. However, a sizeable proportion of respondents when asked to quote these listed incorrect values. This would stress the importance of having DRL values ready to hand in all CT facilities and displayed in a prominent position so that staff are both aware of and can utilise these regularly.
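One way a department might use DRLs in a routine audit is to compare the median of a local dose sample for each examination with the corresponding reference level. The sketch below is a minimal illustration only: the numbers are placeholders, not published DRL values, the dose metric is left unspecified, and the comparison of a local median against the DRL is an assumed convention rather than a method described in this study.

```python
from statistics import median


def exceeds_drl(local_doses, drl):
    """True when the median of a local dose sample is above the reference level."""
    return median(local_doses) > drl


# Placeholder numbers for illustration only; not published DRL values.
hypothetical_drl = {"brain": 100.0, "abdomen": 50.0}
hypothetical_audit = {
    "brain": [80.0, 95.0, 110.0, 90.0],
    "abdomen": [55.0, 60.0, 48.0],
}

for exam, doses in hypothetical_audit.items():
    print(exam, exceeds_drl(doses, hypothetical_drl[exam]))
```

In this made-up audit the brain sample stays below its reference level while the abdomen sample exceeds it, which would be the prompt to review the abdomen protocol.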

Limitations

This study suffered from a number of limitations, the principal ones being the selective cohorts utilised and the small sample sizes included. The response rates of 22 % and 32 % from the two cohorts, while low, are in line with other studies and potentially typical of questionnaire surveys [42], especially those investigating knowledge levels. This study included lead experts in radiology and expert CT radiographers, so it is not fully representative of either profession and the sample may over-represent expert practitioners. Further work is encouraged to gauge the understanding amongst cross-sections of both professions using larger sample sizes. It could be argued that selection bias may also be inherent within this questionnaire, as respondents who are more confident of their answers are more likely to participate. Finally, although all participants were asked not to refer to other information sources, such as textbooks or the internet, while completing the questionnaires, this could not be guaranteed given the nature of the survey.

Conclusions

Whilst the findings of this questionnaire study are principally positive, the need for ongoing education focused upon optimisation has been identified. Obvious deficiencies were identified amongst both cohorts, especially in relation to the influence of certain parameters on both patient dose and image quality, as well as knowledge of specific diagnostic reference levels. This may have a considerable impact on patient doses and limit the optimisation potential in clinical practice.

References

UNSCEAR (2008) Sources and effects of ionizing radiation. Report to the general assembly with scientific annexes. United Nations, New York

NCRP (2009) Ionizing radiation exposure of the population of the United States. National Council on Radiation Protection and Measurement, Bethesda

Brenner DJ, Hall EJ (2007) Computed tomography—an increasing source of radiation exposure. N Engl J Med 357:2277–2284

Pearce MS et al (2012) Radiation exposure from CT scans in childhood and subsequent risk of leukaemia and brain tumours: a retrospective cohort study. Lancet 380:499–505

Bogdanich W (2010) After stroke scans, patients face serious health risks. In: The New York Times, New York

Zarembo A (2009) Cedars-Sinai investigated for significant overdoses of 206 patients. In: Los Angeles Times, Los Angeles

USDHHS (2005) Report on Carcinogens, Eleventh Edition. US Department of Health and Human Services, Public Health Service, National Toxicology Program, Washington

Valentin J; International Commission on Radiological Protection (2007) Managing patient dose in multi-detector computed tomography (MDCT). Publication 102. Ann ICRP 37:1–79

Amis ES Jr et al (2007) American College of Radiology White Paper on radiation dose in medicine. J Am Coll Radiol 4:272–284

Foley SJ, McEntee MF, Rainford LA (2012) Establishment of CT diagnostic reference levels in Ireland. Br J Radiol 85:1390–1397

Hollingsworth C et al (2003) Helical CT of the body: a survey of techniques used for pediatric patients. AJR Am J Roentgenol 180:401–406

Rizzo S et al (2006) Comparison of angular and combined automatic tube current modulation techniques with constant tube current CT of the abdomen and pelvis. AJR Am J Roentgenol 186:673–679

Yu L et al (2010) Automatic selection of tube potential for radiation dose reduction in CT: a general strategy. Med Phys 37:234–243

Matsubara K et al (2009) Misoperation of CT automatic tube current modulation systems with inappropriate patient centering: phantom studies. AJR Am J Roentgenol 192:862–865

Gudjonsdottir J et al (2009) Efficient use of automatic exposure control systems in computed tomography requires correct patient positioning. Acta Radiol 50:1035–1041

HSE (2010) Population dose from CT Scanning: 2009. Health Service Executive, Dublin

Edwards P et al (2007) Methods to increase response rates to postal questionnaires. Cochrane Database Syst Rev 18:MR000008

Shih T-H, Fan X (2009) Comparing response rates in e-mail and paper surveys: a meta-analysis. Educ Res Rev 4:26–40

Seeram E (2009) Computed tomography: Physical principles, clinical applications and quality control, 3rd edn. Saunders, St Louis

Hsieh J (2009) Computed tomography. Principles, design, artifacts and recent advances, 2nd edn. SPIE & Wiley, Bellingham

ACR (2011) ACR practice guidelines for performing and interpreting diagnostic computed tomography. American College of Radiology, Reston

Kalra MK et al (2005) Detection of urinary tract stones at low-radiation-dose CT with z-axis automatic tube current modulation: phantom and clinical studies. Radiology 235:523–529

Kluner C et al (2006) Does ultra-low-dose CT with a radiation dose equivalent to that of KUB suffice to detect renal and ureteral calculi? J Comput Assist Tomogr 30:44–50

Christe A et al (2011) CT screening and follow-up of lung nodules: Effects of tube current-time setting and nodule size and density on detectability and of tube current-time setting on apparent size. AJR Am J Roentgenol 197:623–630

Tack D et al (2003) Comparison between low-dose and standard-dose multidetector CT in patients with suspected chronic sinusitis. AJR Am J Roentgenol 181:939–944

Soderberg M, Gunnarsson M (2010) Automatic exposure control in computed tomography—an evaluation of systems from different manufacturers. Acta Radiol 51:625–634

Guite KM et al (2011) Ionizing radiation in abdominal CT: Unindicated multiphase scans are an important source of medically unnecessary exposure. J Am Coll Radiol 8:756–761

McNitt-Gray M (2011) Tube current modulation approaches: overview, practical issues and potential pitfalls. AAPM 2011 Summit on CT Dose, Denver

Haramati N et al (1994) CT scans through metal scanning technique versus hardware composition. Comput Med Imaging and Graph 18:429–434

Rizzo SM et al (2005) Do metallic endoprostheses increase radiation dose associated with automatic tube-current modulation in abdominal-pelvic MDCT? A phantom and patient study. AJR Am J Roentgenol 184:491–496

Dalal T et al (2005) Metallic prosthesis: technique to avoid increase in CT radiation dose with automatic tube current modulation in a phantom and patients. Radiology 236:671–675

Winklehner A et al (2011) Automated attenuation-based tube potential selection for thoracoabdominal computed tomography angiography: improved dose effectiveness. Investig Radiol 46:767–773

Sigal-Cinqualbre AB et al (2004) Low-kilovoltage multi-detector row chest CT in adults: feasibility and effect on image quality and iodine dose. Radiology 231:169–174

Leschka S et al (2008) Low kilovoltage cardiac dual-source CT: attenuation, noise, and radiation dose. Eur Radiol 18:1809–1817

Kim MJ et al (2009) Multidetector computed tomography chest examinations with low-kilovoltage protocols in adults: effect on image quality and radiation dose. J Comput Assist Tomogr 33:416–421

Bogot NR et al (2011) Image quality of low-energy pulmonary CT angiography: comparison with standard CT. AJR Am J Roentgenol 197:W273–W278

Keat N (2005) CT scanner automatic exposure control systems. Report 05016. ImPACT, London

Nakayama Y et al (2005) Abdominal CT with low tube voltage: preliminary observations about radiation dose, contrast enhancement, image quality, and noise. Radiology 237:945–951

Primak AN et al (2006) Relationship between noise, dose, and pitch in cardiac multidetector row CT. Radiographics 26:1785–1794

Goldman LW (2007) Principles of CT: radiation dose and image quality. J Nucl Med Technol 35:213–225

Husband J, Padhani A (2006) Recommendations for cross-sectional imaging in cancer management. Royal College of Radiologists, London

Kumar R (2011) Research methodology. A step by step guide for beginners. SAGE Publications, London

Conflicts of interest

The authors declare no conflicts of interest. No funding was received for this work.

Appendix: Questionnaire

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

About this article

Cite this article

Foley, S.J., Evanoff, M.G. & Rainford, L.A. A questionnaire survey reviewing radiologists’ and clinical specialist radiographers’ knowledge of CT exposure parameters. Insights Imaging 4, 637–646 (2013). https://doi.org/10.1007/s13244-013-0282-4
