Abstract

Infrastructure systems are increasingly facing new security threats due to the vulnerabilities of cyber-physical components that support their operation. In this article, we investigate how the infrastructure operator (defender) should prioritize the investment in securing a set of facilities in order to reduce the impact of a strategic adversary (attacker) who can target a facility to increase the overall usage cost of the system. We adopt a game-theoretic approach to model the defender-attacker interaction and study two models: a normal form game, in which both players move simultaneously, and a sequential game, in which the attacker moves after observing the defender’s strategy. For each model, we provide a complete characterization of how the set of facilities that are secured by the defender in equilibrium varies with the costs of attack and defense. Importantly, our analysis provides a sharp condition relating the cost parameters for which the defender has the first-mover advantage. Specifically, we show that to fully deter the attacker from targeting any facility, the defender needs to proactively secure all “vulnerable facilities” at an appropriate level of effort. We illustrate the outcome of the attacker–defender interaction on a simple transportation network. We also suggest a dynamic learning setup to understand how this outcome can affect the ability of imperfectly informed users to make their decisions about using the system in the post-attack stage.


1 Introduction

In this article, we consider the problem of strategic allocation of defense effort to secure one or more facilities of an infrastructure system that is prone to a targeted attack by a malicious adversary. The setup is motivated by the recent incidents and projected threats to critical infrastructures such as transportation, electricity, and urban water networks [38, 41, 43]. Two of the well-recognized security concerns faced by infrastructure operators are: (i) How to prioritize investments among facilities that are heterogeneous in terms of the impact that their compromise can have on the overall efficiency (or usage cost) of the system; and (ii) Whether or not an attacker can be fully deterred from launching an attack by proactively securing some of the facilities. Our work addresses these questions by focusing on the most basic form of strategic interaction between the system operator (defender) and an attacker, modeled as a normal form (simultaneous) or a sequential (Stackelberg) game. The normal form game is relevant to situations in which the attacker cannot directly observe the chosen security plan, whereas the sequential game applies to situations where the defender proactively secures some facilities, and the attacker can observe the defense strategy.

In recent years, many game-theoretical models have been proposed to study problems in cyber-physical security of critical infrastructure systems; see [5, 35] for a survey of these models. These models are motivated by the questions of strategic network design [25, 34, 45], intrusion detection [4, 18, 23, 46], interdependent security [6, 39], network interdiction [22, 49], and attack-resilient estimation and control [17, 47].

Our model is relevant for assessing strategic defense decisions for an infrastructure system viewed as a collection of facilities. In our model, each facility is considered as a distinct entity for the purpose of investment in defense, and multiple facilities can be covered by a single investment strategy. The attacker can target a single facility and compromise its operation, thereby affecting the overall operating efficiency of the system. Both players choose randomized strategies. The performance of the system is evaluated by a usage cost, whose value depends on the actions of both players. In particular, if an undefended facility is targeted by the attacker, it is assumed to be compromised, and this outcome is reflected as a change in the usage cost. Naturally, the defender aims to maintain a low usage cost, while the attacker wishes to increase the usage cost. The attacker (resp. defender) incurs a positive cost in targeting (resp. securing) a unit facility. Thus, both players face a trade-off between the usage cost and the attack/defense costs, which results in qualitatively different equilibrium regimes.

We analyze both normal form and sequential games in the above-mentioned setting. First, we provide a complete characterization of the equilibrium structure in terms of the relative vulnerability of different facilities and the costs of defense/attack for both games. Secondly, we identify ranges of attack and defense costs for which the defender gets the first-mover advantage by investing in proactive defense. Furthermore, we relate the outcome of this game (post-attack stage) to a dynamic learning problem in which the users of the infrastructure system are not fully informed about the realized security state (i.e., the identity of compromised facility).

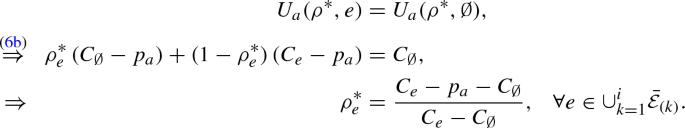

We now outline our main results. To begin our analysis, we make the following observations. Analogous to [24], we can represent the defender’s mixed strategy by a vector with elements corresponding to the probabilities for each facility being secured. The defender’s mixed strategy can also be viewed as her effort on each facility. Moreover, the attacker/defender only targets/secures facilities whose disruption will result in an increase in the usage cost (Proposition 1). If the increase in the usage cost of a facility is larger than the cost of attack, then we say that it is a vulnerable facility.

Our approach to characterizing Nash equilibrium (NE) of the normal form game is based on the fact that it is strategically equivalent to a zero-sum game. Hence, the set of attacker’s equilibrium strategies can be obtained as the optimal solution set of a linear optimization program (Proposition 2). For any given attack cost, we show that there exists a threshold cost of defense, which distinguishes two equilibrium regime types, named as type I and type II regimes. Theorem 1 shows that when the defense cost is lower than the cost threshold (type I regimes), the total attack probability is positive but less than 1, and all vulnerable facilities are secured by the defender with positive probability. On the other hand, when the defense cost is higher than the threshold (type II regimes), the total attack probability is 1, and some vulnerable facilities are not secured at all.

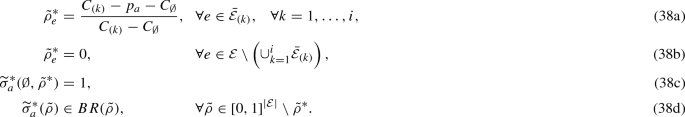

We develop a new approach to characterize the subgame perfect equilibrium (SPE) of the sequential game, noting that the strategic equivalence to zero-sum game no longer holds in this case. In this game, the defender, as the first mover, either proactively secures all vulnerable facilities with a threshold security effort so that the attacker does not target any facility, or leaves at least one vulnerable facility secured with an effort less than the threshold while the total attack probability is 1. For any attack cost, we establish another threshold cost of the defense, which is strictly higher than the corresponding threshold in the normal form game. This new threshold again distinguishes the equilibrium strategies into two regime types, named as type \(\widetilde{\text {I}}\) and type \(\widetilde{\text {II}}\) regimes. Theorem 2 shows that when the defense cost is lower than the cost threshold (type \(\widetilde{\text {I}}\) regimes), the defender can fully deter the attacker by proactively securing all vulnerable facilities with the threshold security effort. On the other hand, when the defense cost is higher than the threshold (type \(\widetilde{\text {II}}\) regimes), the defender maintains the same level of security effort as that in NE, while the total attack probability is 1.

Our characterization shows that both NE and SPE satisfy the following intuitive properties: (i) both the defender and attacker prioritize the facilities that result in a high usage cost when compromised; (ii) the attack and defense costs jointly determine the set of facilities that are targeted or secured in equilibrium. On the one hand, as the attack cost decreases, more facilities are vulnerable to attack. On the other hand, as the defense cost decreases, the defender secures more facilities with positive effort, and eventually when the defense cost is below a certain threshold (defined differently in each game), all vulnerable facilities are secured with a positive effort; (iii) each player’s equilibrium payoff is non-decreasing in the opponent’s cost, and non-increasing in her own cost.

It is well known in the literature on two-player games that so long as both players can choose mixed strategies, the equilibrium utility of the first mover in a sequential game is no less than that in a normal form game [8, p. 126], [48]. However, cases can be found where the first-mover advantage changes from positive to zero when the attacker’s observed signal of the defender’s strategy is noisy [7]. In the security game setting, the paper [12] analyzed a game where there are two facilities, and the attacker’s valuation of each facility is private information. The authors identify a condition under which the defender’s equilibrium utility is strictly higher when her strategy can be observed by the attacker. In contrast, our model considers multiple facilities and assumes that both players have complete information of the usage cost of each facility.

In fact, for our model, we are able to provide sharp conditions under which proactive defense strictly increases the defender’s utility. Given any attack cost, unless the defense cost is “relatively high” (higher than the threshold cost in the sequential game), proactive defense is advantageous in terms of strictly improving the defender’s utility and fully deterring the attack. However, if the defense cost is “relatively medium” (lower than the threshold cost in sequential game, but higher than that in the normal form game), a higher security effort on each vulnerable facility is required to gain the first-mover advantage. Finally, if the defense cost is “relatively low” (lower than the threshold cost in the normal form game), then the defender can gain advantage by simply making the first move with the same level of security effort as that in the normal form game.

Note that our approach to characterizing NE and SPE can be readily extended to models with facility-dependent cost parameters and less than perfect defense. We conjecture that a different set of techniques will be required to tackle the more general situation in which the attacker can target multiple facilities at the same time; see [22] for related work in this direction. However, even when the attacker targets multiple facilities, one can find game parameters for which the defender is always strictly better off in the sequential game.

Finally, we provide a brief discussion on rational learning dynamics, aimed at understanding how the outcome of the attacker–defender interaction—which may or may not result in compromise of a facility (state)—affects the ability of system users to learn about the realized state through a repeated use of the system. A key issue is that the uncertainty about the realized state can significantly impact the ability of users to make decisions to ensure that their long-term cost corresponds to the true usage cost of the system. We explain this issue using a simple transportation network as an example, in which rational travelers (users) need to learn about the identity of the facility that is likely to be compromised using imperfect information about the attack and noisy realizations of travel time in each stage of a repeated routing game played over the network.

The results reported in this article contribute to the study on the allocation of defense resources on facilities against strategic adversaries, as discussed in [12, 40]. The underlying assumption that drives our analysis is that an attack on each facility can be treated independently for the purpose of evaluating its impact on the overall usage cost. Other papers that also make this assumption include [2, 11, 13, 16]. Indeed, when the impact of facility compromises is related to the network structure, facilities can no longer be treated independently, and the network structure becomes a crucial factor in analyzing the defense strategy [26]. Additionally, network connections can also introduce the possibility of cascading failure among facilities, which is addressed in [1, 29]. These settings are not covered by our model.

The paper is structured as follows: In Sect. 2, we introduce the model of both games and discuss the modeling assumptions. We provide preliminary results to facilitate our analysis in Sect. 3. Section 4 characterizes NE, and Sect. 5 characterizes SPE. Section 6 compares both games. We discuss some extensions of our model and briefly introduce dynamic aspects in Sect. 7.

All proofs are included in the Appendix.

2 The Model

2.1 Attacker–Defender Interaction: Normal Form Versus Sequential Games

Consider an infrastructure system modeled as a set of components (facilities) \(\mathcal {E}\). To defend the system against an external malicious attack, the system operator (defender) can secure one or more facilities in \(\mathcal {E}\) by investing in appropriate security technology. The set of facilities in question can include cyber or physical elements that are crucial to the functioning of the system. These facilities are potential targets for a malicious adversary whose goal is to compromise the overall functionality of the system by gaining unauthorized access to certain cyber-physical elements. The security technology can be a combination of proactive mechanisms (authentication and access control) or reactive ones (attack detection and response). Since our focus is on modeling the strategic interaction between the attacker and defender at a system level, we do not consider the specific functionalities of individual facilities or the protection mechanisms offered by various technologies.

We now introduce our game-theoretic model. Let us denote a pure strategy of the defender as \(s_d\subseteq \mathcal {E}\), with \(s_d\in S_d= 2^{\mathcal {E}}\). The cost of securing any facility is given by the parameter \(p_d\in \mathbb {R}_{>0}\). Thus, the total defense cost incurred in choosing a pure strategy \(s_d\) is \(|s_d| \cdot p_d\), where \(|s_d|\) is the cardinality of \(s_d\) (i.e., the number of secured facilities). The attacker chooses to target a single facility \(e\in \mathcal {E}\) or not to attack. We denote a pure strategy of the attacker as \(s_a\in S_a=\mathcal {E}\cup \{\emptyset \}\). The cost of an attack is given by the parameter \(p_a\in \mathbb {R}_{>0}\), and it reflects the effort that the attacker needs to spend in order to successfully target a single facility and compromise its operation.

We assume that prior to the attack, the usage cost of the system is \(C_{\emptyset }\). This cost represents the level of efficiency with which the defender is able to operate the system for its users. A higher usage cost reflects lower efficiency. If a facility \(e\) is targeted by the attacker but not secured by the defender, we consider that \(e\) is compromised and the usage cost of the system changes to \(C_{e}\). Therefore, given any pure strategy profile \((s_d, s_a)\), the usage cost after the attacker–defender interaction, denoted \(C(s_d, s_a)\), can be expressed as follows:

\[
C(s_d, s_a) = \begin{cases} C_{e}, & \text{if } s_a = e \text{ and } e \notin s_d, \\ C_{\emptyset }, & \text{otherwise.} \end{cases} \tag{1}
\]

To study the effect of timing of the attacker–defender interaction, prior literature on security games has studied both normal form game and sequential game [5]. We study both models in our setting. In the normal form game, denoted \(\varGamma \), the defender and the attacker move simultaneously. On the other hand, in the sequential game, denoted \(\widetilde{\varGamma }\), the defender moves in the first stage and the attacker moves in the second stage after observing the defender’s strategy. We allow both players to use mixed strategies. In \(\varGamma \), we denote the defender’s mixed strategy as \(\sigma _d{\mathop {=}\limits ^{\varDelta }}\left( \sigma _d(s_d)\right) _{s_d\in S_d} \in \varDelta (S_d)\), where \(\sigma _d(s_d)\) is the probability that the set of secured facilities is \(s_d\). Similarly, a mixed strategy of the attacker is \(\sigma _a{\mathop {=}\limits ^{\varDelta }}\left( \sigma _a(s_a)\right) _{s_a\in S_a} \in \varDelta (S_a)\), where \(\sigma _a(s_a)\) is the probability that the realized action is \(s_a\). Let \(\sigma =\left( \sigma _d, \sigma _a\right) \) denote a mixed strategy profile. In \(\widetilde{\varGamma }\), the defender’s mixed strategy \(\widetilde{\sigma }_d{\mathop {=}\limits ^{\varDelta }}\left( \widetilde{\sigma }_d(s_d)\right) _{s_d\in S_d} \in \varDelta (S_d)\) is defined analogously to that in \(\varGamma \). The attacker’s strategy is a map from \(\varDelta (S_d)\) to \(\varDelta (S_a)\), denoted by \(\widetilde{\sigma }_a(\widetilde{\sigma }_d) {\mathop {=}\limits ^{\varDelta }}\left( \widetilde{\sigma }_a(s_a, \widetilde{\sigma }_d)\right) _{s_a\in S_a} \in \varDelta (S_a)\), where \(\widetilde{\sigma }_a(s_a, \widetilde{\sigma }_d)\) is the probability that the realized action is \(s_a\) when the defender’s strategy is \(\widetilde{\sigma }_d\). A strategy profile in this case is denoted as \(\widetilde{\sigma }= \left( \widetilde{\sigma }_d, \widetilde{\sigma }_a(\widetilde{\sigma }_d)\right) \).

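The defender’s utility comprises two parts: the negative of the usage cost as given in (1) and the negative of the defense cost incurred in securing the system. Similarly, the attacker’s utility is the usage cost net of the attack cost. For a pure strategy profile \(\left( s_d, s_a\right) \), the utilities of the defender and attacker can be, respectively, expressed as follows:

\[
u_d(s_d, s_a) = -C(s_d, s_a) - p_d\cdot |s_d|, \qquad u_a(s_d, s_a) = C(s_d, s_a) - p_a\cdot |s_a|,
\]

where \(|s_a|=1\) if \(s_a\in \mathcal {E}\) and \(|s_a|=0\) if \(s_a=\emptyset \).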

For a mixed strategy profile \(\left( \sigma _d, \sigma _a\right) \), the expected utilities can be written as:

\[
U_d(\sigma _d, \sigma _a) = -\mathbb {E}_{\sigma }[C] - p_d\cdot \mathbb {E}_{\sigma _d}[|s_d|], \qquad U_a(\sigma _d, \sigma _a) = \mathbb {E}_{\sigma }[C] - p_a\cdot \mathbb {E}_{\sigma _a}[|s_a|], \tag{2}
\]

where \(\mathbb {E}_{\sigma } [C]\) is the expected usage cost, and \(\mathbb {E}_{\sigma _d}[|s_d|]\) (resp. \(\mathbb {E}_{\sigma _a} [|s_a|]\)) is the expected number of defended (resp. targeted) facilities, i.e.:

\[
\mathbb {E}_{\sigma }[C] = \sum _{s_d\in S_d}\sum _{s_a\in S_a} C(s_d, s_a)\, \sigma _d(s_d)\, \sigma _a(s_a), \qquad \mathbb {E}_{\sigma _d}[|s_d|] = \sum _{s_d\in S_d} |s_d|\, \sigma _d(s_d), \qquad \mathbb {E}_{\sigma _a}[|s_a|] = \sum _{e\in \mathcal {E}} \sigma _a(e).
\]

An equilibrium outcome of the game \(\varGamma \) is defined in the sense of Nash equilibrium (NE). A strategy profile \(\sigma ^*=(\sigma _d^*, \sigma _a^*)\) is a NE if:

\[
\begin{aligned}
U_d(\sigma _d^*, \sigma _a^*)&\ge U_d(\sigma _d, \sigma _a^*), \quad \forall \sigma _d\in \varDelta (S_d),\\
U_a(\sigma _d^*, \sigma _a^*)&\ge U_a(\sigma _d^*, \sigma _a), \quad \forall \sigma _a\in \varDelta (S_a).
\end{aligned}
\]

In the sequential game \(\widetilde{\varGamma }\), the solution concept is that of a subgame perfect equilibrium (SPE), which is also known as Stackelberg equilibrium. A strategy profile \(\widetilde{\sigma }^*=(\widetilde{\sigma }_d^*, \widetilde{\sigma }_a^*(\widetilde{\sigma }_d))\) is a SPE if:

\[
\begin{aligned}
U_d(\widetilde{\sigma }_d^*, \widetilde{\sigma }_a^*(\widetilde{\sigma }_d^*))&\ge U_d(\widetilde{\sigma }_d, \widetilde{\sigma }_a^*(\widetilde{\sigma }_d)), \quad \forall \widetilde{\sigma }_d\in \varDelta (S_d),\\
U_a(\widetilde{\sigma }_d, \widetilde{\sigma }_a^*(\widetilde{\sigma }_d))&\ge U_a(\widetilde{\sigma }_d, \widetilde{\sigma }_a), \quad \forall \widetilde{\sigma }_d\in \varDelta (S_d),\ \forall \widetilde{\sigma }_a\in \varDelta (S_a).
\end{aligned}
\]

Since both \(S_d\) and \(S_a\) are finite sets, and we consider mixed strategies, both NE and SPE exist.

2.2 Model Discussion

One of our main assumptions is that the attacker’s capability is limited to targeting at most one facility, while the defender can invest in securing multiple facilities. Although this assumption appears to be somewhat restrictive, it enables us to derive analytical results on the equilibrium structure for a system with multiple facilities. The assumption can be justified in situations where the attacker can only target system components in a localized manner. Thus, a facility can be viewed as a set of collocated components that can be simultaneously targeted by the attacker. For example, in a transportation system, a facility can be a vulnerable link (edge), or a set of links that are connected by a vulnerable node (an intersection or a hub). In Sect. 7.1, we briefly discuss the issues in solving a more general game where multiple facilities can be simultaneously targeted by the attacker.

Secondly, our model assumes that the costs of attack and defense are identical across all facilities. We make this assumption largely to avoid the notational burden of analyzing the effect of facility-dependent attack/defense cost parameters on the equilibrium structures. In fact, as argued in Sect. 7.1, the qualitative properties of equilibria still hold when cost parameters are facility-dependent. However, characterizing the equilibrium regimes in this case can be quite tedious and may not necessarily provide new insights on strategic defense investments.

Thirdly, we allow both players to choose mixed strategies. Indeed, mixed strategies are commonly considered in security games as a pure NE may not always exist. A mixed strategy entails a player’s decision to introduce randomness in her behavior, i.e., the manner in which a facility is targeted (resp. secured) by the attacker (resp. defender). Consider, for example, the problem of inspecting a transportation network facing risk of a malicious attack. In this problem, a mixed strategy can be viewed as randomized allocation of inspection effort on subsets of facilities. Mixed strategy of the attacker can be similarly interpreted.

Fourthly, we assume that the defender has the technological means to perfectly secure a facility. In other words, an attack on a secured facility cannot impact its operation. As we will see in Sect. 3, the defender’s mixed strategy can be viewed as the level of security effort on each facility, where the effort level 1 (maximum) means perfect security, and 0 (minimum) means no security. Under this interpretation, the defense cost \(p_d\) is the cost of perfectly securing a unit facility (i.e., with maximum level of effort), and the expected defense cost is \(p_d\) scaled by the security effort defined by the defender’s mixed strategy.

Fifthly, we do not consider a specific functional form for modeling the usage cost. In our model, for any facility \(e\in \mathcal {E}\), the difference between the post-attack usage cost \(C_{e}\) and the pre-attack cost \(C_{\emptyset }\) represents the change of the usage cost of the system when \(e\) is compromised. This change can be evaluated based on the type of attacker–defender interaction one is interested in studying. For example, in situations when attack on a facility results in its complete disruption, one can use a connectivity-based metric such as the number of active source–destination paths or the number of connected components to evaluate the usage cost [25, 26]. On the other hand, in situations when facilities are congestible resources and an attack on a facility increases the users’ cost of accessing it, the system’s usage cost can be defined as the average cost for accessing (or routing through) the system. This cost can be naturally evaluated as the user cost in a Wardrop equilibrium [13], although socially optimal cost has also been considered in the literature [3].

Finally, we note that for the purpose of our analysis, the usage cost as given in (1) fully captures the impact of players’ actions on the system. For any two facilities \(e, e^{'}\in \mathcal {E}\), the ordering of \(C_{e}\) and \(C_{e^{'}}\) determines the relative scale of impact of the two facilities. As we show in Sects. 4–5, the order of cost functions in the set \(\{C_e\}_{e\in \mathcal E}\) plays a key role in our analysis approach. Indeed, the usage cost is intimately linked with the network structure and way of operation (e.g., how individual users are routed through the network and how their costs are affected by a compromised facility). Barring a simple (yet illustrative) example, we do not elaborate further on how the network structure and/or the functional form of usage cost changes the interpretations of equilibrium outcome. We also do not discuss the computational aspects of arriving at the ordering of usage costs.

3 Rationalizable Strategies and Aggregate Defense Effort

We introduce two preliminary results that are useful in our subsequent analysis. Firstly, we show that the defender’s strategy can be equivalently represented by a vector of facility-specific security effort levels. Secondly, we identify the set of rationalizable strategies of both players.

For any defender’s mixed strategy \(\sigma _d\in \varDelta (S_d)\), the corresponding security effort vector is \(\rho (\sigma _d)=\left( \rho _e(\sigma _d)\right) _{e\in \mathcal {E}}\), where \(\rho _e(\sigma _d)\) is the probability that facility \(e\) is secured:

\[
\rho _e(\sigma _d) = \sum _{s_d\ni e} \sigma _d(s_d), \quad \forall e\in \mathcal {E}.
\]

In other words, \(\rho _e(\sigma _d)\) is the level of security effort exerted by the defender on facility \(e\) under the security plan \(\sigma _d\). Since \(\sigma _d(s_d) \ge 0\) for any \(s_d\in S_d\), we obtain that \(0 \le \rho _e(\sigma _d)=\sum _{s_d\ni e} \sigma _d(s_d) \le \sum _{s_d\in S_d} \sigma _d(s_d) = 1\). Hence, any \(\sigma _d\) induces a valid vector of probabilities \(\rho \in [0,1]^{|\mathcal {E}|}\). In fact, any vector \(\rho \in [0, 1]^{|\mathcal {E}|}\) can be induced by at least one feasible \(\sigma _d\). The following lemma provides a way to explicitly construct one such feasible strategy.

Lemma 1

Consider any feasible security effort vector \(\rho \in [0, 1]^{|\mathcal {E}|}\). Let m be the number of distinct positive values in \(\rho \), and define \(\rho _{(i)}\) as the i-th largest distinct value in \(\rho \), i.e., \(\rho _{(1)}> \dots > \rho _{(m)}\). The following defender’s strategy is feasible and induces \(\rho \):

\[
\begin{aligned}
\sigma _d\left( \left\{ e\in \mathcal {E}\,|\, \rho _e\ge \rho _{(i)}\right\} \right)&=\rho _{(i)}-\rho _{(i+1)}, \quad \forall i=1, \dots , m-1,\\
\sigma _d\left( \left\{ e\in \mathcal {E}\,|\, \rho _e\ge \rho _{(m)}\right\} \right)&=\rho _{(m)},\\
\sigma _d\left( \emptyset \right)&=1-\rho _{(1)}.
\end{aligned}
\]

For any remaining \(s_d\in S_d\), \(\sigma _d(s_d)=0\).
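
A minimal Python sketch of one construction that satisfies Lemma 1 is given here. It assumes the nested form in which the defender secures, for each distinct effort level, all facilities whose effort is at least that level. The function names `effort_to_mixed_strategy` and `induced_effort` and the example vector are hypothetical and serve only as illustration.

```python
from fractions import Fraction


def effort_to_mixed_strategy(rho):
    """Return a defender mixed strategy {frozenset of facilities: probability} inducing rho.

    rho maps each facility to a security effort in [0, 1].
    """
    # Distinct positive effort levels, largest first: rho_(1) > ... > rho_(m).
    levels = sorted({v for v in rho.values() if v > 0}, reverse=True)
    sigma_d = {}
    for i, level in enumerate(levels):
        # Secure every facility whose effort is at least the i-th level ...
        secured = frozenset(e for e, v in rho.items() if v >= level)
        # ... with probability equal to the gap to the next lower level (0 after the last).
        lower = levels[i + 1] if i + 1 < len(levels) else 0
        sigma_d[secured] = level - lower
    # The remaining probability mass goes to securing nothing.
    leftover = 1 - (levels[0] if levels else 0)
    if leftover > 0:
        sigma_d[frozenset()] = leftover
    return sigma_d


def induced_effort(sigma_d, facilities):
    """Probability that each facility is secured under sigma_d."""
    return {e: sum(p for s, p in sigma_d.items() if e in s) for e in facilities}


# Hypothetical effort vector on four facilities.
rho = {"e1": Fraction(3, 4), "e2": Fraction(1, 2), "e3": Fraction(1, 2), "e4": Fraction(0)}
sigma_d = effort_to_mixed_strategy(rho)
```

In this example the support of `sigma_d` has three pure strategies (secure `e1` only, secure `e1`, `e2`, `e3`, and secure nothing), and `induced_effort(sigma_d, rho)` recovers `rho`. The support size is at most the number of distinct positive effort levels plus one.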

We now re-express the player utilities in (2) in terms of \(\left( \rho (\sigma _d), \sigma _a\right) \) as follows:

\[
\begin{aligned}
U_d(\sigma _d, \sigma _a)&=-\sum _{e\in \mathcal {E}} \sigma _a(e)\left[ \rho _e(\sigma _d)\, C_{\emptyset }+\left( 1-\rho _e(\sigma _d)\right) C_{e}\right] -\sigma _a(\emptyset )\, C_{\emptyset }-p_d\sum _{e\in \mathcal {E}} \rho _e(\sigma _d),\\
U_a(\sigma _d, \sigma _a)&=\sum _{e\in \mathcal {E}} \sigma _a(e)\left[ \rho _e(\sigma _d)\, C_{\emptyset }+\left( 1-\rho _e(\sigma _d)\right) C_{e}\right] +\sigma _a(\emptyset )\, C_{\emptyset }-p_a\sum _{e\in \mathcal {E}} \sigma _a(e).
\end{aligned}
\]

Thus, for any given attack strategy and any two defense strategies, if the induced security effort vectors are identical, then the corresponding utility for each player is also identical. Henceforth, we denote the player utilities as \(U_d(\rho , \sigma _a)\) and \(U_a(\rho , \sigma _a)\) and use \(\sigma _d\) and \(\rho _e(\sigma _d)\) interchangeably in representing the defender’s strategy. For the sequential game \(\widetilde{\varGamma }\), we analogously denote the security effort vector given the strategy \(\widetilde{\sigma }_d\) as \(\tilde{\rho }(\widetilde{\sigma }_d)\), and the defender’s utility (resp. attacker’s utility) as \(\widetilde{U}_d(\tilde{\rho }, \widetilde{\sigma }_a)\) (resp. \(\widetilde{U}_a(\tilde{\rho }, \widetilde{\sigma }_a)\)).
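
The equivalence between the two representations can be checked numerically. The sketch below is illustrative only: the cost values, the strategy profiles, and the function names are hypothetical. It computes both players' expected utilities once by enumerating pure strategy pairs and once from the security effort vector, using exact rational arithmetic.

```python
from fractions import Fraction

NO_ATTACK = None  # stands for the attacker's "no attack" action


def usage_cost(s_d, s_a, C, C0):
    """Usage cost of a pure profile: C[s_a] if s_a is targeted and unsecured, else C0."""
    if s_a is not NO_ATTACK and s_a not in s_d:
        return C[s_a]
    return C0


def utilities_from_pure_strategies(sigma_d, sigma_a, C, C0, p_d, p_a):
    """Expected (defender, attacker) utilities by summing over pure strategy pairs."""
    exp_cost = sum(q_d * q_a * usage_cost(s_d, s_a, C, C0)
                   for s_d, q_d in sigma_d.items()
                   for s_a, q_a in sigma_a.items())
    exp_secured = sum(len(s_d) * q_d for s_d, q_d in sigma_d.items())
    exp_targeted = sum(q_a for s_a, q_a in sigma_a.items() if s_a is not NO_ATTACK)
    return -exp_cost - p_d * exp_secured, exp_cost - p_a * exp_targeted


def utilities_from_effort(rho, sigma_a, C, C0, p_d, p_a):
    """Expected (defender, attacker) utilities using only the effort vector rho."""
    exp_cost = sigma_a.get(NO_ATTACK, 0) * C0 + sum(
        q_a * (rho[e] * C0 + (1 - rho[e]) * C[e])
        for e, q_a in sigma_a.items() if e is not NO_ATTACK)
    exp_targeted = sum(q_a for e, q_a in sigma_a.items() if e is not NO_ATTACK)
    return -exp_cost - p_d * sum(rho.values()), exp_cost - p_a * exp_targeted


# Hypothetical two-facility instance.
C, C0, p_d, p_a = {"e1": 10, "e2": 6}, 4, 2, 1
sigma_d = {frozenset({"e1"}): Fraction(1, 2),
           frozenset({"e1", "e2"}): Fraction(1, 4),
           frozenset(): Fraction(1, 4)}
rho = {"e1": Fraction(3, 4), "e2": Fraction(1, 4)}  # effort induced by sigma_d
sigma_a = {"e1": Fraction(1, 2), "e2": Fraction(1, 4), NO_ATTACK: Fraction(1, 4)}

via_pure = utilities_from_pure_strategies(sigma_d, sigma_a, C, C0, p_d, p_a)
via_effort = utilities_from_effort(rho, sigma_a, C, C0, p_d, p_a)
```

Both computations return the same pair of utilities, which is the property that allows the analysis to work with \(\rho \) instead of the exponentially larger \(\sigma _d\).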

We next characterize the set of rationalizable strategies. Note that the post-attack usage cost \(C_{e}\) can increase or remain the same or even decrease, in comparison with the pre-attack cost \(C_{\emptyset }\). Let the facilities whose damage results in an increased usage cost be grouped in the set \(\bar{\mathcal {E}}\). Similarly, let \(\widehat{\mathcal {E}}\) denote the set of facilities such that a damage to any one of them has no effect on the usage cost. Finally, the remaining facilities form the set \(\mathcal {E}\setminus (\bar{\mathcal {E}}\cup \widehat{\mathcal {E}})\). Thus,

\[
\bar{\mathcal {E}}=\left\{ e\in \mathcal {E}\,|\, C_{e}>C_{\emptyset }\right\} , \qquad \widehat{\mathcal {E}}=\left\{ e\in \mathcal {E}\,|\, C_{e}=C_{\emptyset }\right\} , \qquad \mathcal {E}\setminus (\bar{\mathcal {E}}\cup \widehat{\mathcal {E}})=\left\{ e\in \mathcal {E}\,|\, C_{e}<C_{\emptyset }\right\} .
\]

Clearly, these three sets partition \(\mathcal {E}\). The following proposition shows that in a rationalizable strategy profile, the defender does not secure facilities that are not in \(\bar{\mathcal {E}}\), and the attacker only considers targeting the facilities that are in \(\bar{\mathcal {E}}\).

Proposition 1

The rationalizable action sets for the defender and attacker are given by \(2^{\bar{\mathcal {E}}}\) and \(\bar{\mathcal {E}}\cup \{\emptyset \}\), respectively. Hence, any equilibrium strategy profile \(\left( \rho ^{*}, \sigma _a^*\right) \) in \(\varGamma \) (resp. \(\left( \tilde{\rho }^{*}, \widetilde{\sigma }_a^*\right) \) in \(\widetilde{\varGamma }\)) satisfies:

\[
\begin{aligned}
\rho ^{*}_e&=0, \quad \sigma _a^*(e)=0, \quad \forall e\in \mathcal {E}\setminus \bar{\mathcal {E}},\\
\tilde{\rho }^{*}_e&=0, \quad \widetilde{\sigma }_a^*(e, \widetilde{\sigma }_d^*)=0, \quad \forall e\in \mathcal {E}\setminus \bar{\mathcal {E}}.
\end{aligned}
\]

If \(\bar{\mathcal {E}}=\emptyset \), then the attacker/defender does not attack/secure any facility in equilibrium. Henceforth, to avoid triviality, we assume \(\bar{\mathcal {E}}\ne \emptyset \). Additionally, we define a partition of facilities in \(\bar{\mathcal {E}}\) such that all facilities with identical \(C_e\) are grouped in the same set. Let the number of distinct values in \(\{C_{e}\}_{e\in \bar{\mathcal {E}}}\) be \(K\), and \(C_{(k)}\) denote the k-th highest distinct value in the set \(\{C_{e}\}_{e\in \bar{\mathcal {E}}}\). Then, we can order the usage costs as follows:

\[
C_{(1)}> C_{(2)}> \dots > C_{(K)}> C_{\emptyset }.
\]

We denote \(\bar{\mathcal {E}}_{(k)}\) as the set of facilities such that if any \(e\in \bar{\mathcal {E}}_{(k)}\) is damaged, the usage cost \(C_{e}=C_{(k)}\), i.e., \(\bar{\mathcal {E}}_{(k)}{\mathop {=}\limits ^{\varDelta }}\left\{ e\in \bar{\mathcal {E}}| C_{e}=C_{(k)}\right\} \). We also define \(E_{(k)} {\mathop {=}\limits ^{\varDelta }}|\bar{\mathcal {E}}_{(k)}|\). Clearly, \(\cup _{k=1}^{K} \bar{\mathcal {E}}_{(k)}=\bar{\mathcal {E}}\), and \(\sum _{k=1}^{K} E_{(k)}=|\bar{\mathcal {E}}|\). Facilities in the same group have identical impact on the infrastructure system when compromised.
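
As an illustration of this grouping, the following sketch partitions facilities by their post-attack usage cost and orders the groups from highest to lowest cost. The function name `partition_by_cost` and the numerical costs are hypothetical.

```python
from collections import defaultdict


def partition_by_cost(C, C0):
    """Group facilities with C[e] > C0 by cost, highest cost first.

    Returns a list of (C_(k), sorted facilities in the k-th group) for k = 1, ..., K.
    """
    groups = defaultdict(list)
    for e, cost in C.items():
        if cost > C0:  # only facilities whose compromise increases the usage cost
            groups[cost].append(e)
    return [(cost, sorted(members))
            for cost, members in sorted(groups.items(), reverse=True)]


# Hypothetical instance: e4 has no effect and e5 lowers the cost, so both are excluded.
C = {"e1": 10, "e2": 6, "e3": 6, "e4": 4, "e5": 3}
groups = partition_by_cost(C, C0=4)
```

Here `groups` contains \(K=2\) groups, with \(E_{(1)}=1\) and \(E_{(2)}=2\).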

4 Normal Form Game \(\varGamma \)

In this section, we provide a complete characterization of the set of NEs for any given attack and defense cost parameters in game \(\varGamma \). In Sect. 4.1, we show that \(\varGamma \) is strategically equivalent to a zero-sum game, and hence the set of attacker’s equilibrium strategies can be solved by a linear program. In Sect. 4.2, we show that the space of cost parameters \((p_a, p_d) \in \mathbb {R}_{>0}^2\) can be partitioned into qualitatively distinct equilibrium regimes.

4.1 Strategic Equivalence to Zero-Sum Game

Our notion of strategic equivalence is the same as the best response equivalence defined in Rosenthal [42]. If \(\varGamma \) and another game \(\varGamma ^0\) are strategically equivalent, then given any strategy of the defender (resp. attacker), the set of attacker’s (resp. defender’s) best responses is identical in the two games. This result forms the basis of characterizing the set of NE.

We define the utility functions of the game \(\varGamma ^0\) as follows:

\[
\begin{aligned}
U_d^0(\sigma _d, \sigma _a)&=-\mathbb {E}_{\sigma }[C]-p_d\cdot \mathbb {E}_{\sigma _d}[|s_d|]+p_a\cdot \mathbb {E}_{\sigma _a}[|s_a|],\\
U_a^0(\sigma _d, \sigma _a)&=\mathbb {E}_{\sigma }[C]+p_d\cdot \mathbb {E}_{\sigma _d}[|s_d|]-p_a\cdot \mathbb {E}_{\sigma _a}[|s_a|].
\end{aligned}
\]

Thus, \(\varGamma ^0\) is a zero-sum game. We denote the set of defender’s (resp. attacker’s) equilibrium strategies in \(\varGamma ^0\) as \(\varSigma _d^0\) (resp. \(\varSigma _a^0\)).

Lemma 2

The normal form game \(\varGamma \) is strategically equivalent to the zero-sum game \(\varGamma ^0\). The set of defender’s (resp. attacker’s) equilibrium strategies in \(\varGamma \) is \(\varSigma ^{*}_d \equiv \varSigma _d^{0}\) (resp. \(\varSigma ^{*}_a\equiv \varSigma _a^{0}\)). Furthermore, for any \(\sigma _d^*\in \varSigma ^{*}_d\) and any \(\sigma _a^*\in \varSigma ^{*}_a\), \((\sigma _d^*, \sigma _a^*)\) is an equilibrium strategy profile of \(\varGamma \).

Based on Lemma 2, the set of attacker’s equilibrium strategies \(\varSigma ^{*}_a\) can be expressed as the optimal solution set of a linear program.

Proposition 2

The set \(\varSigma ^{*}_a\) is the optimal solution set of the following optimization problem:

\[
\max _{\sigma _a}\ V(\sigma _a) \quad \text {s.t.} \quad \sigma _a\in \varDelta (\bar{\mathcal {E}}\cup \{\emptyset \}), \tag{10}
\]

where

\[
V(\sigma _a)=\sum _{e\in \bar{\mathcal {E}}} \min \left\{ \sigma _a(e)\left( C_{e}-p_a\right) ,\ \sigma _a(e)\left( C_{\emptyset }-p_a\right) +p_d\right\} +\sigma _a(\emptyset )\, C_{\emptyset }.
\]

Furthermore, (10) is equivalent to the following linear optimization program:

\[
\begin{aligned}
\max _{\sigma _a, v}\quad&\sum _{e\in \bar{\mathcal {E}}} v_e+\sigma _a(\emptyset )\, C_{\emptyset }\\
\text {s.t.}\quad&v_e\le \sigma _a(e)\left( C_{e}-p_a\right) , \quad \forall e\in \bar{\mathcal {E}},\\
&v_e\le \sigma _a(e)\left( C_{\emptyset }-p_a\right) +p_d, \quad \forall e\in \bar{\mathcal {E}},\\
&\sum _{e\in \bar{\mathcal {E}}} \sigma _a(e)+\sigma _a(\emptyset )=1,\\
&\sigma _a(\emptyset )\ge 0, \quad \sigma _a(e)\ge 0, \quad \forall e\in \bar{\mathcal {E}},
\end{aligned}
\]

where \(v=\left( v_e\right) _{e\in \bar{\mathcal {E}}}\) is an \(|\bar{\mathcal {E}}|\)-dimensional variable.

In Proposition 2, the objective function \(V(\sigma _a)\) is a piecewise linear function in \(\sigma _a\). Furthermore, given any \(\sigma _a\) and any \(e\in \bar{\mathcal {E}}\), we can write:

\[
\min \left\{ \sigma _a(e)\left( C_{e}-p_a\right) ,\ \sigma _a(e)\left( C_{\emptyset }-p_a\right) +p_d\right\} =\begin{cases} \sigma _a(e)\left( C_{e}-p_a\right) , & \text {if } \sigma _a(e)\le \dfrac{p_d}{C_{e}-C_{\emptyset }},\\ \sigma _a(e)\left( C_{\emptyset }-p_a\right) +p_d, & \text {if } \sigma _a(e)> \dfrac{p_d}{C_{e}-C_{\emptyset }}. \end{cases}
\]

Thus, we can observe that if \(\sigma _a(e)\) is equal to \(p_d/\left( C_{e}-C_{\emptyset }\right) \), then \(-\sigma _a(e) \cdot C_{\emptyset }-p_d= -\sigma _a(e) \cdot C_{e}\), i.e., if a facility \(e\) is targeted with the threshold attack probability \(p_d/(C_{e}-C_{\emptyset })\), the defender is indifferent between securing \(e\) versus not. The following lemma analyzes the defender’s best response to the attacker’s strategy and shows that no facility is targeted with probability higher than the threshold probability in equilibrium.
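
The indifference at the threshold can be illustrated numerically. The sketch below assumes that \(V(\sigma _a)\) is the attacker's payoff in \(\varGamma ^0\) when the defender best responds facility by facility, that is, the sum over targeted facilities of the smaller of the "unsecured" and "secured" terms, plus the no attack term. The function names and cost values are hypothetical.

```python
from fractions import Fraction


def threshold_attack_prob(C_e, C0, p_d):
    """Attack probability at which the defender is indifferent about securing e."""
    return Fraction(p_d) / (C_e - C0)


def V(sigma_a, C, C0, p_d, p_a):
    """Attacker's guaranteed payoff in the zero-sum game (assumed form).

    sigma_a maps facilities to attack probabilities; the leftover mass is 'no attack'.
    """
    total = (1 - sum(sigma_a.values())) * C0
    for e, q in sigma_a.items():
        unsecured = q * (C[e] - p_a)       # defender leaves e unsecured
        secured = q * (C0 - p_a) + p_d     # defender secures e and pays p_d
        total += min(unsecured, secured)
    return total


# Hypothetical instance.
C, C0, p_d, p_a = {"e1": 10, "e2": 6}, 4, 2, 1
q_star = threshold_attack_prob(C["e1"], C0, p_d)
value_at_threshold = V({"e1": q_star}, C, C0, p_d, p_a)
```

At `q_star` the two terms inside the minimum coincide for `e1`, so the kink of the piecewise linear objective sits exactly at the threshold attack probability.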

Lemma 3

Given any strategy of the attacker \(\sigma _a\in \varDelta (S_a)\), for any defender’s security effort \(\rho \) that is a best response to \(\sigma _a\), denoted \(\rho \in BR(\sigma _a)\), the security effort \(\rho _e\) on each facility \(e\in \mathcal {E}\) satisfies:

\[
\rho _e\ \begin{cases} =0, & \text {if } e\in \bar{\mathcal {E}} \text { and } \sigma _a(e)<\dfrac{p_d}{C_{e}-C_{\emptyset }}, \text { or if } e\in \mathcal {E}\setminus \bar{\mathcal {E}},\\ \in [0, 1], & \text {if } e\in \bar{\mathcal {E}} \text { and } \sigma _a(e)=\dfrac{p_d}{C_{e}-C_{\emptyset }},\\ =1, & \text {if } e\in \bar{\mathcal {E}} \text { and } \sigma _a(e)>\dfrac{p_d}{C_{e}-C_{\emptyset }}. \end{cases}
\]

Furthermore, in equilibrium, the attacker’s strategy \(\sigma _a^*\) satisfies:

Lemma 3 highlights a key property of NE: The attacker does not target any facility \(e\in \bar{\mathcal {E}}\) with a probability higher than the threshold \(p_d/(C_{e}-C_{\emptyset })\), and the defender allocates a nonzero security effort only to the facilities that are targeted with the threshold probability.

Intuitively, if a facility \(e\) were targeted with a probability higher than the threshold \(p_d/(C_{e}-C_{\emptyset })\), then the defender’s best response would be to secure that facility with probability 1, and the attacker’s expected utility would be \(C_{\emptyset }-p_a\sigma _a(e)\), which is smaller than \(C_{\emptyset }\) (the utility of no attack). Hence, the attacker would be better off choosing the no attack action.
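The threshold logic can be checked numerically. The following is a minimal sketch, assuming that the defender's expected payoff from facility \(e\) is linear in the effort \(\rho _e\), so that the best response is 0 below the threshold attack probability, 1 above it, and any value in \([0,1]\) exactly at it. The function name `defender_best_response` and the tolerance `tol` are illustrative choices, not notation from the analysis.

```python
import math

def defender_best_response(sigma_e, c_e, c_0, p_d, tol=1e-12):
    """Return the interval (lo, hi) of best-response efforts rho_e on one facility.

    Assumes c_e > c_0, i.e., compromising the facility raises the usage cost.
    """
    threshold = p_d / (c_e - c_0)      # threshold attack probability
    if math.isclose(sigma_e, threshold, abs_tol=tol):
        return (0.0, 1.0)              # indifferent: any effort is optimal
    if sigma_e > threshold:
        return (1.0, 1.0)              # securing pays off: full effort
    return (0.0, 0.0)                  # securing costs more than it saves
```

With \(C_e=20\), \(C_{\emptyset }=17\) and \(p_d=0.3\), the threshold is 0.1: an attack probability of 0.2 calls for full effort, 0.05 for none.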

Now, we can rewrite \(V(\sigma _a)\) as defined in (10) as follows:

and the set of attacker’s equilibrium strategies maximizes this function.

4.2 Characterization of NE in \(\varGamma \)

We are now in a position to introduce the equilibrium regimes. Each regime corresponds to a range of cost parameters such that the qualitative properties of equilibrium (i.e., the set of facilities that are targeted and secured) do not change in the interior of the regime.

We say that a facility \(e\) is vulnerable if \(C_{e}-p_a>C_{\emptyset }\). Therefore, given any attack cost \(p_a\), the set of vulnerable facilities is given by \(\{\bar{\mathcal {E}}| C_{e}-p_a>C_{\emptyset }\}\). Clearly, only vulnerable facilities are likely targets of the attacker. If \(p_a> C_{(1)}-C_{\emptyset }\), then there are no vulnerable facilities. In contrast, if \(p_a< C_{(1)}-C_{\emptyset }\), we define the following threshold for the per-facility defense cost:

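We can check that for any \(i=1, \dots , K-1\) (resp. \(i=K\)), if \(C_{(i+1)}-C_{\emptyset }\le p_a< C_{(i)}-C_{\emptyset }\) (resp. \(0<p_a<C_{(K)}-C_{\emptyset }\)), then

$$\begin{aligned} \bar{p}_d(p_a)=\left( \sum _{k=1}^{i} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1}. \end{aligned}$$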

Recall from Lemma 3 that \(\sigma _a^*(e)\) is upper bounded by the threshold attack probability \(p_d/(C_{e}-C_{\emptyset })\). If the defense cost \(p_d<\bar{p}_d(p_a)\), then \(\sum _{k=1}^{i} \frac{E_{(k)}p_d}{C_{(k)}-C_{\emptyset }}<1\), which implies that even when the attacker targets each vulnerable facility with the threshold attack probability, the total probability of attack is still smaller than 1. Thus, the attacker must necessarily choose not to attack with a positive probability. On the other hand, if \(p_d>\bar{p}_d(p_a)\), then the no attack action is not chosen by the attacker in equilibrium.
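As a worked illustration, take the usage costs of the three-facility example in Sect. 6.2 (\(C_{(1)}=20\), \(C_{(2)}=19\), \(C_{(3)}=18\), \(C_{\emptyset }=17\)) and assume that \(E_{(k)}=1\) for each \(k\), i.e., each cost level is attained by a single facility. Under these assumptions, the condition above gives

$$\begin{aligned} \bar{p}_d(p_a)= {\left\{ \begin{array}{ll} \left( \frac{1}{3}+\frac{1}{2}+1\right) ^{-1}=\frac{6}{11}, &{} 0<p_a<1,\\ \left( \frac{1}{3}+\frac{1}{2}\right) ^{-1}=\frac{6}{5}, &{} 1\le p_a<2,\\ \left( \frac{1}{3}\right) ^{-1}=3, &{} 2\le p_a<3. \end{array}\right. } \end{aligned}$$

For instance, with \(p_a<1\) and \(p_d=0.3<6/11\), the threshold attack probabilities are \(0.1\), \(0.15\), and \(0.3\); they sum to \(0.55<1\), so the no attack action receives the remaining probability \(0.45\).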

Following the above discussion, we introduce two types of regimes depending on whether or not \(p_d\) is higher than the threshold \(\bar{p}_d(p_a)\). In type I regimes, denoted as \(\{\varLambda ^i| i=0, \dots , K\}\), the defense cost \(p_d<\bar{p}_d(p_a)\), whereas in type II regimes, denoted as \(\{\varLambda _j| j=1, \dots , K\}\), the defense cost \(p_d>\bar{p}_d(p_a)\). Hence, we say that \(p_d\) is “relatively low” (resp. “relatively high”) in comparison with \(p_a\) in type I regimes (resp. type II regimes). We formally define these \(2K+1\) regimes as follows:

- (a)

Type I regimes \(\varLambda ^i\), \(i=0, \dots , K\):

If \(i=0\):

$$\begin{aligned} p_a> C_{(1)}-C_{\emptyset }, \text { and }p_d>0 \end{aligned}$$(18)

If \(i=1, \dots , K-1\):

$$\begin{aligned} C_{(i+1)}-C_{\emptyset }< p_a< C_{(i)}-C_{\emptyset }, \text { and } 0<p_d< \left( \sum _{k=1}^{i} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(19)

If \(i=K\):

$$\begin{aligned} 0< p_a< C_{(K)}-C_{\emptyset }, \text { and } 0<p_d<\left( \sum _{k=1}^{K} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(20)

- (b)

Type II regimes, \(\varLambda _j\), \(j=1, \dots , K\):

If \(j=1\):

$$\begin{aligned} 0<p_a< C_{(1)}-C_{\emptyset }, \text { and } p_d> \left( \frac{E_{(1)}}{C_{(1)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(21)

If \(j=2, \dots , K\):

$$\begin{aligned} 0<p_a< C_{(j)}-C_{\emptyset }, \text { and } \left( \sum _{k=1}^{j} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1}< p_d<\left( \sum _{k=1}^{j-1} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(22)
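The regime definitions (18)–(22) can be turned into a small classifier. The sketch below is illustrative only: the function name `classify_regime` and its argument layout are assumptions, \(E_{(k)}\) is taken to be the number of facilities with cost \(C_{(k)}\), and points on a regime boundary are not handled because the regimes are defined by strict inequalities.

```python
def classify_regime(group_costs, group_sizes, c_0, p_a, p_d):
    """Classify (p_a, p_d) as a type I regime ('I', i) or a type II regime ('II', j).

    group_costs: distinct usage costs C_(1) > C_(2) > ... > C_(K) > c_0.
    group_sizes: E_(k), assumed to be the number of facilities with cost C_(k).
    """
    # i = number of cost groups that are vulnerable at attack cost p_a
    i = sum(1 for c in group_costs if c - c_0 > p_a)
    if i == 0:
        return ("I", 0)                      # no vulnerable facility, cf. (18)
    weights = [n / (c - c_0) for c, n in zip(group_costs, group_sizes)]
    p_d_bar = 1.0 / sum(weights[:i])         # threshold defense cost
    if p_d < p_d_bar:
        return ("I", i)                      # cf. (19)-(20)
    cumulative = 0.0
    for j, w in enumerate(weights[:i], start=1):
        cumulative += w
        if cumulative > 1.0 / p_d:           # first j whose bound falls below p_d
            return ("II", j)                 # cf. (21)-(22)
    raise ValueError("parameters lie on a regime boundary")
```

With the costs of the example in Sect. 6.2 (20, 19, 18 and \(C_{\emptyset }=17\)), the point \((p_a, p_d)=(0.5, 0.3)\) falls in \(\varLambda ^3\), while \((0.5, 2.0)\) falls in \(\varLambda _2\).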

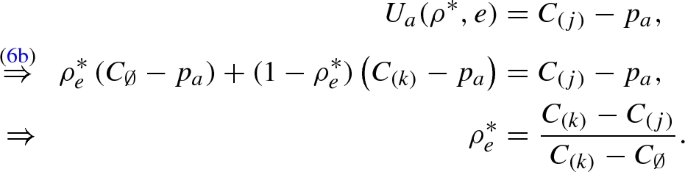

We now characterize the equilibrium strategy sets \(\varSigma ^{*}_d\) and \(\varSigma ^{*}_a\) in the interior of each regime.

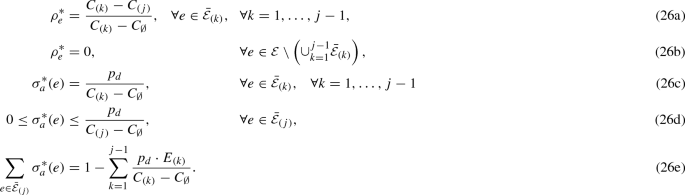

Theorem 1

The set of NE in each regime is as follows:

- (a)

Type I regimes \(\varLambda ^i\):

If \(i=0\),

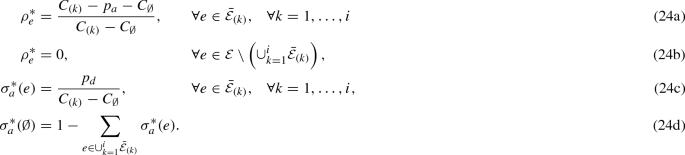

$$\begin{aligned} \rho _e^{*}&=0, \quad \forall e\in \mathcal {E}\end{aligned}$$(23a)

$$\begin{aligned} \sigma _a^*(\emptyset )&=1. \end{aligned}$$(23b)

If \(i=1, \dots , K\),

- (b)

Type II regimes \(\varLambda _j\):

\(j=1\):

\(j=2, \dots , K\):

Let us discuss the intuition behind the proof of Theorem 1.

Recall from Proposition 2 and Lemma 3 that the set of attacker’s equilibrium strategies \(\varSigma _a^{*}\) is the set of feasible mixed strategies that maximize \(V(\sigma _a)\) in (15), and the attacker never targets any facility \(e\in \mathcal {E}\) with probability higher than the threshold \(p_d/(C_{e}-C_{\emptyset })\). Also recall that the costs \(\{C_{(k)}\}_{k=1}^K\) are ordered according to (8). Thus, in equilibrium, the attacker targets the facilities in \(\bar{\mathcal {E}}_{(k)}\) with the threshold attack probability starting from \(k=1\) and proceeding to \(k=2, 3, \dots , K\) until either all the vulnerable facilities are targeted with the threshold attack probability (and no attack is chosen with the remaining probability), or the total attack probability reaches 1.

Again, from Lemma 3, we know that the defender secures the set of facilities that are targeted with the threshold attack probability with positive effort. The equilibrium level of security effort ensures that the attacker gets an identical utility from choosing any pure strategy in the support of \(\sigma _a^*\), and this utility is higher than or equal to that of choosing any other pure strategy.

The distinctions between the two regime types are summarized as follows:

- (1)

In type I regimes, the defense cost \(p_d< \bar{p}_d(p_a)\). The defender secures all vulnerable facilities with a positive level of effort. The attacker targets each vulnerable facility with the threshold attack probability, and the total probability of attack is less than 1.

- (2)

In type II regimes, the defense cost \(p_d>\bar{p}_d(p_a)\). The defender only secures a subset of targeted facilities with a positive level of security effort. The attacker chooses the facilities in decreasing order of \(C_{e}-C_{\emptyset }\) and targets each of them with the threshold probability until the attack resource is exhausted, i.e., the total probability of attack is 1.
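The verbal description of the attacker's equilibrium strategy can be sketched as a greedy allocation. The code below is a hedged illustration of that description, not a substitute for Theorem 1: the function name and the `"no_attack"` key are hypothetical, and when several facilities share the same usage cost the leftover probability may be split among them in other ways than the one shown.

```python
def attacker_equilibrium_sketch(costs, c_0, p_a, p_d):
    """Greedy fill: threshold probability on vulnerable facilities, costliest first.

    costs: dict mapping facility name -> usage cost C_e when it is compromised.
    Returns attack probabilities, with the remainder on the key "no_attack".
    """
    vulnerable = sorted(
        (e for e, c in costs.items() if c - p_a > c_0),
        key=lambda e: costs[e],
        reverse=True,
    )
    sigma = {e: 0.0 for e in costs}
    remaining = 1.0
    for e in vulnerable:
        threshold = p_d / (costs[e] - c_0)   # threshold attack probability
        sigma[e] = min(threshold, remaining)
        remaining -= sigma[e]
        if remaining <= 0.0:                 # attack resource exhausted (type II)
            break
    sigma["no_attack"] = remaining           # positive only in type I regimes
    return sigma
```

For the costs 20, 19, 18 with \(C_{\emptyset }=17\) and \(p_a=0.5\), a low defense cost \(p_d=0.3\) leaves probability 0.45 on no attack, whereas \(p_d=1.0\) exhausts the attack probability on the third facility.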

5 Sequential Game \(\widetilde{\varGamma }\)

In this section, we characterize the set of SPEs in the game \(\widetilde{\varGamma }\) for any given attack and defense cost parameters. The sequential game \(\widetilde{\varGamma }\) is no longer strategically equivalent to a zero-sum game. Hence, the proof technique we used for equilibrium characterization in game \(\varGamma \) does not work for the game \(\widetilde{\varGamma }\). In Sect. 5.1, we analyze the attacker’s best response to the defender’s security effort vector. We also identify a threshold level of security effort which determines whether or not the defender achieves full attack deterrence in equilibrium. In Sect. 5.2, we present the equilibrium regimes which govern the qualitative properties of SPE.

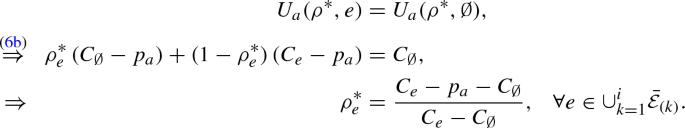

5.1 Properties of SPE

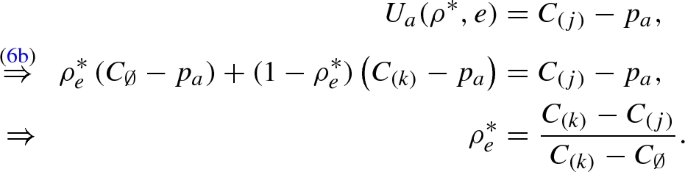

By definition of SPE, for any security effort vector \(\tilde{\rho }\in [0, 1]^{|\mathcal {E}|}\) chosen by the defender in the first stage, the attacker’s equilibrium strategy in the second stage is a best response to \(\tilde{\rho }\), i.e., \(\widetilde{\sigma }_a^*(\tilde{\rho })\) satisfies (3b). As we describe next, the properties of SPE crucially depend on a threshold security effort level defined as follows:

$$\begin{aligned} \widehat{\rho }_e=\frac{C_{e}-p_a-C_{\emptyset }}{C_{e}-C_{\emptyset }}, \quad \forall e\in \{\bar{\mathcal {E}}| C_{e}-p_a>C_{\emptyset }\}. \end{aligned}$$(27)

The following lemma presents the best response correspondence \(BR(\tilde{\rho })\) of the attacker:

Lemma 4

Given any \(\tilde{\rho }\in [0, 1]^{|\mathcal {E}|}\), if \(\tilde{\rho }\) satisfies \(\tilde{\rho }_e\ge \widehat{\rho }_e\), for all \(e\in \{\bar{\mathcal {E}}| C_{e}-p_a>C_{\emptyset }\}\), then \(BR(\tilde{\rho })=\varDelta (\bar{\mathcal {E}}^{*}\cup \{\emptyset \})\), where:

Otherwise, \(BR(\tilde{\rho })=\varDelta (\bar{\mathcal {E}}^{\diamond })\), where:

In words, if each vulnerable facility \(e\) is secured with an effort higher than or equal to the threshold effort \(\widehat{\rho }_e\) in (27), then the attacker’s best response is to choose a mixed strategy supported on the vulnerable facilities that are secured with exactly the threshold level of effort (i.e., \(\bar{\mathcal {E}}^{*}\) as defined in (28)) and the no attack action. Otherwise, the support of the attacker’s strategy consists of the vulnerable facilities (pure actions) that maximize the expected usage cost (see (29)). In particular, the no attack action is not chosen in the attacker’s best response.
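The two cases of Lemma 4 can be sketched in code. This is an illustrative reading of the description in words, under two assumptions: the threshold effort is \(\widehat{\rho }_e=(C_{e}-p_a-C_{\emptyset })/(C_{e}-C_{\emptyset })\) (the homogeneous-cost version of the expression in Sect. 7.1), and the expected usage cost of targeting \(e\) is \((1-\tilde{\rho }_e)C_{e}+\tilde{\rho }_eC_{\emptyset }\). The function names and the `"no_attack"` label are hypothetical.

```python
import math

def threshold_effort(c_e, c_0, p_a):
    """Effort at which the attacker is indifferent between targeting e and not attacking."""
    return (c_e - p_a - c_0) / (c_e - c_0)

def attacker_br_support(rho, costs, c_0, p_a, tol=1e-9):
    """Return the set of pure actions that can appear in an attacker best response."""
    vulnerable = [e for e, c in costs.items() if c - p_a > c_0]
    hat = {e: threshold_effort(costs[e], c_0, p_a) for e in vulnerable}
    if all(rho[e] >= hat[e] - tol for e in vulnerable):
        # Every vulnerable facility meets its threshold: only those secured at
        # exactly the threshold remain worth targeting, alongside no attack.
        at_threshold = {e for e in vulnerable
                        if math.isclose(rho[e], hat[e], abs_tol=tol)}
        return at_threshold | {"no_attack"}
    # Otherwise the attacker targets the facilities with the highest expected cost.
    expected = {e: (1 - rho[e]) * costs[e] + rho[e] * c_0 for e in vulnerable}
    best = max(expected.values())
    return {e for e in vulnerable if math.isclose(expected[e], best, abs_tol=tol)}
```

Raising the effort on every facility in the returned set strictly above its threshold leaves only `"no_attack"` in the support, which is the deviation used in Case 3 of the discussion of Lemma 5.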

Now recall that any SPE \((\tilde{\rho }^{*}, \widetilde{\sigma }_a^*(\tilde{\rho }^{*}))\) must satisfy both (3a) and (3b). Thus, for an equilibrium security effort \(\tilde{\rho }^{*}\), an attacker’s best response \(\widetilde{\sigma }_a(\tilde{\rho }^{*}) \in BR(\tilde{\rho }^{*})\) is an equilibrium strategy only if both these constraints are satisfied. The next lemma shows that depending on whether the defender secures each vulnerable facility \(e\) with the threshold effort \(\widehat{\rho }_e\) or not, the total attack probability in equilibrium is either 0 or 1. Thus, the defender, being the first mover, determines whether the attacker is fully deterred from conducting an attack or not. Additionally, in SPE, the security effort on each vulnerable facility \(e\) is no higher than the threshold effort \(\widehat{\rho }_e\), and the security effort on any other facility is 0.

Lemma 5

Any SPE \((\tilde{\rho }^{*}, \widetilde{\sigma }_a^*(\tilde{\rho }^{*}))\) of the game \(\widetilde{\varGamma }\) satisfies the following property:

Additionally, for any \(e\in \{\bar{\mathcal {E}}| C_{e}-p_a>C_{\emptyset }\}\), \(\tilde{\rho }^{*}_e\le \widehat{\rho }_e\). For any \(e\in \mathcal {E}\setminus \{\bar{\mathcal {E}}| C_{e}-p_a>C_{\emptyset }\}\), \(\tilde{\rho }^{*}_e=0\).

The proof of this result is based on the analysis of the following three cases:

Case 1 There exists at least one facility \(e\in \{\bar{\mathcal {E}}|C_{e}-p_a>C_{\emptyset }\}\) such that \(\tilde{\rho }^{*}_e<\widehat{\rho }_e\). In this case, by applying Lemma 4, we know that \(\widetilde{\sigma }_a^*(\tilde{\rho }^{*}) \in BR(\tilde{\rho }^{*}) = \varDelta (\bar{\mathcal {E}}^{\diamond })\), where \(\bar{\mathcal {E}}^{\diamond }\) is defined in (29). Hence, the total attack probability is 1.

Case 2 For any \(e\in \{\bar{\mathcal {E}}|C_{e}-p_a>C_{\emptyset }\}\), \(\tilde{\rho }^{*}_e>\widehat{\rho }_e\). In this case, the set \(\bar{\mathcal {E}}^{*}\) defined in (28) is empty. Hence, Lemma 4 shows that the total attack probability is 0.

Case 3 For any \(e\in \{\bar{\mathcal {E}}|C_{e}-p_a>C_{\emptyset }\}\), \(\tilde{\rho }^{*}_e\ge \widehat{\rho }_e\), and the set \(\bar{\mathcal {E}}^{*}\) in (28) is non-empty. Again from Lemma 4, we know that \(\widetilde{\sigma }_a^*(\tilde{\rho }^{*}) \in BR(\tilde{\rho }^{*}) = \varDelta (\bar{\mathcal {E}}^{*}\cup \{\emptyset \})\). Now assume that the attacker chooses to target at least one facility \(e\in \bar{\mathcal {E}}^{*}\) with a positive probability in equilibrium. Then, the defender can deviate by slightly increasing the security effort on each facility in \(\bar{\mathcal {E}}^{*}\). By introducing such a deviation, the defender’s security effort satisfies the condition of Case 2, where the total attack probability is 0. Hence, this results in a higher utility for the defender. Therefore, in any SPE \(\left( \tilde{\rho }^{*}, \widetilde{\sigma }_a^*(\tilde{\rho }^{*})\right) \), one cannot have a second-stage outcome in which the attacker targets facilities in \(\bar{\mathcal {E}}^{*}\). We can thus conclude that the total attack probability must be 0 in this case.

In both Cases 2 and 3, we say that the attacker is fully deterred.

Clearly, these three cases are exhaustive in that they cover all feasible security effort vectors, and hence we can conclude that the total attack probability in equilibrium is either 0 or 1. Additionally, since the attacker is fully deterred when each vulnerable facility is secured with the threshold effort, the defender will not further increase the security effort beyond the threshold effort on any vulnerable facility. That is, only Cases 1 and 3 are possible in equilibrium.

5.2 Characterization of SPE

Recall that in Sect. 4, type I and type II regimes for the game \(\varGamma \) can be distinguished based on a threshold defense cost \(\bar{p}_d(p_a)\). It turns out that in \(\widetilde{\varGamma }\), there are still \(2 K+1\) regimes. Again, each regime denotes distinct ranges of cost parameters and can be categorized either as type \(\widetilde{\text {I}}\) or type \(\widetilde{\text {II}}\). However, in contrast to \(\varGamma \), the regime boundaries in this case are more complicated; in particular, they are nonlinear in the cost parameters \(p_a\) and \(p_d\).

To introduce the boundary \(\widetilde{p}_d(p_a)\), we need to define the function \(p_d^{ij}(p_a)\) for each \(i=1, \dots , K\) and \(j=1, \dots , i\) as follows:

For any \(i=1, \dots , K\), and any attack cost \(C_{(i+1)}-C_{\emptyset }\le p_a< C_{(i)}-C_{\emptyset }\) (or \(0<p_a<C_{(K)}-C_{\emptyset }\) if \(i=K\)), the threshold \(\widetilde{p}_d(p_a)\) is defined as follows:

Lemma 6

Given any attack cost \(0\le p_a<C_{(1)}-C_{\emptyset }\), the threshold \(\widetilde{p}_d(p_a)\) is a strictly increasing and continuous function of \(p_a\).

Furthermore, for any \(0<p_a<C_{(1)}-C_{\emptyset }\), \(\widetilde{p}_d(p_a)>\bar{p}_d(p_a)\). If \(p_a=0\), \(\widetilde{p}_d(0)=\bar{p}_d(0)\). As \(p_a\rightarrow C_{(1)}-C_{\emptyset }\), \(\widetilde{p}_d(p_a) \rightarrow +\infty \).

Since \(\widetilde{p}_d(p_a)\) is a strictly increasing and continuous function of \(p_a\), the inverse function \(\widetilde{p}_d^{-1}(p_d)\) is well defined. Now we are ready to formally define the regimes for the game \(\widetilde{\varGamma }\):

- (1)

Type \(\widetilde{\text {I}}\) regimes \(\widetilde{\varLambda }^i\), \(i=0, \dots , K\):

If \(i=0\):

$$\begin{aligned} p_a>C_{(1)}-C_{\emptyset }, \text { and } \quad p_d>0. \end{aligned}$$(32)

If \(i=1, \dots , K-1\):

$$\begin{aligned} C_{(i+1)}-C_{\emptyset }<p_a< C_{(i)}-C_{\emptyset }, \text { and } \quad 0<p_d< \widetilde{p}_d(p_a). \end{aligned}$$(33)

If \(i=K\):

$$\begin{aligned} 0<p_a< C_{(K)}-C_{\emptyset }, \text { and } \quad 0<p_d< \widetilde{p}_d(p_a). \end{aligned}$$(34)

- (2)

Type \(\widetilde{\text {II}}\) regimes \(\widetilde{\varLambda }_j\), \(j=1, \dots , K\):

If \(j=1\):

$$\begin{aligned} 0< p_a< \widetilde{p}_d^{-1}(p_d), \text { and } \quad p_d> \left( \frac{E_{(1)}}{C_{(1)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(35)

If \(j=2, \dots , K\):

$$\begin{aligned} 0< p_a< \widetilde{p}_d^{-1}(p_d), \text { and } \quad \left( \sum _{k=1}^{j} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1}<p_d<\left( \sum _{k=1}^{j-1} \frac{E_{(k)}}{C_{(k)}-C_{\emptyset }}\right) ^{-1} \end{aligned}$$(36)

Analogous to the discussion in Sect. 4.2, we say \(p_d\) is “relatively low” in type \(\widetilde{\text {I}}\) regimes, and “relatively high” in type \(\widetilde{\text {II}}\) regimes. We now provide a full characterization of SPE in each regime.

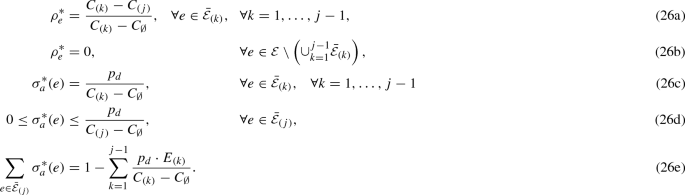

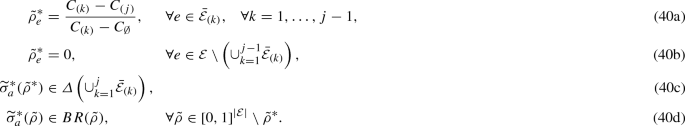

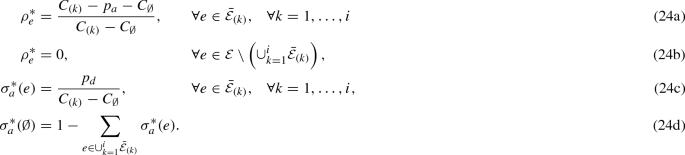

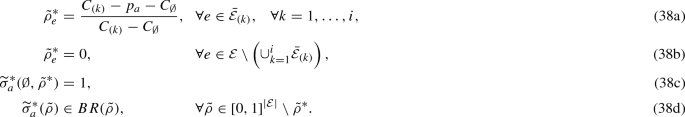

Theorem 2

The defender’s equilibrium security effort vector \(\tilde{\rho }^{*}=\left( \tilde{\rho }^{*}_e\right) _{e\in \mathcal {E}}\) is unique in each regime. Specifically, SPE in each regime is as follows:

- (1)

Type \(\widetilde{\text {I}}\) regimes \(\widetilde{\varLambda }^i\):

If \(i=0\),

If \(i=1, \dots , K\),

- (2)

Type \(\widetilde{\text {II}}\) regimes \(\widetilde{\varLambda }_j\):

If \(j=1\),

If \(j=2, \dots , K\),

In our proof of Theorem 2 (see Appendix C), we first construct a partition of the space \((p_a, p_d) \in \mathbb {R}_{>0}^2\), defined in (52), and then characterize the SPE for cost parameters in each set of the partition (Lemmas 7–8). Theorem 2 then follows directly by regrouping the elements of this partition so that each set in the resulting coarser partition has qualitatively identical equilibrium strategies.

From the discussion of Lemma 5, we know that only Cases 1 and 3 are possible in equilibrium and that in any SPE, the security effort on each vulnerable facility \(e\) is no higher than the threshold effort \(\widehat{\rho }_e\). It turns out that for any attack cost, depending on whether the defense cost is lower or higher than the threshold cost \(\widetilde{p}_d(p_a)\), the defender either secures each vulnerable facility with the threshold effort given by (31) (type \(\widetilde{\text {I}}\) regimes), or there is at least one vulnerable facility that is secured with effort strictly less than the threshold (type \(\widetilde{\text {II}}\) regimes):

In type \(\widetilde{\text {I}}\) regimes, the defense cost \(p_d<\widetilde{p}_d(p_a)\). The defender secures each vulnerable facility with the threshold effort \(\widehat{\rho }_e\). The attacker is fully deterred.

In type \(\widetilde{\text {II}}\) regimes, the defense cost \(p_d>\widetilde{p}_d(p_a)\). The defender’s equilibrium security effort is identical to that in NE of the normal form game \(\varGamma \). The total attack probability is 1.

6 Comparison of \(\varGamma \) and \(\widetilde{\varGamma }\)

Section 6.1 deals with the comparison of players’ equilibrium utilities in the two games. In Sect. 6.2, we compare the equilibrium regimes and discuss the distinctions in equilibrium properties of the two games. We identify situations in which the defender has first-mover advantage.

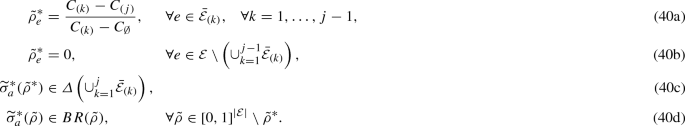

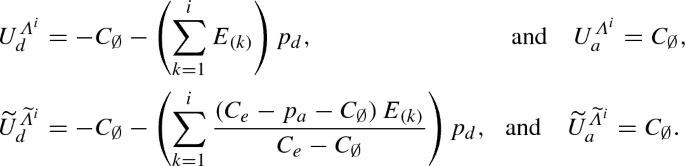

6.1 Comparison of Equilibrium Utilities

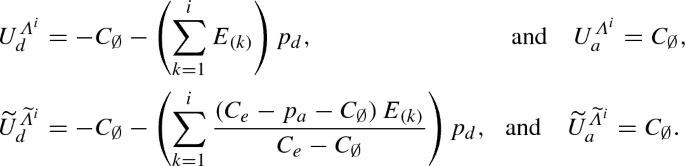

The equilibrium utilities in both games are unique and can be directly derived using Theorems 1 and 2. We denote the equilibrium utilities of the defender and attacker in regime \(\varLambda ^i\) (resp. \(\varLambda _j\)) as \(U_d^{\varLambda ^i}\) and \(U_a^{\varLambda ^i}\) (resp. \(U_d^{\varLambda _j}\) and \(U_a^{\varLambda _j}\)) in \(\varGamma \), and \(\widetilde{U}_d^{\widetilde{\varLambda }^i}\) and \(\widetilde{U}_a^{\widetilde{\varLambda }^i}\) (resp. \(\widetilde{U}_d^{\widetilde{\varLambda }_j}\) and \(\widetilde{U}_a^{\widetilde{\varLambda }_j}\)) in regime \(\widetilde{\varLambda }^i\) (resp. \(\widetilde{\varLambda }_j\)) in \(\widetilde{\varGamma }\).

Proposition 3

In both \(\varGamma \) and \(\widetilde{\varGamma }\), the equilibrium utilities are unique in each regime. Specifically,

- (a)

Type I \((\widetilde{\text {I}})\) regimes \(\varLambda ^i\)\((\widetilde{\varLambda }^i)\):

If \(i=0\):

$$\begin{aligned} U_d^{\varLambda ^0}&=\widetilde{U}_d^{\widetilde{\varLambda }^{0}}=-C_{\emptyset }, \text { and } \quad U_a^{\varLambda ^0}= \widetilde{U}_a^{\widetilde{\varLambda }^0}=C_{\emptyset }. \end{aligned}$$

If \(i=1, \dots , K\):

- (b)

Type II (\(\widetilde{\text {II}}\)) regimes \(\varLambda _j\) (\(\widetilde{\varLambda }_j\)):

If \(j=1\):

$$\begin{aligned} U_d^{\varLambda _1}&=\widetilde{U}_d^{\widetilde{\varLambda }_{1}}=-C_{(1)},\text { and } \quad U_a^{\varLambda _1}=\widetilde{U}_a^{\widetilde{\varLambda }_{1}}=C_{(1)}-p_a. \end{aligned}$$

If \(j=2, \dots , K\):

$$\begin{aligned} U_d^{\varLambda _j}&=\widetilde{U}_d^{\widetilde{\varLambda }_j}=-C_{(j)}-\sum _{k=1}^{j-1} \frac{\left( C_{(k)}-C_{(j)}\right) p_dE_{(k)}}{C_{(k)}-C_{\emptyset }} , \text { and } \quad U_a^{\varLambda _j}=\widetilde{U}_a^{\widetilde{\varLambda }_j}=C_{(j)}-p_a. \end{aligned}$$

From our results so far, we can summarize the similarities between the equilibrium outcomes in \(\varGamma \) and \(\widetilde{\varGamma }\). While most of these conclusions are fairly intuitive, the fact that they are common to both game-theoretic models suggests that the timing of defense investments does not play a role as far as these insights are concerned. Firstly, the support of both players’ equilibrium strategies tends to contain the facilities whose compromise results in a high usage cost. The defender secures these facilities with a high level of effort in order to reduce the probability with which they are targeted by the attacker. Secondly, the attack and defense costs jointly determine the set of facilities that are targeted or secured in equilibrium. On the one hand, the set of vulnerable facilities increases as the cost of attack decreases. On the other hand, when the cost of defense is sufficiently high, the attacker tends to conduct an attack with probability 1. However, as the defense cost decreases, the attacker randomizes the attack on a larger set of facilities. Consequently, the defender secures a larger set of facilities with positive effort, and when the cost of defense is sufficiently small, all vulnerable facilities are secured by the defender. Thirdly, each player’s equilibrium payoff is non-decreasing in the opponent’s cost, and non-increasing in her own cost. Therefore, each player is better off as her own cost decreases and the opponent’s cost increases.

6.2 First-Mover Advantage

We now focus on identifying parameter ranges in which the defender has the first-mover advantage; i.e., the defender in SPE has a strictly higher payoff than in NE. To identify the first-mover advantage, let us recall the expressions of type I regimes for \(\varGamma \) in (18)–(20) and type \(\widetilde{\text {I}}\) regimes for \(\widetilde{\varGamma }\) in (32)–(34). Also recall that, for any given cost parameters \(p_a\) and \(p_d\), the threshold \(\bar{p}_d(p_a)\) (resp. \(\widetilde{p}_d(p_a)\)) determines whether the equilibrium outcome is of type I or type II regime (resp. type \(\widetilde{\text {I}}\) or \(\widetilde{\text {II}}\) regime) in the game \(\varGamma \) (resp. \(\widetilde{\varGamma }\)). Furthermore, from Lemma 6, we know that the cost threshold \(\bar{p}_d(p_a)\) in \(\varGamma \) is smaller than the threshold \(\widetilde{p}_d(p_a)\) in \(\widetilde{\varGamma }\). Thus, for all \(i=1, \dots , K\), the type I regime \(\varLambda ^i\) in \(\varGamma \) is a proper subset of the type \(\widetilde{\text {I}}\) regime \(\widetilde{\varLambda }^i\) in \(\widetilde{\varGamma }\). Consequently, if \(p_a< {C_{(1)}} - {C_{\emptyset }}\), we can have one of the following three cases:

\(0<p_d< \bar{p}_d(p_a)\): The defense cost is relatively low in both \(\varGamma \) and \(\widetilde{\varGamma }\). We denote the set of \(\left( p_a, p_d\right) \) that satisfy this condition as \(L\) (low defense cost). That is,

$$\begin{aligned} L{\mathop {=}\limits ^{\varDelta }}\left\{ \left( p_a, p_d\right) |0<p_d< \bar{p}_d(p_a)\right\} =\cup _{i=1}^{K} \varLambda ^i. \end{aligned}$$(41)

\(\bar{p}_d(p_a)<p_d< \widetilde{p}_d(p_a)\): The defense cost is relatively high in \(\varGamma \), but relatively low in \(\widetilde{\varGamma }\). We denote the set of \(\left( p_a, p_d\right) \) that satisfy this condition as \(M\) (medium defense cost). That is,

$$\begin{aligned} M{\mathop {=}\limits ^{\varDelta }}\left\{ \left( p_a, p_d\right) |\bar{p}_d(p_a)<p_d< \widetilde{p}_d(p_a) \right\} =\cup _{i=1}^{K} \left( \widetilde{\varLambda }^i\setminus \varLambda ^i\right) . \end{aligned}$$(42)

\(p_d>\widetilde{p}_d(p_a)\): The defense cost is relatively high in both \(\varGamma \) and \(\widetilde{\varGamma }\). We denote the set of \(\left( p_a, p_d\right) \) that satisfy this condition as \(H\) (high defense cost). That is,

$$\begin{aligned} H{\mathop {=}\limits ^{\varDelta }}\left\{ \left( p_a, p_d\right) |p_d>\widetilde{p}_d(p_a)\right\} =\cup _{j=1}^{K} \widetilde{\varLambda }_j. \end{aligned}$$

We next compare the properties of NE and SPE for cost parameters in each set based on Theorems 1 and 2, and Proposition 3.

Set \(L\):

Attacker In \(\varGamma \), the total attack probability is nonzero but smaller than 1, whereas in \(\widetilde{\varGamma }\), the attacker is fully deterred. The attacker’s equilibrium utility is identical in both games, i.e., \(U_a=\widetilde{U}_a\).

Defender The defender chooses identical equilibrium security effort in both games, i.e., \(\rho ^{*}=\tilde{\rho }^{*}\), but obtains a higher utility in \(\widetilde{\varGamma }\) in comparison with that in \(\varGamma \), i.e., \(U_d<\widetilde{U}_d\).

Set \(M\):

Attacker In \(\varGamma \), the attacker conducts an attack with probability 1, whereas in \(\widetilde{\varGamma }\) the attacker is fully deterred. The attacker’s equilibrium utility is lower in \(\widetilde{\varGamma }\) in comparison with that in \(\varGamma \), i.e., \(U_a>\widetilde{U}_a\).

Defender The defender secures each vulnerable facility with a strictly higher level of effort in \(\widetilde{\varGamma }\) than in \(\varGamma \), i.e., \(\tilde{\rho }^{*}_e>\rho _e^{*}\) for each vulnerable facility \(e\in \{\mathcal {E}|C_{e}-p_a>C_{\emptyset }\}\). The defender’s equilibrium utility is higher in \(\widetilde{\varGamma }\) in comparison with that in \(\varGamma \), i.e., \(U_d<\widetilde{U}_d\).

Set \(H\):

Attacker In both games, the attacker conducts an attack with probability 1 and obtains identical utilities, i.e., \(U_a=\widetilde{U}_a\).

Defender The defender chooses identical equilibrium security effort in both games, i.e., \(\rho ^{*}=\tilde{\rho }^{*}\), and obtains identical utilities, i.e., \(U_d=\widetilde{U}_d\).

Importantly, the key difference between NE and SPE comes from the fact that in \(\widetilde{\varGamma }\), the defender as the leading player is able to influence the attacker’s strategy in her favor. Hence, when the defense cost is relatively medium or low (both sets \(M\) and \(L\)), the defender can proactively secure all vulnerable facilities with the threshold effort to fully deter the attack, which results in a higher defender utility in \(\widetilde{\varGamma }\) than in \(\varGamma \). Thus, we say the defender has the first-mover advantage when the cost parameters lie in the set \(M\) or \(L\). However, the reason behind the first-mover advantage differs in each set:

In set \(M\), the defender needs to proactively secure all vulnerable facilities with strictly higher effort in \(\widetilde{\varGamma }\) than that in \(\varGamma \) to fully deter the attacker.

In set \(L\), the defender secures facilities in \(\widetilde{\varGamma }\) with the same level of effort as that in \(\varGamma \), and the attacker is still deterred with probability 1.

On the other hand, in set \(H\), the defense cost is so high that the defender is not able to secure all targeted facilities with an adequately high level of security effort. Thus, the attacker conducts an attack with probability 1 in both games, and the defender no longer has first-mover advantage.
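The comparison behind the first-mover advantage can be explored numerically. The sketch below assumes that the defender's utility is the negative expected usage cost minus \(p_d\) times the total security effort, so that full deterrence with threshold efforts \(\widehat{\rho }_e=(C_{e}-p_a-C_{\emptyset })/(C_{e}-C_{\emptyset })\) yields \(-C_{\emptyset }-p_d\sum _e\widehat{\rho }_e\). The type II utility is taken from Proposition 3. Both function names are hypothetical, and the regime index `j` must be supplied by the caller according to (22).

```python
def deterrence_utility(group_costs, group_sizes, c_0, p_a, p_d):
    """Defender utility when every vulnerable facility gets its threshold effort."""
    total_effort = sum(
        n * (c - p_a - c_0) / (c - c_0)
        for c, n in zip(group_costs, group_sizes)
        if c - p_a > c_0                      # vulnerable groups only
    )
    return -c_0 - p_d * total_effort

def type_two_utility(group_costs, group_sizes, c_0, p_d, j):
    """Defender utility in regime Lambda_j from Proposition 3 (j is 1-based)."""
    c_j = group_costs[j - 1]
    penalty = sum(
        (c - c_j) * p_d * n / (c - c_0)
        for c, n in zip(group_costs[: j - 1], group_sizes[: j - 1])
    )
    return -c_j - penalty
```

With costs 20, 19, 18, \(C_{\emptyset }=17\), and \(p_a=0.5\): at \(p_d=1.0\) (regime \(\varLambda _3\) in \(\varGamma \)), deterrence gives about \(-19.08\) against \(-19.17\) without it, so proactive defense pays off, as in set \(M\). At \(p_d=2.0\) (regime \(\varLambda _2\)), deterrence gives about \(-21.17\) against \(-19.67\), so deterrence is too expensive, as in set \(H\).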

Finally, for the sake of illustration, we compute the parameter sets L, M, and H for a transportation network with three facilities (edges); see Fig. 1. If an edge \(e\in \mathcal {E}\) is not damaged, then the cost function is \(\ell _e(w_e)\), which increases in the edge load \(w_e\). If edge \(e\) is successfully compromised by the attacker, then the cost function changes to \(\ell _e^{\otimes }(w_e)\), which is higher than \(\ell _e(w_e)\) for any edge load \(w_e>0\). The network faces a set of non-atomic travelers with total demand \(D=10\). We define the usage cost in this case as the average cost of travelers in Wardrop equilibrium [21]. The resulting usage costs corresponding to attacks on different edges are \(C_1=20\), \(C_2=19\), \(C_3=18\), and the pre-attack usage cost is \(C_{\emptyset }=17\). From (8), \(K=3\), and \(\bar{\mathcal {E}}_{(1)}=\{e_1\}\), \(\bar{\mathcal {E}}_{(2)}=\{e_2\}\) and \(\bar{\mathcal {E}}_{(3)}=\{e_3\}\). In Fig. 2, we illustrate the regimes of both \(\varGamma \) and \(\widetilde{\varGamma }\), and the three sets H, M, and L distinguished by the thresholds \(\bar{p}_d(p_a)\) and \(\widetilde{p}_d(p_a)\).

7 Model Extensions and Dynamic Aspects

In this section, we first discuss how relaxing our modeling assumptions influences our main results. Next, we introduce a dynamic setup in which the users of the infrastructure system face uncertainty about the outcome of attacker–defender interaction (i.e., identity of the compromised facility) and follow a repeated learning procedure to make their usage decisions.

7.1 Relaxing Model Assumptions

Our discussion centers around extending our results when the following modeling aspects are included: facility-dependent cost parameters, less than perfect defense, and attacker’s ability to target multiple facilities.

- (1)

Facility-dependent attack and defense costs.

Our techniques for equilibrium characterization of games \(\varGamma \) and \(\widetilde{\varGamma }\)—as presented in Sects. 4 and 5, respectively—can be generalized to the case when attack/defense costs are non-homogeneous across facilities. We denote the attack (resp. defense) cost for facility \(e\in \mathcal {E}\) as \(p_{a,e}\) (resp. \(p_{d, e}\)). However, an explicit characterization of equilibrium regimes in each game can be quite complicated due to the multidimensional nature of cost parameters.

In the normal form game \(\varGamma \), it is easy to show that the defender’s best response correspondence in Lemma 3 continues to hold, except that the threshold attack probability for any facility \(e\in \bar{\mathcal {E}}\) now becomes \(p_{d, e}/(C_{e}-C_{\emptyset })\). The set of vulnerable facilities is given by \(\{\mathcal {E}|C_{e}-p_{a,e}>C_{\emptyset }\}\). The attacker’s equilibrium strategy is to order the facilities in decreasing order of \(C_{e}-p_{a,e}\) and target the facilities in this order, each with the threshold probability, until either all vulnerable facilities are targeted or the total probability of attack reaches 1. As in Theorem 1, the former case happens when the cost parameters lie in a type I regime, and the latter case happens for type II regimes, although the regime boundaries are more complicated to describe. In equilibrium, the defender chooses the security effort vector to ensure that the attacker is indifferent among the pure actions that are in the support of the equilibrium attack strategy.

In the sequential game \(\widetilde{\varGamma }\), Lemmas 4 and 5 can be extended in a straightforward manner except that the threshold security effort for any vulnerable facility \(e\in \{\mathcal {E}|C_{e}-C_{\emptyset }>p_{a,e}\}\) is given by \(\widehat{\rho }_e=(C_{e}-p_{a,e}-C_{\emptyset })/(C_{e}-C_{\emptyset })\). The SPE for this general case can be obtained analogously to Theorem 2, i.e., comparing the defender’s utility of either securing all vulnerable facilities with the threshold effort to fully deter the attack, or choosing a strategy that is identical to that in \(\varGamma \). These cases happen when the cost parameters lie in (suitably defined) Type \(\widetilde{\text {I}}\) and Type \(\widetilde{\text {II}}\) regimes, respectively. The main conclusion of our analysis also holds: The defender obtains a higher utility by proactively defending all vulnerable facilities when the facility-dependent cost parameters lie in type \(\widetilde{\text {I}}\) regimes.

- (2)

Less than perfect defense in addition to facility-dependent cost parameters.

Now consider that the defense on each facility is only successful with probability \(\gamma \in (0,1)\), which is an exogenous technological parameter. For any security effort vector \(\rho \), the actual probability that a facility \(e\) is not compromised when targeted by the attacker is \(\gamma \rho _e\). Again, our results on NE and SPE in Sects. 4 and 5 can be readily extended to this case. However, the expressions for the thresholds on attack probability and security effort level need to be modified. In particular, for \(\varGamma \), in Lemma 3, the threshold attack probability on any facility \(e\in \bar{\mathcal {E}}\) is \(p_{d, e}/\left( \gamma (C_{e}-C_{\emptyset })\right) \). For \(\widetilde{\varGamma }\), the threshold security effort \(\widehat{\rho }_e\) for any vulnerable facility \(e\in \{\mathcal {E}|C_{e}-C_{\emptyset }>p_{a, e}\}\) is \((C_{e}-p_{a,e}-C_{\emptyset })/\left( \gamma (C_{e}-C_{\emptyset })\right) \). If this threshold is higher than 1 for a particular facility, then the defender is not able to deter the attacker from targeting it.

- (3)

Attacker’s ability to target multiple facilities.

If the attacker is not constrained to targeting a single facility, his pure strategy set would be \(S_a=2^{\mathcal {E}}\). Then, for a pure strategy profile \(\left( s_d, s_a\right) \), the set of compromised facilities is given by \(s_a\setminus s_d\), and the usage cost is \(C_{s_a\setminus s_d}\). Unfortunately, our approach cannot be straightforwardly applied to this case. This is because the mixed strategies cannot be equivalently represented as probability vectors whose elements represent the probability of each facility being targeted or secured. In fact, for a given attacker’s strategy, one can find two feasible mixed strategies of the defender that induce an identical security effort vector but result in different utilities for the players. Hence, the problem of characterizing the defender’s equilibrium strategies cannot be reduced to characterizing the equilibrium security effort on each facility. Instead, one would need to account for the attack/defense probabilities on all the subsets of facilities in \(\mathcal {E}\). This problem is beyond the scope of our paper, although a related work [22] has made some progress in this regard.
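The claim that two defender mixed strategies can share a security effort vector yet yield different utilities can be checked on a two-facility instance. The sketch below is illustrative only: the usage costs are hypothetical, the attacker is assumed to target both facilities, and the defense cost is assumed to be linear in the number of secured facilities, so both strategies carry the same expected defense cost and any utility difference comes from the usage cost.

```python
def effort_vector(sigma_d, facilities):
    """Marginal probability that each facility is secured under sigma_d."""
    return {e: sum(p for s_d, p in sigma_d.items() if e in s_d)
            for e in facilities}


def expected_usage_cost(sigma_d, s_a, cost):
    """Expected usage cost when the attacker plays the pure strategy s_a.

    The compromised set is s_a minus s_d for each secured set s_d.
    """
    return sum(p * cost[s_a - s_d] for s_d, p in sigma_d.items())


facilities = ("e1", "e2")
both = frozenset(facilities)

# Hypothetical usage costs C_s for every subset s of compromised facilities.
cost = {
    frozenset(): 10.0,
    frozenset({"e1"}): 14.0,
    frozenset({"e2"}): 13.0,
    both: 25.0,
}

# Strategy A secures both facilities or none; strategy B secures exactly one.
sigma_A = {both: 0.5, frozenset(): 0.5}
sigma_B = {frozenset({"e1"}): 0.5, frozenset({"e2"}): 0.5}

print(effort_vector(sigma_A, facilities), effort_vector(sigma_B, facilities))
print(expected_usage_cost(sigma_A, both, cost))  # 0.5*10 + 0.5*25
print(expected_usage_cost(sigma_B, both, cost))  # 0.5*13 + 0.5*14
```

Both strategies secure each facility with probability 0.5, but the expected usage cost differs because \(C_{s_a\setminus s_d}\) depends on which facilities are secured jointly.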

Finally, we briefly comment on the model where all three aspects are included. So long as the players’ strategy sets consist of mixed strategies, the defender’s equilibrium utility in \(\widetilde{\varGamma }\) must be higher than or equal to that in \(\varGamma \). This is because in \(\widetilde{\varGamma }\), the defender can always choose the same strategy as that in NE to achieve a utility that is no less than that in \(\varGamma \). Moreover, one can show the existence of cost parameters such that the defender has a strictly higher equilibrium utility in SPE than in NE. In particular, suppose that the attacker’s cost parameters \(\left( p_{a,e}\right) _{e\in \mathcal {E}}\) are such that there is only one vulnerable facility \(\bar{e}\in \mathcal {E}\), i.e., \(C_{\bar{e}}-C_{\emptyset }>p_{a, \bar{e}}\), and the threshold effort on that facility satisfies \(\widehat{\rho }_{\bar{e}}=\left( C_{\bar{e}}-p_{a,\bar{e}}-C_{\emptyset }\right) /(\gamma (C_{\bar{e}}-C_{\emptyset }))<1\). In this case, if the defense cost \(p_{d, \bar{e}}\) is sufficiently low, then by proactively securing the facility \(\bar{e}\) with the threshold effort \(\widehat{\rho }_{\bar{e}}\), the defender can deter the attack completely and obtain a strictly higher utility in \(\widetilde{\varGamma }\) than that in \(\varGamma \). Thus, in this case, the defender gets the first-mover advantage in equilibrium.

7.2 Rational Learning Dynamics

We now discuss an approach for analyzing the dynamics of usage cost after a security attack. Recall that the attacker–defender model enables us to evaluate the vulnerability of individual facilities to a strategic attack for the purpose of prioritizing defense investments. One can view this model as a way to determine the set of possible post-attack states, denoted \(s\in S{\mathop {=}\limits ^{\varDelta }}\mathcal {E}\cup \{\emptyset \}\). In particular, we consider situations in which the distribution of the system state, denoted \(\theta \in \varDelta (S)\), is determined by an equilibrium of the attacker–defender game (\(\varGamma \) or \(\widetilde{\varGamma }\)). In \(\varGamma \), for each \(s\in S\), the probability \(\theta (s)\) is given as follows:
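One form consistent with the base model is sketched here under three assumptions: \(\sigma _a^{*}(e)\) denotes the equilibrium probability that the attacker targets facility \(e\), \(\rho _e^{*}\) denotes the equilibrium security effort on \(e\), and a targeted facility is compromised exactly when it is not secured.

\[
\theta (e)=\sigma _a^{*}(e)\,(1-\rho _e^{*}),\quad \forall e\in \mathcal {E},\qquad \theta (\emptyset )=1-\sum _{e\in \mathcal {E}}\sigma _a^{*}(e)\,(1-\rho _e^{*}).
\]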

For \(\widetilde{\varGamma }\), the probability distribution \(\theta \) can be analogously defined in terms of \(\widetilde{\sigma }_a^*\) and \(\tilde{\rho }^{*}\).

Let the realized state be \(s=e\); i.e., the facility \(e\in \mathcal {E}\) is compromised by the attacker. If this information is known perfectly to all the users immediately after the attack, they can shift their usage choices in accordance with the new state. Then, the cost resulting from the users’ choices indeed corresponds to the usage cost \(C_s=C_{e}\), which governs the realized payoffs of both attacker and defender. However, from our results (Theorems 1 and 2), it is apparent that the support of equilibrium player strategies (and hence the support of \(\theta \)) can be quite large. Due to inherent limitations in perfectly diagnosing the location of attack, in some situations, the users may not have full knowledge of the realized state. Then, the issues of how users with imperfect information make their decisions in a repeated learning setup and whether or not the long-run usage cost converges to the actual cost \(C_{e}\) become relevant.

To contextualize the above issues, consider the situation in which a transportation system is targeted by an external hacker and the operation of a single facility is compromised. Furthermore, the nature of the attack is such that travelers are not able to immediately know the identity of this facility. This situation can arise when the diagnosis of the attack and/or the dissemination of information about the attack is imperfect. Examples include cyber-security attacks on transportation facilities that can result in hard-to-detect effects, such as compromised traffic signals at a major intersection or tampering with controllers governing the access to a busy freeway corridor. Then, one can study the problem of learning by rational but imperfectly informed travelers using a repeated routing game model. We now discuss the basic ideas behind the study of this problem. A more rigorous treatment is part of our ongoing work and will be detailed in a subsequent paper.

Let the stages of our repeated routing game be denoted as \(t\in T=\{1, 2, \dots \}\). In this game, travelers are imperfectly informed about the network state. In particular, in each stage \(t\in T\), they maintain a belief about the state \(\theta ^t\). The initial belief \(\theta ^0\) can be different from the prior state distribution \(\theta \). However, we require that \(\theta ^0\) is absolutely continuous with respect to \(\theta \) [32]:
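Read together with the sentence that follows, this requirement can be written as a support condition (stated here as an assumption about the intended form):

\[
\theta (s)>0\ \Rightarrow \ \theta ^0(s)>0,\quad \forall s\in S.
\]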

That is, the initial belief of travelers does not rule out any possible state.

The solution concept we use for this repeated game is Markov-perfect Equilibrium (see [37]), in which travelers use routes with the smallest expected cost based on the belief in each stage. Equivalently, the equilibrium routing strategy in stage \(t\) is a Wardrop equilibrium of the stage game with belief \(\theta ^t\) [21]. We also consider that at the end of each stage, travelers receive noisy information about the realized costs on the routes that are taken. However, no information is available for routes that are not chosen by any traveler. Based on the received information, travelers update their belief about the state using Bayes’ rule.

We note that numerous learning schemes have been studied in the literature, for example, fictitious play [15, 28, 30], reinforcement learning [10, 19, 20], and regret minimization [14, 36]. These learning schemes typically assume that strategies in each stage are determined by a certain function of the history of payoffs or actions. To explain the learning dynamics in our setup, we consider that in each stage travelers are rational, and they aim to maximize the payoff myopically based on their current belief about other travelers’ strategies. The players update their beliefs based on observed actions on the play path. These so-called rational learning dynamics have been investigated in the literature, e.g., [9, 27, 32, 33]. Our model is different from the ones in the literature in that travelers are uncertain about the payoff functions, but correctly anticipate the opponents’ strategies. Additionally, the information about the payoff in each stage is noisy and limited (only the realized costs on the taken routes are known).

The game can be understood easily via the example transportation network in Fig. 1. In each stage \(t\), travelers with inelastic demand \(D\) choose route \(r_1\) (\(e_2-e_1\)) or route \(r_2\) (\(e_3-e_1\)). We denote the equilibrium routing strategy in stage \(t\) as \(q^{t*}(\theta ^t)=\left( q^{t*}_r(\theta ^t)\right) _{r \in \{r_1, r_2\}}\), where \(q^{t*}_r(\theta ^t)\) is the demand of travelers using route \(r\) given the belief \(\theta ^t\). Hence, the aggregate flow on edge \(e_2\) (resp. \(e_3\)) is \(w_2^{t*}(\theta ^t)=q_{r_1}^{t*}(\theta ^t)\) (resp. \(w_3^{t*}(\theta ^t)=q_{r_2}^{t*}(\theta ^t)\)), and the aggregate flow on edge \(e_1\) is \(w_1^{t*}(\theta ^t)=D\). Each stage game is a congestion game and hence admits a potential function. The equilibrium routing strategy \(q^{t*}(\theta ^t)\) can be computed efficiently for this game. Moreover, in each stage, the equilibrium is essentially unique in that the equilibrium edge load is unique for a given belief [44].
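For a two-route network of this shape, the stage equilibrium has a closed form when edge costs are affine. The sketch below rests on assumptions that are not taken from Fig. 1: every edge cost is affine, \(\ell _e(w)=a_e w+b_e\); an edge follows a compromised cost function only in the state where it is the attacked facility; and all coefficients except the compromised cost on \(e_2\) are hypothetical. Because \(e_1\) lies on both routes and always carries the full demand, it does not affect the route split.

```python
def expected_coeffs(belief, edge, nominal, compromised):
    """Belief-averaged affine cost (slope, intercept) on `edge`.

    The edge follows its compromised cost only in the state where it is the
    attacked facility; in every other state it follows its nominal cost.
    """
    p = belief.get(edge, 0.0)
    a = p * compromised[edge][0] + (1.0 - p) * nominal[edge][0]
    b = p * compromised[edge][1] + (1.0 - p) * nominal[edge][1]
    return a, b


def wardrop_split(D, a2, b2, a3, b3):
    """Route flows (q1, q2) with q1 + q2 = D for two parallel affine edges.

    Equalizes the expected costs a2*q1 + b2 and a3*(D - q1) + b3 when an
    interior solution exists; otherwise all demand uses the cheaper route.
    Assumes a2 + a3 > 0.
    """
    q1 = (a3 * D + b3 - b2) / (a2 + a3)
    q1 = min(max(q1, 0.0), D)
    return q1, D - q1


# Hypothetical nominal costs; the compromised cost on e2 follows the text.
nominal = {"e2": (1.0, 15.0), "e3": (1.0, 15.0)}
compromised = {"e2": (7.0 / 3.0, 50.0), "e3": (2.0, 40.0)}
belief = {"e1": 1 / 12, "e2": 1 / 3, "e3": 1 / 12, "none": 1 / 2}

a2, b2 = expected_coeffs(belief, "e2", nominal, compromised)
a3, b3 = expected_coeffs(belief, "e3", nominal, compromised)
print(wardrop_split(5.0, a2, b2, a3, b3))
```

With these hypothetical numbers, the belief places enough weight on \(e_2\) being compromised that all demand uses \(r_2\); under a belief concentrated on the no-attack state, the same function splits the demand evenly.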

The realized cost on each edge \(e\in \mathcal {E}\), denoted \(c_e^s(w_e^{t*}(\theta ^t))\), is equal to the cost (shown in Fig. 1 for the example network) plus a random variable \(\epsilon _e\):
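A form consistent with this description, writing \(\ell _e^s\) for the cost function of edge \(e\) in state \(s\) (this symbol is an assumption introduced here), is:

\[
c_e^s\big (w_e^{t*}(\theta ^t)\big )=\ell _e^s\big (w_e^{t*}(\theta ^t)\big )+\epsilon _e,\quad \forall e\in \mathcal {E}.
\]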

We illustrate two cases that can arise in rational learning:

Long-run usage cost is equal to \(C_s\) for any \(s\in \{e_1, e_2, e_3, \emptyset \}\).

Consider the case where the initial belief is \(\theta ^0(e_1)=1/12\), \(\theta ^0(e_2)=1/3\), \(\theta ^0(e_3)=1/12\), \(\theta ^0(\emptyset )=1/2\) (the initial belief can be any probability vector that satisfies the continuity assumption). For any \(e\in \mathcal {E}\), the random variable \(\epsilon _e\) in (44) is distributed as \(U[-\,3, 3]\). The total demand is \(D=10\). Figure 3 shows how the belief of each state evolves. We see that eventually travelers learn the true state, and hence the long-run usage cost converges to the actual post-attack usage cost \(C_s\), even though initially all travelers are imperfectly informed about the state.
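The belief update in this case can be sketched for a single observed edge. This is a minimal illustration under stated assumptions, not the procedure used to produce Fig. 3: the noise is \(U[-h, h]\) with \(h=3\), the state-dependent noiseless costs passed in as `predicted_cost` are hypothetical, and the function name is introduced here.

```python
def bayes_update(belief, observed_cost, predicted_cost, half_width=3.0):
    """One Bayes step from a noisy cost observation on a single edge.

    belief: dict mapping each state to its current probability.
    predicted_cost: dict mapping each state to the noiseless edge cost that
        the state would produce at the current flow.
    The noise is assumed uniform on [-half_width, half_width], so a state
    has positive likelihood only if the observation lies within half_width
    of its predicted cost.
    """
    density = 1.0 / (2.0 * half_width)
    weights = {}
    for s, prior in belief.items():
        consistent = abs(observed_cost - predicted_cost[s]) <= half_width
        weights[s] = prior * (density if consistent else 0.0)
    total = sum(weights.values())
    if total == 0.0:
        raise ValueError("observation is inconsistent with every state")
    return {s: w / total for s, w in weights.items()}


prior = {"e1": 1 / 12, "e2": 1 / 3, "e3": 1 / 12, "none": 1 / 2}
# Hypothetical noiseless costs on e2: higher only if e2 is the attacked edge.
predicted_on_e2 = {"e1": 20.0, "e2": 40.0, "e3": 20.0, "none": 20.0}

posterior = bayes_update(prior, observed_cost=21.5,
                         predicted_cost=predicted_on_e2)
print(posterior)
```

An observation near the nominal cost rules out the state in which \(e_2\) is compromised and rescales the remaining probabilities. If an edge carries no flow in a stage, no observation is available for it, and a belief that depends only on that edge's cost stays unchanged.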

Long-run usage cost is higher than \(C_s\).

Consider the case when, as a result of an attack on edge \(e_2\), the cost function on \(e_2\) changes to \(\ell _2^\otimes (w_2)=7/3w_2+50\). The total demand is \(D=5\), and the initial belief is \(\theta ^0(e_1)=1/12\), \(\theta ^0(e_2)=1/3\), \(\theta ^0(e_3)=1/12\), \(\theta ^0(\emptyset )=1/2\). Starting from this initial belief, travelers exclusively take route \(r_2\), and hence they do not obtain any information about \(e_2\). Even when the realized state is \(s=\emptyset \), travelers end up repeatedly taking \(r_2\) as if \(e_2\) were compromised. Thus, the long-run average cost is \(C_{e_2}\), which is higher than the cost corresponding to the true state \(C_{\emptyset }\). Therefore, rational learning dynamics can lead to long-run inefficiency. We illustrate the equilibrium routing strategies and beliefs in each stage in Fig. 4a, b, respectively.

These cases illustrate that if the post-attack state is not perfectly known by the users of the system, then the cost experienced by the users depends on the learning dynamics induced by the repeated play of rational users. In particular, the learning dynamics can induce a higher usage cost in the long run in comparison with the cost corresponding to the true state. Following previously known results [31], one can argue that if a sufficient number of “off-equilibrium” experiments are conducted by travelers, then the learning will converge to the Wardrop equilibrium with the true state. However, such experiments are in general not costless.

As a final remark, we note another implication of the proactive defense strategy in the ranges of attack/defense cost parameters where the first-mover advantage holds. In particular, when the cost parameters are in the sets L and M as given in (41)–(42), the attack is completely deterred in the sequential game \(\widetilde{\varGamma }\) and there is no uncertainty in the realized state. In such a situation, one does not need to consider uncertainty in the travelers’ belief about the true state, and the issue of long-run inefficiency due to learning behavior does not arise.

Notes

For the sake of brevity, we omit the discussion of equilibrium strategies when cost parameters lie exactly on the regime boundary, although this case can be addressed using the approach developed in this article.

References

Acemoglu D, Malekian A, Ozdaglar A (2016) Network security and contagion. J Econ Theory 166:536–585

Alderson DL, Brown GG, Carlyle WM, Wood RK (2011) Solving defender-attacker-defender models for infrastructure defense. Technical report, Naval Postgraduate School, Monterey, CA, Department of Operations Research

Alderson DL, Brown GG, Carlyle WM, Wood RK (2017) Assessing and improving the operational resilience of a large highway infrastructure system to worst-case losses. Transp Sci 52(4):739–1034

Alpcan T, Basar T (2003) A game theoretic approach to decision and analysis in network intrusion detection. In: Proceedings of the 42nd IEEE conference on decision and control, 2003, IEEE. 3:2595–2600

Alpcan T, Başar T (2010) Network security: a decision and game-theoretic approach. Cambridge University Press, Cambridge

Amin S, Schwartz GA, Sastry SS (2013) Security of interdependent and identical networked control systems. Automatica 49(1):186–192

Bagwell K (1995) Commitment and observability in games. Games Econ Behav 8(2):271–280

Başar T, Olsder GJ (1998) Dynamic noncooperative game theory. SIAM, Philadelphia

Battigalli P, Gilli M, Molinari MC (1992) Learning and convergence to equilibrium in repeated strategic interactions: an introductory survey. Università Commerciale “L. Bocconi”, Istituto di Economia Politica

Beggs AW (2005) On the convergence of reinforcement learning. J Econ Theory 122(1):1–36

Bell MGH, Kanturska U, Schmöcker J-D, Fonzone A (2008) Attacker-defender models and road network vulnerability. Philos Trans R Soc Lond A: Math Phys Eng Sci 366(1872):1893–1906

Bier V, Oliveros S, Samuelson L (2007) Choosing what to protect: strategic defensive allocation against an unknown attacker. J Public Econ Theory 9(4):563–587

Bier VM, Hausken K (2013) Defending and attacking a network of two arcs subject to traffic congestion. Reliabil Eng Syst Saf 112:214–224