Abstract

In this paper we carry out a comprehensive analysis of the model of oligopoly with sticky prices, including a full analysis of the behaviour of prices outside their steady-state level in the infinite horizon case. An exhaustive proof of optimality is presented in both the open-loop and closed-loop cases.


1 Introduction

Dynamic oligopoly models have a long history, starting from Clemhout et al. [12] and encompassing many issues, including, among other things, advertisement (e.g. Cellini and Lambertini [8]), adjustment costs (e.g. Kamp and Perloff [24], Jun and Viwes [23]), goodwill (e.g. Benchekroun [7]), pricing (see e.g. Jørgensen [22]), hierarchical structures (e.g. Chutani and Sethi [11]), nonstandard demand structure (e.g. Wiszniewska-Matyszkiel [29] with demand derived from dynamic optimization of consumers at a specific market) or a combination of several of these aspects (e.g. De Cesare and Di Liddo [15], Dockner and Feichtinger [16] and some papers cited previously). One of the important issues considered in such models is price stickiness.

In this paper we do a comprehensive analysis of the model of oligopoly with sticky prices, first proposed by Simaan and Takayama [27]. The analysis of the model, using game theoretic tools, was afterwards continued by Fershtman and Kamien [19, 20], Cellini and Lambertini [9] and further generalised in many directions by, among others, Benchekroun [6], Cellini and Lambertini [10], Dockner and Gaunersdorfer [17] and Tsutsui and Mino [28].

Comprehensive reviews of differential games modelling oligopolies including models with sticky prices can be found in, among others, Dockner et al. [13] and Esfahani [18].

Both open loop and feedback information structures in the infinite horizon case were considered in [19] and [9]. In both papers, the analysis of the open-loop problem was restricted to the calculation of steady states only. In the feedback case, off-steady-state behaviour was considered only in [19] and only for two players, but even in this case the focus was on the steady state. Consequently, the only results that could be compared in the \(N\) players model with infinite horizon were steady states for the open loop and feedback cases. This seems a very partial solution of the problem.

First, the optimal stationary pair (costate variable, price) and, consequently, optimal stationary pair (production, price) for the open-loop case in all the previous papers was proved to be a saddle point, so unstable in the sense of Lapunov.

In such a case, an obvious expectation is that, for almost all initial conditions, solutions would not converge to this steady state. However, we shall prove that this is not going to happen and that the lack of stability is only apparent. Moreover, the previous calculations are correct. These statements may seem contradictory and, therefore, we return to this issue and place more emphasis on it in Remark 1 in Sect. 3.1. Here we only emphasise that stability results could not be proved without a precise off-steady-state analysis, which we carry out in this paper.

Our calculations allow us to compare solutions of both types for the same initial price. Note that comparing trajectories of the open loop and feedback case solutions originating from the same initial price is impossible if we have only information about their steady states, which differ, since at least one trajectory in such a case is not stationary. Therefore, an analysis of the off-steady-state behaviour is really needed.

Another issue, important for completeness of the reasoning, is an appropriate infinite horizon terminal condition. As we show in this paper, in order to have the standard terminal condition for the Bellman equation fulfilled in the feedback case, we have to impose additional constraints on the initial problem. Also in the open-loop case, applying an appropriate form of the Pontryagin maximum principle is nontrivial, even using the latest findings in infinite horizon optimal control theory (as is well known, the standard maximum principle does not have to be fulfilled in the infinite horizon case).

To address these two issues, in this paper we concentrate on the off-steady-state analysis of the model, both in the open loop and the feedback information structure cases, and we give a rigorous proof, including applicability of a generalisation of a Pontryagin maximum principle and checking terminal conditions in both.

When the feedback case is considered, we use the standard Bellman equation stated in e.g. Zabczyk [30], since the value function is proved to be smooth.

2 The Model

We consider a model of an oligopoly consisting of \(N\ge 2\) identical firms, each of them with a cost function \(C_i(q)=cq +\frac{q^2}{2}\). At this stage we only assume that amounts, \(q\), are nonnegative.

We assume that the inverse demand function is \(P(q_1,\dots ,q_N)=A-\sum _{i=1}^N q_i\), with \(q_i\) being the amount of production of \(i\)-th firm and \(A\) a positive constant substantially greater than \(c\). This defines how the price would react to firms’ production levels if the adjustment was immediate.

However, since prices are sticky, they adjust according to an equation of the form \(\dot{p}=s(P-p)\), where \(s>0\) denotes the speed of adjustment. This equation will be fully specified in Sect. 2.2.

2.1 The Static Case

If we consider the static case, with prices adjusting immediately, then each firm maximises over its own strategy \(q_i\) the instantaneous payoff \(pq_i -C(q_i)\), where the price \(p\) can be treated in two ways.

First, we can look for a standard Nash equilibrium solution. Generally, a profile of players’ strategies is a Nash equilibrium if no player can increase his payoff by changing strategy unless the remaining players change their strategies. By applying the concept of Nash equilibrium to the static oligopoly model with strategies being production levels, we obtain the standard Cournot equilibrium, which is often referred to as the Cournot-Nash equilibrium.

At the Cournot-Nash equilibrium, each of the firms knows its influence on the price and, therefore, it maximises \(P(q_1,\dots ,q_N)q_i-C(q_i)\). As can be easily calculated, the simultaneous optimization by all firms results in the equilibrium production of each firm \(q_i^{\text {CN}}=\frac{A-c}{N+2}\) and the price level \(p^{\text {CN}}=\frac{2A+Nc}{N+2}\).

For comparison, at the competitive equilibrium, in which firms are price takers and maximise with \(p\) treated as a constant, the production of each firm is \(q_i^{\text {Comp}}=\frac{A-c}{N+1}\) and the price level is \(p^{\text {Comp}}=\frac{A+Nc}{N+1}\).
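The two static benchmarks can be checked directly from the first-order conditions. The sketch below is only an illustration: the function names and the sample parameter values are our own assumptions, and exact rational arithmetic is used so that the first-order conditions vanish exactly.

```python
from fractions import Fraction


def cournot_nash(A, c, N):
    """Symmetric Cournot-Nash output and price for P = A - sum(q), C(q) = c*q + q^2/2."""
    q = Fraction(A - c, N + 2)
    return q, A - N * q


def competitive(A, c, N):
    """Price-taking output and price for the same demand and cost functions."""
    q = Fraction(A - c, N + 1)
    return q, A - N * q


def cournot_foc(q, A, c, N):
    """Derivative of (A - sum(q))*q_i - c*q_i - q_i^2/2 in q_i at a symmetric profile."""
    return A - N * q - c - 2 * q


def price_taker_foc(q, p, c):
    """Derivative of p*q_i - c*q_i - q_i^2/2 in q_i with p treated as a constant."""
    return p - c - q
```

For instance, with the hypothetical values \(A=10\), \(c=1\), \(N=2\), `cournot_nash(10, 1, 2)` gives output \(9/4\) and price \(11/2\), while `competitive(10, 1, 2)` gives output \(3\) and price \(4\), so the competitive price is lower, as expected.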

2.2 Dynamics Resulting from Sticky Prices

Now, we introduce dynamics reflecting price stickiness.

Using open-loop strategies, i.e. measurable functions \(q_i:\mathbb {R}_+ \rightarrow \mathbb {R}_+\), we can formulate the equation which determines the price as

$$\begin{aligned} \dot{p}(t)=s\Bigl (A-\sum _{i=1}^N q_i(t)-p(t)\Bigr ),\quad p(0)=p_0, \end{aligned}\tag{1}$$

which is assumed to hold almost everywhere, as we do not assume a priori continuity of \(q_i\).

Given a measurable \(q_i\), the corresponding trajectory of price is absolutely continuous. At this stage we do not have to assume that prices are nonnegative. We shall, however, prove in Proposition 1 that at every equilibrium they are positive.
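To illustrate how the sticky-price dynamics \(\dot{p}=s(P-p)\) behave, consider the simplest case of a constant total production \(Q\), so that \(P=A-Q\). The sketch below compares the closed-form solution \(p(t)=P+(p_0-P){{\mathrm{e}}}^{-st}\) with an explicit Euler scheme that also accepts a time-varying (e.g. piecewise constant) total production. The function names and the discretisation are our own assumptions, introduced only for illustration.

```python
import math


def price_path(p0, total_q, A, s, t):
    """Closed-form solution of dp/dt = s*(A - total_q - p) for a constant total_q."""
    target = A - total_q
    return target + (p0 - target) * math.exp(-s * t)


def euler_price(p0, total_q_of_t, A, s, T, steps):
    """Explicit Euler scheme for dp/dt = s*(A - Q(t) - p) on [0, T]."""
    dt = T / steps
    p = p0
    for k in range(steps):
        p += dt * s * (A - total_q_of_t(k * dt) - p)
    return p
```

The price moves monotonically towards \(A-Q\) at rate \(s\), which is the sense in which \(s\) measures the speed of adjustment.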

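We denote the set of open-loop strategies by \(\fancyscript{S}^{\mathrm {OL}}\). Players maximise discounted accumulated payoff defined as follows. For open-loop strategies \(q_1,q_2,\cdots ,q_N\in \fancyscript{S}^{\mathrm {OL}}\), the payoff function of player \(i\) is described by

$$\begin{aligned} J^i_{0,x_0}(q_1,\dots ,q_N)=\int _0^{\infty }{{\mathrm{e}}}^{-\rho t}\left( p(t)q_i(t)-cq_i(t)-\frac{q_i(t)^2}{2}\right) dt, \end{aligned}\tag{2}$$

where \(\rho >0\) is the discount rate, the subscripts denote the initial time \(0\) and the initial price \(x_0=p_0\), and \(p\) is a solution to Eq. (1).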

3 Open-Loop Nash Equilibria

It is well known that generally in the infinite time horizon the standard transversality condition \(\lambda (t) {{\mathrm{e}}}^{-\rho t}\rightarrow 0\) (where \(\lambda \) is a costate variable) is not necessary. There are many papers with counterexamples to this transversality condition, see e.g. Halkin [21] and Michel [25].

For the specific case considered in this paper, we can prove that the standard transversality condition is necessary. As our problem is nonautonomous, the well known Aseev and Kryazhimskiy [1, 2] results cannot be directly applied. However, we use a very general result of Aseev and Veliov [3, 4], which extends the Pontryagin maximum principle and can be applied to nonautonomous infinite horizon optimal control problems.

As we prove, applying these necessary conditions to the optimal control arising from calculation of the open-loop Nash equilibrium, and given the initial price, restricts the set of possible solutions to a singleton, so it is enough to check that the optimal solution exists to prove sufficiency of the condition. To this end, we use existence of an optimal solution proven by Balder [5].

This issue is tackled in Appendix. Necessary conditions generalising standard Pontryagin maximum principle from Aseev and Veliov [3, 4] and Balder’s [5] existence theorem are stated in sections “Aseev and Veliov Extension of the Pontryagin Maximum Principle” and “Existence of Optimal Solution”, while we prove our model fulfils the assumptions of those theorems in sections “Checking Assumptions for Theorem 5 for the Model Described in Sect. 2” and “Checking Assumptions for Theorem 6 for the Model Described in Sect. 2”, respectively.

This also allows us to determine not only the terminal condition for the costate variable at infinity but also its initial condition, which is not obvious in infinite horizon optimal control problems and differential games. This, in turn, uniquely determines the trajectory of the state variable and the optimal control for every initial value of the state variable, i.e. the initial price.

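In the open-loop Nash equilibrium, each player \(i\) faces the optimization problem, given strategies of the remaining players:

$$\begin{aligned} \max _{q_i\in \fancyscript{S}^{\mathrm {OL}}} J^i_{0,x_0}(q_1,\dots ,q_N) \end{aligned}\tag{3}$$

for \(J^i\) described by Eq. (2).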

3.1 Application of the Necessary Conditions

In this section we are going to use the necessary condition given by Theorem 5 to derive the optimal production and price at Nash equilibria for the open-loop case.

However, to simplify further calculations, instead of the usual Hamiltonian we use the present value Hamiltonian \(H^{\text {PV}}(t,x,u,\lambda )=g(t,x,u)+\langle f(t,x,u),\lambda \rangle \) (using the notation of section “Aseev and Veliov Extension of the Pontryagin Maximum Principle”), where the new costate variable is \(\lambda (t)=e^{\rho t}\Psi (t)\). We rewrite the maximum principle formulated in Theorem 5 for optimization of payoff by player \(i\) with fixed strategies of the remaining players \(q_j\in \fancyscript{S}^{\mathrm {OL}}\).

The present value Hamiltonian is of the form

$$\begin{aligned} H^{\text {PV}}_i(t,p,q_i,\lambda _i)=pq_i-cq_i-\frac{q_i^2}{2}+\lambda _i s\Bigl (A-q_i-\sum _{j\ne i}q_j(t)-p\Bigr ). \end{aligned}\tag{4}$$

First, we prove two technical lemmata, which are immediate consequences of the necessary conditions given by Theorem 5.

Lemma 1

Let \(i\in \{1,2,\cdots ,N\}\) be an arbitrary number and let \(q_j\in \fancyscript{S}^{\mathrm {OL}}\) for \(j\ne i\) be any strategies such that the trajectory corresponding to the strategy profile \((q_1,\dots ,0,\dots ,q_N)\), where \(0\) is on the \(i\)-th coordinate, is nonnegative.

If \(q_i^* \in {{\mathrm{Argmax}}}_{q_i\in \fancyscript{S}^{\mathrm {OL}}} J^i_{0,x_0}(q_1,\dots ,q_N)\), then there exists an absolutely continuous \(\lambda _i :\mathbb {R}_+ \rightarrow \mathbb {R}\) such that for a.e. \(t\)

and

Proof

As proved in section “Checking Assumptions for Theorem 5 for the Model Described in Sect. 2”, the assumptions of the Aseev-Veliov maximum principle (Theorem 5) are fulfilled.

Formulae (5) and (6) are an immediate application of the relations of Theorem 5 with \(\psi ^*(t)=\lambda (t)e^{-\rho t}\). To prove nonnegativity of \(\lambda \) and the terminal condition (7), we use the terminal condition given in Theorem 5. We obtain that \(I(t)=\int _t^{\infty } e^{-\rho w}e^{-sw}q^*_i(w) dw\) converges absolutely and that \(\lambda \) fulfils

Given the constraints on the control parameters which we can impose by Proposition 1 from section “Checking Assumptions for Theorem 5 for the Model Described in Sect. 2” we have

and

Therefore, \(\lambda (t)e^{-\rho t} \rightarrow 0\) as \(t\rightarrow \infty \), and \(\lambda (t)\) is nonnegative for all \(t\). \(\square \)

Lemma 2

Under the assumptions of Lemma 1, the necessary condition for \(q_i\) to be an optimal control for optimization problem given by Eq. (2) with dynamics of \(p\) given by Eq. (1) is as follows.

There exists an absolutely continuous \(\lambda _i\) such that

and

Proof

This follows immediately from applying the maximum principle, in the form given by Lemma 1, to our problem. \(\square \)
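The pointwise maximisation behind this necessary condition can be made concrete. Assuming that the present value Hamiltonian has the form \(g+\lambda f\) with \(g=pq_i-cq_i-\frac{q_i^2}{2}\) and \(f=s(A-\sum _j q_j-p)\), it is strictly concave in \(q_i\), and its maximiser over \(q_i\ge 0\) is \(\max \{0,\,p-c-s\lambda _i\}\). This agrees with the later description of the regions in which production is zero or positive. The sketch below is an illustration under this assumption; the function names are hypothetical.

```python
def hamiltonian(q_i, p, lam, others_q, A, c, s):
    """Assumed present value Hamiltonian: instantaneous payoff plus lam * price drift."""
    payoff = p * q_i - c * q_i - 0.5 * q_i ** 2
    drift = s * (A - q_i - others_q - p)
    return payoff + lam * drift


def best_output(p, lam, c, s):
    """Maximiser of the Hamiltonian over q_i >= 0 (it does not depend on others_q)."""
    return max(0.0, p - c - s * lam)
```

The maximiser does not depend on the production of the remaining players, because their production enters the Hamiltonian only additively.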

Lemma 3

At every open-loop Nash equilibrium there exist costate variables \(\lambda _i \) such that for every \(t\ge 0\), \(\lambda _i (t)>0\).

Proof

By Lemma 1 the adjoint variable \(\lambda _i(t)\) is nonnegative.

Assume that \(\lambda _i(\bar{t})=0\) for some \(\bar{t}>0\). It implies \(\forall w\ge \bar{t}\), \(q_i(w)=0\). Suppose \(p(w)>c\) for some \(w\ge \bar{t}\). In this case we can increase payoff by increasing \(q_i\) to \(\epsilon \) on some small interval \([w,w+\delta ]\). This contradicts the assumption that we are at the Nash equilibrium.

Now we shall concentrate on symmetric Nash equilibria. Note that if \(p_0 \ge c\), then at equilibrium it is impossible to have \(p(w)< c\) for any \(w\), since in such a case instantaneous profit at some interval is negative for positive \(q_i\) for \(p(w)\le c\), which implies that optimization of profit results in \(q_i(w)=0\) for \(p(w)\le c\). Since the same analysis holds for all players, \(p(w)=c\) implies \(\dot{p} (w)>0\).

So the only case left is \(p(w)=c\) for all \(w\ge \bar{t}\), which we have just excluded. \(\square \)

Note that in the symmetric case, if all costate variables are identical, the formula (10) from Lemma 2 naturally divides the first quadrant of the plane \((\lambda ,p)\) into two sets

and

In fact, we have \(q(t)=0\) if \((\lambda (t),p(t))\in \Omega _1\) and \(q(t)>0\) if \((\lambda (t),p(t))\in \Omega _2\). Later, we will show that in the case of a symmetric open-loop Nash equilibrium, the costate variable and the strategy fulfil the following system of ODEs

Before stating and proving the theorem considering the case of a symmetric open-loop Nash equilibrium, we prove the following result.

Theorem 1

Let \((\lambda (t),p(t))\) be a solution to Eq. (13) with initial value \((\lambda _0,p_0)\). Then \(\lambda (t){{\mathrm{e}}}^{-\rho t}>0\) and converges to \(0\) as \(t\rightarrow \infty \) if and only if \((\lambda _0,p_0)\in \Gamma \), where \(\Gamma \) is the stable manifold of the steady state \((\lambda ^*,p^{\mathrm {OL},*})\) of Eq. (13).

The point \((\lambda ^*,p^{\mathrm {OL},*})\in \Omega _2\) (for \(\Omega _1, \Omega _2\) defined in (11) and (12)) and

Moreover, the curve \(\Gamma \) intersects with the line \(p = s\lambda +c\) at point \((\bar{\lambda }, \bar{p})\) with

for \(\Delta \) defined by

The stable manifold \(\Gamma \) is given by the following formulae \(\Gamma = \Gamma _1\cup \{(\lambda ^*,p^{\mathrm {OL},*})\}\cup \Gamma _2\) with

while

and

with

Proof

First, note that the null-clines for Eq. (13) are as follows. For the first variable (i.e. \(p\)) the null-cline reads

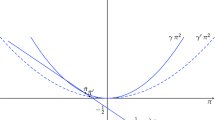

(see green thick line in Fig. 1). The null-cline for the second variable reads

(see red thick line in Fig. 1). It is easy to see that the slope of the \(p\)-null-cline is smaller than the slope of the line \(p=\lambda s+c\). Thus, the system (13) has exactly one steady state in the positive quadrant (to which our solution belongs). Moreover, the steady state lies in \(\Omega _2\). A simple calculation gives (14).

Fig. 1 The phase portrait of Eq. (13). Solid red line with vertical bars denotes \(\lambda \)-null-cline. Solid green line with horizontal bars denotes \(p\)-null-cline. Dashed blue line is \(p=s\lambda +c\) that divides the first quadrant into region \(\Omega _1\) (below this line) and \(\Omega _2\) (above it). Dark brown thick lines with arrows denote a stable manifold of the steady state, while lighter brown thick lines with arrows denote an unstable manifold (Color figure online)

The phase diagram of Eq. (13) is presented in Fig. 1.

In the region \(\Omega _2\), Eq. (13) reads

Since \(\det {B_2}<0\), the matrix \(B_2\) has two real eigenvalues of opposite signs, so the steady state is a saddle point.
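The saddle-point property can be verified numerically. The sketch below assumes that, in \(\Omega _2\), substituting \(q=p-c-s\lambda \) into the costate and state equations yields a linear system in \((\lambda ,p)\) with coefficient matrix \(\begin{bmatrix}\rho +2s&-1\\Ns^2&-(N+1)s\end{bmatrix}\). This matrix is our reconstruction from the Hamiltonian and the price equation, not a copy of \(B_2\), and the function names are hypothetical. Under this assumption the determinant equals \(-s\bigl ((N+1)\rho +(N+2)s\bigr )<0\), and the eigenvector of the negative eigenvalue is proportional to \(\bigl (2,\ \rho +(N+3)s+\sqrt{\Delta }\bigr )\), which matches the multipliers appearing later in the proof.

```python
import math


def omega2_matrix(N, s, rho):
    """Assumed coefficient matrix of the (lambda, p) dynamics when q = p - c - s*lambda."""
    return ((rho + 2.0 * s, -1.0),
            (N * s * s, -(N + 1.0) * s))


def saddle_data(N, s, rho):
    """Determinant, discriminant, both eigenvalues and the stable eigenvector."""
    (b11, b12), (b21, b22) = omega2_matrix(N, s, rho)
    trace = b11 + b22
    det = b11 * b22 - b12 * b21
    disc = trace * trace - 4.0 * det  # positive whenever det < 0
    root = math.sqrt(disc)
    eta_neg = (trace - root) / 2.0
    eta_pos = (trace + root) / 2.0
    stable_vec = (2.0, rho + (N + 3.0) * s + root)
    return det, disc, eta_neg, eta_pos, stable_vec
```

Since the determinant is negative for all \(N\ge 2\), \(s>0\) and \(\rho >0\), the eigenvalues are always real and of opposite signs in this reconstruction.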

Let \(\Gamma _1\) be the part of the stable manifold of the steady state for \(p<p^{\mathrm {OL},*}\) and \(\Gamma _2\) be the part of the stable manifold of the steady state for \(p>p^{\mathrm {OL},*}\). Looking at the phase portrait we can deduce the following behaviour of the solution to Eq. (13). If the initial point \((\lambda _0,p_0)\) lies to the left of the stable manifold of the steady state (a thick dark brown line in Fig. 1), then there exists \(\bar{t}>0\) such that \(\lambda (\bar{t} )\le 0\).

On the other hand, if the initial point \((\lambda _0,p_0)\) lies to the right of the stable manifold of the steady state, then the solution eventually enters the region \(\Omega _1\) with \(\lambda >(A-c)/s\). Thus, the asymptotic behaviour of the solution as \(t\rightarrow +\infty \) is

Therefore, \(\lambda (t){{\mathrm{e}}}^{-\rho t}\) does not converge to \(0\).

It remains to calculate the stable manifold of the steady state. The stable manifold is associated with the negative eigenvalue of \(B_2\), which reads

for \(\Delta \) defined by (17). The eigenvector reads

Thus, the upper part \(\Gamma _2\) of the stable manifold is given by

In order to find the bottom part \(\Gamma _1\) of the stable manifold we find an intersection of the curve

with the line \(p=s\lambda +c\). These curves intersect at \(\zeta =\bar{\zeta }\) such that \(p^{\mathrm {OL},*}-s\lambda ^*-c = \bar{\zeta }\Bigl (\rho +(N+1)s+\sqrt{\Delta }\Bigr )\). A straightforward calculation leads to (18).

Plugging \(\bar{\zeta }\) given by (18) into (20) we obtain

that is Eq. (16), and \(\bar{p} =p^{\mathrm {OL},*}+\bar{\zeta }\Bigl (\rho +(N+3)s+\sqrt{\Delta }\Bigr )\). A tedious calculation leads to (15).

Now we calculate the part of stable manifold of the steady state that lies in \(\Omega _1\). In \(\Omega _1\) the solution to (13) reads

Taking \((\lambda _0,p_0) = (\bar{\lambda },\bar{p})\) and \(t<0\), we obtain that the part \(\Gamma _1\cap \Omega _1\) reads

which completes the proof. \(\square \)

Corollary 1

If \((\lambda _0,p_0)\in \Gamma \) then solution \(p(t)\) of Eq. (13) is given by the following formulae. If \(p_0<\bar{p}\) for \(\bar{p}\) given by Eq. (15), then

where

and \(\eta \) is given by (19).

If \(p_0\ge \bar{p}\) then

Now we are ready to formulate the theorem concerning the symmetric open-loop Nash equilibrium.

Theorem 2

The symmetric open-loop Nash equilibrium strategies \(q^{\text {OL}}_i\) fulfil for a.e. \(t\)

where \(\bar{p}\) is given by (15).

Proof

At a Nash equilibrium every player maximises his payoff treating strategies of the other players as given. Let us consider a player \(i\). The formula for his optimal strategy \(q_i\) and costate variable \(\lambda _i\) is derived in Lemma 2.

If we consider a symmetric open-loop Nash equilibrium, then all \(q_i\) are identical, and, therefore, all the costate variables of players are identical, so at this stage we can skip the subscript \(i\) in \(\lambda _i\) and substitute \(q_j(t)=q_i(t)\), which implies the state equation becomes \(\dot{p}(t)=s(A-Nq_i(t)-p(t))\).

The condition appearing in Eq. (10) splits the first quadrant of \((\lambda ,p)\) plane into two sets: \(\Omega _1\) defined by Eq. (11), and \(\Omega _2\) defined by Eq. (12). Using them, and Theorem 1 we can deduce that \(p\) and \(q\) fulfil Eq. (13). \(\square \)

Remark 1

We have proved that the steady-state \((\lambda ^*, p^{\mathrm {OL},*})\) from the necessary conditions of each player’s optimization problem is globally asymptotically stable on the set of possible initial conditions (fulfilling these necessary conditions).

We emphasise this fact, since the previous papers on dynamic oligopolies with infinite horizon and sticky prices, which considered only steady-state analysis in the open-loop case, ended the analysis with the conclusion that the steady state is a saddle point, which means lack of stability in the sense of Lapunov. This lack of Lapunov stability concerned the whole space \((\lambda , p)\) or \((q,p)\). Our calculations, mainly Theorem 1, prove that for a given \(p_0\) there is a unique initial value \(\lambda _0\) of the costate variable for which the necessary conditions are fulfilled, and this \(\lambda _0\) places the pair \((\lambda _0, p_0)\) on the stable saddle path. This implies that, whatever the initial \(p_0\) is, \(\lambda \), \(p\) and, consequently, \(q\) converge to their steady states.

Now, we prove the following lemma, which we will use instead of checking a sufficient condition for the candidate for optimal control.

Lemma 4

Let us consider any player \(i\) and fixed strategies of the remaining players \(q_j\in \fancyscript{S}^{\mathrm {OL}}\) for \(j\ne i\) such that the trajectory corresponding to the strategy profile \((q_1,\dots ,0,\dots ,q_N)\) (with \(0\) in the \(i\)-th coordinate) is nonnegative. Then the set of optimal solutions of the optimization problem of player \(i\) is a singleton.

Proof

The optimal control exists by Balder’s existence Theorem 6 (see section “Existence of Optimal Solution”).

The necessary condition for \(q_i\) to be an optimal control is, by (6)

therefore, analogously to Theorem 1, we prove that there is a unique path fulfilling the necessary conditions.

Indeed, the matrix \(B\) which appears in the costate-state linear differential equation resulting from the necessary conditions given by Lemma 1, which has the form

where \(b(t)\) is a measurable function and

has two real eigenvalues \(\tilde{\eta }_1\) and \(\tilde{\eta }_2\) of opposite signs and the positive one, \(\tilde{\eta }_1\), is greater than \(\rho \). Thus, each solution to (25) is of the form

where \(\begin{bmatrix}\tilde{\lambda }\\\tilde{p}\end{bmatrix}\) is a solution to a homogeneous version of Eq. (25). Because we need \(\lambda (t){{\mathrm{e}}}^{-\rho t}\) to be bounded and nonnegative (for the terminal condition to be fulfilled), the solution \(\begin{bmatrix}\tilde{\lambda }\\\tilde{p}\end{bmatrix}\) has to belong to the subspace of solutions associated with the negative eigenvalue \(\tilde{\eta }_2\). As this subspace is one dimensional, this solution is chosen uniquely for any given \(p_0\). This yields that \(q_i\) is unique. \(\square \)

Theorem 3

There is a unique open-loop Nash equilibrium and it is symmetric.

If \(p_0< \bar{p}\) for \(\bar{p}\) given by (15), then the equilibrium production is given by

where \(\bar{t}\) is given by (22), \(\eta \) is given by (19), \(\bar{p}\) and \(\bar{\lambda }\) are given by (15) and (16), respectively.

Otherwise,

The equilibrium price level is given by Eq. (21) for \(p_0< \bar{p}\) and by Eq. (23) otherwise.

Proof

First, we prove uniqueness. By Proposition 1, \(p(t)\) is bounded and positive. Besides, \(p\) is a continuous function. Note that the equation for \(\lambda _i\) is identical for each \(i\):

Therefore, by Lemma 8, Eq. (28) has at most one solution which is bounded for \(t \ge 0\), while all unbounded solutions are either negative or they do not fulfil the terminal condition \(\lambda _i {{\mathrm{e}}}^{-\rho t}\rightarrow 0\). This implies that every open-loop Nash equilibrium, if it exists, is symmetric.

Further, we calculate the equilibrium. By Corollary 1, for \(p_0<\bar{p}\), \(p\) is given by Eq. (21), while in the opposite case by Eq. (23). Thus, to derive formula for \(q^{\mathrm {OL}}\) we consider two cases.

Consider the case \(p_0<\bar{p}\), where \(\bar{p}\) is defined by (15). By Theorem 1, \((\lambda ,p)\in \Gamma _1\). Using this relation, Eq. (21) and equation for \(q^{\mathrm {OL}}\), Eq. (24), we deduce that \(q^{\mathrm {OL}}\) is given by Eq. (26). On the other hand, if \(p_0\ge \bar{p}\) then using the same arguments as above and formula Eq. (23) instead of Eq. (21) we obtain

where \(p^{\mathrm {OL},*}\) and \(\lambda ^*\) are given by Eq. (14). We have \((\lambda _0,p_0)\in \Gamma _1\cap \Omega _2\) and therefore,

with \(\Delta \) defined in Eq. (17). Therefore, if \(p_0\ge \bar{p}\), we arrive at Eq. (27).

So we have obtained a candidate for optimal control with the corresponding state and costate variables trajectories fulfilling the necessary condition. In Lemma 4, we have proved that there exists a unique optimal control for any strategies of the remaining players such that the trajectory corresponding to the strategy profile \((q_1,\dots ,0,\dots ,q_N)\) is nonnegative. Therefore, a strategy which fulfils the necessary condition is the only optimal control. Applying this to all players, together with the fact that there is only one symmetric profile fulfilling the necessary condition, implies that there is exactly one symmetric Nash equilibrium. \(\square \)
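The two-phase structure of the equilibrium (zero production while the price is below \(\bar{p}\), followed by movement along the stable eigenvector towards the steady state) can be illustrated numerically. The sketch below is not a transcription of formulae (14)-(27): the constants are recomputed under the assumption that, in \(\Omega _2\), \(q=p-c-s\lambda \), \(\dot{\lambda }=(\rho +s)\lambda -q\) and \(\dot{p}=s(A-Nq-p)\), while in \(\Omega _1\) production is zero and \(\dot{p}=s(A-p)\). The stable direction is parametrised as \((\lambda ^*,p^{\mathrm {OL},*})+\zeta \bigl (2,\rho +(N+3)s+\sqrt{\Delta }\bigr )\), where the sign convention for \(\zeta \) is our own. All function names are hypothetical.

```python
import math


def open_loop_constants(A, c, N, s, rho):
    """Steady state, stable eigenvalue, eigenvector component and switching price."""
    lam_s = (A - c) / ((N + 1) * rho + (N + 2) * s)
    p_s = c + (rho + 2 * s) * lam_s
    root = math.sqrt((rho + (1 - N) * s) ** 2
                     + 4 * s * ((N + 1) * rho + (N + 2) * s))
    eta = (rho + (1 - N) * s - root) / 2      # negative eigenvalue
    v_p = rho + (N + 3) * s + root            # p-component when lambda-component is 2
    zeta_bar = -(p_s - s * lam_s - c) / (v_p - 2 * s)
    p_bar = p_s + zeta_bar * v_p              # point where the path meets p = s*lambda + c
    return lam_s, p_s, eta, v_p, p_bar


def open_loop_path(p0, t, A, c, N, s, rho):
    """Price and per-firm production at time t along the reconstructed saddle path."""
    lam_s, p_s, eta, v_p, p_bar = open_loop_constants(A, c, N, s, rho)
    t_bar = 0.0
    if p0 < p_bar:
        # Phase with zero production: the price rises towards A until it hits p_bar.
        t_bar = math.log((A - p0) / (A - p_bar)) / s
        if t < t_bar:
            return A - (A - p0) * math.exp(-s * t), 0.0
        p0 = p_bar
    p = p_s + (p0 - p_s) * math.exp(eta * (t - t_bar))
    lam = lam_s + 2 * (p - p_s) / v_p
    return p, p - c - s * lam
```

With the hypothetical values \(A=10\), \(c=1\), \(N=2\), \(s=1\), \(\rho =0.1\), the path starting from \(p_0=1.5\) first keeps production at zero and then converges to the steady state, in line with Remark 1.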

4 Feedback Nash Equilibria

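We consider the same problem, but with feedback information structure, i.e. players’ strategies dependent on the state variable, price, only. This is a situation in which each firm chooses the level of production based on a decision rule that depends on the market price. Consequently, the equation determining the price becomes

$$\begin{aligned} \dot{p}(t)=s\Bigl (A-\sum _{i=1}^N q_i(p(t))-p(t)\Bigr ),\quad p(0)=p_0, \end{aligned}\tag{29}$$

while the objective function of player \(i\) is

$$\begin{aligned} J^i_{0,x_0}(q_1,\dots ,q_N)=\int _0^{\infty }{{\mathrm{e}}}^{-\rho t}\left( p(t)q_i(p(t))-cq_i(p(t))-\frac{q_i(p(t))^2}{2}\right) dt. \end{aligned}\tag{30}$$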

Generally, to guarantee the existence and uniqueness of the solution to Eq. (29), regularity assumptions on \(q_i\) stronger than in the open-loop case are required. Usually, at least continuity of \(q_i\) is assumed a priori as a part of the definition of a feedback strategy. In Fershtman and Kamien [19] as well as Cellini and Lambertini [9], even differentiability of \(q_i\) was assumed a priori. We shall prove that this assumption is not fulfilled in the case when the initial price is below a certain level.

It is worth emphasising that assuming even only continuity is in many cases too restrictive, since it excludes, among others, so called bang-bang solutions, which are often optimal. Therefore, the only thing we assume a priori besides measurability is that a solution to Eq. (29) exists for initial conditions in \([c,+\infty )\) and it is unique (if a solution is not continuous at a certain point, we assume Eq. (29) holds almost everywhere). We denote the set of all feedback strategies by \(\fancyscript{S}^{\text {F}}\).

Here, we want to mention that in some works this form of information structure and, consequently, of strategies is called closed loop. In this paper, as in most papers, we understand the closed-loop information structure as consisting of both time and state variable, so using the notation of a closed-loop strategy \(q_i(t,p)\) we can encompass, as trivial cases, both open loop and feedback strategies.

We can formulate the following result.

Theorem 4

Let

and

with

The feedback equilibrium is defined by

while the corresponding price trajectory is defined by

To prove Theorem 4, we need the following sequence of lemmata.

Lemma 5

(a) Consider a dynamic optimization problem of a player \(i\) with the feedback information structure, with dynamics of prices described by (29) and the objective function (30), but with the set of possible control parameters available at a price \(p\) extended to some interval \([-B|p|-b,B|p|+b]\), with some constants \(B,b>0\). Assume that the strategies of the remaining players are described by \(\hat{q}(p)=p(1-sk)-c-sh\) for constants \(k\), \(h\) given by (33) and (34), respectively, and \(g\) given by

$$\begin{aligned} g=\frac{c^{2}-sh(sh-2A-2N(c+sh))}{2\rho }. \end{aligned}\tag{37}$$

Then, there exist \(B\), \(b>0\) such that the quadratic function

$$\begin{aligned} V_{i}(p)=\frac{kp^{2}}{2}+hp+g \end{aligned}$$

is the value function of this optimization problem, while \(\hat{q}\) is the optimal control.

(b) For these \(B\), \(b\), \(q_i\equiv \hat{q}\) for \(k_i=k\), \(h_i=h\) defines a symmetric feedback Nash equilibrium. Moreover, equality \(\hat{q}(\tilde{p})=0\) holds. The corresponding price level fulfils

$$\begin{aligned} p^{\mathrm {F}}(t)=\left[ p_{0}-\frac{A+N(c+sh)}{N(1-sk)+1}\right] {{\mathrm{e}}}^{s(Nsk-N-1)t}+\frac{A+N(c+sh)}{N(1-sk)+1}. \end{aligned}\tag{38}$$

Proof

Assume that some quadratic function (with unknown constants)

$$\begin{aligned} V_{i}(p)=\frac{k_ip^{2}}{2}+h_ip+g_i \end{aligned}$$

is the value function of the optimization problem of a player \(i\).

To prove this, we use a standard textbook sufficient condition stating that if a \(C^1\) function \(V_i\) fulfils the infinite horizon Bellman equation for problems with discounting (see e.g. Zabczyk [30])

with a terminal condition saying that for every admissible trajectory \(p\)

then it is the value function of the optimization problem of a player \(i\) at a feedback Nash equilibrium and the \(q_i(p)\) at which the maximum is attained defines the optimal control.

The maximum of the right-hand side of the Bellman Eq. (39) is attained at

$$\begin{aligned} q^{\text {cand}}_{i}(p)=p(1-sk_i)-c-sh_i. \end{aligned}\tag{41}$$

Because we only look at symmetric equilibria, let us assume that \(g_{i}\equiv g\), \(k_{i}\equiv k\), \(h_{i}\equiv h\) and \(q_{i}\equiv q^\mathrm{cand}\). We can rewrite (39) as

Now let us substitute \(q^{\text {cand}}_{i}\) calculated in (41) into (42). After ordering we get

The above equation is satisfied for all values of \(p\) if and only if all coefficients are equal to zero. Therefore, we get the following system of three equations with three variables (\(g\), \(k\) and \(h\)):

Solving this system, we obtain

We consider the case with minus before square root in the expression for \(k\) (since, unlike the other one, it can imply fulfilment of the terminal condition (40)), and we obtain formulae (37), (34) and (33).

The price corresponding to \(q^{\text {cand}}\) calculated in (41) is

for \(k\) and \(h\) defined by (33) and (34), respectively.

Note that \(2s(Nsk-N-1)<\rho \), which implies that \({{\mathrm{e}}}^{-\rho t}V(p^{\text {cand}}(t))\rightarrow 0\) as \(t\rightarrow \infty \). Therefore, we can take \(B=1-sk+\epsilon \) for a small \(\epsilon \) (we can easily check that \(1-sk>0\)) and an arbitrary \(b>|c+sh|\). Then \({{\mathrm{e}}}^{-\rho t}V(p(t))\rightarrow 0\) for every admissible trajectory, which means that the terminal condition (40) is fulfilled, and \(q^{\text {cand}}\) belongs to the set of admissible controls \(|q_i|\le B|p|+b\). This ends the proof of (a).

The point (b) follows immediately. \(\square \)
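The coefficient matching in this proof can be checked numerically. In the sketch below, \(k\) and \(h\) are recomputed by substituting \(q=p(1-sk)-c-sh\) and \(V(p)=\frac{kp^2}{2}+hp+g\) into the assumed symmetric Bellman equation \(\rho V(p)=(p-c)q-\frac{q^2}{2}+sV'(p)(A-Nq-p)\) and equating coefficients. They are not copied from (33) and (34), so their algebraic form here is an assumption; \(g\) is taken from (37). The smaller root of the quadratic for \(k\) is used, corresponding to the minus sign before the square root. The function names are hypothetical.

```python
import math


def feedback_coefficients(A, c, N, s, rho):
    """Coefficients (k, h, g) of the quadratic value function k*p^2/2 + h*p + g."""
    a2 = (2 * N - 1) * s * s
    a1 = rho + 2 * (N + 1) * s
    # k solves a2*k^2 - a1*k + 1 = 0; the smaller root is taken.
    k = (a1 - math.sqrt(a1 * a1 - 4 * a2)) / (2 * a2)
    h = (s * k * (A + N * c) - c) / (rho + (N + 1) * s - a2 * k)
    g = (c * c - s * h * (s * h - 2 * A - 2 * N * (c + s * h))) / (2 * rho)
    return k, h, g


def bellman_residual(p, A, c, N, s, rho):
    """rho*V(p) minus the right-hand side of the assumed symmetric Bellman equation."""
    k, h, g = feedback_coefficients(A, c, N, s, rho)
    q = p * (1 - s * k) - c - s * h
    value = k * p * p / 2 + h * p + g
    slope = k * p + h
    rhs = (p - c) * q - q * q / 2 + s * slope * (A - N * q - p)
    return rho * value - rhs
```

A residual that vanishes for several values of \(p\) confirms that all three coefficient equations hold simultaneously, and the sign of \(s(Nsk-N-1)\) confirms that the price in (38) converges.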

Lemma 6

(a) Consider a dynamic optimization problem of a player \(i\) with feedback information structure. Assume that the strategies of the remaining players are described by \( \hat{q}(p)=p(1-sk)-c-sh\) for \(k\), \(h\) defined by (33) and (34), respectively. For \(p\ge \tilde{p}\), where \(\tilde{p}\) is defined by (31), the value function fulfils \(V_{i}(p)=V_i^+(p)\) for \(V_i^+(p)=\frac{k p^2}{2}+hp+g\) defined in Lemma 5, while the optimal control is

$$\begin{aligned} q_i(p)=q^{\text {cand}}(p). \end{aligned}$$

(b) The equation

$$\begin{aligned} q_i(p)=q^{\text {cand}}(p) \text { for } p\ge \tilde{p}, \end{aligned}$$

holds at a symmetric Nash equilibrium.

Proof

(a) If the remaining players choose \(q^{\text {cand}}\), then \(q^{\text {cand}}\) is the optimal control over a larger class of controls, since \([-B|p|-b,B|p|+b]\) contains \([0,q_{\max }]\) (by Proposition 1, this set of control parameters leads to results equivalent to the initial case with the set of control parameters \(\mathbb {R}_+\)). By (38), if \(p_0\ge \tilde{p}\), then for all \(t\ge 0\), the corresponding trajectory fulfils \(p(t)\ge \tilde{p}\). The price \(\tilde{p}\) is the threshold price such that for \(p\ge \tilde{p}\), \(q^{\text {cand}}\ge 0\), otherwise it is negative. To conclude, the optimal control for the analogous optimization problem with a larger set of controls is in this case contained in our set of controls, so it is optimal in our optimization problem.

The point (b) follows immediately. \(\square \)

Lemma 7

-

(a)

Consider a dynamic optimization problem of a player \(i\) with feedback information structure. Assume that the strategies of the remaining players are \(q^{\text {F}}_i\) defined by Eq. (35). If the optimal control of a player \(i\) is also given by (35), then the value function fulfils

$$\begin{aligned} V_{i}(p)={\left\{ \begin{array}{ll} \frac{kp^{2}}{2}+hp+g &{} \text { for } p\ge \tilde{p},\\ (A-p)^{-\frac{\rho }{s}}(A-\tilde{p})^{\frac{\rho }{s}}\left( \frac{k\tilde{p}^{2}}{2}+h\tilde{p}+g\right) &{} \text{ otherwise. } \end{array}\right. } \end{aligned}$$(43) -

(b)

The function \(V_i\) defined this way is continuous and continuously differentiable.

Proof

-

(a)

In this case the set of \(q_i\) over which the maximum of the right-hand side of the Bellman Eq. (39) is calculated is \([0,q_{\max }]\). By Lemma 6, for \(p\ge \tilde{p}\), the value function fulfils \(V_{i}(p)=V_i^+(p)=\frac{kp^{2}}{2}+hp+g\) and the optimal control for these \(p\) coincides with \(q_i^{\text {F}}\). If we take \(p<\tilde{p}\) and we substitute \(q_i^{\text {F}}\), we get that

$$\begin{aligned} V_i(p)&= \int _0^{\tilde{t}}{{\mathrm{e}}}^{-\rho t}\left( (p^{\text {F}}(t)-c)q_i^{\text {F}}(p^{\text {F}}(t))- \frac{q_i^{\text {F}}(p^{\text {F}}(t))^2}{2}\right) dt\\&\quad +\int _{\tilde{t}}^{\infty }{{\mathrm{e}}}^{-\rho t}\left( (p^{\text {F}}(t)-c)q_i^{\text {F}}(p^{\text {F}}(t))- \frac{q_i^{\text {F}}(p^{\text {F}}(t))^2}{2}\right) dt\\&= \int _0^{\tilde{t}}0dt+\int _{\tilde{t}}^{\infty }{{\mathrm{e}}}^{-\rho t}\left( (p^{\text {F}}(t)-c)q_i^{\text {F}}(p^{\text {F}}(t)) -\frac{q_i^{\text {F}}(p^{\text {F}}(t))^2}{2}\right) dt\\&= {{\mathrm{e}}}^{-\rho \tilde{t}}V_i(\tilde{p}), \end{aligned}$$for \(\tilde{t}\) defined by (32). Let us introduce another auxiliary function \(V_i^-\) defined on \([c,A)\) by

$$\begin{aligned} V_i^-(p)=(A-p)^{-\frac{\rho }{s}}(A-\tilde{p})^{\frac{\rho }{s}}\left( V_i^+(\tilde{p}) \right) \end{aligned}$$(44) and consider also \(V_i^+ \) as a function defined on \([c,A)\). The optimality of the proposed \(q_i\) yields the value function

$$\begin{aligned} V_{i}(p)={\left\{ \begin{array}{ll} V_i^+ &{} \text { if }p\ge \tilde{p}\\ V_i^- &{} \text{ otherwise. } \end{array}\right. } \end{aligned}$$(45) -

(b)

Continuity is immediate, while to prove continuous differentiability we have to check whether the function \(V_i\) defined by (43) is \(C^1\) at \(\tilde{p}\), which is equivalent to equality of derivatives of \(V_i^+\) and \(V_i^-\) at \(\tilde{p}\):

$$\begin{aligned} (V_i^+(\tilde{p}))'=(V_i^-(\tilde{p}))'=\frac{\rho }{s}\frac{V_i^+(\tilde{p})}{A-\tilde{p}}. \end{aligned}$$(46) Checking it does not require a dull substitution of predefined constants, since by the Bellman equation derived in Lemmas 5 and 6 and the fact that the maximum in the Bellman equation for \(p=\tilde{p}\) is attained at \(0\), \(\rho V_i^+(\tilde{p})=(V_i^+(\tilde{p}))'s(A-\tilde{p})\). Thus, Eq. (46) is equivalent to \((V_i^+(\tilde{p}))'=\frac{1}{s}\frac{(V_i^+(\tilde{p}))'s(A-\tilde{p})}{A-\tilde{p}}\), which reduces to the required equality. \(\square \)
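
As a sketch of the computation behind (46), the derivative of \(V_i^-\) can be obtained directly from its definition (44). Only the definition of \(V_i^-\) and the identity \(\rho V_i^+(\tilde{p})=(V_i^+(\tilde{p}))'s(A-\tilde{p})\) stated in the proof are used:

$$\begin{aligned} (V_i^-(p))'&=\frac{\rho }{s}(A-p)^{-\frac{\rho }{s}-1}(A-\tilde{p})^{\frac{\rho }{s}}V_i^+(\tilde{p}),\\ (V_i^-(\tilde{p}))'&=\frac{\rho }{s}\frac{V_i^+(\tilde{p})}{A-\tilde{p}} =\frac{1}{s}\frac{(V_i^+(\tilde{p}))'s(A-\tilde{p})}{A-\tilde{p}} =(V_i^+(\tilde{p}))'. \end{aligned}$$

Hence the one-sided derivatives of \(V_i\) agree at \(\tilde{p}\).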

Proof

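(of Theorem 4) Fix player \(i\) and assume all the other players choose strategies \(q_i^{\text {F}}\). Since the candidate function \(V_i\) defined by Eq. (43) is \(C^1\), we can use the standard technique of Bellman equation to prove that

$$\begin{aligned} q_i^{\text {F}}(p)={\left\{ \begin{array}{ll} (1-sk)p-c-sh &{} \text { for } p\ge \tilde{p},\\ 0 &{} \text{ otherwise, } \end{array}\right. } \end{aligned}$$(47)

is a symmetric feedback Nash equilibrium strategy and \(V_i\) is the value function.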

First, we have to prove that the Bellman equation is fulfilled and \(q_{i}^{\text {F}}(p)\) maximises the right-hand side of the Bellman equation. For \(p\ge \tilde{p}\) it has been already proved in Lemma 6.

We have to prove it also for \(p<\tilde{p}\). Let us define

Obviously, \(z(p)=p-c-(V_i^-(p))'s\). This function is strictly concave on the interval \([c,A)\), negative at \(c\) and in a neighbourhood of \(A\) and \(0\) at \(\tilde{p}\).

If we show that the derivative of \(z\) at \(\tilde{p}\) is positive, it means that for \(p\in [c, \tilde{p}]\), \(z\) is strictly increasing, which implies that for all \(p<\tilde{p}\),

Indeed, we have \(z'(\tilde{p})=1-(V_i^-(\tilde{p}))''s=1-\frac{\rho }{s}\left( \frac{\rho }{s}+1 \right) \frac{V_i^+(\tilde{p})}{(A-\tilde{p})^2}s\). By Bellman equation for \(\tilde{p}\), proven in Lemmata 5 and 6, \(\rho V_i^+(\tilde{p})=(V_i^+(\tilde{p}))'s (A-\tilde{p})\), which reduces the above to \(z'(\tilde{p})=1-\left( \frac{\rho }{s}+1 \right) \frac{(V_i^+(\tilde{p}))'}{(A-\tilde{p})}s\).

By Lemma 6(b), we have \((V_i^+(\tilde{p}))'s=\tilde{p}-c\). Therefore, the inequality \(z'(\tilde{p})\ge 0\) is equivalent to

Plugging the definition of \(\tilde{p}\) into the above inequality we arrive at

Using the definition of \(k\) and \(h\), after some algebraic manipulation, we conclude that the right-hand side of (48) is equal to

Clearly, \(\rho < \sqrt{4 \left( N^2+2\right) s^2+4 (N+1) s \rho +\rho ^2}\), and therefore, inequality (48) holds for all \(N\ge 2\).
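
The substitutions leading to this inequality can be summarised as follows. This is a sketch in our own arrangement, which need not coincide term by term with the displayed form of (48). It uses only \(z'(\tilde{p})\) as computed above, the identity \((V_i^+(\tilde{p}))'s=\tilde{p}-c\), and the assumptions \(s>0\) and \(\tilde{p}<A\):

$$\begin{aligned} z'(\tilde{p})&=1-\left( \frac{\rho }{s}+1 \right) \frac{(V_i^+(\tilde{p}))'s}{A-\tilde{p}} =1-\frac{\rho +s}{s}\cdot \frac{\tilde{p}-c}{A-\tilde{p}},\\ z'(\tilde{p})\ge 0&\iff (\rho +s)(\tilde{p}-c)\le s(A-\tilde{p}) \iff \tilde{p}\le \frac{sA+(\rho +s)c}{\rho +2s}. \end{aligned}$$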

The terminal condition for the optimization problem is also fulfilled. First, the set of prices is bounded from above, therefore \( \limsup _{t\rightarrow \infty } V_i(p(t)){{\mathrm{e}}}^{-\rho t}\le 0\). On the other hand, \(q_i\in [0,q_{\max }]\), therefore, \(\dot{p}\ge s(A-Nq_{\max }-p)\). Hence, \(p(t)\ge c_1+c_2 {{\mathrm{e}}}^{-st}\), which implies that \( \limsup _{t\rightarrow \infty } V_i(p(t)){{\mathrm{e}}}^{-\rho t}\ge 0\).

Now, let us calculate the trajectory of the price corresponding to the symmetric Nash equilibrium we have just determined. We start from the first case in Eq. (36): \(p(0)=p_{0}\) is such that \(p_{0}\ge \tilde{p}\).

After substituting \(q^{\text {F}}_{i}\) calculated in (47) into (29) we obtain

Solving the above equation with the initial condition \(p(0)=p_{0}\) we get

where \(k\) and \(h\) are given by (33) and (34), respectively.

Now, let us consider the second case, in which the initial condition \(p(0)=p_{0}\) satisfies \(p_{0} \le \tilde{p}\), and the time before the price reaches \(p=\tilde{p}\). After substituting \(q^{\text {F}}_{i}(p)=0\) into (29) we obtain

Solving the above equation with the initial condition \(p(0)=p_{0}\) with \(p_0< \tilde{p}\) we get

up to time \(\tilde{t}\) in which \(p(t)\) reaches \(\tilde{p}\). Solving \(A+(p_{0}-A)e^{-s\tilde{t}}=\tilde{p}\) we obtain Eq. (32). Afterwards, the solution behaves according to formula (49) with \(p_0=\tilde{p}\) and \(t\) replaced by \(t-\tilde{t}\), which immediately leads to the required formula. This completes the proof. \(\square \)
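
The computation of the switching time in the second case can be made explicit. The sketch below assumes, as in the proof, that no firm produces before \(\tilde{t}\), so that the price equation reduces to \(\dot{p}=s(A-p)\), and that \(p_0<\tilde{p}<A\). The closed form for \(\tilde{t}\) is simply what solving the equation stated above gives, which is presumably the content of (32):

$$\begin{aligned} p(t)&=A+(p_{0}-A){{\mathrm{e}}}^{-st},\quad 0\le t\le \tilde{t},\\ A+(p_{0}-A){{\mathrm{e}}}^{-s\tilde{t}}=\tilde{p}&\iff {{\mathrm{e}}}^{-s\tilde{t}}=\frac{A-\tilde{p}}{A-p_{0}} \iff \tilde{t}=\frac{1}{s}\ln \frac{A-p_{0}}{A-\tilde{p}},\\ {{\mathrm{e}}}^{-\rho \tilde{t}}&=\left( \frac{A-\tilde{p}}{A-p_{0}}\right) ^{\frac{\rho }{s}} =(A-p_{0})^{-\frac{\rho }{s}}(A-\tilde{p})^{\frac{\rho }{s}}. \end{aligned}$$

The last line is the factor multiplying \(V_i^+(\tilde{p})\) in (43) and (44) evaluated at \(p=p_0\), in agreement with the relation \(V_i(p)={{\mathrm{e}}}^{-\rho \tilde{t}}V_i(\tilde{p})\) obtained in the proof of Lemma 7.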

5 Relations and Comparison

In this section we compare two classes of equilibria for various values of parameters.

First, whatever the initial condition is, the open loop Nash equilibrium is not a degenerate feedback equilibrium. This follows immediately from the fact that the steady states of the state variable (price) for the open loop and feedback equilibria are different and globally asymptotically stable.

5.1 Asymptotic Values of the Nash Equilibria with Very Slow and Very Fast Price Adjustment

Consider the asymptotics of the Nash equilibria for \(s\rightarrow 0\) and \(s\rightarrow +\infty \).

For the open-loop case, the asymptotic price level is \(p^{\mathrm {OL},*}\) given by (14), while from (26) we deduce that the asymptotic production level is

Letting \(s\rightarrow 0\) we easily obtain

and

Note that these limits are equal to \(p^{\text {Comp}}\) and \(q^{\text {Comp}}\), respectively. On the other hand, if we consider \(s\) very large, we obtain

and

Now, consider the feedback case. From (36) we deduce

Using (35) we have

First, we rewrite \(k\) eliminating the minus sign before the square root. We have

It is easy to see \(k\rightarrow 1/\rho \) and therefore, \(h\rightarrow -c/\rho \) as \(s\rightarrow 0\). Now we can deduce that

while

The case \(s\rightarrow +\infty \) is more complicated. First, we calculate the asymptotic behaviour of \(sk\) as \(s\rightarrow +\infty \).

Plugging this into the expression (34) for \(h\) we obtain

Using these two expressions we can deduce

Multiplying the numerator and the denominator by \((N+1+\sqrt{N^2+2})\sqrt{N^2+2}\) and collecting terms with \(\sqrt{N^2+2}\) we obtain

Removing the square root from the denominator, we obtain

Using (36) we obtain

5.2 Graphical Illustration

In this section we present the results graphically. We compare the open loop and feedback solutions in various aspects of the model, taking influence of changing parameters into account. We fix the following parameters:

We check dependence of optimal solutions on parameters describing price stickiness, \(s\), and the number of firms, \(N\).

5.2.1 Relations Between Open Loop and Feedback Equilibria

As we can see in Fig. 2, the time at which firms start production is earlier in the feedback case, \(\tilde{t}<\bar{t}\). After \(\tilde{t}\), at each time instant production at the feedback Nash equilibrium is larger than at the open-loop Nash equilibrium, while the price is smaller (see Fig. 3).

Open loop and feedback equilibria for the same initial price, the parameters given by (51) and \(s=0.2\), \(N=10\). Open-loop equilibrium marked with a thick solid blue line, feedback equilibrium marked with a thick dashed red line. Production levels of the static Cournot equilibrium and the static competitive equilibrium marked with thin dashed lines for comparison (Color figure online)

Open loop and feedback equilibrium price levels for the same initial price, the parameters given by (51) and \(s=0.2\), \(N=10\). Open-loop equilibrium marked with a thick solid blue line, feedback equilibrium marked with a thick dashed red line. Price levels of the static Cournot equilibrium and the static competitive equilibrium marked with thin dashed lines for comparison (Color figure online)

It implies that the feedback Nash equilibrium ensures higher utility to the consumers, while open-loop Nash equilibrium yields higher profits to the producers. In other words, the feedback Nash equilibrium is more competitive, which is a result consistent with the previous literature, among others, Fershtman and Kamien [19] and Cellini and Lambertini [9].

The difference between these two types of equilibria results from the way in which players perceive the influence of their current decisions about production level on the future trajectory of prices. In the feedback case, every player has to take into account the fact that his/her current decision affects the future trajectory of prices not only directly, but also indirectly, since it affects future decisions of the remaining players, which in the feedback case depend on the market price. Therefore, there are two opposing effects: the direct negative effect that an increase of his/her production has on future prices, and an indirect inverse effect, resulting from the fact that the other players’ production level is an increasing function of price. In this second effect an increase of a player’s production, by resulting in a decrease of future price, indirectly decreases the other players’ future production. Consequently, as a sum of two effects of opposite signs, the resulting decrease of prices is smaller. In the open-loop case, in which only the direct influence on future prices is considered in players’ optimization problems, such an inverse effect does not take place.
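
The indirect effect can be read off the price equation. As a sketch, assume \(p\ge \tilde{p}\), that the \(N-1\) rivals of player \(i\) use the linear feedback rule \(\hat{q}(p)=p(1-sk)-c-sh\) from Lemma 6, and that the price dynamics has the form \(\dot{p}=s\bigl (A-p-\sum _j q_j\bigr )\) used in the Appendix. Then

$$\begin{aligned} \dot{p}&=s\Bigl (A-p-q_i-(N-1)\bigl ((1-sk)p-c-sh\bigr )\Bigr ),\\ \frac{\partial \hat{q}}{\partial p}&=1-sk>0, \end{aligned}$$

so a unit fall in price lowers the output of each rival by \(1-sk\), which partly offsets the downward pressure of \(q_i\) on future prices. In the open-loop case the rivals' outputs are functions of time only, so this offsetting term is absent from player \(i\)'s problem.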

5.2.2 Dependence on the Number of Firms

As we can see in Figs. 4 and 5, production starts earlier as the number of firms increases. Production of a single firm converges to the steady state, which becomes smaller as the number of firms increases, but the initial production growth is faster, which results in an intersection of trajectories for these two cases. Therefore, production for a larger \(N\) is first above the respective production for a smaller \(N\), but later the relation is reversed, in both open loop and feedback cases.

Open loop and feedback equilibrium production as the number of firms increases. Open-loop equilibrium production for \(N=2\) and \(N=10\) marked with a solid blue line and a solid dark blue line, respectively. Feedback equilibrium production for \(N=2\) and \(N=10\) marked with a dashed red line and a dashed dark red line, respectively. Other parameters are given by (51), and \(s=0.25\) (Color figure online)

Nevertheless, there is no such intersection for aggregate production or price, as we can see in Figs. 5 and 6: aggregate production increases with \(N\), while price decreases. So, as the number of firms increases, we have both a decrease of individual production and an increase in aggregate production.

Open loop and feedback equilibrium aggregate production as the number of firms increases. Open-loop equilibrium aggregate production for \(N=2\) and \(N=10\) marked with a solid blue line and a solid dark blue line, respectively. Feedback equilibrium aggregate production for \(N=2\) and \(N=10\) marked with a dashed red line and a dashed dark red line, respectively. Other parameters are given by (51), and \(s=0.25\) (Color figure online)

Open loop and feedback equilibrium price levels as the number of firms increases. Open-loop equilibrium price levels for \(N=4\) and \(N=10\) marked with a solid blue line and a solid dark blue line, respectively. Feedback equilibrium price levels for \(N=4\) and \(N=10\) marked with a dashed red line and a dashed dark red line, respectively. Other parameters are given by (51), and \(s=0.25\) (Color figure online)

Another thing that we can illustrate graphically is the asymptotic behaviour of price and production, presented in Fig. 7, as well as the difference between feedback and open-loop production as a function of the number of firms, presented in Fig. 8.

Dependence of the asymptotic values (as \(t\rightarrow +\infty \)) of the production level (in the left-hand side panel) and the price level (in the right-hand side panel) in the Nash equilibrium on the number of firms \(N\). The open-loop case is marked with a solid blue line, while the feedback case is marked with a red dashed line. Other parameters are given by (51), and \(s=0.25\) (Color figure online)

Dependence of the difference \(q^{{\mathrm {F}},*}-q^{\mathrm {OL},*}\) on the number of firms \(N\) for various values of the price stickiness. Other parameters are given by (51) (Color figure online)

5.2.3 Dependence on the Speed of Adjustment

If we consider growth of \(s\), the steady-state production decreases, while the steady-state price increases, as we can see in Figs. 9 and 10. This relation concerns the whole trajectory of prices. In contrast, Fig. 9 shows that the relation between production levels changes over time: for larger \(s\) the initial growth is faster, then there is an intersection of trajectories, and finally convergence to a lower steady state.

Open loop and feedback equilibria as the speed of adjustment increases. Open-loop equilibrium for \(s=0.25\) and \(s=0.9\) marked with a solid blue line and a solid dark blue line, respectively. Feedback equilibrium for \(s=0.25\) and \(s=0.9\) marked with a dashed red line and a dashed dark red line, respectively. Production levels of the static Cournot equilibrium and the static competitive equilibrium marked with thin dashed lines for comparison. Other parameters are given by (51), and \(N=4\) (Color figure online)

Open loop and feedback equilibrium price levels as the speed of adjustment increases. Open-loop equilibrium for \(s=0.25\) and \(s=0.9\) marked with a solid blue line and a solid dark blue line, respectively. Feedback equilibrium for \(s=0.25\) and \(s=0.9\) marked with a dashed red line and a dashed dark red line, respectively. Prices of the static Cournot equilibrium and the static competitive equilibrium marked with dashed lines for comparison. Other parameters are given by (51), and \(N=4\) (Color figure online)

The steady-state levels converge as \(s\rightarrow \infty \): for the open-loop case to the static Cournot-Nash equilibrium, for the feedback case to a level between the competitive and Cournot-Nash equilibria. Fig. 11 shows how the steady-state production and price depend on price stickiness. Dependence of the steady state on \(s\) can also be read from Fig. 8 in which joint dependence on \(N\) and \(s\) is presented.

Dependence of the asymptotic values (as \(t\rightarrow +\infty \)) of the production level (in the left-hand side panel) and the price level (in the right-hand side panel) in the Nash equilibrium on the price stickiness \(s\). The open-loop case is marked with a solid blue line, while the feedback case is marked with a red dashed line. Other parameters are given by (51), and \(N=4\) (Color figure online)

6 Conclusions

In this paper we study a model of oligopoly with sticky prices, performing a complete analysis of trajectories of production and prices at symmetric open loop and feedback Nash equilibria. We consider not only constant trajectories, resulting from assuming that initial values are steady states of the respective equilibria, but also all admissible Nash equilibrium trajectories. This allows us to compare the two approaches in a way similar to comparisons made in real life, in which it makes sense to compare only trajectories of both kinds of equilibria starting from the same initial value. It also allows us to find interesting properties which cannot be observed when only the steady-state behaviour is analysed, like the intersection of trajectories of production level for various numbers of firms or speeds of adjustment. In both cases, for a larger value of the parameter considered, there is first a faster increase of production and afterwards convergence to a lower steady state.

We also proved, by refining previous results, that the steady state in the open-loop case is, as in the feedback case, globally asymptotically stable.

Notes

Generally, value functions obtained in similar dynamic game theoretic problems may have a point at which they are not differentiable. To solve such problems, there are generalisations of the standard Bellman equation for continuous but nonsmooth value functions, using the viscosity solution approach. This approach was already used in dynamic games with applications in dynamic economic problems, e.g. by Dockner et al. [13], Dockner and Wagener [14] or Rowat [26].

References

[1] Aseev SM, Kryazhimskiy AV (2004) The Pontryagin maximum principle and transversality conditions for a class of optimal control problems with infinite time horizons. SIAM J Control Optim 43:1094–1119

[2] Aseev SM, Kryazhimskiy AV (2008) Shadow prices in infinite-horizon optimal control problems with dominating discounts. Appl Math Comput 204:519–531

[3] Aseev S, Veliov V (2011) Maximum principle for infinite-horizon optimal control problems with dominating discounts. Report 2011–06. Technische Universität Wien

[4] Aseev S, Veliov V (2012) Needle variations in infinite-horizon optimal control. Report 2012–04. Technische Universität Wien

[5] Balder EJ (1983) An existence result for optimal economic growth problems. J Math Anal Appl 95:195–213

[6] Benchekroun H (2003) The closed-loop effect and the profitability of horizontal mergers. Can J Econ 36:546–565

[7] Benchekroun H (2007) A unifying differential game of advertising and promotions. Int Game Theory Rev 9:183–197

[8] Cellini R, Lambertini L (2003) Advertising with spillover effects in a differential oligopoly game with differentiated goods. Cent Eur J Oper Res 11:409–423

[9] Cellini R, Lambertini L (2004) Dynamic oligopoly with sticky prices: closed-loop, feedback and open-loop solutions. J Dyn Control Syst 10:303–314

[10] Cellini R, Lambertini L (2007) A differential oligopoly game with differentiated goods and sticky prices. Eur J Oper Res 176:1131–1144

[11] Chutani A, Sethi SP (2012) Cooperative advertising in a dynamic retail market oligopoly. Dyn Games Appl 2:347–371

[12] Clemhout S, Leitmann G, Wan HY Jr (1971) A differential game model of duopoly. Econometrica 39:911–938

[13] Dockner E, Jørgensen S, Long NV, Sorger G (2000) Differential games in economics and management science. Cambridge University Press, Cambridge

[14] Dockner E, Wagener F (2013) Markov-perfect Nash equilibria in models with a single capital stock. CeNDEF Working Paper 13-03

[15] De Cesare L, Di Liddo A (2001) A Stackelberg game of innovation diffusion: pricing, advertising and subsidy strategies. Int Game Theory Rev 3:325–339

[16] Dockner E, Feichtinger G (1986) Dynamic advertising and pricing in an oligopoly: a Nash equilibrium approach. In: Başar T (ed) Dynamic games and applications in economics. Lecture Notes in Economics and Mathematical Systems, vol 265. Springer, pp 238–251

[17] Dockner E, Gaunersdorfer A (1985) On profitability of horizontal mergers in industries with dynamic competition. Jpn World Econ 13:195–216

[18] Esfahani H (2012) Essays in industrial organization. PhD Thesis, Università di Bologna

[19] Fershtman C, Kamien MI (1987) Dynamic duopolistic competition with sticky prices. Econometrica 55:1151–1164

[20] Fershtman C, Kamien MI (1990) Turnpike properties in a finite-horizon differential game: dynamic duopoly with sticky prices. Int Econ Rev 31:49–60

[21] Halkin H (1974) Necessary conditions for optimal control problems with infinite horizon. Econometrica 42:267–272

[22] Jørgensen S (1986) Optimal dynamic pricing in an oligopolistic market: a survey. In: Başar T (ed) Dynamic games and applications in economics. Lecture Notes in Economics and Mathematical Systems, vol 265. Springer, pp 179–237

[23] Jun B, Vives X (2004) Strategic incentives in dynamic oligopoly. J Econ Theory 116:249–281

[24] Karp LS, Perloff JM (1993) Open-loop and feedback models of dynamic oligopoly. Int J Ind Organ 11:369–389

[25] Michel P (1982) On the transversality condition in infinite horizon optimal problems. Econometrica 50:975–985

[26] Rowat C (2007) Non-linear strategies in a linear quadratic differential game. J Econ Dyn Control 31:3179–3202

[27] Simaan M, Takayama T (1978) Game theory applied to dynamic duopoly with production constraints. Automatica 14:161–166

[28] Tsutsui S, Mino K (1990) Nonlinear strategies in dynamic duopolistic competition with sticky prices. J Econ Theory 52:136–161

[29] Wiszniewska-Matyszkiel A (2008) Dynamic oligopoly as a mixed large game—toy market. In: Neogy SK, Bapat RB, Das AK, Parthasarathy T (eds) Mathematical programming and game theory for decision making, pp 369–390

[30] Zabczyk J (1992) Mathematical control theory: an introduction. Birkhäuser

Acknowledgments

The research of A. Wiszniewska-Matyszkiel was supported by Polish National Science Centre grant 2011/01/D/ST6/06981.

Appendix: Open loop—existence of optimal solution and appropriate necessary conditions for infinite horizon optimal control problem

In this section we formulate the necessary condition, analogous to the core Pontryagin principle for finite time horizon, in the case of infinite time horizon.

We consider an optimal control problem with the state space \(\mathbb {X} \subseteq \mathbb {R}^n\), the set of control parameters \(\mathbb {U}\subseteq \mathbb {R}^m\) and the open-loop information structure, i.e. the set of open-loop control functions \(\mathcal {U}^{\mathrm {OL}}=\{u:\mathbb R_+\rightarrow \mathbb {U} \text { measurable}\}\). As the objective we consider maximisation of

$$\begin{aligned} J_{0,x_0}(u)=\int _0^{\infty }g(t,x(t),u(t)){{\mathrm{e}}}^{-\rho t}dt, \end{aligned}$$

where the trajectory \(x\) is the trajectory corresponding to \(u\) and it is defined by

$$\begin{aligned} \dot{x}(t)=f(t,x(t),u(t)),\quad x(0)=x_0, \end{aligned}$$(53)

the discount rate is \(\rho >0\), and the integration denotes integration with respect to the Lebesgue measure.

We assume a priori that the functions \(g\) and \(f\) are such that the objective function is finite for every \(u\in \mathcal {U}^{\mathrm {OL}}\) and the corresponding trajectory \(x\).

An absolutely continuous function \(x:\mathbb {R}_+\rightarrow \mathbb {X}\) being a solution to the system (53) with \(u\in \mathcal {U}^{\mathrm {OL}}\) is called an admissible trajectory corresponding to \(u\).

We denote this dynamic optimization problem by (P).

In all further results we assume that both sets \(\mathbb {U}\) and \(\mathbb {X}\) are nonempty, \(\mathbb {U}\) is compact, and the functions \(f:\mathbb {R}_+\times \mathbb {X} \times \mathbb {U}~\rightarrow \mathbb {R}^{n}\), and \(g:\mathbb {R}_+\times \mathbb {X} \times \mathbb {U}~\rightarrow \mathbb {R}\) are measurable.

Any pair \((u,x)\), where \(u\) is a control and \(x\) is an admissible trajectory corresponding to it, is called an admissible solution.

A pair \((u^{*},x^{*})\) is called an optimal solution of the problem (P) if it is an admissible solution, and the value of \(J_{0,x_0}(u^*)\) is maximal, that is \(J_{0,x_0}(u)\le J_{0,x_0}(u^*)\) for every admissible solution \((u,x)\).

1.1 Aseev and Veliov Extension of the Pontryagin Maximum Principle

Here we cite the Pontryagin maximum principle for the problem (P), which is an infinite horizon, nonautonomous, discounted dynamic optimization problem. As it has been mentioned before (see Sect. 3), the maximum principle, especially the terminal condition \(\lim _{t\rightarrow \infty }\lambda (t){{\mathrm{e}}}^{-\rho t}=0\), is not a necessary condition in such a problem.

Results applicable in this paper were proved by Aseev and Veliov [3, 4]. First, we formulate four suitable assumptions. Consider the dynamic optimization problem (P) and let \((u^*,x^*)\) be an optimal solution to it.

-

(1)

The functions \(f\) and \(g\) and their partial derivatives with respect to \(x\) are continuous in \((x,u)\) for every fixed \(t\) and uniformly bounded as functions of \(t\) over every bounded set of \((x,u)\).

-

(2)

There exist numbers \(\mu ,r ,\kappa ,c_1\ge 0\) and \(\beta >0\) such that for every \(t\ge 0\)

-

(i)

\(\Vert x^*(t)\Vert \le c_1 {{\mathrm{e}}}^{\mu t}\) and

-

(ii)

for every control \(u\) for which the Lebesgue measure of \(\{t:u(t)\ne u^*(t)\}\) is at most \(\beta \), the corresponding trajectory \(x\) exists on \(\mathbb {R}_+\) and \(\Vert \frac{\partial g(t,y,u^*(t))}{\partial x}\Vert \le \kappa (1+\Vert y \Vert ^r)\) for every \(y\in \mathrm {conv}\{x(t),x^*(t)\}\), where \(\mathrm {conv}\) denotes the convex hull.

-

(3)

There are numbers \(\eta \in \mathbb {R}, \gamma >0\) and \(c_2\ge 0\) such that for every \(\zeta \in \mathbb {X}\) with \(\Vert \zeta -x_0\Vert <\gamma \) Eq. (53) with initial condition replaced by \(x(0)=\zeta \) has a solution \(x^{\zeta }\) defined on \(\mathbb {R}_+\), such that \(x^{\zeta }(t)\in \mathbb {X}\), for all \(t\ge 0\), and

$$\begin{aligned} \Vert x^{\zeta }(t)-x^*(t)\Vert \le c_2\Vert \zeta -x_0\Vert {{\mathrm{e}}}^{\eta t}. \end{aligned}$$ -

(4)

\(\rho >\eta +r \max \{ \eta ,\mu \}\) for \(r,\eta , \mu \) from (2) and (3).

The formulation of necessary conditions uses a Hamiltonian function \(H\) and an adjoint variable \(\psi \).

Definition 1

The Hamiltonian is a function \(H:\mathbb {R}_+\times \mathbb {X} \times \mathbb {U}\times \mathbb {R}^{n}\rightarrow \mathbb {R}\) such that

where \(\langle \cdot ,\cdot \rangle \) denotes the inner product in \(\mathbb {R}^n\).

Definition 2

For an admissible solution \((u^*,x^*)\) an absolutely continuous function \(\psi : \mathbb {R}_+\rightarrow \mathbb {R}^n\) is called an adjoint (or costate) variable corresponding to \((x^{*},u^{*})\), if it is a solution to the following system

Definition 3

We say that an admissible pair \((x^{*},u^{*})\) together with an adjoint variable \(\psi ^*\) corresponding to \((x^{*},u^{*})\), satisfy the core relations of the normal-form Pontryagin maximum principle for the problem (P), if the following maximum condition holds on \([0,+\infty )\)

Let us turn to the main part of this section: the formulation of the Pontryagin maximum principle for the infinite time horizon.

Theorem 5

(Aseev-Veliov maximum principle) Suppose that the conditions (1)–(4) are satisfied and \((x^{*},u^{*})\) is an optimal solution for problem (P). Then there exists an adjoint variable \(\psi ^*\) corresponding to \((x^{*},u^{*})\) such that

-

(i)

\((x^{*},u^{*})\), together with \(\psi ^*\) satisfy the core relations of the normal-form Pontryagin maximum principle,

-

(ii)

for every \(t\ge 0\) the integral

$$\begin{aligned} I^{*}(t)=\int \limits _{t}^{\infty }e^{-\rho w}\left[ Z_{(x^{*},u^{*})}(w)\right] ^{-1}\frac{\partial g(w,x^{*}(w),u^{*}(w))}{\partial x}dw, \end{aligned}$$where \(Z_{(x^{*},u^{*})}(t)\) is the normalised fundamental matrix of the following linear system

$$\begin{aligned} \dot{z}(t)=-\frac{\partial f(t,x^{*}(t),u^{*}(t))}{\partial x}z(t), \end{aligned}$$converges absolutely and

-

(iii)

\(\psi ^*(t)=Z_{(x^{*},u^{*})}(t)I^{*}(t)\).

1.2 Existence of Optimal Solution

We use the existence theorem of Balder [5, Theorem 3.6], which we cite in a simplified form, suiting our model in which both state and control variables sets are constant, not coupled, and the initial condition is fixed.

-

(A)

For all \(t\ge 0\) \(f(t,\cdot ,\cdot )\) is continuous, \(g(t,\cdot ,\cdot )\) is upper semicontinuous with respect to \((x,u)\) and the sets \(\mathbb {X}\) and \(\mathbb {U}\) are closed.

-

(B)

For all \(x \in \mathbb {X}\), and \(t\in \mathbb {R}^+\), the set

$$\begin{aligned} Q(t,x)=\{(z_{0},z)\in \mathbb {R}^{n+1}:z_{0}\le g(t,x,u){{\mathrm{e}}}^{-\rho t}, z=f(t,x,u), u\in \mathbb {U}\} \end{aligned}$$-

(a)

is convex, and

-

(b)

\(\displaystyle Q(t,x)=\bigcap _{\delta >0} \mathrm {cl}\biggl (\bigcup _{|x-y|\le \delta } Q(t,y)\biggr )\), where \(\mathrm {cl}(Q)\) is a closure of the set \(Q\).

-

(C)

There exists a constant \(\alpha \in \mathbb {R}\) such that the set of admissible pairs

$$\begin{aligned} \Omega _{\alpha }=\left\{ (x,u) \text { admissible pair}: J_{0,x_0}(u)\ge \alpha \right\} \end{aligned}$$ is nonempty, \(\displaystyle \{f(\cdot ,x(\cdot ),u(\cdot ))|_{[0,T]}:(x,u)\in \Omega _{\alpha } \}\) is uniformly integrable for each \(T\ge 0\), and

$$\begin{aligned} G=\{g^+(\cdot ,x(\cdot ),u(\cdot )){{\mathrm{e}}}^{-\rho \cdot }:(x,u)\in \Omega _{\alpha } \} \end{aligned}$$ (where \(g^+\) denotes \(\max \{0,g\}\)) is strongly uniformly integrable, that is, for every \(\epsilon >0\) there exists \(h\in L_1^+(\mathbb {R}_+)\) such that \(\displaystyle \sup _{\zeta \in G}\int _{\{t:|\zeta (t)|\ge h(t)\}}|\zeta (t)|dt\le \epsilon \).

Theorem 6

(Balder) If conditions (A)–(C) are fulfilled, then there exists an optimal pair \((x^{*},u^{*})\) for the problem (P).

1.3 Checking Assumptions for Theorem 5 for the Model Described in Sect. 2

Before checking the assumptions of Theorems 5 and 6, we want to be able to restrict sets of control and state variables.

The set of control variables is unbounded. However, it is convenient to have bounded prices.

Proposition 1

The set of Nash equilibria remains unchanged if we consider the model with sets of players’ control parameters of the form \([0,q_{\max }]\) and set of possible prices \([0,p_{\max }]\), \((0,p_{\max })\) or \([c,p_{\max }]\) for sufficiently large \(q_{\max {}}\) and \(p_{\max {}}\).

Proof

If we assume that prices are nonnegative and bounded from above by some \(p_{\max }\), the problem of optimization of the payoff of player \(i\) has the same solutions as the problem in which the set of controls of player \(i\) is \([0,q_{\max }]\) for some large \(q_{\max }\), since for \(q_i\rightarrow \infty \) the instantaneous payoff is negative, while for \(0\) it is \(0\), due to the form of payoff functional (2).

The set of prices, which without any prior constraints equals \(\mathbb {R}\), can obviously be replaced by \((-\infty ,p_{\max }]\), since for \(p_0>A\) every admissible trajectory is contained in \((-\infty ,p_0]\), due to (1).

The next step is to restrict the set of admissible trajectories to \([c,p_{\max }]\), which implies the remaining two. Although Eq. (1) of price stickiness, written as it is, can lead even to negative prices, prices below \(c\) can never occur at equilibrium.

Indeed, let us assume that the price at some time instant \(t\) is below \(c\). Let us denote by \(\bar{t}\) a time instant before \(t\) at which the price is \(c\) and such that in the time interval \([\bar{t},t]\), \(p\) is not greater than \(c\). Then the optimal strategy of every player is \(0\) at a.e. point in \([\bar{t}, t ]\), due to (2). This implies that \(\dot{p}>0\) on this interval; therefore, values below \(c\) cannot be reached. \(\square \)
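The first step of the proof rests on the sign of the instantaneous payoff \((p-c)q_i-\frac{q_i^2}{2}\) for large \(q_i\). The following minimal sketch illustrates that step only (it is a static check, not the full dynamic argument). The numerical values of `c` and `p_max` and the names `instantaneous_payoff` and `q_bar` are hypothetical and chosen for illustration.

```python
def instantaneous_payoff(p, q, c):
    """Instantaneous payoff (p - c) * q - q**2 / 2 of a single player."""
    return (p - c) * q - q * q / 2.0

# Hypothetical parameter values, not taken from the paper.
c = 2.0        # marginal cost
p_max = 10.0   # assumed upper bound on prices

# For q > 0 the payoff is negative exactly when q > 2 * (p - c).
# Hence any output above q_bar gives a negative payoff for every p <= p_max.
q_bar = 2.0 * (p_max - c)

prices = [c + k * (p_max - c) / 10.0 for k in range(11)]
payoffs_above_bound = [instantaneous_payoff(p, q_bar + 0.1, c) for p in prices]
payoffs_at_zero = [instantaneous_payoff(p, 0.0, c) for p in prices]

print("largest payoff just above q_bar:", max(payoffs_above_bound))
print("payoff at zero output:", payoffs_at_zero[0])
```

Under these assumptions any \(q_{\max }\ge 2(p_{\max }-c)\) is large enough for this particular comparison, since zero output always yields payoff \(0\).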

Let us consider the optimization problem of player \(i\) given open-loop strategies \(q_j\) of the remaining players, with \(q_i(t),q_j(t)\in [0,q_{\max }]\). We check whether the conditions (1)–(3) which are given in section “Aseev and Veliov extension of the Pontryagin Maximum Principle” are satisfied.

-

(I)

Here \(g(t,p,q_i)=(p-c)q_i-\frac{q_i^2}{2}\) and \(f(t,p,q_i)=s\Bigl (A-p-q_i-\sum \limits _{j\ne i}q_j(t)\Bigr )\). For all \(t\), both functions and their derivatives with respect to \(p\) are continuous in \((p,q_i)\). The function \(g\) is independent of time and therefore uniformly bounded, while uniform boundedness of \(f\) follows from the fact that \(q_j(t)\) is uniformly bounded.

-

(II)

-

(i)

Since \(\Vert p \Vert \le p_{\max }\), \(c_1=p_{\max }, \mu =0\);

-

(ii)

For every control \(q_i\) the corresponding trajectory exists on \(\mathbb {R}_+\) and \(\Vert \frac{\partial g(t,p,q_i(t))}{\partial p}\Vert \!=\!\Vert q_i(t)\Vert \le q_{\max }(1+\Vert y\Vert )^0\) for every \(y\); therefore, \(\kappa \!=\!q_{\max }, r\!=\!0\).

-

(III)

It is easy to calculate

$$\begin{aligned} \Vert p^\zeta (t)-p^*(t)\Vert&= \Vert \zeta {{\mathrm{e}}}^{-s t} +{{\mathrm{e}}}^{-s t}\int _0^t {{\mathrm{e}}}^{sw}u^*(w)dw-(p_0 {{\mathrm{e}}}^{-s t} \\&+{{\mathrm{e}}}^{-s t}\int _0^t {{\mathrm{e}}}^{sw}u^*(w)dw)\Vert \\&= \Vert \zeta -p_0\Vert {{\mathrm{e}}}^{-st}, \end{aligned}$$therefore \(c_2=1, \eta =-s\) and \(\gamma \) is arbitrary.

-

(IV)

The condition \(\rho >\eta +r\max \{\eta ,\mu \}\) for our constants reduces to \(\rho >-s\), which is obviously fulfilled.
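The estimate in (III) can be checked numerically. The sketch below integrates the price equation \(\dot{p}=s\bigl (A-p-Q(t)\bigr )\) from two initial prices under the same total output \(Q(t)\) and compares the gap with \(\Vert \zeta -p_0\Vert {{\mathrm{e}}}^{-st}\). The parameter values, the output path `total_output` and the helper `simulate_price` are assumptions made for illustration only.

```python
import math

def simulate_price(p_init, total_output, s, A, t_end, n_steps):
    """Integrate dp/dt = s * (A - p - total_output(t)) with classical RK4."""
    dt = t_end / n_steps
    t, p = 0.0, p_init

    def rhs(time, price):
        return s * (A - price - total_output(time))

    for _ in range(n_steps):
        k1 = rhs(t, p)
        k2 = rhs(t + dt / 2, p + dt * k1 / 2)
        k3 = rhs(t + dt / 2, p + dt * k2 / 2)
        k4 = rhs(t + dt, p + dt * k3)
        p += dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t += dt
    return p

# Hypothetical values, not taken from the paper.
s, A = 0.5, 10.0
total_output = lambda t: 2.0 + math.sin(t)   # arbitrary bounded output path
p0, zeta, T = 3.0, 4.5, 6.0

gap = abs(simulate_price(zeta, total_output, s, A, T, 6000)
          - simulate_price(p0, total_output, s, A, T, 6000))
predicted = abs(zeta - p0) * math.exp(-s * T)

print("simulated gap:", gap)
print("predicted gap:", predicted)
```

Because the equation is linear in \(p\), the control term cancels in the difference of the two trajectories, which is why the gap depends only on \(\zeta -p_0\) and \(s\).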

1.4 Checking Assumptions for Theorem 6 for the Model Described in Sect. 2

-

(a)

This assumption is obviously fulfilled.

-

(b)

The set \(Q(t,p)=\Big \{(z_{0},z)\in \mathbb {R}^{2}:z_{0}\le q_{i}\cdot \left[ p-c-\frac{1}{2}q_{i}\right] \), \(z=s\cdot [A-p-Nq_{i}]\), \(q_{i} \in [0,q_{\max }]\Big \}\). It is convex and \(Q(t,p)=\bigcap _{\delta >0} \mathrm {cl} \bigcup _{|p-y|\le \delta } Q(t,y).\)

-

(c)

We take \(\alpha =0\), and \(\Omega _0=\left\{ (p,q_i):p \text { corresponding to } q_i,\ J^i_{0,x_0}(q_i)\ge 0\right\} \) is nonempty since \(q_i\equiv 0\) is always available. For every admissible trajectory \(\dot{p}(t)\in [s(A-p-Nq_{\max }),s(A-p)]\), therefore, \(p(t)\in [p_0+c_1 {{\mathrm{e}}}^{-st}, p_0+c_2 {{\mathrm{e}}}^{-st}]\). Besides, \(q_i \in [0,q_{\max }]\). Both \(p\) and \(q_i\) are measurable. This implies that \(f(\cdot ,p(\cdot ),q_i(\cdot ))\) for all \((p,q_i)\) is uniformly integrable on every finite interval. Similarly, \(g^+(t,p(t),q_i(t)){{\mathrm{e}}}^{-\rho t}\in [0,pq_{\max }{{\mathrm{e}}}^{-\rho t}]\), together with measurability of the functions \(p,q_i\) and \({{\mathrm{e}}}^{-\rho t}\), implies strong uniform integrability of \(G\).

1.5 Technical Lemma Required to Prove Uniqueness of Open Loop Nash Equilibrium

Lemma 8

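Let \(b:\mathbb {R}\rightarrow (0,+\infty )\) be a continuous bounded function, and let \(\sigma >0\), \(s>0\) be given constants. Then the equation

$$\begin{aligned} \dot{x}(t)={\left\{ \begin{array}{ll} \sigma x(t), &{} \text {if } s x(t)\ge b(t),\\ (\sigma +s)x(t)-b(t), &{} \text {if } s x(t)<b(t), \end{array}\right. } \end{aligned}$$(56)

has at most one solution that is bounded as \(t\rightarrow +\infty \). Moreover, if a solution to (56) is unbounded as \(t\rightarrow +\infty \), then it is either negative or \(x(t)\sim C {{\mathrm{e}}}^{\sigma t}\) for some constant \(C>0\).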

Proof

Note first that any bounded solution \(x_b\) to (56) is positive. Indeed, if for some \(\bar{t}\) we have \(x_b(\bar{t})\le 0\), then due to positivity of \(b\) we have \(x_b(\bar{t}+\varepsilon )<0\) and \(x_b(t) \le x_b(\bar{t}+\varepsilon ) \exp \Bigl ((\sigma +s)(t-\bar{t} -\varepsilon )\Bigr )\), which converges to \(-\infty \).

Assume that \(x_b\) is a solution to (56), bounded for \(t\rightarrow +\infty \). Let \(x(t)\) be an arbitrary solution to (56) different from \(x_b(t)\). Due to the fact that solution to (56) for a given initial data \(x(t_0)=x_0\) is unique, we can have two possibilities. Either \(x(t)>x_b(t)\) for all \(t\ge 0\) or \(x(t)<x_b(t)\) for all \(t\). Consider the first case and denote \(w(t) = x(t)-x_b(t)>0\). If \(s x(t) > sx_b(t) \ge b(t)\), then \(\dot{w}(t) = \sigma w(t)\). On the other hand, if \(b(t)>s x(t) > sx_b(t)\), then \(\dot{w}(t) = (\sigma +s)w(t) > \sigma w(t)\). The last possible case is \(s x(t) \ge b(t) > s x_b(t)\). We have here \(\dot{w}(t) = \sigma w(t) - s x_b(t) + b(t)> \sigma w(t)\). Therefore,

$$\begin{aligned} \dot{w}(t)\ge \sigma w(t)\quad \text {and hence}\quad w(t)\ge w(0){{\mathrm{e}}}^{\sigma t}. \end{aligned}$$

This proves that \(x(t)\) is unbounded. Since \(b\) is bounded, this means that \(s x(t)>b(t)\) for all \(t>\tilde{t}\), for some \(\tilde{t}\), and this yields the assertion on the asymptotic behaviour of \(x\).

The case \(x(t)<x_b(t)\) is proved by analogous argument. \(\square \)
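The mechanism of the proof can be illustrated numerically. The sketch below assumes the right-hand side suggested by the case analysis above, namely \(\dot{x}=\sigma x\) when \(sx\ge b(t)\) and \(\dot{x}=(\sigma +s)x-b(t)\) otherwise, and integrates two solutions with different initial values. The function \(b\), the constants and the helper names `rhs` and `rk4` are hypothetical choices made for illustration, not taken from the paper.

```python
import math

def rhs(t, x, sigma, s, b):
    """Right-hand side inferred from the cases in the proof (an assumption)."""
    if s * x >= b(t):
        return sigma * x
    return (sigma + s) * x - b(t)

def rk4(f, x_init, t_end, n_steps):
    """Classical fourth-order Runge-Kutta integration of dx/dt = f(t, x)."""
    dt = t_end / n_steps
    t, x = 0.0, x_init
    for _ in range(n_steps):
        k1 = f(t, x)
        k2 = f(t + dt / 2, x + dt * k1 / 2)
        k3 = f(t + dt / 2, x + dt * k2 / 2)
        k4 = f(t + dt, x + dt * k3)
        x += dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6
        t += dt
    return x

# Hypothetical data: b is continuous, positive and bounded.
sigma, s = 0.1, 0.5
b = lambda t: 1.0 + 0.5 * math.sin(t)
f = lambda t, x: rhs(t, x, sigma, s, b)

T, n = 10.0, 10000
x_high0, x_low0 = 5.0, 0.5          # two different initial values
x_high = rk4(f, x_high0, T, n)
x_low = rk4(f, x_low0, T, n)

gap_T = x_high - x_low
lower_bound = (x_high0 - x_low0) * math.exp(sigma * T)

print("x_high(T) =", x_high, " x_low(T) =", x_low)
print("gap at T  =", gap_T, " lower bound w(0) e^{sigma T} =", lower_bound)
```

With these values the upper solution stays in the regime \(sx>b(t)\) and grows like \(5{{\mathrm{e}}}^{\sigma t}\), the lower one becomes negative, and the gap between them exceeds \(w(0){{\mathrm{e}}}^{\sigma T}\), in line with the two alternatives for unbounded solutions in Lemma 8.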

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

About this article

Cite this article

Wiszniewska-Matyszkiel, A., Bodnar, M. & Mirota, F. Dynamic Oligopoly with Sticky Prices: Off-Steady-state Analysis. Dyn Games Appl 5, 568–598 (2015). https://doi.org/10.1007/s13235-014-0125-z
