Abstract

The pollution of groundwater, essential for supporting populations and agriculture, can have catastrophic consequences. Thus, accurate modeling of water pollution at the surface and in groundwater aquifers is vital. Here, we consider a density-driven groundwater flow problem with uncertain porosity and permeability. Addressing this problem is relevant for geothermal reservoir simulations, natural saline-disposal basins, modeling of contaminant plumes and subsurface flow predictions. This strongly nonlinear time-dependent problem describes the convection of a two-phase flow, whereby a liquid flows and propagates into groundwater reservoirs under the force of gravity to form so-called “fingers”. To achieve an accurate numerical solution, fine spatial resolution with an unstructured mesh and, therefore, high computational resources are required. Here we run a parallelized simulation toolbox ug4 with a geometric multigrid solver on a parallel cluster, and the parallelization is carried out in physical and stochastic spaces. Additionally, we demonstrate how the ug4 toolbox can be run in a black-box fashion for testing different scenarios in the density-driven flow. As a benchmark, we solve the Elder-like problem in a 3D domain. For approximations in the stochastic space, we use the generalized polynomial chaos expansion. We compute the mean, variance, and exceedance probabilities for the mass fraction. We use the solution obtained from the quasi-Monte Carlo method as a reference solution.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Motivation

Accurate prediction of the movement of contamination in groundwater is essential. Certain pollutants are soluble in water and can leak into groundwater systems, such as seawater into coastal aquifers or wastewater leaks. Indeed, some pollutants can change the density of a fluid and induce density-driven flows within the aquifer. This causes faster propagation of the contamination due to convection. Thus, simulation and analysis of this density-driven flow play an important role in predicting how pollution can migrate through an aquifer (Kobus 2000; Fan et al. 1997).

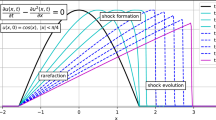

In contrast to the transport of pollution by molecular diffusion, convection due to density-driven flow is an unstable process that can undergo quite complicated patterns of distribution. The Elder problem is a simplified but comprehensive model that describes the intrusion of salt water from a top boundary into an aquifer (Elder 1967a, b; Voss and Souza 1987; Elder et al. 2017). The evolution of the concentration profile is typically referred to as fingering. Note that due to the nonlinear nature of the model, the distribution of the contamination strongly depends on the model parameters, so that the system may have several stationary solutions (Johannsen 2003).

Hydrogeological formations typically have a complicated and heterogeneous structure, and geological media may consist of layers of porous media with different porosities and permeability coefficients (Schneider et al. 2012; Reiter et al. 2017). Difficulty in specifying hydrogeological parameters of the media, as well as measuring the position and configuration of the layers, gives rise to certain errors. Typically, the averaged values of these quantities are used. However, due to the nonlinearities of the flow model, averaging of the parameters does not necessarily lead to the correct mathematical solution. Uncertainties arise from various factors, such as inaccurate measurements of the different parameters and the inability to measure parameters at each spatial location at any given time. To model the uncertainties, we can use random variables, random fields, and random processes. However, uncertainties in the input data spread through the model and make the solution uncertain too. Thus, an accurate estimation of the output uncertainties is crucial.

Many techniques are used to investigate the propagation of uncertainties associated with porosity and permeability into the solution, such as classical Monte Carlo (MC) sampling. Although MC sampling is dimension-independent, it converges slowly and therefore requires a lot of samples, and these simulations may be time-consuming and costly. Alternative, less costly techniques use collocation, sparse grid, and surrogate methods, each with their advantages and disadvantages. But even advanced techniques may require hundreds to thousands of simulations and assume a certain smoothness in the quantity of interest. Our simulations contain up to 4.5 million spatial mesh points and 1000–3000 time-steps and are therefore run on a massively parallel cluster. Here, we develop methods that require much fewer simulations, from a few dozen to a few hundred.

Perturbation methods, which expand the quantity of interest (QoI) with respect to random parameters in a Taylor series, were considered and compared in Cremer and Graf (2015).

Here, we use the well-established generalized polynomial chaos expansion (gPC ) technique (Xiu and Karniadakis 2002b), where we compute the gPC coefficients by applying a Clenshaw-Curtis quadrature rule (Barthelmann et al. 2000). We use gPC to compute QoIs such as the mean, the variance, and the exceedance probabilities for the mass fraction (in this case, a saltwater solution). We validate our obtained results using the quasi-Monte Carlo (qMC ) approach. Both methods require the computation of multiple simulations (scenarios) for variable porosity and permeability coefficients.

To the best of our knowledge, no other reported works have solved the Elder problem (Voss and Souza 1987; Elder et al. 2017) with uncertain porosity and permeability parameters using gPC .

Fingering in a highly unstable free convective flow has been studied in Xie et al. (2012). Overviews of the uncertainties in modeling groundwater solute transport (Carrera 1993) and modeling soil processes (Vereecken et al. 2016) have been performed, as well as reconnecting stochastic methods with hydrogeological applications (Bode et al. 2018), which included recommendations for optimization and risk assessment. Fundamentals of stochastic hydrogeology, an overview of stochastic tools and accounting for uncertainty have been described in Rubin (2003).

Recent advances in uncertainty quantification, probabilistic risk assessment, and decision-making under uncertainty in hydrogeologic applications have been reviewed in Tartakovsky (2013), where the author reviewed probabilistic risk assessment methods in hydrogeology under parametric, geologic, and model uncertainties. Density-driven vertical transport of saltwater through the freshwater lens has been modeled in Post and Houben (2017).

Various methods can be used to compute the desired statistics, such as direct integration methods (Monte Carlo, quasi Monte Carlo, collocation) and surrogate-based methods (generalized polynomial chaos approximation, stochastic Galerkin) (Espig et al. 2014; Matthies and Keese 2005b; Babuška et al. 2004). Direct integration methods compute statistics by sampling unknown input coefficients (they are undefined and therefore uncertain) and solving the corresponding PDE, while the surrogate-based method computes a low-cost functional (polynomial, exponential, trigonometric) approximation of QoI. Examples of surrogate-based methods include radial basis functions (Liu and Görtz 2014; Bompard et al. 2010; Loeven et al. 2007; Giunta et al. 2004), sparse polynomials (Chkifa et al. 2015; Blatman and Sudret 2010), polynomial chaos expansion (Xiu and Karniadakis 2003; Najm 2009; Conrad and Marzouk 2013; Xiu 2009; Ghili and Iaccarino 2017) and low-rank tensor approximation (Dolgov et al. 2015; Kim et al. 2006; Dolgov et al. 2014; Litvinenko et al. 2013; Litvinenko and Matthies 2011). A nonintrusive stochastic Galerkin method was introduced in Giraldi et al. (2014). The surrogate-based methods have an additional advantage if gradients are available (Liu et al. 2017). Sparse grid methods to integrate high-dimensional integrals are considered in Smolyak (1963); Bungartz and Griebel (2004); Griebel (2006); Klimke (2008); Novak and Ritter (1997, 1996); Gerstner and Griebel (1998); Novak and Ritter (1999); Conrad and Marzouk (2013); Constantine et al. (2012); Ghili and Iaccarino (2017). A sparse grid package is available in https://sparsegrids.org/software/.

The quantification of uncertainties in stochastic PDEs can pose a great challenge due to the possibly large number of random variables involved, and the high cost of each deterministic solution. For problems with a large number of random variables, the methods based on a regular grid (in stochastic space) are less preferable due to the high computational cost. Instead, methods based on a scattered sampling scheme (MC, qMC) give more freedom in choosing the sample number N and protect against sample failures (i.e., when the numerical code crashes for a given sample point). The MC quadrature and its variance-reduced variants have dimension-independent convergence with rate \(\mathcal {O}(N^{-\frac{1}{2}})\), and qMC has the worst case convergence rate \(\mathcal {O}(\log ^M(N)N^{-1})\), where M is the dimension of the stochastic space (Matthies 2007). Collocations on sparse or full grids are affected by the dimension of the integration domain (Babuška et al. 2007; Nobile et al. 2015, 2008). In this work, we use the Halton rule to generate quasi-Monte Carlo quadrature points (Caflisch 1998; Joe and Kuo 2008). A numerical comparison of other qMC sequences has been described in Radović et al. (1996).

The construction of a gPC -based surrogate model Matthies (2007) is similar to computing a Fourier expansion, where only the Fourier coefficients need to be computed. Some well-known polynomials, such as multivariate Legendre, Hermite, Chebyshev, and Laguerre functions, are commonly used as a basis Xiu and Karniadakis (2002b). These expansion functions should possess some useful properties, e.g., orthogonality. Usually, a small number of quadrature points are used to compute these coefficients. Once the surrogate is constructed, its sampling can be performed at a lower cost than a sampling of the original stochastic PDE.

To tackle the high numerical complexity, we implement a two-level parallelization. First, we run all available quadrature or sampling points (also referred to as scenarios) in parallel, with each scenario on a separate computing node. Second, each scenario is itself computed in parallel on 2–32 cores.

This work is structured as follows: In Sect. 2, we outline a model of density-driven groundwater flow in porous media and the numerical methods for this type of problem. We describe the stochastic modeling, integration methods, and the generalized polynomial chaos expansion technique in Sect. 3. We present details of the parallelization of the computations in Sect. 4. Our multiple numerical results, described in Sect. 5, demonstrate the practical performance of the parallelized solver for Elder-type problems with uncertain coefficients in 3D computational domains. We conclude this work with a discussion in Sect. 6.

1.1 Our contribution

We applied the gPC expansion to approximate the solution of the density driven flow, which is modeled by a time-dependent, nonlinear, second order differential equation. We estimated propagation of input uncertainties in the porosity and permeability into the mass fraction.

2 Problem setup

2.1 Density-driven groundwater flow

Groundwater in a porous medium such as an aquifer is subject to gravity, and when there is any spatial variation in groundwater density, a flow will be induced. In this work, we consider an intrusion of saltwater into the aquifer and the dependence of density on the mass fraction of the dissolved salt. Traditional approaches for modelling of this system are described in Bear (1972, 1979), Bear and Bachmat (1990), Diersch and Kolditz (2002), Post and Houben (2017). In this section, we briefly review the model that we employ.

We consider a domain \(\mathcal {D}\subset \mathbb {R}^d\), \(d=3\) that consists of two phases: an immobile solid porous matrix with a mobile liquid within its pores. We denote the porosity of the matrix by \(\phi : \mathcal {D}\rightarrow \mathbb {R}\) and its permeability by \({\mathbf {K}}: \mathcal {D}\rightarrow \mathbb {R}^{d \times d}\). The liquid is a brine solution of NaCl in water. The mass fraction of the brine (saturated saltwater solution) in the liquid phase is \(c(t, \mathbf {x}): [0, +\infty ) \times \mathcal {D}\rightarrow [0, 1]\). The density of the liquid phase is \(\rho = \rho (c)\) and its viscosity \(\mu = \mu (c)\).

The motion of the liquid phase through the solid matrix is characterized by the Darcy velocity \({\mathbf {q}}(t, \mathbf {x}): [0, +\infty ) \times \mathcal {D}\rightarrow \mathbb {R}^d\). Mass conservation of the liquid phase and the salt can be written as

where the tensor field \({\mathbf {D}}\) represents molecular diffusion and mechanical dispersion of the salt. For the velocity \({\mathbf {q}}\), Darcy’s law is used:

Here, \(p= p(t, \mathbf {x}): [0, +\infty ) \times \mathcal {D}\rightarrow \mathbb {R}\) is the hydrodynamic pressure and \({\mathbf {g}}= (0, \dots , - g)^T \in \mathbb {R}^d\) the acceleration due to gravity.

In this work, we consider a special case of this general model. We assume that the porous medium is isotropic and characterized by the scalar permeability K:

For the density, we set

where \(\rho _0\) and \(\rho _1\) are the densities of pure water and the brine, respectively. Note that \(c\in [0, 1]\), where \(c= 0\) corresponds to pure water and \(c= 1\) to the fully saturated solution. The viscosity is assumed to be constant. Finally, dispersion is neglected:

where \(D_m\) is the molecular diffusion coefficient.

Fields \(\phi (\mathbf {x})\) and \(K(\mathbf {x})\) will depend on the stochastic variables. Values of the other parameters used in this work are presented in Table 1.

In our numerical experiments, variants of the Elder problem (Voss and Souza 1987; Elder et al. 2017) are considered. In the Elder problem, a concentrated solution intrudes through the upper boundary of an aquifer filled with pure water. Due to the difference in densities of the water and the brine, a complicated, unstable flow arises. This leads to a specific distribution pattern of the intruding solution known as “fingering” (Johannsen 2003). The time evolution of the movement of the brine and the pressure in (1)–(3) is determined by the initial conditions of \(c\). Note that the system (1)–(3) is nonlinear, and the model may have several stationary states (Johannsen 2003). Any changes in the porosity and permeability fields may have a substantial effect on the solution, i.e., the same initial and boundary data may correspond to fundamentally different asymptotic behaviors. The influence of this factor can be estimated by applying the stochastic model.

In Litvinenko et al. (2019) we considered the same problem with a two-dimensional reservoir, and in this work we consider two three-dimensional reservoirs: a rectangular parallelepiped \(\mathcal {D}= [0,600] \times [0,600] \times [0,150]\) \(\mathrm {m}^3\) (Fig. 1a) and an elliptic cylinder (Fig. 1a) with \(x\in [-300,300]\) (the major axis), \(y\in [-150,150]\) (the minor axis) and \(z\in [-150,0]\) (the depth). The cylindrical domain is considered to investigate the influence of the shape on “fingers”. We impose the Dirichlet boundary condition \(c= 1\) on the red area on the top of \(\mathcal {D}\) (\(z = 0\)), and \(c= 0\) on the green area on the top and on the other boundaries. For Eq. (1), we impose no-flux boundary conditions on the bottom and the vertical walls. The boundary conditions for p differ for both geometries; for the parallelepiped, we impose the no-flux boundary conditions on all of the boundaries. However, to fix p, we set \(p = 0\) on the edges between the green and the blue faces. For the cylinder, we set \(p = 0\) on the whole top boundary.

For the parallelepiped, we try to mimic the standard 2D Elder problem that closes the upper boundary. In this case, if the pressure is not fixed at any point, it is determined up to a constant. We fix it and preserve the symmetry of the solution. For the cylinder, for which the setting is unsymmetric anyway, we model a different situation: We impose the “atmospheric pressure” on the top, i.e., assume that the top is the surface of the Earth. Note that these differences are not essential for numerical procedures.

For the spatial discretization of (1)–(3), we use a vertex-centered finite volume (FV) scheme. Degrees of freedom (DoFs) for \(c\) and p are located in the grid nodes of the primary mesh. The domain in Fig. 1a is covered by a grid of 524, 288 hexahedra, so that the total number of the DoFs is 1, 098, 306. For the domain in Fig. 1b, we use an unstructured mesh of 241, 152 tetrahedra with 89, 586 DoFs.

The resolution of the spatial and temporal discretizations play an important role in the accuracy of the simulations. The distribution of the hydrogeological parameters of the porous medium should be represented correctly. Moreover, smoothing the flow on a coarse grid due to numerical diffusion can lead to a loss of some phenomena and therefore reduce the accuracy of the uncertainty quantification. To this end, we tested the grid convergence of the simulations with respect to the spatial mesh. We ran our simulation on the twice regularly refined spatial mesh for geometry from Fig. 1b. The domain consisted of 15, 433, 728 cells and has 2, 822, 552 DoFs. The obtained solution was similar to the solution, computed on the coarser mesh mentioned above. Thus, further refinement essentially does not change the behaviour of the solution.

2.2 Stochastic modeling of porosity, permeability and mass fraction

In the model (1)–(3), there are two essential hydrogeological parameters, the porosity \(\phi \) and the permeability \({\mathbf {K}}\), that depend on the spatial coordinates. In general, \(\phi \) and \({\mathbf {K}}\) are completely unknown or known (measured) only in a few locations. These coefficients could be very complicated (discontinuous, jumping, multiscale).

One of the approaches to deal with such unknown or incomplete data is to extrapolate known measurements to the whole domain (Nowak and Litvinenko 2013). Another approach is to use random fields for modeling \(\phi \) and \({\mathbf {K}}\) (Ghanem and Spanos 1991; Keese and Mattthies 2005). The Karhunen–Loève expansion (KLE) is used to approximate these fields. In the KLE approach it is assumed that the covariance matrices of \(\phi \) and \({\mathbf {K}}\) are given. Or, alternatively, these covariance matrices could be estimated from the available data. Then, an auxiliary eigenvalue problem (Khoromskij et al. 2009), where the covariance function is the kernel of a certain integral operator (Ghanem and Spanos 1991; Karhunen 1946), is solved. The solution of this eigenvalue problem builds the KLE expansion, which represents the random field \(\phi \) (and \({\mathbf {K}}\)). This is a very well established approach, (cf. Schwab and Todor 2006, 2003; Ghanem and Spanos 1991). In this work, to avoid a very expensive solution of the eigenvalue problem (which is a very non-trivial task in large domains, distributed over many computational nodes), we directly write:

where \(\varphi _0(\mathbf {x})\) is a deterministic part, \(\{\varphi _i(\mathbf {x})\}_{i=1}^K\) functions, which emulate a basis in \(L_2(\mathcal {D})\), and \(\theta _i\) random variables. Note, that the constructed expansion is not the Karhunen–Loève expansion. Approximating the covariance operator and computing the KLE is beyond the scope of this work. If a parallel eigenvalue solver is on hand, then it could be integrated with our software library, and more realistic expansions (truncated KLE) could be used instead of our simplified models [see, e.g., (27), (28)].

Another approach to deal with uncertainties in the porosity and permeability is to introduce a prior statistical distribution for both and then use the Bayesian (or similar) updating techniques (Rosić et al. 2013; Matthies et al. 2016) to construct the posterior distribution.

To reduce the total numerical complexity, we consider a simplified model, where the permeability is a non-linear function of the porosity. Particularly, the same random variables are used for modeling both coefficients. Some empirical and theoretical laws connecting porosity and permeability have been proposed in the literature (Panda and Lake 1994; Pape et al. 1999; Costa 2006). In the following, we model the porosity (\(\phi (\mathbf {x})\)) by a random field (\(\phi (\mathbf {x},{\varvec{\theta }})\)) and assume that the permeability \({\mathbf {K}}\), cf. (4), is a non-linear function of \(\phi \):

In our experiments, we use a Kozeny–Carman-like dependence (Costa 2006):

where the scaling factor \(\kappa _{KC}\) is chosen to satisfy the equality \(K(\overline{\phi }) = K_{\mathbf {ref}}\), i.e., \( \kappa _{KC}= \dfrac{1 - \overline{\phi }^2}{\overline{\phi }^3}\cdot K_{\mathbf {ref}} \). Here \(K_{\mathbf {ref}}\) is a given value. \(K_{\mathbf {ref}}\) and other parameters of the standard Elder problem are listed in Table 1. Since \(\phi \) and \({\mathbf {K}}\) are uncertain, the solution \(c(\phi ,{\mathbf {K}})\) is uncertain too.

Remark 1

Establishing a relationship between porosity and permeability requires assumptions on the structure of the porous medium in the aquifer. Introducing additional random variables for the permeability would significantly increase the size of the problem and restrict the possibility to perform numerical tests, and at the same time would not principally change the method. For this reason, we used the same random variables for both.

Remark 2

The Kozeny–Carman model is simple, but it models the essential phenomena for our numerical tests. It introduces a non-linear relation between permeability and porosity so that the equations cannot be merely re-scaled. This underlines the non-linear nature of the dependence of the solution (the mass fraction) on these parameters. From this point of view, the Kozeny–Carman law is non-trivial enough for our purpose.

2.3 Solution of the deterministic problem

We start with sampling random variables \(\{{\varvec{\theta }}_i\}\) and computing the realizations of porosity \(\phi _i(\mathbf {x})=\phi (\mathbf {x},{\varvec{\theta }}_i)\) [as in (7)] and then permeability \({\mathbf {K}}_i(\mathbf {x})={\mathbf {K}}(\mathbf {x},{\varvec{\theta }}_i)\). For this data, we solve the system of equations (1)–(2). They are discretized by a vertex-centered finite-volume method on unstructured grids, as presented in Frolkovič (1998). In particular, we used the consistent velocity approach for the approximation of the Darcy velocity (3), (Frolkovič 1998; Frolkovič and Knabner 1996). The implicit Euler scheme for the time discretization was used. The choice of the implicit time-stepping scheme is motivated its unconditional stability, as the velocity depends on the unknowns of the system. This is especially important for variable coefficients as it is difficult to predict the range of the variation of the velocity in the realizations.

The discretization yields a large sparse system of nonlinear algebraic equations in every time step. We solve it using the Newton iteration with a line search method. The sparse linear system appearing in the nonlinear iterations is solved by the BiCGStab method (Zhaojun et al. 2000) with the geometric multigrid preconditioning [V-cycle, (Hackbusch 1985)], which proved to be very efficient in this case. On the multigrid cycle, we used the ILU\({}_\beta \)-smoothers (Hackbusch 1994).

3 Stochastic modeling and methods

Let \({\varvec{\theta }}=(\theta _1,\ldots ,\theta _M)\) be a vector of independent random variables. Let \(S:=(\varTheta , \mathcal {B}, \mathbb {P})\) be a probability space. Here \(\varTheta \) denotes a sample space, \(\mathcal {B}\) a \(\sigma \)-algebra on \(\varTheta \), and \(\mathbb {P}\) a probability measure on \((\varTheta , \mathcal {B})\). The solution \(c(t,\mathbf {x},{\varvec{\theta }})\) lies in the tensor product space \(L_2(D)\otimes L_2(\varTheta )\otimes L_2([0,T])\), where [0, T] is a time interval, on which the initial value problem is solved. After discretization, we obtain \(\mathbf {c}\in \mathbb {R}^{n}\otimes \mathbb {R}^{\vert \mathcal {J}\vert } \otimes \mathbb {R}^{n_t}\), where n is the number of basis functions in the physical space, \(\vert \mathcal {J} \vert \) dimension of the stochastic space, and \(n_t\) the number of time steps.

3.1 Sampling methods

Well-known methods to estimate the mean and the variance are sampling methods, such as the quasi-Monte Carlo, Monte Carlo, collocation, and sparse grid methods. The empirical mean value and the variance of \(c(t,\mathbf {x},{\varvec{\theta }})\) can be computed as follows

with \(N_q\) quadrature points \({\varvec{\theta }}_i\) and weights \(w_i\). Here, \(c(t,\mathbf {x},{\varvec{\theta }}_i)\) is the solution evaluated at a fixed point \({\varvec{\theta }}={\varvec{\theta }}_i \in \mathbb {R}^M\). The sample variance is computed as follows

3.2 (Generalized) polynomial chaos expansion

The dependence of the uncertain physical quantities (the solution of the PDE, or QoI) on random variable \({\varvec{\theta }}:\varTheta \rightarrow \mathbb {R}^M\) can be represented in terms of the (generalized) polynomial chaos expansion (Wiener 1938). Here we require that this physical quantity has a finite variance. Different orthogonal polynomials (Hermite, Legendre, Chebyshev, Laguerre) (Askey and Wilson 1985; Xiu and Karniadakis 2002b, 2003; Sudret 2008), or splines, can be used.

To introduce polynomial expansions, we, first, need to introduce the notions of multiindex and multiindex sets. Let \(\beta \) be a multi-index

and \(\mathcal {J}\) is a multiindex set, containing sequences of non-negative integers. We assume \(\{\varPsi _{\beta } \}_{\beta \in \mathcal {J}}\) is a multivariate basis of \(S=L_2(\varTheta , \mathcal {B}, \mathbb {P})\). The cardinality of the multi-index set \(\mathcal {J}\) is infinite.

Polynomial chaos expansion (PCE) was introduced in Wiener (1938). It was shown [Cameron and Martin theorem (Cameron and Martin 1947)], that if \(\mathbf {c}({\varvec{\theta }})\) is a second-order random variable (a function in \(L_2(\varTheta )\)), then it can be expanded as follows:

This expansion converges in the \(L_2(\varTheta )\) sense. Here \(\{\varPsi _{\beta }\}\) are multivariate Hermite polynomials of multi-dimensional Gaussian random variables (\({\varvec{\theta }}\)). The advantage of PCE is that from this point we may forget the original probability space and only work with \((\varTheta , \varGamma )\) with a Gaussian measure.

Definition 1

For numerical computations, the infinite series above can be truncated, i.e., we keep only M random variables, \({\varvec{\theta }}=(\theta _1,\ldots ,\theta _M)\), and limit the total degree of the multi-variate polynomial \(\varPsi _{\beta }({\varvec{\theta }})\) by P. We denote the new multiindex subset as \(\mathcal {J}_{M,P} \subset \mathcal {J}\).

The truncated multi-index set is defined by restricting the sum of components,

The truncated set contains \(\frac{(M+P)!}{P!M!}\) values. This may become a enormous number even for small P and M. Another approach is to preselect the set of polynomials with the moderate cardinality (Todor and Schwab 2007; Matthies and Keese 2005b; Blatman and Sudret 2010; Chkifa et al. 2015).

The expansion (13) can be extended for time-dependent random fields:

where coefficients \(\mathbf {c}_{\beta }(t,\mathbf {x})\) depend on t and \(\mathbf {x}\). For representing of more general, non-Gaussian fields/processes, the generalized polynomial chaos (gPC ) expansion (Xiu and Karniadakis 2002b; Xiu 2010; Xiu and Karniadakis 2002a) was introduced. In gPC , instead of Hermite orthogonal polynomials, other orthogonal polynomials can be used [(see more in the Appendix or in Xiu and Karniadakis (2002b), Xiu and Karniadakis (2003), Ernst et al. (2012)]. Utilization of other types of orthogonal polynomials could result in a more efficient expansion with a smaller number of terms. In this work, we use multivariate Lagrange polynomials with uniform RVs \({\varvec{\theta }}\). The correspondence between the type of gPC and their underlying random variables is shown in Table 5.1 in Xiu (2010) (the Wiener–Askey scheme). The optimal (exponential) convergence rate of each Wiener–Askey polynomial chaos expansion was numerically demonstrated in Xiu and Karniadakis (2002b).

3.3 Truncated (generalized) polynomial chaos expansion

For numerical purposes, we truncate the infinite gPC series above and obtain

Here, \(\varPsi _{\beta }({\varvec{\theta }})\) are multi-variate Legendre polynomials defined as follows

\(\psi _{\beta _j}(\cdot )\) are univariate Legendre polynomials, defined in (30). The scalar product on \(L_2(\varTheta )\) is

where \(Q_{\alpha }=\mathbb {E}\left( \varPsi _{\alpha }^2\right) =q_{\alpha _1}\cdots q_{\alpha _M}\), \(q_{\alpha _j}=\langle \psi _{\alpha _j},\psi _{\alpha _j} \rangle =1/(2\alpha _j +1)\), are the normalization constants and \(\delta _{\alpha \beta }=\delta _{\alpha _1\beta _1}\cdots \delta _{\alpha _M \beta _M}\) is the M-dimensional Kronecker delta function.

Example 1

Let \(M=3\) and the maximal polynomial degree be \(P=2\). Then the multiindex \(\beta \) is \(\beta =(\beta _1,\beta _2,\beta _3)\), and the multiindex set contains the following elements

Remark 3

The mass fraction \(c\) is scaled to take value in [0, 1]. The gPC approximation (14) of the mass fraction may take negative values or values larger than 1. A possible remedy is to assume that \(c\) can be written as \(\exp (\gamma )\), where \(\gamma \) varies from \(-\infty \) to \(+\infty \), and then approximate the auxiliary function \(\gamma =\log c\) instead of the mass fraction itself:

We also note that the trick with the \(\log \) function does not prevent \(c\) from taking values larger than 1. Keeping \(c\le 1\) can be achieved by approximating another transformed variable, like the scaled \(\arctan \). Another function that can be used is the error-function \(\hbox {erf}\) (this is sometimes called a “probit” approximation), or the logistic function (called “logit”). In this work, for the sake of simplicity, we did not change the variables. We were lucky that in the settings we used (small variance in the input parameters), we did not observe negative c (or \(c > 1\)). But for the future, we strongly recommend doing such changes of variables.

Decomposition (14) can be understood as a response surface for \(\mathbf {c}(t,\mathbf {x},{\varvec{\theta }})\). As soon as the response surface is built, the value \(\mathbf {c}(t,\mathbf {x},{\varvec{\theta }})\) can be evaluated for any \({\varvec{\theta }}\) almost for free (without further solutions the groundwater flow PDE). It can be very practical if \(10^6\) samples of \(\mathbf {c}(t,\mathbf {x},{\varvec{\theta }})\) are needed, for example. Since \(\{\varPsi _{\beta }\}\) polynomials are \(L_2\)-orthogonal, the coefficients \(\mathbf {c}_{\beta }(t,\mathbf {x})\) can be computed by projection:

where \(w_i\) are weights and \({\varvec{\theta }}_i\) quadrature points, defined, for instance, by a Clenshaw-Curtis integration rule. Once all gPC coefficients are computed, we can compute the required statistics. For estimates of truncation and aliasing errors, see Conrad and Marzouk (2013), Ghili and Iaccarino (2017).

Possible ways to apply dimensionality reduction on PCE in the spirit of the Basis Adaptation techniques are considered in Tipireddy and Ghanem (2014), Tsilifis et al. (2019), Thimmisetty et al. (2017), Tsilifis and Ghanem (2018).

Remark 4

If only the variance and the mean values are of interest, we recommend using the collocation method with some sparse or full-tensor grid. If some additional statistics are required, such as sensitivity analysis (Sudret 2008; Crestaux et al. 2009), parameter identification, or computing cdf or pdf, we recommend computing the gPC expansion.

Quadrature grids for computing multi-dimensional integrals can be constructed from products of 1D integration rules. To keep computational costs to a minimum, a sparse grid technique can be used for certain classes of smooth functions (Bungartz and Griebel 2004).

Since \(\varPsi _{0}({\varvec{\theta }})\equiv 1\), it becomes apparent that the mean value is the first gPC coefficient

\(0=(0,\ldots ,0)\). The variance is the sum of squared gPC coefficients (Xiu and Karniadakis 2002b; Ghanem et al. 2017; Le Maître and Knio 2010):

3.4 Computing probability density functions

Once we have calculated the gPC surrogate \(\widehat{c}(t,\mathbf {x},{\varvec{\theta }})\) in (14), we can approximate the probability density functions (see Fig. 10), exceedance probabilities and quantiles in the selected points. For this, we should evaluate the multi-variate polynomial \(\widehat{c}(t,\mathbf {x},{\varvec{\theta }})\) in a sufficiently large number of points \({\varvec{\theta }}\)

The random variable \(\widehat{c}({\varvec{\theta }})\) can be sampled, e.g., \(N_s=10^6\) times (no additional extensive simulations are required). The obtained sample can be used to evaluate the required statistics and the probability density function. The exceedance probability for some threshold \(c^{*}\) can be estimated as follows:

Having a sufficiently large sample set makes it possible to estimate quantiles Litvinenko et al. (2019).

3.5 Approximation and truncation errors

Applying gPC approximation, will introduce truncation and approximation errors (Constantine et al. 2012; Sinsbeck and Nowak 2015; Conrad and Marzouk 2013; Ghili and Iaccarino 2017; Ernst et al. 2012). By truncating gPC coefficients \(\{\mathbf {c}_{\beta }:\;\beta \in \mathcal {J}\setminus \mathcal {J}_{M,P} \}\), we introduce the truncation error in the \(L_2(\varTheta )\) norm

In further numerical experiments we average the error over \(\varTheta \), i.e., compute the error as a function of time and space \(\hbox {err}_{tr}:=\hbox {err}_{tr}(t,\mathbf {x})\). Additionally, we approximate the coefficients \(\mathbf {c}_{\beta }(t,\mathbf {x})\) by \(\widehat{\mathbf {c}}_{\beta }(t,\mathbf {x})\). This introduces the approximation error

The sum of both errors is

Remark 5

Spatial resolution plays a vital role in the simulations. Thus, the distribution of the parameters of the porous medium should be represented correctly. Furthermore, smoothing the flow on a coarse grid due to numerical diffusion can lead to a loss of some phenomena and therefore reduce the accuracy of the uncertainty quantification. Thus, we must cover \(\mathcal {D}\) with sufficiently fine grids and use sufficiently small time steps.

4 Parallelisation

Because of the massive numerical complexity of the problem, all computations were performed on a Cray XC40 parallel cluster Shaheen II provided by King Abdullah University of Science and Technology (KAUST) in Saudi Arabia (https://www.hpc.kaust.edu.sa/content/shaheen-ii). Shaheen II has 6174 nodes with 2 Intel Haswell 2.3 GHz CPUs per node, and 16 processor cores per CPU. Every node has 128 GB RAM. The nodes communicate via the Cray Aries interconnect network with Dragonfly topology. The system is equipped with the Sonexion Lustre 2000 storage appliance.

There are two levels of parallelism in our implementation. On the top level, we compute all scenarios in parallel. Each scenario corresponds to a particular quadrature or collocation point in the stochastic space. For every scenario, a separate node of the cluster is allocated. All scenarios are independent, so there is no communication between the nodes. We allocate, depending on the experiment, up to 600 computing nodes. This allows us to compute (up to) 600 qMC (or quadrature) simulations simultaneously. Shaheen II uses the SLURM system to manage parallel jobs, and we used the SLURM job arrays for the concurrent computations of the scenarios.

On the bottom layer, computation of each single scenario (one quadrature point) is done in parallel on the 32 cores of one node. This parallelisation is based on the distribution of the discretization grid between the processes and the communication using MPI. As this happens on the cores of one node, the network hardware is not involved. This part is implemented in ug4 . The parallel efficiency of this toolbox for solving two- and three- dimensional Laplace problems is analyzed in Reiter et al. (2013). The scalability of ug4 in the case of the 2D Elder problem is presented (Vogel et al. 2013). Also, the speedup in these computations cannot be ideal (due to the communication); the toolbox demonstrates very high efficiency for this type of problem. We refer also to Heppner et al. (2013), Vogel (2014) for further details.

Thus, in total, we run our code on up to \(600 \times 32=19200\) parallel cores. Depending on the grid step sizes the total computing time could be up to 24 h.

The computations of the mean value, variance, and other statistics are done in the postprocessing phase in parallel on all cores of only one node. Herewith the same distribution of the spatial grid, as for the computation of each realization, is used. The spatial grid is distributed between the cores of that single node, and its parts are processed concurrently. No communication between the processes is required, and the computation of the statistics takes a very short time in comparison with the computing all scenarios.

5 Numerical experiments

5.1 Software

To compute the deterministic solutions, we used d\({}^3\)f plugin of the simulation framework ug4 (Reiter et al. 2013; Vogel et al. 2013). ug4 is a novel flexible software system for simulating PDE based models on high performance parallel clusters. It is capable of numerical treatment of non-stationary non-linear systems of PDEs. This software framework has successfully been applied to a variety of problems such as density-driven and thermohaline flow in porous media, the Navier–Stokes equations, drug diffusion through human skin, and level-set methods. Special care is given to parallel performance issues, scalability, linear algebra algorithms, and data structures. In addition, ug4 is specially designed for user-friendly handling and functionality is made available to non-programming users through the scripts and graphical representations.

5.2 3D computational domains

The geometry of real-life 3D computational domains can be very complicated. Discretization of such domains (reservoirs) may require non-trivial 3D meshes. This can be very challenging in the stochastic context, such as we have here. Therefore, we start with two relatively trivial reservoir geometries: (1) a parallelepiped \(\mathcal {D}=[0,600]\times [0,600]\times [0,150]\) (see Fig. 1a); (2) an elliptic cylinder with the semi-axes 600 and 300 for the base ellipse and height 150. Thus, the longest radius is 300, \(x\in [-300,300]\), the shortest radius is 150, \(y\in [-150,150]\), the height \(z\in [-150,0]\) and the center is in the point \((0,0,-75)\) (see Fig. 1b). The polluted area is a circle with the center in \((-150,0,0)\), and with radius 100.

5.3 Parallelepiped with 3 RVs

For every realization, the porosity and permeability are functions of the spatial coordinates. These functions can be expanded into the Fourier series that would introduce the sine and cosine functions. Therefore, in (27) and (28), we used the sine and cosine functions to model the in-homogeneity of the medium. Note that a natural assumption for the Darcy flow would be that the expansion is finite, i.e., high-frequency modes are truncated. The suggested model is homogenized and does not resolve the scales of the pores. With the current state of the computational equipment, we had to confine ourselves with only a few Fourier modes. Besides that, we used linear factors to introduce some global variation in the porosity.

In the following, we consider an experiment with three random variables \({\varvec{\theta }}=(\theta _1,\theta _2,\theta _3)\) and with the following porosity coefficient

where \(\mathbf {x}=(x,y,z)\in [0,600]\times [0,600]\times [0,150]\) (parallelepiped). With this porosity coefficient we try to model the inhomogeneity of the medium. For every realization, the porosity and the permeability are functions of the spatial coordinates. In general, \(\phi (\mathbf {x},{\varvec{\theta }})\) can be expanded into the Fourier series that would introduce the sine and cosine functions. Note that a natural assumption for the Darcy flow would be that the expansion is finite, i.e., high-frequency modes are truncated. The model is homogenized and does not resolve the scales of the pores. Surely, with the current state of the computational equipment, we had to confine ourselves with only a few Fourier modes.

In Fig. 2, we visualize isosurfaces \(\mathbb {E}\left( c\right) =0.5\) after 1500 time steps of length 0.005 years, computed using (a) 200 qMC simulations (Halton sequence) and (b) gPC response surface of total degree \(P=4\). All gPC coefficients were computed via a 3D quadrature rule with 125 quadrature points. In our numerical experiments with the polynomial degrees \(P<4\) we were not able to compute the variance accurately.

Note that in the present problem setting, the flow field is very complicated. The main process tends to represent the same behaviour as in the 2D version of the Elder problem (Litvinenko et al. 2019). The highly concentrated solution from the top boundary moves downward and displaces the pure water located initially at the bottom. This pure water is pushed up. As the diffusion is very small, the transport of the salt by these opposite flows creates a non-trivial pattern of the concentration field, typically described as “fingering”. The particular pattern depends on the profile of the boundary conditions for \(c\) and the initial flow. In our case, we see 5 “fingers” (4 at the corners and one at the center). As we see, the isosurfaces of \(\mathbb {E}\left( c\right) = 0.5\) are almost identical for both methods.

Isosurface \(\mathbb {E}\left( c\right) =0.5\) computed using a 200 qMC simulations and b gPC response surface of degree 4. The upper square edge corresponds to the boundary of the red area in Fig. 1a

The same holds for the variance. Figure 3 presents the isosurface \({\mathrm {Var}\left[ c\right] }=0.05\) after 1500 time steps for (a) 200 qMC simulations (Halton sequence), (b) gPC response surface of degree 4 and (c) comparison of the isosurfaces from (a) and (b). We see that positions, numbers, and shapes of all the fingers are similar. The isosurfaces, computed via qMC , are slightly larger, especially the one in the middle (see Fig. 3c).

Five different contour lines (isosurfaces) of the deterministic solution \(c(\mathbf {x},{\varvec{\theta }}=0)\) for the porosity, defined in (27), are shown in Fig. 4.

Five isosurfaces of the deterministic solution \({c}(t,\mathbf {x},{\varvec{\theta }}=0)=\{1.0,0.75,0.5,0.25,0.0\}\) after 9.6 years; a view from the top; b view from the bottom. The upper square edge corresponds to the boundary of the red area in Fig. 1a

5.4 Elliptical cylinder with 3 RVs

In this example we consider a cylindrical domain (see Fig. 1b). The goal is to see the influence of the computing domain on the solution. Indeed, as we can see below, the number of fingers and their shapes are different.

The porosity coefficient has three layers and is defined with three RVs as follows:

Figure 5 shows two random realizations of the porosity field.

In Fig. 6 we present a comparison of the variance of \(c\), computed via qMC (200 simulations) and gPC of degree \(P=4\). All \(\frac{(M+P)!}{M!P!}=\frac{7!}{3!4!}\)=35 gPC coefficients are computed with a full Clenshaw–Curtis quadrature Clenshaw and Curtis (1960), containing 125 quadrature points. In Fig. 6a and b the cutting plane has the normal vector (1, 0, 0), and Fig. 6c and d have the normal vector (0, 1, 0). The variances, computed via qMC and gPC , lie in the intervals \({\mathrm {Var}\left[ c\right] }_{qMC\,}\in [0,0.14]\), and \({\mathrm {Var}\left[ c\right] }_{gPC\,}\in [0,0.13]\) respectively. The small difference is not really visible in the pictures. Thus, we conclude that the gPC surrogate model, computed on a Clenshaw-Curtis quadrature grid, can be used for approximating the mean and the variance of \(c\).

Variance of the mass fraction in the cylindrical 3D reservoir, computed via 200 qMC simulations (a) and (c); and gPC of degree 4 with 35 gPC coefficients (b) and (d). \({\mathrm {Var}\left[ c\right] }_{qMC\,}\in [0,0.14]\), \({\mathrm {Var}\left[ c\right] }_{gPC\,}\in [0,0.13]\). The first cutting plane on a–b has normal vector (1, 0, 0), the second on c–d (0, 1, 0)

To demonstrate more similarities between the solutions, computed via qMC and gPC , we visualize isosurfaces of the variance of the mass fraction in the cylindrical 3D reservoir (Figs. 7 and 8). In Fig. 7 we demonstrate two isosurfaces \({\mathrm {Var}\left[ c\right] }=0.025\) computed via a) qMC method, and b) gPC of degree 4 surrogate. Figure 7c shows the comparison of both isosurfaces. The results are very similar, although the isosurface computed via gPC is slightly larger.

In Figure 8 we demonstrate two isosurfaces \({\mathrm {Var}\left[ c\right] }=0.07\) computed via a) qMC method (Fig. 8a, and b) gPC of degree 4 (Fig. 8b). Figure 8c shows the comparison of both isosurfaces. Again, the results are very similar, and it is hard to say which isosurface is “larger” or “smaller”.

In Fig. 9 we compare two isosurfaces \({\mathrm {Var}\left[ c\right] }=0.12\) computed via (1) qMC method and (b) gPC of degree 4. Once again, the results (the number of fingers and their shape) are very similar. The isosurface computed via qMC is slightly larger.

In Fig. 10 (left) and (right) we compare four pdfs, computed with polynomial degrees \(P=\{1,2,3,4\}\), \(M=3\), in two fixed points \((100,0,-50)\) (left) and \((100,0,-25)\) (right) in the cylindrical domain, after \(T=400\) time steps (each step is equal to 0.006 years). We can see that \(P=\{2,3,4\}\) are giving approximately equal results for both points \((t,\mathbf {x})\). The variance for \(P=\{3,4\}\) is slightly smaller as the variance for \(P=2\). The exact value of \(c\) is unknown, and the deterministic value of \(c\) at \((100,0,-50)\) is 0.8, and at \((100,0,-25)\) is 0.995.

5.5 Time evolution of \(\overline{c}(t,\mathbf {x})\) and \({\mathrm {Var}\left[ c\right] }(t,\mathbf {x})\)

Here we repeat the experiment with \(\phi \) as in (28)–(29). The gPC coefficients were computed with 125 quadrature points. Figure 11 shows the evolution of the mean of the mass fraction in the cylindrical 3D reservoir after (a) 0, (b) 0.55, (c) 1.1, (d) 2.2 years. The cutting plane is (150, y, z). The different stages of the fingers building over time are clearly visible.

Figure 12 shows the variance of the mass fraction in the cylindrical 3D reservoir after \(\{0.55, 1.1, 2.2\}\) years (after 100, 200, and 400 time steps, respectively).

Propagation of the variance of the mass fraction in the cylindrical 3D reservoir, cutting plane is (150, y, z). Evolution of \({\mathrm {Var}\left[ c\right] }(t,\mathbf {x})\) in time after a 0.55, b 1.1, c 2.2 years (100, 200, 400 time steps respectively). After 400 time steps \({\mathrm {Var}\left[ c\right] }\in [0,0.17]\)

5.6 Best practices

After numerous numerical tests, we created a best practice guide as outlined below:

-

1.

The number of uncertain variables describing the porosity coefficient depends on the model and assumptions. We recommend to start with 1–3 variables to improve understanding of the phenomena . We note that a heterogeneous porosity field in 3D may require hundreds of random variables. That can mean thousands of gPC coefficients. This is computationally unaffordable unless we use some advanced low-rank tensor techniques (Dolgov et al. 2014) or multi-scale/homogenization strategy. In this work, we considered three random variables. The more random variables that are present, the harder it can be to interpret the results, and additional sensitivity analysis (Sudret 2008; Crestaux et al. 2009) may be required.

-

2.

We assume a smooth dependence of the output QoI on the uncertain input parameters. We recommend to check it at least visually before computing gPC .

-

3.

The gPC -based surrogate, for the cases when the variance of the porosity is not so high, showed reliable results. For the validation, a qMC method can be used.

-

4.

We were unable to achieve a correct variance of \(c\) with the gPC method in tests where the variance of the porosity was large.

-

5.

We recommend the total polynomial degree in gPC to be \(P=4\) or 5. With our computational budget, we failed to get the correct variance of \(c\) for \(P>5\). Additionally, the quadrature rule was probably not robust enough to catch the oscillatory behavior of the gPC polynomials. This is a known drawback of using global polynomials.

-

6.

The coefficients of the gPC surrogate require calculating high-dimensional integrals. It could be hard to compute small gPC coefficients. As a result, it could be hard to compute the variance accurately.

-

7.

The total numerical cost could be reduced by choosing adaptive spatial and time step sizes, depending on the random perturbation (the Multi level Monte Carlo or Multi-index Monte Carlo methods). But we also observed that a large time or spatial step sizes may result in a lack of convergence.

6 Conclusion

In this work, we solved the density driven groundwater flow problem with uncertain porosity and permeability. An accurate solution of this time-dependent and non-linear problem is impossible because of the presence of natural uncertainties in the reservoir such as porosity and permeability. Therefore, we estimated the mean value and the variance of the solution, as well as the propagation of uncertainties from the random input parameters to the solution.

We started by defining the Elder-like problem. Then we described the multi-variate polynomial approximation (gPC ) approach and used it to estimate the required statistics of the mass fraction. Utilizing the gPC method allowed us to reduce the computational cost compared to the classical quasi Monte Carlo method. gPC assumes that the output function \(c(t,\mathbf {x},{\varvec{\theta }})\) is square-integrable and smooth w.r.t uncertain input variables \({\varvec{\theta }}\).

Many factors, such as non-linearity, multiple solutions, multiple stationary states, time dependence and complicated solvers, make the investigation of the convergence of the gPC method a non-trivial task.

We used an easy-to-implement, but only sub-optimal gPC technique to quantify the uncertainty. For example, it is known that by increasing the degree of global polynomials (Hermite, Langange and similar), Runge’s phenomenon appears. Here, probably local polynomials, splines or their mixtures would be better. Additionally, we used an easy-to-parallelise quadrature rule, which was also only suboptimal. For instance, adaptive choice of sparse grid (or collocation) points (Conrad and Marzouk 2013; Nobile et al. 2015; Blatman and Sudret 2010; Constantine et al. 2012; Crestaux et al. 2009) would be better, but we were limited by the usage of parallel methods. Adaptive quadrature rules are not (so well) parallelisable. In conclusion, we can report that: a) we developed a highly parallel method to quantify uncertainty in the Elder-like problem; b) with the gPC of degree 4 we can achieve similar results as with the qMC method.

In the numerical section we considered two different aquifers - a solid parallelepiped and a solid elliptic cylinder. One of our goals was to see how the domain geometry influences the formation, the number and the shape of fingers.

Since the considered problem is nonlinear, a high variance in the porosity may result in totally different solutions; for instance, the number of fingers, their intensity and shape, the propagation time, and the velocity may vary considerably.

The number of cells in the presented experiments varied from 241, 152 to 15, 433, 728 for the cylindrical domain and from 524, 288 to 4, 194, 304 for the parallelepiped. The maximal number of parallel processing units was \(600\times 32\), where 600 is the number of parallel nodes and 32 is the number of computing cores on each node. The total computing time varied from 2 hours for the coarse mesh to 24 h for the finest mesh.

We also demonstrated how to use the parallel multigrid solver ug4 as a black-box solver. An additional novelty of this work is that the gPC technique for uncertainty quantification was implemented on a distributed memory system, where all solutions and gPC coefficients were distributed over multiple nodes. We see great potential in using the ug4 library as a black box solver for quantification of uncertainties. The code is available online in the GitHub repositoryFootnote 1.

Although this work leaves many open questions, it is nonetheless an important investigation on the density driven flow phenomena. The results of this work could potentially be applied for the estimation of contamination risks, monitoring the quality of drinking water, modeling of seawater intrusion into coastal aquifers, radioactive waste disposal, and contaminant plumes.

As a next step in a future study, we will integrate the ug4 multi-grid solver in the Multi Level Monte Carlo framework with the aim to compute samples mostly on coarse grids with just a few samples on fine grids.

Notes

References

Askey, R., Wilson, J.A.: Some Basic Hypergeometric Orthogonal Polynomials that Generalize Jacobi Polynomials. American Mathematical Society, Providence (1985)

Babuška, I., Nobile, F., Tempone, R.: A stochastic collocation method for elliptic partial differential equations with random input data. SIAM J. Numer. Anal. 45, 1005–1034 (2007)

Babuška, I., Tempone, R., Zouraris, G.E.: Galerkin finite element approximations of stochastic elliptic partial differential equations. SIAM J. Numer. Anal. 42, 800–825 (2004)

Barthelmann, V., Novak, E., Ritter, K.: High dimensional polynomial interpolation on sparse grids. Adv. Comput. Math. 12, 273–288 (2000)

Bear, J.: Dynamics of Fluid in Porous Media. Dover Publications, INC, New York (1972)

Bear, J.: Hydraulics of Groundwater. Dover Publications. Inc., Mineola (1979)

Bear, J., Bachmat, Y.: Introduction to Modeling of Transport Phenomena in Porous Media. Kluwer Academic Publishers, Dordrecht (1990)

Blatman, G., Sudret, B.: An adaptive algorithm to build up sparse polynomial chaos expansions for stochastic finite element analysis. Probab. Eng. Mech. 25, 183–197 (2010)

Bode, F., Ferré, T., Zigelli, N., Emmert, M., Nowak, W.: Reconnecting stochastic methods with hydrogeological applications: a utilitarian uncertainty analysis and risk assessment approach for the design of optimal monitoring networks. Water Resour. Res. 54, 2270–2287 (2018)

Bompard, M., Peter, J., Désidéri, J.-A.: Surrogate models based on function and derivative values for aerodynamic global optimization. In: Fifth European Conference on Computational Fluid Dynamics, ECCOMAS CFD 2010, Lisbon, Portugal, (2010)

Bungartz, H.-J., Griebel, M.: Sparse grids. Acta Numer. 13, 147–269 (2004)

Caflisch, R.E.: Monte Carlo and quasi-Monte Carlo methods. Acta Numer. 7, 1–49 (1998)

Cameron, R.H., Martin, W.T.: The orthogonal development of non-linear functionals in series of fourier-hermite functionals. Ann. Math. 48, 385 (1947)

Carrera, J.: An overview of uncertainties in modelling groundwater solute transport. J. Contam. Hydrol., 13, 23 – 48 (1993). Chemistry and Migration of Actinides and Fission Products

Chkifa, A., Cohen, A., Schwab, Ch.: Breaking the curse of dimensionality in sparse polynomial approximation of parametric PDEs. Journal de Mathematiques Pures et Appliques 103, 400–428 (2015)

Clenshaw, C.W., Curtis, A.R.: A method for numerical integration on an automatic computer. Numer. Math. 2, 197–205 (1960)

Conrad, P., Marzouk, Y.: Adaptive smolyak pseudospectral approximations. SIAM J. Sci. Comput. 35, A2643–A2670 (2013)

Constantine, P.G., Eldred, M.S., Phipps, E.T.: Sparse pseudospectral approximation method. Comput. Methods Appl. Mech. Eng. 229–232, 1–12 (2012)

Costa, A.: Permeability-porosity relationship: A reexamination of the kozeny-carman equation based on a fractal pore-space geometry assumption. Geophys. Res. Lett. 33, 1–5 (2006)

Cremer, C.J.M., Graf, T.: Generation of dense plume fingers in saturated-unsaturated homogeneous porous media. J. Contam. Hydrol. 173, 69–82 (2015)

Crestaux, T., Le Maıtre, O., Martinez, Jean-Marc: Polynomial chaos expansion for sensitivity analysis. Reliab. Eng. Syst. Saf. 94, 1161–1172 (2009)

Diersch, H.-J.G., Kolditz, O.: Variable-density flow and transport in porous media: approaches and challenges. Adv. Water Resour. 25, 899–944 (2002)

Dolgov, S., Khoromskij, B.N., Litvinenko, A., Matthies, H.G.: Computation of the response surface in the tensor train data format. arXiv preprint arXiv:1406.2816 (2014)

Dolgov, S., Khoromskij, B.N., Litvinenko, A., Matthies, H.G.: Polynomial chaos expansion of random coefficients and the solution of stochastic partial differential equations in the tensor train format. SIAM/ASA J. Uncertain. Quantif. 3, 1109–1135 (2015)

Elder, J., Simmons, C., Diersch, H.-J., Frolkovič, P., Holzbecher, E., Johannsen, K.: The Elder problem. Fluids 2(1), 11 (2017)

Elder, J.W.: Steady free convection in a porous medium heated from below. J. Fluid Mech. 27, 29–48 (1967)

Elder, J.W.: Transient convection in a porous medium. J. Fluid Mech. 27, 609–623 (1967)

Ernst, O.G., Mugler, A., Starkloff, H.-J., Ullmann, E.: On the convergence of generalized polynomial chaos expansions. ESAIM Math. Modell. Numer. Anal. 46, 317–339 (2012)

Espig, M., Hackbusch, W., Litvinenko, A., Matthies, H.G., Waehnert, Ph: Efficient low-rank approximation of the stochastic Galerkin matrix in tensor formats. High-order finite element approximation for partial differential equations. Comput. Math. Appl. 67, 818–829 (2014)

Fan, Y., Duffy, C.J., Oliver, D.S.: Density-driven groundwater flow in closed desert basins: field investigations and numerical experiments. J. Hydrol. 196, 139–184 (1997)

Frolkovič, P.: Consistent velocity approximation for density driven flow and transport. In: R. Van Keer, et al. (eds.) Advanced Computational Methods in Engineering, Part 2: Contributed papers. Shaker Publishing, Maastricht, pp. 603–611

Frolkovič, P.: Maximum principle and local mass balance for numerical solutions of transport equation coupled with variable density flow. Acta Mathematica Universitatis Comenianae 1, 137–157 (1998)

Frolkovič, P., Knabner, P.: Consistent velocity approximations in finite element or volume discretizations of density driven flow. In: Aldama,A.A. et al. (eds.) Computational Methods in Water Resources XI, pp. 93–100. Computational Mechanics Publication, Southhampten

Gerstner, Th, Griebel, M.: Numerical integration using sparse grids. Numer. Algorithms 18, 209–232 (1998)

Ghanem, R., Owhadi, H., Higdon, D.: Handbook of Uncertainty Quantification, vol. 06. Springer, Berlin (2017)

Ghanem, R., Spanos, P.: Stochastic Finite Elements: A Spectral Approach. Springer, New York (1991)

Ghili, S., Iaccarino, G.: Least squares approximation of polynomial chaos expansions with optimized grid points. SIAM J. Sci. Comput. 39, A1991–A2019 (2017)

Giraldi, L., Litvinenko, A., Liu, D., Matthies, H.G., Nouy, A.: To be or not to be intrusive? the solution of parametric and stochastic equations–the “plain vanilla” galerkin case. SIAM J. Sci. Comput. 36, A2720–A2744 (2014)

Giunta, A.A., Eldred, M.S., Castro, J.P.: Uncertainty quantification using response surface approximation. In: 9th ASCE Specialty Conference on Probabolistic Mechanics and Structural Reliability. Albuquerque, New Mexico, USA (2004)

Griebel, M.: Sparse grids and related approximation schemes for higher dimensional problems. In: Foundations of Computational Mathematics, Santander 2005, vol. 331 of London Mathematical Society Lecture Note Series, pp. 106–161. Cambridge University Press, Cambridge (2006)

Hackbusch, W.: Multi-Grid Methods and Applications. Springer, Berlin (1985)

Hackbusch, W.: Iterative Solution of Large Sparse Systems of Equations. Springer, New York (1994)

Heppner, I., Lampe, M., Nägel, A., Reiter, S., Rupp, M., Vogel, A., Wittum, G.: Software framework ug4: parallel multigrid on the hermit supercomputer. In: Nagel, W.E., Kröner, D.H., Resch, M.M. (eds.) High Performance Computing in Science and Engineering 2012, pp. 435–449. Springer, Berlin (2013)

Joe, S., Kuo, F.: Constructing sobol sequences with better two-dimensional projections. SIAM J. Sci. Comput. 30, 2635–2654 (2008)

Johannsen, K.: On the validity of the boussinesq approximation for the Elder problem. Comput. Geosci. 7, 169–182 (2003)

Karhunen, K.: Zur Spektraltheorie stochastischer Prozesse, Ser. A : 1, Math. Phys., 34, Annales Academiae Scientiarum Fennicae (1946)

Keese, A., Mattthies, H.G.: Hierarchical parallelisation for the solution of stochastic finite element equations. Comput. Struct. 83, 1033–1047 (2005)

Khoromskij, B.N., Litvinenko, A., Matthies, H.G.: Application of hierarchical matrices for computing the Karhunen–Loève expansion. Computing 84, 49–67 (2009)

Kim, N.H., Wang, H., Queipo, N.V.: Efficient shape optimization under uncertainty using polynomial chaos expansions and local sensitivities. AIAA J. 44, 1112–1116 (2006)

Klimke, A.: Sparse grid interpolation toolbox. https://sparsegrids.org (2008)

Kobus, H.: Soil and groundwater contamination and remediation technology in europe. In: Sato, K., Iwasa, Y. (eds.) Groundwater Updates, pp. 3–8. Springer, Tokyo (2000)

Le Maître, O.P., Knio, O.M.: Introduction: Uncertainty Quantification and Propagation. In: Spectral Methods for Uncertainty Quantification, pp. 1–13. Springer, Berlin (2010)

Litvinenko, A., Logashenko, D., Tempone, R., Wittum, G., Keyes, D.: Propagation of uncertainties in density-driven flow. arXiv preprint arXiv:1905.01770 (2019)

Litvinenko, A., Matthies, H.G.: Uncertainties quantification and data compression in numerical aerodynamics. PAMM 11, 877–878 (2011)

Litvinenko, A., Matthies, H.G., El-Moselhy, T. A.: Sampling and low-rank tensor approximation of the response surface. In: Dick, J., Kuo, F. Y., Peters, G. W., Sloan, I. H. (eds.) Monte Carlo and Quasi Monte Carlo Methods 2012, vol. 65 of Springer Proceedings in Mathematics & Statistics, pp. 535–551. Springer, Berlin (2013)

Liu, D., Görtz, S.: Efficient quantification of aerodynamic uncertainty due to random geometry perturbations. In: Dillmann, A., et al. (ed.) New Results in Numerical and Experimental Fluid Mechanics IX, pp. 65–73, Springer, Berlin (2014)

Liu, D., Litvinenko, A., Schillings, C., Schulz, V.: Quantification of airfoil geometry-induced aerodynamic uncertainties–comparison of approaches. SIAM/ASA J. Uncertai. Quantif. 5, 334–352 (2017)

Loeven, G. J. A., Witteveen, J. A. S., Bijl, H.: A probabilistic radial basis function approach for uncertainty quantification. In: Proceedings of the NATO RTO-MP-AVT-147 Computational Uncertainty in Military Vehicle Design Symposium (2007)

Matthies, H.G.: Uncertainty quantification with stochastic finite elements. In: Stein, E., de Borst, R., Hughes, T.R.J. (eds.) Encyclopedia of Computational Mechanics. Wiley, Chichester (2007)

Matthies, H.G., Keese, A.: Galerkin methods for linear and nonlinear elliptic stochastic partial differential equations. Comput. Methods Appl. Mech. Eng. 194, 1295–1331 (2005)

Matthies, H.G., Zander, E., Rosić, B.V., Litvinenko, Alexander: Parameter estimation via conditional expectation: a Bayesian inversion. Adv. Model. Simul. Eng. Sci. 3, 24 (2016)

Matthies, H. G., Zander, E., Rosić, B. V., Litvinenko, A., Pajonk, O.: Inverse problems in a Bayesian setting. In: Ibrahimbegović, A. (ed.) Computational Methods for Solids and Fluids—Multiscale Analysis, Probability Aspects and Model Reduction, vol. 41 of Computational Methods in Applied Sciences, pp. 245–286 (2016)

Najm, H.N.: Uncertainty quantification and polynomial chaos techniques in computational fluid dynamics. Ann. Rev. Fluid Mech. 41, 35–52 (2009)

Nobile, F., Tamellini, L., Tesei, F., Tempone, R.: An adaptive sparse grid algorithm for elliptic PDEs with log-normal diffusion coefficient. MATHICSE Technical Report 04, (2015)

Nobile, F., Tempone, R., Webster, Clayton G.: A sparse grid stochastic collocation method for partial differential equations with random input data. SIAM J. Numer. Anal. 46, 2309–2345 (2008)

Novak, E., Ritter, K.: High dimensional integration of smooth functions over cubes. Numerische Mathematik 75, 79–97 (1996)

Novak, E., Ritter, K.: The curse of dimension and a universal method for numerical integration. In: Multivariate approximation and splines (Mannheim, 1996), vol. 125 of International Series of Numerical Mathematics, pp. 177–187, Birkhäuser, Basel (1997)

Novak, E., Ritter, K.: Simple cubature formulas with high polynomial exactness. Constr. Approx. 15, 499–522 (1999)

Nowak, W., Litvinenko, A.: Kriging and spatial design accelerated by orders of magnitude: combining low-rank covariance approximations with fft-techniques. Math. Geosci. 45, 411–435 (2013)

Panda, M., Lake, W.: Estimation of single-phase permeability from parameters of particle-size distribution. AAPG Bull. 78, 1028–1039 (1994)

Pape, H., Clauser, C., Iffland, J.: Permeability prediction based on fractal pore-space geometry. Geophysics 64, 1447–1460 (1999)

Post, V.E.A., Houben, G.J.: Density-driven vertical transport of saltwater through the freshwater lens on the island of baltrum (germany) following the 1962 storm flood. Investigation of coastal aquifers. J. Hydrol. 551, 689–702 (2017)

Radović, I., Sobol, I.M., Tichy, R.F.: Quasi-monte carlo methods for numerical integration: comparison of different low discrepancy sequences. Monte Carlo Methods Appl. 2, 1–14 (1996)

Reiter, S., Logashenko, D., Vogel, A., Wittum, G.: Mesh generation for thin layered domains and its application to parallel multigrid simulation of groundwater flow. Comput. Vis. Sci. 16, 151–164 (2017)

Reiter, S., Vogel, A., Heppner, I., Rupp, M., Wittum, G.: A massively parallel geometric multigrid solver on hierarchically distributed grids. Comput. Vis. Sci. 16, 151–164 (2013)

Rosić, B.V., Kučerová, A., Sýkora, J., Pajonk, O., Litvinenko, A., Matthies, H.G.: Parameter identification in a probabilistic setting. Eng. Struct. 50, 179–196 (2013). Engineering Structures: Modelling and Computations (special issue IASS-IACM 2012)

Rubin, Yoram: Applied Stochastic Hydrogeology. Oxford University Press, Oxford (2003)

Schneider, A., Kröhn, K.-P., Püschel, A.: Developing a modelling tool for density-driven flow in complex hydrogeological structures. Comput. Vis. Sci. 15, 163–168 (2012)

Schwab, Ch., Todor, R.A.: Sparse finite elements for stochastic elliptic problems–higher order moments. Computing 71, 43–63 (2003)

Schwab, Ch., Todor, R.A.: Karhunen–Loève approximation of random fields by generalized fast multipole methods. J. Comput. Phys. 217, 100–122 (2006)

Sinsbeck, M., Nowak, W.: An optimal sampling rule for nonintrusive polynomial chaos expansions of expensive models. Int. J. Uncertai. Quantif. 5, 275–295 (2015)

Smolyak, S.A.: Quadrature and interpolation formulas for tensor products of certain classes of functions. Sov. Math. Dokl. 4, 240–243 (1963)

Sudret, B.: Global sensitivity analysis using polynomial chaos expansions. Reliab. Eng. Syst. Saf. 93, 964–979 (2008)

Tartakovsky, D.M.: Assessment and management of risk in subsurface hydrology: a review and perspective. Adv. Water Resour. 51, 247–260 (2013). 35th Year Anniversary Issue

Thimmisetty, Ch., Tsilifis, P., Ghanem, R.: Homogeneous chaos basis adaptation for design optimization under uncertainty: application to the oil well placement problem. Artif. Intell. Eng. Des. Anal. Manuf. 31, 265–276 (2017)

Tipireddy, R., Ghanem, R.: Basis adaptation in homogeneous chaos spaces. J. Comput. Phys. 259, 304–317 (2014)

Todor, R.A., Schwab, Ch.: Convergence rates for sparse chaos approximations of elliptic problems with stochastic coefficients. IMA J. Numer. Anal. 27, 232–261 (2007)

Tsilifis, P., Ghanem, R.G.: Bayesian adaptation of chaos representations using variational inference and sampling on geodesics. P. R. Soc. Math. Phys. Eng. Sci. 474, 20180285 (2018)

Tsilifis, P., Huan, X., Safta, C., Sargsyan, Kh, Lacaze, G., Oefelein, J.C., Najm, H.N., Ghanem, R.G.: Compressive sensing adaptation for polynomial chaos expansions. J. Comput. Phys. 380, 29–47 (2019)

Vereecken, H., Schnepf, A., Hopmans, J.W., Javaux, M., Or, D., Roose, T., Vanderborght, J., Young, M.H., Amelung, W., Aitkenhead, M., Allison, S.D., Assouline, S., Baveye, P., Berli, M., Brüggemann, N., Finke, P., Flury, M., Gaiser, T., Govers, G., Ghezzehei, T., Hallett, P., Hendricks Franssen, H.J., Heppell, J., Horn, R., Huisman, J.A., Jacques, D., Jonard, F., Kollet, S., Lafolie, F., Lamorski, K., Leitner, D., McBratney, A., Minasny, B., Montzka, C., Nowak, W., Pachepsky, Y., Padarian, J., Romano, N., Roth, K., Rothfuss, Y., Rowe, E.C., Schwen, A., Šim\({\mathring{u}}\)nek, J., Tiktak, A., Van Dam, J., van der Zee, S.E.A.T.M., Vogel, H.J., Vrugt, J.A., Wöhling, T., Young, I.M.: Modeling soil processes: Review, key challenges, and new perspectives. Vadose Zone J., 15, p. vzj2015.09.0131 (2016)

Vogel, A.: Flexible und kombinierbare Implementierung von Finite-Volumen-Verfahren höherer Ordnung. Dissertation, Universität Frankfurt, (2014)

Vogel, A., Reiter, S., Rupp, M., Nägel, A., Wittum, G.: Ug 4: a novel flexible software system for simulating pde based models on high performance computers. Comput. Vis. Sci. 16, 165–179 (2013)

Voss, C.I., Souza, W.R.: Variable density flow and solute transport simulation of regional aquifers containing a narrow freshwater-saltwater transition zone. Water Resour. Res. 23, 1851–1866 (1987)

Wiener, N.: The homogeneous chaos. Am. J. Math. 60, 897–936 (1938)

Xie, Y., Simmons, C.T., Werner, A.D., Diersch, H.-J.G.: Prediction and uncertainty of free convection phenomena in porous media. Water Resour. Res. 48(2), 2535 (2012)

Xiu, D.: Fast numerical methods for stochastic computations: a review. Commun. Comput. Phys. 5(2–4), 242–272 (2009)

Xiu, D.: Numerical Methods for Stochastic Computations: A Spectral Method Approach. Princeton University Press, Princeton (2010)

Xiu, D., Karniadakis, G.E.: Modeling uncertainty in flow simulations via generalized polynomial chaos. J. Comput. Physics 187, 137–167 (2003)

Xiu, D., Karniadakis, G.E.: Modeling uncertainty in steady state diffusion problems via generalized polynomial chaos. Comput. Meth. Appl. Mech. Eng. 191, 4927–4948 (2002)

Xiu, D., Karniadakis, G.E.: The Wiener-Askey polynomial chaos for stochastic differential equations. SIAM J. Sci. Comput. 24, 619–644 (2002)

Zhaojun, B., Demmel, J., Dongarra, J., Ruhe, A., van der Vorst, H.: Templates for the Solution of Algebraic Eigenvalue Problems: A Practical Guide. SIAM, Philadelphia (2000)

Acknowledgements

Open Access funding provided by Projekt DEAL. This work was supported by funding from the Alexander von Humboldt foundation (chair of Mathematics for Uncertainty Quantification at RWTH Aachen), KAUST core lab, Extreme Computing Research Center, SRI-UQ Strategic Initiative and Computational Bayesian group at King Abdullah University of Science and Technology. We also thank two anonymous reviewers and the associate editor for providing a number of helpful comments on an earlier draft of the paper.

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Appendix

Since the uncertain input parameters have Uniform distribution, we employ multivariate Legendre polynomials as the gPC basis. Multivariate Legendre polynomials are defined on \([-1,1]^M\), where M is the dimensionality of the stochastic space. The first few Legendre polynomials are

The Legendre polynomials are orthogonal with respect to the \(L_2\) norm on the interval \([-1,1]\). The recursive formula is

The scalar product is

or

where the density function \(\mu (x)\) is a constant (is equal 1/2 on \([-1,1]\)), and where \(\delta _{nm}\) denotes the Kronecker delta. The \(L^2[-1,1]\) norm \(\Vert \psi _n\Vert =\sqrt{\frac{2}{2n+1}}\) and the weighted \(L^{2}_{\mu }[-1,1]\) norm is \(\Vert \psi _n \Vert =\sqrt{\frac{1}{2n+1}}\). The gPC coefficients can be computed as follows

and for \(d>1\) we have

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Litvinenko, A., Logashenko, D., Tempone, R. et al. Solution of the 3D density-driven groundwater flow problem with uncertain porosity and permeability. Int J Geomath 11, 10 (2020). https://doi.org/10.1007/s13137-020-0147-1

Received:

Accepted:

Published:

DOI: https://doi.org/10.1007/s13137-020-0147-1