Abstract

Arguably, automation is rapidly transforming many enterprise business processes, turning operational jobs into monitoring tasks. Consequently, the ability to sustain attention during extended periods of monitoring is becoming a critical skill. This manuscript presents a Brain-Computer Interface (BCI) prototype that seeks to combat decrements in sustained attention during monitoring tasks within an enterprise system. A brain-computer interface is a system that uses physiological signals produced by the user as an input. The goal is to better understand human responses while performing tasks involving decision and monitoring cycles, and to find ways to improve performance and decrease on-task error. Decision readiness and the ability to synthesize complex and abundant information in a brief period during critical events have never been more important. Closed-loop control and motivational control theory were synthesized to provide the basis from which a framework for a prototype was developed to demonstrate the feasibility and value of a BCI in critical enterprise activities. In this pilot study, the BCI was implemented and evaluated through laboratory experimentation using an ecologically valid task. The results show that the technological artifact allowed users to regulate sustained attention positively while performing the task. Levels of sustained attention were shown to be higher in the conditions assisted by the BCI. Furthermore, this increased cognitive response seems to be related to increased on-task action and a small reduction in on-task errors. The research concludes with a discussion of future research directions and their application in the enterprise.


1 Introduction

The role of human labor in the workplace is being transformed profoundly by rapid improvements in technology and automation (Autor 2015). This transformation can be seen in the number of "knowledge worker" tasks being automated, leading to increased productivity among a wide variety of professions, replacing tasks requiring operational skills with those requiring monitoring and decision skills (Lacity and Willcocks 2015).

Research in human factors has identified issues concerning how humans interact with automated processes, suggesting that workers must now adapt to changes in the type of task, the task structure, and the information required to perform those tasks (Lee and Seppelt 2009). Adaptions such as these will require humans to better manage cognitive-energetic resources in terms of motivation, fatigue, cognitive load, and sustained attention (SA) while monitoring automated systems to avert or respond to system failures.

Multiple factors can impair human operator levels of SA and thus performance when monitoring automated systems. Mackworth (1948) demonstrated that the ability to detect visual signals declines after 30 min of visual search; this decline was later correlated with an increase in reaction times (McCormack 1960; Buck 1966). Additionally, human factors research has shown that interacting with automated systems also affects SA, workload, and complacency, which can influence operator performance (Parasuraman et al. 2008; Parasuraman and Manzey 2010). Furthermore, neurophysiological studies have identified other negative factors that affect an operator’s SA, such as drowsiness, motivation, stress, and habituation (Oken et al. 2006). This accumulation of information concerning sustained attention and automated systems highlights a need for systems that either adapt to low levels of operator SA or promote higher levels of SA within the operator.

A novel approach to tackling the problem of reduced SA and other attentional mechanisms is the use of a brain-computer interface (BCI) as assistive technology (Venthur et al. 2010). BCIs are systems that utilize signals from the brain as an input to a computer system to provide a control mechanism, offer decision support, adapt an interface, or display feedback. These signals are recorded using electroencephalography (EEG) through electrodes on the surface of the scalp. BCIs have been utilized in clinical settings to improve communication and rehabilitation outcomes [see Chaudhary et al. (2016) for a review] and, in some cases, to augment cognitive phenomena in real-world contexts [see Lotte and Roy (2019) for a review].

Creating BCIs for use within real-world contexts has become part of the emerging interdisciplinary field of neuroergonomics, which aims to study the human brain and behavior in real-world settings through the merger of neuroscience, human factors, and design (Parasuraman and Rizzo 2008). This community aims to understand the human-artifact relationship better to enhance human performance and safety in complex environments (Johnson and Proctor 2013). BCIs are also gaining traction in the information system community following several calls for research in neuro-adaptive information systems (vom Brocke et al. 2020; Riedl and Léger 2016) and their integration into "adaptive enterprise systems" (Adam et al. 2017). Moreover, EEG, the favored instrument in BCI research, was introduced to the Information Systems (IS) community by Müller-Putz et al. (2015) who produced a methodological paper on EEG use.

The research objective presented in this manuscript is to answer the following research question: Will integrating a BCI within a long-duration monitoring task allow the user to sustain attention over a prolonged period in order to perform better and with less error? Thus, we detail the development of a passive brain-computer interface (pBCI) prototype to combat drops in SA during extended IT monitoring tasks through the regulation of a user's SA, with the aim of increasing task performance and reducing on-task error. We applied the design science research framework to inform the business task's design based on business needs and applicable IS methods. We then operationalized the construct of sustained attention using EEG to serve as input to the BCI following standard EEG methods (Mikulka et al. 2002; Müller-Putz et al. 2015). The artifact design was theoretically supported by the motivational control theory of cognitive fatigue (Hockey 2011), combined with closed-loop control theory (Marken 2009) to integrate the pBCI neurofeedback mechanism that allows users to regulate SA passively. We contribute to the IS and NeuroIS fields by providing prescriptive knowledge concerning the design process of a BCI artifact for long-duration IT monitoring tasks, and by proposing a novel classification algorithm for the assessment of sustained attention.

2 Background

2.1 Brain-Computer Interfaces

BCIs are part of a multidisciplinary field at the intersection of neuropsychology, physiology, engineering, and computer science (Mason and Birch 2003), and more recently have served as tools for neuroergonomics research to produce inputs that drive computer, robotic, or automated systems (Gramann et al. 2017). Advances in sensor technologies and cerebral activity classification have enabled BCIs to serve both as a useful medical assistive technology (Vaughan 2003; Venthur et al. 2010) and as a general interface technology for human-machine systems (Zander and Kothe 2011).

In information system research, BCIs can be categorized in the family of "neuro-adaptive information systems" (Riedl et al. 2014). Neuro-adaptive information systems encompass brain-computer interfaces as well as biofeedback systems. Riedl and Léger (2016) differentiate biofeedback systems from BCIs in terms of their contributions. They defined the BCI objective as "to replace input devices (e.g., mouse or keyboard) through specific electroencephalographical measures of brain function that are typically assessed based on EEG". Biofeedback systems, by contrast, use physiological measures to recognize user states, adapt the system, or make users aware of the adaptations so action can be taken. This BCI view emphasizes the notion of control of the IS artifact driven by the electrophysiological measures, whereas biofeedback systems tend to focus on helping users self-regulate physiological activity, stress, emotion, or behaviors (Lux et al. 2018; Noorbergen et al. 2019). For example, Astor et al. (2013) presented one of the first biofeedback systems in IS using electrocardiography to measure arousal in an auction game. In this case, arousal was linked to the game difficulty and visual biofeedback, enabling users to self-regulate arousal and increase their performance. Current literature in IS and adaptive systems focuses on physiological sensors such as electrocardiography (Hillege et al. 2020; Astor et al. 2013), photoplethysmography (Rouast et al. 2017), or electrodermal activity (Snyder et al. 2015).

A more nuanced approach to BCIs considers them to be similar artifacts to biofeedback systems (neurofeedback). Based on Zander et al. (2009), BCIs can be categorized into three types: active, reactive, and passive. Active and reactive BCIs are used to directly control an interface, whereas passive BCIs (pBCI) are utilized for cognitive-state detection within user support or neurofeedback systems.

State of the art development in BCI technology is driven primarily by active and reactive medical applications [see Lahane et al. (2019) for a detailed review]. In this domain, brain signals primarily derived from motor-imagery tasks are used to enable the control of a prosthesis (Hong and Khan 2017) such as a robotic arm for users with spinal cord injury (Nicolas-Alonso and Gomez-Gil 2012; Müller-Putz et al. 2005, 2018) or as input to controllers for wheelchairs (Carlson and Millan 2013). In these applications, machine learning classifiers such as LDA and K-nearest neighbors (Bhattacharyya et al. 2010), Support Vector Machine (SVM), and Convolutional Neural Networks (CNN) (Tang et al. 2017) are used in conjunction with specific features of brain activity such as the P300 response (Thulasidas et al. 2006) or steady-state evoked potentials (SSVEP) (Prasad et al. 2017) to classify brain signals and provide the input that drives the prosthesis. Currently, training these classifiers involves using synthetic laboratory-based tasks to elicit the desired response, resulting in lengthy training sessions, large preprocessed data sets and increased computational cost. Furthermore, due to their specificity, and the active or reactive nature of these BCIs, they do not derive any information concerning the cognitive state of the user.

Outside of the medical domain, BCI researchers are seeking new applications such as within information system research (Riedl and Léger 2016; vom Brocke et al. 2020), user experience research and entertainment (Muñoz et al. 2017), or in aerospace to explore the effects of mental workload and fatigue upon the P300 response and the alpha-theta EEG bands (Käthner et al. 2014). In addition, there is a recent movement within the BCI community to integrate other psychophysiological signal types into 'hybrid BCIs' (Pfurtscheller et al. 2010; Müller-Putz et al. 2015) to increase the granularity of the monitored response and identify cognitive states as they emerge.

2.2 Sustained Attention

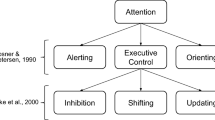

It has been proposed that the human attention system is composed of three networks: alerting, orienting, and executive control (Petersen and Posner 2012). The Alerting network refers to the ability to maintain focus and performance during visual search tasks (Posner and Petersen 1990). The Orienting network corresponds to the capacity to focus on specific and essential signal sources or an internal semantic structure previously memorized (Posner 1980). Finally, the Executive control network represents the cognitive process of selecting sensory inputs, resolving conflicting feedback, and monitoring and resolving errors (Posner and Rothbart 1998). We use the term sustained attention within the current work to cover tonic alertness, attention, and the vigilance decrement as proposed by Oken et al. (2006).

Work investigating the underlying neuroscience of sustained attention (Sarter et al. 2001) posits that maintaining SA determines performance and effectiveness while performing long-duration tasks requiring a high degree of focus. SA represents the "higher" aspects of attention and cognitive capacity in general. Operationalizing the attentional system as a means to measure sustained attention was first proposed by Pope (1995), who developed an "engagement index" to provide a scalar value of SA. This index is derived from oscillations in frequency bands within the brain, consisting of beta (a measure of focus and alertness), alpha (a measure of inhibition or relaxation), and theta (a measure of active inhibition), to give the ratio β/(α + θ). This research was later reproduced by Freeman (1999), and the index was utilized for a vigilance task (Mikulka et al. 2002). The engagement index rests on the hypothesis that increased beta power represents an increase in arousal and attention, while decreases in alpha and theta power represent a reduction of attention (Scerbo et al. 2003). The "engagement index" has been used in several more recent studies, such as modulating signal event rates in real-time on a vigilance task (Mikulka et al. 2002) and adapting the difficulty of a Tetris game in real-time (Ewing et al. 2016; Fairclough 2007).
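The ratio β/(α + θ) can be illustrated with a minimal sketch, assuming that band powers for beta, alpha, and theta have already been estimated. The function name and the example values are hypothetical and are not taken from the cited studies.

```python
def engagement_index(beta: float, alpha: float, theta: float) -> float:
    """Return the engagement index beta / (alpha + theta)."""
    denominator = alpha + theta
    if denominator <= 0:
        raise ValueError("alpha + theta must be positive")
    return beta / denominator


# Illustrative band powers in arbitrary units (not data from the study).
print(engagement_index(beta=4.0, alpha=5.0, theta=3.0))  # 0.5
```

In this form, a rise in beta power raises the index, while a rise in alpha or theta power lowers it.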

These same frequency bands have shown potential in learning contexts to enhance engagement with passive brain-computer interfaces (Andujar and Gilbert 2013). By combining these bands to create a ratio, Szafir and Mutlu (2013) observed gains in recall and learning when using a BCI that adapted learning content to users. In this research, they extracted the α, β, and θ frequency bands and derived an attention index using β/(α + θ), then smoothed the index values using a 5-s moving average. Hassib et al. (2017) applied the same method to determine an audience's cognitive state to provide engagement information to a presenter in real-time. Kosmyna et al. (2018) used the same index to provide haptic and audio feedback for attention regulation in real-time to participants wearing glasses in a learning context. Pham et al. (2020) developed a similar BCI for drone pilots, providing visual feedback on the level of attention rendered upon the operator's glasses. In work by Muñoz et al. (2020), the index was used in VR firearms training scenarios as a proxy for "cognitive readiness" in a pilot study aimed at creating adaptive training practices for police officers. Specifically, they observed that levels of frontal theta changed in response to difficulty within the training scenario. Oken et al. (2018) proposed a BCI measuring drowsiness based on multi-modal features (i.e., amplitude, theta power, alpha power, and blink rate), showing that a hybrid BCI can also be applied in this context.
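The smoothing step mentioned above can be sketched as a trailing moving average. The sketch assumes one index value per second, so that a window of five samples spans 5 s; the sampling rate, the function name, and the handling of the first few samples are assumptions rather than details reported by the cited authors.

```python
from collections import deque
from typing import Iterable, Iterator


def moving_average(values: Iterable[float], window: int = 5) -> Iterator[float]:
    """Yield the mean of the most recent `window` values (fewer at the start)."""
    recent = deque(maxlen=window)  # oldest value is dropped automatically
    for value in values:
        recent.append(value)
        yield sum(recent) / len(recent)


# Illustrative index values, one per second (not data from the study).
smoothed = list(moving_average([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], window=5))
print(smoothed)  # [1.0, 1.5, 2.0, 2.5, 3.0, 4.0]
```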

The work presented in this manuscript outlines the methods and procedures used to develop a novel working prototype of a BCI-neuroadaptive system. The artifact seeks, in real-time, to combat decrements in sustained attention during monitoring tasks within an enterprise system and proposes a novel computational classifier to classify sustained attention. We utilize the β/(α + θ) index proposed by Pope (1995) with a series of dynamic thresholds to address volatility issues that can happen when using hardcoded and conservative thresholds (Ewing et al. 2016; Fairclough 2007).

2.3 Motivational Control Theory of Cognitive Fatigue

Theoretical developments and experimental findings concerning mental effort and cognitive fatigue often assume cognitive resource depletion to be a natural consequence of tasks' demands (Kahneman 1973). However, when speaking in terms of cognitive resource allocation and depletion, there appears to be little evidence to support this view. Hockey (1997) proposed a cognitive-energetical framework by analyzing the effects of stress and high workload on human performance. Within this framework, they state that human performance may be maintained under stress by the recruitment of further resources, but only at the expense of increased subjective effort, and associated behavioural and physiological costs. Furthermore, they state that, alternatively, stability can be achieved by reducing performance goals without further costs.

In later work, Hockey (2011) proposed that effort should be considered an optional response to the awareness and assessment of task demands under the individual's control. In their view, it is the adoption of high effort responses to task demands which drive the fatigue process, rather than the presence of the demands themselves. They further expand upon this by adding controllability as a moderator of the workload-fatigue relationship, in which controllability refers to an individual's perception that they have control over work activities. They support this moderating effect in an experimental study of office work in which workload was manipulated by time pressure and opportunity to schedule tasks (Hockey and Earle 2006). They concluded that, in general, when cognitive activities are self-motivated, particularly when they are regarded as 'play', cognitive effort does not appear to give rise to high fatigue levels.

We utilize the cognitive energetical framework as the theoretical foundation for the design and development of the neurofeedback mechanism of the prototype pBCI and base its architecture upon closed-loop control theory.

3 Artifact Objectives and Requirements

To create the artifact, we started by forming a problem statement: design an artifact that allows users to regulate sustained attention while performing an ecologically valid business task. Following a design science methodology process (Peffers et al. 2007), we formed an objective-centred solution to achieve instantiation validity (Lukyanenko et al. 2014) by applying closed-loop control and the theory of motivational control of cognitive fatigue. Instantiation Validity refers to "the validity of IT artifacts as instantiations of theoretical constructs" (Lukyanenko et al. 2014). Thus, the theoretical foundation of closed-loop and motivational control was operationalized as a novel classification algorithm. This algorithm is then integrated within a technological artifact consisting of a business logistics task, a passive BCI control loop, and a neurofeedback controller driven by the classification algorithm. We reduced the logistics task to the minimum of required actions and took measures to mitigate external factors that could impact closed-loop control. We addressed the scientific and managerial literature to be confident that the proposed prototype meets the research need and theoretical foundations. To this end, we derived a series of requirements for the artifact and supported our design with previous research from the fields of neurophysiology, NeuroIS, human factors and physiological computing.

As discussed previously, we identified a need for a mechanism that allows an operator to regulate sustained attention while performing long-duration tasks such as those found in tasks involving highly automated processes that require extended periods of monitoring interrupted by short decision periods.

Using this problem statement, we derived three requirements:

- The design of the artifact needs to integrate with and drive an ecologically valid IS environment.

- The IS task should be of sufficient duration to ensure that a decrease in sustained attention can be observed under normal circumstances.

- The BCI's core functionality should provide, through neurofeedback and signaling, a means to allow operators to regulate sustained attention and enhance performance without obstructing the IS task.

We identified several essential features needed for both the artifact and the interactive task interface in addition to these core requirements. The task must feel both useful and business-oriented. The user must be consistently engaged in a task that requires business decisions and assesses the impact of these decisions over time.

Furthermore, based on research involving task and process automation (Parasuraman et al. 2000), the decisions and actions to be automated within the task should be carefully selected to allow careful monitoring and consecutive decision cycles. The timeframe between events should induce a need to be attentive to that task, such that a task requiring sustained attention must test a subject's readiness to detect decision events after a long period of monitoring (Petersen and Posner 2012). Lastly, the artifact needs to display any information required to support the user's decisions and provide feedback concerning performance and the current system state to perform projections (Endsley 1995).

We applied closed-loop control theory to aid in the development of the neurofeedback and signaling requirements (Marken 2009). Closed-loop control theory (CCT) can shape behavior by mitigating the influence of some psychological states and promoting others. The concept of CCT considers every stimulus input as affecting behavioral output, and each behavioral output as looping back to affect the sensory input. Within this loop, the effect of output on input is referred to as feedback. Closed-loop neurotechnology works on the same principle but by shaping neurophysiological activation patterns and, by extension, related psychological processes and states. From this, we derived a CCT schema (see Fig. 1). This schema integrates neurophysiological input into a system that infers, then classifies SA, and proposes visual neurofeedback as an interface adaption.

Fig. 1 A closed-loop control schema for the proposed pBCI, adapted from Marken (2009)

Figure 2 shows the conceptual schema for how the proposed artifact will operate as part of a decision-adaption cycle. Sustained attention is measured via EEG and integrated into the pBCI, and then a classification decision is taken. Depending on the level of measured sustained attention, visual interface neurofeedback is actioned to encourage a positive change in sustained attention if required, which then feeds back to begin the next classification – decision cycle.

We hypothesized that this decision-adaption cycle would increase task performance and decrease on-task error throughout a long-duration monitoring task mediated by the mechanisms proposed in the motivational control theory of cognitive fatigue (Hockey 2011).

4 Design and Development

4.1 Integrating the Task Interface and Feedback Controller

We further iterated the design process as outlined above to differentiate between two development cycles, one for interface development and one for the pBCI, referred to as the feedback controller. The system architecture resulting from this separation is shown in Fig. 3, which depicts the technical elements of the closed-loop control and decision-adaption schema. The cycle starts with the user's implicit measurement, then routes through the system architecture for a decision-adaption cycle, before returning to the interface as visual feedback based on the user's SA, which starts the closed-loop cycle again.

We took inspiration from the ecological interface design framework (Rasmussen and Vicente 1989; Vicente and Rasmussen 1992) to aid in designing both the task and the neurofeedback mechanism. In this framework, a user must have the ability to make decisions directly within the monitoring interface. Thus, information displayed upon the interface must follow an isomorphic structure which supports the mapping of the process structure to help the user externalize a mental model of the task and facilitate problem-solving.

To address these requirements, we formed several actionable development goals to create the monitoring task interface to ensure that the user interacts with a partially automated business logistics process task where long periods of monitoring are interrupted by short decision periods. Within the task interface, all the information needed – including neurofeedback – to support the task is displayed and updated in real-time, allowing the user to monitor the automated process and make timely decisions.

Finally, to function effectively as a real-time tool and to allow for post-hoc analysis, all data from the enterprise system concerning the running of the task, behavioral measures, and the pBCI neurological data are saved in secured storage.

5 Instantiation and Implementation

We employed an asynchronous approach to operationalizing the task and BCI architecture (Fig. 3) into a usable prototype that allowed fluid, seamless interface interactions and data capture at each stage of the task and pBCI process. To achieve this, we utilized a web-based application programming directly connected within SAP architecture (Appendix A, available online via http://link.springer.com).

The "meaningfulness" of the feedback is a crucial factor for the user in understanding the adaptive system (Lux et al. 2018). The interface visual neurofeedback design followed a traffic lighting signal paradigm for quick cognitive association: red for critical, amber for unfocused, and white for decision-ready. Research in human factors outlines some principles for interface "alert" design, in which placement, visibility, prioritization, color, and habituation have to be considered (Phansalkar et al. 2010). Thus, traffic lights are an easily generalizable cognitive association and one that has been used in previous related research (Lal et al. 2003).
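The color scheme can be written as a small lookup from classification level to feedback color. This is a sketch only: the numeric levels 0, 1, and 2 follow the classifier outputs described later in the text, while the names `AttentionLevel`, `FEEDBACK_COLOR`, and `feedback_color` are hypothetical and do not come from the prototype's code.

```python
from enum import IntEnum


class AttentionLevel(IntEnum):
    DECISION_READY = 0  # white
    UNFOCUSED = 1       # amber
    CRITICAL = 2        # red


# Traffic-light mapping as described in the text.
FEEDBACK_COLOR = {
    AttentionLevel.DECISION_READY: "white",
    AttentionLevel.UNFOCUSED: "amber",
    AttentionLevel.CRITICAL: "red",
}


def feedback_color(level: int) -> str:
    """Return the feedback color for a classifier output of 0, 1, or 2."""
    return FEEDBACK_COLOR[AttentionLevel(level)]


print(feedback_color(1))  # amber
```

Keeping the mapping in one table makes it straightforward to swap the palette during pre-testing without touching the classification logic.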

Participants were introduced to the traffic lighting color scheme during a 15-min baselining task comprising a visual search task, which involved the identification of a moving target, and a target signaling task (see Fig. 4). During the target signaling task, participants needed to identify a target shape and outline from a pool of 3 shapes randomly displayed on a screen and outlined using the traffic lighting paradigm, in which the color red was the target outline. Each task lasted 7 min, with a 1-min return-to-baseline period between task types.

For the adaptive interface, we utilize the term neurofeedback here not to signify a direct intervention at the interface but rather in the passive sense, in which the user is aware of a component of the interface changing color in response to their current level of sustained attention. Lux et al. (2018) noted that obstructive biofeedback design could be perceived as distracting and stressful, leading to unintended consequences. Thus, the pBCI (feedback controller) provides an assessment of the user's sustained attention through a visual interface element that does not interfere with the task interface. We first opted for a dedicated UI element; however, subjective feedback from participants during the pre-testing phase of development, in which multiple elements of the interface changed in response to the level of sustained attention (Fig. 5), suggested that such changes were too intrusive and detrimental to performing the task.

To address the design constraint that the visual feedback should be visible yet unobtrusive, we opted for an alerting mechanism that followed an ascending color gradient, controlled by the user's level of SA; this would change the color of the background behind the information dashboard unobtrusively (Fig. 6).

5.1 Use Case: A Business Logistics Task

To create an ecologically valid IS task that fulfils the design and interface requirements, we utilized an ERP (Enterprise Resource Planning) system that offers an environment simulating a real-life business. ERPsim is a business simulation based on SAP (Léger 2006). ERPsim provides a simulation environment with enough granularity to provide a platform for experimental research in NeuroIS (Loos et al. 2010). The simulation was modified to allow a task that required several monitoring and decision cycles.

The business task interface (Fig. 5) consists of 5 KPIs (Key Performance Indicators) to help the user complete the task: product contribution margin, percentage of sales per area, total quantity sold per area, current stock, and inventory turnover in days. KPIs were updated every 5 s to present participants with the opportunity to make sales decisions as needed. To develop the information dashboard, we followed design concepts on information presentation (Few 2006, 2012). These same KPIs were used to derive performance metrics to determine task completion performance.

Drawing from the design requirements, an ecologically valid business logistics task was created and presented using an information dashboard similar to those found in enterprise planning and resource systems. Task duration was set to 90 min, and event timing between decision and monitoring periods was manipulated to create prolonged periods of monitoring followed by shorter periods of critical decision-making (Fig. 7). The timing was chosen to induce attentional depletion. The participant receives new stocks every 22.5 min, and sales occur every 4.5 min.
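The timing figures imply a simple event schedule, sketched below. The sketch assumes that the first event of each type occurs one full interval after the task starts and that an event may fall exactly at the 90-min mark; neither detail is stated in the text, and the names used are hypothetical.

```python
TASK_SECONDS = 90 * 60   # 90-min task
STOCK_PERIOD_S = 1350    # new stock every 22.5 min
SALES_PERIOD_S = 270     # sales every 4.5 min


def event_times(period_s: int, duration_s: int) -> list[int]:
    """Return event times in seconds, assuming the first event at t = period_s."""
    return list(range(period_s, duration_s + 1, period_s))


stock_events = event_times(STOCK_PERIOD_S, TASK_SECONDS)
sales_events = event_times(SALES_PERIOD_S, TASK_SECONDS)

# Stock deliveries, sales events, and sales events per delivery interval.
print(len(stock_events), len(sales_events), STOCK_PERIOD_S // SALES_PERIOD_S)  # 4 20 5
```

Under these assumptions, each stock delivery interval contains five sales events, which gives the long monitoring stretches punctuated by short decision periods described above.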

The participant was instructed to maximize sales by maintaining minimal stocks in three different regions. When stocks are available, regional sales decisions are required. Decisions are informed by the information displayed within the interface concerning the current state of the "business." The fictitious business follows trends that the participant must identify during the monitoring phase of the task. Good decisions increase sales as stock is consumed; bad decisions accumulate unsold stock in a region and reduce final total sales.

5.2 Classification: Threshold Reactive Adaptive Dynamic Spectrum (ThReADS)

As shown previously (Fig. 3), before classification, the EEG signal is forwarded to and processed by Mensia NeuroRT. The signals were downsampled to 256 Hz. Motion artifacts were identified and flagged for non-use or imputation, where appropriate, using the Riemannian Potato algorithm (Barachant et al. 2013). A bandpass filter (1–50 Hz) and a notch filter at 60 Hz were applied for signal frequency isolation and powerline noise removal. A Butterworth filter bank was incorporated to preprocess the data in the bands of interest: Theta (4–8 Hz), Alpha (8–12 Hz), and Beta (12–30 Hz). The engagement index calculation used within the pBCI is based on the work of Mikulka et al. (2002). We focused on frontal and occipital cortical areas using channels F3, F4, O1, and O2 of the international 10–20 system. The band power of each of the Theta, Alpha, and Beta frequency bands is divided by the total power to create a ratio for each band, and these ratios are then used to calculate the index β/(α + θ). Using this index as a basis, we developed a new algorithmic method to improve its utility and reduce the volatility of real-time classification experienced by similar projects (Labonte-Lemoyne et al. 2018).
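One observation about the normalization step, offered as a worked identity and not as a description of the NeuroRT pipeline: when the three band powers on a single channel are divided by the same total power, the total cancels in the index. The symbols $P_\beta$, $P_\alpha$, $P_\theta$, and $P_{\mathrm{tot}}$ are notation introduced here for illustration.

```latex
\frac{P_\beta / P_{\mathrm{tot}}}
     {P_\alpha / P_{\mathrm{tot}} + P_\theta / P_{\mathrm{tot}}}
= \frac{P_\beta}{P_\alpha + P_\theta}
```

The cancellation holds only when numerator and denominator share one total. If relative powers were first averaged across F3, F4, O1, and O2, each with its own total power, the normalized and unnormalized indices would generally differ. The text does not state how the channels are combined.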

As discussed earlier, we calibrate the pBCI with a baseline task inspired by Pattyn et al. (2008). The baseline task is composed of two sub-tasks. The first sub-task measures a low state of sustained attention, the second one a high state, from which a user-specific spectrum is derived. We posit that any changes in the level of sustained attention that remain within an "optimal zone" will be due to self-regulated motivational control of the user’s cognitive resources.

This assertion placed a set of constraints on the development of the SA classifier in addition to addressing the volatility issue of the engagement index values. Thus we developed a method to drive the neurofeedback mechanism in such a way as not to place the user of the BCI in a constant high state of sustained attention but rather to allow drops in sustained attention mediated by the motivation of the user.

The algorithm calculates the index's maximum and average during the decision signaling (high SA) sub-task, its minimum and average during the monitoring (low SA) sub-task, and the total average across the two conditions. A series of ratios is derived from those values, comparing the total average of the two tasks with the high sample maximum, high sample average, low sample average, and low sample minimum. These ratios are multiplied by the cumulative average (CA) of the index to create a moving spectrum during operation. This spectrum of values adapts to the user's attentional state over time. The following example shows the calculation of the cumulative average (1).

The low average is the ratio of the low task sample average to the total average of the two conditions, multiplied by the current value of the cumulative average (2).

where x is the new index value in the real-time index pipeline, l is the sample collected during the baseline representing the low attention state, and h is the sample representing the high attention state. A visual representation of the dynamic adaptive spectrum is shown in Fig. 8.
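The expressions numbered (1) and (2) are not reproduced in this version of the text. A plausible reading of the verbal description, offered as an assumption rather than the authors' exact notation, is the standard incremental form of a cumulative average together with a ratio-scaled low average. Here n (the number of index values already seen), \(\bar{l}\) (the mean of the low sample), and \(\overline{(l,h)}\) (the total average over both baseline samples) are symbols introduced for this sketch.

```latex
\begin{align}
CA_{n+1} &= \frac{x_{n+1} + n \, CA_{n}}{n + 1} \tag{1} \\
\mathit{LowAverage}_{n+1} &= \frac{\bar{l}}{\overline{(l,h)}} \; CA_{n+1} \tag{2}
\end{align}
```

Under this reading, the baseline fixes only the ratio \(\bar{l} / \overline{(l,h)}\), while the cumulative average moves the resulting value up or down as new index data arrive.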

To classify SA, we compute thresholds that will follow the participant's dynamic spectrum during the experiment. We classify three levels of attention: level 0 (white), level 1 (amber), and level 2 (red) for critically low attention (Fig. 8). These levels are computed as ratios during the experiment and multiplied by the current cumulative average, as explained in the preceding example. The level 1 and level 2 thresholds, examples (3) and (4) below, lie at the midpoints between the cumulative average and the low average, and between the low average and the low minimum, respectively.

where l is the sample collected during the baseline representing the low SA state and h the high SA.
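The adaptive spectrum and its two thresholds can be sketched as follows. This is an illustrative reading of the description, not the ThReADS implementation: the class and function names are hypothetical, the "total average" is assumed to be the mean of the pooled low and high baseline samples, and the cumulative average is assumed to be updated once per incoming index value.

```python
from dataclasses import dataclass
from statistics import fmean
from typing import Sequence, Tuple


@dataclass
class BaselineRatios:
    """Baseline statistics expressed relative to the total baseline average."""
    high_max: float
    high_avg: float
    low_avg: float
    low_min: float


def baseline_ratios(low: Sequence[float], high: Sequence[float]) -> BaselineRatios:
    # Assumption: the total average is taken over the pooled samples.
    total_avg = fmean(list(low) + list(high))
    return BaselineRatios(
        high_max=max(high) / total_avg,
        high_avg=fmean(high) / total_avg,
        low_avg=fmean(low) / total_avg,
        low_min=min(low) / total_avg,
    )


class AdaptiveSpectrum:
    """Scales the baseline ratios by a running cumulative average (CA)."""

    def __init__(self, ratios: BaselineRatios) -> None:
        self.ratios = ratios
        self.n = 0      # number of index values seen so far
        self.ca = 0.0   # cumulative average of the index

    def update(self, x: float) -> float:
        # Incremental cumulative average: CA <- (x + n * CA) / (n + 1).
        self.ca = (x + self.n * self.ca) / (self.n + 1)
        self.n += 1
        return self.ca

    def thresholds(self) -> Tuple[float, float]:
        low_avg = self.ratios.low_avg * self.ca
        low_min = self.ratios.low_min * self.ca
        level1 = (self.ca + low_avg) / 2   # midpoint: CA and low average
        level2 = (low_avg + low_min) / 2   # midpoint: low average and low minimum
        return level1, level2
```

Keeping the baseline as ratios rather than absolute values is what lets the thresholds drift with the user: only the cumulative average changes during operation, and every threshold is rescaled by it.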

A 5 s sliding window of the index is calculated every second and compared in the spectrum space. A window of less than 3 s has the potential to increase volatility, whereas a window longer than 5 s would not represent the current state of SA. It has been shown that the effects of changes in sustained attention can be observed in the brain for up to 60 s post-stimulus (Huang et al. 2007). However, the artifact required a representative metric of the cognitive state at the time of feedback. Moreover, the 5 s response window has been utilized in previous research with the same index (Hassib et al. 2017; Szafir and Mutlu 2013). The presented logic (3, 4) is calculated every second. System adaptation decisions occur every 5 s based on the cumulative value compared against historical thresholds. The algorithm outputs three possible classifications of sustained attention: 0 for decision-ready, 1 for unfocused, and 2 for a critically low level of sustained attention. Classification outputs are then integrated into the adaptation model as both real-time measures of SA and drivers of future levels of SA as per the motivational control of the user and within a closed feedback loop.
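The per-second windowing and three-way classification can be illustrated with a short sketch. It assumes one index value arrives per second, that the window statistic is the mean, and that a value exactly on a threshold falls into the higher-attention class; none of these details is specified in the text, and the names are hypothetical. The 5 s cadence of system adaptation decisions is not modeled here.

```python
from collections import deque
from statistics import fmean
from typing import Optional


def classify(window_mean: float, level1: float, level2: float) -> int:
    """Map a windowed index value to an SA class.

    0 = decision-ready, 1 = unfocused, 2 = critically low.
    Assumes level1 > level2, as implied by the midpoint definitions.
    """
    if window_mean < level2:
        return 2
    if window_mean < level1:
        return 1
    return 0


class SlidingClassifier:
    """Keeps the last `window_s` per-second index values."""

    def __init__(self, window_s: int = 5) -> None:
        self.window = deque(maxlen=window_s)

    def push(self, index_value: float, level1: float, level2: float) -> Optional[int]:
        """Add one value; return a class once the window is full, else None."""
        self.window.append(index_value)
        if len(self.window) < self.window.maxlen:
            return None
        return classify(fmean(self.window), level1, level2)
```

In use, `push` would be called once per second with the current thresholds, so the class reflects the most recent 5 s of data while the thresholds themselves continue to adapt.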

6 Prototype Evaluation

6.1 Experimental Design

A "between groups" experimental design was utilized for analysis and testing. Participants were split randomly between three conditions: no feedback (NF), continuous feedback (CF), event-based feedback (EF). That is, in the NF condition, participants received no visual feedback at the interface throughout the task. For the CF condition, participants received visual feedback at the interface continuously during the task. In the final condition (EF), participants were provided with visual feedback only during an event phase and dependent on a low state of SA. The type of interface feedback delivered by the pBCI was the only factor manipulated. Participants were given instruction before the logistics tasks, to explain the functions of the interface, the KPIs, and the decision phase. They were told that all this information would also be available during the task by clicking on the top right corner's guide button. When they felt ready, they could start the task autonomously.

6.2 Experimental Setup

For EEG data collection, a 32-electrode headset was used following the international 10–20 system. The signal was acquired using NeuroRT Acquisition Server, then filtered to remove artifacts and transformed in real-time using Mensia NeuroRT (Paris, France). The server directly captures EEG data from a BrainAmp amplifier connected to an actiCAP 32 Ch Standard-2 from Brain Products. We chose NeuroRT because it provides real-time FFT data, which splits a signal into frequency bands, as well as real-time filtering options. Participants sat at a desk with an adjustable chair, approx. 80 cm from a 24" computer monitor, and were provided with a mouse and keyboard to interact with the task interface. No means of determining the current time was available during the experiment to prevent any confounds relating to task time remaining.

Of the 31 participants who took part in the study, 24 (11 female; mean age 26.73, max = 43, min = 18) provided data usable for analysis. Participants were screened based on good health, moderate hair thickness, and normal or corrected-to-normal vision. Participants were all business school students with experience with information dashboard interfaces and provided written consent following the University's ethics committee guidelines.

6.3 Results

To analyze the level of SA, we tested the values of the engagement index between condition and event types. We hypothesized that the reported level of SA would differ between the three conditions depending on the type of feedback. The raw index values, aggregated by events and minute blocks, show a higher mean level of SA during decision cycles than monitoring cycles. When conditions are contrasted, this difference becomes more apparent (see Fig. 9): the two active feedback conditions, CF (0.947, \(\sigma_{\overline{x}}\) = 0.015) and EF (0.949, \(\sigma_{\overline{x}}\) = 0.016), show a significantly higher level of SA across both task cycles when compared to the control condition NF (0.75, \(\sigma_{\overline{x}}\) = 0.010). Furthermore, on average, decision cycles elicited a higher SA response than monitoring cycles.

To determine whether a significant difference exists between the conditions, we performed a one-way analysis of variance (ANOVA). Values were slightly skewed to the left. Levene's test rejected the equality-of-variances assumption. Thus, we used a more conservative nominal alpha level (α = 0.025). We found a statistically significant difference between the three conditions (F(2,2297) = 71.78, p < 0.001***; see Fig. 10), specifically for the EF – NF and CF – NF contrasts. However, we found no statistically significant difference between the CF and EF conditions. The control condition shows a significantly lower level of SA when compared to the other groups. A two-way ANOVA revealed a weak but still significant difference in the level of SA between decision and monitoring cycles (F(1,2177) = 5.72, p < 0.05*) for all conditions.

6.3.1 Classifier Evaluation

To evaluate the SA classification algorithm (ThReADS), we benchmarked our method (see Sect. 5.2) against the fixed baseline approach taken by other researchers in the field. The goal was to compare classifications to determine the percentage of time spent in each SA zone. Importantly, each SA zone classification determines the input to the feedback controller, which then controls the visual interface feedback. The more stable the input, the more accurate the SA state and the more meaningful the feedback is to the user. Fixed baseline classifications were calculated from the averages of the baseline tasks to derive high, low, and average threshold values. Table 1 shows the comparison between the two methods as the percentage of classifications throughout the experiment. The critical state is classified 10.56%, 8.36%, and 8.60% of the time for the EF, CF, and NF groups, respectively, using ThReADS, compared to 23.77%, 19.58%, and 26.06% using the fixed baseline classification. Critical state classification is therefore rarer than the other states in both cases, yet more frequent with the baseline method. Considering the classifications for the other zones, the larger number of critical zone classifications under the baseline method indicates higher volatility in terms of the number of classifications across all the classification zones, providing a less accurate reflection of current SA at the interface and less meaningful feedback to the user.
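The comparison metric used here, the share of classifications falling in each SA zone, is simple to compute from a sequence of class labels. The sketch below assumes the labels are the integer outputs 0, 1, and 2 described earlier; the function name and zone labels are chosen for illustration only.

```python
from collections import Counter
from typing import Dict, Sequence

# Integer class -> zone name, following the three classes in the text.
ZONES = {0: "decision-ready", 1: "unfocused", 2: "critical"}


def zone_percentages(labels: Sequence[int]) -> Dict[str, float]:
    """Return the percentage of classifications in each SA zone."""
    if not labels:
        raise ValueError("at least one classification is required")
    counts = Counter(labels)
    total = len(labels)
    return {name: 100.0 * counts.get(code, 0) / total for code, name in ZONES.items()}
```

Running the same function over the label sequences produced by two classifiers on identical data gives the kind of side-by-side percentages reported in Table 1.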

The higher classification of the unfocused zone using ThReADS for both the EF (53.73%) and NF (55.02%) groups compared to the CF (47.34%) group can be interpreted in terms of task and lack of active feedback, i.e., no feedback for the NF group and event-synchronized feedback for the EF group, which potentially promoted a more significant attention decrement. Interestingly, for the CF condition, the difference between the decision-ready and unfocused states appears stable, suggesting that continuous feedback allowed users to regulate SA positively.

We employed a common variation of the NASA Task Load Index (NASA-TLX), the Raw-TLX, to measure perceived workload and performance. The Raw-TLX is a simplified version of the original instrument; the Raw-TLX scores, mean raw scores, and subscales per condition are shown in Table 2. The Raw-TLX is the average of the six subscales: mental demand, physical demand, temporal demand, performance, effort, and frustration, each scored on a twenty-step scale. The condition with continuous feedback (CF) shows the lowest total score with 7.27 (σ = 3.1). The highest score comes from the event-related feedback (EF) group, who reported a surprisingly high level of frustration and lower self-reported performance.
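As a worked illustration of the scoring rule, the Raw-TLX is the unweighted mean of the six subscale ratings. The sketch below assumes each rating lies between 0 and 20; the exact numeric range of the twenty-step scale is not stated in the text, and the subscale keys are names chosen for this example.

```python
from statistics import fmean
from typing import Mapping

SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings: Mapping[str, float]) -> float:
    """Return the Raw-TLX score: the unweighted mean of the six subscales."""
    missing = [name for name in SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscales: {missing}")
    for name in SUBSCALES:
        # Assumed rating range for the twenty-step scale.
        if not 0 <= ratings[name] <= 20:
            raise ValueError(f"{name} rating out of range: {ratings[name]}")
    return fmean(ratings[name] for name in SUBSCALES)
```

For example, hypothetical ratings of 12, 2, 8, 6, 10, and 4 on the six subscales sum to 42 and give a Raw-TLX of 7.0.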

We performed a one-way between-groups ANOVA to determine whether there was a significant difference in perceived workload between participants in each condition. The normality of the residuals and homogeneity of variance assumptions across the conditions were satisfied. We found no statistically significant difference (F(2,21) = 1.02, p = 0.378) in the Raw-TLX or its subscales, except for self-perceived performance (F(2,20) = 4.305, p = 0.028*), where a significant difference between the EF and CF conditions was observed.

6.3.2 Human Factors Measurement

To measure "maximized sales," we created two metrics: total sales and estimated missed sales (Table 3). The CF group had the best performance, with an average of 7.46% (σ = 1.76) of estimated missed sales and mean total sales of 14,785 (σ = 423), compared with 9.62% (σ = 4.91) and 14,180 (σ = 875) for EF, and 9.79% (σ = 2.75) and 14,529 (σ = 5.10) for NF. However, when comparing the conditions via ANOVA, no statistically significant difference was found.

To quantify participant interaction with the interface, we created a metric: actions per minute (APM). The objective was to determine whether the feedback type affects the number of user actions during task completion (see Fig. 11). For the entire task duration, both the CF and EF conditions have a higher mean APM, of 3.460 (SE = 0.140) and 3.317 (SE = 0.139), respectively. The NF group displayed a lower APM of 2.65 (SE = 0.097). Figure 11 shows a gradual rise in APM during decision cycles compared with monitoring cycles, with the CF group interacting more with the interface at these times than the two other groups.
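A minimal sketch of how an APM metric of this kind might be derived from logged interface actions is given below. It assumes each action is logged as a timestamp in seconds from task start; the function names and the per-minute binning are illustrative and not taken from the prototype.

```python
import math
from typing import List, Sequence


def actions_per_minute(action_times_s: Sequence[float], task_duration_s: float) -> float:
    """Mean actions per minute over the whole task."""
    if task_duration_s <= 0:
        raise ValueError("task duration must be positive")
    # Count only actions that fall inside the task window.
    n_actions = sum(1 for t in action_times_s if 0 <= t < task_duration_s)
    return n_actions / (task_duration_s / 60.0)


def actions_by_minute(action_times_s: Sequence[float], task_duration_s: float) -> List[int]:
    """Number of actions in each one-minute block (last block may be partial)."""
    if task_duration_s <= 0:
        raise ValueError("task duration must be positive")
    counts = [0] * math.ceil(task_duration_s / 60)
    for t in action_times_s:
        if 0 <= t < task_duration_s:
            counts[int(t // 60)] += 1
    return counts
```

The per-minute counts correspond to the minute-block aggregation used elsewhere in the analysis and make it possible to contrast decision and monitoring cycles once each block is tagged with its cycle type.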

Contrasting the APM means during monitoring cycles, a statistically significant difference was observed for the EF – NF and CF – NF contrasts (F(2,2297) = 12.05, p < 0.001***), but no significant difference was found between the EF and CF conditions.

7 Discussion and concluding comments

This paper presents the development of a pBCI prototype designed to support IT tasks requiring SA in an enterprise context. Our iterative design process highlighted some limitations and future implications for design improvements. Firstly, the integration of physiological data within an ERP architecture highlighted an important limitation of the presented design. The use of a transactional database imposed performance limits upon updating information within the interface, showing that in this case the database is reliant on the processing capability of the ERP, which may or may not prove effective at scale. Furthermore, the architecture's composite nature increased the risk of inter-component communication errors; a microservices-oriented design could mitigate these risks by embedding the BCI in the ERP architecture, thus reducing component dependencies.

Secondly, while the engagement index is conveniently operationalizable and provides useful real-time input for a pBCI, it is limited to predetermined areas of the scalp and within strict frequencies, which prevents a more holistic analysis of the individual's cognitive response. Finally, we selected participants based on prior experience of business-related tasks to reduce the learning curve concerning the specific key performance measures presented during the simulation. However, no demographic profiling was performed to determine the level of knowledge each participant possessed; further revisions of the pBCI should include this as part of the development cycle.

The present pilot study aimed to develop a prototype pBCI integrated with an IS, and in this regard, development was successful. We implemented an artifact that integrated a pBCI into an ERP architecture that provided visual feedback to the user regarding their current SA level. We developed an ecologically valid task specifically for this purpose. We developed a classification algorithm (ThReADS) to assess SA in real-time. We hypothesized that real-time assessment and feedback of sustained attention would increase task performance and reduce error.

The results indicated a significantly higher level of sustained attention for the two active visual feedback groups provided with neurofeedback at the interface compared to the control group. This higher SA level was expressed as a moderate but still significant improvement in task performance and a significant decrease in on-task error. One interesting finding is the observed stability of the level of SA shown by participants within the continuous feedback group. Potentially this finding shows that the pBCI positively influenced user SA, either through closed-loop feedback control or self-regulation.

While the CF condition showed better performance in terms of metrics, differences between scores were not significant. However, a difference between the groups was observed for actions per minute on the interface. This finding has important implications for the understanding of our SA measure. Did the visual feedback mechanism increase actions per minute, or did the activity at the interface increase the level of SA? We would argue that the relationship between the two is much more nuanced, as the level of SA drives the neurofeedback through the pBCI, which influences APM, which in turn influences SA in a continuous closed feedback cycle. Moreover, when taken as a whole, the results point to the pBCI positively influencing on-task action and errors.

These results provide evidence that the continuous feedback group might have developed a self-regulation strategy in line with the framework provided by Hockey (1997). In contrast, the event-based feedback potentially created a higher workload level due to a perceived need to maintain a high SA level to perform the task when signalled. Moreover, the EF group's result appears to fit with the cognitive-energetical framework of motivational control, as this form of feedback moves from self-regulation of SA through a "play" aspect to a more explicit form of work as perceived by the user. Future work should seek to explicitly explore this aspect by capturing the user's qualitative experience either during use or in a post hoc debriefing. Thus, a deeper understanding of the complex interaction between the user and the artifact may be uncovered.

Our contribution of a novel approach to classifying the engagement index using the ThReADS method allows the feedback controller to adapt to changes in SA over time by considering previous and current levels of engagement, providing flexibility and stability. It represents a significant improvement in SA classification in real-time compared to previous methods reported in the literature. However, future iterations of the pBCI would include more cutting-edge methods based on deep learning and whole-brain approaches. We compared the algorithm to a fixed threshold method; the results show that, when applied to data from the NF group, our approach classifies the critical state 8.60% of the time compared to 26.06% for the fixed baseline displayed in Fig. 8. These insights are essential; the rarity of critical SA classifications provides more meaningful feedback to the user and avoids habituation (Phansalkar et al. 2010; Oken et al. 2006). Moreover, the fixed baseline method shows a less stable classification of users' attention levels, between unfocused and decision-ready states, than ThReADS. Based on our evaluation, it appears that, in this case, the classification of SA using adaptive thresholding provides more meaningful visual feedback than a classical fixed baseline approach.

We provide, with this research, the first tentative step towards a pBCI technology embeddable in an enterprise system. Already a focus in the NeuroIS field (vom Brocke et al. 2013; vom Brocke et al. 2020), the application of neuroscience as a built-in function of IS provides exciting opportunities for reducing operator error and augmenting the workforce.

References

Adam MT, Gimpel H, Maedche A, Riedl R (2017) Design blueprint for stress-sensitive adaptive enterprise systems. Bus Inf Syst Eng 59(4):277–291

Andujar M, Gilbert JE (2013) Let's learn! enhancing user's engagement levels through passive brain-computer interfaces. In: CHI'13 extended abstracts on human factors in computing systems, pp 703–708

Astor PJ, Adam MTP, Jerčić P, Schaaff K, Weinhardt C (2013) Integrating biosignals into information systems: a NeuroIS tool for improving emotion regulation. J Manag Inf Syst 30(3):247–278

Autor DH (2015) Why are there still so many jobs? the history and future of workplace automation. J Econ Perspect 29(3):3–30

Barachant A, Andreev A, Congedo M (2013) The Riemannian Potato: an automatic and adaptive artifact detection method for online experiments using Riemannian geometry. In: TOBI Workshop IV, pp 19–20

Bhattacharyya S, Khasnobish A, Chatterjee S, Konar A, Tibarewala D (2010) Performance analysis of LDA, QDA and KNN algorithms in left-right limb movement classification from EEG data. In: 2010 International conference on systems in medicine and biology. IEEE, pp 126–131

Buck L (1966) Reaction time as a measure of perceptual vigilance. Psychol Bull 65(5):291

Carlson T, Millan JdR (2013) Brain-controlled wheelchairs: a robotic architecture. IEEE Robotics Autom Mag 20(1):65–73

Chaudhary U, Birbaumer N, Ramos-Murguialday A (2016) Brain-computer interfaces for communication and rehabilitation. Nat Rev Neurol 12(9):513

Endsley MR (1995) Toward a theory of situation awareness in dynamic systems. Hum Factors 37(1):32–64

Ewing KC, Fairclough SH, Gilleade K (2016) Evaluation of an adaptive game that uses EEG measures validated during the design process as inputs to a biocybernetic loop. Front Hum Neurosci 10:223

Fairclough SH (2007) Psychophysiological inference and physiological computer games. In: ACE workshop-brainplay (vol 7)

Few S (2006) Information dashboard design: the effective visual communication of data. O'Reilly Media

Few S (2012) Show me the numbers: designing tables and graphs to enlighten. Analytics Press

Freeman FG, Mikulka PJ, Prinzel LJ, Scerbo MW (1999) Evaluation of an adaptive automation system using three EEG indices with a visual tracking task. Biol Psychol. https://doi.org/10.1016/S0301-0511(99)00002-2

Gramann K, Fairclough SH, Zander TO, Ayaz H (2017) Trends in neuroergonomics. Front Hum Neurosci 11:165

Hassib M, Schneegass S, Eiglsperger P, Henze N, Schmidt A, Alt F (2017) EngageMeter: a system for implicit audience engagement sensing using electroencephalography. In: Proceedings of the 2017 Chi conference on human factors in computing systems, pp 5114–5119

Hillege RH, Lo J, Janssen CP, Romeijn N (2020) The mental machine: classifying mental workload state from unobtrusive heart rate-measures using machine learning. In: International conference on human-computer interaction. Springer, Cham, pp 330–349

Hockey GRJ (1997) Compensatory control in the regulation of human performance under stress and high workload: a cognitive-energetical framework. Biol Psychol 45(1–3):73–93

Hockey GRJ (2011) A motivational control theory of cognitive fatigue. In: Ackerman PL (ed) Decade of behavior/science conference, pp 167–187. https://doi.org/10.1037/12343-008

Hockey GRJ, Earle F (2006) Control over the scheduling of simulated office work reduces the impact of workload on mental fatigue and task performance. J Exp Psychol Appl 12(1):50

Hong K-S, Khan MJ (2017) Hybrid brain-computer interface techniques for improved classification accuracy and increased number of commands: a review. Front Neurorobotics 11:35

Huang R-S, Jung T-P, Makeig S (2007) Multi-scale EEG brain dynamics during sustained attention tasks. In: 2007 IEEE international conference on acoustics, speech and signal processing. IEEE, pp IV-1173–IV-1176

Johnson A, Proctor R (2013) Neuroergonomics: a cognitive neuroscience approach to human factors and ergonomics. Springer

Kahneman D (1973) Attention and effort. Prentice-Hall, Englewood Cliffs. (Citeseer)

Käthner I, Wriessnegger SC, Müller-Putz GR, Kübler A, Halder S (2014) Effects of mental workload and fatigue on the P300, alpha and theta band power during operation of an ERP (P300) brain–computer interface. Biol Psychol 102:118–129

Kosmyna N, Sarawgi U, Maes P (2018) AttentivU: evaluating the feasibility of biofeedback glasses to monitor and improve attention. In: Proceedings of the ACM international joint conference and international symposium on pervasive and ubiquitous computing and wearable computers, pp 999–1005

Labonte-Lemoyne E, Courtemanche F, Louis V, Fredette M, Sénécal S, Léger P-M (2018) Dynamic threshold selection for a biocybernetic loop in an adaptive video game context. Front Hum Neurosci 12:282

Lacity M, Willcocks L (2015) What knowledge workers stand to gain from automation. Harv Bus Rev 19

Lahane P, Jagtap J, Inamdar A, Karne N, Dev R (2019) A review of recent trends in EEG based brain-computer interface. In: 2019 International conference on computational intelligence in data science. IEEE, pp 1–6

Lal SK, Craig A, Boord P, Kirkup L, Nguyen H (2003) Development of an algorithm for an EEG-based driver fatigue countermeasure. J Safety Res 34(3):321–328

Lee JD, Seppelt BD (2009) Human factors in automation design. In: Springer handbook of automation. Springer, pp 417–436

Léger P-M (2006) Using a simulation game approach to teach enterprise resource planning concepts. J Inf Syst Educ 17(4):441

Loos P, Riedl R, Müller-Putz GR, vom Brocke J, Davis FD, Banker RD, Léger P-M (2010) NeuroIS: neuroscientific approaches in the investigation and development of information systems. Bus Inf Syst Eng 2(6):395–401

Lotte F, Roy RN (2019) Brain-computer interface contributions to neuroergonomics. In: Neuroergonomics, pp 43–48. https://doi.org/10.1016/B978-0-12-811926-6.00007-5

Lukyanenko R, Evermann J, Parsons J (2014) Instantiation validity in IS design research. In: International conference on design science research in information systems. Springer, pp 321–328

Lux E, Adam MT, Dorner V, Helming S, Knierim MT, Weinhardt C (2018) Live biofeedback as a user interface design element: a review of the literature. Commun Assoc Inf Syst 43(1):18

Mackworth N (1948) The breakdown of vigilance during prolonged visual search. Q J Exp Psychol 1(1):6–21

Marken RS (2009) You say you had a revolution: methodological foundations of closed-loop psychology. Rev Gen Psychol 13(2):137–145

Mason SG, Birch GE (2003) A general framework for brain-computer interface design. IEEE Trans Neural Syst Rehabil Eng 11(1):70–85

McCormack P (1960) Performance in a vigilance task as a function of length of interstimulus interval. Can J Psychol 14(4):265

Mikulka PJ, Scerbo MW, Freeman FG (2002) Effects of a biocybernetic system on vigilance performance. Hum Factors J Hum Factors Ergon Soc 44(4):654–664

Müller-Putz GR, Pereira J, Ofner P, Schwarz A, Dias CL, Kobler RJ, Hehenberger L, Pinegger A, Sburlea AI (2018) Towards non-invasive brain-computer interface for hand/arm control in users with spinal cord injury. In: 6th International conference on brain–computer interface. IEEE, pp 1–4

Müller-Putz GR, Riedl R, Wriessnegger SC (2015) Electroencephalography (EEG) as a research tool in the information systems discipline: foundations, measurement, and applications. Commun Assoc Inf Syst 37:46

Müller-Putz GR, Scherer R, Pfurtscheller G, Rupp R (2005) EEG-based neuroprosthesis control: a step towards clinical practice. Neurosci Lett 382(1–2):169–174

Muñoz J, Rubio E, Cameirao M, Bermúdez S (2017) The biocybernetic loop engine: an integrated tool for creating physiologically adaptive videogames. In: Proceedings of the 4th international conference on physiological computing systems, pp 45–54

Muñoz JE, Quintero L, Stephens CL, Pope AT (2020) A psychophysiological model of firearms training in police officers: a virtual reality experiment for biocybernetic adaptation. Front Psychol 11

Nicolas-Alonso LF, Gomez-Gil J (2012) Brain computer interfaces, a review. Sens 12(2):1211–1279

Noorbergen TJ, Adam MT, Attia JR, Cornforth DJ, Minichiello M (2019) Exploring the design of mHealth systems for health behavior change using mobile biosensors. Commun Assoc Inf Syst 44(1):44

Oken B, Memmott T, Eddy B, Wiedrick J, Fried-Oken M (2018) Vigilance state fluctuations and performance using brain-computer interface for communication. Brain-Comput Interfaces 5(4):146–156

Oken BS, Salinsky MC, Elsas SM (2006) Vigilance, alertness, or sustained attention: physiological basis and measurement. Clin Neurophysiol 117(9):1885–1901

Parasuraman R, Manzey DH (2010) Complacency and bias in human use of automation: an attentional integration. Hum Factors 52(3):381–410

Parasuraman R, Rizzo M (2008) Neuroergonomics: the brain at work, vol 3. Oxford University Press

Parasuraman R, Sheridan TB, Wickens CD (2000) A model for types and levels of human interaction with automation. IEEE Trans Syst Man Cybern – Part A: Syst Hum 30:286–297

Parasuraman R, Sheridan TB, Wickens CD (2008) Situation awareness, mental workload, and trust in automation: viable, empirically supported cognitive engineering constructs. J Cogn Eng Decis Making 2(2):140–160

Pattyn N, Neyt X, Henderickx D, Soetens E (2008) Psychophysiological investigation of vigilance decrement: boredom or cognitive fatigue? Physiol Behav 93(1–2):369–378

Peffers K, Chatterjee S, Rothenberger MA, Tuunanen T (2007) A design science research methodology for information systems research. J Manag Inf Syst 24(3):45–77

Petersen SE, Posner MI (2012) The attention system of the human brain: 20 years after. Annu Rev Neurosci 35:73–89

Pfurtscheller G, Allison BZ, Bauernfeind G, Brunner C, Solis Escalante T, Scherer R, Zander TO, Mueller-Putz G, Neuper C, Birbaumer N (2010) The hybrid BCI. Front Neurosci 4:3

Pham T, Tezza D, Andujar M (2020) Enhancing drone pilots’ engagement through a brain-computer interface. In: International conference on human–computer interaction. Springer, pp 706–718

Phansalkar S, Edworthy J, Hellier E, Seger DL, Schedlbauer A, Avery AJ, Bates DW (2010) A review of human factors principles for the design and implementation of medication safety alerts in clinical information systems. J Am Med Inform Assoc 17(5):493–501

Pope AT, Bogart EH, Bartolome DS (1995) Biocybernetic system evaluates indices of operator engagement in automated task. Biol Psychol 40(1–2):187–195

Posner MI (1980) Orienting of attention. Q J Exp Psychol 32(1):3–25

Posner MI, Petersen SE (1990) The attention system of the human brain. Annu Rev Neurosci 13(1):25–42

Posner MI, Rothbart MK (1998) Attention, self-regulation and consciousness. Philos Trans R Soc Lond B: Biol Sci 353(1377):1915–1927

Prasad PS, Swarnkar R, Prasad KV, Radhakrishnan M, Hashmi MF, Keskar A (2017) SSVEP signal detection for BCI application. In: 7th International advance computing conference. IEEE, pp 590–595

Rasmussen J, Vicente KJ (1989) Coping with human errors through system design: implications for ecological interface design. Int J Man-Mach Stud 31(5):517–534

Riedl R, Davis FD, Hevner AR (2014) Towards a NeuroIS research methodology: intensifying the discussion on methods, tools, and measurement. J Assoc Inf Syst 15(10):I

Riedl R, Léger P-M (2016) Fundamentals of NeuroIS. Studies in neuroscience, psychology and behavioral economics. Springer, Heidelberg

Rouast PV, Adam MT, Cornforth DJ, Lux E, Weinhardt C (2017) Using contactless heart rate measurements for real-time assessment of affective states. In: Davis F et al (eds) Information systems and neuroscience. Springer, Cham, pp 157–163

Sarter M, Givens B, Bruno JP (2001) The cognitive neuroscience of sustained attention: where top-down meets bottom-up. Brain Res Rev 35(2):146–160

Scerbo MW, Freeman FG, Mikulka PJ (2003) A brain-based system for adaptive automation. Theor Issues Ergon Sci 4(1–2):200–219

Snyder J, Matthews M, Chien J, Chang PF, Sun E, Abdullah S, Gay G (2015) Moodlight: exploring personal and social implications of ambient display of biosensor data. In: Proceedings of the 18th ACM conference on computer supported cooperative work & social computing, pp 143–153

Szafir D, Mutlu B (2013) ARTFul: adaptive review technology for flipped learning. In: Proceedings of the SIGCHI conference on human factors in computing systems, pp 1001–1010

Tang Z, Li C, Sun S (2017) Single-trial EEG classification of motor imagery using deep convolutional neural networks. Optik 130:11–18

Thulasidas M, Guan C, Wu J (2006) Robust classification of EEG signal for brain-computer interface. IEEE Trans Neur Syst Rehabil Eng 14(1):24–29

Vaughan TM (2003) Guest editorial brain–computer interface technology: a review of the second international meeting. IEEE Trans Neur Syst Rehabil Eng 11(2):94–109

Venthur B, Blankertz B, Gugler MF, Curio G (2010) Novel applications of BCI technology: psychophysiological optimization of working conditions in industry. In: International conference on systems man and cybernetics. IEEE, pp 417–421

Vicente KJ, Rasmussen J (1992) Ecological interface design: theoretical foundations. IEEE Trans Syst Man Cybern 22(4):589–606

vom Brocke J, Hevner A, Léger PM, Walla P, Riedl R (2020) Advancing a NeuroIS research agenda with four areas of societal contributions. Eur J Inf Syst 29(1):9–24

vom Brocke J, Riedl R, Léger P-M (2013) Application strategies for neuroscience in information systems design science research. J Comput Inf Syst 53(3):1–13

Zander TO, Kothe C (2011) Towards passive brain–computer interfaces: applying brain-computer interface technology to human-machine systems in general. J Neural Eng 8(2):025005

Zander TO, Kothe C, Welke S, Rötting M (2009) Utilizing secondary input from passive brain-computer interfaces for enhancing human-machine interaction. In: International Conference on Foundations of Augmented Cognition. Springer, pp 759–771

Additional information

Accepted after 4 revisions by Alexander Maedche

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Demazure, T., Karran, A., Léger, PM. et al. Enhancing Sustained Attention. Bus Inf Syst Eng 63, 653–668 (2021). https://doi.org/10.1007/s12599-021-00701-3

DOI: https://doi.org/10.1007/s12599-021-00701-3