Abstract

Organizations introduce virtual assistants (VAs) to support employees with work-related tasks. VAs can increase the success of teamwork and thus become an integral part of the daily work life. However, the effect of VAs on virtual teams remains unclear. While social identity theory describes the identification of employees with team members and the continued existence of a group identity, the concept of the extended self refers to the incorporation of possessions into one’s sense of self. This raises the question of which approach applies to VAs as teammates. The article extends the IS literature by examining the impact of VAs on individuals and teams and updates the knowledge on social identity and the extended self by deploying VAs in a collaborative setting. Using a laboratory experiment with N = 50, two groups were compared in solving a task, where one group was assisted by a VA, while the other was supported by a person. Results highlight that employees who identify VAs as part of their extended self are more likely to identify with team members and vice versa. The two aspects are thus combined into the proposed construct of virtually extended identification, which explains the relationships of collaboration with VAs. This study contributes to the understanding of the influence of the extended self and social identity on collaboration with VAs. Practitioners are able to assess how VAs improve collaboration and teamwork in mixed teams in organizations.

1 Introduction

In virtual collaboration, teams are required to collaborate via technology (de Vreede and Briggs 2005; Changizi and Lanz 2019) which can result in a lack of a common social identity (Vahtera et al. 2017). With some technologies, such as virtual assistants (VAs), the role of technology is changing from a mere tool for virtual collaboration with other humans to its own virtual collaboration with VAs (Maedche et al. 2019; Seeber et al. 2020a). VAs are software programs that can be addressed via voice or text commands and respond to the users’ input (Brachten et al. 2020). They are increasingly being used in organizations to optimize internal processes by assisting in the execution of work-related tasks (Norman 2017) to achieve, for example, increased customer satisfaction, thus creating substantial advantages over competitors (Benbya and Leidner 2018; Yan et al. 2018). Unlike physical robots, such as Nao or Pepper, which have a physical human representation (Maniscalco et al. 2020), a physical interaction with VAs is not possible. However, VAs are used in virtual collaboration (Seeber et al. 2020a; Panganiban et al. 2020). It is predicted that they will be used by at least a quarter of employees working in virtual teams within the next two years (Maedche et al. 2019). To understand virtual collaboration between humans and machines such as VAs, knowledge from human-to-human collaboration research should be exploited (Demir et al. 2020).

Nowadays, many team members, such as those in global virtual project teams (Massey et al. 2003), are physically widely distributed and collaborate primarily virtually (Plotnick et al. 2016; Hassell and Cotton 2017; Andres and Shipps 2019). Virtual collaboration ranges from working together in virtual computer-generated worlds (Franceschi et al. 2009; Kohler et al. 2011) to collaboration using tools such as Google Drive (Van Ostrand et al. 2016). Successful virtual collaboration is influenced by aspects such as social presence (Franceschi et al. 2009) and social identity (Lin 2015; Vahtera et al. 2017). Identifying with team members at the workplace as a social group contributes significantly to improving the individual performance of each employee and encourages achieving an overarching goal more efficiently (Lin 2015; Porck et al. 2019). One’s own identity can partially be depicted within the framework of a virtual collaboration, for example, by visualizing gender, age, and social class via embodiment through an avatar (Schultze 2010). The social identity of team members can also be transferred to virtual collaboration (Guegan et al. 2017). Social identity describes the identification with other (virtual) team members and the maintenance of one’s own identity by comparing one’s self-concept with other people’s perceived values, norms, and characteristics (Brown 2000).

Research on the role of VAs as team members is not a recent development (Seeber et al. 2020a; Panganiban et al. 2020; Demir et al. 2020). However, it is still largely unexplored whether VAs are perceived as part of one’s team or as a simple tool or object in virtual collaboration. The identification with an object as part of one’s self has been called the “extended self” (Belk 1988; Tian and Belk 2005; Clayton et al. 2015) and has been transferred to the workplace and the digital world. People extend their identity by incorporating capabilities that fit to their self-concept, and thus, positively enhance their self.

In contrast, the theory of social identity focuses on the comparison with other humans in order to form and maintain one’s identity (Tajfel and Turner 1986). This apparent contradiction raises the question of which approach applies to VAs as team members in virtual collaboration. Examining this is fundamental to understanding how and for what purpose VAs should be deployed in organizations as collaborative partners. Deploying VAs could help organizations to save valuable resources when they are used as tools to assist employees in work-related tasks or when they act as team partners in order to increase team identity and therefore team efficiency. To examine the role of VAs in virtual collaboration in detail, our research is guided by the following research question:

How does identification with VAs vs. that with humans as virtual team members differ in virtual collaboration?

To answer the research question, we conducted a laboratory experiment with 50 participants. Those in the experimental group were asked to solve a typical work-related task in collaboration with a text-based VA, while the control group was assisted by another human via chat. We measured and compared the extended self and the social identity for both groups as well as the perceived workload. This paper contributes to research and practice by extending our understanding of the collaboration between employees and VAs in an organizational context to drive future research in this field of high relevance. Information systems (IS) researchers will find the insights helpful to understand what influence the extended self and social identity theory have on virtual collaboration with VAs assisting in work-related tasks. To guide future research, we introduce the concept of virtually extended identification as a combination of social identity and the extended self for virtual collaboration between VAs and employees.

2 Related Work: Virtual Assistants in Organizations

Collaboration technologies have a long history in IS research (Schwabe 2003; Frohberg and Schwabe 2006; Bajwa et al. 2007; You and Robert 2018). For VAs, as one of these technologies, the IS community uses a variety of definitions (e.g., Maedche et al. 2019; Seeber et al. 2020a; Diederich et al. 2020). Luger and Sellen (2016) define conversational agents (CAs) as “IS that enable the interaction with users via natural language.” Stieglitz et al. (2018) state that VAs in enterprises “can be addressed via voice or text and that can respond to the users input (i.e. assist) with sought-after information.” VAs can generally be explained as software programs that can be addressed via different modes of communication (e.g., written or spoken natural language), assisting with tasks or executing them autonomously (Brachten et al. 2020). Related terms include but are not limited to chatbots (Stieglitz et al. 2018), conversational agents (Diederich et al. 2020), and digital assistants (Maedche et al. 2019). Research divides the concept of VAs into various categories, such as design characteristics or assistance domain (Knote et al. 2019). However, systems are usually classified along two dimensions (Gnewuch et al. 2017) – their primary mode of communication (e.g., text-based or speech-based) (Lee et al. 2009) and their main purpose (narrow or broad task) (Nunamaker et al. 2011). A categorization into one of these classes is not always possible due to potential overlaps. For example, VAs can be augmented to cope with individual requirements (Chung et al. 2017), and speech-based systems might convert human language into text to process information (Gnewuch et al. 2017).

VAs need to be differentiated from a number of related concepts. VAs can distinguish among and interpret the emotions of individuals within teams (McDuff and Czerwinski 2018) and use different language styles to adapt to varying users (Gnewuch et al. 2020). Thereby they might use social cues, including the dimensions of verbal (e.g., jokes, temporal expressions, or self-disclosure), visual (e.g., emoticons, facial expressions, or agent visualization), auditory (e.g., voice gender, grunt, and moan or laughing), and invisible (e.g., first turn, response time, or tactile touch; Feine et al. 2019). Thus, collaborating with VAs might not be restricted to certain commands, phrases, or keywords; rather, individuals can use their habitual language (McTear 2017; Feine et al. 2019). Although VAs theoretically have various verbal, visual, auditory and invisible characteristics that can impact social behavior in humans (Feine et al. 2019), in practice it is still hardly possible to simulate fully human behavior. VAs are usually capable of supporting a narrow task (Davenport 2018), but may not be able to provide appropriate answers in every context. They are therefore usually characterized by a certain selection of social cues, but cannot represent a fully human consciousness (Russel and Norvig 2016).

The ongoing improvements to artificial intelligence (AI) and machine learning (ML) algorithms as a prerequisite to developing collaborative systems have led to an increasing concentration on VAs as work facilitators (Berg et al. 2015; Spohrer and Banavar 2015; Luger and Sellen 2016; Knijnenburg and Willemsen 2016; Nasirian and Ahmadian 2017). The use of VAs in organizations is valuable for facilitating internal processes and supporting employees in better completing their tasks as well as generating additional revenue or cost savings (Quarteroni 2018). VAs are used for direct interaction with consumers, and they positively affect customer satisfaction (Verhagen et al. 2014). Question-and-answer assistants facilitate onboarding processes of new hires (Shamekhi et al. 2018). The workload of employees is reduced by supporting the resolution of customer incidents (McTear 2017) and the execution of work-related tasks (Brachten et al. 2020).

Current research demonstrates that VAs can improve virtual collaboration (Waizenegger et al. 2020; Seeber et al. 2020a). Organizational human teams frequently fall short of their possibilities (Kozlowski and Ilgen 2007), thus the use of a VA as a legitimate virtual team member and socio-technical ensemble (Seeber et al. 2018) might foster decision making and improve team collaboration (Waizenegger et al. 2020; Seeber et al. 2020b). The integration of VAs as virtual colleagues is valuable to increase the effectiveness of virtual collaboration in teams (Goodbody 2005). With their unique characteristics (Maedche et al. 2019; Feine et al. 2019) and ongoing application in practice (Brachten et al. 2020), it can be assumed that an increasing degree of team dynamics from purely human virtual teams can be transferred to human–machine teams.

3 Theoretical Background

3.1 Social Identity

Social identity is a grounded concept that can influence the performance of virtual teams (Lin 2015). In social identity theory, Tajfel and Turner (1986) assume that human identity is not only composed of individually unique character traits and physical characteristics but also of belonging to certain social groups. This might include people of the same age group, family, friends, and even work colleagues (Bartels et al. 2019).

By comparing with other social groups, such as other departments or competing organizations, individuals try to draw a line to better understand who they themselves are (Tajfel and Turner 1986). People, such as employees, try to differentiate from others by means of positive characteristics that they attribute to themselves, which is known as intrinsically motivated positive distinctiveness (Haslam 2004). At the workplace, such characteristics can be team cohesion or quality of work.

In IS research, social identity theory at the workplace has been considered from perspectives including the psychological (Pepple and Davies 2019; Klimchak et al. 2019), the organizational (Dahling and Gutworth 2017; Mueller et al. 2019), and the societal viewpoints (Kenny and Briner 2013).

However, most previous studies have focused on examining social identity in human-to-human collaboration and the resulting social behavior (Kohler et al. 2011). With technologies such as VAs, which are capable of utilizing human social cues (Maedche et al. 2019), the role of technology is changing, and the boundaries between people and technology are blurring (Pickard et al. 2013). According to Young-Jae et al. (2020), people perceive it as increasingly difficult to describe the uniqueness of humans compared to machines and AI as the technology itself could be perceived as a social actor (Wang 2017; Edwards et al. 2019). This actor is less a technological environment than a possible new individual that could be part of an in-group or out-group in the context of social identity formation.

Revealing insights about the relationship between people and AI will open up new opportunities for organizations and interesting insights for further research. However, social identity theory is not the only concept that could explain the role of AI in virtual collaboration. Another concept from psychology addressing the social relationship between humans and objects (e.g., technologies) could also help to better understand the virtual collaboration between humans and machines – the extended self (Belk 2013).

3.2 The Extended Self

People develop and maintain several identities according to the context of their current situation (Burke 2006). Thus, Burke and Stets (2009) argue that people play different roles. For example, people face specific actors and topics at the workplace according to the situation, such as a team meeting or an idea pitch. Likewise, people need to adapt to other situations at home, such as in the context of the education of one’s children. Individuals have various roles prepared for the unique situations they face. Besides those roles, people maintain only one underlying self-concept connected to fundamental rules and values that they develop over time by categorizing in relation to others (Stets and Burke 2000; Burke and Stets 2009). Hence, identity is a well-discussed research area connected to various disciplines, such as psychology (Tajfel and Turner 1986), social psychology (Leary and Tangney 2011), sociology (Stets and Biga 2003), and economic psychology (Belk 1988). However, it is worth analyzing identity in relation to the increasing role of information technology as a new resource in our life and work (Tian and Belk 2005; Carter et al. 2015).

People extend their selves by considering particular possessions in order to supplement their self (Belk 1988, 2013). However, the concept of possessions is not limited to external objects; it can also include other people or group possessions. Furthermore, in view of emerging technology, Belk (2013) argues that people can also consider digital possessions as potential extensions of the self. This might be achieved by, for example, dematerialization, sharing, or distributed memories. Particularly in the workplace of technology organizations, Tian and Belk (2005) argue that employees need to decide which part of the self fits the current situation of the work, and how. On the one hand, this decision includes the process of negotiations between the “me” and the situation. On the other hand, this decision may stay hidden or might be retracted.

However, due to the integral role of information technology in everyday life and work, understanding information technology, for example, in the form of virtual collaboration and new social actors such as VAs, has become a relevant endeavor for IS research (Carter et al. 2015). In this regard, maintaining and extending the self are two central functions in the context of information technology and identity (Carter and Grover 2015). It is necessary to answer the question “Who am I in relation to this technology?” (Vignoles et al. 2011; Carter et al. 2015). This material perspective focuses on individual thinking and behavior (Dittmar 2011). Therefore, material identities are verified when people gain control and mastery of an object that they are interacting with.

Furthermore, people have a fundamental need to expand the self and seek self-enhancement. They can achieve this by supplementing social or physical resources, perspectives, and identities (Aron et al. 2003). One possible way for people to achieve this enhancement is by consolidating capacities yielded by (material) objects to which they have become emotionally attached (Belk 1988, 2013; Carter et al. 2015).

3.3 Derivation of Hypotheses

Social identity theory and the extended self describe two alternative pathways to maintain and form an individual’s identity (Tajfel and Turner 1986; Belk 1988, 2013; Stets and Burke 2000). Social identity theory holds that identification with other (social) actors leads to a sense of belonging to the group (external attribution of an actor’s values to the self; Tajfel and Turner 1986; Stets and Burke 2000). In comparison, the perspective of the extended self conceptualizes that a positive identification with an (virtual) object leads to an association of capabilities, characteristics, or meanings directly to the self (internal attribution of an actor’s values to the self; Belk 1988, 2013; Tian and Belk 2005). Based on the considerations of the theoretical background, Table 1 contrasts how the extended self and social identity determine the perception of a VA as a team member.

Previous research has stated that VAs can change how we live and how we work (Wang and Siau 2018; Dias et al. 2019); thus, employees and organizations need to find out how to collaborate with VAs within their virtual teams (Seeber et al. 2018). People spend a large part of their lives at their workplaces, where they build and maintain complex social relationships (Ellemers 2004). Their work and team colleagues hence represent important social resources through which individuals build their social identity and develop in-group and out-group behaviors (Tajfel and Turner 1986). Thus, the questions of whether VAs are perceived as part of these social resources and whether they influence the identity of employees remain unanswered. As most VAs are designed as supportive tools (Lamontagne et al. 2014) and not as equivalent virtual team members, they still remain IS (Luger and Sellen 2016). Therefore, it can be assumed that collaborating with a VA as a chat partner or with a human chat partner impacts the identification with that chat partner. We therefore developed the following hypothesis:

H1:

Virtually collaborating with a VA or a human chat partner impacts the identification with the chat partner.

VAs can increase collaboration within virtual teams (Bittner et al. 2019; Seeber et al. 2020a). However, when employees use VAs as supportive tools for solving work-related tasks, it is likely that they interact less with their virtual human team partners. Nevertheless, the time employees spend with their virtual team impacts the team identification (Massey et al. 2003). Therefore, we derived the following hypothesis:

H2:

Identification with the human team is lower after collaborating with a VA than before.

Furthermore, Carter et al. (2012) have shown that young students extended their self-concepts by including the capabilities of their smartphones. According to Tian and Belk (2005) as well as Belk (2013), digital tools or technology might also be considered as part of one’s extended self. This identification and enhancement might also be attained by using, and thus incorporating, the capabilities of a VA in a certain context, such as virtual collaboration at the workplace. It remains unclear whether a new technology such as a VA will be perceived as part of one’s extended self. Thus, we derived the following hypothesis:

H3:

Virtually collaborating with a VA or a human chat partner impacts the perception of the respective collaboration partner as part of one’s extended self.

Research has shown that VAs are perceived as supportive technology (Brachten et al. 2020). However, it still needs to be researched what role such technology plays in self-identification at the workplace. Social identity theory and the extended self offer two alternative pathways to maintain and form an individual’s identity (Tajfel and Turner 1986; Belk 1988). According to social identity theory, identification with other (social) actors leads to a sense of belonging to the group. Those social actors could be human team members or VAs (Edwards et al. 2019). However, perceiving VAs as social actors (Edwards et al. 2019) may contradict the perception of VAs as technology (Lamontagne et al. 2014; Carter et al. 2015). Therefore, it is possible that the approaches of social identity and the extended self interfere in virtual collaboration with VAs. Based on these assumptions, we infer that individuals’ identification with the team contradicts their identification with technology as a part of their extended self. We, therefore, derive the following hypothesis:

H4:

The individual’s identification with the team negatively correlates with the individual’s identification with technology as a part of their extended self.

4 Method

4.1 Participants

In this study, we conducted a laboratory experiment to examine how VAs in virtual teams are perceived when they assist individuals in performing tasks. The experiment was conducted in a lab at a German university between November 12, 2019 and February 10, 2020. We invited people via email, social network sites, and direct contact. Participation was voluntary and could be terminated without providing any reasons. As prerequisites, participants had to be at least 18 years old and experienced in teamwork within an organization. In total, 50 people took part in our study. We randomly assigned the participants into two groups, resulting in a well-balanced sample of 25 participants for each condition. The groups were formed ensuring that the proportion of women and men was approximately equal by frequently checking the distribution of gender across groups. If the distribution of subjects was skewed, the smaller group was prioritized. However, due to extreme responding indicating a response bias, we excluded four participants from the total sample. This yielded a total of 46 participants (24 in the VA group). In the control group, the participants were asked to perform a task with the help of a human chat partner. In our experimental group, the participants were asked to solve the same task using a VA. In both cases, the collaboration with the counterpart was possible via the online chat platform Slack. In both groups, a trained experimenter supervised the subjects to secure the subjects’ attention during the course of the study. Overall, 84% of the participants were female (N = 39), and ages ranged from 18 to 63 (M = 23.1, SD = 7.54). Furthermore, 73% of the participants had passed the equivalent of their A-levels, while 15% held a bachelor’s degree.
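
The balancing rule described above (check the gender distribution and prioritize the smaller group) can be illustrated with a short sketch. This is only one possible reading of the procedure, not the authors' actual protocol: the function name `assign_balanced`, the group labels, and the participant list are hypothetical.

```python
import random


def assign_balanced(participants, seed=0):
    """Assign (participant_id, gender) pairs to two conditions.

    Assumed rule: a participant goes to the condition that currently
    holds fewer people of the same gender; ties are broken at random.
    """
    rng = random.Random(seed)
    conditions = ("VA", "human")
    counts = {c: {} for c in conditions}  # condition -> gender -> count
    assignment = {}
    for pid, gender in participants:
        n_va = counts["VA"].get(gender, 0)
        n_human = counts["human"].get(gender, 0)
        if n_va < n_human:
            chosen = "VA"
        elif n_human < n_va:
            chosen = "human"
        else:
            chosen = rng.choice(conditions)
        counts[chosen][gender] = counts[chosen].get(gender, 0) + 1
        assignment[pid] = chosen
    return assignment, counts


# Hypothetical sample of 50 people (the gender split is illustrative only).
sample = [(i, "m" if i % 5 == 0 else "f") for i in range(50)]
assignment, counts = assign_balanced(sample)
print(counts)
```

With this rule, the two conditions never differ by more than one participant per gender, which matches the stated goal of an approximately equal proportion of women and men.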

4.2 Materials

For our lab experiment, we used a set of questionnaires and modified scales to measure the constructs of interest. These were composed of questions on the extended self, social identity theory, demographic data, perceived workload, satisfaction, and the evaluation and perception of the VA. The analyses were calculated using the software tools Jamovi (1.0.8.0) and SPSS Statistics (Version 25). All data were presented and gathered via the LimeSurvey interface (Version 3.17.5).

4.2.1 Virtual Assistant

To examine how social identity is influenced and whether a VA expands one’s own self, we developed a text-based system with the help of Google’s cloud service DialogFlow. By using underlying ML technologies, this platform provides easy access to the development of natural and rich conversational interfaces (Canonico and Russis 2018).

To keep the interaction with the VA as simple as possible, we developed a system using a text-based interface (Araujo 2018), which was integrated into the online chat platform Slack, one of the most widespread systems for simplified organizational communication. Participants were able to interact with the VA simply by using a keyboard and computer screen (cf. Fig. 1). We explicitly avoided using further influential factors, such as voice commands or embodied avatars, to keep the interaction straightforward. Moreover, embodiment does not necessarily affect social behavior (Schuetzler et al. 2018). The VA supported the participants in handling the task by providing answers based on distinct keywords to questions posed. The feedback included a question–answer component (Morrissey and Kirakowski 2013; Lamontagne et al. 2014), which could be queried to gain information, support, and instruction about the specific task. However, the VA was only able to support the user in solving the task by giving applicable hints and did not provide an actual solution for the task.

We deliberately chose aspects such as response time to be comparable between both groups to reduce potential influences on the performance and identification with the team member (Massey et al. 2003). Furthermore, the name of the VA (DialogFlow Bot) directly points to a VA as a collaboration partner. Therefore, the subjects should be aware that they were interacting with either a human or a VA. Although our VA had basic conversational skills and social cues such as ‘Ask to start’, ‘Tips and advice’, ‘Excuse’ or ‘Greeting and farewell’ (Feine et al. 2019), we did not aim to vary specific social cues between the VA and the human (Feine et al. 2019), because that was not our research focus.

We aimed to provide a medium level of social cues to ensure that the VA does not influence the results in one specific direction. Implementing more social cues may favor the perception of the VA as a social actor. In contrast, fewer social cues could increase the probability of perceiving the VA as a technical tool. With this, we ensured that potential differences in the perception of the team member are due to the team member’s nature (VA or human). To summarize, the goal is not to deceive the subjects about the chat partner but to investigate the difference in perception of the VAs and humans based on the subjects’ awareness of the chat partner.

To ensure that the given task is realistic but manageable during the experiment, we conducted a pre-study to verify its suitability. This approach also served as verification of the operability of the VA to guarantee a seamless collaboration during the experiment. The test was performed with a sample of 10 students (6 female, 4 male) with ages ranging from 22 to 31 (M = 25), who were randomly selected at a university. We compared a text-based task (TBT) with the critical path method (CPM). The TBT required participants to read texts about topics that do not rely on previous knowledge. In contrast, the CPM sorts activities according to their dependencies and logical order for determining the overall duration. Both tasks are commonly performed in organizations. The time limit for the execution was 10 minutes. We measured the perceived workload using the NASA Task Load Index (NASA-TLX). On average, participants given the CPM task achieved higher NASA-TLX scores (M = 12.5, SD = 3.85) than the TBT group (M = 6.36, SD = 4.06). This difference of 6.13 was significant (95% CI [0.35, 11.91], t (8) = 2.44, p = 0.040). Furthermore, it represents a large effect, d = 0.98. We assess the CPM task to be more demanding of participants compared to the TBT. Hence, participants benefit more from a VA when being assisted with the CPM, justifying its choice for the experiment.
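
The reported test statistic and confidence interval can be approximately retraced from the summary statistics with a pooled-variance (Student's) t-test. The sketch below is illustrative only and rests on two assumptions not stated in the text: that the ten pre-study participants were split into two groups of five (consistent with 8 degrees of freedom), and that a classical equal-variance test was used. The value 2.306 is the tabulated two-sided 95% critical value of the t distribution for 8 degrees of freedom.

```python
import math


def pooled_t_test(mean_diff, sd1, n1, sd2, n2, t_crit):
    """Student's t-test from summary statistics (equal variances assumed).

    Returns the t statistic and the confidence interval of the mean
    difference for the supplied critical value.
    """
    pooled_var = ((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2)
    std_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    t_stat = mean_diff / std_error
    ci = (mean_diff - t_crit * std_error, mean_diff + t_crit * std_error)
    return t_stat, ci


# Reported values: difference 6.13, SD 3.85 (CPM) and 4.06 (TBT).
# Group sizes of 5 and 5 are an assumption derived from df = 8.
t_stat, (ci_low, ci_high) = pooled_t_test(6.13, 3.85, 5, 4.06, 5, t_crit=2.306)
print(round(t_stat, 2), round(ci_low, 2), round(ci_high, 2))
```

Because the inputs are rounded, the recomputed values agree with the reported ones only up to about one unit in the second decimal place.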

4.2.2 Social Identity

We used two different questionnaires to measure collective social identity as well as personal identification with the team. For identification with the team, we used the About-Me Questionnaire (Maras et al. 2018), in which the respondents were first asked to indicate how much they felt they belonged to the social group at their workplace. This questionnaire consists of four items, which are rated on a five-point Likert scale. One example item was “I like being with my team.” The subscale of the About-Me Questionnaire had a medium-to-high reliability for the first (α = 0.759) and second (α = 0.732) measurement time points. The About-Me Questionnaire was queried both before and after the interaction with the chat partner to determine a possible change of the specific social identity. In addition to the two measurement time points, we asked whether in the interaction the VA or human chat partner was perceived as part of the social group at work. This took place after the chat interaction. For this purpose, we used a modified About-Me Questionnaire (Identification with the chat partner). An example item was “I am similar to my virtual assistant.” We decided to use the scale directed toward the chat partner to check for possible differences between the general social identity attitude and the social identity attitude toward the interaction scales. The subscale of the modified About-Me Questionnaire had a high reliability, α = 0.835.
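
The reliability coefficients reported for the scales are Cronbach's α values. As a minimal sketch of how such a coefficient is obtained from item-level responses, the following uses the standard formula α = k/(k − 1) · (1 − Σ item variances / variance of the sum score). The response matrix is invented for illustration and is not data from the study.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of rows (one row of item scores per person)."""
    k = len(responses[0])
    if k < 2:
        raise ValueError("at least two items are required")
    # Sample variance of each item across participants.
    item_variances = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of the participants' sum scores.
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical answers of five participants to a four-item, five-point scale.
example = [
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 4, 5],
    [2, 3, 2, 2],
    [4, 4, 3, 4],
]
print(round(cronbach_alpha(example), 3))
```

Values closer to 1 indicate that the items vary together, which is what the "high reliability" statements in this section refer to.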

4.2.3 The Extended Self

To measure the extended self, we used the extended self scale by Sivadas and Machleit (1994). The scale is largely based on Belk's (1988) view of the extended self. With the scale, Sivadas and Machleit (1994) aimed to assess the degree of incorporation of possessions into the extended self. The scale consists of six components scored on a seven-point Likert scale. The subscale of the general extended self scale (GES) had high reliability, α = 0.839. We chose the scale because it could feasibly be adapted to a VA as the object considered in the items. After the chat interaction with the VA or the human, the participants had to answer an adapted version of the extended self scale (AGES) related to the specific chat partner. The AGES measures to what extent the subjects perceive the chat partner as part of their self. An example item was “My virtual assistant is part of what I am.” The subscales of the second measurement scored a high reliability, α = 0.886.

4.2.4 NASA-TLX

To determine the perceived workload of the task, we used the NASA-TLX (Galy et al. 2012), a valid measurement developed by the National Aeronautics and Space Administration (NASA; Hart and Staveland 1988). Examining the perceived workload is important to check whether the new VA influences the performance due to the potential need for increased cognitive resources to interact with a new technology. This assessment tool has successfully been used in several research approaches and proven to be valuable for laboratory experiments (Rubio et al. 2004; Noyes and Bruneau 2007; Cao et al. 2009). The NASA-TLX includes the following six subjective subscales: (1) mental demand, (2) physical demand, (3) temporal demand, (4) performance, (5) effort, and (6) frustration (Hart 2006, p. 904). Mental demand explains how much cognitive activity is needed, and physical demand, in contrast, explains how much manual activity is needed. Temporal demand represents the perceived time pressure. Performance describes the perception of one’s own personal accomplishment, effort is the opinion of how much work had to be done to reach a result, and frustration refers to the level of disappointment during the execution of a task. The subscale scored a high reliability, α = 0.808.
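
As an illustration of how the six subscales can be combined into a single workload score, the sketch below computes the unweighted mean of the subscale ratings (often called "Raw TLX"). Whether the study used this unweighted variant or the weighted procedure with pairwise comparisons is not stated here, so the scoring rule, the dictionary keys, and the example ratings are assumptions.

```python
SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Unweighted NASA-TLX score: mean of the six subscale ratings."""
    missing = [name for name in SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscale ratings: {missing}")
    return sum(ratings[name] for name in SUBSCALES) / len(SUBSCALES)


# Hypothetical ratings of one participant (rating range is illustrative).
participant = {
    "mental_demand": 15,
    "physical_demand": 3,
    "temporal_demand": 14,
    "performance": 9,
    "effort": 13,
    "frustration": 6,
}
print(raw_tlx(participant))
```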

4.2.5 Satisfaction

To analyze the perceived satisfaction with the chat interaction via the communication interface, we used the possession satisfaction index (PSI) by Scott and Lundstrom (1990). Measuring the perceived satisfaction may allow us to reveal potential influences that could be caused by the individual perception of the interaction. The PSI uses a seven-point semantic differential scale and consists of three bipolar items: (1) satisfied/dissatisfied, (2) pleased/displeased, and (3) favorable/unfavorable. The PSI showed high reliability, α = 0.924.

4.3 Procedure

We divided our experiment into one experimental group and one control group. The two groups were tested alternately and told to treat the situation as if they were at a workplace they were used to. In the experimental condition, we asked the participants to solve a task in collaboration with a VA. In the control condition, we replaced the collaboration partner with a human chat partner. The experiment followed the procedure described below. All major steps of our experiment are visualized in Fig. 2.

First, we briefed the participants about the experiment. We then asked them to read an introductory text and to start the survey. We reminded the participants to imagine that they were in a normal working situation and to relate the questions to their perception of their current team at work. Initially, we administered general questionnaires on the extended self, social identity theory, and demographic data. In addition to demographic data such as age, gender, and educational level, we also collected information about the respondents’ current professional activity and the industry in which they were working.

After that, we asked both groups to solve a CPM. To compare performance between the groups, we awarded a point for each correct path and node. This yielded a maximum achievable score of 28. The goal was to plan a research project for a market research unit of a large company. Participants had to arrange an unordered list with various process steps (such as “develop study idea,” “literature research,” “conducting the study,” and “develop methodology”) to identify the minimal throughput time. They were to read an introductory text and an example to gain a rough understanding of the task, and we told them that they would have to solve a similar task shortly.
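
To illustrate what identifying the minimal throughput time involves, the following minimal sketch computes the longest (critical) path through a small activity-on-node network. The step names are taken from the examples above; the durations and the dependency structure are hypothetical and do not reproduce the task used in the experiment.

```python
def critical_path(durations, predecessors):
    """Return (minimal throughput time, critical path) for an acyclic network.

    durations: dict mapping each step to its duration.
    predecessors: dict mapping a step to the steps that must finish first.
    """
    finish = {}      # earliest finish time per step
    best_pred = {}   # predecessor that determines the earliest start

    def earliest_finish(step):
        if step not in finish:
            start, best_pred[step] = 0, None
            for pred in predecessors.get(step, []):
                pred_finish = earliest_finish(pred)
                if pred_finish > start:
                    start, best_pred[step] = pred_finish, pred
            finish[step] = start + durations[step]
        return finish[step]

    # The step that finishes last determines the throughput time
    last = max(durations, key=earliest_finish)
    path, step = [], last
    while step is not None:
        path.append(step)
        step = best_pred[step]
    return finish[last], path[::-1]


# Hypothetical durations (in weeks) and dependencies
durations = {
    "develop study idea": 2,
    "literature research": 3,
    "develop methodology": 4,
    "conducting the study": 5,
}
predecessors = {
    "literature research": ["develop study idea"],
    "develop methodology": ["develop study idea"],
    "conducting the study": ["literature research", "develop methodology"],
}
total, path = critical_path(durations, predecessors)
print(total, path)
```

In this hypothetical network, the study can only start once the longer of the two parallel steps has finished, so the critical path runs through the methodology step.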

We informed the experimental group that they would have the support of a VA that was well versed in the CPM, whereas we told the control group that they would be contacting a human chat partner. Both the VA and the human chat partner could be contacted via a Slack chatroom. To familiarize them with the interaction, we instructed the participants to introduce themselves to the assistant (or human chat partner), whereupon the assistant (or human chat partner) guided them through a tutorial dialog. After this familiarization phase, we provided the CPM task, which the participants had to solve within ten minutes. We advised them to contact the VA (or human chat partner) whenever questions arose. To prompt interactions with the VA, we designed the task so that the participants did not have all the information required for the solution in advance. After ten minutes of processing time, the examiner received the solution. We then asked the participants to continue the survey. With the following questions, we aimed to evaluate the assistant and assess their skills during the task. Subsequently, we administered the questionnaires on social identity theory and the extended self a second time to determine a possible difference in perception. After the last question was completed, we provided a short written debriefing to the respondents to explain what had been examined in the study.

To counteract possible disruptive factors that can arise from interaction with a real human in the control group, the human chat partners followed a semi-structured guideline to ensure that the information provided was as similar as possible to that of the VA. The chat partners were controlled by one experimenter, who switched to the adjoining room for both conditions.

4.4 Influence of the Perceived Workload, Satisfaction, and Demographics on the Groups

To ensure that the results would not be unduly influenced by further variables such as the age, gender, or education of the participants, satisfaction with the chat interaction, or the perceived workload, we conducted the following analyses. An examination of demographic influences on the main constructs of the study revealed no significant correlation of age or gender with the extended self and social identity scales. However, we observed a small correlation between age and the About-Me Questionnaire (Identification with the team), r (46) = 0.313, p = 0.034. Additionally, a check for group differences between the various education levels did not show any significant differences in the (modified) About-Me Questionnaire (Identification with the chat partner), the GES (Perception of technology of one’s self), or the AGES (Perception of the chat partner as part of one’s self). The mean scores of both groups revealed a medium perceived workload. To check for a potential difference, we conducted a t-test for independent samples, which was appropriate given the non-significant Levene and Shapiro–Wilk tests. Overall, there was no significant difference between the VA group (M = 10.7, SD = 3.16) and the human chat partner group (M = 11.2, SD = 3.65), p = 0.611 and d = −0.129. Furthermore, the data did not show a difference between the VA chat partner group (M = 2.88, SD = 1.56) and the human chat partner group (M = 3.11, SD = 1.89) regarding the satisfaction score after the chat interaction, p = 0.113 and d = −0.134.
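
The group comparisons above rest on the independent-samples t statistic and Cohen's d. The following minimal sketch shows both computations with a pooled standard deviation, which presupposes equal variances as indicated by a non-significant Levene test. The function names and the example scores are hypothetical; the p value would additionally require the t distribution from a statistics package.

```python
from math import sqrt
from statistics import mean, variance


def pooled_sd(a, b):
    """Pooled standard deviation of two independent samples."""
    na, nb = len(a), len(b)
    return sqrt(((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2))


def student_t(a, b):
    """Independent-samples t statistic and its degrees of freedom."""
    na, nb = len(a), len(b)
    t = (mean(a) - mean(b)) / (pooled_sd(a, b) * sqrt(1 / na + 1 / nb))
    return t, na + nb - 2


def cohens_d(a, b):
    """Standardized mean difference based on the pooled standard deviation."""
    return (mean(a) - mean(b)) / pooled_sd(a, b)


# Hypothetical workload scores for two small groups
va_group = [1, 2, 3, 4, 5]
human_group = [2, 3, 4, 5, 6]
t, df = student_t(va_group, human_group)
d = cohens_d(va_group, human_group)
print(round(t, 3), df, round(d, 3))
```

A negative d, as in the values reported above, simply means that the first group scored lower than the second.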

To check whether satisfaction with the interaction and perceived workload are related, a correlation was calculated between the two variables. To reveal insights about the two groups, we conducted correlations separately for each group. Satisfaction was positively correlated with perceived workload r (24) = 0.662, p < 0.001 in the VA group but not in the human group, r (22) = 0.204, p = 0.363. Table 2 presents further significant correlations in the VA group between perceived satisfaction and the single items of the NASA-TLX score.
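
The notation r (df) used here reports the Pearson coefficient together with its degrees of freedom, df = n − 2. The following minimal sketch computes the coefficient and converts it into the t statistic on which its significance test is based. The function names are hypothetical, and the p value itself would again require the t distribution from a statistics package.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson product-moment correlation of two equally long sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def r_to_t(r, n):
    """t statistic and degrees of freedom for testing r against zero."""
    df = n - 2
    return r * sqrt(df / (1 - r ** 2)), df


# r and df as reported for the VA group: r (24) = 0.662, hence n = 26
t, df = r_to_t(0.662, 26)
print(round(t, 2), df)
```

Because the t statistic grows with both r and n, the same coefficient can be significant in one group and non-significant in a smaller one.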

5 Results

In this section, first, we check the observed major scales’ (GES, AGES, About-Me, and Modified About-Me) reliability and validity measures (Cronbach and Meehl 1955; O’Leary-Kelly and Vokurka 1998; Peters 2018). Second, we introduce the results regarding social identity theory and the extended self. Table 3 summarizes the values for composite reliability, average variance extracted (AVE), and construct validity. The comprehensive results are shown in the Appendix (available online via http://link.springer.com), including factor loadings as well as correlation coefficients for each item of the major scales. In summary, the described constructs explain on average more than 50% of the variance (Table 3). Regarding the validity measurements, construct validity shows that the modified About-Me Questionnaire might be linked to the AGES.
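
Composite reliability and AVE are both derived from the factor loadings of a construct's items. As a minimal sketch, assuming standardized loadings and the commonly used formulas CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = Σλ² / k, the computation looks as follows. The function names and loadings are hypothetical and are not the values from Table 3.

```python
def composite_reliability(loadings):
    """Composite reliability from standardized factor loadings."""
    squared_sum = sum(loadings) ** 2
    error_variance = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_variance)


def average_variance_extracted(loadings):
    """Mean share of item variance explained by the construct."""
    return sum(l ** 2 for l in loadings) / len(loadings)


# Hypothetical standardized loadings of a three-item construct
loadings = [0.8, 0.8, 0.8]
print(round(composite_reliability(loadings), 3))
print(round(average_variance_extracted(loadings), 2))
```

An AVE above 0.5 corresponds to the statement that a construct explains more than 50% of the variance of its items.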

5.1 Social Identity

To check for potential group differences regarding the distinct social identity questionnaires, we conducted a one-way ANOVA. According to Levene’s test for equality of variances, we cannot assume equality for the collective identity orientation scale (F (1,44) = 6.294, p = 0.016), thus we chose the more robust Welch’s one-way ANOVA. For collective identity orientation, the VA group (M = 2.18, SD = 0.364) differs significantly from the human (M = 2.82, SD = 0.711) group, F (1,30.7), p < 0.001.
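
For two groups, Welch's one-way ANOVA is equivalent to the squared Welch t statistic, with the denominator degrees of freedom given by the Welch–Satterthwaite approximation. The following minimal sketch computes both from summary statistics. The function name and the example values are hypothetical; the group sizes underlying the analysis above are not restated here, so the reported result is not reproduced.

```python
def welch_f(mean1, sd1, n1, mean2, sd2, n2):
    """Welch F statistic and denominator df for two groups.

    Uses summary statistics; the numerator df is 1 for two groups.
    """
    se1, se2 = sd1 ** 2 / n1, sd2 ** 2 / n2   # squared standard errors
    f = (mean1 - mean2) ** 2 / (se1 + se2)    # equals the squared Welch t
    df2 = (se1 + se2) ** 2 / (se1 ** 2 / (n1 - 1) + se2 ** 2 / (n2 - 1))
    return f, df2


# Hypothetical summary statistics
f, df2 = welch_f(10, 2, 10, 12, 2, 10)
print(round(f, 2), round(df2, 1))
```

Unequal variances or group sizes reduce the denominator degrees of freedom below n1 + n2 − 2, which explains non-integer values such as the 30.7 reported above.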

To examine social identification with the specific chat partner (bot or human), a linear regression model was calculated that predicts the score on the modified About-Me Questionnaire based on the participant’s group and the control variables age, gender, satisfaction, and perceived workload. According to Levene’s test of equality of variances (p = 0.484) and the Shapiro–Wilk test of normality (p = 0.713), we assume equality of variances as well as normal distribution. Results of the multiple linear regression model indicated no significant effect overall, F (5,49) = 1.44, p = 0.230, R² = 0.153. The individual predictors were examined further and indicated that satisfaction (t = –2.18, p = 0.035) is a significant predictor in the model (Table 4).

H1 stated that virtual collaboration with a VA, compared to a human partner, affects social identity, that is, the degree of identification with the chat partner. This is not supported by the findings.

To test within each group whether identification with the teams and colleagues differs before and after solving the task, we conducted a paired samples t-test for group differences with a 95% confidence interval and the two measurements of the About-Me Questionnaire as paired variables for each group. For the VA group, the Shapiro–Wilk test of normality was non-significant (p = 0.173), and no violation of normality was therefore assumed. On average in the VA group, the first measurement (M = 3.58, SD = 0.810) of the About-Me Questionnaire was slightly higher than the second measurement (M = 3.34, SD = 0.638). This difference was significant t (23) = 3.15, p = 0.004, with a medium-sized effect (d = 0.64). Therefore, the results support H2, indicating that people who collaborate with VAs indeed identify less with their human team after interaction with the VA than they did before. For the human group, the Shapiro–Wilk test of normality was also non-significant (p = 0.056), so no violation of normality was assumed. Thus, a paired samples t-test was conducted for the human group. The test showed no significant differences (p = 0.773, d = −0.063) between the first measurement of the About-Me Questionnaire (M = 3.38, SD = 0.427) and the second measurement (M = 3.33, SD = 0.633).
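
The paired-samples t-test works on the per-participant differences between the two measurements. The following minimal sketch computes the t statistic, its degrees of freedom, and an effect size defined as the mean difference divided by the standard deviation of the differences. This definition of d is an assumption; under it, d = t/√n, so t = 3.15 with 24 pairs gives d ≈ 0.64. The function name and the example scores are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(first, second):
    """Paired-samples t statistic, degrees of freedom, and effect size d."""
    diffs = [a - b for a, b in zip(first, second)]
    n = len(diffs)
    sd_diff = stdev(diffs)
    t = mean(diffs) / (sd_diff / sqrt(n))
    d = mean(diffs) / sd_diff   # assumed definition: standardized mean difference
    return t, n - 1, d


# Hypothetical About-Me scores before and after the task
before = [4, 5, 6, 7]
after = [3, 3, 5, 5]
t, df, d = paired_t(before, after)
print(round(t, 3), df, round(d, 3))
```

A positive t here means that the first measurement was higher than the second, which matches the direction of the effect described for the VA group.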

5.2 The Extended Self

To examine the role of the extended self in the context of social identity and virtual collaboration, we conducted group comparisons and correlations. We analyzed the score of the GES as well as the score of the AGES regarding the chat interaction used in the experiment.

To reveal potential influences of the groups and control variables on the identification with the chat partner (AGES) as part of one’s self, we applied a linear regression model. Levene’s test for equality of variances was not significant for the AGES (p = 0.279); thus, equality of variances was assumed. Results of the multiple linear regression model indicated no significant effect of the group (human or VA) or the control variables age, gender, satisfaction, and perceived workload on the identification with the chat partner as part of one’s self (AGES), F (5,49) = 0.666, p = 0.652, R² = 0.0768. The individual predictors were examined further, and none of them were significant (Table 5). These results do not support an impact of the groups; thus, H3 is not supported by the findings.

Furthermore, we investigated the relationship between the two scales of the extended self and the perception of the chat partner (VA and human) as being part of one’s social group at work. To this end, we conducted a bivariate correlation overall for both groups as well as separately for each group. Overall, the GES score, r (46) = 0.467, p = 0.001, and AGES score, r (46) = 0.589, p < 0.001, showed significant positive correlations with the modified About-Me Questionnaire. Analyzing the relationship for the VA group revealed a significant positive correlation between the GES score and the modified About-Me Questionnaire, r (24) = 0.486, p = 0.016. Likewise, the AGES score correlated significantly, r (24) = 0.641, p < 0.001. The human chat partner group showed a significant positive correlation only between the AGES score and the modified About-Me Questionnaire, r (22) = 0.540, p = 0.009. In this group, the correlation between the GES score and the modified About-Me Questionnaire was not significant, r = 0.215, p = 0.336. To summarize, the results do not support a negative relationship between individuals’ identification with the team and individuals’ identification with technology as a part of their extended self (H4). Instead, the results revealed a positive relationship.

6 Discussion

6.1 Key Findings

In this study, we examined how a VA affects social identity and the extended self in virtual collaboration. First, we did not find a significant impact of virtual collaboration with a VA, compared to a human partner, on social identity, that is, on the degree of identification with the team (H1). In this context, VAs may not differ from humans as team members. This is consistent with the results of Edwards et al. (2019), who found that VAs could act as equal social actors.

However, a key finding of this paper is that people who collaborate with VAs identify less with their (human) team after their interaction with the VA than they did before (H2). This medium-sized effect indicates that working with VAs could influence the social identity of a person in the context of virtual collaboration. This may be explained by the fact that the person feels more independent and able to solve the task alone. Even if, according to Young-Jae et al. (2020), people increasingly face difficulties in expressing the uniqueness of humans compared to AI applications, VAs seem to reduce the social identification with team members. This may be explained by people feeling less connected to their team after interacting solely with the VA. However, this does not appear to be due to an emotional attachment to the VA, as You and Robert (2018) found a connection between team identity and emotional attachment to VAs. Therefore, further questions arise for future IS research: How should we design a VA in order to strengthen the feeling of being connected to the team? How important is the role of identification with one’s own team for future work? What impact will VAs have on team collaboration? What implications will VAs have for the digital workplace?

There is no significant difference in the perceived workload of the task and the achieved score between the group supported by a VA and the group assisted by another human. The workload of solving the CPM assisted by the VA is therefore neither perceived as higher nor as lower. This result is contrary to Moreno et al. (2001) and Brachten et al. (2020), who were able to show that individuals supported by VAs outperform humans who did not use a VA. Furthermore, Mechling et al. (2010) demonstrated that groups advised by a VA reach better outcomes. However, a positive lesson that can be drawn from this is that the task-solving with the VA did not put any additional strain on the participants in solving the tasks. In this respect, the support by a VA seems to be similar to the support by another person.

The results do not suggest an influence of collaboration with a VA or a human chat partner on the perception of the respective collaboration partner as part of one’s extended self (H3). According to identity research, the formation of identity and its extension is a dynamic process that adapts over time (Burke and Stets 2009; Carter et al. 2015). At the point of introducing a new technology, the participants did not perceive the VA and the human chat partner differently regarding the chat partner as a resource for maintaining or enhancing the self.

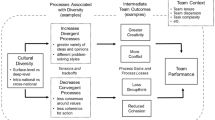

6.2 Implications for Theory: The New Concept of Virtually Extended Identification

As a key finding and in contradiction to H4, the study revealed that someone who identifies with their team members is also more likely to identify with the technology as a part of their extended self and vice versa. This highlights a possible connection between the theory of social identity and the concept of the extended self, as some literature hinted at. We found a positive correlation between the individual’s identification with the team and the individual’s identification with technology as a part of their extended self (H4 not supported). Particularly, for social identification with technology, such as VAs as team members (Seeber et al. 2020a), the underlying concept of the extended self could be considered to explain upcoming interactions. Considering individuals’ mental processes in social groups, individuals divide other team members into either their in-group or out-group. They apply social rules and determine the value of their own group related to other groups (Tajfel and Turner 1986). This conceptualization does not sufficiently consider that technology, specifically, a VA, is capable of being a virtual team member. Working with a VA as a virtual team member might enrich one's social group by perceiving the VA as a team member of the group (external perspective). Furthermore, a VA might support one’s self-esteem by positively identifying with the VA’s characteristics and capabilities, which might lead to enhancing one’s human capabilities (internal perspective). Therefore, VAs may be externally attributed to one’s in-group as a team member or be part of one’s in-group by internally attributing the VA to one’s self. However, past research does not differentiate the two pathways that we examined with H4.

People use newly introduced technology such as a VA and identify with the capabilities and characteristics of these supportive tools when they start to compare themselves with the VA. On the one hand, people feel connected to this technology, which might lead them to improve their own capabilities with the aid of a VA. On the other hand, people then perceive the VA as a social teammate, according to Seeber et al. (2018). This can also be the other way around. Therefore, both concepts are necessary to understand how human behavior is influenced by newly introduced technology such as VAs. Furthermore, analyzing the construct validity has shown that the constructs of the extended self and social identity theory directed toward the VA are connected (Cronbach and Meehl 1955; O’Leary-Kelly and Vokurka 1998). We hence derive that for the context of virtual collaboration, the construct identification with team (members) of the social identity theory and the concept of the extended self are intertwined. Each may represent different facets of the same underlying construct. This becomes evident regarding the aspects of social comparison and positive distinctiveness of the social identity theory and the process of extending the self. People consider personal attributes, other people, groups (e.g., values of the group), or abstract ideas (e.g., morals of society) in regard to their self when forming the self. An extension of the self can take place by regarding these (social) aspects through control (e.g., a technology), knowledge (e.g., a person), or a feeling of belonging (Tajfel and Turner 1986; Belk 1988; Carter et al. 2015). Thus, people compare themselves with people and technology to determine and extend their own identity. This also happens with possessions, such as technology at the workplace (Tian and Belk 2005). By positively identifying with the VA, positive distinctiveness can be brought about, especially in the workplace.

Our findings suggest a positive connection between social identity theory and extended self (H4). We therefore propose combining these two aspects of identification into the overarching construct of virtually extended identification to understand the relationships evolving in virtual collaboration with VAs (see Fig. 3). Virtually extended identification describes the process of maintaining and extending the self by comparing the current self with a VA. On the one hand, the VA substitutes the role of a human collaborator, according to Seeber et al. (2018), Demir et al. (2020), and Panganiban et al. (2020). On the other hand, the VA is also considered as technology, according to Schwabe (2003), Bajwa et al. (2007), Frohberg and Schwabe (2006), and Vahtera et al. (2017). Thus, the observed relationship between the extended self and the social identification with the VA reveals that a VA as a supportive conversational technology has a dual function. This means that people can assess a VA as a social actor as well as a form of technology at the same time. Therefore, virtually extended identification describes the degree to which a person's identity matches the perceived identity of the VA as a team member (social actor as an external attribution to the in-group) and the degree to which the capabilities of the VA are attributed to the person’s self (internal attribution to the in-group by the identification of the VA’s characteristics, values, and capabilities with the self). This dual function of the VA is also based on the results suggesting that VAs do not significantly differ from a human chat partner in their influence on perceived workload and performance (H1 and H3 not supported). However, as the findings imply, satisfaction might have an impact on the identification with the chat partner in the context of virtual collaboration. Thus, companies could save valuable resources by deploying VAs in virtual collaboration as a chat partner. VAs should be deployed both as supportive tools to assist work-related tasks and as members of virtual teams to increase social identity and positive distinctiveness. In this way, the positive aspects of both theories (Lin 2015; Vahtera et al. 2017) could be used to achieve an overarching goal more efficiently. The creation of a social presence through social cues (Feine et al. 2019) could further reinforce these aspects (Franceschi et al. 2009).

Thus, one of the most relevant findings of this study is that social identity and the extended self in virtual collaboration with VAs are not contradictory, as assumed in H4. VAs can be perceived simultaneously as team members and as tools. The boundaries between technology as a collaboration platform and tool and technology as a partner for virtual collaboration seem to blur. However, the question arises as to whether our findings can be generalized since we examined a specific VA in our experiment. In this respect, recent research is currently using many VAs, chatbots, and conversational agents that are purely text-based agents (Hofeditz et al. 2019, p. 201; Diederich et al. 2020; Brachten et al. 2020). We used the social cues that are effective according to current knowledge (Feine et al. 2019) and tried to keep the interference factors, such as the influence of a time limit on team performance (Massey et al. 2003), as low as possible. Our insight into the relationship between social identity theory and the extended self in the context of virtual collaboration with VAs leads to an advanced understanding of machines as teammates and can be explained by the existing IS literature (Schwabe 2003; Waizenegger et al. 2020; Seeber et al. 2020a, b).

6.3 Limitations and Further Research

This study examined the effects of a newly introduced technology. It may be possible that the perception of the VA changes over time by using the VA for a longer period. Further studies may use and compare these findings with studies where VAs are used over longer periods of time. The level of anthropomorphism of a VA and the use of different social cues might also influence the perception of a VA. This aspect should be considered in future research.

As we focused on understanding the perception of VAs in the context of social identity and extended self, we examined a single cultural background, namely Central European. Further studies may consider cross-cultural differences in regard to VA adoption. Moreover, further studies may aim for a larger sample size to uncover effects that remained undetected here. Furthermore, we strongly recommend testing the proposed construct of virtually extended identification in different collaborative scenarios to take the next steps in understanding identification in the context of virtual collaboration.

Moreover, not only text-based communication but also interaction via speech may have an influence on the perception of VAs (Edwards et al. 2019). Additionally, the collaboration platform into which the VA was integrated could also have influenced the social identity (Hu et al. 2017). Furthermore, the virtual collaboration environment might also be an influencing factor on the perception of the VA. We suggest that future research consider potential differences in virtual collaboration between distinct environments.

7 Conclusion

This study provides new insights regarding social identity theory as well as the concept of the extended self in the context of virtual collaboration. First, it was shown that people who work with VAs identify less with their (human) team after their interaction with a VA. Therefore, collaborative VAs may influence the social identity of a person. Second, this study highlights that someone who identifies the VA as part of their extended self is also more likely to identify with (virtual) team members and vice versa. The revealed intertwining emphasizes that research needs to change its understanding of (social) identification in the context of virtual collaboration with VAs. Neither concept should be regarded in isolation.

This study contributes to social identity theory as well as the extended self by proposing a new construct to understand identification with team members and technology in a collaborative context. The study reveals that the relationship between social identification with (virtual) team members and expanding the self through technology such as VAs is not contradictory but rather that they complement each other. VAs are not only perceived as resources to maintain and extend one’s identity but also as social actors. This implies that research should not separate these concepts but rather combine their specific aspects to understand human behavior in virtual collaboration. To this end, items of both constructs may be combined and evaluated to develop the new virtually extended identification construct. This concept may be better suited for understanding human behavior in the changing landscape of virtual collaboration.

This study also provides practical contributions. VAs are a collaborative tool with a low entry barrier. The findings suggest that the support of a VA is similar to that of a human. Thus, organizations could save valuable resources by using VAs to support employees in their tasks. Especially in the context of a newly introduced technology, one could expect the effort needed to learn the technology to lead to an increase in perceived workload, but no significant effect was observed. However, the results indicate that the collaboration with a VA might lower the identification with other team members. In the worst case, employees may no longer feel part of the human team. Thus, decision makers should take measures to encourage the continued identification with other colleagues when introducing such technology within the organization. At the same time, people might identify VAs as resources for expanding their own capabilities and also see them as social actors during collaboration. Overall, VAs are a resource-saving tool that managers may use to support their human employees. In this context, the introduction of VAs should be accompanied by measures to support the continued social identification with other colleagues, such as social events or gatherings.

References

Andres HP, Shipps BP (2019) Team learning in technology-mediated distributed teams. J Inf Syst Educ 21:10

Araujo T (2018) Living up to the chatbot hype: the influence of anthropomorphic design cues and communicative agency framing on conversational agent and company perceptions. Comput Hum Behav 85:183–189. https://doi.org/10.1016/j.chb.2018.03.051

Aron A, Aron E, Norman C (2003) Self-expansion motivation and including other in the self. In: Fletcher GJO, Clark MS (eds) Blackwell handbook of social psychology: interpersonal processes. Blackwell, Oxford

Bajwa D, Lewis L, Pervan G et al (2007) Organizational assimilation of collaborative information technologies: global comparisons. In: 2007 40th annual Hawaii international conference on system sciences

Bartels J, van Vuuren M, Ouwerkerk JW (2019) My colleagues are my friends: the role of facebook contacts in employee identification. Manag Commun Q 33:307–328. https://doi.org/10.1177/0893318919837944

Belk RW (1988) Possessions and the extended self. J Consum Res 15:139. https://doi.org/10.1086/209154

Belk RW (2013) Extended self in a digital world. J Consum Res 40:477–500. https://doi.org/10.1086/671052

Benbya H, Leidner D (2018) How Allianz UK used an idea management platform to harness employee innovation. MIS Q Exec 17:900–933

Berg MM (2015) NADIA: a simplified approach towards the development of natural dialogue systems. In: Biemann C, Handschuh S, Freitas A et al (eds) Natural language processing and information systems. Springer, Cham, pp 144–150

Bittner E, Oeste-Reiß S, Leimeister JM (2019) Where is the bot in our team? Toward a taxonomy of design option combinations for conversational agents in collaborative work. In: Proceedings of the 52nd Hawaii international conference on system sciences

Brachten F, Brünker F, Frick NRJ et al (2020) On the ability of virtual agents to decrease cognitive load: an experimental study. Inf Syst E-Bus Manag 18:187–207. https://doi.org/10.1007/s10257-020-00471-7

Brown R (2000) Social identity theory: past achievements, current problems and future challenges. Eur J Soc Psychol 30:745–778. https://doi.org/10.1002/1099-0992(200011/12)30:6%3c745::AID-EJSP24%3e3.0.CO;2-O

Burke P (2006) Identity change. Soc Psychol Q 69:81–96

Burke PJ, Stets JE (2009) Identity theory. Oxford University Press, New York

Canonico M, Russis LD (2018) A comparison and critique of natural language understanding tools. In: The Ninth International Conference on Cloud Computing, GRIDs, and Virtualization

Cao A, Chintamani KK, Pandya AK, Ellis RD (2009) NASA TLX: software for assessing subjective mental workload. Behav Res Methods 41:113–117. https://doi.org/10.3758/BRM.41.1.113

Carter M, Grover V (2015) Me, my self, and I(T): conceptualizing information technology identity and its implications. MIS Q 39:931–957. https://doi.org/10.25300/MISQ/2015/39.4.9

Carter M, Grover V, Thatcher JB (2012) Mobile devices and the self: developing the concept of mobile phone identity. In: Lee I (ed) Strategy, adoption, and competitive advantage of mobile services in the global economy. IGI Global, Hershey

Changizi A, Lanz M (2019) The comfort zone concept in a human–robot cooperative task. In: Ratchev S (ed) Precision assembly in the digital age. Springer, Cham, pp 82–91

Chung H, Iorga M, Voas J, Lee S (2017) Alexa, can i trust you? Comput 50:100–104. https://doi.org/10.1109/MC.2017.3571053

Clayton RB, Leshner G, Almond A (2015) The extended iSelf: the impact of iphone separation on cognition, emotion, and physiology. J Comput-Mediat Commun 20:119–135. https://doi.org/10.1111/jcc4.12109

Cronbach LJ, Meehl PE (1955) Construct validity in psychological tests. Psychol Bull 52:281–302. https://doi.org/10.1037/h0040957

Dahling JJ, Gutworth MB (2017) Loyal rebels? A test of the normative conflict model of constructive deviance. J Organ Behav 38:1167–1182. https://doi.org/10.1002/job.2194

Davenport T (2018) The AI advantage how to put the artificial intelligence revolution to work, 1st edn. MIT Press, Cambridge

de Vreede G-J, Briggs RO (2005) Collaboration engineering: designing repeatable processes for high-value collaborative tasks. In: Proceedings of the 38th Hawaii International Conference on System Sciences – 2005

Demir M, McNeese NJ, Cooke NJ (2020) Understanding human–robot teams in light of all-human teams: aspects of team interaction and shared cognition. Int J Hum Comput Stud 140:102436. https://doi.org/10.1016/j.ijhcs.2020.102436

Dias M, Pan S, Tim Y (2019) Knowledge embodiment of human and machine interactions: robotic-process-automation at the Finland government. In: Proceedings of the 27th European Conference on Information Systems

Diederich S, Brendel AB, Kolbe LM (2020) Designing anthropomorphic enterprise conversational agents. Bus Inf Syst Eng 62:193–209. https://doi.org/10.1007/s12599-020-00639-y

Dittmar H (2011) Material and consumer identities. In: Schwartz SJ, Luyckx K, Vignoles VL (eds) Handbook of identity theory and research. Springer, New York, pp 745–769

Edwards C, Edwards A, Stoll B et al (2019) Evaluations of an artificial intelligence instructor’s voice: social identity theory in human–robot interactions. Comput Hum Behav 90:357–362. https://doi.org/10.1016/j.chb.2018.08.027

Ellemers N (2004) Motivating individuals and groups at work: a social identity perspective on leadership and group performance. Acad Manage Rev 29:459–478

Feine J, Gnewuch U, Morana S, Maedche A (2019) A taxonomy of social cues for conversational agents. Int J Hum Comput Stud 132:138–161. https://doi.org/10.1016/j.ijhcs.2019.07.009

Franceschi K, Lee RM, Zanakis SH, Hinds D (2009) Engaging group e-learning in virtual worlds. J Manag Inf Syst 26:73–100. https://doi.org/10.2753/MIS0742-1222260104

Frohberg D, Schwabe G (2006) Skills and motivation in ad-hoc-collaboration. Collect Collab Electron Commer Technol Res. https://doi.org/10.5167/uzh-61366

Galy E, Cariou M, Mélan C (2012) What is the relationship between mental workload factors and cognitive load types? Int J Psychophysiol 83:269–275. https://doi.org/10.1016/j.ijpsycho.2011.09.023

Gnewuch U, Morana S, Maedche A (2017) Towards designing cooperative and social conversational agents for customer service. In: International conference on information systems, p 15

Gnewuch U, Yu M, Maedche A (2020) The effect of perceived similarity in dominance on customer self-disclosure to chatbots in conversational commerce. In: 28th European conference on information systems

Goodbody J (2005) Critical success factors for global virtual teams. Strateg Commun Manag 9:18–21

Guegan J, Segonds F, Barré J et al (2017) Social identity cues to improve creativity and identification in face-to-face and virtual groups. Comput Hum Behav 77:140–147. https://doi.org/10.1016/j.chb.2017.08.043

Hart SG (2006) NASA-task load index (NASA-TLX); 20 years later. In: Proceedings of the human factors and ergonomics society 50th annual meeting – 2006, p 5

Hart SG, Staveland LE (1988) Development of NASA-TLX (Task Load Index): results of empirical and theoretical research. In: Hancock PA, Meshkati N (eds) Advances in psychology. Elsevier, North-Holland, pp 139–183

Haslam A (2004) Psychology in organizations: the social identity approach, 2nd edn. SAGE, London

Hassell MD, Cotton JL (2017) Some things are better left unseen: toward more effective communication and team performance in video-mediated interactions. Comput Hum Behav 73:200–208. https://doi.org/10.1016/j.chb.2017.03.039

Hofeditz L, Ehnis C, Bunker D et al (2019) Meaningful use of social bots? Possible applications in crisis communication during disasters. In: Proceedings of the 27th European conference on information systems

Hu M, Zhang M, Wang Y (2017) Why do audiences choose to keep watching on live video streaming platforms? An explanation of dual identification framework. Comput Hum Behav 75:594–606. https://doi.org/10.1016/j.chb.2017.06.006

Kenny EJ, Briner RB (2013) Increases in salience of ethnic identity at work: the roles of ethnic assignation and ethnic identification. Hum Relat 66:725–748. https://doi.org/10.1177/0018726712464075

Klimchak M, Ward A-K, Matthews M et al (2019) When does what other people think matter? The influence of age on the motivators of organizational identification. J Bus Psychol 34:879–891. https://doi.org/10.1007/s10869-018-9601-6

Knijnenburg BP, Willemsen MC (2016) Inferring capabilities of intelligent agents from their external traits. ACM Trans Interact Intell Syst 6:1–25. https://doi.org/10.1145/2963106

Knote R, Janson A, Söllner M, Leimeister JM (2019) Classifying smart personal assistants: an empirical cluster analysis. In: Proceedings of the 52nd Hawaii international conference on system sciences

Kohler T, Fueller J, Matzler K, Stieger D (2011) Co-creation in virtual worlds: the design of the user experience. MIS Q 35:773. https://doi.org/10.2307/23042808

Kozlowski S, Ilgen D (2007) The science of team success. Sci Am Mind 18:54–61. https://doi.org/10.1038/scientificamericanmind0607-54

Lamontagne L, Laviolette F, Khoury R, Bergeron-Guyard A (2014) A framework for building adaptive intelligent virtual assistants. In: Software engineering/811: parallel and distributed computing and networks/816: artificial intelligence and applications. ACTA Press, Innsbruck, Austria

Leary MR, Tangney JP (2011) Handbook of self and identity, 2nd edn. Guilford Press, New York

Lee C, Jung S, Kim S, Lee GG (2009) Example-based dialog modeling for practical multi-domain dialog system. Speech Commun 51:466–484. https://doi.org/10.1016/j.specom.2009.01.008

Lin C-P (2015) Predicating team performance in technology industry: theoretical aspects of social identity and self-regulation. Technol Forecast Soc Change 98:13–23. https://doi.org/10.1016/j.techfore.2015.05.017

Luger E, Sellen A (2016) “Like having a really bad PA”: the gulf between user expectation and experience of conversational agents. In: Proceedings of the 2016 CHI conference on human factors in computing systems

Maedche A, Legner C, Benlian A et al (2019) AI-based digital assistants: opportunities, threats, and research perspectives. Bus Inf Syst Eng 61:535–544. https://doi.org/10.1007/s12599-019-00600-8

Maniscalco U, Messina A, Storniolo P (2020) The human–robot interaction in robot-aided medical care. In: Zimmermann A, Howlett RJ, Jain LC (eds) Smart innovation, systems and technologies. Springer, Split, pp 233–242

Maras P, Thompson T, Gridley N, Moon A (2018) The “about me” questionnaire: factorial structure and measurement invariance. J Psychoeduc Assess 36:379–391. https://doi.org/10.1177/0734282916682909

Massey AP, Montoya-Weiss MM, Hung Y-T (2003) Because time matters: temporal coordination in global virtual project teams. J Manag Inf Syst 19:129–155. https://doi.org/10.1080/07421222.2003.11045742

McDuff D, Czerwinski M (2018) Designing emotionally sentient agents. Commun ACM 61:74–83. https://doi.org/10.1145/3186591

McTear MF (2017) The rise of the conversational interface: a new kid on the block? In: Future and emerging trends in language technology. Machine learning and big data. Springer, Seville

Mechling LC, Gast DL, Seid NH (2010) Evaluation of a personal digital assistant as a self-prompting device for increasing multi-step task completion by students with moderate intellectual disabilities. Educ Train Autism Dev Disabil 45:422–439

Moreno R, Mayer RE, Spires HA, Lester JC (2001) The case for social agency in computer-based teaching: do students learn more deeply when they interact with animated pedagogical agents? Cogn Instr 19:177–213. https://doi.org/10.1207/S1532690XCI1902_02

Morrissey K, Kirakowski J (2013) ‘Realness’ in chatbots: establishing quantifiable criteria. In: Kurosu M (ed) Human–computer interaction. Interaction modalities and techniques. Springer, Berlin, pp 87–96

Mueller SK, Mendling J, Bernroider EWN (2019) The roles of social identity and dynamic salient group formations for ERP program management success in a postmerger context. Inf Syst J 29:609–640. https://doi.org/10.1111/isj.12223

Nasirian F, Ahmadian M (2017) AI-based voice assistant systems: evaluating from the interaction and trust perspectives. In: Americas conference on information systems, p 10

Norman D (2017) Design, business models, and human-technology teamwork: as automation and artificial intelligence technologies develop, we need to think less about human–machine interfaces and more about human–machine teamwork. Res Technol Manag 60:26–30. https://doi.org/10.1080/08956308.2017.1255051

Noyes JM, Bruneau DPJ (2007) A self-analysis of the NASA-TLX workload measure. Ergonomics 50:514–519. https://doi.org/10.1080/00140130701235232

Nunamaker JF, Derrick DC, Elkins AC et al (2011) Embodied conversational agent-based kiosk for automated interviewing. J Manag Inf Syst 28:17–48. https://doi.org/10.2307/41304605

O’Leary-Kelly SW, Vokurka RJ (1998) The empirical assessment of construct validity. J Oper Manag 16:387–405. https://doi.org/10.1016/S0272-6963(98)00020-5

Panganiban AR, Matthews G, Long MD (2020) Transparency in autonomous teammates: intention to support as teaming information. J Cogn Eng Decis Mak 14:174–190. https://doi.org/10.1177/1555343419881563

Pepple DG, Davies EMM (2019) Co-worker social support and organisational identification: does ethnic self-identification matter? J Manag Psychol 34:573–586. https://doi.org/10.1108/JMP-04-2019-0232

Peters G-JY (2018) The alpha and the omega of scale reliability and validity: why and how to abandon Cronbach’s alpha and the route towards more comprehensive assessment of scale quality. Eur Health Psychol 16:56–59. https://doi.org/10.31234/osf.io/h47fv

Pickard MD, Burns MB, Moffitt KC (2013) A theoretical justification for using embodied conversational agents (ECAs) to augment accounting-related interviews. J Inf Syst 27:159–176. https://doi.org/10.2308/isys-50561

Plotnick L, Hiltz SR, Privman R (2016) Ingroup dynamics and perceived effectiveness of partially distributed teams. IEEE Trans Prof Commun 59:203–229. https://doi.org/10.1109/TPC.2016.2583258

Porck JP, Matta FK, Hollenbeck JR et al (2019) Social identification in multiteam systems: the role of depletion and task complexity. Acad Manage J 62:1137–1162. https://doi.org/10.5465/amj.2017.0466

Quarteroni S (2018) Natural language processing for industry: ELCA’s experience. Inform Spektrum 41:105–112. https://doi.org/10.1007/s00287-018-1094-1

Rubio S, Díaz E, Martín J, Puente JM (2004) Evaluation of subjective mental workload: a comparison of SWAT, NASA-TLX, and workload profile methods. Appl Psychol 53:61–86. https://doi.org/10.1111/j.1464-0597.2004.00161.x

Russell S, Norvig P (2016) Artificial intelligence: a modern approach. Addison Wesley, Munich

Schuetzler RM, Giboney JS, Grimes GM, Nunamaker JF (2018) The influence of conversational agent embodiment and conversational relevance on socially desirable responding. Decis Support Syst 114:94–102. https://doi.org/10.1016/j.dss.2018.08.011

Schultze U (2010) Embodiment and presence in virtual worlds: a review. J Inf Technol 25:434–449. https://doi.org/10.1057/jit.2010.25

Schwabe G (2003) Growing an application from collaboration to management support: the example of Cuparla. doi:10/gg4ms7

Scott C, Lundstrom WJ (1990) Dimensions of possession satisfactions: a preliminary analysis. J Satisf Dissatisfaction Complain Behav 3:100–104

Seeber I, Bittner E, Briggs RO et al (2018) Machines as teammates: a collaboration research agenda. In: Proceedings of the 51st Hawaii international conference on system sciences

Seeber I, Bittner E, Briggs RO et al (2020a) Machines as teammates: a research agenda on AI in team collaboration. Inf Manage 57:103174. https://doi.org/10.1016/j.im.2019.103174

Seeber I, Waizenegger L, Seidel S et al (2020b) Collaborating with technology-based autonomous agents: issues and research opportunities. Internet Res 30:1–18. https://doi.org/10.2139/ssrn.3504587

Shamekhi A, Liao QV, Wang D et al (2018) Face value? Exploring the effects of embodiment for a group facilitation agent. In: Conference on human factors in computing systems

Sivadas E, Machleit KA (1994) A scale to determine the extent of object incorporation in the extended self. Mark Theory Appl 5:143–149

Spohrer J, Banavar G (2015) Cognition as a service: an industry perspective. AI Mag 36:71–86. https://doi.org/10.1609/aimag.v36i4.2618

Stets JE, Biga CF (2003) Bringing identity theory into environmental sociology. Soc Theory 21:398–423. https://doi.org/10.1046/j.1467-9558.2003.00196.x

Stets JE, Burke PJ (2000) Identity theory and social identity theory. Soc Psychol Q 63:224. https://doi.org/10.2307/2695870

Stieglitz S, Brachten F, Kissmer T (2018) Defining bots in an enterprise context. In: International conference on information systems

Tajfel H, Turner JC (1986) The social identity theory of intergroup behavior. In: Austin WG, Worchel S (eds) Psychology of intergroup relations. Nelson-Hall Publishers, Chicago, pp 7–24

Tian K, Belk RW (2005) Extended self and possessions in the workplace. J Consum Res 32:297–310. https://doi.org/10.1086/432239

Vahtera P, Buckley PJ, Aliyev M et al (2017) Influence of social identity on negative perceptions in global virtual teams. J Int Manag 23:367–381. https://doi.org/10.1016/j.intman.2017.04.002

Van Ostrand A, Wolfe S, Arredondo A et al (2016) Creating virtual communities that work: best practices for users and developers of e-collaboration software. Int J E-Collab 12:41–60. https://doi.org/10.4018/IJeC.2016100104

Verhagen T, van Nes J, Feldberg F, van Dolen W (2014) Virtual customer service agents: using social presence and personalization to shape online service encounters. J Comput-Mediat Commun 19:529–545. https://doi.org/10.1111/jcc4.12066

Vignoles VL, Schwartz SJ, Luyckx K (2011) Introduction: toward an integrative view of identity. In: Schwartz SJ, Luyckx K, Vignoles VL (eds) Handbook of identity theory and research. Springer, New York, pp 1–27

Waizenegger L, Seeber I, Dawson G, Desouza KC (2020) Conversational agents – exploring generative mechanisms and second-hand effects of actualized technology affordances. In: Proceedings of the 53rd Hawaii international conference on system sciences

Wang W (2017) Smartphones as social actors? Social dispositional factors in assessing anthropomorphism. Comput Hum Behav 68:334–344. https://doi.org/10.1016/j.chb.2016.11.022

Wang W, Siau K (2018) Artificial intelligence: a study on governance, policies, and regulations. In: MWAIS 2018 proceedings