Abstract

Artificial intelligence has not achieved defining features of biological intelligence despite models boasting more parameters than neurons in the human brain. In this perspective article, we synthesize historical approaches to understanding intelligent systems and argue that methodological and epistemic biases in these fields can be resolved by shifting away from cognitivist brain-as-computer theories and recognizing that brains exist within large, interdependent living systems. Integrating the dynamical systems view of cognition with the massive distributed feedback of perceptual control theory highlights a theoretical gap in our understanding of nonreductive neural mechanisms. Cell assemblies—properly conceived as reentrant dynamical flows and not merely as identified groups of neurons—may fill that gap by providing a minimal supraneuronal level of organization that establishes a neurodynamical base layer for computation. By considering information streams from physical embodiment and situational embedding, we discuss this computational base layer in terms of conserved oscillatory and structural properties of cortical-hippocampal networks. Our synthesis of embodied cognition, based in dynamical systems and perceptual control, aims to bypass the neurosymbolic stalemates that have arisen in artificial intelligence, cognitive science, and computational neuroscience.

Main

The current paradigm of artificial intelligence and machine learning (AI/ML) casts intelligence as a problem of representation learning and weak compute. This view might be summarized as the hope that defining features of biological intelligence will be inevitably unlocked by learning network architectures with the right connectional parameters at a sufficient scale of compute (i.e., proportional to input, model, and training sizes) to be attained in the future. Despite increasingly impressive capabilities, the latest and largest models, like GPT-3, OPT-175B, DALL-E 2, and a recent “generalist” model called Gato [1,2,3,4,5,6], continue a long history of moving the goalposts for these biological features [7,8,9,10,11], which include abstraction and generalization; systematic compositionality of semantic relations; continual learning and causal inference; as well as the persistent orders-of-magnitude performance gaps reflected by low sample-complexity and high energy-efficiency. Instead of presuming that scaling beyond trillion-parameter models will yield intelligent machines from data soup, we infer that understanding biological intelligence sufficiently to formalize its defining features likely depends on characterizing fundamental mechanisms of brain computation and, importantly, solving how animals use their brains to construct subjective meaning from information [12,13,14,15,16,17,18,19]. In this paper, we first interpret the disciplinary history of approaches to these questions to illuminate underexplored hypothesis spaces. Second, we motivate a perceptual control-theoretic perspective and discuss implications for computing in physical dynamical systems. Third, we synthesize properties of mammalian cortical oscillations and structural connectivity to argue for a nonreductive base layer, i.e., a supraneuronal mechanistic substrate, for neurocognitive computing in brains. To conclude, we discuss implications for bridging AI/ML gaps and incentivizing increased cooperation between theoretical and computational neuroscience, autonomous robotics, and efforts to develop brain-like computing models for AI.

Framing an Integrative Neuroscience of Intelligence

To find space for integrative research in the neuroscience of intelligence, we will sketch the historical entanglement of several fields that have addressed neural computation and intelligence. First, current AI/ML research questions and methods range from reinforcement learning and multilayer neural nets to more recent transformers and graph diffusion models. These models, like those mentioned above, can learn impressive solutions in narrow domains like rule-based games and simulations or classification and recognition tasks. However, these domains substantially constrain the nonstationarity and dimensionality of problem sets, to the degree that merely emulating the intelligence reflected by massive amounts of acontextual training data can often yield effective problem-solving. Given the explosive growth, commercial success, and technological entrenchment of AI/ML, researchers and practitioners have, perhaps sensibly, prioritized the short-term reliable incrementalism of scale [20,21,22] over the uncertain and open-ended project of explicating and formalizing biological intelligence. It has been unclear how to encourage sustained consideration of biological insights in the field [23,24,25], especially given the prevalent belief that some combination of current approaches will eventually achieve artificial general intelligence, however defined.

Second, the “cognitive revolution” of the 1950s [26] installed blinders on both cognitive science and AI in their formative years. Reacting to behaviorism’s near total elision of the mind, the advent of computational cognitivism and connectionist neural nets [27,28,29,30,31] conversely stripped humans and other intelligent animals of everything else that adaptively embeds an organism in the world [32,33,34,35,36]. This rejection of agency, embodiment, interaction, homeostasis, temporality, subjectivity, teleology, etc., served to modularize and centralize minds within brains using superficial and incoherent brain–computer metaphors [37,38,39,40]. An overriding focus on the brain as the hardware of a computational mind, thought to fully encapsulate intelligence, may have contributed to the failure of the original computationalist branch of cognitive science to coalesce an interdisciplinary research program as first envisioned [26, 41]. To add to the collateral damage, the same blinders have skewed the conceptual language and methodologies of neuroscience [42,43,44,45] as it developed into a similarly sprawling field.

Third, computational neuroscience, originally a theoretical and analytical blend of physics, dynamical systems, and connectionism [46,47,48,49,50,51,52,53], found a path toward a working partnership with experimental neuroscience [54,55,56,57,58] largely by conforming to the latter’s neurocentric reductionism, exemplified by the 150-year dominance of the neuron doctrine [59, 60]. The most successful form of reductive modeling in neuroscience has typically targeted deductive, data-driven prediction and validation of experimental observations of single-neuron behavior. This biophysical approach is undergirded by the Hodgkin-Huxley formalism [61,62,63] which quantifies a sufficient subset of lower level, physical/chemical processes—e.g., ionic gradients, reversal potentials, intrinsic conductances, (in)activation nonlinearities, etc.—that remain analytically coherent at the subneuronal level of excitable membranes and cellular compartments. In contrast, modeling supraneuronal group behavior has relied on large-scale simulations of simple interconnected units, efficiently implemented as 1- or 2-dimensional point-neuron models (cf. [64]). These models are akin to connectionist nets that optionally feature computationally tractable features [65,66,67,68,69,70,71,72] including spiking units, lateral inhibition, sparse or recurrent connectivity, and plasticity mechanisms based on local synaptic modification rules (in contrast to the global weight-vector updates from the error backpropagation algorithms used to train AI/ML models). The field has yet to unlock network-level or macroscale approaches that match the explanatory or quantitative success of biophysical modeling.

This historical framing suggests that the multidisciplinary triangle of AI, cognitive science, and computational neuroscience has developed mutually interlocking biases, respectively, technology-driven incrementalism, neurocentric cognitivism, and synaptocentric emergentism. The synaptic learning bias has hindered computational neuroscience explanations for cognitive processes at scale, e.g., interregional cortical gating for learning sensory-cued behaviors [73] or embodiment-related questions of brain–body coupling and environmental interactions [34]. A scientific problem-solving culture that encouraged thinking at organismal—even social and societal—scales could have embraced theory-building as an essential, transformative practice [56]. However, on the road from cybernetics to reductive analysis tool, computational neuroscience bypassed systems-level modeling and inductive theory-building, thus shunting the field from the kind of scale that might have complemented AI. We argue below that new integrative approaches could cooperatively break the mutual frustrations of these three fields.

Countering Observer Bias with Embodied Dynamical Control

A common source of the biases described above is the external observer perspective typically assumed in experimental neuroscience [74,75,76], i.e., that experimenters conceptualize their subjects as input-output systems in which the output is identified with behavior. Inverting this perspective entails seeing peripheral sensory transduction and motoneuron firing as mutual and simultaneous causes and effects of cognition and behavior. Paraphrasing Skarda (1999) [77], embodiment-first theories invert our view of cognition from one of integrating isolated channels of sensory information into unified internal models to one of articulating dynamical boundaries within existing global states that already reflect an organism’s cumulative experience in its world (umwelt). In humans, this lifelong context of prior articulations inescapably conditions the meaning that we discern from the momentary flow of experience and, therefore, also guides our planning and future behavior. While Skarda’s argument is strictly noncognitivist and nonrepresentational, we consider these neurodynamical insights more broadly below.

A related position, the dynamical systems view of cognition, was presented by van Gelder (1995) as a viable alternative to computational metaphors [78,79,80]. This argument centralized the continuous temporality, i.e., rate, rhythm, timing, and coordination, of neural activity as causal interactions mutually unfold between an organism and its environment. A key consequence of temporality—and of the ability to express dynamical systems as differential equations—is to view cognitive systems as controllers, because timeliness of the response to a perturbation is a precondition for effective control [81,82,83]. Thus, in the dynamical view, the organization of brain states by time, and not merely by sequential ordering, supports an understanding of cognition as a fundamentally dynamical (noncomputational) controller. van Gelder anchored this argument with a case study of Watt’s eighteenth-century invention of the steam governor [78], which, while compelling, revealed some limitations. In particular, the governor is indeed a continuous-time dynamical controller, but its sole function follows from its design which is necessarily entangled with the intentions, goals, and various capabilities of James Watt as he developed it. Its apparent intelligence is not its own but that of a high-embodiment human engineer; the governor is a tool, not a cognitive agent. More generally, attributing intelligence to low-embodiment tools, machines, or AI/ML models recapitulates the same category error. A related epistemic danger, particularly for high-dimensional artifacts like large language models or generative text-to-image networks, is that increasingly interesting control failures can be mistaken for the emergent complexity of intelligent behavior per se. We next elaborate a control-theoretic interpretation to clarify these distinctions.

Perceptual Control of Self vs. Nonself Entropy

The dynamical view makes a positive case for continuous temporality and control, but the controller for a cognitive agent must be internally referenced, i.e., the command signals that are compared to inputs to produce feedback are set by the agent. Stipulating goal-setting autonomy simply recognizes the agency inherent in embodied life: animals have goals and those goals govern their behavior. Accordingly, Powers (1973) [84] introduced perceptual control theory as a hierarchically goal-driven process in which closed-loop negative feedback reduces deviations of a perceived quantity from an internal reference value; this formulation of biological control was overshadowed by the rise of cybernetics and its long influence over AI, cognitive science, and robotics. Renewed interest in perceptual control reflects recent arguments that the forward comparator models typical of cybernetics research locked in confusions about fundamental control principles [81,82,83], which may have subsequently influenced conceptual developments in neuroscience.
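As a minimal sketch of the closed-loop negative feedback described above, the following toy simulation implements a single control unit with an internally set reference. The integrating output function, the additive environment, and the names `run_control_loop`, `gain`, and `dt` are illustrative assumptions, not details taken from the cited formulation.

```python
def run_control_loop(reference, disturbances, gain=5.0, dt=0.01):
    """Simulate one toy perceptual control unit (illustrative assumptions only).

    The perceived quantity is modeled as output + disturbance, and the output
    function integrates the error between the reference and the perception.
    """
    output = 0.0
    perceptions, outputs = [], []
    for disturbance in disturbances:
        perception = output + disturbance   # assumed additive environment
        error = reference - perception      # comparator: deviation from reference
        output += gain * error * dt         # integrating output function
        perceptions.append(perception)
        outputs.append(output)
    return perceptions, outputs


# An unobserved disturbance of +3 switches on a quarter of the way through the run.
disturbances = [0.0] * 500 + [3.0] * 1500
perceptions, outputs = run_control_loop(reference=10.0, disturbances=disturbances)
print(round(perceptions[-1], 3), round(outputs[-1], 3))
```

In this sketch the perceived quantity settles back near the reference after the disturbance appears, while the output shifts to compensate. The output, not the perception, is what varies with circumstances.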

Under perceptual control, behavior is not itself controlled; instead, behavior is the observable net result of output functions that express sufficient motor variability, given simultaneous unobserved or unexpected forces acting on the body and environment, to satisfy an organism’s drives and achieve its goals [81,82,83,84,85,86,87]. Perceptual control shares this view of behavior with an alternative theoretical framework known as radical predictive processing [35, 88]. In this framework, the normative minimization of global prediction errors (viz. variational free-energy [89]) yields a distributed generative model that naturally encompasses an extended brain–body system [90]. Probabilistic Bayesian inference of perceptual hypotheses produced by this generative model arises through active inference [91, 92], i.e., actions and perceptions that maximize model evidence by balancing internal active-state (self) entropy with external sensory-state (nonself) entropy. Active inference thus reflects a compelling insight: agents learn distributed feedback models by adaptively balancing information streams along the self–nonself boundary. More generally, predictive processing assumes that living systems are autopoietic, entailing that system states follow ergodic trajectories. However, we do not find ergodicity to be obviously compatible with the distributional changes in organization that occur across an organism’s lifetime. Thus, we pursue perceptual control here, but with this additional insight that intelligence requires adaptively balancing self vs. nonself entropy.

By taking a perceptual control view, behavior becomes the lowest-level output of the reference signals descending through hierarchically nested controllers, while higher-level outputs encapsulate the externally unobservable processes of cognition, perception, and other aspects of intelligence. Internally referenced control of perceived inputs means that the feedback functions operate in closed loops through the body and the environment. The functional payoff is that an agent’s controller system has access to all of the information it needs to learn what it needs to learn at any moment. Conversely, there is no need for the more complex, error-prone process of integrating sensory data into internal representations and forward models of body kinematics, physics, planning, etc., to predict which motor outputs will achieve a given behavioral goal in an unfamiliar situation. In perceptual control, ascending inputs flow via comparator functions as deviations to the level above, and descending references flow via output functions as error-correcting signals to the level below. Additionally, we can broadly divide a cognitive agent’s inputs into two categories: internal embodied (interoceptive, homeostatic) signals and external situated (exteroceptive, allostatic) signals. Thus, by closing the hierarchical control loop with rich, continuous, organismal feedback, the problem of intelligent behavior shifts from representational model-building to the adaptive management of ascending/descending and self/nonself information streams. We can see now that the steam governor—with mechanical linkages instead of adaptively balanced information boundaries—is not the kind of device that can do the work of intelligence. We next consider the temporal continuity of dynamical systems.
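The nesting of controllers can be illustrated with a two-level toy model, offered only as a sketch under stated assumptions. The higher level does not act on the world directly; its output serves as the reference for the lower level. The function name `run_two_level_hierarchy`, the gains, the additive disturbances `d_low` and `d_high`, and the factor of 0.5 in the higher-order perception are all hypothetical choices made for illustration. For simplicity, the higher level reads a function of the lower-level perception.

```python
def run_two_level_hierarchy(top_reference, d_low, d_high, steps=4000, dt=0.01,
                            gain_low=5.0, gain_high=1.0):
    """Toy two-level control hierarchy (a sketch, not a model from the literature)."""
    out_low = 0.0    # lowest-level output, standing in for observable behavior
    out_high = 0.0   # higher-level output, used as the lower-level reference
    p_low = p_high = 0.0
    for _ in range(steps):
        # Ascending stream: each perception is built from the level below.
        p_low = out_low + d_low            # assumed additive environment
        p_high = 0.5 * p_low + d_high      # assumed higher-order perception
        # Comparators at each level.
        err_high = top_reference - p_high
        err_low = out_high - p_low         # descending reference is out_high
        # Integrating output functions; the lower loop is faster than the upper.
        out_high += gain_high * err_high * dt
        out_low += gain_low * err_low * dt
    return p_high, p_low, out_high, out_low


p_high, p_low, out_high, out_low = run_two_level_hierarchy(
    top_reference=4.0, d_low=1.5, d_high=-1.0)
print(round(p_high, 3), round(p_low, 3), round(out_low, 3))
```

Only the top-level reference is specified. The lower-level reference and the behavioral output are whatever the loops settle on given the two disturbances, which neither level observes directly.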

Do Brains Compute with Continuous Dynamics?

The dynamical systems view gives us mutual causal coupling and the unfolding temporality of neural states, but its formalization in differential equations implies that state trajectories are continuous in time. We discuss several implications of this constraint. First, the nature of computing with continuous functions is unclear; e.g., logical operations like checking equality of two real values or conditional execution (“if x > 0, do this; else, do that”) require discrete state changes that violate local continuity. To formalize a theory of analog computing, Siegelmann and Fishman (1998) resolved this difficulty by explicitly disallowing those operations internally but also stipulating that input and output units be discrete [93]. Further analyses of analog neural nets with continuous-valued units and weighted linear inputs revealed impressive capacities for “hypercomputational” transformations of high-dimensional vector spaces [94, 95]. Thus, despite having continuous-time recurrent dynamics like those of neural circuits, analog neural nets mirrored both the generative power and the logical-symbolic gaps of AI/ML models. Although structurally and operationally distinct, these two “neural” paradigms share the external-observer bias that mistakes cognitive computing for a linear series of input-output transformations. Dynamical complexity itself is not enough.

Second, the mere fact of neuronal spiking, viz. highly stereotyped all-or-none communication pulses, convinced McCulloch, Pitts, and von Neumann in the formative years of computer technology and symbolist AI that neurons were binary logical devices [96, 97]. While the idea proved useful for computer engineering, the biology of it was wrong enough for Walter Freeman (1995) to call it a “basic misunderstanding of brain function” [98]. Connectionists gave up McCulloch and Pitts’ logical units for rectified linear (or similar) activation functions, yet rectification entails that a coarse-grained binary division still persists in the codebook, between those units with zero vs. nonzero activations for a given input. In sum, continuous-time dynamical systems hinder the formation of discrete states, and continuous-valued AI/ML neural nets are built atop degenerate subdigital states.
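The point about rectification can be made concrete with a small sketch: for any input, a rectified linear layer implicitly sorts its units into zero and nonzero groups. The weights, biases, and function names below are hypothetical values chosen only to illustrate this coarse-grained binary division.

```python
def relu(x):
    """Rectified linear activation."""
    return x if x > 0.0 else 0.0


def layer_activations(inputs, weights, biases):
    """Continuous-valued activations of one rectified linear layer."""
    return [relu(sum(w * x for w, x in zip(row, inputs)) + b)
            for row, b in zip(weights, biases)]


def active_pattern(activations):
    """Binary pattern of units with nonzero (1) vs. zero (0) activation."""
    return tuple(1 if a > 0.0 else 0 for a in activations)


# Hypothetical three-unit layer over two inputs.
WEIGHTS = [[1.0, -1.0], [-1.0, 1.0], [1.0, 1.0]]
BIASES = [0.0, 0.0, -1.0]

for inputs in ([2.0, 1.0], [0.5, 3.0]):
    acts = layer_activations(inputs, WEIGHTS, BIASES)
    print(inputs, acts, active_pattern(acts))
```

The two inputs yield different binary patterns even though every activation is continuous-valued, which is the sense in which a binary division persists under rectification.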

Third, and relatedly, prospects for modeling continuous-time, continuous-valued neural systems with emergent logical-symbolic operations remain unclear, bringing us back to the central “paradox” [29] that historically divided symbolists and connectionists [7,8,9]: how can a physical system learn and implement symbolic rules? The argument has more recently taken the form of whether—or not [10,11,12]—the impressive progress represented by large transformer models already reflects an unrecognized emergence of limited symbolic (e.g., compositional) capacities [3, 6, 99, 100]. Alternatively, theory-driven and simulation-based approaches [101] have shown that specially devised nonsymbolic neural nets can facilitate the developmental emergence of finite automata or Turing machine-like computing capacities [102,103,104]. However, rationalist considerations of the neural circuit regularization imposed by the “genomic bottleneck” [105]—bolstered by recent demonstrations of architectural chromatin changes to the genome and epigenome from early learning experiences in mice [106]—suggest that incremental synaptic modification is insufficient to explain the innate robustness and adaptability of simple neural circuits [38, 107]. Thus, to bridge this neurosymbolic divide, we seek a middle way that integrates embodiment-first cognition, perceptual control, and collective neurodynamics.

Lastly, if brains are responsible for determining the various segments, boundaries, and discriminations required for episodic memory, categorical abstraction, perceptual inference, etc., then cognition ought to be characteristically discrete [108]. Convergent lines of evidence, from mice to humans, support an abundance of spatiotemporally discrete neurocognitive states [109,110,111,112,113,114,115], including a characteristic duration—a third of a second—for embodiment-related states revealed by deictic primitives of human movement patterns [116, 117]. Thus, if a useful formalization of biological intelligence is possible, it will require an understanding of discrete neurocognitive states as computational states [118,119,120] and that understanding should reconcile uncertainties about the nature of computing in nested, distributed, physical dynamical systems like brains. We next elaborate what it means to consider a nonreductive mechanistic account of neural computing; then we discuss oscillatory and structural factors that may support mechanisms of discrete neurodynamical computing for embodied perceptual control.

Toward a Nonreductive, Mechanistic Base Layer of Computation

Miłkowski (2013) established epistemological criteria for mechanistic accounts of neural computation in part by requiring a complete causal description of the neural phenomena that give rise to the relevant computational functions [121]. The causal capacities of that computational substrate are then necessarily grounded in constitutive mechanisms—i.e., causal interactions between subcomponents—at some level of organization of the physical-material structure of the brain. Reductionist accounts of neural computation posit that such constitutive mechanisms interlink every level of organization, from the macroscale to neurons to biophysics: one simply attributes computation to all material processes of the brain down to its last electron. Given the dire implications for understanding high-level cognition reductively, the search for an alternative mechanistic account becomes attractive.

What does a nonreductive hypothesis of computing in the brain look like? Instead of assuming a series of causal linkages from macroscale phenomena all the way down to the quantum realm, we presume that there is some middle level beyond which the net causal interactions among lower level components no longer influence the functional states and state transitions that constitute the computational system [121,122,123]. We call this level the base layer of neural computation by analogy to the physical layer of modern computer chips, which consists of the arrays of billions of silicon-etched transistors and conductors whose electronic states are identified with and identical to the lowest-level computational states of the device [124]. The constitutive mechanisms within the base layer itself provide all of the causal interactions necessary to process a given computational state into the next. Thus, a nonreductive base layer is a substrate that computes state trajectories and insulates that computation from the causal influences of lower-level phenomena.

Which level of organization might serve as a base layer for the high-level cortical computations supporting cognition, perception, and other aspects of biological intelligence? Following our discussions above, we consider several criteria for this base layer: the computing layer must (1) encompass a macroscale, hierarchical control structure over which it implements comparator, error, and output functions; (2) adaptively control access to internal and external information flows generated by physical embodiment and situated embedding in a causal environment; and (3) support discrete neurodynamical states and adaptive high-dimensional state transitions across timescales of neural circuit feedback (10 ms), conscious access (40–50 ms [125]), deictic primitives of embodied cognition (300 ms [116]), and deliberative cognitive effort (>1–2 s). In an earlier paper that otherwise correctly focused on the need to close feedback loops across levels, Bell (1999) considered the computing layer question and dismissed its attribution to the level of neuron groups by suggesting that group coordination mainly serves to average away the noise of single-neuron spike trains [126]; as a consequence, Bell argued for a reductionist account, including its requisite quantum implications. Treating groupwise neuronal coordination as mere signal-to-noise enhancement reflects an impoverished, yet prevalent, view of what neurons and brains do.

What is the Structural Connectivity Basis of Intelligence?

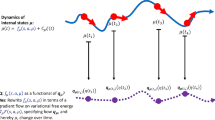

Studies of cortical and hippocampal networks have revealed log-skewed distributions of mostly “specialist” neurons—with relatively few afferent inputs—and long tails of more excitable “generalist” neurons [74, 127]. The generalist neurons and networks can organize, despite their smaller numbers, into highly stable “rich clubs” [128,129,130,131] that robustly distribute higher levels of the hierarchy (Fig. 1a). Conceptual knowledge and remote memories are more robustly accessible than their specific and recent counterparts, due to more extensive consolidation of synaptic traces from hippocampal circuits to the medial temporal lobe and frontal areas [132,133,134]. Collectively, these properties suggest that the cortical-hippocampal memory graph is not a strict hierarchy, but more like a distributed, multilevel arrangement of specialist to generalist neurons. By including loops that skip levels or break modularity, brain networks are more appropriately considered heterarchies (Fig. 1b; cf. [135]). Sparse, log-skewed, heterarchical networks can feature numerous, diverse, connected subgraphs that may support latent cell assemblies.
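The distinction between a strict hierarchy and a heterarchy can be sketched as a simple edge classification over a small graph. Everything here is a hypothetical illustration: the node names, the `LEVEL` and `MODULE` assignments, and the labels `level_skip` and `modularity_break` are assumptions chosen to mirror the two kinds of violation described above.

```python
# Hypothetical toy network: four specialists (level 0), two intermediate
# nodes (level 1), and one generalist (level 2), split into modules A and B.
LEVEL = {"s1": 0, "s2": 0, "s3": 0, "s4": 0, "m1": 1, "m2": 1, "g": 2}
MODULE = {"s1": "A", "s2": "A", "m1": "A",
          "s3": "B", "s4": "B", "m2": "B", "g": "core"}


def classify_edge(src, dst, level=LEVEL, module=MODULE):
    """Label an edge as regular or as one of two heterarchical violations."""
    gap = abs(level[src] - level[dst])
    if gap > 1:
        return "level_skip"            # connects non-adjacent levels
    if gap == 0 and module[src] != module[dst]:
        return "modularity_break"      # lateral link across modules
    return "regular"                   # simplification: everything else


def is_heterarchy(edges):
    """True if any edge violates the strict hierarchical arrangement."""
    return any(classify_edge(s, d) != "regular" for s, d in edges)


STRICT_EDGES = [("s1", "m1"), ("s2", "m1"), ("s3", "m2"), ("s4", "m2"),
                ("m1", "g"), ("m2", "g")]
MIXED_EDGES = STRICT_EDGES + [("s1", "g"), ("m1", "m2")]

print(is_heterarchy(STRICT_EDGES), is_heterarchy(MIXED_EDGES))
```

Real connectivity is a continuum rather than a set of integer levels, so this discretization is only a convenience for showing how a few extra loops change the character of the graph.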

Fig. 1 Brain networks form sparse heterarchies with log-skewed afferent connectivity. (a) A balanced hierarchy with a core subset of strongly interconnected generalist neurons, viz. a rich club. (b) Hierarchies strictly require binary, unambiguous superiority relations. However, neurons and neuronal networks inhabit a continuum of input connectivity from specialists to generalists. Sparse, multi-scale arrangements of elements from this skewed distribution naturally form heterarchies with lateral (e.g., modularity break) and ascending/descending (e.g., level skip) violations. (c) The adapting cell-assembly hypothesis implies that computation at the interface of information streams emanates from self-sustaining activations within the reentrant synaptic loops of interconnected cell assemblies (red circles, 1 and 2). Loops may branch into subloops or aggregate new traces (purple circles, 1′ and 2′) that entail different effects on downstream targets, thus instantiating new and distinct assemblies.

In a recent study, optogenetic stimulation induced new place fields and revealed that memory formation is constrained to preconfigured network relationships inferred prior to stimulation [136]. Mature brains provide a vast library of arbitrary, yet stable, latent structures that can be recruited as needed. In contrast, synapses are highly variable due to fluctuations in transmission failure rates, dendritic spine turnover, and synaptic volume and strength [137, 138]. Thus, our hypothesis of supraneuronal computation is grounded in the relative structural stability of neuronal groups and subnetworks (Fig. 1c). Conversely, AI/ML models are trained to convergence and rely on precise and static sets of synaptic weights.

Neurodynamical Computing as Oscillatory Articulation

Freeman (2000) considered the first building block of neurodynamics for a local neuron group to be collective activity patterns that reliably return to a stable nonzero fixed-point following an input or perturbation [139]. Nonzero fixed-point dynamics are robustly associated with the 1–2% sparse intrinsic connectivity and 80:20 excitatory/inhibitory neuron proportions that typify local circuit architectures of mammalian cortex and hippocampus. Depending on input levels, neuromodulation, or other state-dependent factors, this circuit structure supports additional population dynamics that may serve to partition constituent neurons’ spiking according to cycles in the collective activity, e.g., transient negative-feedback waves and stable limit-cycle oscillations, which Freeman labeled as the second and third building blocks [139]. Neural oscillations are measured extracellularly as the net synaptic loop currents in the local volume [139, 140] and the structure of prevalent frequency bands is highly conserved across mammals including humans [141,142,143]. Neuronal spike phase is measured as the relative alignment of spikes to oscillatory cycles and effectively constitutes a distinct spatiotemporal dimension of neural interaction that naturally supports sequence learning, generation, and chunking in biological [144,145,146,147,148,149,150,151,152] and artificial [153,154,155,156] systems. Because various lines of evidence indicate that neuronal spike phase and collective oscillations may be causally bidirectional [157,158,159,160,161,162], the discrete neural states organized by oscillatory cycles are candidate computational states.
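To make the notion of spike phase concrete, the sketch below assigns each spike a cycle index and a phase relative to an idealized oscillation. It assumes a stationary rhythm of fixed frequency starting at `t0`, which is a strong simplification; experimental analyses typically estimate instantaneous phase from the recorded signal instead. The function names and spike times are hypothetical.

```python
import math


def spike_cycle_and_phase(spike_time, freq_hz, t0=0.0):
    """Return (cycle index, phase in radians) for one spike.

    Assumes an idealized oscillation of constant frequency freq_hz whose
    cycle 0 begins at time t0 (seconds).
    """
    cycles = (spike_time - t0) * freq_hz
    index = math.floor(cycles)
    phase = 2.0 * math.pi * (cycles - index)
    return index, phase


def group_phases_by_cycle(spike_times, freq_hz, t0=0.0):
    """Partition spikes into discrete cycles, keeping each spike's phase."""
    grouped = {}
    for t in spike_times:
        index, phase = spike_cycle_and_phase(t, freq_hz, t0)
        grouped.setdefault(index, []).append(phase)
    return grouped


# Hypothetical spike times (seconds) against an assumed 8 Hz rhythm.
spikes = [0.03125, 0.0625, 0.125, 0.1875]
for cycle, phases in sorted(group_phases_by_cycle(spikes, 8.0).items()):
    print(cycle, [round(p, 3) for p in phases])
```

Grouping by cycle index gives the discrete partition, while the within-cycle phase provides the additional ordering dimension discussed above.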

Despite all the complexity of brains that causally links phenomena across levels, we posited above that there must be a base layer below which the net causal effects on functional states at that level and above become negligible. Placing this dividing line above the level of neurons entails that myriad biological details of dendrites, somatic integration, ion channels, cell metabolism, quantum states, etc., ideally do not influence the processing of computational states. As a consequence, we focus our search for the fundamental unit of cognitive computation on dynamical flows of energy over neural tissue. These flows include massive internal feedback, which is neglected by AI/ML methods for reasons including (ironically) incompatibility with error backpropagation. While the cell assembly has been incorporated into the everyday language of neuroscience, its intrinsically dynamical conception—by Karl Lashley and Donald Hebb [163]—has been diluted so as to support rigidly neurocentric notions like population codes and memory engrams that elide temporality by simply identifying cognitive states with subsets of coactive neurons [59, 164,165,166]. Instead, we should consider how a neural substrate might support the spatiotemporal waves and vortices that arise in the dissipative energetics of extended and embodied living systems. Additional theoretical research and modeling are needed to systematically analyze the spatiotemporal nonlinearities and constraints (e.g., [167, 168]) imposed by the ignition of reentrant sequence flows as units of computation.

Neurodynamical computing may thus undergird the cognitive flexibility critical to adaptively responding to disruptions or alterations of an organism’s situation, i.e., the frame problem. This flexibility necessarily trades off with the stability of active states [169, 170]; i.e., stable relaxation into the energy well of an attractor must be periodically interrupted to ensure responsivity to new inputs. For example, mice exhibit strong cortical-hippocampal oscillatory entrainment to respiration [114, 171, 172], which may serve to optimize odor sampling by rhythmically phase-locking sniffing behavior to the most responsive (i.e., unstable) neurodynamical states [98, 139, 173]. In rats, the famous theta rhythm (5–12 Hz) was revealed by its clear association, from early in the modern neuroscience era, with voluntary movement and complex ethological behaviors [174, 175]. More recent research has extended this association to show that theta controls the speed and initiation of locomotion [176,177,178,179]. Moreover, the practice of analyzing hippocampal data aligned to theta cycles has driven subsequent decades of neuroscience research relating oscillatory phenomena to lifelong learning, episodic memory, and spatial navigation in rodents and humans [149, 180,181,182,183]. For instance, a place-cell remapping study in which global context cues (salient light patterns) were abruptly changed demonstrated rhythmic “theta flickering” [184, 185] in which each theta cycle would alternately express one or another spatial map until settling on the new map. Theta flickering, exposed by an unnaturally abrupt change in an animal’s physical context, suggests that the hippocampal cognitive map is completely torn down and reconstructed on every theta cycle. This continual rebuilding of active context-dependent states in hippocampal memory networks hints at the computational efficiency and power of sculpting and refining attractor landscapes over a lifetime. These deep behavioral and cognitive links to slow, ongoing neural oscillations, which alternate between stability and instability, may reflect fundamental mechanisms of brain–body coordination [143, 172, 186].
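The stability-flexibility tradeoff described above can be illustrated with a deliberately minimal sketch. It is not a model from the cited studies: it assumes a one-dimensional double-well system, dx/dt = rate · (a(t)·x − x³ + I), in which x near −1 stands in for an old map and x near +1 for a new one. The function name `settle`, the 8 Hz modulation of a(t), and all parameter values are hypothetical choices made only for illustration. With a fixed at 1, an input weaker than 2/(3√3) ≈ 0.385 cannot dislodge the state from its well; when a(t) oscillates, the wells are periodically flattened and the same weak input can select the new state.

```python
import math


def settle(input_current, rhythmic, x0=-1.0, t_end=2.0, dt=1e-3,
           rate=100.0, freq_hz=8.0):
    """Euler-integrate dx/dt = rate * (a(t) * x - x**3 + input_current).

    x near -1 stands for the old map, x near +1 for the new one.
    a(t) > 0 gives two wells; a(t) < 0 leaves a single input-driven state.
    All names and values are illustrative assumptions.
    """
    x = x0
    for step in range(int(round(t_end / dt))):
        t = step * dt
        # Rhythmic case: well depth follows a theta-like cosine.
        # Static case: wells are always present (a = 1).
        a = math.cos(2.0 * math.pi * freq_hz * t) if rhythmic else 1.0
        x += dt * rate * (a * x - x**3 + input_current)
    return x


weak_input = 0.2  # below the static switching threshold of about 0.385
print("static wells:  ", settle(weak_input, rhythmic=False))
print("rhythmic wells:", settle(weak_input, rhythmic=True))
```

Under these assumed values, the static system should remain near the old well while the rhythmically destabilized system should end in the new one. This is the sense in which periodic interruption of attractor relaxation can buy responsivity to weak inputs at the cost of momentary instability.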

Understanding how oscillations, behaviors, or other factors govern neurodynamical computing will require explicating the high-dimensional chaos that drives state transitions to the next attractor (or phase transitions to a new chart or landscape) [98, 187,188,189,190,191,192]. Crucially, several lines of evidence converge to emphasize the neurodynamical and control-theoretic complexity of systems that intermix positive and negative feedback. First, models of recurrent feedback circuits—like those typical in the brain—can be made functionally equivalent to a feedforward process by learning network states that act as linear filters for subsequent states in a sequence [193]. Second, chaotic attractors form at dynamical discontinuities, or cusps, corresponding to interfaces between positive and negative feedback processes [194]; for example, demonstrations of metastable transitions between inferred cortical states during taste processing [195,196,197] could, speculatively, reflect traversals across the discrete gustatory wings of a high-dimensional chaotic attractor. Third, singularity-theoretic modeling of mixed-feedback control in neuronal bursting and central pattern generator circuits has demonstrated that small perturbations around these winged cusps can evoke persistent bifurcations in dynamics [81, 198, 199], akin to a digital switch. We theorize that the ascending/descending streams of perceptual control could converge on this type of neurodynamical computing interface.
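As a toy caricature of the switch-like behavior attributed above to mixed-feedback systems, the sketch below integrates dx/dt = −x + tanh(g·x) + u(t). The leak term −x plays the role of negative feedback and the saturating term tanh(g·x) plays the role of positive feedback. This is not the singularity-theoretic modeling of [81, 198, 199]. The function name `pulse_response`, the gain values, and the pulse timing are all assumptions made for illustration. When the gain g exceeds 1, the system is bistable and a brief pulse can flip it persistently; when g is below 1, negative feedback dominates and the state returns to rest.

```python
import math


def pulse_response(gain, x0, pulse_amp=1.5, pulse_on=5.0, pulse_off=7.0,
                   t_end=30.0, dt=0.01):
    """Euler-integrate dx/dt = -x + tanh(gain * x) + u(t).

    u(t) equals pulse_amp for pulse_on <= t < pulse_off and 0 otherwise.
    Returns (x just before the pulse, x at the end of the run).
    All names and values are illustrative assumptions.
    """
    x = x0
    x_before = x0
    for step in range(int(round(t_end / dt))):
        t = step * dt
        if t < pulse_on:
            x_before = x  # last state sampled before the pulse begins
        u = pulse_amp if pulse_on <= t < pulse_off else 0.0
        x += dt * (-x + math.tanh(gain * x) + u)
    return x_before, x


# Mixed feedback with dominant positive feedback (gain > 1): bistable.
print("gain 2.0:", pulse_response(gain=2.0, x0=-1.0))
# Negative feedback dominates (gain < 1): single resting state at zero.
print("gain 0.5:", pulse_response(gain=0.5, x0=0.0))
```

In the high-gain case the transient pulse should leave the state in the opposite branch long after the input has ended, which is the digital-switch analogy. In the low-gain case the same pulse should produce only a transient excursion.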

Absorbed Coping as Internalized Entropy Control

Collectively, the above findings suggest neurodynamical articulatory mechanisms for producing itinerant trajectories of discrete computational states. Moreover, the philosopher Hubert Dreyfus (2007) suggested that the accumulation of articulations—via the adaptive, continual refinement and rebuilding of attractor landscapes through experience—may solve the frame problem for intelligent systems [13]. By identifying neurocognitive states, including memory states, with dynamically constructed states, we see that many biological complexities dissolve; but how can an intelligent organism or artificial agent use these principles to construct meaning? A recent study reported neural recordings from the brains of rats as they happily socialized with and learned to play hide-and-seek with human experimenters [200]. Neuroscience crucially requires new, innovative experimental paradigms like this that respect the autonomy, agency, and ethological behavior of the animals we study. Dreyfus (2007) further wrote that “[w]e don’t experience brute facts and, even if we did, no value predicate could do the job of giving them situational significance” [13]. Which is to say: significance, meaning, and relevance need to be built into our experiments and models from the beginning, and then refined with experience. Thus, taking an embodiment-first approach to cognition and intelligence is also, and not incidentally, a meaning-first approach. Many arguments about the possibility of neurosymbolic systems reflect questions about how to give these systems access to intentionality and meaning. A major implication of this perspective article is that we see no easy way around this problem.

Meaning comes from interacting over time with the world and learning about how those interactions affect your body and how they make you feel. In biology, organisms have visceral, affective, and interoceptive homeostasis built in, beginning with early development and undergoing continual refinement throughout life. That refinement occurs through learning and our experience as “absorbed copers” [13] trying to get through the problems of the day. In contrast, supervised training on static, disjointed datasets by iteratively propagating errors through densely connected neural nets is a recipe for the elimination of meaning. Instead, it is critical to rethink our science of intelligence as driven by integrative advances in AI, computational neuroscience, embodied cognition, and autonomous robotics. The agents we study must learn to perceive and cope with being in the world as we do.

References

Brown TB, Mann B, Ryder N, Subbiah M, Kaplan J, Dhariwal P, et al. Language models are few-shot learners. Preprint. 2020. https://doi.org/10.48550/arXiv.2005.14165.

Prato G, Guiroy S, Caballero E, Rish I, Chandar S. Scaling laws for the few-shot adaptation of pre-trained image classifiers. Preprint. 2021. https://doi.org/10.48550/arXiv.2110.06990.

Bommasani R, Hudson DA, Adeli E, Altman R, Arora S, Arx S von, et al. On the opportunities and risks of foundation models. Preprint. 2021. https://doi.org/10.48550/arXiv.2108.07258.

Ramesh A, Dhariwal P, Nichol A, Chu C, Chen M. Hierarchical text-conditional image generation with CLIP latents. Preprint. 2022. https://doi.org/10.48550/arXiv.2204.06125.

Zhang S, Roller S, Goyal N, Artetxe M, Chen M, Chen S, et al. OPT: Open pre-trained transformer language models. Preprint. 2022. https://doi.org/10.48550/arXiv.2205.01068.

Reed S, Zolna K, Parisotto E, Colmenarejo SG, Novikov A, Barth-Maron G, et al. A generalist agent. Preprint. 2022. https://doi.org/10.48550/arXiv.2205.06175.

Fodor JA, Pylyshyn ZW. Connectionism and cognitive architecture: a critical analysis. Cognition. 1988;28:3–71. https://doi.org/10.1016/0010-0277(88)90031-5.

Prince A, Pinker S. Rules and connections in human language. Trends Neurosci. 1988;11:195–202. https://doi.org/10.1016/0166-2236(88)90122-1.

Blank DS, Giles CL, Jani NG, Shastri L, Cohen MS, Coltheart M, et al. Connectionist symbol processing: dead or alive? In: Jagota A, Plate T, Shastri L, Sun R, editors. Neural Computing Surveys, vol 2. 1999. p. 1–40.

Shanahan M, Mitchell M. Abstraction for deep reinforcement learning. Preprint. 2022. https://doi.org/10.48550/arXiv.2202.05839.

Marcus G. Deep learning is hitting a wall. Nautilus. Mar 10, 2022; https://nautil.us/deep-learning-is-hitting-a-wall-14467.

Mitchell M. Why AI is harder than we think. Preprint. 2021. https://doi.org/10.48550/arXiv.2104.12871.

Dreyfus HL. Why Heideggerian AI failed and how fixing it would require making it more Heideggerian. Philos Psychol. 2007;20:247–68. https://doi.org/10.1080/09515080701239510.

Seligman MEP, Railton P, Baumeister RF, Sripada C. Navigating into the future or driven by the past. Perspect Psychol Sci. 2013;8:119–41. https://doi.org/10.1177/1745691612474317.

Brette R. Is coding a relevant metaphor for the brain? Behav Brain Sci. 2019;1–44. https://doi.org/10.1017/S0140525X19000049.

Bender EM, Koller A. Climbing towards NLU: On meaning, form, and understanding in the age of data. Proc Assoc Comput Linguist. 2020;5185–98. https://doi.org/10.18653/v1/2020.acl-main.463.

Kaufeld G, Bosker HR, Ten Oever S, Alday PM, Meyer AS, Martin AE. Linguistic structure and meaning organize neural oscillations into a content-specific hierarchy. J Neurosci. 2020;40:9467–75. https://doi.org/10.1523/JNEUROSCI.0302-20.2020.

Mansouri FA, Freedman DJ, Buckley MJ. Emergence of abstract rules in the primate brain. Nat Rev Neurosci. 2020;21:595–610. https://doi.org/10.1038/s41583-020-0364-5.

Roli A, Jaeger J, Kauffman SA. How organisms come to know the world: fundamental limits on artificial general intelligence. Front Ecol Evol. 2022;9. https://doi.org/10.3389/fevo.2021.806283.

Lipton ZC, Steinhardt J. Troubling trends in machine learning scholarship. Preprint. 2018. https://doi.org/10.48550/arXiv.1807.03341.

Sutton R. The Bitter Lesson. Blog. 2019. http://incompleteideas.net/IncIdeas/BitterLesson.html.

Hooker S. The hardware lottery. Preprint. 2020. https://doi.org/10.48550/arXiv.2009.06489.

Stanley KO, Lehman J. Why greatness cannot be planned: the myth of the objective. Springer. 2015.

Hassabis D, Kumaran D, Summerfield C, Botvinick M. Neuroscience-inspired artificial intelligence. Neuron. 2017;95:245–58. https://doi.org/10.1016/j.neuron.2017.06.011.

Weng J. On post selection using test sets (PSUTS) in AI. Proc Int Joint Conf Neural Netw. 2021;1–8. https://doi.org/10.1109/IJCNN52387.2021.9533558.

Miller GA. The cognitive revolution: a historical perspective. Trends Cogn Sci. 2003;7:141–4. https://doi.org/10.1016/S1364-6613(03)00029-9.

Rumelhart DE, McClelland JL, Asanuma C. Parallel distributed processing: foundations. Cambridge, MA: MIT Press; 1986.

Rumelhart DE, Hinton GE, Williams RJ. Learning representations by back-propagating errors. Nature. 1986;323:533–6. https://doi.org/10.1038/323533a0.

Smolensky P. On the proper treatment of connectionism. Behav Brain Sci. 1988;11:1–23. https://doi.org/10.1017/S0140525X00052432.

Kriegeskorte N, Douglas PK. Cognitive computational neuroscience. Nat Neurosci. 2018;21:1148–60. https://doi.org/10.1038/s41593-018-0210-5.

Rescorla M. The computational theory of mind. In: Zalta EN, editor. The Stanford Encyclopedia of Philosophy (Fall 2020 Edition). Metaphysics Research Lab, Stanford University. 2020.

Clark A. Language, embodiment, and the cognitive niche. Trends Cogn Sci. 2006;10:370–4. https://doi.org/10.1016/j.tics.2006.06.012.

Bickhard MH. Is embodiment necessary? Handbook of Cognitive Science. Elsevier. 2008;27–40. https://doi.org/10.1016/B978-0-08-046616-3.00002-5.

Wilson A, Golonka S. Embodied cognition is not what you think it is. Front Psychol. 2013;4:58. https://doi.org/10.3389/fpsyg.2013.00058.

Clark A. Surfing uncertainty: prediction, action, and the embodied mind. Oxford University Press. 2015.

Niv Y. The primacy of behavioral research for understanding the brain. Behav Neurosci. 2021;135:601–9. https://doi.org/10.1037/bne0000471.

Barlow H. The mechanical mind. Annu Rev Neurosci. 1990;13:15–24. https://doi.org/10.1146/annurev.ne.13.030190.000311.

Bongard J, Levin M. Living things are not (20th century) machines: updating mechanism metaphors in light of the modern science of machine behavior. Front Ecol Evol. 2021;9. https://doi.org/10.3389/fevo.2021.650726.

Richards BA, Lillicrap TP. The brain-computer metaphor debate is useless: a matter of semantics. Front Comput Sci. 2022;4. https://doi.org/10.3389/fcomp.2022.810358.

Brette R. Brains as computers: metaphor, analogy, theory or fact? Front Ecol Evol. 2022;10. https://doi.org/10.3389/fevo.2022.878729.

Núñez R, Allen M, Gao R, Miller Rigoli C, Relaford-Doyle J, Semenuks A. What happened to cognitive science? Nat Hum Behav. 2019;3:782–91. https://doi.org/10.1038/s41562-019-0626-2.

Slaney KL, Maraun MD. Analogy and metaphor running amok: an examination of the use of explanatory devices in neuroscience. J Theor & Philos Psychol. 2005;25:153–72. https://doi.org/10.1037/h0091257.

Brette R. Philosophy of the spike: rate-based vs. spike-based theories of the brain. Front Syst Neurosci. 2015;9:151. https://doi.org/10.3389/fnsys.2015.00151.

Krakauer JW, Ghazanfar AA, Gomez-Marin A, MacIver MA, Poeppel D. Neuroscience needs behavior: correcting a reductionist bias. Neuron. 2017;93:480–90. https://doi.org/10.1016/j.neuron.2016.12.041.

Gomez-Marin A. Causal circuit explanations of behavior: are necessity and sufficiency necessary and sufficient? In: Çelik A, Wernet MF, editors. Decoding neural circuit structure and function. Springer. 2017;283–306. https://doi.org/10.1007/978-3-319-57363-2_11.

Wilson HR, Cowan JD. Excitatory and inhibitory interactions in localized populations of model neurons. Biophys J. 1972;12:1–24. https://doi.org/10.1016/S0006-3495(72)86068-5.

Wilson HR, Cowan JD. A mathematical theory of the functional dynamics of cortical and thalamic nervous tissue. Kybern. 1973;13:55–80. https://doi.org/10.1007/BF00288786.

Hopfield JJ. Neural networks and physical systems with emergent collective computational abilities. Proc Natl Acad Sci U S A. 1982;79:2554–8. https://doi.org/10.1073/pnas.79.8.2554.

Amit DJ. Modeling brain function: the world of attractor neural networks. Cambridge University Press. 1989.

Kuramoto Y. Collective synchronization of pulse-coupled oscillators and excitable units. Physica D: Nonlinear Phenom. 1991;50:15–30. https://doi.org/10.1016/0167-2789(91)90075-K.

van Vreeswijk C, Abbott LF. Self-sustained firing in populations of integrate-and-fire neurons. SIAM J Appl Math. 1993;53:253–64. https://doi.org/10.1137/0153015.

Abbott LF. Theoretical neuroscience rising. Neuron. 2008;60:489–95. https://doi.org/10.1016/j.neuron.2008.10.019.

Destexhe A, Sejnowski TJ. The Wilson-Cowan model, 36 years later. Biol Cybern. 2009;101:1–2. https://doi.org/10.1007/s00422-009-0328-3.

Maass W. Searching for principles of brain computation. Curr Opin Behav Sci. 2016;11:81–92. https://doi.org/10.1016/j.cobeha.2016.06.003.

Goldman MS, Fee MS. Computational training for the next generation of neuroscientists. Curr Opin Neurobiol. 2017;46:25–30. https://doi.org/10.1016/j.conb.2017.06.007.

Levenstein D, Alvarez VA, Amarasingham A, Azab H, Gerkin RC, Hasenstaub A, et al. On the role of theory and modeling in neuroscience. Preprint. 2020. https://doi.org/10.48550/arXiv.2003.13825.

Kording KP, Blohm G, Schrater P, Kay K. Appreciating the variety of goals in computational neuroscience. Preprint. 2020. https://doi.org/10.48550/arXiv.2002.03211.

Blohm G, Kording KP, Schrater PR. A how-to-model guide for neuroscience. eNeuro. 2020;7. https://doi.org/10.1523/ENEURO.0352-19.2019.

Yuste R. From the neuron doctrine to neural networks. Nat Rev Neurosci. 2015;16:487–97. https://doi.org/10.1038/nrn3962.

Barack DL, Krakauer JW. Two views on the cognitive brain. Nat Rev Neurosci. 2021;22:359–71. https://doi.org/10.1038/s41583-021-00448-6.

Häusser M. The Hodgkin-Huxley theory of the action potential. Nat Neurosci. 2000;3:1165. https://doi.org/10.1038/81426.

Catterall WA, Raman IM, Robinson HPC, Sejnowski TJ, Paulsen O. The Hodgkin-Huxley heritage: from channels to circuits. J Neurosci. 2012;32:14064–73. https://doi.org/10.1523/JNEUROSCI.3403-12.2012.

Nandi A, Chartrand T, Van Geit W, Buchin A, Yao Z, Lee SY, et al. Single-neuron models linking electrophysiology, morphology, and transcriptomics across cortical cell types. Cell Rep. 2022;40:111176. https://doi.org/10.1016/j.celrep.2022.111176.

Brunel N. Modeling point neurons: from Hodgkin-Huxley to integrate-and-fire. In: Schutter ED, editor. Computational Modeling Methods for Neuroscientists. MIT Press; 2009. p. 161–85.

Jobe TH, Fichtner CG, Port JD, Gaviria MM. Neuropoiesis: proposal for a connectionistic neurobiology. Med Hypotheses. 1995;45:147–63. https://doi.org/10.1016/0306-9877(95)90064-0.

Monaco JD, Abbott LF. Modular realignment of entorhinal grid cell activity as a basis for hippocampal remapping. J Neurosci. 2011;31:9414–25. https://doi.org/10.1523/JNEUROSCI.1433-11.2011.

Sompolinsky H. Computational neuroscience: beyond the local circuit. Curr Opin Neurobiol. 2014;25:xiii–xviii. https://doi.org/10.1016/j.conb.2014.02.002.

Zenke F, Ganguli S. Superspike: Supervised learning in multilayer spiking neural networks. Neural Comput. 2018;30:1514–41. https://doi.org/10.1162/neco_a_01086.

He K, Huertas M, Hong SZ, Tie X, Hell JW, Shouval H, et al. Distinct eligibility traces for LTP and LTD in cortical synapses. Neuron. 2015;88:528–38. https://doi.org/10.1016/j.neuron.2015.09.037.

Gerstner W, Lehmann M, Liakoni V, Corneil D, Brea J. Eligibility traces and plasticity on behavioral time scales: experimental support of NeoHebbian three-factor learning rules. Front Neural Circuits. 2018;12. https://doi.org/10.3389/fncir.2018.00053.

Bellec G, Scherr F, Subramoney A, Hajek E, Salaj D, Legenstein R, et al. A solution to the learning dilemma for recurrent networks of spiking neurons. Nat Commun. 2020;11:1–15. https://doi.org/10.1038/s41467-020-17236-y.

Taherkhani A, Belatreche A, Li Y, Cosma G, Maguire LP, McGinnity TM. A review of learning in biologically plausible spiking neural networks. Neural Netw. 2020;122:253–72. https://doi.org/10.1016/j.neunet.2019.09.036.

Doron G, Shin JN, Takahashi N, Drüke M, Bocklisch C, Skenderi S, et al. Perirhinal input to neocortical layer 1 controls learning. Science. 2020;370. https://doi.org/10.1126/science.aaz3136.

Buzsáki G. The brain from inside out. Oxford, UK: Oxford University Press; 2019.

Gomez-Marin A, Ghazanfar AA. The life of behavior. Neuron. 2019;104:25–36. https://doi.org/10.1016/j.neuron.2019.09.017.

Pereira TD, Shaevitz JW, Murthy M. Quantifying behavior to understand the brain. Nat Neurosci. 2020;23:1537–49. https://doi.org/10.1038/s41593-020-00734-z.

Skarda CA. The perceptual form of life. J Conscious Stud. 1999;6:79–93.

van Gelder T. What might cognition be, if not computation? J Philos. 1995;92:345–81. https://doi.org/10.2307/2941061.

van Gelder T. The dynamical hypothesis in cognitive science. Behav Brain Sci. 1998;21:615–28. https://doi.org/10.1017/S0140525X98001733.

Favela LH. Dynamical systems theory in cognitive science and neuroscience. Philos Compass. 2020;15:e12695. https://doi.org/10.1111/phc3.12695.

Sepulchre R, Drion G, Franci A. Control across scales by positive and negative feedback. Annu Rev Control Robot & Auton Syst. 2019;2:89–113. https://doi.org/10.1146/annurev-control-053018-023708.

Madhav MS, Cowan NJ. The synergy between neuroscience and control theory: the nervous system as inspiration for hard control challenges. Annu Rev Control Robot & Auton Syst. 2020;3:243–67. https://doi.org/10.1146/annurev-control-060117-104856.

Yin H. The crisis in neuroscience. The interdisciplinary handbook of perceptual control theory. Elsevier. 2020;23–48. https://doi.org/10.1016/B978-0-12-818948-1.00003-4.

Powers WT. Feedback: Beyond behaviorism. Science. 1973;179:351–6. https://doi.org/10.1126/science.179.4071.351.

Bell HC. Behavioral variability in the service of constancy. Int J Comp Psychol. 2014;27:338–60.

Musall S, Urai AE, Sussillo D, Churchland AK. Harnessing behavioral diversity to understand neural computations for cognition. Curr Opin Neurobiol. 2019;58:229–38. https://doi.org/10.1016/j.conb.2019.09.011.

Cisek P. Resynthesizing behavior through phylogenetic refinement. Atten Percept & Psychophys. 2019;81:2265–87. https://doi.org/10.3758/s13414-019-01760-1.

Hohwy J. The self-evidencing brain. Noûs. 2016;50:259–85. https://doi.org/10.1111/nous.12062.

Friston K. The free-energy principle: a unified brain theory? Nat Rev Neurosci. 2010;11:127–38. https://doi.org/10.1038/nrn2787.

Allen M, Friston KJ. From cognitivism to autopoiesis: towards a computational framework for the embodied mind. Synthese. 2018;195:2459–82. https://doi.org/10.1007/s11229-016-1288-5.

Friston K. Hierarchical models in the brain. PLOS Comput Biol. 2008;4:e1000211. https://doi.org/10.1371/journal.pcbi.1000211.

Friston K. What is optimal about motor control? Neuron. 2011;72:488–98. https://doi.org/10.1016/j.neuron.2011.10.018.

Siegelmann HT, Fishman S. Analog computation with dynamical systems. Physica D: Nonlinear Phenom. 1998;120:214–35. https://doi.org/10.1016/S0167-2789(98)00057-8.

Siegelmann HT, Sontag ED. Analog computation via neural networks. Theor Comput Sci. 1994;131:331–60. https://doi.org/10.1016/0304-3975(94)90178-3.

Siegelmann HT. Neural and super-Turing computing. Minds & Mach. 2003;13:103–14. https://doi.org/10.1023/A:1021376718708.

McCulloch WS, Pitts W. A logical calculus of the ideas immanent in nervous activity. Bull Math Biophys. 1943;5:115–33. https://doi.org/10.1007/BF02478259.

Von Neumann J. The computer and the brain. New Haven, CT: Yale University Press; 1958.

Freeman WJ. Chaos in the brain: possible roles in biological intelligence. Int J Intell Syst. 1995;10:71–88. https://doi.org/10.1002/int.4550100107.

Smolensky P, McCoy RT, Fernandez R, Goldrick M, Gao J. Neurocompositional computing: from the central paradox of cognition to a new generation of AI systems. Preprint. 2022. https://doi.org/10.48550/arXiv.2205.01128.

Smolensky P, McCoy RT, Fernandez R, Goldrick M, Gao J. Neurocompositional computing in human and machine intelligence: a tutorial. Microsoft. 2022. Report No.: MSR-TR-2022-5.

DeLanda M. Philosophy and simulation: the emergence of synthetic reason. Bloomsbury Publishing. 2011.

Graves A, Wayne G, Danihelka I. Neural Turing machines. Preprint. 2014. https://doi.org/10.48550/arXiv.1410.5401.

Weng J. Brain as an emergent finite automaton: a theory and three theorems. Int J Intel Sci. 2015;5:20. https://doi.org/10.4236/ijis.2015.52011.

Weng J. Brains as optimal emergent Turing machines. Proc Int Joint Conf Neural Netw. 2016;1817–24. https://doi.org/10.1109/IJCNN.2016.7727420.

Zador AM. A critique of pure learning and what artificial neural networks can learn from animal brains. Nat Commun. 2019;10:3770. https://doi.org/10.1038/s41467-019-11786-6.

Espeso-Gil S, Holik A, Bonnin S, Jhanwar S, Chandrasekaran S, Pique-Regi R, et al. Environmental enrichment induces epigenomic and genome organization changes relevant for cognition. Front Mol Neurosci. 2021;14. https://doi.org/10.3389/fnmol.2021.664912.

Koulakov A, Shuvaev S, Zador A. Encoding innate ability through a genomic bottleneck. Preprint. 2021. https://doi.org/10.1101/2021.03.16.435261.

Dietrich E, Markman AB. Discrete thoughts: why cognition must use discrete representations. Mind & Lang. 2003;18:95–119. https://doi.org/10.1111/1468-0017.00216.

Sols I, DuBrow S, Davachi L, Fuentemilla L. Event boundaries trigger rapid memory reinstatement of the prior events to promote their representation in long-term memory. Curr Biol. 2017;27:3499–504. https://doi.org/10.1016/j.cub.2017.09.057.

Shin YS, DuBrow S. Structuring memory through inference-based event segmentation. Top Cogn Sci. 2021;13:106–27. https://doi.org/10.1111/tops.12505.

Wang C-H, Monaco JD, Knierim JJ. Hippocampal place cells encode local surface-texture boundaries. Curr Biol. 2020;30:1397–409. https://doi.org/10.1016/j.cub.2020.01.083.

Williams JA, Margulis EH, Nastase SA, Chen J, Hasson U, Norman KA, et al. High-order areas and auditory cortex both represent the high-level event structure of music. J Cogn Neurosci. 2022;34(4):699–714. https://doi.org/10.1162/jocn_a_01815.

Geerligs L, Gözükara D, Oetringer D, Campbell K, van Gerven M, Güçlü U. A partially nested cortical hierarchy of neural states underlies event segmentation in the human brain. Preprint. 2021. https://doi.org/10.1101/2021.02.05.429165.

Karalis N, Sirota A. Breathing coordinates cortico-hippocampal dynamics in mice during offline states. Nat Commun. 2022;13:1–20. https://doi.org/10.1038/s41467-022-28090-5.

Zheng J, Schjetnan AGP, Yebra M, Gomes BA, Mosher CP, Kalia SK, et al. Neurons detect cognitive boundaries to structure episodic memories in humans. Nat Neurosci. 2022;25:358–68. https://doi.org/10.1038/s41593-022-01020-w.

Ballard DH, Hayhoe MM, Pook PK, Rao RP. Deictic codes for the embodiment of cognition. Behav Brain Sci. 1997;20:723–42. https://doi.org/10.1017/S0140525X97001611.

Lázaro-Gredilla M, Lin D, Guntupalli JS, George D. Beyond imitation: zero-shot task transfer on robots by learning concepts as cognitive programs. Sci Robot. 2019;4:eaav3150. https://doi.org/10.1126/scirobotics.aav3150.

Song S, Yao H, Treves A. A modular latching chain. Cogn Neurodyn. 2014;8:37–46. https://doi.org/10.1007/s11571-013-9261-1.

Miller P. Itinerancy between attractor states in neural systems. Curr Opin Neurobiol. 2016;40:14–22. https://doi.org/10.1016/j.conb.2016.05.005.

Rabinovich MI, Varona P. Discrete sequential information coding: Heteroclinic cognitive dynamics. Front Comput Neurosci. 2018;12:73. https://doi.org/10.3389/fncom.2018.00073.

Miłkowski M. Explaining the computational mind. MIT Press. 2013.

Miłkowski M. From computer metaphor to computational modeling: the evolution of computationalism. Minds & Mach. 2018;28:515–41. https://doi.org/10.1007/s11023-018-9468-3.

Stark E, Levi A, Rotstein HG. Network resonance can be generated independently at distinct levels of neuronal organization. PLOS Comput Biol. 2022;18:1–33. https://doi.org/10.1371/journal.pcbi.1010364.

Miłkowski M. Why think that the brain is not a computer? APA Newsl Philos & Comput. 2016;16:22–8.

Dehaene S. Consciousness and the brain: deciphering how the brain codes our thoughts. Penguin. 2014.

Bell AJ. Levels and loops: the future of artificial intelligence and neuroscience. Philos Trans R Soc Lond Ser B: Biol Sci. 1999;354:2013–20. https://doi.org/10.1098/rstb.1999.0540.

Buzsáki G, Mizuseki K. The log-dynamic brain: how skewed distributions affect network operations. Nat Rev Neurosci. 2014;15:264–78. https://doi.org/10.1038/nrn3687.

van den Heuvel MP, Sporns O. Rich-club organization of the human connectome. J Neurosci. 2011;31:15775–86. https://doi.org/10.1523/JNEUROSCI.3539-11.2011.

Binicewicz F, van Strien N, Wadman W, van den Heuvel M, Cappaert N. Graph analysis of the anatomical network organization of the hippocampal formation and parahippocampal region in the rat. Brain Struct Funct. 2016;221:1607–21. https://doi.org/10.1007/s00429-015-0992-0.

Rees CL, Wheeler DW, Hamilton DJ, White CM, Komendantov AO, Ascoli GA. Graph theoretic and motif analyses of the hippocampal neuron type potential connectome. eNeuro. 2016;3. https://doi.org/10.1523/ENEURO.0205-16.2016.

Schultz K, Villafañe-Delgado M, Reilly EP, Saksena A, Hwang GM. Analyzing emergence in biological neural networks using graph signal processing. In: Rainey LB, Holland OT, editors. Emergent behavior in system of systems engineering. CRC Press. 2022;171–92. https://doi.org/10.1201/9781003160816-10.

Nadel L, Moscovitch M. Memory consolidation, retrograde amnesia and the hippocampal complex. Curr Opin Neurobiol. 1997;7:217–27. https://doi.org/10.1016/S0959-4388(97)80010-4.

Haist F, Gore JB, Mao H. Consolidation of human memory over decades revealed by functional magnetic resonance imaging. Nat Neurosci. 2001;4:1139–45. https://doi.org/10.1038/nn739.

Mok RM, Love BC. Abstract neural representations of category membership beyond information coding stimulus or response. J Cogn Neurosci. 2021;1–17. https://doi.org/10.1162/jocn_a_01651.

McCulloch WS. A heterarchy of values determined by the topology of nervous nets. Bull Math Biophys. 1945;7:89–93. https://doi.org/10.1007/BF02478457.

McKenzie S, Huszár R, English DF, Kim K, Christensen F, Yoon E, et al. Preexisting hippocampal network dynamics constrain optogenetically induced place fields. Neuron. 2021;109:1040–1054.e7. https://doi.org/10.1016/j.neuron.2021.01.011.

Branco T, Staras K. The probability of neurotransmitter release: variability and feedback control at single synapses. Nat Rev Neurosci. 2009;10:373–83. https://doi.org/10.1038/nrn2634.

Mongillo G, Rumpel S, Loewenstein Y. Intrinsic volatility of synaptic connections — a challenge to the synaptic trace theory of memory. Curr Opin Neurobiol. 2017;46:7–13. https://doi.org/10.1016/j.conb.2017.06.006.

Freeman WJ. How brains make up their minds. Columbia University Press. 2000.

Buzsáki G, Anastassiou CA, Koch C. The origin of extracellular fields and currents–EEG, ECoG, LFP and spikes. Nat Rev Neurosci. 2012;13:407–20. https://doi.org/10.1038/nrn3241.

Penttonen M, Buzsáki G. Natural logarithmic relationship between brain oscillators. Thal & Relat Syst. 2003;2:145–52. https://doi.org/10.1017/S1472928803000074.

Buzsáki G, Draguhn A. Neuronal oscillations in cortical networks. Science. 2004;304:1926. https://doi.org/10.1126/science.1099745.

Nokia MS, Penttonen M. Rhythmic memory consolidation in the hippocampus. Front Neural Circuits. 2022;16. https://doi.org/10.3389/fncir.2022.885684.

O’Keefe J, Recce ML. Phase relationship between hippocampal place units and the EEG theta rhythm. Hippocampus. 1993;3:317–30. https://doi.org/10.1002/hipo.450030307.

Tsodyks MV, Skaggs WE, Sejnowski TJ, McNaughton BL. Population dynamics and theta rhythm phase precession of hippocampal place cell firing: a spiking neuron model. Hippocampus. 1996;6:271–80. https://doi.org/10.1002/(SICI)1098-1063(1996)6:3%3C271::AID-HIPO5%3E3.0.CO;2-Q.

Sauseng P, Klimesch W. What does phase information of oscillatory brain activity tell us about cognitive processes? Neurosci & Biobehav Rev. 2008;32:1001–13. https://doi.org/10.1016/j.neubiorev.2008.03.014.

Gupta AS, Van Der Meer MA, Touretzky DS, Redish AD. Segmentation of spatial experience by hippocampal theta sequences. Nat Neurosci. 2012;15:1032–9. https://doi.org/10.1038/nn.3138.

Monaco JD, Knierim JJ, Zhang K. Sensory feedback, error correction, and remapping in a multiple oscillator model of place-cell activity. Front Comput Neurosci. 2011;5:39. https://doi.org/10.3389/fncom.2011.00039.

Monaco JD, De Guzman RM, Blair HT, Zhang K. Spatial synchronization codes from coupled rate-phase neurons. PLOS Comput Biol. 2019;15:e1006741. https://doi.org/10.1371/journal.pcbi.1006741.

Wang M, Foster DJ, Pfeiffer BE. Alternating sequences of future and past behavior encoded within hippocampal theta oscillations. Science. 2020;370:247–50. https://doi.org/10.1126/science.abb4151.

Nadasdy Z, Howell DHP, Török Á, Nguyen TP, Shen JY, Briggs DE, et al. Phase coding of spatial representations in the human entorhinal cortex. Sci Adv. 2022;8:eabm6081. https://doi.org/10.1126/sciadv.abm6081.

Cox R, Rüber T, Staresina BP, Fell J. Phase-based coordination of hippocampal and neocortical oscillations during human sleep. Commun Biol. 2020;3:1–11. https://doi.org/10.1038/s42003-020-0913-5.

Monaco JD, Hwang GM, Schultz KM, Zhang K. Cognitive swarming: an approach from the theoretical neuroscience of hippocampal function. In: George T, Islam MS, editors. Micro & Nanotechnol Sens Syst Appl XI. International Society for Optics and Photonics (SPIE). 2019;373–82. https://doi.org/10.1117/12.2518966.

Monaco JD, Hwang GM, Schultz KM, Zhang K. Cognitive swarming in complex environments with attractor dynamics and oscillatory computing. Biol Cybern. 2020;114:269–84. https://doi.org/10.1007/s00422-020-00823-z.

Hadzic A, Hwang GM, Zhang K, Schultz KM, Monaco JD. Bayesian optimization of distributed neurodynamical controller models for spatial navigation. Array. 2022;15:100218. https://doi.org/10.1016/j.array.2022.100218.

Sar GKK, Ghosh D. Dynamics of swarmalators: a pedagogical review. Europhys Lett. 2022;139:53001. https://doi.org/10.1209/0295-5075/ac8445.

Jahnke S, Memmesheimer R-M, Timme M. Oscillation-induced signal transmission and gating in neural circuits. PLOS Comput Biol. 2014;10:e1003940. https://doi.org/10.1371/journal.pcbi.1003940.

Anastassiou CA, Koch C. Ephaptic coupling to endogenous electric field activity: why bother? Curr Opin Neurobiol. 2015;31:95–103. https://doi.org/10.1016/j.conb.2014.09.002.

Fernández-Ruiz A, Oliva A, Nagy GA, Maurer AP, Berényi A, Buzsáki G. Entorhinal-CA3 dual-input control of spike timing in the hippocampus by theta-gamma coupling. Neuron. 2017;93:1213–26. https://doi.org/10.1016/j.neuron.2017.02.017.

Smith EH, Horga G, Yates MJ, Mikell CB, Banks GP, Pathak YJ, et al. Widespread temporal coding of cognitive control in the human prefrontal cortex. Nat Neurosci. 2019;22:1883–91. https://doi.org/10.1038/s41593-019-0494-0.

Sherfey J, Ardid S, Miller EK, Hasselmo ME, Kopell NJ. Prefrontal oscillations modulate the propagation of neuronal activity required for working memory. Neurobiol Learn & Mem. 2020;173:107228. https://doi.org/10.1016/j.nlm.2020.107228.

Strüber M, Sauer J-F, Bartos M. Parvalbumin expressing interneurons control spike-phase coupling of hippocampal cells to theta oscillations. Sci Rep. 2022;12:1362. https://doi.org/10.1038/s41598-022-05004-5.

Nadel L, Maurer AP. Recalling Lashley and reconsolidating Hebb. Hippocampus. 2020;30:776–93. https://doi.org/10.1002/hipo.23027.

Eichenbaum H. Barlow versus Hebb: when is it time to abandon the notion of feature detectors and adopt the cell assembly as the unit of cognition? Neurosci Lett. 2018;680:88–93. https://doi.org/10.1016/j.neulet.2017.04.006.

Pruszynski JA, Zylberberg J. The language of the brain: Real-world neural population codes. Curr Opin Neurobiol. 2019;58:30–6. https://doi.org/10.1016/j.conb.2019.06.005.

Josselyn SA, Tonegawa S. Memory engrams: recalling the past and imagining the future. Science. 2020;367. https://doi.org/10.1126/science.aaw4325.

Moutard C, Dehaene S, Malach R. Spontaneous fluctuations and non-linear ignitions: two dynamic faces of cortical recurrent loops. Neuron. 2015;88:194–206. https://doi.org/10.1016/j.neuron.2015.09.018.

Palm G. Neural assemblies: an alternative approach to classical artificial intelligence. Cham, CH: Springer International Publishing. 2022. https://doi.org/10.1007/978-3-031-00311-0_10.

Monaco JD, Levy WB. T-maze training of a recurrent CA3 model reveals the necessity of novelty-based modulation of LTP in hippocampal region CA3. Proc Int Joint Conf Neural Netw. Portland, OR: IEEE. 2003;1655–60. https://doi.org/10.1109/IJCNN.2003.1223655.

Monaco JD, Abbott LF, Kahana MJ. Lexico-semantic structure and the word-frequency effect in recognition memory. Learn Mem. 2007;14:204–13. https://doi.org/10.1101/lm.363207.

Ito J, Roy S, Liu Y, Cao Y, Fletcher M, Lu L, et al. Whisker barrel cortex delta oscillations and gamma power in the awake mouse are linked to respiration. Nat Commun. 2014;5:3572. https://doi.org/10.1038/ncomms4572.

Heck DH, McAfee SS, Liu Y, Babajani-Feremi A, Rezaie R, Freeman WJ, et al. Breathing as a fundamental rhythm of brain function. Front Neural Circuits. 2017;10:115. https://doi.org/10.3389/fncir.2016.00115.

Freeman WJ. The place of “codes” in nonlinear neurodynamics. Prog Brain Res. 2007;165:447–62. https://doi.org/10.1016/S0079-6123(06)65028-0.

Vanderwolf CH. Hippocampal electrical activity and voluntary movement in the rat. Electroencephalogr Clin Neurophysiol. 1969;26:407–18. https://doi.org/10.1016/0013-4694(69)90092-3.

Whishaw IQ, Vanderwolf CH. Hippocampal EEG and behavior: changes in amplitude and frequency of RSA (theta rhythm) associated with spontaneous and learned movement patterns in rats and cats. Behav Biol. 1973;8:461–84. https://doi.org/10.1016/S0091-6773(73)80041-0.

Bender F, Gorbati M, Cadavieco MC, Denisova N, Gao X, Holman C, et al. Theta oscillations regulate the speed of locomotion via a hippocampus to lateral septum pathway. Nat Commun. 2015;6:8521. https://doi.org/10.1038/ncomms9521.

Fuhrmann F, Justus D, Sosulina L, Kaneko H, Beutel T, Friedrichs D, et al. Locomotion, theta oscillations, and the speed-correlated firing of hippocampal neurons are controlled by a medial septal glutamatergic circuit. Neuron. 2015;86:1253–64. https://doi.org/10.1016/j.neuron.2015.05.001.

Wirtshafter HS, Wilson MA. Locomotor and hippocampal processing converge in the lateral septum. Curr Biol. 2019;29:3177–92. https://doi.org/10.1016/j.cub.2019.07.089.

Wirtshafter HS, Wilson MA. Lateral septum as a nexus for mood, motivation, and movement. Neurosci Biobehav Rev. 2021;126:544–59. https://doi.org/10.1016/j.neubiorev.2021.03.029.

Buzsáki G, Moser EI. Memory, navigation and theta rhythm in the hippocampal-entorhinal system. Nat Neurosci. 2013;16:130–8. https://doi.org/10.1038/nn.3304.

Qasim SE, Jacobs J. Human hippocampal theta oscillations during movement without visual cues. Neuron. 2016;89:1121–3. https://doi.org/10.1016/j.neuron.2016.03.003.

Monaco JD, Rao G, Roth ED, Knierim JJ. Attentive scanning behavior drives one-trial potentiation of hippocampal place fields. Nat Neurosci. 2014;17:725–31. https://doi.org/10.1038/nn.3687.

Vivekananda U, Bush D, Bisby JA, Baxendale S, Rodionov R, Diehl B, et al. Theta power and theta-gamma coupling support long-term spatial memory retrieval. Hippocampus. 2021;31:213–20. https://doi.org/10.1002/hipo.23284.

Jezek K, Henriksen EJ, Treves A, Moser EI, Moser M-B. Theta-paced flickering between place-cell maps in the hippocampus. Nature. 2011;478:246–9. https://doi.org/10.1038/nature10439.

Mark S, Romani S, Jezek K, Tsodyks M. Theta-paced flickering between place-cell maps in the hippocampus: a model based on short-term synaptic plasticity. Hippocampus. 2017;27:959–70. https://doi.org/10.1002/hipo.22743.

Klimesch W. The frequency architecture of brain and brain body oscillations: an analysis. Eur J Neurosci. 2018;48:2431–53. https://doi.org/10.1111/ejn.14192.

Aihara K, Leleu T, Baars B, Bressler S, Brown R, Hirsch M, et al. Cognitive phase transitions in the cerebral cortex: enhancing the neuron doctrine by modeling neural fields. Kozma R, Freeman WJ, editors. Springer. 2015. https://doi.org/10.1007/978-3-319-24406-8.

Ito J, Nikolaev AR, van Leeuwen C. Dynamics of spontaneous transitions between global brain states. Hum Brain Mapp. 2007;28:904–13. https://doi.org/10.1002/hbm.20316.

Tsuda I, Fujii H, Tadokoro S, Yasuoka T, Yamaguti Y. Chaotic itinerancy as a mechanism of irregular changes between synchronization and desynchronization in a neural network. J Integr Neurosci. 2004;3:159–82. https://doi.org/10.1142/S021963520400049X.

Tsuda I. Hypotheses on the functional roles of chaotic transitory dynamics. Chaos. 2009;19:015113. https://doi.org/10.1063/1.3076393.

Tsuda I. Chaotic itinerancy and its roles in cognitive neurodynamics. Curr Opin Neurobiol. 2015;31:67–71. https://doi.org/10.1016/j.conb.2014.08.011.

Chen G, Gong P. Computing by modulating spontaneous cortical activity patterns as a mechanism of active visual processing. Nat Commun. 2019;10:4915. https://doi.org/10.1038/s41467-019-12918-8.

Goldman MS. Memory without feedback in a neural network. Neuron. 2009;61:621–34. https://doi.org/10.1016/j.neuron.2008.12.012.

Guckenheimer J, Holmes P. Nonlinear oscillations, dynamical systems, and bifurcations of vector fields. Springer Science & Business Media. 1983. https://doi.org/10.1007/978-1-4612-1140-2.

Mazzucato L, Fontanini A, La Camera G. Dynamics of multistable states during ongoing and evoked cortical activity. J Neurosci. 2015;35:8214–31. https://doi.org/10.1523/JNEUROSCI.4819-14.2015.

La Camera G, Fontanini A, Mazzucato L. Cortical computations via metastable activity. Curr Opin Neurobiol. 2019;58:37–45. https://doi.org/10.1016/j.conb.2019.06.007.

Mazzucato L. Neural mechanisms underlying the temporal organization of naturalistic animal behavior. Preprint. 2022. https://doi.org/10.48550/arXiv.2203.02151.

Franci A, Drion G, Sepulchre R. Modeling the modulation of neuronal bursting: a singularity theory approach. SIAM J Appl Dynam Syst. 2014;13:798–829. https://doi.org/10.1137/13092263x.

Ribar L, Sepulchre R. Neuromorphic control: designing multiscale mixed-feedback systems. IEEE Control Syst Mag. 2021;41:34–63. https://doi.org/10.1109/MCS.2021.3107560.

Reinhold AS, Sanguinetti-Scheck JI, Hartmann K, Brecht M. Behavioral and neural correlates of hide-and-seek in rats. Science. 2019;365:1180–3. https://doi.org/10.1126/science.aax4705.

Acknowledgements

Additional support was provided to GMH by the Johns Hopkins University Kavli Neuroscience Discovery Institute and the Innovation and Collaboration Janney Program at the Johns Hopkins University Applied Physics Laboratory. The authors thank Patryk Laurent for insightful comments on early versions of the manuscript.

Funding

This work was supported by the National Science Foundation (NCS/FO Award No. 1835279 to JDM and GMH) and the National Institutes of Health/National Institute of Neurological Disorders and Stroke (R03NS109923 and UF1NS111695 to JDM).

Author information

Contributions

JDM and GMH jointly conceived the work, performed the literature search, and critically revised the paper; JDM drafted the manuscript, designed the figure, and prepared the article for publication.

Ethics declarations

Ethical Approval

This article does not contain any studies with human participants or animals performed by any of the authors.

Competing Interests

The authors declare no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This material is based on work supported by (while serving at) the National Science Foundation. Any opinion, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Monaco, J.D., Hwang, G.M. Neurodynamical Computing at the Information Boundaries of Intelligent Systems. Cogn Comput (2022). https://doi.org/10.1007/s12559-022-10081-9

DOI: https://doi.org/10.1007/s12559-022-10081-9