Abstract

Scientists and regular citizens alike search for ways to manage the widespread effects of the COVID-19 pandemic. While scientists are busy in their labs, other citizens often turn to online sources to report their experiences and concerns and to seek and share knowledge of the virus. The text generated by those users on online social media platforms can provide valuable insights about users’ evolving opinions and attitudes. The objective of this research is to analyze the text of such user disclosures to study human communication during a pandemic in four primary ways. First, we analyze Twitter tweet information, generated throughout the pandemic, to understand users’ communications concerning COVID-19 and how those communications have evolved during the pandemic. Second, we analyze linguistic sentiment concepts (analytic, authentic, clout, and tone concepts) in different Twitter settings (sentiment in tweets with pictures or no pictures and tweets versus retweets). Third, we investigate the relationship between Twitter tweets and additional forms of internet activity, namely, Google searches and Wikipedia page views. Finally, we create and use a dictionary of specific COVID-19-related concepts (e.g., symptom of lost taste) to assess how the use of those concepts in tweets is related to the spread of information and the resulting influence of Twitter users.

The analysis showed a surprising lack of emotion in the initial phases of the pandemic, as people were seeking information. As time progressed, there were more expressions of sentiment, including anger. Further, tweets with and without pictures and/or video had statistically significant differences in text sentiment characteristics. Similarly, there were differences between the sentiment in original tweets and in retweets. We also found that Google and Wikipedia searches were predictive of sentiment in the tweets. Finally, a variable representing a dictionary of COVID-related concepts was statistically significant when related to users’ Twitter influence score and number of retweets, illustrating the general impact of COVID-19 on Twitter and human communication. Overall, the results provide insights into human communication as well as models of human internet and social media use. These findings could be useful for the management of global challenges beyond, or different from, a pandemic.

Introduction

The COVID-19 pandemic has affected, and continues to affect, lives worldwide in an unprecedented way. At the same time, the amount of information that has been generated during the pandemic is unprecedented. Social media users have created large amounts of publicly available communications that capture their views, opinions, concerns, thoughts, and knowledge about the pandemic. Our research investigates the text content of some of that social media, focusing on Twitter posts (tweets), but also on Google and Wikipedia searches, to study human communications during the pandemic.

We use text analysis to extract and classify opinions and study how internet search data are predictive of Twitter tweet sentiment. Using both text analysis tools and manual assessment, Twitter tweets are analyzed for their content and expressions of sentiment and psychological content. We study how characteristics of the social media (e.g., pictures or no pictures) lead to different text concepts within Twitter messages. We investigate the relationship between Google and Wikipedia searches and the sentiment of Twitter messages, creating a model relating search and social media. We also examine how a dictionary of key COVID concepts discussed in Twitter tweets is related to the extent that social media messages will get retweeted and add to the sender’s reputation in the context of the social media. Throughout, we use text analysis because it provides insight into the social media text provided by Twitter users [1, 2]. Accordingly, we use social media data, in the form of Twitter tweets and Google and Wikipedia searches, all of which were collected during the pandemic. Our specific objectives are to: analyze the sentiment and emotional content in Twitter tweet texts using both computer-based and manual methods; study the differences in text concepts identified in different types of Twitter tweets and retweets; develop a model of “search and tweet” and examine the ability of Google and Wikipedia searches to predict the sentiment in Twitter tweets; investigate the impact of the use of COVID-19 specific concepts (words) on human communication through Twitter; and identify general implications, beyond the COVID-19 pandemic, of the findings identified in this paper.

This research makes several contributions. First, the analysis shows that the text sentiment and emotional content of Twitter messages, with and without video and pictures, is statistically significantly different. Second, the text sentiment and emotional content in COVID-19-based tweets and retweets were statistically significantly different. Third, Google searches and Wikipedia page views are predictive of the percentage of positive and negative sentiment tweets. Fourth, a variable representing a dictionary of words capturing COVID-19 concepts was statistically significant in models of Twitter influence and retweets, indicating the impact on human communication. In addition, we propose, as the basis for needed further analysis, a possible COVID-19 health management life cycle and further types of analysis that might prove useful with respect to different types of sentiment (e.g., neutrality, ambivalence).

The remainder of this paper proceeds as follows. The “Data and Methodology” section provides an overview of the data and the methods used. The “Twitter Text Analysis: Tweets vs. Retweets and Pictures vs. No Pictures” section discusses text analysis in Twitter tweets and investigates different levels of emotions in different types of tweets. The “Behavioral Links Between Internet Search and Twitter Sentiment” section examines the behavioral link between internet search, using Google and Wikipedia, and Twitter sentiment. The “Impact of COVID-19 Vocabulary Use of Twitter Reputation and Retweets” section considers the relationship of the impact of COVID-19-based communications on Twitter influence and Twitter retweets. The “Manual Analysis of Ad Hoc Tweets” section manually analyzes the tweets using a sentiment ontology. The “COVID-19 Management Life Cycle, Emerging Pandemic Issues, and Computational Extensions” section presents the notion of a COVID-19 life cycle and discusses additional approaches and extensions, such as Word2Vec, ensemble methods and notions of ambivalence. Finally, the “Summary, Contributions, and Conclusion” section summarizes the paper, discusses the implications of our findings and related research, and proposes further extensions.

Data and Methodology

In response to the pandemic, people sought to gain information about COVID-19 from personal interaction and sharing of stories and content. They shared their concerns, questions, opinions, and knowledge on social media [3]. Accordingly, we use such user-provided content, especially Twitter tweets, as the data for our research.

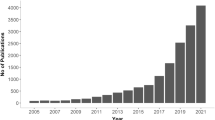

The Emotional Content of Twitter Tweets’ Text

Twitter tweets are often recognized as capturing the sentiment and emotional content of the crowd [4]. With millions of tweets generated daily, Twitter generally is perceived as a useful platform for research. Researchers have investigated many related issues using tweets, including tracking disease propagation, anticipating election results, and predicting sports outcomes [5]. Further recognizing the value of Twitter data, Banda et al. [6] created a large database of tweets related to COVID-19 and made it available to researchers, to provide substantial opportunities for investigation [7]. Other research has also used Twitter data for analyzing issues related to users’ attitudes towards COVID-19 [8,9,10].

Since people express their opinions and ideas in this user-generated content, this online text can be mined to extract corresponding sentiment in the textual disclosures [11]. There are different approaches to text analysis [12, 13]. Efforts at text analysis related to COVID-19 vaccinations have used a wide range of AI-enabled social media analysis on large data sets to accommodate the unstructured nature of the data [14]. As an example, Leibowitz et al. [15] used Linguistic Inquiry and Word Count (LIWC) to investigate the text of Twitter tweets generated by emergency medicine Twitter users and found that approximately 34% of the tweets were positive and 31% were negative; 76.5% focused on the present.

Review of COVID Data Sets and a Data Timeline

This research focuses on studying the early part of the pandemic. We, therefore, track some of the key events from early in the pandemic. Figure 1 shows a timeline of significant COVID-19-related events as the pandemic was identified and progressed during its initial stages when there were predictions that it might end by August 2020.

During the early phases of the COVID-19 pandemic, many people turned to Twitter as a platform to both post and retrieve information on the virus. Twitter tweets collected throughout this timeframe became the source of data for this research. We collected sets of data from Twitter, divided into roughly two periods: as the pandemic was identified and progressed to countries shutting down; and as countries started to reopen and cases continued to rise. These periods were, approximately, before early to mid-June 2020 and after mid-June 2020.

LIWC

The LIWC text analysis program [16, 17] was applied to the tweets. LIWC is perhaps the leading software for capturing information regarding psychological concepts from text. LIWC uses a psychology-based bag of words approach to analyze text. Tausczik and Pennebaker [18] provide a history of LIWC and the bag of words approach, which is derived from Freud and others and has a long history in psychology. Different concepts are represented within LIWC, such as “positive emotion” and “negative emotion,” but also related concepts such as “anger” and “power.” For each concept, a dictionary of words is included in LIWC. The software is then used to identify the relative frequency of occurrence of these words in a set of text (e.g., Twitter tweets), thus providing a systematic, repeatable approach to text analysis. Representative concepts examined in this paper are summarized in Table 1.
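The bag of words idea described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the category names and word lists below are hypothetical stand-ins (LIWC's actual dictionaries are proprietary, much larger, and support wildcard stems), and `category_percentages` is a helper invented for this example rather than part of LIWC.

```python
import re

# Hypothetical mini-dictionaries for illustration only; these are NOT
# LIWC's actual word lists.
CATEGORIES = {
    "posemo": {"good", "hope", "thanks", "safe"},
    "negemo": {"afraid", "sick", "worried", "angry"},
    "anger": {"angry", "hate", "furious"},
}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def category_percentages(text, categories=CATEGORIES):
    """Return, per category, the percentage of tokens found in its word list."""
    tokens = tokenize(text)
    if not tokens:
        return {name: 0.0 for name in categories}
    return {
        name: 100.0 * sum(tok in words for tok in tokens) / len(tokens)
        for name, words in categories.items()
    }


# A made-up tweet; a word may count toward more than one category
# ("angry" is in both "negemo" and "anger" here).
scores = category_percentages("I am worried and angry, but I hope we stay safe")
```

Each score is a relative frequency (matched tokens divided by all tokens), which is why such category values are usually compared across groups of texts rather than read in isolation.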

LIWC has two different types of measures, “summary variables” and “categories of words.” The categories of words, for example, “anger” or “power,” are typically assessed comparatively, within the existing sample, based on their relative occurrence. However, four concepts (analytic, clout, authenticity, and tone) have been established as “summary variables,” which are “standardized composites, based on previous research” [19]. The four summary variable concepts capture the frequency of word occurrences from other categories. LIWC’s summary variables are not analyzed based on the number of occurrences, as are the category variables; instead, the number of occurrences is related to an empirical distribution that ranges from 0 to 100, measuring the percentile. Those summary variables allow us to make statements about these measures, independent of additional comparisons, such as in-sample comparisons.

Although we primarily focus on the summary variables, we also chose the categories of affiliation, anger, health, negative emotion, positive emotion, power, and social for various reasons: health, because the coronavirus is an issue associated with health; anger, because we expect an angry reaction to the pandemic; and ranges of positive and negative emotion because we expect that tweets about coronavirus would be emotional. We expected affiliation to be an important distinguishing variable as people connect with each other in a friendship type of gesture. Similarly, we expect that the tweets provide a social outlet for the tweeter. Finally, we included power because the process of tweeting is likely to provide the tweeter with a certain extent of perceived power over a situation.

Our Approach and Use of Data

Users communicate using Twitter, allowing us to capture and analyze conversations in text format. We can use dictionaries to capture concepts, such as sentiment, emotions, or COVID-19, or use other types of words. By focusing on Twitter, we study human communication and behavior during the pandemic. Figure 2 provides an overview of the analysis conducted in this research (Footnote 1).

The data sets included: Twitter posts from early March to mid-June 2020 (2200 posts); a set of 900 posts collected in mid-July; and approximately 22,000 online posts collected daily from July–August 2020. These posts were collected by scraping tweets using tools available on the internet; manual collection; and using https://birdiq.net/. The first data set was the most specific to COVID-19 and was collected by reading and searching tweets based on keywords, such as symptom, infection, fever, fatigue, sick, COVID, and coronavirus. It also included mentions of family members or friends who might have had contact with the virus. The aim of this targeted collection was to identify the sentiment and emotions of people who were specifically dealing with COVID-19 in a concrete way. The second data set was intended to be used to obtain an overall sense of the attitude towards the virus over time. These tweets were collected automatically in July 2020 and cleaned (Footnote 2). They served as input to a text analysis tool. Daily tweets were collected from mid-July until August. Additionally, we collected data from Google searches and Wikipedia page views to study their predictive relationship with Twitter tweets (Footnote 3). A third data set employs additional data drawn from Hussain et al. [14].

Twitter Text Analysis: Tweets vs. Retweets and Pictures vs. No Pictures

In this section, we analyze a random sample of 20,000 tweets gathered in July 2020, chosen based on whether they contained the term coronavirus in order to study different forms of communication through tweets. We removed the tweets that were not English, resulting in 14,352 tweets. That set of tweets was analyzed using different comparative approaches: tweets vs. retweets and tweets with picture and video content vs. no video content. Of the 14,352 tweets, there were 1627 original tweets, 11,667 retweets, and the rest were replies. We did not analyze replies. Of the 14,352 tweets, 12,021 did not include any picture or video; 2331 did include pictures or videos. We analyzed both the two sets of tweets and the entire dataset using LIWC.

Text Analysis of the Entire Sample of Tweets: Analytic, Clout, Authenticity, and Tone

Applying LIWC to the entire sample, we found that the tweets averaged an “analytic” score of 74.02, which generally suggests more logical thinking. In addition, the tweets averaged a “clout” score of 66.60, which suggests more confidence than average. However, the tweets averaged an “authenticity” score of only 23.97, which is in the lower quarter of such scores. Finally, the “tone” averaged only 35.46, which reveals anxiety, sadness, or hostility. These results suggest that, on average, tweets about the coronavirus were relatively analytic, and came from a position of some clout. However, the tweets were not authentic sounding, suggesting guarded positions, and, generally, were more negative than positive.

Text Analysis of Tweets Using Pictures and Video Versus No Pictures and No Videos

Tweets can appear as text only or supplemented with pictures and videos. An important consideration is the extent of the impact of the pictures and videos on the text messages. Does the text differ if the tweeter includes pictures or videos? Do people express different text emotions when they supplement their text with videos or pictures? If they do, what does that mean? Do tweeters expect the pictures and video to tell the story? This section investigates some of these issues within the context of the coronavirus pandemic, while raising questions for future research.

We conducted a text analysis of the differences between the two groups of tweets: those that did not have pictures or videos; versus those that did. We used a two-sample t-test with unequal variances to test the differences between the two populations, for each of our variables. The results are summarized in Table 2. It is interesting to note that, for each text variable, there is a statistically significant difference between the average values for each of the categories, except health.
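For readers who want to see what a two-sample t-test with unequal variances (often called Welch's t-test) computes, the following is a minimal sketch using only the Python standard library. The function name `welch_t` and the sample values are invented for illustration; they are not the paper's data or code.

```python
import math
from statistics import mean, variance


def welch_t(sample_a, sample_b):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    n_a, n_b = len(sample_a), len(sample_b)
    # statistics.variance is the sample variance (divides by n - 1)
    se_a = variance(sample_a) / n_a
    se_b = variance(sample_b) / n_b
    t_stat = (mean(sample_a) - mean(sample_b)) / math.sqrt(se_a + se_b)
    df = (se_a + se_b) ** 2 / (se_a ** 2 / (n_a - 1) + se_b ** 2 / (n_b - 1))
    return t_stat, df


# Made-up "tone" scores, for illustration only
with_media = [41.0, 38.5, 44.2, 40.1, 39.7]
no_media = [33.0, 36.4, 31.8, 35.2, 34.1, 32.9]
t_stat, df = welch_t(with_media, no_media)
```

The sketch stops at the statistic and its degrees of freedom; converting them to a p value needs the t distribution, for which a statistics library is the practical choice (for example, `scipy.stats.ttest_ind(a, b, equal_var=False)` runs the same test).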

The “no picture and no video” tweets differed statistically significantly, with more positive emotion, more negative emotion, and more affiliation. Those tweets also had more social context words and were presented with greater power. Finally, the text in the no picture and no video messages was also statistically significantly more “authentic”; however, the authenticity rating was still in approximately the lower 25%, suggesting a guarded presentation. On the other hand, the messages with pictures and videos had statistically significantly greater analytic vocabulary, clout, and tone.

This analysis suggests different sentiment and emotional content in the two sets of tweets. It does not establish whether: the use of pictures and video leads to changes in the sentiment and emotion in the text; the type of problem that leads to using a picture or video leads to a different type of text; or if people who communicate using video or pictures use different text than those who do not. Alternatively, it may be some combination of the three. Regardless, these are general issues in human communication and behavior that are subjects for future research.

Text Analysis of Retweets Versus Tweets

We analyzed how the text of retweets differs from that of original tweets, with the results summarized in Table 3. There was not a statistically significant difference for the variables of affiliation and anger, whereas, for each of the other variables, there was a statistically significant difference. The retweets had greater values for clout, health, affiliation, power, social, negative emotion, and cognitive, whereas the original tweets had larger values for the categories of analytic, authenticity, tone, and positive emotion. Thus, the retweets were more negative in tone, suggested cognitive aspects to the tweet, focused more on health, and approached the tweet from a position of power.

Thus, the results show a statistically significant difference between the text of retweeted tweets and original tweets. What is not clear is whether the sentiment and emotions are related to the likelihood that a tweet will be retweeted. This is a topic for future research, perhaps using behavioral research. Further, although we find a difference in these pandemic-based tweets, whether the same relationship will hold between non-COVID-19 tweets requires future investigation.

General Progression of Sentiment and Emotions Expression

Figure 3 summarizes the findings from the analysis of the Twitter tweets during the early phases of COVID-19. It provides a general timeline of the results from these three data sets, starting with the early manually collected and analyzed tweets and progressing to the LIWC analysis of the automated collection of tweets and retweets (Footnote 4).

Behavioral Links Between Internet Search and Twitter Sentiment

In an interesting and recent research paper, Hussain et al. [14] analyze the sentiment related to Twitter tweets during the COVID pandemic (Footnote 5). For their analysis, the researchers collected 10% of the Twitter tweets in a database of COVID pandemic tweets [20]. As part of their analysis, they determined the relative percentages of COVID-related tweets that had positive, negative, or neutral sentiment, over a 37-week time period during 2020. For the same period, we gathered both Google searches (from the USA; Footnote 6) and all Wikipedia page views, since page views are not available country by country. We then used those searches and page views to predict the percentages of positive, negative, and neutral sentiment tweets.

Our analysis is based on the behavioral model that people would search for information (for example, using Google or Wikipedia) and, after gathering their information, potentially issue a Twitter tweet. In that model, shown in Fig. 4, we would expect the numbers of Google searches and Wikipedia page views to be predictive of Twitter tweets.

We used a set of variables to capture the relative percent of tweets that had “positive” sentiment (Pos Sent), “negative” sentiment (Neg Sent), and “neutral” sentiment (Neutral Sent). We provide the correlations of the percentage of the numbers of tweets with the numbers of Google searches and Wikipedia page views (for coronavirus and COVID-19). As seen in Table 4, both the numbers of Google searches and Wikipedia page views are statistically significantly related to both the percentage of tweets with positive and negative sentiment. The numbers of searches and page views are negatively related to the percent of positive sentiment tweets, and positively related to the percent of negative sentiment tweets. The percentage of neutral tweets is not statistically significantly related to Google searches or Wikipedia page views.
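The kind of correlation reported in Table 4 can be illustrated with a short Pearson correlation sketch. The weekly values below are made up purely for illustration and are not taken from the paper or from Hussain et al.; `pearson_r` is a helper written for this example.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Made-up weekly values, for illustration only (not the paper's data)
google_searches = [100, 82, 64, 55, 47, 40]
pct_negative = [38.0, 36.5, 33.0, 32.2, 30.9, 30.1]
pct_positive = [30.0, 31.4, 34.8, 35.1, 36.6, 37.0]

r_negative = pearson_r(google_searches, pct_negative)  # positive in this toy data
r_positive = pearson_r(google_searches, pct_positive)  # negative in this toy data
```

The toy series were chosen to mirror the signs described in the text (search volume moving with negative sentiment and against positive sentiment); they say nothing about the magnitudes in Table 4.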

Next, we investigate lagged (1 week) variables, to test the ability to forecast the relative percentages of positive and negative sentiment tweets. The relative number of Google searches from Google Trends is the lagged variable “Google-1.” We capture the two different sets of lagged Wikipedia page views as “Wiki-Coronavirus-1” (coronavirus) and “Wiki-COVID-19-1” (COVID-19), based on two different sets of pages (coronavirus and COVID-19). The correlation results for the lagged variables are summarized in Table 5, and the regression models in Table 6.

As can be seen from Tables 4 and 5, the Google search variables and the Wikipedia page view variables are each highly correlated. Unfortunately, that correlation makes using both variables in the same regression equation difficult because of multicollinearity. Accordingly, we used only one of each of the variables in each regression equation, as reported in Table 6.
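A one-week lag with a single predictor per equation can be sketched as follows. This is an illustrative reconstruction, not the authors' code: `lagged_pairs` and `simple_ols` are hypothetical helper names, and the weekly numbers are invented.

```python
def lagged_pairs(predictor, target, lag=1):
    """Align predictor[t - lag] with target[t] for a lagged regression."""
    if len(predictor) != len(target):
        raise ValueError("series must have the same length")
    if lag < 1 or lag >= len(predictor):
        raise ValueError("lag must be between 1 and len(series) - 1")
    return predictor[:-lag], target[lag:]


def simple_ols(xs, ys):
    """Ordinary least squares with one predictor; returns (intercept, slope)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


# Made-up weekly values, for illustration only
google = [100, 82, 64, 55, 47, 40]
pct_negative = [38.0, 37.1, 35.0, 33.4, 32.0, 31.2]

# "Google-1": last week's search volume paired with this week's sentiment share
x_lagged, y_current = lagged_pairs(google, pct_negative, lag=1)
intercept, slope = simple_ols(x_lagged, y_current)
```

Fitting one predictor at a time, as here, sidesteps the multicollinearity that arises when highly correlated search and page-view series enter the same equation, at the cost of not separating their individual contributions.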

This analysis showed that both Google searches and Wikipedia page views were statistically significantly predictive of both positive sentiment and negative sentiment, but not neutral sentiment in Twitter tweets. Further, both Google searches (lagged one period) and Wikipedia page views of “COVID-19” and “coronavirus” (lagged one period) are predictive of the percent of Twitter messages with positive and negative sentiment. The percent of Twitter messages associated with positive sentiment was negatively correlated with both Google searches and Wikipedia page views, whereas the number of messages associated with negative sentiment was positively correlated with both the numbers of Google searches and Wikipedia page views.

As a result, it appears that more Google searches and Wikipedia page views, ultimately, are related to more negative sentiment Twitter messages. This is an interesting behavioral finding that should be examined in other settings to determine if the same relationships hold. This is important because it provides a basis for a potential behavioral link between searches for information (Google searches and Wikipedia page views) and statements or positions issued through social media (Twitter).

Impact of COVID-19 Vocabulary Use of Twitter Reputation and Retweets

This section investigates, as an alternative view, the impact of COVID-19 on Twitter use. That is, we examine whether the use of COVID-19 terms in Twitter tweets is statistically significantly related to an “influence measure” of Twitter users and whether the use of those COVID-19 words is related to retweets. Doing so allows us to study the effects of the pandemic on human communication by tracking the relationship between occurrences of words regarding COVID-19 and both social media influence and reuse of messages. Unfortunately, there has been limited research associated with studying the impact of COVID-19 on these issues (Footnote 7). We, therefore, study the impact using text mining, supported by the generation and use of a dictionary of COVID-19 words. We then investigate the relationship between the dictionary words and Twitter “influence” scores, and between that dictionary of words and two measures of retweeting. This allows us to gain insights into the impact of COVID-19-based words on communications.

Creating a Coronavirus Dictionary

To assess whether a tweet used COVID-19 concepts, we first generated a dictionary of COVID-19 terms, broadly based on words related to symptoms and controlling the spread of the disease. As a result, we focus on subcategories of detecting the coronavirus (currently have or had in the past), preventing the coronavirus, curing (e.g., a vaccine), symptoms (e.g., fever), and different names for the virus.

There are potentially many different terms that can be used to measure the extent to which text contains information about the coronavirus pandemic. We choose our terms based on the following process. First, we obtained a list of words and phrases that occurred in a list of coronavirus tweets and ranked them by the number of occurrences. Second, those words not related to the disease were discarded; e.g., stop words were removed. Third, we reviewed those frequently occurring words to identify the subcategory to which they belonged. Finally, additional words and phrases from the authors were added. Our focus was on developing a set of words aimed at isolating text related to the coronavirus. Table 7 shows the coronavirus dictionary words.
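The first two steps of that process (counting words across tweets, dropping stop words, and ranking by frequency) can be sketched as below. The stop-word list and the sample tweets are hypothetical, and the later steps (assigning subcategories and adding author-supplied terms) were manual judgments that this sketch does not attempt to automate.

```python
import re
from collections import Counter

# Hypothetical stop-word list; a real analysis would use a fuller one.
STOP_WORDS = {"the", "a", "of", "to", "and", "is", "in", "i", "my", "have"}


def rank_candidate_terms(tweets, stop_words=STOP_WORDS, top_n=10):
    """Count non-stop-word tokens across tweets and return the most frequent."""
    counts = Counter()
    for tweet in tweets:
        # Keep digits and hyphens so that "covid-19" stays a single token
        tokens = re.findall(r"[a-z0-9'-]+", tweet.lower())
        counts.update(tok for tok in tokens if tok not in stop_words)
    return counts.most_common(top_n)


# Made-up tweets, for illustration only
sample_tweets = [
    "I have a fever and cough",
    "Fever is a symptom of COVID-19",
    "the fever",
]
ranked = rank_candidate_terms(sample_tweets)
```

The ranked list is only a candidate pool; deciding which frequent words actually relate to the disease remains a human review step, as described above.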

We aggregated all of the occurrences of these dictionary words under the category “coronavirus.” As with LIWC, we use a bag of words approach, counting the number of words from the dictionary occurring in the tweets, to measure the effects of COVID-19 words on the Twitter communications. As a verification of the importance of the dictionary words in coronavirus text, we conducted a joint, quoted search, using Google to determine co-occurrence of the word with coronavirus as reported in Table 8.
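Because the dictionary mixes single words with multi-word phrases (such as "lost sense of taste"), counting hits needs phrase matching rather than simple token lookup. The following is a minimal sketch under stated assumptions: the term list is a small illustrative subset built from terms mentioned in the text, not the full contents of Table 7, and `count_dictionary_hits` is a name made up for this example.

```python
import re

# Illustrative subset only; the paper's full dictionary is in Table 7.
CORONAVIRUS_TERMS = ["coronavirus", "covid", "fever", "vaccine", "lost sense of taste"]


def count_dictionary_hits(text, terms=CORONAVIRUS_TERMS):
    """Count whole-word occurrences of each dictionary term or phrase."""
    lowered = text.lower()
    return sum(
        len(re.findall(r"\b" + re.escape(term) + r"\b", lowered))
        for term in terms
    )


# Made-up tweet: matches "lost sense of taste", "covid", and "fever"
hits = count_dictionary_hits("I lost sense of taste; COVID-19 fever is no joke")
```

All hits are summed into one "coronavirus" score per tweet, mirroring the aggregation described above. Overlapping terms (one dictionary entry contained in another) would be double counted by this sketch and would need explicit handling.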

The symptom words (e.g., lost sense of taste) had the lowest co-occurrence in our Google search. However, the symptoms of lost taste and smell in Table 8 seem particularly unique to the coronavirus. Despite their potentially low occurrence rate, we judged that they would allow us to isolate and characterize coronavirus discussions.

Linguistic Inquiry and Word Count for Control Variables

We used LIWC to provide the control variables for our analysis of influence and retweeting. LIWC was used to capture both structural and semantic information about tweets in order to study the impact of our coronavirus dictionary. LIWC “structural” variables are the number of words in the text (WC), and items related to the style or difficulty of the text, such as the number of words of six letters or longer (Sixltr) and the number of words per sentence (WPS). We chose those control variables for several reasons. Word count provides a measure of the actual length of the message. Both WPS and Sixltr capture the “level” at which the tweets are made. More words per sentence and more words of six or more letters likely indicate a more “educated” tweeter. Alternatively, together WPS and Sixltr provide measures of “readability” or “ease of readability.” These three variables are measured based on the number of occurrences. Table 9 summarizes the structural variables used in this research.
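As a rough illustration of the three structural variables, the sketch below computes them with simple regular expressions. It follows the description in this section (words of six letters or longer, counted as occurrences); LIWC's own tokenization, sentence splitting, and exact Sixltr definition may differ, so treat `structural_variables` as an approximation written for this example.

```python
import re


def structural_variables(text):
    """Approximate WC, WPS, and Sixltr for a piece of text."""
    words = re.findall(r"[A-Za-z0-9']+", text)
    # Naive sentence split on ., !, and ?; empty fragments are dropped
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    word_count = len(words)
    return {
        "WC": word_count,
        "WPS": word_count / len(sentences) if sentences else 0.0,
        "Sixltr": sum(len(word) >= 6 for word in words),
    }


# Made-up tweet text, for illustration only
stats = structural_variables("Stay home. Wash your hands regularly!")
```

Tweets often lack sentence-ending punctuation, in which case this naive splitter treats the whole tweet as one sentence and WPS equals WC.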

Although LIWC provides several semantic word sets, we focus on the “summary words” that provide measures of the occurrences of some concepts in the text. They can provide control variables over which to normalize the impact of the text content on our dependent variables, in order to test the specific effects of our COVID dictionary.

Dependent Variables: Influence Score, Retweeted Status User Listed Count and “Is a Retweet”

We choose three dependent variables: the Twitter influence score, retweeted status user listed count, and whether the particular tweet was retweeted (“Is a Retweet”), using a dataset generated from https://birdiq.net/twitter-search. Twitter influence scores capture substantial information about the use of Twitter [21]. Canals [22] indicates that the influence score is a joint function of the number of followers, the number following, and the number of posts in the Twitter account and is heavily historical. The “retweeted status user listed count” and whether a tweet is retweeted (“Is a Retweet”) provide measures of the users’ current interest in the information.

Data

We collected a random sample of 900 Twitter tweets from https://birdiq.net/twitter-search during July 2020 using seed words of COVID and coronavirus. Of those 900, we eliminated the ones that were not written in English, bringing the final number of tweets used to 770. It was important to eliminate non-English terms because our analysis used an English dictionary and is dependent on being able to count the numbers of English words in each category. We identified approximately 85% English tweets and 15% non-English, largely Spanish and French.

Empirical Analysis: Correlation and Regression Analysis

We used both correlation analysis and regression analysis to analyze our data. Since “Is a Retweet” is a nominal variable, we investigated it using logistic regression. In the regression analysis, we used the variance inflation factors (VIFs) to determine the extent of multicollinearity among our independent variables. Our largest VIF score did not exceed 1.3 and, thus, was well below the standard of 4 in the literature [23], suggesting very limited multicollinearity.
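The VIF for a predictor is conventionally computed as 1 / (1 - R²), where R² comes from regressing that predictor on all the other predictors. The following standard-library sketch shows the calculation on made-up data; the helper names are invented for this example, and in practice a statistics package would normally be used instead.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    aug = [row[:] + [b_i] for row, b_i in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(n):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [v - factor * p for v, p in zip(aug[r], aug[col])]
    return [aug[i][n] / aug[i][i] for i in range(n)]


def r_squared(design_rows, y):
    """R^2 from regressing y on the given rows plus an intercept."""
    rows = [[1.0] + list(row) for row in design_rows]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y_i for r, y_i in zip(rows, y)) for i in range(k)]
    beta = solve_linear_system(xtx, xty)
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in rows]
    mean_y = sum(y) / len(y)
    ss_res = sum((y_i - f) ** 2 for y_i, f in zip(y, fitted))
    ss_tot = sum((y_i - mean_y) ** 2 for y_i in y)
    return 1.0 - ss_res / ss_tot


def variance_inflation_factors(columns):
    """VIF_j = 1 / (1 - R_j^2); needs at least two predictor columns."""
    vifs = []
    for j, target in enumerate(columns):
        others = [col for i, col in enumerate(columns) if i != j]
        vifs.append(1.0 / (1.0 - r_squared(list(zip(*others)), target)))
    return vifs


# Two made-up predictors with correlation 0.8, for illustration only
vifs = variance_inflation_factors([[1, 2, 3, 4, 5], [2, 1, 4, 3, 5]])
```

With only two predictors the VIF reduces to 1 / (1 - r²), so a correlation of 0.8 gives roughly 2.78. A maximum VIF near 1.3, as reported here, corresponds to predictors that are only weakly explained by one another.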

Empirical Findings

Our analysis took four different approaches. First, we conducted a correlation analysis between each of our continuous variables. Second, we investigated the Twitter influence score in two steps: first, with the structural control variables and the summary text content variables; and second, with the control variables, the summary text variables, and the coronavirus variable. This allowed us to assess the direct impact of the words in our dictionary. Third, we conducted a regression analysis of the continuous variable, retweeted status user listed count, with all our variables. Fourth, we performed a logistic regression on the nominal variable “Is Retweet,” with our control and dictionary variable.

Correlation Analysis

This section uses correlation analysis to investigate the relationships between our variables with particular emphasis on influence score. The correlations are summarized in Table 10 and the p values in Table 11.

As can be seen from Table 11, the coronavirus variable is statistically significantly correlated with the influence score. In addition, two of the structural control variables, WC and WPS, are statistically significantly correlated with the influence score. Finally, two of the summary text variables, clout and tone, are also statistically significantly correlated with influence score. For those statistically significantly correlated variables, the signs on word count and coronavirus were negative, whereas the signs on the other three were positive.

Regression Analysis of Tweeter Influence Score

Table 12 shows that a model including the structural variables and the summary text variables generates an R-square value of 0.075. Table 13 summarizes the model variables. The two structural control variables of word count and words per sentence, and the summary text variables of analytic, clout, and tone, were statistically significant. We, therefore, conclude that, for this set of tweets, the influence score is statistically significantly related to the analytic, clout, and tone text variables. Each of the VIFs (variance inflation factors) is less than 4, suggesting minimal multicollinearity (Hair et al. [24] and others).

Finally, in Tables 14 and 15 we add our new dictionary variable on the coronavirus. The R-square increases to 0.101, a statistically significant increase. In addition, the same control variables (WC and WPS) and one of the summary text variables (tone) have coefficients that stay statistically significant. The coefficient on our coronavirus dictionary is also statistically significant and negative, as in the correlation matrix.
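One common way to judge whether such an increase in R-square is statistically significant is the F test for nested linear models. The sketch below applies it to the two reported R-square values. The sample size (1000) and the count of seven predictors in the full model are placeholders assumed for illustration, since neither is stated in this section, and the section does not say which test was actually used.

```python
def f_change(r2_reduced, r2_full, n_obs, k_full, q_added):
    """F statistic for the increase in R-square when q_added predictors are
    added to a nested linear model that then has k_full predictors in total.
    Returns the statistic and its (numerator, denominator) degrees of freedom."""
    df_resid = n_obs - k_full - 1
    if df_resid <= 0 or q_added <= 0:
        raise ValueError("invalid degrees of freedom")
    if not 0.0 <= r2_reduced <= r2_full < 1.0:
        raise ValueError("need 0 <= r2_reduced <= r2_full < 1")
    numerator = (r2_full - r2_reduced) / q_added
    denominator = (1.0 - r2_full) / df_resid
    return numerator / denominator, (q_added, df_resid)

# R-square values as reported in the text; n_obs and k_full are ASSUMED placeholders.
f_stat, dfs = f_change(0.075, 0.101, n_obs=1000, k_full=7, q_added=1)
```

The resulting statistic would be compared against an F distribution with the returned degrees of freedom; a larger sample makes the same R-square increase more significant.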

Analysis of “Retweeted Status User Listed Count”

We use the same model as in the previous analysis of influence score to study one aspect of the impact of retweeting: the retweeted status user listed count (RSULC) of those re-tweeters. The measure of fit for the equation is in Table 16 and the regression model is in Table 17.

These results suggest that the vocabulary in the coronavirus dictionary is positively related to the RSULC. Further, each of the structural variables had p values that were statistically significant.

Analysis of “Is Retweet”—Logistic Regression

In this section, we discuss the analysis of the dependent variable “Is Retweet,” which takes on two values: true and false. Because it is a nominal variable, we use logistic regression to analyze the data. The measures of fit are summarized in Table 18 and the coefficients in Table 19, for the model of “Is Retweet” with the control, summary text, and coronavirus variables. The p values on the coefficients of the semantic control variables WC and WPS were statistically significant, as they were in the estimation of influence score. However, whereas tone was statistically significant in the estimate of influence score, in the case of “Is Retweet,” the p values on the coefficients for clout and authentic were statistically significant. Finally, the coronavirus variable was also statistically significant in estimating “Is Retweet,” as it was in the model of influence score. The results in Table 19 indicate that only the coefficients on word count and the coronavirus variable were positive, whereas those on words per sentence, clout, and authentic were negative.
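To illustrate how the signs of logistic regression coefficients are read, the following sketch evaluates a logistic model on a hypothetical tweet. Only the signs of the coefficients mirror those described above; the magnitudes, the intercept, and the feature values are invented for illustration and are not the estimates in Table 19.

```python
import math

def predicted_probability(intercept, coefficients, features):
    """Logistic model: P(y = 1) = 1 / (1 + exp(-(b0 + sum_i b_i * x_i)))."""
    z = intercept + sum(coefficients[name] * value
                        for name, value in features.items())
    return 1.0 / (1.0 + math.exp(-z))

def odds_ratio(coefficient):
    """Multiplicative change in the odds for a one-unit increase in a predictor."""
    return math.exp(coefficient)

# Hypothetical coefficients: signs follow the text, magnitudes are ASSUMED.
coefs = {"WC": 0.02, "WPS": -0.03, "clout": -0.01,
         "authentic": -0.01, "coronavirus": 0.10}

# Hypothetical tweet, first without and then with dictionary vocabulary.
base = {"WC": 20, "WPS": 10, "clout": 50, "authentic": 30, "coronavirus": 0}
more_covid = dict(base, coronavirus=5)

p_base = predicted_probability(-0.5, coefs, base)
p_more = predicted_probability(-0.5, coefs, more_covid)
```

A positive coefficient gives an odds ratio above 1, so the modeled probability of a retweet rises with that variable; a negative coefficient gives an odds ratio below 1.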

Summary

This section compares the results across the three dependent variables. The p values for word count and words per sentence are statistically significant throughout this section. In the regression model of the influence score, the coefficient on the word count has a negative sign. However, for the logistic regression model of the retweeting dependent variable, the sign is positive. Words per sentence is statistically significant in each of the three models. The coefficient of the text variable tone is positive and has a statistically significant coefficient in the regression model of influence score. However, the coefficients on clout and authentic were negative and statistically significant in the model of retweets. Finally, the p values for our coronavirus dictionary are statistically significant for all three dependent variables. The statistically significant results on our coronavirus dictionary suggest that the coronavirus pandemic has created a vocabulary of its own, including the previously unknown term COVID-19, and that vocabulary influences human communication as captured in Twitter.

Why does our dictionary have a negative sign on the estimation of the influence score and a positive sign on estimation of the retweet measures? We conclude that this is an indication of change in the information being diffused, and that information needs a “new dictionary” to identify it. Influential Twitter users have an established set of followers, posts, and topics. As a result, there is likely to be a consistency in their tweets. However, new and important topics that emerge, such as the coronavirus pandemic, can attract great interest and result in retweets. Our results suggest that those creating the coronavirus tweets are a different set of users, diffusing a different set of information than more established Twitter users normally would.

These results should be important for information technology research. The coronavirus dictionary allowed us to track the changes in vocabulary use in Twitter communications. This comparison between the impact on influence score and retweets allows us to monitor these changes. Special emerging technology dictionaries could be used with a range of technologies to capture and measure the information diffusion associated with such technologies.
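As an illustration of how a dictionary variable of this kind might be computed, the sketch below scores a tweet as the percentage of its tokens that appear in a term list, which is the convention LIWC-style category scores commonly follow. The term list, the tokenizer, and the function names are hypothetical; the study's actual dictionary and scoring procedure are not reproduced here.

```python
import re

# Hypothetical mini-dictionary (assumption: NOT the study's actual term list).
COVID_TERMS = {"covid", "covid19", "coronavirus", "quarantine",
               "lockdown", "asymptomatic"}

def tokenize(text):
    """Lowercase the text, drop hyphens so 'COVID-19' becomes 'covid19',
    and split into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower().replace("-", ""))

def dictionary_score(text, terms=COVID_TERMS):
    """Percentage of tokens in the text that appear in the dictionary."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in terms)
    return 100.0 * hits / len(tokens)

score = dictionary_score("COVID-19 lockdown again")  # 2 of 3 tokens match
```

Single-token matching of this kind would miss multi-word concepts such as a lost sense of taste, so a fuller version would also need phrase matching.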

Manual Analysis of Ad Hoc Tweets

In addition to the computer-based analysis, we manually analyzed the posts at a finer granularity, by adopting the work of Scherer [25], who identifies 36 ontological categories that deal with affect: admiration/awe, amusement, anger, anxiety, being touched, boredom, compassion, contempt, contentment, desperation, disappointment, disgust, dissatisfaction, envy, fear, feeling of affection/love, gratitude, guilt, happiness, hatred, hope, humility, interest/enthusiasm, irritation, jealousy, joy, longing, lust, pleasure/enjoyment, pride, relaxation/serenity, relief, sadness, shame, surprise, and tension/stress. These terms help to identify sentiment in natural language [26, 27].

We read each of the tweets and classified them as factual or emotional, based on Scherer’s [25] categories. Approximately 55% of the posts were factual, simply referring to a fact (without emotion or sentiment) intended to be true at the time of posting. Other tweets were factual with some type of emotion and included direct reporting of patient experiences (5%). The remaining tweets reflected only a few emotions (anger, anxiety, desperation, disgust, hope, and surprise).

An example of a tweet classified as factual (which could be falsified later) was: “The spread of #COVID19 by an asymptomatic or someone who is not showing any symptoms appears to be less likely, said #WHO (@WHO) in the recently published summary of transmission of COVID-19 including symptomatic, pre-symptomatic and asymptomatic patients.” An example of the emotion fear was: “A patient with symptoms of a heart attack refused treatment after reading on Facebook that she would die if she went to hospital during the COVID-19 crisis.” Another tweet (again subject to later falsification), but intended to be factual, was: “@CDCgov issued some very useful current best estimates:—About 1/3 of COVID-19 infections are asymptomatic.—40% of transmission is occurring before people feel sick.—Time from exposure to symptom onset: ~ 6 days on average.”

Data

Using an online tool, https://birdiq.net/Twitter-search, we manually collected tweets based on keywords, such as COVID-19, CDC, and WHO. These tweets (over 2000) were reviewed to identify sentiments and insights that would not be possible to extract using an automated tool, again, to gain insight into user behaviors. We strived to show the value of manual mining, recognizing that this type of analysis is not feasible on a large scale. Table 20 provides examples of tweets and classifies them based on their actual, or assumed, intended (potential or real) significance.

From this sample, the most likely keyword, COVID-19, revealed a variety of expected tweets on the spread of the virus, its seriousness based on experiences and testimonials, testing, innovative approaches to testing and treatments, and others. The tweets shown from the WHO and CDC relate to advice and awareness.

Searches for Nuggets

The notion of the wisdom of the crowds implies that sometimes the crowd is able to perform better than individuals [28]. We investigated whether the content, as provided by the user community of Twitter (the crowd), could provide insights that might be helpful to the general public, or perhaps even medical professionals. The types of insights we were looking for required a human to identify what might be useful content and extract ideas at the tweet level of analysis [29]. Therefore, we reviewed approximately 2000 tweets posted throughout the pandemic. We attempted to identify nuggets; that is, pieces of information with the potential to have real value or use, beyond just a post. Examples of potentially influential tweets are given below. The first is a best practices suggestion.

Tweet (factual/sharing of best practices): #itvnews Many German patients were given oximeters in the community back in April. Other places have also recommended this. https://www.thailandmedical.news/news/COVID-19-tips-oximeters,-a-potential-home-tool-to-monitor-progress-of-COVID-19-symptoms-from-mild-to-moderate-and-to-detect-COVID-19-pneumonia-early

The following tweet shows passing on blood type information from a legitimate news source. Such information could be useful for someone assessing their own risk (e.g., for potential usefulness).

Tweet (blood types). This study finds COVID patients with type A blood are at much higher risk of developing life-endangering symptoms, patients with type O blood experience a “protective effect” https://www.nytimes.com/2020/06/03/health/coronavirus-blood-type-genetics.html

However, a later study by Harvard showed that people who were symptomatic and had blood types of B+ or AB+ were more likely to have a positive COVID-19 test than people who were symptomatic with blood type O (see Footnote 8).

The following tweet is factual. Knowing how long the illness might last would be useful to anyone concerned with whether they are experiencing a typical duration.

Swiss TV news (factual): Half of patients (500/1000) contacted by a COVID 19 follow-up service report symptoms after 6 weeks https://www.rts.ch/play/tv/19h30/video/le-virus-recule-et-le-nombre-de-gueris-du-COVID-sont-tres-nombreux--mais-cette-nouvelle-maladie-laisse-parfois-des-traces-?id=11370777

The following tweet reports on a medical study and would be useful for anyone concerned with how seriously the virus might infect them.

Factual: Low levels of the prognostic biomarker suPAR are predictive of mild outcome in patients with symptoms of COVID-19 - a prospective cohort study. Authors: jesper eugen-olsen, Izzet Altintas, Jens Tingleff, Marius St... http://medrxiv.org/cgi/content/short/2020.05.27.20114678

The following two tweets show associations of patient characteristics and occurrence of the disease. These posts are interesting in the sense that the associations being made are non-intuitive. However, they serve as examples of the types of tweets that might trigger self-reporting of whether a person falls into one of these categories, which, in turn, could lead to further investigation to uphold or falsify the conclusions from the reports.

Factual (implications true or falsified later): In one report, dermatologists evaluated 88 COVID-19 patients in an Italian hospital and found 1 in 5 had some sort of skin symptom, mostly red rashes over the trunk. https://inq.news/COVID-toes

Factual (implications true or falsified later): Most #coronavirus patients had no hair https://www.hulldailymail.co.uk/news/uk-world-news/bald-men-could-risk-more-4194866

The tweets below could be important because they provide information on the virus itself, as well as a potential treatment, but are not scientifically proven.

COVID-19 maybe mutating but it’s for the good. Doctors in Italy have claimed that the symptoms of COVID-19 and their intensity is less than what they experienced with the first wave of patients. This suggests that COVID-19 gets weaker as it spreads. https://elemental.medium.com/could-the-coronavirus-be-weakening-as-it-spreads-928f2ad33f89

A new drug, #famotidine, available over-the-counter for relieving #heartburn, has shown promising results in treating the symptoms of #COVID19 https://www.firstpost.com/health/heartburn-drug-famotidine-may-reduce-symptoms-of-non-hospitalised-COVID-19-patients-suggests-case-series-8452421.html

These tweets provide useful, or potentially useful, information at a time when so much is unknown about this global crisis. Human judgment is needed to assess the validity of the claims in the tweets, with scientific study clearly required for some of them. However, the potential value of the information contained within a tweet could not easily be obtained by software.

Twitter Use

People generally turned to Twitter as a platform to make sense of the pandemic. The tweets showed that people also wanted to provide useful information for others, sharing their opinions and knowledge. There were many compassion posts triggered by personal situations.

Example (desperation/disgust): My father, 62 yr suffering from high fever (103-104) from 9 days with no other apparent symptoms. He tested negative on COVID 19. He has history of CABG in 2006. Our family doctor advised to get him admitted. No hospital is accepting patient with fever. Pls help #caremongers

Example (factual/tension/stress): My friend is a nurse & finally broke her silence. She said she’s seeing COVID-19 patients leaving the hospital after COVID with kidney damage. Others will suffer with COPD like symptoms for the rest of their lives. It’s very scary.

Example (disgust) MILD. There’s a huge amount at stake in term mild – for gov actors, health service planners, clinicians, patients, carers...In the days when my own ‘mild’ #COVID19 symptoms have been manageable (Day 52 now), I reflected on mild COVID-19 for @somatosphere https://t.co/rQ9wFdcSQ7?amp=1

This mining revealed a great many posts with different perspectives. Many posts were intended to provide useful information. However, some of the posts that reported information considered to be factual (e.g., do not need to wear masks) had the potential to later be proven false.

Ideally, the mining for nuggets could produce insights for the management of the virus. For example, some cases reported on successful convalescent plasma treatments, leading to requests for plasma donations from recovered patients. Other tweets reported some members of a family getting the virus while others who lived at the same location did not. Such reports might be of interest to researchers trying to find commonalities in these cases. The Appendix contains tweets mined from an additional data set. They reveal a combination of medical innovations (attempted or actual), health information, sentiment and personal reports, opinions, and creative comments. The tweets reveal a need to contribute to an ongoing crisis by providing medical information, contributing to the global conversation on COVID-19, or seeking help.

Themes—Summary

The use of Twitter as a critical social media tool in times of major communication needs was obvious, with Twitter text providing valuable insights into users’ opinions and attitudes. The same held true as other world events unfolded; for example, the Arab Spring and Japan’s earthquakes [6]. For COVID-19, the sentiment analysis revealed a change over time as the pandemic progressed. The most notable trend was that tweet content progressed from providing, and seeking, factual information to expressing emotions, including anger. Prior research found that Twitter, along with other social media, could be used as a predictor of COVID-19 cases and other threats to community health [30]. It is likely there will be continued use of these platforms. The development of large databases of tweets or other user-generated content should, thus, continue to provide substantial research opportunities to investigate COVID-19 or other issues related to global challenges of such magnitude [7].

Content themes emerged. The tweets emphasized information on testing, treating, reporting of well-known figures who tested positive, warnings about the severity of the disease, and other health-related information. Additional themes related to politics, reported scientific breakthroughs (some of which were later shown to be false), economics, reopening of schools and businesses, and others.

We attempted to understand the content of the tweets using sentiment analysis. Many tweets were factual; others showed predictable sentiment of anger, desperation, and hope. Of interest was how Twitter might be used to identify information nuggets, in the traditional sense of a valuable idea. This involved manual inspection and mining. One nugget was a relatively early suggestion that a hospital in India collect the blood of patients who had recovered from COVID-19. Later, the identification of blood type was scientifically investigated as an indicator of the risk of experiencing the disease. However, there does not appear to be a way for a computer program to connect these two, demonstrating the limitations of tools to extract inherent information in text data [12].

In the same way, there is much intentional or non-intentional sharing of misinformation, often referred to as fake news [31, 32]. A computer program that can deal with sentiment well might be able to identify tweets with specific content and others with opposite or contradictory content. We did not, for example, investigate tweets that suggested the COVID-19 pandemic should not be taken seriously. Instead, we considered reasons why people elected to share content. Representative examples are provided in Table 21.

Many other investigations are possible. For example, could the impact of international protests be factored into the sentiment analysis? Is it possible to identify a “tipping” point where people realized the importance of being vigilant (wearing masks, etc.) based on posts reported by infected people relaying the seriousness of the disease to others?

Twitter has been used for social debates and expressions of public opinion (e.g., [33]). No doubt, it will continue to be used in this manner for topics of large, public impact. However, with millions of tweets being generated each day, our study has involved a limited number of texts, restricted to those written in English. It would be useful to expand the categories of sentiment we use as well as to determine whether there were any age group or gender differences in the negative tweets. Finally, finding the true nuggets will, no doubt, require a huge, semi-automated approach, but doing so might help to identify insights that could lead to the development of better sentiment analysis tools.

What we learn from Twitter as a platform is the potential to reach a large audience and provide much information, informative or otherwise. Of course, there are many issues, but it is not possible to verify them without scientific experimentation and reporting of actual numbers. For example, at one point, based on data from Italy and the UK, a website reported men as having approximately twice the number of deaths as women (see Footnote 9). Finally, not all insights can be obtained using existing sentiment analysis tools, but there is a limited amount of insight that can be obtained from manual mining.

COVID-19 Health Management Life Cycle, Emerging Pandemic Issues, and Computational Extensions

The authors would like to thank the guest editor for suggesting some of the content in this section.

This paper has used data collected early in the COVID-19 life cycle. At the time of data collection, it was unimaginable that multiple COVID variants would emerge. Nor was it foreseeable that, after 2 years, the end of the pandemic would not be in sight. However, these realizations suggest that the COVID-19 pandemic has a sustained life cycle with many events that also could be investigated. Because that life cycle has many implications, we examine the basic notion of a COVID-19 life cycle and some of its implications. In addition, we examine some computing extensions for using bags of words in order to address issues beyond the psychological concerns addressed in this paper. We examine potentially using Word2Vec and other approaches in future models and generate a list of business-based COVID-19 words. We also investigate the potential opportunities for the application of symbolic and sub-symbolic AI for sentiment analysis of COVID-19 as well as other emerging trends.

COVID-19 Health Management Life Cycle and Related Problems

As the COVID-19 pandemic continues, with new variants such as “omicron” emerging, and no solid end in sight, it is clear there is a life cycle to the pandemic (e.g., [34]) that affects healthcare planning, management, and resource allocation. The emergent COVID-19 health management life cycle has many things in common with technology life cycles, such as the maturity curve, the hype curve, the adoption curve, and others (e.g., [35]). Unfortunately, what is not clear are the specific concerns, markers, or events within that pandemic life cycle. Some of those emerging activities within the life cycle appear to include issues such as managing new outbreaks of COVID, integrating health management efforts across multiple countries, freeing up and allocating resources, and other concerns, whose difficulties and solutions likely have not been established completely because the disease, literally, has been emerging, diffusing, and evolving. A health management life cycle model could be useful for identifying problems associated with each stage in the life cycle, as the disease works through its life cycle. The beginning of one such view of a life cycle is provided in Fig. 5 and includes potential life cycle stages on the horizontal axis and potential problems associated with the stages on the vertical axis. As a companion to this approach, it is easy to imagine a COVID-19 version of the hype cycle that traces the COVID-19 technologies (vaccines, antivirals, infusions, etc.) over time and over the stages, from the “Peak of Inflated Expectations” to the “Trough of Disillusionment” to the “Slope of Enlightenment” [36].

This life cycle model could be helpful in text analysis by providing insights in a number of directions. For example, this research is concerned with psychological issues of communication in social media, suggesting the importance of a text mining approach centered in psychology and helping us choose the tool, LIWC. Across the life cycle, there are likely different psychological problems, that potentially might be identified from analysis of text communications. However, analysts may be concerned with other stages and other problems in the life cycle, requiring a different context than a psychological one. In those settings, analysts may need to generate different bags of words to gather meaning from different contexts about different problems, such as outbreak management or integrating efforts across countries.

Generating Bags of Words in Alternative Contexts for Alternative Problems

A number of approaches could be used to generate bags of words for different contexts in the COVID life cycle. Word2Vec [37] provides two approaches that allow the generation of words that are similar to a seed word: “Continuous Bag of Words” (CBOW) and “Continuous Skip Gram Model” (Skip Gram). Word2Vec identifies words that are similar to a seed word or words, in the text from which they are gathered. For example, as noted by Mikolov et al. [37, p. 5] in the analysis of text they found that the approach would help find similar words, such as how “… France is to Paris as Germany is to Berlin ….” We generated a set of 39 words drawn from a business corpus that are presented as Table 22, using both CBOW and Skip Gram.
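The idea of retrieving words that are similar to a seed word can be sketched without any trained model. The example below is not Word2Vec: it swaps the learned CBOW or Skip Gram embeddings for raw co-occurrence counts and ranks words by cosine similarity to the seed. The five-sentence corpus is invented for illustration and is unrelated to the business corpus behind Table 22.

```python
import math
from collections import Counter, defaultdict

def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of the words seen within `window` positions."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors

def cosine(a, b):
    """Cosine similarity between two sparse count vectors (Counters)."""
    dot = sum(count * b[key] for key, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def most_similar(seed, vectors, top_n=3):
    """Rank all other words by similarity to the seed (ties broken alphabetically)."""
    if seed not in vectors:
        raise KeyError(f"seed word not in corpus: {seed}")
    seed_vec = vectors[seed]
    scored = [(word, cosine(seed_vec, vec))
              for word, vec in vectors.items() if word != seed]
    scored.sort(key=lambda pair: (-pair[1], pair[0]))
    return scored[:top_n]

# Hypothetical toy corpus (assumption: invented, not the paper's business corpus).
corpus = [
    "pandemic fears trigger market downturn",
    "pandemic fears trigger market slowdown",
    "lockdown fears trigger market recession",
    "vaccine trials reduce severe illness",
    "vaccine trials reduce severe symptoms",
]
vectors = cooccurrence_vectors(corpus)
neighbors = most_similar("downturn", vectors, top_n=2)
```

Words that share contexts with the seed, here “recession” and “slowdown”, rank first, while words from the unrelated vaccine sentences score zero. A trained embedding model generalizes the same intuition to large corpora.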

From those words, one can infer the business concerns captured in the corpus, e.g., “downturn,” “slowdown,” “economy,” and “recession.” In addition, the list includes other related risks to business, such as “BREXIT” and “fears.” Further, some of these words, although related to COVID-19, are not uniquely associated with the pandemic, such as “economy” and “abates.”

Poria et al. [38] and Araque et al. [39] suggest that text analysis should employ ensemble approaches. Interestingly, there are two different approaches within Word2Vec, so its use inherently provides the perspective of an ensemble of methods. In addition, other approaches, such as “GloVe” [19], can be included with the two approaches within Word2Vec to broaden the ensemble of methods. Each of these algorithms could be used to generate sets of words with different seed words. However, using multiple methods generates redundancies and words that, in general, may not be directly related to the seed word(s) in the sense with which the analysis is concerned. As a result, it is important to include a “human-in-the-loop” in the ensemble approach when generating a bag of words about a concept.
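One possible way to combine an ensemble of word-generation methods with a human-in-the-loop is sketched below: candidate words from each method are pooled, duplicates are removed, words proposed by several methods are accepted, and the rest are queued for human review. The voting threshold, the function names, and the per-method word lists are illustrative assumptions, with the words borrowed from the examples discussed above rather than from actual model output.

```python
from collections import Counter

def merge_candidates(candidate_lists, min_votes=2):
    """Pool words from several methods. Each method votes at most once per word.
    Returns (agreed, needs_review), both sorted alphabetically."""
    votes = Counter()
    for words in candidate_lists.values():
        votes.update({word.lower() for word in words})
    agreed = sorted(w for w, v in votes.items() if v >= min_votes)
    needs_review = sorted(w for w, v in votes.items() if v < min_votes)
    return agreed, needs_review

def apply_human_review(agreed, needs_review, approved_by_human):
    """Keep agreed words plus any low-agreement words a reviewer approved."""
    return sorted(set(agreed) | (set(needs_review) & set(approved_by_human)))

# Hypothetical outputs of three methods (assumption: invented for illustration).
candidates = {
    "cbow": ["downturn", "slowdown", "economy", "abates"],
    "skip_gram": ["downturn", "recession", "economy", "fears"],
    "glove": ["slowdown", "recession", "economy", "BREXIT"],
}

agreed, needs_review = merge_candidates(candidates)
final_words = apply_human_review(agreed, needs_review, approved_by_human={"fears"})
```

In this sketch the reviewer only sees low-agreement words. In practice the reviewer might also prune agreed words such as “economy” that are not specific to the concept of interest.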

Finally, Cambria et al. [40] investigated the application of symbolic and sub-symbolic AI for sentiment analysis. Their approach to capturing meaning integrates top-down and bottom-up computing, employing both sub-symbolic computational approaches and symbolic logic and semantic network approaches. In so doing, they built a new version of SenticNet. Future research can focus on integrating this approach to build better word sets that match the domain-specific needs of the particular locations of a COVID-19 life cycle, generating the word sets for the problems as needed.

Ambivalence

Recently, researchers have begun to explore additional approaches to measuring neutral sentiment. Although there are approaches to capturing neutral sentiment, as discussed in the “Impact of COVID-19 Vocabulary Use of Twitter Reputation and Retweets” section, and in the Python Natural Language Toolkit (NLTK), Wang et al. [43] recently developed a more fine-grained approach to measuring ambivalence. This is important because, although much COVID-related activity is emotionally charged, resulting in demonstrations worldwide, some issues apparently garner ambivalence (see Footnote 11). For example, Peng and Chen [42] investigate emotional ambivalence and luxury good consumption during the COVID pandemic. However, as noted by Craig et al. [41], capturing ambivalence can relate to the specific issues being considered and the way in which questions are worded, further emphasizing the importance of specific words.

Summary, Contributions, and Conclusion

As of 6th May 2020, there were almost 4 million known, confirmed cases of COVID-19 worldwide. By mid-July, that number had more than tripled. It reached 30 million cases by September, with close to one million deaths (see Footnote 12). By December 2021, over 250 million cases and 5 million deaths had been documented. By May 2022, those numbers had grown to over 530 million cases and over 6 million deaths. Many global efforts are still being taken to combat the virus. As ordinary people seek to understand the virus, and learn how to protect themselves, they frequently turn to online platforms, such as Twitter, which is often regarded as a good resource from which to analyze opinions from user-generated content [44].

Contributions—Human Communication

This paper makes several contributions to knowledge about human communication using social media, couched in the use of Twitter within the COVID-19 pandemic, which lead to interesting questions for future research. First, we found that the text sentiment and emotions of Twitter messages with and without video and pictures are statistically significantly different. Although it is not clear why, the findings suggest important differences. Is it a general characteristic of human communication that using pictures results in different text sentiment than if pictures are not used?

Second, the sentiment and emotions of the retweets and the original tweets in the pandemic were statistically significantly different. Future research should investigate the extent to which this finding can be generalized. Is there something in the sentiment and emotions of a tweet that makes it likely to be retweeted? Is it a general characteristic of human communication to use this particular type or amount of sentiment?

Third, Google searches and Wikipedia page views are predictive of the percentage of positive and negative sentiment tweets, suggesting that humans perform internet searches and then communicate the results via social media. Future research should investigate the extent to which this phenomenon occurs in non-pandemic settings and can be considered a general model of human behavior.

Fourth, a variable representing a dictionary of words capturing COVID concepts was statistically significant in models of Twitter influence and retweets. It appears that communication of new topics pursued by new users results in retweets, in contrast to tweets from those with large influence scores derived from established pools of followers and topics. This finding can be used to support future research on new user groups or on technology use during a major event (e.g., [45]).

Relationship to Previous Research with LIWC

We have not discovered similar research to benchmark the findings in our analyses. However, very recently, other researchers have used LIWC for various types of related research into COVID-19-based concerns. Silva et al. [46], for example, used LIWC and Twitter tweets to investigate issues associated with misinformation. Barnes [47] used LIWC in analysis of “terror management theory.” Safa et al. [48], similarly, analyzed the detection of symptoms of depression in Twitter tweets. Ebeling et al. [49] used LIWC to investigate the impact of political polarization during the COVID-19 pandemic. Mosleh et al. [50] used LIWC to analyze correlations with behavior. These efforts support our use of LIWC, although they are limited in their application to the issues examined in this paper.

Conclusion

The COVID-19 pandemic continues to be a topic of much global interest for both health and economic reasons, as new variants evolve. This research has analyzed text data from Twitter to gain an understanding of human communication based on user-supplied content during the pandemic. Twitter tweets were analyzed manually and using a text analysis tool. The results show changes in user participation over time from information seeking to expressions of anger or other emotions. Users retweeted different content with clout and were most concerned with health. Tweets that include pictures and movies have different text than those that do not. The percentage of positive versus negative sentiments found in COVID-19 tweets could be predicted by Google searches and Wikipedia page views. This research can also be considered as an analysis of human communication where new concepts are discussed using text and images, which provide a firm foundation from which to analyze the implications of events or situations that have wide-spread consequences, such as a pandemic or a natural disaster.

Notes

We thank an anonymous reviewer for suggesting this figure.

For example, posts that were not written in English were deleted without translation, mainly because the sentiment tool used is based on English.

Data is available upon request from the authors.

The authors wish to thank an anonymous referee for suggesting this diagram.

The authors would like to thank an anonymous referee for bringing this paper to their attention.

We did not include the UK, as Hussain et al. [14], because Wikipedia does not provide a country-by-country breakdown.

A quoted Google Scholar search of Twitter, Influence score, COVID, dictionary found 17 items, with none directly related.

There have been reported demonstrations throughout the world on strong reactions to the handling of the COVID-19 emergency measures, rollout of the vaccination and worldwide access; or vaccination requirements (e.g., truckers in Canada).

Based on reporting by worldometers. https://www.worldometers.info/coronavirus/

References

Chua CEH, et al. Developing insights from social media using semantic lexical chains to mine short text structures. Decis Support Syst. 2019;127: 113142.

Yousefinaghani S, et al. An analysis of COVID-19 vaccine sentiments and opinions on Twitter. Int J Infect Dis. 2021.

Leslie D. Tackling COVID-19 through responsible AI innovation: five steps in the right direction. Harvard Data Sci Rev. 2020.

Maddah M, et al. Data collection interfaces in online communities: the impact of data structuredness and nature of shared content on perceived information quality. In Proc 53rd Hawaii int conf sys sci. 2020.

O’Leary DE. Twitter mining for discovery, prediction and causality: applications and methodologies. Intelligent Systems in Accounting, Finance and Management. 2015;22(3):227–47.

Banda JM, et al. A large-scale COVID-19 Twitter chatter dataset for open scientific research—an international collaboration. arXiv preprint arXiv:.03688. 2020.

O’Leary DE. Evolving information systems and technology research issues for COVID-19 and other pandemics. Journal of Organizational Computing and Electronic Commerce. 2020;30(1):1–8.

Cornelius J, et al. COVID-19 Twitter monitor: aggregating and visualizing COVID-19 related trends in social media. In Proc fifth social media mining for health applications workshop & shared task. 2020.

Qazi U, Imran M, Ofli F. GeoCoV19: a dataset of hundreds of millions of multilingual COVID-19 tweets with location information. SIGSPATIAL Special. 2020;12(1):6–15.

Wang H, et al. Using tweets to understand how COVID-19–related health beliefs are affected in the age of social media: Twitter data analysis study. J Med Internet Res. 2021;23(2): e26302.

Pang B, Lee L. Opinion mining and sentiment analysis. Found Trends Inf Retr. 2008;2(1–2):1–135.

Cambria E, et al. Affective computing and sentiment analysis. In: A practical guide to sentiment analysis. Springer; 2017. p. 1–10.

Pandarachalil R, Sendhilkumar S, Mahalakshmi G. Twitter sentiment analysis for large-scale data: an unsupervised approach. Cogn Comput. 2015;7(2):254–62.

Hussain A, et al. Artificial intelligence–enabled analysis of public attitudes on Facebook and Twitter toward COVID-19 vaccines in the United Kingdom and the United States: observational study. J Med Internet Res. 2021;23(4): e26627.

Leibowitz MK, et al. Emergency medicine influencers’ Twitter use during the COVID-19 pandemic: a mixed-methods analysis. Western Journal of Emergency Medicine. 2021;22(3):710.

Pennebaker JW, et al. The development and psychometric properties of LIWC2015. Austin, TX: University of Texas at Austin; 2015.

Pennebaker JW, et al. The development and psychometric properties of LIWC2015. 2015.

Tausczik YR, Pennebaker JW. The psychological meaning of words: LIWC and computerized text analysis methods. J Lang Soc Psychol. 2010;29(1):24–54.

Pennebaker J, et al. LIWC 2015 operator’s manual. Austin, TX: Pennebaker Conglomerates Inc; 2015.

Garmur M, et al. CrowdTangle platform and API. Harvard Dataverse. 2019;3.

Anger I, Kittl C. Measuring influence on Twitter. In Proc 11th int conf knowledge management and knowledge technol. 2011.

Canals E. How is the influencer score calculated? https://en.support.mention.com/en/articles/2046054-how-is-the-influencer-score-calculated. 2021.

Hair JF, et al. Essentials of marketing research, vol. 2. New York: McGraw-Hill/Irwin; 2010.

Hair JF, et al. Multivariate data analysis: a global perspective, vol. 7. Upper Saddle River, NJ: Pearson; 2010.

Scherer KR. What are emotions? And how can they be measured? Soc Sci Inf. 2005;44(4):695–729.

Storey VC, Park E. An ontology of emotion process to support sentiment analysis. Journal of the Association for Information Systems. 2022.

Tabak FS, Evrim V. Comparison of emotion lexicons. In 2016 HONET-ICT. IEEE; 2016.

Surowiecki J. The wisdom of crowds. Anchor; 2005.

Saif H, et al. Contextual semantics for sentiment analysis of Twitter. Information Processing & Management. 2016;52(1):5–19.

O’Leary D, Storey VC. A Google–Wikipedia–Twitter model as a leading indicator of the numbers of coronavirus deaths. Intelligent Systems in Accounting, Finance and Management. 2020;27(3):151–8.

French AM, Storey VC, Wallace L. Les miserables: the tale of COVID-19 and role of information systems. J Organizational Comp Electronic Commerce. 2021;1–18.

Lazer DM, et al. The science of fake news. Science. 2018;359(6380):1094–6.

Sandhu M, et al. From associations to sarcasm: mining the shift of opinions regarding the supreme court on Twitter. Online Social Networks and Media. 2019;14: 100054.

Oliver N, et al. Mobile phone data for informing public health actions across the COVID-19 pandemic life cycle. Sci Adv. 2020;6(23):eabc0764.

O’Leary DE. The impact of Gartner’s maturity curve, adoption curve, strategic technologies on information systems research, with applications to artificial intelligence, ERP, BPM, and RFID. J Emerg Technol Account. 2009;6(1):45–66.

O’Leary DE. Gartner’s hype cycle and information system research issues. Int J Account Inf Syst. 2008;9(4):240–52.

Mikolov T, et al. Efficient estimation of word representations in vector space. arXiv preprint arXiv:.03688, 2013.

Poria S, et al. Ensemble application of convolutional neural networks and multiple kernel learning for multimodal sentiment analysis. Neurocomputing. 2017;261:217–30.

Araque O, Zhu G, Iglesias CA. A semantic similarity-based perspective of affect lexicons for sentiment analysis. Knowl-Based Syst. 2019;165:346–59.

Cambria E, et al. SenticNet 6: Ensemble application of symbolic and subsymbolic AI for sentiment analysis. In Proceedings of the 29th ACM international conference on information & knowledge management. 2020.

Craig SC, McCarthy AF, Gainous J. Question wording and attitudinal ambivalence: COVID, the economy, and Americans’ response to a real‐life trolley problem. Soc Sci Q. 2021.

Peng N, Chen A. Consumers’ luxury restaurant reservation session abandonment behavior during the COVID-19 pandemic: the influence of luxury restaurant attachment, emotional ambivalence, and luxury consumption goals. Int J Hosp Manag. 2021;94: 102891.

Wang Z, Ho S-B, Cambria E. Multi-level fine-scaled sentiment sensing with ambivalence handling. Internat J Uncertain Fuzziness Knowledge-Based Systems. 2020;28(04):683–97.

Giachanou A, Crestani F. Like it or not: a survey of Twitter sentiment analysis methods. ACM Comput Surv. 2016;49(2):1–41.

Storey VC, Lukyanenko R, Grange C. Fighting pandemics with physical distancing management technologies. J Database Manag. 2021.

Silva M, et al. Predicting misinformation and engagement in COVID-19 Twitter discourse in the first months of the outbreak. arXiv preprint arXiv:2012.02164 2020.

Barnes SJ. Understanding terror states of online users in the context of COVID-19: an application of terror management theory. Comput Hum Behav. 2021;125: 106967.

Safa R, Bayat P, Moghtader L. Automatic detection of depression symptoms in Twitter using multimodal analysis. J Supercomput. 2021;1–36.

Ebeling R, et al. Quarenteners vs. chloroquiners: a framework to analyze how political polarization affects the behavior of groups. In 2020 IEEE/WIC/ACM international joint conference on web intelligence and intelligent agent technology (WI-IAT). IEEE; 2020.

Mosleh M, et al. Cognitive reflection correlates with behavior on Twitter. Nat Commun. 2021;12(1):1–10.

Acknowledgements

The authors wish to acknowledge the helpful comments of the five anonymous referees, the senior editor, and the editor-in-chief on four previous versions of this paper as well as to acknowledge the help of the managing editor. We also thank Dr. Iris Vessey for valuable comments on an earlier version of this paper. During the development of portions of this paper, Daniel O'Leary was a Franco Americaine Research Fulbright Scholar. This research was supported by the J. Mack College of Business, Georgia State University.

Ethics declarations

Ethics Approval

This article does not contain any studies with human participants or animals performed by either of the authors.

Conflict of Interest

The authors declare no competing interests.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

Cite this article

Storey, V.C., O’Leary, D.E. Text Analysis of Evolving Emotions and Sentiments in COVID-19 Twitter Communication. Cogn Comput (2022). https://doi.org/10.1007/s12559-022-10025-3
