Abstract

In this study, we examined human reactions to other people’s experiences of using assistive robots at work. An online vignette experiment was conducted among respondents from the United States (N = 1059). In the experiment, participants read a written scenario in which another person had started using assistive robots to help with a daily work-related task. The experiment manipulated the closeness of the messenger (familiar versus unfamiliar colleague) and message orientation (positive versus negative). Learning about the positive user experiences of a familiar or unfamiliar colleague increased positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy. Furthermore, those who reported higher perceived robot suitability to one’s occupational field and higher openness to experiences reported a more positive attitude toward assistive robots, higher perceived robot usefulness, and higher perceived robot use self-efficacy. The results suggest that learning about other people’s positive user experiences has a positive effect on perceptions of using assistive robots to help with a daily work-related task. Perceptions of assistive robots at work are also associated with individual and contextual factors such as openness to experiences and perceived robot suitability to one’s occupational field. This is one of the first studies to experimentally investigate the role of social influence in the perceptions of assistive robots at work.

1 Introduction

Artificial intelligence and new-generation robots are expected to transform societies across the globe [1,2,3]. Automation and robotic solutions are expected to be introduced in various sectors of working life [4, 5]. Success in implementing novel technologies in social environments such as workplaces is determined not only by individual characteristics, but also by social and contextual factors [6, 7]. The idea that people’s behavior and opinions are affected by social factors has been examined extensively in social psychological research [8,9,10]. This approach has also been applied to technology acceptance models to capture the social processes and norms in people’s adoption of robots and other new technology [11,12,13]. However, technology acceptance models have focused on the influence of social norms rather than information sharing, and so far, experimental research on the role of social influence in the perceptions of assistive robots at work has been scarce.

In this study, we experimentally investigated the role of social influence in the perceptions of assistive robots to help with a daily work-related task using survey data from the United States (U.S.). Instead of social norms, we focused on social influence of shared information in the form of relayed user experience. Our aim was to test whether the closeness of the messenger (i.e., the person whose experiences the respondent learns about) and orientation of the message (i.e., messenger’s positive vs. negative user experiences) influence participants’ perceptions of assistive robots at work. To gain comprehensive knowledge of the perceptions, we included positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy as outcome measures of the experiment. This is one of the first studies to experimentally investigate the role of social influence in the perceptions of assistive robots at work.

1.1 Perceptions of Robots at Work

Attitude, perceived robot usefulness, and perceived robot use self-efficacy are perceptions that could be influenced by other people and were therefore suitable measures for our experiment. Attitudes play an important role in technology adoption as an antecedent to behavioral intention or actual use of novel technologies, such as robots [7, 11, 14]. Attitudes are relatively persistent positive, negative, or neutral estimates of the target [15], suggested to be based on social information to some extent [11, 16,17,18]. Perceived usefulness refers to how much individuals think that use of a technology improves their own performance [14]. In extended technology acceptance models, social influence processes have been mapped as a direct determinant of perceived usefulness [13, 19], indicating close relationships between these factors. Self-efficacy beliefs are one’s beliefs in one’s own abilities to undertake courses of action successfully (e.g., to use a robot) and can be strengthened through four main sources of influence: mastery experiences, vicarious experiences, verbal persuasion, and one’s own physiological and emotional states [20, 21]. Vicarious experiences reflect the idea that observing similar others’ success in completing a task can enhance one’s beliefs in one’s own abilities to do the same [22]. Studies have suggested that attitudes, perceived usefulness, and self-efficacy beliefs are somewhat interrelated and predict intention to use technology [11, 23,24,25], which in turn predicts actual use of technology [7].

In technology acceptance models, such as technology acceptance model 2 (TAM-2) or the unified theory of acceptance and use of technology (UTAUT), social influence is modeled to predict intention to use technology directly or indirectly through perceived usefulness or ease of use [7, 13, 19, 26]. In some robot acceptance models, such as the ALMERE model and the robot acceptance model for care (RAM-care), favorable social influence is directly related to intention to use or actual use of a robot [11, 27]. Research has also been conducted on the social influence of robots on humans [25, 28]. Research in domestic settings has shown that people consider other people’s opinions about the use of robots, but those opinions may not have a major effect on people’s own attitudes [29]. Studies on the social influence of humans on humans regarding perceptions of robots at work are scarce. Some evidence is provided by the RAM-care model, in which social influence, measured by care workers’ beliefs about their own colleagues’ positive perceptions of using care robots, is proposed to predict intention to use care robots [27].

Perceptions of robots are also determined by sociodemographic characteristics. Age, gender, and education have been identified as influential factors of attitudes toward robots [30]. However, these relations are also likely to be mitigated through one’s experiences of technology and technological expertise [31]. The importance of the role of personality in human–robot interaction has also been raised [32]. Among human personality traits, extraversion is the most commonly studied and has repeatedly been linked with favorable perceptions of robots [33, 34]. A recent study by Rossi et al. [35] demonstrated positive effects of openness to experiences on perceptions of human–robot interaction. Conscientiousness and openness to experiences have been found to be positively associated with attitudes toward robots and perceived robot use self-efficacy [36].

1.2 Social Influence

Social pressure and the information other people provide are influencing factors in the social environment. This is evident in the theory of social influence, which can be divided into normative influence and informational influence [37, 38]. Normative influence is related to conforming with the positive expectations of others, and informational influence is about accepting information from others as evidence about reality [37]. Previous studies concerning peer influence on health behavior suggest that close proximity to the other person, in terms of social relationship network, increases the likelihood of social influence [39, 40]. In addition to the status of the person providing the information, the information content is likely to determine the direction of the influence, which can be positive or negative. These processes have been widely examined in marketing psychology research under persuasion theoretical frameworks. Two-route accounts of persuasion propose that people can be influenced by the attractiveness or authority of the messenger, or by concentrating on the message itself [41]. Although social influence processes have been studied extensively in the past, including studies of views and use of technologies that focus on social norms [42], there is currently a need for studies investigating the information-sharing social influence of humans on humans in the context of adoption of assistive robots at work.

1.3 This Study

In this study, we investigated the effects of social influence on the perceptions of assistive robots at work. More specifically, we focused on information sharing and were interested in testing the effects of the closeness of the messenger and orientation of the message on positive attitude toward robots, perceived robot usefulness, and perceived robot use self-efficacy. Our study is based on social influence and persuasion theoretical frameworks [37, 38, 41], and previous empirical studies on social influence in adoption of technologies [18, 27, 29]. Our hypotheses were preregistered [43] prior to data collection and were:

H1.1: Closeness with the messenger increases the positivity of attitude toward assistive robots.

H1.2: Closeness with the messenger increases perceived robot usefulness.

H1.3: Closeness with the messenger increases perceived robot use self-efficacy.

H2.1: Positive message orientation of robot experiences increases the positivity of attitude toward assistive robots compared to a negative message orientation.

H2.2: Positive message orientation of robot experiences increases perceived robot usefulness compared to a negative message orientation.

H2.3: Positive message orientation of robot experiences increases perceived robot use self-efficacy compared to a negative message orientation.

2 Methods

2.1 Participants

An online survey sample was collected among respondents living in the U.S. in April 2020 (N = 1059; 51.71% female, Mage = 37.97 years, SDage = 11.75 years, range 18–79 years). The participants reported living in 49 different states and the District of Columbia, and most of the respondents were from California (14.45%), Texas (8.50%), and Florida (6.99%). Most of the respondents reported working full or part time (86.12%), some were in school (2.83%), and some were unemployed (13.88%). Roughly three-fourths of the respondents (74.41%) reported having a college degree or above. Most of the respondents did not have (58.17%) or were not sure if they had (9.16%) firsthand experiences of robots, and approximately one-third (32.67%) of the respondents had interacted with or used a robot before.

2.2 Procedure

Respondents were recruited from Amazon Mechanical Turk’s pool of respondents. A procedure proposed by Kennedy et al. [44] was employed to guarantee that the respondents were from the U.S. Only unique participants who had not previously participated in our studies took part in the current study [45]. Before the survey experiment, respondents were asked about sociodemographic information, personality, and views on work. After the experiment, respondents were asked about experiences and views on robots. Informed consent was obtained from each participant. Before data collection, the project’s research protocol was approved by the Tampere region’s Academic Ethics Committee [decision numbers 89/2018 and 28/2020].

The experiment followed a between-subjects vignette study design [46]. In practice, respondents were randomly assigned to one of the four experimental conditions. Manipulated factors were closeness of the messenger (own colleague vs. a previously unfamiliar person from own field) and orientation of the message (positive vs. negative). Each of the four groups read one written scenario description of a hypothetical situation:

Imagine that you meet your colleague / a previously unfamiliar person from your field who has started using an assistive robot for helping with a daily work-related task. You find out that learning to use the robot has been smooth and the robot has been significantly beneficial in accomplishing the intended task / difficult and the robot has significantly disrupted the accomplishment of the intended task.

Emphases were added to the description for reporting. The first group read about a colleague’s positive user experiences, the second group read about a colleague’s negative user experiences, the third group read about positive user experiences of a previously unfamiliar person from their field, and the fourth group read about negative user experiences of a previously unfamiliar person from their field. No other information was given about the assistive robot or its functionalities other than what was stated in the written scenario. After reading the scenario assigned to them, respondents were instructed as follows: “Keeping in mind the context of your work or study, please answer to what degree you agree with the following statements.” The outcome measures included positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy.

To assess the extent to which randomization of study participants into four conditions was successful, we observed whether there were statistically significant differences among the groups in terms of participants’ age, gender, degree in technology or engineering, firsthand experiences of robots, or personality traits (Neuroticism, Extraversion, Openness, Agreeableness, and Conscientiousness). The randomization of respondents into groups was found successful, as no significant differences were found. For the entire survey, the median response time, including the experiment, was 10 min 27 s, whereas the median response time for the vignette experiment was 29 s (M = 52 s).

2.3 Measures

2.3.1 Positive Attitude Toward Assistive Robots

The first dependent variable of our experiment was a positive attitude toward assistive robots, which was measured with a single item adapted from a question in the Special Eurobarometer 427 survey [47]: “I have a positive view on assistive robots in general,” to which respondents answered on a scale ranging from 1 (strongly disagree) to 7 (strongly agree) (M = 5.22, SD = 1.51).

2.3.2 Perceived Robot Usefulness

The second dependent variable of the study was perceived robot usefulness. The four statements were based on items from the technology acceptance model (TAM; Davis et al., [48]). The questions used in the current study were: (a) “Using assistive robots would improve my job performance”; (b) “Using assistive robots in my job would increase my productivity”; (c) “Using assistive robots would enhance my effectiveness on the job”; and (d) “I would find assistive robots useful in my job.” Answers were given on a scale ranging from 1 (strongly disagree) to 7 (strongly agree). Scale adjustment included incorporating “assistive robots.” For analysis, a four-item sum variable was created (α = 0.94, M = 5.03, SD = 1.43).

2.3.3 Perceived Robot Use Self-efficacy

The third dependent variable was perceived robot use self-efficacy. We modified three items from the robot use self-efficacy scale RUSH-3 [49, 50]. The questions used in this study were: (1) “I’m confident in my ability to learn how to use assistive robots,” (2) “I’m confident in my ability to learn simple programming of assistive robots if I were provided the necessary training,” and (3) “I’m confident in my ability to learn how to use assistive robots in order to guide others to do the same.” Respondents rated their answers on a scale ranging from 1 (strongly disagree) to 7 (strongly agree). Adjustment for the scale included replacing the word “care” with “assistive” to indicate the robot of interest. For analysis, a three-item sum variable was created (α = 0.87, M = 5.28, SD = 1.28).
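
The scale construction described for the multi-item measures can be illustrated with a minimal Python sketch. It assumes that the reported sum variables were rescaled to the 1–7 item range by averaging (the reported means near 5 suggest this, but the text does not say so explicitly) and that α is the standard Cronbach's alpha. The responses and the names `cronbach_alpha` and `scale_score` are invented for illustration and are not the study data or the authors' code.

```python
from statistics import mean, variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])                      # number of items in the scale
    items = list(zip(*rows))              # transpose: one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - item_var_sum / total_var)


def scale_score(row):
    """Assumed scoring: the mean of the items, which keeps the 1-7 range."""
    return mean(row)


# Invented responses of five people to a three-item scale (1-7 each).
responses = [
    [6, 6, 5],
    [4, 5, 4],
    [7, 7, 6],
    [3, 2, 3],
    [5, 5, 6],
]

alpha = cronbach_alpha(responses)
scores = [scale_score(r) for r in responses]
print(round(alpha, 3), [round(s, 2) for s in scores])
```

With real data, each row would hold one respondent's answers to the items of a single scale.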

2.3.4 Experimental Groups

The main independent variable was the experimental group, which included the four hypothetical scenarios. This was used as a categorical variable with four possible values for one-way ANOVA (1 = colleague × positive message, 2 = colleague × negative message, 3 = unfamiliar person × positive message, 4 = unfamiliar person × negative message). For two-way ANOVA and regression models, we created two dummy variables indicating the message content orientation (0 = negative, 1 = positive) and closeness of the messenger (0 = unfamiliar person, 1 = colleague). Thus, the first dummy variable combines the original groups of 1 and 3 to indicate a positive message orientation and 2 and 4 to indicate a negative message orientation. The second dummy variable combines the original groups of 1 and 2 to indicate a closer colleague and 3 and 4 to indicate an unfamiliar person from the same field.
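
The recoding of the four-level group variable into two dummy variables can be sketched as follows. The group numbering and the 0/1 coding follow the description above; the names `GROUPS`, `to_dummies`, `colleague`, and `positive_message` are hypothetical labels chosen for this illustration.

```python
# Group codes as described in the text: messenger closeness x message orientation.
GROUPS = {
    1: ("colleague", "positive"),
    2: ("colleague", "negative"),
    3: ("unfamiliar person", "positive"),
    4: ("unfamiliar person", "negative"),
}


def to_dummies(group):
    """Map a group code (1-4) to the two 0/1 dummy variables."""
    messenger, message = GROUPS[group]
    return {
        "colleague": int(messenger == "colleague"),        # 0 = unfamiliar person
        "positive_message": int(message == "positive"),    # 0 = negative
    }


for code in sorted(GROUPS):
    print(code, to_dummies(code))
```

Groups 1 and 3 share `positive_message = 1`, and groups 1 and 2 share `colleague = 1`, which matches the combinations described in the text.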

2.3.5 The Control Variables

Sociodemographic variables included in analyses were age in years as a continuous variable, gender (0 = male, 1 = female), a degree from engineering or technology (0 = no, 1 = yes), prior robot use experience as binary (0 = no/don’t know, 1 = yes), and occupational status as a categorical variable (1 = student, 2 = working, 3 = unemployed). Personality was established using a 15-item Big Five inventory [51], based on which three-item sum variables were created, ranging from values 1 to 7. Cronbach’s alphas were again calculated: Neuroticism (α = 0.76), Extraversion (α = 0.75), Openness (α = 0.82), Agreeableness (α = 0.67), and Conscientiousness (α = 0.62). Agreeableness was measured with two items only, due to issues with interitem reliability. Perceived robot suitability to one’s occupational field was measured with the item, “Robots suit my occupational field well,” to which answers were given on a scale ranging from 1 (strongly disagree) to 7 (strongly agree).

2.4 Statistical Techniques

The analyses included descriptive statistics, Levene’s test for equality of variances [52], one-way ANOVA [53], Kruskal–Wallis H tests with Bonferroni corrections [54], Games–Howell post hoc tests for variables showing unequal variances (positive attitude toward assistive robots, perceived robot usefulness) [55], Tukey multiple comparison post hoc test for a variable showing equal variances (perceived robot use self-efficacy) [56], two-way ANOVAs, partial eta-squared (η2) and omega-squared (ω2) values for effect sizes [57], and OLS regressions [58]. Descriptive statistics were used to describe the data and evaluate the measures. All three measures were slightly negatively skewed (skewness from −0.71 to −0.88) and leptokurtic (kurtosis from 3.34 to 3.48, where 3 equals the normal distribution). Shapiro–Wilk tests suggested that none of the dependent variables were normally distributed (p < 0.001). Potential outliers were detected by using Cook’s distance measure but were not removed. Based on the relatively large data sample and almost equal sample sizes, we moved forward with the analysis.
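
To make the skewness and kurtosis conventions concrete, the sketch below uses the simple moment-based definitions, under which a normal distribution has kurtosis 3. That these are the exact definitions used by the authors' software is an assumption. The `ratings` list is invented for illustration.

```python
def central_moment(values, order):
    """Population central moment of the given order."""
    n = len(values)
    centre = sum(values) / n
    return sum((v - centre) ** order for v in values) / n


def skewness(values):
    """Moment-based skewness: negative when the left tail is longer."""
    return central_moment(values, 3) / central_moment(values, 2) ** 1.5


def kurtosis(values):
    """Moment-based kurtosis (not excess): equals 3 for a normal distribution."""
    return central_moment(values, 4) / central_moment(values, 2) ** 2


# Invented 1-7 ratings that pile up near the top of the scale.
ratings = [1, 5, 6, 6, 7, 7, 7]
print(round(skewness(ratings), 2), round(kurtosis(ratings), 2))
```

Ratings that cluster near the upper end of a bounded scale, as in this toy sample, produce the negative skew reported for the three outcome measures.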

We conducted Levene’s tests using the median to assess the equality of variances of non-normally distributed dependent variables [52]. Equal variances were observed for perceived robot use self-efficacy (p = 0.053), but not for perceived robot usefulness (p < 0.001) or positive attitude toward assistive robots (p < 0.001). Nevertheless, a parametric one-way ANOVA was chosen as a widely used and statistically powerful method to assess the differences between the experimental groups. Non-parametric Kruskal–Wallis H tests with Bonferroni corrections were also conducted to corroborate the findings from the ANOVA. As the results did not change, only the results of the one-way ANOVA are reported. Post hoc tests (Games–Howell and Tukey) were conducted to examine the pairwise differences between the groups, that is, to compare all possible pairs of groups. A two-way ANOVA was conducted to verify our hypotheses by examining whether the effects of colleague and positive message orientation were independent. We considered our hypotheses to be confirmed when the p-value was less than 0.05, indicating a statistically significant result, and not confirmed when the p-value was greater than 0.05, indicating a statistically nonsignificant result. Analyses were conducted mainly with Stata 17. Games–Howell tests and figures were generated with SPSS Statistics 26 software.
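
The median-based Levene's test mentioned above amounts to a one-way ANOVA on each observation's absolute deviation from its group median. The sketch below illustrates that idea on invented data and returns only the test statistic and degrees of freedom. The p-value would require the F distribution, which is outside the Python standard library. The function names are hypothetical.

```python
from statistics import mean, median


def one_way_f(groups):
    """Return (F, df_between, df_within) for a list of groups of numbers."""
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = n_total - len(groups)
    return (ss_between / df_between) / (ss_within / df_within), df_between, df_within


def levene_median(groups):
    """Levene's statistic using deviations from each group's median."""
    deviations = [[abs(x - median(g)) for x in g] for g in groups]
    return one_way_f(deviations)


# Invented data: same centre, clearly different spread.
narrow = [4, 5, 5, 5, 6]
wide = [1, 3, 5, 7, 9]
w_stat, df1, df2 = levene_median([narrow, wide])
print(round(w_stat, 3), df1, df2)
```

A large statistic relative to the F(df1, df2) distribution indicates unequal variances, which is the situation in which a Games–Howell rather than a Tukey post hoc test is typically preferred.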

3 Results

A descriptive overview of the four experimental groups is depicted in Table 1. Based on the descriptive results, those who read about their own colleague’s positive experiences of using a robot reported the most favorable perceptions (positive attitude toward assistive robots M = 5.48; perceived robot usefulness M = 5.33; perceived robot use self-efficacy M = 5.46). The second most favorable perceptions were reported by those reading about an unfamiliar person’s positive user experiences (M = 5.36; M = 5.18; M = 5.37). The third most favorable perceptions were reported by those reading about their own colleague’s negative user experiences (M = 5.22; M = 5.01; M = 5.28), and the least favorable perceptions were reported by those reading about an unfamiliar person’s negative user experiences (M = 4.85; M = 4.66; M = 5.04).

To test whether there were statistical differences between the groups, we conducted three one-way ANOVAs. The results showed that there were statistically significant differences among the four conditions regarding positive attitude toward assistive robots [F(3,1055) = 9.15, p < 0.001, η2 = 0.025, ω2 = 0.023], perceived robot usefulness [F(3,1055) = 11.21, p < 0.001, η2 = 0.031, ω2 = 0.028], and perceived robot use self-efficacy [F(3,1055) = 5.59, p = 0.001, η2 = 0.016, ω2 = 0.013].
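
As a rough consistency check, both effect sizes can be recovered from the reported F statistics and degrees of freedom alone. The sketch below uses the standard one-way ANOVA identities η² = F·df₁ / (F·df₁ + df₂) and ω² = df₁(F − 1) / (df₁(F − 1) + N), where N = df₁ + df₂ + 1. In a one-way design, eta-squared and partial eta-squared coincide. The function names are illustrative only.

```python
def eta_squared(f, df_between, df_within):
    """Eta-squared recovered from an F statistic in a one-way ANOVA."""
    return f * df_between / (f * df_between + df_within)


def omega_squared(f, df_between, df_within):
    """Omega-squared recovered from an F statistic in a one-way ANOVA."""
    n_total = df_between + df_within + 1
    return df_between * (f - 1) / (df_between * (f - 1) + n_total)


# F statistics as reported in the text, each with df = (3, 1055).
reported_f = {
    "positive attitude": 9.15,
    "perceived usefulness": 11.21,
    "use self-efficacy": 5.59,
}

effect_sizes = {
    name: (round(eta_squared(f, 3, 1055), 3), round(omega_squared(f, 3, 1055), 3))
    for name, f in reported_f.items()
}
for name, (eta2, omega2) in effect_sizes.items():
    print(f"{name}: eta2 = {eta2}, omega2 = {omega2}")
```

The values computed this way agree with the reported effect sizes to three decimals, and all of them fall in the range conventionally described as small.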

Post hoc multiple comparison tests were then conducted to investigate the pairwise differences between the groups. Regarding positive attitude toward assistive robots, the Games–Howell tests showed that respondents exposed to a scenario of an unfamiliar person’s negative experiences of using assistive robots expressed a significantly less positive attitude toward assistive robots than those who read about a colleague’s positive (p < 0.001) or negative experiences (p = 0.026) and those who read about an unfamiliar person’s positive experiences of using assistive robots (p = 0.001). Hence, initial support was found for our hypotheses H1.1 and H2.1. The multiple comparisons between the groups regarding positive attitude toward robots are illustrated in Fig. 1.

Regarding perceived robot usefulness, the Games–Howell tests showed that respondents learning of an unfamiliar person’s negative experiences of using assistive robots perceived assistive robots as significantly less useful than those learning of a colleague’s positive (p < 0.001) or negative user experiences (p = 0.026) and those learning of an unfamiliar person’s positive user experiences (p = 0.001). Furthermore, respondents discovering their colleague’s negative user experiences perceived assistive robots as significantly less useful than those reading a scenario of a colleague’s positive experiences (p = 0.026). Hence, initial support was found for our hypotheses H1.2 and H2.2. The multiple comparisons between the groups regarding perceived robot usefulness are illustrated in Fig. 2.

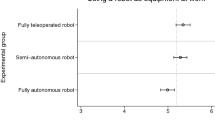

Regarding perceived robot use self-efficacy, the Tukey tests revealed that respondents who read that an unfamiliar person from their field had negative experiences of using assistive robots scored significantly lower in perceived robot use self-efficacy than respondents reading about a colleague’s positive experiences (p = 0.001) and respondents reading about an unfamiliar person’s positive experiences of using assistive robots (p = 0.013). Hence, initial support was found for our hypotheses H1.3 and H2.3. The multiple comparisons between the groups regarding perceived robot use self-efficacy are illustrated in Fig. 3.

To verify our hypotheses, three two-way ANOVAs were conducted to assess the effects of closeness of the messenger and orientation of the message, presented in Tables 2, 3, and 4. The analysis showed that both factors, a colleague and a positive message, had positive direct effects on positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy. Thus, all our hypotheses were confirmed. The interaction between the colleague and positive orientation of the message was not significant in any of the three models (p > 0.05), which indicates independent effects for both manipulated factors.
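
The main effects and the interaction in a 2 × 2 design can be read descriptively from the four cell means reported earlier in this section. The sketch below computes unweighted contrasts from those means. It is only an illustration of what the two-way ANOVA terms represent, not a reproduction of the tests in Tables 2–4, and it assumes equal weighting of cells because the exact group sizes are not given here. The names are hypothetical.

```python
# Cell means as reported in the descriptive results (Table 1 in the source).
CELL_MEANS = {
    "positive attitude": {"cp": 5.48, "cn": 5.22, "up": 5.36, "un": 4.85},
    "perceived usefulness": {"cp": 5.33, "cn": 5.01, "up": 5.18, "un": 4.66},
    "use self-efficacy": {"cp": 5.46, "cn": 5.28, "up": 5.37, "un": 5.04},
}
# Keys: c = colleague, u = unfamiliar person; p = positive, n = negative message.


def contrasts(m):
    """Unweighted main-effect and interaction contrasts for one outcome."""
    return {
        # Average positive-minus-negative difference across messengers.
        "message": ((m["cp"] + m["up"]) - (m["cn"] + m["un"])) / 2,
        # Average colleague-minus-unfamiliar difference across messages.
        "closeness": ((m["cp"] + m["cn"]) - (m["up"] + m["un"])) / 2,
        # Difference between the two message effects (0 means additive).
        "interaction": (m["cp"] - m["cn"]) - (m["up"] - m["un"]),
    }


results = {name: contrasts(m) for name, m in CELL_MEANS.items()}
for name, c in results.items():
    print(name, {k: round(v, 3) for k, v in c.items()})
```

For all three outcomes the message contrast is larger than the closeness contrast, which is in line with the pattern described in the text.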

In the final part of analyses, OLS regressions were undertaken, aiming to identify individual factors associated with the perceptions of assistive robots at work in the experiment. All three models are reported with robust (Huber-White) standard errors, due to problems in heteroskedasticity detected via significant Breusch–Pagan tests (p < 0.05). A descriptive overview of the variables used in regression models is presented in Table 5. The OLS models incorporated closeness of the messenger as a colleague (0 = unfamiliar person, 1 = colleague) and positive message orientation (0 = negative, 1 = positive). Table 6 summarizes the linear regression models for positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy.
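
The difference between classical and heteroskedasticity-robust standard errors can be illustrated with a one-predictor regression. The sketch below assumes the HC1 variant (a sandwich estimator with an n / (n − k) small-sample correction), which is a common default for robust standard errors. Whether the authors' software used exactly this variant is an assumption. The data are invented: a 0/1 dummy predictor with unequal group sizes and much larger error variance in one group.

```python
from math import sqrt


def ols_with_robust_se(x, y):
    """Simple OLS of y on x with an intercept.

    Returns (slope, classical_se, robust_se), where robust_se is HC1.
    """
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    slope = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y)) / sxx
    intercept = y_bar - slope * x_bar
    resid = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

    # Classical SE assumes one common error variance for all observations.
    sigma2 = sum(e ** 2 for e in resid) / (n - 2)
    classical_se = sqrt(sigma2 / sxx)

    # Sandwich estimator: each squared residual is weighted by its own leverage term.
    meat = sum(((xi - x_bar) * e) ** 2 for xi, e in zip(x, resid))
    robust_se = sqrt(n / (n - 2) * meat / sxx ** 2)
    return slope, classical_se, robust_se


# Invented data: six observations with x = 0 (low spread), two with x = 1 (high spread).
x = [0, 0, 0, 0, 0, 0, 1, 1]
y = [4, 5, 5, 5, 5, 6, 3, 9]
slope, classical_se, robust_se = ols_with_robust_se(x, y)
print(round(slope, 3), round(classical_se, 3), round(robust_se, 3))
```

In this toy example the robust standard error is noticeably larger than the classical one, because the noisier group is also the smaller one. That is the kind of situation a significant Breusch–Pagan test warns about.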

Closeness of the messenger as a colleague was positively and significantly associated with positive attitude toward assistive robots (β = 0.06, p = 0.021), perceived robot usefulness (β = 0.07, p = 0.006), and perceived robot use self-efficacy (β = 0.07, p = 0.007) when considering the background variables. Positive message orientation was positively and significantly associated with positive attitude toward assistive robots (β = 0.10, p < 0.001), perceived robot usefulness (β = 0.12, p < 0.001), and perceived robot use self-efficacy (β = 0.09, p < 0.001) when considering the background variables. Thus, the main results of social influence remain after controlling for other known strong predictors in the same model. Based on the unstandardized and standardized regression coefficients, the effect is larger for positive message orientation than for the closeness of the messenger when predicting positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy, in line with our ANOVA analyses.

Two other factors that were positively and significantly associated with positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy were openness to experiences (β = 0.15, p < 0.001; β = 0.15, p < 0.001; β = 0.28, p < 0.001) and perceived robot suitability to one’s occupational field (β = 0.47, p < 0.001; β = 0.51, p < 0.001; β = 0.29, p < 0.001). Agreeableness was positively and significantly associated with positive attitude toward assistive robots (β = 0.10, p = 0.001) and perceived robot usefulness (β = 0.06, p = 0.034). Further, female gender was negatively and significantly associated with perceived robot usefulness (β = −0.06, p = 0.017). Conscientiousness was positively associated with perceived robot usefulness (β = 0.07, p = 0.047) and perceived robot use self-efficacy (β = 0.12, p = 0.001). Neuroticism was negatively associated with perceived robot use self-efficacy (β = −0.09, p = 0.007). In addition, we tested the interaction effects of perceived robot suitability to one’s occupational field with closeness of the messenger and message orientation. We found a significant negative interaction between perceived robot suitability to one’s occupational field and positive message orientation when predicting positive attitudes toward assistive robots (β = −0.20, p = 0.025).

Altogether, as reported in the tables, the variables explained 35% of the variance for positive attitude toward assistive robots (R2 = 0.35, F = 36.84, p < 0.001), 38% for perceived robot usefulness (R2 = 0.38, F = 39.72, p < 0.001), and 31% of the variance for perceived robot use self-efficacy (R2 = 0.31, F = 30.59, p < 0.001).

4 Discussion

This study experimentally examined U.S. respondents’ reactions to a written scenario in which another person had started using assistive robots to help with a daily work-related task. Reading a scenario involving their own colleague and a positive message describing the other person’s user experiences of assistive robots both had statistically significant (p < 0.05) positive effects on positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy, confirming all our pre-registered hypotheses. Furthermore, we found that perceptions of assistive robots at work are associated with individual and contextual factors such as openness to experiences and perceived robot suitability to one’s occupational field.

The results showed that closeness of the messenger, that is, reading about one’s own colleague’s user experiences, had a positive effect on participants’ positive attitude toward assistive robots (H1.1), perceived robot usefulness (H1.2), and perceived robot use self-efficacy (H1.3). As established in previous literature, people are prone to influences from the social environment [37, 38], especially when it comes to close peer relationships [39, 40]. The results also support previous research suggesting that attitudes [11, 16,17,18], perceived usefulness [13, 19], and self-efficacy beliefs [20,21,22] can be socially influenced to some extent. It is noteworthy, however, that participants also reacted rather positively to a scenario involving an unfamiliar colleague’s positive user experiences. This raises the question of how participants interpreted our operationalizations of closeness, i.e., one’s own colleague and an unknown person from one’s field. It is possible that respondents did not perceive a notional colleague as their close peer, i.e., a similar other, but as a generalized other [18] or a distal peer [39, 40] instead, which may have confounded the results to some extent. Relationally close relationships with colleagues could fuel the social influence processes [18], but having such colleagues is not a reality for all.

Social psychological theories on group dynamics state that minimal group membership information can already activate in-group perceptions and biases [59, 60], and thus, “unfamiliar person from your field” could also represent a colleague from the same in-group whose insights are seen as valuable, even though the person in question is not close to the respondent. It is possible that the participants also reacted to an unfamiliar colleague’s user experiences to conform to the social norms of a larger social community. Whether this is the case and how these mechanisms work differently for positive versus negative influence requires further research.

Our results showed that positive message orientation, that is, reading about positive versus negative user experiences of using a robot, had positive effects on participants’ positive attitude toward assistive robots (H2.1), perceived robot usefulness (H2.2), and perceived robot use self-efficacy (H2.3). These results are in line with the two-route mechanism of persuasive information, which, in addition to the messenger, highlights the relevance of the content of the message [41]. Hence, it seems that individuals’ perceptions of assistive robots at work can be positively influenced by exposing them to positive user experiences of other professionals in the same field. It should also be noted, however, that although our results highlight the positive influences, social influence could also be negative. Learning that other people’s user experiences have been negative may also inhibit one’s own perceptions of assistive robots.

In the work-life context, orders or requests to use novel technology typically come from a higher authority rather than a peer colleague. Based on our results, however, it seems that familiar and unfamiliar colleagues may have a significant role in the kinds of perceptions individuals have of using robots to help with work tasks. In particular, being exposed to positive user experiences of a person working in the same field could help promote acceptance of assistive robots at workplaces. Promoting acceptance is in turn crucial for successful implementation of assistive robot technologies at work and for human–robot interaction. The effects found in our study’s variance analyses are small but should not be ignored because they suggest that perceptions of assistive robots at work are formed contextually, using the knowledge of others’ user experiences.

Furthermore, there are individual differences related to perceptions of assistive robots at work. Controlling for these factors highlighted our main result that social influence has a role in the perceptions of assistive robots at work. Perceived robot usefulness, positive attitude toward assistive robots, and perceived robot use self-efficacy were influenced more by the message (positivity) than by the messenger (closeness) attribute. This suggests that positive accounts from other people in particular might help strengthen people’s positive perceptions of assistive robots at work. Personal experiences with robots could influence perceptions more significantly in the long term, but as advanced robot technology is not yet available to everyone, it is critical to consider other factors that play a role in acceptance and in potential avoidance or rejection before actual personal encounters.

In addition to the main findings, our results revealed individual differences regarding predictors of positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy. The two factors positively associated with all three outcomes were openness to experiences and perceived robot suitability to one’s occupational field. These findings are understandable: perceiving robots as fitting one’s own field may reflect that one can already imagine some suitable tasks for robots, which further eases the evaluation. However, future studies should assess this association in more detail, as the direction may also be reversed: one’s favorable perceptions of robots may result in thinking that robots suit one’s field. Those expressing openness to experiences tended to perceive assistive robots favorably. This supports the recent finding that those high in openness to experiences tend to perceive human–robot interaction positively [35]. Potentially, assistive robots hold novelty value for those who are generally curious to try new technologies. To establish its role in more detail, future studies can assess the role of openness to experiences over time, when assistive robots are no longer as new and the potential novelty effect [61, 62] has decreased.

Moreover, we found that agreeableness was associated positively with positive attitude toward assistive robots and perceived robot usefulness. Female gender was associated negatively with perceived robot usefulness. The importance of sociodemographic factors has been stated previously [30, 31], and researchers should continue examining their role in technology adoption. Conscientiousness and neuroticism were associated with perceived robot use self-efficacy. Similar associations have been found in previous research [36].

4.1 Limitations

Despite its strengths in experimental design and relatively large sample size, our study has some limitations. It may be subject to the traditional limitations of survey methods (e.g., a tendency to overuse the positive side of Likert scales and to avoid the negative answer options). The non-probability sample of adults from the U.S. was geographically widespread but restricted to one country. The cross-sectional study design should be extended to a longitudinal design and analyses to yield more robust conclusions. Positive attitude toward assistive robots and perceived robot suitability to one’s occupational field were measured with single items, which must be recognized as a potential limitation when interpreting the results. The effects of the manipulated factors in the experiment were statistically significant, but according to the variance analyses their effect sizes (η² and ω²) were small, particularly for the effect of a colleague. Therefore, more onsite research is needed to establish what effects learning about a colleague’s experiences has on an individual’s perceptions of assistive robots. It is possible that closeness with colleagues varies among people, and imagining a colleague does not necessarily bring a close person to everyone’s mind, which can be seen as a limitation of our experimental design. The experiment did not involve a control group, which complicates the interpretation of the effectiveness of the experimental manipulation. In the experiment, a positive message was compared with a negative one; adding a third (neutral) group could have helped to achieve more nuanced results. Finally, no information was given about the assistive robot, its functionalities, or its intended task other than what was stated in the written scenario. Thus, participants’ perceptions of the robot may have varied, which should be considered a potential confounding factor when interpreting our results.
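
As a reading aid for the effect sizes mentioned above, the following is a minimal sketch of how η² and ω² are commonly computed from ANOVA sums of squares. It is not the analysis code used in this study; the function names and all numeric values are hypothetical and chosen only for illustration.

```python
def eta_squared(ss_effect, ss_total):
    """Proportion of total variance attributed to the effect."""
    return ss_effect / ss_total


def omega_squared(ss_effect, df_effect, ms_error, ss_total):
    """Bias-corrected estimate of the proportion of variance explained."""
    return (ss_effect - df_effect * ms_error) / (ss_total + ms_error)


# Hypothetical ANOVA quantities (NOT values from this study).
ss_effect = 12.0   # sum of squares for the manipulated factor
df_effect = 1      # two-level factor, so one degree of freedom
ss_total = 1200.0  # total sum of squares
ms_error = 1.1     # mean square error (residual)

eta2 = eta_squared(ss_effect, ss_total)
omega2 = omega_squared(ss_effect, df_effect, ms_error, ss_total)
print(f"eta^2 = {eta2:.4f}, omega^2 = {omega2:.4f}")
```

Because ω² subtracts the variance expected from error alone, it is slightly smaller than η² in this illustration. Under common rules of thumb, values around 0.01 are read as small effects, which is the sense in which the effects of the manipulated factors are described as small.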

5 Conclusions

In this study, we examined participants’ reactions to other people’s experiences of using assistive robots at work, using hypothetical scenarios. Learning about a familiar or an unfamiliar colleague’s positive experiences of using assistive robots improved participants’ positive attitude toward assistive robots and increased perceived robot usefulness and perceived robot use self-efficacy. This indicates that perceptions of robots are formed contextually, using the knowledge of others’ user experiences. Furthermore, we observed that perceived robot suitability to one’s occupational field and openness to experiences were positively associated with positive attitude toward assistive robots, perceived robot usefulness, and perceived robot use self-efficacy, highlighting that perceptions of robots depend on individual factors as well. This is one of the first studies to provide experimental evidence on the role of social influence of other humans on the perceptions of assistive robots at work. Instead of the social norms previously studied in technology acceptance, our study focused on the information-sharing aspect of social influence in the form of relayed user experiences. Communicating other people’s positive learning experiences of using assistive robots at work could help promote acceptance of assistive robots at workplaces.

Data Availability

Data will be made available via the Finnish Social Science Data Archive in 2023 (FSD3714 Robots & Us, Sentiment Survey: United States 2020, https://www.fsd.tuni.fi/en/).

References

Makridakis S (2017) The forthcoming artificial intelligence (AI) revolution: its impact on society and firms. Futures 90:46–60. https://doi.org/10.1016/j.futures.2017.03.006

Müller VC, Bostrom N (2016) Future progress in artificial intelligence: a survey of expert opinion. In: Müller VC (ed) Fundamental issues of artificial intelligence. Springer, Berlin, pp 555–572

Wang W, Siau K (2019) Artificial intelligence, machine learning, automation, robotics, future of work and future of humanity: a review and research agenda. J Database Manag 30(1):61–79. https://doi.org/10.4018/JDM.2019010104

Arntz M, Gregory T, Zierahn U (2016) The risk of automation for jobs in OECD countries: a comparative analysis (OECD Social, Employment and Migration Working Papers No. 189). https://doi.org/10.1787/5jlz9h56dvq7-en

Frey CB, Osborne MA (2017) The future of employment: How susceptible are jobs to computerisation? Technol Forecast Soc Chang 114:254–280. https://doi.org/10.1016/j.techfore.2016.08.019

Savela N, Turja T, Oksanen A (2018) Social acceptance of robots in different occupational fields: a systematic literature review. Int J Soc Robot 10:493–502. https://doi.org/10.1007/s12369-017-0452-5

Venkatesh V, Morris MG, Davis GB, Davis FD (2003) User acceptance of information technology: toward a unified view. MIS Q 27(3):425–478. https://doi.org/10.2307/30036540

Cialdini RB, Goldstein NJ (2004) Social influence: compliance and conformity. Annu Rev Psychol 55:591–621. https://doi.org/10.1146/annurev.psych.55.090902.142015

Cialdini RB, Trost MR (1998) Social influence: social norms, conformity and compliance. In: Gilbert DT, Fiske ST, Lindzey G (eds) Handbook of social psychology. McGraw-Hill, New York, pp 151–192

Jones EE (1998) Major developments in five decades of social psychology. In: Gilbert DT, Fiske ST, Lindzey G (eds) Handbook of social psychology. McGraw-Hill, New York, pp 3–57

Heerink M, Kröse B, Evers V, Wielinga B (2010) Assessing acceptance of assistive social agent technology by older adults: the Almere model. Int J Soc Robot 2(4):361–375. https://doi.org/10.1007/s12369-010-0068-5

Im I, Hong S, Kang MS (2011) An international comparison of technology adoption: testing the UTAUT model. Inf Manag 48(1):1–8. https://doi.org/10.1016/j.im.2010.09.001

Venkatesh V, Bala H (2008) Technology acceptance model 3 and a research agenda on interventions. Decis Sci 39(2):273–315. https://doi.org/10.1111/j.1540-5915.2008.00192.x

Davis FD (1989) Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Q 13(3):319–340. https://doi.org/10.2307/249008

Haddock G, Maio GR (2015) Attitudes. In: Hewstone M, Stroebe W, Jonas K (eds) Introduction to social psychology. Wiley, Hoboken

Salancik GR, Pfeffer J (1978) A social information processing approach to job attitudes and task design. Adm Sci Q 23(2):224–253. https://doi.org/10.2307/2392563

Yang HD, Yoo Y (2004) It’s all about attitude: revisiting the technology acceptance model. Decis Support Syst 38(1):19–31. https://doi.org/10.1016/S0167-9236(03)00062-9

Rice RE, Aydin C (1991) Attitudes toward new organizational technology: network proximity as a mechanism for social information processing. Adm Sci Q 36(2):219–244. https://doi.org/10.2307/2393354

Venkatesh V, Davis FD (2000) A theoretical extension of the technology acceptance model: four longitudinal field studies. Manag Sci 46(2):186–204. https://doi.org/10.1287/mnsc.46.2.186.11926

Bandura A (1986) Social foundations of thought and action: A social cognitive theory. Prentice-Hall, Hoboken

Bandura A (1997) Self-efficacy: the exercise of control. Freeman, New York

Maddux JE (2002) Self-efficacy: the power of believing you can. In: Snyder CR, Lopez SJ (eds) Handbook of positive psychology. Oxford University Press, Oxford, pp 277–287

Igbaria M, Iivari J (1995) The effects of self-efficacy on computer usage. Omega 23(6):587–605. https://doi.org/10.1016/0305-0483(95)00035-6

Teo T (2009) Modelling technology acceptance in education: a study of pre-service teachers. Comput Educ 52(2):302–312. https://doi.org/10.1016/j.compedu.2008.08.006

Ghazali AS, Ham J, Barakova E, Markopoulos P (2020) Persuasive robots acceptance model (PRAM): roles of social responses within the acceptance model of persuasive robots. Int J Soc Robot 12:1075–1092. https://doi.org/10.1007/s12369-019-00611-1

Venkatesh V, Thong JY, Xu X (2012) Consumer acceptance and use of information technology: extending the unified theory of acceptance and use of technology. MIS Q. https://doi.org/10.2307/41410412

Turja T, Aaltonen I, Taipale S, Oksanen A (2020) Robot acceptance model for care (RAM-care): a principled approach to the intention to use care robots. Inf Manag 57(5):103220. https://doi.org/10.1016/j.im.2019.103220

Liu B, Tetteroo D, Markopoulos P (2022) A systematic review of experimental work on persuasive social robots. Int J Soc Robot 14(6):1339–1378. https://doi.org/10.1007/s12369-022-00870-5

De Graaf MM, Allouch SB, Klamer T (2015) Sharing a life with Harvey: exploring the acceptance of and relationship-building with a social robot. Comput Hum Behav 43:1–14. https://doi.org/10.1016/j.chb.2014.10.030

Gnambs T, Appel M (2019) Are robots becoming unpopular? Changes in attitudes towards autonomous robotic systems in Europe. Comput Hum Behav 93:53–61. https://doi.org/10.1016/j.chb.2018.11.045

Flandorfer P (2012) Population ageing and socially assistive robots for elderly persons: the importance of sociodemographic factors for user acceptance. Int J Popul Res. https://doi.org/10.1155/2012/829835

Santamaria T, Nathan-Roberts D (2017) Personality measurement and design in human–robot interaction: a systematic and critical review. Proc Hum Fact Ergon Soc Ann Meet 61(1):853–857

Robert L (2018) Personality in the human–robot interaction literature: a review and brief critique. In: Proceedings of the 24th Americas Conference on Information Systems, 16–18. https://ssrn.com/abstract=3308191

Robert L, Alahmad R, Esterwood C, Kim S, You S, Zhang Q (2020) A review of personality in human-robot interactions. Found Trends Inf Syst 4(2):107–212. https://doi.org/10.1561/2900000018

Rossi S, Conti D, Garramone F, Santangelo G, Staffa M, Varrasi S, Di Nuovo A (2020) The role of personality factors and empathy in the acceptance and performance of a social robot for psychometric evaluations. Robotics 9(2):39. https://doi.org/10.3390/robotics9020039

Latikka R, Savela N, Koivula A, Oksanen A (2021) Attitudes toward robots as equipment and coworkers and the impact of robot autonomy level. Int J Soc Robot 13:1747–1759. https://doi.org/10.1007/s12369-020-00743-9

Deutsch M, Gerard HB (1955) A study of normative and informational social influences upon individual judgment. J Abnorm Soc Psychol 51(3):629–636. https://doi.org/10.1037/h0046408

Kaplan MF, Miller CE (1987) Group decision making and normative versus informational influence: effects of type of issue and assigned decision rule. J Pers Soc Psychol 53(2):306–313. https://doi.org/10.1037/0022-3514.53.2.306

Paek HJ (2009) Differential effects of different peers: further evidence of the peer proximity thesis in perceived peer influence on college students’ smoking. J Commun 59(3):434–455. https://doi.org/10.1111/j.1460-2466.2009.01423.x

Paek HJ, Gunther AC (2007) How peer proximity moderates indirect media influence on adolescent smoking. Commun Res 34(4):407–432. https://doi.org/10.1177/0093650207302785

Petty RE, Cacioppo JT, Schumann D (1983) Central and peripheral routes to advertising effectiveness: the moderating role of involvement. J Consum Res 10(2):135–146. https://doi.org/10.1086/208954

Bhukya R, Paul J (2023) Social influence research in consumer behavior: What we learned and what we need to learn?–A hybrid systematic literature review. J Bus Res 162:113870. https://doi.org/10.1016/j.jbusres.2023.113870

Oksanen A, Savela N, Latikka R (2020) Robots and us: social psychological aspects of the social interaction between humans and robots. Retrieved from https://osf.io/awdyf

Kennedy R, Clifford S, Burleigh T, Waggoner PD, Jewell R, Winter NJ (2020) The shape of and solutions to the MTurk quality crisis. Polit Sci Res Methods 8(4):614–629. https://doi.org/10.1017/psrm.2020.6

Chandler J, Mueller P, Paolacci G (2014) Nonnaïveté among Amazon mechanical turk workers: consequences and solutions for behavioral researchers. Behav Res Methods 46(1):112–130. https://doi.org/10.3758/s13428-013-0365-7

Atzmüller C, Steiner PM (2010) Experimental vignette studies in survey research. Methodology 6:128–138. https://doi.org/10.1027/1614-2241/a000014

Eurobarometer (2015) Special Eurobarometer 427 Autonomous systems. European Commission. https://ec.europa.eu/commfrontoffice/publicopinion/archives/ebs/ebs_427_en.pdf

Davis FD, Bagozzi RP, Warshaw PR (1989) User acceptance of computer technology: a comparison of two theoretical models. Manag Sci 35(8):982–1003. https://doi.org/10.1287/mnsc.35.8.982

Latikka R, Turja T, Oksanen A (2019) Self-efficacy and acceptance of robots. Comput Hum Behav 93:157–163. https://doi.org/10.1016/j.chb.2018.12.017

Turja T, Rantanen T, Oksanen A (2019) Robot use self-efficacy in healthcare work (RUSH): development and validation of a new measure. AI & Soc 34:137–143. https://doi.org/10.1007/s00146-017-0751-2

Lang FR, John D, Lüdtke O, Schupp J, Wagner GG (2011) Short assessment of the big five: robust across-survey methods except telephone interviewing. Behav Res Methods 43:548–567. https://doi.org/10.3758/s13428-011-0066-z

Brown MB, Forsythe AB (1974) Robust tests for the equality of variances. J Am Stat Assoc 69:364–367. https://doi.org/10.1080/01621459.1974.10482955

Fisher RA (1993) Statistical methods, experimental design, and scientific inference. Oxford University Press, Oxford

Dinno A (2015) Nonparametric pairwise multiple comparisons in independent groups using Dunn’s test. Stand Genomic Sci 15(1):292–300. https://doi.org/10.1177/1536867X1501500117

Games PA, Howell JF (1976) Pairwise multiple comparison procedures with unequal n’s and/or variances: a Monte Carlo study. J Educ Stat 1(2):113–125. https://doi.org/10.3102/10769986001002113

Abdi H, Williams LJ (2010) Tukey’s honestly significant difference (HSD) test. In: Salkind N (ed) Encyclopedia of research design. Sage, Thousand Oaks

Pierce CA, Block RA, Aguinis H (2004) Cautionary note on reporting eta-squared values from multifactor ANOVA designs. Educ Psychol Measur 64(6):916–924. https://doi.org/10.1177/0013164404264848

Kutner MH, Nachtsheim CJ, Neter J, Li W (2005) Applied linear statistical models. McGraw-Hill, New York

Tajfel H (1970) Experiments in intergroup discrimination. Sci Am 223(5):96–103

Tajfel H, Billig MG, Bundy RP, Flament C (1971) Social categorization and intergroup behaviour. Eur J Soc Psychol 1(2):149–178. https://doi.org/10.1002/ejsp.2420010202

Rogers EM (1995) Diffusion of innovations. Free Press, Mumbai

Sung J, Christensen HI, Grinter RE (2009) Robots in the wild: understanding long-term use. In: Proceedings of the 4th ACM/IEEE international conference on human–robot interaction 45–52. https://doi.org/10.1145/1514095.1514106

Funding

Open access funding provided by Tampere University including Tampere University Hospital, Tampere University of Applied Sciences (TUNI). This work was supported by the Finnish Cultural Foundation (Robots and Us Project, 2018–2020, PIs Jari Hietanen, Atte Oksanen, and Veikko Sariola) and Kone Foundation (UrbanAI project, 2021–2023, PI Atte Oksanen, Grant 202011325).

Ethics declarations

Conflict of interest

The authors do not have a conflict of interest to declare.

Ethical Approval

The Academic Ethics Committee of the Tampere Region stated prior to data collection that the research project did not involve any ethical problems.

Consent to Participate

Participation in the study was completely voluntary and participants were informed about their opportunity to withdraw from the study.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Latikka, R., Savela, N. & Oksanen, A. Perceptions of Assistive Robots at Work: An Experimental Approach to Social Influence. Int J of Soc Robotics 15, 1543–1555 (2023). https://doi.org/10.1007/s12369-023-01046-5

DOI: https://doi.org/10.1007/s12369-023-01046-5