Abstract

The design of artificial empathy is one of the most essential issues in social robotics, because empathic interactions with ordinary people are needed to introduce robots into our society. Several attempts have been made for specific situations; however, these attempts have had several limitations that diminish their authenticity. The present article proposes “affective developmental robotics (hereafter, ADR),” which provides more authentic artificial empathy based on the concept of cognitive developmental robotics (hereafter, CDR). First, the evolution and development of empathy as revealed in neuroscience and biobehavioral studies are reviewed, moving from emotional contagion to envy and schadenfreude. These terms are then reconsidered from the ADR/CDR viewpoint, particularly along the developmental trajectory of self-other cognition. Next, a conceptual model of artificial empathy is proposed based on an ADR/CDR viewpoint and discussed with respect to several existing studies. Finally, a general discussion and proposals for addressing future issues are given.

1 Introduction

Empathic interactions among people are important for realizing true communication. These interactions are even more important in the case of social robots, which are expected to soon emerge throughout society. The importance of “affectivity” in human-robot interaction (hereafter, HRI) has been recently addressed in a brief survey from the viewpoint of affective computing [41]. Several attempts have been made to address specific contexts (see [26] for a survey) in which a designer specifies how to manifest empathic behaviors towards humans; as a result, the capabilities for empathic interaction seem limited and difficult to extend (generalize) to different contexts.

Based on views from developmental robotics [4, 29], empathic behaviors are expected to be learned through social interactions with humans. Asada et al. [6] discussed the importance of “artificial sympathy” from a viewpoint of CDR [4]. However, such work has not been adequately precise from a neuroscience and biobehavioral perspective. Therefore, the present paper proposes “affective developmental robotics” (hereafter, ADR) in order to better understand affective developmental processes through synthetic and constructive approaches, especially regarding a more authentic form of artificial empathy.

The rest of the article is organized as follows. The next section provides a review of neuroscience and biobehavioral studies assessing the evolution and development of empathy. This begins with a trajectory from emotional contagion to sympathy and compassion, via emotional and cognitive empathy, and ending with envy and schadenfreude. Section 3 introduces ADR with a reconsideration of these terms from an ADR/CDR perspective, particularly along the developmental trajectory of self-other cognition. Section 4 provides a conceptual model of artificial empathy based on an ADR/CDR perspective. This model is then discussed in terms of existing studies in Sect. 5. Finally, current implications and future research directions are discussed.

2 Evolution and Development of Empathy

Asada et al. [6] attempted to define “empathy” and “sympathy” to better clarify an approach to designing artificial sympathy. This was done with the expectation of better understanding these terms, since “empathy” and “sympathy” are often mistaken for each other. However, their definitions are not precise and do not seem to be supported by neuroscience and biobehavioral studies. Thus, we begin our review by assessing definitions of empathy and sympathy within these disciplines in order to more clearly understand these terms from an evolutionary and developmental perspective. This added precision should provide a firmer foundation for the creation of artificial empathy.

We adhere to a definition of empathy based on reviews from neuroscience perspectives that include ontogeny, phylogeny, brain mechanisms, context, and psychopathology as outlined by Gonzalez-Liencres et al. [17]. The relevant points are as follows:

- The manifold facets of empathy are explored in neuroscience from simple emotional contagion to higher cognitive perspective-taking.
- A distinct neural network of empathy comprises both phylogenetically older limbic structures and neocortical brain areas.
- Neuropeptides such as oxytocin and vasopressin as well as opioidergic substances play a role in modulating empathy.

The first two points seem to be related; that is, emotional contagion is mainly based on phylogenetically older limbic structures, while higher cognitive perspective-taking is based on neocortical brain areas. Neuromodulation may either amplify or reduce levels of empathy.

A narrow definition of empathy is simply the ability to form an embodied representation of another’s emotional state while at the same time being aware of the causal mechanism that induced that emotional state [17]. This suggests that the empathizer has interoceptive awareness of his or her own bodily states and is able to distinguish between the self and other, which is a key aspect of the following definitions of empathy-related terms from an evolutionary perspective.

2.1 Emotional Contagion

Emotional contagion is an evolutionary precursor that enables animals to share their emotional states. However, animals are unable to understand what aroused an emotional state in another. An example is an experiment with mice where one mouse (provisionally called “A”) observes another mouse receiving an electric shock accompanied by a tone. Eventually, A freezes in response to the tone even though A has never experienced the shock [8]. Here, A’s freezing behavior is triggered by its emotional reaction and might be interpreted as a sign of emotional contagion.

In this sense, emotional contagion seems automatic, unconscious, and fundamental for higher level empathy. De Waal [11] proposed the evolutionary process of empathy in parallel with that of imitation (see Fig. 1) starting from emotional contagion and motor mimicry. Both motor mimicry and emotional contagion are based on a type of matching referred to as perception-action matching (PAM). Beyond the precise definitions of other terms, motor mimicry needs a sort of resonance mechanism from the physical body that supplies a fundamental structure for emotional contagion. Indeed, people who are more empathic have been shown to exhibit the chameleon effect (see Footnote 1) to a greater extent than those who are less empathic [7].

Fig. 1 The Russian doll model of empathy and imitation (adopted from [11])

2.2 Emotional and Cognitive Empathy

Both emotional and cognitive empathy (hereafter, EE and CE) occur only in animals with self-awareness such as primates, elephants, and dolphins. Neural representations for such complex emotions and self-awareness are localized in the anterior cingulate cortex and the anterior insula [9]. The differences between emotional and cognitive empathy are summarized as follows.

- Emotional empathy (EE):
  - an older phylogenetic trait than cognitive empathy
  - allows individuals to form representations of others’ feelings by sharing these feelings through embodied simulation, a process that is triggered by emotional contagion.
- Cognitive empathy (CE):

In contrast to emotional contagion, which does not require reasoning about the cause of aroused emotions in others, both EE and CE require a distinction between one’s own and others’ mental states and the formation of a representation of one’s own embodied emotions. The later forms of EE and CE do not necessarily require that an observer’s emotional state match the observed state. Such states can be viewed as sympathy and compassion, which are explained in the next section.

2.3 Sympathy/Compassion and Envy/Schadenfreude

Sympathy and compassion seem similar to empathy in terms of emotional states, but different in terms of responses produced in reference to others’ emotional states. Both require the ability to form representations of others’ emotions, even though the emotion is not necessarily shared; however, in empathy, the emotional states are synchronized [16]. This implies that sympathy and compassion may require the control of one’s own emotions in addition to this self-other discrimination.

More powerful control of one’s own emotions can be observed in envy and schadenfreude, which describe feelings opposite to another’s emotional state and different from sympathy and compassion. Envy and schadenfreude evolved in response to selection pressures related to social coherence among early hunter-gatherers [17].

2.4 The Relationships Among Terms

Figure 2 shows a schematic depiction of the terminology used in the context of empathy thus far. The horizontal axis indicates the “conscious level” starting from “unconscious (left-most)” to “conscious with self-other distinction (right-most).” The vertical axis indicates “physical/motor (bottom)” and “emotional/mental (top)” contrasts. Generally, these axes show discrete levels such as “conscious/unconscious” or “physical/mental.” However, terminology in the context of empathy could be distributed in the zones where it is not always easy to discriminate these dichotomies. In addition, there are two points to be mentioned:

- In this space, the location indicates the relative weight between both dichotomies, and the arrow to the left (the top) implies that the conscious (mental) level includes the unconscious (physical) one. In other words, the conscious (mental) level exists on the unconscious (physical) level but not vice versa.
- The direction from left (bottom) to right (top) implies the evolutionary process, and the developmental process, if “ontogeny recapitulates phylogeny.” Therefore, a whole story of empathy follows a gentle slope from the bottom-left to the top-right.

3 Affective Developmental Robotics

Asada et al. have advocated CDR [4, 5], supposing that the development of empathy could be a part of CDR. Indeed, one survey [4] introduced a study of empathic development [54] as an example of CDR. For our purposes, we will rephrase a part of CDR as affective developmental robotics (hereafter, ADR; see Footnote 2). ADR thus follows the approach of CDR, focusing particularly on affective development. First, we give a brief overview of ADR following CDR and then discuss how to approach issues of empathic development.

3.1 Key Concepts of ADR

Based on assumptions of CDR, ADR can be stated as follows: affective developmental robotics aims at understanding human affective developmental processes by synthetic or constructive approaches. Its core idea is “physical embodiment,” and more importantly, “social interaction” that enables information structuring through interactions with the environment. This includes other agents, and affective development is thought to connect both seamlessly.

Roughly speaking, the developmental process consists of two phases: individual development at an early stage and social development through an interaction between individuals at a later stage. In the past, the former has been related mainly to neuroscience (internal mechanism) and the latter to cognitive science and developmental psychology (behavior observation). Nowadays, both sides approach each other: the former has gradually instituted imaging studies assessing social interactions and the latter has also included neuroscientific approaches. However, there is still a gap between these approaches owing to differences in the granularity of targets addressed. ADR aims not simply at filling the gap between the two but, more challengingly, at building a paradigm that provides a new understanding of how we can design humanoids that are symbiotic and empathic with us. The summary of this goal is as follows:

- A: construction of a computational model of affective development
  - (1) hypothesis generation: proposal of a computational model or hypothesis based on knowledge from existing disciplines
  - (2) computer simulation: simulation of processes that are difficult to implement with real robots (e.g., physical body growth)
  - (3) hypothesis verification with real agents (humans, animals, and robots), then return to (1)
- B: offering new means or data to better understand the human developmental process \(\rightarrow\) mutual feedback with A
  - (1) measurement of brain activity by imaging methods
  - (2) verification using human subjects or animals
  - (3) providing a robot as a reliable reproduction tool in (psychological) experiments

3.2 Relationship in Development Between Self-Other Cognition and Empathy

Self-other cognition is one of the most fundamental and essential issues in ADR/CDR. Particularly, in ADR,

- (1) the relationship between understanding others’ minds and the vicarious sharing of emotions is a basic issue in human evolution [48],
- (2) the development of self-other discrimination promotes vicariousness, and
- (3) the capability of metacognition realizes a kind of vicariousness, that is, an imagination of the self as others (emotion control).

A typical example of (3) can be observed in a situation where we enjoy sad music [22, 23]. The objective (virtualized) self perceives sad music as sad while the subjective self feels pleasant emotion by listening to this music. This seems to be a form of emotion control by metacognition of the self as others. The capability of emotion control could be gradually acquired along the developmental process of self-other cognition, starting from no discrimination between the self and non-self, including objects. Therefore, the development of self-other cognition accompanies the development of emotion control, which consequently generates the various emotional states mentioned in Sect. 2.4.

3.3 Development of Self-Other Cognition

Figure 3 shows the developmental process of establishing the concept of the self and other(s), partially following Neisser’s definition of the “self” [37]. The term “synchronization” is used to explain how this concept develops through interactions with the external world, including other agents. We suppose three stages of self-development that are actually seamlessly connected.

The first stage is a period when the most fundamental concept of the self sprouts through physical interaction with objects in the environment. At this stage, synchronization with objects (more generally, the environment) is observed through rhythmic motions such as beating, hitting, knocking, and reaching. Tuning and predicting synchronization are the main activities of the agent. If completely synchronized, the phase is locked (the phase difference is zero), and both the agent and the object are mutually entrained in a synchronized state. In this phase, we may say the agent has its own representation called the “ecological self,” a notion that owes much to Gibsonian psychology, which claims that infants can receive information directly from the environment because their sensory organs are tuned to certain types of structural regularities (see Footnote 3). Neural oscillation might be a strong candidate for the fundamental mechanism that enables such synchronization.
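The phase-locking idea in this first stage can be illustrated with a toy model. The sketch below is only an illustration and not a model proposed in this article: it assumes a single phase oscillator (the agent) that adjusts its speed in proportion to the sine of its phase difference from an external rhythm (the object). The function name `entrain`, the coupling rule, and all parameter values are assumptions chosen for the example.

```python
import math


def entrain(agent_freq, object_freq, coupling, steps=5000, dt=0.001):
    """Integrate one phase oscillator driven by an external rhythm.

    Frequencies are in rad/s. Returns the final phase difference
    (object minus agent), wrapped to the interval (-pi, pi].
    """
    agent_phase, object_phase = 0.0, 1.0  # arbitrary initial phases (radians)
    for _ in range(steps):
        diff = object_phase - agent_phase
        # The agent speeds up or slows down according to sin(phase difference).
        agent_phase += dt * (agent_freq + coupling * math.sin(diff))
        # The object keeps its own fixed rhythm.
        object_phase += dt * object_freq
    diff = object_phase - agent_phase
    return math.atan2(math.sin(diff), math.cos(diff))


if __name__ == "__main__":
    omega = 2.0 * math.pi  # 1 Hz rhythm
    print("with coupling   :", entrain(omega, omega, coupling=5.0))
    print("without coupling:", entrain(omega, omega, coupling=0.0))
```

With matching frequencies and positive coupling, the phase difference shrinks toward zero, which corresponds to the locked state described above. Without coupling, the initial offset simply persists.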

The second stage is a period when self-other discrimination starts to be supported by the mirror neuron system (hereafter, MNS) infrastructure inside and caregivers’ scaffolding from the outside. During the early period of this stage, infants regard caregivers’ actions as their own (“like me” hypothesis [30]) since a caregiver works as a person who can synchronize with the agent. The caregiver helps the agent consciously, and sometimes unconsciously, in various ways such as motherese [24] or motionese [35]. Such synchronization may be achieved through turn-taking, which includes catching and throwing a ball, passing an object between the caregiver and agent, or calling each other. Then, infants gradually discriminate caregivers’ actions as “other” ones (“different from me” hypothesis [19]). This is partially because caregivers first assist infants’ actions and then gradually encourage the infants’ own action control by helping less. It is also partly explained by the fact that infants’ not-yet-matured sensory and motor systems make it difficult to discriminate between the self and others’ actions at the early period in this stage. During the later period in this stage, an explicit representation of others occurs in the agent, whereas no explicit representation of others occurs in the first stage, even though the caregiver is interacting with the agent. The phase difference during turn-taking is supposed to be 180 degrees. Due to an explicit representation of others, the agent may have its own self-representation called the “interpersonal self.” In the later period of this stage, the agent is expected to learn when to inhibit its behavior by detecting the phase difference so that turn-taking between the caregiver and the self can occur.

During the above processes, emotional contagion (simple synchronization), emotional and cognitive empathy (more complicated synchronizations), and further, sympathy and compassion (inhibition of synchronization) can be observed and seem to be closely related to self-other cognition.

This learning is extended in two ways. One is recognition, assignment, and switching of roles such as throwing and catching, giving and taking, and calling and hearing. The other is learning to desynchronize from the synchronized state with one person and to start synchronization with another person, owing to the sudden departure of the first person (passive mode) or to attention given to the second person (active mode). The latter needs active control of synchronization (switching), and this active control enables the agent to take a virtual role in make-believe play. At this stage, the target of synchronization is not limited to persons but also includes objects. However, unlike the first stage, these are not real objects but virtualized ones, such as a virtualized mobile phone or virtualized food during make-believe play of eating or giving. If such behavior is observed, we can say that the agent has the representation of a “social self.” More details of the above process are discussed in [3].

During the above processes, more control of emotion, especially imagination capability, may lead to more active sympathy and compassion (inhibition of synchronization), metacognition of the self as others, and envy and schadenfreude as socially developed emotions.

3.4 Conceptual Architecture for Self-Other Cognitive Development

Following the developmental process shown in Fig. 3, Fig. 4 indicates the mechanisms corresponding to these three stages. The common structure is a mechanism of “entrainment.” The target with which the agent harmonizes (synchronizes) may change from objects to others, and along these changes, more substructures are added to the synchronization system in order to obtain higher concepts and control of self/other cognition.

In the first stage, a simple synchronization with objects is realized, while in the second stage, a caregiver initiates the synchronization. A representation of the agent (agency) is gradually established, and a substructure of inhibition is added for turn-taking. Finally, more synchronization control skill is added to switch the target with which the agent harmonizes. Imaginary actions toward objects could be realized based on this sophisticated skill of switching. These substructures are not added by the designer but are expected to emerge from previous stages.
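As a rough illustration of how an inhibition substructure could turn in-phase synchronization into turn-taking with a 180-degree phase difference, the following sketch couples two identical phase oscillators. It is a toy model under stated assumptions, not the architecture of Fig. 4: treating inhibition as a negative coupling sign is an illustrative choice, and the function name and parameters are hypothetical.

```python
import math


def settle_phase_difference(coupling, initial_diff=0.1, freq=2.0 * math.pi,
                            steps=20000, dt=0.001):
    """Simulate two identical phase oscillators with symmetric sine coupling.

    coupling > 0 pulls the two phases together (synchronization);
    coupling < 0 is used here as a stand-in for inhibition and pushes them apart.
    Returns the absolute phase difference in degrees after the simulation.
    """
    self_phase, other_phase = 0.0, initial_diff
    for _ in range(steps):
        diff = other_phase - self_phase
        d_self = freq + coupling * math.sin(diff)
        d_other = freq + coupling * math.sin(-diff)
        self_phase += dt * d_self
        other_phase += dt * d_other
    diff = other_phase - self_phase
    wrapped = math.atan2(math.sin(diff), math.cos(diff))
    return abs(math.degrees(wrapped))


if __name__ == "__main__":
    print("excitatory coupling:", settle_phase_difference(+1.0))
    print("inhibitory coupling:", settle_phase_difference(-1.0))
```

Under these assumptions, positive coupling settles near a phase difference of zero, while negative coupling settles near 180 degrees, the alternating pattern associated with turn-taking in the text.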

4 Toward Artificial Empathy

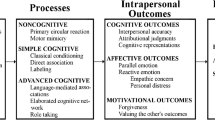

The above development of self/other cognition could parallel empathic development. Figure 5 indicates this parallelism of empathic development with ADR/CDR. The leftmost column shows the correspondence between an ADR/CDR flow and the development of empathy by projecting the terminology in Fig. 2 onto the Russian doll model in Fig. 1. Here, the downward arrow indicates the axis of the development of self-other cognition (therefore, the Russian doll is turned upside-down to follow the time course). PAM in Fig. 1 is replaced by “physical embodiment,” which connects motor mimicry and emotional contagion. In other words, motor resonance by mimicry induces embodied emotional representation (i.e., emotional contagion).

The next column in Fig. 5 shows seven points along the developmental process of self/other cognition, starting from no discrimination between the self and others, together with the three stages of self-development in the third column of Fig. 3. The first four points correspond to emotional contagion, EE, and CE. In cases of sympathy and compassion, one’s emotional state is not synchronized with that of others; instead, different emotional states are induced. In the case of listening to sad music [22, 23], the listener’s objective (virtualized) self perceives sad music as sad, while the subjective self feels pleasant emotion. Furthermore, the concept of self-other discrimination can be extended to the in-group/out-group concept, as well as higher order emotional states such as envy and schadenfreude.

The rightmost column in Fig. 5 shows the list of requirements or functions. These are supposed to trigger (promote) the development of both empathy and self-other discrimination when designing the above process as an artificial system.

4.1 Motor Mimicry and Emotional Contagion

As mentioned in Sect. 2.1, emotional contagion is automatic (unconscious), and its design issue is how to embed such a structure into an artificial system. One suggestion is that motor mimicry induces emotional contagion. Both mimicry and emotional contagion are based on perception-action matching (physical embodiment) either to develop empathy or self-other cognition.

The idea of “physical embodiment” is not new. In fact, Roger Sperry argued that the perception-action cycle is the fundamental logic of the nervous system [50]. Perception and action processes are functionally intertwined; perception is a means to action and vice versa.

The discovery of mirror neurons in the ventral premotor and parietal cortices of the macaque monkey [14] provides neurophysiological evidence for a direct matching between action perception and action production [43]. MNS seems closely related to motor mimicry since it recognizes an action performed by another, and produces the same action, which is referred to as motor resonance that could induce emotional contagion. Furthermore, this relates to self-other discrimination, action understanding, joint attention, imitation, and theory of mind (a detailed discussion is given in [3]).

In humans, motor resonance in the premotor and posterior parietal cortices occurs when participants observe or produce goal directed actions [18]. This type of motor resonance system seems fairly hardwired or is at least functional very early in life [49].

4.2 Emotional and Cognitive Empathy

A major part of empathy is both emotional and cognitive, each of which seems to follow a different pathway of development. Therefore, the two have different roles and are located within different brain regions. Shamay-Tsoory et al. [47] found that patients with lesions in the ventromedial prefrontal cortex (VMPFC) display deficits in cognitive empathy and theory of mind (ToM), while patients with lesions in the inferior frontal gyrus (IFG) show impaired emotional empathy and emotion recognition. For instance, Brodmann Area 44 (in the frontal cortex, anterior to the premotor cortex) was found to be crucial for emotional empathy; this is the same area that has been previously identified as part of the MNS in humans [42]. Shamay-Tsoory et al. [47] summarized the differences between these two separate systems (see Table 1).

Even though they appear as separate systems, emotional empathy (EE) and cognitive empathy (CE) seem closely related to each other. Smith [48] proposed seven potential models for the relationship between the two:

- (1) CE and EE as inseparable aspects of a unitary system.
- (2) CE and EE as two separate systems.
- (3) The EE system as a potential extension of the CE system.
- (4) The CE system as a potential extension of the EE system.
- (5) The EE system as a potential extension of the CE system with feedback.
- (6) The CE system as a potential extension of the EE system with feedback.
- (7) CE and EE as two separable, complementary systems.

Models 1 and 2 are two extremes of the relationship between EE and CE, and Model 7 is between the two. If we follow Shamay-Tsoory et al. [47], the two seem to be separate: phylogenetically and developmentally, CE comes after EE. However, what is more important than developmental order is how the two relate to each other because adult humans have both types and combine them. Smith [48] hypothesized that Model 7 is generally reasonable (see Footnote 4). Smith also predicted two empathy imbalance disorders and two general empathy disorders:

- (a) Cognitive empathy deficit disorder (CEDD), consisting of low CE ability, but high EE sensitivity (part of Autism).
- (b) Emotional empathy deficit disorder (EEDD), consisting of low EE sensitivity, but high CE ability (part of Antisocial Personality Disorder).
- (c) General empathy deficit disorder (GEDD), consisting of low CE ability and low EE sensitivity (part of Schizoid Personality Disorder).
- (d) General empathy surfeit disorder (GESD), consisting of high CE ability and high EE sensitivity (part of Williams Syndrome).
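The four predicted patterns amount to a two-by-two table over CE ability and EE sensitivity. The sketch below encodes that table as a lookup, purely to make the structure explicit. The numeric scores in [0, 1], the `low` and `high` thresholds, and the function name `smith_profile` are hypothetical and are not part of Smith’s proposal [48].

```python
def smith_profile(ce_ability, ee_sensitivity, low=0.3, high=0.7):
    """Map hypothetical CE/EE scores in [0, 1] to the four predicted patterns."""

    def level(score):
        # Discretize a score into "low", "mid", or "high" using assumed thresholds.
        if score <= low:
            return "low"
        if score >= high:
            return "high"
        return "mid"

    # Keys are (CE level, EE level), following the list (a) to (d).
    table = {
        ("low", "high"): "CEDD",
        ("high", "low"): "EEDD",
        ("low", "low"): "GEDD",
        ("high", "high"): "GESD",
    }
    key = (level(ce_ability), level(ee_sensitivity))
    return table.get(key, "none of the four patterns")


if __name__ == "__main__":
    for ce, ee in [(0.1, 0.9), (0.9, 0.1), (0.1, 0.1), (0.9, 0.9), (0.5, 0.5)]:
        print(ce, ee, smith_profile(ce, ee))
```

A control module for EE sensitivity and CE ability could use such a table only as a diagnostic view of its two parameters; how the parameters themselves should be set is left open here.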

From an ADR/CDR perspective, the following issues should be discussed:

- EE could be an extension of emotional contagion with more capabilities in terms of self-awareness and self-other cognition.
- Main issues in designing CE are perspective-taking and theory of mind, which are essential in self-other cognition.
- Smith’s models and hypotheses are suggestive toward designing a control module of EE sensitivity and CE ability supposing that self-other cognition is well developed, and minimum emotion control is acquired.

4.3 Sympathy/Compassion and Envy/Schadenfreude

The emotional and cognitive states induced by EE and CE are synchronized with others’ states. How can we differentiate our emotional and cognitive states from those of others? A slight difference may occur in cases of sympathy and compassion. In these cases, we understand another person’s distress, but we do not experience the same emotional state (de-synchronized); instead, we switch our emotional state in order to sympathize, owing to our emotion regulation abilities.

Larger differences may occur in two cases. The first is related to metacognition, by which one can observe oneself from another’s perspective. Therefore, the individual self is separated into two states: the observing and the observed self. The former may correspond to the subjective (real) self and the latter to the objective (virtualized) self. A typical phenomenon in this case can be seen when enjoying sad music. Sad music is perceived as sad by the objective self, while listening to this music itself is felt as pleasant by the subjective self [22, 23].

Envy and schadenfreude constitute the second case, in which a perceived emotion synchronized with another’s emotional state is induced first. Afterwards, a different (opposite) felt emotion is evoked depending on the social context determined by in-group/out-group cognition.

The above developmental process toward envy and schadenfreude may suggest the increasing (developing) capability of regulating an emotion (synchronize/de-synchronize with others) that is modulated to the extent that self-other cognition and separation are achieved.
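The two-step account of envy and schadenfreude (a synchronized perceived emotion first, then a possibly opposite felt emotion depending on group membership) can be written down as a very small rule. The sketch below is a deliberately crude illustration: representing emotion as a single valence in [-1, 1] and flipping its sign for out-group members are assumptions made for the example, and the function names are hypothetical.

```python
def felt_emotion(other_valence, same_group):
    """Return (perceived, felt) valence for an observer.

    other_valence: the other's emotional valence in [-1.0, 1.0]
    same_group: True if the observer treats the other as in-group
    """
    # Step 1: the perceived emotion is synchronized with the other's state.
    perceived = other_valence
    # Step 2: the felt emotion stays synchronized for in-group members and is
    # reversed for out-group members (assumed sign-flip rule).
    felt = perceived if same_group else -perceived
    return perceived, felt


def label(other_valence, same_group):
    """Name the resulting state under the assumed rule."""
    perceived, felt = felt_emotion(other_valence, same_group)
    if perceived == 0:
        return "neutral"
    if felt == perceived:
        return "synchronized"
    # Other is happy but observer feels bad: envy. The reverse: schadenfreude.
    return "envy" if perceived > 0 else "schadenfreude"


if __name__ == "__main__":
    print(label(0.8, same_group=False))
    print(label(-0.8, same_group=False))
    print(label(-0.8, same_group=True))
```

In a developmental model, the hard-coded `same_group` flag would be replaced by whatever degree of self-other and in-group/out-group separation the agent has acquired, which is the modulation the paragraph above refers to.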

5 ADR/CDR Approaches

There are several design issues related to artificial empathy. Figure 6 shows a conceptual overview of the development of artificial empathy by ADR/CDR approaches following the above arguments. The numbers correspond to the second column of Fig. 5.

A flow from left to right indicates the direction of evolution and development in terms of self-other cognition and emotion regulation. In order to explicitly indicate the hierarchical structure of empathic development, the Russian doll model shown in Fig. 1, and in the leftmost column of Fig. 5, is illustrated in the background. Small circles with curved arrows indicate internal emotional states of the agent, starting from no self-other discrimination (1) to completely separated agent representations with different emotional states (7). The orientations of the curved arrows indicate emotional states, and they are synchronized between the self and other (e.g., until EE and CE) (4). The underlying structure needed in emotional contagion, EE, and CE is a mechanism of synchronization with the environment, including other individuals with which to harmonize, as shown at the bottom-left of Fig. 6. Afterwards, however, the emotional states can be de-synchronized (different emotions) by emotion regulation capabilities.

Sympathy and compassion are examples of emotional states differentiating between the self and others (5). Intuitively, sympathy appears more EE-dominant while compassion is more CE-dominant. This is because sympathetic concerns seem more emotional, while compassion can be realized after logically understanding others’ states. However, this difference is actually modest since both sympathy and compassion require perception of others’ internal states as well as understanding the relevant cause(s). In addition to the fundamental structure of synchronization, inhibition of harmonization with perceived emotion based on the establishment of agency (self-other discrimination) is needed, as shown in the middle bottom of Fig. 6.

The above discrepancy in empathy between the self and others (de-synchronization) is extended in two ways: internally and externally. The internal extension is as follows: the self-emotion space is divided into two (6); one is subjective (top) and the other is objective (virtualized: bottom). This can be a projection of another person’s emotional state. Perception of an emotional state from the objective self (perceived emotion) seems more CE-dominant since it appears to involve an objective decision, while the feeling itself seems more subjective (felt emotion). The external extension is as follows: both the self and others have their own populations (7); and inside the same group, all members are synchronized. However, they are de-synchronized with members of another group. If two groups are competitive (evolutionarily due to natural selection), hostile emotions to the opponent group may emerge. A group can be regarded as an extended self (or other). In both cases, the capabilities of imagination in the virtualized self (6), and more control over self emotions (7) are needed to facilitate these various emotional states as shown in the top-right of Fig. 6.

Hereafter, we review previous studies, some of which were not categorized as ADR but seem related to the topics discussed here.

5.1 Pioneering Studies for Artificial Emotion

There are two pioneering studies related to artificial emotion. The first one is by Prof. Shigeki Sugano’s group (see Footnote 5). The authors built an emotional communication robot, WAMOEBA (Waseda-Ameba, Waseda Artificial Mind On Emotion BAse), to study a robot emotional model, particularly focusing on emotional expression during human-robot interactions [38]. Emotional expression is connected to self-preservation based on self-observing systems and defined hormone parameters.

The second is a series of studies assessing the emotion expressing humanoid robot WE (Waseda Eye). The recent WE-4RII displays very rich facial and gestural expressions based on sophisticated mechatronics and software (e.g., [31, 32]; see Footnote 6). The creators designed mental dynamics caused by stimuli from the internal and external environment based on an instinctual mental model.

Both sets of studies are pioneering in that emotion is linked to self-preservation or instinct, and the robots are capable of displaying emotional expressions based on these emotion models. However, it is not clear how a robot can share an emotional state with humans. Since almost all of the robots’ behaviors are explicitly specified by the designer, little space is left for robots to learn or develop the capacity to share their emotional states with humans.

5.2 Emotional Contagion, MNS, and EE

Designing a computational model that can explain the developmental process shown in Fig. 6 is very challenging, and such a model does not yet exist. In the following, we review examples of existing studies from ADR/CDR perspectives.

Emotional contagion and motor mimicry are related to each other via PAM (physical embodiment), and motor resonance seems to have a key role in connecting the two. Mori and Kuniyoshi [34] proposed one of the most fundamental structures for behavior generation based on interactions among many different components: 198 neural oscillators, a musculoskeletal system with 198 muscles, and the environment. Two environments are considered: the endometrium in the case of fetal simulations, and a horizontal plane under the force of the Earth’s gravity in the case of neonatal simulations. Oscillatory movements of the fetus or the neonate occur in these external worlds, and self-organization of ordered movements is expected through these interactions. This leads to interactions with other agents through multiple modalities such as vision or audition (motor resonance).

Mimicry is one such interaction that may induce emotional contagion, which links to emotional empathy. In this process, a part of the mirror neuron system (MNS) could be included [47]. Mirror neurons in monkeys only respond to goal-oriented actions (actions of transitive verbs) with a visible target, while in the case of humans the MNS seems to also respond to actions of intransitive verbs without any target [43]. This is still a controversial issue that needs more investigation [1]. One plausible interpretation is as follows. In the case of monkeys, due to higher pressure to survive, goal-oriented behavior needs to be established and used early. In contrast, humans owe much to caregivers, such that pressure is reduced; therefore, the MNS works not only for goal-oriented behavior but also for behavior without goals. Consequently, much room for learning and structuring for generalization is left, and this leads to more social behavior acquisition and extensions to higher cognitive capabilities.

Nagai et al. proposed a computational model for early MNS development, which originates from immature vision [36]. The model gradually increases the spatiotemporal resolution of a robot’s vision while the robot learns sensorimotor mapping through primal interactions with others. In the early stage of development, the robot interprets all observed actions as equivalent because of lower visual resolution and, thus, associates the non-differentiated observation with motor commands. As vision develops, the robot starts discriminating actions generated by itself from those by others. The initially acquired association is, however, maintained via development, which results in two types of associations: one is between motor commands and self-observation and the other between motor commands and other-observation. Their experiments demonstrate that the model achieves early development of the self-other cognitive system, which enables a robot to imitate others’ actions. Figure 7 shows a model for the emergence of the self-other cognitive system originating from immature vision. Actually, this is not empathic development but behavioral (imitation) development. However, considering the strong link between empathy and imitation, this model can be regarded as the process from 1 to a point between 2 and 3 in Fig. 6.
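The differentiation process described above can be illustrated with a toy sketch. This is not the model of Nagai et al. [36]; it is a minimal illustration under strong assumptions. Observations are hypothetical pairs `(action_id, agent)`, immature vision is modeled by simply dropping the `agent` field, and the `Learner` class, `perceive`, and `refine` are names introduced here for illustration only. The point it shows is that an association learned while self and other are indistinguishable is inherited by both percepts once vision can tell them apart.

```python
from collections import defaultdict

def perceive(observation, resolution):
    """Toy vision: at resolution 0 the acting agent is not visible; at 1 it is."""
    action_id, agent = observation
    return (action_id,) if resolution == 0 else (action_id, agent)

class Learner:
    def __init__(self):
        # percept -> motor command -> association weight
        self.assoc = defaultdict(lambda: defaultdict(float))

    def learn(self, percept, motor, rate=1.0):
        # Hebbian-style co-occurrence: strengthen percept-motor link
        self.assoc[percept][motor] += rate

    def refine(self, agents=("self", "other")):
        """Vision matures: each coarse percept splits, and both
        refined percepts inherit the weights learned so far."""
        coarse = [p for p in self.assoc if len(p) == 1]
        for percept in coarse:
            for agent in agents:
                for motor, w in self.assoc[percept].items():
                    self.assoc[percept + (agent,)][motor] += w

    def imitate(self, percept):
        """Return the motor command most strongly tied to the percept."""
        motors = self.assoc.get(percept)
        return max(motors, key=motors.get) if motors else None

learner = Learner()
for action in range(3):
    # Early stage: the robot moves and watches its own (blurred) movement.
    learner.learn(perceive((action, "self"), 0), motor=action)
learner.refine()
# Later stage: a sharply seen action by another agent recalls the same command.
imitated = learner.imitate(perceive((2, "other"), 1))
```

Here `imitated` is the motor command that the robot originally paired with its own undifferentiated view of action 2, which is the sense in which the early association supports later imitation.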

Different from non-human primates, a human’s MNS can work for non-purposeful actions such as play. Kuriyama et al. [25] proposed a method for interaction rule learning based on contingency and intrinsic motivation for play. The learner obtains new interaction rules via contact with a caregiver. Such non-purposive mother-infant interactions could play a crucial role in acquiring MNS-like functions and also early imitation capabilities, including mimicry. The chameleon effect could be partially explained by consequences of this learning.

The above studies have not been directly related to emotional states along axes such as pleasure (versus displeasure) or arousal (versus sleep), which are regarded as the most fundamental emotional axes [44]. Assuming that human infants are born with this fundamental form of emotion, how can they come to have variations in emotional states such as happiness and anger?

In developmental psychology, intuitive parenting is regarded as maternal scaffolding based on which children develop empathy when caregivers mimic or exaggerate the child’s emotional facial expressions [15]. Watanabe et al. [54] modeled human intuitive parenting using a robot that associates a caregiver’s mimicked or exaggerated facial expressions with the robot’s internal state to learn an empathic response. The internal state space and facial expressions are defined using psychological studies and change dynamically in response to external stimuli. After learning, the robot responds to the caregiver’s internal state by observing human facial expressions. The robot then facially expresses its own internal state if synchronization evokes a response to the caregiver’s internal state.

Figure 8 (left) shows a learning model for a child developing a sense of empathy through the intuitive parenting of its caregiver. When a child undergoes an emotional experience and expresses his/her feelings by changing his/her facial expression, the caregiver empathizes with the child and shows a concomitantly exaggerated facial expression. The child then discovers the relationship between the emotion experienced and the caregiver’s facial expression, learning to mutually associate the emotion and facial expression. The emotion space in this figure is constructed based on the model proposed by Russell [44]. This differentiation process is regarded as developing from emotional contagion to emotional empathy.
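The association learning described above can be sketched as follows. This is a simplified illustration and not the implementation in [54]: the internal state is assumed to be a point `(pleasure, arousal)` in a Russell-style space, the caregiver's face is assumed to be a two-feature vector that mirrors the child's state with a hypothetical gain `EXAGGERATION`, and the mapping is learned with a plain delta rule. All names and parameter values are assumptions made for this example.

```python
import random

EXAGGERATION = 1.5  # hypothetical gain: the caregiver amplifies the expression

def caregiver_face(state):
    """Caregiver mirrors the child's (pleasure, arousal) state, exaggerated."""
    return [EXAGGERATION * x for x in state]

def predict(W, face):
    """Map observed face features to an estimated internal state."""
    return [sum(W[i][j] * face[j] for j in range(2)) for i in range(2)]

def train(steps=2000, lr=0.1, seed=0):
    rng = random.Random(seed)
    W = [[0.0, 0.0], [0.0, 0.0]]  # face features -> internal state
    for _ in range(steps):
        state = [rng.uniform(-1, 1), rng.uniform(-1, 1)]  # child's own emotion
        face = caregiver_face(state)                       # what the child sees
        err = [s - p for s, p in zip(state, predict(W, face))]
        for i in range(2):
            for j in range(2):
                W[i][j] += lr * err[i] * face[j]           # delta-rule update
    return W

W = train()
# After learning, a face alone evokes an estimate of the underlying state.
estimate = predict(W, caregiver_face([0.5, -0.5]))
```

Because the child's own felt state serves as the teaching signal, no external labels are needed; after training, `estimate` is close to the state that produced the observed face.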

Considering the neural substrates related to empathy reported in past studies (e.g., [13, 27, 47]), a draft of the neuroanatomical structure for the above computational model is devised in Fig. 9. The consistency of neural substrates in past studies is not guaranteed since the authors conducted their experiments under different task paradigms and measures. Rather, this structure is intended to give an approximate network structure. During learning, the caregivers’ facial expressions, which the learner happens to encounter during an interaction, are supposed to be processed in the inferior frontal gyrus (IFG) and/or insula and then mapped onto the dorsal anterior cingulate cortex (dACC). The dACC is supposed to maintain the learner’s emotional space that drives facial muscles to express one’s own emotional states. After learning, the corresponding facial expression is immediately driven by the caregiver’s facial expression.

Fig. 9 A neuroanatomical structure for the computational model in [54]

Emotional empathy in Fig. 6 is indicated by two circles. We suppose that the top circle corresponds to the learner’s own internal state (amygdala) and the bottom to a reflection (dACC) of the caregiver’s emotional state inferred from his/her facial expression. In this case, both are synchronized; but after the development of envy and schadenfreude, more emotional control could switch this reflection to the de-synchronized emotion of others (sympathy and compassion) or the virtualized (objective) self (metacognition). Partial support is provided by Takahashi et al. [51], who found a correlation between envy and dACC activation in an fMRI study.

5.3 Perspective-Taking, Theory of Mind, and Emotion Control

In addition to the MNS, cognitive empathy requires “perspective-taking and mentalizing” [11], both of which share functions with “theory of mind” [39]. This is another difficult issue for not only empathic development but, more generally, human development.

Early development of perspective-taking can be observed in 24-month-old children as visual perspective-taking [33]. Children are at Level 1 when they understand that the content of what they see may differ from what another sees in the same situation. They are at Level 2 when they understand that they and another person may see the same thing simultaneously from different perspectives. Moll and Tomasello found that 24-month-old children are at Level 1 while 18-month-olds are not. This implies that there could be a developmental process between these ages [33].

A conventional engineering solution is the 3-D geometric reconstruction of the self, others, and object locations first. From there, the transformation between egocentric and allocentric coordinate systems proceeds. This calibration process needs a precise knowledge of parameters, such as focal length, visual angle, and link parameters, based on which object (others) location and size are estimated. However, it does not seem realistic to estimate these parameters precisely between the ages of 18 and 24 months.
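The geometric approach described above can be made concrete with a minimal two-dimensional sketch. It assumes that each agent's pose `(x, y, heading)` in a common world (allocentric) frame is already known exactly, which is precisely the calibration requirement questioned in the text. The function names are illustrative only.

```python
import math

def ego_to_allo(point_ego, pose):
    """Egocentric point -> world frame. pose = (x, y, heading in radians)."""
    x, y, th = pose
    px, py = point_ego
    return (x + px * math.cos(th) - py * math.sin(th),
            y + px * math.sin(th) + py * math.cos(th))

def allo_to_ego(point_allo, pose):
    """World-frame point -> egocentric frame of an agent at `pose`."""
    x, y, th = pose
    dx, dy = point_allo[0] - x, point_allo[1] - y
    return (dx * math.cos(th) + dy * math.sin(th),
            -dx * math.sin(th) + dy * math.cos(th))

def take_perspective(point_in_self, self_pose, other_pose):
    """Where does an object seen by the self appear in the other's view?"""
    return allo_to_ego(ego_to_allo(point_in_self, self_pose), other_pose)

# Self at the origin facing +x; the other stands 2 units ahead, facing back.
self_pose = (0.0, 0.0, 0.0)
other_pose = (2.0, 0.0, math.pi)
# Object 1 unit ahead and 0.5 to the left of the self.
seen_by_other = take_perspective((1.0, 0.5), self_pose, other_pose)
```

In this face-to-face arrangement the object is still one unit ahead of the other agent, but on the opposite side (`seen_by_other` is approximately `(1.0, -0.5)`), which is the kind of left-right reversal that visual perspective-taking has to account for.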

Two related solutions seem more realistic, and the second might include the first. Both assume shared knowledge of what the goal is.

The first one is the accumulation of goal sharing experiences with a caregiver. Imagine a situation of a reaching behavior to get an object. An infant has experience being successful with this movement, but sometimes fails to reach a distant object. In this case, a caregiver may help the infant from behind, from his/her side, or in a face-to-face situation. The infant collects these experiences, including views of its own behavior and the caregiver’s. Based on knowledge regarding the same goal, these views are categorized as the same goal behavior seen from different views (different perspectives). Circumstantial evidence for view-based recognition can be seen in face cells in the inferior temporal cortex of a monkey brain (Chap. 26 in [40]), which are selectively activated according to facial orientation. Appearance-based vision could be an engineering method for object recognition and spatial perception (Footnote 7). Yoshikawa et al. [55] propose a method of incremental recovery of the demonstrator’s view using a modular neural network. Here, the learner can organize spatial perception for view-based imitation learning with the demonstrator in different positions and orientations. Recent progress in big data processing may provide better solutions to this issue.

The second is an approach that equalizes different views based on a value that can be estimated by reinforcement learning. That is, different views have the same value according to the distance to the shared goal by the self and others. Suppose that the observer has already acquired the utilities (state values in a reinforcement learning scheme). Takahashi et al. [52] show that the observer can understand/recognize behaviors shown by a demonstrator based not on a precise object trajectory in allocentric/egocentric coordinate space but rather on an estimated utility transition during the observed behavior. Furthermore, it is shown that the loop of the behavior acquisition and recognition of observed behavior accelerates learning and improves recognition performance. The state value updates can be accelerated by observation without real trial and error, while the learned values enrich the recognition system since they are based on the estimation of state value of the observed behavior. The learning consequence resembles MNS function in the monkey brain (i.e., regarding the different actions (self and other) as the same goal-oriented action).
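The idea of recognizing a behavior from its estimated value transition, rather than from a precise trajectory, can be sketched as follows. This is a toy illustration and not the method of [52]: states lie on a hypothetical one-dimensional track, the state values of each known behavior are assumed to be `GAMMA ** distance_to_goal` (as would result from a learned goal-reaching policy with a unit reward at the goal), and the observed states are assumed to be already expressed in the observer's own state space.

```python
GAMMA = 0.9  # assumed discount factor

def state_values(goal, n_states):
    """State values of a goal-reaching behavior on a 1-D track."""
    return [GAMMA ** abs(goal - s) for s in range(n_states)]

def rising_fraction(trajectory, V):
    """Fraction of observed transitions along which the value increases."""
    steps = list(zip(trajectory, trajectory[1:]))
    return sum(V[b] > V[a] for a, b in steps) / len(steps)

def recognize(trajectory, value_tables):
    """Pick the known behavior whose value rises most consistently."""
    return max(value_tables,
               key=lambda name: rising_fraction(trajectory, value_tables[name]))

# The observer already knows two behaviors and their state values.
values = {
    "reach_left": state_values(goal=0, n_states=10),
    "reach_right": state_values(goal=9, n_states=10),
}
observed = [4, 5, 5, 6, 7]  # demonstrator drifts to the right, with one pause
label = recognize(observed, values)
```

The observed behavior is labeled `reach_right` because its estimated value rises on three of the four transitions, whereas it never rises under `reach_left`. The decision depends only on the direction of the value change, so differences in viewpoint that preserve the ordering of values would not alter it.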

5.4 Emotion Control, Metacognition, and Envy/Schadenfreude

Emotion control triggers the development from 3 to 4 in Fig. 6: one understands another’s emotional state (synchronize) first and then shifts their own emotional state to be similar but different (de-synchronize). In the figure, sympathy appears EE dominant while compassion is CE dominant, but both include cognitive processes (understanding another’s state). Therefore, different forms of dominance do not seem as significant as shown in the figure.

Generally, two components of metacognition are considered: knowledge about cognition and regulation of cognition [46]. Among four instructional strategies for promoting the construction and acquisition of metacognitive awareness, regulatory skills (self-monitoring) seem related to emotional state 5 in Fig. 6 where a de-synchronized other’s emotional state (the bottom of 5) is internalized as a target (the bottom of 5) to be controlled inside one’s own emotional state. This target represents the self as others (objective or virtualized self), while the subjective self (the top of 6) monitors this objective self. In the case of sad music [22, 23], a cognitive process perceives sad music as sad, which, therefore, seems objective. During this process, simply switching between the self (subjective) and others (objective) in 4 does not emerge; rather, more control power comes from the cortex. The medial frontal cortex (MFC) is supposed to be the neural substrate for social cognition. The anterior rostral region of the MFC (arMFC) maintains roughly three different categories: self-knowledge, person knowledge, and mentalizing [2]. Therefore, a projection from the arMFC to the regions in Fig. 9 seems to be needed in order to enable more emotion control for envy and schadenfreude.

5.5 Expressions

Facial and gestural expressions are an indispensable part of artificial empathy. Classical work on WE-4RII shows very rich facial and gestural expressions, which evoke the corresponding emotions (same or different) in observers [31, 32]. Although the design concept and technology were excellent, the realism of interactions depends on the skill of the designer.

From the viewpoint of the ADR approach, we need research platforms that are more realistic in two ways. One is the design of realistic robots with a computational model of affective development. The other is a platform for emotional interaction studies between an infant and his/her caregiver. For these purposes, Affetto has the realistic appearance of a 1- to 2-year-old child [20, 21]. Figure 10 shows an example of “Affetto.”

6 Discussion

We have discussed the development of empathy along with that of self-other cognition from a constructive approach (ADR/CDR) perspective. Here, we expect that the ADR/CDR can fill the gap between neuroscience and developmental psychology. However, this approach needs to be developed further. Here are further points for discussion.

We reviewed empathy terminology, and a conceptual model of empathic development has been proposed in terms of self-other discrimination (Fig. 6). The neural architecture of empathic development [54] has been proposed based on existing research showing a lack of consistency due to differences in task designs, contexts, and measuring equipment (Fig. 9). Rather than detailed neural substrates, which might be different depending on the context and the target emotion, we might hypothesize that a whole functional structure comprises a network through which cortical and subcortical areas work together. Since subcortical areas develop earlier than cortical areas, the former are probably at work first, then the second set come online in situations where one may encounter an event. From this perspective, further imaging studies assessing children and behavioral robotic studies, especially focusing on interactions using constructive methods, are needed to reveal the underlying developmental structure.

A variety of hormones and neurochemical compounds participate in the formation and modulation of empathy. Among them, oxytocin (OT) and dopamine (DA) are the most common. Setting aside detailed explanations, it seems possible that OT and opioids modulate emotional aspects of empathy, whereas DA modulates cognitive aspects of empathy [17]. We might utilize the functional aspects of these modulators to enhance (reduce) EE sensitivity and CE ability in order to characterize empathic disorders [48] with our computational model.

Theory of mind (ToM) and MN activations have been investigated in several imaging studies with different approaches, including written stories and comic strips. This is an important discrepancy as the involvement and/or requirement of language in ToM is debatable. This is because Broca’s area in humans is supposed to be homologous to a similar brain region in monkeys, close to F5, where mirror neurons are found. Studies assessing severe aphasic patients (e.g., [53]) have reported normal ToM processing. This strongly suggests that language capacity is not an essential requirement for ToM [1] and probably not for empathy either. Therefore, in the conceptual model in Fig. 6, language faculties are not included.

In the computational or robot models mentioned thus far, we have not considered emotional states coming from visceral organs. Damasio and Carvalho [10] state that a lack of homeostasis in the body will trigger adaptive behavior via brain networks, such as attention to a stranger’s next move. This implies that a homeostasis-like structure is needed to design embodied emotional representations. One of the pioneering WAMOEBA studies [38] proposed a robot emotional model that expresses emotional states connected to self-preservation based on self-observing systems and hormone parameters. This system was adaptive toward external stimuli in order to keep bodily feelings stable. Therefore, the best action is sleeping in order to minimize energy consumption unless external stimuli arise. However, animal behavior, especially among humans, is generated not only by this fundamental need to survive but more actively by so-called intrinsic motivation [45].

In the machine learning and developmental robotics community, intrinsic motivation has been attracting increasing attention as a driving structure of various behaviors [28]. Interest seems to lie in how to formalize intrinsic motivation from the viewpoint of information theory, supposing its existence without considering how it develops. The relationship between empathy and intrinsic motivation has yet to be intensively investigated. We might consider a certain structure of intrinsic motivation as a means to develop artificial empathy. Whether such a structure should be explicit or implicit is an issue to be addressed further.

7 Conclusion

In terms of artificial empathy, we have argued how it can follow a developmental pathway similar to natural empathy. After reviewing terminology in the context of empathic development, a conceptual constructive model for artificial empathy has been proposed. Following are some concluding remarks.

(1) The development of empathy and imitation might be parallel. Emotional contagion, an early style of empathy linked to motor mimicry, is shared with other animals. Emotional contagion extends to emotional empathy, sympathy (compassion), and higher emotions owing mainly to subcortical brain regions (along a developmental time course; a dependency on subcortical areas diminishes).

(2) While under the control of cortical areas, cognitive empathy develops into compassion (sympathy) (along a developmental time course; control projections from cortical areas increase).

(3) ADR has also been proposed, and a conceptual constructive model of empathic development has been devised in parallel with self-other cognitive development. Here, the concept of the self emerges, develops, and divides (emotion control seems to manipulate these components).

(4) Several existing studies regarding ADR/CDR are discussed in the context of empathy and self-other cognitive development, and possible extensions are discussed.

(5) The proposed constructive model is expected to shed new light on our understanding of empathic development, which can be directly reflected in the design of artificial empathy.

(6) Still, there are several issues in need of attention, and more investigations, including imaging studies with children and behavioral studies with robots, are needed.

(7) One key issue concerns the emergence of intrinsic motivation through various behaviors. Intrinsic motivation’s relationship with empathy during the developmental process is an interesting topic for future research.

Notes

Unconscious mimicry of behavior from an interacting partner.

ADR starts from a part of CDR but is expected to extend beyond the current scope of CDR.

Valerie Gray Hardcastle, A Self Divided: A Review of Self and Consciousness: Multiple Perspectives, Frank S. Kessel, Pamela M. Cole, and Dale L. Johnson (Eds.).

Interestingly, female empathy tends towards Model 1 while male empathy towards Model 2 based on behavioral data analysis.

For more detail, visit http://www.sugano.mech.waseda.ac.jp.

Visit http://www.cs.rutgers.edu/~elgammal/classes/cs534/lectures/appearance-based%20vision.pdf as a general reference.

References

Agnew ZK, Bhakoo KK, Puri BK (2007) The human mirror system: a motor resonance theory of mind-reading. Brain Res Rev 54:286–293

Amodio DM, Frith CD (2006) Meeting of minds: the medial frontal cortex and social cognition. Nat Rev Neurosci 7:268–277

Asada M (2011) Can cognitive developmental robotics cause a paradigm shift? In: Krichmar JL, Wagatsuma H (eds) Neuromorphic and brain-based robots. Cambridge University Press, Cambridge, pp 251–273

Asada M, Hosoda K, Kuniyoshi Y, Ishiguro H, Inui T, Yoshikawa Y, Ogino M, Yoshida C (2009) Cognitive developmental robotics: a survey. IEEE Trans Auton Ment Dev 1(1):12–34

Asada M, MacDorman KF, Ishiguro H, Kuniyoshi Y (2001) Cognitive developmental robotics as a new paradigm for the design of humanoid robots. Robotics Auton Syst 37:185–193

Asada M, Nagai Y, Ishihara H (2012) Why not artificial sympathy? In: Proceedings of the international conference on social robotics, pp 278–287

Chartrand TL, Bargh JA (1999) The chameleon effect: the perception-behavior link and social interaction. J Personal Soc Psychol 76(6):893–910

Chen QL, Panksepp JB, Lahvis GP (2009) Empathy is moderated by genetic background in mice. PloS One 4(2):e4387

Craig AD (Bud) (2003) Interoception: the sense of the physiological condition of the body. Curr Opin Neurobiol 13:500–505

Damasio A, Carvalho GB (2013) The nature of feelings: evolutionary and neurobiological origins. Nat Rev Neurosci 14:143–152

de Waal FBM (2008) Putting the altruism back into altruism. Annu Rev Psychol 59:279–300

Edgar JL, Paul ES, Harris L, Penturn S, Nicol CJ (2012) No evidence for emotional empathy in chickens observing familiar adult conspecifics. PloS One 7(2):e31542

Fan Y, Duncan NW, de Greck M, Northoff G (2011) Is there a core neural network in empathy? An fMRI based quantitative meta-analysis. Neurosci Biobehav Rev 35:903–911

Gallese V, Fadiga L, Fogassi L, Rizzolatti G (1996) Action recognition in the premotor cortex. Brain 119(2):593–609

Gergely G, Watson JS (1999) Early socio-emotional development: Contingency perception and the social-biofeedback model. In: Rochat P (ed) Early social cognition: understanding others in the first months of life. Lawrence Erlbaum, Mahwah, pp 101–136

Goetz JL, Keltner D, Simon-Thomas E (2010) Compassion: an evolutionary analysis and empirical review. Psychol Bull 136:351–374

Gonzalez-Liencres C, Shamay-Tsoory SG, Brüne M (2013) Towards a neuroscience of empathy: ontogeny, phylogeny, brain mechanisms, context and psychopathology. Neurosci Biobehav Rev 37:1537–1548

Grezes J, Armony JL, Rowe J, Passingham RE (2003) Activations related to “mirror” and “canonical” neuron in the human brain: an fMRI study. NeuroImage 18:928–937

Inui T (2013) Embodied cognition and autism spectrum disorder (in Japanese). Jpn J Occup Ther 47(9):984–987

Ishihara H, Asada M (2014) Five key characteristics for intimate human-robot interaction: Development of upper torso for a child robot “affetto”. Adv Robotics (under review)

Ishihara H, Yoshikawa Y, Asada M (2011) Realistic child robot “affetto” for understanding the caregiver-child attachment relationship that guides the child development. In: IEEE international conference on development and learning, and epigenetic robotics (ICDL-EpiRob 2011)

Kawakami A, Furukawa K, Katahira K, Kamiyama K, Okanoya K (2013) Relations between musical structures and perceived and felt emotion. Music Percept 30(4):407–417

Kawakami A, Furukawa K, Katahira K, Okanoya K (2013) Sad music induces pleasant emotion. Frontiers Psychol 4:311

Kuhl P, Andruski J, Chistovich I, Chistovich L, Kozhevnikova E, Ryskina V, Stolyarova E, Sundberg U, Lacerda F (1997) Cross-language analysis of phonetic units in language addressed to infants. Science 277:684–686

Kuriyama T, Shibuya T, Harada T, Kuniyoshi Y (2010) Learning interaction rules through compression of sensori-motor causality space. In: Proceedings of the tenth international conference on epigenetic robotics (EpiRob10), pp 57–64

Leite I, Martinho C, Paiva A (2013) Social robots for long-term interaction: a survey. Int J Soc Robotics 5:291–308

Liddell BJ, Brown KJ, Kemp AH, Barton MJ, Das P, Peduto A, Gordon E, Williams LM (2005) A direct brainstem-amygdala-cortical ’alarm’ system for subliminal signals of fear. NeuroImage 24:235–243

Lopes M, Oudeyer P-Y (2010) Guest editorial active learning and intrinsically motivated exploration in robots: advances and challenges. IEEE Trans Auton Ment Dev 2(2):65–69

Lungarella M, Metta G, Pfeifer R, Sandini G (2003) Developmental robotics: a survey. Connect Sci 15(4):151–190

Meltzoff AN (2007) The ‘like me’ framework for recognizing and becoming an intentional agent. Acta Psychologica 124:26–43

Miwa H, Itoh K, Matsumoto M, Zecca M, Takanobu H, Roccella S, Carrozza MC, Dario P, Takanishi A (2004) Effective emotional expressions with emotion expression humanoid robot WE-4RII. In: Proceeding of the 2004 IEEE/RSJ international conference on intelligent robot and systems, pp 2203–2208

Miwa H, Okuchi T, Itoh K, Takanobu H, Takanishi A (2003) A new mental model for humanoid robots for human friendly communication-introduction of learning system, mood vector and second order equations of emotion. In: Proceeding of the 2003 IEEE international conference on robotics & automation, pp 3588–3593

Moll H, Tomasello M (2006) Level 1 perspective-taking at 24 months of age. Br J Dev Psychol 24:603–613

Mori H, Kuniyoshi Y (2007) A cognitive developmental scenario of transitional motor primitives acquisition. In: Proceedings of the 7th international conference on epigenetic robotics, pp 165–172

Nagai Y, Rohlfing KJ (2009) Computational analysis of motionese toward scaffolding robot action learning. IEEE Trans Auton Ment Dev 1(1):44–54

Nagai Y, Kawai Y, Asada M (2011) Emergence of mirror neuron system: Immature vision leads to self-other correspondence. In: IEEE international conference on development and learning, and epigenetic robotics (ICDL-EpiRob 2011)

Neisser U (ed) (1993) The perceived self: ecological and interpersonal sources of self knowledge. Cambridge University Press, Cambridge

Ogata T, Sugano S (2000) Emotional communication between humans and the autonomous robot WAMOEBA-2 (Waseda Amoeba) which has the emotion model. JSME Int J Ser C 43(3):568–574

Premack D, Woodruff G (1978) Does the chimpanzee have a theory of mind? Behav Brain Sci 1(4):515–526

Purves D, Augustine GA, Fitzpatrick D, Hall WC, LaMantia A-S, McNamara JO, White LE (eds) (2012) Neuroscience, 5th edn. Sinauer Associates Inc, Sunderland

Riek LD, Robinson P (2009) Affective-centered design for interactive robots. In: Proceedings of the AISB symposium on new frontiers in human-robot interaction

Rizzolatti G (2005) The mirror neuron system and its function in humans. Anat Embryol 201:419–421

Rizzolatti G, Sinigaglia C, Anderson TF (2008) Mirrors in the brain—how our minds share actions and emotions. Oxford University Press, Oxford

Russell JA (1980) A circumplex model of affect. J Personal Soc Psychol 39:1161–1178

Ryan RM, Deci EL (2000) Intrinsic and extrinsic motivations: classic definitions and new directions. Contemp Educ Psychol 25(1):54–67

Schraw G (1998) Promoting general metacognitive awareness. Instruct Sci 26:113–125

Shamay-Tsoory SG, Aharon-Peretz J, Perry D (2009) Two systems for empathy: a double dissociation between emotional and cognitive empathy in inferior frontal gyrus versus ventromedial prefrontal lesions. Brain 132:617–627

Smith A (2006) Cognitive empathy and emotional empathy in human behavior and evolution. Psychol Rec 56:3–21

Sommerville JA, Woodward AL, Needham A (2005) Action experience alters 3-month-old infants’ perception of others’ actions. Cognition 96:B1–B11

Sperry RW (1952) Neurology and the mind-brain problem. Am Scientist 40:291–312

Takahashi H, Kato M, Matsuura M, Mobbs D, Suhara T, Okubo Y (2009) When your gain is my pain and your pain is my gain: neural correlates of envy and schadenfreude. Science 323:937–939

Takahashi Y, Tamura Y, Asada M, Negrello M (2010) Emulation and behavior understanding through shared values. Robotics Auton Syst 58(7):855–865

Varley R, Siegal M, Want SC (2001) Severe impairment in grammar does not preclude theory of mind. Neurocase 7(6):489–493

Watanabe A, Ogino M, Asada M (2007) Mapping facial expression to internal states based on intuitive parenting. J Robotics Mechatron 19(3):315–323

Yoshikawa Y, Asada M, Hosoda K (2001) Developmental approach to spatial perception for imitation learning: Incremental demonstrator’s view recovery by modular neural network. In: Proceedings of the 2nd IEEE/RAS international conference on humanoid robot

Acknowledgments

This research was supported by Grants-in-Aid for Scientific Research (Research Project Number: 24000012). The author expresses his appreciation for constructive discussions with Dr. Masaki Ogino (Kansai University), Dr. Yukie Nagai (Osaka University), Hisashi Ishihara (Osaka University), Dr. Hideyuki Takahashi (Osaka University), Dr. Ai Kawakami (Tamagawa University), Dr. Matthias Rolf, and other members of the project.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

About this article

Cite this article

Asada, M. Towards Artificial Empathy. Int J of Soc Robotics 7, 19–33 (2015). https://doi.org/10.1007/s12369-014-0253-z
